AI and Biotechnology Convergence: Revolutionizing Drug Discovery and Biomedical Research in 2024

Abstract

This article provides a comprehensive overview of the transformative convergence of artificial intelligence (AI) and biotechnology for researchers, scientists, and drug development professionals. We explore the foundational principles, core methodologies, and real-world applications where AI—from generative models to deep learning—is accelerating the pace of discovery. We address critical challenges in data integration and model interpretability, offer comparative analyses of leading AI tools, and validate the impact through key case studies in drug design and biomarker identification. This analysis synthesizes the current landscape and outlines the future trajectory of this powerful synergy for advancing precision medicine and therapeutic innovation.

From Code to Cure: Defining the AI-Biotech Convergence and Its Core Paradigms

This whitepaper, framed within a broader thesis on AI-biotechnology convergence, delineates the core computational paradigms—Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL)—through the lens of biological systems and biomedical research. For researchers and drug development professionals, this mapping is not merely metaphorical but foundational for developing biologically-inspired algorithms and applying AI to decode complex biological data.

Conceptual Definitions in a Biological Context

- Artificial Intelligence (AI) is the overarching science of creating systems capable of performing tasks that typically require biological intelligence. In a biological context, AI aims to emulate or understand the phenomenon of intelligence itself, akin to studying the integrative function of a nervous system that processes sensory input, maintains homeostasis, and generates adaptive behavior.

- Machine Learning (ML) is a subset of AI focused on algorithms that learn patterns and make decisions from data without being explicitly programmed for every rule. This mirrors adaptive biological processes such as immunological memory, where the immune system learns from exposure to pathogens and improves its response upon subsequent encounters.

- Deep Learning (DL) is a specialized subset of ML inspired by the structure and function of the brain's neural networks. DL utilizes artificial neural networks (ANNs) with multiple layers ("deep" architectures) to learn hierarchical representations of data. This is analogous to the hierarchical sensory processing in the visual cortex, where simple edges and contours detected in early layers are progressively integrated into complex representations like objects and faces in deeper layers.

Quantitative Landscape of AI/ML in Biomedical Research

The integration of these technologies into biotechnology is evidenced by rapid growth in publications, investments, and clinical pipelines. The following table summarizes key quantitative data.

Table 1: Quantitative Metrics of AI/ML in Biomedicine (2022-2024)

| Metric Category | Specific Metric | Estimated Figure (Source Year) | Notes & Context |
|---|---|---|---|
| Market & Investment | Global AI in Drug Discovery Market | \$1.6B (2023) | Projected to grow at a CAGR of ~28% from 2024-2030. |
| Market & Investment | Venture Capital Funding (AI-Bio companies) | > \$5B (2023 aggregate) | Reflects strong investor confidence in the convergence. |
| Research Output | PubMed Citations for "Deep Learning" & "Drug Discovery" | ~4,500 (2023) | Demonstrates a near-exponential increase from ~200 in 2015. |
| Clinical Pipeline | Active Drug Discovery Programs using AI/ML | > 250 (2024) | Led by small-molecule and oncology-focused programs. |
| Performance Benchmark | AI-predicted Protein Structures (AlphaFold2) | Median RMSD ~1Å | Revolutionized structural biology with near-experimental accuracy. |

Experimental Protocol: Applying DL to Transcriptomic Data for Novel Biomarker Discovery

This protocol details a standard workflow for using a Deep Learning model (a deep autoencoder) to identify novel gene expression signatures from high-dimensional RNA-seq data.

Objective: To compress high-dimensional transcriptomic data into a latent low-dimensional representation that captures essential biological variance, enabling the discovery of novel clusters or biomarkers associated with a disease state (e.g., cancer subtypes).

Materials & Workflow:

Table 2: Research Reagent Solutions & Key Materials

| Item | Function in Experiment |
|---|---|
| Processed RNA-seq Dataset (e.g., TCGA, GEO) | Input data; matrix of normalized gene expression counts (samples x genes). |
| High-Performance Computing (HPC) Cluster or Cloud GPU (e.g., NVIDIA V100/A100) | Provides the computational power required for training deep neural networks. |
| Python 3.8+ with Libraries: TensorFlow/PyTorch, Scanpy, Scikit-learn | Core programming environment and ML/DL frameworks for model implementation and data analysis. |
| Dimensionality Reduction Tools: UMAP, t-SNE | Used post-DL for 2D/3D visualization of the latent space learned by the model. |
| Clustering Algorithm: Leiden or Louvain | Applied on the latent representations to identify novel sample clusters. |
| Differential Expression Analysis Tool: DESeq2, edgeR | Validates clusters by identifying statistically significant gene expression differences. |

Methodology:

- Data Preprocessing: Load normalized expression matrix (e.g., TPM or FPKM). Apply log2(1+x) transformation. Select top 5,000 highly variable genes (HVGs) to reduce noise and computational load.

- Autoencoder Architecture Design:

  - Encoder: A fully connected neural network with layers: Input (5000 nodes) → 1024 (ReLU) → 256 (ReLU) → 64 (ReLU) → Latent Space (32 nodes, linear).

  - Decoder: A symmetric network: Latent (32) → 64 (ReLU) → 256 (ReLU) → 1024 (ReLU) → Output (5000, linear).

  - Loss Function: Mean Squared Error (MSE) between original and reconstructed input.

- Model Training: Split data into training (80%) and validation (20%) sets. Train using Adam optimizer with a learning rate of 1e-4 and batch size of 32 for 200 epochs. Monitor validation loss for early stopping to prevent overfitting.

- Latent Space Extraction & Analysis: After training, pass all samples through the encoder to obtain the 32-dimensional latent vector for each sample.

  - Visualize the latent space using UMAP.

  - Perform graph-based clustering (Leiden algorithm) on the latent vectors.

- Biological Validation: Perform differential expression analysis between model-identified clusters. Conduct pathway enrichment analysis (e.g., using Gene Ontology, KEGG) on differentially expressed genes to assign biological meaning to the novel subtypes.
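The preprocessing step and the size of the architecture described above can be sketched in plain Python. This is a minimal illustration, not the protocol's implementation: ranking genes by raw variance is an assumed simplification of highly-variable-gene selection (toolkits such as Scanpy use dispersion-based methods), and the toy matrix and all function names are hypothetical.

```python
import math
from statistics import pvariance


def log2p1(matrix):
    """Apply log2(1 + x) to every entry of a samples x genes matrix."""
    return [[math.log2(1.0 + x) for x in row] for row in matrix]


def top_variable_genes(matrix, k):
    """Return sorted column indices of the k genes with the highest variance.

    Assumption: plain variance ranking stands in for a real HVG method.
    """
    n_genes = len(matrix[0])
    variances = [pvariance([row[g] for row in matrix]) for g in range(n_genes)]
    ranked = sorted(range(n_genes), key=lambda g: variances[g], reverse=True)
    return sorted(ranked[:k])


def dense_param_count(layer_sizes):
    """Weights plus biases for a stack of fully connected layers."""
    return sum((n_in + 1) * n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


# Layer widths taken from the protocol text.
ENCODER = [5000, 1024, 256, 64, 32]
DECODER = ENCODER[::-1]

# Hypothetical toy expression matrix: 4 samples x 3 genes.
toy = [
    [0.0, 10.0, 5.0],
    [1.0, 200.0, 5.0],
    [3.0, 15.0, 5.0],
    [7.0, 900.0, 5.0],
]
logged = log2p1(toy)
hvg = top_variable_genes(logged, k=2)  # gene 2 is constant, so it is dropped
total_params = dense_param_count(ENCODER) + dense_param_count(DECODER)
print(hvg, total_params)
```

With these layer widths the network has roughly 10.8 million trainable parameters, almost all of them in the first encoder layer and the last decoder layer. That is one practical reason for restricting the input to a few thousand genes when the cohort has only hundreds of samples.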

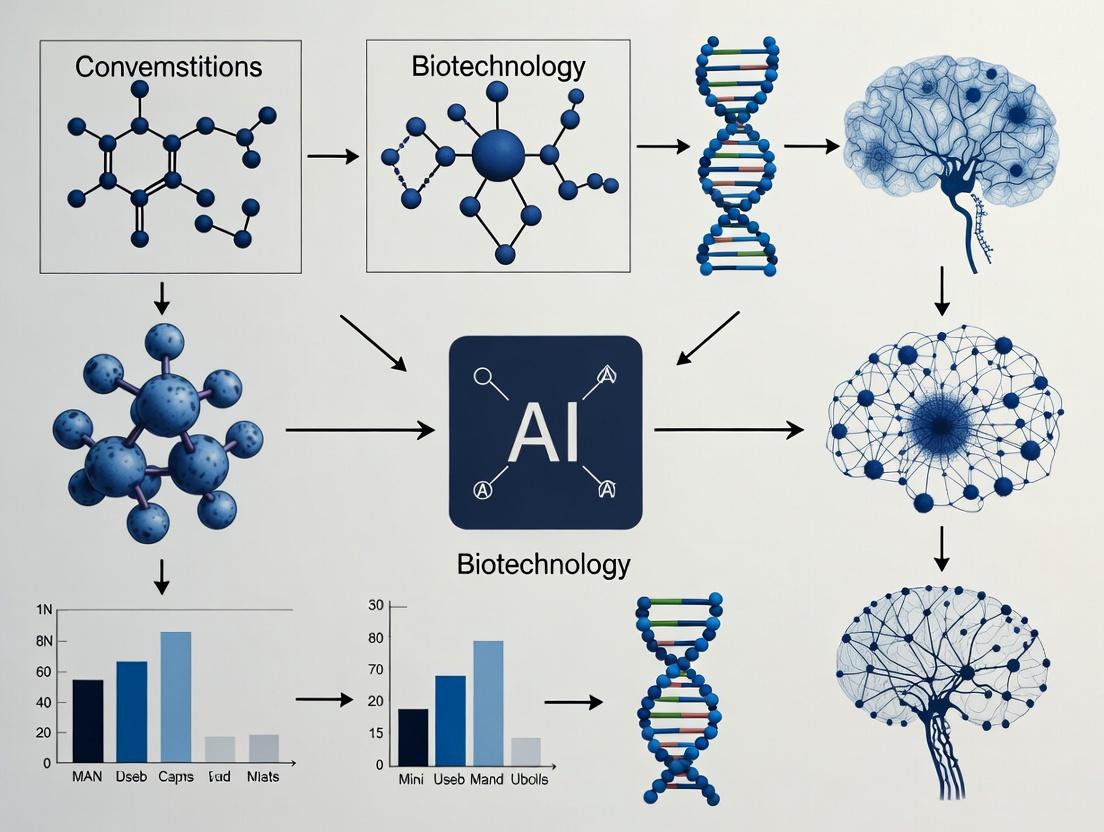

Visualizing Hierarchical Learning and Biological Analogy

Diagram Title: AI DL vs Biological Visual Pathway Analogy

Diagram Title: Transcriptomic Biomarker Discovery Workflow

This whitepaper, framed within a broader thesis on AI and biotechnology convergence, delineates the critical historical milestones where computational biology and artificial intelligence have synergistically advanced biological discovery and therapeutic development. The integration has evolved from early sequence analysis to the current paradigm of deep learning-driven biomolecular structure prediction and generative AI for drug design.

Key Historical Milestones and Quantitative Data

Table 1: Key Historical Milestones in Computational Biology & AI Integration

| Era | Decade | Milestone (Event/Algorithm/Tool) | Core Innovation | Primary Biological Impact |
|---|---|---|---|---|
| Foundations | 1970s | Needleman-Wunsch Algorithm | Dynamic programming for global sequence alignment | Enabled quantitative comparison of protein/DNA sequences. |
| Foundations | 1980s-1990 | Smith-Waterman Algorithm (1981), BLAST (1990) | Exact local alignment by dynamic programming; heuristic rapid database search | Revolutionized genomic & proteomic database mining. |
| Systems Biology | 1990s | Hidden Markov Models (e.g., for gene finding) | Probabilistic models for pattern recognition in sequences | Improved genome annotation and gene structure prediction. |
| Omics & Data | 2000s | SVM/RF for microarray & mass-spec data | Machine learning for high-dimensional 'omics' classification | Enabled molecular subtyping of cancers and complex diseases. |
| Deep Learning | 2010s | DeepVariant, DeepBind | CNNs for sequence variant calling & protein-DNA binding | Achieved human-expert level accuracy in genetic variant detection. |
| Structural Revolution | 2020s | AlphaFold2, RoseTTAFold | Geometric deep learning & transformer architectures | Brought single-chain protein structure prediction to near-experimental accuracy for many targets. |
| Generative AI | 2020s | AlphaFold3, RFdiffusion, GFlowNets | Diffusion models & generative networks for biomolecules | De novo design of proteins, antibodies, and therapeutic molecules. |

Table 2: Performance Benchmarks of Key AI Tools in Biology

| Tool/Model (Year) | Primary Task | Key Metric | Performance | Traditional Method Benchmark |
|---|---|---|---|---|
| AlphaFold2 (2020) | Protein Structure Prediction | GDT_TS (CASP14) | ~92.4 (High accuracy) | ~40-60 (Homology modeling) |
| RoseTTAFold (2021) | Protein Structure Prediction | RMSD (Å) | Often <2.0 Å for many targets | N/A |
| DeepVariant (2018) | SNP/Indel Calling | Precision/Recall | >99.5% for SNPs | ~99.0% (GATK Best Practices) |
| ESMFold (2022) | Protein Structure Prediction | Speed (predictions/day) | ~60-80 (on GPU cluster) | AlphaFold2: ~10-20 |
| AlphaFold3 (2024) | Complex Structure Prediction | Interface Accuracy (e.g., ipTM) | Significant improvement over AF2 | N/A |
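To make the GDT_TS metric in the table concrete, the sketch below computes a simplified version from per-residue Cα distances. It assumes the model and the reference are already superimposed. The real CASP procedure searches over many superpositions and keeps the best count at each cutoff, so this gives a lower bound at best. The function name and example distances are hypothetical.

```python
def gdt_ts(distances, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Simplified GDT_TS from per-residue C-alpha distances (in Angstroms).

    Averages the fraction of residues within each cutoff and scales the
    result to 0-100. Assumes a single, fixed superposition.
    """
    if not distances:
        raise ValueError("need at least one residue distance")
    n = len(distances)
    fractions = [sum(d <= c for d in distances) / n for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)


# Hypothetical distances for a five-residue toy model.
example = [0.4, 0.9, 1.5, 3.0, 9.2]
score = gdt_ts(example)
print(round(score, 1))
```

Because the score averages four cutoffs, a model can reach a high GDT_TS with a well-placed core even when a few loops are far off, which is why it is usually reported alongside RMSD rather than instead of it.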

Detailed Experimental Protocols for Key Experiments

Protocol: Training and Inference for a Protein Structure Prediction Model (e.g., AlphaFold2 variant)

Objective: To predict the 3D atomic coordinates of a protein from its amino acid sequence using a deep learning model.

Materials:

- Hardware: High-performance computing cluster with multiple GPUs (e.g., NVIDIA A100/V100), ≥ 1TB RAM, high-speed SSD storage.

- Software: Python 3.8+, JAX/DeepMind JAX stack, CUDA/cuDNN, HH-suite, HMMER, Kalign, PDB tools.

- Data: UniRef90 (clustered sequences), BFD/MGnify (metagenomic sequences), PDB70 (structural profiles), PDB (experimental structures for training/validation).

Methodology:

Multiple Sequence Alignment (MSA) Generation:

- Input target sequence into JackHMMER or HHblits to search against sequence databases (UniRef90, BFD).

- Process results to generate a stacked, padded MSA representation.

- In parallel, search against a structural database (PDB70) using HHsearch to generate template features.

Feature Engineering:

- Compute auxiliary features: per-residue and pair representations (position-specific scoring matrices, deletion matrices, residue indices). Some variants also add predicted secondary structure (e.g., via PSIPRED); AlphaFold2 itself does not take this as an input.

- Template features (if available): distances, orientations, and positional embeddings from homologous structures.

- Combine all features into a fixed-size, batched tensor for model input.

Model Inference (Evoformer & Structure Module):

- Pass processed features through the Evoformer trunk (48 blocks). This module performs iterative, attention-based refinement on the MSA and pair representations.

- Feed the refined single and pair representations into the Structure Module (8 blocks). This module generates initial 3D frames (rotations and translations) per residue and iteratively refines them using Invariant Point Attention.

- Output final atomic coordinates for all heavy atoms (backbone and side-chains).

Recycling & Confidence Estimation:

- The process may be recycled (3-4 times) where the output structure is used to update the input pair representation.

- The model outputs per-residue (pLDDT) and predicted TM-score (pTM) confidence metrics to assess prediction reliability.

Post-processing:

- Use Amber or OpenMM to perform a brief, constrained energy minimization on the predicted coordinates to correct minor steric clashes.

- Output final model in PDB format.
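AlphaFold-style PDB outputs store the per-residue pLDDT in the B-factor column, so the confidence estimate from the protocol can be read back with a few lines of fixed-width parsing. The sketch below is illustrative only: the helper names are hypothetical, the demo records are synthetic with dummy coordinates, and the confidence bands follow the thresholds commonly shown in the AlphaFold Database (90, 70, 50).

```python
from statistics import mean


def ca_plddt(pdb_text):
    """Map (chain, residue number) -> pLDDT from the B-factor of CA atoms."""
    scores = {}
    for line in pdb_text.splitlines():
        # PDB columns (1-based): atom name 13-16, chain 22,
        # residue number 23-26, B-factor 61-66.
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores[(line[21], int(line[22:26]))] = float(line[60:66])
    return scores


def confidence_band(plddt):
    """Bucket a pLDDT value into the commonly used confidence bands."""
    if plddt >= 90:
        return "very high"
    if plddt >= 70:
        return "confident"
    if plddt >= 50:
        return "low"
    return "very low"


def _atom_line(serial, name, resname, chain, resseq, bfactor):
    """Build one fixed-width ATOM record (coordinates are dummy zeros)."""
    template = ("ATOM  {:5d} {:<4s} {:3s} {:1s}{:4d}    "
                "{:8.3f}{:8.3f}{:8.3f}{:6.2f}{:6.2f}")
    return template.format(serial, name, resname, chain, resseq,
                           0.0, 0.0, 0.0, 1.0, bfactor)


# Synthetic three-residue example, not a real prediction.
demo_pdb = "\n".join([
    _atom_line(1, " N", "MET", "A", 1, 95.1),
    _atom_line(2, " CA", "MET", "A", 1, 95.1),
    _atom_line(3, " CA", "GLY", "A", 2, 62.5),
    _atom_line(4, " CA", "SER", "A", 3, 41.0),
])
scores = ca_plddt(demo_pdb)
bands = [confidence_band(s) for s in scores.values()]
print(round(mean(scores.values()), 1), bands)
```

A per-residue view is usually more informative than the mean: a model with a high average can still contain a low-confidence loop exactly where a ligand is expected to bind.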

Protocol: In Silico Virtual Screening using a Trained Deep Learning Model

Objective: To screen millions of small molecules from a library to identify potential binders for a target protein using a deep learning scoring function.

Materials:

- Software: Docking software (e.g., AutoDock Vina, GNINA), deep learning pose-prediction or scoring model (e.g., EquiBind, DiffDock), molecular dynamics suite (e.g., GROMACS, OpenMM), RDKit/Open Babel.

- Data: Target protein structure (experimental or predicted), small molecule library (e.g., ZINC20, Enamine REAL), known active/decoy set for validation.

Methodology:

Preparation:

- Prepare protein: add hydrogens, assign partial charges, define binding site box coordinates.

- Prepare ligand library: standardize tautomers, generate 3D conformers, minimize energy, convert to appropriate format (SDF, mol2).

Initial Docking (Traditional):

- Perform rapid, grid-based docking (e.g., Vina) for all library compounds to generate an initial pose and score. Retain top 100,000 poses.

AI-Based Re-scoring & Pose Refinement:

- For each retained pose, extract complex features: atom coordinates, types, distances, and protein-ligand interaction fingerprints.

- Process each complex through a trained Graph Neural Network (GNN) or SE(3)-Equivariant network. This model outputs a refined binding affinity score (pKi/pIC50) and may adjust the ligand pose.

- Rank all compounds based on the AI-predicted score.

MM/GBSA Free Energy Calculation (Optional, for top hits):

- For the top 1,000 ranked complexes, run short (5-10 ns) molecular dynamics simulations in explicit solvent.

- Use the Molecular Mechanics/Generalized Born Surface Area (MM/GBSA) method on trajectory snapshots to compute a more rigorous binding free energy estimate.

- Re-rank based on ΔG_bind (MM/GBSA).

Post-analysis:

- Cluster final top 100 compounds by chemical scaffold.

- Inspect binding modes for key interaction patterns (hydrogen bonds, hydrophobic packing, pi-stacking).

- Output list of prioritized compounds for in vitro testing.
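Before trusting a ranking from the pipeline above, the known active/decoy set listed under Materials can be used to check whether the scoring model actually enriches actives near the top. One common summary is the enrichment factor, sketched below under the assumption that higher scores mean stronger predicted binding. The function name and the toy library are hypothetical.

```python
def enrichment_factor(scores, is_active, top_fraction=0.01):
    """Enrichment factor at a given fraction of the ranked library.

    EF = (active rate among the top-ranked compounds)
         / (active rate in the whole library).
    Assumes a higher score means a better predicted binder.
    """
    if len(scores) != len(is_active) or not scores:
        raise ValueError("scores and labels must be non-empty and aligned")
    n_total = len(scores)
    n_actives = sum(is_active)
    if n_actives == 0:
        raise ValueError("validation set contains no actives")
    n_top = max(1, int(round(n_total * top_fraction)))
    ranked = sorted(zip(scores, is_active), key=lambda p: p[0], reverse=True)
    hits_in_top = sum(active for _, active in ranked[:n_top])
    return (hits_in_top / n_top) / (n_actives / n_total)


# Hypothetical library of 20 compounds with 4 known actives.
scores = list(range(20, 0, -1))           # compound 0 scores best
labels = [i in (0, 1, 3, 10) for i in range(20)]
ef_25 = enrichment_factor(scores, labels, top_fraction=0.25)
print(round(ef_25, 2))
```

An enrichment factor near 1 means the ranking is no better than random selection. Values well above 1 at small fractions (1% or less) are what justify spending MM/GBSA and assay budget on only the top of the list.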

Visualizations

Diagram Title: AlphaFold2 Prediction Workflow

Diagram Title: AI-Enhanced Virtual Screening Pipeline

The Scientist's Toolkit: Research Reagent & Solution Essentials

Table 3: Key Research Reagent Solutions for AI-Driven Computational Experiments

| Category | Item / Solution | Function & Explanation | Example Vendor/Software |
|---|---|---|---|
| Data Curation | PDB (Protein Data Bank) Files | Atomic coordinate files for protein structures; essential for training structure prediction models and benchmarking. | RCSB PDB |
| Data Curation | UniProt/UniRef Clustered Sequences | Comprehensive, clustered protein sequence databases for generating evolutionary insights (MSAs). | UniProt Consortium |
| Feature Engineering | HH-suite (HHblits, HHsearch) | Tool suite for fast, sensitive protein sequence and structure homology detection. | MPI Bioinformatics Toolkit |
| Model Training | JAX / PyTorch with GPU Support | Deep learning frameworks enabling accelerated, parallel computation on GPUs for large biological models. | Google / Meta |
| Model Deployment | ColabFold (AlphaFold2, RoseTTAFold) | Accessible, cloud-based pipeline combining fast MSA generation (MMseqs2) with state-of-the-art folding models. | GitHub / Colab |
| Validation | Molecular Dynamics Suite (GROMACS/OpenMM) | Software for performing physics-based simulations to assess the stability and dynamics of AI-predicted structures. | Open Source |
| Validation | Cryo-EM Map Fitting Software (ChimeraX) | Visualization tool for fitting predicted atomic models into experimental cryo-electron microscopy density maps. | UCSF |
| Wet-Lab Bridge | Gene Fragments (gBlocks) | Synthetic double-stranded DNA fragments for rapid de novo gene synthesis of AI-designed protein sequences. | IDT |
| Wet-Lab Bridge | Cell-Free Protein Expression System | Rapid, in vitro protein synthesis kit to produce and test AI-designed proteins without cell culture. | NEB PURExpress |
| Wet-Lab Bridge | High-Throughput SPR/BLI plates | Microplate-based assay kits for screening binding kinetics of hundreds of AI-predicted ligands in parallel. | Cytiva / Sartorius |

This technical whitepaper, framed within a broader thesis on AI-biotechnology convergence, details the interconnected methodologies driving modern biomedical research. We provide an in-depth analysis of experimental protocols, data integration strategies, and key reagent solutions essential for researchers and drug development professionals operating at the nexus of these core synergy areas.

The convergence of artificial intelligence with biotechnology has created a synergistic feedback loop between drug discovery, genomics, proteomics, and diagnostics. This integration enables a shift from a linear, target-centric approach to a holistic, systems-biology-driven pipeline. AI algorithms, particularly deep learning models, now leverage multi-omic data to predict drug-target interactions, identify novel biomarkers, and stratify patient populations with unprecedented precision. This guide details the technical workflows underpinning this convergence.

Quantitative Data Landscape: A Comparative Analysis

The following tables summarize key quantitative metrics defining the current state and impact of integration across the core areas.

Table 1: Performance Metrics of AI-Integrated Multi-Omic Platforms (2023-2024)

| Platform/Technology Type | Avg. Prediction Accuracy (Target ID) | Time Reduction vs. Traditional Methods | Primary Data Inputs | Key Limitation |
|---|---|---|---|---|
| AlphaFold2 & Variants | 92% (RMSD < 2Å) | ~90% (Structure Prediction) | Genomics, Evolutionary Data | Dynamics/Allostery |
| Generative Chemistry AI | 40-60% (Experimental Hit Rate) | ~70% (Lead Compound Design) | Proteomics, Binding Affinity Data | Synthetic Accessibility |
| Multi-Omic Diagnostic Classifiers | 85-95% (Disease Subtype) | ~95% (Analysis Time) | Genomics (WES/WGS), Proteomics, Metabolomics | Cohort Size Dependence |
| CRISPR sgRNA Design AI | 88% (On-Target Efficiency) | ~50% (Design & Validation) | Genomics, Epigenomics | Off-Target Prediction |

Table 2: High-Throughput Screening & Sequencing Data Output Scale

| Experimental Method | Typical Data Volume per Run | Key Measured Parameters | Primary Synergy Area | Standard Analysis Tool |
|---|---|---|---|---|
| Next-Gen Sequencing (NGS) | 100 GB - 2 TB | SNPs, INDELs, Expression (FPKM/TPM) | Genomics/Diagnostics | GATK, DRAGEN |
| Mass Spectrometry Proteomics | 10 - 100 GB | Peptide Intensity, PTM Identification | Proteomics/Drug Discovery | MaxQuant, Spectronaut |
| High-Content Screening (HCS) | 500 GB - 5 TB | Cell Morphology, Fluorescence Co-localization | Drug Discovery/Diagnostics | CellProfiler, Harmony |
| Single-Cell Multi-Omics | 2 - 10 TB per study | Gene Expression, Surface Protein, Chromatin Acc. | All Four Areas | Seurat, Scanpy |

Experimental Protocols & Methodologies

Integrated Protocol: AI-Guided Target Discovery & Validation

This protocol combines genomic analysis, proteomic validation, and initial compound screening.

A. Genomic Target Identification via GWAS & AI Prioritization

- Cohort Sequencing: Perform Whole Genome Sequencing (WGS) on case-control cohorts (minimum n=5000 per group) using Illumina NovaSeq X Plus. Average coverage: 30x.

- Variant Calling & QTL Mapping: Process raw FASTQ files through BWA-MEM2 alignment and GATK4 variant calling pipeline. Perform expression/metabolite QTL (eQTL/mQTL) analysis using tools like QTLtools.

- AI-Powered Prioritization: Input significant loci (p < 5 × 10^-8) and linked QTL data into a graph neural network (GNN) trained on known gene-disease networks (e.g., DisGeNET). The model scores genes based on network proximity, functional impact (PolyPhen-2 score), and multi-omic evidence.

- Output: A ranked list of high-confidence candidate disease genes with associated predicted pathogenic pathways.
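The genome-wide significance cutoff used in the prioritization step is conventionally motivated as a Bonferroni correction of a 0.05 error rate over roughly one million independent common-variant tests. The sketch below reproduces that arithmetic and applies the cutoff to a toy result set. The variant identifiers and p-values are made up for illustration.

```python
import math


def bonferroni_threshold(alpha, n_tests):
    """Per-test significance level that keeps the family-wise error at alpha."""
    return alpha / n_tests


# Conventional assumption: about one million independent common variants.
GENOME_WIDE = bonferroni_threshold(0.05, 1_000_000)


def genome_wide_hits(pvalues_by_variant, threshold=GENOME_WIDE):
    """Return the variants whose p-value falls below the threshold, sorted."""
    return sorted(v for v, p in pvalues_by_variant.items() if p < threshold)


# Hypothetical association results (identifiers are placeholders).
toy_results = {
    "variant_demo_1": 3.2e-11,
    "variant_demo_2": 4.9e-8,
    "variant_demo_3": 6.0e-8,
    "variant_demo_4": 0.012,
}
hits = genome_wide_hits(toy_results)
print(GENOME_WIDE, hits)
```

Only loci passing this filter would be handed to the GNN. Relaxing the cutoff feeds the model more candidates but also more false associations, so any change to it should be stated alongside the ranked output.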

B. Proteomic Expression & Interaction Validation

- Sample Preparation: Isolate protein from relevant tissue or cell line models (knock-out/knock-in of candidate gene) using RIPA lysis buffer with protease/phosphatase inhibitors.

- Data-Independent Acquisition (DIA) Mass Spectrometry: Digest proteins with trypsin. Analyze peptides on a timsTOF Pro 2 with a 100-min gradient. Use a spectral library for DIA analysis.

- Interaction Proteomics: Perform affinity purification mass spectrometry (AP-MS) on tagged candidate protein. Use CRAPome to filter non-specific interactors.

- Validation: Confirm differential expression (adj. p-val < 0.01, fold change >1.5) and identify significantly enriched protein-protein interaction networks (STRING DB, Cytoscape).
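The validation criterion above relies on adjusted p-values. A minimal sketch of the Benjamini-Hochberg adjustment, one widely used choice, is shown here together with the combined significance and fold-change check. The source does not say which adjustment method is intended, so Benjamini-Hochberg is an assumption. Treating "fold change > 1.5" as symmetric (ratios above 1.5 or below 1/1.5) is also an assumption, and the function names are hypothetical.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def is_validated(adj_p, fold_change, p_cut=0.01, fc_cut=1.5):
    """Apply the protocol's cutoffs; fold_change is a ratio, not a log value.

    Assumption: down-regulation counts when the ratio is below 1 / fc_cut.
    """
    changed = fold_change > fc_cut or fold_change < 1.0 / fc_cut
    return adj_p < p_cut and changed


# Hypothetical raw p-values for five proteins.
raw = [0.001, 0.008, 0.039, 0.041, 0.9]
adj = benjamini_hochberg(raw)
print([round(a, 5) for a in adj])
```

In practice the adjustment is run over every quantified protein in the experiment, not just the candidates of interest, because the correction depends on the total number of tests.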

C. High-Throughput Virtual & Biochemical Screening

- Structure Preparation: Obtain candidate protein structure from AlphaFold DB or generate via homology modeling. Prepare with Schrödinger's Protein Preparation Wizard.

- AI-Driven Virtual Screen: Use a generative chemistry model (e.g., REINVENT) trained on binding affinity data to propose 50,000 novel compounds. Dock top 5,000 candidates using GLIDE HTVS/SP/XP workflow.

- In Vitro Confirmation: Procure the top 100 ranked compounds, or their closest purchasable analogs, from the Enamine REAL library. Run a biochemical activity assay (e.g., fluorescence polarization) at 10 µM concentration in triplicate.

- Hit Criteria: Compounds showing >50% inhibition/activity are considered primary hits for lead optimization.
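The hit criterion can be applied to triplicate readouts as sketched below. The normalization is an assumption: raw signal is scaled between an uninhibited control (0% inhibition) and a fully inhibited control (100%), which is a common convention but is not specified in the protocol. Compound names and signal values are hypothetical.

```python
from statistics import mean


def percent_inhibition(signal, neg_ctrl, pos_ctrl):
    """Scale a raw signal to percent inhibition.

    neg_ctrl: signal with no inhibitor (0% inhibition).
    pos_ctrl: signal with full inhibition (100% inhibition).
    """
    return 100.0 * (neg_ctrl - signal) / (neg_ctrl - pos_ctrl)


def call_hits(triplicates, neg_ctrl, pos_ctrl, cutoff=50.0):
    """Return {compound: mean % inhibition} for compounds above the cutoff."""
    hits = {}
    for compound, signals in triplicates.items():
        avg = mean(percent_inhibition(s, neg_ctrl, pos_ctrl) for s in signals)
        if avg > cutoff:
            hits[compound] = avg
    return hits


# Hypothetical fluorescence polarization readings (mP) at 10 uM.
plate = {
    "cmpd_A": [80.0, 90.0, 85.0],
    "cmpd_B": [170.0, 180.0, 175.0],
    "cmpd_C": [125.0, 125.0, 125.0],  # exactly 50%: not a hit under ">"
}
hits = call_hits(plate, neg_ctrl=200.0, pos_ctrl=50.0)
print(hits)
```

Averaging the three replicates before thresholding keeps a single outlier well from promoting a compound. Reporting the replicate spread next to the mean makes borderline calls such as the 50% case easier to review.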

Protocol for Multi-Omic Diagnostic Classifier Development

This protocol outlines the creation of an integrated diagnostic model from plasma samples.

- Multi-Modal Data Collection:

  - Cell-Free DNA (cfDNA) WGS: Extract cfDNA from 1 mL plasma (QIAseq cfDNA Kit). Prepare libraries (KAPA HyperPrep) and sequence to 0.1x coverage for copy number variation (CNV) and 30x for mutation detection.

  - Proteomic & Metabolomic Profiling: Deplete top 14 high-abundance plasma proteins (MARS-14 column). Analyze via Olink Explore 3072 panel (proteomics) and LC-MS/MS untargeted metabolomics (Sciex X500B QTOF).

- Data Processing & Feature Extraction:

  - Genomics: Call somatic variants (Mutect2), CNVs (ichorCNA), and fragmentome features (5' end motif analysis).

  - Proteomics/Metabolomics: Normalize protein concentrations (NPX) and metabolite intensities (Probabilistic Quotient Normalization). Perform log2 transformation.

- Model Training & Integration: Use a multimodal deep learning architecture (e.g., late-fusion neural network). Train separate encoders for each data type (1D CNN for genomics, fully connected for proteomics/metabolomics). Concatenate final latent representations for a joint classification layer (Softmax output). Perform 5-fold cross-validation.

- Validation: Test on a held-out cohort (n>200). Report AUC, sensitivity, specificity, and PPV at a pre-defined decision threshold.
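The validation metrics named above can be computed from held-out labels and classifier scores as in the sketch below. The AUC is calculated directly as the probability that a randomly chosen case scores higher than a randomly chosen control, which is fine for small cohorts but quadratic in cohort size. The labels, scores, and threshold are toy values.

```python
def confusion_counts(y_true, y_score, threshold):
    """Return (tp, fp, tn, fn) when scores >= threshold are called positive."""
    tp = fp = tn = fn = 0
    for label, score in zip(y_true, y_score):
        predicted = score >= threshold
        if predicted and label:
            tp += 1
        elif predicted and not label:
            fp += 1
        elif label:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def diagnostic_metrics(y_true, y_score, threshold):
    """Sensitivity, specificity, and PPV at a pre-defined threshold."""
    tp, fp, tn, fn = confusion_counts(y_true, y_score, threshold)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
    }


def roc_auc(y_true, y_score):
    """AUC as P(score of a case > score of a control); ties count as 0.5."""
    pos = [s for y, s in zip(y_true, y_score) if y]
    neg = [s for y, s in zip(y_true, y_score) if not y]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy held-out cohort: 4 cases (1) and 4 controls (0).
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_score = [0.9, 0.8, 0.6, 0.3, 0.7, 0.4, 0.2, 0.1]
metrics = diagnostic_metrics(y_true, y_score, threshold=0.5)
auc = roc_auc(y_true, y_score)
print(metrics, auc)
```

PPV depends on disease prevalence in the test cohort, so a value measured on a balanced held-out set will overstate what a screening population would see. Sensitivity and specificity do not have that dependence.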

Visualization of Core Workflows & Pathways

Title: Convergent AI-Driven Pipeline for Diagnostics & Discovery

Title: Oncogenic GPCR Signaling & Drug Intervention Points

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents & Kits for Integrated Multi-Omic Research

| Item Name (Example) | Category | Function in Workflow | Key Synergy Area |
|---|---|---|---|
| QIAseq cfDNA All-in-One Kit | Nucleic Acid Extraction | Isolation of high-quality cell-free DNA from liquid biopsies for genomic analysis. | Genomics, Diagnostics |
| Cytiva HisTrap HP Column | Protein Purification | Immobilized metal affinity chromatography (IMAC) for purification of recombinant, tagged target proteins. | Proteomics, Drug Discovery |
| Olink Explore 3072 | Proteomics | Proximity extension assay (PEA) technology for simultaneous, high-specificity measurement of 3072 proteins. | Proteomics, Diagnostics |
| Enamine REAL Diversity Library | Compound Screening | Chemically diverse, synthesis-ready compound collection for high-throughput and virtual screening campaigns. | Drug Discovery |
| 10x Genomics Chromium Single Cell Multiome ATAC + Gene Exp. | Single-Cell Analysis | Simultaneous profiling of gene expression and chromatin accessibility in the same single cell. | Genomics, Proteomics* |
| CellTiter-Glo 3D Cell Viability Assay | Cell-Based Assay | Luminescent measurement of cell viability, optimized for 3D spheroids and organoids. | Drug Discovery |
| CRISPR-Cas9 Edit-R Synthetic sgRNA | Genome Editing | High-fidelity, pre-designed sgRNA for precise knockout/knock-in to validate genomic targets. | Genomics, Drug Discovery |
| Seahorse XF Cell Mito Stress Test Kit | Metabolic Assay | Real-time measurement of mitochondrial function (OCR, ECAR) in live cells. | Diagnostics, Drug Discovery |

*Note: The Multiome kit captures chromatin accessibility (epigenomics) and mRNA, linking genomic regulation to phenotype.

The convergence of artificial intelligence (AI) and biotechnology is predicated on the systematic digitization and computational analysis of fundamental biological and clinical data types. This whitepaper posits that the effective integration and modeling of four core data classes—Genomic Sequences, Protein Structures, Clinical Trial Data, and Real-World Evidence (RWE)—form the essential substrate for AI-driven discovery and development. Mastery over these data types, their unique ontologies, and their interrelationships is the critical path to accelerating target identification, therapeutic design, and evidence generation in modern biopharma.

Genomic Sequences

Genomic sequences represent the primary digital code of biology. In AI-biotech convergence, they are the input layer for predicting disease susceptibility, identifying novel targets, and stratifying patient populations.

Key Quantitative Metrics & Data Standards

Table 1: Core Genomic Sequencing Metrics & File Formats

| Metric/Format | Description | Typical Scale/Size |
|---|---|---|
| Coverage Depth | Number of times a nucleotide is read during sequencing. | 30x-100x for WGS; 100x-500x for targeted panels. |
| Read Length | Number of base pairs in a single sequencing read. | Short-read: 75-300 bp; Long-read (PacBio/Nanopore): 10-100 kb+. |
| Variant Call Format (VCF) | Standard text file format for storing gene sequence variations. | ~50-500 GB for a population-scale project. |
| FASTQ | Text-based format storing raw sequence data and quality scores. | ~90-150 GB per 30x human whole genome. |
| BAM/SAM | Alignment formats for mapped reads: SAM is plain text, BAM is its compressed binary equivalent. | ~60-120 GB per 30x human whole genome (BAM). |
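Coverage depth in the table follows from a simple relation: mean coverage equals the number of reads times the read length divided by the genome size. The sketch below uses that relation to estimate how many 2x150 bp read pairs a 30x human genome needs. The genome size of 3.1 Gb is an approximation, and the calculation ignores duplicates, unmapped reads, and uneven coverage.

```python
import math

# Approximate haploid human genome size in base pairs (assumption).
HUMAN_GENOME_BP = 3_100_000_000


def mean_coverage(n_reads, read_length_bp, genome_size_bp):
    """Mean depth = total sequenced bases / genome size."""
    return n_reads * read_length_bp / genome_size_bp


def read_pairs_needed(target_coverage, read_length_bp, genome_size_bp):
    """Paired-end read pairs required to reach a target mean depth."""
    reads = target_coverage * genome_size_bp / read_length_bp
    return math.ceil(reads / 2)


pairs_30x = read_pairs_needed(30, 150, HUMAN_GENOME_BP)
depth_check = mean_coverage(2 * pairs_30x, 150, HUMAN_GENOME_BP)
print(pairs_30x, depth_check)
```

Under these idealized assumptions, about 310 million read pairs are needed. Real runs are usually loaded for more than this to absorb duplicate and low-quality reads.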

Experimental Protocol: Whole Genome Sequencing (WGS) for AI Training Datasets

Objective: Generate high-coverage, high-quality WGS data from patient cohorts for AI model training in variant discovery and association studies.

Methodology:

- Sample Prep & Library Construction: Extract high-molecular-weight DNA from blood or tissue. Fragment DNA, ligate adapters, and amplify using PCR.

- Sequencing: Load library onto Illumina NovaSeq or comparable platform. Perform paired-end sequencing (2x150 bp) to achieve a minimum of 30x mean coverage.

- Primary Analysis (Base Calling): Use onboard software (e.g., Illumina DRAGEN) to convert raw image data to FASTQ files, assigning quality scores (Q-scores) per base.

- Secondary Analysis (Bioinformatics Pipeline):

  - Read Alignment: Map FASTQ reads to a reference genome (GRCh38) using BWA-MEM or a similar aligner. Output SAM/BAM.

  - Variant Calling: Process BAM files for variant discovery. Use GATK HaplotypeCaller for germline SNVs/indels. Apply hard filters, flagging SNVs with QD < 2.0, FS > 60.0, or MQ < 40.0.

  - Annotation: Annotate the VCF with functional consequences using SnpEff or Ensembl VEP, integrating dbSNP identifiers and gnomAD allele frequencies.
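The hard-filter thresholds in the variant calling step operate on annotations stored in the VCF INFO column. The sketch below shows the logic on raw INFO strings. It is an illustration of the rule, not a replacement for GATK's own filtering tool, and the filter labels and example records are hypothetical.

```python
def parse_info(info_field):
    """Split a VCF INFO string such as 'DP=35;QD=25.1' into a dict."""
    parsed = {}
    for item in info_field.split(";"):
        if "=" in item:
            key, value = item.split("=", 1)
            parsed[key] = value
    return parsed


def hard_filter_reasons(info_field):
    """Return the list of hard filters a variant fails (empty means pass).

    Thresholds follow the protocol text: QD < 2.0, FS > 60.0, MQ < 40.0.
    Missing annotations are skipped rather than treated as failures.
    """
    info = parse_info(info_field)
    reasons = []
    if "QD" in info and float(info["QD"]) < 2.0:
        reasons.append("QD2")
    if "FS" in info and float(info["FS"]) > 60.0:
        reasons.append("FS60")
    if "MQ" in info and float(info["MQ"]) < 40.0:
        reasons.append("MQ40")
    return reasons


# Hypothetical INFO columns from two variant records.
good = hard_filter_reasons("DP=35;QD=25.1;FS=1.2;MQ=60.0")
bad = hard_filter_reasons("DP=12;QD=1.4;FS=72.5;MQ=35.0")
print(good, bad)
```

Flagging failed variants in the FILTER column, rather than deleting them, keeps the call set auditable and lets downstream AI training pipelines choose their own stringency.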

Visualization: WGS Data Generation & Analysis Workflow

Diagram Title: Whole Genome Sequencing Data Generation Pipeline

The Scientist's Toolkit: Genomic Sequencing Reagents

Table 2: Key Reagents for High-Throughput Genomic Sequencing

| Reagent / Kit | Vendor Examples | Function |
|---|---|---|
| DNA Fragmentation (acoustic or enzymatic) | Covaris focused-ultrasonicator, NEBNext dsDNA Fragmentase | Creates uniformly sized DNA fragments for library construction. |
| Library Prep Kit | Illumina DNA Prep, KAPA HyperPrep | End-repair, A-tailing, adapter ligation, and PCR amplification of libraries. |
| Unique Dual Indexes (UDIs) | IDT for Illumina UD Indexes | Barcodes individual samples, enabling multiplexing and allowing index-hopped reads to be identified and discarded. |
| Polymerase | Illumina NovaSeq XP, Q5 High-Fidelity DNA Polymerase | Amplifies library fragments with high fidelity during cluster generation and sequencing. |
| Flow Cell | Illumina S1/S2/S4 Flow Cell | Solid-phase surface where cluster amplification and sequencing occur. |

Protein Structures

Protein structural data provides the 3D atomic-level context for understanding function, mechanism, and interaction sites, enabling AI-driven rational drug design.

Table 3: Core Protein Structural Data Metrics & Databases

| Metric/Database | Description | Typical Scale/Resolution |
|---|---|---|
| Resolution | Clarity of detail in an electron density map (Ångstroms). | X-ray: <2.0 Å (High), 2.0-3.0 Å (Medium); Cryo-EM: 1.8-4.0 Å. |
| Protein Data Bank (PDB) | Primary global archive for 3D structural data of proteins/nucleic acids. | >200,000 entries (as of 2024). |
| AlphaFold DB | AI-predicted structure database by DeepMind/EMBL-EBI. | >200 million predicted structures. |
| PDBx/mmCIF | Modern standard file format for PDB entries, superseding legacy PDB. | Single file contains coordinates, metadata, and experiment details. |

Experimental Protocol: Determining a Protein-Ligand Complex via X-Ray Crystallography

Objective: Solve the high-resolution 3D structure of a target protein bound to a small-molecule inhibitor for structure-based drug design.

Methodology:

- Protein Expression & Purification: Express recombinant protein with affinity tag (e.g., His-tag) in HEK293 or insect cells. Purify via affinity, ion-exchange, and size-exclusion chromatography (SEC). Assess purity (>95%) by SDS-PAGE.

- Crystallization: Mix purified protein (10-20 mg/mL) with ligand at a 5:1 ligand:protein molar ratio. Use sitting-drop vapor diffusion in 96-well plates. Screen commercial sparse-matrix screens (e.g., Hampton Research). Optimize hit conditions.

- Cryo-Protection & Harvesting: Soak crystal in mother liquor containing 20-25% cryoprotectant (e.g., glycerol). Flash-cool in liquid nitrogen.

- Data Collection: Mount crystal on synchrotron beamline. Collect diffraction dataset (180-360 images, 0.5-1° oscillation). Aim for resolution <2.5 Å.

- Structure Solution:

  - Processing: Index, integrate, and scale images with XDS or autoPROC.

  - Phasing: Perform molecular replacement using a homologous structure (Phaser).

  - Model Building & Refinement: Iteratively build the model in Coot and refine with phenix.refine, monitoring R-work and R-free to guard against overfitting.
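R-work and R-free, the quantities tracked during refinement, share one definition: the summed absolute difference between observed and calculated structure-factor amplitudes, divided by the summed observed amplitudes. They differ only in which reflections are included. The sketch below is a simplified illustration that assumes the amplitudes are already on a common scale (refinement programs fit a scale factor first). The amplitudes and free-set flags are toy values.

```python
def r_factor(f_obs, f_calc):
    """R = sum(| |Fobs| - |Fcalc| |) / sum(|Fobs|)."""
    numerator = sum(abs(abs(o) - abs(c)) for o, c in zip(f_obs, f_calc))
    denominator = sum(abs(o) for o in f_obs)
    return numerator / denominator


def r_work_and_free(f_obs, f_calc, free_flags):
    """Compute R-work on refined reflections and R-free on the held-out set."""
    work = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if not free]
    free = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if free]
    return r_factor(*zip(*work)), r_factor(*zip(*free))


# Toy amplitudes; the last reflection is flagged as part of the free set.
f_obs = [100.0, 80.0, 60.0, 40.0, 20.0]
f_calc = [90.0, 85.0, 60.0, 44.0, 25.0]
flags = [False, False, False, False, True]
r_work, r_free = r_work_and_free(f_obs, f_calc, flags)
print(round(r_work, 4), round(r_free, 4))
```

Because the free reflections are never used to adjust the model, a widening gap between R-free and R-work is the usual warning sign that refinement is fitting noise.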

Visualization: Protein Crystallography Workflow

Diagram Title: Protein-Ligand Complex Structure Determination

The Scientist's Toolkit: Protein Structural Biology Reagents

Table 4: Essential Reagents for Protein Structure Determination

| Reagent / Kit | Vendor Examples | Function |
|---|---|---|
| Expression Vector | pcDNA3.4, pFastBac | Plasmid for high-yield recombinant protein expression in mammalian/insect cells. |
| Affinity Purification Resin | Ni-NTA Agarose, Anti-FLAG M2 Affinity Gel | Captures tagged protein from cell lysate with high specificity. |
| Size-Exclusion Chromatography (SEC) Column | Superdex 200 Increase, ENrich SEC | Final polishing step to isolate monodisperse, homogeneous protein. |
| Crystallization Screen Kits | Hampton Research Index, JCSG Core | Pre-formulated solutions to identify initial crystallization conditions. |
| Cryoprotectant | Glycerol, Ethylene Glycol | Prevents ice crystal formation during flash-cooling for data collection. |

Clinical Trial Data

Clinical trial data is the cornerstone of regulatory decision-making, providing controlled, longitudinal evidence of a therapy's safety and efficacy.

Key Quantitative Metrics & Standards

Table 5: Core Clinical Trial Data Standards & Scales

| Standard/Scale | Description | Application |

|---|---|---|

| Clinical Data Interchange Standards Consortium (CDISC) | Global standards for clinical data (SDTM, ADaM). | Required for FDA and PMDA submissions; accepted by EMA. |

| Standardized MedDRA Queries (SMQs) | Groupings of MedDRA terms for adverse event monitoring. | Systematic safety analysis. |

| RECIST 1.1 | Standard for measuring tumor response in solid tumor trials. | Primary efficacy endpoint in oncology. |

| Sample Size | Number of participants needed for statistical power. | Phase 3: Hundreds to thousands. |

Experimental Protocol: Designing a Phase III Randomized Controlled Trial (RCT)

Objective: Compare the efficacy and safety of a novel investigational drug versus standard of care in a defined patient population.

Methodology:

- Protocol & Endpoints: Define primary efficacy endpoint (e.g., Progression-Free Survival), key secondary endpoints (Overall Response Rate, Quality of Life), and safety outcomes.

- Randomization & Blinding: Use interactive web response system (IWRS) to randomize patients 1:1 to treatment arms. Implement double-blinding (patient, investigator).

- Data Collection: Capture data via electronic data capture (EDC) systems. Forms include demographics, medical history, concomitant medications, lab results, efficacy assessments per schedule.

- Monitoring & Management: Conduct regular site monitoring visits. Hold blinded interim analyses by independent Data Monitoring Committee (DMC) for safety.

- Statistical Analysis Plan (SAP): Pre-specify all analyses. For primary endpoint, use Kaplan-Meier method and log-rank test. Analyze safety in treated population.
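The SAP step above names the Kaplan-Meier method and the log-rank test for the primary endpoint. The sketch below shows both calculations in plain Python on toy data; the function names and example values are assumptions for illustration, and a validated SAS or R implementation would be used in an actual trial.

```python
def kaplan_meier(times, events):
    """Return [(t, S(t))] at each distinct time with at least one event.

    times:  follow-up duration per patient
    events: 1 if the event (e.g., progression) was observed, 0 if censored
    """
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        n_events = 0
        n_leaving = 0
        # Group all patients who share this follow-up time.
        while i < len(data) and data[i][0] == t:
            n_events += data[i][1]
            n_leaving += 1
            i += 1
        if n_events:
            survival *= 1 - n_events / n_at_risk
            curve.append((t, survival))
        n_at_risk -= n_leaving
    return curve


def logrank_chi2(times_a, events_a, times_b, events_b):
    """Two-arm log-rank statistic (chi-square, 1 degree of freedom)."""
    event_times = sorted(
        {t for t, e in zip(times_a, events_a) if e}
        | {t for t, e in zip(times_b, events_b) if e}
    )
    observed_minus_expected = 0.0
    variance = 0.0
    for t in event_times:
        n_a = sum(1 for x in times_a if x >= t)  # at risk in arm A
        n_b = sum(1 for x in times_b if x >= t)  # at risk in arm B
        d_a = sum(1 for x, e in zip(times_a, events_a) if x == t and e)
        d_b = sum(1 for x, e in zip(times_b, events_b) if x == t and e)
        n, d = n_a + n_b, d_a + d_b
        observed_minus_expected += d_a - d * n_a / n
        if n > 1:
            variance += d * (n_a / n) * (n_b / n) * (n - d) / (n - 1)
    if variance == 0:
        raise ValueError("no informative event times")
    return observed_minus_expected ** 2 / variance


# Toy data: months of follow-up and event indicators (hypothetical).
curve = kaplan_meier([2, 3, 3, 5, 8], [1, 1, 0, 1, 0])
for t, s in curve:
    print(f"t = {t}: S(t) = {s:.2f}")
```

The chi-square value is compared against a chi-square distribution with one degree of freedom to obtain the p-value; that lookup is left to a statistics library.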

Visualization: Phase III RCT Data Flow & Analysis

Diagram Title: Phase III Clinical Trial Data Pipeline

The Scientist's Toolkit: Clinical Trial Execution Essentials

Table 6: Key Solutions for Clinical Trial Data Management

| Solution / System | Vendor Examples | Function |

|---|---|---|

| Electronic Data Capture (EDC) | Medidata Rave, Oracle Clinical | Centralized platform for electronic case report form (eCRF) data entry and management. |

| Interactive Web Response System (IWRS) | endpoint Clinical, YPrime | Manages patient randomization and drug supply inventory across trial sites. |

| Clinical Trial Management System (CTMS) | Veeva Vault CTMS, Medidata CTMS | Tracks operational aspects: site management, monitoring visits, documents. |

| Medical Dictionary (MedDRA) | MSSO MedDRA | Standardized medical terminology for coding adverse events and medications. |

| Statistical Analysis Software | SAS, R | Validated environment for executing the Statistical Analysis Plan (SAP). |

Real-World Evidence (RWE)

RWE is clinical evidence derived from analysis of Real-World Data (RWD) on patient health status and care delivery outside of traditional RCTs.

Table 7: Core RWE Data Sources & Study Types

| Source / Study Type | Description | Common Scale/Use Case |

|---|---|---|

| Electronic Health Records (EHR) | Digital patient records from hospitals/clinics. | Longitudinal data for outcomes research, patient journey mapping. |

| Claims & Billing Data | Data from insurance providers (e.g., Medicare). | Large populations for epidemiology, treatment patterns, healthcare utilization. |

| Registries | Disease-specific, prospective observational studies. | Long-term safety and effectiveness in defined populations. |

| External Control Arm (ECA) | RWD-derived control group for single-arm trials. | Provides historical/comparative context for new therapies. |

Experimental Protocol: Generating RWE via an EHR-Based Retrospective Cohort Study

Objective: Compare the time to next treatment (TTNT) for two different oncology regimens in a metastatic cancer population using de-identified EHR data.

Methodology:

- Data Extraction & Linkage: Extract structured data (diagnoses [ICD-10], drugs [RxNorm], labs [LOINC]) from EHR systems (e.g., Epic, Cerner). Link via de-identified patient token.

- Cohort Definition: Define index date (first prescription of Regimen A or B). Apply inclusion/exclusion criteria (metastatic diagnosis, ≥18 years, no prior line). Use propensity score matching (PSM) to balance cohorts on age, sex, comorbidities.

- Outcome & Variable Definition: Primary outcome: TTNT, defined as days from index to start of subsequent systemic therapy or death. Censor at last known encounter.

- Data Curation & Transformation: Curate extracted data to OMOP Common Data Model. Handle missing data via multiple imputation if applicable.

- Statistical Analysis: Perform Kaplan-Meier analysis for TTNT. Use Cox proportional hazards model, adjusted for residual confounders post-PSM, to generate hazard ratio (HR) with 95% confidence interval.
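The cohort-definition step above relies on propensity score matching. As a minimal sketch, the following performs greedy 1:1 nearest-neighbor matching with a caliper. It assumes the propensity scores have already been estimated (for example, by a logistic regression of regimen on age, sex, and comorbidities); the patient identifiers, scores, and the 0.05 caliper are hypothetical.

```python
def match_nearest(ps_treated, ps_control, caliper=0.05):
    """Greedy 1:1 nearest-neighbor matching without replacement.

    ps_treated, ps_control: dicts mapping patient id -> propensity score.
    A treated patient stays unmatched if no remaining control lies
    within `caliper` of its score.
    """
    available = dict(ps_control)
    pairs = []
    # Process treated patients in order of increasing score.
    for treated_id, score in sorted(ps_treated.items(), key=lambda kv: kv[1]):
        if not available:
            break
        control_id = min(available, key=lambda c: abs(available[c] - score))
        if abs(available[control_id] - score) <= caliper:
            pairs.append((treated_id, control_id))
            del available[control_id]  # each control is used at most once
    return pairs


# Hypothetical propensity scores for Regimen A (treated) and Regimen B (control).
regimen_a = {"A1": 0.30, "A2": 0.62, "A3": 0.90}
regimen_b = {"B1": 0.28, "B2": 0.60, "B3": 0.35, "B4": 0.50}
pairs = match_nearest(regimen_a, regimen_b)
print(pairs)
```

Here patient A3 is left unmatched because no control falls within the caliper. After matching, covariate balance is usually checked (for instance with standardized mean differences) before the Kaplan-Meier and Cox analyses are run on the matched cohort.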

Visualization: RWE Generation from EHR Data

Diagram Title: Real-World Evidence Generation Pipeline

The Scientist's Toolkit: RWE Analytics Essentials

Table 8: Key Tools for Real-World Data Analysis

| Tool / Model | Platform Examples | Function |

|---|---|---|

| Observational Medical Outcomes Partnership (OMOP) CDM | OHDSI ATLAS, Google Health OMOP | Common data model standardizing disparate RWD sources for large-scale analytics. |

| De-Identification Engine | Privacy Analytics RISK, Microsoft Presidio | Scrubs protected health information (PHI) from datasets to enable research. |

| Propensity Score Matching (PSM) Algorithm | R MatchIt, Python scikit-learn | Reduces confounding in observational studies by creating balanced cohorts. |

| Terminology Mappers | UMLS Metathesaurus, OHDSI Usagi | Maps local codes (ICD-10) to standard vocabularies within a CDM. |

| Federated Analysis Network | TriNetX, Flatiron Health Research Network | Enables distributed querying and analysis across multiple RWD partners without data movement. |

Synthesis: The Converged AI-Biotech Data Architecture

The thesis of AI-biotech convergence is operationalized through an integrated data architecture where these four data types interact. Genomic and protein structural data feed AI models for in silico target discovery and drug design. The resulting candidates are tested in trials, generating clinical data. RWE then extends and contextualizes trial findings in broader populations. AI models are trained and refined across this entire continuum, creating a closed-loop system for accelerated innovation. Mastery of these essential data types—their generation, standards, and integration—is the foundational competence for the next era of biotechnology.

This whitepaper, framed within a broader thesis on AI and biotechnology convergence, provides an in-depth technical analysis of the key organizations advancing AI-driven drug discovery and development. The integration of machine learning, computational biology, and high-throughput experimentation is reshaping traditional R&D pipelines, demanding a new understanding of the collaborative and competitive landscape among established pharmaceutical corporations, agile biotech startups, and foundational technology providers.

The following tables summarize the current investment, partnership, and pipeline scope of major players, based on recent data.

Table 1: Leading Pharmaceutical Companies: AI Initiatives & Key Partnerships (2023-2024)

| Company | AI R&D Investment (Est.) | Primary AI Focus Area | Key AI Partner(s) | Notable Pipeline Asset (Phase) |

|---|---|---|---|---|

| Pfizer | $200-250M annually | Target ID, Clinical Trial Optimization | CytoReason, Tempus | Immunology programs (Preclinical) |

| Merck & Co. | $300M+ annually | Drug Design, Biomarker Discovery | Absci, Iktos | Oncology candidate (Phase I) |

| Novartis | $150-200M annually | Generative Chemistry, Imaging Analytics | Microsoft, BenevolentAI | Heart failure drug (Phase II) |

| AstraZeneca | ~$180M annually | Genomics, Precision Medicine | Illumina, BenevolentAI | Chronic kidney disease (Phase II) |

| Johnson & Johnson | $250M+ annually | Compound Screening, Disease Subtyping | Janssen AI Labs, Atomwise | Alzheimer's biomarker program (Discovery) |

Table 2: Select Publicly Traded AI-Native Biotech Startups

| Company (Ticker) | Market Cap (Approx.) | Core Technology Platform | Lead Therapeutic Area | Key Pharma Collaborator |

|---|---|---|---|---|

| Recursion (RXRX) | ~$2.1B | Phenotypic Screening with CNN | Fibrosis, Oncology | Bayer, Roche/Genentech |

| Exscientia (EXAI) | ~$600M | Centaur Chemist AI Design | Immunology, Oncology | Sanofi, Bristol-Myers Squibb |

| Schrödinger (SDGR) | ~$1.8B | Physics-Based & ML Computational Platform | Oncology, Immunology | Bayer, Takeda |

| AbCellera (ABCL) | ~$1.5B | AI-Powered Antibody Discovery | Immunology, Infectious Disease | Lilly, Novartis |

| Relay Therapeutics (RLAY) | ~$1.9B | Computational Allostery, Dynamics | Oncology | Roche/Genentech |

Table 3: Technology Giants: Cloud & AI Platforms for Life Sciences

| Company | Primary Service Offering | Key Life Sciences Tool/Platform | Example Pharma Client Use Case |

|---|---|---|---|

| Google/Alphabet | AI Algorithms, Cloud, Quantum | AlphaFold, Vertex AI, Terra | Pfizer: utilizing AlphaFold for target structure prediction. |

| Microsoft | Cloud, ML, Quantum | Azure Quantum Elements, Azure Health | Novartis: AI-powered drug design collaboration. |

| Amazon Web Services | Cloud HPC, ML Services | AWS HealthOmics, SageMaker | Moderna: scaling mRNA sequence design & analysis. |

| NVIDIA | Hardware, AI Software | Clara Discovery, BioNeMo, DGX Cloud | Recursion: powering phenotypic image analysis. |

| IBM | Hybrid Cloud, Quantum | watsonx, IBM Quantum | Cleveland Clinic: jointly running Discovery Accelerator. |

Technical Deep Dive: An AI-Enhanced Drug Discovery Workflow

A representative experimental protocol integrating technologies from across the ecosystem is detailed below.

Experimental Protocol: AI-Guided Hit Identification and Optimization

Objective: To identify and optimize a novel small-molecule inhibitor for a defined protein target using a closed-loop, AI-driven design-make-test-analyze (DMTA) cycle.

Methodology:

Phase 1: In-silico Library Design & Virtual Screening

- Target Preparation: Obtain a 3D structure of the target protein (experimental from PDB or predicted via AlphaFold2). Prepare the structure using molecular modeling software (e.g., Schrödinger's Protein Preparation Wizard) for proper protonation states and missing loop modeling.

- Generative Library Design: Use a generative chemical AI model (e.g., Exscientia's Centaur Chemist, Iktos' Makya) to propose novel compounds. The model is conditioned on:

- Known active ligands (from public ChEMBL data or internal assays).

- Calculated molecular descriptors (QED, SAscore).

- In-silico docking scores against the prepared target (using Glide, AutoDock Vina).

- Multi-Parameter Optimization (MPO): A scoring function ranks generated molecules based on a weighted sum of predicted properties: potency (docking score), synthetic accessibility (SAscore), predicted ADMET (from a model like AstraZeneca's AZOrange), and novelty (distance in chemical space from known actives).

- Compound Selection: The top 200-500 ranked virtual compounds are selected for synthesis.
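The multi-parameter optimization step above ranks molecules by a weighted sum of predicted properties. A minimal sketch of that scoring follows. It assumes each property has already been rescaled to the range 0 to 1 with higher values being better (so raw docking scores and SAscore would need to be inverted and normalized first); the weights, property names, and compound records are hypothetical.

```python
# Hypothetical weights; in practice these are tuned per project.
WEIGHTS = {
    "potency": 0.4,           # e.g., normalized docking score
    "synthesizability": 0.2,  # e.g., inverted, normalized SAscore
    "admet": 0.3,             # e.g., output of an ADMET model
    "novelty": 0.1,           # e.g., distance from known actives
}


def mpo_score(props, weights=WEIGHTS):
    """Weighted sum of normalized properties (each assumed to lie in [0, 1])."""
    return sum(weight * props[name] for name, weight in weights.items())


def rank_compounds(candidates, top_n):
    """Return the top_n candidates sorted by descending MPO score."""
    ranked = sorted(candidates, key=lambda c: mpo_score(c["props"]), reverse=True)
    return ranked[:top_n]


candidates = [
    {"id": "cpd-1", "props": {"potency": 0.9, "synthesizability": 0.5, "admet": 0.6, "novelty": 0.2}},
    {"id": "cpd-2", "props": {"potency": 0.6, "synthesizability": 0.9, "admet": 0.8, "novelty": 0.7}},
    {"id": "cpd-3", "props": {"potency": 0.3, "synthesizability": 0.4, "admet": 0.5, "novelty": 0.9}},
]
selected = rank_compounds(candidates, top_n=2)
print([c["id"] for c in selected])
```

A weighted sum lets a strong property compensate for a weak one. Projects that need hard limits (for example, a minimum ADMET score) typically filter on those limits before ranking.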

Phase 2: Synthesis & Biological Testing (The Experimental "Make-Test" Loop)

- Automated Synthesis: Selected compounds are synthesized using automated, high-throughput platforms (e.g., flow chemistry systems from Merck Millipore or Chemspeed).

- Primary Biochemical Assay: Purified compounds are tested in a target inhibition assay (e.g., a time-resolved fluorescence resonance energy transfer (TR-FRET) assay).

- Reagent Solutions:

- Recombinant Target Protein: Purified, tagged protein expressed in HEK293 or Sf9 cells.

- TR-FRET Substrate Pair: Europium (Eu)-cryptate-labeled antibody (donor) and d2-labeled substrate (acceptor).

- Assay Buffer: Optimized pH and ionic strength buffer (e.g., HEPES, NaCl, MgCl2, BSA).

- Positive/Negative Controls: Known high-potency inhibitor and DMSO-only wells.

- Protocol: In a 384-well plate, combine 2nL of compound (via acoustic dispensing), 5µL of target protein, and 5µL of substrate mix. Incubate for 60 min at RT. Read on a plate reader (e.g., PerkinElmer EnVision) using 340nm excitation, 615nm (Eu) and 665nm (d2) emission. Calculate inhibition % and IC50 via dose-response curves.

- Cellular Phenotypic Assay: Compounds with IC50 < 1µM progress to a cell-based assay (e.g., an oncology cell line viability assay using CellTiter-Glo (CTG)).

- Protocol: Seed cells in 1536-well plates. Dose compounds via pintool transfer. Incubate for 72-96h. Add CellTiter-Glo reagent, incubate 10 min, measure luminescence. Determine cell viability %.
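The biochemical protocol above ends with calculating percent inhibition and IC50 from the 665 nm and 615 nm readings. The sketch below illustrates that arithmetic under simplifying assumptions: DMSO-only wells define 0% inhibition, the known inhibitor defines 100%, and the IC50 is estimated by log-linear interpolation between the two concentrations that bracket 50%. Production analyses usually fit a four-parameter logistic curve instead; the function names and data are hypothetical.

```python
import math


def fret_ratio(emission_665, emission_615):
    """Acceptor/donor emission ratio, which corrects for well-to-well variation."""
    return emission_665 / emission_615


def percent_inhibition(sample_ratio, dmso_ratio, inhibitor_ratio):
    """Scale a sample ratio between the 0% (DMSO) and 100% (inhibitor) controls."""
    return 100.0 * (dmso_ratio - sample_ratio) / (dmso_ratio - inhibitor_ratio)


def ic50_by_interpolation(concentrations, inhibitions):
    """Estimate IC50 by interpolating on log10(concentration).

    concentrations must be ascending and positive. Returns None if the
    dose-response data never cross 50% inhibition.
    """
    points = list(zip(concentrations, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(points, points[1:]):
        if i_lo <= 50.0 <= i_hi and i_hi > i_lo:
            fraction = (50.0 - i_lo) / (i_hi - i_lo)
            log_ic50 = math.log10(c_lo) + fraction * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical dose-response data: concentrations in µM, inhibition in %.
concentrations_um = [0.01, 0.1, 1.0, 10.0]
inhibitions = [5.0, 20.0, 80.0, 95.0]
ic50 = ic50_by_interpolation(concentrations_um, inhibitions)
print(f"Estimated IC50: {ic50:.3f} µM")
```

With these toy values the estimate falls below the 1 µM cutoff, so the compound would progress to the cellular assay.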

Phase 3: Data Analysis & Model Retraining (The "Analyze" Step)

- Data Aggregation: Biochemical IC50, cellular EC50, and compound structural data (SMILES) are aggregated into a centralized data lake (e.g., on AWS S3 or Google Cloud Storage).

- Model Retraining: The generative AI model from Phase 1 is retrained/fine-tuned on the new experimental data using a transfer learning approach. This creates an updated model that has "learned" from the last design cycle.

- Next-Generation Design: The retrained model generates a new set of proposed compounds, ideally with improved predicted potency and cellular activity, initiating the next DMTA cycle. The process iterates until a lead series with desired in-vitro and early in-vivo PK/PD profiles is identified.

Visualizing the Ecosystem & Workflow

Diagram 1: AI Drug Discovery Ecosystem Map

Diagram 2: Closed-Loop AI-Driven DMTA Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Reagents for AI-Validated Biochemical & Cellular Assays

| Item | Function in Protocol | Example Vendor/Product |

|---|---|---|

| Tagged Recombinant Protein | The purified target for biochemical assays; tags enable immobilization or detection. | Sino Biological, Thermo Fisher Gibco. |

| TR-FRET Assay Kits | Homogeneous, high-sensitivity assay format for quantifying enzymatic activity or binding. | Cisbio, PerkinElmer. |

| CellTiter-Glo 3D | Luminescent assay for quantifying viable cells in 2D or 3D cultures post-treatment. | Promega. |

| Acoustic Dispensing-Compatible Plates | Low-volume, high-density microplates for non-contact compound addition. | Labcyte Echo-qualified plates. |

| DMSO-Compatible Compound Libraries | Pre-formatted, solubilized small molecules for high-throughput screening. | Enamine, Merck Sigma-Aldrich LOPAC. |

| Cloud-Based ELN/LIMS | Electronic Lab Notebook and Laboratory Information Management System for structured data capture. | Benchling, IDBS. |

The convergence of AI and biotechnology is being driven by a synergistic ecosystem where tech giants provide the foundational compute and algorithms, AI-native biotechs innovate on rapid iterative design, and large pharmaceutical companies contribute deep biological expertise, scaled development capabilities, and routes to commercialization. The technical workflow outlined here, a closed-loop, data-hungry DMTA cycle, is becoming the new standard, demanding robust experimental protocols and seamless data integration. Success in this field will depend on strategic navigation of this complex and collaborative landscape.

Building the Future: Key AI Methodologies and Their Transformative Biotech Applications

The convergence of artificial intelligence and biotechnology represents a paradigm shift in molecular science. This whitepaper, framed within a broader thesis on this convergence, details how generative AI models are transitioning from predictive tools to creative engines for de novo molecular design. Technologies like AlphaFold3 and diffusion models are no longer merely analyzing biological data; they are synthesizing novel, functional molecular constructs, thereby accelerating drug discovery and protein engineering from years to months.

Foundational Technologies & Quantitative Benchmarks

Protein Structure Prediction & Generation: AlphaFold Evolution

AlphaFold3, released by Google DeepMind and Isomorphic Labs in May 2024, generalizes beyond monomeric protein folding to a unified predictive and generative platform for biomolecular complexes.

Table 1: Performance Benchmark of AlphaFold Versions & Contemporaries

| Model (Release Year) | Scope | Average TM-score (vs. Experimental) | Key Capability | Experimental Validation (RMSD Å) |

|---|---|---|---|---|

| AlphaFold2 (2020) | Protein monomers | ~0.88 (CASP14) | Static structure prediction | 1.0-1.5 |

| RoseTTAFold2 (2023) | Proteins, complexes | ~0.86 | Protein-protein complexes | 1.5-2.5 |

| AlphaFold3 (2024) | Proteins, DNA, RNA, ligands, PTMs | >0.7 on complexes | Generative design of complexes | < 2.0 on ligands |

| RFdiffusion (2023) | De novo protein design | N/A (design metric) | Generates novel protein backbones | High success in in vitro folding |

Experimental Protocol for AlphaFold3 Validation:

- Input Preparation: Assemble sequences (protein, DNA, RNA) and ligand SMILES strings.

- Model Inference: Run the AlphaFold3 server or local implementation with default multiple sequence alignment (MSA) and structural template searches disabled for ab initio mode.

- Output Generation: The model outputs a predicted atomic point cloud with per-residue and per-atom confidence metrics (pLDDT, pTM, ipTM).

- Experimental Ground-Truth Comparison: The predicted structure is aligned to an experimentally solved structure (e.g., via X-ray crystallography) using CEAlign or TM-align algorithms.

- Metric Calculation: Root-mean-square deviation (RMSD) for heavy atoms and Template Modeling Score (TM-score) are computed. A TM-score >0.5 indicates correct topology.
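The metric-calculation step above can be sketched as follows. The example assumes the predicted and experimental structures have already been superimposed and that atoms or residues are paired one-to-one, so it covers only the final arithmetic. The TM-score function evaluates the standard formula for one given superposition, whereas tools such as TM-align search for the superposition that maximizes it. The 0.5 Å floor on d0 is an assumption borrowed from common implementations.

```python
import math


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between paired, pre-aligned 3D coordinates."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("need two equal-length, non-empty coordinate lists")
    squared = [
        sum((p - q) ** 2 for p, q in zip(a, b))
        for a, b in zip(coords_a, coords_b)
    ]
    return math.sqrt(sum(squared) / len(squared))


def tm_score(distances, target_length):
    """TM-score for one superposition.

    distances:     per-residue distances (in Å) between aligned residue pairs
    target_length: number of residues in the reference (experimental) structure
    """
    if target_length > 15:
        d0 = 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8
    else:
        d0 = 0.5
    d0 = max(d0, 0.5)  # assumed floor for very short chains
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


# Toy example: two atoms, each displaced by exactly 1 Å along z.
predicted = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
experimental = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(f"RMSD = {rmsd(predicted, experimental):.2f} Å")

# A 100-residue target with every aligned pair 1 Å apart.
print(f"TM-score = {tm_score([1.0] * 100, 100):.3f}")
```

Because the TM-score normalizes by target length and down-weights large deviations, it is less sensitive than RMSD to a few badly placed residues.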

Diffusion Models for Molecular Generation

Diffusion models learn to generate molecular structures by iteratively denoising from random noise. They operate in discrete (graph-based) or continuous (3D coordinate) spaces.

Table 2: Key Generative AI Models for Molecular Design

| Model Name | Type | Molecular Space | Key Application | Success Rate (Experimental) |

|---|---|---|---|---|

| RFdiffusion | Diffusion | 3D Backbone Coordinates | Symmetric protein assemblies, binders | ~20% high-affinity binders |

| Chroma | Diffusion | 3D Coordinates + Chemical | Proteins with functional sites | Validated for enzyme design |

| DiffDock | Diffusion | Ligand Pose (SE(3)) | Molecular docking | >30% top-1 accuracy (<2Å RMSD) |

| PoET | Auto-regressive | Amino Acid Sequence | Protein language model for design | High expression/folding rates |

Experimental Protocol for Diffusion-based Protein Design (e.g., RFdiffusion):

- Specify Design Goal: Define a structural motif, symmetric repeat, or binding site contour via a "guidance" function.

- Noise Initialization: Start with a cloud of Cα atoms (backbone) initialized as random noise or a simple scaffold.

- Denoising Process: Apply the trained diffusion model for 50-200 steps. At each step, the model predicts the denoised structure, guided towards the desired functional characteristic.

- Sequence Design: Pass the generated backbone to an inverse folding model (e.g., ProteinMPNN) to predict an optimal amino acid sequence that stabilizes the structure.

- In Silico Validation: Use RoseTTAFold or AlphaFold2 to "fold" the designed sequence and verify structural fidelity to the generated blueprint (predicted Aligned Error < 5Å).

Integrated Workflow for De Novo Drug Creation

The modern generative pipeline integrates multiple AI modules.

Diagram 1: Generative AI Drug Discovery Pipeline

Key Signaling Pathways in Targeted Drug Design

Generative models often aim to modulate specific disease-relevant pathways.

Diagram 2: PI3K-AKT-mTOR Pathway & AI Inhibition

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Validating AI-Designed Molecules

| Item | Function in Validation | Example Product/Catalog |

|---|---|---|

| HEK293T Cells | Protein expression platform for testing designed proteins or expressing target receptors. | ATCC CRL-3216 |

| Surface Plasmon Resonance (SPR) Chip | Label-free kinetic analysis of binding affinity (KD) between AI-designed molecule and purified target. | Cytiva Series S Sensor Chip CM5 |

| Cryo-EM Grids | High-resolution structural validation of designed protein complexes. | Quantifoil R1.2/1.3 300 mesh Au |

| Kinase Assay Kit | Functional enzymatic activity assay for inhibitors targeting kinase pathways (e.g., PI3K-AKT). | ADP-Glo Kinase Assay (Promega) |

| Phospho-Specific Antibody Panel | Western blot analysis of pathway modulation (e.g., p-AKT, p-S6) by designed therapeutics. | Cell Signaling Technology #4060 |

| Size Exclusion Chromatography Column | Purification and assessment of monodispersity for de novo designed proteins. | Superdex 200 Increase 10/300 GL (Cytiva) |

The integration of generative AI models like AlphaFold3 and diffusion networks is establishing a new foundation for molecular design. This technical guide outlines the core methodologies and validation frameworks underpinning this shift. As the AI-biotechnology convergence deepens, the iterative loop between in silico generation and high-throughput experimental validation will become increasingly automated, driving the creation of previously unimaginable therapeutic modalities and functional biomaterials.

This whitepaper, framed within a broader thesis on AI and biotechnology convergence, details the application of deep learning (DL) to the critical pharmaceutical challenges of target identification and validation. The integration of multi-omics (genomics, transcriptomics, proteomics, metabolomics) and high-content phenotypic data presents both an unprecedented opportunity and a significant analytical hurdle. DL architectures are uniquely suited to decipher the complex, non-linear relationships within these high-dimensional datasets, accelerating the discovery of novel, druggable targets and predicting their biological and clinical relevance.

Core Deep Learning Architectures in Multi-Omics Analysis

Data Integration and Representation Learning

A primary challenge is the heterogeneous nature of multi-omics data. DL models like Multi-modal Autoencoders (MMAE) and Cross-modal Attentive Networks learn unified latent representations from disparate data types.

Protocol: Training a Stacked Denoising Multi-modal Autoencoder

- Data Preprocessing: Independently normalize each omics dataset (e.g., Z-score for RNA-seq, min-max for methylation data). Introduce stochastic noise (e.g., Gaussian noise, random masking) to input features.

- Model Architecture: Construct separate encoder networks for each omics modality. Each encoder consists of 3 fully connected layers with decreasing neurons (e.g., 1024 → 512 → 256), ReLU activation, and batch normalization. The outputs of each modality's encoder are concatenated into a joint latent vector (e.g., 128 dimensions).

- Training: A single decoder network (mirroring encoder architecture) reconstructs the denoised input for all modalities from the latent vector. Use a composite loss function:

$$L_{\text{total}} = L_{\text{reconstruction}} + \lambda \, L_{\text{contrastive}}$$

where $L_{\text{reconstruction}}$ is Mean Squared Error for continuous data and Binary Cross-Entropy for discrete data, and $L_{\text{contrastive}}$ ensures similar samples have similar latent codes.

- Output: The trained latent space is used for downstream tasks like clustering patient subtypes or predicting drug response.
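To make the composite loss in the training step concrete, the sketch below evaluates each term in plain Python for a single sample. The reconstruction terms follow the text (Mean Squared Error for continuous features, Binary Cross-Entropy for binary ones). The text does not specify the contrastive term, so the margin-based pairwise form used here is an assumption, as are the function names, the margin, and the value of λ.

```python
import math


def mse(x, x_hat):
    """Mean squared error for continuous features (e.g., expression values)."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def bce(x, x_hat, eps=1e-7):
    """Binary cross-entropy for binary features (e.g., mutation calls)."""
    total = 0.0
    for target, prob in zip(x, x_hat):
        prob = min(max(prob, eps), 1 - eps)  # clip to avoid log(0)
        total -= target * math.log(prob) + (1 - target) * math.log(1 - prob)
    return total / len(x)


def contrastive(z_i, z_j, similar, margin=1.0):
    """Assumed pairwise form: pull similar pairs together, push others past a margin."""
    distance = math.dist(z_i, z_j)
    if similar:
        return distance ** 2
    return max(0.0, margin - distance) ** 2


def total_loss(l_reconstruction, l_contrastive, lam=0.1):
    """L_total = L_reconstruction + lambda * L_contrastive."""
    return l_reconstruction + lam * l_contrastive


# Hypothetical values for one sample with one continuous and one binary modality.
l_rec = mse([1.0, 2.0], [1.0, 4.0]) + bce([1, 0], [0.5, 0.5])
l_con = contrastive([0.0, 0.0], [3.0, 4.0], similar=True)
print(f"L_total = {total_loss(l_rec, l_con, lam=0.1):.3f}")
```

In a deep learning framework the same terms would be computed on batched tensors so that gradients can flow back to the encoders and decoder; λ controls how strongly the latent-space structure is enforced relative to reconstruction quality.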

Target Identification via Graph Neural Networks (GNNs)

Biological systems are inherently graph-structured (e.g., protein-protein interaction (PPI) networks, gene regulatory networks). GNNs, particularly Graph Convolutional Networks (GCNs) and Graph Attention Networks (GATs), propagate information across these networks to identify key disease-associated modules and novel candidate targets.

Protocol: Identifying Novel Targets with a GAT on a PPI Network

- Graph Construction: Build an undirected graph $G = (V, E)$, where nodes $V$ are proteins and edges $E$ are known physical interactions from databases like STRING or BioGRID. Initialize node features using gene expression or mutation vectors.

- Model Architecture: Implement a 3-layer GAT. Each layer computes attention coefficients between a node and its neighbors, performing weighted message passing. The final layer produces a node embedding.

- Training: Formulate a semi-supervised node classification task. A subset of nodes are labeled as "known disease targets" or "non-targets" based on databases like Open Targets. The model is trained to predict these labels.

- Validation: Rank all unlabeled proteins by their predicted "target" score. Top-ranked candidates are prioritized for in silico validation (e.g., docking studies) and functional assays.
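The attention-weighted message passing described in the model-architecture step can be sketched as a single-head graph attention layer. This plain-Python version is for illustration only: it follows the usual GAT formulation (a shared linear transform, LeakyReLU-scored neighbor pairs, softmax over each neighborhood), omits the output nonlinearity and multi-head averaging, and uses hypothetical protein names, features, and parameters. A real model would use a graph learning library and learned weights.

```python
import math


def matvec(weight, vector):
    """Multiply a weight matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weight]


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def gat_layer(features, neighbors, weight, attention):
    """One single-head graph attention layer.

    features:  node -> input feature vector
    neighbors: node -> list of neighbor nodes (include the node itself)
    weight:    shared linear transform, shape (out_dim x in_dim)
    attention: attention vector of length 2 * out_dim
    """
    transformed = {node: matvec(weight, h) for node, h in features.items()}
    output = {}
    for i, nbrs in neighbors.items():
        # Unnormalized scores e_ij = LeakyReLU(a . [W h_i || W h_j]);
        # list "+" concatenates the two transformed vectors.
        scores = [
            leaky_relu(sum(a * x for a, x in zip(attention, transformed[i] + transformed[j])))
            for j in nbrs
        ]
        # Softmax over the neighborhood (shifted by the max for stability).
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        alphas = [e / sum(exps) for e in exps]
        # Attention-weighted sum of transformed neighbor features.
        output[i] = [
            sum(alpha * transformed[j][k] for alpha, j in zip(alphas, nbrs))
            for k in range(len(weight))
        ]
    return output


# Toy PPI graph with three hypothetical proteins and 2-dimensional features.
features = {"P1": [1.0, 0.0], "P2": [0.0, 1.0], "P3": [1.0, 1.0]}
neighbors = {"P1": ["P1", "P2"], "P2": ["P2", "P1", "P3"], "P3": ["P3", "P2"]}
identity = [[1.0, 0.0], [0.0, 1.0]]
embeddings = gat_layer(features, neighbors, identity, attention=[0.0] * 4)
print(embeddings["P2"])
```

With a zero attention vector every neighbor receives equal weight, so the layer reduces to neighborhood averaging; learned attention parameters let the model emphasize the interactions most informative for the target-classification task.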

Quantitative Performance of DL Models in Target Discovery

Table 1: Benchmarking DL architectures on public multi-omics datasets for target identification tasks.

| Model Architecture | Dataset (TCGA Study) | Primary Task | Key Metric | Reported Performance | Reference (Example) |

|---|---|---|---|---|---|

| Multi-modal DNN | BRCA (Genome, Transcriptome) | Subtype Classification | AUC-ROC | 0.94 | (Xiao et al., 2021) |

| Graph Convolutional Network | Pan-cancer (PPI + Mut) | Essential Gene Prediction | Average Precision | 0.78 | (Greene et al., 2022) |

| Variational Autoencoder | CCLE (Expr, CNV, Mut) | Drug Response Prediction | Concordance Index | 0.85 | (Rampášek et al., 2022) |

| Transformer Encoder | GTEx + TCGA (Transcriptome) | Novel Driver Gene Discovery | Precision@100 | 0.31 | (Zeng et al., 2023) |

Integrated Experimental & Computational Validation Workflow

A robust DL-driven pipeline requires iterative experimental feedback for validation.

Diagram 1: Iterative DL-driven target identification and validation cycle.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential materials and reagents for experimental validation of DL-predicted targets.

| Category / Item | Example Product/Technology | Primary Function in Validation |

|---|---|---|

| Gene Modulation | CRISPR-Cas9 knockout/activation kits (e.g., Synthego, IDT) | Functional validation of target necessity and sufficiency in disease-relevant cellular phenotypes. |

| Phenotypic Screening | High-content screening (HCS) systems (e.g., PerkinElmer Operetta, Celigo) | Quantifying complex morphological changes (cell death, organelle health) post-target modulation. |

| Protein Analysis | Multiplex immunoassays (e.g., Olink, MSD) | Measuring target protein expression and downstream pathway activation in patient samples or models. |

| Cell Models | Induced pluripotent stem cell (iPSC)-derived cells or patient-derived organoids (PDOs) | Testing target relevance in physiologically relevant, patient-specific genetic backgrounds. |

| In Vivo Models | Patient-derived xenograft (PDX) mice or humanized mouse models | Evaluating target efficacy and safety in a complex, systemic environment. |

| Data Integration | Cloud-based bioinformatics platforms (e.g., DNAnexus, Terra) | Managing and analyzing the multi-omics and phenotypic data generated during validation. |

Detailed Experimental Validation Protocol

Protocol: High-Content Phenotypic Validation of a Novel Kinase Target

This protocol follows the in vitro validation step in Diagram 1.

Cell Line Engineering:

- Select a disease-relevant cell line (e.g., a cancer cell line with the target pathway active).

- Using a lentiviral system, create three stable polyclonal populations: a) Non-targeting shRNA control, b) shRNA against the novel kinase target, c) Overexpression of the wild-type kinase.

- Confirm modulation via qPCR and western blot.

High-Content Screening Assay Setup:

- Seed engineered cells in 384-well imaging plates. For knockdown lines, include a titration of a known standard-of-care therapeutic as a control.

- At 72 hours post-seeding, stain cells with a multiplex dye set: Hoechst 33342 (nuclei, 350/461 nm), MitoTracker Deep Red (mitochondria, 644/665 nm), Annexin V Alexa Fluor 488 (apoptosis, 495/519 nm), and CellEvent Caspase-3/7 reagent (apoptosis, 502/530 nm).

- Fix cells and image using a 20x objective on a high-content imager (e.g., ImageXpress Micro Confocal).

Image and Data Analysis:

- Use onboard software (e.g., MetaXpress) to segment cells and quantify >500 features per cell: morphological (size, shape), intensity-based (marker fluorescence), and textural features.

- Export single-cell data. Apply a DL-based image analysis tool (e.g., a convolutional autoencoder) to extract latent morphological features not captured by traditional analysis.

- Perform statistical analysis (e.g., ANOVA) to compare populations. A successful target knockdown should mimic the phenotypic signature of the therapeutic control or show a specific phenotype (e.g., increased apoptosis, loss of mitochondrial membrane potential).
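As an illustration of the statistical comparison named above, the following sketch computes the one-way ANOVA F statistic for a single feature measured across the three engineered populations. The feature values are hypothetical, and converting F into a p-value requires the F distribution from a statistics library, which is not shown.

```python
def one_way_anova_f(groups):
    """F statistic for a one-way ANOVA across two or more groups."""
    k = len(groups)
    n = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n
    means = [sum(g) / len(g) for g in groups]
    # Variation of group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of observations around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))


# Hypothetical per-well apoptosis scores for each population.
control_shrna = [1.0, 2.0, 3.0]
overexpression = [2.0, 3.0, 4.0]
target_shrna = [5.0, 6.0, 7.0]
f_stat = one_way_anova_f([control_shrna, overexpression, target_shrna])
print(f"F = {f_stat:.1f}")
```

With hundreds of features per cell, the same test would be repeated per feature and followed by a multiple-testing correction before any phenotype is called significant.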

Diagram 2: High-content phenotypic validation workflow for a novel target.

The convergence of deep learning and biotechnology is transforming target identification from a hypothesis-limited to a data-driven discipline. By effectively mining multi-omics and phenotypic landscapes, DL models generate high-probability candidate targets. However, their true value is realized only within an iterative, closed-loop framework where computational predictions are rigorously tested with modern experimental toolkits. This virtuous cycle of prediction and validation, as outlined in this guide, is accelerating the development of novel therapeutics and is a cornerstone of next-generation biopharmaceutical research.

This whitepaper, framed within a broader thesis on AI and biotechnology convergence, provides a technical guide to the application of artificial intelligence (AI) and machine learning (ML) for predicting clinical trial outcomes, toxicity, and pharmacokinetic/pharmacodynamic (PK/PD) properties. The convergence of high-dimensional biological data and advanced computational methods is transforming drug development by enabling in silico hypothesis generation and de-risking candidates prior to costly human trials.

Algorithmic Approaches

AI-driven predictive modeling employs a spectrum of algorithms, each suited to specific data types and prediction tasks.

Table 1: Core AI/ML Algorithms in Predictive Drug Development

| Algorithm Class | Example Models | Primary Application | Key Advantage |

|---|---|---|---|

| Tree-Based Ensembles | Random Forest, XGBoost, LightGBM | Binary outcome prediction (e.g., toxicity yes/no), feature importance. | Handles mixed data types, robust to non-linear relationships. |

| Deep Learning (DL) | Multilayer Perceptrons (MLPs), Convolutional Neural Networks (CNNs), Graph Neural Networks (GNNs) | PK parameter prediction, molecular property regression, omics data integration. | Captures complex, high-order interactions in unstructured data. |

| Natural Language Processing (NLP) | Transformer Models (BERT, BioBERT) | Mining Electronic Health Records (EHRs) for adverse event signals, literature-based discovery. | Extracts latent knowledge from unstructured text corpora. |

| Bayesian Methods | Bayesian Neural Networks, Gaussian Processes | PK/PD modeling with uncertainty quantification, dose optimization. | Provides probabilistic predictions and credible intervals. |

Key Data Modalities

Model performance is intrinsically linked to data quality and diversity. Primary data sources include:

- Chemical & Structural Data: SMILES strings, molecular fingerprints, 3D conformations.

- Omics Data: Genomics (GWAS, sequencing), transcriptomics, proteomics, metabolomics.

- Clinical Trial Data: Participant-level data on demographics, efficacy endpoints, adverse events (AEs), and lab values.

- Real-World Data (RWD): EHRs, medical claims, patient registries, pharmacovigilance databases (e.g., FDA Adverse Event Reporting System - FAERS).

- Literature & Patents: Large textual corpora for knowledge graph construction.

Experimental Protocols for Key Applications

Protocol: Predicting Phase III Trial Success from Multi-Omics and Early Clinical Data

Objective: To build a classifier that predicts the probability of Phase III trial success (positive primary endpoint) using data available at the end of Phase II.

Materials & Workflow:

- Data Curation: Assemble a labeled dataset of historical drug programs. Features include: target pathway enrichment scores (from transcriptomics), genetic polymorphism profiles of trial populations (pharmacogenomics), aggregate safety profiles from Phase II (frequency of Grade ≥3 AEs), and compound properties (e.g., lipophilicity, polar surface area).

- Feature Engineering: Normalize omics data (z-score). Encode categorical variables (e.g., therapeutic area) using one-hot encoding. Perform principal component analysis (PCA) on high-dimensional omics features to reduce dimensionality.

- Model Training: Use a stacked ensemble model. First-level models include XGBoost, a 1D-CNN for omics data, and an MLP. A logistic regression model serves as the meta-learner, taking the predictions from the first-level models as input.

- Validation: Perform temporal validation (train on data before a specific year, test on subsequent years) to avoid data leakage and simulate real-world forecasting. Evaluate using AUC-ROC, precision-recall curves, and calibration plots.
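The validation step above can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: the `Program` record, its field names, the cutoff year, and the toy values are all assumptions made for the example. It shows a temporal split followed by AUC-ROC computed through the pairwise (Mann-Whitney) identity; in a real workflow the scores would come from a model fitted on the training partition only.

```python
from dataclasses import dataclass


@dataclass
class Program:
    year: int       # year the Phase II data became available (hypothetical field)
    score: float    # model-predicted probability of Phase III success
    success: int    # 1 = positive primary endpoint, 0 = otherwise


def temporal_split(programs, cutoff_year):
    """Train on programs before cutoff_year; test on cutoff_year and later."""
    train = [p for p in programs if p.year < cutoff_year]
    test = [p for p in programs if p.year >= cutoff_year]
    return train, test


def auc_roc(labels, scores):
    """AUC-ROC via the pairwise (Mann-Whitney) identity; ties count as 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative label")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy records, invented purely for illustration.
programs = [
    Program(2015, 0.70, 1), Program(2016, 0.20, 0), Program(2017, 0.55, 1),
    Program(2019, 0.80, 1), Program(2020, 0.35, 0), Program(2021, 0.60, 0),
    Program(2022, 0.65, 1),
]
train, test = temporal_split(programs, cutoff_year=2019)
test_auc = auc_roc([p.success for p in test], [p.score for p in test])
print(f"train={len(train)} test={len(test)} test AUC={test_auc:.2f}")
```

Splitting by year rather than at random keeps any information from later programs out of the training set, which is the leakage the protocol is guarding against. Any normalization or PCA should likewise be fitted on the training partition and then applied unchanged to the test partition.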

Protocol: In Silico Prediction of Organ-Specific Toxicity (e.g., Cardiotoxicity)

Objective: To predict the risk of drug-induced cardiotoxicity (e.g., prolonged QT interval, cardiomyopathy) from chemical structure and in vitro assay data.

Materials & Workflow:

- Data Source: Use public datasets such as data from the FDA's Comprehensive in Vitro Proarrhythmia Assay (CiPA) initiative and Tox21.

- Molecular Representation: Convert chemical structures to Morgan fingerprints (radius 2, 2048 bits) and pre-trained molecular embeddings (e.g., from ChemBERTa).

- Model Architecture: Implement a Graph Neural Network (GNN) that operates directly on the molecular graph; its learned representation can be concatenated with the fingerprint and embedding features above before a multi-task learning head.

- Training: The GNN is trained to simultaneously predict: a) inhibition of the hERG ion channel (primary endpoint), b) cytotoxicity in human cardiomyocyte cell lines, and c) morphological stress-response profiles from Cell Painting assays. Sharing a representation across related tasks can improve generalizability, particularly when labels for any single endpoint are sparse.

- Output: A risk score (0-1) and a list of analogous compounds with known clinical toxicity profiles.
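The "analogous compounds" part of the output is commonly implemented as a nearest-neighbor lookup over fingerprints. The sketch below assumes each compound is represented by the set of on-bit indices of its Morgan fingerprint and ranks reference compounds by Tanimoto similarity. The compound names and bit sets are hand-written toy values; in practice they would be produced by a cheminformatics toolkit such as RDKit.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def nearest_analogs(query_bits, reference, k=3):
    """Return the k reference compounds most similar to the query, best first."""
    scored = [(tanimoto(query_bits, bits), name) for name, bits in reference.items()]
    scored.sort(reverse=True)
    return scored[:k]


# Hypothetical reference set: name -> on-bit indices of a 2048-bit fingerprint.
reference = {
    "ref_A": {12, 87, 301, 940, 1502},
    "ref_B": {12, 87, 301, 555},
    "ref_C": {3, 44, 1999},
}
query = {12, 87, 301, 940}

for similarity, name in nearest_analogs(query, reference, k=2):
    print(f"{name}: Tanimoto = {similarity:.2f}")
```

Reporting the nearest labeled analogs next to the risk score gives reviewers a way to sanity-check a prediction against compounds with known clinical toxicity profiles.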

Figure: AI Workflow for Cardiotoxicity Prediction

Protocol: AI-Enhanced Population PK/PD Modeling

Objective: To generate virtual patient populations and predict inter-individual variability in drug exposure and response.

Materials & Workflow:

- Base Model: Start with a traditional non-linear mixed-effects (NLME) model describing the PK/PD relationship (e.g., two-compartment PK with an Emax PD model).

- Covariate Discovery: Instead of pre-specified covariate testing, use a Random Forest or Gradient Boosting model to identify complex, non-linear relationships between patient features (genetic variants, renal/liver function markers, age, weight) and the NLME model's individual random effects (e.g., on clearance, volume).

- Neural ODEs: Implement a neural ordinary differential equation (Neural ODE) framework as a complementary approach. The neural network learns the derivatives of the system dynamics directly from rich, time-series PK/PD data, potentially uncovering unmodeled biological processes.

- Virtual Population Simulation: Sample from real-world demographic and genomic distributions to create a virtual cohort of 10,000 patients. Use the AI-enhanced model to simulate drug concentration-time profiles and predicted effect for each virtual patient, identifying subpopulations at risk of under-dosing or toxicity.
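To make the simulation step concrete, here is a minimal sketch of a virtual-cohort run using a two-compartment IV-bolus PK model and an Emax PD model. Every numeric value (dose, typical clearance, volumes, EC50, weight distribution, variability) is a placeholder assumption, the allometric weight scaling on clearance stands in for whatever covariate model the ML step would actually produce, and the cohort is kept small so the example runs quickly.

```python
import math
import random


def simulate_two_compartment(dose, cl, v1, q, v2, t_end=24.0, dt=0.05):
    """Integrate a two-compartment IV-bolus model with RK4.

    Returns (times, central-compartment concentrations)."""
    def deriv(a1, a2):
        exchange = q * (a1 / v1 - a2 / v2)        # net flow central -> peripheral
        return -cl * a1 / v1 - exchange, exchange

    a1, a2 = dose, 0.0
    times, conc = [0.0], [dose / v1]
    for step in range(int(round(t_end / dt))):
        k1 = deriv(a1, a2)
        k2 = deriv(a1 + 0.5 * dt * k1[0], a2 + 0.5 * dt * k1[1])
        k3 = deriv(a1 + 0.5 * dt * k2[0], a2 + 0.5 * dt * k2[1])
        k4 = deriv(a1 + dt * k3[0], a2 + dt * k3[1])
        a1 += dt * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0]) / 6
        a2 += dt * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1]) / 6
        times.append((step + 1) * dt)
        conc.append(a1 / v1)
    return times, conc


def emax_effect(conc, emax, ec50):
    """Emax model: effect saturates at emax as concentration grows."""
    return emax * conc / (ec50 + conc)


def auc_trapezoid(times, conc):
    """Area under the concentration-time curve by the trapezoidal rule."""
    return sum(0.5 * (conc[i] + conc[i + 1]) * (times[i + 1] - times[i])
               for i in range(len(conc) - 1))


def simulate_cohort(n, seed=0):
    """Sample virtual patients and simulate exposure and trough effect for each."""
    rng = random.Random(seed)
    cohort = []
    for _ in range(n):
        weight = min(max(rng.gauss(75.0, 15.0), 40.0), 150.0)   # kg, clipped
        eta = rng.gauss(0.0, 0.3)                               # random effect on CL
        cl = 5.0 * (weight / 70.0) ** 0.75 * math.exp(eta)      # assumed covariate model
        times, conc = simulate_two_compartment(100.0, cl, v1=20.0, q=3.0, v2=40.0)
        cohort.append({
            "weight": weight,
            "cl": cl,
            "auc": auc_trapezoid(times, conc),
            "trough_effect": emax_effect(conc[-1], emax=100.0, ec50=2.0),
        })
    return cohort


cohort = simulate_cohort(200)
low = [p for p in cohort if p["trough_effect"] < 20.0]          # illustrative threshold
print(f"{len(low)} of {len(cohort)} virtual patients fall below the effect threshold")
```

Scaling this to the 10,000-patient cohort described above mainly means sampling covariates from real demographic and genomic distributions and replacing the hand-written clearance formula with the covariate relationships learned in the discovery step.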

Figure: AI-Enhanced PK/PD Modeling & Simulation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for AI-Driven Predictive Assays

| Item / Solution | Function in AI Model Development | Example Vendor/Resource |
|---|---|---|
| High-Content Screening (HCS) Kits | Generate multiparametric cellular morphology data (Cell Painting) for training phenotypic toxicity predictors. | Revvity (formerly PerkinElmer), Thermo Fisher Scientific |
| hERG Inhibition Assay Kits | Provide standardized in vitro data for a key cardiotoxicity endpoint to train and validate predictive models. | Eurofins Discovery, Charles River Laboratories |
| Recombinant CYP450 Enzymes | Generate data on metabolic stability and drug-drug interaction potential for PK prediction models. | Corning, Sigma-Aldrich |
| Patient-Derived Organoid (PDO) Systems | Create clinically relevant in vitro response data to train models on heterogeneous patient populations. | STEMCELL Technologies, Organoid Therapeutics |
| Public Data Repositories | Source of labeled data for model training and benchmarking. | ChEMBL, DrugBank, CiPA Portal, TCGA, openFDA |

Quantitative Performance Benchmarks

Table 3: Reported Performance of AI Models in Recent Studies (2023-2024)

| Prediction Task | Data Used | Model Type | Reported Performance | Key Limitation |
|---|---|---|---|---|
| Phase III Outcome | 612 trials, multi-omics, early clinical | Stacked Ensemble (XGBoost + MLP) | AUC: 0.82; Precision: 76% (for positive predictions) | Retrospective cohort; potential historical bias. |
| Drug-Induced Liver Injury (DILI) | ~1,200 compounds, chemical & bioactivity | Graph Attention Network (GAT) | AUC: 0.89; Sensitivity: 81% | Relies on structural analogs with known labels. |
| Human Clearance (PK) | 1,085 small molecules, in vitro assay data | Hybrid CNN & Gradient Boosting | Mean Absolute Error (MAE): 0.22 log mL/min/kg | Poor extrapolation to novel chemical scaffolds. |
| Optimal First-in-Human Dose | Phase I clinical data, preclinical PK/PD | Bayesian Optimization + NLME | Prediction within 2-fold of actual dose: 92% of cases | Requires high-quality preclinical PK/PD linkage. |
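Two of the metrics in the table, MAE on a log scale and the fraction of predictions within 2-fold, are straightforward to compute. The sketch below assumes the log is base 10, which the table does not state. Under that assumption an MAE of 0.22 log units corresponds to a typical fold error of roughly 10^0.22 ≈ 1.66. The clearance values are invented for illustration.

```python
import math


def log10_mae(predicted, observed):
    """Mean absolute error between log10-transformed predictions and observations."""
    errors = [abs(math.log10(p) - math.log10(o)) for p, o in zip(predicted, observed)]
    return sum(errors) / len(errors)


def fraction_within_fold(predicted, observed, fold=2.0):
    """Share of predictions whose ratio to the observed value lies in [1/fold, fold]."""
    hits = sum(1 for p, o in zip(predicted, observed) if 1.0 / fold <= p / o <= fold)
    return hits / len(observed)


# Hypothetical clearance values in mL/min/kg.
observed = [10.0, 4.0, 25.0, 1.5]
predicted = [14.0, 3.0, 60.0, 1.6]

mae = log10_mae(predicted, observed)
print(f"log10 MAE = {mae:.2f} (typical fold error ~ {10 ** mae:.2f})")
print(f"within 2-fold: {fraction_within_fold(predicted, observed):.0%}")
```

Reporting both is useful because the fold-based fraction is insensitive to how badly the misses fail, while the log-scale MAE averages over all errors.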

AI-powered predictive modeling is a cornerstone of the biotech-AI convergence, shifting drug development from reactive to proactive decision-making. By systematically integrating diverse data streams, these models surface patterns that govern clinical outcomes, toxicity, and PK/PD. Challenges remain, including data quality, model interpretability, and regulatory acceptance, but continued refinement of protocols and toolkits should improve the precision, efficiency, and success rate of bringing new therapies to patients.

This technical guide, framed within the broader thesis of AI and biotechnology convergence, details the application of advanced computer vision (CV) in two pivotal biotech domains: High-Content Screening (HCS) and histopathology analysis. The integration of deep learning with high-throughput imaging and digitized tissue slides is accelerating drug discovery and precision diagnostics by extracting quantitative, high-dimensional data from complex biological images.

The convergence of artificial intelligence (AI) and biotechnology is revolutionizing how we interrogate biological systems. At the intersection lies computer vision, enabling the automated, quantitative, and unbiased analysis of microscopic images. This guide provides an in-depth examination of core methodologies in HCS for drug discovery and computational pathology for clinical and research applications.

High-Content Screening (HCS) with Computer Vision

HCS combines automated microscopy, multiplexed staining, and automated image analysis to quantify cellular phenotypes and compound effects.

Core Experimental Protocol: Multiparametric Phenotypic Profiling

A standard protocol for assessing compound toxicity and mechanism of action is outlined below.

1. Cell Seeding & Treatment:

- Seed appropriate cell lines (e.g., U2OS, HepG2) in 384-well microplates.

- After 24 hours, treat cells with compound libraries (typically 1-10 µM) and controls (DMSO vehicle, positive control toxins). Incubate for 24-72 hours.

2. Cell Staining & Fixation:

- Fix cells with 4% paraformaldehyde (15 min).

- Permeabilize with 0.1% Triton X-100 (10 min).

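- Stain with multiplexed dyes:

  - Hoechst 33342 (nuclei, 1 µg/mL).

  - Phalloidin-Alexa Fluor 488 (F-actin cytoskeleton).

  - MitoTracker Deep Red (mitochondria; because uptake depends on membrane potential, this dye is typically added to live cells before the fixation step).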

- Wash and seal plates for imaging.

3. Automated Image Acquisition:

- Use a high-content confocal imager (e.g., PerkinElmer Opera Phenix, Yokogawa CV8000).

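- Acquire images in the channels that match the stain panel (e.g., DAPI, FITC, and Cy5 for the three dyes above; fuller panels use 4-6 channels and add, for example, TRITC) at 20x or 40x magnification, optionally with z-stacking.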

4. Computer Vision Analysis Pipeline:

- Preprocessing: Illumination correction, background subtraction, channel alignment.

- Segmentation: Utilize deep learning models (e.g., U-Net, Cellpose) trained on labeled data to segment individual nuclei and cytoplasm.

- Feature Extraction: For each segmented cell, extract hundreds of morphometric, intensity, and textural features (see Table 1).

- Classification & Profiling: Apply dimensionality reduction (t-SNE, UMAP) and clustering to group compounds by phenotypic signature.
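As an illustration of the feature-extraction step, the sketch below computes two nuclear morphology features, area and eccentricity, from a binary segmentation mask using second-order image moments. It is a simplified stand-in for what dedicated tools compute per cell. The pixel size default and the synthetic elliptical "nucleus" are assumptions made for the example.

```python
import math


def region_features(mask, pixel_size_um=0.325):
    """Area (µm²) and eccentricity of the foreground region in a 0/1 mask.

    pixel_size_um is an assumed calibration; use the value from your imager."""
    pixels = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    n = len(pixels)
    if n == 0:
        raise ValueError("mask contains no foreground pixels")
    r_mean = sum(r for r, _ in pixels) / n
    c_mean = sum(c for _, c in pixels) / n
    # Second-order central moments (covariance of the pixel coordinates).
    mu_rr = sum((r - r_mean) ** 2 for r, _ in pixels) / n
    mu_cc = sum((c - c_mean) ** 2 for _, c in pixels) / n
    mu_rc = sum((r - r_mean) * (c - c_mean) for r, c in pixels) / n
    # Eigenvalues of the 2x2 covariance matrix give the squared axis scales.
    half_trace = (mu_rr + mu_cc) / 2
    root = math.sqrt(((mu_rr - mu_cc) / 2) ** 2 + mu_rc ** 2)
    major, minor = half_trace + root, max(half_trace - root, 0.0)
    eccentricity = math.sqrt(1 - minor / major) if major > 0 else 0.0
    return {"area_um2": n * pixel_size_um ** 2, "eccentricity": eccentricity}


def ellipse_mask(height, width, semi_rows, semi_cols):
    """Synthetic test object: a filled ellipse centered in the image."""
    cy, cx = (height - 1) / 2, (width - 1) / 2
    return [[1 if ((r - cy) / semi_rows) ** 2 + ((c - cx) / semi_cols) ** 2 <= 1 else 0
             for c in range(width)] for r in range(height)]


round_nucleus = region_features(ellipse_mask(41, 41, 10, 10))
elongated_nucleus = region_features(ellipse_mask(41, 61, 10, 20))
print(round_nucleus)
print(elongated_nucleus)
```

A round object yields an eccentricity near 0 and an elongated one approaches 1, which is why this feature is informative for apoptosis and cell-cycle phenotypes.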

Table 1: Key Quantitative Features Extracted in HCS

| Feature Category | Specific Metrics | Typical Value Range (Control Cells) | Biological Relevance |
|---|---|---|---|
| Nuclear Morphology | Area, Perimeter, Eccentricity, Intensity | 80-120 µm² (Area); 0.1-0.3 (Eccentricity) | Apoptosis, cell cycle state |
| Cytoplasmic Texture | Haralick features (Contrast, Correlation) | 0.8-1.2 (Correlation) | Protein aggregation, organelle disruption |
| Intensity Distribution | Total Intensity, Std Dev of Intensity | 50-200 a.u. (MitoTracker) | Mitochondrial mass & membrane potential |
| Spatial Relationships | Distance from nucleus to organelles | 5-15 µm (Nuc-to-Mito) | Cytoskeletal disruption |
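The Haralick texture features in the table are derived from a gray-level co-occurrence matrix (GLCM). The sketch below builds a symmetric, normalized GLCM for a single pixel offset and computes contrast and correlation from it. It assumes the image has already been quantized to a small number of integer gray levels. The two toy images are chosen so the results are easy to reason about. Correlation computed this way lies in [-1, 1], so values outside that range would indicate a different normalization in the tool that produced them.

```python
import math


def glcm(image, levels, dr=0, dc=1):
    """Symmetric, normalized gray-level co-occurrence matrix for offset (dr, dc)."""
    counts = [[0] * levels for _ in range(levels)]
    rows, cols = len(image), len(image[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dr, c + dc
            if 0 <= r2 < rows and 0 <= c2 < cols:
                i, j = image[r][c], image[r2][c2]
                counts[i][j] += 1
                counts[j][i] += 1          # count each pair in both directions
    total = sum(sum(row) for row in counts)
    return [[v / total for v in row] for row in counts]


def haralick_contrast(p):
    """Weighted sum of squared gray-level differences between neighbors."""
    n = len(p)
    return sum(p[i][j] * (i - j) ** 2 for i in range(n) for j in range(n))


def haralick_correlation(p):
    """Linear dependence of neighboring gray levels; NaN for a constant image."""
    n = len(p)
    cells = [(i, j) for i in range(n) for j in range(n)]
    mu_i = sum(i * p[i][j] for i, j in cells)
    mu_j = sum(j * p[i][j] for i, j in cells)
    var_i = sum((i - mu_i) ** 2 * p[i][j] for i, j in cells)
    var_j = sum((j - mu_j) ** 2 * p[i][j] for i, j in cells)
    if var_i == 0 or var_j == 0:
        return float("nan")
    cov = sum((i - mu_i) * (j - mu_j) * p[i][j] for i, j in cells)
    return cov / math.sqrt(var_i * var_j)


checkerboard = [[(r + c) % 2 for c in range(4)] for r in range(4)]
stripes = [[r % 2 for _ in range(4)] for r in range(4)]   # each row is constant

for name, img in [("checkerboard", checkerboard), ("stripes", stripes)]:
    p = glcm(img, levels=2)
    print(name, haralick_contrast(p), haralick_correlation(p))
```

With a horizontal offset, the checkerboard has maximally dissimilar neighbors and the striped image has identical neighbors, which gives the two extremes of both features.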

Figure: High-Content Screening Computer Vision Workflow

Histopathology Analysis with Computational Pathology

Whole Slide Imaging (WSI) digitizes glass pathology slides, enabling AI-driven analysis for diagnosis, prognosis, and biomarker discovery.

Core Experimental Protocol: AI-Assisted Tumor Microenvironment Analysis

A protocol for quantifying tumor-infiltrating lymphocytes (TILs) and PD-L1 expression in non-small cell lung carcinoma (NSCLC).

1. Tissue Processing & Staining:

- Obtain FFPE (Formalin-Fixed, Paraffin-Embedded) tissue sections (4 µm thick).

- Perform automated immunohistochemistry (IHC) for CD8 (T-cell marker) and PD-L1 (immune checkpoint) with hematoxylin counterstain.

2. Whole Slide Imaging & Data Management:

- Scan slides at 40x magnification using a digital slide scanner (e.g., Aperio AT2, Hamamatsu NanoZoomer).

- Save images in pyramidal file formats (e.g., .svs, .ndpi) to manage multi-gigabyte files.

3. Computer Vision Analysis Pipeline:

- Tiling & Patch Extraction: Divide WSI into small, manageable patches (e.g., 256x256 px at 20x equivalent).

- Tissue Detection: Apply a model to exclude background, artifacts, and non-informative tissue.

- Critical Segmentation Tasks:

  - Nuclei Segmentation/Classification: Use a HoVer-Net or Mask R-CNN model to segment all nuclei and classify them as Tumor, Lymphocyte, Stromal, or Necrotic.

  - PD-L1 Scoring: Segment tumor and immune cells, then classify PD-L1 membrane staining as positive or negative based on validated thresholds (e.g., Tumor Proportion Score).

- Spatial Analysis: Calculate spatial metrics like TIL density at the invasive margin and cell-to-cell proximity.
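The tiling and tissue-detection steps can be sketched without any imaging library. The example below generates patch coordinates over a slide of given dimensions and keeps only patches with enough non-background pixels. Note the substitution: the protocol calls for a model-based tissue detector, whereas this sketch uses a plain intensity threshold on 8-bit grayscale values. The `read_patch` callable, the threshold values, and the synthetic slide are all hypothetical. In a real pipeline `read_patch` would wrap a slide reader such as OpenSlide.

```python
def tile_coordinates(width, height, patch=256, stride=256):
    """Top-left (x, y) corners of all full patches that fit inside the slide."""
    return [(x, y)
            for y in range(0, height - patch + 1, stride)
            for x in range(0, width - patch + 1, stride)]


def tissue_fraction(gray_patch, background_threshold=220):
    """Fraction of pixels darker than the near-white slide background."""
    total = sum(len(row) for row in gray_patch)
    tissue = sum(1 for row in gray_patch for v in row if v < background_threshold)
    return tissue / total


def select_tissue_tiles(coords, read_patch, min_tissue=0.5):
    """Keep tiles whose tissue fraction reaches min_tissue."""
    return [xy for xy in coords if tissue_fraction(read_patch(*xy)) >= min_tissue]


def fake_read_patch(x, y):
    """Synthetic slide: blank glass on the left half, stained tissue on the right."""
    value = 255 if x < 512 else 120
    return [[value, value], [value, value]]   # tiny stand-in for a 256x256 patch


coords = tile_coordinates(width=1024, height=512)
kept = select_tissue_tiles(coords, fake_read_patch)
print(f"{len(kept)} of {len(coords)} tiles contain tissue")
```

Filtering before inference matters because most of a whole-slide image is typically background, and skipping it reduces both compute and false detections.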

Table 2: Key Quantitative Metrics in Computational Pathology

| Metric | Calculation Method | Clinical/Research Utility | Typical Benchmark (NSCLC unless noted) |
|---|---|---|---|
| Tumor Proportion Score (TPS) | (PD-L1+ Tumor Cells / Total Viable Tumor Cells) × 100 | Patient selection for immunotherapy | TPS ≥1% for therapy eligibility |
| TIL Density | # CD8+ Lymphocytes / mm² in tumor stroma | Prognostic biomarker | High TILs correlate with better overall survival (OS) |
| Spatial Co-localization | G-function or Ripley's K analysis | Understanding immune exclusion | |
| Tumor Bud Count | Automated detection of detached tumor cell clusters | Prognostic in colorectal cancer | >10 buds = poor prognosis (colorectal) |
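The first three metrics in the table reduce to simple arithmetic once cells have been segmented and classified. The sketch below computes TPS, TIL density, and a naive cross-type Ripley's K between two cell populations. The K estimator here applies no edge correction, which a production analysis would normally add, and all counts and coordinates are invented for illustration.

```python
import math


def tumor_proportion_score(pdl1_positive_tumor, total_viable_tumor):
    """TPS (%) = PD-L1-positive tumor cells / total viable tumor cells * 100."""
    if total_viable_tumor <= 0:
        raise ValueError("total_viable_tumor must be positive")
    return 100.0 * pdl1_positive_tumor / total_viable_tumor


def til_density(n_cd8_cells, stroma_area_mm2):
    """CD8+ lymphocytes per mm² of tumor stroma."""
    if stroma_area_mm2 <= 0:
        raise ValueError("stroma_area_mm2 must be positive")
    return n_cd8_cells / stroma_area_mm2


def cross_k(points_a, points_b, radius, area):
    """Naive cross-type Ripley's K at one radius, without edge correction."""
    pairs = sum(1 for xa, ya in points_a for xb, yb in points_b
                if math.hypot(xa - xb, ya - yb) <= radius)
    return area * pairs / (len(points_a) * len(points_b))


# Hypothetical cell counts and coordinates (µm) within a 100 x 100 µm region.
tps = tumor_proportion_score(pdl1_positive_tumor=30, total_viable_tumor=200)
density = til_density(n_cd8_cells=450, stroma_area_mm2=1.5)
tumor_cells = [(10.0, 10.0), (12.0, 11.0), (50.0, 50.0)]
lymphocytes = [(11.0, 10.0), (80.0, 80.0)]
k_20 = cross_k(tumor_cells, lymphocytes, radius=20.0, area=100.0 * 100.0)

print(f"TPS = {tps:.1f}%  TIL density = {density:.0f} cells/mm²  K(20 µm) = {k_20:.0f} µm²")
```

Under complete spatial randomness K(r) is approximately πr², so values well above that suggest lymphocytes cluster around tumor cells and values well below it are consistent with immune exclusion.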

Figure: Computational Pathology Analysis Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for CV-Driven Experiments

| Item | Function & Relevance | Example Products / Models |

|---|---|---|