AI in Single-Cell Genomics: Transforming Discovery from Cell Atlases to Precision Therapies

This article provides a comprehensive overview of AI's transformative role in single-cell genomics, tailored for researchers, scientists, and drug development professionals.

AI in Single-Cell Genomics: Transforming Discovery from Cell Atlases to Precision Therapies

Abstract

This article provides a comprehensive overview of AI's transformative role in single-cell genomics, tailored for researchers, scientists, and drug development professionals. It begins by establishing the foundational synergy between AI's pattern recognition and the high-dimensional data of single-cell RNA sequencing (scRNA-seq). It then details core methodological applications, from automated cell type annotation to trajectory inference and multimodal data integration. The guide addresses critical troubleshooting and optimization strategies for real-world data challenges, including batch effect correction and data imputation. Finally, it offers a framework for validating AI models and comparing leading computational tools. The conclusion synthesizes how AI is accelerating the path from foundational research to clinical translation in biomedicine.

The AI-Single-Cell Synergy: Core Concepts and Why It's Revolutionizing Biology

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized genomics, enabling the interrogation of cellular heterogeneity at unprecedented resolution. However, this power comes with significant computational challenges: scale (datasets exceeding millions of cells) and noise (technical artifacts like dropout events, batch effects, and ambient RNA). These challenges are central to a broader thesis on AI in single-cell genomics: that AI is not merely an analytical tool but a fundamental partner in experimental design and biological discovery. This partnership leverages AI's capacity for pattern recognition in high-dimensional spaces to distill biological signal from technical noise, transforming raw data into actionable biological insights for research and therapeutic development.

Core AI Methodologies and Experimental Protocols

Dimensionality Reduction and Visualization: UMAP/t-SNE Enhanced by Autoencoders

- Protocol: A standard workflow begins with a count matrix (cells x genes). After normalization (e.g., SCTransform) and preliminary feature selection, an autoencoder is employed.

- Training: The autoencoder (a neural network with a bottleneck layer) is trained to reconstruct its input gene expression profile.

- Embedding: The activations of the narrow bottleneck layer serve as a non-linear, low-dimensional embedding that captures the essential variance of the data.

- Visualization: This embedding is used as input to UMAP (Uniform Manifold Approximation and Projection) for 2D/3D visualization, yielding more stable and biologically meaningful layouts than PCA-based approaches.

Cell Type Annotation: From Manual Markers to Supervised/Self-Supervised Models

- Protocol (Supervised Transfer Learning):

- Reference Training: A neural network (e.g., a feed-forward network or a graph neural network) is trained on a large, expertly annotated reference atlas (e.g., from the Human Cell Atlas).

- Query Projection: New, unannotated query data is projected into the same latent space. The model predicts cell type labels based on the learned patterns.

- Uncertainty Quantification: Models like scANVI (single-cell ANnotation using Variational Inference) jointly model the data and provide confidence scores for each label, flagging novel or ambiguous cell states.

Denoising and Imputation: Addressing Dropout with Deep Generative Models

- Protocol (Using a Deep Count Autoencoder - DCA):

- Model Architecture: DCA uses a denoising autoencoder framework with a Zero-Inflated Negative Binomial (ZINB) loss function, explicitly modeling count data and dropout.

- Training: The model learns to reconstruct the true expression matrix from a corrupted (noised) input.

- Output: It outputs a denoised count matrix, imputing plausible values for likely technical dropouts while preserving true biological zeros, enabling more accurate downstream analysis.

Trajectory Inference: Modeling Cell Fate with Neural ODEs

- Protocol (Neural Ordinary Differential Equations for Trajectories):

- State Definition: Each cell is represented in a latent space learned by a variational autoencoder (VAE).

- Dynamics Learning: A neural network defines a continuous vector field within this latent space, modeling the dynamics of gene expression changes.

- Inference: The trajectory (pseudotime) is calculated by integrating along the learned vector field from a user-defined root cell, providing a continuous model of differentiation or cell state transitions.

Table 1: Performance Comparison of Key AI-based scRNA-seq Tools (2023-2024 Benchmarks)

| Task | Tool (Model Type) | Key Metric | Reported Performance | Baseline (Non-AI) |

|---|---|---|---|---|

| Cell Annotation | scBERT (Transformer) | Annotation Accuracy (on novel data) | 92.1% | 78.5% (SingleR) |

| Cell Annotation | scANVI (Semi-supervised VAE) | Label Transfer F1-score | 0.89 | 0.72 (PCA + SVM) |

| Data Imputation | DCA (Denoising Autoencoder) | Gene-Gene Correlation Recovery (Spearman) | 0.85 | 0.61 (Magic) |

| Batch Correction | scGen (VAE) | Batch Mixing (kBET acceptance rate) | 0.91 | 0.74 (Harmony) |

| Trajectory Inference | CellRank 2 (Neural ODE + ML) | Fate Prediction Accuracy (simulated) | 94% | 81% (PAGA) |

| Scale | scPipe (Deep Learning Pipeline) | Cells Processed per Hour (on GPU) | ~1 Million | ~100k (Standard) |

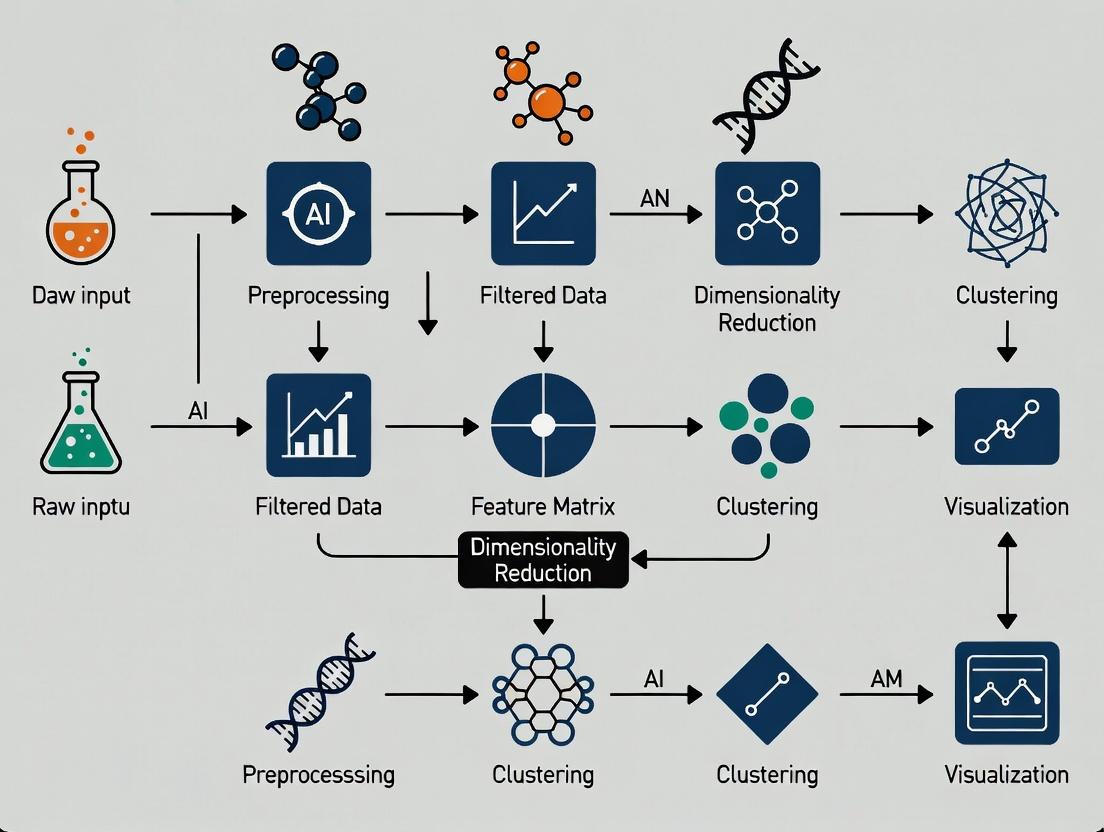

Visualizing the AI-scRNA-seq Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Computational Tools for AI-Enhanced scRNA-seq Studies

| Item | Function & Relevance to AI Partnership |

|---|---|

| 10x Genomics Chromium Next GEM Kits | Provides the foundational high-throughput, droplet-based single-cell library preparation. AI models are trained and optimized on data generated primarily by this dominant platform. |

| Multiplexed Cell Hashing (e.g., BioLegend TotalSeq-A) | Uses antibody-oligo conjugates to label cells from different samples with unique barcodes, enabling sample multiplexing. Critical for generating the large, multi-batch datasets required for robust AI model training. |

| CRISPR Perturb-seq Kits | Combines CRISPR-mediated gene knockout with scRNA-seq readout. AI models (like neural ODEs) analyze these datasets to infer complex gene regulatory networks and causal relationships at scale. |

| V(D)J Enrichment Reagents | Enables simultaneous gene expression and immune repertoire profiling from single cells. Graph Neural Networks (GNNs) are uniquely suited to model the paired chain relationships in B/T cell receptor data. |

| Cell-Free RNA Spike-Ins (e.g., ERCC, SIRV) | Exogenous RNA controls used to quantify technical noise and sensitivity. The concentration-response curve of spike-ins is used to calibrate and train denoising AI models like DCA. |

| Annotated Reference Atlas Data (e.g., CZ CELLxGENE) | Curated, community-standard collections of labeled single-cell data (e.g., from Human Cell Atlas). These are the indispensable "training sets" for supervised and transfer learning models for cell annotation. |

| GPU-Accelerated Cloud Compute Instances (e.g., NVIDIA A100) | The physical hardware enabling the training of large deep learning models (like transformers) on datasets of millions of cells, making the AI partnership computationally feasible. |

This technical guide delineates the pivotal machine learning (ML) paradigms—supervised, unsupervised, and self-supervised learning—within the context of single-cell genomics. As the field transitions from analyzing static "pixels" of data to dynamic, multi-modal cellular "portraits," these computational frameworks are fundamental for decoding cellular heterogeneity, identifying novel cell states, and accelerating therapeutic discovery. We provide an in-depth analysis of current methodologies, experimental protocols, and reagent toolkits essential for researchers and drug development professionals.

Single-cell genomics has revolutionized biology by enabling the profiling of gene expression, chromatin accessibility, and protein abundance at unprecedented resolution. The resulting high-dimensional datasets, often termed the "pixels" of cellular identity, present significant analytical challenges and opportunities. Machine learning provides the essential scaffolding to transform this raw data into biological insight, driving applications from basic research to target identification in drug development.

Core Machine Learning Paradigms

Supervised Learning

Supervised learning involves training a model on labeled data to predict outcomes for unseen data. In single-cell genomics, labels can be cell types, disease states, or treatment responses.

- Key Algorithms: Logistic Regression, Random Forests, Gradient Boosting Machines (GBM/XGBoost), Support Vector Machines (SVM), and Deep Neural Networks (DNNs).

- Primary Application: Automated cell type annotation, predicting drug response from single-cell profiles, and classifying disease subtypes.

Table 1: Quantitative Performance of Supervised Models in Cell Type Annotation

| Model | Dataset (e.g., PBMC) | Number of Cell Types | Accuracy (%) | F1-Score | Reference |

|---|---|---|---|---|---|

| Random Forest | 10x Genomics PBMC 3k | 8 | 94.2 | 0.93 | Lopez et al., 2018 |

| XGBoost | Human Lung Atlas | 15 | 91.7 | 0.90 | Hu et al., 2021 |

| DNN (SCINA) | Tabula Sapiens | 23 | 89.5 | 0.88 | Zhang et al., 2019 |

| SVM | Mouse Brain | 7 | 96.0 | 0.95 | Abdelaal et al., 2019 |

Experimental Protocol: Supervised Cell Type Classification

- Data Acquisition: Obtain a single-cell RNA-seq count matrix and a ground truth label vector (e.g., from manual annotation or FACS sorting).

- Preprocessing: Normalize counts (e.g., log(CP10K)), select highly variable genes (2000-5000 genes), and scale features.

- Dimensionality Reduction: Perform PCA on the scaled data, retaining top 50-100 principal components.

- Data Splitting: Split cells into training (70%), validation (15%), and test (15%) sets, ensuring label stratification.

- Model Training: Train classifier (e.g., Random Forest) on the training set using the PC scores as features.

- Hyperparameter Tuning: Optimize parameters (e.g., number of trees, max depth) on the validation set via grid search.

- Evaluation: Apply the final model to the held-out test set and report accuracy, precision, recall, and F1-score.

Unsupervised Learning

Unsupervised learning identifies intrinsic patterns, structures, or groupings in data without pre-existing labels. It is crucial for exploratory analysis.

- Key Algorithms: Principal Component Analysis (PCA), t-Distributed Stochastic Neighbor Embedding (t-SNE), Uniform Manifold Approximation and Projection (UMAP), and clustering methods (Leiden, K-means).

- Primary Application: Dimensionality reduction for visualization, discovery of novel cell states or trajectories, and batch effect correction.

Table 2: Comparison of Unsupervised Dimensionality Reduction Techniques

| Method | Key Principle | Computational Speed | Preserves Global Structure | Typical Use Case |

|---|---|---|---|---|

| PCA | Linear variance maximization | Very Fast | Yes | Initial noise reduction |

| t-SNE | Minimizes divergence between high & low-dim distributions | Slow | No | Detailed cluster visualization |

| UMAP | Minimizes cross-entropy of fuzzy topological graphs | Medium | Better than t-SNE | Standard visualization, trajectory inference |

Experimental Protocol: Unsupervised Clustering & Visualization

- Data Processing: Generate a processed count matrix as in the supervised protocol.

- Neighborhood Graph: Construct a k-nearest neighbor graph (k=20-30) based on distances in PCA space.

- Clustering: Apply the Leiden algorithm to the graph to partition cells into clusters at a chosen resolution parameter.

- Marker Gene Identification: For each cluster, perform differential expression analysis (e.g., Wilcoxon rank-sum test) to find upregulated marker genes.

- Visualization: Embed the graph into 2D using UMAP (mindist=0.3, nneighbors=15) for intuitive visualization of clusters.

- Biological Interpretation: Annotate clusters by comparing marker gene lists to known cell type signatures from literature or databases.

Self-Supervised Learning

Self-supervised learning (SSL) generates supervisory signals directly from the data's structure. It is transformative for leveraging vast unlabeled datasets.

- Key Architectures: Autoencoders, Masked Language Models (adapted as Masked Gene Models), and Contrastive Learning frameworks (SimCLR, Barlow Twins).

- Primary Application: Learning general-purpose, low-dimensional representations of cells, denoising data, multi-omic integration, and predicting gene-gene interactions.

Table 3: Recent Self-Supervised Models in Single-Cell Analysis

| Model | Architecture | Pre-training Task | Key Advantage | Benchmark Performance (Cell Type AUC) |

|---|---|---|---|---|

| scBERT | Transformer | Masked Gene Prediction | Captures gene-gene context | 0.912 |

| scVI | Variational Autoencoder | Probabilistic Latent Embedding | Handles count noise, batch integration | 0.887 |

| DCA | Denoising Autoencoder | Input Reconstruction | Explicit denoising, imputation | 0.851 |

| MoCo (sc-MoCo) | Contrastive Learning | Instance Discrimination | Learns invariant features | 0.902 |

Experimental Protocol: Self-Supervised Pre-training with a Masked Gene Model

- Data Curation: Assemble a large, unlabeled corpus of single-cell gene expression profiles (e.g., from public repositories).

- Masking: For each cell's gene expression vector, randomly mask 10-20% of the non-zero entries (set to zero or a mask token).

- Model Training: Train a transformer encoder model to predict the original expression values of the masked genes, using the unmasked genes as context. The loss is typically mean squared error or negative binomial loss.

- Representation Extraction: Use the trained model's internal activation (e.g., the [CLS] token output or mean of hidden states) as a contextual embedding for each cell.

- Downstream Transfer: Fine-tune the pre-trained model on a smaller, labeled target task (e.g., cell type classification) by adding a task-specific output layer and performing end-to-end training with a reduced learning rate.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Reagents and Platforms for Single-Cell ML Experiments

| Item / Solution | Function in the Workflow | Example Vendor/Product |

|---|---|---|

| Single-Cell Isolation Kit | Generates the foundational single-cell suspension for library prep. | 10x Genomics Chromium, BD Rhapsody, Parse Biosciences Evercode |

| scRNA-seq Library Prep Kit | Converts cellular mRNA into sequencable libraries with cell barcodes. | 10x Genomics Chromium Next GEM, Smart-seq2/3 reagents |

| Viability Stain | Ensures high input viability, critical for data quality. | Thermo Fisher LIVE/DEAD, BioLegend Zombie dyes |

| Cell Hashing Antibodies | Enables sample multiplexing and doublet detection via antibody-oligos. | BioLegend TotalSeq, BD Single-Cell Multiplexing Kit |

| Nuclei Isolation Buffer | For sequencing from frozen tissue or difficult-to-dissociate samples. | Miltenyi Biotec Nuclei Isolation Kit, NST/DAPI buffer |

| UMI & Barcode Reagents | Unique Molecular Identifiers (UMIs) enable accurate transcript counting. | Included in commercial kits (10x, Parse, BD) |

| Benchmark Annotation Set | Gold-standard labels for training/evaluating supervised models. | Allen Brain Map, Human Cell Atlas (HCA) data, CellTypist references |

| Cloud Compute Credits | For scalable model training and data storage. | AWS, Google Cloud, Microsoft Azure grants |

The integration of artificial intelligence with single-cell genomics represents a paradigm shift in biological discovery and therapeutic development. A core thesis is that AI's predictive and analytical power is fundamentally constrained by the quality, scale, and structure of its training data. Curated cell atlases, such as the Human Cell Atlas (HCA), are not merely reference maps; they are the foundational data infrastructure enabling the next generation of AI applications in biomedicine. This whitepaper outlines the technical construction, experimental validation, and critical utility of these atlases within this AI-driven context.

Core Data Architecture and Quantitative Landscape

Modern cell atlases are built on multi-omics single-cell and spatial profiling technologies. The following table summarizes the current scale and data types of major initiatives.

Table 1: Scale and Composition of Major Cell Atlas Initiatives (As of 2024)

| Atlas Initiative | Estimated Cells Profiled | Primary Technologies | Key Tissue/Organ Focus | Data Accessibility |

|---|---|---|---|---|

| Human Cell Atlas (HCA) | ~50 Million | scRNA-seq, snRNA-seq, scATAC-seq, MERFISH, CODEX | Pan-organism, with major milestones for immune system, lung, heart, kidney, etc. | CZ CELLxGENE, Terra, HCA Data Portal |

| Fly Cell Atlas | ~1.2 Million | scRNA-seq (10x, Smart-seq2) | Whole adult Drosophila melanogaster | Interactive website, raw data on GEO/SRA |

| Mouse Cell Atlas | ~1.3 Million | Microwell-seq, scRNA-seq | Whole adult mouse | Interactive web server, MCA datasets |

| Tabula Sapiens | ~1.5 Million (Human) | scRNA-seq, scATAC-seq, CITE-seq | 24 organs from the same human donors | CZ CELLxGENE, figshare |

Foundational Experimental and Computational Workflows

Protocol: Integrated Single-Cell Multi-Omic Atlas Construction

This core protocol details the steps for generating a high-quality, AI-ready reference atlas.

1. Sample Procurement and Preparation:

- Source: Tissues from consented donors (HCA) or model organisms. Prioritize multimodal donors.

- Dissociation: Use optimized, tissue-specific enzymatic cocktails (e.g., Miltenyi Biotec's Multi Tissue Dissociation Kits) to maximize live cell yield and minimize stress gene artifacts.

- Viability Enrichment: Perform density gradient centrifugation or dead cell removal magnetic bead separation.

2. Library Preparation & Sequencing:

- Single-Cell Partitioning: Use high-throughput microfluidic platforms (10x Genomics Chromium, Parse Biosciences) or combinatorial indexing (sci-).

- Multiomic Capture: For nuclei, perform simultaneous gene expression and chromatin accessibility (10x Multiome). For cells, use CITE-seq for surface protein quantification.

- Sequencing: Target a minimum of 20,000 read pairs per cell for scRNA-seq on an Illumina NovaSeq platform to ensure robust gene detection.

3. Primary Computational Processing (Generation of the Cell-by-Gene Matrix):

- Raw Data Processing: Use

Cell Ranger(10x),kb-python, orSTARsolofor alignment, barcode assignment, and UMI counting. Ambient RNA correction withSoupXorDecontX. - Quality Control: Filter cells based on metrics (Table 2). Remove doublets using

ScrubletorDoubletFinder.

Table 2: Standard QC Filtering Thresholds for scRNA-seq Data

| Metric | Typical Lower Bound | Typical Upper Bound | Rationale |

|---|---|---|---|

| Genes Detected | 500 - 1,000 | 5,000 - 7,500 | Removes empty droplets & low-quality cells; excludes multiplets. |

| UMI Counts | 1,000 - 2,000 | 25,000 - 50,000 | Similar rationale as genes detected. |

| Mitochondrial Read % | N/A | 10% - 20% (tissue-dependent) | High % indicates apoptotic or stressed cells. |

4. Reference Atlas Construction:

- Integration: Harmonize data across donors, batches, and technologies using AI/ML methods like

scVI,scANVI, orHarmony. - Annotation: Iterative process using marker genes, reference-based transfer (

scArches), and expert knowledge. Critical Step: Annotation is stored as curated metadata, forming the "ground truth" for supervised AI. - Atlas Deployment: Processed and annotated data is stored in standardized formats (

.h5ad,.loom) and served via interactive platforms (CELLxGENE, UCSC Cell Browser).

Visualization: Reference Atlas Construction and Query Workflow

Title: Workflow for Building and Querying a Curated Cell Atlas

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Reagent Solutions for Cell Atlas Construction

| Item | Function & Relevance to Atlas Quality | Example Products/Brands |

|---|---|---|

| Tissue Dissociation Kits | Generate high-viability single-cell suspensions. Tissue-specific optimization is critical for minimizing technical bias. | Miltenyi Biotec GentleMACS Dissociators & kits; Worthington Biochemical collagenase blends. |

| Live/Dead Cell Stains | Assess viability pre- and post-dissociation for QC and sorting. | Thermo Fisher LIVE/DEAD Fixable Viability Dyes; BioLegend Zombie Dyes. |

| Single-Cell Partitioning Reagents | Partition individual cells/nuclei into droplets or wells for barcoding. The core of library prep. | 10x Genomics Chromium Next GEM Kits; Parse Biosciences Evercode kits. |

| Multimodal Capture Reagents | Enable simultaneous measurement of gene expression + another modality (ATAC, protein), enhancing reference information density. | 10x Genomics Multiome (ATAC+GEX) Kit; BioLegend TotalSeq Antibodies for CITE-seq. |

| Nuclei Isolation Buffers | For frozen or difficult-to-dissociate tissues; crucial for expanding atlas sample diversity. | Sigma Nuclei EZ Lysis Buffer; 10x Genomics Nuclei Isolation Kit. |

| Indexing PCR Primers & Enzymes | Amplify and add sample indices for multiplexed sequencing. High-fidelity enzymes reduce errors. | Kapa HiFi HotStart ReadyMix; IDT for Illumina Unique Dual Indexes. |

| Cell Hashing Antibodies | Label cells from different samples with unique barcoded antibodies for sample multiplexing, reducing batch effects. | BioLegend TotalSeq-A/B/C Hashtag Antibodies. |

AI Applications Enabled by Curated Atlases

Curated atlases directly fuel specific AI/ML tasks in single-cell genomics:

Table 4: AI Tasks Powered by Curated Reference Atlases

| AI Task | Atlas Role | Example Algorithm |

|---|---|---|

| Automatic Cell Annotation | Provides the labeled training data for supervised/semi-supervised models. | scANVI, CellTypist, SingleR |

| Data Integration & Batch Correction | Serves as an anchor to harmonize new datasets via transfer learning. | scArches, SCALEX, Harmony |

| Perturbation Modeling | Establishes a "healthy" baseline to predict in-silico the effects of genetic or chemical perturbations. | CPA (Compositional Perturbation Autoencoder) |

| Novel Cell State Discovery | Dense sampling of reference space allows identification of rare populations and transitional states. | DeepSORT, SCCAF |

Visualization: AI-Driven Perturbation Analysis Using a Reference Atlas

Title: AI Predicts Perturbation Effects Using a Reference Atlas

The application of Artificial Intelligence (AI) in single-cell genomics research is driving a paradigm shift in our ability to decipher cellular heterogeneity, gene regulatory networks, and disease mechanisms. This technical guide explores three foundational AI architectures—Autoencoders, Graph Neural Networks (GNNs), and Transformers—positioned within the broader thesis that their integration is essential for constructing a multi-scale, interpretable understanding of cellular systems. These models move beyond bulk analysis, enabling the deconvolution of cellular states from high-dimensional -omics data, predicting gene-gene interactions, and modeling sequential dependencies in biological sequences, thereby accelerating therapeutic target discovery.

Foundational Models: Architectures and Genomic Applications

Autoencoders for Dimensionality Reduction and Feature Learning

Autoencoders are neural networks trained to reconstruct their input through a compressed latent representation. In single-cell genomics, they are pivotal for denoising and compressing high-dimensional gene expression data (e.g., from single-cell RNA sequencing) into lower-dimensional, biologically meaningful embeddings.

Architecture: A standard autoencoder comprises an encoder f(x) that maps input data x (e.g., gene expression vector) to a latent code z, and a decoder g(z) that reconstructs the input x'. The loss function is typically Mean Squared Error (MSE) between x and x'.

- Variational Autoencoders (VAEs): Introduce a probabilistic twist, forcing the latent space

zto follow a prior distribution (e.g., Gaussian). This enables generative modeling and smooth interpolation between cell states. - Applications: scVI (single-cell Variational Inference) uses a VAE to model technical noise and batch effects, producing corrected, denoised expression values for downstream clustering and trajectory inference.

Key Experimental Protocol: Denoising scRNA-seq Data with scVI

- Data Input: Raw UMI count matrix (cells x genes).

- Preprocessing: Library size normalization per cell. Genes are filtered (e.g., keep genes expressed in >1% of cells).

- Model Training:

- The encoder (neural network) takes normalized counts and batch information as input.

- It outputs parameters (mean µ and variance σ) of a Gaussian distribution for each cell's latent representation

z. - A sample is drawn from this distribution:

z ~ N(µ, σ²). - The decoder maps

zand batch information to parameters of a negative binomial distribution, which models the count data. - The model is trained to maximize the evidence lower bound (ELBO), which includes the reconstruction likelihood of the counts and the Kullback-Leibler divergence between the latent distribution and a standard normal prior.

- Output: Denoised, batch-corrected expression values and a low-dimensional latent embedding for all cells.

Graph Neural Networks (GNNs) for Relational Biology

GNNs operate on graph-structured data, making them ideal for modeling biological networks where entities (nodes) such as genes, proteins, or cells are connected by edges (interactions, pathways, spatial proximity).

Architecture: GNNs perform message passing, where node representations are iteratively updated by aggregating information from their neighbors. A common layer is the Graph Convolutional Network (GCN):

H⁽ˡ⁺¹⁾ = σ( H⁽ˡ⁾ W⁽ˡ⁾), where  is the normalized adjacency matrix, H⁽ˡ⁾ are node features at layer l, W⁽ˡ⁾ is a learnable weight matrix, and σ is a non-linear activation.

- Applications:

- Gene Regulatory Network (GRN) Inference: Nodes are genes, edges are regulatory interactions. GNNs predict novel edges or classify interaction types.

- Spatial Transcriptomics: Cells are nodes connected based on physical location. GNNs predict cell-cell communication or spatial gene expression patterns.

- Drug-Target Interaction: Predicting links between drug and protein nodes in a heterogeneous knowledge graph.

Key Experimental Protocol: Predicting Cell-Cell Communication with GNNs from Spatial Data

- Graph Construction:

- Nodes: Each cell, represented by its gene expression profile.

- Edges: Connect cells within a fixed spatial distance (e.g., 50 µm). Edge weights can be inversely proportional to distance.

- Node Features: Use PCA-reduced or autoencoder-derived embeddings of gene expression.

- Model Training:

- A GNN model (e.g., GAT - Graph Attention Network) is applied for

kmessage-passing layers. - The final node embeddings encode neighborhood information.

- For each ligand-receptor pair (e.g., from a database like CellChatDB), concatenate the embeddings of ligand-expressing sender cells and receptor-expressing receiver cells.

- A multilayer perceptron (MLP) classifies whether a significant communication event exists between the cell pair.

- A GNN model (e.g., GAT - Graph Attention Network) is applied for

- Output: A probabilistic graph of predicted ligand-receptor-mediated interactions between spatially proximal cells.

Transformers for Sequence and Context Modeling

Transformers, built on self-attention mechanisms, have revolutionized NLP and are now applied to genomic sequences (DNA, RNA, protein) and even to cells-as-sequences (where a cell's "sequence" is its ordered gene expression profile).

Architecture: The core is the Multi-Head Self-Attention mechanism. It allows each position (e.g., a nucleotide in a DNA sequence) to attend to all other positions, computing a weighted sum of values, where weights are determined by the compatibility between queries and keys. This captures long-range dependencies effortlessly.

- Applications:

- DNA Sequence Modeling: Models like DNABert pre-train on reference genomes to learn representations for tasks like predicting promoter regions, transcription factor binding sites, and variant effects (e.g., Enformer).

- Single-Cell Analysis: scBERT treats the normalized expression vector of a cell as a "sentence," with genes as "tokens." It uses a Transformer encoder to learn cell representations for classification (e.g., cell type annotation) in a pre-train/fine-tune paradigm.

Key Experimental Protocol: Fine-Tuning a Pre-trained Transformer for Cell Type Annotation

- Data Preparation: A large, annotated scRNA-seq reference atlas (e.g., Human Cell Landscape).

- Pre-training (Model-Specific): A model like scBERT is first pre-trained on massive, unlabeled scRNA-seq data using a Masked Gene Modeling task (randomly mask some gene expression values and predict them).

- Fine-Tuning:

- The pre-trained Transformer encoder is taken, and a classification head (linear layer) is appended on top.

- The model is trained on the labeled reference data. Input is a cell's gene expression vector (preprocessed and normalized). The model is trained with cross-entropy loss to predict the known cell type label.

- Inference: The fine-tuned model can predict cell types for new, unseen query cells based solely on their gene expression profiles.

Comparative Analysis of Model Performance

Table 1: Quantitative Comparison of Foundational AI Models in Key Genomic Tasks

| Model Class | Exemplary Tool | Primary Task | Key Metric & Reported Performance | Data Type | Strengths | Limitations |

|---|---|---|---|---|---|---|

| Autoencoder (VAE) | scVI | Dimensionality Reduction & Batch Correction | Cluster purity (ARI: 0.85±0.05), Batch mixing (kBET: 0.92±0.03) | scRNA-seq | Probabilistic, handles noise/zeros well | Latent space can be less interpretable |

| Graph Neural Network | Graph Attention Network | Gene Regulatory Network Inference | AUROC (0.89±0.04), AUPRC (0.81±0.06) | Gene co-expression + prior knowledge graphs | Models explicit relationships | Performance depends heavily on initial graph quality |

| Transformer | Enformer | Non-coding Variant Effect Prediction | Pearson R (0.85) on MPRA experiment validation | DNA sequence (∼200kb context) | Captures very long-range genomic context | Computationally intensive for long sequences |

| Transformer | scBERT | Cell Type Annotation | Accuracy (0.972), F1-score (0.968) on human PBMC data | scRNA-seq gene expression | Transfer learning, captures gene-gene interactions | Requires large pre-training data |

Table 2: Typical Computational Requirements (2023-2024 Benchmarks)

| Model | Typical Training Hardware | Approx. Training Time | Model Size (Params) | Recommended Library/Framework |

|---|---|---|---|---|

| scVI (VAE) | Single GPU (e.g., NVIDIA V100) | 1-2 hours (for 50k cells) | 1-5 Million | PyTorch, scvi-tools |

| GCN/GAT | Single GPU | 30 mins - 2 hours | 500K - 5 Million | PyTorch Geometric, DGL |

| Enformer | TPU v4 / Multiple GPUs | Days (pre-training) | 300 Million | TensorFlow, JAX |

| scBERT | Single to Multiple GPUs | Hours (fine-tuning), Weeks (pre-training) | 10-100 Million | PyTorch, Hugging Face |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Driven Single-Cell Genomics Experiments

| Item / Reagent | Function in Experimental Pipeline | Example Product/Platform |

|---|---|---|

| Chromium Controller & Kits | High-throughput single-cell partitioning, barcoding, and library preparation for scRNA-seq. | 10x Genomics Chromium Single Cell 3’ Gene Expression |

| DNBelab C Series | Alternative droplet-based system for single-cell library preparation. | MGI DNBelab C4 |

| Smart-seq2/3 Reagents | Full-length, plate-based scRNA-seq protocol for higher sensitivity on fewer cells. | Takara Bio SMARTer kits |

| Visium Spatial Gene Expression Slide | For capturing spatially resolved whole-transcriptome data from tissue sections. | 10x Genomics Visium |

| Cell hashing antibodies | Multiplexing samples by labeling cells with antibody-derived tags (ADTs) for pooled sequencing. | BioLegend TotalSeq-A |

| Cell Ranger | Primary software suite for processing raw sequencing data from 10x Genomics into gene-cell matrices. | 10x Genomics Cell Ranger (v7.1+) |

| CellBender | Software tool to remove ambient RNA noise from scRNA-seq count matrices. | Broad Institute CellBender |

| Annotated Reference Atlases | High-quality, curated single-cell datasets used for model training and transfer learning. | Human Cell Landscape, CellXGene Census |

| Pre-trained Model Weights | Released parameters for foundational models (e.g., scBERT, Enformer) to enable fine-tuning without costly pre-training. | Hugging Face Hub, GitHub Releases |

Visualizations of Model Architectures and Workflows

From Data to Discovery: A Guide to Key AI Applications and Workflows

Automated Cell Type Annotation and Novel Cell State Discovery

The advent of high-throughput single-cell RNA sequencing (scRNA-seq) has revolutionized biology by enabling the characterization of cellular heterogeneity at unprecedented resolution. However, this technological leap has created a significant analytical bottleneck: the accurate and scalable interpretation of the resulting complex datasets. This whitepaper addresses a core challenge within the broader thesis of AI applications in single-cell genomics: the dual problem of automated cell type annotation and novel cell state discovery. Moving beyond manual, marker-based classification, AI-driven methods provide a systematic, quantitative, and reproducible framework to map the cellular universe, identify known cell types, and uncover previously unrecognized or transitional cellular states critical for understanding development, disease, and therapeutic response.

Core Methodologies and Quantitative Benchmarks

Automated Cell Type Annotation: Reference-Based Approaches

These methods project a query dataset onto a well-annotated reference atlas using machine learning.

| Method (Tool) | Core Algorithm | Key Metric (Accuracy) | Speed (Cells/sec) | Reference Size (Typical) | Year |

|---|---|---|---|---|---|

| Seurat (v5) | CCA + Mutual Nearest Neighbors (MNN) | 94-97% (PBMC) | ~1,000 | 500k - 1M+ cells | 2023 |

| scANVI | Deep Generative Model (VAE) | 96-98% (Pancreas) | ~500 | 100k - 500k cells | 2022 |

| SingleCellNet | Random Forest Classifier | 92-95% (Cross-tissue) | ~800 | 10k - 100k cells | 2021 |

| CellTypist | Logistic Regression with Hierarchical Loss | 95-99% (Immune) | ~10,000 | 10M+ cells (immune) | 2023 |

| scPred | Support Vector Machine (SVM) | 90-94% (Various) | ~300 | 50k - 200k cells | 2021 |

Experimental Protocol for Benchmarking Annotation Tools:

- Data Acquisition: Download a gold-standard, manually annotated scRNA-seq dataset (e.g., PBMC from 10x Genomics, Tabula Sapiens).

- Reference/Query Split: Randomly split the dataset into a reference set (70%) and a query set (30%), ensuring balanced cell type representation.

- Tool Execution: Run each annotation tool using its default parameters. For reference-based tools, train on the reference set and predict on the query set.

- Ground Truth Comparison: Compare tool predictions to the manual annotations in the query set.

- Metric Calculation: Compute accuracy, weighted F1-score, and per-cell-type precision/recall. Measure computational time and memory usage.

Novel Cell State Discovery: Unsupervised & Hypothesis-Free Approaches

These methods identify discrete populations or continuous trajectories without prior labels.

| Method (Tool) | Core Algorithm | Output Type | Key Strength | Datasets Used For Validation |

|---|---|---|---|---|

| SCANPY (Leiden) | Graph Clustering (Leiden algorithm) | Discrete Clusters | Scalability, integration with workflow | Retina, Bone Marrow |

| PhenoGraph | k-NN Graph + Community Detection | Discrete Clusters | Robustness to batch effects | CyTOF, scRNA-seq |

| Monocle3, PAGA | Graph + Principal Graph Learning | Continuous Trajectory | Branching dynamics, pseudotime | Development, Differentiation |

| Cytopath | Optimal Transport + Dictionary Learning | State & Program Discovery | Decomposes cells into latent programs | Cancer, Drug Perturbation |

| SCUBI | Deep Generative Model (Topic Model) | Rare Population Detection | Models technical noise explicitly | Rare Immune Cells |

Experimental Protocol for Novel State Validation:

- Discovery: Apply an unsupervised method (e.g., Leiden clustering) to identify candidate novel clusters (C*).

- Differential Expression: Perform a Wilcoxon rank-sum test between C* and all neighboring cell types to identify significantly upregulated marker genes for C*.

- Functional Enrichment: Use GO, KEGG, or Reactome pathway analysis on the marker gene set to assess biological coherence.

- Independent Validation:

- In Silico: Project C* onto an independent, larger public atlas to see if it co-embeds uniquely.

- Wet-lab: Design fluorescence in situ hybridization (FISH) probes (e.g., via RNAscope) for top 2-3 marker genes and confirm co-expression in tissue sections. Alternatively, perform CITE-seq to confirm unique surface protein expression.

- Trajectory Inference Validation: For continuous states, use RNA velocity (scVelo, velocyto) on spliced/unspliced counts to confirm predicted directionality of state transitions.

Visualization of Core Workflows and Relationships

Title: Automated Annotation and Novel Discovery Workflow

Title: AI Method Relationships in Single-Cell Analysis

The Scientist's Toolkit: Key Research Reagent Solutions

| Category | Item / Reagent | Function in Experiment | Example Vendor/Product |

|---|---|---|---|

| Single-Cell Library Prep | Chromium Next GEM Chip | Partitions single cells with barcoded beads for 3' or 5' gene expression library prep. | 10x Genomics (Chromium Next GEM) |

| Multiplexing Oligos (CellPlex) | Allows sample multiplexing (pooling) for cost reduction and batch effect minimization. | 10x Genomics (CellPlex) | |

| Single Cell Multome ATAC + Gene Exp. | Enables simultaneous assay of chromatin accessibility (ATAC) and gene expression. | 10x Genomics (Multome) | |

| Surface Protein Profiling | TotalSeq Antibodies | Oligo-tagged antibodies for CITE-seq, measuring surface protein abundance alongside transcriptome. | BioLegend |

| Spatial Validation | RNAscope Probes | In situ hybridization (ISH) probes for validating marker gene expression of novel states in tissue context. | ACD Bio-Techne |

| Visium Spatial Gene Expression Slide | For spatially resolved whole-transcriptome analysis to map discovered cell states to tissue architecture. | 10x Genomics | |

| Functional Validation | Cell Sorting Antibodies | High-purity FACS isolation of novel cell populations for downstream functional assays (e.g., culture). | BD Biosciences, Miltenyi |

| Critical Software | Cell Ranger | Primary pipeline for processing raw sequencing data from 10x Genomics into count matrices. | 10x Genomics |

| Seurat, SCANPY | Primary open-source R/Python toolkits for downstream analysis, including all AI methods discussed. | Open Source | |

| Reference Databases | CellTypist Models | Pre-trained, community-curated automated annotation models for immune and other cell types. | EBI, celltypist.org |

Within the broader thesis on AI applications in single-cell genomics research, trajectory inference (TI) or pseudotime analysis stands as a cornerstone methodology. It computationally reconstructs the dynamic, continuous processes—such as cellular differentiation, disease progression, or drug response—from static single-cell RNA sequencing (scRNA-seq) snapshots. The integration of artificial intelligence (AI) and machine learning (ML) has dramatically enhanced our ability to model these complex, non-linear biological trajectories, moving beyond simple linear orderings to sophisticated graphs that capture branching, merging, and cyclic cell fate decisions. This whitepaper provides an in-depth technical guide to modern, AI-powered trajectory inference, detailing its core principles, algorithms, experimental validation, and applications in developmental biology and disease modeling for researchers and drug development professionals.

Core AI/ML Algorithms in Trajectory Inference

AI-powered TI methods move beyond traditional dimensionality reduction and simple ordering. They employ sophisticated statistical and deep learning models to infer high-dimensional trajectories.

Key Algorithmic Approaches:

- Graph-Based Learning: Models cells as nodes in a graph, with edges representing similarities. Trajectories are inferred by finding the shortest paths or minimum spanning trees (e.g., PAGA, Monocle 3).

- Probabilistic Modeling: Uses generative models like Gaussian Processes (e.g., Palantir) or Bayesian inference to model uncertainty in cell states and transition probabilities.

- Neural Ordinary Differential Equations (Neural ODEs): A breakthrough framework that uses neural networks to parameterize the derivatives of cell state change. It learns a continuous-time model of cellular dynamics from snapshot data (e.g., dyntaint, NeuralODE).

- Autoencoder-Based Architectures: Variational Autoencoders (VAEs) and their extensions learn a low-dimensional latent space where the trajectory structure is explicitly modeled. Methods like scVITAE, TASL, or CellRank use VAEs to capture non-linear manifolds and probabilistic cell fate biases.

- RNA Velocity-Informed Models: AI models integrate RNA velocity—which estimates the rate of gene expression change from spliced/unspliced mRNA counts—to guide trajectory inference towards biologically plausible directions of state change (e.g., CellRank 2, VeloVAE).

Comparative Analysis of Select AI-Powered TI Tools:

| Tool (Year) | Core AI/ML Methodology | Key Strength | Scalability (Cell Count) | Output Type | Disease Application Example |

|---|---|---|---|---|---|

| Monocle 3 (2020) | Graph Learning + UMAP | Robust branching analysis, complex topologies | >1 Million | Tree/Grap h | COPD progression from lung cells |

| PAGA (2019) | Graph Abstraction | Preserves global topology, model-agnostic | >1 Million | Abstracted Graph Map | Atlas-level integration, e.g., COVID-19 immune atlas |

| Palantir (2019) | Gaussian Processes + Diffusion Maps | Quantifies differentiation potential & uncertainty | ~50,000 | Probabilistic Paths | Cancer stem cell differentiation in AML |

| CellRank 2 (2023) | Kernel-Based + ML (e.g., VAE, GPs) | Integrates multi-optic data, velocity, & lineages | >500,000 | Macrostates, Fate Probabilities | Heart development & congenital disease |

| Dynamo (2022) | Neural ODEs + Analytical Formulations | Predicts future cell states & perturbation effects | ~100,000 | Vector Field, Trajectories | Modeling reprogramming to iPSCs |

Experimental Protocol for Trajectory Inference & Validation

A robust TI study requires careful experimental design and computational validation.

Standard Computational Workflow:

- Data Preprocessing: Quality control, normalization (e.g., SCTransform), and highly variable gene selection.

- Dimensionality Reduction: Use PCA, or an autoencoder (e.g., scVI) for denoising and compression.

- AI-Powered Trajectory Inference: Apply chosen TI algorithm (e.g., Monocle 3, CellRank) to infer pseudotime and graph structure.

- Gene Dynamics Analysis: Fit generalized additive models (GAMs) or use neural ODEs to identify genes dynamically regulated along pseudotime.

- Validation & Interpretation: Use held-out genes, RNA velocity (if not integrated), or external knowledge bases (e.g., CytoTRACE for differentiation potency) to assess biological plausibility.

Wet-Lab Validation Protocol: Title: Lineage Tracing Validation of Inferred Hepatocyte Differentiation Trajectory

- Cell Source: Primary human hepatoblasts (HB) in a 3D Matrigel culture system promoting differentiation.

- Barcoding & Time-Course: Lentiviral introduction of a heritable genetic barcode into the HB population. Sample cells at Days 0, 3, 7, 10, and 14 for scRNA-seq.

- Computational Inference: Perform AI-powered TI (using a tool like Palantir or CellRank) on the full, un-barcoded scRNA-seq dataset to predict a trajectory from HB → mature hepatocyte (MH).

- Hypothesis: The TI-predicted trajectory will recapitulate the known temporal order (Day 0 → Day 14).

- Validation: Extract the lineage barcode sequences from the scRNA-seq libraries. Construct a phylogenetic tree of barcodes. Correlate the barcode tree structure with the computationally inferred pseudotime ordering. A high correlation (Spearman's ρ > 0.7) validates the trajectory.

- Functional Assay: FACS-sort cells from predicted early, mid, and late pseudotime bins and assay for albumin secretion (late marker) and CYP450 activity (mature function) to confirm functional progression.

Visualization of Pathways and Workflows

Single-Cell Trajectory Analysis Workflow

Hematopoiesis Trajectory with Disease Perturbation

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in AI-Powered Trajectory Studies | Example Product/Technology |

|---|---|---|

| 10x Genomics Chromium | High-throughput single-cell partitioning & barcoding for generating large-scale scRNA-seq datasets essential for robust TI. | Chromium X Series |

| BD Rhapsody | Alternative platform for high-precision, targeted scRNA-seq, useful for focused trajectory studies on known gene panels. | BD Rhapsody Cartridge |

| Smart-seq2/3 Reagents | Full-length scRNA-seq protocol for high-sensitive analysis of individual cells, crucial for validating lowly expressed key regulators. | Takara Bio SMARTer kits |

| Cell Hashing Antibodies | Multiplex samples with oligonucleotide-tagged antibodies, reducing batch effects and costs for multi-condition trajectory studies. | BioLegend TotalSeq-A |

| Lentiviral Barcoding Libraries | For lineage tracing validation experiments, enabling heritable marking of progenitor cells to ground-truth computational inferences. | Custom sgRNA/library from VectorBuilder |

| Live Cell Dyes (e.g., CFSE) | To track cell division history experimentally, providing proliferation data that can correlate with pseudotime. | Thermo Fisher CellTrace |

| CITE-seq Antibody Panels | Simultaneously profile surface protein expression with transcriptome, adding a crucial modality to define cell states for TI. | BioLegend TotalSeq-C |

| Perturb-seq Pools | CRISPR-based single-cell knockout screens coupled with scRNA-seq, allowing causal inference of gene function on trajectories. | Synthego CRISPR libraries |

| Matrigel / 3D Culture Systems | To maintain primary cell states or drive differentiation ex vivo, creating systems where meaningful trajectories occur. | Corning Matrigel |

| Cell Ranger / STARsolo | Standardized pipelines for initial processing of scRNA-seq data from raw reads to count matrices, the essential input for TI. | 10x Genomics / Public Tool |

Applications in Development and Disease

- Developmental Biology: Mapping the precise lineage decisions in embryogenesis, organogenesis, and tissue regeneration. AI models can predict novel intermediate states and key transcriptional drivers of fate choices.

- Cancer: Reconstructing tumor evolution from initiation, through clonal expansion, to metastasis. TI can identify therapy-resistant subpopulations, their cellular origins, and potential vulnerabilities.

- Neurodegeneration: Modeling the progression of diseases like Alzheimer's from pre-symptomatic to late stages using post-mortem brain cells, identifying early transcriptional shifts.

- Drug Development: Simulating patient-specific cell responses to perturbations in silico. Neural ODE models can predict how a drug might shift a disease trajectory back towards a healthy state, prioritizing candidates.

AI-powered trajectory inference represents a paradigm shift in our ability to decipher the continuum of life and disease from single-cell genomics. By moving beyond static classification to dynamic modeling, these tools provide a causal, mechanistic framework for understanding cellular decision-making. As these methods mature and integrate multi-omic data, they will become indispensable in translational research, from pinpointing the origins of pathological states to designing strategies to redirect cellular fate towards therapeutic outcomes. Within the grand thesis of AI in genomics, TI serves as a critical interpreter, transforming high-dimensional snapshots into the moving pictures of biology.

The advent of multimodal single-cell technologies represents a paradigm shift in genomics, moving beyond gene expression profiling to capture a unified molecular and spatial portrait of cellular identity. This whitepaper provides a technical guide to integrating CITE-seq (Cellular Indexing of Transcriptomes and Epitopes by Sequencing), ATAC-seq (Assay for Transposase-Accessible Chromatin by Sequencing), and Spatial Transcriptomics. Framed within the broader thesis that AI is the essential engine for synthesizing these complex, high-dimensional datasets, we detail methodologies, analysis pipelines, and computational tools that empower researchers to deconvolute the intricate mechanisms governing cell state, fate, and function in development, disease, and drug response.

Single-cell transcriptomics has revolutionized biology but offers a limited view. True cellular understanding requires concurrent measurement of the genome (via chromatin accessibility), proteome (via surface protein abundance), and transcriptome within a native spatial context. The simultaneous generation of these data modalities creates an integration challenge that is fundamentally computational. AI and machine learning (ML) are no longer just advantageous but necessary to model the non-linear relationships between these layers, disentangle biological signal from technical noise, and predict novel cellular behaviors. This integration is critical for drug development, enabling target identification, mechanism-of-action studies, and patient stratification with unprecedented resolution.

Core Technologies & Data Structures

CITE-seq: Paired RNA and Surface Protein Measurement

CITE-seq uses oligonucleotide-tagged antibodies to quantify surface protein abundance alongside transcriptomes in single cells within the same droplet-based sequencing run.

Key Experimental Protocol:

- Cell Preparation: Generate a single-cell suspension with viability >90%.

- Antibody Staining: Incubate cells with a cocktail of ~100-200 DNA-barcoded antibodies (TotalSeq from BioLegend or similar) for 30 min on ice.

- Washing: Remove unbound antibodies with multiple PBS+BSA buffer washes.

- Co-encapsulation: Load stained cells, RT/PCR reagents, and CITE-seq-enabled gel beads (10x Genomics Feature Barcode technology) into a microfluidic chip.

- Library Preparation: Generate separate but linked libraries:

- Gene Expression Library: Poly-A capture of mRNA transcripts.

- Antibody-Derived Tag (ADT) Library: PCR amplification of antibody barcodes.

- Sequencing: Pooled libraries are sequenced on platforms like Illumina NovaSeq. ADT libraries require a lower sequencing depth (~5,000 reads/cell) compared to cDNA.

scATAC-seq: Chromatin Accessibility Profiling

scATAC-seq identifies open chromatin regions, marking active regulatory elements (promoters, enhancers) using a hyperactive Tn5 transposase.

Key Experimental Protocol (10x Genomics Chromium Single Cell ATAC Solution):

- Nuclei Isolation: Lyse cells and isolate intact nuclei in a cold, isotonic buffer.

- Transposition: Incubate nuclei with engineered Tn5 transposase loaded with sequencing adapters. Tn5 simultaneously fragments accessible DNA and inserts adapters.

- Barcoding & Amplification: Use microfluidics to partition nuclei into gel beads-in-emulsion (GEMs), where transposed DNA fragments are barcoded with a unique cell identifier and amplified via PCR.

- Library Construction: Purify and size-select fragments (primarily 100–600 bp).

- Sequencing: Paired-end sequencing is required to map fragment boundaries.

Spatial Transcriptomics: Mapping Gene Expression in Tissue Context

Technologies like Visium (10x Genomics) or Slide-seq provide spatially resolved, genome-wide expression data.

Key Experimental Protocol (Visium Spatial Gene Expression):

- Tissue Preparation: Flash-freeze or OCT-embed fresh tissue. Cryosection at 10 µm thickness onto Visium slides containing ~5,000 barcoded spots (55 µm diameter each).

- Fixation & Staining: H&E staining for histology-guided annotation.

- Permeabilization: Optimize time to release mRNA from tissue.

- Reverse Transcription: Released mRNA is captured by spot-specific barcoded oligo-dT primers and reverse-transcribed in situ.

- cDNA Synthesis & Library Prep: Second-strand synthesis, denaturation, and amplification to create a sequencing library.

- Sequencing & Alignment: Sequence library and align reads to genome and spot-specific spatial barcodes.

Table 1: Comparison of Core Multimodal Single-Cell Technologies

| Technology | Measured Modality | Typical Cells/Experiment | Key Readout | Primary Application | Key Limitation |

|---|---|---|---|---|---|

| CITE-seq | Transcriptome + Surface Proteome | 5,000 - 20,000 | UMI counts (RNA), ADT counts (Protein) | Immune phenotyping, cell surface target validation | Limited to pre-defined antibody panel (~200 proteins) |

| scATAC-seq | Chromatin Accessibility | 5,000 - 30,000 | DNA fragment counts in open chromatin peaks | Regulatory network inference, TF activity | Sparse data, challenging integration with RNA |

| Spatial Transcriptomics (Visium) | Transcriptome + Spatial Location | ~5,000 spots (multiple cells/spot) | UMI counts per spatial barcode | Tumor microenvironments, developmental biology | Spot resolution > single-cell; lower sensitivity |

Table 2: AI/ML Tools for Multimodal Data Integration

| Tool | Primary Method | Input Data Types | Key Output | Reference (Year) |

|---|---|---|---|---|

| Seurat v5 | Canonical Correlation Analysis (CCA), Reciprocal PCA, Weighted Nearest Neighbors (WNN) | RNA, ADT, ATAC (peaks), Spatial | Unified cell clusters, multimodal UMAPs | Hao* et al., Nature (2024) |

| TotalVI | Variational Autoencoder (VAE) | RNA (scVI), ADT | Denoised protein expression, joint latent representation | Gayoso* et al., Nat. Commun. (2021) |

| MultiVI | Deep probabilistic model (VAE) | RNA, ATAC | Joint cell embedding, imputed accessibility | Ashuach* et al., BioRxiv (2022) |

| SpaGCN | Graph Convolutional Network (GCN) | Spatial Transcriptomics, Histology | Spatial domains, spatially variable genes | Hu et al., Nat. Methods (2021) |

| CellCharter | Context-aware ML | Spatial, Protein (CODEX/IMC), RNA | Cellular niches, neighborhood analysis | Varrone* et al., BioRxiv (2024) |

AI-Driven Integration Workflows & Signaling Pathway Inference

Integration of Paired vs. Unpaired Multimodal Data

AI models must handle data that are either paired (measured from the exact same cell, e.g., CITE-seq) or unpaired (measured from different cells from the same sample, e.g., scRNA-seq + scATAC-seq). For unpaired data, methods like MultiVI or BindSC use transfer learning and mutual nearest neighbors in a shared latent space to align modalities.

Diagram Title: AI Workflow for Integrating Paired and Unpaired Multimodal Data.

From Multimodal Data to Inferred Signaling Pathways

Integrated data can be used to predict cell-cell communication and active signaling pathways. A common approach involves:

- Niche Identification: Use spatial data (or graph-based clustering on RNA/protein) to define cellular neighborhoods.

- Ligand-Receptor Co-expression: Calculate expression of ligands and receptors from RNA or protein data across neighboring cell types.

- Accessibility of Target Genes: Use scATAC-seq to assess chromatin accessibility of pathway target genes, validating downstream activity.

- AI-Based Prediction: Tools like NicheNet or CellChat use prior knowledge networks combined with integrated data to infer biologically relevant signaling.

Diagram Title: Inferring Signaling Pathways from Integrated Multimodal Data.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagent Solutions for Multimodal Single-Cell Experiments

| Item | Vendor/Example | Function in Experiment |

|---|---|---|

| TotalSeq Antibodies | BioLegend | DNA-barcoded antibodies for CITE-seq; bridge protein detection to sequencing. |

| Chromium Single Cell Immune Profiling | 10x Genomics | Integrated kit for simultaneous gene expression and surface protein (CITE-seq) detection. |

| Chromium Single Cell ATAC Kit | 10x Genomics | Reagents and beads for generating single-cell chromatin accessibility libraries. |

| Visium Spatial Tissue Optimization Slide | 10x Genomics | Pre-optimization slide to determine ideal tissue permeabilization time. |

| Visium Spatial Gene Expression Slide & Kit | 10x Genomics | Barcoded slide and all reagents for Spatial Transcriptomics library prep. |

| Nuclei Isolation Kits (e.g., Nuclei EZ Lysis) | Sigma-Aldrich | Gentle lysis buffers for isolating intact nuclei for scATAC-seq. |

| Tn5 Transposase | Illumina (Nextera) / DIY | Engineered enzyme for simultaneous fragmentation and tagging of open chromatin. |

| Dual Index Kit TT Set A | 10x Genomics | Unique dual indices for multiplexing samples in scATAC-seq and other assays. |

| RiboGuard RNase Inhibitor | Takara Bio | Critical for preserving RNA integrity during lengthy multimodal protocols. |

| BSA (Nuclease-Free) | New England Biolabs | Used in wash buffers to reduce non-specific binding of antibodies in CITE-seq. |

The integration of CITE-seq, ATAC-seq, and Spatial Transcriptomics data transcends the limitations of any single modality, offering a near-comprehensive view of cellular state. However, the complexity and scale of such data make traditional analysis intractable. As detailed in this guide, the path forward is inextricably linked to the development and application of sophisticated AI models—from variational autoencoders to graph neural networks. For the drug development professional, this integration enables the identification of novel combinatorial biomarkers, the high-resolution mapping of drug effects across cellular networks, and the development of more predictive in silico models of disease. The future of single-cell genomics is not just multimodal; it is intelligently integrated through artificial intelligence.

Predictive Modeling for Disease Mechanisms and Drug Response

This whitepaper details the integration of predictive modeling into single-cell genomics to elucidate disease mechanisms and drug responses. Framed within a broader thesis on AI in single-cell research, this guide provides the technical framework for researchers and drug development professionals to build, validate, and deploy models that translate high-dimensional cellular data into actionable biological insights and therapeutic predictions.

Foundational Data Types and Quantitative Landscape

Predictive modeling in this domain relies on integrating multimodal single-cell data. The table below summarizes core quantitative data types and their characteristics.

Table 1: Core Single-Cell Data Types for Predictive Modeling

| Data Modality | Typical Scale (Cells x Features) | Key Predictive Features | Primary Modeling Use |

|---|---|---|---|

| scRNA-seq | 10^4 - 10^6 cells x 15,000-30,000 genes | Gene expression counts, Spliced/Unspliced ratios | Cell state identification, trajectory inference, differential expression. |

| scATAC-seq | 10^4 - 10^5 cells x 500,000+ peaks | Chromatin accessibility peaks, motif activities | Regulatory network inference, enhancer-gene linkage. |

| CITE-seq/REAP-seq | 10^4 - 10^5 cells x 100-500 proteins | Surface protein abundance (ADT counts) | Phenotypic anchoring, cell surface profiling. |

| Perturb-seq/CRISPR screens | 10^5 - 10^7 cells x 100-1,000 guides | Gene expression + perturbation identity | Causal gene function, genetic interaction networks. |

| Drug Response (sc) | 10^3 - 10^4 cells x 10-100 compounds | Post-treatment transcriptomic profiles | Drug mechanism of action, resistance pathways. |

Core Methodological Framework

Experimental Protocol: Single-Cell Drug Perturbation Screening

A key experiment for modeling drug response involves exposing a diseased cell population (e.g., primary cancer cells or engineered tissue models) to a library of compounds at multiple doses, followed by single-cell transcriptomic profiling.

Detailed Protocol:

- Cell Preparation: Culture target cell population (e.g., patient-derived organoids, PBMCs). Ensure viability >95%.

- Perturbation: Plate cells in 384-well format. Using a liquid handler, add compounds from a predefined library (e.g., FDA-approved drugs, targeted inhibitors) across a 4-point dilution series (e.g., 10 nM, 100 nM, 1 µM, 10 µM). Include DMSO-only wells as controls. Incubate for a predetermined time (e.g., 24-72 hours).

- Single-Cell Library Preparation: Pool cells from all wells, ensuring equal representation. Perform viability staining and sort live cells. Generate single-cell RNA-seq libraries using a platform like 10x Genomics Chromium Next GEM, incorporating sample multiplexing oligos (e.g., CellPlex) to retain well-of-origin information.

- Sequencing: Sequence libraries to a target depth of 50,000 reads per cell on an Illumina NovaSeq platform.

- Computational Demultiplexing: Use tools like

CellRanger mkfastqandCellRanger countfor initial processing. EmploySoupXorDecontXto remove ambient RNA. Demultiplex samples usingCellBenderorSeurat’sHTODemuxfunction to assign each cell to its original drug treatment condition.

Predictive Model Architectures and Training

Workflow Diagram:

Diagram Title: Predictive Modeling Workflow from Data to Tasks

Common Model Architectures:

- Variational Autoencoders (VAEs): (

scVI,totalVI) Learn a low-dimensional, probabilistic latent representation of single-cell data. Used for batch correction, denoising, and as a feature extractor for downstream models. - Graph Neural Networks (GNNs): Model cells as a graph (nodes=cells, edges=similarities). Powerful for capturing neighborhood dependencies and predicting the effect of perturbations on cellular communities.

- Transformer-based Models: (

scBERT,Geneformer) Pre-trained on large-scale scRNA-seq corpora. Fine-tuned for specific tasks like predicting cell state transitions upon drug treatment.

Training Protocol:

- Data Partitioning: Split cells (not samples) into training (70%), validation (15%), and held-out test (15%) sets. Ensure all conditions are represented in each split.

- Hyperparameter Optimization: Use Bayesian optimization (e.g.,

Optuna) over 50-100 trials to tune learning rate, hidden layer dimensions, and dropout rates. Monitor validation loss. - Regularization: Employ techniques like early stopping, dropout, and weight decay to prevent overfitting to technical noise.

- Interpretability: Apply post-hoc methods like SHAP (SHapley Additive exPlanations) or integrated gradients on the latent space to identify feature genes driving predictions.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Predictive Modeling Experiments

| Item | Function | Example Product/Catalog |

|---|---|---|

| 10x Genomics Chromium Next GEM Chip K | Partitions single cells into nanoliter-scale droplets for barcoded library preparation. | 10x Genomics, 1000269 |

| Cell Multiplexing Oligos (CMOs) | Allows sample pooling by labeling cells from different conditions with unique lipid-tagged barcodes. | 10x Genomics CellPlex Kit, 1000265 |

| Chromium Next GEM Single Cell 5' Reagent Kit | Reagents for generating gene expression, immune profiling, and CRISPR screening libraries. | 10x Genomics, 1000263 |

| Live/Dead Viability Stain | Fluorescent dye (e.g., DRAQ7, Sytox Green) to exclude dead cells during FACS sorting. | BioLegend, 424001 |

| Compound Library | Curated set of pharmacologically active molecules for perturbation screening. | Selleckchem, L3000 (FDA-approved) |

| CITE-seq Antibody Panel | Oligo-tagged antibodies for simultaneous surface protein measurement. | BioLegend TotalSeq-C |

| Nucleic Acid Stain | Accurate cell counting and viability assessment prior to loading. | Thermo Fisher, Acridine Orange/Propidium Iodide (AOPI) stain |

| RNase Inhibitor | Protects RNA integrity during cell processing and library prep. | Takara, 2313B |

Pathway Mapping and Mechanism Inference

A critical output is mapping model predictions onto biological pathways. For instance, a model predicting resistance to a BRAF inhibitor in melanoma might implicate MAPK pathway reactivation or immune evasion pathways.

Signaling Pathway Diagram:

Diagram Title: MAPK Signaling Pathway and Inhibitor Feedback

Validation and Translation

Table 3: Model Validation Metrics and Benchmarks

| Validation Type | Metric | Current Benchmark (State-of-the-Art) | Interpretation |

|---|---|---|---|

| Cell State Prediction | Adjusted Rand Index (ARI) | 0.85-0.95 on annotated PBMC datasets | Measures clustering accuracy against gold-standard labels. |

| Drug Response Prediction | Root Mean Square Error (RMSE) of predicted vs. measured IC50 | ~0.3 log(µM) in large-scale screens (e.g., LINCS) | Accuracy of dose-response prediction. |

| Perturbation Effect | Area Under Precision-Recall Curve (AUPRC) | 0.7-0.8 for predicting essential genes in Perturb-seq | Ability to identify true causal hits. |

| Clinical Outcome Correlation | Concordance Index (C-index) | >0.65 in retrospective patient cohort studies | Predictive power for patient survival or treatment benefit. |

Validation Protocol: In Vitro to Ex Vivo Correlation

- Model Prediction: Use a trained model to predict top 3 candidate compounds for a new patient-derived cancer line.

- Ex Vivo Testing: Treat an aliquot of the patient's viable tumor cells (in 3D culture) with the predicted compounds.

- Endpoint Assay: After 96 hours, measure cell viability via ATP-based luminescence (CellTiter-Glo).

- Correlation Analysis: Calculate Pearson correlation between model-predicted sensitivity scores (e.g., latent space shift magnitude) and actual ex vivo viability reduction. A correlation of r > 0.5 is considered promising for further development.

The identification of novel, high-confidence therapeutic targets remains a primary bottleneck in oncology and immunology drug development. Traditional bulk sequencing masks critical cellular heterogeneity, while manual analysis of high-dimensional single-cell RNA sequencing (scRNA-seq) data is intractable. This case study positions itself within a broader thesis on AI in single-cell genomics, demonstrating how integrated computational pipelines are transforming target identification from a discovery-phase to a validation-ready workflow. We present a technical guide on implementing an AI-augmented framework that leverages multi-omic single-cell data to prioritize actionable targets.

Core Methodology: An Integrated Computational-Experimental Pipeline

The accelerated workflow integrates three core phases: Atlas Construction, AI-Powered Candidate Prioritization, and Functional Validation.

2.1. Phase I: Construction of a Multi-Condition Single-Cell Atlas

- Experimental Protocol:

- Sample Procurement: Collect fresh tumor and matched non-malignant tissue from patients (e.g., NSCLC, melanoma) and from relevant murine models. Include samples from untreated, treated (e.g., checkpoint inhibitor), and relapsed conditions.

- Cell Dissociation & Viability: Process tissues using gentle mechanical and enzymatic dissociation (e.g., Miltenyi Biotec's Tumor Dissociation Kit). Pass cells through a 70µm strainer and assess viability (>90% via trypan blue).

- Multiplexed scRNA-seq Library Preparation: Use a cell-hashing approach (e.g., BioLegend TotalSeq-B antibodies) to pool samples from up to 12 conditions, reducing batch effects. Perform library preparation using the 10x Genomics Chromium Next GEM Single Cell 5' v3 kit with Feature Barcode technology for simultaneous gene expression, surface protein (CITE-seq), and TCR/BCR profiling.

- Sequencing: Sequence libraries on an Illumina NovaSeq 6000 to a minimum depth of 50,000 reads per cell.

2.2. Phase II: AI-Powered Target Candidate Prioritization

- Computational Protocol:

- Preprocessing & Integration: Process raw data using Cell Ranger. Apply SCTransform normalization and integrate datasets across conditions using Harmony or Seurat's integration anchors to remove technical variance.

- Unsupervised Clustering & Annotation: Perform PCA, UMAP reduction, and Leiden clustering. Annotate cell types using a reference-based (e.g., SingleR) and marker-based approach, cross-referenced with CITE-seq protein data.

- Differential Analysis & Trajectory Inference: Identify differentially expressed genes (DEGs) between critical populations (e.g., exhausted CD8+ T cells vs. functional memory T cells) using MAST or Wilcoxon rank-sum test. Apply pseudotime analysis (Monocle3, PAGA) to model cell state transitions.

- AI-Driven Prioritization Module:

- Input Features: For each gene, compile: (i) Log2 fold-change & adjusted p-value from DEG analysis, (ii) Expression specificity score (e.g., Jensen-Shannon divergence), (iii) Pathway enrichment (Reactome, MSigDB), (iv) Ligand-Receptor interaction score (CellPhoneDB, NicheNet), (v) CRISPR screen fitness score (from DepMap portal), (vi) Druggability score (from databases like DGIdb).

- Model Training: Train a gradient-boosted tree model (XGBoost) on labeled historical data where targets succeeded or failed in preclinical validation. Use SHAP (Shapley Additive exPlanations) values to interpret feature importance for each prediction.

2.3. Phase III: High-Throughput In Vitro Validation

- Experimental Protocol (Pooled CRISPR Screening):

- Library Design: Synthesize a custom CRISPRko library targeting the top 200 AI-prioritated genes plus 50 non-targeting controls and 20 essential/positive controls.

- Cell Transduction & Selection: Transduce a relevant in vitro co-culture system (e.g., patient-derived organoids with autologous T cells) with the lentiviral sgRNA library at a low MOI to ensure single integration. Select with puromycin for 72 hours.

- Phenotypic Selection: Culture cells for 14-21 days, applying selection pressure (e.g., cytokine withdrawal, chemotherapeutic agent). Harvest genomic DNA at multiple time points.

- sgRNA Quantification: Amplify integrated sgRNA sequences via PCR and sequence on an Illumina MiSeq. Quantify sgRNA abundance depletion/enrichment using MAGeCK-VISPR pipeline.

Data Presentation

Table 1: Summary of AI-Prioritized Target Candidates from NSCLC scRNA-Seq Atlas

| Target Gene | Cell Type Specificity | DEG (Log2FC) | Pathway Association | Ligand-Receptor Role | In Vitro Screen Fitness Score (β) | AI Model Priority Score |

|---|---|---|---|---|---|---|

| TIGIT | Exhausted CD8+ T cells | 4.2 | Immune Checkpoint | Receptor (PVR ligand) | -1.85 | 0.94 |

| LAIR1 | Tumor-Associated Macrophage | 3.8 | Collagen Binding / Immunoregulation | Receptor (Collagen ligand) | -1.21 | 0.87 |

| CD38 | Plasma cell, exhausted T cell | 2.5 | NAD+ Metabolism | Ectoenzyme | -0.98 | 0.79 |

| GPRC5A | Malignant Epithelial | 5.1 | Retinoic Acid Response | Orphan GPCR | -2.34 | 0.92 |

Table 2: Pooled CRISPR Screen Validation Results (Day 21)

| Target Class | # Genes Targeted | # Hits (β < -0.5, p<0.01) | Validation Rate | Top Validated Hit |

|---|---|---|---|---|

| AI-Prioritized | 200 | 47 | 23.5% | LAIR1 |

| Random Genome | 200 | 12 | 6.0% | N/A |

| Positive Controls (Essential) | 20 | 18 | 90.0% | POLR2A |

Visualizations

Title: Three-Phase AI-Driven Target ID Workflow (76 chars)

Title: LAIR1 Inhibitory Signaling Pathway in T Cells (66 chars)

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Solution | Vendor Example | Primary Function in Workflow |

|---|---|---|

| Single Cell 5' Kit v3 with Feature Barcode | 10x Genomics | Enables simultaneous capture of 5' gene expression and surface protein (CITE-seq) or TCR data from thousands of single cells. |

| TotalSeq-B Antibodies | BioLegend | Antibody-derived tags for CITE-seq, allowing immunophenotyping alongside transcriptomic profiling. |

| Tumor Dissociation Kit, human | Miltenyi Biotec | Optimized enzyme blend for gentle tissue dissociation to maximize viable single-cell yield from complex solid tumors. |

| Chromium Next GEM Chip K | 10x Genomics | Microfluidic device for partitioning single cells and barcoded beads into nanoliter-scale Gel Bead-In-EMulsions (GEMs). |

| LentiArray CRISPR Library | Horizon Discovery | Pre-designed, ready-to-use pooled lentiviral sgRNA libraries for targeted or genome-wide knockout screens. |

| Cell Staining Buffer | Tonbo Biosciences | Flow cytometry-compatible buffer for antibody staining in CITE-seq protocols, minimizing cell loss. |

| MAGeCK-VISPR Software | Open Source | Comprehensive computational pipeline for the analysis and visualization of CRISPR screen sequencing data. |

Navigating the Noise: Practical Strategies for Robust AI Analysis

The integration of Artificial Intelligence (AI) into single-cell genomics represents a paradigm shift, enabling the deconvolution of biological complexity at unprecedented resolution. A core thesis of modern computational biology posits that AI is not merely an analytical tool but a foundational component for validating biological discovery by disentangling true biological signal from pervasive technical noise. Among the most formidable technical challenges is the batch effect—systematic non-biological variation introduced due to differences in sample processing times, reagents, sequencing platforms, or laboratory conditions. This whitepaper provides an in-depth technical guide to AI-driven strategies designed to correct for these artifacts, thereby ensuring robust, reproducible, and biologically accurate insights in research and drug development.

The Nature and Impact of Batch Effects

Batch effects manifest as shifts in gene expression distributions that are correlated with experimental batches rather than biological phenotypes. In single-cell RNA sequencing (scRNA-seq), they can obscure cell-type identification, confound differential expression analysis, and lead to false conclusions. Quantitative measures of batch effect severity include:

- Principal Component Analysis (PCA) Variance Explained: The proportion of variance in the first few principal components attributed to batch metadata.

- k-Nearest Neighbor Batch Effect Test (kBET): A statistical test that evaluates whether the local distribution of batch labels matches the global distribution.

- Silhouette Width: Measures cell-type purity within clusters; lower scores indicate batch mixing within cell-type clusters.

- Local Inverse Simpson’s Index (LISI): Quantifies the effective number of batches or cell types in a local neighborhood.

Table 1: Quantitative Metrics for Batch Effect Assessment

| Metric | Purpose | Ideal Value | Interpretation of Poor Performance |

|---|---|---|---|

| Batch PCA Variance | Quantifies global batch signal | < 5% in top PCs | High % variance indicates strong batch effect. |

| kBET Rejection Rate | Tests local batch mixing | ~0.05 (alpha level) | Rate >> 0.05 indicates significant batch separation. |

| Cell-type Silhouette | Measures cluster purity | > 0.5 (High purity) | Low score indicates cell types split by batch. |

| Integration LISI (iLISI) | Measures batch mixing | High (close to # of batches) | Low score indicates poor batch integration. |

| Cell-type LISI (cLISI) | Measures cell-type separation | Low (close to 1) | High score indicates cell types are mixed. |

AI-Driven Correction Strategies: Methodologies and Protocols

Deep Learning-Based Integration: scVI and scANVI

Experimental Protocol:

- Input Data Preparation: Form a gene expression count matrix (cells x genes) with associated batch and, if available, cell-type label vectors.

- Model Architecture Specification (scVI):

- Encoder: A neural network maps observed expression data to a distribution in a low-dimensional latent space (z), parameterized by a Gaussian. Batch information is used as an input covariate.

- Latent Space: The low-dimensional representation