AI-Powered Biomarker Discovery in Cancer: Revolutionizing Precision Oncology with Machine Learning

Abstract

This article provides a comprehensive overview of AI-driven predictive biomarker discovery in oncology, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of predictive biomarkers and the role of artificial intelligence, delves into core methodologies like deep learning and multi-omics integration, addresses key challenges in model optimization and data quality, and critically evaluates validation frameworks and comparative performance against traditional methods. The synthesis aims to serve as a strategic guide for implementing and validating AI-powered biomarker pipelines to accelerate the development of personalized cancer therapies.

From Data to Insight: Understanding AI's Role in Predictive Biomarker Discovery for Cancer

Defining Predictive vs. Prognostic Biomarkers in Modern Oncology

In the era of precision oncology, the accurate distinction between predictive and prognostic biomarkers is fundamental to therapeutic decision-making and clinical trial design. The core thesis of this document is that AI-driven discovery platforms are revolutionizing this field by decoding complex, high-dimensional omics data to identify novel biomarkers with higher specificity. This technical guide delineates the definitions, validation pathways, and experimental protocols essential for modern biomarker research, framed within the context of leveraging artificial intelligence to accelerate and refine this critical process.

Definitions and Key Distinctions

- Prognostic Biomarker: Informs about the natural history of the disease (e.g., overall survival, risk of recurrence) in an untreated patient or a patient treated with standard-of-care. It provides information on the inherent aggressiveness of the cancer.

- Predictive Biomarker: Indicates the likelihood of benefit (or harm) from a specific therapeutic intervention. It provides information on the drug-tumor interaction.

Table 1: Core Differences Between Prognostic and Predictive Biomarkers

| Feature | Prognostic Biomarker | Predictive Biomarker |
|---|---|---|
| Primary Question | What is the likely disease course/outcome? | Who will respond to a specific therapy? |
| Clinical Utility | Informs prognosis; may guide intensity of standard therapy (e.g., adjuvant chemotherapy). | Informs therapy selection; is the basis for a targeted therapy. |
| Treatment Context | Independent of a specific novel therapy. | Inherently linked to a specific therapeutic agent. |
| Example | High Ki-67 index in breast cancer indicating higher risk of recurrence. | HER2 amplification predicting response to trastuzumab. |
| Statistical Test | Significant main effect in a multivariable model. | Significant treatment-by-biomarker interaction effect. |
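The statistical-test row can be written as a single Cox model. The notation here is ours, not the source's: T is a 0/1 indicator for the therapy of interest, B a 0/1 biomarker indicator, and h_0(t) the baseline hazard.

```latex
h(t \mid T, B) = h_0(t)\,\exp\!\left(\beta_T T + \beta_B B + \beta_{TB}\, T B\right),
\qquad
\mathrm{HR}_{T \mid B=0} = e^{\beta_T},
\qquad
\mathrm{HR}_{T \mid B=1} = e^{\beta_T + \beta_{TB}}
```

A prognostic effect appears as β_B ≠ 0 (the biomarker shifts risk in the reference arm), whereas a predictive effect requires β_TB ≠ 0, because only then does the treatment hazard ratio differ between biomarker strata.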

Current Quantitative Landscape

Recent analyses highlight the growing prevalence and impact of biomarker-driven oncology.

Table 2: Quantitative Snapshot of Biomarkers in Oncology (2020-2024)

| Metric | Value | Source / Context |
|---|---|---|
| FDA-Approved Predictive Biomarkers (Total) | ~50 | Across all solid tumors and hematologic malignancies. |
| Average Acceleration in Drug Development | 25-30% | When paired with a validated predictive biomarker. |
| Biomarker Candidates Published from AI/ML Analyses (2023) | 1,200+ | Novel associations identified via ML models in public omics datasets. |
| Clinical Trials with Biomarker Stratification (2024) | ~65% of Phase III trials | Up from ~45% in 2018. |
| Concordance of AI-Discovered Targets with Wet-Lab Validation | ~40-60% | Highlighting the need for rigorous experimental follow-up. |

Experimental Protocols for Validation

Protocol for Retrospective Prognostic Biomarker Analysis

Objective: To determine if a candidate biomarker (e.g., gene expression signature) is independently associated with clinical outcome (e.g., Disease-Free Survival, DFS) in a cohort treated with standard therapy.

- Cohort Selection: Identify a formalin-fixed, paraffin-embedded (FFPE) tissue cohort with annotated long-term clinical outcome data (minimum 5-year follow-up) from patients treated with uniform standard-of-care.

- Biomarker Assay: Perform the candidate assay (e.g., RNA-seq, multiplex immunohistochemistry) under standardized, CLIA-like conditions. Technicians should be blinded to clinical outcomes.

- Dichotomization: Using a pre-specified cut-point (e.g., median, optimal cut-point from a training set), classify samples as "Biomarker High" or "Biomarker Low."

- Statistical Analysis:

- Perform Kaplan-Meier analysis to estimate survival curves for each group. Compare using the log-rank test.

- Conduct multivariable Cox proportional hazards regression, adjusting for established clinicopathological factors (e.g., stage, age, performance status). A biomarker hazard ratio (HR) that remains significantly different from 1 (e.g., p < 0.05) after adjustment indicates independent prognostic value.
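The dichotomization, Kaplan-Meier, and log-rank steps above can be sketched in plain Python. This is a minimal illustration, not a validated implementation: `dichotomize`, `kaplan_meier`, and `logrank_test` are hypothetical helper names, the cut-point is assumed to be pre-specified, and events are coded 1 = event, 0 = censored. The multivariable Cox step is not shown.

```python
import math

# Illustrative sketch; dichotomize, kaplan_meier and logrank_test are hypothetical helpers.

def dichotomize(values, cutpoint):
    """Label each sample 1 ("Biomarker High") if above the pre-specified cut-point, else 0."""
    return [1 if v > cutpoint else 0 for v in values]


def kaplan_meier(times, events):
    """Return [(t, S(t))] at each distinct event time; events: 1 = event, 0 = censored."""
    data = sorted(zip(times, events))
    n_at_risk = len(data)
    surv, curve, i = 1.0, [], 0
    while i < len(data):
        t = data[i][0]
        deaths = removed = 0
        while i < len(data) and data[i][0] == t:   # group tied times
            deaths += data[i][1]
            removed += 1
            i += 1
        if deaths:
            surv *= 1.0 - deaths / n_at_risk      # product-limit step
            curve.append((t, surv))
        n_at_risk -= removed                       # events and censorings leave the risk set
    return curve


def logrank_test(times, events, groups):
    """Two-group log-rank test (groups coded 0/1). Returns (chi2, p) with 1 degree of freedom."""
    obs_minus_exp, var = 0.0, 0.0
    for t in sorted({ti for ti, e in zip(times, events) if e}):
        at_risk = [g for ti, g in zip(times, groups) if ti >= t]
        n, n1 = len(at_risk), sum(at_risk)
        d = sum(1 for ti, e in zip(times, events) if ti == t and e)
        d1 = sum(1 for ti, e, g in zip(times, events, groups) if ti == t and e and g == 1)
        obs_minus_exp += d1 - d * n1 / n           # observed minus expected events in group 1
        if n > 1:
            var += d * (n1 / n) * (1 - n1 / n) * (n - d) / (n - 1)  # hypergeometric variance
    chi2 = obs_minus_exp ** 2 / var
    return chi2, math.erfc(math.sqrt(chi2 / 2))    # upper tail of chi-square with 1 df
```

The p-value uses the identity P(χ²₁ > x) = erfc(√(x/2)), so only the standard library is needed; an established survival package would normally be used for real cohorts.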

Protocol for Predictive Biomarker Validation in a Randomized Trial

Objective: To test if biomarker status modifies the treatment effect of a novel therapy (Drug X) vs. standard therapy (Drug S).

- Trial Design: Ideally, a prospective-retrospective analysis from a Phase III randomized controlled trial (RCT) where patients were randomized to Drug X vs. Drug S.

- Biomarker Testing: Perform the assay on baseline tumor samples from all available patients in the RCT. The testing lab must be blinded to both treatment arm and outcome.

- Analysis of Interaction:

- Stratify patients into four groups: Biomarker High or Low, treated with Drug X or Drug S.

- The primary test is for a statistical interaction between treatment assignment and biomarker status in a Cox model.

- A significant interaction term (p < 0.05) is the hallmark of a predictive biomarker. Superiority of Drug X over Drug S in the "Biomarker High" group, but not in the "Low" group, provides clinical evidence.
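The interaction analysis can be sketched with a small Cox fit written directly in NumPy. Everything here is illustrative: the helper names are hypothetical, ties use the Breslow convention, and the interaction is assessed with a Wald test on its coefficient. A real analysis would use an established package such as the R survival package or Python lifelines.

```python
import math
import numpy as np

# Hypothetical helpers for illustration; use an established survival package for real analyses.

def _cox_score_info(beta, X, time, event):
    """Score vector and observed information of the Cox log partial likelihood (Breslow ties)."""
    w = np.exp(X @ beta)
    p = X.shape[1]
    score, info = np.zeros(p), np.zeros((p, p))
    for i in np.flatnonzero(event):
        at_risk = time >= time[i]                 # risk set at the i-th event time
        wr, Xr = w[at_risk], X[at_risk]
        s0 = wr.sum()
        xbar = wr @ Xr / s0                       # risk-weighted mean covariate vector
        score += X[i] - xbar
        info += (Xr * wr[:, None]).T @ Xr / s0 - np.outer(xbar, xbar)
    return score, info


def fit_cox(X, time, event, tol=1e-8, max_iter=50):
    """Newton-Raphson maximization of the partial likelihood; returns (beta, standard errors)."""
    beta = np.zeros(X.shape[1])
    for _ in range(max_iter):
        score, info = _cox_score_info(beta, X, time, event)
        step = np.linalg.solve(info, score)
        beta = beta + step
        if np.max(np.abs(step)) < tol:
            break
    _, info = _cox_score_info(beta, X, time, event)
    return beta, np.sqrt(np.diag(np.linalg.inv(info)))


def interaction_test(treatment, biomarker, time, event):
    """Wald test of b_TB in h(t) = h0(t) * exp(b_T*T + b_B*B + b_TB*T*B)."""
    T = np.asarray(treatment, dtype=float)        # 1 = Drug X, 0 = Drug S
    B = np.asarray(biomarker, dtype=float)        # 1 = Biomarker High, 0 = Low
    X = np.column_stack([T, B, T * B])
    beta, se = fit_cox(X, np.asarray(time, dtype=float), np.asarray(event, dtype=bool))
    z = beta[2] / se[2]
    return beta, se, math.erfc(abs(z) / math.sqrt(2))   # two-sided normal p-value
```

As a usage example, simulating a hypothetical uncensored trial in which Drug X lowers the hazard only in biomarker-high patients (log-HR of -1 for the T·B term, for instance `time = rng.exponential(1.0 / np.exp(-1.0 * T * B))`) should yield a clearly negative interaction coefficient with a small p-value, while the main-effect coefficients stay near zero.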

Visualization of Concepts and Workflows

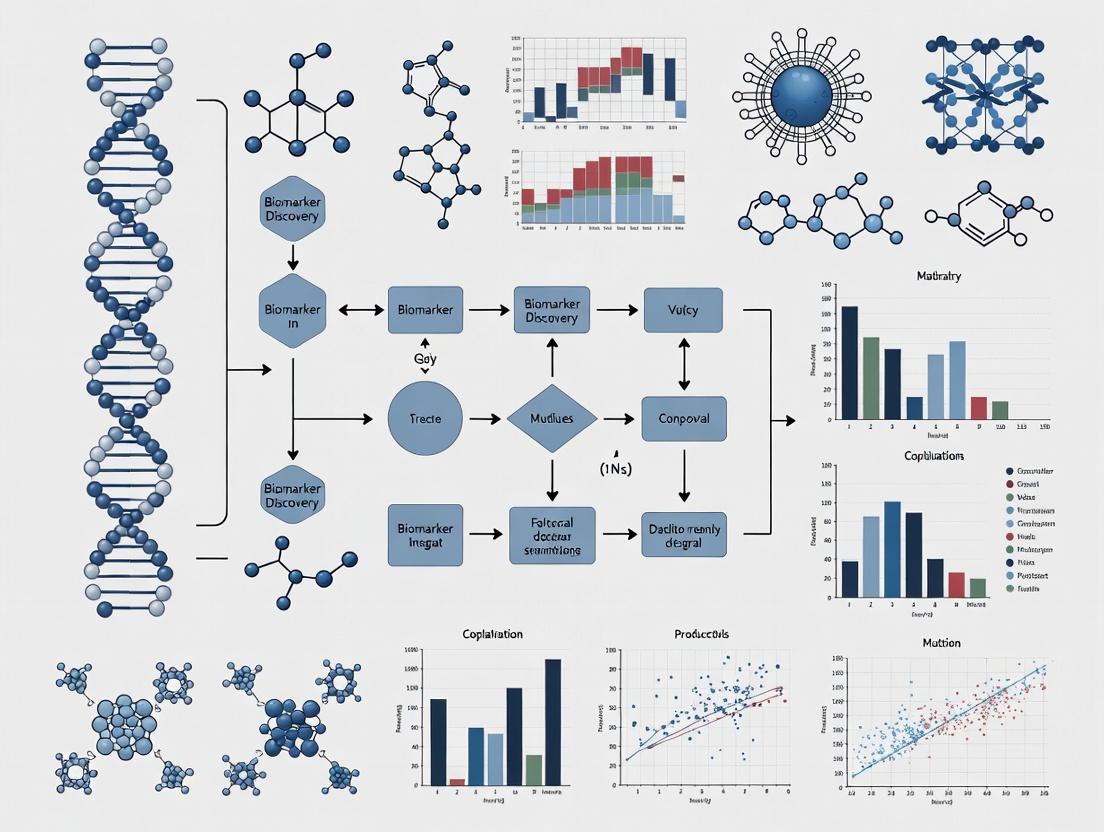

Diagram 1: Clinical Decision Pathway Using Biomarkers

Diagram 2: AI-Driven Biomarker Discovery Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for Biomarker Discovery & Validation Experiments

| Item | Function in Biomarker Research | Example Vendor/Product |
|---|---|---|
| FFPE RNA Extraction Kit | Isolates high-quality, amplifiable RNA from archived clinical tissue samples for expression profiling. | Qiagen RNeasy FFPE Kit; Thermo Fisher RecoverAll Total Nucleic Acid Kit. |
| Multiplex IHC/IF Antibody Panel | Enables simultaneous detection of 4-8 protein biomarkers on a single tissue section, preserving spatial context. | Akoya Biosciences Opal Polychromatic IF Kits; Abcam multiplex IHC kits. |
| NGS Pan-Cancer Panel | Targeted sequencing of several hundred cancer-associated genes for genomic biomarker identification. | Illumina TruSight Oncology 500; FoundationOne CDx. |
| Digital Spatial Profiling (DSP) Reagents | Allows for whole-transcriptome or protein analysis from user-defined regions of interest on an FFPE slide. | NanoString GeoMx Human Whole Transcriptome Atlas; Protein Assay. |
| Organoid Culture Media | Supports the growth of patient-derived tumor organoids for functional validation of biomarker-drug relationships. | STEMCELL Technologies IntestiCult; Corning Matrigel. |
| Single-Cell RNA-seq Library Prep Kit | Facilitates biomarker discovery at single-cell resolution to deconvolute tumor microenvironment contributions. | 10x Genomics Chromium Next GEM Single Cell 3' Kit; BD Rhapsody WTA Kit. |

The central thesis of modern oncology research posits that AI-driven predictive biomarker discovery, powered by the integration of multi-omics data, is essential for decoding tumor heterogeneity, understanding therapeutic resistance, and delivering precision medicine. This whitepaper details how the deluge of data from disparate omics layers provides the necessary substrate for training sophisticated AI models to uncover these critical biomarkers.

The Multi-Omics Data Landscape in Oncology

Each omics layer provides a unique, quantitative snapshot of biological activity. When integrated, they form a multi-dimensional representation of a tumor's state.

Table 1: Key Characteristics of Multi-Omics Data Layers

| Omics Layer | Core Measurement | Typical Data Scale per Sample | Key Technology Platforms | Relevance to Biomarker Discovery |
|---|---|---|---|---|
| Genomics | DNA Sequence & Variation | ~3 Gbp genome; tens of GB of raw reads (30x WGS) | NGS (Illumina), Long-read (PacBio, ONT) | Identifies hereditary risk, somatic driver mutations, copy number alterations. |
| Transcriptomics | RNA Expression Levels | ~0.5-1 GB (RNA-seq) | Bulk/Single-cell RNA-seq, Microarrays | Reveals gene expression signatures, aberrant pathways, immune cell infiltration. |
| Proteomics | Protein Abundance & Modification | Varies (10s MB) | Mass Spectrometry (LC-MS/MS), RPPA, Olink | Directly measures functional effectors, phospho-signaling, drug targets. |
| Imaging | Morphological & Functional Phenotype | >1 GB (WSI, MRI) | Digital Pathology, Radiomics (CT/PET/MRI) | Captures spatial architecture, tumor-stroma interactions, heterogeneity. |

Experimental Protocols for Multi-Omics Data Generation

Integrated Single-Cell Multi-Omics Protocol (CITE-seq)

- Objective: Simultaneously profile transcriptome and surface protein expression in single cells.

- Workflow:

- Cell Suspension Preparation: Generate a viable single-cell suspension from dissociated fresh or frozen tumor tissue.

- Antibody Tagging: Stain cells with a panel of antibodies conjugated to oligonucleotide barcodes (TotalSeq antibodies).

- Library Preparation: Load cells onto a microfluidic chip (10x Genomics). GEMs (Gel Bead-In-Emulsions) are formed, capturing both cellular mRNA and antibody-derived tags.

- Sequencing: Perform next-generation sequencing (Illumina NextSeq/NovaSeq) of two libraries, separated by size during library construction: gene expression (from poly-dT capture) and antibody-derived tags (ADT).

- Data Processing: Use Cell Ranger (10x Genomics) and the Seurat R package to align reads, quantify features, and create a combined matrix of RNA and protein counts per cell.
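The final step, building one matrix from both modalities, can be sketched in NumPy. ADT counts are commonly normalized with a centered log-ratio (CLR) transform; the log1p-based per-cell variant below is an assumption in the spirit of Seurat's CLR option, not a reproduction of its exact code, and `log_normalize`, `clr_normalize`, and `combine_modalities` are hypothetical names. Inputs are assumed to be cells x features count matrices whose rows are already matched by cell barcode.

```python
import numpy as np

# Hypothetical helpers; the CLR variant is an assumption, not Seurat's exact implementation.

def log_normalize(rna_counts, scale=1e4):
    """Scale each cell's RNA counts to `scale` total counts, then apply log1p."""
    counts = np.asarray(rna_counts, dtype=float)
    return np.log1p(counts / counts.sum(axis=1, keepdims=True) * scale)


def clr_normalize(adt_counts):
    """Per-cell centered log-ratio: divide counts by exp(mean(log1p(counts))), then log1p.

    log1p keeps the transform defined when a cell has zero counts for some antibodies."""
    counts = np.asarray(adt_counts, dtype=float)
    geo = np.exp(np.log1p(counts).mean(axis=1, keepdims=True))
    return np.log1p(counts / geo)


def combine_modalities(rna_counts, adt_counts, gene_names, protein_names):
    """Concatenate normalized RNA and ADT features into one cells x features matrix."""
    matrix = np.hstack([log_normalize(rna_counts), clr_normalize(adt_counts)])
    names = [f"RNA:{g}" for g in gene_names] + [f"ADT:{p}" for p in protein_names]
    return matrix, names
```

Prefixing feature names with the modality keeps gene and protein features distinguishable when both measure the same marker (for example, a gene and its encoded surface protein).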

Spatial Transcriptomics (Visium) Protocol

- Objective: Map gene expression within the intact tissue architecture.

- Workflow:

- Tissue Preparation: Flash-freeze or OCT-embed fresh tissue. Cryosection at 10 µm onto Visium spatial gene expression slides.

- Fixation & Staining: Fix sections with methanol and stain with H&E for pathological annotation.

- Permeabilization & cDNA Synthesis: Optimize permeabilization time. Reverse transcription occurs on the slide, where released RNA binds to spatially barcoded oligonucleotides on the surface.

- Sequencing Library Prep: cDNA is harvested, amplified, and prepared for sequencing (Illumina).

- Image & Data Alignment: The H&E image is aligned with the array coordinate system. Sequenced reads are mapped to a reference genome and assigned to specific spatial barcodes (spots).

Mass Spectrometry-Based Proteomics (TMT-LC-MS/MS)

- Objective: Quantify protein abundance and post-translational modifications across multiple samples.

- Workflow:

- Protein Extraction & Digestion: Lyse tissue/cells. Reduce, alkylate, and digest proteins with trypsin.

- Tandem Mass Tag (TMT) Labeling: Label peptides from different samples (e.g., tumor vs. normal, different time points) with unique isobaric chemical tags (TMT 11-plex or 16-plex).

- Fractionation: Pool labeled samples and fractionate via high-pH reverse-phase HPLC to reduce complexity.

- LC-MS/MS Analysis: Analyze fractions on a nano-flow HPLC coupled to an Orbitrap mass spectrometer (e.g., Thermo Scientific Orbitrap Exploris) using data-dependent acquisition (DDA).

- Data Analysis: Use software (MaxQuant, Proteome Discoverer) for peptide identification, TMT reporter ion quantification, and statistical analysis for differential expression.
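After reporter-ion quantification, a common first step is log2 transformation and per-channel median centering to correct for unequal sample loading. The sketch below (hypothetical helper names) assumes a proteins x channels intensity matrix with strictly positive values and NaN for missing entries; it stops at fold changes, and the statistical test for differential expression (for example, a moderated t-test) is not shown.

```python
import numpy as np

# Hypothetical helpers; median centering is one of several possible normalization choices.

def normalize_tmt(reporter_intensities):
    """Log2-transform proteins x channels intensities and median-center each channel."""
    log_int = np.log2(np.asarray(reporter_intensities, dtype=float))
    return log_int - np.nanmedian(log_int, axis=0, keepdims=True)


def log2_fold_change(norm, group_a, group_b):
    """Per-protein mean normalized log2 intensity of group_a channels minus group_b channels."""
    return np.nanmean(norm[:, group_a], axis=1) - np.nanmean(norm[:, group_b], axis=1)
```

For example, with tumor channels `[0, 1, 2]` and normal channels `[3, 4, 5]`, `log2_fold_change(normalize_tmt(x), [0, 1, 2], [3, 4, 5])` returns one tumor-versus-normal log2 fold change per protein.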

AI Model Architectures for Multi-Omics Integration

AI models combine multi-omics layers at different stages of the modeling pipeline (early, intermediate, or late fusion) to derive candidate predictive biomarkers.

Table 2: AI/ML Approaches for Multi-Omics Data Integration

| Model Type | Key Architecture | Input Data | Output/Prediction | Use Case in Oncology |
|---|---|---|---|---|
| Early Fusion | Deep Neural Network (DNN) | Concatenated feature vectors from all omics | Patient stratification, survival risk | Predicting therapy response from bulk genomic + clinical data. |
| Intermediate Fusion | Multimodal Autoencoder | Separate encoders per omic, fused latent space | Latent representation, clustering | Identifying novel subtypes from RNA+DNA methylation data. |
| Late Fusion | Ensemble Models (Random Forest, SVM) | Predictions from separate omics-specific models | Consensus prediction | Combining radiology, pathology, and genomics models for diagnosis. |
| Graph-Based | Graph Neural Network (GNN) | Biological networks (PPI) with omics node features | Pathway activity, drug sensitivity | Modeling signaling cascades perturbed by genomic alterations. |
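The difference between early and late fusion is mostly a question of where the data are combined. This NumPy sketch (hypothetical helper names) z-scores and concatenates per-omics feature matrices for early fusion, and averages per-omics predicted probabilities for late fusion. Intermediate fusion with autoencoders is not shown, and in practice the scaling statistics would come from the training set only.

```python
import numpy as np

# Hypothetical helpers illustrating where fusion happens; not a complete modeling pipeline.

def zscore(block):
    """Column-wise z-score so omics layers measured on different scales are comparable."""
    block = np.asarray(block, dtype=float)
    sd = block.std(axis=0)
    sd[sd == 0] = 1.0                             # constant features become all zeros
    return (block - block.mean(axis=0)) / sd


def early_fusion(blocks):
    """Early fusion: one samples x (all features) matrix for a single downstream model."""
    return np.hstack([zscore(b) for b in blocks])


def late_fusion(probabilities, weights=None):
    """Late fusion: weighted average of per-omics model probabilities (one list per model)."""
    probs = np.column_stack(probabilities)        # samples x models
    if weights is None:
        weights = np.ones(probs.shape[1])
    weights = np.asarray(weights, dtype=float)
    return probs @ (weights / weights.sum())
```

Early fusion lets one model learn cross-omics interactions but inherits the full feature dimensionality; late fusion keeps each model small and tolerates a missing modality, at the cost of never modeling interactions across layers.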

Visualization of Workflows and Relationships

Multi-Omics to AI Predictive Model Pipeline

AI Integrates Multi-Omics Data into a Pathway Biomarker

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents & Kits for Featured Protocols

| Reagent/Kits | Vendor Examples | Function in Multi-Omics Workflow |
|---|---|---|
| TotalSeq Antibodies | BioLegend | Oligo-tagged antibodies for CITE-seq, linking protein detection to sequencing. |
| Visium Spatial Gene Expression Slide & Kit | 10x Genomics | Arrayed, spatially barcoded slides and reagents for spatial transcriptomics. |
| Tandem Mass Tag (TMT) Kits | Thermo Fisher Scientific | Isobaric labels for multiplexed, quantitative comparison of proteomes. |
| Chromium Next GEM Chip & Kits | 10x Genomics | Microfluidic chips and reagents for single-cell RNA-seq and multi-omics library prep. |
| TruSeq RNA/DNA Library Prep Kits | Illumina | Robust, standardized kits for preparing NGS libraries from nucleic acids. |
| RNeasy/DNeasy Kits | Qiagen | Reliable isolation of high-quality RNA/DNA from complex biological samples. |
| Protease Inhibitor Cocktails | Sigma-Aldrich, Roche | Essential for maintaining protein integrity during proteomics sample prep. |

Within oncology research, the discovery and validation of predictive biomarkers is a critical bottleneck in the development of personalized therapies. Traditional statistical methods often fail to capture the complex, high-dimensional interactions inherent in multi-omics data (genomics, transcriptomics, proteomics) and digital pathology images. This whitepaper introduces the core Artificial Intelligence (AI) paradigms—Machine Learning (ML), Deep Learning (DL), and Neural Networks (NNs)—that are fundamentally reshaping biomarker discovery. Framed within a thesis on AI-driven predictive biomarker discovery, this guide provides researchers with the technical foundation to understand, implement, and critically evaluate these transformative approaches.

Foundational AI Paradigms in Biomarker Research

Machine Learning: Supervised & Unsupervised Learning

Machine Learning involves algorithms that learn patterns from data without explicit programming. In biomarker research, two primary types are employed:

- Supervised Learning: Uses labeled data to train models for prediction or classification.

- Application: Building a classifier to predict therapeutic response (Responder vs. Non-Responder) from genetic mutation profiles.

- Common Algorithms: Random Forests, Support Vector Machines (SVM), Logistic Regression.

- Unsupervised Learning: Discovers hidden patterns or groupings in unlabeled data.

- Application: Identifying novel patient subtypes from integrated omics data, which may represent distinct biomarker signatures.

- Common Algorithms: k-Means Clustering, Hierarchical Clustering, Principal Component Analysis (PCA).
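The unsupervised route can be illustrated end to end. This NumPy sketch assumes a samples x features matrix (for instance, integrated omics features) and uses hypothetical helper names; it projects onto the leading principal components with an SVD and then clusters with Lloyd's k-means algorithm, using a deterministic farthest-point initialization rather than k-means++.

```python
import numpy as np

# Hypothetical helpers; a sketch of PCA followed by k-means, not a production clustering tool.

def pca(X, n_components):
    """Project centered data onto its leading principal components via SVD."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:n_components].T


def kmeans(X, k, n_iter=100, seed=0):
    """Lloyd's algorithm with farthest-point initialization; returns (labels, centroids)."""
    rng = np.random.default_rng(seed)
    centroids = [X[rng.integers(len(X))]]
    for _ in range(1, k):                         # next centroid: point farthest from existing ones
        d = np.min([((X - c) ** 2).sum(axis=1) for c in centroids], axis=0)
        centroids.append(X[d.argmax()])
    centroids = np.array(centroids)
    for _ in range(n_iter):
        dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)             # assign each sample to its nearest centroid
        new = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
                        for j in range(k)])
        if np.allclose(new, centroids):
            break
        centroids = new
    return labels, centroids
```

Clustering in PC space rather than on thousands of raw features reduces noise and computation; whether the resulting clusters are biologically meaningful subtypes still has to be established against outcomes or external data.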

Deep Learning & Neural Networks

Deep Learning is a subset of ML based on artificial neural networks with multiple layers ("deep" architectures). These models automatically learn hierarchical feature representations from raw data.

- Artificial Neural Network (ANN): A computational model inspired by biological neurons, consisting of interconnected layers (input, hidden, output) that process information via weighted sums and activation functions.

- Key Architectures in Biomarker Research:

- Convolutional Neural Networks (CNNs): Excel at processing spatially structured data like histopathology whole-slide images (WSI) to detect morphological biomarkers.

- Recurrent Neural Networks (RNNs)/Long Short-Term Memory (LSTM): Process sequential data, such as time-series gene expression data from longitudinal studies.

- Autoencoders: Used for dimensionality reduction and denoising of high-dimensional omics data, facilitating downstream analysis.
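The weighted-sum-plus-activation description of an ANN can be made concrete with a forward pass in NumPy. This minimal sketch assumes a hypothetical list of (weight matrix, bias) pairs, ReLU hidden activations, and a sigmoid output for a binary label such as responder vs. non-responder; training by backpropagation is not shown.

```python
import numpy as np

# Hypothetical minimal network; weights would normally be learned, not set by hand.

def relu(z):
    return np.maximum(z, 0.0)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def forward(x, layers):
    """Each hidden layer computes relu(W @ h + b); the output layer applies a sigmoid."""
    h = np.asarray(x, dtype=float)
    for W, b in layers[:-1]:
        h = relu(W @ h + b)                       # weighted sum followed by activation
    W_out, b_out = layers[-1]
    return sigmoid(W_out @ h + b_out)             # probability of the positive class
```

Stacking more (W, b) pairs in `layers` is what makes the network "deep"; each extra layer lets the model build features from the previous layer's outputs.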

Quantitative Impact in Oncology Research

Recent studies and reviews highlight the accelerating adoption and performance of AI in biomarker discovery.

Table 1: Performance Metrics of AI Models in Selected Oncology Biomarker Tasks

| AI Task | Data Type | Model Type | Key Performance Metric | Reported Result | Reference (Example) |
|---|---|---|---|---|---|
| PD-L1 Expression Prediction | Histopathology WSIs | Deep CNN (e.g., ResNet) | AUC (Area Under Curve) | 0.87 - 0.94 | Bera et al., Nat Commun, 2023 |
| Microsatellite Instability (MSI) Detection | Histopathology WSIs | Multiple Instance Learning CNN | Accuracy | > 90% | Kather et al., The Lancet Oncol, 2020 |
| Therapeutic Response Prediction | Multi-omics (RNA-seq, Mutations) | Integrated ML Pipeline (RF, SVM) | F1-Score | 0.79 | An et al., Cancer Cell, 2021 |
| Novel Subtype Discovery | Single-Cell RNA-seq | Autoencoder + Clustering | Silhouette Score | 0.72 | Way et al., Bioinformatics, 2023 |

Table 2: Comparison of Core AI Paradigms for Biomarker Research

| Paradigm | Typical Input Data | Strengths | Limitations | Primary Use Case in Biomarkers |
|---|---|---|---|---|
| Traditional ML (e.g., SVM, RF) | Curated features (e.g., mutation counts, protein levels) | Interpretable, effective on structured data, works with smaller samples | Requires manual feature engineering, may miss complex patterns | Predicting outcomes from quantified assay data |
| Deep Learning (e.g., CNN, Autoencoder) | Raw, high-dimensional data (images, sequences, omics matrices) | Automatic feature extraction, superior on unstructured data, state-of-the-art accuracy | Requires large datasets, "black box" nature, computationally intensive | Discovering morphological & latent molecular signatures from raw images/omics |

Experimental Protocols for AI-Driven Biomarker Discovery

Protocol 1: CNN-Based Biomarker Detection from Digital Pathology

Aim: To train a CNN to identify a histomorphological biomarker (e.g., tumor-infiltrating lymphocytes, TILs) predictive of immunotherapy response.

- Data Curation:

- Obtain a retrospective cohort of H&E-stained WSIs with associated clinical response data.

- Expert pathologists annotate regions of interest (ROIs) for TILs (label as High-TIL vs. Low-TIL).

- Preprocessing & Patch Extraction:

- Normalize stain variation across slides using algorithms like Macenko or Vahadane.

- Tile WSIs into smaller, manageable patches (e.g., 256x256 pixels).

- Assign each patch a label based on its parent ROI annotation.

- Model Training & Validation:

- Architecture: Use a pre-trained CNN (e.g., ResNet50) as a feature extractor, followed by custom classification layers.

- Training: Fine-tune the model on the patch dataset using cross-entropy loss and an optimizer (e.g., Adam).

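- Validation: Perform k-fold cross-validation with folds split at the patient level, so that patches from the same slide never appear in both training and validation folds. Assess patch-level accuracy and slide-level AUC via an aggregation mechanism (e.g., attention-based pooling).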

- Interpretation: Apply techniques like Gradient-weighted Class Activation Mapping (Grad-CAM) to visualize which image regions most influenced the prediction.
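Three mechanical pieces of this protocol, patch tiling, patient-level fold assignment, and attention-based slide aggregation, can be sketched in NumPy. All helper names are hypothetical; the attention pooling follows the general form used in attention-based multiple instance learning (Ilse et al., 2018), with the projection `V` and vector `w` assumed to be learned elsewhere rather than fixed as in the usage check.

```python
import numpy as np

# Hypothetical helpers; V and w would be learned jointly with the CNN in a real model.

def tile_coordinates(width, height, patch=256, stride=256):
    """Top-left (x, y) coordinates of patches that fit entirely inside the slide level."""
    return [(x, y)
            for y in range(0, height - patch + 1, stride)
            for x in range(0, width - patch + 1, stride)]


def patient_folds(patient_ids, k=5):
    """Give every patch of a patient the same fold (round-robin; shuffle patients in practice)."""
    unique = sorted(set(patient_ids))
    fold_of = {pid: i % k for i, pid in enumerate(unique)}
    return [fold_of[pid] for pid in patient_ids]


def attention_pool(patch_features, V, w):
    """a_k = softmax_k(w . tanh(V h_k)); slide embedding z = sum_k a_k h_k."""
    H = np.asarray(patch_features, dtype=float)   # patches x features
    scores = np.tanh(H @ V.T) @ w                 # one attention score per patch
    scores = scores - scores.max()                # numerical stability before exp
    a = np.exp(scores) / np.exp(scores).sum()
    return a @ H, a
```

The attention weights `a` also serve as a coarse interpretability map, complementary to Grad-CAM: high-weight patches are the regions that dominated the slide-level prediction.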

Protocol 2: Integrated ML for Multi-Omics Biomarker Signature

Aim: To build a supervised ML model that integrates genomic and transcriptomic data to predict patient survival.

- Data Integration & Feature Reduction:

- Collect matched genomic (e.g., somatic mutations) and transcriptomic (RNA-seq) data from a cohort like TCGA.

- Perform upstream bioinformatics processing (alignment, variant calling, expression quantification).

- Reduce dimensionality: Select the most variable genes and apply PCA to the expression data to derive principal components (PCs), fitting the PCA on training samples only.

- Feature Engineering & Labeling:

- Create a unified feature table: Include mutation status (binary) for key genes and expression PCs.

- Label each patient based on overall survival (e.g., "Long-term survivor" vs. "Short-term survivor") using a predefined cutoff, excluding patients censored before the cutoff because their class cannot be determined.

- Model Building & Evaluation:

- Algorithm Selection: Train and compare a Random Forest (RF) and a Support Vector Machine (SVM) classifier.

- Hyperparameter Tuning: Use grid search with cross-validation to optimize parameters (e.g., number of trees in RF, kernel and C in SVM).

- Evaluation: Evaluate on a held-out test set that was not used for tuning. Report Accuracy, Precision, Recall, and AUC-ROC. Perform feature importance analysis on the RF model to identify key drivers.
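The labeling and feature-table steps can be sketched in NumPy. The helper names and the 24-month cutoff are hypothetical; patients censored before the cutoff receive the label -1 so they can be dropped, and for brevity PCA is fit on all rows here although the protocol calls for fitting it on training rows only.

```python
import numpy as np

# Hypothetical helpers; the 24-month cutoff is an illustrative assumption.

def survival_labels(os_months, death, cutoff=24.0):
    """1 = short-term survivor (died before cutoff), 0 = long-term survivor
    (survival time at or beyond cutoff), -1 = undetermined (censored before cutoff)."""
    os_months = np.asarray(os_months, dtype=float)
    death = np.asarray(death, dtype=bool)
    labels = np.full(len(os_months), -1)
    labels[death & (os_months < cutoff)] = 1
    labels[os_months >= cutoff] = 0
    return labels


def feature_table(mutation_status, expression, n_pcs=10):
    """Binary mutation columns followed by the leading expression principal components."""
    expr = np.asarray(expression, dtype=float)
    centered = expr - expr.mean(axis=0)
    _, _, Vt = np.linalg.svd(centered, full_matrices=False)
    pcs = centered @ Vt[:n_pcs].T                 # samples x n_pcs
    return np.hstack([np.asarray(mutation_status, dtype=float), pcs])
```

Rows labeled -1 are removed before training; the remaining rows could then be passed to, for example, scikit-learn's RandomForestClassifier and SVC wrapped in GridSearchCV for the tuning step.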

Visualizing Workflows and Architectures

AI-Driven Biomarker Discovery Pipeline

CNN Architecture for Histopathology Analysis

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Toolkit for AI-Integrated Biomarker Experiments

| Category | Item / Solution | Function in AI Biomarker Workflow |
|---|---|---|
| Wet-Lab & Assay | FFPE Tissue Sections & H&E Stain | Provides the foundational physical biomaterial and standard morphology for digital pathology and spatial omics. |
| | Multiplex Immunofluorescence (mIF) Kits (e.g., Opal, CODEX) | Enables simultaneous detection of multiple protein biomarkers in situ, generating rich, spatially resolved data for AI analysis. |
| | Next-Generation Sequencing (NGS) Kits (e.g., for RNA-seq, WES) | Generates high-dimensional genomic and transcriptomic data, the primary input for multi-omics ML models. |
| Data & Software | Digital Slide Scanner (e.g., from Leica, Hamamatsu) | Converts glass slides into high-resolution Whole Slide Images (WSIs), the raw data for computational pathology. |
| | Bioinformatics Pipelines (e.g., GATK, Cell Ranger, STAR) | Processes raw sequencing data (FASTQ) into analyzable formats (VCF, count matrices), a critical preprocessing step. |
| | AI Frameworks & Libraries (e.g., PyTorch, TensorFlow, scikit-learn) | Provides the open-source software environment for building, training, and validating ML/DL models. |
| | Pathology Annotation Software (e.g., QuPath, HALO) | Allows pathologists to label regions/cells for training supervised AI models (ground truth generation). |

This whitepaper details the technical framework for AI-driven predictive biomarker discovery in oncology, focusing on its core applications: predicting treatment response, anticipating resistance mechanisms, and estimating patient survival. These applications are transforming precision oncology by moving from reactive to proactive care strategies.

Core AI Methodologies and Data Integration

Data Types and Preprocessing

AI models integrate multi-omics data, clinical records, and digital pathology. Standard preprocessing includes batch effect correction (e.g., ComBat), normalization (e.g., TPM for RNA-seq), variant allele frequency (VAF) calculation for mutations, and dimensionality reduction (PCA, UMAP).
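The two per-sample quantities named above have short definitions. This NumPy sketch (hypothetical helper names) computes TPM from a genes x samples count matrix and per-site VAF from alternate and reference read counts; batch correction and dimensionality reduction are not shown.

```python
import numpy as np

# Hypothetical helpers illustrating the TPM and VAF definitions.

def tpm(counts, gene_lengths_bp):
    """Transcripts per million: reads per kilobase of gene, rescaled so each sample sums to 1e6."""
    counts = np.asarray(counts, dtype=float)               # genes x samples
    lengths_kb = np.asarray(gene_lengths_bp, dtype=float)[:, None] / 1e3
    rpk = counts / lengths_kb
    return rpk / rpk.sum(axis=0, keepdims=True) * 1e6


def vaf(alt_reads, ref_reads):
    """Variant allele frequency at each site: alt / (alt + ref)."""
    alt = np.asarray(alt_reads, dtype=float)
    return alt / (alt + np.asarray(ref_reads, dtype=float))
```

Because length normalization happens before the per-sample rescaling, TPM values are comparable across genes within a sample, which is why TPM is often preferred to raw counts as model input.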

Primary AI/ML Architectures

- Supervised Learning: Random Forests and Gradient Boosting Machines (XGBoost, LightGBM) for structured clinical and genomic data.

- Deep Learning: Convolutional Neural Networks (CNNs) for whole-slide images; Recurrent Neural Networks (RNNs) for longitudinal data; Transformer-based models for multi-omics integration.

- Survival Analysis: Cox Proportional Hazards models enhanced with regularization (LASSO-Cox) and deep survival models (DeepSurv).

Table 1: Comparative Performance of AI Models in Predictive Tasks

| Model Type | Application Example | Average C-index / AUC | Key Advantage | Primary Limitation |
|---|---|---|---|---|
| Random Forest | ICB Response Prediction | 0.72-0.78 | Handles high-dim. data, feature importance | Prone to overfitting on small n |
| XGBoost | Resistance Mutation Prediction | 0.75-0.82 | High accuracy, efficient | Less interpretable, many hyperparameters |
| CNN (ResNet) | Pathology-based Survival | 0.74-0.81 | Learns spatial features | Requires large annotated datasets |
| Multi-modal Transformer | Integrated Risk Stratification | 0.79-0.85 | Fuses disparate data types | Computationally intensive |

Experimental Protocols for Validation

Protocol: In Vitro Validation of AI-Predicted Biomarkers

Aim: Functionally validate a gene signature predicting resistance to tyrosine kinase inhibitors (TKIs) in NSCLC.

- Cell Lines: Use parental and TKI-resistant NSCLC lines (e.g., PC9, HCC827 with EGFR mutations).

- Knockdown/Overexpression: Employ siRNA or lentiviral constructs to modulate candidate gene expression identified by AI.

- Treatment Assay: Seed cells in 96-well plates. Treat with a TKI (e.g., osimertinib) dose range (0-10 µM) for 72 hours.

- Viability Measurement: Assess using CellTiter-Glo luminescent assay. Calculate IC50 values.

- Downstream Analysis: Perform immunoblotting on key pathway proteins (p-EGFR, p-AKT, p-ERK) to confirm mechanism.
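IC50 values are usually obtained by fitting a four-parameter logistic curve. As a dependency-free stand-in, this sketch normalizes luminescence to the vehicle (0 µM) wells and interpolates linearly on log10(dose) between the two doses that bracket 50% viability. The helper names are hypothetical and the approach is a simplification, not a replacement for a proper curve fit.

```python
import math

# Hypothetical helpers; a crude interpolation, not a four-parameter logistic fit.

def normalize_to_vehicle(luminescence, vehicle_mean, background=0.0):
    """Convert raw CellTiter-Glo luminescence to fraction of vehicle-treated signal."""
    return [(x - background) / (vehicle_mean - background) for x in luminescence]


def ic50_interpolated(doses_um, viability):
    """Dose giving 50% viability by linear interpolation on log10(dose).

    Doses must be > 0 (vehicle excluded) and sorted ascending; returns None if 50% is not crossed."""
    points = list(zip(doses_um, viability))
    for (d1, v1), (d2, v2) in zip(points, points[1:]):
        if v1 >= 0.5 >= v2 and v1 != v2:
            frac = (v1 - 0.5) / (v1 - v2)          # position of 50% between the two doses
            log_ic50 = math.log10(d1) + frac * (math.log10(d2) - math.log10(d1))
            return 10 ** log_ic50
    return None
```

Comparing the IC50 of knockdown or overexpression lines against parental controls then gives the functional readout for each AI-nominated gene.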

Protocol: Prospective Cohort Study for Clinical Validation

Aim: Validate an AI-derived composite biomarker score in a prospective cohort.

- Cohort Design: Enroll patients with a specific cancer type initiating a standard therapy.

- Biospecimen Collection: Collect pre-treatment tissue (FFPE for sequencing/IHC) and blood (for ctDNA).

- Data Generation: Perform targeted NGS (e.g., FoundationOneCDx) and calculate the AI biomarker score.

- Blinding & Follow-up: Keep score blinded to clinicians. Monitor patients per standard guidelines for radiographic response (RECIST 1.1), progression-free survival (PFS), and overall survival (OS).

- Statistical Analysis: Use Kaplan-Meier plots and log-rank test for survival outcomes. Perform multivariable Cox regression adjusting for clinical covariates.

Key Signaling Pathways in Response and Resistance

Diagram 1: AI Maps Therapy-Induced Signaling & Resistance

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Experimental Validation

| Item | Function/Application | Example Product/Catalog |
|---|---|---|
| ctDNA Isolation Kit | Isolates cell-free DNA from plasma for liquid biopsy NGS. | QIAamp Circulating Nucleic Acid Kit |
| Multiplex IHC/IF Kit | Enables simultaneous detection of 4+ protein biomarkers on FFPE tissue. | Akoya Biosciences OPAL Polychromatic IF |
| Live-Cell Analysis System | Monitors real-time cell proliferation and death for drug response assays. | Incucyte S3 or Sartorius iQue |
| NGS Pan-Cancer Panel | Targeted sequencing of key cancer genes from limited DNA/RNA input. | Illumina TruSight Oncology 500 |
| CRISPRa/i Screening Library | Genome-wide activation (CRISPRa) or interference (CRISPRi) screens to identify resistance genes. | Horizon Dharmacon DECONVOLUTOR |
| Cytokine Profiling Array | Measures dozens of soluble immune factors in serum or culture supernatant. | R&D Systems Proteome Profiler Array |
| Organoid Culture Medium | Supports the growth of patient-derived tumor organoids for ex vivo testing. | STEMCELL Technologies IntestiCult |

AI Model Development and Validation Workflow

Diagram 2: AI Biomarker Development & Validation Pipeline

Quantitative Performance Metrics

Table 3: Benchmarking AI Predictive Performance Across Cancer Types

| Cancer Type | Therapy | Predictive Feature(s) | AI Model | Validation Cohort Size (n) | Performance (Metric) |
|---|---|---|---|---|---|
| Non-Small Cell Lung | Immune Checkpoint Blockade (ICB) | TMB, Gene Expression Signature | Ensemble (RF + CNN) | 350 (External) | AUC: 0.81, HR for PFS: 0.45 |
| Colorectal | Anti-EGFR (cetuximab) | RAS/RAF wt, Transcriptomic Subtype | Logistic Regression | 220 (Prospective) | ORR Prediction Accuracy: 87% |
| Melanoma | BRAF/MEK inhibitors | Pre-treatment ctDNA Level | Cox-PH Neural Net | 180 | C-index for PFS: 0.79 |
| Breast | Neoadjuvant Chemotherapy | Spatial TIL Patterns from H&E | ResNet-50 | 410 (TCGA + Internal) | pCR Prediction AUC: 0.83 |

Future Directions and Challenges

Key challenges include clinical trial integration, regulatory approval pathways for AI-based biomarkers, and ensuring algorithmic fairness across diverse populations. The convergence of dynamic biomarkers from liquid biopsies and real-world data will further refine AI models for continuous prediction of treatment response and survival.

The discovery of predictive biomarkers is central to the development of targeted cancer therapies and personalized medicine. For decades, traditional statistical methods (e.g., linear regression, Cox proportional hazards models, ANOVA) have been the cornerstone of this endeavor. However, the inherent complexity, high dimensionality, and heterogeneity of modern multi-omics oncology data (genomics, transcriptomics, proteomics, digital pathology) expose critical limitations of these classical approaches. This whitepaper details the technical imperative for artificial intelligence (AI) and machine learning (ML) in overcoming these constraints within oncology research.

Limitations of Traditional Statistical Methods in Oncology Biomarker Discovery

Traditional methods operate under strict assumptions often violated by biological data.

Table 1: Key Limitations of Traditional Statistical Methods vs. AI/ML Capabilities

| Limitation | Traditional Statistics | AI/ML Approach |
|---|---|---|
| High-Dimensional Data (p >> n) | Prone to overfitting; requires manual feature reduction (e.g., PCA) before modeling. | Built-in regularization (L1/L2), automatic feature learning, and dimensionality reduction (autoencoders). |
| Non-Linear Relationships | Poorly captures complex, non-linear interactions between genes/proteins. | Excels at modeling non-linearities via activation functions in deep neural networks, kernel methods. |
| Data Heterogeneity & Integration | Challenging to integrate disparate data types (e.g., image, sequence, clinical) into a single model. | Multi-modal architectures (e.g., graph neural networks, late fusion models) can fuse heterogeneous data. |
| Feature Interaction Discovery | Requires a priori hypothesis about interactions; combinatorial explosion for testing. | Automatically discovers higher-order interactions through hierarchical feature representation. |
| Handling Unstructured Data | Cannot directly process images (histopathology) or text (clinical notes). | Convolutional Neural Networks (CNNs) for images, Natural Language Processing (NLP) for text. |

Experimental Protocol: A Comparative Study of Survival Prediction

To empirically demonstrate the comparative advantage, consider a protocol for predicting overall survival in glioblastoma multiforme (GBM) using RNA-seq and clinical data from a source like The Cancer Genome Atlas (TCGA).

Protocol Title: Comparative Analysis of Cox Proportional Hazards vs. Deep Survival Neural Network for GBM Prognostication

Data Acquisition & Preprocessing:

- Download GBM dataset (TCGA-GBM) via the Genomic Data Commons (GDC) API. This includes RNA-seq (counts) and clinical data (overall survival status/time).

- Preprocessing: Split data into training (70%), validation (15%), and test (15%) sets, stratified by survival event. Normalize RNA-seq counts using log2(CPM + 1), keep the 5,000 most variable genes, and z-score normalize each gene, estimating the gene selection and scaling parameters on the training set only so that no test information leaks into the model. Impute missing clinical data with the training-set median.
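A minimal NumPy sketch of the normalization, gene filtering, and scaling steps, with the selection and scaling parameters estimated on the training split only and then reused for every split. Helper names are hypothetical; the stratified split and the clinical imputation are not shown.

```python
import numpy as np

# Hypothetical helpers; fit on the training split, apply to train/validation/test alike.

def log_cpm(counts):
    """log2(CPM + 1) for a samples x genes count matrix."""
    counts = np.asarray(counts, dtype=float)
    return np.log2(counts / counts.sum(axis=1, keepdims=True) * 1e6 + 1.0)


def fit_gene_filter(train_logcpm, n_top=5000):
    """Indices of the n_top most variable genes plus their training mean and SD."""
    var = train_logcpm.var(axis=0)
    keep = np.argsort(var, kind="stable")[::-1][:n_top]
    mean = train_logcpm[:, keep].mean(axis=0)
    sd = train_logcpm[:, keep].std(axis=0)
    sd[sd == 0] = 1.0                             # guard against constant genes
    return keep, mean, sd


def apply_gene_filter(logcpm, keep, mean, sd):
    """Apply the training-set gene selection and z-scoring to any split."""
    return (logcpm[:, keep] - mean) / sd
```

Fitting these parameters on all samples before splitting would let the test set influence which genes are selected, which tends to inflate the reported C-index.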

Traditional Statistical Method (Benchmark):

- Method: Penalized Cox Proportional Hazards Model (Lasso-Cox).

- Implementation: R glmnet package.

- Steps: Perform 10-fold cross-validation on the training set to tune the L1 penalty parameter (λ). Fit the final model on the entire training set with the optimal λ. Generate risk scores (linear predictor) for the test set.
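A Python sketch of the Lasso-Cox benchmark follows. It illustrates the objective under stated assumptions and is not a substitute for glmnet: it uses proximal gradient descent (ISTA) instead of glmnet's coordinate descent, handles tied times with the Breslow approximation, and fixes λ rather than tuning it by 10-fold cross-validation. The same negative log partial likelihood is the loss DeepSurv minimizes, with the linear predictor replaced by the network output.

```python
import numpy as np

def cox_nll_and_grad(beta, x, time, event):
    """Negative Breslow log partial likelihood (averaged over events) and its gradient."""
    eta = x @ beta
    # at_risk[i, j] = 1 if subject j is still at risk at subject i's time.
    at_risk = (time[None, :] >= time[:, None]).astype(float)
    shift = eta.max()
    w = np.exp(eta - shift)                     # shifted for numerical stability
    risk_sum = at_risk @ w                      # risk-set sums of exp(eta), same shift
    n_events = max(event.sum(), 1.0)
    nll = -np.sum(event * (eta - shift - np.log(risk_sum))) / n_events
    grad_eta = -(event - w * (at_risk.T @ (event / risk_sum))) / n_events
    return nll, x.T @ grad_eta

def soft_threshold(z, t):
    """Proximal operator of the L1 penalty."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)

def fit_lasso_cox(x, time, event, lam, lr=0.2, n_iter=500):
    """L1-penalized Cox regression by proximal gradient descent (ISTA)."""
    beta = np.zeros(x.shape[1])
    for _ in range(n_iter):
        _, grad = cox_nll_and_grad(beta, x, time, event)
        beta = soft_threshold(beta - lr * grad, lr * lam)
    return beta

# Toy data: only feature 0 affects the hazard; about 20% of subjects censored at random.
rng = np.random.default_rng(0)
x = rng.standard_normal((200, 20))
time = rng.exponential(1.0 / np.exp(x[:, 0]))
event = (rng.random(200) < 0.8).astype(float)
beta = fit_lasso_cox(x, time, event, lam=0.15)
test_risk = x @ beta  # linear predictor used as the risk score
```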

AI/ML Method (DeepSurv):

- Method: DeepSurv, a deep neural network for survival analysis (Katzman et al., 2018).

- Implementation: Using PyTorch or TensorFlow.

- Architecture: Input layer (5000 genes), 3 fully connected hidden layers (1024, 512, 128 nodes) with ReLU activation and BatchNorm, dropout (rate=0.3), output layer (1 node, linear activation). Loss function: negative log partial likelihood.

- Training: Train for up to 200 epochs with the Adam optimizer, using the validation-set loss for early stopping.
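Early stopping on the validation set can be made concrete with a small helper like the one below. The class name, the patience value, and the use of the validation negative log partial likelihood as the monitored loss are assumptions for illustration; in a PyTorch or TensorFlow loop one would save the model weights whenever a new best loss is recorded.

```python
import math

class EarlyStopping:
    """Track validation loss; signal a stop after `patience` epochs without improvement."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = math.inf
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0  # checkpoint the weights here
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience

# Toy usage with a made-up validation-loss curve.
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.75, 0.74, 0.9]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
```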

Evaluation:

- Metric: Concordance Index (C-index) on the held-out test set.

- Secondary Analysis: Perform Kaplan-Meier analysis, stratifying test patients into high/low-risk groups based on median risk score from each model. Compare log-rank test p-values.
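Both evaluation metrics can be sketched in NumPy. The C-index below is Harrell's version (a pair is comparable when the earlier time is an observed event; tied risk scores count as half-concordant), and the log-rank test compares the median-split groups with a 1-degree-of-freedom chi-square p-value. The toy data are simulated; packages such as lifelines or R's survival would be the usual production choices.

```python
import math
import numpy as np

def harrell_c_index(time, event, risk):
    """Fraction of comparable pairs whose predicted risks are correctly ordered."""
    concordant, comparable = 0.0, 0
    for i in range(len(time)):
        if not event[i]:
            continue
        later = time > time[i]                   # patients who outlived patient i
        comparable += int(later.sum())
        concordant += np.sum(risk[i] > risk[later]) + 0.5 * np.sum(risk[i] == risk[later])
    return concordant / comparable

def logrank_test(time, event, group):
    """Two-group log-rank test; group is a boolean mask. Returns (chi2, p-value)."""
    obs_minus_exp, var = 0.0, 0.0
    for t in np.unique(time[event == 1]):
        at_risk = time >= t
        died = (time == t) & (event == 1)
        n, d = at_risk.sum(), died.sum()
        n1, d1 = (at_risk & group).sum(), (died & group).sum()
        obs_minus_exp += d1 - d * n1 / n
        if n > 1:
            var += d * (n1 / n) * (1 - n1 / n) * (n - d) / (n - 1)
    chi2 = obs_minus_exp ** 2 / var
    return chi2, math.erfc(math.sqrt(chi2 / 2))  # upper tail of chi-square, 1 df

# Toy usage: risk drives the hazard, then split at the median risk score.
rng = np.random.default_rng(0)
risk = rng.standard_normal(300)
time = rng.exponential(1.0 / np.exp(risk))
event = (rng.random(300) < 0.8).astype(int)
c = harrell_c_index(time, event, risk)
high_risk = risk > np.median(risk)
chi2, p = logrank_test(time, event, high_risk)
```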

Table 2: Hypothetical Results from Comparative Survival Analysis

| Model | Test Set C-index (95% CI) | Log-Rank P-value (Risk Stratification) | Number of Features Used |
|---|---|---|---|
| Lasso-Cox (Traditional) | 0.68 (0.62-0.74) | 1.2e-3 | 42 |
| DeepSurv (AI) | 0.75 (0.70-0.80) | 4.5e-5 | 5000 (all, but weighted) |

Visualizing AI-Driven Multi-Omics Integration Workflow

AI Workflow for Multi-Omics Biomarker Fusion

The Scientist's Toolkit: Key Research Reagent Solutions for AI-Driven Biomarker Validation

Table 3: Essential Reagents & Tools for Experimental Validation of AI-Predicted Biomarkers

| Item | Function & Relevance |
|---|---|
| CRISPR-Cas9 Knockout/Knockin Kits | Functional validation of AI-identified genetic biomarkers by modulating target gene expression in relevant cancer cell lines. |
| Phospho-Specific Antibodies (Multiplex IHC/ICC) | Validate predicted activity states of signaling pathways (e.g., p-AKT, p-ERK) in patient-derived tissue microarrays (TMAs). |
| Organoid or PDX (Patient-Derived Xenograft) Culture Systems | Ex vivo or in vivo models for testing AI-predicted biomarkers of therapy response in a physiologically relevant context. |
| Multiplex Immunoassay Panels (e.g., Luminex) | Quantify secreted or circulating protein biomarkers (cytokines, chemokines) predicted by multi-omics AI models from patient serum/plasma. |
| Digital Pathology Scanner & Annotation Software | Digitize H&E/IHC slides for analysis by AI models and correlate AI-discovered histopathological features with molecular biomarkers. |
| Single-Cell RNA-Seq Library Prep Kits | Profile tumor heterogeneity at single-cell resolution to deconvolute and validate AI-inferred cellular subtypes from bulk sequencing predictions. |
| High-Throughput Drug Screening Libraries | Test AI-predicted drug-gene biomarker associations in large-scale in vitro screens to confirm therapeutic vulnerabilities. |

The transition from traditional statistics to AI is not merely a trend but a methodological necessity in oncology biomarker discovery. The ability of AI to integrate complex, high-dimensional data, uncover non-linear relationships, and directly interpret unstructured data enables the discovery of novel, robust predictive signatures that remain invisible to conventional methods. Successful adoption requires interdisciplinary collaboration between computational scientists, biologists, and clinicians, coupled with rigorous experimental validation as outlined in the provided protocols and toolkit.

Building the Pipeline: Key AI Methodologies and Real-World Applications in Oncology

Data Preprocessing and Feature Engineering for High-Dimensional Biomedical Data

This technical guide is framed within the broader thesis of AI-driven predictive biomarker discovery in oncology research. The identification of robust, predictive biomarkers from complex, high-dimensional datasets is a cornerstone of modern precision oncology. Success hinges on the rigorous preprocessing of raw data and the intelligent engineering of informative features, which transform noisy biological measurements into reliable inputs for machine learning (ML) and artificial intelligence (AI) models. This document provides an in-depth protocol for these critical steps, targeting researchers, scientists, and drug development professionals.

The Challenge of High-Dimensional Biomedical Data in Oncology

Oncological data from modalities like next-generation sequencing (RNA-seq, whole-exome, single-cell), proteomics, and digital pathology imaging is characterized by high dimensionality (the p >> n problem, where features far outnumber samples), technical noise, batch effects, and high sparsity. Failure to address these issues leads to overfitted, non-generalizable models and spurious biomarker candidates.

Table 1: Common High-Dimensional Data Types in Oncology Biomarker Discovery

| Data Modality | Typical Dimensionality (Features) | Primary Noise Sources | Key Preprocessing Targets |
|---|---|---|---|
| RNA-Seq (Bulk) | 20,000-60,000 genes | Library size, composition, batch effects | Normalization, batch correction, low-count filtering |
| Single-Cell RNA-Seq | 20,000+ genes per cell | Dropout (zero-inflation), ambient RNA, batch effects | Imputation, doublet removal, integration |
| Whole-Exome Sequencing | ~50,000 variants/sample | Sequencing depth, alignment artifacts | Depth normalization, variant quality recalibration |
| Mass Spectrometry Proteomics | 1,000-10,000 proteins | Ion suppression, batch drift, missing values | Peak alignment, normalization, imputation |
| Digital Pathology (WSI) | Gigapixel scale (often 10^9+ pixels/image) | Stain variation, scanning artifacts | Color normalization, tissue segmentation |

Foundational Data Preprocessing Pipeline

Experimental Protocol: Raw Data QC and Sanitization

Objective: To remove low-quality samples and non-informative features prior to analysis. Methodology:

- Sample-level QC: Calculate metrics (e.g., sequencing depth, mapping rate, % viable cells). Exclude samples falling >3 median absolute deviations (MAD) from the cohort median for key metrics.

- Feature-level Filtering:

- Genomics/Transcriptomics: Remove genes/variants with zero counts in >80% of samples (or >90% of cells for scRNA-seq).

- Proteomics: Remove proteins detected in <70% of samples in any patient group.

- General: Apply variance filtering; remove features in the bottom 20th percentile of variance (non-zero for count data).
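The QC rules above might look like this in NumPy. Assumptions: samples are rows, the MAD is used unscaled (some pipelines multiply it by 1.4826 to approximate a standard deviation), the function names are hypothetical, and the thresholds mirror the values in the text.

```python
import numpy as np

def mad_outliers(metric, n_mad=3.0):
    """Flag samples more than n_mad median absolute deviations from the cohort median."""
    med = np.median(metric)
    mad = np.median(np.abs(metric - med))
    if mad == 0:
        return np.zeros(metric.shape, dtype=bool)
    return np.abs(metric - med) > n_mad * mad

def filter_features(counts, max_zero_frac=0.8, var_quantile=0.2):
    """counts: (samples, features). Drop mostly-zero features, then the lowest-variance ones."""
    zero_frac = (counts == 0).mean(axis=0)
    keep = np.flatnonzero(zero_frac <= max_zero_frac)
    var = counts[:, keep].var(axis=0)
    return keep[var > np.quantile(var, var_quantile)]  # remove bottom 20th percentile

# Toy usage: one failed library and ten all-zero genes.
rng = np.random.default_rng(0)
depth = rng.normal(30e6, 2e6, size=50)
depth[7] = 5e6
bad = mad_outliers(depth)
counts = rng.poisson(5, size=(50, 200)).astype(float)
counts[:, :10] = 0
kept = filter_features(counts[~bad])
```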

Normalization and Batch Effect Correction

Protocol:

- Normalization: Choose method based on data type.

- RNA-Seq: Use DESeq2's median of ratios (for differential expression) or Trimmed Mean of M-values (TMM) for between-sample comparison.

- scRNA-Seq: Apply library size normalization (e.g., counts per 10,000) followed by log1p transformation.

- Proteomics: Use median centering or quantile normalization across samples.

- Batch Effect Assessment: Perform Principal Component Analysis (PCA). Color samples by batch (e.g., sequencing run, processing date). Visual separation on PC1 or PC2 indicates strong batch effects.

- Correction: Apply ComBat (parametric empirical Bayes) or Harmony for genomic data. For image data, use stain normalization (e.g., Macenko method).
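To show what DESeq2's median-of-ratios normalization computes, here is a NumPy re-implementation of its size-factor estimate. It is a teaching sketch, not the DESeq2 package: genes are rows and samples are columns (DESeq2's orientation), genes with a zero count in any sample are skipped when forming the per-gene geometric mean, and no batch correction is attempted.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """counts: (genes, samples). Returns one size factor per sample."""
    with np.errstate(divide="ignore"):
        log_counts = np.log(counts)                   # log(0) -> -inf
    log_geo_mean = log_counts.mean(axis=1)            # per-gene log geometric mean
    usable = np.isfinite(log_geo_mean)                # drop genes with any zero count
    log_ratios = log_counts[usable] - log_geo_mean[usable, None]
    return np.exp(np.median(log_ratios, axis=0))      # median ratio per sample

def normalize(counts):
    sf = median_of_ratios_size_factors(counts)
    return counts / sf, sf

# Toy usage: the same expression profile sequenced at three depths.
rng = np.random.default_rng(0)
base = rng.poisson(50, size=(500, 1)).astype(float) + 1
counts = np.hstack([base, 2 * base, 0.5 * base])
norm, sf = normalize(counts)
```

Because every sample here shares one profile, the size factors recover the depth ratios and the normalized columns coincide.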

Title: Core Data Preprocessing Workflow for Biomarker Discovery

Advanced Feature Engineering Strategies

Dimensionality Reduction for Feature Extraction

Protocol: Use dimensionality reduction not just for visualization, but to create new, lower-dimensional features.

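- Non-Linear Embedding (for complex relationships): Apply UMAP with a fixed random seed, fitting it on the training set only and then transforming validation and test samples. The resulting 2-50 embedding coordinates become new features. t-SNE lacks a native out-of-sample transform, so it is better reserved for visualization.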

- Autoencoder-Based Reduction: Train a shallow undercomplete autoencoder on normalized data. Use the activations of the bottleneck layer as engineered features. This compresses information while capturing non-linearities.
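The fit-on-training-only rule can be illustrated with a small class. PCA is used here as a linear stand-in because it needs only NumPy; the same fit/transform pattern applies to the umap-learn UMAP estimator or to an autoencoder's encoder. The class name is hypothetical.

```python
import numpy as np

class TrainOnlyPCA:
    """Linear stand-in for the embedding step: fit on training samples, reuse on others."""

    def __init__(self, n_components):
        self.n_components = n_components

    def fit(self, x_train):
        self.mean_ = x_train.mean(axis=0)
        # Rows of vt are principal axes, ordered by explained variance.
        _, _, vt = np.linalg.svd(x_train - self.mean_, full_matrices=False)
        self.components_ = vt[: self.n_components]
        return self

    def transform(self, x):
        # Uses the training mean and axes; nothing is re-estimated on new samples.
        return (x - self.mean_) @ self.components_.T

# Toy usage: 100 training and 20 held-out samples with 1,000 features.
rng = np.random.default_rng(0)
x = rng.standard_normal((120, 1000))
train_idx, test_idx = np.arange(100), np.arange(100, 120)
pca = TrainOnlyPCA(n_components=10).fit(x[train_idx])
z_train = pca.transform(x[train_idx])
z_test = pca.transform(x[test_idx])
```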

Biological Knowledge-Driven Feature Engineering

Protocol: Integrate pathway and network databases to create biologically interpretable super-features.

- Gene Set Scoring: Using MSigDB, calculate per-sample enrichment scores for hallmark pathways (e.g., "HALLMARK_APOPTOSIS") via single-sample GSEA (ssGSEA) or Seurat's AddModuleScore function. This reduces ~20,000 genes to 50 hallmark pathway activity scores.

- Protein-Protein Interaction (PPI) Network Features: For mutation data, map genes to a PPI (e.g., STRING). Calculate network centrality measures (degree, betweenness) for each mutated gene in a sample's personal network. Use these as features.
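Both knowledge-driven features can be sketched in plain Python and NumPy. Two simplifications are assumptions, not the methods named above: the pathway score is the mean Z-score of member genes (simpler than ssGSEA or AddModuleScore), and a sample's personal network is taken to be the PPI subnetwork induced by its mutated genes, with unique undirected edges. The gene sets and edges in the usage example are toy values.

```python
import numpy as np

def gene_set_scores(z_expr, genes, gene_sets):
    """Mean Z-score of each gene set's members per sample (a simple, non-ssGSEA score)."""
    col = {g: i for i, g in enumerate(genes)}
    scores = {}
    for name, members in gene_sets.items():
        idx = [col[g] for g in members if g in col]  # ignore genes not measured
        if idx:
            scores[name] = z_expr[:, idx].mean(axis=1)
    return scores

def mutated_gene_degrees(ppi_edges, mutated_genes):
    """Degree of each mutated gene within the subnetwork induced by one sample's mutations."""
    mutated = set(mutated_genes)
    degree = {g: 0 for g in mutated}
    for a, b in ppi_edges:
        if a in mutated and b in mutated and a != b:
            degree[a] += 1
            degree[b] += 1
    return degree

# Toy usage: two samples, two hypothetical gene sets, three PPI edges.
genes = ["TP53", "MDM2", "CDKN1A", "EGFR", "KRAS"]
z = np.array([[1.0, 0.5, 1.5, -1.0, 0.0],
              [-1.0, -0.5, -1.5, 1.0, 2.0]])
sets = {"P53_AXIS": ["TP53", "MDM2", "CDKN1A"], "RTK_RAS": ["EGFR", "KRAS", "BRAF"]}
scores = gene_set_scores(z, genes, sets)
edges = [("TP53", "MDM2"), ("MDM2", "CDKN1A"), ("EGFR", "KRAS")]
deg = mutated_gene_degrees(edges, ["TP53", "MDM2", "KRAS"])
```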

Title: Three Pillars of Advanced Feature Engineering

Validation Framework for Preprocessing & Engineering

Experimental Protocol: Nested Cross-Validation for Pipeline Integrity

Objective: To prevent data leakage and over-optimistic performance estimation during preprocessing and feature engineering. Methodology:

- Outer Loop (Performance Estimation): Split data into K1 folds (e.g., 5). Hold out each fold in turn as the test fold.

- Inner Loop (Pipeline Tuning): On the remaining K1-1 folds, perform a second split (K2 folds). All preprocessing steps (imputation, normalization, scaling, feature selection) must be fitted on the inner-loop training folds and then applied to the inner-loop validation fold. This includes learning parameters for dimensionality reduction or calculating pathway scores.

- Final Training: The best pipeline from the inner loop is refit on all K1-1 folds.

- Final Testing: Apply the fully-defined pipeline (with all fitted parameters) to the held-out outer test fold. Repeating this for every outer fold and averaging the K1 test scores gives an approximately unbiased performance estimate.
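A compact NumPy sketch of this nested scheme follows. Z-scoring stands in for the full preprocessing pipeline and a ridge classifier for the model; both are assumptions chosen to keep the example self-contained. The point is structural: every statistic, including the scaling parameters, is computed only from the samples the current loop is allowed to see.

```python
import numpy as np

def fit_pipeline(x, y, alpha):
    """Fit Z-scoring and a ridge classifier on (x, y) only; y holds 0/1 labels."""
    mu, sd = x.mean(axis=0), x.std(axis=0) + 1e-8
    xs = (x - mu) / sd
    t = 2.0 * y - 1.0                      # recode labels to -1/+1
    w = np.linalg.solve(xs.T @ xs + alpha * np.eye(x.shape[1]), xs.T @ t)
    return mu, sd, w, t.mean()             # unpenalized intercept for centered features

def accuracy(model, x, y):
    mu, sd, w, b = model
    return float(np.mean((((x - mu) / sd) @ w + b > 0) == y))

def nested_cv(x, y, alphas, k_outer=5, k_inner=3, seed=0):
    rng = np.random.default_rng(seed)
    all_idx = np.arange(len(y))
    outer_scores = []
    for test_idx in np.array_split(rng.permutation(len(y)), k_outer):
        train_idx = np.setdiff1d(all_idx, test_idx)
        inner_folds = np.array_split(rng.permutation(train_idx), k_inner)

        def inner_score(alpha):
            # Every inner fit sees only outer-training samples.
            scores = []
            for val_idx in inner_folds:
                fit_idx = np.setdiff1d(train_idx, val_idx)
                model = fit_pipeline(x[fit_idx], y[fit_idx], alpha)
                scores.append(accuracy(model, x[val_idx], y[val_idx]))
            return np.mean(scores)

        best_alpha = max(alphas, key=inner_score)
        model = fit_pipeline(x[train_idx], y[train_idx], best_alpha)  # refit on K1-1 folds
        outer_scores.append(accuracy(model, x[test_idx], y[test_idx]))
    return float(np.mean(outer_scores)), outer_scores

# Toy usage: three informative features among 40.
rng = np.random.default_rng(42)
y = rng.integers(0, 2, size=150)
x = rng.standard_normal((150, 40))
x[:, :3] += 1.5 * y[:, None]
mean_acc, fold_accs = nested_cv(x, y, alphas=[0.1, 1.0, 10.0, 100.0])
```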

Table 2: Impact of Proper Preprocessing on Model Performance

| Preprocessing Step | AUC-ROC (Mean +/- SD) | Model | Change vs. Previous Step | Notes |
|---|---|---|---|---|
| Raw Count Matrix | 0.61 +/- 0.05 | Logistic Regression | Baseline | High variance, prone to overfitting. |
| + Normalization (DESeq2) | 0.72 +/- 0.04 | Logistic Regression | +0.11 | Reduces technical sample-to-sample variation. |
| + Batch Correction (ComBat) | 0.78 +/- 0.03 | Logistic Regression | +0.06 | Removes bias from processing batches. |
| + Pathway Features (ssGSEA) | 0.85 +/- 0.02 | Logistic Regression | +0.07 | Introduces biologically interpretable features. |

The Scientist's Toolkit: Essential Reagent Solutions

Table 3: Key Research Reagents & Computational Tools for Preprocessing

| Item/Tool Name | Category | Primary Function in Preprocessing |
|---|---|---|
| DESeq2 (R) | Software/Bioinformatics Package | Performs variance-stabilizing normalization and dispersion estimation for RNA-seq count data. |
| Scanpy (Python) | Software/Bioinformatics Package | Comprehensive toolkit for single-cell data analysis, including QC, normalization, and PCA/UMAP. |
| ComBat (sva R package) | Algorithm | Removes batch effects from high-dimensional data using empirical Bayes frameworks. |
| MSigDB | Biological Database | Curated gene sets for calculating pathway activity scores (knowledge-driven features). |
| Harmony (R/Python) | Algorithm | Integrates single-cell or bulk datasets by removing dataset-specific effects. |
| UMAP | Algorithm | Non-linear dimensionality reduction for feature extraction and visualization. |
| Macenko Stain Normalizer | Algorithm | Standardizes color distribution in histopathology images to mitigate stain variability. |
| TruSight Oncology 500 Kit (Illumina) | Wet-lab Reagent | Targeted sequencing panel for comprehensive cancer variant detection; requires specific bioinformatic pipelines for preprocessing. |
| Seurat (R) | Software/Bioinformatics Package | Toolkit for single-cell genomics, specializing in data normalization, integration, and clustering-based feature creation. |

This whitepaper details the application of Convolutional Neural Networks (CNNs) in histopathology and radiology for AI-driven predictive biomarker discovery in oncology research. The integration of deep learning with high-dimensional medical imaging data enables the extraction of quantitative, reproducible features that can serve as non-invasive biomarkers for diagnosis, prognosis, and therapeutic response prediction.

Core CNN Architectures for Medical Imaging

Table 1: Performance Comparison of CNN Architectures on Histopathology (Camelyon16) and Radiology (NSCLC-Radiomics) Datasets

| Architecture | Input Size | Histopathology (Patch AUC) | Radiology (Volumetric AUC) | Key Advantage for Biomarker Discovery |
|---|---|---|---|---|
| ResNet-50 | 224x224 | 0.991 | 0.872 | Robust feature learning via skip connections |
| Inception-v3 | 299x299 | 0.987 | 0.865 | Multi-scale feature extraction |
| DenseNet-121 | 224x224 | 0.993 | 0.878 | Feature reuse, parameter efficiency |
| EfficientNet-B3 | 300x300 | 0.994 | 0.881 | Compound scaling optimization |
| ViT-B/16 | 224x224 | 0.985 | 0.869 | Global context via self-attention |

Data synthesized from recent studies (2023-2024) including Nat Med 2024;30:2, Med Image Anal 2024;92:103083.

Experimental Protocols

Protocol A: Whole Slide Image (WSI) Analysis for Histopathology

Objective: To discover stromal tumor-infiltrating lymphocyte (sTIL) density as a predictive biomarker for immunotherapy response.

- Slide Digitization: Scan H&E-stained slides at 40x magnification (0.25 µm/pixel resolution) using Aperio AT2 or Philips Ultra Fast Scanner.

- Patch Extraction: Use OpenSlide library to extract 256x256 pixel patches at 20x equivalent magnification. Exclude background using Otsu thresholding.

- Data Annotation: Expert pathologists label patches for sTIL density (0-100%) using Digital Slide Archive.

- Model Training: Train a ResNet-50 using a 5-fold cross-validation scheme. Loss function: Mean Squared Error. Optimizer: AdamW (lr=1e-4, weight decay=1e-5).

- Inference & Biomarker Aggregation: Apply trained model to entire WSI. Aggregate patch-level predictions via attention-based multiple instance learning to generate a patient-level sTIL score.
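Two steps of Protocol A, background exclusion and attention-based aggregation, are sketched below in NumPy. Assumptions: patches are 8-bit grayscale, tissue is darker than the glass background, a patch is kept when at least half its pixels fall at or below the Otsu threshold, and the attention parameters (v, w), which a real model would learn jointly with the patch encoder, are random here. The pooling follows the attention-MIL form of Ilse et al. (2018).

```python
import numpy as np

def otsu_threshold(gray):
    """gray: uint8 image. Returns the threshold maximizing between-class variance."""
    hist = np.bincount(gray.ravel(), minlength=256).astype(float)
    p = hist / hist.sum()
    omega = np.cumsum(p)                      # probability of the class "<= t"
    mu = np.cumsum(p * np.arange(256))        # cumulative mean intensity
    with np.errstate(divide="ignore", invalid="ignore"):
        sigma_b = (mu[-1] * omega - mu) ** 2 / (omega * (1.0 - omega))
    return int(np.argmax(np.nan_to_num(sigma_b)))

def is_tissue_patch(gray_patch, threshold, min_fraction=0.5):
    """Keep a patch when enough pixels are darker than the background threshold."""
    return (gray_patch <= threshold).mean() >= min_fraction

def attention_mil_pool(h, v, w):
    """h: (K patches, d) embeddings; v: (L, d); w: (L,). Returns slide embedding and weights."""
    scores = np.tanh(h @ v.T) @ w
    a = np.exp(scores - scores.max())
    a /= a.sum()                              # softmax over patches
    return a @ h, a

# Toy usage: half-tissue image, then pooling of 8 random 16-d patch embeddings.
rng = np.random.default_rng(0)
gray = np.full((64, 64), 220, dtype=np.uint8)
gray[:, :32] = 50
t = otsu_threshold(gray)
patch_embeddings = rng.standard_normal((8, 16))
z, a = attention_mil_pool(patch_embeddings, rng.standard_normal((4, 16)), rng.standard_normal(4))
```

In a regression setting like the sTIL score, a linear head on z would produce the patient-level prediction.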

Protocol B: CT Radiomics Pipeline for Lung Nodule Characterization

Objective: To extract quantitative imaging biomarkers from chest CT for differentiating benign from malignant pulmonary nodules.

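- Image Acquisition & Preprocessing: Acquire non-contrast CT scans at 1.0 mm slice thickness. Convert stored voxel values to Hounsfield Units (HU) using the DICOM rescale slope and intercept, then resample to isotropic voxels. N4 bias field correction, which targets MRI intensity inhomogeneity, is generally unnecessary for CT because HU values are already calibrated.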

- Segmentation: Use nnU-Net for automatic nodule segmentation, followed by radiologist refinement in 3D Slicer.

- Feature Extraction: Extract 851 radiomic features per nodule using PyRadiomics v3.0.1 (shape, first-order statistics, texture).

- Deep Feature Extraction: Pass 64x64x64 mm³ isotropic volumes centered on the nodule through a 3D DenseNet.

- Biomarker Integration: Concatenate handcrafted radiomic features with deep features. Train a logistic regression classifier with L1 regularization to identify top predictive features.
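The preprocessing and cropping that precede the 3D DenseNet can be sketched as follows. Assumptions: the volume has already been resampled to 1 mm isotropic voxels (so 64 voxels span 64 mm), the nodule center is given as (z, y, x) voxel indices, padding uses -1000 HU (air), and the -1000 to 400 HU window is an illustrative choice, not one stated in the protocol.

```python
import numpy as np

def to_hounsfield(stored, slope, intercept):
    """Map stored CT values to HU using the DICOM RescaleSlope and RescaleIntercept."""
    return stored.astype(np.float32) * slope + intercept

def crop_centered(volume, center, size, fill=-1000.0):
    """Crop a size^3 cube around center (z, y, x), padding with air where it leaves the scan."""
    half = size // 2
    out = np.full((size, size, size), fill, dtype=np.float32)
    src, dst = [], []
    for c, dim in zip(center, volume.shape):
        lo, hi = c - half, c - half + size
        src.append(slice(max(lo, 0), min(hi, dim)))
        dst.append(slice(max(lo, 0) - lo, size - (hi - min(hi, dim))))
    out[tuple(dst)] = volume[tuple(src)]
    return out

def window(hu, lo=-1000.0, hi=400.0):
    """Clip to a lung window and rescale to [0, 1] for network input."""
    return (np.clip(hu, lo, hi) - lo) / (hi - lo)

# Toy usage: a nodule near the scan border, so part of the cube is padded.
stored = np.random.default_rng(0).integers(0, 2000, size=(100, 100, 100))
hu = to_hounsfield(stored, slope=1.0, intercept=-1024.0)
cube = crop_centered(hu, center=(10, 50, 90), size=64)
patch = window(cube)
```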

Visualizing Key Workflows

Title: WSI Analysis Pipeline for Biomarker Discovery

Title: Radiomics-AI Fusion Pipeline for CT Biomarkers

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for CNN-Based Imaging Biomarker Research

| Item / Solution | Vendor / Platform | Function in Experiment |
|---|---|---|
| Aperio AT2 Scanner | Leica Biosystems | High-throughput digitization of histopathology slides at 40x (0.25 µm/pixel). |
| Philips IntelliSpace Discovery | Philips | Integrated platform for radiology AI development & PACS integration. |
| OpenSlide Python API | OpenSlide Project | Open-source library for reading and tiling whole-slide image files (SVS, NDPI). |
| 3D Slicer v5.2 | Slicer Community | Open-source platform for medical image segmentation and visualization. |
| PyRadiomics v3.0.1 | Computational Imaging & Bioinformatics Lab, Harvard | Standardized extraction of handcrafted radiomic features from 2D/3D regions. |
| MONAI (Medical Open Network for AI) | Project MONAI | PyTorch-based framework for deep learning in healthcare imaging. |
| Digital Slide Archive (DSA) | Emory University & Kitware | Web-based platform for managing, annotating, and analyzing whole slide images. |
| nnU-Net | Isensee et al. | Self-configuring framework for automatic medical image segmentation. |
| Vectra Polaris | Akoya Biosciences | Multiplex immunofluorescence imaging for spatial biomarker validation. |
| NVIDIA Clara Discovery | NVIDIA | Application framework for AI in genomics, microscopy, and radiology. |

Validation and Clinical Translation Framework

Table 3: Multi-Cohort Validation Strategy for CNN-Derived Biomarkers

| Validation Stage | Cohort Size (Minimum) | Primary Endpoint | Statistical Requirement |
|---|---|---|---|
| Discovery | n=300 (retrospective) | Feature Stability (ICC > 0.8) | Technical validation of repeatability. |
| Analytical Validation | n=500 (multi-institutional) | Agreement with Gold Standard (κ > 0.6) | Generalizability across scanners/protocols. |
| Clinical Validation | n=1000 (prospective, annotated) | Association with Outcome (p < 0.01, multivariate) | Independent prognostic/predictive value. |
| Clinical Utility | n=3000 (randomized trial data) | Improvement in Decision Curve Analysis | Net benefit over standard of care. |
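The two agreement statistics named in Table 3 can be computed as below. This sketch assumes ICC(2,1) (two-way random effects, absolute agreement, single measurement, after Shrout and Fleiss) for test-retest feature stability and unweighted Cohen's kappa for agreement with a categorical gold standard; other ICC forms may suit a given study design.

```python
import numpy as np

def cohens_kappa(a, b):
    """Chance-corrected agreement between two raters' categorical labels."""
    a, b = np.asarray(a), np.asarray(b)
    p_o = np.mean(a == b)
    p_e = sum(np.mean(a == k) * np.mean(b == k) for k in np.union1d(a, b))
    return (p_o - p_e) / (1.0 - p_e)

def icc_2_1(x):
    """ICC(2,1). x: (n subjects, k repeated measurements, e.g. test-retest scans)."""
    n, k = x.shape
    grand = x.mean()
    ms_rows = k * np.sum((x.mean(axis=1) - grand) ** 2) / (n - 1)   # between subjects
    ms_cols = n * np.sum((x.mean(axis=0) - grand) ** 2) / (k - 1)   # between measurements
    resid = x - x.mean(axis=1, keepdims=True) - x.mean(axis=0, keepdims=True) + grand
    ms_err = np.sum(resid ** 2) / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n)

# Toy usage: one radiomic feature measured twice on 50 nodules with small noise.
rng = np.random.default_rng(0)
true_value = rng.normal(0, 1, size=50)
scans = np.column_stack([true_value + rng.normal(0, 0.2, 50) for _ in range(2)])
icc = icc_2_1(scans)
stable = icc > 0.8
```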

The integration of CNNs with histopathology and radiology provides a powerful, scalable platform for discovering novel predictive imaging biomarkers in oncology. The reproducible, quantitative features extracted by these models offer a path toward more precise patient stratification and treatment selection in drug development pipelines.

In the quest for AI-driven predictive biomarker discovery in oncology, the integration of disparate, high-dimensional data modalities—such as genomic sequences, histopathology whole-slide images (WSIs), proteomic profiles, and clinical records—presents a profound computational challenge. This technical guide explores the synergistic application of Graph Neural Networks (GNNs) and Transformer architectures to model the complex, relational biology of cancer. By constructing multi-modal biological graphs and leveraging cross-attention mechanisms, these frameworks can uncover novel, interpretable biomarkers and predictive signatures that transcend single-data-type analyses, ultimately accelerating therapeutic development.

Cancer is a systems-level disease driven by intricate interactions between genomic alterations, cellular microenvironment, and patient physiology. Traditional single-modal machine learning approaches often fail to capture these interactions. The integration of multi-omics data (genomics, transcriptomics, proteomics) with imaging and clinical data through GNNs and Transformers offers a path to a more holistic, predictive model of tumor behavior and therapeutic response.

Foundational Architectures

Graph Neural Networks (GNNs) for Biological Networks

GNNs operate on graph structures G = (V, E), where nodes V represent biological entities (e.g., genes, cells, patients) and edges E represent interactions (e.g., protein-protein interactions, spatial proximity). Message-passing mechanisms allow information to propagate across the network.

Key Variants:

- Graph Convolutional Networks (GCNs): Perform localized spectral convolutions.

- Graph Attention Networks (GATs): Use attention mechanisms to weigh neighbor node importance.

- Graph Transformer Networks: Integrate self-attention layers within the graph structure.
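The message-passing idea can be made concrete with a single GCN layer in NumPy. This is the symmetric-normalized propagation rule of Kipf and Welling (2017), shown without training; the toy path graph and identity weights are assumptions chosen so the output is easy to check by hand.

```python
import numpy as np

def gcn_layer(adj, h, weight):
    """One graph-convolution step: ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    a_hat = adj + np.eye(adj.shape[0])                 # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    norm_adj = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]
    return np.maximum(norm_adj @ h @ weight, 0.0)      # aggregate neighbours, then ReLU

# Toy usage: path graph gene0 - gene1 - gene2 with one-hot node features.
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
out = gcn_layer(adj, np.eye(3), np.eye(3))
```

After one layer, node 0 mixes in information from its neighbour (node 1) but not yet from node 2; stacking layers widens this receptive field one hop at a time.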

Transformer Architectures for Sequential and Non-Sequential Data

Originally designed for sequences, the Transformer's self-attention mechanism computes pairwise interactions between all elements in a set, making it naturally suited for set-structured biological data and long-range dependencies.

Core Components:

- Multi-Head Self-Attention: Captures diverse relational patterns.

- Positional Encoding: Injects spatial or sequential order.

- Cross-Attention: Crucial for fusing different modalities (e.g., aligning image regions with genomic features).
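Cross-attention reduces to scaled dot-product attention in which queries come from one modality and keys and values from another. The single-head NumPy sketch below assumes image-patch embeddings as queries and gene-expression token embeddings as keys and values; the projection matrices are random stand-ins for learned weights. Self-attention is the special case where both inputs are the same set, and multi-head attention runs several such heads and concatenates their outputs.

```python
import numpy as np

def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, keys_values, w_q, w_k, w_v):
    """Single-head scaled dot-product cross-attention.
    queries: (n_q, d_model), e.g. image-patch embeddings
    keys_values: (n_kv, d_model), e.g. gene-expression token embeddings"""
    q, k, v = queries @ w_q, keys_values @ w_k, keys_values @ w_v
    weights = softmax(q @ k.T / np.sqrt(k.shape[-1]), axis=-1)  # (n_q, n_kv)
    return weights @ v, weights

# Toy usage: 10 patches attend over 50 gene tokens.
rng = np.random.default_rng(0)
d_model, d_head = 32, 16
patches = rng.standard_normal((10, d_model))
genes = rng.standard_normal((50, d_model))
w_q, w_k, w_v = (rng.standard_normal((d_model, d_head)) / np.sqrt(d_model) for _ in range(3))
fused, attn = cross_attention(patches, genes, w_q, w_k, w_v)
```

Each row of attn shows which gene tokens a given image patch drew on, which is the kind of signal used when attention weights are mined for biomarker hypotheses.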

Integration Strategies: A Technical Framework

Hierarchical Multi-Modal Graph Construction

The first step is representing heterogeneous data as a unified graph. A common paradigm involves a hierarchical structure.

Diagram Title: Hierarchical Multi-Modal Graph for Oncology Data

Fusion via Cross-Attention and Message Passing

Two primary technical approaches enable integration:

- Late Fusion with Cross-Modal Attention: Each modality is processed by a dedicated encoder (e.g., CNN for images, Transformer for sequences). Their latent representations are fused using cross-attention layers in a joint Transformer block.

- Early Fusion via Heterogeneous Graph Learning: All entities are projected into a shared graph. A heterogeneous GNN (e.g., RGCN) with edge-type-specific parameters performs message passing directly across different node and edge types.

Diagram Title: Cross-Modal Fusion Architecture for Biomarker Discovery

Experimental Protocols & Quantitative Data

Protocol: Multi-Modal Predictor for Immunotherapy Response

This protocol outlines a standard experiment for predicting response to Immune Checkpoint Inhibitors (ICIs).

Objective: Predict binary response (Responder/Non-Responder) from pre-treatment multi-modal data.

Dataset: A curated cohort from public sources (e.g., TCGA, CPTAC) with matched WSI, RNA-Seq, and clinical outcomes.

Workflow:

- Graph Construction:

- Nodes: Patient-level, Tumor Sample, Gene (from top N variable genes), Image Patch (from tiled WSI).

- Edges: Patient-Patient (clinical similarity), Patient-Tumor, Tumor-Gene (expression > threshold), Gene-Gene (from PPI database like STRING), Tumor-Image Patch.

- Model Architecture: A 3-layer Heterogeneous GAT (HGAT) followed by a Transformer encoder with 4 attention heads for global pooling.

- Training: Supervised training with cross-entropy loss, 5-fold cross-validation, Adam optimizer.

- Evaluation: AUROC, AUPRC, and Kaplan-Meier analysis of stratified risk groups.
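Two of the edge types in this protocol can be built with NumPy as sketched below. The expression threshold, cosine similarity, and k-nearest-neighbour rule for patient-patient edges are illustrative assumptions. Each function returns a (2, n_edges) index array, the edge_index layout PyTorch Geometric uses, so each result could in principle be assigned to the matching edge type of a HeteroData object.

```python
import numpy as np

def tumor_gene_edges(expr, threshold):
    """expr: (tumors, genes). Edge (tumor i, gene j) wherever expression exceeds threshold."""
    t_idx, g_idx = np.nonzero(expr > threshold)
    return np.stack([t_idx, g_idx])            # shape (2, n_edges)

def patient_knn_edges(clinical, k):
    """Connect each patient to its k most similar patients (cosine similarity)."""
    z = clinical / np.linalg.norm(clinical, axis=1, keepdims=True)
    sim = z @ z.T
    np.fill_diagonal(sim, -np.inf)             # exclude self-loops
    nbrs = np.argsort(-sim, axis=1)[:, :k]
    src = np.repeat(np.arange(len(clinical)), k)
    return np.stack([src, nbrs.ravel()])

# Toy usage: 2 tumors x 3 genes, and 4 patients with 2 clinical features.
expr = np.array([[5.0, 0.1, 3.0],
                 [0.2, 4.0, 0.0]])
tg = tumor_gene_edges(expr, threshold=1.0)
clinical = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
pp = patient_knn_edges(clinical, k=1)
```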

Table 1: Performance Comparison of Multi-Modal Integration Methods on a Simulated NSCLC ICI Cohort

| Model Architecture | Data Modalities Used | AUROC (Mean ± SD) | AUPRC (Mean ± SD) | Interpretation Score* |
|---|---|---|---|---|
| Baseline (Logistic Reg.) | Clinical Only | 0.62 ± 0.05 | 0.58 ± 0.06 | Low |
| ResNet-50 | WSI Only | 0.71 ± 0.04 | 0.67 ± 0.05 | Medium |
| Transformer | RNA-Seq Only | 0.76 ± 0.03 | 0.72 ± 0.04 | Medium |
| Early Fusion (HGAT) | All (WSI, RNA-Seq, Clinical) | 0.85 ± 0.02 | 0.81 ± 0.03 | High |
| Late Fusion (Cross-Attn) | All (WSI, RNA-Seq, Clinical) | 0.87 ± 0.02 | 0.83 ± 0.02 | Medium-High |

*Interpretation Score: Assesses the ease of extracting biologically plausible biomarker hypotheses from the model (e.g., via attention weights or node importance scores).

Protocol: Spatial Transcriptomics Guided Cell Interaction Graph

Objective: Model cell-cell communication in the tumor microenvironment (TME) to discover stromal biomarkers.

Methodology:

- Cell Graph from Imaging: Segment nuclei from H&E or multiplex immunofluorescence (mIF) images. Each cell is a node.

- Node Features: Morphological features from imaging and assigned gene expression profiles from aligned spatial transcriptomics spots (using deconvolution methods).

- Edge Definition: Connect cells within a spatial distance threshold (e.g., 50µm). Edge attributes can include distance and co-expression correlation.

- Model & Task: A GNN is trained to classify cell types or predict ligand-receptor interaction activity between neighboring cells.
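The distance-threshold edge rule can be sketched as below. Assumptions: nuclear centroids are in pixels and are converted with the 0.25 µm/pixel resolution from Protocol A, and the all-pairs distance matrix is only practical for small regions; for whole slides, a k-d tree query such as SciPy's cKDTree.query_pairs would avoid O(n²) memory.

```python
import numpy as np

def radius_graph(coords_um, radius_um=50.0):
    """coords_um: (n_cells, 2) centroid positions in micrometres.
    Returns a (2, n_edges) directed edge list and the matching edge distances."""
    diff = coords_um[:, None, :] - coords_um[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    within = (dist <= radius_um) & ~np.eye(len(coords_um), dtype=bool)  # no self-edges
    src, dst = np.nonzero(within)
    return np.stack([src, dst]), dist[src, dst]

# Toy usage: three nuclei on a line, converted from pixels to micrometres.
centroids_px = np.array([[0, 0], [120, 0], [400, 0]], dtype=float)
coords_um = centroids_px * 0.25
edges, dists = radius_graph(coords_um, radius_um=50.0)
```

The returned distances can serve directly as the edge attributes mentioned above.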

Table 2: Key Reagent Solutions for Featured Multi-Modal Experiments

| Research Reagent / Tool | Provider Example | Function in Experimental Protocol |
|---|---|---|
| 10x Genomics Visium | 10x Genomics | Enables spatially resolved whole-transcriptome analysis, linking histology image spots to RNA-seq data. |
| CODEX/Phenocycler | Akoya Biosciences | Provides high-plex protein imaging for defining cell states and neighborhoods in the TME for graph node features. |
| STRING Database | EMBL | Source of curated protein-protein interaction networks used to define prior-knowledge edges in biological graphs. |
| TCGA/CPTAC Portals | NCI/NIH | Primary sources for curated, publicly available matched multi-omics and clinical oncology data for model training. |
| Scanpy / Squidpy | Open Source (Python) | Toolkits for single-cell and spatial omics data analysis, including neighbor-graph and spatial-graph construction that can feed GNN models. |
| PyTorch Geometric (PyG) | Open Source (Python) | A foundational library for building and training GNNs on heterogeneous graphs, essential for custom model development. |
| DGL-LifeSci | Open Source (Python) | Domain-specific library for chemical and biological graph deep learning, offering pre-built modules for biomolecules. |

Discussion & Future Directions

The fusion of GNNs and Transformers provides a powerful, flexible framework for multi-modal integration. Key challenges remain:

- Scalability: Processing graphs with millions of nodes (e.g., all cells in a cohort).

- Interpretability: Moving from high-performance predictions to causal, mechanistic biological insights.

- Data Harmonization: Handling batch effects and technical variability across disparate data sources.

Future work will focus on dynamic graph models that capture disease progression and self-supervised pre-training on large-scale biomedical graphs to improve data efficiency. In the context of predictive biomarker discovery, these techniques promise to move beyond single-gene biomarkers towards complex, multi-modal signatures encompassing genetics, cellular context, and patient phenotype, thereby delivering more reliable and actionable predictions for oncology drug development.

This technical guide presents a focused analysis of emerging case studies within a broader thesis on AI-driven predictive biomarker discovery in oncology. The integration of machine learning (ML) and deep learning (DL) with high-dimensional molecular and clinical data is transforming the identification of biomarkers that predict response to three primary therapeutic modalities: immunotherapy, targeted therapy, and chemotherapy. This shift from traditional, hypothesis-driven discovery to data-driven, pattern-recognition approaches is accelerating precision oncology and revealing novel biological insights.

AI-Discovered Biomarkers in Immunotherapy

Immunotherapy, particularly immune checkpoint inhibitors (ICIs), has shown remarkable but heterogeneous clinical benefits. AI models are deciphering complex predictive signatures beyond PD-L1.

Case Study 1: Multimodal Integration for ICI Response Prediction

A 2023 study employed a DL framework integrating whole-slide histopathology images (WSIs), genomic mutational profiles, and clinical data to predict response to anti-PD-1 therapy in non-small cell lung cancer (NSCLC).

Experimental Protocol:

- Data Curation: Retrospective cohort of 500 NSCLC patients treated with pembrolizumab. Data included H&E-stained WSIs, targeted next-generation sequencing (NGS) data (500-gene panel), and baseline clinical variables (e.g., smoking history).

- Feature Extraction:

- Histopathology: A pre-trained convolutional neural network (CNN), ResNet50, was used to extract ~1000 feature vectors from tiled WSI regions.

- Genomics: Somatic mutations were encoded as binary presence/absence vectors. Key immunomodulatory genes (e.g., POLE, STK11) were highlighted.

- Clinical: Variables were one-hot encoded.

- Model Architecture: A multibranch neural network with separate encoders for each data type, followed by concatenation and fully connected layers for binary classification (responder vs. non-responder).

- Validation: The model was validated on an independent external cohort (n=150), with performance measured by area under the receiver operating characteristic curve (AUROC).

Key Quantitative Findings:

Table 1: Performance of Multimodal AI Model vs. Single-Modality Models

| Model Input Data | AUROC (Internal Test) | AUROC (External Validation) |
|---|---|---|
| Histopathology (WSI) only | 0.68 | 0.62 |
| Genomics only | 0.72 | 0.70 |
| Clinical only | 0.63 | 0.59 |
| Multimodal AI (Integrated) | 0.85 | 0.81 |

Signaling Pathway & Workflow Diagram:

AI Workflow for Multimodal Immunotherapy Biomarker Discovery

Case Study 2: Spatial Transcriptomics Deconvolution

An AI model analyzing spatial transcriptomics data identified a novel biomarker niche: "tertiary lymphoid structure (TLS) maturity score," predictive of response to ICIs in melanoma.

AI-Discovered Biomarkers in Targeted Therapy

AI excels at identifying synthetic lethal interactions and rare oncogenic driver combinations that define patient subgroups for targeted agents.

Case Study: Deep Learning on Drug Screens & CRISPR Knockouts

A 2024 study used a graph neural network (GNN) trained on large-scale pharmacogenomic databases (e.g., DepMap) to predict vulnerability to PARP inhibitors beyond BRCA mutations.

Experimental Protocol:

- Graph Construction: A heterogeneous knowledge graph was built with nodes representing genes, cell lines, drugs, and pathways. Edges represented relationships (e.g., gene-gene interaction, cell line mutation, drug target).

- Training Objective: The GNN was trained to predict cell line sensitivity (IC50) to olaparib based on the graph structure and node features (e.g., mutation status, expression).

- Discovery: The model highlighted a cluster of DNA repair genes (e.g., RAD51C, FANCA) with low-frequency loss-of-function mutations. Cell lines with these mutations were predicted and experimentally validated to be olaparib-sensitive.

- Clinical Correlation: Mining of real-world genomic data identified ~3% of ovarian and prostate cancer patients with these alterations who had not previously qualified for PARP inhibitor therapy.

Key Quantitative Findings:

Table 2: AI-Predicted vs. Validated Sensitivity to Olaparib

| Gene Alteration | Predicted IC50 Fold-Change (vs. WT) | Validated IC50 Fold-Change (vs. WT) | Prevalence in TCGA OV/PRAD |
|---|---|---|---|
| BRCA1 mut (known) | 12.5 | 10.8 | 5-7% |
| RAD51C mut (AI-predicted) | 8.2 | 7.5 | 1.2% |
| FANCA mut (AI-predicted) | 6.7 | 6.1 | 0.8% |

AI-Discovered Biomarkers in Chemotherapy

Chemotherapy response has been difficult to predict due to polygenic mechanisms. AI models are uncovering gene expression networks associated with drug metabolism and cellular resilience.

Case Study: Neural Network on Pan-Cancer Expression for Platinum Response

A model trained on The Cancer Genome Atlas (TCGA) RNA-seq data from over 10,000 samples across 33 cancer types identified a conserved 50-gene expression signature related to oxidative stress management that predicts sensitivity to platinum-based agents.

Experimental Protocol:

- Input & Preprocessing: Normalized RNA-seq (TPM) data from TCGA. Patients were labeled as "sensitive" or "resistant" based on pathologic response criteria or progression-free survival.

- Model Architecture: A variational autoencoder (VAE) for dimensionality reduction, followed by a random forest classifier. The VAE compressed the ~20,000-gene expression space into a 128-dimensional latent space.

- Signature Extraction: Genes with the highest weights in the latent dimensions most correlated with the classifier's decision were extracted.

- Functional Validation: siRNA knockdown of top signature genes (e.g., TXNRD1, SLC7A11) in resistant cell lines increased cisplatin-induced apoptosis.

Key Quantitative Findings:

Table 3: Performance of Oxidative Stress Signature in Predicting Platinum Response

| Cancer Type | Signature AUROC | Hazard Ratio (PFS) for Signature-High vs. Low |
|---|---|---|
| High-Grade Serous Ovarian | 0.79 | 0.45 (95% CI: 0.32-0.63) |
| Lung Adenocarcinoma | 0.73 | 0.58 (95% CI: 0.42-0.80) |
| Bladder Urothelial Carcinoma | 0.76 | 0.52 (95% CI: 0.38-0.71) |

Pathway Logic Diagram:

AI-Discovered Oxidative Stress Pathway in Platinum Response

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Validating AI-Discovered Biomarkers

| Item / Reagent | Function in Validation | Example Product/Catalog |
|---|---|---|
| CRISPR-Cas9 Knockout Kits | Functional validation of AI-predicted gene targets by generating isogenic cell line models. | Synthego Synthetic sgRNA & Electroporation Kit. |
| Multiplex Immunofluorescence (mIF) Panels | Spatial validation of AI-identified tumor microenvironment features (e.g., tertiary lymphoid structures (TLS), immune cell spatial relationships). | Akoya Biosciences Opal 7-Color Automation Kit. |
| Targeted NGS Panels (Custom) | Confirm presence of AI-predicted rare genomic biomarkers in patient cohorts. | Illumina TruSeq Custom Amplicon v2. |
| Organoid/3D Cell Culture Systems | Test drug response predictions in more physiologically relevant ex vivo models. | Corning Matrigel for 3D Culture. |
| Single-Cell RNA-seq Library Prep Kits | Deconvolute AI-identified bulk expression signatures at cellular resolution. | 10x Genomics Chromium GEM-X Single Cell 3' Kit v4. |
| Phospho-Specific Antibody Arrays | Validate AI-inferred signaling pathway activity states. | R&D Systems Proteome Profiler Human Phospho-Kinase Array. |

The integration of artificial intelligence (AI) into oncology research has catalyzed a paradigm shift in predictive biomarker discovery. This whitepaper details the critical translational pathway required to transition an AI-discovered biomarker signature from a computational algorithm to a validated clinical assay. The core thesis is that robust validation, grounded in classical molecular biology and clinical trial frameworks, is indispensable for transforming algorithmic predictions into tools that can guide therapeutic decisions and improve patient outcomes in oncology.

The Translational Pipeline: From Discovery to Clinical Utility

The journey of an AI-discovered biomarker follows a structured, multi-phase pipeline. Failure at any stage can invalidate even the most promising computational finding.

Table 1: Key Stages in the Translational Pathway for AI-Discovered Biomarkers

| Stage | Primary Objective | Key Activities & Outputs | Success Metrics |
|---|---|---|---|
| 1. In Silico Discovery | Identify candidate biomarkers from high-dimensional data. | Multi-omics integration (genomics, transcriptomics, proteomics, digital pathology). Unsupervised/supervised ML model training. | Model AUC >0.85, cross-validation consistency, biological plausibility. |
| 2. Analytical Validation | Verify the assay measures the biomarker accurately and reliably. | Development of a prototype assay (e.g., RNA-seq panel, IHC, multiplex immunoassay). Determination of precision, accuracy, sensitivity, specificity, and dynamic range. | Intra/inter-assay CV <15%, >95% specificity/sensitivity in controlled samples, established LOD/LOQ. |
| 3. Biological/Clinical Validation | Confirm biomarker association with the biological phenotype or clinical endpoint. | Retrospective analysis on independent, well-annotated patient cohorts. Correlation with treatment response (ORR, PFS) or prognosis (OS). | Statistically significant hazard/odds ratio (p<0.05), clinical utility index. |
| 4. Clinical Qualification & Regulatory Approval | Establish evidentiary standard for use in a specific clinical context. | Prospective-retrospective (blinded) analysis from phase II/III trials. Submission to regulatory bodies (FDA, EMA). | Achievement of primary endpoint in prespecified analysis, regulatory approval (e.g., FDA PMA or 510(k)). |
| 5. Clinical Implementation | Integrate assay into routine clinical workflow. | Development of clinical guidelines, reimbursement strategies, and education for oncologists. | Broad adoption, impact on treatment decisions, improvement in population-level outcomes. |
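The Stage 2 precision criterion (intra/inter-assay CV <15%) reduces to simple arithmetic on replicate measurements of a control sample. The sketch below uses one common convention (intra-assay CV as the mean of within-run CVs, inter-assay CV as the CV of the run means); laboratories and guidance documents differ in the exact definition, and the replicate values are hypothetical.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation in percent: sample SD / mean * 100."""
    return stdev(values) / mean(values) * 100

def intra_inter_cv(runs):
    """runs: list of assay runs, each a list of replicate measurements of one control.
    Intra-assay CV = mean of the within-run CVs; inter-assay CV = CV of the run means."""
    intra = mean(cv_percent(r) for r in runs)
    inter = cv_percent([mean(r) for r in runs])
    return intra, inter

# Hypothetical signature scores for one control sample: 3 runs x 3 replicates
runs = [[10.2, 10.5, 9.9], [10.8, 11.0, 10.6], [9.7, 10.1, 10.0]]
intra, inter = intra_inter_cv(runs)
meets_threshold = intra < 15 and inter < 15  # Stage 2 success metric from Table 1
print(f"intra-assay CV={intra:.1f}%, inter-assay CV={inter:.1f}%, pass={meets_threshold}")
```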

Experimental Protocols for Critical Validation Phases

Protocol 1: Orthogonal Verification of a Transcriptomic Signature

- Objective: To confirm an AI-derived RNA expression signature using an alternative, clinically feasible platform.

- Materials: FFPE tumor sections from a retrospective cohort (N > 150, with balanced outcome classes); an RNA extraction kit; the NanoString nCounter platform with a custom-designed panel; and an HTG EdgeSeq processor.

- Method:

- Sample Preparation: Macrodissect FFPE sections to ensure >50% tumor content. Extract total RNA and quantify it using a fluorometric assay.

- Assay Execution: Aliquot 100 ng of RNA per sample. For NanoString: hybridize with the custom CodeSet (signature genes plus housekeeping genes) for 16 h at 65°C, then process on the nCounter Prep Station and Digital Analyzer. For HTG EdgeSeq: process according to the manufacturer's protocol for the PlexPRIME panel.

- Data Analysis: Normalize raw counts using housekeeping genes. Apply the original AI model's algorithm (e.g., weighted sum) to calculate a signature score for each sample.

- Statistical Correlation: Perform Spearman correlation analysis between signature scores derived from the discovery platform (e.g., RNA-seq) and the orthogonal verification platform.
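The data-analysis and correlation steps of Protocol 1 can be sketched as follows. Assumptions: housekeeping normalization scales each sample so that the geometric mean of its housekeeping-gene counts matches the cohort average (a common approach for nCounter data, although the vendor software offers its own options); the signature score is a weighted sum of log2 expression; and all gene names, weights, counts, and discovery-platform scores are hypothetical placeholders.

```python
import math
from statistics import mean

def housekeeping_normalize(counts, housekeepers):
    """counts: one dict (gene -> raw count) per sample. Scale each sample so its
    housekeeping geometric mean equals the cohort-average geometric mean."""
    geo = [math.exp(mean(math.log(s[h]) for h in housekeepers)) for s in counts]
    target = mean(geo)
    return [{g: c * target / gm for g, c in s.items()} for s, gm in zip(counts, geo)]

def signature_score(sample, weights):
    """Weighted sum of log2(normalized count + 1) over the signature genes."""
    return sum(w * math.log2(sample[g] + 1) for g, w in weights.items())

def ranks(x):
    """1-based ranks; tied values receive their average rank."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    r = [0.0] * len(x)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and x[order[j + 1]] == x[order[i]]:
            j += 1
        for k in range(i, j + 1):
            r[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return r

def spearman(x, y):
    """Spearman rho = Pearson correlation of the two rank vectors."""
    rx, ry = ranks(x), ranks(y)
    mx, my = mean(rx), mean(ry)
    num = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    den = math.sqrt(sum((a - mx) ** 2 for a in rx) * sum((b - my) ** 2 for b in ry))
    return num / den

# Hypothetical example: 4 samples measured on the orthogonal platform
hk = ["HK1", "HK2"]
weights = {"SIG1": 0.8, "SIG2": -0.5}  # hypothetical signature weights
nanostring = [
    {"HK1": 900, "HK2": 1100, "SIG1": 300, "SIG2": 80},
    {"HK1": 450, "HK2": 520, "SIG1": 60, "SIG2": 90},
    {"HK1": 1000, "HK2": 950, "SIG1": 500, "SIG2": 40},
    {"HK1": 700, "HK2": 800, "SIG1": 150, "SIG2": 120},
]
rnaseq_scores = [3.9, 1.2, 5.1, 2.4]  # hypothetical discovery-platform scores
nano_scores = [signature_score(s, weights) for s in housekeeping_normalize(nanostring, hk)]
print(f"Spearman rho = {spearman(rnaseq_scores, nano_scores):.2f}")
```

In practice, `scipy.stats.spearmanr` computes the same rank correlation together with a p-value; the hand-rolled version only makes the tie-averaged ranking explicit.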

Protocol 2: Retrospective Clinical Validation Using a Multiplex Immunoassay

- Objective: To validate the association of an AI-identified proteomic signature with response to immune checkpoint inhibitors (ICI).

- Materials: Pretreatment plasma/serum samples from a completed ICI trial cohort. Multiplex immunoassay platform (e.g., Olink Target 96, MSD U-PLEX).

- Method:

- Cohort Definition: Define blinded patient groups: responders (CR/PR per RECIST 1.1) and non-responders (SD/PD).

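- Assay Protocol: Dilute samples per kit specifications. For Olink, incubate samples with the pre-mixed pairs of oligonucleotide-labeled antibody probes (proximity extension assay); for MSD, incubate samples on the U-PLEX plates and detect with electrochemiluminescent detection antibodies. Perform plate washes exactly as specified by the manufacturer.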

- Readout & Normalization: Quantify protein levels (NPX, Olink's log2-scale Normalized Protein eXpression unit; pg/mL for MSD). Normalize using internal controls and median scaling.

- Analysis: Apply pre-specified signature algorithm. Use a Mann-Whitney U test to compare signature scores between responders and non-responders. Generate a Receiver Operating Characteristic (ROC) curve and calculate AUC with 95% confidence intervals.
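The analysis step of Protocol 2 can be sketched with the standard library. The Mann-Whitney U statistic and the ROC AUC are directly related (AUC = U / (n1 * n0)), so one pass over responder/non-responder pairs yields both. The CI method is an assumption, since the protocol does not name one: this sketch uses the Hanley-McNeil standard error, while DeLong's method or bootstrap resampling are common alternatives. The signature scores are hypothetical.

```python
import math

def mann_whitney_auc(responders, non_responders):
    """U = number of (responder, non-responder) pairs where the responder scores higher,
    with ties counted as 0.5; AUC = U / (n1 * n0)."""
    u = sum(1.0 if r > n else 0.5 if r == n else 0.0
            for r in responders for n in non_responders)
    return u, u / (len(responders) * len(non_responders))

def mann_whitney_p(u, n1, n0):
    """Two-sided p-value from the normal approximation (no tie or continuity correction)."""
    mu = n1 * n0 / 2
    sigma = math.sqrt(n1 * n0 * (n1 + n0 + 1) / 12)
    return math.erfc(abs((u - mu) / sigma) / math.sqrt(2))

def hanley_mcneil_ci(auc, n1, n0, z_crit=1.959964):
    """Approximate 95% CI for the AUC using the Hanley-McNeil standard error,
    clipped to [0, 1]. n1 = responders (positives), n0 = non-responders."""
    q1 = auc / (2 - auc)
    q2 = 2 * auc ** 2 / (1 + auc)
    var = (auc * (1 - auc) + (n1 - 1) * (q1 - auc ** 2)
           + (n0 - 1) * (q2 - auc ** 2)) / (n1 * n0)
    se = math.sqrt(var)
    return max(0.0, auc - z_crit * se), min(1.0, auc + z_crit * se)

responders = [2.1, 1.8, 2.5, 1.2, 2.9]          # hypothetical signature scores (CR/PR)
non_responders = [0.9, 1.5, 1.1, 0.4, 1.3, 2.0]  # hypothetical signature scores (SD/PD)
u, auc = mann_whitney_auc(responders, non_responders)
p = mann_whitney_p(u, len(responders), len(non_responders))
ci_low, ci_high = hanley_mcneil_ci(auc, len(responders), len(non_responders))
print(f"U={u}, AUC={auc:.3f} (95% CI {ci_low:.3f}-{ci_high:.3f}), p={p:.3f}")
```

With ties or small groups, an exact or tie-corrected test (for example `scipy.stats.mannwhitneyu`) is preferable to this normal approximation.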

Visualizing Pathways and Workflows

Diagram Title: AI Biomarker Translation Pipeline

Diagram Title: Predictive Signature in ICI Response Pathway

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Platforms for Biomarker Translation

| Category / Item | Example Product/Platform | Primary Function in Translation |
|---|---|---|
| Nucleic Acid Analysis | HTG EdgeSeq PlexPRIME | Streamlines biomarker panel validation from FFPE RNA with minimal hands-on time, ideal for rapid prototyping. |
| Multiplex Protein Analysis | Olink Target 96/384 | Provides high-specificity, high-sensitivity quantification of protein signatures in serum/plasma with validated antibodies. |
| Spatial Biology | NanoString GeoMx DSP / Visium by 10x Genomics | Enables validation of biomarker spatial context and tumor-microenvironment interactions within tissue sections. |
| Automated Image Analysis | HALO (Indica Labs) or QuPath | Quantifies biomarker expression from IHC or multiplex IF images, enabling reproducible scoring aligned with AI output. |
| Single-Cell Functional Proteomics | IsoPlexis Single-Cell Secretion | Profiles cytokine secretion from live single cells, linking AI-identified signatures to specific immune cell activities from limited clinical samples. |
| Reference Standards | NCI-CPTAC Reference Material | Provides benchmarked, multi-omics characterized samples for cross-platform assay calibration and harmonization. |
| Digital Biobank | BCR/TCGA Legacy / UK Biobank | Provides access to large, clinically annotated retrospective cohorts essential for the clinical validation phase. |

Navigating Challenges: Optimizing AI Models and Overcoming Pitfalls in Biomarker Discovery

Addressing Data Biases, Cohort Size Limitations, and Batch Effects

The pursuit of predictive biomarkers in oncology research, powered by artificial intelligence (AI), represents a paradigm shift toward personalized medicine. AI models promise to decipher complex patterns from multi-omics data, imaging, and electronic health records to identify signatures that predict treatment response, prognosis, or resistance. However, the translational validity of these discoveries is critically undermined by three pervasive technical challenges: data biases, cohort size limitations, and batch effects. This whitepaper provides an in-depth technical guide to identifying, quantifying, and mitigating these issues within the specific context of oncology biomarker research.

Deconstructing Data Biases in Oncology Datasets

Data bias refers to systematic distortions in data collection, annotation, or sampling that do not accurately reflect the target population. In oncology, these biases can lead to biomarkers that perform well only in narrow, non-representative subgroups.

- Selection Bias: Patients in academic cancer centers (where most genomic data is generated) often differ from the general population in socioeconomic status, stage at presentation, and access to care.

- Annotation/Label Bias: Inconsistencies in pathologic review (e.g., tumor cellularity scoring, PD-L1 scoring), RECIST criteria application, or outcome labeling (e.g., "responder" vs. "non-responder") introduce noise.

- Confounding Variables: Age, sex, ancestry, comorbidities, and prior treatments are often unevenly distributed and can be incorrectly learned as predictive signals by AI models.

Quantitative Assessment of Bias

The first step is to quantify potential bias within a dataset. The following table summarizes key metrics for assessment.

Table 1: Metrics for Quantifying Data Bias in Oncology Cohorts

| Bias Type | Metric | Calculation/Description | Interpretation |
|---|---|---|---|
| Representation Bias | Prevalence Disparity | (N_subgroup / N_total) - P_subgroup_in_population | Difference between cohort fraction and true population fraction. Ideal: ~0. |
| Label Noise | Inter-rater Agreement (e.g., for pathology) | Cohen's Kappa, Intraclass Correlation Coefficient (ICC) | Kappa/ICC < 0.4 indicates poor agreement, high label bias risk. |
| Confounding Strength | Standardized Mean Difference (SMD) between groups | SMD = (Mean₁ - Mean₂) / Pooled SD | An absolute SMD > 0.1 suggests meaningful imbalance in a confounder. |
| Feature-Covariate Association | Cramér's V (categorical), Correlation (continuous) | Measures association between a candidate biomarker feature and a demographic covariate (e.g., ancestry). | High association suggests feature may be confounded, not biologically predictive. |
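Three of the metrics in Table 1 can be computed directly, as in the minimal sketch below. Assumptions: the SMD uses the square root of the average of the two group variances as the pooled SD (a common choice in balance diagnostics; a degrees-of-freedom-weighted pooled SD is also used), and the cohort counts, ages, and rater labels are hypothetical.

```python
import math
from collections import Counter
from statistics import mean, variance

def prevalence_disparity(n_subgroup, n_total, population_fraction):
    """Cohort fraction of a subgroup minus its population fraction (ideal: ~0)."""
    return n_subgroup / n_total - population_fraction

def smd(group1, group2):
    """Standardized mean difference; pooled SD = sqrt of the mean of the two sample variances."""
    pooled_sd = math.sqrt((variance(group1) + variance(group2)) / 2)
    return (mean(group1) - mean(group2)) / pooled_sd

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: agreement between two raters on the same items, corrected for chance."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / n ** 2
    return (observed - expected) / (1 - expected)

# Hypothetical inputs
disparity = prevalence_disparity(n_subgroup=30, n_total=200, population_fraction=0.25)
ages_responders = [58, 61, 55, 67, 60]
ages_non_responders = [66, 70, 63, 72, 68]
age_smd = smd(ages_responders, ages_non_responders)
kappa = cohens_kappa(["PD-L1+", "PD-L1+", "PD-L1-", "PD-L1-"],
                     ["PD-L1+", "PD-L1-", "PD-L1-", "PD-L1-"])
print(f"disparity={disparity:+.2f}, SMD(age)={age_smd:.2f} "
      f"(imbalanced: {abs(age_smd) > 0.1}), kappa={kappa:.2f}")
```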

Mitigation Protocols

Protocol 1: Bias-Aware Data Splitting

- Purpose: To prevent data leakage of biased signals during training/validation.

- Method: Use stratified splitting not only on the label (e.g., response) but also on key confounding variables (e.g., institution, sequencing platform, ancestry). Advanced techniques include:

- GroupKFold: Splits data such that all samples from a particular "group" (e.g., a specific clinical site) are contained in either the train or test set, never both.

- Confounder-matched Validation Set: Use propensity score matching or similar to create a validation set where confounders are balanced across outcome classes.
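GroupKFold-style splitting can be sketched without dependencies, as below. In practice, scikit-learn's `sklearn.model_selection.GroupKFold` (and `StratifiedGroupKFold`, which additionally tries to preserve label proportions) provides this; the greedy size-balancing used here is a simplified illustration, and the site labels are hypothetical.

```python
from collections import Counter

def group_kfold(groups, n_splits):
    """Yield (train_idx, test_idx) pairs in which every group (e.g., a clinical site)
    falls entirely in train or entirely in test. Greedy balancing: largest groups
    first, each assigned to the fold with the fewest samples so far."""
    sizes = Counter(groups)
    if len(sizes) < n_splits:
        raise ValueError("need at least n_splits distinct groups")
    fold_of_group, fold_sizes = {}, [0] * n_splits
    for g, size in sorted(sizes.items(), key=lambda kv: kv[1], reverse=True):
        f = fold_sizes.index(min(fold_sizes))
        fold_of_group[g] = f
        fold_sizes[f] += size
    for f in range(n_splits):
        test = [i for i, g in enumerate(groups) if fold_of_group[g] == f]
        train = [i for i, g in enumerate(groups) if fold_of_group[g] != f]
        yield train, test

# Hypothetical cohort: the sequencing/collection site of each of 10 samples
sites = ["A", "A", "A", "B", "B", "C", "C", "C", "C", "D"]
splits = list(group_kfold(sites, n_splits=2))
for train, test in splits:
    print("test sites:", sorted({sites[i] for i in test}))
```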

Protocol 2: Algorithmic Debiasing

- Purpose: To reduce a model's dependence on spurious, biased correlations.

- Method:

- Adversarial Debiasing: Jointly train the primary biomarker prediction model and an adversarial network that tries to predict the confounding variable (e.g., institution) from the model's latent features. The primary model is penalized for enabling accurate adversarial prediction.

- Re-weighting: Assign higher weights to samples from underrepresented subgroups during training to balance their influence on the loss function.
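The re-weighting strategy can be sketched as inverse-frequency sample weights plugged into a weighted loss. The N / (K * n_g) weighting below is one common choice (an assumption; the text does not specify a scheme): it gives every subgroup the same total weight while keeping the mean weight at 1. Subgroup labels, outcomes, and predictions are hypothetical. Many scikit-learn estimators, including `RandomForestClassifier.fit`, accept such weights through a `sample_weight` argument.

```python
import math
from collections import Counter

def inverse_frequency_weights(subgroups):
    """Weight each sample by N / (K * n_g), where N = total samples, K = number of
    subgroups, and n_g = size of the sample's subgroup."""
    counts = Counter(subgroups)
    n, k = len(subgroups), len(counts)
    return [n / (k * counts[g]) for g in subgroups]

def weighted_bce(y_true, y_prob, weights, eps=1e-12):
    """Weighted binary cross-entropy, normalized by the total weight."""
    total = 0.0
    for y, p, w in zip(y_true, y_prob, weights):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -w * (y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / sum(weights)

# Hypothetical cohort: 6 samples from a well-represented subgroup, 2 from an underrepresented one
subgroups = ["majority"] * 6 + ["minority"] * 2
weights = inverse_frequency_weights(subgroups)
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_prob = [0.8, 0.3, 0.7, 0.2, 0.9, 0.4, 0.4, 0.6]
print([round(w, 3) for w in weights], round(weighted_bce(y_true, y_prob, weights), 3))
```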

Diagram 1: Adversarial debiasing workflow for biomarker models.

Overcoming Cohort Size Limitations

Oncology biomarker studies, especially for rare cancer subtypes or novel therapeutic responses, are often plagued by small sample sizes (N), leading to overfitted, non-reproducible models.

Strategies for Small-N Analysis

Table 2: Strategies to Mitigate Small Cohort Limitations

| Strategy | Description | Key Considerations in Oncology |

|---|---|---|

| Multi-modal Data Fusion | Integrate genomics, transcriptomics, digital pathology, radiomics to increase features per patient. | Data harmonization is critical. Use late-fusion architectures to handle missing modalities. |

| Transfer Learning & Pre-training | Initialize models on large public datasets (e.g., TCGA, Pan-cancer Atlas) before fine-tuning on small target cohort. | "Source-task" relevance matters. Pre-training on pan-cancer RNA-seq can boost performance on rare cancer RNA-seq. |