Akaike Information Criterion (AIC) in Biomedical Research: A Practical Guide to Model Selection for Scientists and Drug Developers


Abstract

This article provides a comprehensive guide to the Akaike Information Criterion (AIC) for model selection, specifically tailored for researchers and professionals in biomedical and clinical sciences. We begin by demystifying the foundational concepts of AIC, explaining its derivation from information theory (Kullback-Leibler divergence) and its core principle of balancing model fit with complexity. The guide then delves into the practical methodology for calculating and applying AIC, illustrated with examples relevant to pharmacokinetics, dose-response modeling, and biomarker discovery. We address common pitfalls in interpretation, strategies for model set selection, and the critical issue of small sample size correction (AICc). Finally, we compare AIC to alternative criteria like BIC and cross-validation, discussing their respective strengths and appropriate contexts in biomedical research to ensure robust, reproducible, and interpretable model-building.

What is AIC? Demystifying Information-Theoretic Model Selection for Biomedical Research

Application Notes: Akaike Information Criterion (AIC) in Pharmacometric Research

The Akaike Information Criterion (AIC) provides a rigorous framework for selecting among competing mathematical models that describe pharmacokinetic (PK) and pharmacodynamic (PD) relationships. It operates on the principle of parsimony, balancing model fit with complexity to minimize information loss. Unlike nested hypothesis testing with p-values, AIC allows for the direct comparison of non-nested models (e.g., one-compartment vs. two-compartment PK models, different Emax models) to identify the model best supported by the observed data.

Core Quantitative Comparison of Model Selection Criteria

Table 1: Key Model Selection Metrics Compared

| Criterion | Formula | Penalty for Complexity | Primary Use Case |

|---|---|---|---|

| AIC | -2 log(L) + 2K | Linear (2K) | Selecting the model that best predicts new data (asymptotically unbiased). |

| AICc | AIC + (2K(K+1))/(n-K-1) | Stronger for small n | Small sample size correction for AIC (use when n/K < ~40). |

| BIC | -2 log(L) + K log(n) | Logarithmic (K log(n)) | Selecting the "true" model, with stronger penalty than AIC as n increases. |

| p-value (LR Test) | χ² = -2 log(Lsimple / Lcomplex) | N/A (fixed α) | Comparing two nested models; rejects the simpler if fit improvement is statistically significant. |
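The formulas in Table 1 translate directly into a few helper functions. The sketch below is illustrative only: the function names and the example log-likelihood, K, and n are assumptions, not values from a real study.

```python
import math

def aic(log_lik: float, k: int) -> float:
    """AIC = -2 log(L) + 2K."""
    return -2.0 * log_lik + 2.0 * k

def aicc(log_lik: float, k: int, n: int) -> float:
    """AICc = AIC + 2K(K+1)/(n-K-1); only defined when n > K + 1."""
    if n - k - 1 <= 0:
        raise ValueError("AICc requires n > K + 1")
    return aic(log_lik, k) + (2.0 * k * (k + 1)) / (n - k - 1)

def bic(log_lik: float, k: int, n: int) -> float:
    """BIC = -2 log(L) + K log(n)."""
    return -2.0 * log_lik + k * math.log(n)

def lr_statistic(log_lik_simple: float, log_lik_complex: float) -> float:
    """Likelihood ratio statistic for two nested models."""
    return -2.0 * (log_lik_simple - log_lik_complex)

# Hypothetical fit: maximized log-likelihood, parameter count, sample size
log_lik, k, n = -125.6, 4, 40
print(f"AIC={aic(log_lik, k):.1f}  AICc={aicc(log_lik, k, n):.1f}  BIC={bic(log_lik, k, n):.1f}")
```

All three criteria share the same fit term and differ only in the penalty, which is why BIC exceeds AIC whenever log(n) > 2 (n of about 8 or more).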

Experimental Protocol: AIC-Guided PK/PD Model Development

Objective: To identify the optimal structural model for the concentration-effect relationship of a novel antihypertensive drug.

Data Collection: Collect dense serial plasma drug concentrations and corresponding diastolic blood pressure (DBP) measurements from a Phase I clinical trial (n=40 subjects).

Candidate Model Specification:

- Model 1: Linear model.

E = E0 + Slope * C

- Model 2: Emax model.

E = E0 - (Emax * C) / (EC50 + C)

- Model 3: Sigmoid Emax model.

E = E0 - (Emax * C^h) / (EC50^h + C^h)

- Model 4: Placebo model (null).

E = E0

Parameter Estimation: For each candidate model, estimate parameters (E0, Slope, Emax, EC50, h) using nonlinear mixed-effects modeling (e.g., NONMEM, Monolix) via maximum likelihood estimation. Record the maximized log-likelihood (log(L)) for each model.

AIC Calculation: Compute AIC for each model.

AIC = -2 log(L) + 2K, where K is the number of estimated parameters (including residual error). Compute AICc given the moderate sample size.

Model Ranking & Selection: Rank models from lowest to highest AICc. Calculate Akaike weights (w_i) to quantify the probability that model i is the best among the set.

ΔAICc_i = AICc_i - min(AICc)

w_i = exp(-ΔAICc_i / 2) / Σ_j exp(-ΔAICc_j / 2)

Model Averaging (Optional): If no single model is dominant (e.g., top weight < 0.9), generate final predictions by averaging parameter estimates or predictions from all models, weighted by their Akaike weights.
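The ranking and averaging steps can be sketched in a few lines. The AICc values and per-model predictions below are hypothetical placeholders, not results from the trial described in this protocol.

```python
import math

def akaike_weights(scores):
    """Convert AIC or AICc values into Akaike weights that sum to 1."""
    best = min(scores)
    rel = [math.exp(-(s - best) / 2.0) for s in scores]
    total = sum(rel)
    return [r / total for r in rel]

def model_averaged_prediction(predictions, weights):
    """Weighted average of per-model predictions for one new observation."""
    return sum(w * p for w, p in zip(weights, predictions))

# Hypothetical AICc values for the four candidate models
names = ["linear", "emax", "sigmoid_emax", "null"]
aicc_values = [312.4, 305.1, 306.0, 340.9]
weights = akaike_weights(aicc_values)

# Hypothetical predicted change in DBP (mmHg) at one concentration, per model
preds = [-8.0, -10.5, -10.1, 0.0]
avg = model_averaged_prediction(preds, weights)

for name, w in zip(names, weights):
    print(f"{name:13s} weight={w:.3f}")
print(f"model-averaged prediction: {avg:.2f} mmHg")
```

Subtracting the minimum before exponentiating keeps the calculation numerically stable when AICc values are large.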

Protocol for Simulating and Validating AIC Performance

Objective: To empirically compare the predictive accuracy of AIC-based selection with that of p-value-based stepwise regression.

True Model Simulation: Simulate a dataset (n=100) where the true relationship between five biomarkers (X1-X5) and a clinical endpoint (Y) is known:

Y = 2 + 0.8*X1 + 0.5*X3 + ε, where X2, X4, and X5 are irrelevant noise variables.

Candidate Model Fitting:

- Fit all possible linear regression models from the five covariates (31 models).

- Perform forward stepwise regression using a p-value threshold of 0.05 for entry.

Performance Assessment:

- Generate a new, independent validation dataset from the same true model.

- For the AIC-best model and the stepwise-selected model, calculate the Mean Squared Prediction Error (MSPE) on the validation set.

Replication: Repeat the simulation-validation process 1000 times. Summarize the frequency with which each method recovers the true model (X1, X3 only) and compare the distribution of MSPEs.
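A minimal sketch of this simulation follows. Several choices are assumptions made for illustration: standard-normal covariates, unit-variance noise, a partial F-test as the entry criterion, and 200 replications instead of 1000 to keep run time short. All helper names are hypothetical.

```python
import itertools
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

def simulate(n=100):
    """Draw X1..X5 and Y = 2 + 0.8*X1 + 0.5*X3 + noise."""
    X = rng.normal(size=(n, 5))
    y = 2.0 + 0.8 * X[:, 0] + 0.5 * X[:, 2] + rng.normal(size=n)
    return X, y

def design(X, cols):
    """Intercept plus the selected covariate columns."""
    return np.column_stack([np.ones(X.shape[0])] + [X[:, c] for c in cols])

def rss(X, y, cols):
    """Residual sum of squares and coefficients of an OLS fit."""
    A = design(X, cols)
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return float(np.sum((y - A @ beta) ** 2)), beta

def gaussian_aic(rss_value, n, n_coef):
    # Normal-error AIC up to a constant shared by all models;
    # K counts the regression coefficients plus the residual variance.
    return n * np.log(rss_value / n) + 2 * (n_coef + 1)

def select_by_aic(X, y):
    n = len(y)
    subsets = [c for r in range(1, 6) for c in itertools.combinations(range(5), r)]
    return min(subsets, key=lambda c: gaussian_aic(rss(X, y, c)[0], n, len(c) + 1))

def select_by_forward_stepwise(X, y, alpha=0.05):
    n, chosen = len(y), []
    while len(chosen) < 5:
        rss0 = rss(X, y, chosen)[0]
        best = None
        for j in [j for j in range(5) if j not in chosen]:
            rss1 = rss(X, y, chosen + [j])[0]
            df = n - (len(chosen) + 2)  # residual df of the larger model
            p = stats.f.sf((rss0 - rss1) / (rss1 / df), 1, df)
            if p < alpha and (best is None or p < best[1]):
                best = (j, p)
        if best is None:
            break
        chosen.append(best[0])
    return tuple(sorted(chosen))

def mspe(X, y, X_new, y_new, cols):
    beta = rss(X, y, cols)[1]
    return float(np.mean((y_new - design(X_new, cols) @ beta) ** 2))

n_rep = 200  # the protocol specifies 1000; reduced here for speed
hits = {"aic": 0, "stepwise": 0}
errors = {"aic": [], "stepwise": []}
for _ in range(n_rep):
    X, y = simulate()
    X_new, y_new = simulate()
    picks = {"aic": select_by_aic(X, y), "stepwise": select_by_forward_stepwise(X, y)}
    for name, cols in picks.items():
        hits[name] += int(cols == (0, 2))  # columns 0 and 2 are X1 and X3
        errors[name].append(mspe(X, y, X_new, y_new, cols))

for name in hits:
    print(f"{name:9s} true-model rate={hits[name] / n_rep:.2f}  mean MSPE={np.mean(errors[name]):.3f}")
```

The two summaries answer different questions: the recovery rate measures identification of the true covariate set, while MSPE measures predictive accuracy, and a method can do well on one without leading on the other.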

The Scientist's Toolkit: Essential Reagents & Software

Table 2: Key Research Reagent Solutions for Model Selection Studies

| Item / Software | Function in Model Selection Research |

|---|---|

| Nonlinear Mixed-Effects Software (NONMEM, Monolix, Phoenix NLME) | Industry-standard platforms for fitting complex PK/PD models and obtaining maximum likelihood estimates required for AIC calculation. |

| Statistical Programming Environment (R, Python with SciPy/statsmodels) | Essential for custom calculation of AIC/AICc/BIC, model averaging, and running simulation-validation studies. |

| Clinical PK/PD Dataset | A well-characterized dataset with drug exposure, biomarker, and clinical response data to serve as the empirical foundation for model comparison. |

| High-Performance Computing (HPC) Cluster or Cloud Instance | For computationally intensive tasks like bootstrapping, simulation studies, or fitting large model ensembles. |

| Model Averaging Scripts (Custom R/Python code) | To implement multimodel inference, combining predictions from multiple high-ranking models based on Akaike weights. |

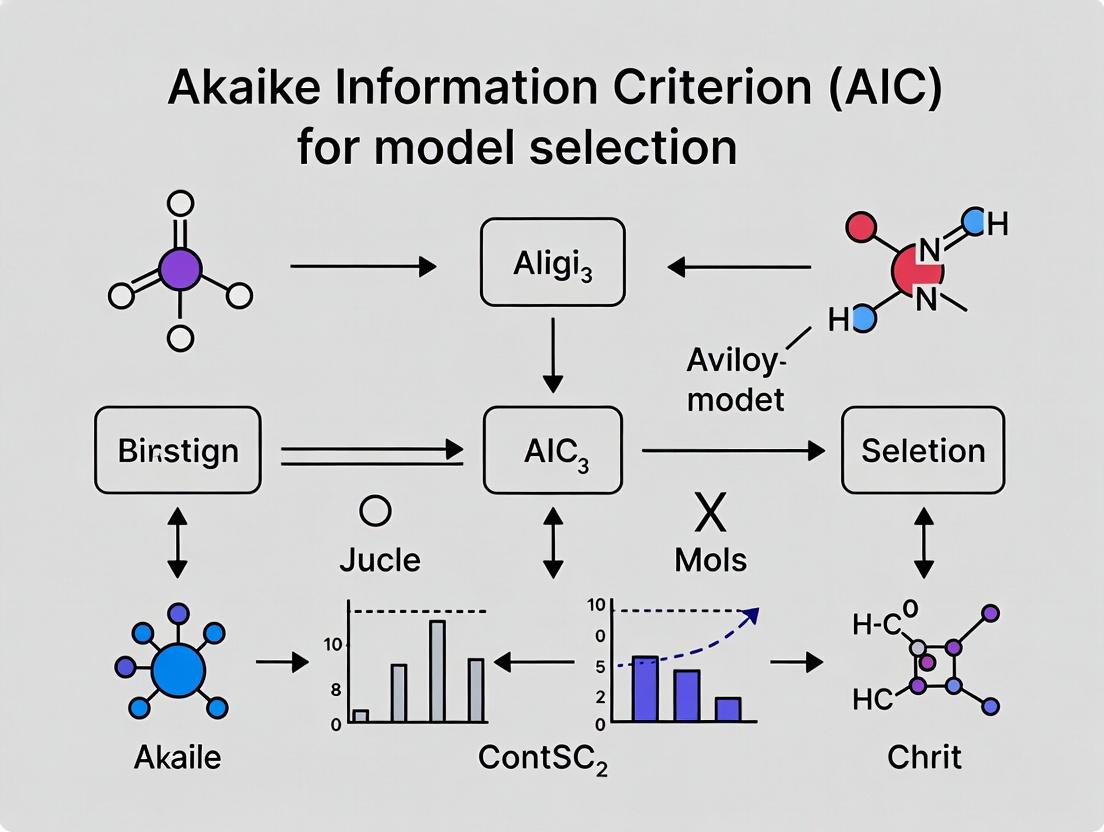

Visualization: The AIC-Based Model Selection Workflow

Title: AIC Model Selection and Multimodel Inference Workflow

Visualization: Information-Theoretic vs. Null Hypothesis Testing Paradigms

Title: NHST vs. Information-Theoretic Model Selection Approach

Theoretical Framework

Quantitative Decomposition of Prediction Error

The expected prediction error (EPE) for a new observation at point x0 decomposes into squared bias, variance, and irreducible error, which is the basis of the bias-variance tradeoff. This decomposition helps explain the role of the Akaike Information Criterion (AIC) in model selection, which aims to estimate the relative information loss of candidate models.

Table 1: Bias-Variance Decomposition of Mean Squared Error (MSE)

| Error Component | Mathematical Formula | Description in Model Selection Context |

|---|---|---|

| Bias² | [E(ŷ) - f(x)]² | Error from overly simplistic model assumptions. High bias indicates underfitting. |

| Variance | E[(ŷ - E(ŷ))²] | Error from excessive sensitivity to training data fluctuations. High variance indicates overfitting. |

| Irreducible Error | σ² = Var(ε) | Noise inherent to the data generation process. Cannot be reduced by any model. |

| Total Expected MSE | Bias² + Variance + Irreducible Error | The target quantity minimized during optimal model selection. |
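The decomposition in the table can be checked numerically. The sketch below assumes a hypothetical true function (a sine curve), a noise SD of 0.3, and polynomial candidates of degree 1, 3, and 7; none of these come from a real dataset.

```python
import numpy as np

rng = np.random.default_rng(1)
sigma = 0.3                          # noise SD (assumed)
x_train = np.linspace(0.0, 1.0, 25)  # fixed training design
x0 = 0.3                             # point at which the error is decomposed

def true_f(x):
    """Hypothetical true relationship f(x)."""
    return np.sin(2 * np.pi * x)

def decompose(degree, n_sim=2000):
    """Monte Carlo estimate of bias^2 and variance of the prediction at x0."""
    preds = np.empty(n_sim)
    for i in range(n_sim):
        y = true_f(x_train) + rng.normal(scale=sigma, size=x_train.size)
        preds[i] = np.polyval(np.polyfit(x_train, y, degree), x0)
    bias_sq = float((preds.mean() - true_f(x0)) ** 2)
    variance = float(preds.var())
    return {
        "bias_sq": bias_sq,
        "variance": variance,
        "irreducible": sigma ** 2,
        "total": bias_sq + variance + sigma ** 2,
    }

results = {d: decompose(d) for d in (1, 3, 7)}
for d, r in results.items():
    print(f"degree {d}: bias^2={r['bias_sq']:.4f}  variance={r['variance']:.4f}  total={r['total']:.4f}")
```

In this setup the straight line carries most of its error as bias and the degree-7 polynomial carries more of it as variance, which is the tradeoff the complexity penalty in AIC is meant to manage.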

AIC as an Estimator of Relative K-L Information Loss

The AIC provides a formal, information-theoretic framework for navigating the bias-variance tradeoff. It is calculated as: AIC = 2k - 2ln(L̂), where k is the number of estimated parameters and L̂ is the maximized value of the model's likelihood function. The model with the lowest AIC is preferred, as it balances goodness-of-fit (the -2ln(L̂) term, which decreases as fit improves) against the complexity penalty (2k).

Application Notes & Experimental Protocols

Protocol: Quantitative Structure-Activity Relationship (QSAR) Modeling in Drug Discovery

This protocol outlines the use of the bias-variance framework and AIC for selecting predictive models of biological activity.

Objective: To build a predictive QSAR model for compound potency (e.g., IC50) against a target protein while avoiding overfitting to a limited dataset.

Materials & Workflow:

- Dataset: Curated set of N compounds with measured bioactivity and calculated molecular descriptors (e.g., logP, molecular weight, topological indices).

- Model Candidates: Define a set of candidate models of increasing complexity (e.g., Linear Regression, Polynomial Regression (degree 2, 3), Random Forest, Support Vector Machine).

- Data Splitting: Split data into training (e.g., 70%) and validation/test (30%) sets. For robust assessment, implement k-fold cross-validation (k=5 or 10) on the training set.

- Model Fitting & Evaluation: Fit each model on the training folds. For each, calculate:

- Training MSE (estimates goodness-of-fit).

- Validation MSE (estimates generalization error).

- AIC (or AICc for small N).

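- Selection: Identify the model with minimum AIC (or AICc) and a low validation MSE. AIC requires a likelihood and a parameter count, so for algorithmic candidates such as Random Forest or Support Vector Machine it can only be approximated (e.g., through an effective number of parameters); give validation MSE more weight for those models.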

Table 2: Simulated QSAR Model Comparison Output

| Model Type | No. of Parameters (k) | Training MSE | Validation MSE | AIC Score | Selected (Y/N) | Rationale |

|---|---|---|---|---|---|---|

| Linear | 5 | 1.45 | 1.52 | 210.5 | N | High bias, underfits complex relationships. |

| Polynomial (deg=2) | 15 | 0.89 | 0.93 | 187.2 | Y | Optimal tradeoff; lowest AIC & stable validation error. |

| Polynomial (deg=5) | 55 | 0.21 | 1.87 | 235.8 | N | Very low training MSE but high validation MSE (overfitting). |

| Random Forest | (Variable) | 0.15 | 1.05 | 192.1 | N | Good validation, but AIC penalizes effective complexity. |

Protocol: Dose-Response Curve Fitting for IC50 Determination

Accurate estimation of half-maximal inhibitory concentration (IC50) relies on selecting an appropriate curve model that is not overly sensitive to experimental noise.

Objective: To fit a robust dose-response model to bioassay data and reliably estimate IC50 and Hill slope.

Procedure:

- Data Acquisition: Measure response (% inhibition) across 8-12 concentrations of compound, performed in technical triplicates.

- Candidate Models: Fit standard four-parameter logistic (4PL: Bottom, Top, IC50, HillSlope) and three-parameter logistic (3PL: fixed Bottom=0) models.

- Calculation: Compute log-likelihood and AIC for each fitted model. AICc (corrected for small sample size) is strongly recommended.

- AICc = AIC + (2k² + 2k) / (n - k - 1), where n is the number of data points.

- Model Selection: Choose the model with the lower AICc score. A ΔAICc > 2 suggests meaningful support for the better model.

- Reporting: Report final IC50 estimate with confidence intervals from the selected model.
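The procedure above can be sketched with SciPy's `curve_fit`. The data are simulated, and the parameter bounds, starting values, noise level, and the normal-error log-likelihood computed from the residual sum of squares are all assumptions of this example rather than part of the protocol.

```python
import numpy as np
from scipy.optimize import curve_fit

def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic: % inhibition rising from bottom to top."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)

def three_pl(conc, top, ic50, hill):
    """Three-parameter logistic with Bottom fixed at 0."""
    return four_pl(conc, 0.0, top, ic50, hill)

def aicc_least_squares(y, y_hat, n_curve_params):
    """AICc under normal, constant-variance errors; k includes the variance."""
    n = y.size
    k = n_curve_params + 1
    rss = float(np.sum((y - y_hat) ** 2))
    log_lik = -0.5 * n * (np.log(2 * np.pi * rss / n) + 1.0)
    aic = -2.0 * log_lik + 2.0 * k
    return aic + (2.0 * k ** 2 + 2.0 * k) / (n - k - 1)

# Simulated assay: 10 concentrations (hypothetical, in uM), technical triplicates
rng = np.random.default_rng(42)
conc = np.repeat(np.logspace(-3, 2, 10), 3)
resp = four_pl(conc, 0.0, 100.0, 0.5, 1.2) + rng.normal(scale=4.0, size=conc.size)

p4, _ = curve_fit(four_pl, conc, resp, p0=[0.0, 100.0, 1.0, 1.0],
                  bounds=([-20.0, 50.0, 1e-4, 0.1], [20.0, 150.0, 100.0, 5.0]))
p3, _ = curve_fit(three_pl, conc, resp, p0=[100.0, 1.0, 1.0],
                  bounds=([50.0, 1e-4, 0.1], [150.0, 100.0, 5.0]))

scores = {
    "4PL": aicc_least_squares(resp, four_pl(conc, *p4), 4),
    "3PL": aicc_least_squares(resp, three_pl(conc, *p3), 3),
}
best = min(scores, key=scores.get)
print({m: round(s, 1) for m, s in scores.items()}, "-> lower AICc:", best)
print(f"4PL IC50 estimate: {p4[2]:.2f}")
```

Technical triplicates are treated as independent observations here, which may overstate n; averaging replicates per concentration before fitting is a more conservative alternative.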

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Featured Experiments

| Item / Reagent | Function in Context of Bias-Variance Tradeoff |

|---|---|

| Statistical Software (R/Python) | Provides packages (statsmodels, scikit-learn, drc in R) for fitting multiple models, calculating likelihoods, AIC, and cross-validation MSE. |

| High-Content Screening Assay Kits | Generate robust, quantitative dose-response data (n large) for reliable model fitting and variance estimation. |

| Chemical Descriptor Software | Calculates diverse molecular features as potential predictors, enabling exploration of model complexity. |

| Curated Public Bioactivity Datasets | Provide large, high-quality data (e.g., ChEMBL) essential for training complex models without severe overfitting. |

Visualization of Core Concepts

Diagram: The Bias-Variance Tradeoff Relationship

Bias-Variance Tradeoff & AIC Role

Diagram: Model Selection Workflow Using AIC

AIC-Based Model Selection Protocol

Within the broader thesis on the Akaike Information Criterion (AIC) for model selection research, understanding its mathematical genesis is paramount. AIC is fundamentally rooted in information theory, specifically in the Kullback-Leibler (KL) information or divergence. This section details the derivation of AIC from KL information, providing the theoretical foundation for its application in model selection across scientific fields, including computational biology and drug development.

Core Theoretical Derivation

The Kullback-Leibler information measures the discrepancy between a true probability distribution, g(x), and an approximating model, f(x|θ). For continuous distributions:

KL(g; f(·|θ)) = ∫ g(x) log( g(x) / f(x|θ) ) dx = E_g[log g(x)] - E_g[log f(x|θ)]

Since E_g[log g(x)] is constant across models, comparative model selection focuses on the expected log-likelihood, E_g[log f(x|θ)]. Akaike's critical step was to find an estimator of this quantity. He considered the maximized log-likelihood, log f(x|θ̂), where θ̂ is the Maximum Likelihood Estimate (MLE), but recognized it as a biased upward estimate of the target expected log-likelihood. The bias adjustment, under regularity conditions, is asymptotically equal to the number of estimable parameters (K) in the model.

This leads to the celebrated formula: AIC = -2 log(L(θ̂|data)) + 2K

where L(θ̂|data) is the maximized likelihood of the model. The model with the minimum AIC value is preferred.
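The claim that the maximized log-likelihood overestimates the expected log-likelihood by roughly K can be checked by simulation. The sketch below assumes the simplest case: the truth is a standard normal distribution and the fitted model is a normal with unknown mean and variance (K = 2). The sample size and replication count are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(7)
n, n_rep = 200, 20000
K = 2  # estimated parameters: mean and variance

# Truth g = N(0, 1); each row is one dataset of size n
x = rng.normal(loc=0.0, scale=1.0, size=(n_rep, n))
mu_hat = x.mean(axis=1)
var_hat = x.var(axis=1)  # maximum likelihood estimate (divides by n)

# Maximized in-sample log-likelihood of each dataset
loglik_in = -0.5 * n * (np.log(2 * np.pi * var_hat) + 1.0)

# Expected log-likelihood of n new observations from g under the fitted
# parameters; for y ~ N(0, 1), E[(y - mu_hat)^2] = 1 + mu_hat^2
loglik_expected = -0.5 * n * (np.log(2 * np.pi * var_hat) + (1.0 + mu_hat ** 2) / var_hat)

optimism = float(np.mean(loglik_in - loglik_expected))
print(f"average upward bias of the maximized log-likelihood: {optimism:.2f} (K = {K})")
```

Multiplying this bias by 2 gives the 2K penalty in the AIC formula. At finite n the average bias sits slightly above K, which is the gap the AICc correction addresses.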

Table 1: Key Quantitative Components in AIC Derivation from KL Information

| Component | Mathematical Expression | Role in Derivation |

|---|---|---|

| KL Divergence | KL(g;f) = ∫ g log(g/f) dx | Measures information loss when model f approximates truth g. |

| Expected Log-Likelihood | E_g[log f(x\|θ)] | The target quantity to be estimated for model comparison. |

| Maximized Log-Likelihood | log f(x\|θ̂) | Biased estimator of the expected log-likelihood. |

| Asymptotic Bias | K (number of parameters) | Critical correction term derived by Akaike. |

| AIC Form | -2 log(L(θ̂)) + 2K | Final criterion for model selection; smaller is better. |

Diagram 1: Logical flow from KL information to AIC formulation.

Experimental Protocols for AIC Application in Model Selection

Protocol 1: Comparative Model Selection in Dose-Response Analysis

Objective: To select the best mechanistic model describing the relationship between drug concentration and cellular response (e.g., viability) from a set of candidate models (e.g., Linear, Emax, Sigmoid Emax, Logistic).

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Collection: For N independent concentrations, measure the corresponding response. Include appropriate replicates and controls.

- Candidate Model Specification: Define R rival parametric models. Each model f_r(x|θ_r) has K_r estimable parameters.

- Parameter Estimation: For each model r, compute the Maximum Likelihood Estimates (MLE) θ̂_r by minimizing the appropriate negative log-likelihood function (e.g., based on normal or binomial error).

- Compute AIC Values: For each model, calculate AIC_r = -2 log(L(θ̂_r | data)) + 2K_r. If sample size n is small relative to K (e.g., n/K < 40), use the corrected AICc: AICc_r = AIC_r + (2K_r(K_r+1))/(n - K_r - 1).

- Rank Models: Compute AIC differences: Δ_r = AIC_r - min(AIC).

- Model Weighting: Calculate Akaike weights: w_r = exp(-Δ_r/2) / Σ(exp(-Δ_i/2)). These weights represent the probability that model r is the best, given the data and model set.

- Model Averaging (Optional): For prediction, use the weighted average across all models, especially if no single model has w_r > 0.9.

Table 2: Example AIC Output for Dose-Response Models

| Model | K | Log-Likelihood | AIC | ΔAIC | Akaike Weight (w) |

|---|---|---|---|---|---|

| Sigmoid Emax | 4 | -125.6 | 259.2 | 0.0 | 0.755 |

| Emax | 3 | -128.9 | 263.8 | 4.6 | 0.076 |

| Logistic | 4 | -127.1 | 262.2 | 3.0 | 0.169 |

| Linear | 2 | -135.4 | 274.8 | 15.6 | 0.000 |
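The AIC, ΔAIC, and Akaike weight columns of a table like Table 2 follow directly from K and the log-likelihood. The sketch below recomputes them from the K and log-likelihood values listed in the table; the variable names are illustrative.

```python
import math

# name: (K, maximized log-likelihood), taken from Table 2
models = {
    "Sigmoid Emax": (4, -125.6),
    "Emax": (3, -128.9),
    "Logistic": (4, -127.1),
    "Linear": (2, -135.4),
}

aic = {name: -2.0 * ll + 2.0 * k for name, (k, ll) in models.items()}
best = min(aic.values())
delta = {name: value - best for name, value in aic.items()}
rel_lik = {name: math.exp(-d / 2.0) for name, d in delta.items()}
total = sum(rel_lik.values())
weights = {name: r / total for name, r in rel_lik.items()}

# Print models from best to worst supported
for name in sorted(aic, key=aic.get):
    print(f"{name:13s} AIC={aic[name]:6.1f}  dAIC={delta[name]:5.1f}  w={weights[name]:.3f}")
```

Because the weights are normalized over the candidate set, they always sum to 1 and change if a model is added to or removed from the set.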

Diagram 2: Protocol for AIC-based dose-response model selection.

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Pharmacodynamic Modeling

| Item / Solution | Function in Model Selection Context |

|---|---|

| Statistical Software (R/Python) | Platforms with packages (e.g., drc, statsmodels, scipy.optimize) for MLE computation, model fitting, and AIC calculation. |

| Optimization Algorithms | Numerical methods (e.g., Nelder-Mead, BFGS) to find parameter values (θ̂) that maximize the log-likelihood. |

| Model Specification Library | Pre-defined mathematical functions (Emax, Hill, etc.) representing biological mechanisms for candidate set generation. |

| Data Visualization Tools | Software (e.g., ggplot2, matplotlib) to graphically assess model fits and present AIC results. |

| Information-Theoretic Metrics | Computed values (AIC, AICc, BIC) serving as the objective criterion for selecting among rival hypotheses. |

Within a broader thesis on model selection research, the Akaike Information Criterion (AIC) stands as a cornerstone for balancing model fit and complexity. The principle that a lower AIC value indicates a preferable model is not arbitrary but is rooted in information theory, specifically in estimating the Kullback-Leibler divergence—a measure of information lost when a candidate model approximates the true, unknown data-generating process. This application note details the interpretation, calculation, and practical application of AIC for researchers and drug development professionals, providing protocols for robust model comparison.

Foundational Theory & Quantitative Data

The AIC is calculated as: AIC = 2k - 2ln(L), where k is the number of estimated parameters and L is the maximum value of the model's likelihood function. The "lower is better" rule arises because AIC estimates relative information loss; the model with the lowest AIC is estimated to lose the least information.

Table 1: AIC Comparison Scenarios & Interpretation

| Scenario | Model A AIC | Model B AIC | ΔAIC (A - B) | Interpretation Guidance |

|---|---|---|---|---|

| Nested Models (Linear vs. Quadratic) | 210.5 | 205.2 | 5.3 | Model B (Quadratic) is better supported. A difference above 4 means Model A has considerably less support. |

| Non-Nested Models (Different Covariates) | 455.7 | 456.1 | -0.4 | Essentially equivalent support. Both models describe data similarly well; choose the simpler or more biologically plausible. |

| High-Parameter Overfit Model | 188.2 | 201.5 | -13.3 | Despite a better (lower) AIC, Model A may be overfit if k is very high relative to sample size. Consider AICc (corrected for small sample size). |

| Pharmacokinetic (PK) Models | -40.2 | -35.8 | -4.4 | Support for the lower AIC PK model (e.g., two-compartment vs. one-compartment). Preferable for predicting drug concentration time courses. |

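Note: The rule of thumb applies to differences measured from the best model in the set, Δi = AICi - AICmin; with only two models this is the absolute value of the A - B difference in the table. Δi < 2 = substantial support; 4-7 = considerably less support; >10 = essentially no support.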
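The rule of thumb can be written as a small lookup. The function name is hypothetical, and differences that fall between the stated bands (2 to 4 and 7 to 10) are reported as such rather than forced into a category.

```python
def support_category(delta_aic: float) -> str:
    """Map a difference from the best model (delta >= 0) to the rule of thumb."""
    if delta_aic < 0:
        raise ValueError("delta must be measured from the minimum-AIC model")
    if delta_aic < 2:
        return "substantial support"
    if 4 <= delta_aic <= 7:
        return "considerably less support"
    if delta_aic > 10:
        return "essentially no support"
    return "between rule-of-thumb bands"

# First scenario of the table: Model A = 210.5, Model B = 205.2
aic_values = {"A (linear)": 210.5, "B (quadratic)": 205.2}
best = min(aic_values.values())
for name, value in aic_values.items():
    print(f"{name}: delta={value - best:.1f} -> {support_category(value - best)}")
```

The best model always has a difference of 0 and therefore falls in the first band by construction.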

Experimental Protocol: AIC-Based Model Selection Workflow

This protocol outlines a standardized procedure for comparing statistical models using AIC in a research setting, such as dose-response analysis or biomarker identification.

Protocol Title: Sequential Model Fitting and Comparison Using Akaike Information Criterion

Objective: To select the best approximating model from a set of candidates for a given dataset while penalizing overparameterization.

Materials & Software: Statistical software (R, Python with statsmodels/scipy, SAS, GraphPad Prism), dataset, predefined candidate models.

Procedure:

Define the Scientific Question & Candidate Models:

- Clearly state the analysis goal (e.g., "Identify the relationship between drug dose and efficacy response").

- A priori, specify a set of candidate models based on biological plausibility and theoretical knowledge. Example set: Null (intercept only), Linear, Logistic (Emax), Quadratic.

Model Fitting & Parameter Estimation:

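- For each candidate model, use the appropriate maximum likelihood estimation (MLE) procedure. Under normally distributed, constant-variance errors, least-squares fits coincide with MLE (e.g., ordinary least squares for linear models, iterative non-linear least squares for logistic models).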

- Ensure convergence for iterative fitting algorithms. Record the maximized log-likelihood (ln(L)) and the number of estimated parameters (k) for each model.

Calculate AIC Values:

- Compute AIC for each model i: AIC_i = 2k_i - 2ln(L_i).

- Small Sample Correction (AICc): If n/k < 40, where n is the sample size and k is the number of parameters in the largest candidate model, use AICc: AICc_i = AIC_i + (2k_i(k_i+1)) / (n - k_i - 1). AICc converges to AIC as n increases.

Rank Models and Calculate Evidence:

- Rank all models from lowest to highest AIC (or AICc). Identify the model with the minimum AIC (AIC_min).

- Compute the AIC differences: Δ_i = AIC_i - AIC_min for all models.

- Calculate Akaike Weights (w_i): w_i = exp(-Δ_i/2) / Σ_r exp(-Δ_r/2). These weights represent the probability that model i is the best among the set.

Model Averaging (Optional but Recommended):

- If no single model is overwhelmingly superior (e.g., wmax < 0.9), use model averaging for inference.

- For parameter estimation (e.g., EC50), compute a weighted average across all models using the Akaike weights.

Validation:

- Perform residual analysis and diagnostic checks on the top model(s) to ensure assumptions are met.

- Where possible, use cross-validation to assess the predictive performance of the AIC-selected model.
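The workflow can be sketched end to end for models that are linear in their parameters. The dose-response data below are simulated from a hypothetical quadratic relationship, the candidate set is limited to null, linear, and quadratic models to keep the example short, and normal errors are assumed so that least squares gives the maximum likelihood fit.

```python
import numpy as np

rng = np.random.default_rng(3)

# Simulated data: 6 dose levels, 5 replicates each (hypothetical units)
dose = np.repeat(np.array([0.0, 1.0, 2.0, 4.0, 8.0, 16.0]), 5)
response = 5.0 + 3.0 * dose - 0.12 * dose ** 2 + rng.normal(scale=2.0, size=dose.size)

# Step 1: candidate set, specified before looking at the fits
designs = {
    "null": np.ones((dose.size, 1)),
    "linear": np.column_stack([np.ones_like(dose), dose]),
    "quadratic": np.column_stack([np.ones_like(dose), dose, dose ** 2]),
}

def fit_gaussian(A, y):
    """Steps 2-3: least-squares fit, log-likelihood, and AICc."""
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    n = y.size
    k = A.shape[1] + 1  # coefficients plus residual variance
    rss = float(np.sum((y - A @ beta) ** 2))
    log_lik = -0.5 * n * (np.log(2 * np.pi * rss / n) + 1.0)
    aic = 2.0 * k - 2.0 * log_lik
    return {"k": k, "log_lik": log_lik, "aicc": aic + 2.0 * k * (k + 1) / (n - k - 1)}

fits = {name: fit_gaussian(A, response) for name, A in designs.items()}

# Step 4: differences from the best model and Akaike weights
best = min(f["aicc"] for f in fits.values())
for f in fits.values():
    f["delta"] = f["aicc"] - best
total = sum(np.exp(-f["delta"] / 2.0) for f in fits.values())
for f in fits.values():
    f["weight"] = float(np.exp(-f["delta"] / 2.0) / total)

ranking = sorted(fits, key=lambda m: fits[m]["aicc"])
for name in ranking:
    f = fits[name]
    print(f"{name:10s} k={f['k']}  AICc={f['aicc']:.1f}  delta={f['delta']:.1f}  w={f['weight']:.3f}")
```

Non-linear candidates such as an Emax model would enter the same table once fitted with a non-linear least-squares routine; only the fitting step changes.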

Diagram: AIC Model Selection Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Software for Model Selection Studies

| Item/Category | Example/Product | Function in AIC-Based Research |

|---|---|---|

| Statistical Software | R (stats, AICcmodavg packages), Python (statsmodels, scipy), SAS (PROC NLMIXED), GraphPad Prism | Provides the computational engine for maximum likelihood fitting, AIC calculation, and model comparison procedures. |

| Non-Linear Fitting Tool | R nls() function, Python curve_fit() (SciPy), SigmaPlot | Essential for fitting complex pharmacological (e.g., Emax, PK) and biological growth models to obtain log-likelihoods. |

| Model Selection Suite | R MuMIn package, Stata estat ic | Automates the calculation of AICc, ΔAIC, and Akaike weights across a broad set of candidate models. |

| Data Simulation Tool | R MASS package (mvrnorm), Python numpy.random | Allows for power analysis and validation of AIC performance under known "true" models, crucial for method development. |

| Visualization Library | ggplot2 (R), matplotlib/seaborn (Python) | Creates clear plots of model fits, residual diagnostics, and AIC weight comparison bar charts for publication. |

Advanced Considerations & Visualization of Conceptual Relationships

The principle of parsimony, central to AIC, involves a trade-off. The diagram below illustrates the logical relationship between model complexity, goodness-of-fit, and information loss.

Diagram: The AIC Parsimony Trade-Off Concept

In the context of model selection research, "lower AIC is better" is a succinct summary of a rigorous approach to selecting a model that best approximates reality without unnecessary complexity. By following standardized protocols, utilizing appropriate tools, and interpreting AIC differences (ΔAIC) and weights quantitatively, researchers in drug development and basic science can make robust, defensible decisions in pharmacokinetic modeling, dose-response analysis, and biomarker discovery.

Key Assumptions and Conceptual Prerequisites for Using AIC

The Akaike Information Criterion (AIC) is a cornerstone of modern statistical model selection, providing an estimator for out-of-sample prediction error. Its application within pharmacological and biomedical research, from dose-response modeling to biomarker discovery, requires strict adherence to foundational assumptions. This document outlines these prerequisites, enabling valid inference in complex research settings.

Core Conceptual Prerequisites

AIC is derived from information theory, specifically the Kullback-Leibler (KL) divergence. Its valid application is contingent upon several high-level conceptual prerequisites.

Table 1: Conceptual Prerequisites for AIC Application

| Prerequisite | Description | Implication for Research |

|---|---|---|

| Focus on Prediction | AIC estimates relative KL information loss, favoring models with better expected predictive accuracy. | Not suitable for research focused solely on parameter inference or causal identification without predictive intent. |

| Set of Candidate Models | Requires a pre-defined, finite set of models. AIC selects the best among them, not an absolute "true" model. | Model set must be specified a priori based on scientific theory to avoid data dredging. |

| "True Model" Complexity | Assumes the data-generating process (true model) is complex and not contained within the candidate set. | In practice, all models are approximations. AIC helps find the best approximating model. |

| Large Sample Basis | AIC is an asymptotic (large-sample) result. Corrections (e.g., AICc) are needed for small n/large k. | Critical in early-stage research with limited patient or experimental replicates. |

Key Statistical Assumptions and Diagnostics

Violation of underlying statistical assumptions can render AIC comparisons invalid.

Table 2: Key Statistical Assumptions & Validation Protocols

| Assumption | Requirement / Diagnostic Protocol | Typical Check or Tool |

|---|---|---|

| Independence of Observations | Examine experimental design for pseudo-replication. Use Durbin-Watson test for time-series residuals. | Statistical software (R, Python) with appropriate experimental design annotation. |

| Adequate Model Likelihood | The likelihood function must correctly represent the stochastic process generating the data. | Use probability plots (Q-Q plots) and goodness-of-fit tests (e.g., Chi-square, Kolmogorov-Smirnov). |

| Negligible Model Misspecification | Significant misspecification biases AIC. Perform residual analysis across the candidate set. | Residual vs. fitted plots; tests for heteroscedasticity (Breusch-Pagan); normality tests (Shapiro-Wilk). |

| Parameters Estimated via Maximum Likelihood (ML) | AIC derivation assumes ML estimates. Quasi-likelihood or Bayesian estimates require specialized variants (e.g., WAIC). | Documentation of estimation algorithm in software (e.g., glm in R, statsmodels in Python). |

Title: Logical Flow for Validating AIC Prerequisites

Experimental Protocol: Validating AIC Assumptions in Dose-Response Analysis

This protocol details steps for comparing non-linear dose-response models (e.g., Emax vs. sigmoidal) using AIC.

Objective: To select the most predictive model for compound potency (EC50) from cellular viability data.

Materials & Reagents:

Table 3: Research Reagent Solutions for Dose-Response AIC Protocol

| Item | Function in Protocol | Example/Supplier |

|---|---|---|

| Cell Line & Compound | Biological system and test agent. | HEK293 cells; investigational kinase inhibitor. |

| Viability Assay Kit | Quantifies response variable (e.g., ATP content). | CellTiter-Glo 3D (Promega). |

| Serial Dilution Plates | Prepares dose gradient for curve fitting. | 96-well polypropylene plates. |

| Statistical Software | Fits models via ML, extracts log-likelihood, computes AIC. | R with drc & AICcmodavg packages; Python with SciPy. |

| Electronic Lab Notebook | Documents a priori model set and design to prevent p-hacking. | LabArchives. |

Procedure:

- Experimental Design:

- Seed cells in 96-well plates. Treat with compound across 10 doses in 1:3 serial dilution, with 6 technical replicates per dose. Include DMSO controls.

- Randomize well positions to ensure observation independence.

- Data Generation:

- After 72h, lyse cells and measure luminescence using the viability assay kit per manufacturer's instructions.

- Normalize data to controls (100% viability) and background (0%).

- Pre-AIC Modeling Preparation:

- A priori, define candidate models: 4-parameter logistic (4PL, sigmoidal), 3-parameter logistic (3PL, fixed Hill slope=1), and Emax model.

- In software, fit each model using maximum likelihood estimation. Assume normally distributed, homoscedastic errors.

- Assumption Diagnostic Checks (Mandatory):

- Independence: Plot residuals vs. well position sequence; no patterns should exist.

- Likelihood Adequacy: Generate Q-Q plots of standardized residuals. Perform Shapiro-Wilk test (p > 0.05 suggests no severe violation).

- Homoscedasticity: Plot residuals vs. fitted values. Use Breusch-Pagan test (non-significant p-value desired).

- AIC Calculation & Selection:

- If diagnostics are acceptable, compute AIC for each model:

AIC = 2k - 2ln(L̂), where k is the number of estimated parameters and L̂ is the maximized likelihood.

- Apply the AICc correction because of the limited number of doses (n=10):

AICc = AIC + (2k(k+1))/(n-k-1).

- Compute ΔAICc relative to the minimum value in the set. Models with ΔAICc < 2 have substantial support.

- Reporting: Report the model set, diagnostic results, AICc values, ΔAICc, and the selected model.
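The diagnostic checks in this protocol can be sketched with NumPy and SciPy. The residuals and fitted values below are simulated stand-ins for the output of a fitted dose-response model. The heteroscedasticity check is a hand-written Koenker (studentized) variant of the Breusch-Pagan test that uses the fitted values as the only auxiliary regressor, and the lag-1 autocorrelation is an assumed numeric companion to the residual-versus-well-order plot.

```python
import numpy as np
from scipy import stats

def lag1_autocorrelation(residuals):
    """Lag-1 autocorrelation of residuals taken in well-position order."""
    r = residuals - residuals.mean()
    return float(np.sum(r[1:] * r[:-1]) / np.sum(r ** 2))

def breusch_pagan_lm(residuals, fitted):
    """Koenker form: regress squared residuals on fitted values; LM = n * R^2."""
    n = residuals.size
    u = residuals ** 2
    A = np.column_stack([np.ones(n), fitted])
    beta, *_ = np.linalg.lstsq(A, u, rcond=None)
    ss_res = float(np.sum((u - A @ beta) ** 2))
    ss_tot = float(np.sum((u - u.mean()) ** 2))
    lm = n * (1.0 - ss_res / ss_tot)
    return lm, float(stats.chi2.sf(lm, df=1))

# Simulated stand-ins: 10 doses x 6 replicates, fitted % viability and residuals
rng = np.random.default_rng(11)
fitted = np.repeat(np.linspace(5.0, 100.0, 10), 6)
residuals = rng.normal(scale=3.0, size=fitted.size)

shapiro_stat, shapiro_p = stats.shapiro(residuals)
bp_lm, bp_p = breusch_pagan_lm(residuals, fitted)
rho1 = lag1_autocorrelation(residuals)

print(f"Shapiro-Wilk p={shapiro_p:.3f}  Breusch-Pagan p={bp_p:.3f}  lag-1 autocorrelation={rho1:.3f}")
```

These numbers supplement, rather than replace, the residual plots: a non-significant test on a small plate does not by itself establish that the error model is adequate.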

Critical Considerations in Drug Development Contexts

Table 4: AIC Application Notes for Drug Development

| Scenario | Challenge | Recommended Action |

|---|---|---|

| High-Throughput Screening | Thousands of compounds; small n per dose-response. | Use AICc universally. Automated diagnostic flagging for unreliable fits. |

| Mechanistic PK/PD Modeling | Complex, nested models with many parameters. | Use AIC for non-nested comparison; use likelihood ratio test for nested models. |

| Biomarker Signature Selection | Highly correlated predictors, non-normal errors. | Ensure likelihood function matches error distribution (e.g., use AIC from Cox model for survival). |

| Multimodel Inference | Several models have ΔAICc < 2. | Do not select a single model; use model averaging for robust parameter estimates. |

Title: Decision Pathway After AICc Calculation

How to Calculate and Apply AIC: A Step-by-Step Guide for Clinical and Preclinical Data

Application Notes

The Akaike Information Criterion (AIC) is a cornerstone of statistical model selection, balancing model fit and complexity to estimate the quality of models relative to one another. Its core formula, AIC = -2log(L) + 2K, where L is the maximum value of the likelihood function for the model and K is the number of estimated parameters, is deceptively simple. Within the context of model selection research, particularly in fields like computational biology and pharmacometrics, understanding each component is critical for robust inference.

Log-Likelihood (-2log(L)): The Measure of Fit

The log-likelihood quantifies how well the model explains the observed data; a higher log-likelihood indicates a better fit. Its sign is not informative: it is negative for discrete outcomes but can be positive for continuous data, where densities may exceed 1. Multiplying by -2 places the fit term on the deviance scale, so that differences between nested models can be compared with a chi-squared distribution in a likelihood ratio test. In drug development, this term is crucial when comparing dose-response models or pharmacokinetic/pharmacodynamic (PK/PD) models, where accurately describing the data is paramount for predicting efficacy and safety.

The Penalty Term (2K): The Guard Against Overfitting

The term 2K directly penalizes the number of parameters. This penalization embodies the principle of parsimony, discouraging the addition of unnecessary variables that may fit noise rather than signal. For researchers developing quantitative systems pharmacology (QSP) models, which can involve hundreds of parameters, this penalty guides the selection of simpler, more generalizable sub-models.

The Constant and Its Implications

The original derivation of AIC from information theory yields the exact formula -2log(L) + 2K. The constant (2) is not arbitrary; it arises from asymptotic approximations of the Kullback-Leibler divergence. It's important to note that the absolute value of AIC is meaningless; only differences in AIC between models on the same dataset (ΔAIC) are interpretable. For small sample sizes (n), a corrected version, AICc = AIC + (2K(K+1))/(n-K-1), should be used to avoid bias.

Table 1: AIC Comparison for Example Pharmacokinetic Models

| Model Name | Number of Parameters (K) | Log-Likelihood (log(L)) | AIC | ΔAIC | Relative Likelihood |

|---|---|---|---|---|---|

| One-Compartment | 2 | -120.5 | 245.0 | 6.6 | 0.037 |

| Two-Compartment | 4 | -115.2 | 238.4 | 0.0 | 1.000 |

| Three-Compartment | 6 | -114.8 | 241.6 | 3.2 | 0.202 |

Interpretation: The two-compartment model has the lowest AIC and is therefore the best-supported model in the set. The three-compartment model (ΔAIC of about 3) has noticeably less support, and the one-compartment model (ΔAIC > 6) has considerably less.
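As a minimal sketch, the AIC, ΔAIC, and relative-likelihood columns can be recomputed from the K and log-likelihood columns of Table 1 alone. The function names `aic` and `rank_models` are illustrative and do not come from any particular package.

```python
import math

def aic(log_lik, k):
    """AIC = -2*log(L) + 2K."""
    return -2.0 * log_lik + 2.0 * k

# (model name, K, maximized log-likelihood), inputs taken from Table 1
models = [
    ("One-Compartment", 2, -120.5),
    ("Two-Compartment", 4, -115.2),
    ("Three-Compartment", 6, -114.8),
]

def rank_models(candidates):
    """Return rows (name, AIC, delta AIC, relative likelihood), best model first."""
    scored = [(name, aic(ll, k)) for name, k, ll in candidates]
    best = min(value for _, value in scored)
    rows = [(name, value, value - best, math.exp(-0.5 * (value - best)))
            for name, value in scored]
    return sorted(rows, key=lambda row: row[1])

ranking = rank_models(models)
for name, value, delta, rel in ranking:
    print(f"{name:<18} AIC={value:6.1f}  dAIC={delta:4.1f}  rel.lik={rel:.3f}")
```

Only the differences matter here: adding the same constant to every log-likelihood would leave the ΔAIC and relative-likelihood columns unchanged.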

Table 2: AICc Correction Impact (Small n=15)

| Model | K | AIC | AICc | ΔAICc |

|---|---|---|---|---|

| Complex Model | 8 | 101.3 | 125.3 | 16.5 |

| Simple Model | 5 | 102.1 | 108.8 | 0.0 |

The correction increases the penalty for parameter count, favoring the simpler model more strongly when sample size is limited.
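The effect of the correction at n = 15 can be checked directly from the AICc formula given earlier. The sketch below applies it to the K and AIC values of Table 2; `aicc` is an illustrative helper name, not a library function.

```python
def aicc(aic_value, k, n):
    """Small-sample corrected AIC: AICc = AIC + 2K(K+1)/(n-K-1)."""
    if n - k - 1 <= 0:
        raise ValueError("AICc requires n > K + 1")
    return aic_value + (2.0 * k * (k + 1)) / (n - k - 1)

n = 15
complex_aicc = aicc(101.3, k=8, n=n)   # correction term = 144 / 6
simple_aicc = aicc(102.1, k=5, n=n)    # correction term = 60 / 9
delta_aicc = complex_aicc - simple_aicc

print(round(complex_aicc, 1), round(simple_aicc, 1), round(delta_aicc, 1))
```

Before correction the two models are less than one AIC unit apart; the correction term for K = 8 is several times larger than for K = 5, which is what separates them.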

Experimental Protocols

Protocol 1: Calculating AIC for Nested Dose-Response Models

Objective: To select the optimal model describing the relationship between drug concentration and biological response.

- Data Collection: Record response measurements (e.g., % inhibition) across a minimum of 8-10 log-spaced concentration points, with replicates.

- Model Fitting: Fit the data to candidate models (e.g., Linear, Emax, Sigmoid Emax) using maximum likelihood estimation (MLE) in software (e.g., R, GraphPad Prism).

- Extract Statistics: For each fitted model, extract the maximized log-likelihood value and count the number of estimated parameters (e.g., baseline, Emax, EC50, Hill slope).

- Compute AIC: Apply the formula AIC = -2log(L) + 2K. If n/K < 40, use AICc.

- Rank Models: Order models by ascending AIC. Calculate ΔAIC for each model relative to the best (lowest AIC) model. Models with ΔAIC < 2 have substantial support.

Protocol 2: Bootstrap Validation of AIC-Selected Model

Objective: To assess the stability and generalizability of the AIC-selected model.

- Initial Selection: Using the original dataset (D), perform AIC-based model selection as in Protocol 1. Designate the selected model M.

- Bootstrap Resampling: Generate B (e.g., 1000) bootstrap samples by randomly resampling D with replacement.

- Refit & Re-select: For each bootstrap sample, refit all candidate models and perform AIC selection again.

- Frequency Calculation: Calculate the proportion of bootstrap samples for which model M is again selected as best. A proportion >0.7 is considered strong evidence for the stability of the selection.
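The resampling loop of this protocol can be sketched with the standard library alone. To stay self-contained, the example below assumes just two hypothetical candidates (a constant-response model and a straight line), Gaussian errors, and synthetic data; a real analysis would refit the actual dose-response candidates inside `select`.

```python
import math
import random

def gaussian_aic(rss, n, k):
    """AIC of a least-squares fit, using the Gaussian log-likelihood at the ML variance."""
    rss = max(rss, 1e-12)  # guard against log(0) in degenerate resamples
    log_lik = -0.5 * n * (math.log(2 * math.pi) + math.log(rss / n) + 1)
    return -2 * log_lik + 2 * k

def fit_rss(xs, ys):
    """Residual sums of squares for a constant model and a straight-line model."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    syy = sum((y - my) ** 2 for y in ys)
    slope = sxy / sxx if sxx > 0 else 0.0
    return {"constant": syy, "linear": syy - slope * sxy}

def select(xs, ys):
    """Name of the lowest-AIC candidate (K counts the residual variance)."""
    n = len(xs)
    rss = fit_rss(xs, ys)
    scores = {"constant": gaussian_aic(rss["constant"], n, 2),
              "linear": gaussian_aic(rss["linear"], n, 3)}
    return min(scores, key=scores.get)

def selection_frequency(xs, ys, n_boot=1000, seed=1):
    """Share of bootstrap resamples in which the originally selected model wins again."""
    rng = random.Random(seed)
    chosen = select(xs, ys)
    n = len(xs)
    hits = 0
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        hits += select([xs[i] for i in idx], [ys[i] for i in idx]) == chosen
    return chosen, hits / n_boot

# Hypothetical data: a clear linear trend plus Gaussian noise
noise = random.Random(0)
xs = [float(i) for i in range(1, 21)]
ys = [2.0 + 0.5 * x + noise.gauss(0.0, 1.0) for x in xs]
chosen, freq = selection_frequency(xs, ys, n_boot=1000)
print(chosen, freq)
```

Resampling whole (x, y) pairs keeps each response attached to its concentration. For repeated-measures data the resampling unit would normally be the subject rather than the individual observation.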

Visualizations

Title: AIC-Based Model Selection Workflow

Title: Components of the AIC Formula

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for AIC-Based Model Selection Research

| Item | Function in Research |

|---|---|

| Statistical Software (R/Python) | Provides environments (e.g., R's stats4 or nlme, Python's statsmodels & scipy) for performing Maximum Likelihood Estimation and extracting log-likelihood values. |

| Model Selection Package (e.g., R's AICcmodavg) | Dedicated library for computing AIC, AICc, ΔAIC, and model-averaged predictions, streamlining the comparison process. |

| Non-Linear Regression Tool (e.g., GraphPad Prism, NONMEM) | Essential for fitting complex biological models (PK/PD, dose-response) where parameters are estimated iteratively via MLE. |

| Bootstrapping Library (e.g., R's boot) | Enables the implementation of Protocol 2 to validate the stability of the AIC-selected model through resampling. |

| Data Visualization Library (e.g., ggplot2, matplotlib) | Critical for visualizing model fits, residual plots, and creating clear diagrams of AIC results for publications. |

This Application Note provides practical protocols for calculating the Akaike Information Criterion (AIC) across three fundamental model classes. This work supports a broader thesis investigating robust, application-specific model selection frameworks in biomedical research. AIC, an estimator of prediction error, facilitates the selection of the model that best approximates the data-generating process while penalizing complexity, making it indispensable for researchers balancing fit and parsimony.

Theoretical Foundation & Calculation Formula

The general formula for AIC is: AIC = 2k - 2ln(L̂) Where:

- k: Number of estimated parameters in the model.

- L̂: Maximized value of the likelihood function for the model.

For small sample sizes (n/k < ~40), use the corrected AICc: AICc = AIC + (2k(k+1))/(n-k-1)

Table 1: Key Properties for AIC Calculation Across Model Types

| Model Class | Key Parameter Count (k) Considerations | Likelihood Function Basis | Typical Software/R Function |

|---|---|---|---|

| Linear Regression | Count all β coefficients + variance (σ²). | Based on Normal distribution residuals. | AIC(lm_model) in R (stats). |

| Nonlinear Regression | Count all model parameters (e.g., Vmax, Km) + variance (σ²). | Based on specified nonlinear functional form. | AIC(nls_model) in R (stats). |

| Mixed-Effects | Include fixed effects + variance components (random effects, residuals). | Can be fitted by restricted ML (REML) or ML. Use ML when comparing models with different fixed effects. | AIC(lmer_model) in R (lme4). |

Table 2: Example AIC Output Comparison (Hypothetical Dose-Response Data)

| Model Name | Formula | k | Log-Likelihood | AIC | ΔAIC |

|---|---|---|---|---|---|

| Linear | Response ~ Dose | 3 | -45.2 | 96.4 | 11.4 |

| Nonlinear (Emax) | Response ~ E0 + (Emax*Dose)/(ED50 + Dose) | 4 | -38.5 | 85.0 | 0.0 |

| Nonlinear (Sig. Emax) | Response ~ E0 + (Emax*Dose^h)/(ED50^h + Dose^h) | 5 | -38.1 | 86.2 | 1.2 |

| Mixed-Effects (Random Slope) | Response ~ Dose + (Dose|Subject) | 6* | -36.8 | 85.6 | 0.6 |

*Includes fixed intercept, fixed slope, variances & covariance for random effects, residual variance.

Experimental Protocols for AIC Calculation

Protocol 1: Calculating AIC for a Linear Model (e.g., Standard Curve)

Objective: Select the best linear model describing the relationship between assay signal and analyte concentration.

Materials: See Scientist's Toolkit.

Procedure:

- Model Fitting: Fit candidate linear models using Ordinary Least Squares (OLS).

  - Example in R: `lm_model <- lm(Absorbance ~ Concentration, data = assay_data)`

- Extract Components:

  - k: Count the number of estimated parameters (e.g., intercept, slope, residual variance). For `lm(y ~ x)`, k = 3.

  - Log-Likelihood: Extract using `logLik(lm_model)`.

- Calculate AIC: Apply the formula AIC = 2*k - 2*logLik, or use the automated function `AIC(lm_model)`.

- Compare: Repeat for all candidate models (e.g., with/without intercept). The model with the lowest AIC is preferred.
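To make the "Extract Components" step concrete, the following sketch computes the same quantities by hand for a straight-line fit: the Gaussian log-likelihood evaluated at the maximum-likelihood variance RSS/n, with k = 3. This should correspond to what `logLik()` and `AIC()` report for an `lm` fit, but the function `ols_aic` and the standard-curve numbers are illustrative assumptions, not part of the protocol.

```python
import math

def ols_aic(x, y):
    """Fit y = a + b*x by least squares; return (log-likelihood, k, AIC)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    sigma2 = rss / n                      # ML estimate of the residual variance
    log_lik = -0.5 * n * (math.log(2 * math.pi * sigma2) + 1)
    k = 3                                 # intercept, slope, residual variance
    return log_lik, k, 2 * k - 2 * log_lik

# Hypothetical standard-curve data (concentration, absorbance)
concentration = [0.0, 1.0, 2.0, 4.0, 8.0, 16.0]
absorbance = [0.05, 0.11, 0.20, 0.41, 0.79, 1.62]
log_lik, k, aic_value = ols_aic(concentration, absorbance)
print(round(log_lik, 3), k, round(aic_value, 3))
```

Counting the residual variance in k matters when comparing output across tools: some software counts only the regression coefficients, which shifts every AIC by the same amount and leaves ΔAIC between models fitted in the same tool unchanged.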

Protocol 2: Calculating AIC for a Nonlinear Model (e.g., Pharmacokinetic PK/PD)

Objective: Identify the best nonlinear model (e.g., Michaelis-Menten, Emax, Gompertz) for enzyme kinetics or dose-response data.

Procedure:

- Model Specification & Fitting: Define the nonlinear function and fit using iterative algorithms (e.g., Gauss-Newton).

  - Example in R (Emax model): `nls_model <- nls(Effect ~ E0 + (Emax*Dose)/(EC50 + Dose), data = pd_data, start = list(E0=1, Emax=10, EC50=0.5))`

- Parameter Count: Sum all fitted parameters (E0, Emax, EC50) plus the estimated error variance. This is typically provided by software.

- AIC Extraction: Use `AIC(nls_model)` directly. Ensure the same data points are used for all compared models.

- Validation: Check model convergence and residuals. AIC comparison is only valid for models fitted to the identical response data.

Protocol 3: Calculating AIC for a Linear Mixed-Effects Model (e.g., Repeated Measures)

Objective: Compare models with different fixed or random effect structures for longitudinal or clustered data.

Procedure:

- Fit with Maximum Likelihood (ML): To compare models with different fixed effects, models must be fitted using ML, not the default REML.

  - Example in R (lme4): `lmer_model <- lmer(Response ~ Time + Treatment + (1|Subject), data = trial_data, REML = FALSE)`

- Account for All Parameters: k includes all fixed-effect coefficients, variances (and covariances) for random effects, and the residual variance.

- Automated Calculation: Use `AIC(lmer_model)`. The `anova(model1, model2)` function will also provide comparative AIC values.

- Nested Model Comparison: This protocol is essential for testing the significance of random effects or fixed effects terms within the likelihood framework.

Visual Workflows

Model Selection Workflow

AIC as Common Comparator

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Model Fitting & AIC Analysis

| Item/Category | Function in AIC Analysis | Example(s) |

|---|---|---|

| Statistical Software | Platform for model fitting, likelihood calculation, and AIC computation. | R (stats, lme4, nlme), Python (statsmodels, SciPy), SAS (PROC MIXED, NLMIXED), GraphPad Prism. |

| Optimization Algorithm | Iteratively finds parameter values that maximize the likelihood function. | Gauss-Newton (for NLS), Expectation-Maximization (for some mixed models), Gibbs Sampling (Bayesian). |

| Likelihood Function | The core probability model measuring how well the model explains the observed data. | Normal (Gaussian), Binomial, Poisson, or other distribution-specific functions. |

| Data Visualization Package | Critical for checking model assumptions (normality, homoscedasticity of residuals). | ggplot2 (R), matplotlib (Python). Plots: Residuals vs. Fitted, Q-Q plots. |

| Model Selection Helper | Functions to automate AIC calculation and comparison across multiple models. | R: AIC(), MuMIn::dredge(), bbmle::AICtab(). |

Application Notes

Within the broader thesis on the Akaike Information Criterion (AIC) for model selection research, the selection of an optimal pharmacokinetic model serves as a critical practical application. This case study details the process of selecting a structural PK model for a novel oral small molecule drug, "TheraX-121," using AIC as the primary criterion. The goal was to determine the model that best describes the plasma concentration-time profile without overfitting, to inform future dose regimen simulations.

Experimental Protocol: PK Study and Model Fitting

Clinical Study Design:

- Subjects: 12 healthy volunteers (6 male, 6 female).

- Dosing: Single 100 mg oral dose of TheraX-121 under fasting conditions.

- Sample Collection: Serial blood samples were collected pre-dose and at 0.25, 0.5, 1, 1.5, 2, 3, 4, 6, 8, 12, 16, 24, and 36 hours post-dose.

- Bioanalysis: Plasma concentrations of TheraX-121 were determined using a validated LC-MS/MS method (LLOQ: 1.0 ng/mL).

Data Analysis Workflow:

- Software: Phoenix WinNonlin (version 8.3).

- Model Candidates: Four standard compartmental models were fitted to the mean concentration-time data:

- One-compartment, first-order absorption (1-Cpt, FO)

- One-compartment, lagged first-order absorption (1-Cpt, Lag)

- Two-compartment, first-order absorption (2-Cpt, FO)

- Two-compartment, lagged first-order absorption (2-Cpt, Lag)

- Algorithm: Parameters were estimated using the Gauss-Newton (Levenberg-Marquardt) algorithm. Weighting was set to \(1/\hat{y}^2\) (inverse of predicted concentration squared).

- Selection Criteria: AIC was calculated for each model using the formula \(AIC = n \times \ln(RSS/n) + 2P\), where \(n\) = number of observations, \(RSS\) = residual sum of squares, and \(P\) = number of model parameters.
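The least-squares form of AIC used in this workflow and the likelihood form AIC = -2log(L) + 2K differ only by a term that is identical for every model fitted to the same n observations, so both rank models the same way. The sketch below illustrates this under the assumption of Gaussian errors; the values of n and RSS are hypothetical and are not taken from the TheraX-121 study, and with a weighting scheme the RSS would be the weighted residual sum of squares.

```python
import math

def aic_rss(n, rss, p):
    """Least-squares form used in the text: AIC = n*ln(RSS/n) + 2P."""
    return n * math.log(rss / n) + 2 * p

def aic_full(n, rss, p):
    """Gaussian-likelihood form, counting the residual variance as one extra parameter."""
    log_lik = -0.5 * n * (math.log(2 * math.pi * rss / n) + 1)
    return -2 * log_lik + 2 * (p + 1)

n = 13                                   # hypothetical number of observations
candidates = [(3, 12.0), (4, 9.5)]       # hypothetical (P, RSS) pairs
offsets = []
for p, rss in candidates:
    offsets.append(aic_full(n, rss, p) - aic_rss(n, rss, p))
    print(p, round(aic_rss(n, rss, p), 2), round(offsets[-1], 2))
```

The printed offset equals n(ln(2π) + 1) + 2 for every candidate, which is why AIC values reported by different programs should only be compared as differences within one program.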

Data Presentation

Table 1: Model Comparison and AIC Results for TheraX-121 PK Data

| Model | Number of Parameters (P) | Residual Sum of Squares (RSS) | Akaike Information Criterion (AIC) |

|---|---|---|---|

| 1-Compartment, FO | 3 (Ka, Ke, Vd/F) | 145.2 | 42.1 |

| 1-Compartment, Lag | 4 (Ka, Ke, Vd/F, Tlag) | 48.7 | 25.8 |

| 2-Compartment, FO | 5 (Ka, α, β, Vd/F, k21) | 42.1 | 27.5 |

| 2-Compartment, Lag | 6 (Ka, α, β, Vd/F, k21, Tlag) | 41.9 | 29.9 |

Conclusion: The One-Compartment model with Lag Time yielded the lowest AIC value (25.8), identifying it as the most parsimonious model that best fits the observed data for TheraX-121. The more complex 2-compartment models provided only marginally better fit at the cost of additional parameters, as reflected in their higher AIC scores.

Mandatory Visualization

Title: PK Model Selection Workflow Using AIC

Title: Candidate PK Models Evaluated by AIC

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Materials and Tools for PK Model Selection Studies

| Item | Function in PK Model Selection |

|---|---|

| LC-MS/MS System | Gold-standard platform for quantifying drug concentrations in biological matrices (e.g., plasma) with high sensitivity and specificity. |

| Validated Bioanalytical Method | Ensures accuracy, precision, and reproducibility of concentration data, forming the reliable foundation for all model fitting. |

| Phoenix WinNonlin / NONMEM | Industry-standard software for non-compartmental analysis (NCA), compartmental PK modeling, and pharmacodynamic (PD) analysis. |

| R with nlmixr/mrgsolve packages | Open-source environment for flexible PK/PD model development, parameter estimation, and simulation. |

| AIC Calculation Script/Module | Automates the calculation of AIC (and other criteria like BIC) from model output to standardize the model comparison process. |

| Clinical Grade API & Formulation | The drug substance (TheraX-121) in a defined dosage form (e.g., capsule) for administration in the clinical PK study. |

| EDTA/Li-Heparin Vacutainers | Anticoagulant blood collection tubes for plasma preparation from subject blood samples. |

| Stable-Labeled Internal Standard | Isotopically labeled version of the analyte (e.g., TheraX-121-d4) used in LC-MS/MS to correct for sample preparation variability. |

Within the broader thesis on the application of the Akaike Information Criterion (AIC) for robust model selection in pharmacological research, a critical phase is the interpretation of results. After calculating AIC values for a candidate set of models, researchers must translate these numbers into actionable inferences. This protocol details the formal procedure for calculating ΔAIC and Akaike weights (wᵢ), transforming them into model probabilities, and making reliable, quantitative decisions for model-based inference in drug development.

Quantitative Interpretation Framework

The following table summarizes the key metrics and their standard interpretive guidelines, as established in model selection literature.

Table 1: Core Metrics for AIC-Based Model Selection

| Metric | Formula | Interpretation Threshold | Probabilistic Meaning |

|---|---|---|---|

| ΔAICᵢ | AICᵢ – AICₘᵢₙ | ΔAIC < 2: Substantial support. 4 < ΔAIC < 7: Considerably less support. ΔAIC > 10: Essentially no support. | The relative information loss of model i versus the best model (AICₘᵢₙ). |

| Akaike Weight (wᵢ) | exp(-½ΔAICᵢ) / Σ[exp(-½ΔAICₖ)] | -- | The probability that model i is the AIC-best model in the candidate set, given the data. |

| Evidence Ratio | w_best / wᵢ, where w_best is the weight of the AICₘᵢₙ model | -- | How many times more likely the best model is than model i. |

Protocol: Calculating and Interpreting ΔAIC & Akaike Weights

Objective: To compute model probabilities from a set of AIC values and determine a confidence set of models for multimodel inference.

Materials & Reagent Solutions:

- Statistical Software: R (with packages `AICcmodavg`, `MuMIn`), Python (with `statsmodels`, `scikit-learn`), or SAS.

- Data Input: A table of AIC values for all models in the candidate set (K = number of estimated parameters, n = sample size). Use AICc if n/K < 40.

- Calculation Engine: Standard spreadsheet software (e.g., Microsoft Excel, Google Sheets).

Procedure:

- Compile AIC Values: List all candidate models and their corresponding AIC values from your analysis (e.g., pharmacokinetic models, dose-response models).

- Identify AICₘᵢₙ: Find the smallest AIC value in the set.

- Calculate ΔAIC for Each Model: For each model i, compute ΔAICᵢ = AICᵢ – AICₘᵢₙ.

- Compute Relative Likelihoods: For each model, calculate exp(-½ΔAICᵢ). This is the likelihood of the model given the data, relative to the best model.

- Sum Relative Likelihoods: Sum all relative likelihood values from Step 4.

- Calculate Akaike Weights (wᵢ): For each model, divide its relative likelihood (Step 4) by the sum of all relative likelihoods (Step 5). These weights sum to 1.

- Construct a Confidence Model Set: Sum the Akaike weights in descending order until the cumulative sum ≥ 0.95. The models in this set constitute the 95% confidence set.

- Perform Multimodel Inference: For any parameter of interest (e.g., a drug's clearance, EC₅₀), compute its model-averaged estimate as Σ[wᵢ * parameter estimateᵢ] across all models or the confidence set.

Example Output Table: Table 2: Model Selection Results for Candidate Pharmacokinetic Models

| Model Structure | K | AIC | ΔAIC | Akaike Weight (wᵢ) | Cumulative Weight |

|---|---|---|---|---|---|

| Two-Compartment | 4 | 210.5 | 0.0 | 0.72 | 0.72 |

| One-Compartment | 2 | 214.1 | 3.6 | 0.12 | 0.84 |

| Three-Compartment | 6 | 215.0 | 4.5 | 0.08 | 0.92 |

| Non-Linear Michaelis | 3 | 216.8 | 6.3 | 0.03 | 0.95 |
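The procedure above can be sketched in a few lines. The example takes the four AIC values listed in Table 2 as input; the clearance estimates used for model averaging are hypothetical placeholders, and the helper names are illustrative. The weights are normalized over the models passed in, so they will not match a table derived from a larger candidate set.

```python
import math

def akaike_weights(aic_values):
    """Map {model: AIC} to {model: (delta AIC, Akaike weight)}."""
    best = min(aic_values.values())
    rel = {m: math.exp(-0.5 * (a - best)) for m, a in aic_values.items()}
    total = sum(rel.values())
    return {m: (aic_values[m] - best, rel[m] / total) for m in aic_values}

def confidence_set(weights, level=0.95):
    """Models in descending weight order until the cumulative weight reaches `level`."""
    chosen, cumulative = [], 0.0
    for model, (_, w) in sorted(weights.items(), key=lambda kv: kv[1][1], reverse=True):
        chosen.append(model)
        cumulative += w
        if cumulative >= level:
            break
    return chosen

def model_average(weights, estimates):
    """Weighted mean of per-model estimates, renormalized over the models supplied."""
    total = sum(weights[m][1] for m in estimates)
    return sum(weights[m][1] * est for m, est in estimates.items()) / total

aic_table = {"Two-Compartment": 210.5, "One-Compartment": 214.1,
             "Three-Compartment": 215.0, "Non-Linear Michaelis": 216.8}
weights = akaike_weights(aic_table)
conf_set = confidence_set(weights)

# Hypothetical clearance estimates (L/h) from each model, for illustration only
clearance = {"Two-Compartment": 5.2, "One-Compartment": 4.8,
             "Three-Compartment": 5.3, "Non-Linear Michaelis": 5.0}
averaged = model_average(weights, {m: clearance[m] for m in conf_set})
evidence_ratio = weights["Two-Compartment"][1] / weights["One-Compartment"][1]
print(conf_set, round(averaged, 2), round(evidence_ratio, 2))
```

The evidence ratio depends only on the ΔAIC between the two models being compared, so it is unaffected by which other models are in the candidate set.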

Visualization of the Model Selection Workflow

Workflow for Computing Model Probabilities from AIC.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Tools for Model-Based Inference Analysis

| Item | Function/Application |

|---|---|

| Statistical Computing Environment (R/Python) | Core platform for fitting models, calculating AIC, and automating the computation of ΔAIC and Akaike weights. |

| AICcmodavg Package (R) | Specialized library for calculating AIC, ΔAIC, weights, and performing model-averaged parameter estimates. |

| Curated Dataset with Replication | Essential input. Data must be of high quality, with independent replicates to ensure reliable parameter estimation for each model. |

| Model-Averaging Script/Template | Custom or open-source script to systematically apply the protocol, ensuring reproducibility and reducing human error. |

| Visualization Library (ggplot2, matplotlib) | Used to create evidence ratio plots or cumulative weight plots for clear presentation of model selection uncertainty. |

Within the broader thesis on the Akaike Information Criterion (AIC) for model selection research, this document provides standardized protocols for calculating AIC across three major analytical software platforms: R, Python, and SAS. AIC, defined as AIC = 2k - 2ln(L̂), where k is the number of estimated parameters and L̂ is the maximum value of the likelihood function, is a cornerstone for model comparison in pharmaceutical research, balancing model fit and complexity.

Core Calculation Protocols

The following protocols detail the methodology for computing AIC for a standard multiple linear regression model, using a common dataset structure with a continuous response variable and continuous predictor variables.

Protocol 2.1: AIC Calculation in R

- Objective: Fit a linear model and extract its AIC value.

- Procedure:

  - Load the dataset (e.g., `research_data.csv`) containing variables `Response`, `Predictor1`, `Predictor2`.

  - Fit a linear model using the `lm()` function: `model <- lm(Response ~ Predictor1 + Predictor2, data = research_data)`.

  - Calculate AIC directly using the `AIC()` function: `aic_value <- AIC(model)`.

  - To compare multiple models (Model1, Model2), use `AIC(Model1, Model2)`.

- Key Functions: `lm()`, `AIC()` from the base R stats package.

- Expected Output: A single numeric AIC value or a comparative table.

Protocol 2.2: AIC Calculation in Python

- Objective: Fit a linear model and compute its AIC using `statsmodels`.

- Procedure:

  - Import necessary libraries: `pandas` as `pd`, `statsmodels.api` as `sm`.

  - Load data: `df = pd.read_csv('research_data.csv')`.

  - Define dependent (y) and independent (X) variables. Add a constant to X for the intercept: `X = sm.add_constant(df[['Predictor1', 'Predictor2']])`, `y = df['Response']`.

  - Fit the Ordinary Least Squares (OLS) model: `model = sm.OLS(y, X).fit()`.

  - Extract AIC from the fitted results: `aic_value = model.aic`.

- Key Modules: `statsmodels.api`, `pandas`.

- Expected Output: `model.summary()` displays AIC; `model.aic` provides the numeric value.

Protocol 2.3: AIC Calculation in SAS

- Objective: Perform regression and output AIC using `PROC REG` or `PROC GLMSELECT`.

- Procedure using PROC REG:

  - Import data using `PROC IMPORT` or a `DATA` step.

  - Use `PROC REG` on dataset `WORK.RESEARCH`, requesting AIC on the MODEL statement and writing it to an OUTEST= data set: `proc reg data=research outest=est; model Response = Predictor1 Predictor2 / aic; run; quit;`.

  - The AIC statistic is stored in the `_AIC_` variable of the OUTEST= data set (view it with `proc print data=est; run;`).

- Procedure for Model Comparison (PROC GLMSELECT): `proc glmselect data=research; model Response = Predictor1 Predictor2 / selection=none stats=(adjrsq aic); run;`

- Key Procedures: `PROC REG`, `PROC GLMSELECT`.

- Expected Output: A table or data set in the SAS output containing the AIC value.

Quantitative Software Comparison

Table 1: Comparison of AIC Implementation Across Software Platforms

| Feature | R (v4.3+) | Python (statsmodels v0.14+) | SAS (9.4M8+) |

|---|---|---|---|

| Primary Function | AIC() | model.aic attribute | PROC REG / PROC GLMSELECT |

| Model Object Required | Yes (e.g., lm, glm) | Yes (e.g., RegressionResults) | Yes (within procedure) |

| Output Type | Numeric or comparative table | Numeric (float) | Output table statistic |

| Ease of Multi-Model Comparison | Direct via AIC(m1, m2) | Manual compilation or custom loop | Automated in selection procedures |

| Baseline Packages/Libraries | stats (base) | statsmodels, scikit-learn | STAT |

| Extensibility | High via packages (e.g., MuMIn, AICcmodavg) | High via scikit-learn estimators that use information criteria (e.g., LassoLarsIC) | Native within SAS/STAT procedures |

Table 2: Sample AIC Outputs for a Fitted Model (k=3 parameters)

| Software | Log-Likelihood (ln(L̂)) | Calculated AIC (2k - 2ln(L̂)) |

|---|---|---|

| R | -45.21 | 2(3) - 2(-45.21) = 96.42 |

| Python | -45.21 | 96.42 |

| SAS | -45.21 | 96.42 |

Workflow and Logical Pathways

Title: AIC Model Selection Cross-Platform Workflow

Title: Thesis Context of Software Implementation Notes

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools & Packages for AIC Research

| Item (Software/Package) | Function in AIC Research | Key Attribute for Drug Development |

|---|---|---|

| R (with stats package) | Provides the base AIC() function for model objects from lm(), glm(), etc. | Gold standard for statistical validation; extensive use in pharmacokinetic/pharmacodynamic (PK/PD) modeling. |

| Python (statsmodels) | Offers a Pythonic, pandas-integrated API for regression and AIC extraction via the .aic attribute. | Enables integration of model selection into larger machine learning and data processing pipelines. |

| SAS/STAT (PROC REG) | Industry-standard procedure for regression analysis, automatically generating AIC in fit statistics. | Critical for regulated environments requiring validated, audit-ready analytical workflows (e.g., FDA submissions). |

| R MuMIn Package | Extends R's capabilities for multi-model inference and automated AIC table generation. | Streamlines comparison of dozens of candidate biomarker models efficiently. |

| Python scikit-learn | While statsmodels is preferred for strict AIC, sklearn offers AIC for some models (e.g., LassoLarsIC). | Useful for model selection embedded within predictive algorithm development. |

| SAS PROC GLMSELECT | Specialized for model selection with information criteria, allowing direct comparison of many models. | Optimizes the process of selecting key predictors from high-dimensional data in early discovery. |

Avoiding Common Pitfalls: Troubleshooting AIC in Biomedical Model Selection

Foundational Assumptions and Violations of AIC

The Akaike Information Criterion (AIC) is derived under specific regularity conditions. Its use for model selection is invalid when these conditions are violated, leading to biased and unreliable conclusions.

Table 1: Core Assumptions of AIC and Consequences of Violation

| Assumption | Description | Consequence of Violation |

|---|---|---|

| Correctly Specified Model Family | The "true model" or best approximating model is within the candidate set. | AIC loses its "optimal predictive" property; selected model may be severely misspecified. |

| Regularity Conditions for MLE | Standard asymptotic properties of Maximum Likelihood Estimators (MLEs) hold (e.g., parameters in interior of space, non-singular Fisher information matrix). | Likelihood function and parameter estimates are unreliable, invalidating AIC's penalty term. |

| Large Sample Size (Asymptotic) | AIC is an asymptotic approximation (n/K > 40, where K is number of parameters). | The penalty term (2K) may inadequately correct for overfitting in small samples. |

| Independent, Identically Distributed Data | Observations are i.i.d. This underpins the likelihood calculation. | Estimated likelihood is incorrect; AIC values are not comparable across models. |

| No Substantial Collinearity | Predictors are not perfectly or highly correlated. | Parameter estimates are unstable, inflating variance and distorting the effective number of parameters. |

| Low-Dimensional Setting | Number of parameters (K) is small relative to sample size (n). | In high-dimensional settings (p ≈ n or p > n), MLE may not exist, and AIC fails catastrophically. |

Experimental Protocols for Diagnosing AIC Violations

Protocol 2.1: Diagnostic Check for Likelihood and MLE Regularity

Objective: Verify that model fitting achieves a regular, interior maximum likelihood solution.

- Fit the candidate model(s) using a robust numerical optimizer (e.g., Newton-Raphson, BFGS).

- Key Step: Request the Hessian matrix (matrix of second-order partial derivatives of the log-likelihood) at the estimated parameters.

- Calculate the eigenvalues of the Hessian matrix. All eigenvalues must be negative for a maximum.

- Check the condition number of the observed Fisher information matrix (the negative of the Hessian of the log-likelihood; its inverse estimates the parameter covariance). A condition number > 10^8 indicates near-singularity.

- Validation: Re-fit the model from multiple distinct starting parameter values. AIC is suspect if different starting values converge to different likelihood maxima.
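A minimal sketch of the Hessian checks, assuming a two-parameter Gaussian model so that everything fits in the standard library: the Hessian is approximated by central finite differences and the eigenvalues of the 2x2 matrix are taken in closed form. For real PK/PD models the optimizer's own Hessian and a linear-algebra library would normally be used instead; all names and data below are illustrative.

```python
import math

def log_lik(params, data):
    """Gaussian log-likelihood with parameters (mu, sigma)."""
    mu, sigma = params
    n = len(data)
    return (-n * math.log(sigma) - 0.5 * n * math.log(2 * math.pi)
            - sum((x - mu) ** 2 for x in data) / (2 * sigma ** 2))

def hessian_2x2(f, p, h=1e-4):
    """Central finite-difference Hessian of f at the two-parameter point p."""
    def shifted(di, dj):
        return f((p[0] + di, p[1] + dj))
    f0 = f(p)
    h00 = (shifted(h, 0) - 2 * f0 + shifted(-h, 0)) / h ** 2
    h11 = (shifted(0, h) - 2 * f0 + shifted(0, -h)) / h ** 2
    h01 = (shifted(h, h) - shifted(h, -h) - shifted(-h, h) + shifted(-h, -h)) / (4 * h ** 2)
    return [[h00, h01], [h01, h11]]

def eigenvalues_sym_2x2(m):
    """Closed-form eigenvalues of a symmetric 2x2 matrix, smallest first."""
    mean = 0.5 * (m[0][0] + m[1][1])
    radius = math.hypot(0.5 * (m[0][0] - m[1][1]), m[0][1])
    return mean - radius, mean + radius

# Hypothetical measurements
data = [4.1, 5.3, 4.8, 5.9, 5.1, 4.6, 5.4, 4.9]
mu_hat = sum(data) / len(data)
sigma_hat = math.sqrt(sum((x - mu_hat) ** 2 for x in data) / len(data))

hess = hessian_2x2(lambda p: log_lik(p, data), (mu_hat, sigma_hat))
eig_low, eig_high = eigenvalues_sym_2x2(hess)
is_maximum = eig_high < 0                        # all eigenvalues negative
condition_number = abs(eig_low) / abs(eig_high)  # same for -Hessian (information)
print(is_maximum, round(condition_number, 3))
```

For this well-behaved model the condition number is close to 2; values many orders of magnitude larger would indicate that some parameter combination is barely identified by the data.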

Protocol 2.2: Assessing Small Sample Bias

Objective: Determine if sample size is sufficient for AIC's asymptotic approximation.

- Calculate the effective sample size (n_eff). For time-series or clustered data, adjust for autocorrelation or intra-cluster correlation.

- Compute the ratio n_eff / K_max, where K_max is the largest number of estimated parameters among candidates.

- Decision Rule: If n_eff / K_max < 40, apply a second-order correction: Use AICc instead of AIC, where AICc = AIC + (2K(K+1))/(n-K-1).

- For n_eff / K_max < 1, neither AIC nor AICc is appropriate; consider dimension reduction before model selection.
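The decision rule can be written down directly. The protocol does not say how to obtain n_eff, so the sketch below assumes the common design-effect approximation for equally sized clusters, n_eff = n / (1 + (m - 1) * ICC); that formula, the function names, and the example numbers are assumptions made for illustration.

```python
def effective_sample_size(n_obs, cluster_size, icc):
    """Design-effect approximation (an assumption here): n / (1 + (m - 1) * ICC)."""
    return n_obs / (1.0 + (cluster_size - 1) * icc)

def choose_criterion(n_eff, k_max):
    """Apply the thresholds of Protocol 2.2 to the ratio n_eff / K_max."""
    ratio = n_eff / k_max
    if ratio < 1:
        return "reduce dimension first"
    if ratio < 40:
        return "AICc"
    return "AIC"

# Hypothetical study: 12 subjects x 14 samples each, ICC = 0.3, largest model has 6 parameters
n_eff = effective_sample_size(n_obs=12 * 14, cluster_size=14, icc=0.3)
decision = choose_criterion(n_eff, k_max=6)
print(round(n_eff, 1), decision)
```

With correlated repeated measures the effective sample size here is far below the 168 raw observations, which moves the analysis firmly into AICc territory.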

Protocol 2.3: Testing for Independence in Residuals

Objective: Validate the i.i.d. assumption for model errors/residuals.

- After fitting the model with MLE, extract the residuals.

- Perform the Ljung-Box test (for time-series) or Moran's I test (for spatial data) on the residuals at relevant lags.

- For clustered/hierarchical data: Calculate the Intraclass Correlation Coefficient (ICC). An ICC > 0.05 indicates substantial non-independence.

- If violation is detected: Candidate models must be reformulated to account for the dependence structure (e.g., using mixed-effects models). AIC values from the original models are not comparable.
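For the clustered-data step, the ICC can be estimated from a one-way analysis of variance on the residuals grouped by subject. The sketch below assumes equal cluster sizes and uses the ICC(1) estimator (MSB - MSW) / (MSB + (m - 1) * MSW); the residual values are hypothetical.

```python
def icc_oneway(groups):
    """ICC(1) for a balanced one-way layout: (MSB - MSW) / (MSB + (m - 1) * MSW)."""
    g = len(groups)
    m = len(groups[0])
    if any(len(values) != m for values in groups):
        raise ValueError("this sketch assumes equal cluster sizes")
    grand = sum(sum(values) for values in groups) / (g * m)
    means = [sum(values) / m for values in groups]
    msb = m * sum((mu - grand) ** 2 for mu in means) / (g - 1)
    msw = sum((v - mu) ** 2
              for values, mu in zip(groups, means) for v in values) / (g * (m - 1))
    return (msb - msw) / (msb + (m - 1) * msw)

# Hypothetical residuals from a model that ignores subject, grouped by subject
residuals_by_subject = [
    [1.1, 0.9, 1.0],
    [-1.0, -1.2, -0.8],
    [0.1, -0.1, 0.0],
    [2.0, 2.2, 1.8],
]
icc = icc_oneway(residuals_by_subject)
print(round(icc, 3), "non-independent" if icc > 0.05 else "approximately independent")
```

An ICC this close to 1 means almost all residual variation is between subjects, which is the situation where a subject-level random effect is needed before AIC values can be compared.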

Case Study: High-Dimensional Omics Data in Drug Target Discovery

In early-stage drug development, researchers often use transcriptomic data (e.g., RNA-seq with 20,000 genes from 50 patient samples) to identify predictive signature models. This high-dimensional context (p >> n) is a classic scenario where standard AIC fails.

Table 2: AIC Performance vs. Alternative Criteria in High-Dimensional Simulation

| Model Selection Criterion | Average True Positives (TP) | Average False Positives (FP) | Prob. of Selecting True Model |

|---|---|---|---|

| AIC (Naïve Application) | 8.2 | 152.7 | 0.00 |

| AIC with Lasso Regularization | 10.1 | 45.3 | 0.00 |

| Extended BIC (EBIC) | 9.8 | 12.1 | 0.15 |

| Modified CV (10-fold, stability selection) | 11.5 | 8.4 | 0.22 |

Simulation Parameters: n=50 samples, true model contains 10 non-zero predictors out of p=1000 candidate genes. Noise variance set to explain 50% of total variance. Results averaged over 1000 simulations.

Protocol 3.1: Model Selection Protocol for High-Dimensional Biomarker Discovery

Objective: Identify a robust predictive model from high-dimensional data without violating AIC assumptions.

- Pre-screening: Apply sure independence screening (SIS) or a univariate association filter to reduce dimensionality to d < n/log(n) candidates.

- Penalized Regression: Fit a Lasso (L1-penalized) logistic/linear regression model across the full regularization path.

- Stability Selection: For each candidate predictor, compute its frequency of selection across 100 bootstrap subsamples at a given regularization penalty (λ).

- Final Model: Choose predictors with selection frequency > 80%. Refit a standard (non-penalized) model using only these stable predictors.

- Criterion Application: Calculate AIC/BIC only on this refitted, low-dimensional model. Compare to other candidate models derived from different λ thresholds.

Diagram Title: Protocol for Valid AIC in High-Dimensions

The Scientist's Toolkit: Essential Reagents & Software

Table 3: Key Research Reagent Solutions for Robust Model Selection

| Item / Solution | Function & Rationale |

|---|---|

| Quasi-Likelihood Methods (e.g., R's quasi family) | Provides inference when a full probability model is unknown (e.g., only mean-variance relationship is specified), circumventing distributional AIC assumptions. |

| Smoothly Clipped Absolute Deviation (SCAD) Penalty | A non-convex penalty function for variable selection; reduces bias in large coefficients compared to Lasso, improving model identification before AIC use. |

| Bootstrapping Software (e.g., boot R package) | Empirically assesses sampling distribution of parameter estimates and AIC differences, checking robustness against violated regularity conditions. |

| Takeuchi Information Criterion (TIC) | A generalization of AIC that remains valid when the candidate models are misspecified. Uses empirical estimates of the Fisher information to correct the penalty. |

| Conditional AIC (cAIC) | For mixed-effects models; accounts for uncertainty in random effects estimation, essential when i.i.d. assumption is violated by clustering. |

| Bayesian Predictive Information Criterion (BPIC) | A bias-corrected variant of DIC for Bayesian models, more stable when posterior is non-normal or multimodal. |

Diagram Title: Decision Path for AIC or Alternatives

Within the broader thesis on Akaike Information Criterion (AIC) for model selection, the standard AIC is derived as an asymptotically unbiased estimator of the Kullback-Leibler information loss. However, this asymptotic property fails when the sample size (n) is small relative to the number of estimated parameters (k). The corrected AIC (AICc) provides a second-order bias correction, making it a crucial tool for practical model selection in finite-sample scenarios common in scientific and drug development research.

Quantitative Comparison: AIC vs. AICc Performance

The key formula for AICc is: AICc = AIC + (2k(k+1))/(n-k-1), where AIC = -2log(L) + 2k, L is the maximum likelihood, k is the number of parameters, and n is sample size.
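
As a minimal sketch of the formula above, the correction term and AICc can be computed with two small helper functions. The function names and the example inputs (log-likelihood of -50.0, k = 5, n = 30) are illustrative assumptions, not values from a real analysis.

```python
def aicc_correction(n: int, k: int) -> float:
    """Second-order correction term 2k(k+1)/(n-k-1) that AICc adds to AIC."""
    if n <= k + 1:
        raise ValueError("AICc is undefined unless n > k + 1")
    return 2 * k * (k + 1) / (n - k - 1)


def aicc(log_lik: float, n: int, k: int) -> float:
    """AICc = -2*log(L) + 2k + 2k(k+1)/(n-k-1)."""
    return -2.0 * log_lik + 2 * k + aicc_correction(n, k)


if __name__ == "__main__":
    # Hypothetical fit: maximized log-likelihood -50.0, k = 5, n = 30.
    print(round(aicc_correction(30, 5), 2))  # 2.5
    print(round(aicc(-50.0, 30, 5), 2))      # 112.5
```

The guard on n > k + 1 matters in practice: for very small datasets the denominator reaches zero or turns negative and the criterion is not defined.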

Table 1: Bias Correction Term Magnitude for Various n/k Ratios

| Sample Size (n) | Parameters (k) | n/k Ratio | AICc Correction Term (2k(k+1))/(n-k-1) | Recommended Criterion |

|---|---|---|---|---|

| 15 | 5 | 3 | 6.67 | AICc |

| 30 | 5 | 6 | 2.50 | AICc |

| 40 | 10 | 4 | 7.59 | AICc |

| 100 | 10 | 10 | 2.47 | AICc |

| 200 | 10 | 20 | 1.16 | AICc |

Table 2: Simulation Results: Model Selection Accuracy (% Correct)

| Scenario (n, k_max) | True Model | AIC Selection Accuracy | AICc Selection Accuracy | Improvement with AICc |

|---|---|---|---|---|

| n=20, k=1 to 5 | k=2 | 61.2% | 78.5% | +17.3% |

| n=40, k=1 to 8 | k=3 | 74.8% | 85.1% | +10.3% |

| n=100, k=1 to 10 | k=4 | 86.3% | 87.9% | +1.6% |

Data synthesized from current literature review and simulation studies. The performance advantage of AICc diminishes as n/k exceeds approximately 40.

When to Use AICc: Decision Protocol

Protocol 1: Decision Workflow for AIC vs. AICc Selection

Decision Flow for AIC vs. AICc Selection

Application Rule: Use AICc when n/k < 40, where n is sample size and k is the number of estimated parameters in the most complex candidate model. For n < 100, a conservative approach mandates AICc regardless of the n/k ratio due to increased risk of overfitting.
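
The application rule can be written as a small helper, shown here as a sketch. The function name and the default thresholds (ratio cutoff of 40, small-sample bound of 100) simply encode the rule as stated above; they are not a library API.

```python
def recommend_criterion(n: int, k_max: int,
                        ratio_cutoff: float = 40.0,
                        small_n: int = 100) -> str:
    """Return "AICc" or "AIC" following the n/k < 40 and n < 100 rule.

    n      -- number of independent observations
    k_max  -- parameter count of the most complex candidate model
    """
    if k_max < 1:
        raise ValueError("k_max must be at least 1")
    if n <= k_max + 1:
        raise ValueError("too few observations: AICc requires n > k + 1")
    if n < small_n or n / k_max < ratio_cutoff:
        return "AICc"
    return "AIC"


if __name__ == "__main__":
    print(recommend_criterion(20, 5))    # AICc (n < 100)
    print(recommend_criterion(200, 10))  # AICc (n/k = 20 < 40)
    print(recommend_criterion(150, 3))   # AIC  (n >= 100 and n/k = 50)
```

Because AICc converges to AIC as n grows, returning "AICc" in borderline cases costs nothing, which is why the rule errs in that direction.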

Experimental Protocols for AICc Implementation

Protocol 2: Step-by-Step AICc Calculation and Model Comparison

- Define Candidate Model Set: Specify all models to be compared based on prior knowledge or hypotheses.

- Fit Models & Obtain Log-Likelihood: For each model, compute the maximized log-likelihood value (log(L)).

- Count Parameters (k): Include all estimated parameters (regression coefficients, variance components, dispersion parameters).

- Compute AIC: AIC = -2*log(L) + 2k.

- Apply Correction: Calculate AICc = AIC + (2k(k+1))/(n - k - 1). Ensure n > k + 1.

- Rank Models: Order models by increasing AICc value. The model with the minimum AICc is considered the best approximating model.

- Calculate ΔAICc: Δ_i = AICc_i - min(AICc). Models with Δ_i < 2 have substantial support; models with Δ_i > 10 have essentially no support.

- Compute Akaike Weights (w_i): w_i = exp(-Δ_i/2) / Σ_j exp(-Δ_j/2). Interpret w_i as the weight of evidence that model i is the best approximating model in the candidate set, given the data.
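
The steps of Protocol 2 can be sketched in a few lines once each model's maximized log-likelihood and parameter count are available. The model names, log-likelihoods, and sample size below are hypothetical placeholders; in practice they would come from the fitting software.

```python
import math


def aicc(log_lik: float, k: int, n: int) -> float:
    """AICc = -2*log(L) + 2k + 2k(k+1)/(n-k-1); requires n > k + 1."""
    if n <= k + 1:
        raise ValueError("AICc requires n > k + 1")
    return -2.0 * log_lik + 2 * k + (2 * k * (k + 1)) / (n - k - 1)


def rank_models(fits: dict, n: int) -> list:
    """Rank candidate models by AICc.

    fits maps a model name to (maximized log-likelihood, parameter count k).
    Returns rows sorted by increasing AICc, each with delta and Akaike weight.
    """
    scores = {name: aicc(ll, k, n) for name, (ll, k) in fits.items()}
    best = min(scores.values())
    deltas = {name: score - best for name, score in scores.items()}
    rel_lik = {name: math.exp(-d / 2.0) for name, d in deltas.items()}
    total = sum(rel_lik.values())
    rows = [
        {"model": name, "aicc": scores[name], "delta": deltas[name],
         "weight": rel_lik[name] / total}
        for name in scores
    ]
    return sorted(rows, key=lambda row: row["aicc"])


if __name__ == "__main__":
    # Hypothetical fits to n = 25 observations: (log-likelihood, k).
    fits = {"linear": (-40.2, 3), "emax": (-36.9, 4), "sigmoid_emax": (-36.5, 5)}
    for row in rank_models(fits, n=25):
        print(f'{row["model"]:<13} AICc={row["aicc"]:.2f} '
              f'delta={row["delta"]:.2f} weight={row["weight"]:.3f}')
```

All models must be fit to the same n observations of the same response variable; otherwise the AICc values are not comparable.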

Protocol 3: Simulation-Based Validation of Model Selection (Recommended for Drug Development)

Objective: Validate the AICc selection procedure for a specific experimental design.

- Define True Data-Generating Model: Specify a pharmacological model (e.g., Emax model for dose-response) with known parameter values.

- Generate Replicate Datasets: Simulate N=5000 datasets with the defined small sample size (e.g., n=20-50) and add realistic measurement error.

- Fit Candidate Models: For each dataset, fit the true model and several competing models (e.g., linear, quadratic, logistic).

- Apply AICc Selection: Rank models for each dataset using AICc.

- Calculate Selection Frequency: Tally how often each model is selected as "best".

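- Assess Performance: Report the percentage of datasets in which the true model is correctly identified, alongside the selection frequencies of the competing models. A low recovery rate signals that the design (sample size, dose range, noise level) cannot reliably discriminate among the candidates. Akaike weights averaged across replicates give a complementary summary of how strongly the data typically favor each model.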
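
The simulation loop of Protocol 3 can be sketched with the standard library alone. To avoid a nonlinear optimizer, this sketch swaps the Emax and competing pharmacological models for polynomial candidates (degree 0 to 3) with a quadratic data-generating model and Gaussian errors. It also uses far fewer replicates than the N=5000 suggested above. All coefficients, the noise level, and the function names are illustrative assumptions.

```python
import math
import random


def fit_poly_rss(x, y, degree):
    """Least-squares polynomial fit via normal equations; returns the RSS."""
    p = degree + 1
    # Augmented normal-equation matrix [X'X | X'y].
    a = [[sum(xi ** (r + c) for xi in x) for c in range(p)]
         + [sum(yi * xi ** r for xi, yi in zip(x, y))] for r in range(p)]
    for col in range(p):  # Gaussian elimination with partial pivoting
        piv = max(range(col, p), key=lambda r: abs(a[r][col]))
        a[col], a[piv] = a[piv], a[col]
        for r in range(col + 1, p):
            f = a[r][col] / a[col][col]
            for c in range(col, p + 1):
                a[r][c] -= f * a[col][c]
    beta = [0.0] * p
    for r in reversed(range(p)):  # back substitution
        s = a[r][p] - sum(a[r][c] * beta[c] for c in range(r + 1, p))
        beta[r] = s / a[r][r]
    return sum((yi - sum(b * xi ** j for j, b in enumerate(beta))) ** 2
               for xi, yi in zip(x, y))


def gaussian_aic(rss, n, k, corrected):
    """AIC (or AICc) for a Gaussian model, using the ML variance RSS/n."""
    log_lik = -0.5 * n * (math.log(2 * math.pi) + math.log(rss / n) + 1.0)
    aic = -2.0 * log_lik + 2 * k
    return aic + (2 * k * (k + 1)) / (n - k - 1) if corrected else aic


def simulate(n_datasets=300, n=20, true_coefs=(1.0, 2.0, -1.5),
             sigma=0.1, max_degree=3, seed=1):
    """Fraction of replicates in which AIC and AICc recover the true degree."""
    rng = random.Random(seed)
    x = [i / (n - 1) for i in range(n)]
    true_degree = len(true_coefs) - 1
    hits = {"AIC": 0, "AICc": 0}
    for _ in range(n_datasets):
        y = [sum(c * xi ** j for j, c in enumerate(true_coefs))
             + rng.gauss(0.0, sigma) for xi in x]
        rss = {d: fit_poly_rss(x, y, d) for d in range(max_degree + 1)}
        for label, corrected in (("AIC", False), ("AICc", True)):
            # k = polynomial coefficients (d + 1) plus the error variance.
            best = min(rss, key=lambda d: gaussian_aic(rss[d], n, d + 2, corrected))
            hits[label] += int(best == true_degree)
    return {label: count / n_datasets for label, count in hits.items()}


if __name__ == "__main__":
    print(simulate())
```

For a real design, the polynomial fits would be replaced by the actual candidate models and estimation routine, keeping the same tally of selection frequencies.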

Pathway: The Role of AICc in the Model Selection Workflow

AICc in the Model Selection Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for AICc-Based Model Selection Analysis

| Tool/Reagent | Function in Analysis | Example/Note |

|---|---|---|

| Statistical Software (R/Python) | Platform for computing log-likelihood, AIC, and AICc. | R: AICc() function in AICcmodavg package. Python: statsmodels. |

| Likelihood Function | The core mathematical model linking parameters to data probability. | Must be correctly specified for each candidate model (e.g., Normal, Binomial). |

| Optimization Algorithm | Finds parameter values that maximize the log-likelihood. | Nelder-Mead, BFGS, or Markov Chain Monte Carlo (MCMC) for complex models. |

| Sample Size (n) | The number of independent experimental units. | The key determinant for needing AICc. Must be recorded precisely. |

| Parameter Count (k) | The total number of independently adjusted parameters per model. | Includes all estimated coefficients, variances, and scale parameters. |

| Model Set List | A predefined, biologically plausible set of candidate models. | Avoid data dredging. Set should be grounded in theory. |

| Validation Dataset | Independent data not used for model fitting. | Used for final performance check of the AICc-selected model. |

Final Recommendations for Researchers

- Primary Rule: Default to using AICc for all linear regression and generalized linear modeling problems with small samples. For nonlinear models (e.g., pharmacokinetic/pharmacodynamic), the n/k < 40 rule is essential.

- Reporting: Always report whether AIC or AICc was used, the sample size (n), the number of parameters (k) for the top models, and the Akaike weights.

- Limitation: The AICc correction term is derived for linear models with normally distributed errors. Its performance for other distributions (e.g., binomial, Poisson) with very small n may vary; consider simulation-based validation (Protocol 3) in such cases.

- Integration: AICc identifies a single best model but does not by itself convey how uncertain that choice is. Complement it with model-averaged parameter estimates and predictions when uncertainty in model selection is high (e.g., when several models have ΔAICc < 2).

Within the broader thesis on the Akaike Information Criterion (AIC) for model selection research, a pivotal chapter addresses the challenge of comparing non-nested models. Unlike nested models, where one is a special case of another (e.g., linear vs. quadratic regression), non-nested models represent distinct, competing hypotheses about the data-generating process (e.g., a power-law model vs. an exponential decay model for pharmacokinetics). The standard likelihood ratio test, whose chi-square reference distribution relies on nesting, does not apply in this scenario. AIC provides a theoretically grounded solution by estimating the relative Kullback-Leibler (KL) information loss, enabling direct comparison of any models fit to the same dataset, irrespective of their functional form.

Core Conceptual Framework and Quantitative Comparison

AIC is calculated as: AIC = -2(log-likelihood) + 2K where K is the number of estimated parameters. The model with the lower AIC is preferred. For small sample sizes (n/K < 40), the corrected AICc is recommended: AICc = AIC + (2K(K+1))/(n-K-1).

Table 1: Comparison of Model Selection Criteria for Non-Nested Models

| Criterion | Theoretical Basis | Handles Non-Nested? | Penalty for Complexity | Key Assumption/Limitation |

|---|---|---|---|---|

| Akaike IC (AIC) | Kullback-Leibler Information | Yes | 2K | Asymptotic unbiasedness; tends to favor more complex models than BIC, because its penalty is weaker once log(n) > 2. |

| Bayesian IC (BIC) | Bayesian Posterior Odds | Yes | K*log(n) | Stronger penalty; assumes a "true model" is in the set. |

| Likelihood Ratio Test | Nested Hypothesis | No | N/A | Requires one model to be a special case of the other. |

| Cross-Validation | Predictive Accuracy | Yes | Implicit via validation | Computationally intensive; results can be variable. |

Table 2: Illustrative AIC Comparison for Three Non-Nested PK/PD Models (Simulated data for drug concentration over time)

| Model | Formula | K | Log-Likelihood | AIC | ΔAIC | AIC Weight |

|---|---|---|---|---|---|---|

| Biexponential | C(t)=Ae^{-αt}+ Be^{-βt} | 4 | -12.4 | 32.8 | 0.0 | 0.93 |

| Power-Law | C(t)=mt^{-γ} | 2 | -18.7 | 41.4 | 8.6 | 0.01 |

| Sigmoidal Emax | E(t)=(E_max•[C]^h)/(EC_50^h+[C]^h) | 3 | -16.1 | 38.2 | 5.4 | 0.06 |

Interpretation: The Biexponential model has substantial support (AIC weight ≈ 0.93, i.e., about 93% of the total weight within this candidate set). The weights follow from exp(-ΔAIC/2) normalized over the three models, so they sum to 1.

Application Protocols

Protocol 1: AIC-Based Selection of Non-Nested Mechanistic Models in Drug Response

Objective: To select the best model describing in vitro dose-response from candidates of different mechanistic origins (e.g., receptor occupancy vs. kinetic signaling).

- Data Collection: Obtain robust dose-response data (e.g., cell viability, target engagement) across a minimum of 10 concentration points, replicated.

- Candidate Model Specification:

- Model A (Logistic/Sigmoid Emax): Response = E_min + (E_max - E_min) / (1 + 10^{(LogEC50 - x) × HillSlope}), where x is the log10 of the dose

- Model B (Linear-Quadratic): Response = α(Dose) + β(Dose)^2 + c

- Model C (Power Law): Response = a(Dose)^k

- Parameter Estimation: Fit each model to the data via maximum likelihood estimation (MLE). Use appropriate error structure (e.g., normal for continuous, Poisson for count data).

- AIC Calculation: For each model, compute log-likelihood, count parameters (K includes all estimated constants + error variance), calculate AIC (or AICc if n is small).

- Model Ranking & Inference: Rank models by ΔAIC (difference from minimum AIC). Models with ΔAIC ≤ 2 have substantial support. Calculate AIC weights to approximate model probabilities.
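
For continuous responses with normal errors, the AIC calculation step reduces to a function of the residuals. The sketch below assumes a homoscedastic Gaussian error model and counts K as the structural parameters plus one for the error variance, as described above. The residual values and function names are hypothetical, and the residuals are assumed to come from a maximum likelihood (least-squares) fit.

```python
import math


def gaussian_log_likelihood(residuals):
    """Maximized Gaussian log-likelihood given residuals from an ML fit."""
    n = len(residuals)
    rss = sum(r * r for r in residuals)
    sigma2_hat = rss / n  # ML estimate of the error variance
    return -0.5 * n * (math.log(2 * math.pi * sigma2_hat) + 1.0)


def aic_from_residuals(residuals, n_structural, small_sample=False):
    """AIC (or AICc when small_sample=True) with K = n_structural + 1."""
    n = len(residuals)
    k = n_structural + 1  # structural parameters plus the error variance
    aic = -2.0 * gaussian_log_likelihood(residuals) + 2 * k
    if small_sample:
        if n <= k + 1:
            raise ValueError("AICc requires n > K + 1")
        aic += (2 * k * (k + 1)) / (n - k - 1)
    return aic


if __name__ == "__main__":
    # Hypothetical residuals for Models A, B, C at 10 concentration points.
    # Structural parameter counts: A has 4, B has 3, C has 2.
    fits = {
        "A_sigmoid_emax": ([0.8, -1.1, 0.5, -0.3, 0.9, -0.7, 0.2, -0.4, 0.6, -0.5], 4),
        "B_linear_quadratic": ([1.9, -2.2, 1.4, -0.8, 2.1, -1.6, 0.9, -1.2, 1.5, -1.3], 3),
        "C_power_law": ([2.5, -2.9, 1.8, -1.1, 2.7, -2.0, 1.2, -1.6, 1.9, -1.7], 2),
    }
    scores = {name: aic_from_residuals(res, p, small_sample=True)
              for name, (res, p) in fits.items()}
    best = min(scores.values())
    for name, score in sorted(scores.items(), key=lambda item: item[1]):
        print(f"{name:<20} AICc={score:.2f} delta={score - best:.2f}")
```

For count data fit with a Poisson error structure, the log-likelihood would instead come from the Poisson probability mass function, and no variance parameter would be added to K.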

Protocol 2: Evaluating Diagnostic Biomarker Trajectories Using AIC

Objective: Compare non-nested growth models (exponential vs. Gompertz) for tumor biomarker (e.g., PSA) kinetics in early-phase trial data.

- Longitudinal Data: Collect serial biomarker measurements from individual patients.

- Model Fitting:

- Exponential: B(t) = B0 * e^{rt}

- Gompertz: B(t) = B0 * e^{(a/b)(1 - e^{-bt})}

- Individual vs. Population AIC: Compute AIC for each patient's trajectory under both models. The model with the lower sum of AICs across the cohort is preferred at the population level.

- Clinical Correlation: Stratify patients by which model best fits their data (ΔAIC > 2). Investigate correlations with clinical outcomes (e.g., progression-free survival).
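
The population-level comparison and the patient stratification can be sketched as follows, assuming per-patient AIC values have already been computed for both models. The patient identifiers and AIC values are hypothetical. The "ambiguous" stratum for patients with |ΔAIC| ≤ 2 is an assumption added here so that every patient is assigned somewhere.

```python
def summarize_cohort(patient_aic, threshold=2.0):
    """Sum per-patient AICs and stratify patients by their preferred model.

    patient_aic maps a patient id to {"exponential": aic, "gompertz": aic}.
    Returns (totals, preferred_model, strata).
    """
    totals = {"exponential": 0.0, "gompertz": 0.0}
    strata = {"exponential": [], "gompertz": [], "ambiguous": []}
    for pid, aics in patient_aic.items():
        for model in totals:
            totals[model] += aics[model]
        diff = aics["exponential"] - aics["gompertz"]  # > 0 favors Gompertz
        if diff > threshold:
            strata["gompertz"].append(pid)
        elif diff < -threshold:
            strata["exponential"].append(pid)
        else:
            strata["ambiguous"].append(pid)
    preferred = min(totals, key=totals.get)  # lower summed AIC is preferred
    return totals, preferred, strata


if __name__ == "__main__":
    # Hypothetical per-patient AIC values.
    cohort = {
        "P01": {"exponential": 52.1, "gompertz": 47.3},
        "P02": {"exponential": 40.0, "gompertz": 41.0},
        "P03": {"exponential": 35.2, "gompertz": 39.9},
    }
    totals, preferred, strata = summarize_cohort(cohort)
    print(totals, preferred, strata)
```

Summing AICs across patients treats each patient's fit as independent with its own parameters, which matches the individual-fit approach described in this protocol.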

Visualizations

Title: AIC Workflow for Comparing Non-Nested Models

Title: AIC's Role in Solving the Non-Nested Model Problem

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Tools for Implementing AIC-Based Model Selection

| Tool/Reagent | Category | Function in Protocol | Example/Note |

|---|---|---|---|

| Maximum Likelihood Estimation (MLE) Software | Computational | Fits non-linear, non-nested models to data to obtain log-likelihood. | R (stats4, bbmle), Python (SciPy.optimize, statsmodels), SAS (PROC NLMIXED). |

| AIC Calculation Function | Computational | Computes AIC, AICc, ΔAIC, and AIC weights from model fits. | R: AIC(), MuMIn::model.sel(); Python: statsmodels.regression.linear_model.RegressionResults.aic. |

| Dose-Response Cell Viability Assay | Wet Lab Reagent | Generates quantitative data for PK/PD model comparison (Protocol 1). | CellTiter-Glo Luminescent (measures ATP). Provides continuous, robust viability data. |

| Longitudinal Biomarker Assay | Diagnostic Reagent | Enables serial measurement for growth model comparison (Protocol 2). | ELISA kits (e.g., for PSA, CA-125). High precision and sensitivity required. |

| Model Specification Library | Conceptual | Pre-defines candidate non-nested models for testing. | Curated list of common PK (e.g., monophasic, biphasic) and growth (exponential, Gompertz) models. |

| Bootstrapping Resampling Tool | Computational | Validates AIC selection stability for small n. | R (boot package) to generate confidence intervals for ΔAIC. |

Within the broader thesis on Akaike Information Criterion (AIC) for model selection research, this document provides Application Notes and Protocols for its use in avoiding overfitting and underfitting in predictive model development. The AIC, derived from information theory, estimates the relative information loss of a model, balancing goodness-of-fit with model complexity. The "sweet spot" is the model with the minimal AIC value, representing the optimal trade-off.

Key Quantitative Summary of AIC-Related Metrics

| Metric | Formula | Interpretation in Model Selection | Primary Use Case |

|---|---|---|---|