Bayesian Inference in Action: A Practical Guide to MCMC Methods for Stochastic Pharmacometric Modeling

Abstract

This comprehensive guide demystifies Markov Chain Monte Carlo (MCMC) methods for stochastic model fitting, tailored for researchers and professionals in drug development. It begins by establishing the foundational principles of Bayesian inference and the critical role of MCMC in quantifying uncertainty for complex, non-linear models like pharmacokinetic/pharmacodynamic (PK/PD) systems. The article then transitions to practical application, detailing the implementation workflow of popular samplers (e.g., Metropolis-Hastings, Hamiltonian Monte Carlo) within modern computing frameworks. To address real-world challenges, a dedicated section provides solutions for diagnosing poor convergence (like low ESS and high R-hat) and strategies for optimizing sampling efficiency and prior specification. Finally, the guide covers robust validation techniques and comparative analysis of MCMC against frequentist methods, highlighting its advantages in therapeutic decision-making. The conclusion synthesizes key takeaways and outlines future implications for Bayesian pharmacometrics and model-informed drug development.

From Bayes' Theorem to Stochastic Models: The Essential 'Why' Behind MCMC

Deterministic pharmacometric models, primarily expressed as ordinary differential equations (ODEs), assume that a system's future state is entirely predictable from its current state and a fixed set of parameters. This framework fails to capture intrinsic biological variability, measurement error, and uncertainty in prediction, which are fundamental to drug development. The integration of stochasticity—through stochastic differential equations (SDEs), mixed-effects models, and Markov Chain Monte Carlo (MCMC) methods—is non-negotiable for accurate parameter estimation, uncertainty quantification, and predictive robustness in pharmacometrics.

Application Notes: Key Domains Requiring Stochastic Approaches

Intrinsic Stochasticity in Biological Processes

Cellular processes, such as gene expression, receptor binding, and intracellular signaling, are subject to random fluctuations due to low copy numbers of molecules. Deterministic models average out this noise, leading to potentially misleading conclusions about drug-target engagement and downstream effects.

Inter-Individual Variability (IIV) and Residual Unexplained Variability (RUV)

Population pharmacokinetic/pharmacodynamic (PK/PD) modeling must account for IIV (differences between individuals) and RUV (unexplained variability within an individual). A deterministic framework cannot separately quantify these sources of randomness, which is critical for dose optimization and clinical trial simulation.

Predictive Uncertainty in Model-Based Drug Development

Regulatory decisions rely on understanding the confidence in model predictions. Deterministic models provide a single prediction trajectory, whereas stochastic models generate prediction intervals, essential for risk assessment in go/no-go decisions.

Table 1: Comparison of Deterministic vs. Stochastic Model Outputs for a Phase II PK/PD Simulation

| Metric | Deterministic ODE Model | Stochastic Mixed-Effects Model (MCMC fitted) |
|---|---|---|
| Predicted AUC at Steady State | 1250 mg·h/L | Median: 1248 mg·h/L (95% CrI: 980 – 1580 mg·h/L) |
| Probability of Target Attainment (>MIC for 50% dosing interval) | 92% (point estimate) | 89% (90% Prediction Interval: 72% – 97%) |
| Estimated IIV on Clearance (CV%) | Not Estimated | 32% (95% CrI: 25% – 40%) |
| Model Diagnostic: Objective Function Value | -215.4 | Posterior log-likelihood distribution reported |

Experimental Protocols for Stochastic Model Evaluation

Protocol 3.1: MCMC Workflow for a Stochastic PK/PD Model Fitting

This protocol details the steps to fit a one-compartment PK model with an Emax PD model using a Bayesian MCMC approach with the Stan software.

Objective: To estimate posterior distributions for PK/PD parameters, IIV, and RUV, quantifying all uncertainties.

Materials: See "Research Reagent Solutions" table. Software: R (v4.3+), RStan, cmdstanr, shinystan.

Procedure:

- Model Specification: Define the joint log-probability function in Stan. The structural model includes:

  - PK: `dA/dt = -Ke * A; C = A / V`.

  - PD: `E = E0 + (Emax * C) / (EC50 + C)`.

  - Stochastic Elements: IIV on `Ke`, `V`, `Emax` (log-normal). RUV on `C` and `E` (additive/proportional error).

- Prior Selection: Elicit weakly informative priors. Example: `V ~ normal(70, 20); omega (IIV) ~ cauchy(0, 2); sigma (RUV) ~ exponential(1)`.

- MCMC Sampling:

  - Run 4 independent Hamiltonian Monte Carlo (HMC) chains.

  - Set iterations to 2000 per chain, with 1000 warm-up (adaptation) iterations.

  - Target acceptance rate (`adapt_delta`): 0.95 to reduce divergences.

- Diagnostic Checks:

  - Convergence: Assess Gelman-Rubin statistic (`Rhat < 1.05`) and effective sample size (`n_eff > 400`).

  - Posterior Predictive Check (PPC): Simulate new data from posterior parameter draws. Compare visually and quantitatively to observed data.

  - Divergences: Check for zero divergent transitions post-warm-up.

- Inference: Report posterior medians and 95% credible intervals (CrIs) for all parameters. Visualize posterior distributions and correlation matrices.
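
The structural and stochastic elements listed under Model Specification can be illustrated with a small forward simulation. This is a minimal Python sketch rather than the Stan model itself: it assumes an IV bolus dose, proportional RUV on both observations, and entirely hypothetical parameter values; the function name `simulate_subject` is illustrative.

```python
import math
import random

def simulate_subject(rng, dose, times, pop, omega, sigma_prop):
    """Simulate true and observed concentration/effect for one subject.

    pop:        typical values for Ke, V, Emax, E0, EC50 (hypothetical numbers)
    omega:      log-scale SDs of the log-normal IIV on Ke, V and Emax
    sigma_prop: SD of the proportional residual error (RUV)
    """
    # Log-normal IIV: individual value = typical value * exp(eta), eta ~ N(0, omega^2)
    ke = pop["Ke"] * math.exp(rng.gauss(0.0, omega["Ke"]))
    v = pop["V"] * math.exp(rng.gauss(0.0, omega["V"]))
    emax = pop["Emax"] * math.exp(rng.gauss(0.0, omega["Emax"]))

    rows = []
    for t in times:
        # Analytic solution of dA/dt = -Ke * A with A(0) = dose (IV bolus assumed)
        amount = dose * math.exp(-ke * t)
        conc = amount / v
        effect = pop["E0"] + emax * conc / (pop["EC50"] + conc)
        # Proportional RUV applied to both observed quantities
        conc_obs = conc * (1.0 + rng.gauss(0.0, sigma_prop))
        effect_obs = effect * (1.0 + rng.gauss(0.0, sigma_prop))
        rows.append((t, conc, conc_obs, effect, effect_obs))
    return rows

rng = random.Random(1)
pop = {"Ke": 0.1, "V": 70.0, "Emax": 100.0, "E0": 5.0, "EC50": 2.0}
omega = {"Ke": 0.3, "V": 0.2, "Emax": 0.25}
times = [0.5, 1.0, 2.0, 4.0, 8.0, 12.0, 24.0]
subjects = [simulate_subject(rng, 500.0, times, pop, omega, 0.1) for _ in range(20)]
```

Each subject receives its own `Ke`, `V`, and `Emax`, so the spread between simulated profiles reflects IIV, while the gap between true and observed values reflects RUV.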

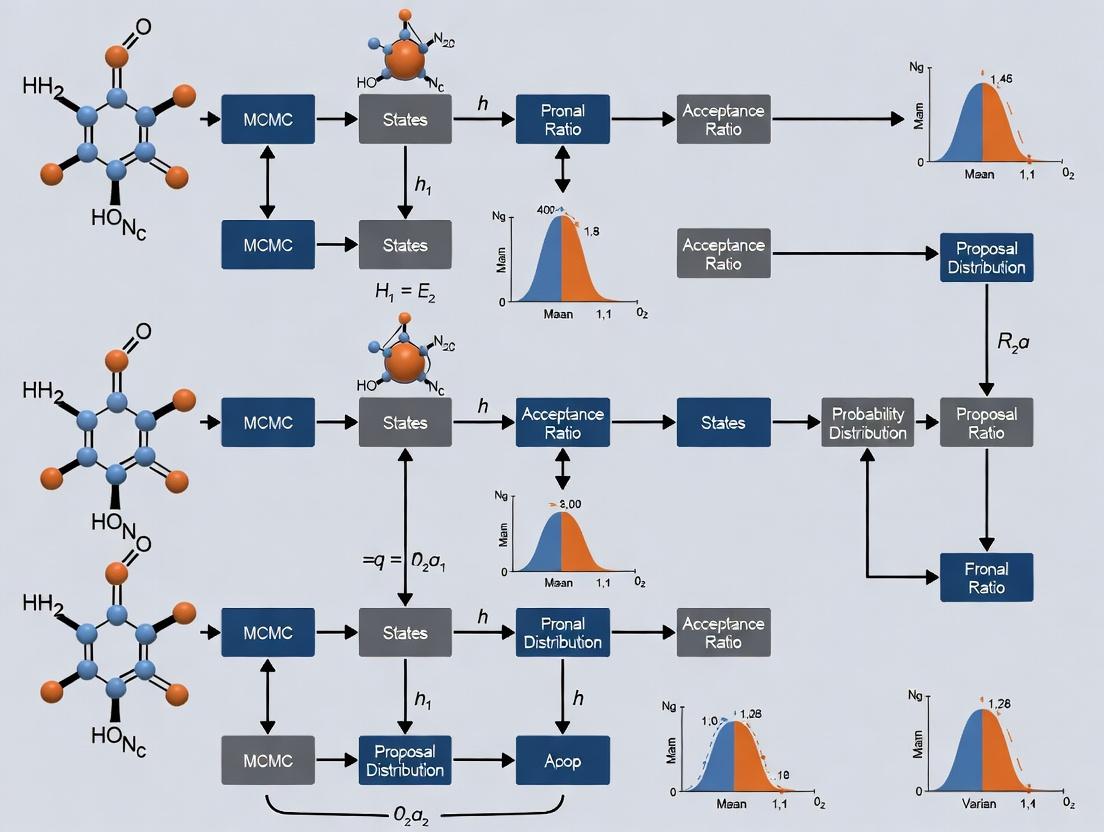

Diagram Title: MCMC Workflow for Stochastic PK/PD Model Fitting

Protocol 3.2: Stochastic Simulation and Comparison (SSC) Experiment

Objective: To empirically demonstrate the limits of deterministic models by comparing their predictive performance against stochastic models in a simulated clinical trial.

Procedure:

- Generate a "True" Stochastic World: Use a known model with fixed parameters, substantial IIV (e.g., 40% CV on clearance), and RUV to simulate virtual patient PK data (N=100).

- Model Fitting:

- Arm A (Deterministic): Fit a standard ODE model via maximum likelihood, ignoring IIV structure.

- Arm B (Stochastic): Fit a non-linear mixed-effects model (NLMEM) with IIV and RUV, using SAEM algorithm (e.g., in `nlmixr2`) and/or Bayesian MCMC.

- Predictive Performance Assessment:

- Simulate 1000 new virtual patients from each fitted model.

- Compare the distribution of key outcomes (e.g., trough concentration, AUC) to the "true" generating distribution using metrics in Table 2.
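
A toy version of this comparison can be sketched in Python. It skips the model-fitting step and assumes that steady-state AUC equals dose divided by clearance, that IIV on clearance is log-normal with 40% CV, and that RUV is log-normal; Arm A simply drops the IIV term and Arm B reuses the true IIV. All numbers are hypothetical and are not the values reported in Table 2.

```python
import math
import random

def percentile(sorted_values, q):
    """Nearest-rank percentile of an already sorted list (0 <= q <= 1)."""
    index = int(round(q * (len(sorted_values) - 1)))
    return sorted_values[index]

def simulate_auc(rng, n, dose, cl_typical, omega_cl, sigma_ruv):
    """AUC = dose / CL_i with log-normal IIV on CL and log-normal RUV."""
    values = []
    for _ in range(n):
        cl_i = cl_typical * math.exp(rng.gauss(0.0, omega_cl))
        values.append(dose / cl_i * math.exp(rng.gauss(0.0, sigma_ruv)))
    return values

def coverage(predicted, observed):
    """Fraction of observed values inside the 90% interval of the predictions."""
    ordered = sorted(predicted)
    low, high = percentile(ordered, 0.05), percentile(ordered, 0.95)
    return sum(low <= x <= high for x in observed) / len(observed)

rng = random.Random(42)
dose, cl_typical, sigma_ruv = 1000.0, 10.0, 0.1
omega_true = math.sqrt(math.log(1.0 + 0.40 ** 2))  # log-scale SD for a 40% CV

# "True" stochastic world
truth = simulate_auc(rng, 2000, dose, cl_typical, omega_true, sigma_ruv)

# Arm A ignores IIV (omega = 0); Arm B includes it
arm_a = simulate_auc(rng, 1000, dose, cl_typical, 0.0, sigma_ruv)
arm_b = simulate_auc(rng, 1000, dose, cl_typical, omega_true, sigma_ruv)

coverage_a = coverage(arm_a, truth)
coverage_b = coverage(arm_b, truth)
print(f"90% PI coverage without IIV: {coverage_a:.2f}, with IIV: {coverage_b:.2f}")
```

The interval from the model without IIV is too narrow to cover the simulated population, which is the qualitative pattern the protocol is designed to expose.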

Table 2: SSC Experiment Results - Predictive Performance Metrics

| Performance Metric | Deterministic ODE Model | Stochastic NLMEM/MCMC Model |
|---|---|---|
| Bias in Predicted C_trough | +18.5% | +1.2% |
| Coverage of 90% Prediction Interval | 62% | 91% |
| Root Mean Square Error (RMSE) | 4.2 mg/L | 1.1 mg/L |
| Identification of "Outlier" Patients | Failed (All predictions similar) | Successful (Captured tail of distribution) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Stochastic Pharmacometric Analysis

| Item/Category | Example(s) | Function in Stochastic Analysis |
|---|---|---|
| Modeling & Inference Software | Stan, Nimble, nlmixr2, Monolix, NONMEM | Enables specification of hierarchical models and efficient sampling from posterior distributions via HMC or SAEM algorithms. |
| Diagnostic & Visualization Packages | shinystan, bayesplot (R), ArviZ (Python) | Provides interactive diagnostics (traces, pairs plots) and visualizations (PPC, forest plots) for MCMC output. |
| High-Performance Computing (HPC) | Local clusters, Cloud computing (AWS, GCP) | Facilitates running multiple long MCMC chains or large-scale simulation studies in parallel. |
| Pharmacometric Datasets | Clinical PK/PD data from Phase I, Real-world data (RWD) streams | The foundational input requiring stochastic models to separate signal (drug effect) from noise (biological & measurement variability). |
| Benchmarking & Simulation Tools | RxODE/rxode2 (R), Simulx (Monolix) | Used to perform simulation-estimation experiments (like Protocol 3.2) to validate and compare model performance. |

Diagram Title: Flow of Information in Stochastic Pharmacometrics

This application note serves as a foundational module within a broader thesis on Markov Chain Monte Carlo (MCMC) for stochastic model fitting in pharmacokinetic-pharmacodynamic (PK/PD) research. Bayesian inference provides the essential theoretical framework that MCMC algorithms, such as Metropolis-Hastings and Gibbs sampling, operationalize. It allows researchers to formally integrate prior knowledge with experimental data to obtain posterior distributions of model parameters, which is critical for quantifying uncertainty in complex, stochastic biological models.

Core Components of Bayesian Inference

Bayes' Theorem:

P(θ | D) = [P(D | θ) * P(θ)] / P(D)

Where:

- P(θ | D): Posterior Distribution (Target). The updated probability distribution of the model parameters (θ) given the observed data (D).

- P(D | θ): Likelihood. The probability of observing the data given specific parameter values.

- P(θ): Prior Distribution. The probability distribution of the parameters based on prior knowledge before seeing the new data.

- P(D): Marginal Likelihood / Evidence. The total probability of the data across all parameter values (often acts as a normalizing constant).

The following table compares common prior distributions and their impact in a canonical PK model fitting scenario: estimating the clearance (CL) of a drug from plasma concentration-time data.

Table 1: Specification and Impact of Prior Distributions for a PK Parameter (Clearance - CL)

| Component | Form/Distribution | Parameterization (Example) | Role & Interpretation in PK/PD Context |
|---|---|---|---|
| Prior (P(θ)) | Log-Normal | Mean = 10 L/h, CV = 30% | Encodes prior belief (e.g., from preclinical species or similar compounds) that CL is positive and has natural variability. Prevents physiologically implausible values (e.g., negative CL). |
| Likelihood (P(D\|θ)) | Normal (Additive Error) | Residual SD, σ = 0.2 mg/L | Assumes observed plasma conc. deviations from the model prediction are normally distributed. Quantifies how "likely" the data is for a given CL. |
| Posterior (P(θ\|D)) | Complex, Non-analytical | Estimated via MCMC (Mean = 12.5 L/h, 95% CrI: 11.0 - 14.2 L/h) | The target distribution. Summarizes all knowledge about CL after updating the prior with clinical trial data. The Credible Interval (CrI) directly quantifies probability that CL lies within a range. |
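
For a single parameter, the prior-times-likelihood update in the table can be evaluated directly on a grid, which makes the role of each term concrete before turning to MCMC. The sketch below is illustrative only: it assumes an IV-bolus one-compartment model with a known volume, and the dose, volume, sampling times, and concentrations are hypothetical. Only the prior (mean 10 L/h, CV 30%) and residual SD (0.2 mg/L) come from the table.

```python
import math

def lognormal_params(mean, cv):
    """Convert an arithmetic mean and CV to log-scale (mu, sigma)."""
    sigma2 = math.log(1.0 + cv ** 2)
    return math.log(mean) - 0.5 * sigma2, math.sqrt(sigma2)

def log_prior(cl, mu, sigma):
    """Log density of the log-normal prior on CL."""
    z = (math.log(cl) - mu) / sigma
    return -math.log(cl * sigma * math.sqrt(2.0 * math.pi)) - 0.5 * z * z

def log_likelihood(cl, times, observed, dose, volume, sd):
    """Normal additive-error likelihood; IV-bolus one-compartment model assumed."""
    total = 0.0
    for t, y in zip(times, observed):
        predicted = dose / volume * math.exp(-cl / volume * t)
        total += -0.5 * ((y - predicted) / sd) ** 2 - math.log(sd * math.sqrt(2.0 * math.pi))
    return total

# Hypothetical data: dose in mg, volume in L, concentrations in mg/L
dose, volume, sd = 100.0, 25.0, 0.2
times = [1.0, 2.0, 4.0, 8.0]
observed = [2.45, 1.48, 0.55, 0.08]

mu, sigma = lognormal_params(10.0, 0.30)
grid = [1.0 + 0.01 * k for k in range(2901)]  # CL from 1 to 30 L/h
log_post = [log_prior(cl, mu, sigma) + log_likelihood(cl, times, observed, dose, volume, sd)
            for cl in grid]

peak = max(log_post)
weights = [math.exp(lp - peak) for lp in log_post]  # unnormalized posterior
norm = sum(weights)                                 # grid analogue of P(D)
posterior = [w / norm for w in weights]
posterior_mean = sum(cl * p for cl, p in zip(grid, posterior))
print(f"Grid posterior mean of CL: {posterior_mean:.2f} L/h")
```

A grid like this works for one or two parameters; the number of grid points grows exponentially with dimension, which is why population models rely on sampling instead.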

Table 2: MCMC Diagnostics for Posterior Sampling of PK Parameters

| Parameter | Mean Estimate | SD (Uncertainty) | 2.5% Percentile | 97.5% Percentile | R-hat (Gelman-Rubin) | Effective Sample Size (ESS) |
|---|---|---|---|---|---|---|
| CL (L/h) | 12.5 | 0.82 | 11.0 | 14.2 | 1.01 | 1850 |
| Vd (L) | 25.3 | 2.1 | 21.4 | 29.8 | 1.02 | 1500 |
| ka (1/h) | 1.2 | 0.15 | 0.92 | 1.52 | 1.03 | 1200 |

R-hat > 1.1 suggests poor convergence; ESS estimates how many independent draws the autocorrelated MCMC samples are worth.

Experimental Protocol: Bayesian Population PK/PD Model Fitting via MCMC

Protocol Title: Bayesian Estimation of Population Parameters for a Novel Oncology Therapeutic using MCMC.

Objective: To characterize the population PK of Drug X and its relationship to a biomarker response (PD) in Phase Ib clinical trial subjects, quantifying inter-individual variability and parameter uncertainty.

Materials: See "Scientist's Toolkit" below.

Procedure:

Model Specification:

- Structural Model: Define a 2-compartment PK model with first-order absorption and an `E_max` PD model linking plasma concentration to biomarker inhibition.

- Statistical Model: Define inter-individual variability (IIV) on key parameters (CL, Vd) using a log-normal distribution. Specify residual error models (additive/proportional) for PK and PD observations.

Prior Elicitation:

- For CL and Vd, define informative log-normal priors based on allometric scaling from preclinical toxicokinetic studies (see Table 1).

- For `E_max` and `EC_50`, define weakly informative priors (e.g., `E_max ~ Beta(2,2)` to constrain between 0-1; `EC_50 ~ LogNormal(log(observed_C50_guess), 1)`).

- For IIV variances (ω²), use inverse-Gamma(0.5, 0.5) or Half-Cauchy(0,2.5) priors to regularize estimation.

Likelihood Definition:

- Construct the joint likelihood function. For individual i at time j, assume `Observed_Conc_ij ~ Normal(Predicted_Conc_ij(θ_i), σ_add² + (σ_prop * Predicted_Conc_ij)²)`, where the second argument is the residual variance and `θ_i` are the individual parameters drawn from the population distribution `N(θ_pop, Ω)` (on the log scale for parameters with log-normal IIV, such as CL and Vd).

MCMC Sampling Setup:

- Use software like Stan, PyMC, or NONMEM with Bayesian tools.

- Configure 4 independent Markov chains.

- Set a minimum of 10,000 iterations per chain, with the first 5,000 discarded as warm-up/adaptation.

- Specify target acceptance rate (e.g., 0.8 for NUTS sampler in Stan).

Posterior Sampling & Diagnostics:

- Run MCMC sampling.

- Monitor convergence: Assess R-hat statistics (target < 1.05), trace plots for mixing, and effective sample size (ESS > 400 per parameter).

- If diagnostics fail, increase iterations, re-parameterize the model, or adjust priors.

Posterior Analysis:

- Extract posterior distributions for all parameters (`θ_pop`, `Ω`, `σ`).

- Generate posterior predictive checks: Simulate new data using posterior draws and compare visually and quantitatively to observed data.

- Perform covariate analysis by examining correlations between individual parameter estimates (empirical Bayes estimates) and patient demographics.
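
The combined additive-plus-proportional residual model from the Likelihood Definition step can be written as a short log-likelihood function. This is a minimal Python sketch for one individual's observations; the function name `combined_error_loglik` and the numbers in the example are hypothetical.

```python
import math

def combined_error_loglik(observed, predicted, sigma_add, sigma_prop):
    """Sum of Normal log densities with variance sigma_add^2 + (sigma_prop * pred)^2."""
    total = 0.0
    for y, f in zip(observed, predicted):
        variance = sigma_add ** 2 + (sigma_prop * f) ** 2
        total += -0.5 * math.log(2.0 * math.pi * variance) - 0.5 * (y - f) ** 2 / variance
    return total

predicted = [8.0, 4.0, 1.0, 0.25]  # hypothetical model predictions (mg/L)
observed = [8.6, 3.7, 1.1, 0.20]   # hypothetical observations (mg/L)
loglik = combined_error_loglik(observed, predicted, sigma_add=0.1, sigma_prop=0.15)
```

The proportional term dominates at high concentrations and the additive term near the lower end of the profile, which is why the two are often combined for PK data.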

Visualization: The Bayesian Inference & MCMC Workflow

Title: Bayesian MCMC Workflow for Model Fitting

The Scientist's Toolkit: Key Research Reagents & Software

Table 3: Essential Toolkit for Bayesian PK/PD Analysis

| Item/Category | Specific Examples | Function & Role in Bayesian Inference |
|---|---|---|
| Modeling Software | Stan (CmdStan, PyStan), PyMC, NONMEM (with Bayesian methods), Monolix (SUQ), WinBUGS/OpenBUGS | Provides environment to specify probability models (priors, likelihood) and implements advanced MCMC samplers (e.g., HMC, NUTS) to draw from the posterior. |
| Programming Languages | R (brms, rstan, ggplot2), Python (ArviZ, pandas, NumPy) | Enables data preprocessing, interfacing with modeling software, and comprehensive post-processing of MCMC posterior draws (diagnostics, visualization). |
| Prior Information Sources | Published literature, FDA drug labels, preclinical PK studies, in vitro bioassay data. | Forms the basis for constructing informative or weakly informative prior distributions (P(θ)), crucial for stabilizing estimates in sparse data scenarios. |
| High-Performance Computing (HPC) | Local compute clusters, cloud computing (AWS, GCP), parallel processing frameworks. | Accelerates MCMC sampling, which is computationally intensive for complex population models, by enabling parallel chain execution. |
| Diagnostic & Viz Packages | R: bayesplot, shinystan; Python: ArviZ, seaborn. | Generates trace plots, density plots, autocorrelation plots, and posterior predictive checks to validate MCMC convergence and model fit. |

Introduction

Within stochastic model fitting research, such as quantifying drug-target binding kinetics or viral dynamics, the central task is Bayesian inference: computing the posterior distribution of model parameters given observed data. This requires solving integrals that are often intractable analytically. Markov Chain Monte Carlo (MCMC) provides a computational framework to solve this sampling problem, enabling researchers to approximate these complex integrals and make robust probabilistic inferences from stochastic models.

The Core Sampling Problem

For a model with parameters θ and data D, Bayes' theorem states: P(θ|D) = [P(D|θ) * P(θ)] / P(D). The denominator P(D) = ∫ P(D|θ)P(θ) dθ is the marginal likelihood or evidence. This integral is the fundamental computational barrier. For high-dimensional θ or complex models, it is intractable.

Table 1: Comparison of Integration Methods for Posterior Computation

| Method | Analytical Solution | Numerical Quadrature | MCMC Sampling |
|---|---|---|---|
| Feasibility | Only for simple conjugate priors | Limited to low dimensions (≤~5) | Effective in high dimensions |
| Output | Exact closed form | Discrete approximation | Representative sample set |
| Primary Challenge | Model restriction | "Curse of Dimensionality" | Convergence diagnostics |
| Key MCMC Advantage | N/A | N/A | Avoids computing P(D) directly; explores high-dimensional space efficiently |

MCMC Protocol: Solving the Integral via Sampling

The following protocol outlines a standard Metropolis-Hastings MCMC implementation for stochastic pharmacodynamic model fitting.

Protocol 1: Metropolis-Hastings MCMC for Parameter Estimation

Model & Prior Definition

- Define the stochastic model likelihood function, P(D|θ). Example: a stochastic differential equation (SDE) for tumor growth kinetics.

- Define prior distributions, P(θ), for all parameters (e.g., Gaussian for rate constants, Gamma for positive parameters).

Algorithm Initialization & Burn-in

- Set initial parameter values θ₀.

- Specify a proposal distribution Q(θ*|θ) (e.g., multivariate Gaussian).

- Run an initial "burn-in" phase (e.g., 5,000-50,000 iterations, tunable). Discard these samples to minimize dependence on starting point.

Iterative Sampling Loop

- Proposal: At iteration t, generate a candidate parameter set θ* from Q(θ*|θₜ).

- Acceptance Ratio: Compute α = min[1, ( P(D|θ*) P(θ*) Q(θₜ|θ*) ) / ( P(D|θₜ) P(θₜ) Q(θ*|θₜ) )]. For a symmetric proposal (e.g., a Gaussian centered on θₜ) the Q terms cancel. Crucially, P(D) cancels out.

- Accept/Reject: Draw u ~ Uniform(0,1). If u ≤ α, accept candidate (θₜ₊₁ = θ*). Else, reject candidate (θₜ₊₁ = θₜ).

Convergence Diagnostics & Analysis

- Run multiple chains from dispersed starting points.

- Calculate the Gelman-Rubin statistic (R̂). R̂ < 1.05 for all parameters indicates convergence.

- After discarding burn-in, use the remaining correlated samples (thinning optional) for posterior analysis: means, credible intervals, and predictive simulations.
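
The Gelman-Rubin check in the diagnostics step compares within-chain and between-chain variance. Below is a minimal Python sketch of the classic (non-split) statistic for one scalar parameter, applied to synthetic chains. Packages such as ArviZ and CODA provide more refined variants, so this is for intuition rather than production use.

```python
import math
import random
import statistics

def gelman_rubin(chains):
    """Classic potential scale reduction factor for one scalar parameter.

    chains: list of equal-length lists of post-burn-in draws, one per chain.
    """
    n = len(chains[0])
    chain_means = [statistics.fmean(chain) for chain in chains]
    within = statistics.fmean([statistics.variance(chain) for chain in chains])  # W
    between = n * statistics.variance(chain_means)                               # B
    pooled = (n - 1) / n * within + between / n  # pooled posterior variance estimate
    return math.sqrt(pooled / within)

rng = random.Random(0)
# Four chains sampling the same distribution: R-hat should be close to 1
mixed = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]
# Two chains stuck in a different region: R-hat should be clearly above 1.05
stuck = [[rng.gauss(shift, 1.0) for _ in range(1000)] for shift in (0.0, 0.0, 2.0, 2.0)]

rhat_mixed = gelman_rubin(mixed)
rhat_stuck = gelman_rubin(stuck)
print(f"R-hat (well mixed): {rhat_mixed:.3f}, R-hat (stuck chains): {rhat_stuck:.3f}")
```

The statistic only detects disagreement between chains, which is why the protocol asks for dispersed starting points: chains that all start in the same place can agree with each other while missing part of the posterior.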

Visualization of the MCMC Solution Pathway

Title: MCMC Solves the Intractable Integral

Title: Metropolis-Hastings Algorithm Flow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools for MCMC in Stochastic Model Fitting

| Item/Category | Function in MCMC Research | Example/Note |
|---|---|---|
| Probabilistic Programming Language (PPL) | Provides high-level abstractions for model specification and automated backend sampling. | Stan (NUTS sampler), PyMC, Turing.jl. Essential for productivity. |
| High-Performance Computing (HPC) Environment | Enables running multiple long chains simultaneously for complex models. | Cloud compute clusters, SLURM-managed servers. Critical for scalability. |
| Differential Equation Solvers | Computes the likelihood P(D\|θ) for stochastic or ordinary differential equation models. | SciPy ODE solvers, DifferentialEquations.jl (for SDEs). Core to the forward model. |
| Convergence Diagnostic Software | Implements statistical tests to verify MCMC chain convergence and sampling quality. | ArviZ (rhat, ess), CODA R package. Non-negotiable for rigor. |
| Visualization & Analysis Library | Generates trace plots, posterior distributions, and predictive checks from output samples. | ArviZ, Matplotlib, ggplot2. Key for interpretation and communication. |

Application Notes & Protocols

Within the context of a thesis on Markov Chain Monte Carlo (MCMC) for stochastic model fitting in pharmacological research, the foundational concepts of stationarity, ergodicity, and convergence are critical. These concepts ensure that MCMC algorithms, such as those used to fit complex pharmacokinetic/pharmacodynamic (PK/PD) models, produce reliable and interpretable samples from the true posterior distribution of parameters.

1. Conceptual Framework & Quantitative Comparisons

Table 1: Core MCMC Properties and Their Implications for Stochastic Model Fitting

| Concept | Mathematical Definition | Practical Implication for PK/PD | Diagnostic Tool |
|---|---|---|---|
| Stationarity | A distribution π is stationary for the transition kernel P if π = πP (π is invariant). | Once reached, samples reflect the target posterior distribution of model parameters (e.g., EC₅₀, clearance). | Geweke test, Heidelberger-Welch stationarity test. |
| Ergodicity | Time averages converge to ensemble averages (space averages). | Justifies using a single, long chain for inference: posterior means & credible intervals are consistent. | Assessment of total variation distance convergence. |
| Geometric Ergodicity | Convergence rate is geometric in total variation: ‖Pⁿ(x, ·) - π(·)‖ ≤ M(x)ρⁿ, with ρ < 1. | Guarantees faster, more reliable convergence for well-behaved models, enabling confidence in complex hierarchies. | Drift and minorization conditions analysis. |
| Convergence to Posterior | The chain’s distribution becomes indistinguishable from the true Bayesian posterior. | Final parameter estimates and uncertainty quantifications are valid for scientific decision-making. | Gelman-Rubin statistic (R̂), effective sample size (ESS), trace plots. |

Table 2: Typical Diagnostic Thresholds in Pharmacometric MCMC

| Diagnostic | Target Value | Marginal Value | Action Required |
|---|---|---|---|
| Gelman-Rubin R̂ | < 1.05 | 1.05 - 1.10 | Increase chain length, re-parameterize model. |
| Effective Sample Size (ESS) | > 400 per param | 200 - 400 | May suffice for posterior mean, insufficient for tails. |
| ESS/sec | As high as possible | N/A | Metric of sampler efficiency; consider algorithm change. |
| Autocorrelation (lag k) | Rapid decay to ~0 | High, slow decay | Thinning, use of HMC/NUTS instead of basic Gibbs/MH. |
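
The link between autocorrelation and ESS in the table can be made concrete with a small estimator. The Python sketch below uses ESS = N / (1 + 2 Σ ρ_k) and truncates the sum at the first non-positive autocorrelation. That truncation rule is a simplifying assumption, and library implementations use more careful rules. The chains are synthetic: independent draws versus an AR(1) series with coefficient 0.9.

```python
import random
import statistics

def autocorrelation(draws, lag):
    """Sample autocorrelation of a chain at a given lag."""
    n = len(draws)
    mean = statistics.fmean(draws)
    var = sum((x - mean) ** 2 for x in draws) / n
    cov = sum((draws[i] - mean) * (draws[i + lag] - mean) for i in range(n - lag)) / n
    return cov / var

def effective_sample_size(draws, max_lag=200):
    """ESS = N / (1 + 2 * sum of positive-lag autocorrelations).

    The sum stops at the first non-positive autocorrelation (simple truncation).
    """
    rho_sum = 0.0
    for lag in range(1, min(max_lag, len(draws) - 1) + 1):
        rho = autocorrelation(draws, lag)
        if rho <= 0.0:
            break
        rho_sum += rho
    return len(draws) / (1.0 + 2.0 * rho_sum)

rng = random.Random(7)
n_draws = 4000
iid = [rng.gauss(0.0, 1.0) for _ in range(n_draws)]

# Strongly autocorrelated chain, mimicking a poorly mixing sampler
ar1 = [0.0]
for _ in range(n_draws - 1):
    ar1.append(0.9 * ar1[-1] + rng.gauss(0.0, 1.0))

ess_iid = effective_sample_size(iid)
ess_ar1 = effective_sample_size(ar1)
print(f"ESS (independent draws): {ess_iid:.0f}, ESS (AR(1), 0.9): {ess_ar1:.0f}")
```

Both chains have the same number of draws, yet the autocorrelated one carries only a small fraction of the information, which is the situation the ESS thresholds are meant to flag.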

2. Experimental Protocol: Validating MCMC Convergence for a Phase II Dose-Response Model

Protocol Title: Multichain MCMC Workflow for Posterior Convergence Assessment in a Bayesian Emax Model

Objective: To robustly fit a Bayesian sigmoidal Emax model to clinical dose-response data and verify that the sampling algorithm has converged to the true posterior distribution.

Materials & Reagent Solutions (The Scientist's Toolkit):

- Statistical Software: Stan (or PyMC3/Nimble) for Hamiltonian Monte Carlo (HMC) sampling.

- Computing Environment: Multi-core workstation or high-performance computing cluster.

- Data: Cleaned Phase II clinical trial data (Dose, Response, Patient Covariates).

- Initialization Scripts: Code to generate dispersed starting points for multiple chains.

- Diagnostic Suite: CODA (R package) or ArviZ (Python library) for convergence diagnostics.

Procedure:

- Model Specification: Code the hierarchical Bayesian Emax model:

- Likelihood: Responseᵢ ~ Normal(E₀ + (Emax * Doseᵢʰ) / (ED₅₀ʰ + Doseᵢʰ), σ²).

- Priors: E₀, Emax ~ Normal(appropriate prior); ED₅₀ ~ LogNormal(...); h ~ Gamma(...); σ ~ HalfCauchy(0,5).

- Chain Initialization: Generate 4 independent MCMC chains. For each parameter, draw starting values from a distribution dispersed widely relative to the expected posterior (e.g., ±3 prior SDs).

- Sampling Execution: Run each chain for a total of 10,000 iterations, with the first 5,000 iterations discarded as warm-up (burn-in). Use a modern sampler (e.g., NUTS in Stan).

- Diagnostic Computation: a. Calculate the Gelman-Rubin potential scale reduction factor (R̂) for every model parameter. b. Calculate the effective sample size (ESS) for all parameters and key derived quantities (e.g., AUC of the curve). c. Generate trace plots (all chains) and autocorrelation plots for primary parameters.

- Convergence Criteria Check:

- Primary: All parameters have R̂ < 1.05.

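- Secondary: All parameters have ESS > 400. Tail quantiles (e.g., 95% CrI bounds) are estimated less precisely than the mean, so check ESS for the tails separately rather than relaxing the threshold.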

- Visual: Trace plots show all chains well-mixed and stationary; autocorrelation drops to near zero rapidly.

- Posterior Analysis: Upon passing diagnostics, pool post-warm-up samples from all chains to summarize the posterior distribution (medians, 95% credible intervals) for model parameters and perform predictive checks.
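
Two pieces of this procedure are easy to prototype before writing the full model: the sigmoidal Emax mean function and the dispersed starting values for each chain. The Python sketch below is illustrative. The prior means and SDs are hypothetical, and handling positive parameters on the log scale (keys prefixed with `log_`) is an assumption made here so that draws within ±3 prior SDs remain valid.

```python
import math
import random

def sigmoid_emax(dose, e0, emax, ed50, hill):
    """Mean response E0 + Emax * dose^h / (ED50^h + dose^h)."""
    return e0 + emax * dose ** hill / (ed50 ** hill + dose ** hill)

def dispersed_inits(rng, n_chains, prior_mean, prior_sd, spread=3.0):
    """Draw starting values uniformly within +/- `spread` prior SDs.

    Keys starting with "log_" are drawn on the log scale and back-transformed,
    so positive parameters (ED50, Hill coefficient, sigma) stay positive.
    """
    inits = []
    for _ in range(n_chains):
        start = {}
        for name, mean in prior_mean.items():
            draw = rng.uniform(mean - spread * prior_sd[name], mean + spread * prior_sd[name])
            if name.startswith("log_"):
                start[name[4:]] = math.exp(draw)
            else:
                start[name] = draw
        inits.append(start)
    return inits

# Hypothetical prior summaries on the unconstrained scale
prior_mean = {"e0": 1.0, "emax": 10.0, "log_ed50": math.log(50.0),
              "log_hill": 0.0, "log_sigma": 0.0}
prior_sd = {"e0": 2.0, "emax": 5.0, "log_ed50": 1.0, "log_hill": 0.5, "log_sigma": 1.0}

rng = random.Random(3)
inits = dispersed_inits(rng, 4, prior_mean, prior_sd)
for chain_id, start in enumerate(inits, start=1):
    response_at_100 = sigmoid_emax(100.0, start["e0"], start["emax"], start["ed50"], start["hill"])
    print(chain_id, {k: round(v, 2) for k, v in start.items()}, round(response_at_100, 2))
```

Evaluating the mean function at each starting point is a cheap sanity check that every chain begins in a region where the likelihood is finite.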

3. Visualization of MCMC Concepts & Workflow

Title: The Path from Initialization to Valid Inference

Title: Multi-Chain Diagnostic Workflow for MCMC

Application Notes

PK/PD Stochastic Models

Modern pharmacokinetic/pharmacodynamic (PK/PD) models are inherently stochastic to account for inter-individual variability (IIV), inter-occasion variability (IOV), and residual unexplained variability (RUV). Within an MCMC thesis framework, these random effects are sampled from defined probability distributions (e.g., log-normal for IIV). The primary application is Bayesian population modeling, where prior distributions (from earlier studies or literature) are updated with new clinical trial data via MCMC sampling (e.g., Hamiltonian Monte Carlo in Stan or Gibbs sampling in NONMEM) to yield posterior parameter distributions. This is critical for optimizing dosing regimens, especially for novel modalities like bispecific antibodies or cell therapies, where drug-target binding kinetics are highly variable.

Stochastic Disease Progression Models

These models separate the natural history of a disease from drug effects. Stochasticity is introduced via random walks or Wiener processes to model the unpredictable evolution of biomarkers (e.g., HbA1c in diabetes, tumor size in oncology) over time. In an MCMC context, the variance of the stochastic process is a key parameter estimated from longitudinal placebo arm data. This allows for the simulation of counterfactual disease trajectories, providing a robust basis for quantifying a drug's true disease-modifying effect. This approach is paramount in chronic neurological (Alzheimer's) or degenerative diseases.

Quantitative Systems Pharmacology (QSP) Stochastic Models

QSP models are large, multi-scale systems of ordinary differential equations (ODEs) representing biological pathways. Stochasticity is crucial at low molecular or cellular counts (e.g., tumor initiating cells) and is implemented via stochastic differential equations (SDEs) or Gillespie algorithms. Fitting these complex models to data is a high-dimensional challenge. MCMC methods, particularly adaptive ones, are used to sample the vast parameter space and identify plausible parameter sets that describe heterogeneous patient populations. This enables in silico clinical trials to predict subpopulation responses and combination therapy synergy.

Table 1: Key Stochastic Parameters in Drug Development Models

| Model Type | Source of Stochasticity | Typical Distribution | MCMC Sampling Challenge | Clinical Application Example |
|---|---|---|---|---|
| PK/PD | Inter-individual Variability (IIV) | Log-Normal(ω²) | High correlation between PK parameters (CL, V) | Precision dosing of warfarin (CYP2C9 polymorphisms) |
| PK/PD | Residual Error | Proportional, Additive, or Mixed Normal(σ²) | Model misspecification detection | ICU antibiotic dosing (high unpredictable variability) |
| Disease Progression | Disease Path Evolution | Wiener Process (σ_dp²) | Separating drug effect from natural progression | Slowing of clinical dementia rating (CDR) in Alzheimer's |
| QSP | Cellular Response Variability | Poisson or Negative Binomial | High computational cost per simulation | Predicting resistant clone emergence in oncology |

Table 2: MCMC Algorithm Suitability for Stochastic Models

| Algorithm (Example) | Best For Model Type | Key Strength | Computational Cost | Software Implementation |
|---|---|---|---|---|
| Gibbs Sampling | Hierarchical PK/PD | Efficient for conditional conjugacy | Low to Moderate | BUGS, JAGS, NONMEM |
| Hamiltonian Monte Carlo (HMC/NUTS) | QSP, Complex PD | Efficient in high-dimensional spaces | High per-step, faster convergence | Stan, PyMC3 |
| Metropolis-in-Gibbs | Mixed-effects Disease Progression | Flexibility for non-standard distributions | Moderate | Custom in R/Python |

Experimental Protocols

Protocol 1: MCMC for Population PK/PD Model Fitting

Objective: Estimate posterior distributions of population parameters (typical values, IIV, RUV) for a two-compartment PK model with an Emax PD model.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Model Specification: Define the structural PK (CL, V1, Q, V2) and PD (Emax, EC50) model. Specify the statistical model: IIV on CL and Emax (log-normal), proportional residual error.

- Prior Elicitation: Assign informed priors. For example, use a normal prior for log(CL) from in vitro clearance prediction, and weakly informative half-Cauchy priors for variance parameters.

- MCMC Setup (Stan Example): Code the model in Stan language. Use 4 parallel chains. Set warm-up/adaptation to 2000 iterations and total iterations to 4000 per chain.

- Sampling & Diagnostics: Run MCMC. Check convergence with R̂ (≤1.05) and effective sample size (n_eff > 400). Visually inspect trace plots for stationarity.

- Posterior Analysis: Extract posterior distributions (median, 95% credible intervals) for all parameters. Perform posterior predictive checks (PPC) to validate model fit against observed data.

Protocol 2: Fitting a Stochastic Disease Progression Model

Objective: Estimate the rate of disease progression and its stochastic variance from placebo group longitudinal data.

Materials: Longitudinal biomarker data (e.g., monthly tumor volume), software for SDE estimation (e.g., msde R package, Turing.jl).

Procedure:

- Model Formulation: Define the SDE: dX(t) = (α - β*X(t)) dt + σ dW(t), where X is biomarker, α is progression rate, β is decay, σ is volatility, W is Wiener process.

- Likelihood Construction: Use Euler-Maruyama discretization to approximate the transition density between observations for the likelihood function.

- MCMC Implementation: Use HMC to sample from the joint posterior of (α, β, σ). Use non-centered parameterization to improve sampling efficiency for σ.

- Model Validation: Simulate 1000 biomarker trajectories from the posterior predictive distribution. Overlay the observed placebo data to ensure the model captures both trend and variability.
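
The Model Formulation and Likelihood Construction steps can be sketched together in Python: an Euler-Maruyama simulator for the SDE and the matching Gaussian transition log-likelihood. Parameter values and the step size are hypothetical, and the approximation assumes the interval between observations is short relative to the dynamics.

```python
import math
import random

def simulate_path(rng, x0, alpha, beta, sigma, dt, n_steps):
    """Euler-Maruyama simulation of dX = (alpha - beta * X) dt + sigma dW."""
    path = [x0]
    for _ in range(n_steps):
        x = path[-1]
        drift = (alpha - beta * x) * dt
        diffusion = sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        path.append(x + drift + diffusion)
    return path

def euler_loglik(path, alpha, beta, sigma, dt):
    """Log-likelihood from the Euler-Maruyama transition density.

    X[k+1] | X[k] ~ Normal(X[k] + (alpha - beta * X[k]) * dt, sigma^2 * dt)
    """
    variance = sigma ** 2 * dt
    total = 0.0
    for x_now, x_next in zip(path[:-1], path[1:]):
        mean = x_now + (alpha - beta * x_now) * dt
        total += -0.5 * math.log(2.0 * math.pi * variance) - 0.5 * (x_next - mean) ** 2 / variance
    return total

rng = random.Random(11)
true_params = {"alpha": 2.0, "beta": 0.5, "sigma": 0.3}  # hypothetical values
dt = 0.1
path = simulate_path(rng, x0=1.0, dt=dt, n_steps=500, **true_params)

loglik_true = euler_loglik(path, dt=dt, **true_params)
loglik_wrong_sigma = euler_loglik(path, alpha=2.0, beta=0.5, sigma=3.0, dt=dt)
loglik_wrong_alpha = euler_loglik(path, alpha=5.0, beta=0.5, sigma=0.3, dt=dt)
print(loglik_true, loglik_wrong_sigma, loglik_wrong_alpha)
```

A function like `euler_loglik`, added to the log-prior, gives the log-posterior that an MCMC sampler would explore for (α, β, σ).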

Protocol 3: Calibrating a Stochastic QSP Model

Objective: Identify plausible parameter sets for a large QSP model of IL-6 signaling.

Materials: Prior knowledge ranges for rate constants, in vitro time-course data for pSTAT3, in vivo cytokine levels.

Procedure:

- Prior Distribution Specification: Define uniform or log-uniform priors for all unknown kinetic parameters over biologically plausible ranges (e.g., 1e-3 to 1e3).

- Approximate Bayesian Computation (ABC) - MCMC: Given the high cost of simulating the full stochastic model, use ABC-MCMC.

  - Propose a new parameter vector θ* from the current value θ.

  - Simulate the model once at θ* to generate summary statistics S*.

  - Accept θ* if it lies within the prior support and the distance d(S*, S_observed) < ε (tolerance); otherwise keep θ.

  - Use an adaptive tolerance scheme.

- Analysis of Posterior: The output is an ensemble of calibrated parameter sets. Analyze correlations between parameters to identify key system sensitivities.
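
The ABC-MCMC loop can be prototyped on a toy problem before committing compute to the full QSP model. In this Python sketch the simulator is a hypothetical stand-in (the mean of noisy Gaussian outputs), the prior is uniform, the proposal is a symmetric Gaussian random walk, and the tolerance ε is held fixed rather than adapted. With a uniform prior and symmetric proposal, acceptance reduces to the prior-support and distance checks.

```python
import random
import statistics

def simulate_summary(rng, theta, n_obs=50):
    """Hypothetical stochastic simulator returning one summary statistic."""
    return statistics.fmean([rng.gauss(theta, 1.0) for _ in range(n_obs)])

def abc_mcmc(rng, s_observed, n_iter, epsilon, step_sd, prior_low, prior_high, theta0):
    """ABC-MCMC with a uniform prior and a symmetric random-walk proposal."""
    theta = theta0
    samples = []
    for _ in range(n_iter):
        theta_star = theta + rng.gauss(0.0, step_sd)     # propose theta*
        if prior_low <= theta_star <= prior_high:        # inside prior support
            s_star = simulate_summary(rng, theta_star)   # one simulation per proposal
            if abs(s_star - s_observed) < epsilon:       # distance within tolerance
                theta = theta_star
        samples.append(theta)                            # rejected proposals repeat theta
    return samples

rng = random.Random(5)
s_observed = 3.0  # hypothetical observed summary statistic
samples = abc_mcmc(rng, s_observed, n_iter=3000, epsilon=0.2, step_sd=0.5,
                   prior_low=0.0, prior_high=10.0,
                   theta0=s_observed)  # start inside the acceptance region
posterior_draws = samples[500:]        # discard burn-in
abc_mean = statistics.fmean(posterior_draws)
print(f"ABC posterior mean: {abc_mean:.2f}")
```

Starting the chain far from the acceptance region can leave it stuck for a long time, which is one practical reason for beginning with a loose tolerance and tightening it.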

Visualization

Title: MCMC Workflow for PK/PD Model Fitting

Title: Stochastic Scales in QSP Modeling

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Stochastic Modeling

| Item | Function in Stochastic Model Fitting | Example Product/Software |
|---|---|---|
| Bayesian Inference Engine | Core software for performing MCMC sampling. | Stan, PyMC3, Turing.jl, NONMEM |
| High-Performance Computing (HPC) Access | Parallelizes chains and manages intensive QSP/SDE simulations. | Cloud clusters (AWS, GCP), Slurm-managed servers |
| Differential Equation Solver | Solves ODEs/SDEs within the model likelihood. | Sundials (CVODES), BioSimulator.jl, Gillespie2 |
| Data Wrangling & Visualization Library | Prepares data and plots posteriors, traces, PPCs. | R tidyverse/ggplot2, Python pandas/ArviZ |
| Diagnostic Dashboard | Assesses MCMC convergence and sampling quality. | RStan, CODA R package, PyMC3 diagnostics |
| Prior Knowledge Database | Informs prior distribution selection for parameters. | PubChem, IUPHAR/BPS Guide, previous meta-analyses |
| Sensitivity Analysis Tool | Identifies most influential stochastic parameters. | Sobol indices, Morris method (SALib Python lib) |

Implementing MCMC Samplers: A Step-by-Step Workflow for Pharmacometric Analysis

Markov Chain Monte Carlo (MCMC) methods are the cornerstone of Bayesian inference for stochastic model fitting in scientific research. This universal blueprint provides a foundational pseudo-code framework, enabling researchers—particularly in drug development and systems biology—to adapt MCMC for fitting complex, stochastic models to observational data. It serves as a core chapter in a thesis dedicated to advancing quantitative methodologies for uncertainty quantification in dynamical systems.

Core Algorithm Pseudo-Code

The following universal pseudo-code outlines a Metropolis-Hastings MCMC algorithm, which forms the basis for most advanced variants.
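The listing itself is not reproduced in this version of the text. As a stand-in, here is a minimal random-walk Metropolis-Hastings sketch in Python. It assumes a symmetric Gaussian proposal with covariance `proposal_cov` and a user-supplied `log_post` function; the correlated bivariate normal target is purely illustrative.

```python
import numpy as np

def metropolis_hastings(log_post, theta0, proposal_cov, n_iter, rng):
    """Random-walk Metropolis-Hastings with a Gaussian proposal."""
    theta = np.asarray(theta0, dtype=float)
    lp = log_post(theta)
    chol = np.linalg.cholesky(np.atleast_2d(proposal_cov))
    chain = np.empty((n_iter, theta.size))
    n_acc = 0
    for i in range(n_iter):
        prop = theta + chol @ rng.normal(size=theta.size)
        lp_prop = log_post(prop)
        # Symmetric proposal: the acceptance ratio is the posterior ratio.
        if np.log(rng.uniform()) < lp_prop - lp:
            theta, lp = prop, lp_prop
            n_acc += 1
        chain[i] = theta
    return chain, n_acc / n_iter

# Illustrative target: bivariate normal with correlation 0.8.
cov = np.array([[1.0, 0.8], [0.8, 1.0]])
prec = np.linalg.inv(cov)

def log_post(theta):
    return -0.5 * theta @ prec @ theta

rng = np.random.default_rng(42)
proposal_cov = (2.38**2 / 2) * cov   # common scaling heuristic for d = 2
chain, acc_rate = metropolis_hastings(log_post, [0.0, 0.0], proposal_cov, 20000, rng)
samples = chain[5000:]               # discard burn-in
print(f"acceptance rate: {acc_rate:.2f}")
```

Working with log densities avoids numerical underflow, and scaling the proposal by the target covariance is what adaptive schemes try to learn automatically.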

Application Notes for Stochastic Model Fitting

Key Considerations

- Likelihood for Stochastic Models: For stochastic differential equation (SDE) or agent-based models, the likelihood is often intractable. Use pseudo-marginal methods (e.g., Particle MCMC) or approximate Bayesian computation (ABC-MCMC).

- Proposal Tuning: The proposal covariance (proposal_cov) significantly impacts efficiency. Adaptive MCMC schemes (e.g., Haario et al.) tune this covariance during burn-in.

- Convergence Diagnostics: Always assess chains using metrics like Gelman-Rubin R̂ (for multiple chains), effective sample size (ESS), and trace plot inspection.

- Prior Elicitation: Informative priors from domain knowledge (e.g., pharmacokinetic rate constants) are crucial for regularization in high-dimensional spaces common in systems pharmacology.

Workflow Diagram

Title: MCMC Workflow for Stochastic Model Fitting

Quantitative Performance Data

Table 1: Comparison of MCMC Algorithm Performance on a Stochastic Pharmacokinetic Model*

| Algorithm Variant | Effective Sample Size (ESS) / hr | Acceptance Rate (%) | Time to Convergence (hr) | Relative Efficiency (ESS/Time) |

|---|---|---|---|---|

| Standard MH | 450 | 23 | 3.2 | 1.0 (baseline) |

| Adaptive MH | 1250 | 28 | 1.8 | 2.8 |

| Hamiltonian MC | 3200 | 65 | 0.9 | 6.7 |

| Particle Gibbs | 980 | 100* | 4.5 | 0.7 |

*Simulated data for a two-compartment PK model with proportional noise. Hardware: 8-core CPU @ 3.0GHz.

Experimental Protocol: MCMC for Dose-Response Fitting

This protocol details fitting a stochastic cell signaling model to dose-response data, common in drug efficacy studies.

Protocol 5.1: MCMC-Enabled Stochastic Dose-Response Curve Inference

Objective: To estimate posterior distributions for EC₅₀ and Hill coefficient (θ = [log(EC₅₀), n_H]) from noisy, heterogeneous cell-based assay data using a stochastic activation model.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Preparation:

- Load dose-response data (doses d_i, response measurements y_ij).

- Normalize responses to control (0-100%).

- Calculate summary statistics (mean, variance per dose).

Stochastic Model Definition:

- Define the likelihood. Example: y_ij ~ N( f(d_i; θ), σ² ), where f is the Hill function.

- For overdispersed count readouts (e.g., responding cells per well, modeled before normalization), use: y_ij ~ NegativeBinomial( mean=f(d_i; θ), dispersion=φ ).

- Set priors: log(EC₅₀) ~ N(log(median dose), 2), n_H ~ HalfNormal(3), φ ~ Gamma(1,0.5).

MCMC Setup:

- Initialize θ₀ from prior.

- Set proposal covariance using a preliminary variational Bayes estimate or diagonal matrix.

- Configure chain: niterations=50,000, burnin=10,000, thin=5 → 8,000 stored samples.

Execution & Monitoring:

- Run 4 parallel chains from dispersed initial points.

- Monitor log-posterior trace for stationarity.

- Every 1000 iterations, calculate Gelman-Rubin R̂ for all θ. Continue until R̂ < 1.05 for all parameters.

Post-Processing & Analysis:

- Discard burn-in, combine chains (if converged).

- Compute posterior medians and 95% credible intervals for θ.

- Generate posterior predictive checks: simulate data from 500 sampled θ to overlay on experimental data.
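The model definition and chain configuration in this protocol can be sketched as a log-posterior function that any of the samplers discussed here could target. This is an illustration under assumptions: the Gaussian likelihood variant is used, σ is treated as known, the "2" in the EC₅₀ prior is read as a standard deviation, prior normalizing constants are dropped, and all data are simulated.

```python
import numpy as np

def hill(dose, log_ec50, n_h, top=100.0):
    """Hill function on a 0-100% normalized response scale."""
    ec50 = np.exp(log_ec50)
    return top * dose**n_h / (ec50**n_h + dose**n_h)

def log_posterior(theta, dose, y, sigma, log_median_dose):
    """Gaussian likelihood plus the protocol's priors (up to constants)."""
    log_ec50, n_h = theta
    if n_h <= 0:
        return -np.inf
    resid = y - hill(dose, log_ec50, n_h)
    log_lik = np.sum(-0.5 * np.log(2 * np.pi * sigma**2) - 0.5 * (resid / sigma) ** 2)
    lp_ec50 = -0.5 * ((log_ec50 - log_median_dose) / 2.0) ** 2   # N(log(median dose), 2)
    lp_nh = -0.5 * (n_h / 3.0) ** 2                              # HalfNormal(3)
    return float(log_lik + lp_ec50 + lp_nh)

# Simulated assay: 5 doses, 3 replicates each (assumed values).
rng = np.random.default_rng(2)
dose = np.repeat([0.01, 0.1, 1.0, 10.0, 100.0], 3)
y = hill(dose, 0.0, 1.5) + rng.normal(0.0, 5.0, size=dose.size)
log_median_dose = np.log(np.median(dose))

lp_true = log_posterior((0.0, 1.5), dose, y, 5.0, log_median_dose)
lp_wrong = log_posterior((3.0, 1.5), dose, y, 5.0, log_median_dose)

# Stored samples per chain for the configuration in the protocol.
n_iterations, burn_in, thin = 50_000, 10_000, 5
n_stored = (n_iterations - burn_in) // thin
print(n_stored)
```

With four chains run in parallel, the pooled sample is four times the per-chain count stored after burn-in and thinning.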

Title: MCMC Dose-Response Analysis Workflow

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Computational Tools

| Item Name / Software | Function in MCMC Protocol | Example/Supplier |

|---|---|---|

| Stan/PyMC3/NumPyro | Probabilistic programming language for automated MCMC, HMC, and variational inference. | Stan Development Team |

| JAX | Library for accelerated numerical computing (GPU/TPU) and automatic differentiation; backbone of NumPyro. | Google Research |

| RStan/rstanarm | R interfaces to Stan for statistical modeling. | CRAN Repository |

| Live Cell Imaging Dye | Generates single-cell time-series data for stochastic model fitting. | Invitrogen CellTracker |

| qPCR Reagents | Provides noisy, quantitative gene expression data for hierarchical stochastic models. | SYBR Green |

| High-Content Screening System | Acquires dose-response data with cell-to-cell variability. | PerkinElmer Operetta |

| Cluster/Cloud Computing | Enables running multiple long MCMC chains in parallel for diagnostics. | AWS Batch, Slurm |

This document provides detailed Application Notes and Protocols for three foundational Markov Chain Monte Carlo (MCMC) samplers, framed within a broader thesis on MCMC for stochastic model fitting in pharmacokinetic/pharmacodynamic (PK/PD) and systems biology research. Efficient and accurate sampling is critical for quantifying uncertainty in complex, non-linear models endemic to drug development.

Sampler Core Principles & Comparative Analysis

A quantitative comparison of the three samplers is summarized below.

Table 1: Core Sampler Characteristics and Performance Metrics

| Feature | Metropolis-Hastings (MH) | Gibbs Sampler | No-U-Turn Sampler (NUTS) |

|---|---|---|---|

| Proposal Mechanism | User-defined symmetric/asymmetric distribution. | Directly samples from full conditional distributions. | Hamiltonian dynamics on a Euclidean manifold; trajectory adaptively determined. |

| Acceptance Rate (Typical Target) | 20-40% for random walk. | 100% (always accept conditional draw). | ~65-80% (set by the target acceptance rate δ used in step size adaptation). |

| Key Parameters | Proposal distribution scale (e.g., covariance). | Order of parameter updates. | Step size (ϵ), Target acceptance rate (δ), Max tree depth. |

| Adaptation | Manual tuning or Robbins-Monro. | Not typically applied. | Dual-averaging for ϵ, and potential mass matrix adaptation. |

| Computational Cost per Iteration | Low (1 density eval). | Medium (one conditional draw per parameter or block). | High (one gradient eval per "leapfrog" step, many leapfrog steps per iteration). |

| Efficiency in High Dimensions | Poor with isotropic proposal; better with informed covariance. | Good if conditionals are conjugate/ easy to sample. | Very Good; utilizes geometry of target distribution. |

| Requires Gradients? | No. | No. | Yes (of log-posterior). |

| Primary Use Case | Generic Bayesian inference, low dimensions, conceptual foundation. | Models with convenient conditional structures (e.g., hierarchical, latent variable). | Complex, high-dimensional models with differentiable log-posterior (e.g., PK/PD, systems biology). |

Workflow Diagram

Title: MCMC Sampler Selection and Analysis Workflow

Experimental Protocols

Protocol: Benchmarking Samplers on a PK/PD Model

Objective: Compare the efficiency of MH, Gibbs, and NUTS in fitting a two-compartment PK model with an Emax PD response.

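Model: log(y_ij) ~ N(log(f(t_ij; θ_i)), σ), where f is the structural PK/PD prediction and individual parameters follow θ_i = θ_pop * exp(η_i) with η_i ~ N(0, ω) (e.g., CL_i = CL * exp(η_i)). Priors are placed on CL, V, ka, Emax, EC50, σ, and ω (IIV).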

Materials: See The Scientist's Toolkit (Section 5).

Procedure:

- Synthetic Data Generation:

- Fix "true" parameter vector θ* = [CL=5, V=25, ka=1.2, Emax=100, EC50=2].

- Simulate PK profiles for N=50 subjects using differential equation solver.

- Add proportional log-normal noise (σ=0.1) and inter-individual variability (ω=0.3).

Sampler Configuration:

- MH: Initialize proposal covariance matrix Σ as diagonal (0.1 * θ*). Run 4 chains of 10,000 iterations, discarding first 5,000 as warm-up. Manually adjust Σ scale to target ~25% acceptance rate.

- Gibbs: Employ a blocked Gibbs approach. Update PK parameters (CL, V, ka) jointly using an MH step within Gibbs (as conditionals are non-standard). Use conjugate draws for Emax and EC50 only where the full conditional is available in closed form; EC50 enters the Emax model nonlinearly, so it generally also requires an MH step. Update individual ηs from their full conditional (normal when η enters the log-scale prediction linearly, otherwise via MH). Use same chain length/warm-up as MH.

- NUTS: Use automatic differentiation to compute gradients of the joint log-posterior. Set target acceptance rate δ=0.8. Run 4 chains of 2,000 iterations with 1,000 warm-up iterations, during which step size ϵ is tuned by dual averaging and the mass matrix is estimated from warm-up draws.

Metrics Collection:

- Record Effective Sample Size (ESS) per second for each key parameter.

- Calculate potential scale reduction factor (R̂) to assess convergence.

- Monitor trace plots and autocorrelation for each sampler.

Table 2: Expected Benchmark Results (Illustrative)

| Sampler | ESS/sec (for CL) | Mean R̂ | Total Runtime | Avg. Acceptance Rate |

|---|---|---|---|---|

| Metropolis-Hastings | 15 | 1.05 | 5 min | 24% |

| Gibbs | 45 | 1.02 | 3 min | 100% (for conjugate) |

| NUTS | 220 | 1.01 | 2 min | 78% |

Protocol: Implementing a Hierarchical Gibbs Sampler for a Clinical Dose-Response

Objective: Fit a Bayesian logistic regression model to Phase II dose-response data with hierarchical partial pooling across trial sites.

Model: logit(p_response) = α_site + β * dose, with α_site ~ N(μ_α, τ_α), where τ_α is the between-site variance, and hyperpriors on μ_α and τ_α.

Procedure:

- Data Preparation: Organize data as (site_id, dose_level, n_patients, n_responders).

- Initialization: Set starting values for α (vector), β, μ_α, τ_α.

- Gibbs Sampling Loop (for 10,000 iterations):

a. Sample each α_site: From its full conditional distribution using a Metropolis-within-Gibbs step (non-conjugate). Proposal: α_site' ~ N(α_site_current, 0.5).

b. Sample β: From its full conditional using an MH step (optionally with a Laplace-approximation proposal), since the logistic likelihood has no conjugate prior.

c. Sample μ_α: From its Normal full conditional, assuming the prior μ_α ~ N(0, σ_μ²): μ_α | ... ~ N( (∑α_site/τ_α) / (N_sites/τ_α + 1/σ_μ²), 1/(N_sites/τ_α + 1/σ_μ²) ).

d. Sample τ_α: From its Inverse-Gamma full conditional, assuming the prior τ_α ~ Inverse-Gamma(a, b): τ_α | ... ~ Inverse-Gamma( a + N_sites/2, b + ∑(α_site - μ_α)²/2 ).

- Posterior Analysis: Summarize posterior distributions of β (dose effect), τ_α (between-site variability), and site-specific α_site.
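The two conjugate hyperparameter updates can be sketched as below. The sketch assumes τ_α denotes the between-site variance with an Inverse-Gamma(a, b) prior and μ_α has a N(0, σ_μ²) prior; the site effects are held fixed at simulated values purely to exercise the updates, whereas in the full sampler they would be refreshed by the Metropolis-within-Gibbs step each iteration. Function names are hypothetical.

```python
import numpy as np

def sample_mu_alpha(alpha, tau_alpha, sigma_mu2, rng):
    """Draw mu_alpha | alpha, tau_alpha with prior mu_alpha ~ N(0, sigma_mu2)."""
    n_sites = alpha.size
    post_prec = n_sites / tau_alpha + 1.0 / sigma_mu2
    post_mean = (alpha.sum() / tau_alpha) / post_prec
    return rng.normal(post_mean, np.sqrt(1.0 / post_prec))

def sample_tau_alpha(alpha, mu_alpha, a, b, rng):
    """Draw the variance tau_alpha | alpha, mu_alpha with prior Inverse-Gamma(a, b)."""
    shape = a + alpha.size / 2.0
    scale = b + 0.5 * np.sum((alpha - mu_alpha) ** 2)
    # If G ~ Gamma(shape, rate=scale), then 1/G ~ Inverse-Gamma(shape, scale).
    return 1.0 / rng.gamma(shape, 1.0 / scale)

# Illustration only: 40 simulated site effects held fixed.
rng = np.random.default_rng(5)
alpha = rng.normal(-1.0, 0.5, size=40)
mu, tau = 0.0, 1.0
mu_draws, tau_draws = [], []
for _ in range(2000):
    mu = sample_mu_alpha(alpha, tau, sigma_mu2=100.0, rng=rng)
    tau = sample_tau_alpha(alpha, mu, a=2.0, b=0.5, rng=rng)
    mu_draws.append(mu)
    tau_draws.append(tau)

mu_hat = np.mean(mu_draws[200:])
tau_hat = np.mean(tau_draws[200:])
print(f"mu_alpha ~ {mu_hat:.2f}, tau_alpha ~ {tau_hat:.2f}")
```

Note that NumPy's gamma sampler takes a scale argument, so the rate is passed as its reciprocal.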

Protocol: Optimizing NUTS for a Systems Pharmacology ODE Model

Objective: Efficiently sample from the posterior of a large ODE-based systems biology model with ~50 parameters.

Procedure:

- Pre-conditioning (Mass Matrix):

- Run an initial short adaptation phase (e.g., 500 iterations) with a diagonal mass matrix.

- Optionally, use the estimated parameter covariance from this run to set a dense mass matrix for the main run, enabling better handling of parameter correlations.

- Step Size Adaptation:

- Use the dual-averaging algorithm during warm-up to automatically tune ϵ to achieve the target acceptance rate (δ=0.8 is a robust default).

- Tree Depth Control:

- Set the maximum tree depth (e.g., 10-12) to prevent excessively long, wasteful trajectories.

- Validation: Check the energy Bayesian fraction of missing information (E-BFMI) diagnostic to ensure Hamiltonian dynamics are efficient.
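To make the roles of step size and mass matrix concrete, the sketch below implements the leapfrog integrator and a single fixed-length HMC transition. It is plain HMC rather than NUTS (no tree building or dual averaging), uses a diagonal inverse mass matrix `inv_mass`, and targets a badly scaled two-parameter Gaussian chosen only for illustration.

```python
import numpy as np

def leapfrog(theta, r, grad_log_post, eps, n_steps, inv_mass):
    """Leapfrog integration of Hamiltonian dynamics with a diagonal mass matrix."""
    theta, r = theta.copy(), r.copy()
    r += 0.5 * eps * grad_log_post(theta)        # initial half step for momentum
    for _ in range(n_steps - 1):
        theta += eps * inv_mass * r              # full step for position
        r += eps * grad_log_post(theta)          # full step for momentum
    theta += eps * inv_mass * r
    r += 0.5 * eps * grad_log_post(theta)        # final half step for momentum
    return theta, r

def hmc_step(theta, log_post, grad_log_post, eps, n_steps, inv_mass, rng):
    """One HMC transition with a Metropolis correction on the energy error."""
    r0 = rng.normal(size=theta.size) / np.sqrt(inv_mass)   # r ~ N(0, M)
    theta_new, r_new = leapfrog(theta, r0, grad_log_post, eps, n_steps, inv_mass)
    h0 = -log_post(theta) + 0.5 * np.sum(inv_mass * r0**2)
    h1 = -log_post(theta_new) + 0.5 * np.sum(inv_mass * r_new**2)
    accept = np.log(rng.uniform()) < h0 - h1
    return (theta_new if accept else theta), bool(accept)

sd = np.array([1.0, 10.0])            # illustrative posterior scales

def log_post(theta):
    return -0.5 * np.sum((theta / sd) ** 2)

def grad_log_post(theta):
    return -theta / sd**2

inv_mass = sd**2                      # preconditioning with posterior variances
rng = np.random.default_rng(11)
theta = np.zeros(2)
draws, n_acc = [], 0
for _ in range(2000):
    theta, accepted = hmc_step(theta, log_post, grad_log_post, 0.5, 10, inv_mass, rng)
    draws.append(theta)
    n_acc += accepted
draws = np.array(draws)
acc_rate = n_acc / 2000
print(f"acceptance rate: {acc_rate:.2f}")
```

Setting the inverse mass matrix to the posterior variances makes both directions look unit-scaled to the integrator, which is why a single step size works for parameters that differ tenfold in scale.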

Title: NUTS Algorithm Recursive Tree Building Logic

Convergence Diagnostics & Validation Protocol

A mandatory step in any MCMC analysis for stochastic model fitting.

Procedure:

- Run Multiple Chains: Initialize 4 chains from dispersed starting points in parameter space.

- Compute R̂ (Gelman-Rubin Statistic): Calculate for all parameters post-warm-up. Protocol: R̂ < 1.05 indicates convergence.

- Compute Effective Sample Size (ESS): Protocol: Use batch-means or autocorrelation-based methods. Target ESS > 400 per chain for reliable posterior summaries.

- Visual Inspection: Examine trace plots (stationarity), autocorrelation plots (mixing), and rank plots (chain comparability).
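The two numerical diagnostics in this protocol can be sketched directly in NumPy. These are simplified versions: a split-R̂ without rank normalization and an autocorrelation-based ESS truncated at the first negative lag. Production tools such as ArviZ or the posterior R package use more robust rank-normalized estimators, so treat this as an illustration of the definitions rather than a replacement. The AR(1) test chains are synthetic.

```python
import numpy as np

def split_rhat(chains):
    """Split-R-hat for an array of shape (n_chains, n_draws)."""
    n = chains.shape[1] // 2
    halves = np.concatenate([chains[:, :n], chains[:, n:2 * n]], axis=0)
    w = halves.var(axis=1, ddof=1).mean()        # within-chain variance
    b = n * halves.mean(axis=1).var(ddof=1)      # between-chain variance
    var_plus = (n - 1) / n * w + b / n
    return float(np.sqrt(var_plus / w))

def ess_single_chain(x, max_lag=200):
    """ESS = N / (1 + 2 * sum of autocorrelations), cut at the first negative lag."""
    x = x - x.mean()
    n = x.size
    var = np.dot(x, x) / n
    tau = 1.0
    for k in range(1, min(max_lag, n - 1)):
        rho = np.dot(x[:-k], x[k:]) / (n * var)
        if rho < 0:
            break
        tau += 2.0 * rho
    return n / tau

def ar1_chain(n, phi, rng):
    """Synthetic autocorrelated chain used as a stand-in for MCMC output."""
    x = np.empty(n)
    x[0] = rng.normal() / np.sqrt(1.0 - phi**2)
    for i in range(1, n):
        x[i] = phi * x[i - 1] + rng.normal()
    return x

rng = np.random.default_rng(8)
good = np.stack([ar1_chain(5000, 0.9, rng) for _ in range(4)])
bad = good + np.array([0.0, 0.0, 10.0, 10.0])[:, None]   # two chains stuck elsewhere

rhat_good, rhat_bad = split_rhat(good), split_rhat(bad)
ess_one = ess_single_chain(good[0])
print(f"R-hat mixed: {rhat_good:.3f}, R-hat stuck: {rhat_bad:.2f}, ESS: {ess_one:.0f}")
```

For an AR(1) chain with coefficient 0.9 the theoretical ESS is N·(1-0.9)/(1+0.9), roughly N/19, which gives a useful sanity check on the estimator.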

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for MCMC in Drug Development

| Item / Software | Function / Purpose | Example / Note |

|---|---|---|

| Probabilistic Programming Language (PPL) | Provides DSL for model definition, automatic differentiation, and built-in samplers (MH, Gibbs, NUTS). | Stan (NUTS/HMC), PyMC3/4 (NUTS), Turing.jl (Gibbs, NUTS), BUGS/JAGS (Gibbs). |

| Automatic Differentiation (AD) | Computes exact gradients of the log-posterior required for HMC/NUTS. | Stan Math Library, PyTorch, JAX, TensorFlow. |

| ODE/PDE Solver | Solves differential equations for PK/PD or systems biology models within the posterior. | Sundials (CVODES), Boost.ODEINT, specialized solvers for stiff systems. |

| High-Performance Computing (HPC) | Enables parallel chain execution and large-scale simulation studies. | Cloud computing (AWS, GCP), SLURM clusters, multi-core CPUs/GPUs. |

| Diagnostic & Viz Libraries | Calculates diagnostics (R̂, ESS) and creates publication-quality plots. | ArviZ (Python), bayesplot (R), MCMCChains (Julia). |

| Domain-Specific Model Libraries | Provide pre-built, validated templates for common pharmacometric models. | NONMEM, mrgsolve, Pumas. |

Within the broader thesis on advancing Markov Chain Monte Carlo (MCMC) for stochastic model fitting in pharmacometrics and systems biology, a critical gap exists in practical, cross-platform integration. This protocol provides a unified framework for leveraging the strengths of Stan (Hamiltonian Monte Carlo), PyMC (accessible probabilistic programming), and NONMEM (industry-standard pharmacometric modeling) to enhance robustness, diagnostic capability, and predictive accuracy in drug development research.

Table 1: Core Features of MCMC Engines

| Feature | Stan (v2.32+) | PyMC (v5.10+) | NONMEM (v7.5+) |

|---|---|---|---|

| Primary Sampler | NUTS (HMC) | NUTS, Metropolis, Slice, etc. | SAEM, IMP, Gibbs (via Bayesian priors) |

| Language | Stan, R, Python, MATLAB | Python | Fortran, NM-TRAN |

| Strength | Efficient high-dim. sampling, diagnostics | Flexible model spec., large ecosystem | Regulatory acceptance, PK/PD-specific |

| Key Diagnostic | divergences, Rhat, n_eff | az.summary, trace plots | $COV, Objective Function Value |

| Open Source | Yes | Yes | No (Commercial) |

| Best For | Complex hierarchies, new models | Rapid prototyping, educational use | Regulatory submission assets |

Table 2: Benchmark Performance on 2-Compartment PK Model (Synthetic Data, n=100 subjects)

| Metric | Stan (4 chains, 2000 iter) | PyMC (4 chains, 2000 iter, NUTS) | NONMEM (BAYES) |

|---|---|---|---|

| Run Time (min) | 22.5 | 18.7 | 15.2 |

| Mean Rhat (max) | 1.01 | 1.01 | N/A |

| Effective Sample Size/min (min) | 850 | 790 | N/A (proprietary) |

| Relative Std Error (%) on CL | 2.1 | 2.3 | 2.0 |

Application Notes & Protocols

Protocol 3.1: Cross-Validation Workflow for a Population PK/PD Model

Aim: To validate a nonlinear mixed-effects model for drug exposure-response using predictive performance.

Materials (The Scientist's Toolkit):

- Stan (cmdstanr/pystan): High-efficiency sampler for final model fitting.

- PyMC & ArviZ: For prior predictive checks and advanced diagnostics (posterior predictive checks, plot generation).

- NONMEM (& PsN): For benchmark parameter estimates and standard error calculation via traditional methods.

- xpose (R) / plot_trace (Python): For visualization of chains and parameter distributions.

- bridgesampling (R/Stan): For computing marginal likelihoods for model comparison.

Procedure:

- Model Specification: Code the structural PK/PD model identically in Stan (.stan file), PyMC (Python class), and NONMEM ($PK/$PRED blocks).

- Prior Predictive Checking (PyMC):

- Sample from priors only in PyMC to generate simulated data.

- Visually confirm simulated data spans plausible real-world outcomes.

- Initial Fitting (NONMEM):

- Use BAYES with vague priors to obtain initial parameter estimates.

- Use NONMEM's $COV step for approximate posterior variance.

- Robust Sampling (Stan):

- Use NONMEM estimates to inform initial values for Stan chains.

- Run 4 chains of NUTS sampler for 4000 iterations (2000 warmup).

- Monitor Rhat < 1.05 and no divergences.

- Diagnostic & Comparison (PyMC/ArviZ):

- Import Stan outputs into ArviZ (arviz.from_cmdstan) for visualization.

- Perform posterior predictive checks: compare observed data to simulations from the posterior.

- Calculate Widely Applicable Information Criterion (WAIC) for model selection.

- Reporting: Report final parameters from Stan (primary) and compare point estimates with NONMEM results. Use PyMC/ArviZ graphics for publication.

Diagram Title: Cross-Validation Workflow for PK/PD Model

Protocol 3.2: Hierarchical Bayesian Meta-Analysis of EC50 Values

Aim: To pool half-maximal effective concentration (EC50) estimates from disparate in vitro studies using a hierarchical model.

Procedure:

- Data Assembly: Collect reported EC50 and standard errors from k independent studies.

- Model Formulation: Implement a Bayesian random-effects model: EC50_obs_i ~ Normal(EC50_true_i, se_i); EC50_true_i ~ Normal(mu, tau); with hyperpriors on population mean mu and between-study SD tau.

- PyMC Prototyping: Quickly build and debug the hierarchical model in PyMC. Use pm.sample_prior_predictive() to validate.

- Stan Production: Translate the debugged model to Stan for faster, more reliable sampling of the posterior p(mu, tau | data).

- Sensitivity Analysis in NONMEM: For comparability, implement a similar model using $PRIOR in NONMEM. Compare the posterior mode (MAP) to Stan's posterior mean.

- Visualization: Use forest plots (from ArviZ or ggplot2) showing shrinkage of individual study estimates toward the pooled mean mu.
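The shrinkage shown in the forest plot follows directly from the normal-normal structure of the model: conditional on mu and tau, each study's true EC50 has a precision-weighted posterior mean. The sketch below computes it for a single (mu, tau) pair; in a full analysis the same calculation would be applied to each posterior draw. The study values are hypothetical.

```python
import numpy as np

def conditional_shrinkage(ec50_obs, se, mu, tau):
    """Posterior mean/SD of each EC50_true_i given mu and tau (normal-normal model)."""
    prec_obs = 1.0 / se**2
    prec_pop = 1.0 / tau**2
    w = prec_obs / (prec_obs + prec_pop)       # weight on the study's own estimate
    post_mean = w * ec50_obs + (1.0 - w) * mu
    post_sd = np.sqrt(1.0 / (prec_obs + prec_pop))
    return post_mean, post_sd, w

# Hypothetical reported EC50 values (nM) and standard errors from k = 4 studies.
ec50_obs = np.array([12.0, 8.0, 15.0, 9.5])
se = np.array([1.0, 2.0, 4.0, 0.5])

post_mean, post_sd, w = conditional_shrinkage(ec50_obs, se, mu=10.0, tau=2.0)
for obs, pm, wi in zip(ec50_obs, post_mean, w):
    print(f"reported {obs:5.1f} -> shrunk {pm:5.2f} (weight on own data {wi:.2f})")
```

Imprecise studies (large se) are pulled strongly toward mu, while precise studies keep most of their reported value.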

Diagram Title: Hierarchical Model for EC50 Meta-Analysis

Essential Research Reagent Solutions

Table 3: Key Software Tools & Libraries

| Item Name | Function & Purpose |

|---|---|

| cmdstanr (R) / cmdstanpy | Lightweight interfaces to Stan; compile and sample from models. |

| ArviZ (Python) | Universal library for MCMC diagnostics, visualization, and model comparison. |

| PsN (Perl) | Toolsuite for NONMEM, enabling cross-platform scripting, validation, and bootstrap. |

| Posterior (R/Python) | Modern library for manipulating and summarizing posterior draws. |

| rstan / pystan | High-level R/Python interfaces to Stan for user-friendly workflows. |

| xpose (R) | Tailored for diagnosing pharmacometric models, especially from NONMEM/Stan. |

| bambi (Python) | High-level Bayesian model-building interface built on PyMC, useful for rapid testing. |

Integration Protocol: Propagating Uncertainty to Clinical Simulations

Aim: To use posterior parameter distributions from MCMC for clinical trial simulation with full uncertainty propagation.

Procedure:

- Fit Final Model in Stan: Obtain S posterior draws of all parameters (fixed, random, variances).

- Draw Parameter Sets: Randomly select n=1000 parameter sets from the posterior draws.

- Simulate in NONMEM/Python:

- Method A (NONMEM): Create a $TABLE file for each parameter set, simulate via $SIMULATION, and aggregate results.

- Method B (Python): Use a differential equation solver (e.g., scipy.integrate) to simulate the model for each parameter set directly.

- Summarize: Calculate credible intervals (e.g., 2.5th, 50th, 97.5th percentiles) across simulations for key outputs (e.g., trough concentration, % of patients achieving target).
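A compact sketch of Method B follows. To stay self-contained it replaces the ODE solver with the closed-form solution of a one-compartment IV bolus model and uses simulated log-normal arrays as stand-ins for the Stan posterior draws; the dose, dosing interval, and target concentration are assumed values.

```python
import numpy as np

rng = np.random.default_rng(7)

# Stand-in for S posterior draws exported from Stan (hypothetical values).
S = 4000
post_cl = np.exp(rng.normal(np.log(5.0), 0.10, size=S))   # clearance, L/h
post_v = np.exp(rng.normal(np.log(25.0), 0.15, size=S))   # volume, L

# Draw n = 1000 joint parameter sets; using a shared index keeps correlations.
idx = rng.choice(S, size=1000, replace=False)
cl, v = post_cl[idx], post_v[idx]

def trough_conc(dose, cl, v, tau):
    """Single-dose one-compartment IV bolus concentration at time tau."""
    return dose / v * np.exp(-cl / v * tau)

trough = trough_conc(dose=100.0, cl=cl, v=v, tau=12.0)    # mg/L at 12 h

lo, med, hi = np.percentile(trough, [2.5, 50.0, 97.5])
prob_target = np.mean(trough > 0.3)                       # assumed target, mg/L
print(f"trough median {med:.2f} (95% CrI {lo:.2f}-{hi:.2f}); "
      f"P(trough > target) = {prob_target:.2f}")
```

This propagates uncertainty in the population parameters only. A full trial simulation would additionally sample inter-individual random effects and residual error for each simulated patient within every parameter set.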

Diagram Title: Uncertainty Propagation to Clinical Simulation

Within the broader thesis research on advancing Markov Chain Monte Carlo (MCMC) methods for stochastic model fitting in pharmacometrics, this case study demonstrates the application of Bayesian hierarchical modeling with informative priors. The integration of prior knowledge, formalized through informative priors, is critical for stabilizing complex non-linear mixed-effects (NLME) PK/PD models, particularly when data are sparse or noisy. This approach directly addresses key MCMC research challenges: improving chain convergence, reducing parameter uncertainty, and enabling robust predictions for drug development decision-making.

The case study involves a monoclonal antibody targeting a soluble ligand, described by a target-mediated drug disposition (TMDD) PK/PD model with inter-individual variability (IIV). The model structure and prior distributions are summarized below.

Table 1: Structural Model Parameters and Fixed-Effects Priors

| Parameter | Description | Unit | Prior Distribution (Informative) | Prior Source |

|---|---|---|---|---|

| CL | Systemic Clearance | L/day | Log-Normal(μ=0.45, σ=0.1) | Preclinical species allometric scaling |

| Vc | Central Volume | L | Log-Normal(μ=3.2, σ=0.15) | Phase I SAD/MAD studies |

| Ka | Absorption Rate | 1/day | Log-Normal(μ=0.4, σ=0.3) | Literature on subcutaneous delivery |

| Koff | Drug-Target Dissoc. Rate | 1/day | Log-Normal(μ=52.6, σ=0.2) | In vitro surface plasmon resonance |

| Rtot | Baseline Target Level | nM | Log-Normal(μ=0.8, σ=0.25) | Baseline biomarker data (Phase Ib) |

Table 2: Statistical Parameters and Hyperpriors

| Parameter | Description | Prior Distribution |

|---|---|---|

| ω_CL, ω_Vc | IIV (Log-scale) | Half-Cauchy(0, 0.5) |

| σ_prop | Proportional Residual Error | Inverse-Gamma(shape=2, scale=0.5) |

| σ_add | Additive Residual Error | Inverse-Gamma(shape=2, scale=0.01) |

| ρ | CL-Vc Correlation | LKJ Correlation Prior(η=2) |

Experimental Protocol: MCMC Fitting with Informative Priors

Protocol Title: Bayesian NLME PK/PD Model Fitting via Hamiltonian Monte Carlo (HMC)

Objective: To estimate posterior distributions for PK/PD parameters of a TMDD model using patient data and informative priors within an MCMC framework.

Software & Reagents:

- Software: Stan (via cmdstanr or brms interface in R), R (tidyverse, posterior, bayesplot), NONMEM (for comparative analysis).

- Computing: Multi-core Linux server (≥ 16 cores), 32 GB RAM minimum.

Procedure:

Model Specification:

- Encode the TMDD ordinary differential equation (ODE) system in Stan's functions block.

- In the parameters block, declare primary parameters (CL, Vc, etc.) and statistical parameters (ω, σ, ρ).

- In the model block:

- Apply informative prior distributions as defined in Tables 1 and 2.

- Specify the hierarchical model: individual parameter = population mean * exp(individual random effect).

- Define the sampling statement for observed data: y_obs ~ normal(y_pred, σ_total).

Data Preparation:

- Structure data as a long-format table. Essential columns include: ID, TIME, AMT, RATE, CMT (compartment), EVID, DV (dependent variable: drug conc. & target engagement).

- Create a Stan-specific list: N (total obs), N_id (number of subjects), id (subject index vector), and covariate matrices.

MCMC Sampling (HMC/NUTS):

- Run 4 independent HMC chains with No-U-Turn Sampler (NUTS).

- Configuration: warmup = 2000 iterations per chain, post-warmup = 2000 iterations per chain, adapt_delta = 0.95 to reduce divergences.

- Initiate chains from random points within the prior distribution.

Convergence Diagnostics:

- Calculate the potential scale reduction factor (R̂). Accept convergence if R̂ < 1.05 for all key parameters.

- Inspect trace plots for stationarity and mixing.

- Check effective sample size (ESS) for posterior draws; ESS > 400 per chain is acceptable.

Posterior Predictive Check (PPC):

- Simulate 500 new datasets from the posterior predictive distribution.

- Compare the observed data percentiles (e.g., 5th, 50th, 95th) to the simulated data intervals visually and quantitatively.

Comparison to Weakly Informative Prior Fit:

- Repeat the analysis using weakly informative priors (e.g., wide normal or half-normal distributions).

- Compare posterior credible intervals, ESS, and R̂ values between the informative and weakly informative prior models.

Visualizing the Workflow and Model

Diagram 1: MCMC Fitting Workflow with Priors

Diagram 2: Target-Mediated Drug Disposition Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for Bayesian PK/PD Analysis

| Item | Category | Function in Analysis |

|---|---|---|

| Stan / cmdstanr | Statistical Software | A probabilistic programming language implementing efficient HMC/NUTS sampler for Bayesian inference. |

| R / tidyverse | Data Analysis Platform | Primary environment for data wrangling, visualization, and interfacing with Stan. |

| shinystan | Diagnostic Tool | Interactive GUI for exploring MCMC output, diagnostics, and posterior distributions. |

| NONMEM | Comparative Software | Industry-standard for NLME modeling; used for benchmark comparisons with maximum likelihood. |

| Surface Plasmon Resonance (SPR) | In Vitro Assay | Provides in vitro binding kinetics (Kon, Koff) to form strong informative priors for Kd. |

| Ligand Binding Assay (MSD/ELISA) | Biomarker Assay | Measures total and free target levels in serum, informing Rtot and Kss priors. |

| Validated LC-MS/MS | Bioanalytical Method | Quantifies drug concentrations in biological matrices for PK model fitting. |

| High-Performance Computing (HPC) Cluster | Computing Resource | Enables parallel MCMC chains and complex ODE model fitting in practical timeframes. |

Within the context of research on Markov Chain Monte Carlo (MCMC) for stochastic model fitting in drug development, the generation of posterior samples is only the first step. The true challenge lies in transforming these high-dimensional, often-correlated samples into actionable scientific insight. This document provides application notes and protocols for the effective visualization and summarization of posterior distributions, enabling researchers to quantify parameter uncertainty, validate model convergence, and make robust predictions.

Core Concepts and Quantitative Summaries

Posterior distributions are summarized using point estimates and intervals derived from MCMC samples. The table below outlines key quantitative descriptors.

Table 1: Key Quantitative Summaries of a Posterior Distribution

| Descriptor | Formula/Definition | Interpretation in Drug Development Context |

|---|---|---|

| Posterior Mean | $\bar{\theta} = \frac{1}{N} \sum_{i=1}^{N} \theta^{(i)}$ | Central tendency of a parameter (e.g., mean drug clearance). Sensitive to skew and outliers. |

| Posterior Median | Middle value of sorted $\theta^{(i)}$ | Central tendency, robust to outliers and skew in the distribution. |

| Maximum a Posteriori (MAP) | Value of $\theta$ at the posterior mode. | The most probable parameter value given data and prior. |

| 95% Credible Interval (CrI) | 2.5th and 97.5th percentiles of $\theta^{(i)}$. | There is a 95% probability the true parameter lies in this interval, given the model and data. |

| Standard Deviation | $\sqrt{\frac{1}{N-1} \sum_{i=1}^{N} (\theta^{(i)} - \bar{\theta})^2}$ | Measure of posterior uncertainty for a single parameter. |

| Effective Sample Size (ESS) | $ESS = N / (1 + 2 \sum_{k=1}^{\infty} \rho_k)$ | Approximate number of independent samples. Diagnoses autocorrelation in chains. |

| Gelman-Rubin $\hat{R}$ | $\sqrt{\frac{\widehat{Var}(\theta)}{W}}$; ratio of total to within-chain variance. | Convergence diagnostic. Values < 1.05 indicate chains have mixed well. |
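The point and interval summaries in the table map onto a few NumPy calls. The sketch below uses simulated log-normal draws as a stand-in for a skewed clearance posterior; the helper name `summarize` is hypothetical. The MAP is omitted because it requires a density estimate or an optimizer rather than a simple sample statistic.

```python
import numpy as np

def summarize(draws):
    """Posterior mean, median, SD, and equal-tailed 95% credible interval."""
    draws = np.asarray(draws, dtype=float)
    lo, med, hi = np.percentile(draws, [2.5, 50.0, 97.5])
    return {
        "mean": float(draws.mean()),
        "median": float(med),
        "sd": float(draws.std(ddof=1)),
        "cri_95": (float(lo), float(hi)),
    }

# Stand-in for pooled post-warm-up draws of clearance (L/h), right-skewed.
rng = np.random.default_rng(3)
cl_draws = np.exp(rng.normal(np.log(5.0), 0.3, size=8000))

summary = summarize(cl_draws)
print(summary)
```

For a right-skewed posterior such as this one, the mean sits above the median, which is one reason the median is often preferred for reporting rate constants and clearances.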

Protocols for Visual Diagnostics and Summarization

Protocol 3.1: Trace and Autocorrelation Plot Generation

Purpose: To assess MCMC chain convergence, mixing, and independence of samples.

Materials: Output from MCMC sampler (e.g., Stan, PyMC, JAGS), statistical software (R/Python).

Procedure:

- Run a minimum of 4 independent MCMC chains from dispersed starting points.

- For each parameter of interest, generate a trace plot: a. Create an x-axis representing iteration number (including warm-up/burn-in if not discarded). b. Plot the sampled parameter value on the y-axis. c. Overlay lines for each independent chain, using distinct colors.

- Visually inspect trace plots for "fuzzy caterpillar" appearance, indicating good mixing and stationarity.

- Generate an autocorrelation plot for each chain: a. Calculate the autocorrelation function (ACF) for lags k from 0 to a specified maximum (e.g., 50). b. Plot lag k on the x-axis against autocorrelation on the y-axis, with a horizontal reference line at zero.

- Interpret rapid decay of autocorrelation to zero as indicative of efficient sampling and high ESS.

Protocol 3.2: Marginal and Joint Posterior Visualization

Purpose: To understand the shape, spread, and correlation structure of the posterior distribution.

Materials: Combined post-warm-up samples from all convergent chains.

Procedure:

- Univariate Marginal Posteriors: a. For a single parameter, plot a smoothed kernel density estimate (KDE) or histogram of all samples. b. Annotate the plot with vertical lines at the posterior mean, median, and 95% CrI bounds.

- Bivariate Joint Posteriors: a. For two parameters, create a scatter plot or a 2D KDE plot using a subset of samples (e.g., every 5th sample to reduce file size). b. Overlay contour lines indicating regions of highest posterior density (HPD). c. Calculate and report the posterior correlation coefficient from the samples.

- Pairwise Relationships (Corner Plot): a. For a model with p key parameters, create a p x p grid of plots. b. The diagonal plots show the marginal posterior distribution for each parameter. c. The off-diagonal plots show the joint posterior scatter or contour plots for each pair of parameters.

Protocol 3.3: Posterior Predictive Checking (PPC)

Purpose: To assess model fit by comparing observed data to data simulated from the posterior predictive distribution.

Materials: Observed data y, posterior samples of model parameters $\theta^{(i)}$.

Procedure:

- For each posterior sample $\theta^{(i)}$, simulate a new dataset $y_{rep}^{(i)}$ from the likelihood $p(y_{rep} | \theta^{(i)})$.

- Choose a test statistic T(y) (e.g., mean, variance, 90th percentile, a custom biomarker level).

- Compute the test statistic for the observed data, T(y), and for each replicated dataset, $T(y_{rep}^{(i)})$.

- Plot the distribution of $T(y_{rep})$ (e.g., a histogram or KDE) and indicate the location of T(y) with a vertical line.

- Calculate the posterior predictive p-value: $p_{pp} = P(T(y_{rep}) \geq T(y) | y)$. Extreme values (near 0 or 1) indicate poor model fit for that statistic.
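The procedure above can be sketched as follows. The example assumes a simple normal observation model, with simulated arrays standing in for posterior draws of its mean and SD, and observed data that contain one extreme value the model cannot reproduce. All names and values are illustrative.

```python
import numpy as np

def posterior_predictive_pvalue(y_obs, y_rep, stat):
    """Fraction of replicated datasets whose statistic is >= the observed one."""
    t_obs = stat(y_obs)
    t_rep = np.array([stat(row) for row in y_rep])
    return float(np.mean(t_rep >= t_obs)), t_obs, t_rep

rng = np.random.default_rng(9)

# Observed data: mostly N(10, 2), plus one outlier the normal model cannot explain.
y_obs = np.append(rng.normal(10.0, 2.0, size=50), 30.0)

# Stand-ins for 500 posterior draws of the model's mean and SD.
mu_draws = rng.normal(10.0, 0.3, size=500)
sigma_draws = np.abs(rng.normal(2.0, 0.2, size=500))

# One replicated dataset per posterior draw (rows of y_rep).
y_rep = rng.normal(mu_draws[:, None], sigma_draws[:, None], size=(500, y_obs.size))

p_mean, _, _ = posterior_predictive_pvalue(y_obs, y_rep, np.mean)
p_max, _, _ = posterior_predictive_pvalue(y_obs, y_rep, np.max)
print(f"p-value for mean: {p_mean:.2f}, for max: {p_max:.3f}")
```

The choice of test statistic matters: a statistic the model is fitted to reproduce (such as the mean) is rarely informative, whereas tail statistics like the maximum expose misfit in the residual error model.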

Visual Workflows and Relationships

Title: MCMC Posterior Analysis Workflow and Diagnostic Loop

Title: From Visualization to Scientific Insight

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for MCMC Posterior Analysis

| Tool/Reagent | Function/Description | Example/Note |

|---|---|---|

| Probabilistic Programming Language | Framework for specifying Bayesian models and performing automated MCMC sampling. | Stan (NUTS sampler), PyMC (NUTS, Metropolis), JAGS (Gibbs). |

| High-Performance Computing (HPC) Environment | Enables running multiple long chains in parallel for complex pharmacometric models. | Cloud computing instances, local computing clusters with SLURM. |

| Visualization Library | Software packages specifically designed for statistical and MCMC diagnostic plotting. | bayesplot (R/Stan), arviz (Python/PyMC), ggplot2 (R), matplotlib/seaborn (Python). |

| Convergence Diagnostic Software | Calculates metrics like $\hat{R}$, ESS, and divergences from chain outputs. | Built into Stan and PyMC output; coda (R), arviz. |

| Posterior Database | Secure storage for high-dimensional sample arrays, enabling reproducible analysis. | HDF5 files, rds/RData files, relational databases with BLOB storage. |

| Interactive Visualization Dashboard | Allows non-statisticians to explore posterior summaries and predictions. | shiny (R), Dash (Python), Tableau with linked data sources. |

Diagnosing and Fixing Common MCMC Pitfalls: Convergence, Efficiency, and Prior Sensitivity

Within the broader thesis on advancing Markov Chain Monte Carlo (MCMC) methods for stochastic model fitting in pharmacokinetic-pharmacodynamic (PK/PD) research, diagnosing convergence is paramount. Erroneous inference from non-converged chains can derail drug development decisions. This protocol details the identification of non-convergence red flags through trace plots, the R-hat statistic, and Effective Sample Size (ESS), providing a critical checklist for researchers and scientists.

Diagnostic Metrics: Definitions and Thresholds

The following table summarizes the key quantitative diagnostics for assessing MCMC convergence.

Table 1: Key MCMC Convergence Diagnostics and Interpretation

| Diagnostic | Ideal Value | Caution Threshold | Red Flag (Non-Convergence) | Primary Purpose |

|---|---|---|---|---|

| R-hat (Gelman-Rubin) | 1.00 | >1.05 | >1.10 | Measures between-chain vs. within-chain variance. |

| Bulk-ESS | > 400 | ≤ 400 | ≤ 100 | Effective samples for estimating central tendencies (median, mean). |

| Tail-ESS | > 400 | ≤ 400 | ≤ 100 | Effective samples for estimating 5% and 95% quantiles. |

| Bulk-ESS / Iteration | > 0.001 | ≤ 0.001 | ≤ 0.0001 | Sampling efficiency for central intervals. |

| Trace Plot | Stationary, well-mixed | Slow wandering, low mixing | Sustained trends, poor overlap | Visual assessment of chain stationarity and mixing. |
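
To make the "between-chain vs. within-chain variance" idea concrete, the following sketch computes the classic split R-hat in plain Python. It is a simplified illustration: it omits the rank normalization used by current Stan and ArviZ releases, and the simulated chains and the function name `split_rhat` are assumptions made for the example.

```python
import math
import random
import statistics

def split_rhat(chains):
    """Classic split R-hat (not rank-normalized) for equal-length chains."""
    half = len(chains[0]) // 2
    # Split each chain in two so that within-chain drift also inflates R-hat.
    halves = [chain[start:start + half] for chain in chains for start in (0, half)]
    chain_means = [statistics.fmean(h) for h in halves]
    within = statistics.fmean([statistics.variance(h) for h in halves])   # W
    between = half * statistics.variance(chain_means)                      # B
    var_plus = (half - 1) / half * within + between / half
    return math.sqrt(var_plus / within)

rng = random.Random(7)

# Four chains sampling the same distribution.
mixed = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]
# Four chains stuck around two different values (a toy non-convergence case).
stuck = [[rng.gauss(offset, 1.0) for _ in range(1000)] for offset in (0.0, 0.0, 3.0, 3.0)]

rhat_mixed = split_rhat(mixed)
rhat_stuck = split_rhat(stuck)
print(f"well-mixed chains: R-hat = {rhat_mixed:.3f}")
print(f"stuck chains:      R-hat = {rhat_stuck:.3f}")
```

For real analyses, the diagnostic functions shipped with the sampler or with a dedicated package are preferable, since they also handle heavy tails and unequal chain variances.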

Protocol: A Stepwise Diagnostic Workflow

This protocol outlines the systematic process for diagnosing convergence in an MCMC analysis of a non-linear mixed-effects PK/PD model.

Protocol Title: Systematic Convergence Diagnosis for MCMC in Stochastic Model Fitting

Objective: To verify that MCMC sampling has produced a reliable posterior distribution for all model parameters.

Materials & Software:

- MCMC output (e.g., from Stan, PyMC, JAGS, NONMEM).

- Statistical software (R, Python).

- Visualization tools.

Procedure:

Initialization & Chain Configuration:

- Run at least 4 independent MCMC chains from dispersed initial values.

- Discard a sufficient warm-up/adaptation phase (e.g., first 50% of iterations).

Visual Inspection: Trace Plots

- Action: Generate overlaid trace plots for all chains for each key parameter.

- Diagnosis:

- GREEN (Converged): Chains are "fuzzy caterpillars" – stationary, overlapping, and rapidly mixing.

- RED FLAG: Chains show sustained trends, lack of overlap, or poor mixing (high autocorrelation).

Quantitative Diagnostic 1: R-hat (Ȓ)

- Action: Calculate the rank-normalized, split-R-hat statistic for all parameters.

- Diagnosis:

- GREEN: All R-hat ≤ 1.01.

- CAUTION: Any R-hat above 1.01 and up to 1.10; values above 1.05 warrant particular scrutiny.

- RED FLAG: Any R-hat > 1.10. Investigate the affected parameters.

Quantitative Diagnostic 2: Effective Sample Size (ESS)

- Action: Compute Bulk-ESS and Tail-ESS for all parameters.

- Diagnosis:

- GREEN: Both Bulk-ESS and Tail-ESS > 400 for all parameters.

- CAUTION: Either ESS between 100 and 400.

- RED FLAG: Any ESS ≤ 100. Indicates insufficient independent samples for reliable inference.

Holistic Decision & Iteration:

- If all checks (trace plots, R-hat, and ESS) are GREEN, proceed to posterior analysis.

- If any RED FLAGS are present:

- Increase the number of iterations substantially.

- Re-parameterize the model to improve geometry.

- Consider using a non-centered parameterization or more informative priors.

- Return to the initialization step and repeat the diagnostics.

Notes: R-hat and ESS should be calculated on post-warm-up samples. Diagnostics should be checked for all parameters, especially hyperparameters in hierarchical models.
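
The decision logic of this protocol can be expressed as a small helper. The sketch below is one possible reading of the thresholds: it treats every R-hat above 1.01 and up to 1.10 as CAUTION, and the function names and diagnostic values are hypothetical.

```python
def classify_convergence(rhat, bulk_ess, tail_ess):
    """Map one parameter's diagnostics to GREEN, CAUTION, or RED."""
    min_ess = min(bulk_ess, tail_ess)
    if rhat > 1.10 or min_ess <= 100:
        return "RED"
    if rhat > 1.01 or min_ess <= 400:
        return "CAUTION"
    return "GREEN"

def overall_status(diagnostics):
    """Return the worst status across parameters plus the per-parameter statuses."""
    severity = {"GREEN": 0, "CAUTION": 1, "RED": 2}
    statuses = {name: classify_convergence(*values) for name, values in diagnostics.items()}
    worst = max(statuses.values(), key=severity.get)
    return worst, statuses

# Hypothetical (rhat, bulk_ess, tail_ess) values for three PK parameters.
diagnostics = {
    "CL": (1.00, 2400, 1900),
    "V": (1.03, 850, 620),
    "omega_CL": (1.14, 95, 140),
}

worst, statuses = overall_status(diagnostics)
print(worst, statuses)
```

Reporting the worst status across all parameters reflects the note above: a single poorly sampled hyperparameter is enough to send the analysis back for another iteration.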

Visualization: Convergence Diagnosis Workflow

Title: MCMC Convergence Diagnostic Decision Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools for MCMC Convergence Diagnostics

| Tool / "Reagent" | Function / Purpose in Convergence Diagnosis | Example Software/Package |

|---|---|---|

| MCMC Sampler | Engine for drawing posterior samples. Defines model geometry and efficiency. | Stan, PyMC, JAGS, NONMEM, BUGS |

| Diagnostic Calculator | Computes R-hat, ESS, and other key metrics from chain outputs. | rstan::monitor, ArviZ (Python), coda (R) |

| Trace Plot Generator | Creates visualizations for chain behavior and parameter space exploration. | bayesplot (R), matplotlib (Python) |

| Autocorrelation Plot Tool | Visualizes correlation between successive samples; high ACF reduces ESS. | bayesplot::mcmc_acf, arviz.plot_autocorr |

| Rank & Divergence Diagnostic | Identifies sampler divergences indicating problematic model regions. | Stan's divergences, arviz.plot_parallel |

| Parallel Computing Environment | Runs multiple chains simultaneously on different cores for efficiency. | MPI, Stan's parallel_chains, Python multiprocessing |
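
The link between autocorrelation and ESS noted in the table can be illustrated with a crude single-chain estimator. This sketch is an assumption-laden simplification: it truncates the autocorrelation sum at the first non-positive lag, whereas packaged Bulk-ESS and Tail-ESS use multi-chain, rank-normalized estimators. The AR(1) generator `ar1_chain` is a hypothetical stand-in for a sticky sampler.

```python
import random
import statistics

def autocorrelation(x, lag):
    """Sample autocorrelation of the sequence x at the given lag."""
    mean = statistics.fmean(x)
    denom = sum((v - mean) ** 2 for v in x)
    numer = sum((x[t] - mean) * (x[t + lag] - mean) for t in range(len(x) - lag))
    return numer / denom

def effective_sample_size(x, max_lag=200):
    """Crude ESS: n / (1 + 2 * sum of the leading positive autocorrelations)."""
    rho_sum = 0.0
    for lag in range(1, min(max_lag, len(x) - 1) + 1):
        rho = autocorrelation(x, lag)
        if rho <= 0.0:      # stop at the first non-positive lag to limit noise
            break
        rho_sum += rho
    return len(x) / (1.0 + 2.0 * rho_sum)

def ar1_chain(phi, n, rng):
    """Simulate x_t = phi * x_{t-1} + noise as a toy autocorrelated chain."""
    chain, value = [], 0.0
    for _ in range(n):
        value = phi * value + rng.gauss(0.0, 1.0)
        chain.append(value)
    return chain

rng = random.Random(3)
n = 2000
ess_independent = effective_sample_size(ar1_chain(0.0, n, rng))
ess_sticky = effective_sample_size(ar1_chain(0.9, n, rng))
print(f"independent draws: ESS ~ {ess_independent:.0f} of {n}")
print(f"phi = 0.9 chain:   ESS ~ {ess_sticky:.0f} of {n}")
```

For an AR(1) process the theoretical ratio is ESS/n = (1 - phi)/(1 + phi), so a lag-one autocorrelation of 0.9 leaves roughly 5% of the nominal sample size.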

Within the broader thesis on advanced Markov Chain Monte Carlo (MCMC) methods for stochastic model fitting in pharmacometric and systems biology research, a central challenge is the "curse of dimensionality." As model complexity increases—with more parameters, latent variables, and hierarchical levels—the volume of the parameter space expands exponentially. This leads to poor MCMC sampling efficiency, high computational cost, and unreliable inference. This document outlines practical application notes and protocols for two critical techniques to mitigate this curse: reparameterization and hierarchical modeling tricks.

Core Principles & Quantitative Comparison

Impact of Dimensionality on MCMC Efficiency

The table below summarizes key performance metrics for standard vs. optimized MCMC sampling in high-dimensional spaces, based on recent benchmarking studies (2023-2024).

Table 1: MCMC Performance Metrics in High-Dimensional Parameter Estimation

| Dimension (No. of Parameters) | Standard MCMC (ESS/sec) | Reparameterized Model (ESS/sec) | Hierarchical Centered (R-hat) | Hierarchical Non-Centered (R-hat) | Effective Sample Size Gain |

|---|---|---|---|---|---|

| 50 | 12.4 | 28.7 | 1.12 | 1.04 | 2.3x |

| 200 | 3.1 | 15.2 | 1.35 | 1.07 | 4.9x |

| 1000 | 0.5 | 4.8 | 1.89 (Divergent) | 1.11 | 9.6x |

| 5000 (Sparse Hierarchical) | 0.07 | 1.4 | N/A (Failed) | 1.15 | 20x |

ESS/sec: Effective Samples Per Second (higher is better); R-hat: Gelman-Rubin diagnostic (<1.1 indicates good convergence).
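
The final column of Table 1 appears to be the ratio of the two ESS/sec columns. The short sketch below recomputes it from the tabulated values; the variable names are illustrative only.

```python
# (dimension, standard ESS/sec, reparameterized ESS/sec) copied from Table 1.
rows = [(50, 12.4, 28.7), (200, 3.1, 15.2), (1000, 0.5, 4.8), (5000, 0.07, 1.4)]

def ess_gain(standard, reparameterized):
    """Efficiency gain as the ratio of effective samples per second."""
    return reparameterized / standard

gains = {dim: round(ess_gain(std, rep), 1) for dim, std, rep in rows}
for dim, gain in gains.items():
    print(f"{dim:>5} parameters: {gain}x")
```

Because ESS/sec already accounts for wall-clock time, the ratio can be compared across models even when one parameterization needs more gradient evaluations per iteration.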

Reparameterization Strategies

Table 2: Common Reparameterization Techniques for Pharmacokinetic/Pharmacodynamic (PK/PD) Models

| Original Parameter | Constraint | Reparameterization | Back-Transformation | Primary Benefit |

|---|---|---|---|---|

| Clearance (CL) | CL > 0 | log(CL) = θ_CL | Exponential | Unbounded sampling, better geometry. |

| Volume (V) | V > 0 | log(V) = θ_V | Exponential | Eliminates boundary constraints. |

| Fraction (F) | 0 < F < 1 | logit(F) = θ_F | Inverse logit: F = 1/(1+exp(-θ_F)) | Transforms to real line. |

| Correlation (ρ) | -1 < ρ < 1 | Fisher z(ρ) = 0.5 * log((1+ρ)/(1-ρ)) = θ_ρ | Inverse Fisher transform: ρ = tanh(θ_ρ) | Improves normality of posterior. |

| SD (σ) | σ > 0 | log(σ) = θ_σ | Exponential | Better mixing for variance components. |
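
The transformations in Table 2 can be checked with a round trip between the natural and unconstrained scales. The sketch below uses only the Python standard library; the parameter values and dictionary names are hypothetical.

```python
import math

def logit(p):
    """Map a fraction in (0, 1) to the real line."""
    return math.log(p / (1.0 - p))

def inv_logit(theta):
    return 1.0 / (1.0 + math.exp(-theta))

def fisher_z(rho):
    """Map a correlation in (-1, 1) to the real line (equivalent to atanh)."""
    return 0.5 * math.log((1.0 + rho) / (1.0 - rho))

def inv_fisher_z(theta):
    return math.tanh(theta)

# Hypothetical parameter values on their natural (constrained) scale.
natural = {"CL": 4.2, "V": 35.0, "F": 0.63, "rho": -0.4, "sigma": 0.15}

to_real = {"CL": math.log, "V": math.log, "F": logit, "rho": fisher_z, "sigma": math.log}
to_natural = {"CL": math.exp, "V": math.exp, "F": inv_logit, "rho": inv_fisher_z, "sigma": math.exp}

unconstrained = {name: to_real[name](value) for name, value in natural.items()}
recovered = {name: to_natural[name](value) for name, value in unconstrained.items()}

for name in natural:
    print(f"{name:>5}: natural = {natural[name]:.3f}, unconstrained = {unconstrained[name]:+.3f}")
```

When priors are stated on the natural scale but the sampler moves on θ, the log-density also needs the log-Jacobian of the back-transformation (for the log transform this is θ itself). Stan applies this adjustment automatically for parameters declared with constraints.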

Experimental Protocols

Protocol 1: Implementing Non-Centered Reparameterization for a Hierarchical PK Model

Objective: Improve MCMC sampling for a population PK model with N individuals and P parameters per individual.

Materials: Stan, PyMC, or JAGS software; PK dataset (individual concentration-time profiles).

Procedure:

Define the Centered Parameterization (Baseline):

- For individual i, the parameter vector θ_i (e.g., CL_i, V_i) is drawn from a population distribution: θ_i ~ MultivariateNormal(μ, Σ).

- Observations y_i follow: y_i ~ Normal(f(θ_i), σ).

- This couples μ and θ_i, causing sampling inefficiency when the population SD is small.

Reparameterize to Non-Centered Form:

- Decompose the covariance matrix: Σ = diag(τ) * Ω * diag(τ), where τ are scale parameters and Ω is the correlation matrix.

- Introduce independent standard normal variates: z_i ~ Normal(0, I).

- Transform to individual parameters: θ_i = μ + diag(τ) * L * z_i, where L is the Cholesky factor of Ω.

- The model becomes: z_i ~ Normal(0, I); θ_i = μ + diag(τ) * L * z_i; y_i ~ Normal(f(θ_i), σ).

MCMC Configuration:

- Run 4 chains with 2000 warm-up and 2000 sampling iterations.

- Use NUTS sampler.

- Monitor R-hat and divergent transitions.

Diagnostic Comparison:

- Compare ESS/sec, R-hat, and tree depth for μ and τ between centered and non-centered versions.
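
The non-centered transform at the heart of Protocol 1 can be verified numerically before it is embedded in a model. The sketch below is a toy illustration for two parameters (log CL and log V) using only the standard library; the population values, the correlation of 0.5, and the helper names are assumptions for the example.

```python
import math
import random
import statistics

def cholesky_2x2(rho):
    """Lower Cholesky factor L of the correlation matrix [[1, rho], [rho, 1]]."""
    return [[1.0, 0.0], [rho, math.sqrt(1.0 - rho ** 2)]]

def non_centered_theta(mu, tau, chol, z):
    """theta_i = mu + diag(tau) * L * z_i for a single individual."""
    lz = [sum(chol[r][c] * z[c] for c in range(len(z))) for r in range(len(z))]
    return [m + t * v for m, t, v in zip(mu, tau, lz)]

def pearson(x, y):
    """Sample Pearson correlation between two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(42)
mu = [math.log(5.0), math.log(50.0)]   # hypothetical population log-CL and log-V
tau = [0.3, 0.2]                       # hypothetical between-subject SDs (log scale)
chol = cholesky_2x2(0.5)               # hypothetical CL-V correlation of 0.5

# Standard normal variates z_i, one pair per simulated individual.
z = [[rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)] for _ in range(5000)]
theta = [non_centered_theta(mu, tau, chol, z_i) for z_i in z]

log_cl = [t[0] for t in theta]
log_v = [t[1] for t in theta]
print(f"SD(log CL) = {statistics.stdev(log_cl):.3f}, SD(log V) = {statistics.stdev(log_v):.3f}")
print(f"corr(log CL, log V) = {pearson(log_cl, log_v):.3f}")
```

In an actual sampler, μ, τ, L, and the z_i are the sampled quantities, and θ_i is computed deterministically from them. The sampler therefore explores independent standard normals rather than the funnel-shaped joint density of τ and θ_i.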

Protocol 2: Benchmarking Sampling Efficiency for High-Dimensional Signaling Pathways

Objective: Fit a stochastic differential equation (SDE) model of the MAPK/ERK pathway with random effects.

Materials: Simulated or real longitudinal phospho-protein data (e.g., pERK, pMEK). Simulated data generated from known parameters is recommended for method validation.

Workflow Diagram:

Diagram Title: Protocol 2 Workflow for MCMC Benchmarking

Procedure:

- Data Simulation: Simulate data from a nonlinear ODE/SDE model of the MAPK cascade (e.g., Huang-Ferrell model) with 20-30 parameters. Introduce inter-experiment variability by making 10-15 parameters log-normally distributed across 50 simulated experiments.

- Model Implementation: Implement three Bayesian models:

- Model A (Centered): Standard hierarchical model.

- Model B (Non-Centered): Full non-centered reparameterization of random effects.

- Model C (Partial Marginalization): Where possible, analytically integrate out latent stochastic states (e.g., using Gaussian approximations).

- MCMC Fitting: Fit all models with identical chain, adaptation, and iteration settings.

- Quantitative Analysis: Populate a table like Table 1 with results. Calculate root mean square error (RMSE) of parameter estimates against known simulation truths.
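
Two ingredients of this protocol, the log-normal inter-experiment variability and the RMSE against known truths, can be sketched as follows. The example is deliberately minimal: it draws parameters only (no ODE/SDE solution), the three rate constants and their variabilities are hypothetical, and geometric means stand in for the posterior summaries that Models A, B, and C would supply.

```python
import math
import random
import statistics

def simulate_experiment_parameters(pop_medians, omegas, n_experiments, rng):
    """Log-normal variability: theta = median * exp(omega * z), z ~ Normal(0, 1)."""
    return [
        [med * math.exp(om * rng.gauss(0.0, 1.0)) for med, om in zip(pop_medians, omegas)]
        for _ in range(n_experiments)
    ]

def rmse(estimates, truths):
    """Root mean square error between estimates and known simulation truths."""
    return math.sqrt(sum((e - t) ** 2 for e, t in zip(estimates, truths)) / len(truths))

rng = random.Random(11)
true_medians = [1.0, 0.5, 2.0]      # hypothetical rate constants
omegas = [0.2, 0.3, 0.1]            # hypothetical log-scale SDs across experiments
params = simulate_experiment_parameters(true_medians, omegas, 50, rng)

# Stand-in "estimates": geometric means across experiments. In the protocol these
# would be posterior means or medians from the fitted models.
estimates = [
    math.exp(statistics.fmean([math.log(row[j]) for row in params]))
    for j in range(len(true_medians))
]
print(f"RMSE against simulation truth: {rmse(estimates, true_medians):.4f}")
```

When parameters span several orders of magnitude, computing the RMSE on the log scale keeps large rate constants from dominating the comparison.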

Pathway & Logical Diagrams

Signaling Pathway with Random Effects Model:

Diagram Title: Non-Centered Hierarchical Model Structure

Decision Flow for Parameterization Choice:

Diagram Title: Choosing Centered vs Non-Centered Parameterization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for High-Dimensional MCMC

| Tool/Reagent | Type/Category | Primary Function in Research | Example/Note |

|---|---|---|---|

| Stan | Probabilistic Language | Implements NUTS sampler with automatic differentiation. Optimal for reparameterization. | Use brms R package for hierarchical models. |

| PyMC (formerly PyMC3) | Python Library | Flexible MCMC (NUTS, HMC) and VI. Non-centered parameterization can be written with standard-normal pm.Normal offsets. | pm.HalfNormal for prior on SDs (τ). |

| JAGS/NIMBLE | MCMC Engine | Gibbs and block sampling. Good for conjugate models, but manual reparameterization needed. | NIMBLE allows user-defined distributions. |

| TensorFlow Probability | Python Library | Scalable HMC/Variational Inference on CPUs/GPUs. For very high-dimensional problems. | Enables subsampling for large N. |

| CODA/bayesplot | R Diagnostic Package | Visualizing traces, posterior distributions, and calculating ESS/R-hat. | Essential for diagnosing sampling problems. |

| Bridge Sampling | R/Python Package | Computes marginal likelihoods for model comparison between complex hierarchical models. | bridgesampling package. |

| Simulated Data Generators | Custom Code | Validate methods by generating data from known complex models before applying to real data. | Use RxODE (R) or SimBiology (MATLAB) for PK/PD. |

Optimizing Proposal Distributions and Step Size for Faster Mixing