Beyond the Genome: The Ultimate Guide to Multi-Omics Data Integration in 2024



Abstract

This comprehensive guide explains multi-omics data integration, the transformative approach combining genomics, transcriptomics, proteomics, and metabolomics data. Aimed at researchers and drug development professionals, we demystify the foundational concepts, detail cutting-edge methodologies and bioinformatics tools, address common pitfalls and optimization strategies, and validate approaches through real-world applications in precision oncology and drug discovery. Learn how integrated analysis creates a holistic view of biological systems, moving beyond single-omics limitations to accelerate biomarker discovery and therapeutic development.

Decoding Complexity: What is Multi-Omics Integration and Why is it a Research Game-Changer?

1. Introduction

Multi-omics data integration is the coordinated analysis of multiple, distinct biological data layers ("omes") to construct a comprehensive model of biological systems. This approach transcends the limitations of single-omics studies, enabling the discovery of novel mechanistic insights, robust biomarkers, and therapeutic targets by connecting molecular cause to functional effect.

2. The Omics Cascade: Layers of Biological Information

The multi-omics universe is structured as a central dogma-informed cascade, where information flows from blueprint to function.

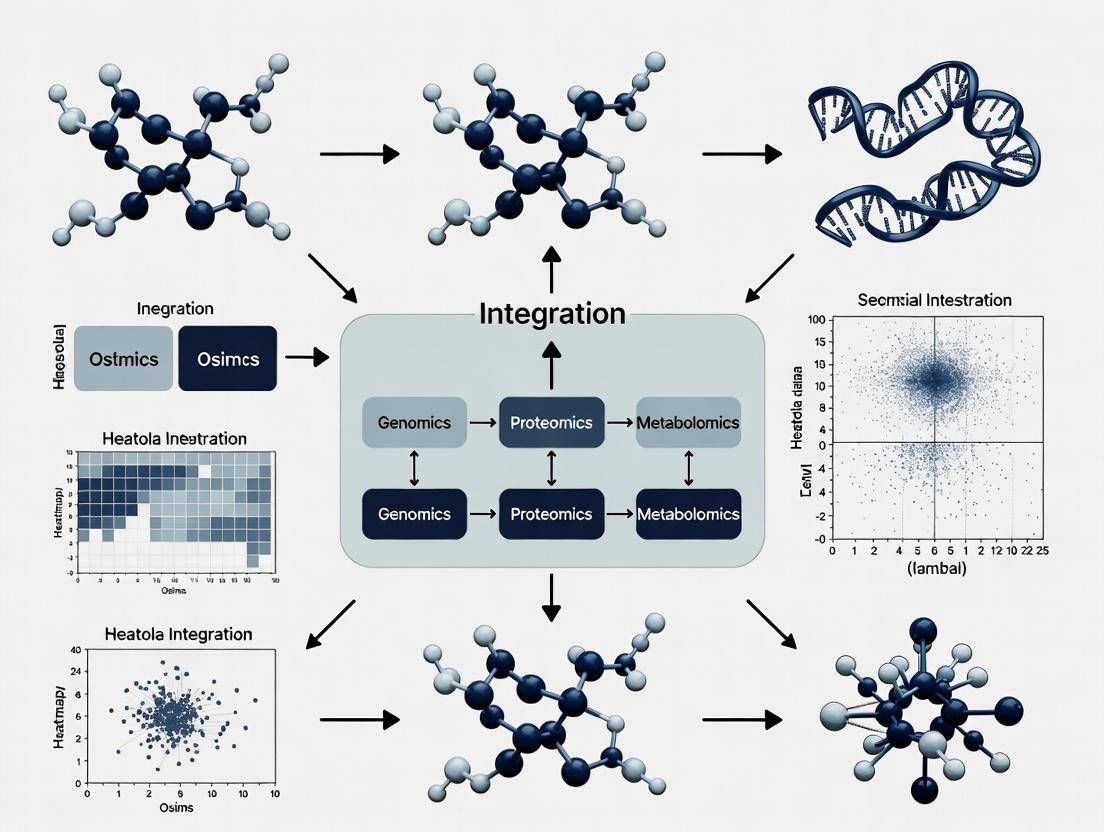

Diagram Title: The Central Omics Cascade

Table 1: Core Omics Layers and Their Quantitative Outputs

| Omics Layer | Molecular Entity | Key Technologies | Typical Output Scale | Temporal Dynamics |
|---|---|---|---|---|
| Genomics | DNA Sequence | WGS, WES, SNP Arrays | 3.2 billion bases (human) | Static (mostly) |
| Epigenomics | DNA/Chromatin Modifications | Bisulfite-seq, ChIP-seq, ATAC-seq | ~28M CpG sites (human) | Dynamic (hrs-days) |
| Transcriptomics | RNA Levels | RNA-seq, Single-cell RNA-seq | ~60,000 annotated genes (human) | Dynamic (mins-hrs) |
| Proteomics | Protein Abundance & PTMs | LC-MS/MS, TMT/SILAC, RPPA | >20,000 proteins; >1M PTMs | Dynamic (hrs-days) |
| Metabolomics | Small Molecule Metabolites | LC/GC-MS, NMR | >20,000 predicted metabolites | Dynamic (secs-mins) |
| Microbiomics | Microbial Communities | 16S rRNA-seq, Shotgun Metagenomics | 100s-1000s of species | Dynamic (days-weeks) |

3. Core Methodologies for Multi-Omics Integration

Integration strategies are categorized by their level of data fusion and analytical approach.

Diagram Title: Multi-Omics Integration Method Categories

Table 2: Characteristics of Common Integration Tools

| Tool/Algorithm | Integration Type | Typical Use Case | Scalability (Features x Samples) | Key Statistical Metric |
|---|---|---|---|---|
| MOFA/MOFA+ | Intermediate (Factor) | Identifying latent sources of variation | High (100k x 10k) | Variance Explained (R²) |
| WGCNA | Late (Correlation) | Co-expression network construction | Medium (50k x 500) | Module Eigengene |
| mixOmics | Early/Intermediate | Multi-class discrimination, Dimensionality reduction | Medium (10k x 1k) | Cross-Validation Error |
| LION | Late (Knowledge) | Metabolomics-pathway integration | Knowledge-based | Enrichment Significance (p-value) |
| Multi-omics GRN | Intermediate (Bayesian) | Gene Regulatory Network inference | Computationally Intensive | Edge Confidence Score |

4. Detailed Experimental Protocol: A Representative Multi-Omics Workflow

Protocol: Integrated Transcriptomics-Proteomics-Metabolomics Profiling of Cell Line Response to Drug Treatment

A. Sample Preparation (Triplicate)

- Treatment: Seed 1x10^6 cells per condition. Treat with compound vs. vehicle control for 24h.

- Harvest: Trypsinize, wash 2x with PBS. Aliquot into three equal pellets.

- Storage: Snap freeze pellets in liquid N₂. Store at -80°C for parallel omics extraction.

B. Parallel Omics Data Generation

- Transcriptomics (RNA-seq):
  - Extraction: Use TRIzol reagent with DNase I treatment. QC via Bioanalyzer (RIN > 8.0).
  - Library Prep: Poly-A selection, NEBNext Ultra II Directional RNA Library Prep.
  - Sequencing: Illumina NovaSeq, 2x150 bp, 30M reads/sample.
- Proteomics (LC-MS/MS with TMT Labeling):
  - Lysis: Resuspend pellet in 8M urea, 100mM TEAB, pH 8.5. Sonicate. Reduce and alkylate.
  - Digestion: Trypsin (1:50 w/w) overnight at 37°C. Desalt.
  - Labeling: Label peptides from each sample with a unique TMTpro 16-plex channel.
  - Fractionation: Pool labeled peptides and fractionate via basic pH reverse-phase HPLC.
  - MS: Analyze fractions on an Orbitrap Eclipse. MS1: 120k resolution; MS2: 50k resolution, HCD fragmentation.
- Metabolomics (HILIC LC-MS, Untargeted):
  - Extraction: Resuspend pellet in ice-cold 80% methanol. Vortex, sonicate, centrifuge (15,000 × g, 10 min, 4°C).
  - Analysis: Inject supernatant onto an Acquity BEH Amide column. Elute with a gradient (A: 95% ACN/20mM ammonium acetate; B: 50% ACN).
  - MS: Q-TOF in both positive and negative ESI modes, with data-dependent acquisition.

C. Data Processing & Integration

- Individual Omics Analysis:
  - RNA-seq: Align to the reference genome (STAR), quantify genes (featureCounts), and test for differential expression (DESeq2, adj. p < 0.05).
  - Proteomics: Database search (MaxQuant) with TMT reporter-ion quantification; differential abundance (limma, adj. p < 0.05).
  - Metabolomics: Peak picking and alignment (XCMS), annotation (METLIN); differential abundance (limma).
- Integration with MOFA+:
  - Input normalized matrices (log-transformed counts, log2 TMT ratios, log-transformed peak intensities).
  - Train the model to infer 5-10 latent factors.
  - Interpret factors via their loadings (genes/proteins/metabolites) and correlate them with phenotype.
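For the count-based RNA-seq view, one simple way to produce log-scale input is size-factor normalization followed by a log2 transform. The sketch below implements DESeq2-style median-of-ratios size factors in plain Python (genes with a zero count in any sample are excluded, as DESeq2 does) and then applies log2 with a pseudocount of 1. It approximates, and does not replace, DESeq2's own `vst()` or `rlog()` transforms; the function names are illustrative.

```python
import math
from statistics import median
from typing import List

Matrix = List[List[float]]  # rows = genes, columns = samples (raw counts)


def median_of_ratios_size_factors(counts: Matrix) -> List[float]:
    """DESeq2-style size factors: per-sample median of count / gene geometric mean."""
    n_samples = len(counts[0])
    log_ratios: List[List[float]] = [[] for _ in range(n_samples)]
    for row in counts:
        if min(row) <= 0:
            continue  # a zero count makes the geometric mean 0, so the gene is skipped
        log_geo_mean = sum(math.log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    return [math.exp(median(r)) for r in log_ratios]


def log2_normalized(counts: Matrix, pseudocount: float = 1.0) -> Matrix:
    """Divide each sample's counts by its size factor, then log2-transform."""
    sf = median_of_ratios_size_factors(counts)
    return [[math.log2(c / sf[j] + pseudocount) for j, c in enumerate(row)] for row in counts]
```

With `counts = [[10, 20], [100, 200], [5, 10], [0, 3]]`, the second sample has twice the depth of the first, so its size factor is twice as large and each fully observed gene ends up with equal normalized values in both samples.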

5. The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Vendor Examples | Function in Multi-Omics |
|---|---|---|
| TMTpro 16-plex Kit | Thermo Fisher Scientific | Isobaric labeling for multiplexed quantitative proteomics of up to 16 samples simultaneously. |
| NEBNext Ultra II Kits | New England Biolabs | High-efficiency library preparation for next-generation sequencing (RNA/DNA). |
| Single-Cell Multiome ATAC + Gene Exp. | 10x Genomics | Simultaneous profiling of chromatin accessibility and transcriptome in single nuclei. |
| Cellular Metabolomics Extraction Kit | Biotium | Optimized solvent system for quenching metabolism and extracting polar/neutral metabolites. |
| Sera-Mag Oligo(dT) Magnetic Beads | Cytiva | Poly-A mRNA capture for transcriptomics, compatible with automation. |
| PhosSTOP/EDTA-free cOmplete | Roche/Sigma-Aldrich | Preserve phospho-proteome and prevent protein degradation during lysis. |
| PBS, Mass Spec Grade | Thermo Fisher Scientific | Ensure minimal background ion contamination for sensitive proteomics/metabolomics. |

6. Signaling Pathway Reconstruction via Multi-Omics Integration

Integrated data enables mapping of active pathways from gene to metabolite.

Diagram Title: Multi-Omics Mapped Signaling Pathway

7. Conclusion and Future Directions

Defining the multi-omics universe is an ongoing endeavor. Success in multi-omics integration research hinges on rigorous experimental design, standardized protocols, and sophisticated computational tools that can handle the scale, noise, and biological complexity of these interconnected data layers. The future lies in real-time integration, single-cell multi-omics, and the incorporation of spatial technologies, moving ever closer to a complete, predictive digital model of the cell.

1. Introduction: The Multi-Omics Imperative

Multi-omics data integration research is the systematic effort to combine, analyze, and interpret heterogeneous datasets from diverse molecular layers—such as genomics, transcriptomics, proteomics, metabolomics, and epigenomics. The core thesis posits that biological function emerges from the complex interactions between these layers, and therefore, a unified narrative cannot be derived from any single 'omics' modality in isolation. The central challenge lies in overcoming the technical, computational, and biological disparities between these data silos to construct a coherent, systems-level model of biological state and function.

2. The Data Silo Landscape: Sources and Disparities

The following table summarizes the core quantitative characteristics of major omics modalities, highlighting the sources of integration complexity.

Table 1: Comparative Overview of Major Omics Data Modalities

| Modality | Key Measurement | Typical Technology | Throughput | Dynamic Range | Temporal Resolution |
|---|---|---|---|---|---|
| Genomics | DNA Sequence & Variation | NGS (WGS, WES) | Very High (Billions of reads) | Static (Diploid) | Static/Low |
| Epigenomics | DNA Methylation, Chromatin Accessibility | Bisulfite-seq, ATAC-seq | High | ~3-4 orders of magnitude | Medium-High |
| Transcriptomics | RNA Abundance (Coding & Non-coding) | RNA-seq, scRNA-seq | Very High | ~5 orders of magnitude | High |
| Proteomics | Protein Abundance & Modification | LC-MS/MS, TMT | Medium | ~4-5 orders of magnitude | Medium |
| Metabolomics | Small-Molecule Metabolite Levels | LC/GC-MS, NMR | Low-Medium | ~3-6 orders of magnitude | Very High |

3. Foundational Methodologies for Data Integration

3.1. Early Integration (Data-Level)

This approach merges raw or pre-processed data from multiple omics layers into a single composite dataset for joint analysis.

- Protocol: Concatenation-Based Integration for Multi-Omics Clustering.
  - Data Preprocessing: Independently normalize each omics matrix (e.g., gene counts, protein intensities) using modality-specific methods (e.g., DESeq2 for RNA-seq, vsn for proteomics).
  - Feature Selection: Keep the most variable features per modality (e.g., the top 1,000 by variance) to reduce dimensionality and noise.
  - Scaling & Concatenation: Scale the selected features from each matrix to zero mean and unit variance (Z-score). Horizontally concatenate the scaled matrices into a single samples × features matrix, $M_{\text{combined}}$.
  - Joint Analysis: Apply unsupervised learning algorithms (e.g., k-means or hierarchical clustering) directly to $M_{\text{combined}}$ to identify novel sample stratifications.
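The selection, scaling, and concatenation steps above can be sketched in plain Python. This is an illustrative implementation, assuming each view is already normalized and stored as a samples × features list of lists with rows in the same sample order; `k` plays the role of the "top 1,000" cutoff, and the function names are hypothetical.

```python
from statistics import mean, pstdev, variance
from typing import Dict, List

Matrix = List[List[float]]  # rows = samples, columns = features (one matrix per omics view)


def top_variable_columns(x: Matrix, k: int) -> List[int]:
    """Indices of the k features (columns) with the highest variance across samples."""
    n_features = len(x[0])
    variances = [variance([row[j] for row in x]) for j in range(n_features)]
    ranked = sorted(range(n_features), key=lambda j: variances[j], reverse=True)
    return sorted(ranked[:k])  # keep the original column order


def zscore_columns(x: Matrix, cols: List[int]) -> Matrix:
    """Return only the selected columns, each scaled to zero mean and unit variance."""
    stats = []
    for j in cols:
        col = [row[j] for row in x]
        sd = pstdev(col)
        stats.append((mean(col), sd if sd > 0 else 1.0))  # guard against constant features
    return [[(row[j] - mu) / sd for j, (mu, sd) in zip(cols, stats)] for row in x]


def early_integrate(views: Dict[str, Matrix], k: int) -> Matrix:
    """Top-k variable features per view, Z-scored, then concatenated column-wise (M_combined)."""
    blocks = [zscore_columns(x, top_variable_columns(x, k)) for x in views.values()]
    return [[v for block in blocks for v in block[i]] for i in range(len(blocks[0]))]
```

Z-scoring puts features from different modalities on a common scale, but a view that contributes more selected features still carries more weight in downstream distances; selecting the same `k` per view, as here, is one simple way to balance them.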

3.2. Intermediate Integration (Feature-Level)

This approach jointly infers a small set of latent variables (factors) shared across datasets and models each dataset in terms of them.

- Protocol: Multi-Omics Factor Analysis (MOFA/MOFA+).
  - Model Setup: Prepare omics datasets $\{X_1, X_2, \ldots, X_M\}$ for $N$ shared samples. Specify a likelihood per view (e.g., Gaussian for continuous data, Bernoulli for binary data such as binarized methylation calls).
  - Factorization: The model decomposes each data view as $X_m = Z W_m^\top + \varepsilon_m$, where $Z$ is the $N \times K$ matrix of latent factors shared across all omics, $W_m$ is the $D_m \times K$ matrix of view-specific weights ($D_m$ features in view $m$, $K$ factors), and $\varepsilon_m$ is noise.
  - Training: Use variational inference to estimate the parameters; sparsity priors switch off unneeded factors, so the number of active factors is learned from the data.
  - Interpretation: Correlate the latent factors ($Z$) with sample metadata (e.g., clinical outcome) and examine the top-weighted features ($W_m$) per factor and omics view to derive biological insights.
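Once a model has been trained, the variance explained ($R^2$) by each factor in each view can be computed directly from $Z$ and $W_m$, as $1 - \lVert X_m - z_k w_{m,k}^\top \rVert^2 / \lVert X_m \rVert^2$. The sketch below assumes the factor matrix `z` ($N \times K$) and one view's weights `w` ($D_m \times K$) are already available as plain lists and that the view is centered; it does not call the MOFA+ software, and the function names are illustrative.

```python
from typing import List

Matrix = List[List[float]]


def reconstruct(z: Matrix, w: Matrix, factors: List[int]) -> Matrix:
    """Z[:, factors] @ W[:, factors]^T: the part of X_m explained by the chosen factors."""
    return [[sum(z[i][k] * w[d][k] for k in factors) for d in range(len(w))] for i in range(len(z))]


def r2(x: Matrix, x_hat: Matrix) -> float:
    """1 - SS_residual / SS_total, with SS_total taken around zero (x assumed centered)."""
    ss_res = sum((a - b) ** 2 for row, row_hat in zip(x, x_hat) for a, b in zip(row, row_hat))
    ss_tot = sum(a ** 2 for row in x for a in row)
    return 1.0 - ss_res / ss_tot


def variance_explained(x: Matrix, z: Matrix, w: Matrix) -> List[float]:
    """Per-factor R^2 for one view, in the spirit of MOFA's variance decomposition."""
    return [r2(x, reconstruct(z, w, [k])) for k in range(len(z[0]))]
```

Running `variance_explained` on every view gives the factor-by-view $R^2$ table that is typically inspected to see which factors are shared across omics and which are view-specific.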

3.3. Late Integration (Decision-Level)

Analyses are performed separately for each omics layer, and results are integrated at the level of predictions or statistical inferences.

- Protocol: Bayesian Integrative Analysis for Biomarker Discovery.
  - Independent Analysis: For each omics dataset, perform differential analysis (e.g., DESeq2 for RNA-seq, limma for proteomics) comparing experimental conditions.
  - Result Harmonization: Extract p-values, effect sizes (e.g., log2 fold-change), and feature identifiers (e.g., gene symbols). Map all identifiers to a common namespace (e.g., official gene symbol).
  - Bayesian Meta-Analysis: For each mapped gene, combine evidence across omics layers using a Bayesian framework. Assuming the layers are conditionally independent given $H$, the posterior is $P(H \mid D) \propto P(D_{\text{genomics}} \mid H)\, P(D_{\text{transcriptomics}} \mid H)\, P(D_{\text{proteomics}} \mid H)\, P(H)$, where $H$ is the hypothesis of differential activity and $D$ denotes the combined data.
  - Decision Fusion: Rank genes by their posterior probability of being consistently altered across multiple omics levels, generating a multi-omics biomarker signature.
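A minimal sketch of the meta-analysis and decision-fusion steps, working in log-odds so that each layer contributes an additive log Bayes factor. The per-layer likelihoods under $H$ and under not-$H$ are hypothetical inputs here; in practice they would come from a model of each layer's test statistics, which the protocol does not specify. The prior and gene names are illustrative.

```python
import math
from typing import Dict, List, Sequence, Tuple


def posterior_probability(lik_h: Sequence[float], lik_not_h: Sequence[float], prior_h: float) -> float:
    """P(H | D) for one gene, assuming omics layers are conditionally independent given H."""
    log_odds = math.log(prior_h) - math.log(1.0 - prior_h)
    for lh, ln in zip(lik_h, lik_not_h):
        log_odds += math.log(lh) - math.log(ln)  # each layer adds its log Bayes factor
    # Numerically stable logistic transform from log-odds back to a probability
    if log_odds >= 0:
        return 1.0 / (1.0 + math.exp(-log_odds))
    e = math.exp(log_odds)
    return e / (1.0 + e)


def rank_genes(evidence: Dict[str, Tuple[List[float], List[float]]], prior_h: float = 0.1) -> List[str]:
    """Order genes by posterior probability of consistent alteration across layers."""
    post = {g: posterior_probability(lh, ln, prior_h) for g, (lh, ln) in evidence.items()}
    return sorted(post, key=post.get, reverse=True)
```

For example, with a prior of 0.5 and two layers whose likelihood ratios are 2 and 3, the posterior odds are 6 and the posterior probability is 6/7.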

4. Visualizing the Integration Pathway

Diagram Title: Multi-Omics Data Integration Conceptual Workflow

5. A Case Study: Integrating Signaling Pathways

A unified narrative often requires mapping multi-omic perturbations onto known biological pathways. Below is a simplified signaling pathway diagram derived from integrated genomic (mutations), transcriptomic (gene expression), and phospho-proteomic data.

Diagram Title: Integrated Multi-Omics View of PI3K-AKT-mTOR Signaling

6. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Tools for Multi-Omics Integration Studies

| Item | Category | Function in Multi-Omics Workflow |
|---|---|---|
| Single-Cell Multi-Omic Kits (e.g., 10x Genomics Multiome ATAC + Gene Exp.) | Wet-lab Reagent | Enables simultaneous assay of chromatin accessibility (epigenomics) and gene expression (transcriptomics) from the same single cell, providing intrinsically paired data. |
| Tandem Mass Tag (TMT) Reagents | Proteomics Reagent | Allows multiplexed quantitative analysis of up to 18 proteomes in a single LC-MS/MS run, reducing batch effects and enabling direct comparison across conditions for integration. |
| Cell Signaling Multiplex Panels (Luminex/LEGENDplex) | Immunoassay | Quantifies dozens of proteins (cytokines, phospho-proteins) from minute sample volumes, providing mid-throughput proteomic data linkable to transcriptomic reads. |
| Reference Databases (e.g., STRING, KEGG, Reactome) | Bioinformatics Resource | Provide prior knowledge networks of protein-protein interactions and pathway relationships, essential for interpreting and connecting features from disparate omics layers. |
| Integration Software Packages (e.g., MOFA+, mixOmics, MultiAssayExperiment in R) | Computational Tool | Provide standardized, statistically rigorous frameworks for implementing intermediate and late integration methods, ensuring reproducibility. |
| Synthetic Spike-In Standards (e.g., SIRVs for RNA-seq, UPS2 for proteomics) | Quality Control Reagent | Added to samples before processing to monitor and correct for platform-specific technical biases and detection limits across assays. |

7. Conclusion

Transitioning from data silos to a unified biological narrative is the defining challenge and opportunity of modern biology. Successful multi-omics data integration research requires a concerted cycle of experimental design that prioritizes matched samples, methodological selection appropriate to the biological question, and interpretation grounded in prior knowledge. By systematically applying the protocols, visualizations, and tools outlined herein, researchers can move beyond correlative lists to construct causative, mechanistic models that accelerate therapeutic discovery and precision medicine.

Multi-omics data integration research is the interdisciplinary field dedicated to developing and applying computational and statistical methods to combine diverse biological data sets (genomics, transcriptomics, proteomics, metabolomics, etc.) to construct comprehensive models of biological systems. This whitepaper delineates the two principal integration paradigms—vertical and horizontal—and situates them within the ultimate goal of achieving a predictive, systems-level understanding of biology, crucial for advancing biomarker discovery and therapeutic development.

Biological systems are inherently multi-layered. The central dogma (DNA → RNA → Protein) is an oversimplification of a dynamic, regulated network with extensive feedback and cross-talk. Multi-omics integration research seeks to move beyond single-data-type analysis to capture this complexity. The core challenge is methodological: how to effectively fuse heterogeneous, high-dimensional, and noisy data types measured across different scales and cohorts to yield biologically and clinically actionable insights.

Core Integration Paradigms

Two fundamental architectural strategies have emerged: Horizontal and Vertical Integration.

Horizontal Integration (Data-Level)

Horizontal integration, often implemented as concatenation-based (early) integration, involves combining multiple omics datasets from the same set of biological samples. The data matrices (e.g., gene expression, protein abundance) are aligned by sample ID and often concatenated into a single, wide feature matrix for downstream analysis.

- Objective: To find coordinated patterns (clusters, dimensions) that span multiple molecular layers within a defined cohort.

- Typical Use Case: Stratifying patient tumors into distinct molecular subtypes using combined genomic, epigenomic, and proteomic profiles from the same biopsy.

- Key Methods: Multi-omics Factor Analysis (MOFA), Similarity Network Fusion (SNF), multiple kernel learning, and integrated clustering approaches.
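Alignment by sample ID is what makes row-wise concatenation meaningful. The sketch below assumes each view is a hypothetical dictionary from sample ID to feature vector and keeps only samples profiled in every view (a complete-case policy); factor models such as MOFA can instead accommodate samples that are missing from some views.

```python
from typing import Dict, List, Tuple

View = Dict[str, List[float]]  # hypothetical layout: sample ID -> feature vector for one omics layer


def align_and_concatenate(views: Dict[str, View]) -> Tuple[List[str], List[List[float]]]:
    """Keep samples measured in every view, fix a common order, and concatenate their features."""
    shared = set.intersection(*(set(view) for view in views.values()))
    sample_ids = sorted(shared)  # deterministic row order shared by all views
    rows = [[value for view in views.values() for value in view[sid]] for sid in sample_ids]
    return sample_ids, rows
```

Features are concatenated in the order the views appear in the dictionary, so the column layout of the wide matrix is reproducible as long as the views are supplied in a fixed order.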

Vertical Integration (Model-Level)

Vertical integration focuses on modeling the flow of biological information across different omics layers for the same biological entity (e.g., a gene locus or a pathway). It prioritizes biological causality and regulatory mechanisms.

- Objective: To understand how variation at one level (e.g., genomic mutation) propagates to influence downstream layers (e.g., transcriptomic, proteomic, phenotypic outcomes).

- Typical Use Case: Identifying cis-regulatory mechanisms (eQTLs, pQTLs) or modeling the impact of a driver mutation on pathway activity and drug response.

- Key Methods: Bayesian networks, mechanistic modeling, molecular quantitative trait locus (molQTL) mapping, and pathway-centric enrichment analyses.
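Pathway-centric enrichment is often implemented as an over-representation test. A minimal sketch, assuming a one-sided hypergeometric test of the overlap between a list of hit genes and one pathway gene set drawn from a background universe of measured genes; the function and argument names are illustrative.

```python
from math import comb


def hypergeom_enrichment_p(k: int, n_hits: int, n_pathway: int, n_universe: int) -> float:
    """One-sided over-representation p-value, P(X >= k), with X ~ Hypergeometric.

    k: genes that are both hits and pathway members
    n_hits: size of the hit list (e.g., genes altered across omics layers)
    n_pathway: genes annotated to the pathway within the universe
    n_universe: all measured genes used as the background
    """
    upper = min(n_hits, n_pathway)
    tail = sum(
        comb(n_pathway, i) * comb(n_universe - n_pathway, n_hits - i)
        for i in range(k, upper + 1)
    )
    return tail / comb(n_universe, n_hits)
```

For example, `hypergeom_enrichment_p(3, 5, 10, 100)` asks how surprising it is that 3 of 5 hits fall in a 10-gene pathway when about 0.5 would be expected by chance. When many pathways are tested, the resulting p-values still need multiple-testing correction.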

Table 1: Horizontal vs. Vertical Integration: A Comparative Overview

| Feature | Horizontal Integration | Vertical Integration |
|---|---|---|
| Core Principle | Combine across omics by sample | Link omics layers by biological entity |
| Data Alignment | Samples (rows) aligned, features (columns) concatenated | Features (e.g., genes) aligned across layers for same sample/cohort |
| Primary Goal | Discovery of cross-omic patterns, subtypes, and biomarkers | Elucidation of mechanistic relationships and causal drivers |
| Temporal Aspect | Generally static/snapshot | Can incorporate directional or causal flow (e.g., genome → phenome) |
| Typical Output | Integrated patient clusters, multi-omics signatures | Regulatory networks, causal inference models, mechanistic hypotheses |
| Strengths | Holistic view of system state; powerful for stratification. | Provides biological interpretability and testable causal hypotheses. |
| Challenges | High dimensionality; difficult to separate correlation from causation. | Requires precise biological alignment; sensitive to missing data. |

Experimental Protocols for Key Integration Studies

Protocol for a Horizontal Integration Study: Multi-Omics Subtyping of Cancer

Objective: To identify novel molecular subtypes of breast cancer using matched DNA methylation, RNA-seq, and proteomics data from tumor biopsies.

- Sample Preparation: Extract high-quality DNA, RNA, and protein from the same tumor tissue core using a TRIzol-based or sequential extraction kit. Include matched normal adjacent tissue controls.
- Data Generation:
  - DNA Methylation: Process using the Illumina Infinium MethylationEPIC BeadChip. Perform normalization (ssNoob) and β-value calculation.
  - RNA-seq: Prepare libraries with poly-A selection. Sequence on an Illumina platform (minimum 30M paired-end reads). Align to the reference genome (STAR) and quantify gene expression (featureCounts).
  - Proteomics: Perform data-independent acquisition (DIA) mass spectrometry on trypsin-digested peptides. Use a spectral library for identification and quantification (Spectronaut or DIA-NN).
- Preprocessing: For each dataset, log-transform where appropriate (e.g., RNA-seq counts, proteomics intensities), remove low-variance features, and perform batch correction (ComBat). Because ComBat assumes approximately normally distributed data, it is applied after the log transformation.
- Integration & Analysis: Apply Similarity Network Fusion (SNF):
  - Construct patient similarity networks for each omics data type separately using a chosen metric (e.g., Euclidean distance).
  - Fuse the networks iteratively via a nonlinear message-passing process to create a single integrated network.
  - Apply spectral clustering to the fused network to identify patient clusters (subtypes).
- Validation: Assess cluster robustness via silhouette width and survival analysis (Kaplan-Meier curves, log-rank test) using an independent validation cohort.
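The SNF steps can be sketched in pure Python, following the original SNF formulation: a scaled exponential kernel on Euclidean distances, a full kernel $P$ and a sparse k-nearest-neighbor kernel $S$ per view, and cross-view diffusion $P^{(v)} \leftarrow S^{(v)} \big(\tfrac{1}{m-1}\sum_{u \ne v} P^{(u)}\big) S^{(v)\top}$. This simplified sketch omits the per-iteration normalization and symmetrization that published implementations (e.g., SNFtool) apply and stops before spectral clustering; `k`, `mu`, and `iterations` are illustrative defaults, not recommended settings.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def affinity(x: Matrix, k: int, mu: float = 0.5) -> Matrix:
    """Scaled exponential kernel W(i,j) = exp(-d(i,j)^2 / (mu * eps_ij)) on Euclidean distances."""
    n = len(x)
    d = [[math.dist(x[i], x[j]) for j in range(n)] for i in range(n)]
    # Mean distance from each sample to its k nearest neighbors (index 0 is the sample itself)
    knn_mean = [sum(sorted(row)[1:k + 1]) / k for row in d]
    w = [[1.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            eps = (knn_mean[i] + knn_mean[j] + d[i][j]) / 3.0
            if eps > 0:
                w[i][j] = math.exp(-d[i][j] ** 2 / (mu * eps))
    return w


def full_kernel(w: Matrix) -> Matrix:
    """P: diagonal fixed at 1/2, off-diagonal entries of each row scaled to sum to 1/2."""
    n = len(w)
    p = [[0.0] * n for _ in range(n)]
    for i in range(n):
        off = sum(w[i][j] for j in range(n) if j != i)
        for j in range(n):
            p[i][j] = 0.5 if i == j else w[i][j] / (2.0 * off)
    return p


def local_kernel(w: Matrix, k: int) -> Matrix:
    """S: keep each row's k largest affinities (the sample itself included), normalized to sum to 1."""
    n = len(w)
    s = [[0.0] * n for _ in range(n)]
    for i in range(n):
        nbrs = sorted(range(n), key=lambda j: w[i][j], reverse=True)[:k]
        total = sum(w[i][j] for j in nbrs)
        for j in nbrs:
            s[i][j] = w[i][j] / total
    return s


def snf(views: List[Matrix], k: int = 3, mu: float = 0.5, iterations: int = 20) -> Matrix:
    """Fuse per-view patient networks by cross-view diffusion; needs at least two views."""
    ws = [affinity(x, k, mu) for x in views]
    ps = [full_kernel(w) for w in ws]
    ss = [local_kernel(w, k) for w in ws]
    m, n = len(views), len(views[0])
    for _ in range(iterations):
        new_ps = []
        for v in range(m):
            others = [ps[u] for u in range(m) if u != v]
            avg = [[sum(p[i][j] for p in others) / len(others) for j in range(n)] for i in range(n)]
            new_ps.append(matmul(matmul(ss[v], avg), transpose(ss[v])))
        ps = new_ps  # synchronous update: every view uses the previous iteration's networks
    return [[sum(p[i][j] for p in ps) / m for j in range(n)] for i in range(n)]
```

The fused matrix returned by `snf` is the input to the spectral clustering step; the sparse kernel $S$ is what lets only strong, locally consistent similarities propagate between views.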

Protocol for a Vertical Integration Study: Mapping Proteomic Quantitative Trait Loci (pQTLs)

Objective: To identify genetic variants that influence plasma protein abundance levels, linking genomic variation to the functional proteome.

- Cohort & Genotyping: Utilize a population cohort with whole-genome sequencing (WGS) or dense genotyping array data. Perform standard QC: call rate >98%, Hardy-Weinberg equilibrium p > 1e-6, minor allele frequency (MAF) > 1%.

- Proteomic Profiling: Measure protein levels in plasma using a high-throughput aptamer-based platform (e.g., SomaScan) or multiplexed immunoassay (e.g., Olink). Normalize data using internal controls and correct for technical covariates.

- Covariate Adjustment: Regress out effects of age, sex, genetic principal components (PCs), and batch from the normalized protein abundances to obtain residuals.

- Statistical Mapping: Perform association testing with Matrix eQTL (R package MatrixEQTL) or PLINK:
  - For each protein (residuals as phenotype) and each genetic variant within a 1 Mb cis-window of the protein's encoding gene, fit the linear model `Protein ~ Genotype + Covariates`.
  - Apply Storey's q-value method for multiple-testing correction to identify significant cis-pQTLs (FDR < 0.05).
- Validation & Triangulation: Replicate findings in an independent cohort. Use Mendelian randomization or colocalization analysis (e.g., with the coloc R package) to assess shared causal signals with transcriptomic (eQTL) data or disease endpoints.
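The association and FDR steps can be sketched for a single protein as follows. This is a simplified illustration, not Matrix eQTL or PLINK: it assumes covariates have already been regressed out (so a simple regression of residuals on genotype dosage 0/1/2 suffices), uses a normal approximation for the Wald p-value (reasonable in large cohorts), and estimates Storey's $\pi_0$ at a single $\lambda$, whereas the qvalue R package smooths over a grid of $\lambda$ values by default.

```python
import math
from typing import List, Sequence, Tuple


def ols_slope(dosage: Sequence[float], residual: Sequence[float]) -> Tuple[float, float]:
    """Regress covariate-adjusted protein residuals on genotype dosage; return (beta, SE)."""
    n = len(dosage)
    gx = sum(dosage) / n
    ry = sum(residual) / n
    sxx = sum((g - gx) ** 2 for g in dosage)
    sxy = sum((g - gx) * (r - ry) for g, r in zip(dosage, residual))
    beta = sxy / sxx
    alpha = ry - beta * gx
    rss = sum((r - alpha - beta * g) ** 2 for g, r in zip(dosage, residual))
    return beta, math.sqrt(rss / (n - 2) / sxx)


def wald_p_normal(beta: float, se: float) -> float:
    """Two-sided p-value for beta / se under a normal approximation."""
    return math.erfc(abs(beta / se) / math.sqrt(2.0))


def storey_qvalues(pvals: Sequence[float], lam: float = 0.5) -> List[float]:
    """Storey q-values using a single-lambda estimate of pi0 (the proportion of true nulls)."""
    m = len(pvals)
    pi0 = min(1.0, sum(p > lam for p in pvals) / (m * (1.0 - lam)))
    order = sorted(range(m), key=lambda i: pvals[i])
    q = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # largest p-value first, enforcing monotonic q-values
        i = order[rank - 1]
        running_min = min(running_min, pi0 * m * pvals[i] / rank)
        q[i] = running_min
    return q
```

With $\pi_0 = 1$ the q-values reduce to Benjamini-Hochberg adjusted p-values; a smaller estimated $\pi_0$ makes the procedure correspondingly less conservative.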

Visualizing the Pathways and Workflows

Diagram Title: Horizontal and Vertical Integration Workflows

Diagram Title: The Quest for Systems Biology: An Integrated Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Kits for Multi-Omics Sample Preparation

| Item | Function in Multi-Omics Research | Key Considerations |
|---|---|---|
| AllPrep DNA/RNA/Protein Kit (Qiagen) | Simultaneous purification of genomic DNA, total RNA, and protein from a single biological sample. | Preserves molecular integrity for all analytes; critical for ensuring perfect sample matching in vertical integration studies. |
| TRIzol/ TRI Reagent | Monophasic solution for sequential isolation of RNA, DNA, and proteins from cell/tissue lysates. | Cost-effective and widely validated, but requires careful phase separation and may involve more hands-on time. |
| Single-Cell Multiome ATAC + Gene Expression Kit (10x Genomics) | Enables concurrent profiling of chromatin accessibility (ATAC-seq) and gene expression (RNA-seq) from the same single cell. | Enables vertical integration at the single-cell level, linking regulatory landscape to transcriptional output. |
| SomaScan Plasma Protein Assay (SomaLogic) | Aptamer-based platform for measuring ~7,000 human protein analytes from small volumes of plasma or serum. | Provides the high-throughput proteomic data essential for population-scale pQTL studies (vertical integration). |
| Olink Target 96 or Explore Panels | Proximity Extension Assay (PEA) technology for high-specificity, multiplex quantification of proteins in biofluids. | Offers high sensitivity and specificity, suitable for low-abundance biomarker discovery in clinical cohorts. |
| TotalSeq Antibodies (BioLegend) | Oligo-conjugated antibodies for measuring surface or intracellular proteins alongside the transcriptome in single-cell RNA-seq (CITE-seq/REAP-seq). | Facilitates horizontal integration of protein and RNA data at single-cell resolution within the same experiment. |

Horizontal and vertical integration are not competing strategies but complementary approaches within multi-omics data integration research. Horizontal integration provides a panoramic, static view of system states, ideal for classification and biomarker discovery. Vertical integration drills down to establish mechanistic, often causal, links between molecular layers. The true quest for systems biology lies in the iterative cycling between these paradigms: using horizontal discovery to generate hypotheses about novel subtypes, which are then mechanistically deconstructed using vertical integration, ultimately feeding into predictive, multi-scale models of health and disease. This integrative loop is foundational to the future of precision medicine and rational drug development.

Within the transformative field of multi-omics data integration research, the move from hypothesis-driven inquiry to unbiased, data-driven discovery represents a fundamental paradigm shift. This approach leverages high-throughput technologies and advanced computational methods to generate novel insights from complex biological systems without a priori assumptions, accelerating biomarker identification and therapeutic target discovery.

The Data-Driven Multi-Omics Integration Pipeline

Modern unbiased discovery relies on the systematic generation and integration of multiple omics layers. The quantitative scale of data involved is substantial.

Table 1: Scale and Sources in Contemporary Multi-Omics Studies

| Omics Layer | Typical Measurement Technology | Approx. Features per Sample | Key Output Measured |
|---|---|---|---|
| Genomics | Whole Genome Sequencing (WGS) | 3-5 million SNPs/Indels | Genetic variation, mutations |
| Transcriptomics | Bulk/Single-cell RNA-seq | 20,000-60,000 genes/transcripts | Gene expression levels |
| Proteomics | Mass Spectrometry (TMT/LFQ) | 3,000-10,000 proteins | Protein abundance, PTMs |
| Metabolomics | LC-MS, GC-MS, NMR | 100-1,000 metabolites | Small molecule abundance |
| Epigenomics | ATAC-seq, ChIP-seq, Bisulfite-seq | 100,000s of peaks/sites | Chromatin accessibility, methylation |

Core Experimental Protocols for Unbiased Discovery

Protocol 1: Cross-Omic Sample Preparation for Integrative Analysis

Objective: To generate matched genomic, transcriptomic, and proteomic data from a single biological specimen (e.g., tumor biopsy).

- Tissue Partitioning & Lysis: Snap-frozen tissue is cryo-pulverized. Powder is divided into aliquots in DNA/RNA Shield, RIPA buffer (with protease inhibitors), and metabolomics stabilization solution.
- Parallel Nucleic Acid & Protein Extraction:
  - DNA/RNA: Use a dual-prep kit (e.g., AllPrep). Homogenize in RLT Plus buffer, pass through an AllPrep DNA column. Flow-through is mixed with ethanol for binding RNA to a separate column. DNA and RNA are eluted separately.
  - Proteins: The remaining tissue powder in RIPA is sonicated (3 × 10 s pulses, 30% amplitude). Lysate is centrifuged at 14,000 × g for 15 min at 4°C. Supernatant is quantified via BCA assay.
- Library Preparation & Sequencing:
  - DNA: 100 ng input for WGS library prep (enzymatic fragmentation, end-repair, A-tailing, adapter ligation, PCR amplification).
  - RNA:
    - For bulk: 500 ng input for poly-A selection and stranded cDNA library prep.
    - For single-cell: Generate single-cell suspensions, target 10,000 cells for 10x Genomics 3' v4 chemistry.
- Mass Spectrometry Proteomics:
  - 50 µg protein per sample is reduced (DTT), alkylated (IAA), and digested with trypsin (1:50 ratio) overnight at 37°C.
  - Peptides are labeled with TMTpro 16plex reagent, pooled, and fractionated by high-pH reverse-phase HPLC into 24 fractions.
  - LC-MS/MS analysis on an Orbitrap Eclipse with a 120 min gradient.
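The input arithmetic for the digestion step can be made explicit. The sketch below assumes hypothetical BCA concentrations (µg/µL) and a hypothetical common digest volume of 50 µL; it computes the lysate volume that delivers 50 µg of protein, the buffer needed to equalize volumes across samples, and the trypsin amount implied by the 1:50 (w/w) enzyme-to-protein ratio.

```python
from typing import Dict, Tuple


def plan_digest_inputs(
    bca_ug_per_ul: Dict[str, float],
    target_ug: float = 50.0,
    final_ul: float = 50.0,        # hypothetical common digest volume
    enzyme_ratio: float = 1 / 50,  # trypsin:protein, w/w
) -> Dict[str, Tuple[float, float, float]]:
    """Return {sample: (lysate_uL, buffer_uL, trypsin_ug)} for equal-input digests."""
    plan = {}
    for sample, conc in bca_ug_per_ul.items():
        if conc <= 0:
            raise ValueError(f"{sample}: BCA concentration must be positive")
        lysate_ul = target_ug / conc
        if lysate_ul > final_ul:
            raise ValueError(f"{sample}: needs {lysate_ul:.1f} uL, more than the {final_ul} uL digest volume")
        plan[sample] = (round(lysate_ul, 2), round(final_ul - lysate_ul, 2), round(target_ug * enzyme_ratio, 2))
    return plan
```

For example, a lysate at 2.5 µg/µL needs 20 µL plus 30 µL of buffer, and every 50 µg digest receives 1 µg of trypsin; a sample too dilute to fit the common volume is flagged rather than silently under-loaded.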

Protocol 2: Single-Cell Multi-Omics (CITE-seq)

Objective: Simultaneously capture transcriptome and surface protein data from single cells.

- Cell Preparation: Generate a single-cell suspension from fresh tissue (dissociation enzyme cocktail, 37°C, 15-30 min). Pass through a 40µm strainer. Stain with 1-2µL of TotalSeq-B antibody cocktail (containing ~100 barcoded antibodies) per million cells for 30 min on ice.

- Washing & Loading: Wash cells 3x with PBS + 0.04% BSA. Count and assess viability (>90%). Load cells onto a 10x Genomics Chromium Chip B to target 10,000 cells.

- GEM Generation & Library Prep: Follow the manufacturer's protocol. GEMs undergo RT, after which cDNA is purified and split for separate library construction:
  - Gene Expression Library: Amplify cDNA, fragment, and add sample index via PCR.
  - Antibody-Derived Tag (ADT) Library: Amplify the antibody-derived tags from the cDNA pool using a separate primer set.
- Sequencing: Pool libraries and sequence on an Illumina NovaSeq using the standard 10x 3' read configuration (Read 1: 28 cycles for cell barcode and UMI; i7 index: 10 cycles; i5 index: 10 cycles; Read 2: 90 cycles for the cDNA or antibody-tag insert).
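The 28-cycle Read 1 carries the cell barcode and UMI, and the shared cell barcode is what links each cell's gene-expression and ADT reads. The sketch below assumes the 10x Genomics 3' v3/v3.1 Read 1 layout (16 bp cell barcode followed by a 12 bp UMI) and counts unique UMIs per cell and feature. Production pipelines such as Cell Ranger also correct barcodes against a whitelist and collapse UMI sequencing errors, which this sketch does not do; feature IDs are assumed to have been assigned from Read 2 beforehand.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple, Tuple

# Assumed 10x Genomics 3' v3/v3.1 Read 1 layout: 16 bp cell barcode + 12 bp UMI = 28 cycles
BARCODE_LEN = 16
UMI_LEN = 12


class Read1(NamedTuple):
    cell_barcode: str
    umi: str


def parse_read1(seq: str) -> Read1:
    """Split a Read 1 sequence into its cell barcode and UMI."""
    if len(seq) < BARCODE_LEN + UMI_LEN:
        raise ValueError(f"Read 1 too short: {len(seq)} < {BARCODE_LEN + UMI_LEN}")
    return Read1(seq[:BARCODE_LEN], seq[BARCODE_LEN:BARCODE_LEN + UMI_LEN])


def count_umis(records: Iterable[Tuple[str, str]]) -> Dict[str, Dict[str, int]]:
    """(read1_sequence, feature_id) pairs -> {cell_barcode: {feature_id: unique UMI count}}."""
    umis = defaultdict(set)
    for read1, feature in records:
        r = parse_read1(read1)
        umis[(r.cell_barcode, feature)].add(r.umi)  # duplicate reads of one molecule collapse here
    counts: Dict[str, Dict[str, int]] = defaultdict(dict)
    for (cell, feature), seen in umis.items():
        counts[cell][feature] = len(seen)
    return dict(counts)
```

Counting UMIs rather than reads removes PCR duplicates, which is why the same barcode/UMI pair observed twice for one antibody contributes a single count.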

Visualizing the Data-Driven Workflow and Integration Logic

Diagram Title: Workflow for Unbiased Multi-Omic Discovery

Diagram Title: Multi-Omic Data Integration Method Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Kits for Data-Driven Multi-Omic Studies

| Item | Function in Protocol | Example Product/Catalog |
|---|---|---|
| Dual DNA/RNA Purification Kit | Simultaneous extraction of high-quality genomic DNA and total RNA from a single sample, minimizing sample variability. | Qiagen AllPrep DNA/RNA/miRNA Universal Kit |
| Tandem Mass Tag (TMT) Reagents | Multiplexed isobaric labeling for quantitative proteomics, enabling comparison of up to 16 samples in a single MS run. | Thermo Fisher TMTpro 16plex Label Reagent Set |
| Single-Cell Antibody Cocktail (CITE-seq) | Oligo-tagged antibodies for measuring surface protein abundance alongside transcriptome in single cells. | BioLegend TotalSeq-B Human Universal Cocktail |
| Single-Cell 3' GEX Kit v4 | Generation of gel bead-in-emulsions (GEMs) and libraries for single-cell RNA-seq gene expression profiling. | 10x Genomics Chromium GEM-X Single Cell 3' Kit v4 |
| High-Throughput NGS Library Prep Kit | Fast, automated library construction for whole-genome sequencing from low-input DNA. | Illumina DNA Prep |
| SP3 Paramagnetic Beads | Efficient protein clean-up (including removal of detergents such as SDS) and on-bead digestion for proteomics, compatible with automated workflows. | Cytiva SpeedBeads Magnetic Carboxylate Modified Particles |
| Cell Dissociation Enzyme | Gentle tissue dissociation for generating viable single-cell suspensions from complex tissues. | Miltenyi Biotec gentleMACS Human Tumor Dissociation Kit |
| LC-MS Grade Solvents | Ultra-pure solvents for metabolomics and proteomics LC-MS to minimize background noise and ion suppression. | Honeywell LC-MS CHROMASOLV Water & Acetonitrile |

Abstract: The integration of multi-omics data—genomics, transcriptomics, proteomics, metabolomics—is revolutionizing the path from biomarker discovery to mechanistic disease understanding. This whitepaper provides a technical guide to the core methodologies, experimental protocols, and analytical frameworks driving this transformation, contextualized within the broader thesis of multi-omics integration research.

Multi-omics data integration research is predicated on the thesis that a holistic, systems-level view of biological systems, achieved by computationally and statistically combining diverse molecular data layers, yields insights unattainable through single-omics studies. This approach is essential for disentangling complex disease etiologies, identifying robust biomarkers, and uncovering novel therapeutic targets.

Core Integration Strategies & Quantitative Impact

The choice of integration strategy is dictated by the biological question and data types. The performance of these methods is quantitatively benchmarked using metrics such as accuracy in predicting clinical outcomes, number of novel disease subtypes identified, and validation rates of discovered biomarkers.

Table 1: Comparison of Primary Multi-Omics Integration Strategies

| Strategy | Description | Key Algorithms/Tools | Typical Use Case | Reported Performance Gain vs. Single-Omics |
|---|---|---|---|---|
| Early Integration | Raw or pre-processed data concatenated before analysis. | Standard ML (Random Forest, SVM), Deep Neural Networks. | Predictive modeling with abundant samples. | +15-25% in clinical outcome prediction accuracy. |
| Intermediate Integration | Separate analysis followed by fusion of lower-dimensional representations. | Multi-Omics Factor Analysis (MOFA), Similarity Network Fusion (SNF). | Discovery of coordinated molecular patterns and patient stratification. | Identifies 2-4 novel, clinically relevant disease subtypes. |
| Late Integration | Separate analyses with results combined at decision/interpretation level. | Bayesian frameworks, Ensemble methods, P-value aggregation. | Biomarker signature validation and causal inference. | Increases biomarker validation rate by ~30%. |
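P-value aggregation, listed above as a late-integration tool, can be illustrated with Fisher's method: for one feature with independent p-values \( p_1, \ldots, p_k \) from \( k \) omics layers, \( X = -2 \sum_i \ln p_i \) follows a \( \chi^2 \) distribution with \( 2k \) degrees of freedom under the null hypothesis. The sketch below is a minimal, dependency-free illustration; the function name and input values are hypothetical, and because layers measured on the same patients are rarely independent, the combined p-value should be read as optimistic.

```python
import math


def fisher_combine(pvalues):
    """Combine independent p-values for one feature with Fisher's method.

    Returns (statistic, combined_p). Under the null hypothesis the statistic
    X = -2 * sum(ln p_i) follows a chi-squared distribution with 2k degrees
    of freedom, whose survival function has a closed form for even df.
    """
    k = len(pvalues)
    if k == 0:
        raise ValueError("need at least one p-value")
    if any(not 0.0 < p <= 1.0 for p in pvalues):
        raise ValueError("p-values must lie in (0, 1]")
    stat = -2.0 * sum(math.log(p) for p in pvalues)
    # P(X > x) for df = 2k: exp(-x/2) * sum_{i=0}^{k-1} (x/2)^i / i!
    half = stat / 2.0
    term, total = 1.0, 1.0
    for i in range(1, k):
        term *= half / i
        total += term
    return stat, min(math.exp(-half) * total, 1.0)


# Hypothetical example: one gene tested separately in three omics layers.
stat, combined_p = fisher_combine([0.04, 0.01, 0.20])
print(f"X^2 = {stat:.3f}, combined p = {combined_p:.4g}")
```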

Experimental Protocol: A Longitudinal Multi-Omics Cohort Study

This protocol outlines a standard workflow for an integrated biomarker discovery study.

A. Study Design & Sample Collection:

- Cohort: Recruit a prospective cohort (e.g., N=500) of patients and matched healthy controls.

- Biospecimens: Collect primary tissue (e.g., tumor biopsies) and liquid biopsies (blood, plasma, serum) at diagnosis and key timepoints (e.g., post-treatment).

- Aliquoting: Immediately aliquot samples to minimize freeze-thaw cycles. Store at -80°C or in liquid nitrogen.

B. Multi-Omics Data Generation:

- Genomics (DNA from tissue/blood): Perform Whole Genome Sequencing (WGS) or Whole Exome Sequencing (WES) to identify somatic mutations, copy number variations (CNVs), and structural variants. Use Illumina NovaSeq platforms. Average coverage: 30x (WGS) / 100x (WES).

- Transcriptomics (RNA from tissue): Conduct bulk or single-cell RNA-Seq (scRNA-Seq). For bulk, use Illumina platforms targeting 50 million reads/sample. For scRNA-Seq, employ 10x Genomics Chromium system.

- Proteomics & Phosphoproteomics (Tissue/Plasma): Perform data-independent acquisition (DIA) mass spectrometry (e.g., on a Thermo Fisher Orbitrap Eclipse) for deep, quantitative profiling. Enrich phosphopeptides using TiO₂ or IMAC kits.

- Metabolomics (Plasma/Serum): Apply both targeted (LC-MS/MS with authentic standards) and untargeted (high-resolution LC-MS) platforms.

C. Data Preprocessing & Integration:

- Bioinformatic Processing: Use established pipelines (GATK for genomics, STAR/Kallisto for RNA-Seq, DIA-NN for proteomics, XCMS for metabolomics).

- Intermediate Integration via MOFA2:

  - Input: Matrices of mutations (binary), gene expression (normalized counts), protein abundance (log2 intensities), metabolite levels (scaled).

  - Run MOFA2 to decompose the data into a set of latent factors that capture shared and specific sources of variation across omics layers.

  - Correlate factors with clinical metadata to interpret biology.
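The final step, correlating factors with clinical metadata, is simple once the factors are available. A minimal sketch, assuming the factor values have been exported from the trained MOFA2 model as a samples × factors NumPy array (the export step, the function name and the simulated data are illustrative, not part of the MOFA2 API):

```python
import numpy as np


def factor_covariate_correlation(Z, covariate):
    """Pearson correlation between each latent factor and one clinical covariate.

    Z: (n_samples, n_factors) factor values exported from a trained model.
    covariate: (n_samples,) numeric variable (e.g., age, or 0/1 response);
               samples with NaN in the covariate are dropped.
    Returns an array of length n_factors.
    """
    Z = np.asarray(Z, dtype=float)
    y = np.asarray(covariate, dtype=float)
    keep = ~np.isnan(y)
    Zc = Z[keep] - Z[keep].mean(axis=0)
    yc = y[keep] - y[keep].mean()
    denom = np.sqrt((Zc ** 2).sum(axis=0) * (yc ** 2).sum())
    return (Zc * yc[:, None]).sum(axis=0) / denom


# Simulated example: factor 1 tracks a treatment-response covariate.
rng = np.random.default_rng(0)
response = rng.integers(0, 2, size=100).astype(float)
Z = rng.normal(size=(100, 5))
Z[:, 1] += 2.0 * response
print(np.round(factor_covariate_correlation(Z, response), 2))
```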

Visualizing Integrated Workflows and Pathways

Title: Multi-Omics Integration Analysis Workflow

Title: Integrated Pathway Inference from Multi-Omics Data

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Kits for Multi-Omics Studies

| Item | Function | Example Product/Kit |
|---|---|---|
| PAXgene Blood RNA Tube | Stabilizes intracellular RNA in blood samples for transcriptomic studies, preserving gene expression profiles. | BD PAXgene Blood RNA Tubes |
| Streptavidin Magnetic Beads | Critical for immunoprecipitation and pull-down assays in protein-protein interaction studies and target validation. | Dynabeads Streptavidin |
| Phosphopeptide Enrichment Kit | Selective enrichment of phosphorylated peptides from complex digests for deep phosphoproteomic profiling. | Cytiva TiO₂ Mag Sepharose |
| Single-Cell 3' Gel Bead Kit | Enables partitioning and barcoding of single cells for transcriptome analysis in droplet-based scRNA-Seq. | 10x Genomics Chromium Next GEM Kit |
| Plasma/Serum Metabolome Kit | Depletes proteins and extracts metabolites from biofluids with high recovery and reproducibility for metabolomics. | Biocrates AbsoluteIDQ p400 HR Kit |
| Multi-Omics Tissue Homogenizer | Provides rapid, uniform disruption of tough tissues while keeping RNA, DNA, and proteins intact for co-extraction. | Bertin Instruments Precellys Homogenizer |

The Integration Toolkit: A 2024 Guide to Methods, Workflows, and Practical Applications

Multi-omics data integration research aims to combine diverse biological data layers—genomics, transcriptomics, proteomics, metabolomics—to construct a comprehensive model of biological systems. This paradigm is essential for unraveling complex disease mechanisms and identifying robust therapeutic targets. The fundamental architectural decision in this workflow is the choice between Early (Data-Level) Integration and Late (Model-Level) Integration. This guide provides a technical framework for selecting the appropriate strategy based on experimental design and analytical goals.

Core Strategies: Definitions and Technical Foundations

Early Integration (Data-Level Fusion)

In early integration, heterogeneous omics datasets are combined into a single, unified data matrix before model building. This requires extensive preprocessing to normalize, scale, and transform disparate data types into a compatible format.

Late Integration (Model-Level Fusion)

Late integration involves building separate models or performing separate analyses on each omics dataset independently. The results (e.g., learned features, statistical scores, predicted labels) are then integrated at the decision or interpretation level.
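The simplest decision-level combiner averages the class probabilities predicted by each modality's model (soft voting). A minimal sketch, assuming the per-omics classifiers are already trained and return probabilities for the same classes in the same column order; the function name and the optional weighting are illustrative, and stacking or Bayesian combination are common alternatives.

```python
import numpy as np


def late_fusion(probas, weights=None):
    """Decision-level fusion of class probabilities from per-omics models.

    probas: list of (n_samples, n_classes) arrays, one per omics model, with
            rows aligned to the same samples and columns to the same classes.
    weights: optional non-negative weight per model (e.g., its cross-validated
             accuracy); equal weights are used when omitted.
    Returns the fused probabilities and the predicted class index per sample.
    """
    stacked = np.stack([np.asarray(p, dtype=float) for p in probas])  # (m, n, c)
    w = np.ones(len(probas)) if weights is None else np.asarray(weights, dtype=float)
    w = w / w.sum()
    fused = np.tensordot(w, stacked, axes=1)  # weighted average over models
    return fused, fused.argmax(axis=1)


# Hypothetical outputs of an RNA-seq model and a proteomics model for 2 patients.
rna_proba = [[0.9, 0.1], [0.4, 0.6]]
prot_proba = [[0.6, 0.4], [0.2, 0.8]]
fused, labels = late_fusion([rna_proba, prot_proba])
print(fused, labels)
```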

Quantitative Comparison of Integration Strategies

The following table summarizes the key computational and practical characteristics of each approach, synthesized from current benchmarking studies.

Table 1: Strategic Comparison of Early vs. Late Integration

| Characteristic | Early Integration | Late Integration |
|---|---|---|
| Data Handling | Raw or preprocessed data matrices concatenated. | Each dataset processed independently; results combined. |
| Dimensionality | Very high, prone to the "curse of dimensionality." | Manages dimensionality within each modality separately. |
| Handling Heterogeneity | Challenging; requires sophisticated normalization. | Easier; modality-specific processing is applied. |
| Model Complexity | Single, often complex model (e.g., deep neural network). | Multiple simpler models or ensemble methods. |
| Interpretability | Can be low; difficult to disentangle modality-specific signals. | Higher; modality-specific contributions remain clearer. |
| Optimal Use Case | Strong inter-modal correlations; ample sample size. | Weak correlations between modalities; distinct data structures. |
| Key Challenge | Noise propagation across modalities. | Designing a robust framework for combining disparate results. |

Table 2: Performance Metrics from Benchmarking Studies (Hypothetical Data)

| Study Focus | Early Integration Method | Late Integration Method | Reported Accuracy | Key Limitation Noted |
|---|---|---|---|---|
| Cancer Subtype Classification | Concatenation + PCA + SVM | Kernel Fusion | 89.2% | Early: Sensitivity to batch effects |
| Drug Response Prediction | Stacked Autoencoders | Similarity Network Fusion | 82.5% | Late: Loss of direct feature interaction |
| Patient Survival Stratification | Partial Least Squares | Multi-Kernel Learning | 76.8% | Early: Lower performance on sparse data |

Detailed Experimental Protocols

Protocol 4.1: Implementing Early Integration via Concatenation & Dimensionality Reduction

Objective: To integrate transcriptomics (RNA-Seq) and proteomics (LC-MS) data for sample classification.

Materials: Normalized count matrix (RNA-Seq), Log2-transformed intensity matrix (LC-MS), Standardized computational environment (R/Python).

Procedure:

- Preprocessing: Perform quantile normalization on each dataset separately to adjust for technical variation.

- Feature Selection: Apply variance-based selection (e.g., keep the 2,000 most variable features per modality).

- Scaling: Standardize each feature (mean=0, variance=1) across samples within each dataset.

- Concatenation: Horizontally merge the two scaled matrices by sample ID to create a unified matrix of dimensions [Samples × (Features_RNA + Features_Protein)].

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) or non-linear methods (t-SNE, UMAP) to the unified matrix.

- Downstream Analysis: Use derived components for clustering (e.g., k-means) or classification (e.g., random forest).
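The preprocessing-to-PCA part of Protocol 4.1 can be sketched in NumPy as follows. It assumes both matrices are oriented features × samples, contain no missing values, and already share the same sample order; the function names are hypothetical, and the downstream clustering or classification step is left out.

```python
import numpy as np


def quantile_normalize(X):
    """Quantile-normalize the columns (samples) of a features x samples matrix.

    Ties are ranked by position rather than averaged, a simplification.
    """
    ranks = np.argsort(np.argsort(X, axis=0), axis=0)
    reference = np.sort(X, axis=0).mean(axis=1)  # mean of each rank across samples
    return reference[ranks]


def top_variance(X, n):
    """Keep the n features (rows) with the highest variance across samples."""
    idx = np.argsort(X.var(axis=1))[::-1][:n]
    return X[np.sort(idx)]


def zscore_rows(X):
    """Standardize each feature to mean 0 and variance 1 across samples."""
    sd = X.std(axis=1, keepdims=True)
    sd[sd == 0] = 1.0
    return (X - X.mean(axis=1, keepdims=True)) / sd


def early_integrate(rna, prot, n_features=2000, n_components=10):
    """Protocol 4.1 on features x samples matrices whose columns are already
    aligned to the same sample IDs; returns samples x principal components."""
    blocks = []
    for X in (rna, prot):
        X = quantile_normalize(np.asarray(X, dtype=float))
        X = top_variance(X, min(n_features, X.shape[0]))
        blocks.append(zscore_rows(X))
    # Unified matrix: samples x (features_RNA + features_protein).
    unified = np.vstack(blocks).T
    # PCA via SVD of the column-centered unified matrix.
    centered = unified - unified.mean(axis=0)
    U, S, _ = np.linalg.svd(centered, full_matrices=False)
    k = min(n_components, S.size)
    return U[:, :k] * S[:k]


# Simulated matrices: 500 genes and 300 proteins measured on the same 20 samples.
rng = np.random.default_rng(1)
rna = rng.lognormal(size=(500, 20))
prot = rng.normal(20.0, 2.0, size=(300, 20))
scores = early_integrate(rna, prot, n_features=100, n_components=5)
print(scores.shape)
```

Selecting the same number of features per modality before concatenation keeps either modality from dominating the principal components simply by contributing more columns.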

Protocol 4.2: Implementing Late Integration via Similarity Network Fusion (SNF)

Objective: To integrate epigenetic (DNA methylation) and transcriptomic data for discovering disease subgroups.

Materials: Beta-value matrix (Methylation), Normalized expression matrix (RNA-Seq), SNFtool R package.

Procedure:

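- Construct Modality-Specific Similarity Networks:

  - For each data modality, calculate a patient-to-patient distance matrix (e.g., Euclidean distance) and convert it into a similarity matrix \( W \).

  - From each similarity matrix, derive a full row-normalized matrix \( P \) and a sparse, KNN-based matrix \( S \) that keeps only each patient's nearest neighbors, giving \( P_{\text{methylation}}, S_{\text{methylation}} \) and \( P_{\text{expression}}, S_{\text{expression}} \).

- Fuse Networks Iteratively:

  - Use the SNF algorithm to iteratively update each network so that it reflects information from the other network.

  - For two networks, each iteration diffuses the other modality's full matrix along the current modality's sparse KNN graph:

    \[ P_{\text{expression}}^{(t+1)} = S_{\text{expression}} \, P_{\text{methylation}}^{(t)} \, S_{\text{expression}}^{T}, \qquad P_{\text{methylation}}^{(t+1)} = S_{\text{methylation}} \, P_{\text{expression}}^{(t)} \, S_{\text{methylation}}^{T} \]

    This is repeated until convergence (or for a fixed number of iterations), after which the fused network is \( W_{\text{fused}} = \tfrac{1}{2}\left(P_{\text{expression}} + P_{\text{methylation}}\right) \).

- Cluster the Fused Network:

  - Apply spectral clustering on the final fused similarity matrix \( W_{\text{fused}} \) to obtain sample clusters.

- Validation: Evaluate cluster robustness and biological relevance using survival analysis or known clinical labels.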
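A compact NumPy sketch of the fusion loop follows. It is written from the published SNF update rule (scaled exponential kernel, full kernel \( P \), KNN kernel \( S \), averaging over the other views when there are more than two) rather than from the `SNFtool` source, so numerical details such as kernel constants and per-iteration normalization may differ from the package. The defaults for `K`, `mu` and `t`, the symmetrization step, and the simulated data are illustrative assumptions.

```python
import numpy as np


def affinity(X, K=20, mu=0.5):
    """Scaled exponential similarity kernel on the rows (patients) of X."""
    sq = (X ** 2).sum(axis=1)
    D = np.sqrt(np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0))
    # Average distance of each patient to its K nearest neighbours (self excluded).
    knn_mean = np.sort(D, axis=1)[:, 1:K + 1].mean(axis=1)
    eps = (knn_mean[:, None] + knn_mean[None, :] + D) / 3.0
    return np.exp(-(D ** 2) / (mu * eps + 1e-12))


def full_kernel(W):
    """P: off-diagonal W(i,j) / (2 * sum_{k != i} W(i,k)), diagonal 1/2."""
    P = W.copy()
    np.fill_diagonal(P, 0.0)
    P = P / (2.0 * P.sum(axis=1, keepdims=True))
    np.fill_diagonal(P, 0.5)
    return P


def knn_kernel(W, K=20):
    """S: keep each row's K largest similarities (self included), rows sum to 1."""
    S = np.zeros_like(W)
    nn = np.argsort(-W, axis=1)[:, :K]
    rows = np.arange(W.shape[0])[:, None]
    S[rows, nn] = W[rows, nn]
    return S / S.sum(axis=1, keepdims=True)


def snf(views, K=20, mu=0.5, t=20):
    """Fuse two or more patients x features matrices (rows aligned)."""
    Ws = [affinity(np.asarray(X, dtype=float), K, mu) for X in views]
    Ps = [full_kernel(W) for W in Ws]
    Ss = [knn_kernel(W, K) for W in Ws]
    m = len(Ps)
    for _ in range(t):
        updated = []
        for v in range(m):
            others = sum(Ps[j] for j in range(m) if j != v) / (m - 1)
            P = Ss[v] @ others @ Ss[v].T
            updated.append((P + P.T) / 2.0)  # symmetrise for numerical stability
        Ps = updated
    return sum(Ps) / m  # W_fused


# Simulated cohort: 30 patients in 3 groups, measured by two modalities.
rng = np.random.default_rng(2)
labels = np.repeat([0, 1, 2], 10)
expression = rng.normal(size=(3, 50))[labels] * 3 + rng.normal(size=(30, 50))
methylation = rng.normal(size=(3, 20))[labels] * 3 + rng.normal(size=(30, 20))
W_fused = snf([expression, methylation], K=5, t=10)
print(W_fused.shape)
```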

Visualizations

Diagram 1: Multi-Omics Integration Decision Flow

Diagram 2: Similarity Network Fusion (SNF) Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents and Computational Tools for Multi-Omics Integration

| Item / Solution | Function / Purpose | Example in Protocol |
|---|---|---|
| Quantile Normalization Script | Aligns statistical distributions across samples within a dataset, making them comparable. | Preprocessing step in Protocol 4.1 to remove technical bias. |
| Variance-Based Feature Selection | Identifies informative features (genes/proteins) with high variability across samples, reducing noise. | Feature selection prior to concatenation in Protocol 4.1. |
| Z-Score Standardization Module | Scales features to a common mean and variance, preventing high-variance modalities from dominating the model. | Data scaling step within each modality. |
| Similarity Network Fusion (SNF) Toolbox | A computational package specifically designed to perform late integration via network fusion. | Core algorithm for Protocol 4.2 (e.g., SNFtool in R). |
| Spectral Clustering Library | Clustering algorithm effective for identifying community structures within graphs or similarity matrices. | Used to cluster the final fused network in Protocol 4.2. |
| Multi-Kernel Learning (MKL) Framework | A late integration method that optimally combines kernel matrices built from different data types for prediction. | Alternative to SNF for supervised tasks in Table 2. |

The choice between early and late integration is not universally optimal but is contingent upon the biological question, data quality, and sample size. Early integration is powerful for capturing direct interactions between molecular layers but demands rigorous preprocessing and large n. Late integration offers flexibility and preserves data structure integrity, making it robust for exploratory analysis of heterogeneous data. In practice, a hybrid or intermediate approach often emerges as the most pragmatic solution within the iterative scope of multi-omics research.

Multi-omics data integration research aims to holistically understand biological systems by combining diverse molecular data layers (genomics, transcriptomics, proteomics, metabolomics, etc.). This integration is pivotal for elucidating complex disease mechanisms, identifying robust biomarkers, and accelerating therapeutic discovery. However, the high dimensionality, heterogeneity, noise, and differing scales of omics datasets present formidable computational challenges. This whitepaper details three advanced computational methods—Multi-Kernel Learning (MKL), Graph Neural Networks (GNNs), and AI-driven fusion architectures—that are critical for effective multi-omics integration within a modern research thesis framework.

Core Methodologies & Protocols

Multi-Kernel Learning (MKL) for Heterogeneous Data Fusion

MKL provides a principled framework for integrating disparate data types by constructing a separate kernel (similarity matrix) for each omics view and then optimally combining them.

Experimental Protocol for MKL-Based Integration:

- Data Preprocessing: For each omics dataset (e.g., RNA-seq, methylation arrays, somatic mutations), perform platform-specific normalization, missing value imputation, and feature scaling.

- Kernel Construction: Define a kernel function \( K_m \) for each omics modality \( m \). Common choices include:

  - Linear Kernel: \( K(x_i, x_j) = x_i^{T} x_j \)

  - Gaussian RBF Kernel: \( K(x_i, x_j) = \exp\left(-\gamma \lVert x_i - x_j \rVert^{2}\right) \)

  - Polynomial Kernel: \( K(x_i, x_j) = \left(x_i^{T} x_j + c\right)^{d} \)

- Kernel Combination: Learn an optimal weighted combination of the base kernels, \( K_{\text{combined}} = \sum_{m=1}^{M} \beta_m K_m \), with \( \beta_m \geq 0 \) and often \( \sum_{m} \beta_m = 1 \). Optimization can use heuristic methods, multiple kernel learning with a regularizer on the weights (e.g., \( \lVert \beta \rVert_p \)), or supervised learning objectives.

- Model Training: Employ the combined kernel in a kernel-based machine learning algorithm (e.g., Support Vector Machine for classification, Kernel Ridge Regression for survival prediction).

- Validation: Use stratified cross-validation and assign all omics views of a given patient to the same fold, so that no patient contributes data to both the training and the test set.
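The protocol can be sketched end to end in NumPy under explicit assumptions: binary labels coded -1/+1, kernel weights \( \beta_m \) set by kernel-target alignment (one simple heuristic from the "heuristic methods" family above), and kernel ridge regression standing in for the SVM so the example stays dependency-free. Dedicated MKL solvers such as SimpleMKL instead optimize \( \beta \) jointly with the classifier. Function names and the simulated views are hypothetical.

```python
import numpy as np


def linear_kernel(X, Y):
    return X @ Y.T


def rbf_kernel(X, Y, gamma=0.1):
    sq = (X ** 2).sum(1)[:, None] + (Y ** 2).sum(1)[None, :] - 2.0 * X @ Y.T
    return np.exp(-gamma * np.maximum(sq, 0.0))


def poly_kernel(X, Y, c=1.0, d=2):
    return (X @ Y.T + c) ** d


def alignment(K, y):
    """Kernel-target alignment <K, yy^T>_F / (||K||_F * ||yy^T||_F)."""
    T = np.outer(y, y)
    return (K * T).sum() / (np.linalg.norm(K) * np.linalg.norm(T))


def fit_mkl(train_views, y, kernels, lam=1.0):
    """Alignment-weighted kernel combination + kernel ridge regression.

    y holds labels coded as -1/+1. Returns (beta, dual coefficients alpha).
    """
    Ks = [k(X, X) for k, X in zip(kernels, train_views)]
    a = np.array([max(alignment(K, y), 0.0) for K in Ks])
    beta = a / a.sum()                      # beta_m >= 0, summing to 1
    K = sum(b * Km for b, Km in zip(beta, Ks))
    alpha = np.linalg.solve(K + lam * np.eye(len(y)), y)
    return beta, alpha


def predict_mkl(test_views, train_views, kernels, beta, alpha):
    """Scores for new samples; sign(score) gives the predicted class."""
    Ks = [k(Xt, X) for k, Xt, X in zip(kernels, test_views, train_views)]
    return sum(b * Km for b, Km in zip(beta, Ks)) @ alpha


# Simulated views: expression is informative, methylation is pure noise.
rng = np.random.default_rng(3)
y = np.where(rng.random(60) < 0.5, -1.0, 1.0)
expression = y[:, None] * 1.5 + rng.normal(size=(60, 5))
methylation = rng.normal(size=(60, 8))
kernels = [linear_kernel, rbf_kernel]
views = [expression, methylation]
beta, alpha = fit_mkl(views, y, kernels)
pred = np.sign(predict_mkl(views, views, kernels, beta, alpha))
print(beta, (pred == y).mean())
```

In a real analysis the prediction call would receive held-out views from the folds defined in the validation step, not the training data used here for brevity.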

Diagram Title: Multi-Kernel Learning Integration Workflow

Graph Neural Networks (GNNs) for Structured Biological Data

GNNs operate directly on graph structures, making them ideal for integrating omics data with prior biological knowledge networks (e.g., protein-protein interaction, gene regulatory pathways).

Experimental Protocol for GNN-Based Multi-Omics Analysis:

- Graph Construction:

  - Nodes: Represent biological entities (e.g., genes, proteins, metabolites).

  - Node Features: Encode multi-omics measurements as feature vectors for each node (e.g., mutation status, expression, methylation).

  - Edges: Define based on known interactions from databases like STRING, KEGG, or Reactome.

- Model Architecture: Implement a GNN model (e.g., Graph Convolutional Network, Graph Attention Network).

- Message Passing: For each node, aggregate feature information from its neighbors. A simple update for node \( v \) at layer \( l \) is \( h_v^{(l)} = \sigma\left( W^{(l)} \cdot \mathrm{AGGREGATE}\left(\{ h_u^{(l-1)} : u \in \mathcal{N}(v) \}\right) \right) \), where \( h_v^{(l)} \) is the embedding of node \( v \) at layer \( l \), \( \mathcal{N}(v) \) is the set of neighbors of \( v \), and \( \sigma \) is a non-linear activation.

- Readout & Prediction: After \( L \) layers, use a pooling function (e.g., global mean) to generate a graph-level embedding for tasks like patient outcome prediction, or use node-level embeddings for tasks like gene prioritization.

- Training: Use task-specific loss (e.g., cross-entropy for classification) and train with backpropagation.
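The message-passing rule and mean-pooling readout can be traced with a small NumPy forward pass. This is a sketch under stated assumptions: mean aggregation that (like the formula) excludes the node itself, ReLU as \( \sigma \), random untrained weights, and a hypothetical four-gene network. Practical GCN layers usually add self-loops and degree normalization, and libraries such as PyTorch Geometric implement these with efficient sparse operations.

```python
import numpy as np


def mean_aggregate(H, edges):
    """AGGREGATE step: average the embeddings of each node's neighbours.

    H: (n_nodes, n_features); edges: list of undirected (u, v) index pairs.
    Nodes without neighbours receive a zero vector.
    """
    agg = np.zeros_like(H)
    deg = np.zeros(H.shape[0])
    for u, v in edges:                # messages flow in both directions
        agg[v] += H[u]
        agg[u] += H[v]
        deg[u] += 1
        deg[v] += 1
    return agg / np.maximum(deg, 1.0)[:, None]


def gnn_forward(X, edges, weights):
    """h_v^(l) = relu(W^(l) . mean{h_u^(l-1) : u in N(v)}) for each layer.

    Row-vector convention: H @ W applies W^(l) to every node at once.
    """
    H = X
    for W in weights:
        H = np.maximum(mean_aggregate(H, edges) @ W, 0.0)
    return H


def mean_readout(H):
    """Global mean pooling: one embedding per graph (e.g., per patient)."""
    return H.mean(axis=0)


# Hypothetical 4-gene network; node features = [mutated, expression, methylation].
X = np.array([[1.0, 2.1, 0.3],
              [0.0, -0.5, 0.8],
              [0.0, 1.2, 0.1],
              [1.0, 0.4, 0.6]])
edges = [(0, 1), (0, 2), (2, 3)]
rng = np.random.default_rng(4)
weights = [rng.normal(size=(3, 8)), rng.normal(size=(8, 4))]
patient_embedding = mean_readout(gnn_forward(X, edges, weights))
print(patient_embedding.shape)
```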

Diagram Title: GNN Message Passing Between Two Layers

AI-Driven Fusion Architectures

These are end-to-end deep learning models designed to learn joint representations from raw or processed multi-omics inputs.

Experimental Protocol for a Deep Fusion Autoencoder:

- Input: Aligned multi-omics vectors per sample (e.g., concatenated or as separate channels).

- Encoder: A neural network (often with omics-specific subnetworks) compresses the input into a low-dimensional latent representation \( z = f_{\text{encoder}}(x_{\text{geno}}, x_{\text{trans}}, x_{\text{prot}}; \theta) \).

- Bottleneck: The latent space \( z \) is the integrated, compressed representation used for downstream tasks.

- Decoder: The network reconstructs the original input from \( z \): \( \hat{x} = f_{\text{decoder}}(z; \phi) \).

- Training: Minimize a composite loss \( \mathcal{L} = \mathcal{L}_{\text{reconstruction}} + \lambda \mathcal{L}_{\text{task}} \), where the task loss (e.g., classification) is applied directly to \( z \), forcing it to be informative.

- Validation: Performance is evaluated on held-out test sets for both reconstruction fidelity and the primary predictive task.
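The composite objective can be made concrete with a single forward pass. The sketch below uses random, untrained NumPy weights purely to show how the reconstruction and task terms are computed and combined; layer sizes, the tanh activation, the mean-squared-error and cross-entropy choices, and the parameter dictionary layout are illustrative assumptions. Actual training would rely on automatic differentiation in a deep learning framework such as TensorFlow/Keras.

```python
import numpy as np

rng = np.random.default_rng(5)


def dense(n_in, n_out):
    """Randomly initialised (untrained) weight matrix and bias."""
    return rng.normal(0.0, 1.0 / np.sqrt(n_in), (n_in, n_out)), np.zeros(n_out)


def softmax(logits):
    e = np.exp(logits - logits.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)


def composite_loss(inputs, labels, params, lam=1.0):
    """Forward pass of a fusion autoencoder; returns (L, z).

    L = L_reconstruction + lam * L_task, with mean squared error summed over
    omics layers and softmax cross-entropy for a classification head on z.
    """
    # Omics-specific encoder subnetworks, then a shared bottleneck z.
    hidden = [np.tanh(x @ W + b) for x, (W, b) in zip(inputs, params["enc"])]
    Wz, bz = params["bottleneck"]
    z = np.concatenate(hidden, axis=1) @ Wz + bz
    # Omics-specific decoders reconstruct each input from z.
    recon = [z @ W + b for W, b in params["dec"]]
    l_rec = sum(np.mean((x - x_hat) ** 2) for x, x_hat in zip(inputs, recon))
    # Task head on z.
    Wt, bt = params["task"]
    p = softmax(z @ Wt + bt)
    l_task = -np.mean(np.log(p[np.arange(len(labels)), labels] + 1e-12))
    return l_rec + lam * l_task, z


# Hypothetical sizes: genomics 50, transcriptomics 100, proteomics 40 features.
dims, n, latent, n_classes = [50, 100, 40], 32, 8, 3
inputs = [rng.normal(size=(n, d)) for d in dims]
labels = rng.integers(0, n_classes, size=n)
params = {
    "enc": [dense(d, 16) for d in dims],
    "bottleneck": dense(16 * len(dims), latent),
    "dec": [dense(latent, d) for d in dims],
    "task": dense(latent, n_classes),
}
loss, z = composite_loss(inputs, labels, params, lam=0.5)
print(round(float(loss), 3), z.shape)
```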

Quantitative Performance Comparison

Recent benchmarks highlight the performance of these methods on common tasks like cancer subtype classification and survival prediction.

Table 1: Performance Comparison on TCGA Pan-Cancer Classification

| Method Category | Specific Model | Average Accuracy (%) | Average F1-Score | Key Strength |
|---|---|---|---|---|
| Single-Omics Baseline | SVM (RNA-seq only) | 71.2 | 0.69 | Simplicity, interpretability |
| Multi-Kernel Learning | SimpleMKL | 78.5 | 0.77 | Handles heterogeneity, no need for imputation |
| Graph Neural Network | MultiOmicsGCN (with PPI) | 82.1 | 0.81 | Incorporates prior biological knowledge |
| AI Fusion Model | DeepMF (Autoencoder) | 80.7 | 0.79 | Learns complex non-linear interactions |

Table 2: Computational Resource Requirements

| Method | Avg. Training Time (hrs) | GPU Memory Required (GB) | Scalability to >10k Features |
|---|---|---|---|
| Multi-Kernel Learning | 1.5 | < 2 (CPU-bound) | Moderate (kernel matrix size) |
| Graph Neural Network | 0.8 | 4 - 8 | High (sparse graph ops) |
| Deep Fusion Autoencoder | 2.3 | 6 - 12 | High (with regularization) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Libraries

| Item/Category | Example Specific Tool | Function in Multi-Omics Integration |
|---|---|---|
| Kernel Learning Library | SHOGUN Toolbox | Provides efficient implementations of MKL algorithms for combining diverse omics kernels. |
| GNN Framework | PyTorch Geometric (PyG) | A library for building and training GNNs on structured omics data and biological networks. |
| Deep Learning Platform | TensorFlow / Keras | Enables the design and training of custom deep fusion architectures (e.g., autoencoders). |
| Omics Data Preprocessor | scanpy (for scRNA-seq) / QIIME 2 (for microbiome) | Handles modality-specific normalization, filtering, and batch effect correction. |
| Biological Network DB | NDEx (Network Data Exchange) | A repository for downloading and sharing pre-built biological interaction networks for GNNs. |
| Benchmarking Dataset | The Cancer Genome Atlas (TCGA) Pan-Cancer Atlas | A standard, multi-omics cohort for training and validating integration models. |
| Hyperparameter Optimization | Ray Tune | Facilitates scalable, distributed search for optimal model parameters across complex pipelines. |
| Visualization Suite | igraph / Gephi | For visualizing and interpreting the learned graph structures and node embeddings from GNNs. |

Multi-omics data integration research is a cornerstone of modern systems biology, aiming to comprehensively model complex biological systems by jointly analyzing diverse molecular data layers (e.g., genomics, transcriptomics, proteomics, metabolomics). The core thesis is that the synergistic integration of these complementary data types can uncover emergent biological insights—such as novel disease subtypes, biomarkers, and mechanistic pathways—that are inaccessible through single-omics analysis. This technical guide reviews essential computational frameworks that enable this integration, each addressing distinct statistical and computational challenges inherent in handling high-dimensional, heterogeneous, and noisy multi-omics datasets.

Core Frameworks: Technical Review

MOFA+ (Multi-Omics Factor Analysis+)

MOFA+ is a Bayesian statistical framework for unsupervised integration of multi-omics data. It decomposes multiple data matrices into a set of common latent factors that capture the shared variance across omics layers, plus omics-specific residuals.

- Key Algorithm & Methodology: It uses variational inference to approximate the posterior distribution of the model parameters. The core model assumes the observed data are generated by a low-rank matrix factorization. For sample \( n \) and feature \( d \) in view \( m \):

  \[ Y^{(m)}_{n,d} = \sum_{k=1}^{K} Z_{n,k} \, W^{(m)}_{d,k} + \varepsilon^{(m)}_{n,d} \]

  where \( Z \) holds the latent factors, \( W^{(m)} \) the view-specific weights, and \( \varepsilon^{(m)} \) the noise.

- Experimental Protocol (Typical Workflow):

  - Data Preprocessing: Apply per-omics normalization and log-transformation as appropriate. Missing values can be left in place, since MOFA+ handles them explicitly (a key strength).

  - Model Training: Specify the number of factors (or use automatic relevance determination). Train until the evidence lower bound (ELBO) converges.

  - Factor Interpretation: Correlate factors with sample covariates (e.g., clinical outcomes) for biological interpretation.

  - Downstream Analysis: Use factor values for clustering, or the weights \( W^{(m)} \) to identify the features driving each factor.
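MOFA+ results are commonly summarized as the variance explained by each factor in each view. The sketch below simulates data from the generative model above and computes \( R^2_{m,k} = 1 - \lVert Y^{(m)} - z_k w_k^{(m)T} \rVert^2 / \lVert Y^{(m)} \rVert^2 \) for known \( Z \) and \( W^{(m)} \). It does not perform MOFA+'s variational inference; in practice the trained model's estimates would replace the simulated matrices, and the function name is hypothetical.

```python
import numpy as np


def variance_explained(Y_views, Z, W_views):
    """R^2 per view (rows) and factor (columns) for Y^(m) ~ Z W^(m)T.

    Y_views: list of centred (n_samples, n_features_m) matrices.
    Z: (n_samples, K) factor values; W_views: list of (n_features_m, K) weights.
    R^2_{m,k} = 1 - ||Y^(m) - z_k w_k^(m)T||^2 / ||Y^(m)||^2
    """
    K = Z.shape[1]
    r2 = np.zeros((len(Y_views), K))
    for m, (Y, W) in enumerate(zip(Y_views, W_views)):
        total = (Y ** 2).sum()
        for k in range(K):
            resid = Y - np.outer(Z[:, k], W[:, k])
            r2[m, k] = 1.0 - (resid ** 2).sum() / total
    return r2


# Simulate from the generative model: factor 0 is shared by both views,
# factor 1 is active only in view 0 (its weights in view 1 are zero).
rng = np.random.default_rng(6)
Z = rng.normal(size=(200, 2))
W0 = rng.normal(size=(30, 2))
W1 = np.column_stack([rng.normal(size=40), np.zeros(40)])
Y0 = Z @ W0.T + 0.5 * rng.normal(size=(200, 30))
Y1 = Z @ W1.T + 0.5 * rng.normal(size=(200, 40))
Y0, Y1 = Y0 - Y0.mean(axis=0), Y1 - Y1.mean(axis=0)
print(np.round(variance_explained([Y0, Y1], Z, [W0, W1]), 2))
```

A factor with non-zero variance explained in several views points to a shared source of variation; one confined to a single view captures modality-specific structure.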

mixOmics

mixOmics is an R toolkit offering a wide array of multivariate methods for the exploration and integration of multi-omics datasets, with a strong emphasis on discriminant analysis and supervised integration.

- Key Algorithm & Methodology: Its flagship method is DIABLO (Data Integration Analysis for Biomarker discovery using Latent variable approaches), a supervised multi-block Partial Least Squares Discriminant Analysis (PLS-DA) method. It seeks a common subspace where separation between pre-defined classes is maximized, while simultaneously integrating information from multiple omics blocks.

- Experimental Protocol (DIABLO Workflow):

  - Design: Define the classification problem and the omics blocks (\( X_1, X_2, \ldots \)).

  - Tuning: Use repeated cross-validation to tune the number of components and the key `keepX` parameter (number of selected features per block per component).

  - Model Training: Run the final DIABLO model with tuned parameters.

  - Evaluation: Assess classification performance via cross-validation error rates. Visualize sample plots, correlation circos plots, and feature selection networks.
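To show what `keepX` controls, here is a deliberately simplified NumPy sketch of one sparse PLS-DA-style component per block. mixOmics uses soft-thresholding (lasso-type) penalties, and DIABLO additionally maximizes covariance between blocks through a design matrix; this sketch drops both, using hard thresholding on each block independently. It is an illustration of per-block feature selection, not a reimplementation of DIABLO, and the block names and data are hypothetical.

```python
import numpy as np


def sparse_component(X, y, keepX):
    """First sparse PLS-DA-style component for one omics block.

    The loading vector is the leading left singular vector of X^T Y (Y is the
    centred class-indicator matrix); all but the keepX largest-magnitude
    loadings are then set to zero. Returns (loadings, sample scores).
    """
    if keepX < 1:
        raise ValueError("keepX must be >= 1")
    y = np.asarray(y)
    classes = np.unique(y)
    Y = (y[:, None] == classes[None, :]).astype(float)
    Xc, Yc = X - X.mean(axis=0), Y - Y.mean(axis=0)
    u, _, _ = np.linalg.svd(Xc.T @ Yc, full_matrices=False)
    w = u[:, 0].copy()
    w[np.argsort(np.abs(w))[:-keepX]] = 0.0   # hard-threshold to keepX features
    w /= np.linalg.norm(w)
    return w, Xc @ w


# Hypothetical two-block design with a per-block keepX.
rng = np.random.default_rng(7)
y = np.repeat(["control", "case"], 20)
signal = (y == "case").astype(float)[:, None]
blocks = {
    "mRNA": np.hstack([3 * signal + 0.3 * rng.normal(size=(40, 3)),
                       rng.normal(size=(40, 17))]),
    "protein": np.hstack([rng.normal(size=(40, 10)),
                          2 * signal + 0.3 * rng.normal(size=(40, 2))]),
}
keepX = {"mRNA": 3, "protein": 2}
for name, X in blocks.items():
    w, t = sparse_component(X, y, keepX[name])
    print(name, "selected features:", np.flatnonzero(w))
```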

PyTorch Geometric (PyG) for Multi-Omics

PyTorch Geometric is a library built upon PyTorch for deep learning on graphs. In multi-omics, it is used to model biological systems as networks, where nodes can represent molecules (genes, proteins) and edges their interactions.

- Key Algorithm & Methodology: Graph Neural Networks (GNNs), such as Graph Convolutional Networks (GCNs) or Graph Attention Networks (GATs), operate by propagating and transforming node features across the graph structure. For multi-omics integration, nodes can be annotated with features from different omics layers (e.g., mutation status, expression level).

- Experimental Protocol (Graph-based Integration):

- Graph Construction: Build a biological network (e.g., a Protein-Protein Interaction network from STRING). Map multi-omics features to corresponding nodes.

- Model Architecture: Define a GNN with multiple convolutional layers, followed by readout and classification/regression heads.

- Training: Use backpropagation and gradient descent to minimize loss (e.g., cross-entropy for patient stratification).

- Interpretation: Apply explainability techniques (e.g., GNNExplainer) to identify important subgraphs and node features.
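The graph-construction step mostly comes down to producing two arrays: a node-feature matrix and a connectivity list. PyG represents connectivity as a 2 × E integer `edge_index` in coordinate (COO) format, with each undirected edge listed in both directions. The NumPy sketch below builds both arrays; they could then be converted with `torch.from_numpy` and wrapped in `torch_geometric.data.Data(x=..., edge_index=...)`. The input formats, the zero-filling of missing measurements, and the function name are assumptions for illustration.

```python
import numpy as np


def build_graph(genes, interactions, omics):
    """Map multi-omics features onto the nodes of an interaction network.

    genes: ordered list of node identifiers (e.g., gene symbols).
    interactions: iterable of (gene_a, gene_b) pairs, e.g. parsed from a
                  STRING export after confidence filtering.
    omics: dict layer_name -> {gene: value}; genes missing in a layer get 0.
    Returns node features (n_nodes, n_layers), a (2, n_edges) COO edge index
    listing each undirected edge in both directions, and the layer order.
    """
    index = {g: i for i, g in enumerate(genes)}
    layers = sorted(omics)
    x = np.zeros((len(genes), len(layers)), dtype=np.float32)
    for j, layer in enumerate(layers):
        for gene, value in omics[layer].items():
            if gene in index:
                x[index[gene], j] = value
    src, dst = [], []
    for a, b in interactions:
        if a in index and b in index and a != b:   # skip unmapped genes, self-loops
            src += [index[a], index[b]]
            dst += [index[b], index[a]]
    edge_index = np.array([src, dst], dtype=np.int64).reshape(2, -1)
    return x, edge_index, layers


# Hypothetical three-gene example; GENE_X is not in the node list and is dropped.
genes = ["TP53", "MDM2", "CDKN1A"]
interactions = [("TP53", "MDM2"), ("TP53", "CDKN1A"), ("TP53", "GENE_X")]
omics = {"expression": {"TP53": 2.0, "MDM2": 1.0}, "mutation": {"TP53": 1.0}}
x, edge_index, layers = build_graph(genes, interactions, omics)
print(layers, x, edge_index, sep="\n")
```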

Comparative Analysis & Data Presentation

Table 1: Quantitative Comparison of Multi-Omics Integration Frameworks

| Feature | MOFA+ | mixOmics (DIABLO) | PyTorch Geometric (GNN) |
|---|---|---|---|
| Primary Paradigm | Unsupervised, Statistical | Supervised, Multivariate | Supervised/Unsupervised, Deep Learning |
| Core Methodology | Bayesian Factor Analysis | Multi-block PLS-DA (sPLS-DA) | Graph Neural Networks (GNNs) |
| Data Input | Matrices (samples x features) | Matrices (samples x features) | Graph (nodes/edges + node features) |
| Key Output | Latent Factors & Loadings | Discrimination Components, Selected Features | Node/Graph Embeddings, Predictions |
| Handles Missing Data | Yes (explicitly) | Limited (requires imputation) | Depends on model setup |
| Scalability | Medium (≈10k features) | Medium (≈10k features) | High (scales with graph size) |
| Interpretability | High (factor analysis) | High (feature selection) | Medium-Low (black-box, needs XAI) |
| Best For | Discovery of latent sources of variation | Biomarker discovery & classification | Modeling relational/network biology |

Table 2: Typical Performance Metrics on Benchmark Tasks (Synthetic Data)

| Framework | Task | Typical Metric | Reported Performance Range* |
|---|---|---|---|
| MOFA+ | Latent Factor Recovery | Correlation with true factors | 0.75 - 0.95 |
| mixOmics (DIABLO) | Sample Classification | Balanced Accuracy | 0.80 - 0.98 |
| PyTorch Geometric (GNN) | Node Classification | AUC-ROC | 0.85 - 0.99 |

*Performance is highly dependent on data quality, signal strength, and model tuning.

Visualization of Workflows and Relationships

MOFA+ Unsupervised Integration Analysis Pipeline

mixOmics DIABLO Supervised Biomarker Discovery

Graph Neural Network for Multi-Omics on Networks

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational "Reagents" for Multi-Omics Integration

| Item (Tool/Resource) | Function & Purpose | Key Application Context |
|---|---|---|
| Singularity/Apptainer Containers | Reproducible, portable software environments encapsulating complex tool dependencies. | Essential for deploying MOFA+, PyG, and other frameworks in HPC or cloud environments. |
| Conda/Bioconda Environments | Language-agnostic package and environment management, especially for R/Python mixes. | Setting up isolated environments for mixOmics (R) and associated Python pre-processing scripts. |
| UCSC Xena or cBioPortal | Public hubs for hosting, visualizing, and accessing large-scale multi-omics cancer datasets. | Primary source for real-world, clinically annotated data to validate integration methods. |
| STRING Database | A comprehensive database of known and predicted protein-protein interactions. | The primary resource for constructing prior biological networks used in graph-based (PyG) analyses. |
| OmicsSoft/NetworkAnalyst | Web-based platforms for post-integration functional enrichment and network analysis. | Interpreting lists of driving features from MOFA+ or DIABLO via pathway over-representation. |
| PyTorch Geometric (PyG) Datasets | Pre-processed benchmark graph datasets (e.g., from Planetoid, MoleculeNet). | Standardized datasets for developing and benchmarking new multi-omics GNN architectures. |

This whitepaper provides an in-depth technical guide to a canonical multi-omics data integration workflow for cancer subtyping, framed within the broader research thesis that integrated analysis of genomic, transcriptomic, epigenomic, and proteomic data yields clinically actionable biological insights superior to single-omics approaches. We present a step-by-step case study using a simulated but representative clear cell renal cell carcinoma (ccRCC) cohort to demonstrate a complete, reproducible pipeline.

Multi-omics data integration research seeks to combine multiple layers of biological information to construct a comprehensive model of cellular function and disease pathophysiology. In oncology, this approach is critical for moving beyond single-gene biomarkers towards network-based subtyping, which can stratify patients for prognosis and therapy.

Case Study Design & Dataset

Our simulated case study is designed to identify robust molecular subtypes in ccRCC. The cohort comprises 200 tumor samples with matched normal tissue.

Table 1: Simulated Multi-Omics Dataset Specifications

| Omics Layer | Platform/Assay | Key Variables Measured | Sample Count (Tumor/Normal) |
|---|---|---|---|
| Whole Genome Sequencing (WGS) | Illumina NovaSeq | Somatic SNVs, Indels, Copy Number Variations (CNVs) | 200/200 |
| RNA Sequencing (Transcriptomics) | Illumina NovaSeq | Gene Expression (TPM values) | 200/200 |
| DNA Methylation | Illumina EPIC Array | Methylation Beta-values (850k CpG sites) | 200/200 |
| Proteomics & Phosphoproteomics | LC-MS/MS | Protein & Phosphosite Abundance | 200/100 |

Step-by-Step Computational & Analytical Workflow

Step 1: Data Preprocessing & Quality Control

Each omics layer undergoes independent preprocessing.

Experimental Protocol 3.1.1: WGS Data Processing

- Alignment: Raw FASTQ files are aligned to the GRCh38 reference genome using `BWA-MEM`.

- Variant Calling: Somatic SNVs/Indels are called using `Mutect2` (GATK). CNVs are inferred using `Control-FREEC`.

- Annotation: Variants are annotated for functional impact using `ANNOVAR` and `VEP`.

Experimental Protocol 3.1.2: RNA-seq Data Processing

- Pseudo-alignment & Quantification: `kallisto` or `Salmon` is used for transcript-level quantification.

- Normalization: Transcript-level estimated counts (rather than TPM values, since DESeq2 expects count data) are aggregated to gene level, and a variance-stabilizing transformation (VST) is applied via `DESeq2`.
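For reference, TPM divides each transcript's count by its length before scaling by sequencing depth, so values sum to one million within every sample and are comparable across transcripts of different lengths. kallisto and Salmon report TPM directly (using effective lengths); the sketch below only reproduces the arithmetic, with hypothetical counts, lengths and gene names.

```python
import numpy as np


def tpm(counts, lengths_kb):
    """Transcripts per million: normalize by length first, then by depth."""
    rate = np.asarray(counts, dtype=float) / np.asarray(lengths_kb, dtype=float)
    return rate / rate.sum() * 1e6


def to_gene_level(values, tx2gene):
    """Sum transcript-level values per gene within one sample."""
    genes = {}
    for value, gene in zip(values, tx2gene):
        genes[gene] = genes.get(gene, 0.0) + float(value)
    return genes


# Hypothetical sample: three transcripts from two genes.
counts = [100, 300, 50]
lengths_kb = [1.0, 3.0, 0.5]          # effective lengths in kilobases
tx_tpm = tpm(counts, lengths_kb)
print(tx_tpm, to_gene_level(tx_tpm, ["GENE_A", "GENE_A", "GENE_B"]))
```

Here every transcript has 100 reads per kilobase, so each receives one third of a million TPM even though their raw counts differ threefold.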

Table 2: QC Metrics and Post-Filtering Sample Count

| Omics Layer | Primary QC Metric | Threshold | Samples Remaining |
|---|---|---|---|
| WGS | Mean Coverage Depth | >30x | 198 |
| RNA-seq | Library Size | >10M reads | 199 |
| Methylation | Detection P-value | <0.01 | 200 |
| Proteomics | Protein IDs | >5000 | 195 |

Step 2: Univariate & Single-Omics Analysis

Prior to integration, each dataset is analyzed independently to identify layer-specific dysregulation.

Experimental Protocol 3.2.1: Differential Analysis

For each omics layer (e.g., RNA-seq), a linear model (e.g., limma-voom) is fitted comparing tumor vs. normal, adjusting for batch and patient age. Significance: FDR < 0.05 and |log2FC| > 1.
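The significance rule (FDR < 0.05 and |log2FC| > 1) is applied after model fitting. A minimal sketch, assuming per-feature p-values and log2 fold changes have already been exported from limma; it implements the Benjamini-Hochberg adjustment directly rather than calling an R package, and the function names and example values are hypothetical.

```python
import numpy as np


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values (FDR), returned in input order."""
    p = np.asarray(pvalues, dtype=float)
    n = p.size
    order = np.argsort(p)
    scaled = p[order] * n / np.arange(1, n + 1)
    # Step-up: each adjusted value is the minimum over all larger ranks.
    adjusted = np.minimum.accumulate(scaled[::-1])[::-1]
    out = np.empty(n)
    out[order] = np.minimum(adjusted, 1.0)
    return out


def significant(pvalues, log2fc, fdr=0.05, min_abs_lfc=1.0):
    """Boolean mask for FDR < fdr and |log2FC| > min_abs_lfc."""
    return (benjamini_hochberg(pvalues) < fdr) & (np.abs(log2fc) > min_abs_lfc)


pvals = np.array([0.01, 0.04, 0.03, 0.005])
log2fc = np.array([2.0, 0.5, -1.5, 3.0])
print(benjamini_hochberg(pvals), significant(pvals, log2fc))
```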

Table 3: Single-Omics Differential Features Summary

| Omics Layer | Total Features Tested | Significantly Altered Features (Tumor vs. Normal) | Top Dysregulated Gene/Region |
|---|---|---|---|
| Genomics (CNV) | 24,000 genes | 1,150 genes with amplifications/deletions | VHL (deletion, 85% of samples) |
| Transcriptomics | 20,000 genes | 4,320 DEGs | CA9 (upregulated) |
| Methylation | 850,000 CpG sites | 112,500 DMPs | Hypermethylation at VHL promoter |
| Proteomics | 8,500 proteins | 1,210 DEPs | HIF1A (upregulated) |

Step 3: Multi-Omics Data Integration for Subtyping

We employ an unsupervised integration method, Similarity Network Fusion (SNF), to cluster patients into molecular subtypes.

Experimental Protocol 3.3.1: Similarity Network Fusion (SNF)

- Input: Patient-by-feature matrices for mRNA, CNV, and methylation (top 5,000 most variable features each).

- Similarity Matrices: Construct patient similarity networks for each data type using Euclidean distance and a scaled exponential kernel.

- Fusion: Iteratively fuse the networks using SNF (R package `SNFtool`) to propagate information across omics layers.

- Clustering: Determine the optimal number of clusters (k) with the eigengap method, then apply spectral clustering to the fused network.

- Output: Patient assignment to Subtypes 1, 2, and 3.
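The eigengap estimate and the spectral clustering step can be sketched in NumPy. This version uses the symmetric normalized Laplacian and an Ng-Jordan-Weiss-style embedding with a simple deterministic k-means, so its output may differ from `SNFtool`'s own routines. It assumes every patient has positive total similarity in the fused network; the function names and the block-structured example network are hypothetical.

```python
import numpy as np


def normalized_affinity(W):
    """D^-1/2 W D^-1/2 for a symmetric similarity matrix W."""
    inv = 1.0 / np.sqrt(W.sum(axis=1))
    return inv[:, None] * W * inv[None, :]


def eigengap_k(W, max_k=10):
    """k = position of the largest gap among the smallest eigenvalues of
    the normalized Laplacian L = I - D^-1/2 W D^-1/2."""
    lam = np.sort(np.linalg.eigvalsh(np.eye(len(W)) - normalized_affinity(W)))
    gaps = np.diff(lam[:max_k + 1])
    return int(np.argmax(gaps)) + 1


def spectral_clusters(W, k, n_iter=100):
    """Embed patients with the k leading eigenvectors, then run k-means."""
    _, vecs = np.linalg.eigh(normalized_affinity(W))   # ascending eigenvalues
    U = vecs[:, -k:]
    U = U / np.linalg.norm(U, axis=1, keepdims=True)
    # Farthest-point initialisation keeps the sketch deterministic.
    centers = [U[0]]
    for _ in range(1, k):
        dist = np.min([((U - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(U[np.argmax(dist)])
    centers = np.array(centers)
    for _ in range(n_iter):
        labels = np.argmin(((U[:, None, :] - centers[None]) ** 2).sum(-1), axis=1)
        new = np.array([U[labels == j].mean(axis=0) if np.any(labels == j)
                        else centers[j] for j in range(k)])
        if np.allclose(new, centers):
            break
        centers = new
    return labels


# Hypothetical fused network with three disconnected patient groups.
W = np.zeros((15, 15))
start = 0
for size in [4, 5, 6]:
    W[start:start + size, start:start + size] = 1.0
    start += size
k = eigengap_k(W, max_k=6)
labels = spectral_clusters(W, k)
print(k, labels)
```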

Diagram: SNF Multi-Omics Integration Workflow

Step 4: Characterization of Subtypes

Subtypes are characterized by survival, clinical features, and pathway activity.

Table 4: Clinical and Molecular Characteristics of SNF-Derived Subtypes

| Characteristic | Subtype 1 (n=68) | Subtype 2 (n=75) | Subtype 3 (n=52) | P-value |
|---|---|---|---|---|
| 5-Year Overall Survival | 85% | 62% | 45% | <0.001 |
| Stage III/IV at Dx | 25% | 58% | 77% | <0.001 |
| VHL Mutation Rate | 92% | 81% | 65% | 0.003 |
| Mean Hypoxia Score | Low | Intermediate | High | <0.001 |
| Angiogenesis Pathway Enrichment | Low | High | Intermediate | <0.001 |
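The table does not state which tests produced its P-values. Some characteristics are categorical and compared across the three subtypes, such as the proportion of Stage III/IV disease. For these, a Pearson chi-square test of independence on the subtype-by-category counts is one common choice. The hypothetical helper below covers only tables with 2 degrees of freedom, such as 3 subtypes × 2 categories. In that case the chi-square survival function is exactly exp(-x/2), so no statistics library is needed.

```python
import math


def chi2_independence_df2(table):
    """Pearson chi-square test of independence for a table with 2 degrees of freedom.

    table: list of rows of observed counts, e.g. one row per subtype and one
    column per category (Stage I/II vs. Stage III/IV). Returns (statistic, p).
    """
    rows = [sum(r) for r in table]
    cols = [sum(c) for c in zip(*table)]
    if (len(rows) - 1) * (len(cols) - 1) != 2:
        raise ValueError("this shortcut only covers tables with 2 degrees of freedom")
    total = sum(rows)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = rows[i] * cols[j] / total  # count expected under independence
            stat += (observed - expected) ** 2 / expected
    # For 2 degrees of freedom, P(X > x) = exp(-x / 2).
    return stat, math.exp(-stat / 2.0)
```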

Experimental Protocol 3.4.1: Pathway Enrichment Analysis

- For each subtype vs. others, perform differential analysis per omics layer.

- Extract top 100 subtype-specific features per layer.

- Perform gene set enrichment analysis (GSEA) using the MSigDB Hallmark collection.

- Integrate pathway scores across layers using single-sample GSEA (ssGSEA) from the GSVA R package.
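The plain-Python sketch below shows what ssGSEA computes for a single sample. It follows the unnormalized score of the original ssGSEA formulation. Genes are ranked by expression, and the score sums, over all ranked positions, the difference between two cumulative distributions: a rank-weighted one for gene-set members and an unweighted one for non-members. The function name and the dictionary input format are assumptions. GSVA's implementation also offers normalization of scores across samples, which this sketch omits.

```python
def ssgsea_score(expression, gene_set, alpha=0.25):
    """Unnormalized single-sample GSEA enrichment score for one sample.

    expression: dict mapping gene -> expression value in this sample.
    gene_set: set of gene names (e.g., one MSigDB Hallmark gene set).
    """
    genes = sorted(expression, key=expression.get, reverse=True)  # high to low
    n = len(genes)
    in_set = [g in gene_set for g in genes]
    n_in = sum(in_set)
    if n_in == 0 or n_in == n:
        raise ValueError("gene set must overlap some, but not all, genes")
    # The top-ranked gene gets rank n; members are weighted by rank ** alpha.
    weights = [(n - i) ** alpha for i in range(n)]
    total_in = sum(w for w, hit in zip(weights, in_set) if hit)
    cum_in = cum_out = score = 0.0
    for w, hit in zip(weights, in_set):
        if hit:
            cum_in += w / total_in
        else:
            cum_out += 1.0 / (n - n_in)
        score += cum_in - cum_out  # integrate the gap between the two ECDFs
    return score
```

A positive score means the set's genes sit toward the top of that sample's expression ranking, and a negative score means they sit toward the bottom.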

Step 5: Identification of Driver Pathways & Therapeutic Vulnerabilities

Multi-omics factor analysis (MOFA+) is used to deconvolute the integrated data into latent factors representing co-varying biological signals.

Experimental Protocol 3.5.1: MOFA+ Analysis

- Model Training: Input all four omics matrices into a MOFA2 model and train 15 factors.

- Factor Interpretation: Correlate factors with clinical traits and subtype labels. Annotate each factor by mapping its most heavily weighted features onto pathways (KEGG, Reactome).

- Validation: Assess factor stability via cross-validation.
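MOFA+ itself is a Bayesian group factor analysis model with sparsity priors (the MOFA2 R package, with the mofapy2 Python backend). The sketch below uses a much simpler linear stand-in, and is not MOFA+. It only illustrates the shape of the outputs (a score per sample and a weight per feature in each view) and the interpretation step. Each view is centered and divided by its largest singular value, as in multiple factor analysis. The concatenated views are then decomposed with a truncated SVD. Function names are hypothetical.

```python
import numpy as np


def joint_factors(views, n_factors=15):
    """Linear multi-view factorization (a stand-in, NOT MOFA+).

    views: list of samples x features matrices with matching sample order.
    Returns (factor scores: samples x n_factors, list of per-view weight matrices).
    """
    blocks = []
    for X in views:
        Xc = X - X.mean(axis=0)
        # Divide by the top singular value so no single omics layer dominates.
        blocks.append(Xc / np.linalg.svd(Xc, compute_uv=False)[0])
    U, s, Vt = np.linalg.svd(np.hstack(blocks), full_matrices=False)
    factors = U[:, :n_factors] * s[:n_factors]
    # Split the loadings back into one features x factors matrix per view.
    splits = np.cumsum([X.shape[1] for X in views])[:-1]
    weights = np.split(Vt[:n_factors].T, splits, axis=0)
    return factors, weights


def factor_trait_correlation(factors, trait):
    """Pearson correlation of every factor with one clinical trait."""
    f = factors - factors.mean(axis=0)
    t = trait - trait.mean()
    return (f.T @ t) / (np.linalg.norm(f, axis=0) * np.linalg.norm(t))


def top_features(weights_view, factor, n=10):
    """Indices of the features with the largest absolute weight on one factor."""
    return np.argsort(-np.abs(weights_view[:, factor]))[:n]
```

The features returned by `top_features` are the candidates to map onto KEGG or Reactome pathways. The sign of an SVD factor is arbitrary, so read weights and trait correlations together rather than interpreting either sign alone.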

Diagram: MOFA+ Reveals Driving Biological Factors

Key Signaling Pathways Identified

The integrated analysis highlighted the central role of the VHL-HIF pathway and its downstream cascades.

Diagram: Integrated VHL-HIF Pathway Dysregulation in ccRCC

The Scientist's Toolkit: Research Reagent & Resource Solutions

Table 5: Essential Reagents & Resources for Multi-Omics Cancer Subtyping

| Item / Resource | Function in Workflow | Example Vendor/Platform |
|---|---|---|
| High-Quality Nucleic Acid Kits | Extraction of DNA & RNA from FFPE/frozen tissue for WGS/RNA-seq. | Qiagen AllPrep, Thermo Fisher RecoverAll |
| Methylation EPIC BeadChip | Genome-wide DNA methylation profiling at >850,000 CpG sites. | Illumina Infinium MethylationEPIC |
| TMTpro 16plex | Multiplexed quantitative proteomics enabling parallel analysis of 16 samples. | Thermo Fisher Scientific |
| Single-Cell Multi-Omics Kits | For validation/scaling to single-cell resolution (e.g., CITE-seq, ATAC-seq). | 10x Genomics Chromium |
| Reference Genomes & Annotations | Essential for alignment, quantification, and annotation (e.g., GENCODE, GATK bundles). | GRCh38 from GENCODE, GATK Resource Bundle |
| Bioinformatics Pipelines | Containerized workflows for reproducible analysis (Nextflow, Snakemake). | nf-core/sarek (WGS), nf-core/rnaseq |
| Cloud Computing Credits/Platforms | Handling large-scale compute and storage for multi-omics data. | AWS, Google Cloud, DNAnexus |

This case study demonstrates that a systematic multi-omics integration workflow, from QC through SNF clustering to MOFA+ factor interpretation, can uncover coherent, clinically relevant cancer subtypes with distinct driver pathways. It validates the core thesis that integrated analysis provides a more powerful, systems-level understanding of oncogenesis than any single data layer alone, directly informing prognostic stratification and targeted therapeutic strategies.

This whitepaper details the application of integrated multi-omics data—spanning genomics, transcriptomics, proteomics, metabolomics, and epigenomics—to revolutionize three key pillars of modern therapeutics: precision medicine, novel target identification, and computational drug repurposing. The core thesis is that the vertical and horizontal integration of these disparate data layers, powered by advanced computational pipelines, creates a systems-level understanding of disease pathophysiology that is greater than the sum of its parts. This integrated view is essential for moving beyond correlative associations to causative models that can predict patient-specific disease trajectories and therapeutic responses.

Multi-Omics in Precision Medicine: Stratifying Patients and Predicting Outcomes

Precision medicine leverages multi-omics to move from population-based to individual-based healthcare. The integration of germline DNA variants, somatic tumor mutations, gene expression signatures, and metabolic profiles enables the identification of distinct molecular subtypes within clinically homogeneous diseases, leading to more accurate prognostication and therapy selection.

Key Experimental Protocol: Multi-Omics Patient Stratification Pipeline

- Sample Collection & Processing: Collect matched tissue (e.g., tumor biopsy) and biofluid (blood, urine) samples from a longitudinal patient cohort. Preserve samples for DNA, RNA, protein, and metabolite extraction using standardized protocols (e.g., PAXgene for RNA, methanol:water for metabolites).

- Multi-Layer Profiling:

  - Genomics/Epigenomics: Perform Whole Genome Sequencing (WGS) or targeted panel sequencing. Use bisulfite sequencing for DNA methylation profiles and ChIP-seq for histone modification profiles.

  - Transcriptomics: Perform bulk or single-cell RNA-Seq. Align reads (STAR, HISAT2) and quantify expression with featureCounts, or quantify directly with a pseudoaligner such as Kallisto.

  - Proteomics/Phosphoproteomics: Use liquid chromatography with tandem mass spectrometry (LC-MS/MS) in Data-Dependent Acquisition (DDA) or Data-Independent Acquisition (DIA/SWATH) mode.

  - Metabolomics: Apply targeted (multiple reaction monitoring, MRM) and untargeted LC-MS or GC-MS platforms.

- Data Integration & Clustering: Employ multi-view integration methods (e.g., Similarity Network Fusion (SNF), iClusterBayes, MOFA+). These methods take patient-matched omics matrices and build a joint representation: a fused patient-similarity network for SNF, or shared latent variables for iClusterBayes and MOFA+. Patient clusters (subtypes) that are consistent across all data types are then identified from this joint representation.

- Clinical Association & Validation: Statistically associate the derived molecular subtypes with clinical endpoints (overall survival, drug response). Validate the subtypes and their predictive power in an independent patient cohort.
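The protocol does not name a survival test. A log-rank test is a standard way to compare overall survival between subtypes. The hypothetical helper below implements the two-group version in plain Python. Comparing more than two subtypes at once requires the k-group generalization, which is omitted here. The p-value relies on the fact that a 1-degree-of-freedom chi-square variable is a squared standard normal, so P(X > x) = erfc(sqrt(x/2)).

```python
import math


def logrank_two_groups(times, events, groups):
    """Two-group log-rank test for right-censored survival data.

    times: follow-up time per patient; events: 1 = death observed, 0 = censored;
    groups: 0 or 1 (e.g., subtype membership). Returns (chi-square, p-value).
    """
    data = list(zip(times, events, groups))
    observed_minus_expected = 0.0
    variance = 0.0
    for t in sorted({time for time, event, _ in data if event}):
        at_risk = [g for time, _, g in data if time >= t]
        n, n1 = len(at_risk), sum(at_risk)
        deaths = [g for time, event, g in data if time == t and event]
        d, d1 = len(deaths), sum(deaths)
        # Group-1 deaths minus the number expected if survival were identical.
        observed_minus_expected += d1 - d * n1 / n
        if n > 1:
            # Hypergeometric variance of group-1 deaths at this time point.
            variance += d * (n1 / n) * (1 - n1 / n) * (n - d) / (n - 1)
    stat = observed_minus_expected ** 2 / variance
    # 1-df chi-square is a squared standard normal: P(X > x) = erfc(sqrt(x / 2)).
    return stat, math.erfc(math.sqrt(stat / 2.0))
```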

Table 1: Key Quantitative Outcomes from a Multi-Omics Stratification Study in Breast Cancer (Hypothetical Data)

| Molecular Subtype | Prevalence | Defining Omics Features | 5-Year Survival | Recommended Therapy |
|---|---|---|---|---|
| Luminal-Metabolic | 35% | ESR1+, High lipid metabolism genes, Unique plasma acyl-carnitines | 92% | Endocrine therapy + Metformin |
| Basal-Inflammatory | 25% | TP53 mut, High immune infiltrate signal, IL-6 pathway proteins | 75% | Chemo + Anti-PD-L1 |
| Mesenchymal-Hypoxic | 20% | EMT signature, Hypermethylated CDH1 promoter, High lactate | 60% | Chemo + HIF inhibitor |
| HER2-Metabolic | 20% | ERBB2 amp, High glycolysis enzymes, Serum glutamate elevated | 85% | Anti-HER2 + HK2 inhibitor |

Title: Multi-Omics Precision Medicine Workflow

Target Identification: From Systems Networks to Causal Drivers

Integrated multi-omics shifts target discovery from single-gene, differential expression approaches to the identification of dysregulated networks and key causal hubs. By overlaying DNA variation with its functional consequences (RNA, protein, metabolites), researchers can prioritize master regulators with disease-driving potential.

Key Experimental Protocol: Causal Network Inference for Target Prioritization

- Multi-Omic Data Matrix Construction: Generate matrices of genotypes and their molecular QTL associations (eQTLs/pQTLs), gene expression, protein abundance, and phospho-site levels from disease vs. control tissues (steps as in Section 2.2).

- Network Construction: Build co-expression networks (WGCNA) or Bayesian networks from transcriptomic data. Independently, build Protein-Protein Interaction (PPI) networks using curated databases (STRING, BioGRID).

- Multi-Layer Integration for Causal Inference:

  - Use genetic variants (e.g., SNPs from GWAS) as instrumental variables in Mendelian Randomization (MR) analyses to infer causal relationships between molecular traits (gene expression -> protein -> metabolite) and clinical phenotypes.

  - Apply tools like OmicsIntegrator or CausalPath to integrate PPI networks with multi-omics perturbation data, identifying paths from genomic alterations to downstream phenotypic changes.

- Hub & Driver Identification: Calculate network centrality measures (degree, betweenness) within the integrated causal network. Genes/proteins that are high-centrality hubs, modulated by upstream genomic events, and connected to disease-relevant pathways are high-priority candidate targets.

- Experimental Validation: Perform CRISPR-Cas9 knockout or siRNA knockdown of the top candidate hub genes in relevant cellular or animal models. Assess the impact on downstream network nodes (other omics layers) and the disease phenotype.
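For the Mendelian randomization step, a common summary-statistic estimator is the inverse-variance-weighted (IVW) estimate. Each SNP instrument gives a Wald ratio: the SNP's effect on the outcome divided by its effect on the exposure (e.g., a gene's expression). The ratios are then combined using weights from their first-order standard errors. The sketch below is a fixed-effect IVW estimate under the usual MR assumptions: instruments are strong and independent, and they affect the outcome only through the exposure. The function name and input layout are assumptions. Dedicated MR packages also add sensitivity analyses (e.g., MR-Egger, weighted median) that this sketch omits.

```python
import math


def ivw_mr(beta_exposure, beta_outcome, se_outcome):
    """Fixed-effect inverse-variance-weighted Mendelian randomization estimate.

    beta_exposure: per-SNP effects on the molecular trait (e.g., eQTL betas);
    beta_outcome, se_outcome: per-SNP effects and standard errors on the disease.
    Returns (causal estimate, standard error, two-sided p-value).
    """
    ratios, weights = [], []
    for bx, by, se in zip(beta_exposure, beta_outcome, se_outcome):
        ratios.append(by / bx)                       # Wald ratio for this SNP
        weights.append(1.0 / (se / abs(bx)) ** 2)    # inverse of its variance
    w_sum = sum(weights)
    estimate = sum(w * r for w, r in zip(weights, ratios)) / w_sum
    se = math.sqrt(1.0 / w_sum)
    p = math.erfc(abs(estimate / se) / math.sqrt(2.0))  # normal approximation
    return estimate, se, p
```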

Table 2: Target Prioritization Scores from an Integrated Network Analysis in Alzheimer's Disease

| Candidate Gene | Network Degree | Mendelian Randomization p-value | Druggability (Pharos Score) | Multi-Omics Support |
|---|---|---|---|---|
| TYROBP | 42 | 2.1e-05 | High (0.92) | GWAS locus, Upregulated RNA & Protein, Core microglia network |
| PTK2B | 38 | 1.7e-04 | Medium (0.76) | GWAS locus, Phospho-site altered, Connects amyloid & tau pathways |
| CLU | 35 | 3.8e-03 | Low (0.45) | GWAS locus, Altered CSF protein, Apolipoprotein hub |
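The Network Degree column, and the betweenness measure named in the protocol, can both be computed directly from an edge list. The plain-Python sketch below assumes an undirected, unweighted integrated network and uses Brandes' algorithm for betweenness, returning unnormalized values. The function name is hypothetical. A graph library such as NetworkX provides equivalent, more complete functions.

```python
from collections import deque


def degree_and_betweenness(edges):
    """Degree and unnormalized betweenness centrality (Brandes' algorithm)
    for an undirected, unweighted graph given as (u, v) edge pairs."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    degree = {node: len(nbrs) for node, nbrs in adj.items()}
    betweenness = dict.fromkeys(adj, 0.0)
    for s in adj:
        # Breadth-first search from s, counting shortest paths (sigma).
        stack, preds = [], {node: [] for node in adj}
        sigma = dict.fromkeys(adj, 0)
        sigma[s] = 1
        dist = dict.fromkeys(adj, -1)
        dist[s] = 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies in order of decreasing distance from s.
        delta = dict.fromkeys(adj, 0.0)
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                betweenness[w] += delta[w]
    # Each undirected shortest path was counted once from each endpoint.
    return degree, {node: b / 2.0 for node, b in betweenness.items()}
```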

Title: Causal Target ID via Multi-Omics & Mendelian Randomization

Drug Repurposing: Leveraging Multi-Omic Disease Signatures

Computational drug repurposing uses multi-omics signatures to connect disease states to drugs that can reverse these signatures. By comparing disease-induced molecular perturbations to drug-induced perturbation databases, one can identify existing compounds with therapeutic potential for new indications.

Key Experimental Protocol: Signature-Based Drug Repurposing

- Define Disease & Drug Signatures:

  - Disease Signature: Derive a multi-omics differential expression profile (e.g., upregulated/downregulated genes, proteins, metabolites) from case-control studies (as in Section 2).

  - Drug Signature: Use publicly available perturbation resources such as LINCS L1000, which catalogs gene-expression profiles of cell lines treated with thousands of compounds and is queryable through the CLUE CMap platform. PRISM is a complementary resource that catalogs cell-line viability responses to compounds.

- Signature Comparison & Scoring: Use connectivity-mapping algorithms. The core method involves calculating a connectivity score (e.g., normalized enrichment score) between the disease signature and each drug signature. A highly negative score indicates the drug reverses the disease signature (therapeutic potential).

- Multi-Omic Consensus Scoring: Perform connectivity mapping independently for the transcriptomic, proteomic, and phosphoproteomic layers of the disease. Rank drugs by their consensus score across multiple omics layers to increase robustness.

- Mechanistic Validation & Pathway Analysis: For top candidate drugs, perform pathway enrichment analysis (GSEA, Ingenuity Pathway Analysis) on the overlapping genes/proteins between the disease and reversed drug signature to hypothesize the mechanism of action.

- In Vitro/In Vivo Testing: Test top-ranked compounds in disease-relevant cellular or animal models to validate efficacy in reversing the phenotype, not just the molecular signature.
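The signature comparison and consensus steps can be sketched in plain Python. The connectivity score below follows the Kolmogorov-Smirnov formulation of the original Connectivity Map. Separately for the disease up- and down-regulated gene lists, a KS statistic measures how far toward the top or bottom of a drug's ranked profile those genes fall. The score is set to zero when the two statistics have the same sign. Negative scores indicate that the drug reverses the disease signature. The sketch returns raw, unnormalized scores, whereas CLUE reports normalized scores on its own scale. The consensus step simply averages each drug's rank across omics layers. All function names are hypothetical.

```python
def ks_score(tag_positions, n):
    """CMap-style KS statistic for one tag list.

    tag_positions: 1-based ranks of the tag genes in a drug's ranked profile
    (rank 1 = most up-regulated by the drug); n: number of genes ranked.
    """
    v = sorted(tag_positions)
    t = len(v)
    a = max(j / t - v[j - 1] / n for j in range(1, t + 1))
    b = max(v[j - 1] / n - (j - 1) / t for j in range(1, t + 1))
    return a if a > b else -b


def connectivity_score(up_genes, down_genes, drug_ranking):
    """Raw connectivity score; negative means the drug reverses the disease signature."""
    rank = {g: i + 1 for i, g in enumerate(drug_ranking)}
    n = len(drug_ranking)
    ks_up = ks_score([rank[g] for g in up_genes if g in rank], n)
    ks_down = ks_score([rank[g] for g in down_genes if g in rank], n)
    if (ks_up > 0) == (ks_down > 0):
        return 0.0  # both lists shift the same way: no consistent connection
    return ks_up - ks_down


def consensus_ranking(scores_by_layer):
    """Order drugs by mean rank across layers (rank 1 = most negative score)."""
    drugs = list(next(iter(scores_by_layer.values())))
    mean_rank = dict.fromkeys(drugs, 0.0)
    for scores in scores_by_layer.values():
        for r, d in enumerate(sorted(drugs, key=lambda x: scores[x]), start=1):
            mean_rank[d] += r / len(scores_by_layer)
    return sorted(drugs, key=lambda d: mean_rank[d])
```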

Table 3: Top Drug Repurposing Candidates for NASH from Multi-Omics Connectivity Mapping

| Drug (Original Use) | Transcriptome Score | Proteome Score | Consensus Rank | Predicted Mechanism |
|---|---|---|---|---|
| Tegaserod (IBS) | -98.7 | -95.2 | 1 | Serotonin receptor modulation, reduces inflammation & fibrosis |
| Panobinostat (Myeloma) | -92.4 | -88.9 | 2 | HDAC inhibition, reverses metabolic & inflammatory gene sets |
| Dipyridamole (Antiplatelet) | -89.1 | -82.5 | 3 | Adenosine reuptake inhibition, improves lipid metabolism |

Title: Signature-Based Drug Repurposing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Platforms for Multi-Omics Integration Research

| Category / Item | Example Product/Platform | Primary Function in Multi-Omics Workflow |
|---|---|---|
| Sample Prep & Stabilization | PAXgene Blood RNA Tubes, Streck Cell-Free DNA Tubes | Preserves specific molecular analytes (RNA, DNA) in biofluids at collection, minimizing ex vivo degradation. |
| Nucleic Acid Library Prep | Illumina DNA Prep, SMARTer Stranded RNA-Seq Kit | Prepares sequencing libraries from DNA or RNA with high efficiency and low bias for genomic/transcriptomic profiling. |
| Protein Digestion & Labeling | S-Trap Micro Columns, TMTpro 16plex Isobaric Label Kit | Efficient protein digestion and multiplexing of samples for high-throughput, quantitative proteomics via LC-MS/MS. |
| Metabolite Extraction | Methanol:Water:Chloroform, Biocrates AbsoluteIDQ p400 HR Kit | Broad-spectrum metabolite extraction or targeted quantification of hundreds of pre-defined metabolites. |