CADD in 2025: Integrating AI, Physics, and Data to Revolutionize Drug Discovery

Abstract

This article provides a comprehensive overview of modern Computer-Aided Drug Design (CADD) for researchers and drug development professionals. It explores the foundational principles of CADD, details cutting-edge methodological advancements including the powerful synergy of machine learning and physics-based simulations, and addresses critical troubleshooting and optimization challenges. The content further examines rigorous validation frameworks and comparative analyses of computational methods, synthesizing key insights to guide the effective application of these tools in accelerating therapeutic development for areas like oncology and infectious diseases.

The Foundations of CADD: Core Principles and the Current Landscape

Computer-Aided Drug Design (CADD) represents a transformative force in modern pharmaceuticals, marking the field's evolution from traditional, empirical methods to a rational, targeted discovery process [1] [2]. Historically reliant on serendipitous discoveries and resource-intensive trial-and-error methodologies, drug discovery has been fundamentally reshaped by CADD's integration [1]. This interdisciplinary computational approach leverages principles from computational chemistry, molecular biology, bioinformatics, and cheminformatics to model, predict, and optimize interactions between small molecules and biological targets [2]. By understanding these atomic and molecular interactions, researchers can predict binding affinities, selectivity, and pharmacological effects before synthesizing and testing compounds in the laboratory [2]. The primary objective of CADD is to accelerate the drug discovery process by helping medicinal chemists guide the strategic choices of drug candidates, significantly reducing research costs and development cycles while improving the precision of hit identification and lead optimization [3] [4]. CADD now provides support for experiments throughout the research process of a drug candidate, from the identification of biological targets to the first pre-clinical studies, establishing itself as a core pillar of contemporary drug discovery pipelines [4] [2].

Core Methodologies and Experimental Protocols

The versatility and effectiveness of CADD arise from a suite of sophisticated computational techniques, which are broadly categorized into two complementary approaches: Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD) [1] [4]. The choice between these methodologies depends primarily on the availability of either the three-dimensional structure of the biological target or known active ligand information.

Structure-Based Drug Design (SBDD)

SBDD relies on the three-dimensional structural information of biological targets, such as proteins or nucleic acids, to design or optimize drug candidates [2]. This approach begins with obtaining a reliable 3D structure of the target, either through experimental means or computational modeling when experimental data is unavailable [2].

Protocol 2.1.1: Molecular Docking and Virtual Screening

- Objective: To predict the binding orientation of small molecules within a target's binding pocket and identify potential hit compounds from large chemical libraries.

- Materials:

- Target protein structure (from PDB, AlphaFold, etc.)

- Library of small molecule compounds (in SDF or MOL2 format)

- Docking software (AutoDock Vina, Glide, DOCK, MOE)

- Procedure:

- Target Preparation:

- Obtain the 3D structure of the protein target from the Protein Data Bank (PDB) or via computational prediction tools like AlphaFold [1] [4].

- Remove water molecules and co-crystallized ligands, unless critical for binding.

- Add hydrogen atoms and assign appropriate protonation states to amino acid residues at physiological pH.

- Define the binding site coordinates, typically based on known ligand binding locations or through binding site detection algorithms.

- Ligand Preparation:

- Obtain 3D structures of compounds from chemical databases (e.g., ZINC, PubChem).

- Generate plausible tautomers and stereoisomers.

- Minimize ligand energy using molecular mechanics force fields to ensure proper geometry.

- Molecular Docking:

- Configure docking parameters (search space, exhaustiveness).

- Execute the docking simulation to generate multiple binding poses for each ligand.

- Score each pose using the software's scoring function to estimate binding affinity.

- Pose Analysis and Selection:

- Visually inspect top-scoring poses for key interactions (hydrogen bonds, hydrophobic contacts, pi-stacking).

- Select compounds with favorable binding modes and scores for experimental validation.
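
The binding-site definition and docking-parameter steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed setup: it assumes the site is defined by the coordinates of a known co-crystallized ligand, and the 5 Å padding, file names, and coordinates are hypothetical. The output follows the key = value layout of an AutoDock Vina configuration file.

```python
from typing import Iterable, Tuple

Coord = Tuple[float, float, float]


def docking_box(ligand_coords: Iterable[Coord], padding: float = 5.0):
    """Return (center, size) of an axis-aligned box enclosing the ligand.

    `padding` (angstroms) is added on every side; 5.0 is an illustrative
    default, not a recommended setting.
    """
    coords = list(ligand_coords)
    if not coords:
        raise ValueError("need at least one atom coordinate")
    mins = [min(c[i] for c in coords) for i in range(3)]
    maxs = [max(c[i] for c in coords) for i in range(3)]
    center = tuple((lo + hi) / 2.0 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2.0 * padding for lo, hi in zip(mins, maxs))
    return center, size


def vina_config(receptor: str, ligand: str, center, size,
                exhaustiveness: int = 8) -> str:
    """Render the search box as text in the layout of a Vina config file."""
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{axis} = {value:.3f}" for axis, value in zip("xyz", center)]
    lines += [f"size_{axis} = {value:.3f}" for axis, value in zip("xyz", size)]
    lines.append(f"exhaustiveness = {exhaustiveness}")
    return "\n".join(lines)


# Hypothetical heavy-atom coordinates of a reference ligand (angstroms).
reference_ligand = [(10.0, 12.0, 8.0), (12.5, 14.0, 9.0), (11.0, 16.0, 11.0)]
center, size = docking_box(reference_ligand)
print(vina_config("receptor.pdbqt", "ligand.pdbqt", center, size))
```

A larger padding widens the search space at the cost of longer runs, so the value is usually tuned per target.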

Protocol 2.1.2: Binding Free Energy Calculation using Molecular Dynamics

- Objective: To achieve a more accurate, quantitative prediction of protein-ligand binding affinity by accounting for dynamic effects and solvation.

- Materials:

- Docked protein-ligand complex structure

- Molecular dynamics software (GROMACS, NAMD, AMBER)

- High-performance computing (HPC) resources

- Procedure:

- System Setup:

- Solvate the protein-ligand complex in a water box (e.g., TIP3P water model).

- Add ions to neutralize the system and achieve physiological salt concentration.

- Energy Minimization:

- Perform steepest descent or conjugate gradient minimization to remove steric clashes.

- Equilibration:

- Conduct gradual equilibration under NVT (constant Number, Volume, Temperature) and NPT (constant Number, Pressure, Temperature) ensembles to stabilize temperature and pressure.

- Production MD Simulation:

- Run an extended MD simulation (nanoseconds to microseconds) to sample conformational space.

- Save trajectory frames at regular intervals for analysis.

- Free Energy Analysis:

- Use methods like MM-PBSA/GBSA or free energy perturbation (FEP) on the trajectory to calculate binding free energies [2].

- Analyze results to rank compounds by predicted binding affinity.
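
For orientation, the quantity estimated by end-point methods such as MM-PBSA/GBSA is commonly written as follows. This is the general textbook form, with angle brackets denoting averages over the saved trajectory frames; individual software packages may differ in how each term, particularly the entropy term, is computed or whether it is included at all.

```latex
\Delta G_{\text{bind}} \approx
  \langle G_{\text{complex}} \rangle
  - \langle G_{\text{receptor}} \rangle
  - \langle G_{\text{ligand}} \rangle,
\qquad
G = E_{\text{MM}} + G_{\text{solv}}^{\text{polar}} + G_{\text{solv}}^{\text{nonpolar}} - T S
```

Here $E_{\text{MM}}$ is the molecular mechanics energy, the two solvation terms come from the implicit solvent model (Poisson-Boltzmann or Generalized Born plus a surface-area term), and $TS$ is the conformational entropy contribution.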

Ligand-Based Drug Design (LBDD)

LBDD is applied when the 3D structure of the target is unknown, leveraging the information from a set of ligands with known biological activity under the hypothesis that structurally similar molecules exhibit similar pharmacological properties [5] [2].

Protocol 2.2.1: Quantitative Structure-Activity Relationship (QSAR) Modeling

- Objective: To build a predictive model that correlates chemical structure features with biological activity.

- Materials:

- A curated dataset of compounds with associated bioactivity data (e.g., IC50, Ki)

- Chemoinformatics software (KNIME, MOE, Python/R with appropriate libraries)

- Procedure:

- Data Curation:

- Collect structures and biological activity data for a congeneric series of compounds.

- Ensure data quality by removing duplicates and correcting errors.

- Molecular Descriptor Calculation:

- Compute a wide range of molecular descriptors (e.g., physicochemical, topological, electronic) for all compounds.

- Dataset Division:

- Split the dataset into training set (~70-80%) for model building and test set (~20-30%) for model validation.

- Model Building:

- Use machine learning algorithms (e.g., Partial Least Squares, Random Forest, Support Vector Machines) on the training set to relate descriptors to activity.

- Model Validation:

- Apply the model to the test set to predict the activity of unseen compounds.

- Assess model performance using statistical metrics (e.g., R², Q², RMSE).

- Application:

- Use the validated model to predict the activity of new, untested compounds and prioritize them for synthesis.
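
The split, fit, and validate steps above can be illustrated with a deliberately small sketch. It assumes a single descriptor and an ordinary least-squares line as a stand-in for the multivariate methods named in the protocol (PLS, Random Forest, SVM); the data points are hypothetical and only the Python standard library is used.

```python
import math
import random


def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle rows reproducibly and return (train, test)."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_line(xs, ys):
    """Ordinary least squares for activity = slope * descriptor + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("descriptor is constant in the training set")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def rmse(y_true, y_pred):
    """Root-mean-square error between observed and predicted activities."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("observed activities are constant")
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    return 1.0 - ss_res / ss_tot


# Hypothetical (descriptor, pIC50) pairs, one descriptor value per compound.
data = [(1.0, 5.1), (1.5, 5.6), (2.0, 5.9), (2.5, 6.6), (3.0, 6.9),
        (3.5, 7.4), (4.0, 8.1), (4.5, 8.4), (5.0, 9.0), (5.5, 9.6)]
train, test = train_test_split(data, test_fraction=0.2, seed=0)
slope, intercept = fit_line([x for x, _ in train], [y for _, y in train])
predicted = [slope * x + intercept for x, _ in test]
observed = [y for _, y in test]
print(f"test RMSE = {rmse(observed, predicted):.3f}, "
      f"test R^2 = {r_squared(observed, predicted):.3f}")
```

Q² is usually obtained the same way as R², but from cross-validated predictions on the training set rather than from a held-out test set.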

Protocol 2.2.2: Pharmacophore Modeling and Virtual Screening

- Objective: To identify the essential structural features responsible for biological activity and use this model to screen compound libraries.

- Materials:

- A set of known active compounds and (optionally) inactive compounds

- Pharmacophore modeling software (MOE, LigandScout)

- Procedure:

- Conformational Analysis:

- Generate a set of low-energy conformers for each active ligand.

- Model Generation:

- Superimpose the active conformers and identify common chemical features (e.g., hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings, charged groups).

- Create a pharmacophore hypothesis that defines the spatial arrangement of these features.

- Model Validation:

- Test the model's ability to discriminate between known active and inactive compounds.

- Virtual Screening:

- Use the validated pharmacophore model as a 3D query to search large compound databases.

- Retrieve and visually inspect hits that match the pharmacophore features.

- Select promising hits for experimental testing.
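
To make the idea of a pharmacophore used as a 3D query concrete, the sketch below checks whether a conformer contains features of the right types at the right mutual distances. It is a simplified, alignment-free illustration under stated assumptions: features are plain (type, coordinate) pairs, the 1 Å tolerance is arbitrary, and matching on distances alone cannot distinguish mirror-image arrangements. Dedicated pharmacophore software uses richer feature definitions and explicit alignment.

```python
from itertools import permutations
from math import dist


def matches_pharmacophore(hypothesis, features, tol=1.0):
    """Return True if some subset of `features` reproduces the hypothesis.

    Both arguments are lists of (feature_type, (x, y, z)). A match requires
    identical feature types and all pairwise distances within `tol` angstroms.
    """
    k = len(hypothesis)
    for candidate in permutations(features, k):
        if any(h[0] != c[0] for h, c in zip(hypothesis, candidate)):
            continue  # feature types do not line up for this assignment
        if all(
            abs(dist(hypothesis[i][1], hypothesis[j][1])
                - dist(candidate[i][1], candidate[j][1])) <= tol
            for i in range(k) for j in range(i + 1, k)
        ):
            return True
    return False


# Hypothetical three-point hypothesis derived from superimposed actives.
hypothesis = [
    ("donor", (0.0, 0.0, 0.0)),
    ("acceptor", (3.0, 0.0, 0.0)),
    ("aromatic", (0.0, 4.0, 0.0)),
]

# Hypothetical conformer: same geometry, translated, plus one extra feature.
conformer = [
    ("donor", (10.0, 10.0, 10.0)),
    ("acceptor", (13.0, 10.0, 10.0)),
    ("aromatic", (10.0, 14.0, 10.0)),
    ("hydrophobe", (15.0, 15.0, 15.0)),
]
print(matches_pharmacophore(hypothesis, conformer))
```

The exhaustive permutation search is acceptable for hypotheses with a handful of features; larger queries would need pruning.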

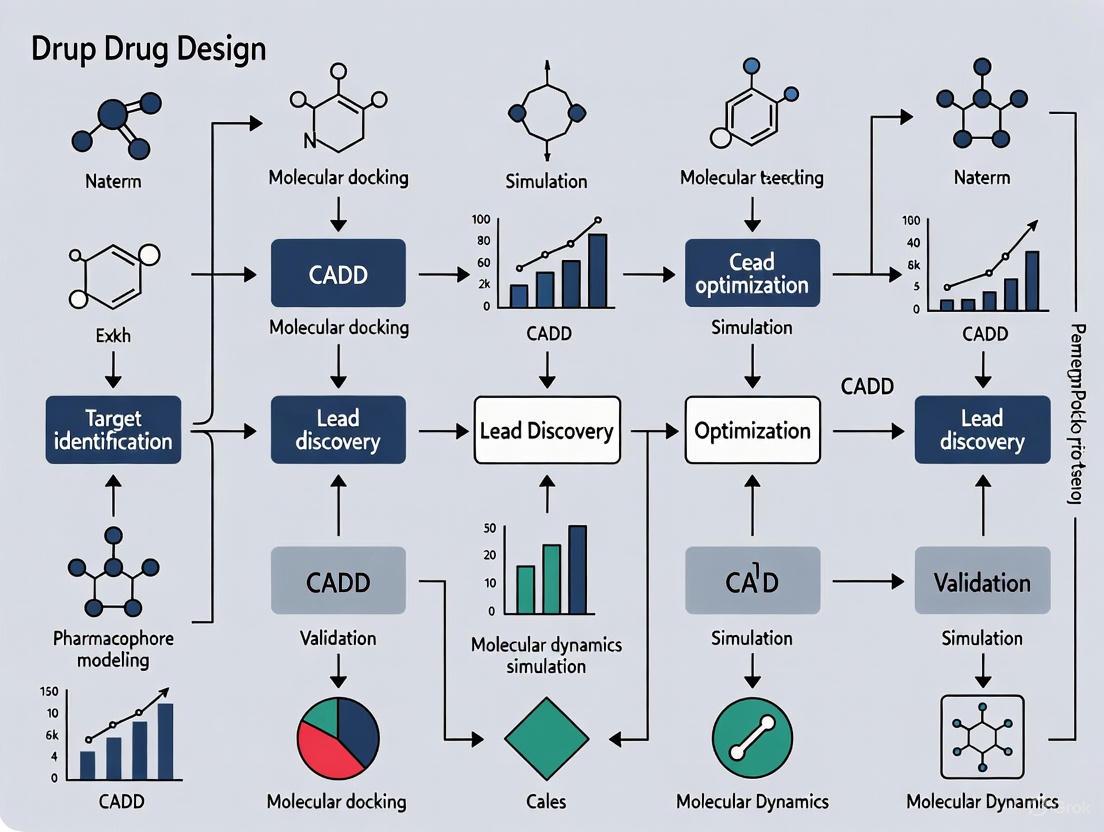

The following workflow diagram illustrates the integrated application of these SBDD and LBDD methodologies within a modern drug discovery pipeline.

Diagram 1: Integrated CADD Drug Discovery Workflow. This diagram outlines the strategic decision-making process between SBDD and LBDD approaches based on data availability, converging on virtual screening for hit identification.

Essential Research Reagents and Computational Tools

The effective application of CADD methodologies relies on a sophisticated toolkit of software platforms, databases, and computational resources. The table below catalogs key research reagent solutions essential for executing the protocols described in this document.

Table 1: Essential Research Reagent Solutions for CADD

| Tool/Resource Name | Type | Primary Function | Application in Protocol |

|---|---|---|---|

| AlphaFold [1] [4] | Structure Prediction | Predicts 3D protein structures from amino acid sequences with high accuracy. | SBDD: Provides reliable target structures when experimental ones are unavailable. |

| AutoDock Vina [1] | Docking Software | Fast, accurate molecular docking and virtual screening. | Protocol 2.1.1: Predicting ligand binding poses and affinities. |

| GROMACS [1] | Molecular Dynamics | High-performance MD simulation package for simulating biomolecular systems. | Protocol 2.1.2: Running production MD simulations for free energy calculations. |

| MOE (Molecular Operating Environment) [4] | Integrated Software Suite | Comprehensive platform for structure- and ligand-based design, QSAR, and simulations. | Multiple: Used across SBDD and LBDD protocols for docking, pharmacophore modeling, and QSAR. |

| KNIME [4] | Workflow Platform | Visual platform for creating data science workflows and automating computational tasks. | Protocol 2.2.1: Building and validating QSAR models; automating virtual screening pipelines. |

| ZINC/ChEMBL | Compound Database | Publicly accessible databases of commercially available and bioactive compounds. | Protocol 2.1.1 & 2.2.2: Source of compound libraries for virtual screening. |

| Protein Data Bank (PDB) | Structure Repository | Central repository for experimentally determined 3D structures of biological macromolecules. | SBDD: Primary source of target structures for docking and analysis. |

Quantitative Data and Performance Metrics

The impact of CADD is demonstrated through both its predictive accuracy in specific tasks and its overall contribution to streamlining the drug discovery pipeline. The following tables give a qualitative comparison of key computational tools and techniques.

Table 2: Performance Comparison of Molecular Docking Tools [1]

| Tool | Primary Application | Key Advantages | Common Limitations |

|---|---|---|---|

| AutoDock Vina | Predicting binding affinities and orientations. | Fast, accurate, and easy to use. | May be less accurate for highly flexible systems. |

| GOLD | Predicting binding for flexible ligands. | High accuracy for handling ligand flexibility. | Requires a commercial license and can be expensive. |

| Glide | High-accuracy docking and virtual screening. | Highly accurate and integrated with Schrödinger suite. | Requires commercial Schrödinger suite. |

| DOCK | Versatile docking and virtual screening. | Versatile; can be used for both docking and virtual screening. | Can be slower than other tools. |

| SwissDock | Web-based docking predictions. | Easy to use and accessible online. | May not be as accurate for complex systems. |

Table 3: Summary of Key CADD Techniques and Applications

| Computational Method | Theoretical Basis | Output/Deliverable | Impact on Discovery Process |

|---|---|---|---|

| Molecular Docking [1] [2] | Molecular mechanics, scoring functions. | Predicted binding pose and affinity score. | Rapid identification of potential hits from large libraries; suggests initial binding hypotheses. |

| Molecular Dynamics (MD) [1] [6] | Statistical mechanics, Newtonian physics. | Time-evolution trajectory of the system; free energy estimates. | Provides dynamic insight into binding stability, mechanisms, and more accurate affinity predictions (MM-PBSA/GBSA, FEP). |

| QSAR [1] [5] | Statistical modeling, Machine Learning. | Predictive model linking chemical descriptors to activity. | Guides lead optimization by predicting the activity of unsynthesized analogs. |

| Pharmacophore Modeling [5] [2] | Chemical feature perception and alignment. | 3D query representing essential interactions for bioactivity. | Enables scaffold hopping and identification of novel chemotypes via virtual screening. |

| Virtual Screening [1] [3] | Docking, pharmacophore, or similarity searching. | A prioritized list of candidate molecules for experimental testing. | Dramatically reduces the number of compounds requiring costly experimental HTS. |

Computer-Aided Drug Design has unequivocally transitioned from a supplementary tool to a central pillar of drug discovery [1] [7]. By integrating computational methodologies across the entire discovery pipeline—from target analysis and hit identification to lead optimization and ADMET prediction—CADD provides a rational framework that significantly reduces the time and cost associated with bringing new therapeutics to market [5] [4]. The synergistic application of structure-based and ligand-based approaches, powered by advancements in artificial intelligence and ever-increasing computational resources, ensures that CADD will continue to be a critical driving force in the development of safer and more effective medicines [3] [2].

In the field of Computer-Aided Drug Design (CADD), two principal paradigms have emerged as cornerstones for modern therapeutic discovery: structure-based drug design (SBDD) and ligand-based drug design (LBDD) [8] [9]. These methodologies represent complementary approaches to the same fundamental challenge: efficiently identifying and optimizing chemical compounds that effectively modulate biological targets. SBDD relies on the three-dimensional structural information of the target protein, typically obtained through experimental methods like X-ray crystallography or computational predictions, to guide the design of molecules that complement the binding site [8] [10]. In contrast, LBDD leverages information from known active compounds to infer properties of new potential drugs when the target structure is unknown or difficult to obtain [8] [11].

The strategic selection and integration of these approaches have become increasingly critical in pharmaceutical research, as they offer pathways to reduce discovery timelines and costs while improving the quality of candidate compounds [12] [13]. This article provides a comprehensive comparison of these dominant methodologies, detailing their respective use cases, experimental protocols, and emerging integration strategies that leverage the strengths of both paradigms.

Core Principles and Comparative Analysis

Structure-Based Drug Design (SBDD)

SBDD is fundamentally rooted in the principle of molecular recognition, designing compounds that sterically and chemically complement the target binding site [8] [10]. This approach requires detailed knowledge of the three-dimensional architecture of the biological target, typically a protein or nucleic acid involved in a disease process [14]. The process begins with obtaining a high-resolution structure of the target protein, which can be achieved through experimental techniques including X-ray crystallography, nuclear magnetic resonance (NMR) spectroscopy, and cryo-electron microscopy (cryo-EM), or through computational predictions using tools like AlphaFold [10] [11] [14].

Once the structure is obtained, researchers analyze the binding site characteristics including shape, electrostatic properties, and hydrogen-bonding capabilities [14]. This structural information enables rational drug design through computational techniques such as molecular docking, where potential drug candidates are virtually screened for their ability to bind the target, and molecular dynamics simulations, which assess the stability of proposed protein-ligand complexes [8] [11]. The primary advantage of SBDD lies in its ability to provide atomic-level insights into drug-target interactions, facilitating the design of highly specific compounds with optimized binding affinity [8] [10].

Ligand-Based Drug Design (LBDD)

LBDD approaches are employed when three-dimensional structural information of the target is unavailable, but data about molecules that interact with the target exist [8] [11]. This methodology operates on the similarity-property principle, which posits that structurally similar molecules are likely to exhibit similar biological activities [11] [12]. LBDD techniques analyze the physicochemical properties and structural features of known active compounds to build models that predict the activity of new molecules [8].

Key LBDD methods include Quantitative Structure-Activity Relationship (QSAR) modeling, which establishes mathematical relationships between molecular descriptors and biological activity, and pharmacophore modeling, which identifies the essential steric and electronic features necessary for molecular recognition [8] [11]. These approaches enable virtual screening of compound libraries to identify novel candidates that share critical characteristics with known actives, even when their molecular scaffolds differ significantly (a process known as "scaffold hopping") [11]. The major strength of LBDD is its independence from target structure, making it applicable to targets that are difficult to characterize structurally, such as membrane proteins [8].

Comparative Analysis of SBDD and LBDD

Table 1: Comparative Analysis of Structure-Based and Ligand-Based Drug Design Approaches

| Aspect | Structure-Based Drug Design (SBDD) | Ligand-Based Drug Design (LBDD) |

|---|---|---|

| Primary Requirement | 3D structure of target protein [8] | Known active ligands [8] |

| Key Techniques | Molecular docking, molecular dynamics simulations, free energy perturbation [8] [11] | QSAR, pharmacophore modeling, similarity searching [8] [11] |

| Typical Applications | Rational design of novel scaffolds, affinity optimization, selectivity engineering [8] [14] | Scaffold hopping, lead expansion, analog series optimization [11] |

| Key Advantages | Provides atomic-level interaction details; enables de novo design [8] [10] | Fast and computationally efficient; no need for protein structure [8] |

| Major Limitations | Dependent on quality and relevance of protein structure; computationally intensive [8] [14] | Limited by chemical space of known actives; may miss novel mechanisms [8] [12] |

Table 2: Structural Biology Techniques for SBDD

| Technique | Resolution Range | Sample Requirements | Key Applications in SBDD |

|---|---|---|---|

| X-ray Crystallography | 1.5-3.5 Å [10] | High-quality protein crystals [8] [10] | Atomic-level binding site analysis; ligand co-crystallization [8] |

| Cryo-EM | 3.0-3.5 Å (typically) [10] | Vitrified protein solutions [10] | Membrane proteins; large complexes [8] [10] |

| NMR Spectroscopy | 2.5-4.0 Å [10] | Isotopically labeled proteins in solution [8] [10] | Studying protein dynamics; flexible systems [8] |

| Computational Prediction | Variable (e.g., AlphaFold) [11] [14] | Protein sequence [14] | Targets resistant to experimental structure determination [11] |

Experimental Protocols

Protocol 1: Structure-Based Virtual Screening

Objective: To identify novel hit compounds through molecular docking against a known protein structure.

Materials and Reagents:

- Target Protein Structure: Experimentally determined (PDB format) or computationally predicted [11] [14]

- Compound Library: Commercially available (e.g., ZINC, Enamine) or proprietary collections in SDF or PDBQT format [15]

- Docking Software: AutoDock Vina, Glide, GOLD, or similar [15]

- Computational Resources: High-performance computing cluster for large-scale screening [13]

Procedure:

- Target Preparation:

- Obtain the 3D structure of the target protein from the Protein Data Bank or through prediction tools like AlphaFold [11] [14].

- Remove water molecules and extraneous ligands, except those critical for binding [15].

- Add hydrogen atoms and optimize hydrogen bonding networks using tools like MolProbity [14].

- Define the binding site coordinates based on known ligand positions or predicted active sites [15].

- Ligand Library Preparation:

- Molecular Docking:

- Post-Docking Analysis:

- Validation:

Protocol 2: Ligand-Based Virtual Screening Using QSAR

Objective: To predict compound activity using quantitative structure-activity relationship models.

Materials and Reagents:

- Training Set Compounds: Known active and inactive compounds with measured biological activities [8] [11]

- Test Compounds: Database of compounds to be screened and prioritized [15]

- Chemical Descriptor Software: PaDEL-Descriptor, RDKit, or similar [15]

- Modeling Environment: Python/R with machine learning libraries (scikit-learn, TensorFlow) [16] [15]

Procedure:

- Data Set Curation:

- Compile a diverse set of compounds with reliable activity data (IC50, Ki, or EC50 values) [12].

- Apply chemical standardization to normalize structures (tautomer standardization, salt removal) [12].

- Divide data into training (80%) and test (20%) sets using rational splitting methods to ensure chemical space coverage [15].

- Molecular Descriptor Calculation:

- Model Development:

- Virtual Screening:

- Model Interpretation and Validation:

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for CADD

| Reagent/Tool | Function | Example Applications |

|---|---|---|

| Protein Data Bank (PDB) | Repository of experimentally determined protein structures [10] | Source of target structures for SBDD [10] |

| ZINC Database | Curated collection of commercially available compounds [15] | Virtual screening compound libraries [15] |

| AutoDock Vina | Molecular docking software [15] | Predicting ligand binding modes and affinities [15] |

| PaDEL-Descriptor | Molecular descriptor calculation software [15] | Generating chemical features for QSAR modeling [15] |

| AlphaFold | Protein structure prediction tool [11] | Generating models when experimental structures are unavailable [11] |

Integrated Workflows and Combined Approaches

Sequential Integration Strategies

The sequential integration of LBDD and SBDD methods represents a powerful funnel-based approach that maximizes efficiency in virtual screening [11] [17]. In this workflow, large compound libraries are first processed using fast ligand-based methods to reduce the chemical space, after which the pre-filtered subset undergoes more computationally intensive structure-based analysis [11] [12]. This strategy is particularly valuable when dealing with ultra-large chemical libraries containing billions of compounds, where exhaustive structure-based screening would be prohibitively resource-intensive [12].

A typical sequential workflow proceeds through these stages:

- Initial Filtering: Large compound collections are screened using 2D or 3D similarity searches against known active compounds or through pre-trained QSAR models [11] [17].

- Property-Based Filtering: Compounds passing the initial screen are evaluated for drug-like properties, including physicochemical characteristics, potential toxicity, and synthetic accessibility [12].

- Structure-Based Prioritization: The refined compound set undergoes molecular docking against the target structure [11] [17].

- Consensus Scoring: Final candidate selection incorporates results from both ligand-based and structure-based approaches [11].
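
The first three stages of this funnel can be sketched as follows. The example is illustrative only: fingerprints are represented as sets of on-bits, the similarity cutoff and property thresholds are arbitrary choices, and `dock_score` is a hypothetical callable standing in for a real docking run. The final consensus step is omitted here.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def sequential_screen(library, active_fps, dock_score,
                      sim_cutoff=0.5, max_mw=500.0, max_logp=5.0, top_n=10):
    """Return compound ids surviving the funnel, best docking score first."""
    if not active_fps:
        raise ValueError("need at least one known active")
    # Stage 1: cheap ligand-based filter against the known actives.
    similar = [c for c in library
               if max(tanimoto(c["fp"], a) for a in active_fps) >= sim_cutoff]
    # Stage 2: simple property filter (illustrative thresholds).
    drug_like = [c for c in similar
                 if c["mw"] <= max_mw and c["logp"] <= max_logp]
    # Stage 3: dock only the survivors; lower scores are treated as better.
    ranked = sorted(drug_like, key=dock_score)
    return [c["id"] for c in ranked[:top_n]]


# Hypothetical library and precomputed docking scores (kcal/mol).
actives = [{1, 2, 3, 4}]
library = [
    {"id": "A", "fp": {1, 2, 3, 4}, "mw": 300.0, "logp": 2.0},
    {"id": "B", "fp": {1, 2, 3, 9}, "mw": 600.0, "logp": 2.5},
    {"id": "C", "fp": {7, 8}, "mw": 250.0, "logp": 1.0},
    {"id": "D", "fp": {1, 2, 3}, "mw": 400.0, "logp": 3.0},
]
precomputed = {"A": -7.0, "B": -8.0, "C": -6.0, "D": -9.0}
print(sequential_screen(library, actives, lambda c: precomputed[c["id"]]))
```

Because the expensive step runs only on compounds that pass the earlier filters, the cost of docking scales with the size of the filtered subset rather than the full library.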

This sequential approach was effectively demonstrated in the CACHE Challenge #1, where participants sought ligands for the LRRK2-WDR domain [12]. Successful teams typically employed initial ligand-based filtering to narrow the enormous chemical space before applying structure-based methods to the reduced compound sets, highlighting the practical utility of this integrated strategy in real-world drug discovery scenarios [12].

Parallel and Hybrid Screening Approaches

Advanced screening pipelines increasingly employ parallel implementation of LBDD and SBDD methods, where compounds are simultaneously evaluated using both approaches [11] [17]. The independent results are subsequently combined using consensus scoring frameworks that leverage the complementary strengths of each method [11] [12]. This strategy helps mitigate the limitations inherent in individual approaches and increases the probability of identifying authentic active compounds [17].

Key parallel implementation strategies include:

- Parallel Scoring: Selecting the top-ranked compounds from both ligand-based similarity rankings and structure-based docking scores without requiring consensus between them [17]. This approach increases sensitivity and the likelihood of recovering potential actives, particularly when one method underperforms due to technical limitations [11].

- Hybrid Scoring: Multiplying or averaging normalized scores from different methods to create a unified ranking system [11] [17]. This approach favors compounds ranked highly by both methodologies, thereby prioritizing specificity and increasing confidence in selected candidates [17].

- Ensemble Methods: Using multiple protein conformations or diverse ligand sets to capture the dynamic nature of binding sites and the heterogeneity of active chemical series [11]. These ensembles provide a more comprehensive representation of the drug-target interaction landscape compared to single-structure or single-template approaches [11].
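
The difference between parallel and hybrid scoring can be shown with a short sketch. It assumes two score dictionaries keyed by compound id, one holding similarity scores (higher is better) and one holding docking energies (lower is better); min-max normalization and simple averaging are illustrative choices, and the data are hypothetical.

```python
def minmax(scores, higher_is_better=True):
    """Scale scores to [0, 1] so that 1 is always the most favorable value."""
    lo, hi = min(scores.values()), max(scores.values())
    span = hi - lo
    scaled = {k: ((v - lo) / span if span else 0.5) for k, v in scores.items()}
    if not higher_is_better:
        scaled = {k: 1.0 - v for k, v in scaled.items()}
    return scaled


def parallel_selection(similarity, docking, k):
    """Union of the top-k compounds from each method (favors sensitivity)."""
    top_lb = sorted(similarity, key=similarity.get, reverse=True)[:k]
    top_sb = sorted(docking, key=docking.get)[:k]
    return set(top_lb) | set(top_sb)


def hybrid_ranking(similarity, docking):
    """Rank by the mean of normalized scores (favors agreement)."""
    common = similarity.keys() & docking.keys()
    if not common:
        return []
    lb = minmax({k: similarity[k] for k in common})
    sb = minmax({k: docking[k] for k in common}, higher_is_better=False)
    combined = {k: (lb[k] + sb[k]) / 2.0 for k in common}
    # Sort by descending score, breaking ties by id for reproducibility.
    return sorted(combined.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical scores for four compounds.
similarity = {"a": 0.9, "b": 0.2, "c": 0.6, "d": 0.1}
docking = {"a": -6.0, "b": -10.0, "c": -9.0, "d": -5.0}
print(parallel_selection(similarity, docking, k=1))
print([cid for cid, _ in hybrid_ranking(similarity, docking)])
```

In this toy data, parallel selection keeps the best compound from each method even though they disagree, while the hybrid ranking puts a compound that scores reasonably well on both methods first.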

Diagram 1: Combined LBVS and SBVS workflow. This diagram illustrates a parallel virtual screening approach where ligand-based and structure-based methods are applied simultaneously, with results combined through consensus scoring to identify high-confidence hit compounds [11] [17].

Case Study: Identification of Natural Tubulin Inhibitors

A recent study exemplifies the powerful integration of structure-based and ligand-based approaches in the discovery of natural inhibitors targeting the human αβIII tubulin isotype, a protein implicated in cancer drug resistance [15]. This research employed a comprehensive methodology that leveraged the complementary strengths of both paradigms to identify promising therapeutic candidates.

The integrated workflow proceeded through these key stages:

- Target Preparation: Researchers developed a homology model of the human αβIII tubulin isotype using Modeller software, based on a bovine tubulin template with 100% sequence identity [15].

- Structure-Based Virtual Screening: The team screened 89,399 natural compounds from the ZINC database against the Taxol-binding site of tubulin using AutoDock Vina, selecting the top 1,000 hits based on binding energy [15].

- Machine Learning Classification: A supervised machine learning approach was implemented to distinguish between active and inactive compounds based on chemical descriptor properties [15]. The model was trained on known Taxol-site targeting drugs (actives) and non-Taxol targeting drugs (inactives) with decoys generated by the DUD-E server [15].

- ADMET and Biological Property Prediction: The top candidates were evaluated for absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties, along with Prediction of Activity Spectra for Substances (PASS) analysis to anticipate potential biological activities [15].

- Validation through Molecular Dynamics: The final four hit compounds underwent molecular dynamics simulations to assess complex stability and binding modes, with binding affinity calculations confirming strong interactions with the target [15].

This case study demonstrates how the sequential application of structure-based and ligand-based methods, augmented by machine learning, can efficiently identify promising drug candidates with specific target affinity [15]. The integrated approach allowed researchers to leverage the precision of structure-based docking while incorporating the pattern recognition capabilities of ligand-based modeling, ultimately identifying natural compounds with potential to overcome drug resistance in cancer therapy [15].

Structure-based and ligand-based drug design represent complementary paradigms in modern computational drug discovery, each with distinctive strengths, limitations, and optimal application domains [8] [11]. SBDD provides atomic-level insights into drug-target interactions but requires high-quality structural information, while LBDD offers efficient screening capabilities based on known active compounds without requiring target structure [8]. The strategic integration of these approaches through sequential, parallel, or hybrid implementation creates synergistic workflows that enhance hit identification efficiency and quality [11] [17].

Future directions in CADD point toward increasingly sophisticated integration of these methodologies, powered by advances in artificial intelligence and machine learning [12] [16]. Deep learning models that simultaneously leverage both structural and ligand information, such as the DRAGONFLY framework for de novo drug design, represent the next frontier in computational drug discovery [16]. Furthermore, the growing availability of predicted protein structures through tools like AlphaFold is expanding the applicability of SBDD to previously inaccessible targets [11] [14]. As these computational approaches continue to evolve, the strategic combination of structure-based and ligand-based methodologies will remain essential for addressing the complex challenges of modern drug discovery and development.

The Drug Development Burden: A Quantitative Analysis

The traditional drug discovery and development process is characterized by immense financial investment and extended timelines, presenting significant market challenges that Computer-Aided Drug Design (CADD) aims to address.

Table 1: Drug Development Cost and Timeline Analysis

| Development Phase | Average Duration | Average Cost (USD) | Probability of Success |

|---|---|---|---|

| Discovery & Preclinical | 1 - 6 years [18] | $15 - $100 million [18] | Preclinical to Phase I Transition: ~10% [18] |

| Clinical Trials (Phases I-III) | 6 - 7 years [18] | $435 million (Phase I: $25M; Phase II: $60M; Phase III: $350M) [18] | Phase I to Approval: ~12% [19] |

| FDA Review & Approval | 0.5 - 2 years [18] | $2 - $3 million (application fee) [18] | N/A |

| Total | 10 - 15 years [19] [18] | $2.6 billion (incl. cost of failures) [20] [19] | 1 in 5,000 compounds from preclinical stage [18] |

The primary drivers of the $2.6 billion cost include the high failure rate of drug candidates (approximately 90% fail in clinical trials) and the prolonged development cycle requiring consistent funding over a decade or more [18]. CADD emerges as a strategic solution to rationalize and expedite this process, offering a more efficient and cost-effective approach by leveraging computational power to predict compound behavior before costly synthetic and experimental work begins [13].

CADD Methodologies and Protocols

CADD encompasses two primary computational approaches: Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD). In practice, the two are combined into a streamlined workflow for lead identification and optimization.

Core CADD Workflow

The following diagram illustrates the logical relationship and workflow between the major CADD methodologies.

Structure-Based Drug Design (SBDD) Protocol

SBDD relies on the knowledge of the three-dimensional structure of the biological target, typically a protein [1].

Protocol 1: Target Modeling and Binding Site Characterization

- Objective: Generate an accurate 3D model of the target protein and identify the putative binding site.

- Software Tools:

- Homology/Comparative Modeling: SWISS-MODEL [21], MODELLER [21] (Used when an experimental structure is unavailable but a homologous template exists).

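- Deep Learning-Based and Threading-Based Structure Prediction: AlphaFold [21], ESMFold [1], I-TASSER [21] (Used when no clear template is available; AlphaFold and ESMFold are deep-learning predictors, whereas I-TASSER is built around iterative threading and fragment assembly).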

- Binding Site Prediction: CASTp [21], Active Site Prediction Tool [21] (Identifies cavities and pockets on the protein surface suitable for ligand binding).

- Methodology:

- Sequence Alignment: For homology modeling, align the target protein sequence with the template sequence(s).

- Model Building: Generate 3D coordinates for the target based on the template structure, modeling missing loops and side chains.

- Model Refinement: Use energy minimization and molecular dynamics (MD) simulations (e.g., with GROMACS [1] [21]) to relax steric clashes and improve stereochemistry.

- Model Validation: Assess the quality of the generated model using tools like PROCHECK or MolProbity, checking for Ramachandran plot outliers and poor rotamer geometry.

- Binding Site Identification: Run binding site prediction algorithms to define the coordinates and volume of the active site.

Protocol 2: Molecular Docking and Virtual Screening

- Objective: Predict the binding orientation (pose) and affinity of small molecules within the target's binding site and screen large compound libraries in silico.

- Software Tools: AutoDock Vina [1] [21], Glide [1] [21], GOLD [1], DOCK [1].

- Methodology:

- Preparation of Structures:

- Protein: Add hydrogen atoms, assign partial charges (e.g., using Kollman or AMBER force fields), and define protonation states of key residues (e.g., His, Asp, Glu).

- Ligand Library: Obtain structures from databases like ZINC [21] or PubChem [21]. Prepare ligands by generating 3D conformations, optimizing geometry, and assigning charges (e.g., using Gasteiger-Marsili).

- Grid Generation: Define a 3D grid box encompassing the binding site. The box size should be large enough to accommodate ligand flexibility.

- Docking Execution: Run the docking algorithm. For virtual screening, this is performed on thousands to millions of compounds.

- Pose Scoring and Ranking: The docking software scores each pose using a scoring function (knowledge-based, force-field based, or empirical). Rank compounds based on their predicted binding affinity (e.g., kcal/mol).

- Post-Docking Analysis: Visually inspect top-ranked complexes (using PyMOL, Maestro [21]) to analyze key interactions (H-bonds, hydrophobic contacts, pi-stacking). Cluster similar poses to identify consensus binding modes.
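The grid generation step can be sketched in a few lines. The example below is a minimal illustration, not the procedure of any particular docking package: it derives an axis-aligned box (center and edge lengths) from the coordinates of binding-site atoms and pads it so that flexible ligands have room to move. The function name `grid_box`, the 5 Å padding, and the coordinates are all assumptions made for illustration.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float, float]


def grid_box(site_atoms: Iterable[Point], padding: float = 5.0):
    """Return (center, size) of an axis-aligned box around binding-site atoms.

    `padding` (in angstroms) is added on every side of the box; the default
    of 5 A is an illustrative choice, not a recommended value.
    """
    coords = list(site_atoms)
    if not coords:
        raise ValueError("no binding-site atoms supplied")
    mins = [min(c[i] for c in coords) for i in range(3)]
    maxs = [max(c[i] for c in coords) for i in range(3)]
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


# Hypothetical coordinates (angstroms) of three binding-site atoms.
site = [(10.0, 4.0, -2.0), (14.0, 8.0, 2.0), (12.0, 6.0, 0.0)]
center, size = grid_box(site, padding=5.0)
print("center:", center)
print("size:", size)
```

Most docking programs accept a box center and box dimensions in some form, so values computed this way can usually be transferred to the tool's own configuration after checking its units and conventions.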

Ligand-Based Drug Design (LBDD) Protocol

LBDD is employed when the 3D structure of the target is unknown, and the design is based on known active molecules (ligands) [1].

Protocol 3: Pharmacophore Modeling and 3D Database Screening

- Objective: Create an abstract model of the essential molecular features responsible for biological activity and use it to identify new scaffolds.

- Software Tools: LigandScout [21], Phase [21], Pharmer [21].

- Methodology:

- Data Set Curation: Compile a set of known active compounds and, if possible, inactive decoys with diverse structures but similar properties.

- Conformational Analysis: Generate a representative set of low-energy conformers for each molecule in the training set.

- Pharmacophore Hypothesis Generation:

- Constructive Phase: Superimpose active molecules and identify common chemical features (e.g., hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings, charged groups).

- Model Building: Create a spatial model (hypothesis) defining the geometric and chemical constraints common to active compounds.

- Hypothesis Validation:

- Use statistical methods (e.g., Fisher's randomization test [21]) to assess the significance of the model.

- Test the model against a validation set of compounds with known activity to evaluate its predictive power.

- Virtual Screening: Use the validated pharmacophore model as a 3D query to search large chemical databases and retrieve compounds that match the feature arrangement.
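To make the idea of a 3D pharmacophore query concrete, the following sketch treats a hypothesis as a list of feature types plus target distances between them, and tests whether a conformer contains one feature of each type at matching distances. It is a simplified stand-in for what tools such as LigandScout or Phase do internally; the function `matches`, the 1 Å tolerance, and all coordinates are hypothetical.

```python
from itertools import product
from math import dist


def matches(query_features, query_dists, conformer, tol=1.0):
    """Return True if the conformer satisfies the pharmacophore query.

    query_features: list of feature types, e.g. ["donor", "acceptor"].
    query_dists: {(i, j): distance} between query features i and j (angstroms).
    conformer: list of (feature_type, (x, y, z)) found in one 3D conformer.
    A match needs one conformer feature per query feature such that every
    listed pairwise distance agrees with the query within `tol`.
    """
    # Candidate positions in the conformer for each query feature type.
    candidates = [[pos for ftype, pos in conformer if ftype == q]
                  for q in query_features]
    for combo in product(*candidates):
        if all(abs(dist(combo[i], combo[j]) - d) <= tol
               for (i, j), d in query_dists.items()):
            return True
    return False


# Hypothetical three-point hypothesis (a 3-4-5 triangle, in angstroms).
features = ["donor", "acceptor", "aromatic"]
distances = {(0, 1): 3.0, (1, 2): 4.0, (0, 2): 5.0}

hit = [("donor", (0.0, 0.0, 0.0)), ("acceptor", (3.0, 0.0, 0.0)),
       ("aromatic", (3.0, 4.0, 0.0))]
miss = [("donor", (0.0, 0.0, 0.0)), ("acceptor", (3.0, 0.0, 0.0)),
        ("aromatic", (10.0, 0.0, 0.0))]

print(matches(features, distances, hit))
print(matches(features, distances, miss))
```

A production screen would loop this test over every conformer of every database molecule and would also handle repeated feature types, excluded volumes, and directional constraints, none of which are modeled here.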

Protocol 4: Quantitative Structure-Activity Relationship (QSAR) Modeling

- Objective: Develop a quantitative model that correlates numerical descriptors of chemical structures with their biological activity.

- Software Tools: Various commercial and open-source QSAR packages (e.g., within Schrödinger suite, KNIME, Python/R libraries).

- Methodology:

- Data Set Preparation: Assay a congeneric series of compounds for a specific biological endpoint (e.g., IC50, Ki).

- Molecular Descriptor Calculation: Compute numerical descriptors for each compound, which can be 0D (molecular weight), 1D (substructure counts), 2D (topological indices), or 3D (molecular surface area, volume).

- Model Development:

- Split data into training and test sets.

- Use machine learning algorithms (e.g., Random Forest, Support Vector Machines, Partial Least Squares regression) on the training set to build a model linking descriptors to activity.

- Model Validation: Assess the model's internal consistency (cross-validation on the training set) and, more importantly, its predictive ability on the external test set. Key metrics include R² (the coefficient of determination) and Q² (its cross-validated counterpart).

- Model Application: Use the validated QSAR model to predict the activity of new, untested compounds and prioritize them for synthesis.
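The validation metrics named above can be illustrated with a deliberately small example. The sketch below fits a one-descriptor linear model by ordinary least squares, then computes R² on the fitted data and a leave-one-out Q². It assumes a single descriptor and uses the training-set mean in both denominators, which is one common convention; the data are invented, and a real QSAR study would use many descriptors and a library such as scikit-learn.

```python
def fit_line(x, y):
    """Ordinary least squares for y = a * x + b with a single descriptor."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    a = sxy / sxx
    return a, my - a * mx


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    my = sum(y_true) / len(y_true)
    ss_res = sum((yt - yp) ** 2 for yt, yp in zip(y_true, y_pred))
    ss_tot = sum((yt - my) ** 2 for yt in y_true)
    return 1 - ss_res / ss_tot


def q_squared_loo(x, y):
    """Leave-one-out Q2: each compound is predicted by a model fitted without it."""
    preds = []
    for i in range(len(x)):
        a, b = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        preds.append(a * x[i] + b)
    return r_squared(y, preds)


# Hypothetical training set: one descriptor (e.g. logP) versus pIC50.
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
activity = [5.1, 5.9, 7.2, 7.8, 9.1, 9.9]

slope, intercept = fit_line(descriptor, activity)
r2 = r_squared(activity, [slope * d + intercept for d in descriptor])
q2 = q_squared_loo(descriptor, activity)
print(f"R2 = {r2:.3f}, Q2 (LOO) = {q2:.3f}")
```

For a least-squares model Q² cannot exceed the training R², so a large gap between the two is a quick warning sign of overfitting before the external test set is even consulted.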

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents, Databases, and Software for CADD

| Item Name / Category | Function / Application | Specific Examples |
|---|---|---|
| Commercial Software Suites | Integrated platforms for molecular modeling, simulation, and data analysis. | Schrödinger Suite (Maestro, Glide) [21], BIOVIA Discovery Studio [21] |
| Open-Source Molecular Dynamics | Simulate the time-dependent behavior of biomolecules in physiological conditions. | GROMACS [1] [21], AMBER [21], OpenMM [1] |
| Docking & Virtual Screening Tools | Predict ligand binding pose and affinity; screen compound libraries in silico. | AutoDock Vina [1] [21], DOCK [1], SwissDock [1] |
| Public Compound Databases | Sources of chemical structures for virtual screening and lead discovery. | ZINC [21] (for purchasable compounds), PubChem [21] (bioactivity data) |
| Protein Structure Databases | Sources of experimental and predicted protein structures for SBDD. | Protein Data Bank (PDB), AlphaFold Protein Structure Database [1] |
| Specialized Hardware | Accelerate computationally intensive calculations like MD and AI model training. | High-Performance Computing (HPC) Clusters, Graphics Processing Units (GPUs) [22] |
| Machine Learning Frameworks | Develop and train custom predictive models for QSAR, de novo design, etc. | TensorFlow, PyTorch (often integrated into broader CADD platforms) [13] |

Application Note: Quantitative Market Landscape of Computer-Aided Drug Design (CADD)

The global Computer-Aided Drug Design (CADD) market is experiencing transformative growth, propelled by the integration of advanced computational technologies such as Artificial Intelligence (AI) and Machine Learning (ML). CADD utilizes computational methods to discover, design, and optimize drug candidates, significantly accelerating the drug discovery pipeline and reducing associated costs [23]. This application note provides a detailed quantitative analysis of the key players driving innovation and the distinct regional landscapes shaping the global CADD market, with a focus on North America's dominance and the rapid emergence of the Asia-Pacific region.

Key Global Players and Competitive Landscape

The CADD market features a dynamic ecosystem of established technology firms, specialized software providers, and agile startups. Their contributions are fundamental to the methodologies described in subsequent experimental protocols.

Table 1: Key Players and Technological Contributions in the CADD Market

| Company/Organization | Primary Role/Contribution | Key Technologies/Services | Recent Strategic Developments (2024-2025) |
|---|---|---|---|
| Schrödinger, Inc. [23] | Software Provider & Service Provider | Physics-based computational platforms, Molecular modeling | Named as a key player in the dominant North American market. |
| BIOVIA (Dassault Systèmes) [23] [24] | Software Provider | Scientific software for molecular modeling, simulation, and data management | Named among the key players in a dominant market. |
| Absci Corporation [25] | AI-Driven Drug Discovery | Generative AI for de novo protein and drug design | Collaborated with AMD to deploy AI accelerators for drug discovery workloads. |
| NVIDIA [26] | Technology Enabler | Advanced GPUs, AI platforms (Clara) for biomedical research | Partnered with IQVIA to boost clinical research with AI agents. |
| Google/Google Cloud [26] | Technology Enabler | Cloud AI tools for biomedical image analysis and data processing | Expanded collaboration with Recursion to leverage cloud technologies for drug discovery. |
| Insilico Medicine [25] | AI-Driven Drug Discovery | Generative AI platform for target identification and molecule design | Its AI platform identified a drug target and created a drug for fibrosis. |
| Chai Discovery [25] | Biotech Startup | AI-powered platform for novel antibody design | Secured $70M to evolve its Chai-2 platform for designing new antibodies. |
| Latent Labs [25] | AI-Driven Discovery | AI foundation models for programmable biology and protein design | Secured $50M in funding to establish generative AI models for developing new proteins. |
| Rowan [22] | CADD Platform Provider | Integrated platform for benchmarking, validation, and workflow management | Aims to reduce the "invisible work" in CADD, such as software integration and model validation. |

Regional Market Analysis: Quantitative Dynamics

Regional dominance in the CADD market is influenced by factors including technological infrastructure, R&D investment, government initiatives, and the local presence of pharmaceutical and biotech industries.

Table 2: Regional Analysis of the CADD Market (2024-2034 Projections)

| Region | Market Share (2024) | Projected CAGR (2025-2034) | Key Growth Drivers | Noteworthy Regional Initiatives |
|---|---|---|---|---|
| North America | ~45% [25] [23] | Not reported | Presence of key players, state-of-the-art R&D infrastructure, high healthcare technology investments, focus on personalized medicine [25] [23] [26]. | US FDA issued guidelines on AI for regulatory decision-making [25]. |
| Asia-Pacific (APAC) | Not the largest share (figure not reported) | Fastest growing [25] [23] | Rapid industrialization, government-driven innovation programs, expanding pharmaceutical sector, rising disease burden, growing investments in R&D [25] [23] [26]. | China's "AI + Medicine" plan (2025-2027); Japan's MHLW funding for AI-enabled drug discovery [25]. |
| Europe | Substantial share [27] | Not reported | Stringent quality standards, sustainability goals, increasing R&D initiatives [27]. | Not specified. |
| Latin America, Middle East & Africa | Gradual progression [27] | Gradual progression [27] | Improving economic conditions, rising urbanization, growing awareness of advanced solutions [27]. | Not specified. |

The CADD landscape is characterized by robust growth in North America, led by technological innovation and a strong biopharmaceutical ecosystem, while the Asia-Pacific region presents the highest growth potential due to strategic governmental support and rapid market expansion. The synergy between key players advancing AI/ML technologies and supportive regional policies is defining the future of efficient and effective drug discovery.

Protocol: Computational Methods for Structure-Based Drug Design

Scope

This protocol outlines a standard workflow for Structure-Based Drug Design (SBDD), the dominant segment of the CADD market, which accounted for approximately 55% of the market in 2024 [25] [23]. SBDD relies on the 3D structural information of a biological target to identify and optimize potential drug molecules [25].

Principle

SBDD utilizes the atomic-level structure of a target protein, often obtained from X-ray crystallography, cryo-EM, or NMR, to guide the discovery of ligands that bind with high affinity and specificity. This approach allows for the rational design of novel therapeutics and was notably applied in the development of protease inhibitors such as nirmatrelvir, the SARS-CoV-2 main protease inhibitor in Paxlovid [25].

Experimental Procedures

Target Preparation and Selection

- Objective: To obtain a clean, biophysically relevant 3D structure of the target protein for computational studies.

- Methods:

- Source Structure: Obtain the target structure from the Protein Data Bank (PDB) or through homology modeling.

- Structure Refinement: Remove water molecules, co-crystallized ligands, and ions not involved in the binding site. Add missing hydrogen atoms and assign correct protonation states for residues (e.g., His, Asp, Glu) using software like BIOVIA Discovery Studio [23] or Schrödinger's Protein Preparation Wizard.

- Binding Site Definition: Define the active site or allosteric pocket of interest based on known catalytic residues or the location of a co-crystallized native ligand.
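As a rough illustration of the structure refinement step, the sketch below filters PDB-format text so that protein `ATOM` records are kept while waters and other hetero groups are dropped unless explicitly whitelisted (for example, a catalytic metal ion). It relies only on the fixed-column layout of PDB records, where the residue name occupies columns 18-20. The helpers `clean_pdb` and `pdb_line` and the sample records are hypothetical; dedicated preparation tools also handle alternate locations, missing atoms, and protonation, which this sketch does not.

```python
def clean_pdb(lines, keep_hetero=()):
    """Drop waters and unwanted hetero groups from PDB-format lines.

    ATOM records (and all non-coordinate records) pass through unchanged.
    HETATM records are kept only if their residue name, read from
    columns 18-20, appears in `keep_hetero`.
    """
    kept = []
    for line in lines:
        if line[:6].strip() == "HETATM":
            resname = line[17:20].strip()
            if resname not in keep_hetero:
                continue
        kept.append(line)
    return kept


def pdb_line(record, serial, atom, resname, chain, resseq):
    """Build the leading fixed-width fields of a PDB coordinate record (demo only)."""
    return f"{record:<6}{serial:>5} {atom:<4} {resname:>3} {chain}{resseq:>4}"


# Hypothetical records: one protein atom, one water, one zinc ion.
sample = [
    pdb_line("ATOM", 1, "N", "HIS", "A", 41),
    pdb_line("HETATM", 2, "O", "HOH", "A", 201),
    pdb_line("HETATM", 3, "ZN", "ZN", "A", 301),
]

cleaned = clean_pdb(sample, keep_hetero=("ZN",))
for line in cleaned:
    print(line)
```

Whether a given water or ion should be retained is a scientific judgment about the binding site, so the whitelist is best treated as an explicit, reviewed input rather than a default.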

Molecular Docking for Virtual Screening

- Objective: To computationally screen large libraries of compounds and predict their binding pose and affinity within the target site.

- Methods:

- Library Preparation: Prepare a database of small molecule structures (e.g., from ZINC database) by energy minimization and generating possible tautomers and stereoisomers.

- Docking Execution: Perform docking simulations using software such as AutoDock Vina [25]. This involves sampling possible conformations (poses) of the ligand within the defined binding site and scoring them based on a scoring function.

- Pose Analysis: Visually inspect the top-ranked poses for key interactions like hydrogen bonds, hydrophobic contacts, and pi-stacking. Prioritize compounds with strong complementary interactions for further analysis.

Binding Affinity Refinement using Molecular Dynamics (MD)

- Objective: To assess the stability of the protein-ligand complex and obtain more accurate binding free energy estimates.

- Methods:

- System Setup: Solvate the protein-ligand complex in a water box (e.g., TIP3P model) and add ions to neutralize the system.

- Simulation Run: Perform MD simulations using packages like GROMACS or AMBER on high-performance computing (HPC) systems or cloud platforms (e.g., Google Cloud AI, NVIDIA Clara) [26]. A typical production run might cover 100 nanoseconds of simulated time.

- Trajectory Analysis: Analyze the root-mean-square deviation (RMSD) of the protein and ligand to check for complex stability. Calculate binding free energies using methods like Molecular Mechanics/Generalized Born Surface Area (MM/GBSA).
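The two trajectory analysis quantities can be written down compactly. The sketch below computes an RMSD between a reference and a frame that are assumed to be already superimposed and identically ordered, and an end-state binding estimate of the MM/GBSA form, taken here as the mean complex energy minus the mean receptor and ligand energies. The function names, coordinates, and energies are invented for illustration; real analyses would read trajectories with a package's own tools and include fitting and, where used, entropy terms.

```python
from math import sqrt
from statistics import mean


def rmsd(ref, frame):
    """RMSD (same units as the input) between two pre-aligned coordinate sets."""
    if len(ref) != len(frame):
        raise ValueError("coordinate sets must have the same number of atoms")
    sq = sum((a - b) ** 2 for p, q in zip(ref, frame) for a, b in zip(p, q))
    return sqrt(sq / len(ref))


def end_state_dg(g_complex, g_receptor, g_ligand):
    """End-state estimate: <G_complex> - <G_receptor> - <G_ligand>.

    Each argument is a list of per-frame free energies (kcal/mol) of the
    kind an MM/GBSA post-processing step would produce.
    """
    return mean(g_complex) - mean(g_receptor) - mean(g_ligand)


# Hypothetical two-atom ligand displaced by 1 angstrom along z.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
snapshot = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
ligand_rmsd = rmsd(reference, snapshot)

# Hypothetical per-frame energies (kcal/mol).
dg_bind = end_state_dg([-1050.0, -1052.0], [-900.0, -902.0], [-120.0, -120.0])
print(f"ligand RMSD = {ligand_rmsd:.2f} A, dG_bind = {dg_bind:.1f} kcal/mol")
```

A ligand RMSD that stays low and flat over the production run suggests a stable pose, while the end-state energy is generally more useful for ranking related compounds than as an absolute affinity.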

Experimental Validation and Iterative Design

- Objective: To synthesize and test top-ranked computational hits, using experimental data to refine the models.

- Methods:

- Compound Acquisition/Synthesis: Acquire or synthesize the computationally identified hit compounds.

- In vitro Assays: Test compounds for binding affinity (e.g., Surface Plasmon Resonance) and functional activity in biochemical or cell-based assays.

- Cycle of Learning: Use the experimental results to validate the computational predictions. If a co-crystal structure is obtained with a hit compound, use it to refine the docking protocol and initiate further rounds of design and optimization in an iterative cycle.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for SBDD

| Item/Tool | Function/Description | Example Providers/Platforms |
|---|---|---|
| Protein Structure Database | Repository of experimentally determined 3D protein structures for target selection and preparation. | Protein Data Bank (PDB) |
| Compound Library | Large collections of small molecules for virtual screening to identify initial hits. | ZINC Database |
| Molecular Docking Software | Predicts the preferred orientation and binding affinity of a small molecule to a protein target. | AutoDock Vina [25], Schrödinger Suite [23] |
| Molecular Dynamics Software | Simulates the physical movements of atoms and molecules over time to study complex stability and dynamics. | GROMACS, AMBER |
| AI/ML Drug Design Platform | Uses generative models and predictive algorithms to design novel molecules and optimize properties. | Insilico Medicine Platform [25], Absci Corp. AI [25] |
| Integrated CADD Platform | Streamlines workflows by combining benchmarking, validation, and computation in a unified environment. | Rowan [22] |
| High-Performance Computing (HPC) | Provides the computational power required for demanding tasks like MD simulations and AI model training. | NVIDIA GPUs [26], Google Cloud AI [26] |

The field of computer-aided drug design (CADD) is undergoing a profound transformation, moving beyond traditional structure-based modeling to embrace a new era defined by artificial intelligence (AI), cloud-native infrastructure, and novel therapeutic modalities. This paradigm shift is accelerating the entire drug discovery value chain, from initial target identification to clinical trials, enabling researchers to address biological targets once considered "undruggable" [28]. The integration of these three powerful trends is compressing discovery timelines that traditionally spanned years into months, while simultaneously improving the precision and success rates of new therapeutic candidates [29] [30]. This document provides detailed application notes and experimental protocols for leveraging these converging technologies within modern CADD research frameworks.

Quantitative Landscape: Market Data and Performance Metrics

The adoption of advanced technologies in drug discovery is reflected in robust market growth and distinct performance advantages. The tables below summarize key quantitative data for strategic planning.

Table 1: Computer-Aided Drug Design (CADD) Market Segmentation (2024) [23]

| Segmentation Category | Dominant Segment (Market Share) | Highest Growth Segment (CAGR) |
|---|---|---|
| Type | Structure-Based Drug Design (SBDD) (~55%) | Ligand-Based Drug Design (LBDD) |
| Technology | Molecular Docking (~40%) | AI/ML-Based Drug Design |
| Application | Cancer Research (~35%) | Infectious Diseases |
| End-User | Pharmaceutical & Biotech Companies (~60%) | Academic & Research Institutes |
| Deployment Mode | On-Premise (~65%) | Cloud-Based |

Table 2: Performance Metrics of Leading AI-Driven Drug Discovery Platforms [29]

| Company / Platform | Key AI Approach | Reported Efficiency Gain | Example Clinical Candidate |
|---|---|---|---|
| Exscientia | Generative AI, Centaur Chemist | ~70% faster design cycles; 10x fewer compounds synthesized | CDK7 inhibitor (GTAEXS-617), LSD1 inhibitor (EXS-74539) |
| Insilico Medicine | Generative AI | Target to Phase I in 18 months for IPF drug | Idiopathic Pulmonary Fibrosis drug (Phase I) |
| Recursion | Phenotypic Screening, AI | Integrated platform with Exscientia post-merger | Multiple oncology programs |
| BenevolentAI | Knowledge Graphs, Target ID | AI-derived targets advancing to clinic | Multiple undisclosed programs |
| Schrödinger | Physics-Based Simulations, FEP+ | Platform for rapid in-silico candidate optimization | Multiple partnered and internal programs |

Application Note: Implementing AI-Driven Discovery Workflows

Core Principles and Workflow

AI is revolutionizing CADD by automating complex design tasks and extracting insights from large-scale multimodal data. Leading platforms demonstrate that AI can compress the early-stage discovery and preclinical timeline from a typical 5 years to under 2 years in some cases [29]. The core applications include generative chemistry for de novo molecular design, predictive models for ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties, and target identification through biological network analysis.

Figure 1: AI-Driven Drug Discovery Cycle. This workflow illustrates the iterative "design-make-test-analyze" loop accelerated by AI, where experimental feedback continuously refines the computational models.

Experimental Protocol: AI-Guided Lead Optimization

Protocol Title: Iterative Lead Optimization Using a Closed-Loop AI Design Platform

Objective: To optimize a hit compound into a preclinical candidate with desired potency, selectivity, and ADMET properties using an integrated AI-driven workflow.

Materials:

- AI Software Platform: Access to a generative AI chemistry platform (e.g., Exscientia's Centaur Chemist, Insilico Medicine's Chemistry42) [29].

- Initial Compound: Confirmed hit molecule from HTS or virtual screening.

- Target Product Profile (TPP): A defined set of criteria for the desired candidate (e.g., IC50 < 100 nM, >30x selectivity, CLhep < 10 mL/min/kg).

- Automation Studio: Robotic synthesis and high-throughput screening infrastructure [29].

Methodology:

- Data Curation and Model Priming (Week 1):

- Curate all existing SAR data for the hit series and related chemotypes.

- Input the TPP into the AI platform as optimization constraints.

- Train or fine-tune platform-specific models on the proprietary dataset.

- Generative Design Cycle (Week 2):

- The AI platform proposes a focused library of novel compounds (typically 50-200) predicted to meet the TPP.

- A medicinal chemist reviews and approves the proposed structures for synthesis.

- Automated Synthesis and Testing (Weeks 3-4):

- Approved compound designs are sent to an automated synthesis platform (e.g., Exscientia's AutomationStudio) [29].

- Synthesized compounds are purified and subjected to a predefined assay cascade (e.g., primary potency, cytotoxicity, microsomal stability).

- Data Integration and Model Retraining (Week 5):

- Experimental results are fed back into the AI platform.

- The predictive models are retrained on the new data, improving their accuracy for the next cycle.

- Iteration:

- Repeat the generative design, synthesis and testing, and model retraining steps (steps 2-4) until a compound meeting all TPP criteria is identified.

Key Performance Indicator: Success is measured by the number of design cycles and total compounds synthesized to reach the candidate. AI platforms have demonstrated the ability to achieve this with 10x fewer compounds than traditional medicinal chemistry [29].
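The stopping rule and the key performance indicator of this protocol can be expressed as a short check. The sketch below encodes the example TPP from the Materials list (IC50 < 100 nM, >30x selectivity, CLhep < 10 mL/min/kg) and walks through per-cycle assay results until a compound passes, reporting the cycle number and the cumulative number of compounds synthesized. The `Compound` class, the function names, and the assay values are hypothetical and do not represent any vendor platform's interface.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Compound:
    name: str
    ic50_nm: float           # primary potency (nM)
    selectivity_fold: float  # fold selectivity over the main off-target
    cl_hep: float            # hepatic clearance (mL/min/kg)


def meets_tpp(c: Compound) -> bool:
    """Example TPP thresholds: IC50 < 100 nM, >30x selectivity, CLhep < 10."""
    return c.ic50_nm < 100 and c.selectivity_fold > 30 and c.cl_hep < 10


def first_candidate(cycles: List[List[Compound]]) -> Optional[Tuple[int, int, Compound]]:
    """Return (cycle number, compounds synthesized so far, candidate) or None.

    Every compound in a batch counts as synthesized, even if the candidate
    is found before the end of that batch.
    """
    synthesized = 0
    for cycle_no, batch in enumerate(cycles, start=1):
        synthesized += len(batch)
        for compound in batch:
            if meets_tpp(compound):
                return cycle_no, synthesized, compound
    return None


# Hypothetical assay results from two design cycles.
cycles = [
    [Compound("cpd-1", 850.0, 5.0, 22.0), Compound("cpd-2", 240.0, 12.0, 15.0)],
    [Compound("cpd-3", 95.0, 18.0, 9.0), Compound("cpd-4", 40.0, 45.0, 7.5)],
]
result = first_candidate(cycles)
print(result)
```

Tracking both numbers per program makes it possible to compare AI-guided campaigns with historical medicinal chemistry efforts on the same terms.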

Application Note: Leveraging Cloud-Native CADD Infrastructures

Core Principles and Architecture

Cloud computing delivers scalable, collaborative, and cost-effective computational resources, overcoming the limitations of traditional on-premise HPC clusters. It democratizes access to state-of-the-art CADD tools for smaller biotechs and academic labs [31]. The cloud service models relevant to CADD are:

- IaaS (Infrastructure as a Service): Provides virtualized computing resources (e.g., AWS, Google Cloud) for running complex molecular dynamics simulations or virtual screening campaigns [32].

- PaaS (Platform as a Service): Offers a development environment for building custom drug discovery applications and workflows, such as specialized data analytics platforms [32].

- SaaS (Software as a Service): Delivers ready-to-use CADD applications via a web browser (e.g., Schrödinger's LiveDesign, BIOVIA Discovery Studio) [32].

Figure 2: Cloud Collaboration Architecture for CADD. This diagram shows how a centralized cloud platform enables seamless collaboration and data integration across different roles and locations.

Experimental Protocol: Large-Scale Virtual Screening on the Cloud

Protocol Title: Cloud-Based High-Throughput Virtual Screening of Billion-Compound Libraries

Objective: To rapidly screen an ultra-large chemical library against a protein target to identify novel hit compounds.

Materials:

- Cloud Provider Account: An account with a major cloud provider (e.g., AWS, Google Cloud, Microsoft Azure).

- Target Structure: A prepared 3D structure of the target protein (e.g., from PDB or homology model).

- Chemical Library: A commercially available or proprietary compound library in a suitable format (e.g., ZINC20, Enamine REAL).

- Docking Software: A licensed or open-source molecular docking software (e.g., AutoDock Vina, Glide, FRED) configured as a cloud-native solution.

Methodology:

- Infrastructure Setup (Day 1):

- Use a pre-configured cloud formation template (e.g., AWS CloudFormation) to launch a virtual HPC cluster with hundreds to thousands of CPU cores.

- Configure parallel file storage (e.g., AWS FSx for Lustre) for high-speed I/O during the screening.

- Data and Software Deployment (Day 1):

- Upload the target structure and chemical library to the cloud storage.

- Deploy the docking software across the compute cluster using a container orchestration service (e.g., Kubernetes).

- Job Execution and Orchestration (Days 2-5):

- Use a job scheduler (e.g., AWS Batch) to split the chemical library into chunks and distribute docking tasks across all compute nodes.

- Monitor job progress through a cloud-based dashboard.

- Post-Processing and Analysis (Day 6):

- Collate all results into a centralized cloud database.

- Use cloud-based data analytics tools (e.g., Jupyter Notebooks on Google Colab) to rank compounds by docking score and interaction patterns.

- Apply AI/ML models to further filter and prioritize top hits for purchase and testing.
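The job-splitting step and the sizing of the cluster can be sketched independently of any cloud provider. The example below splits a stream of compound identifiers into fixed-size chunks, each of which could become one docking task, and gives a back-of-envelope wall-time estimate. The function names are hypothetical, and the figure of one second per ligand per core is a placeholder assumption, not a benchmark of any docking program.

```python
from itertools import islice


def chunked(iterable, size):
    """Yield successive lists of at most `size` items without loading everything."""
    iterator = iter(iterable)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def wall_time_hours(n_compounds, seconds_per_ligand, n_cores):
    """Idealized wall time assuming perfect scaling and no I/O overhead."""
    return n_compounds * seconds_per_ligand / n_cores / 3600


# Ten hypothetical compound IDs split into tasks of four.
tasks = list(chunked(range(10), 4))
print(tasks)

# One billion compounds, an assumed 1 s per ligand per core, 10,000 cores.
hours = wall_time_hours(1_000_000_000, 1.0, 10_000)
print(f"estimated wall time: {hours:.1f} h")
```

Because the estimate ignores scheduling overhead, failed tasks, and storage throughput, it is best used as a lower bound when choosing the cluster size and budgeting spot instances.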

Key Considerations:

- Cost Management: Utilize spot/transient instances for significant cost savings and auto-scaling to shut down resources when not in use [31].

- Security: Ensure the cloud configuration complies with relevant data protection regulations (e.g., HIPAA, GDPR) through encryption and access controls [31] [32].

Application Note: Designing for Emerging Therapeutic Modalities

Emerging modalities represent a shift from traditional small molecules and antibodies to therapies that act on DNA, RNA, or through engineered cellular machinery. They now account for $197 billion, or 60%, of total projected pharma pipeline value [33]. Key modalities include:

- RNA Therapeutics: Including mRNA, siRNA, and antisense oligonucleotides (ASOs) that modulate gene expression [28] [34].

- Targeted Protein Degraders: Such as PROTACs (PROteolysis TArgeting Chimeras) that use the cell's ubiquitin-proteasome system to eliminate specific proteins [28] [34].

- Cell & Gene Therapies: Including CAR-T and CRISPR-based gene editing that offer potential cures for genetic diseases [33] [28].

Experimental Protocol: In-Silico Design of a PROTAC Molecule

Protocol Title: Computational Design and Optimization of a Bifunctional PROTAC Degrader

Objective: To design a novel PROTAC molecule that mediates the degradation of a protein of interest (POI) by recruiting an E3 ubiquitin ligase.

Materials:

- Structures: High-resolution crystal structures or high-quality AlphaFold2 models of the POI and the E3 ligase (e.g., Cereblon, VHL).

- Known Ligands: Structures of known small-molecule binders for the POI and the E3 ligase.

- Software: Molecular docking software (e.g., Glide, GOLD), molecular dynamics (MD) simulation package (e.g., GROMACS, Desmond), and a linker database.

Methodology:

- POI and E3 Ligase Ligand Analysis (Week 1):

- Identify solvent-exposed attachment vectors on the known POI and E3 ligase ligands where a linker can be connected without disrupting binding.

- Perform molecular docking to confirm the binding pose and identify optimal attachment points.

- Linker Screening and PROTAC Assembly (Week 2):

- Screen a database of flexible and rigid linkers of varying lengths.

- In silico connect the POI ligand and E3 ligase ligand with selected linkers to generate a library of putative PROTAC molecules.

- Ternary Complex Modeling and Assessment (Week 3):

- Model the full ternary complex (POI:PROTAC:E3 Ligase) using protein-protein docking guided by the PROTAC structure.

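- Run short MD simulations to assess the stability of the ternary complex and the proximity between the POI's surface lysine residues and the ubiquitin-loaded E2 enzyme recruited by the E3 ligase complex (Cereblon and VHL are substrate receptors of cullin-RING ligases, which have no catalytic cysteine of their own).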

- PROTAC Property Prediction (Week 4):

- Use AI/ML models or physicochemical calculations to predict the cellular permeability, solubility, and metabolic stability of the top-designed PROTACs, as their large size and complexity often pose challenges.
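The linker screening and assembly step is essentially a combinatorial enumeration followed by filtering. The sketch below builds every POI ligand, linker, and E3 ligand combination and keeps only those whose linker length falls inside a chosen window. All names and atom counts are placeholders, and the length window is an arbitrary assumption; a real workflow would assemble actual chemical structures with a cheminformatics toolkit and carry the survivors into ternary complex modeling.

```python
from itertools import product


def enumerate_designs(poi_ligands, linkers, e3_ligands, min_atoms, max_atoms):
    """Return (poi, linker, e3) tuples whose linker length is within the window.

    `linkers` maps a linker name to its length in atoms.
    """
    allowed = [name for name, n_atoms in linkers.items()
               if min_atoms <= n_atoms <= max_atoms]
    return list(product(poi_ligands, allowed, e3_ligands))


# Hypothetical building blocks; the linker lengths are placeholders.
poi_ligands = ["poi_binder_1"]
e3_ligands = ["crbn_binder", "vhl_binder"]
linkers = {"flex_4": 4, "flex_8": 8, "rigid_10": 10, "flex_16": 16}

designs = enumerate_designs(poi_ligands, linkers, e3_ligands, min_atoms=6, max_atoms=12)
for design in designs:
    print(design)
```

Keeping the enumeration as plain tuples makes it straightforward to attach later scores, such as ternary complex stability or predicted permeability, to each design.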

Key Consideration: The choice of E3 ligase is critical. While most PROTACs use a limited set of E3 ligases (Cereblon, VHL), research is actively expanding this toolbox to include others like DCAF16 and KEAP1 to access new targets and tissues [34].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Platforms for Advanced CADD

| Reagent / Solution | Function / Application | Examples / Vendor |
|---|---|---|
| Generative AI Chemistry Platforms | De novo design of novel small molecules optimized for multiple parameters. | Exscientia's Centaur Chemist, Insilico Medicine's Chemistry42 [29] |
| Digital Twin Software | Creates AI-generated virtual control patients in clinical trials to reduce placebo group size and accelerate timelines. | Unlearn.ai [30] [34] |
| Cloud-Based CADD Platforms | Provides scalable computing, SaaS tools, and collaborative workspaces for distributed teams. | Schrödinger LiveDesign, BIOVIA Discovery Studio on Cloud [31] [23] |
| PROTAC-Specific Design Suites | In-silico tools for modeling bifunctional degraders and ternary complexes. | Specific modules in Schrödinger Suite, Cresset Flare [34] |
| CRISPR Design Tools | AI-powered design of guide RNAs for gene editing therapies with minimized off-target effects. | Tools from Broad Institute, MIT [28] [34] |
| LNPs & Delivery Design Software | Computational modeling of lipid nanoparticles and other delivery vehicles for RNA/protein-based therapeutics. | Various academic and commercial molecular dynamics packages |

Methodologies in Action: A Deep Dive into Modern CADD Tools and Applications

The Synergy of Machine Learning and Physics-Based Simulations

In the field of Computer-Aided Drug Design (CADD), a new paradigm is emerging from the integration of machine learning (ML) and physics-based simulations. This synergy aims to overcome the individual limitations of each approach: ML models can struggle with generalization and physical realism, while purely physics-based methods are often computationally prohibitive for large-scale exploration [35] [36]. The combination creates a powerful framework that leverages the predictive speed of ML with the rigorous physical foundation of simulation methods, ultimately accelerating drug discovery [37] [38].

This integration is particularly valuable for addressing complex challenges in modern drug discovery, including the design of novel molecular scaffolds, targeted protein degradation, and the development of biologics [37]. By harnessing both data-driven insights and fundamental physical principles, researchers can generate drug candidates with higher predicted affinity, improved synthetic accessibility, and greater novelty [39]. This document provides detailed application notes and experimental protocols for implementing these synergistic approaches, complete with quantitative data comparisons and visual workflows.

Quantitative Performance Comparison

Published benchmarks report that integrating ML with physics-based methods improves results on tasks ranging from molecular generation to binding affinity prediction (Table 1). The choice of the underlying ML classifier also depends strongly on data conditions such as sample size and feature count (Table 2).

Table 1: Performance Metrics of Physics-Informed AI in Drug Discovery

| Method/System | Key Innovation | Test System | Performance Results | Comparison to State-of-the-Art |

|---|---|---|---|---|

| NucleusDiff [36] | Manifold-constrained diffusion model accounting for atomic distances | CrossDocked2020 (100 complexes) | Significant improvement in binding affinity prediction; Reduced atomic collisions to nearly zero | Outperformed state-of-the-art models in binding affinity |

| NucleusDiff [36] | Same as above | COVID-19 3CL protease | Increased prediction accuracy | Reduced atomic collisions by up to two-thirds compared to other leading models |

| VAE-AL Workflow [39] | Variational autoencoder with nested active learning cycles | CDK2 | 9 molecules synthesized, 8 with in vitro activity, 1 with nanomolar potency | Successfully generated novel scaffolds distinct from known templates |

| VAE-AL Workflow [39] | Same as above | KRAS | 4 molecules with potential activity identified via in silico methods | Explored sparsely populated chemical space effectively |

Table 2: Classification Performance of Machine Learning Methods Under Varying Data Conditions [40]

| Method | Best For | Worst For | Key Performance Characteristics |

|---|---|---|---|

| Linear Discriminant Analysis (LDA) | Smaller number of correlated features (not exceeding ~half sample size) | Large feature sets | Most stable (precise) error estimates under optimal conditions |

| Support Vector Machines (SVM) with RBF kernel | Larger feature sets (sample size ≥20) | Small sample sizes | Clear outperformance over LDA, RF, and kNN as feature set grows |

| k-Nearest Neighbour (kNN) | Growing number of features | High variability data with small effect sizes | Performance improves with feature growth, outperforms LDA and RF unless data variability is high |

| Random Forests (RF) | Highly variable data with small effect sizes | Many common scenarios | Outperforms only kNN in specific high-variability, small-effect-size cases |

Experimental Protocols

Protocol 1: VAE with Active Learning for Molecular Generation

This protocol implements a generative AI workflow combining a variational autoencoder (VAE) with nested active learning cycles to generate novel, synthetically accessible molecules with high predicted binding affinity [39].

Materials and Reagents:

- Chemical Databases: Target-specific training sets (e.g., known CDK2 or KRAS inhibitors)

- Software: VAE architecture with encoder/decoder networks, molecular docking software (AutoDock Vina, Glide, DOCK, or SwissDock [1]), chemoinformatics toolkits for similarity assessment

- Computational Resources: High-performance computing cluster with GPU acceleration

Procedure:

Data Preparation and Initial Training

- Represent training molecules as SMILES strings, then tokenize and convert to one-hot encoding vectors

- Pre-train VAE on a general molecular dataset to learn viable chemical space

- Fine-tune VAE on target-specific training set to increase target engagement
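
The SMILES tokenization and one-hot encoding step can be sketched as follows. This is a minimal illustration, not the encoder used in [39]: the regular expression, the `<pad>` token, the `max_len` value, and the three example molecules are all assumptions chosen for readability.

```python
import re

# Illustrative tokenizer (an assumption, not the one from [39]):
# bracket atoms first, then two-letter halogens, two-digit ring closures,
# and finally any remaining single character.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")

def tokenize(smiles):
    """Split a SMILES string into chemically meaningful tokens."""
    return SMILES_TOKEN.findall(smiles)

def build_vocab(smiles_list, pad="<pad>"):
    """Map every token seen in the data set to an integer index (pad = 0)."""
    tokens = sorted({t for s in smiles_list for t in tokenize(s)})
    return {tok: i for i, tok in enumerate([pad] + tokens)}

def one_hot(smiles, vocab, max_len, pad="<pad>"):
    """Return a max_len x len(vocab) matrix of 0/1 rows, right-padded."""
    toks = tokenize(smiles)
    if len(toks) > max_len:
        raise ValueError("SMILES longer than max_len")
    toks = toks + [pad] * (max_len - len(toks))
    matrix = []
    for tok in toks:
        row = [0] * len(vocab)
        row[vocab[tok]] = 1
        matrix.append(row)
    return matrix

# Hypothetical three-molecule training set, for demonstration only.
training = ["CCO", "c1ccccc1Cl", "C[C@H](N)C(=O)O"]
vocab = build_vocab(training)
encoded = one_hot("CCO", vocab, max_len=12)
```

Treating `Cl`, `Br`, and bracket atoms such as `[C@H]` as single tokens keeps the vocabulary chemically meaningful; a character-level split would separate `C` from `l` and blur chlorine with carbon.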

Nested Active Learning Cycles

Inner Cycle (Chemical Optimization):

- Sample the VAE to generate new molecules

- Evaluate generated molecules for drug-likeness, synthetic accessibility, and similarity to training set using chemoinformatic oracles

- Add molecules meeting threshold criteria to a temporal-specific set

- Use this set to fine-tune the VAE in subsequent training iterations

Outer Cycle (Affinity Optimization):

- After a set number of inner cycles, subject the molecules accumulated in the temporal-specific set to docking simulations

- Transfer molecules meeting docking score thresholds to permanent-specific set

- Use permanent-specific set to fine-tune VAE for subsequent cycles

Repeat the inner and outer cycles for a predetermined number of iterations (typically 3-5 outer cycles, each containing multiple inner cycles)
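
The control flow of the nested cycles can be sketched as below. This is a skeleton under stated assumptions rather than the implementation from [39]: the four callables (`sample`, `fine_tune`, `passes_chem_filters`, `docking_score`) are hypothetical placeholders, the default loop counts and docking cutoff are arbitrary, and resetting the temporal-specific set at the start of each outer cycle is a design assumption. The demonstration uses integers as stand-ins for molecules so that the loop can run without any chemistry software.

```python
import random

def nested_active_learning(sample, fine_tune, passes_chem_filters, docking_score,
                           n_outer=3, n_inner=4, batch=50, dock_cutoff=-8.0):
    """Control-flow skeleton of a nested active learning loop.

    All four callables are hypothetical placeholders supplied by the caller:
      sample(n)              -> list of n generated molecules
      fine_tune(molecules)   -> updates the generator
      passes_chem_filters(m) -> bool (drug-likeness, SA, similarity oracles)
      docking_score(m)       -> float, lower is better
    """
    permanent = set()
    for _ in range(n_outer):
        temporal = set()  # assumption: reset at each outer cycle
        # Inner cycles: cheap chemoinformatic filtering and fine-tuning.
        for _ in range(n_inner):
            candidates = sample(batch)
            temporal.update(m for m in candidates if passes_chem_filters(m))
            if temporal:
                fine_tune(temporal)
        # Outer cycle: expensive docking on the accumulated molecules.
        hits = {m for m in temporal if docking_score(m) <= dock_cutoff}
        permanent.update(hits)
        if permanent:
            fine_tune(permanent)
    return permanent

# Toy stand-ins: "molecules" are integers, the chemistry filter keeps even
# numbers, and the fake docking score improves with the integer's size.
rng = random.Random(0)
fine_tune_log = []
selected = nested_active_learning(
    sample=lambda n: [rng.randint(0, 999) for _ in range(n)],
    fine_tune=lambda mols: fine_tune_log.append(len(mols)),
    passes_chem_filters=lambda m: m % 2 == 0,
    docking_score=lambda m: -m / 100.0,
)
```

Placing the inexpensive oracles in the inner loop and docking in the outer loop means the costly physics-based evaluation runs only on molecules that have already passed the chemical filters.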

Candidate Selection and Validation

- Apply stringent filtration to molecules in permanent-specific set

- Perform intensive molecular modeling simulations (e.g., PELE, absolute binding free energy calculations)

- Select top candidates for synthesis and experimental validation

Troubleshooting:

- If generated molecules lack diversity, adjust similarity thresholds in inner AL cycle

- If synthetic accessibility is poor, increase weighting of SA oracle in evaluation step

- If binding affinity plateaus, incorporate more sophisticated physics-based scoring in outer cycle

Protocol 2: Physics-Informed Diffusion Model for Binding Affinity Prediction

This protocol implements NucleusDiff, a diffusion model that incorporates physical constraints to reduce unphysical atomic collisions while maintaining high binding affinity prediction accuracy [36].

Materials and Reagents:

- Training Data: CrossDocked2020 dataset (~100,000 protein-ligand binding complexes)

- Software: NucleusDiff implementation, standard molecular visualization tools

- Computational Resources: GPU-enabled workstations or compute cluster

Procedure:

Model Configuration

- Implement diffusion model architecture with manifold constraints

- Establish anchoring points on molecular manifold to monitor atomic distances

- Configure repulsive force parameters to prevent atomic collisions

Training Protocol

- Train model on CrossDocked2020 dataset using standard training epochs

- Validate on held-out test set of 100 complexes

- Monitor both binding affinity accuracy and atomic collision metrics

Inference and Prediction

- Input novel protein-ligand complexes for binding affinity prediction

- Generate predictions with confidence intervals based on model uncertainty

- Visualize results to verify physical plausibility of predicted binding modes

Validation:

- Test model on external datasets not included in training (e.g., COVID-19 3CL protease)

- Compare performance against state-of-the-art models without physics constraints

- Quantify reduction in atomic collisions while maintaining binding affinity accuracy
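
One simple way to quantify atomic collisions in a predicted complex is to count protein-ligand atom pairs that sit closer than a scaled sum of their van der Waals radii. The sketch below is an assumption-laden illustration, not the collision metric defined in [36]: the 0.75 scale factor, the `(element, (x, y, z))` atom representation, and the four-atom example are all hypothetical.

```python
import math
from itertools import product

# Approximate van der Waals radii in angstroms (Bondi values).
VDW = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}

def count_collisions(protein_atoms, ligand_atoms, scale=0.75):
    """Count protein-ligand atom pairs closer than scale * (r_i + r_j).

    Each atom is (element, (x, y, z)) with coordinates in angstroms.
    The scale factor is an assumed tolerance so that normal close
    contacts such as hydrogen bonds are not flagged as clashes.
    """
    clashes = 0
    for (el_p, xyz_p), (el_l, xyz_l) in product(protein_atoms, ligand_atoms):
        cutoff = scale * (VDW[el_p] + VDW[el_l])
        if math.dist(xyz_p, xyz_l) < cutoff:
            clashes += 1
    return clashes

# Hypothetical coordinates: only the first C...C pair (1.5 angstroms apart)
# falls below its cutoff of 0.75 * 3.40 = 2.55 angstroms.
protein = [("C", (0.0, 0.0, 0.0)), ("O", (4.0, 0.0, 0.0))]
ligand = [("C", (1.5, 0.0, 0.0)), ("N", (4.0, 3.5, 0.0))]
n_clashes = count_collisions(protein, ligand)
```

The all-pairs loop is quadratic in atom count, which is acceptable for a single binding pocket; a spatial grid or neighbor list would be the usual choice for whole-protein comparisons.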

Workflow Visualization

[Figure: VAE-AL generative molecular design workflow]

[Figure: Physics-informed AI model architecture]

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Category | Specific Tool/Resource | Function/Application | Key Features |

|---|---|---|---|

| Molecular Docking | AutoDock Vina [1] | Predicting binding affinities and orientations of ligands | Fast, accurate, easy to use |

| Molecular Docking | GOLD [1] | Predicting binding affinities, especially for flexible ligands | Accurate for flexible ligands, requires license |

| Molecular Docking | Glide [1] | Predicting binding affinities and orientations | Accurate, integrated with Schrödinger suite |

| Structure Prediction | AlphaFold2 [1] [35] | Protein structure prediction from sequence | AI-driven high-accuracy prediction |

| Structure Prediction | ESMFold [1] | Protein structure prediction | Alternative to AlphaFold2 |

| Structure Prediction | SWISS-MODEL [1] [35] | Homology modeling | Automated server, comparative modeling |

| Molecular Dynamics | GROMACS [1] | Simulating behavior of proteins over time | Classical mechanics simulations |

| Molecular Dynamics | OpenMM [1] | Molecular dynamics simulations | Customizable, GPU acceleration |

| Generative Models | VAE-AL Framework [39] | Generating novel molecules with desired properties | Combines variational autoencoder with active learning |

| Generative Models | NucleusDiff [36] | Structure-based drug design with physical constraints | Manifold-constrained diffusion model |

| Specialized Databases | CrossDocked2020 [36] | Training dataset for structure-based drug design | ~100,000 protein-ligand binding complexes |

Computer-Aided Drug Design (CADD) has become an indispensable pillar in modern pharmaceutical research, dramatically accelerating the discovery and optimization of therapeutic agents [41]. Among its various methodologies, structure-based drug design (SBDD) leverages three-dimensional structural information of biological targets to guide the identification and development of small molecule drugs [42]. This article details three core SBDD techniques—molecular docking, molecular dynamics (MD) simulations, and free-energy perturbation (FEP)—that form a synergistic pipeline for predicting and optimizing protein-ligand interactions. Molecular docking provides initial binding mode and affinity predictions, MD simulations introduce critical dynamics and flexibility, and FEP calculations deliver highly accurate, quantitative binding affinity predictions [43] [42] [44]. The convergence of increased computational power, sophisticated algorithms, and integration of machine learning (ML) is continually enhancing the accuracy, efficiency, and scope of these methods, solidifying their role in reducing the time and cost associated with bringing new drugs to market [45] [42] [41].

Molecular Docking: Predicting Binding Poses and Affinities

Molecular docking is a foundational SBDD technique used to predict the optimal binding conformation (pose) of a small molecule (ligand) within a target's binding site and to estimate its binding affinity [43].

Key Methodologies and Algorithms

Docking algorithms comprise two main components: a conformational search algorithm and a scoring function [43].

Conformational Search Methods: These algorithms explore the vast conformational space of the ligand within the protein's binding site.

- Systematic Methods: These exhaustively explore conformations by systematically rotating rotatable bonds. Examples include Systematic Search (used in Glide and FRED) and Incremental Construction (used in FlexX and DOCK) [43].

- Stochastic Methods: These use random sampling and probabilistic rules to explore conformational space. Prominent examples include Monte Carlo (MC) methods and Genetic Algorithms (GA), the latter being used in AutoDock and GOLD [43].

Scoring Functions: These are mathematical functions used to rank docking poses by predicting the binding affinity, typically aiming to reproduce binding thermodynamics (ΔG = ΔH - TΔS) [43]. The development of more general and accurate scoring functions remains an active area of research, with machine learning-based functions showing significant promise [46].
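
To make the thermodynamic target of a scoring function concrete, the sketch below converts between a dissociation constant and a standard binding free energy using ΔG° = RT ln(Kd), with a 1 M standard state assumed. The function names and the 298.15 K default are illustrative choices and do not correspond to any particular docking program.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def delta_g_from_kd(kd_molar, temperature=298.15):
    """Standard binding free energy (kcal/mol) from a dissociation constant (M)."""
    return R_KCAL * temperature * math.log(kd_molar)

def kd_from_delta_g(delta_g, temperature=298.15):
    """Dissociation constant (M) from a standard binding free energy (kcal/mol)."""
    return math.exp(delta_g / (R_KCAL * temperature))

def entropy_term(delta_g, delta_h):
    """Return -T*dS (kcal/mol), rearranged from dG = dH - T*dS."""
    return delta_g - delta_h

dg_nanomolar = delta_g_from_kd(1e-9)   # roughly -12.3 kcal/mol
dg_micromolar = delta_g_from_kd(1e-6)  # roughly -8.2 kcal/mol
per_decade = delta_g_from_kd(1e-7) - delta_g_from_kd(1e-6)
```

At room temperature each tenfold change in Kd corresponds to about 1.4 kcal/mol, so a scoring error of 1 to 2 kcal/mol can already shift a predicted affinity by an order of magnitude.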

Application Notes and Protocol

A robust molecular docking protocol involves several critical steps to ensure biologically relevant and reproducible results [43].

- Target Preparation: Obtain a high-quality 3D structure of the target protein from experimental sources (PDB) or predictive models (AlphaFold2). Prepare the structure by adding missing atoms, assigning protonation states, and optimizing hydrogen-bonding networks [43] [47].

- Ligand Preparation: Generate 3D structures of small molecules from their chemical representations. Assign correct bond orders, ionization states, and generate possible tautomers and stereoisomers [43].

- Docking Grid Generation: Define the spatial coordinates and dimensions of the binding site on the target protein to focus the conformational search [43].

- Pose Prediction and Scoring: Execute the docking run using a selected search algorithm and scoring function to generate a set of predicted ligand poses, each with an associated score [43].

- Post-Docking Analysis: Critically evaluate the top-ranked poses. Prioritize those with favorable interaction patterns (e.g., hydrogen bonds, hydrophobic contacts) and consider using MD simulations for further pose refinement and validation [43] [44].
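
The grid generation step often starts from the coordinates of a known ligand in the binding site. The sketch below computes a box center and edge lengths from such coordinates; the `grid_box` helper, the 5 Å padding, and the example coordinates are assumptions for illustration. The resulting values are of the kind supplied to the `center_x`/`center_y`/`center_z` and `size_x`/`size_y`/`size_z` options of AutoDock Vina.

```python
def grid_box(ligand_coords, padding=5.0):
    """Return (center, size) of an axis-aligned box around the coordinates.

    ligand_coords: iterable of (x, y, z) tuples in angstroms.
    padding: extra margin in angstroms added on every side (assumed value).
    """
    xs, ys, zs = zip(*ligand_coords)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size

# Hypothetical reference-ligand heavy-atom coordinates.
coords = [(10.0, 2.0, -1.0), (14.0, 6.0, 3.0), (12.0, 4.0, 1.0)]
center, size = grid_box(coords)
```

A box that is too tight can exclude valid poses of larger ligands, while an oversized box dilutes the conformational search, so the padding is worth tuning per target.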

Table 1: Common Conformational Search Algorithms in Molecular Docking

| Method | Description | Representative Software |

|---|---|---|

| Systematic Search | Systematically rotates all rotatable bonds by fixed intervals. | Glide, FRED [43] |

| Incremental Construction | Fragments the ligand, docks rigid fragments, and rebuilds linkers. | FlexX, DOCK [43] |

| Monte Carlo (MC) | Makes random changes to conformations, accepting/rejecting based on energy/probability. | Glide [43] |

| Genetic Algorithm (GA) | Evolves populations of ligand conformations based on a fitness score (e.g., docking score). | AutoDock, GOLD [43] |
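
As an illustration of the stochastic search idea, the sketch below runs a Metropolis Monte Carlo walk over a single torsion angle. It is a toy model under stated assumptions: the threefold cosine potential, the step size, and the kT value of 0.593 kcal/mol (about 298 K) are illustrative, and real docking programs sample ligand position, orientation, and many torsions at once against a full scoring function.

```python
import math
import random

def torsion_energy(phi_deg):
    """Toy threefold torsional potential in kcal/mol (illustrative only)."""
    return 1.5 * (1 + math.cos(math.radians(3 * phi_deg)))

def metropolis_search(energy, start, steps=5000, max_step=30.0, kT=0.593, seed=0):
    """Metropolis Monte Carlo over one angle; returns (best_angle, best_energy)."""
    rng = random.Random(seed)
    current, e_cur = start, energy(start)
    best, e_best = current, e_cur
    for _ in range(steps):
        # Propose a random perturbation, wrapped into [0, 360).
        trial = (current + rng.uniform(-max_step, max_step)) % 360.0
        e_trial = energy(trial)
        delta = e_trial - e_cur
        # Always accept downhill moves; accept uphill moves with
        # Boltzmann probability exp(-delta / kT).
        if delta <= 0 or rng.random() < math.exp(-delta / kT):
            current, e_cur = trial, e_trial
            if e_cur < e_best:
                best, e_best = current, e_cur
    return best, e_best

# Start at 0 degrees, which is a maximum of the toy potential.
best_angle, best_energy = metropolis_search(torsion_energy, start=0.0)
```

Occasionally accepting uphill moves is what lets the walk cross energy barriers between wells instead of stalling in the first local minimum it finds.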

Molecular Dynamics: Incorporating Flexibility and Dynamics

Molecular Dynamics simulations address a key limitation of static docking by modeling the time-dependent behavior of proteins and ligands, treating atoms as particles that move according to Newton's laws of motion [43] [42].
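
The core of an MD engine is a numerical integrator for Newton's equations. The sketch below applies the velocity Verlet scheme, a standard integrator in this field, to a single particle on a harmonic spring. The one-dimensional system, unit mass and force constant, and time step are assumptions for illustration; production packages such as GROMACS or OpenMM apply the same idea to many atoms with full force fields.

```python
import math

def velocity_verlet(x, v, force, mass, dt, n_steps):
    """Integrate Newton's equations for one particle in 1D.

    Returns the trajectory as a list of (position, velocity) tuples.
    """
    traj = [(x, v)]
    f = force(x)
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / mass   # half-step velocity update
        x = x + dt * v_half                # full-step position update
        f = force(x)                       # force at the new position
        v = v_half + 0.5 * dt * f / mass   # complete the velocity update
        traj.append((x, v))
    return traj

# Harmonic oscillator with k = m = 1 in arbitrary reduced units.
k, m, dt, n_steps = 1.0, 1.0, 0.01, 1000
traj = velocity_verlet(x=1.0, v=0.0, force=lambda x: -k * x,
                       mass=m, dt=dt, n_steps=n_steps)

# Total energy should stay nearly constant for a well-chosen time step.
energies = [0.5 * m * v * v + 0.5 * k * x * x for x, v in traj]
energy_spread = max(energies) - min(energies)

# Analytic solution for these initial conditions is x(t) = cos(t).
x_exact = math.cos(dt * n_steps)
```

Monitoring the spread of total energy is a quick sanity check on the time step: if it grows steadily, the step is too large for the stiffest motion in the system.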