CADD vs HTS: A Data-Driven Analysis of Success Rates in Modern Drug Discovery


Abstract

This article provides a comprehensive analysis of the comparative success rates, strengths, and limitations of Computer-Aided Drug Design (CADD) and High-Throughput Screening (HTS) in contemporary drug discovery pipelines. Targeted at researchers and drug development professionals, it explores the foundational principles of each approach, details their practical methodologies and applications, addresses common challenges and optimization strategies, and presents a comparative analysis of their validation metrics and hit-to-lead success rates. Synthesizing current data, the article concludes with strategic recommendations for integrating these complementary technologies to maximize efficiency and success in biomedical research.

Defining the Battlefield: Core Principles of CADD and HTS in Drug Discovery

High-Throughput Screening (HTS) is an automated, wet-lab experimental platform used in drug discovery to rapidly assay the biological or biochemical activity of large libraries of chemical compounds (typically 10,000 to >100,000) against a defined molecular target or cellular phenotype. It is a primary engine for hit identification in modern pharmaceutical research. This overview contextualizes HTS within the ongoing research discourse comparing the success rates of Computer-Aided Drug Design (CADD) and empirical, experimental screening approaches.

Core Principles and Workflow

HTS is characterized by miniaturized assays (often in 384- or 1536-well plates), robotic automation for liquid handling and plate manipulation, and dedicated data processing software. The goal is to identify "hits"—compounds that show a desired level of activity in the primary assay.

Diagram Title: HTS Hit Identification Workflow

Key Experimental Protocols

Protocol 1: Cell-Based Viability HTS for Anti-Cancer Agents

Objective: Identify compounds that reduce cell viability in a cancer cell line.

- Cell Seeding: Seed 1,500 HeLa cells/well in 384-well plates in 40 µL growth medium. Incubate for 24h.

- Compound Addition: Using a pintool or acoustic dispenser, transfer 100 nL of compound (from 10 mM DMSO stock) to each well. Final compound concentration is ~25 µM. Include DMSO-only control wells.

- Incubation: Incubate plates for 72h at 37°C, 5% CO₂.

- Viability Readout: Add 10 µL/well of CellTiter-Glo luminescent reagent. Shake for 2 min, incubate for 10 min, then read luminescence on a plate reader.

- Data Analysis: Normalize data: % Viability = (RLU_compound - RLU_blank) / (RLU_DMSO_control - RLU_blank) * 100. Hits are defined as compounds reducing viability to <50%.
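
The dilution and normalization arithmetic in Protocol 1 can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the analysis software used in any particular screen: the function names (`final_concentration_um`, `percent_viability`, `is_hit`) and the raw luminescence values are hypothetical.

```python
def final_concentration_um(stock_mm, transfer_nl, well_ul):
    """Final compound concentration (µM) after adding a DMSO stock to a filled well."""
    transfer_ul = transfer_nl / 1000.0
    total_ul = well_ul + transfer_ul
    return stock_mm * 1000.0 * transfer_ul / total_ul


def percent_viability(rlu_compound, rlu_blank, rlu_dmso):
    """% Viability = (RLU_compound - RLU_blank) / (RLU_DMSO_control - RLU_blank) * 100."""
    return (rlu_compound - rlu_blank) / (rlu_dmso - rlu_blank) * 100.0


def is_hit(viability_pct, threshold_pct=50.0):
    """A compound is a hit when it reduces viability below the threshold."""
    return viability_pct < threshold_pct


# 100 nL of a 10 mM stock into 40 µL of medium, as in the protocol.
conc = final_concentration_um(stock_mm=10, transfer_nl=100, well_ul=40)
print(round(conc, 1))  # 24.9

# Hypothetical raw luminescence readings for one compound well.
viability = percent_viability(rlu_compound=21_000, rlu_blank=1_000, rlu_dmso=81_000)
print(viability, is_hit(viability))  # 25.0 True
```

The first result matches the protocol's stated final concentration of about 25 µM. The same transfer corresponds to roughly 0.25% DMSO in the well.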

Protocol 2: Biochemical Enzyme Inhibition HTS

Objective: Identify inhibitors of a kinase (e.g., EGFR).

- Reaction Mix: Prepare a 3X substrate/ATP mix in kinase assay buffer containing ATP (at 3X the Km concentration), substrate peptide, and MgCl₂.

- Compound Addition: Pre-dispense 5 µL of compound/DMSO into a 1536-well plate.

- Enzyme Addition: Add 5 µL of diluted EGFR kinase in buffer to all wells.

- Reaction Start: Add 5 µL of the 3X substrate/ATP mix to initiate the reaction (15 µL final volume). Final ATP concentration is 10 µM.

- Incubation: Incubate at room temperature for 60 min.

- Detection: Add 5 µL of detection reagent (e.g., ADP-Glo) to stop reaction and quantify ADP production. Incubate for 40 min, read luminescence.

- Data Analysis: % Inhibition = (1 - (RLU_compound - RLU_blank) / (RLU_DMSO_control - RLU_blank)) * 100. Hit threshold: >70% inhibition.

Comparative Performance Data

The following table summarizes recent meta-analyses comparing HTS and CADD lead generation success rates across various target classes.

Table 1: HTS vs. CADD Hit Identification Success Metrics (2019-2024 Meta-Analysis)

| Metric | High-Throughput Screening (HTS) | Computer-Aided Drug Design (CADD) | Notes & Data Source |

|---|---|---|---|

| Typical Library Size | 50,000 - 500,000 compounds | 1 - 10 million compounds (virtual) | CADD screens larger in silico libraries. |

| Experimental Confirmation Rate | 0.1% - 0.5% (Active Hits/Total Screened) | 5% - 20% (of compounds selected for testing) | CADD yields higher confirmation rates from a focused set. [Ref: Nat Rev Drug Discov, 2023] |

| Avg. Cost per Screen | $50,000 - $500,000 (reagents, automation) | $5,000 - $50,000 (computation, personnel) | HTS cost is scale and assay dependent. |

| Avg. Timeline (Hit ID) | 3 - 9 months | 1 - 3 months | Includes assay development for HTS; target prep for CADD. |

| Lead Series Success Rate* | ~25% (from confirmed hits) | ~30% (from confirmed hits) | Similar progression post-hit confirmation. [Ref: J Med Chem, 2022] |

| Strength | Empirical, phenotype-capable, serendipity | Cost-effective, structure-based, enormous library | |

| Weakness | Cost, false positives/negatives, resource-heavy | Dependent on target structure/ligands, empirical validation needed | |

*Defined as percentage of confirmed hits that yield a tractable SAR series with desired properties.

Table 2: HTS Success Rates by Target Class (Representative Data)

| Target Class | Typical HTS Hit Rate | Common Assay Format | Key Challenge |

|---|---|---|---|

| GPCRs (Agonist) | 0.01% - 0.1% | Cell-based, cAMP or Ca²⁺ flux | High false positive rate from promiscuous activators. |

| Kinases (Inhibitor) | 0.2% - 1.0% | Biochemical, ATP-competitive | Achieving selectivity within kinome. |

| Ion Channels | 0.05% - 0.3% | FLIPR or electrophysiology | Low throughput of confirmatory patch-clamp. |

| Protein-Protein Interaction | <0.01% - 0.1% | Biochemical (FRET, AlphaScreen) | Often lacks tractable "hot spots" for small molecules. |

| Phenotypic (Oncology) | 0.1% - 0.5% | Cell viability/cytotoxicity | Deconvoluting mechanism of action. |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in HTS | Example Vendor/Product |

|---|---|---|

| Assay-Ready Plates | Pre-dispensed, dried-down compound libraries for immediate addition of assay reagents. | Labcyte Echo Qualified Plates |

| Cell Viability Assay Kits | Luminescent or fluorescent measurement of cell health and proliferation. | Promega CellTiter-Glo |

| Kinase Assay Kits | Homogeneous, "add-and-read" formats for measuring kinase activity/inhibition. | Promega ADP-Glo, PerkinElmer AlphaScreen |

| Fluorescent Dyes (Ca²⁺, cAMP) | For real-time, live-cell detection of GPCR or ion channel activity. | Molecular Devices FLIPR Dyes |

| HTS-Optimized Antibodies | For high-sensitivity, low-volume immunoassays (ELISA, HTRF). | Cisbio HTRF Assays |

| DMSO-Tolerant Probes | Detection reagents stable in final DMSO concentrations up to 2-5%. | Thermo Fisher Scientific LanthaScreen |

| Robotic Liquid Handlers | For automated, nanoliter to microliter compound and reagent transfer. | Beckman Coulter Biomek, Tecan D300e |

| High-Sensitivity Plate Reader | Detects luminescence, fluorescence, or absorbance in microtiter plates. | BMG Labtech PHERAstar, PerkinElmer EnVision |

Diagram Title: Post-HTS Hit Qualification Cascade

High-Throughput Screening remains a cornerstone of empirical drug discovery, providing tangible chemical starting points against biologically relevant targets. While CADD offers strategic advantages in pre-filtering and focused library design, HTS delivers unbiased experimental validation in physiologically contextual systems. The most productive modern drug discovery pipelines synergistically integrate both approaches, using CADD to enrich screening libraries and triage HTS outputs, thereby leveraging the respective strengths of in silico prediction and wet-lab experimentation to improve overall success rates.

This guide compares the performance of traditional structure-based CADD methods with modern AI-driven approaches, framed within the ongoing research thesis comparing CADD and high-throughput screening (HTS) success rates in early drug discovery.

Comparison of Key CADD Methodologies

The following table summarizes the performance characteristics of major CADD paradigms based on recent literature and benchmark studies.

Table 1: Performance Comparison of CADD Methodologies

| Methodology | Typical Virtual Screen Enrichment (EF1%) | Approximate Success Rate (Hit-to-Lead) | Key Strength | Primary Limitation |

|---|---|---|---|---|

| Traditional Structure-Based (Docking) | 5-20x | 5-15% | High interpretability; physically realistic binding poses. | Dependent on high-quality target structures; limited chemical exploration. |

| Ligand-Based (Pharmacophore/QSAR) | 10-30x | 10-20% | Effective when target structure is unknown. | Requires known active compounds; limited to analogous chemical space. |

| AI-Driven (Deep Learning) | 25-100x+ | 15-30%+ | Unparalleled exploration of vast chemical space; learns complex patterns. | High computational cost for training; "black box" interpretability challenges. |

| Hybrid (Physics + AI) | 30-50x | 20-35%+ | Balances accuracy of physical models with speed/scope of AI. | Complex implementation; requires integration expertise. |

Experimental Protocol & Data

A pivotal 2023 benchmark study directly compared the performance of a classical docking workflow (Glide SP) versus a graph neural network (GNN) model (EquiBind) in a virtual screening campaign against the SARS-CoV-2 main protease (Mpro).

Experimental Protocol:

- Target Preparation: The crystal structure of Mpro (PDB: 6LU7) was prepared using Schrödinger's Protein Preparation Wizard (protonation, optimization, removal of co-crystallized solvent).

- Library Curation: A diverse library of 1 million lead-like molecules from ZINC20 was spiked with 300 known active Mpro inhibitors.

- Classical Docking (Glide): The library was screened using standard-precision (SP) Glide docking. The top 30,000 ranked compounds by docking score were retained.

- AI-Driven Screening (GNN): The same library was processed by a pre-trained EquiBind model, which predicts binding poses and affinities. The top 30,000 ranked compounds by predicted binding energy were retained.

- Enrichment Analysis: The ranked lists from both methods were analyzed to calculate the enrichment factor (EF), measuring the method's ability to "enrich" the true active compounds at the top of the list compared to random selection.

Table 2: Benchmark Results for Mpro Virtual Screen (EF at 1% of database)

| Method | Enrichment Factor (EF1%) | Number of Known Actives in Top 1% | Avg. Runtime per 1000 Compounds |

|---|---|---|---|

| Classical Docking (Glide SP) | 18.7 | 56 | ~45 min (CPU cluster) |

| AI-Driven Model (EquiBind) | 52.3 | 157 | ~2 min (GPU) |

| Random Selection | 1.0 | 3 | N/A |
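
The enrichment factors in Table 2 follow from the library composition described in the protocol (1 million decoys spiked with 300 known actives). The sketch below recomputes them, assuming the usual definition of EF as the fraction of actives in the selected slice divided by the fraction of actives in the whole library. The function name `enrichment_factor` is hypothetical.

```python
def enrichment_factor(actives_in_top, n_top, actives_total, n_total):
    """EF = (active fraction in the top slice) / (active fraction in the full library)."""
    return (actives_in_top / n_top) / (actives_total / n_total)


n_total = 1_000_000 + 300   # decoys plus spiked actives
actives_total = 300
n_top = n_total // 100      # top 1% of the ranked list: 10,003 compounds

results = {}
for method, found in [("Glide SP", 56), ("EquiBind", 157), ("Random", 3)]:
    results[method] = enrichment_factor(found, n_top, actives_total, n_total)
    print(f"{method}: EF1% = {results[method]:.1f}")
```

Run as written, this prints 18.7, 52.3, and 1.0, which agrees with the tabulated values.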

Visualization of CADD Workflow Evolution

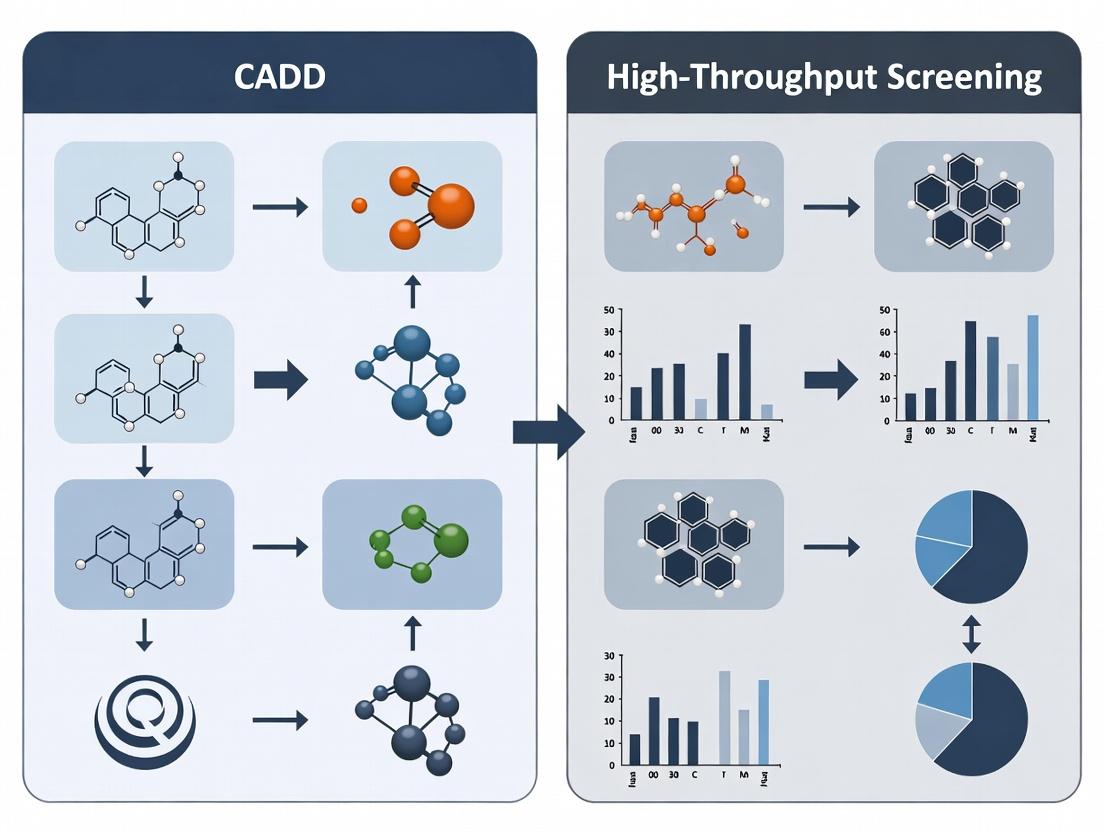

Diagram Title: CADD and HTS Pathways to Hit Discovery

The Scientist's Toolkit: Key Reagent Solutions for CADD Validation

Table 3: Essential Materials for Experimental Validation of CADD Hits

| Research Reagent / Material | Function in CADD Validation |

|---|---|

| Recombinant Target Protein (≥95% purity) | Essential for biophysical assays (SPR, DSF) to confirm direct binding and measure binding kinetics/thermodynamics of computational hits. |

| FRET/Fluorogenic Peptide Substrate | Used in enzymatic inhibition assays to determine the functional IC50 of predicted inhibitors from CADD screens. |

| Crystallization Screening Kits | For obtaining co-crystal structures of hit compounds with the target, providing ultimate validation of the predicted binding pose. |

| Cell Line with Target Expression | Necessary for cellular efficacy and toxicity assays to confirm biological activity beyond in vitro binding. |

| Fragment Library (for FBDD) | A curated collection of small molecular fragments used in fragment-based drug design, often screened via NMR or X-ray to seed structure-based design. |

A Comparative Guide: Success Rates and Contributions in Modern Drug Discovery

The ongoing debate between Computer-Aided Drug Design (CADD) and High-Throughput Screening (HTS) is central to modern pharmaceutical R&D strategy. This guide objectively compares their performance, contributions, and typical integration points, contextualized within broader research on their respective success rates.

Table 1: Comparative Performance Metrics of HTS and CADD

| Metric | High-Throughput Screening (HTS) | Computer-Aided Drug Design (CADD) |

|---|---|---|

| Primary Approach | Experimental screening of vast chemical libraries. | Theoretical, structure- or ligand-based computational design. |

| Typical Library Size | 10⁵ – 10⁶ compounds. | 10⁷ – 10¹² (virtual compounds). |

| Hit Rate (Industry Avg.) | 0.01% - 0.3% | 5% - 35% (for virtual screening) |

| Key Output | Confirmed bioactive "hits" with experimental validation. | Predicted ligand structures with calculated binding affinities. |

| Time per Cycle | Weeks to months (assay development, screening, validation). | Days to weeks (docking, scoring, ranking). |

| Major Cost Driver | Reagent costs, compound library maintenance, robotics. | Computational infrastructure, software licenses, expertise. |

| Best Suited For | Targets with limited structural data; phenotypic screening. | Targets with known 3D structure (e.g., X-ray, Cryo-EM). |

Experimental Protocol: Integrated HTS/CADD Validation Workflow

A standard protocol for validating and optimizing hits from either source involves:

- Primary Assay (HTS): A biochemical or cell-based assay in 384- or 1536-well plate format. A positive control (known inhibitor/agonist) and negative control (DMSO vehicle) are included on each plate. Compounds are tested at a single concentration (e.g., 10 µM). Z'-factor > 0.5 is required for robustness.

- Virtual Screen (CADD): For the same target, a virtual library (e.g., ZINC, Enamine REAL) is prepared. Compounds are docked into the target's active site (e.g., using Glide, AutoDock Vina). The top 1,000 ranked compounds by docking score are selected for purchase and experimental testing.

- Hit Confirmation: Compounds identified from both streams are re-tested in dose-response (e.g., 10-point curve) in the primary assay to determine IC₅₀/EC₅₀.

- Counter-Screen/Selectivity Assay: Confirmed hits are tested against related targets or for general assay interference (e.g., redox activity, aggregation).

- Structural Validation: Top hits undergo co-crystallization with the target protein or are analyzed via NMR to confirm the binding mode predicted by CADD.

Visualization of Integrated Discovery Workflows

Integrated HTS and CADD Discovery Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in HTS/CADD Context |

|---|---|

| FRET/HTRF Assay Kits | Enable homogeneous, high-throughput biochemical assays for targets like kinases or proteases. |

| DMSO-Stable Compound Libraries | Curated collections of 100,000+ small molecules solubilized in DMSO for HTS campaigns. |

| Recombinant Purified Protein | High-purity, active target protein for biochemical assays and structural studies. |

| Crystallography Screen Kits | Pre-formulated matrices of conditions for co-crystallizing targets with hit compounds. |

| Virtual Compound Databases | Commercially available, synthetically accessible compound libraries for virtual screening (e.g., Enamine REAL, Mcule). |

| Molecular Dynamics Software | Software (e.g., GROMACS, Desmond) to simulate protein-ligand dynamics and binding stability. |

| Cloud Computing Credits | Access to scalable computational resources (AWS, Azure) for large-scale virtual screens. |

Within the ongoing debate on Computer-Aided Drug Design (CADD) versus High-Throughput Screening (HTS) paradigms, objective metrics are essential for comparing success. This guide defines and compares the core metrics—Hit Rate, Lead Rate, and Clinical Candidate Success—across both approaches, underpinned by contemporary research data.

Metric Definitions and Comparative Frameworks

- Hit Rate: The percentage of tested compounds showing desired activity above a predefined threshold in a primary assay.

- Lead Rate: The percentage of hits that successfully advance to become lead compounds, demonstrating acceptable potency, selectivity, and preliminary ADMET properties.

- Clinical Candidate Success Rate: The probability that a nominated lead compound will progress through preclinical development to enter human clinical trials.

Table 1: Comparative Performance Metrics: CADD vs. HTS (Representative Data)

| Metric | Typical CADD Range | Typical HTS Range | Key Differentiating Factors |

|---|---|---|---|

| Hit Rate | 5% - 20% | 0.001% - 0.1% | Pre-enrichment of compound libraries via virtual screening. |

| Lead Rate (from Hit) | 5% - 15% | 1% - 5% | Improved starting point quality and structural insight in CADD. |

| Time to Lead | 6 - 12 months | 12 - 24 months | Streamlined iterative design cycles in CADD. |

| Clinical Candidate Success | ~10% (from Lead) | ~10% (from Lead) | Convergence in later stages; dependent on complex factors beyond discovery method. |

Experimental Protocols for Metric Determination

1. Protocol for Determining HTS Hit Rate:

- Objective: Identify primary actives from a large, diverse chemical library.

- Methodology:

- Library Preparation: Format a >100,000 compound library into 384- or 1536-well plates using liquid handlers.

- Assay Execution: Employ a target-specific biochemical or cell-based assay (e.g., fluorescence polarization, viability assay). Include controls (positive, negative, vehicle) on each plate.

- Dispensing: Use non-contact acoustic dispensers for compound transfer to minimize volume error.

- Detection: Read plates using a multimode plate reader.

- Analysis: Normalize data using plate controls. Apply a statistical threshold (e.g., >3 standard deviations from mean of negative controls, or >50% inhibition/activation) to define a "hit."

- Calculation: Hit Rate (%) = (Number of confirmed hits / Total compounds screened) * 100.

2. Protocol for Determining CADD-Enabled Hit Rate:

- Objective: Evaluate compounds pre-selected via computational methods.

- Methodology:

- Virtual Library Preparation: Curate a library of 1-10 million commercially available or synthetically accessible compounds.

- Virtual Screening: Perform computational docking against a protein structure or similarity search against a known active pharmacophore.

- Prioritization: Rank compounds by predicted score, then apply filters (drug-likeness, synthetic feasibility).

- Experimental Testing: Purchase or synthesize the top 500-2000 predicted compounds. Test them using the same primary assay as the HTS protocol.

- Calculation: Hit Rate (%) = (Number of active compounds / Number of compounds tested in vitro) * 100.
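
Both protocols reduce to the same two steps: apply an activity threshold, then divide hits by the number of compounds actually tested. The sketch below illustrates this with the 3-standard-deviation rule. All numbers, compound names, and function names are hypothetical, and the use of the sample standard deviation is an assumption, since the protocol does not specify which estimator to use.

```python
from statistics import mean, stdev


def three_sigma_cutoff(negative_controls):
    """Activity threshold: mean of the negative controls plus 3 standard deviations."""
    return mean(negative_controls) + 3 * stdev(negative_controls)


def hit_rate_percent(n_hits, n_tested):
    """Hit Rate (%) = (number of hits / number of compounds tested) * 100."""
    return n_hits / n_tested * 100.0


# Hypothetical % inhibition values for DMSO-only wells on one plate.
negative_controls = [1.0, -2.0, 0.5, 2.5, -1.5, -0.5]
cutoff = three_sigma_cutoff(negative_controls)

# Hypothetical % inhibition values for four screened compounds.
compounds = {"cpd-1": 3.0, "cpd-2": 62.0, "cpd-3": 8.0, "cpd-4": 55.0}
hits = [name for name, inhibition in compounds.items() if inhibition > cutoff]
print(hits)

# Hypothetical campaign totals: the denominator differs between the two approaches.
hts_rate = hit_rate_percent(n_hits=150, n_tested=100_000)   # whole library screened
cadd_rate = hit_rate_percent(n_hits=60, n_tested=500)       # only purchased compounds
print(f"HTS: {hts_rate:.2f}%  CADD: {cadd_rate:.1f}%")
```

The difference in denominators is the main reason the two hit rates are not directly comparable: the CADD figure counts only the compounds selected for testing, not the virtual library that was ranked.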

Visualization: Discovery Workflow Comparison

Title: Comparison of HTS and CADD workflows to hit identification.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for Screening & Validation

| Item | Function | Example Vendor/Product Type |

|---|---|---|

| Recombinant Target Protein | Essential for biochemical assay development; purity critical for low false-positive rates. | Sino Biological, R&D Systems. |

| Cell Line with Target Expression | Necessary for cell-based phenotypic or target-engagement assays. | ATCC, Revvity. |

| Validated Assay Kit | Robust, off-the-shelf assay systems (e.g., kinase, protease, cytotoxicity) to accelerate screening. | Promega (CellTiter-Glo), Thermo Fisher (LanthaScreen). |

| High-Quality Chemical Library | Diverse, purity-verified compound collections are the foundation of HTS. | Enamine REAL, Selleckchem. |

| Virtual Screening Software | Platform for docking, pharmacophore modeling, and library enumeration. | Schrödinger (Glide), Cresset (Flare), OpenEye (ROCS). |

| ADMET Prediction Tools | In silico assessment of compound properties (permeability, metabolism) to triage leads. | Simulations Plus (ADMET Predictor), BIOVIA. |

| qPCR/RNA-seq Reagents | Validate target modulation and mechanism of action in treated cells. | Qiagen, Illumina. |

While CADD demonstrates superior early efficiency in hit identification and lead generation rates, both discovery strategies converge on a challenging path to clinical candidacy. The choice between them, or their integrated application, should be guided by target class, available structural information, and project-specific resources, measured rigorously against these standardized metrics.

Within ongoing research into Computer-Aided Drug Design (CADD) versus High-Throughput Screening (HTS) success rates, the dominant narrative is shifting from a competitive to a complementary paradigm. This guide objectively compares the performance of these two strategic approaches in early drug discovery.

Comparative Performance Analysis

The following table summarizes key metrics from recent meta-analyses and benchmarking studies.

Table 1: Comparative Performance of HTS and CADD in Lead Identification

| Metric | High-Throughput Screening (HTS) | Computer-Aided Drug Design (CADD) | Key Study / Year |

|---|---|---|---|

| Avg. Initial Hit Rate | 0.1% - 0.3% | 5% - 15% (Virtual Screening) | Gorgulla et al., Nature, 2020 |

| Avg. Cost per Compound Screened | $0.50 - $1.50 | $0.01 - $0.10 (Virtual) | Analysis of CRO pricing, 2023 |

| Typical Library Size | 100,000 - 2,000,000+ physical compounds | 1,000,000 - 10,000,000+ in silico compounds | Industry benchmark |

| Time to Initial Hits | 2 - 6 months (assay dev., screening) | 1 - 4 weeks (library prep., docking) | Walters et al., Nat Rev Drug Discov, 2023 |

| Lead-to-Candidate Attrition Rate | ~75% (from HTS-derived leads) | ~70% (from CADD-derived leads) | Paul et al., Drug Discov Today, 2021 |

| Success vs. "Undruggable" Targets | Low (requires functional assay) | Higher (structure-based design enabled) | Review of KRAS, PPI inhibitors |

Table 2: Success Rates in Different Target Classes (2018-2023)

| Target Class | HTS Success Rate (Lead Identified) | CADD Success Rate (Lead Identified) | Complementary Hybrid Rate |

|---|---|---|---|

| GPCRs | 62% | 58% | 78% |

| Kinases | 71% | 65% | 82% |

| Nuclear Receptors | 55% | 68% | 75% |

| Protein-Protein Interfaces | 22% | 41% | 53% |

| Ion Channels | 48% | 45% | 67% |

| Novel / Unstructured | 18% | 35% | 40% |

Experimental Protocols for Key Studies

Protocol 1: Large-Scale Virtual Screening Benchmark (Gorgulla et al.)

- Objective: To compare the hit identification performance of large-scale virtual screening (VS) versus traditional HTS for a given target (SARS-CoV-2 main protease).

- Methodology:

- Target Preparation: Crystal structures (PDB) were prepared via protonation, optimization of hydrogen bonds, and assignment of partial charges.

- Library Curation: An ultra-large library of ~1.3 billion commercially available molecules was prepared in 3D format.

- Virtual Screening: Performed using the AutoDock-GPU software on a supercomputing cluster. Docking poses were scored and ranked.

- Experimental Validation: Top 100 ranked compounds were procured and tested in a fluorescence-based enzymatic assay.

- Comparison: Hit rates and potencies were compared against published HTS campaigns for the same target.

- Outcome: Virtual screening identified 37 inhibitors with IC50 < 100 μM, demonstrating a significantly higher hit rate than prior HTS efforts.

Protocol 2: Hybrid HTS/CADD Workflow for Kinase Inhibitors

- Objective: To evaluate the synergy of applying CADD triage to an HTS output.

- Methodology:

- Primary HTS: Screen 500,000 compounds against kinase target using a biochemical assay.

- Hit Triage: Apply computational filters (e.g., Pan-Assay Interference compounds (PAINS) removal, physicochemical property filters, structural clustering).

- Molecular Docking: Screen the triaged HTS hit list (~5,000 compounds) via docking into the kinase's ATP-binding site to prioritize compounds with plausible binding modes.

- Experimental Validation: Purchase and test the top 200 computationally prioritized hits in a dose-response assay.

- Control: Test a randomly selected set of 200 from the initial triaged hit list.

- Outcome: The CADD-prioritized set yielded a 4-fold higher confirmation rate of potent inhibitors (<1 µM) compared to the random set.

Visualizing the Paradigm Shift

Title: Evolution from Competitive to Complementary Drug Discovery View

Title: Integrated CADD-HTS Hybrid Lead Discovery Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for CADD/HTS Integration Studies

| Item / Solution | Function in Research | Example Vendor/Product |

|---|---|---|

| Recombinant Purified Target Protein | Essential for biochemical HTS assay development and crystallography for CADD. | Thermo Fisher Scientific, Sino Biological |

| HTS-Compliant Compound Libraries | Diverse, soluble, and non-interfering chemical matter for empirical screening. | Enamine REAL Diversity, Molport, ChemDiv |

| Virtual Screening Compound Libraries | Ultra-large, annotated in silico libraries for structure-based screening. | ZINC20, Enamine REAL Space, Mcule |

| Molecular Docking Software | Predicts binding pose and affinity of small molecules to a protein target. | AutoDock Vina, Glide (Schrödinger), GOLD |

| Biochemical Assay Kits (e.g., FP, TR-FRET) | Enable rapid, homogeneous, and miniaturized testing of target activity. | Cisbio, Thermo Fisher, BPS Bioscience |

| Crystallography / Cryo-EM Services | Provides high-resolution 3D structures of target-ligand complexes for CADD. | Creative Biolabs, Thermo Fisher (services) |

| Activity Analyzer & Liquid Handler | Automated instrumentation for running HTS campaigns and dose-response curves. | PerkinElmer EnSight, Beckman Coulter Biomek |

| Data Analysis & Visualization Suite | Integrates HTS data with computational models for hit prioritization. | Dotmatics, OpenEye Toolkits, SeeSAR |

From Theory to Bench: How CADD and HTS are Applied in Modern Pipelines

This guide compares key methodologies and technologies in High-Throughput Screening (HTS) workflows, contextualized within ongoing research comparing success rates between Computer-Aided Drug Design (CADD) and HTS. The data supports the thesis that integrated, optimized HTS workflows remain critical for identifying novel chemical matter, often complementing CADD approaches.

Library Design: Diversity-Oriented vs Targeted Libraries

Thesis Context: Library design strategy directly impacts the screening hit rate and novelty, a key variable when comparing HTS to CADD's virtual screening output.

Table 1: Comparison of Library Design Strategies

| Design Strategy | Typical Library Size | Hit Rate Range (%) | Avg. Lead Novelty (Patentability Score*) | Primary Use Case | Key Advantage |

|---|---|---|---|---|---|

| Diversity-Oriented (Broad) | 100,000 - 2,000,000+ | 0.01 - 0.3 | High (85/100) | Novel Target, Phenotypic Screen | Maximizes chemical space exploration |

| Targeted (Focused) | 1,000 - 50,000 | 0.5 - 5.0 | Moderate (60/100) | Known Target Family (e.g., Kinases) | Higher hit rate for validated targets |

| Fragment-Based | 500 - 5,000 | 0.1 - 2.0 (by biophysical method) | Very High (90/100) | Challenging Targets (protein-protein interfaces) | Efficient sampling; high ligand efficiency |

| DNA-Encoded (DEL) | 1,000,000 - 100,000,000+ | N/A (selection-based) | High (80/100) | Soluble, purifiable targets | Unparalleled nominal library size |

*Patentability Score: Expert assessment (0-100) based on chemical uniqueness from prior art.

Experimental Protocol for Library QC:

- Compound Logistics: Dissolve compounds in DMSO to a standard stock concentration (e.g., 10 mM).

- Purity Analysis: Employ UPLC-MS with a C18 column (gradient: 5-95% acetonitrile in water over 3.5 min). Accept compounds with >90% purity.

- Concentration Verification: Use quantitative NMR (qNMR) with dimethyl sulfone as an internal standard on a statistical sample (e.g., 5% of plates).

- Assay Readiness: Transfer compounds to assay-ready plates via acoustic droplet ejection (ADE) to minimize DMSO variation (<0.5% final).

Assay Development: Homogeneous vs Heterogeneous Format Performance

Thesis Context: Assay robustness (Z'-factor) and scalability are decisive for HTS success rates, whereas CADD is not constrained by biochemical assay limitations.

Table 2: Comparison of Key HTS Assay Formats

| Assay Format | Typical Z'-Factor | Throughput (wells/day) | Cost per Well (USD) | False Positive Rate (%) | Common Artifacts |

|---|---|---|---|---|---|

| Homogeneous Time-Resolved FRET (HTRF) | 0.7 - 0.9 | 50,000 - 100,000 | 0.25 - 0.50 | 0.5 - 1.5 | Compound interference (quenchers) |

| AlphaScreen/AlphaLISA | 0.6 - 0.85 | 50,000 - 100,000 | 0.30 - 0.60 | 1.0 - 3.0 | Photoquenching, sensitive to ambient light |

| Fluorescence Polarization (FP) | 0.5 - 0.8 | 30,000 - 70,000 | 0.15 - 0.30 | 0.5 - 2.0 | Fluorescent compounds |

| Cell-Based Luminescence (e.g., Reporter Gene) | 0.5 - 0.75 | 20,000 - 50,000 | 0.40 - 0.80 | 1.0 - 5.0 | Cytotoxicity interference |

| High-Content Imaging (HCS) | 0.4 - 0.7 | 5,000 - 20,000 | 1.00 - 3.00 | Variable | Image analysis complexity |

Experimental Protocol for HTRF Assay Development (Kinase Example):

- Reaction Setup: In a low-volume 384-well plate, combine 2.5 µL of kinase, 2.5 µL of substrate (biotinylated peptide), and 5 µL of compound/DMSO.

- Incubation: Add ATP to initiate the reaction, then incubate for 60 min at room temperature.

- Detection: Stop reaction with 5 µL of EDTA solution. Add 5 µL of detection mix containing Eu³⁺-cryptate-labeled anti-phospho-antibody and Streptavidin-XL665.

- Read & Analyze: Incubate 1 hr, read on a compatible plate reader (e.g., PerkinElmer EnVision). Calculate Z' = 1 - [3*(σp + σn) / |μp - μn|], where p=positive control, n=negative control.
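
The Z'-factor formula can be checked against plate control wells with a short script. This is a minimal sketch: the control readings are hypothetical, and using the sample standard deviation (`statistics.stdev`) is an assumption, since the formula above does not say which estimator to use.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z' = 1 - 3 * (sd_p + sd_n) / |mean_p - mean_n|."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1 - 3 * (stdev(positive) + stdev(negative)) / separation


# Hypothetical HTRF ratio readings from control wells on one plate.
positive_controls = [980, 1010, 1000, 1020, 990]
negative_controls = [100, 110, 90, 105, 95]

z = z_prime(positive_controls, negative_controls)
print(f"Z' = {z:.3f}")  # Z' = 0.921
print("robust" if z > 0.5 else "needs optimization")
```

A value above 0.5 is the acceptance criterion cited for the primary assay elsewhere in this article.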

Robotics & Automation: Platform Comparison

Thesis Context: Automation reliability and integration minimize operational variability in HTS, bringing wet-lab screening closer to the run-to-run reproducibility that computational CADD workflows offer by default.

Table 3: Comparison of HTS Robotic System Configurations

| System Type | Upfront Cost (USD) | Throughput (Compounds/Day) | Walk-Away Time (hrs) | Flexibility (Re-tooling ease) | Footprint (m²) |

|---|---|---|---|---|---|

| Benchtop Liquid Handler (e.g., Hamilton Star) | 80,000 - 150,000 | 1,000 - 10,000 | 2 - 6 | High | 1 - 2 |

| Integrated Modular System (e.g., PerkinElmer JANUS) | 250,000 - 500,000 | 10,000 - 50,000 | 8 - 24 | Medium | 6 - 12 |

| Fully Integrated Robotic Arm System (e.g., HighRes Biosolutions) | 750,000 - 2,000,000+ | 50,000 - 100,000+ | 24 - 72+ | Low (requires re-programming) | 15 - 40 |

Primary vs. Secondary Screening: Hit Triage

Thesis Context: The multi-stage confirmation process in HTS reduces false positives, analogous to docking score rescoring in CADD, but relies on empirical data.

Table 4: Primary vs. Secondary Screening Parameters

| Screening Stage | Goal | Concentration | Replicates | Controls per Plate | Key Output Metrics |

|---|---|---|---|---|---|

| Primary (Full Library) | Hit Identification | Single dose (e.g., 10 µM) | n=1 | 32 (16 high, 16 low) | % Inhibition, Z'-factor, Signal-to-Noise |

| Concentration-Response (Secondary) | Potency & Confirmation | 10-point, 1:3 serial dilution (e.g., 30 µM - 1.5 nM) | n=2 (minimum) | 16 (8 high, 8 low) | IC₅₀/EC₅₀, Hill Slope, R² |

| Orthogonal Assay (Secondary) | Mechanism/Artifact Check | Varies (e.g., IC₅₀) | n=2-3 | As above | Confirmation of activity in different format |

| Counter-Screen (Selectivity/Tox) | Specificity | Varies | n=2-3 | As above | Selectivity Index, Cytotoxicity IC₅₀ |
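
The concentrations in a 10-point, 1:3 dilution series follow directly from the top concentration and the dilution factor. A minimal sketch, in which the helper name `serial_dilution` is hypothetical:

```python
def serial_dilution(top_um, fold, points):
    """Concentrations (µM) for a serial dilution, highest first."""
    return [top_um / fold**i for i in range(points)]


series = serial_dilution(top_um=30.0, fold=3, points=10)
for conc_um in series:
    # Print in nM once the value drops below 1 µM, for readability.
    if conc_um >= 1:
        print(f"{conc_um:.2f} µM")
    else:
        print(f"{conc_um * 1000:.1f} nM")
```

Nine successive 3-fold steps from 30 µM reach roughly 1.5 nM, so the series spans a little over four orders of magnitude.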

Experimental Protocol for Hit Triage:

- Primary Hit Selection: Apply thresholds (e.g., >50% inhibition, >3σ from mean).

- Compound Re-source: Obtain fresh powder from inventory for all selected hits.

- Concentration-Response: Test each hit in a 10-point dose-response, in duplicate, in the primary assay.

- Orthogonal Validation: For enzymatic hits, use a mobility shift assay (Caliper LabChip). For cell-based hits, use a different reporter construct or viability assay (CellTiter-Glo).

- Promiscuity/Risk Assessment: Test in assays for aggregation (detergent sensitivity), redox activity (cysteine dependency), and fluorescence interference (parallel readouts).
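The primary hit selection step can be sketched as follows. This is an illustrative example only: it assumes an inhibition assay in which the high control is 0% inhibition and the low control is 100%, and it assumes a compound must pass both example thresholds (>50% inhibition and >3σ above the plate mean). All names and signals are hypothetical.

```python
from statistics import mean, stdev

def percent_inhibition(signal, high_mean, low_mean):
    """Normalize a raw signal: high control = 0% inhibition, low control = 100%."""
    return 100.0 * (high_mean - signal) / (high_mean - low_mean)

def select_hits(samples, high_ctrl, low_ctrl, min_inhibition=50.0, n_sigma=3.0):
    """Return IDs passing both the fixed cutoff and the n-sigma statistical cutoff."""
    high_mean, low_mean = mean(high_ctrl), mean(low_ctrl)
    inhibition = {cid: percent_inhibition(s, high_mean, low_mean)
                  for cid, s in samples.items()}
    values = list(inhibition.values())
    sigma_cutoff = mean(values) + n_sigma * stdev(values)
    return sorted(cid for cid, v in inhibition.items()
                  if v > min_inhibition and v > sigma_cutoff)

# Hypothetical plate: 20 inactive compounds near the high control, one active.
samples = {f"cmpd_{i:02d}": 990.0 if i % 2 else 1010.0 for i in range(20)}
samples["cmpd_active"] = 300.0
high_controls = [990.0, 1010.0, 1000.0, 1000.0]
low_controls = [95.0, 105.0, 100.0, 100.0]
print(select_hits(samples, high_controls, low_controls))
```

Some groups apply the two thresholds as alternatives rather than jointly; the combination used here is one possible choice.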

Visualization: HTS Workflow & Hit Triage Pathway

HTS Workflow from Library to Confirmed Hits

The Scientist's Toolkit: Key Research Reagent Solutions

Table 5: Essential Materials for HTS Workflow Implementation

| Item | Function in HTS Workflow | Example Product/Brand | Key Specification |

|---|---|---|---|

| Assay-Ready Compound Plates | Pre-dispensed, dried-down compounds for screening. | Labcyte Echo Qualified Plates | 384-well, low dead volume, compatible with acoustic dispensing. |

| TR-FRET Detection Kit | Enables homogeneous, ratiometric kinase/protein interaction assays. | Cisbio HTRF Kits | Optimized antibody pair, high assay window (Delta F > 100%). |

| Cell-Based Reporter System | Genetically engineered cell line for pathway-specific screening. | Promega CellSensor or Thermo Fisher GeneBLAzer | Stable integration, low background luminescence/fluorescence. |

| Viability Assay Reagent | Measures cell health/cytotoxicity for counter-screening. | Promega CellTiter-Glo | Luminescent, ATP-dependent, homogeneous "add-mix-read". |

| Recombinant Protein (Tagged) | Purified target protein for biochemical assays. | Sino Biological, BPS Bioscience | >90% purity, activity-verified, His- or GST-tagged. |

| Non-reactive Plasticware | Low-binding plates and tips to prevent compound adsorption. | Corning Axygen, Greiner Bio-One | Polypropylene, surface-treated for protein and small molecule recovery. |

| DMSO (Hygrade) | Universal solvent for compound libraries. | Sigma-Aldrich or equivalent | <0.005% water, sterile-filtered, sealed under inert gas. |

| Liquid Handler Tips | Disposable tips for precision reagent transfer. | Beckman Coulter Biomek Tips | Conductive, filtered tips for volume accuracy and contamination prevention. |

Within the broader thesis comparing Computer-Aided Drug Design (CADD) and High-Throughput Screening (HTS) success rates, this guide examines the core computational methodologies. The thesis posits that an integrated CADD approach, utilizing the tools discussed herein, can de-risk early drug discovery by enriching compound libraries with biologically active candidates prior to physical HTS, thereby improving hit rates, reducing costs, and accelerating lead identification.

Performance Comparison of Core CADD Tools

The efficacy of CADD tools is typically measured by metrics such as enrichment factor (EF), hit rate (HR), and the ability to predict binding affinity or activity accurately.

Table 1: Comparative Performance in Virtual Screening Campaigns

| Tool Category | Example Software (Vendor) | Typical Enrichment Factor (EF₁%)* | Key Strength | Primary Limitation | Representative Experimental Validation |

|---|---|---|---|---|---|

| Molecular Docking | AutoDock Vina (Scripps) | 5-20 | Handles full ligand flexibility; estimates binding affinity. | Scoring function inaccuracies; limited protein flexibility. | Vina identified novel inhibitors of SARS-CoV-2 Mpro with IC₅₀ values in low µM range (PMID: 33258845). |

| Structure-Based Pharmacophore | LigandScout (Inte:Ligand) | 10-30 | Intuitive visual filters; robust to minor receptor movement. | Dependent on quality of input complex. | Screen of 1M compounds for CK2 inhibitors yielded a 23% hit rate among top-ranked (J. Chem. Inf. Model., 2017, 57, 6). |

| Ligand-Based Pharmacophore | Phase (Schrödinger) | 8-25 | Does not require a 3D protein structure. | Requires a set of known active molecules. | Identified novel ROCK-II inhibitors with 15% hit rate and sub-µM activity (Eur. J. Med. Chem., 2019, 179, 727). |

| 3D-QSAR | CoMFA/CoMSIA | N/A (Predictive Model) | Quantifies contribution of chemical fields to activity. | Requires aligned, congeneric series of ligands. | Model for EGFR inhibitors showed predictive r² > 0.8 on external test set (Bioorg. Chem., 2020, 94, 103363). |

*EF₁%: Enrichment Factor at 1% of the screened database, measuring how many more actives are found in the top 1% compared to random selection.

Experimental Protocol for a Typical Virtual Screening Validation:

- Dataset Preparation: A known benchmark dataset (e.g., DUD-E, DEKOIS 2.0) containing active compounds and decoys is used.

- Tool Configuration: The CADD tool (e.g., docking software) is configured with standard parameters. The protein structure is prepared (adding hydrogens, assigning charges).

- Screening Execution: All actives and decoys are processed through the tool, generating a ranked list.

- Performance Analysis: The ranked list is analyzed to calculate the EF and plot the Receiver Operating Characteristic (ROC) curve. The area under the ROC curve (AUC) and EF at early recovery (EF₁%, EF₁₀%) are key metrics.

- Experimental Confirmation: Top-ranked novel compounds (not in the training set) are acquired or synthesized and tested in vitro (e.g., enzymatic assay) to determine IC₅₀/Ki values, confirming true activity.
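The performance analysis step can be illustrated with a short sketch. It assumes the screening output has already been reduced to a best-first list of labels (1 = active, 0 = decoy); the function names and the toy ranking are hypothetical.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """EF = (actives in top fraction / size of top fraction) / (all actives / all compounds)."""
    n_total = len(ranked_labels)
    n_top = max(1, int(round(n_total * fraction)))
    actives_total = sum(ranked_labels)
    if actives_total == 0:
        raise ValueError("ranked list contains no actives")
    actives_top = sum(ranked_labels[:n_top])
    return (actives_top / n_top) / (actives_total / n_total)

def roc_auc(ranked_labels):
    """ROC AUC as the fraction of (active, decoy) pairs with the active ranked first."""
    n_actives = sum(ranked_labels)
    n_decoys = len(ranked_labels) - n_actives
    decoys_seen = 0
    pairs_won = 0
    for label in ranked_labels:  # iterate best-scored first
        if label:
            pairs_won += n_decoys - decoys_seen
        else:
            decoys_seen += 1
    return pairs_won / (n_actives * n_decoys)

# Toy ranking: 1,000 compounds, 10 actives, 5 in the top ranks and 5 mid-list.
ranking = [0] * 1000
for position in list(range(0, 5)) + list(range(500, 505)):
    ranking[position] = 1

print("EF1%:", enrichment_factor(ranking, 0.01))
print("AUC:", roc_auc(ranking))
```

The toy case shows why both metrics are reported: half of the actives sit in the top 1%, which gives strong early enrichment, while the AUC also reflects the actives left mid-list.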

Integrated CADD Workflow Diagram

Title: Integrated CADD Workflow from Target to Hit Validation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for CADD Research

| Item | Function in CADD Research | Example/Provider |

|---|---|---|

| Protein Data Bank (PDB) | Primary repository for experimentally determined 3D structures of proteins and nucleic acids. Essential for structure-based methods. | www.rcsb.org |

| ChEMBL or PubChem BioAssay | Curated databases of bioactive molecules with associated biological activity data. Critical for QSAR model building and validation. | EMBL-EBI, NCBI |

| DECOY Datasets (DUD-E, DEKOIS) | Benchmark sets containing known actives and property-matched decoys. Used to objectively evaluate virtual screening performance. | DUD-E, DEKOIS 2.0 |

| Commercial Compound Libraries | Large, diverse, and often drug-like virtual compound libraries for virtual screening (e.g., ZINC, Enamine REAL). | ZINC20, Enamine REAL, ChemDiv |

| Molecular Visualization Software | For visualizing protein-ligand complexes, analyzing docking poses, and interpreting pharmacophore models. | PyMOL, UCSF Chimera, Maestro |

| High-Performance Computing (HPC) Cluster | Essential for performing computationally intensive tasks like docking of millions of compounds or molecular dynamics simulations. | Local university clusters, Cloud computing (AWS, Azure) |

| In vitro Assay Kits | For experimental validation of computational hits (e.g., kinase activity, binding affinity, cellular cytotoxicity assays). | Promega, Cayman Chemical, Abcam |

The Role of AI and Machine Learning in Enhancing Both CADD and HTS

The ongoing debate in drug discovery often pits Computer-Aided Drug Design (CADD) against High-Throughput Screening (HTS) regarding their success rates and efficiency. Contemporary research now frames this not as a rivalry but as a synergistic pipeline, where Artificial Intelligence (AI) and Machine Learning (ML) enhance both paradigms. This guide compares how AI/ML integration transforms each approach, supported by experimental data.

AI/ML-Enhanced CADD vs. Traditional CADD: A Performance Comparison

AI-driven CADD moves beyond static molecular docking to predictive modeling of complex drug-like properties.

Table 1: Performance Comparison of AI-Enhanced CADD vs. Traditional Methods

| Metric | Traditional CADD (e.g., Docking) | AI/ML-Enhanced CADD (e.g., Deep Learning) | Experimental Context |

|---|---|---|---|

| Virtual Screening Enrichment (EF₁%) | 5-15 | 20-35 | Retrospective screen against DUD-E dataset; ML models pre-trained on known actives/inactives. |

| Binding Affinity Prediction (RMSE) | 1.5 - 2.5 pKd units | 0.8 - 1.2 pKd units | Evaluation on PDBbind refined set; comparison between scoring functions and Graph Neural Networks. |

| De Novo Molecule Generation (Validity Rate) | < 10% (Rule-based) | > 95% (Deep Generative Models) | Generation of 10,000 molecules using REINVENT vs. traditional fragment linking. |

| Lead Optimization Cycle Time | 12-18 months | Potentially reduced to 6-9 months | Projected from case studies predicting ADMET properties with >85% accuracy. |

Experimental Protocol for AI-Enhanced Virtual Screening:

- Data Curation: Assemble a training set of known active and decoy molecules for a specific target (e.g., kinase) from public databases (ChEMBL, PubChem).

- Feature Representation: Encode molecules as extended-connectivity fingerprints (ECFPs) or molecular graphs.

- Model Training: Train a gradient boosting classifier (e.g., XGBoost) or a graph convolutional network (GCN) to distinguish actives from decoys.

- Validation: Use time-split or cluster-based cross-validation to assess model generalizability, avoiding data leakage.

- Prospective Screening: Apply the trained model to screen an ultra-large virtual library (e.g., 10⁹ compounds). Top-ranked compounds are selected for in vitro testing.

- Experimental Confirmation: Compounds are tested in a primary biochemical assay (e.g., fluorescence polarization) at a single concentration (10 µM), with hits confirmed in dose-response to determine IC₅₀.
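The cluster-based validation step in the protocol above can be sketched without any cheminformatics dependency. The example assumes each compound already carries a cluster label (for instance from fingerprint clustering done elsewhere); `cluster_folds` is a hypothetical helper, not part of any named library.

```python
from collections import defaultdict

def cluster_folds(cluster_ids, n_folds=3):
    """Assign compounds to folds so that no cluster is split across folds."""
    members = defaultdict(list)
    for index, cluster in enumerate(cluster_ids):
        members[cluster].append(index)
    fold_of = [None] * len(cluster_ids)
    fold_sizes = [0] * n_folds
    # Place the largest clusters first, each into the currently smallest fold.
    for cluster in sorted(members, key=lambda c: (-len(members[c]), str(c))):
        target = fold_sizes.index(min(fold_sizes))
        for index in members[cluster]:
            fold_of[index] = target
        fold_sizes[target] += len(members[cluster])
    return fold_of

# Hypothetical cluster labels for nine training compounds.
clusters = ["A", "A", "A", "B", "B", "C", "D", "D", "E"]
folds = cluster_folds(clusters, n_folds=3)
for cluster, fold in zip(clusters, folds):
    print(cluster, "-> fold", fold)
```

Keeping close analogs in the same fold prevents the model from being scored on near-duplicates of its training compounds, which is the data leakage the protocol warns about.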

AI/ML-Enhanced HTS vs. Conventional HTS: A Performance Comparison

AI transforms HTS from a mere number-generator to an intelligent, adaptive system for data analysis and experimental design.

Table 2: Performance Comparison of AI-Enhanced HTS vs. Conventional HTS

| Metric | Conventional HTS | AI/ML-Enhanced HTS | Experimental Context |

|---|---|---|---|

| Hit Rate Improvement | 0.01% - 0.1% | 0.5% - 2% (via active learning) | Screening of 500,000 compounds against a GPCR; iterative model retraining guided subsequent selection. |

| False Positive/ Negative Reduction | High (20-40%) | Significantly Reduced (<10%) | Use of CNN-based image analysis in phenotypic HTS vs. traditional thresholding. |

| Data Information Yield | Low (Single Endpoint) | High (Multiparametric Analysis) | Multivariate analysis of cell painting data to identify subtle phenotypes. |

| Cost Efficiency per Quality Hit | High | Reduced by 30-70% | Achieved through smaller, smarter screening libraries and fewer assay cycles. |

Experimental Protocol for Active Learning-Guided HTS:

- Initial Seed Screen: Conduct a diversified mini-HTS of 1-5% of the full compound library.

- Model Building: Train a Bayesian machine learning model on the dose-response data from the seed screen.

- Iterative Prediction & Selection: The model predicts the most promising untested compounds. The top 0.1% are selected for the next experimental cycle.

- Iterative Testing & Retraining: Selected compounds are tested experimentally. Their results are added to the training data, and the model is retrained.

- Convergence: The cycle repeats until a predefined number of high-potency leads are identified or a budget is exhausted, maximizing hit discovery from fewer assays.
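The iterative loop above can be sketched with a toy simulation. This is a deliberately simplified stand-in: the library is a set of hypothetical one-dimensional descriptors, the "assay" is a synthetic function, and a nearest-known-active score replaces the Bayesian model named in the protocol.

```python
import random

def active_learning_screen(library, assay, seed_fraction=0.05,
                           batch_fraction=0.001, max_cycles=5, seed=0):
    """Seed screen, then repeatedly test the untested compounds the model ranks highest."""
    rng = random.Random(seed)
    untested = set(library)
    results = {}

    def run_assay(batch):
        for cid in batch:
            results[cid] = assay(cid)
            untested.discard(cid)

    seed_ids = rng.sample(sorted(untested), max(1, int(len(library) * seed_fraction)))
    run_assay(seed_ids)
    batch_size = max(1, int(len(library) * batch_fraction))
    selected = []
    for _ in range(max_cycles):
        actives = [library[c] for c, hit in results.items() if hit]
        if not actives or not untested:
            break
        # Stand-in model: rank by descriptor distance to the nearest known active.
        ranked = sorted(untested,
                        key=lambda c: (min(abs(library[c] - a) for a in actives), c))
        batch = ranked[:batch_size]
        run_assay(batch)          # "retraining" happens implicitly via `results`
        selected.extend(batch)
    return results, selected

# Hypothetical library: 10,000 compounds, actives clustered near descriptor 0.7.
library = {i: i / 10000 for i in range(10000)}

def toy_assay(cid):
    return abs(library[cid] - 0.7) < 0.01

results, selected = active_learning_screen(library, toy_assay)
seed_count = len(results) - len(selected)
print("compounds tested:", len(results), "of", len(library))
print("hits among model-selected:", sum(results[c] for c in selected), "of", len(selected))
```

In a real campaign the ranking step would be replaced by a trained model with uncertainty estimates, and the stopping rule by the potency or budget criteria described in the convergence step.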

Visualization of Integrated AI/ML in Modern Drug Discovery

Title: AI/ML as the Core Integrator of CADD and HTS

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for AI-Guided Drug Discovery Experiments

| Item | Function in AI/ML-Enhanced Workflows |

|---|---|

| Curated Bioactivity Databases (e.g., ChEMBL, PubChem BioAssay) | Provide large-scale, structured training data for predictive model development. |

| DNA-Encoded Library (DEL) Kits | Generate massive chemical diversity (10⁷-10¹⁰ compounds) for AI model training and direct screening. |

| Cell Painting Assay Kits | Enable high-content phenotypic screening, generating rich, multiparametric data for ML analysis. |

| High-Quality Target Protein (≥95% purity) | Essential for generating reliable primary HTS data and structural data for AI-based docking. |

| Validated Biochemical Assay Kits (e.g., Kinase-Glo) | Provide robust, reproducible endpoint data for initial model training in active learning cycles. |

| Open-Source ML Platforms (e.g., DeepChem, RDKit) | Software toolkits for molecule representation, model building, and integration with assay data. |

| Cloud Computing Credits (AWS, GCP, Azure) | Provide scalable computational power for training large deep learning models on chemical datasets. |

This guide compares the efficiency, cost, and success rates of High-Throughput Screening (HTS)-first and Computer-Aided Drug Design (CADD)-first strategies for identifying a novel kinase inhibitor. The analysis is framed within ongoing research examining the broader success rates of CADD versus empirical screening methodologies in early drug discovery.

Comparative Performance Analysis

Table 1: Strategic Comparison for Novel Kinase Target "KINX"

| Metric | HTS-First Strategy | CADD-First Strategy | Notes |

|---|---|---|---|

| Primary Hits Identified | 127 compounds (>70% inhibition at 10 µM) | 18 virtual hits prioritized for synthesis | From 250,000-compound library vs. 2 million compound virtual screen |

| Confirmed IC₅₀ < 1 µM | 9 compounds (0.0036% hit rate) | 3 compounds (16.7% success from synthesized hits) | Dose-response in enzymatic assay |

| Selectivity (≥50-fold vs. kinome panel) | 2/9 compounds | 2/3 compounds | Tested against 468 human kinases |

| Cell-based Activity (EC₅₀ < 5 µM) | 4/9 compounds | 2/3 compounds | In KINX-overexpressing cell line |

| Lead Optimization Time | 18-24 months | 12-15 months | To pre-clinical candidate |

| Direct Cost to Lead | ~$350,000 | ~$150,000 | Includes reagent, screening, and initial synthesis costs |

| Structural Data Utilized | Not required for primary screen | Essential (Homology model or crystal structure) | |
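The hit rates quoted in Table 1 follow directly from the counts in the table, as the short check below shows. The cost-per-compound figures are an additional illustrative ratio, not a metric from the table, and they assume the "Direct Cost to Lead" values can be divided evenly across the confirmed sub-µM compounds.

```python
def rate_percent(successes, attempts):
    """Success rate expressed as a percentage."""
    return 100.0 * successes / attempts

# Counts taken from Table 1.
hts_hit_rate = rate_percent(9, 250_000)   # sub-µM compounds per compound screened
cadd_hit_rate = rate_percent(3, 18)       # sub-µM compounds per prioritized virtual hit

# Illustrative only: direct cost divided by confirmed sub-µM compounds.
hts_cost_per_potent = 350_000 / 9
cadd_cost_per_potent = 150_000 / 3

print(f"HTS hit rate:  {hts_hit_rate:.4f}%")
print(f"CADD hit rate: {cadd_hit_rate:.1f}%")
print(f"HTS cost per sub-µM compound:  ${hts_cost_per_potent:,.0f}")
print(f"CADD cost per sub-µM compound: ${cadd_cost_per_potent:,.0f}")
```

The two hit rates use different denominators (physical compounds screened versus compounds prioritized after a virtual screen), so they describe the efficiency of wet-lab testing rather than a like-for-like comparison of the whole campaign.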

Detailed Experimental Protocols

Protocol 1: HTS-First Campaign for KINX

Objective: Identify inhibitors of KINX kinase activity from a diverse chemical library.

Method:

- Enzyme Source: Recombinant human KINX catalytic domain (His-tagged), expressed in Sf9 insect cells.

- Assay Format: Homogeneous Time-Resolved Fluorescence (HTRF) kinase assay in 384-well plates.

- Reaction: 5 nM KINX, 1 µM biotinylated peptide substrate, 10 µM ATP (Km app), in 20 mM HEPES pH 7.5, 10 mM MgCl₂, 1 mM DTT.

- Screening: 250,000 compounds at 10 µM final concentration. Controls: 100% activity (DMSO), 0% activity (control inhibitor staurosporine).

- Primary Hit Criteria: >70% inhibition. Hits progressed to dose-response (IC₅₀) and counter-screens for assay interference.

Protocol 2: CADD-First Campaign for KINX

Objective: Virtually screen and rationally design KINX inhibitors.

Method:

- Model Preparation: Generate a homology model of KINX using MODELLER, based on a crystal structure of a close homolog (e.g., PDB: 4RSU).

- Binding Site Definition: Define the ATP-binding pocket using CASTp and literature on kinase conserved motifs.

- Virtual Screening:

- Step 1 (Filtering): Filter a 2 million-compound library (e.g., ZINC20) for drug-like properties (Lipinski's Rule of 5, MW <450).

- Step 2 (Docking): Dock filtered library (~500,000 compounds) using Glide SP. Top 10,000 poses retained.

- Step 3 (Scoring & Clustering): Re-score with MM-GBSA. Cluster results by chemotype and select 50 diverse candidates for visual inspection.

- Hit Prioritization: 18 compounds selected based on docking score, interaction patterns (key hinge hydrogen bond), and commercial availability/synthetic tractability.
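The filtering and selection logic of the virtual screening funnel can be sketched as below. The example assumes descriptors, docking scores, and chemotype labels have already been computed by other tools; it applies the Rule of 5 limits strictly (no violations allowed) together with the MW < 450 cut, and it omits the MM-GBSA re-scoring step. The `Compound` record and all values are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Compound:
    cid: str
    mw: float          # molecular weight, Da
    logp: float        # calculated logP
    hbd: int           # hydrogen-bond donors
    hba: int           # hydrogen-bond acceptors
    chemotype: str     # cluster label, assumed precomputed
    dock_score: float  # docking score, lower = better, assumed precomputed

def passes_step1(c, max_mw=450.0):
    """Step 1: drug-likeness filter (strict Rule of 5 limits plus MW cut)."""
    return c.mw < max_mw and c.logp <= 5 and c.hbd <= 5 and c.hba <= 10

def screening_funnel(library, keep_top, n_candidates):
    """Filter, keep the best-scoring compounds, then take the best one per chemotype."""
    filtered = [c for c in library if passes_step1(c)]
    top = sorted(filtered, key=lambda c: c.dock_score)[:keep_top]
    best_per_chemotype = {}
    for c in top:  # best score first, so the first seen per chemotype wins
        best_per_chemotype.setdefault(c.chemotype, c)
    return list(best_per_chemotype.values())[:n_candidates]

library = [
    Compound("a", 320.0, 2.1, 2, 5, "hinge_A", -9.5),
    Compound("b", 340.0, 2.8, 1, 6, "hinge_A", -9.1),
    Compound("c", 480.0, 3.0, 2, 7, "hinge_B", -10.2),  # fails MW cut
    Compound("d", 410.0, 5.6, 1, 4, "hinge_C", -9.8),   # fails logP limit
    Compound("e", 390.0, 3.3, 3, 8, "hinge_B", -8.7),
    Compound("f", 300.0, 1.0, 0, 3, "hinge_D", -6.0),
]
candidates = screening_funnel(library, keep_top=3, n_candidates=2)
print([c.cid for c in candidates])
```

In the protocol the corresponding settings would be a top-10,000 cutoff and 50 diverse candidates, followed by visual inspection.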

Visualized Workflows and Pathways

Diagram 1: Comparative strategic workflow for novel kinase inhibition.

Diagram 2: Key protein-ligand interactions for kinase inhibitor design.

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Kinase Inhibitor Discovery

| Item | Function in HTS/CADD | Example Vendor/Product |

|---|---|---|

| Recombinant Kinase Protein | Source of enzyme for biochemical assays and structural studies. | Carna Biosciences, Thermo Fisher (Proteinkinase) |

| Kinase Assay Kits (HTRF/FP) | Homogeneous, robust assay systems for HTS and dose-response. | Cisbio KinEASE, PerkinElmer LANCE Ultra |

| Diverse Compound Library | Physical library for HTS; annotated with chemical structures. | ChemDiv, Enamine REAL, Selleckchem |

| Virtual Compound Library | Database of purchasable/synthesizable compounds for virtual screening. | ZINC20, MCULE, Molport |

| Molecular Docking Software | Predicts binding pose and affinity of small molecules to target. | Schrödinger Glide, OpenEye FRED, AutoDock Vina |

| Homology Modeling Software | Generates 3D protein model when crystal structure is unavailable. | MODELLER, SWISS-MODEL, I-TASSER |

| Kinome Profiling Service | Assesses compound selectivity across hundreds of kinases. | Eurofins DiscoverX KINOMEscan, Reaction Biology |

| Crystallography Services | Determines atomic-level structure of kinase-inhibitor complexes. | CRelia Crystal Drug Development (CCDD) |

High-Throughput Screening (HTS) remains a cornerstone of drug discovery, capable of testing millions of compounds. However, its success rate is often hampered by high false-positive rates, promiscuous binders, and the sheer volume of data. This article frames the integrative use of Computer-Aided Drug Design (CADD) within a broader thesis positing that a synergistic CADD-HTS strategy significantly improves the quality of hit identification and prioritization over either approach in isolation. CADD acts as a rational filter, enriching HTS libraries and triaging outputs to prioritize compounds with higher probabilities of being true, developable hits.

Performance Comparison: CADD-Triaged HTS vs. Standalone HTS

The following table summarizes key metrics from recent studies comparing the success rates of integrative approaches versus traditional HTS.

Table 1: Comparative Performance of HTS and CADD-Integrated Strategies

| Metric | Traditional HTS | CADD-Pre-filtered HTS | CADD-Post-HTS Triaging | Data Source / Study Context |

|---|---|---|---|---|

| Initial Hit Rate | 0.01% - 0.5% | 0.1% - 2.0% | N/A (Applied post-HTS) | Retrospective analysis, kinase targets |

| Confirmed Hit Rate (after validation) | 10% - 30% of initial hits | 40% - 70% of initial hits | Increases confirmation to 50%-80% | Consortium benchmarking data |

| Avg. Ligand Efficiency (LE) of Hits | 0.30 - 0.35 | 0.35 - 0.45 | Improves LE by ~0.05 avg. | Fragment-based screening campaign |

| Presence of Undesirable Motifs (Pan-Assay Interference Compounds, PAINS) | 5% - 15% of hits | < 2% of hits | Reduces by >70% post-filtering | Published analysis of public HTS data |

| Time to Prioritized Hit List | Weeks to months (manual triage) | Similar HTS time, faster analysis | Cuts triage time by 50-80% | Industry case study, protease target |

Experimental Protocols for Key Integrative Workflows

Protocol 1: Structure-Based Pre-Filtering of HTS Libraries

- Objective: Enrich an HTS library with compounds likely to bind the target's active site.

- Methodology:

- Target Preparation: Obtain a high-resolution 3D structure of the target protein (e.g., from X-ray crystallography). Prepare the structure using software (e.g., Schrödinger's Protein Preparation Wizard) to add hydrogens, assign bond orders, and optimize side-chain orientations.

- Library Preparation: Convert a multi-million compound HTS library into 3D conformers. Apply standard drug-like filters (e.g., Lipinski's Rule of Five, removal of PAINS).

- Molecular Docking: Perform high-throughput docking (e.g., using Glide HTVS or AutoDock Vina) of the filtered library into the defined binding site.

- Scoring & Selection: Rank compounds based on docking score and visual inspection of key interactions. Select the top 50,000-100,000 compounds for physical HTS.

- Key Reagents: Target protein crystal structure, commercial HTS compound library in SDF format.

Protocol 2: Ligand-Based Triaging of HTS Output

- Objective: Prioritize HTS hits by identifying compounds with desirable pharmacophoric or similarity profiles.

- Methodology:

- Primary HTS: Execute the standard screening assay. Identify primary hits (e.g., >50% inhibition/activation at screening concentration).

- Data Curation: Compile structures and activity data of primary hits.

- Pharmacophore Modeling: (If known actives exist) Generate a pharmacophore model using known inhibitors. Screen all HTS hits against this model to prioritize those matching key features.

- Similarity Clustering & Scoring: Cluster hits based on chemical fingerprint similarity (e.g., Tanimoto coefficient using ECFP4). Apply machine learning models (e.g., Random Forest) trained on historical HTS data to score hits for lead-likeness and predicted promiscuity.

- Consensus Ranking: Generate a final prioritized list by combining scores from steps 3, 4, and the original potency data.
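The consensus ranking step can be sketched as a simple mean-rank aggregation. This is one possible scheme among several (weighted sums and Pareto ranking are common alternatives); the score names and values are hypothetical.

```python
def to_ranks(scores, higher_is_better=True):
    """Convert a {compound: score} mapping into {compound: rank}, with 1 = best."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {cid: position for position, cid in enumerate(ordered, start=1)}

def consensus_rank(*rankings):
    """Order compounds by mean rank across all supplied rankings."""
    compounds = list(rankings[0])
    mean_rank = {cid: sum(r[cid] for r in rankings) / len(rankings)
                 for cid in compounds}
    return sorted(compounds, key=lambda cid: (mean_rank[cid], cid))

# Hypothetical scores for three primary hits.
pharmacophore_fit = {"A": 0.9, "B": 0.4, "C": 0.7}   # step 3
ml_lead_likeness = {"A": 0.8, "B": 0.6, "C": 0.3}    # step 4
percent_inhibition = {"A": 60, "B": 95, "C": 70}     # primary HTS potency

prioritized = consensus_rank(
    to_ranks(pharmacophore_fit),
    to_ranks(ml_lead_likeness),
    to_ranks(percent_inhibition),
)
print(prioritized)
```

Rank averaging avoids having to put scores with different units on a common scale, at the cost of discarding the size of the differences between compounds.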

Visualizations

Title: Integrative CADD-HTS Workflow for Hit Discovery

Title: Multi-Filter CADD Triage Funnel for HTS Output

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for CADD-HTS Integration

| Item / Solution | Function in Integrative Workflow | Example Vendor/Software |

|---|---|---|

| Commercial HTS Compound Libraries | Source of diverse small molecules for physical screening. | ChemDiv, Enamine, Mcule |

| 3D Chemical Structure Databases | Prepared, ready-to-dock formats of screening libraries. | ZINC20, eMolecules |

| Molecular Docking Suite | For virtual screening and binding pose prediction. | Schrödinger Glide, CCDC GOLD, OpenEye FRED |

| Cheminformatics Toolkit | For structure manipulation, filtering, and fingerprint analysis. | RDKit, Open Babel, Schrödinger Canvas |

| Pharmacophore Modeling Software | To define and screen for essential interaction features. | PharmaGist, MOE, LigandScout |

| Machine Learning Platform | To build models for activity/property prediction from HTS data. | scikit-learn, KNIME, DeepChem |

| Assay-Ready Protein | High-quality, purified target protein for HTS experiments. | R&D Systems, BPS Bioscience, in-house expression |

| HTS-Compatible Assay Kit | Validated biochemical/cell-based assay for primary screening. | Promega, PerkinElmer, Cisbio |

Overcoming Pitfalls: Key Challenges and Optimization Strategies for CADD & HTS

Within the ongoing research comparing Computer-Aided Drug Design (CADD) and High-Throughput Screening (HTS) success rates, a critical examination of common HTS failures is paramount. While HTS remains a cornerstone of early discovery, its output is frequently contaminated by false positives and biased hits. This guide objectively compares the performance of mitigation strategies for three primary failure modes.

1. Artifact Compounds: Aggregators vs. Non-Aggregating Controls

Artifact compounds, particularly promiscuous aggregators, constitute a major class of HTS false positives. The table below compares a standard HTS campaign without specific counterscreens to one incorporating detergent-based and enzyme-based interference assays.

| Mitigation Strategy | % of Initial Hits Remaining | Confirmed True Binders (via SPR/ITC) | Key Experimental Observation |

|---|---|---|---|

| Single-Point HTS (No Counterscreen) | 100% (Baseline) | 5-15% | High hit rate (1-3%); activity is non-dose-responsive and collapses in biophysical assays. |

| + 0.01% Triton X-100 Assay | 20-40% | ~50% of remaining | Detergent abolishes activity of colloidal aggregates, selectively removing this class. |

| + Non-detergent sensitive assay (e.g., AlphaScreen) | 10-25% | 70-80% of remaining | Correlated activity across orthogonal assay technologies indicates specific binding. |

Experimental Protocol for Detergent Counterscreen:

- Primary HTS: Perform assay in standard buffer (e.g., PBS, pH 7.4).

- Re-test: Confirm dose-response for all primary hits.

- Counterscreen: Re-run dose-response in identical buffer containing 0.01% v/v Triton X-100.

- Analysis: Compounds showing >70% reduction in activity (shift in IC50/EC50) in detergent are classified as aggregators and deprioritized.
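The analysis step can be expressed as a small classification rule. The sketch below assumes that "reduction in activity" is measured as loss of potency, with potency taken as proportional to 1/IC50, so a >70% reduction corresponds to an IC50 shift of more than about 3.3-fold. That interpretation and the function name are assumptions, not part of the protocol.

```python
def is_aggregator(ic50_standard_um, ic50_detergent_um, max_reduction=0.70):
    """Flag compounds whose potency drops by more than `max_reduction` with detergent."""
    if ic50_standard_um <= 0 or ic50_detergent_um <= 0:
        raise ValueError("IC50 values must be positive")
    # Fraction of potency retained in detergent (potency taken as 1/IC50).
    retained = ic50_standard_um / ic50_detergent_um
    return (1.0 - retained) > max_reduction

# Hypothetical IC50 pairs (µM): without and with 0.01% Triton X-100.
hits = {"cmpd_1": (1.0, 10.0), "cmpd_2": (1.0, 1.5), "cmpd_3": (0.5, 4.0)}
for cid, (standard, detergent) in hits.items():
    label = "aggregator, deprioritize" if is_aggregator(standard, detergent) else "retain"
    print(cid, label)
```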

2. Assay Interference: Fluorescence vs. Luminescence-Based Detection

Assay interference, such as compound fluorescence or quenching, is highly dependent on detection technology. The following compares common HTS readouts.

| Assay Technology | Reported False Positive Rate | Major Interference Type | Orthogonal Validation Rate |

|---|---|---|---|

| Fluorescence Intensity (FI) | 10-20% | Inner filter effect, compound fluorescence/quenching | Low (~20-30%) |

| Fluorescence Polarization (FP) | 5-10% | Fluorescence interference, light scattering | Moderate (~40-50%) |

| Time-Resolved FRET (TR-FRET) | 2-5% | Compound absorbance at excitation wavelengths | High (~60-70%) |

| Luminescence (e.g., Luciferase) | 1-3% | Luciferase inhibition, redox cycling | Very High (~80-90%) |

Experimental Protocol for Inner Filter Effect Correction (Fluorescence Assays):

- Sample Measurement: Record fluorescence (F_sample) of compound in assay buffer with fluorophore.

- Control Measurement: Record fluorescence (F_control) of compound in buffer without fluorophore.

- Reference Measurement: Record fluorescence (F_ref) of buffer with fluorophore only.

- Calculation: Corrected Signal = (F_sample - F_control) / F_ref. A significant F_control indicates direct compound fluorescence.
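The calculation in the last step can be written out as a short sketch. The 10% flagging threshold for compound fluorescence is a hypothetical value chosen for illustration; an appropriate cutoff depends on the assay.

```python
def corrected_signal(f_sample, f_control, f_ref):
    """Corrected Signal = (F_sample - F_control) / F_ref."""
    if f_ref <= 0:
        raise ValueError("reference fluorescence must be positive")
    return (f_sample - f_control) / f_ref

def is_autofluorescent(f_control, f_ref, threshold=0.10):
    """Flag compounds whose own fluorescence exceeds a fraction of the reference signal."""
    return f_control / f_ref > threshold

# Hypothetical readings in relative fluorescence units.
f_sample, f_control, f_ref = 900.0, 100.0, 1000.0
print("corrected signal:", corrected_signal(f_sample, f_control, f_ref))
print("compound fluorescence flagged:", is_autofluorescent(f_control, f_ref))
```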

3. Library Bias: Diversity vs. Focused Library Performance

The composition of the screening library dictates the chemical space explored and inherently biases outcomes.

| Library Type | Hit Rate (%) | Lead-like/Beyond Rule of 5 | Confirmed Novel Scaffolds |

|---|---|---|---|

| Large Diverse (500k-1M+) | 0.1 - 1.0 | ~80% / ~5% | High |

| Focused/Target-Class | 1 - 5 | ~90% / <2% | Low to Moderate |

| DNA-Encoded (DEL) | N/A (selection) | ~50% / 10-20% | Very High |

| Fragments (by SPR/BSI) | 0.5 - 5 (by binding) | ~100% / N/A | High |

Experimental Protocol for Assessing Library Bias via Retrospective Analysis:

- Define a "Gold Standard" Set: Curate a set of known, validated binders for a target family (e.g., kinases).

- Fingerprint Calculation: Calculate molecular fingerprints (e.g., ECFP4) for both the gold standard set and the screening library.

- Similarity Analysis: Perform a similarity search (e.g., Tanimoto coefficient) for each gold standard compound against the library.

- Bias Metric: Calculate the mean nearest-neighbor similarity. A high value indicates the library is biased towards known chemotypes for that target class.
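The similarity analysis and bias metric can be sketched with fingerprints represented as sets of on-bit indices. The example assumes the fingerprints have already been computed elsewhere (for instance as ECFP4 bit sets); the tiny sets below are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def mean_nearest_neighbor_similarity(gold_standard, library):
    """Average, over gold-standard compounds, of the best similarity found in the library."""
    best = [max(tanimoto(g, member) for member in library) for g in gold_standard]
    return sum(best) / len(best)

# Hypothetical on-bit sets.
gold_standard = [{1, 2, 3, 4}, {5, 6, 7}]
screening_library = [{1, 2, 3}, {5, 6, 7}, {8, 9}]
print(mean_nearest_neighbor_similarity(gold_standard, screening_library))
```

For libraries of realistic size the brute-force comparison would normally be replaced by an indexed or vectorized similarity search.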

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Material | Function in Mitigating HTS Failures |

|---|---|

| Triton X-100 or CHAPS | Non-ionic detergent used to disrupt promiscuous aggregate formation. |

| AlphaScreen/AlphaLISA Beads | Bead-based proximity assay utilizing singlet oxygen, largely immune to optical interference. |

| Surface Plasmon Resonance (SPR) Chip | Label-free biosensor for confirming direct, stoichiometric binding kinetics. |

| Cellular Dielectric Spectroscopy (CDS) | Label-free, impedance-based cellular assay to confirm functional activity without reporter artifacts. |

| DMSO-matched Control Plates | Plates containing only DMSO in assay buffer, critical for identifying plate-based artifacts and normalizing signals. |

| Reductase Enzymes (e.g., DT-Diaphorase) | Used to identify compounds acting via redox cycling mechanisms in luciferase assays. |

Visualization: Experimental Workflow for Triaging HTS Hits

Title: HTS Hit Triage Workflow to Eliminate Artifacts

Visualization: Sources of HTS Assay Interference

Title: Primary Mechanisms of HTS Assay Interference

Within the broader research thesis comparing Computer-Aided Drug Design (CADD) and high-throughput screening (HTS) success rates, a critical examination of CADD's core technical limitations is essential. This guide objectively compares the performance of common computational methodologies, highlighting how their inherent deficiencies impact predictive accuracy and contribute to the attrition rates observed in virtual screening campaigns.

Force Field Inaccuracies: A Comparison of Biomolecular Simulations

Force fields are parametric equations used to calculate the potential energy of a molecular system. Inaccuracies arise from approximations in functional forms, parameter derivation, and inability to model quantum effects explicitly, leading to errors in conformational sampling and binding free energy estimates.

Table 1: Comparison of Classical Force Field Performance in Protein-Ligand Binding Free Energy (ΔG) Prediction

| Force Field | Year | Test System (e.g., Protein Target) | Average Absolute Error (kcal/mol) vs. Experiment | Key Limitation Highlighted | Primary Alternative/Competitor |

|---|---|---|---|---|---|

| AMBER ff14SB/GAFF2 | 2016 | SAMPL6 Challenge (Various) | ~2.1 - 3.5 | Poor torsional parameterization for novel chemotypes; fixed partial charges. | CHARMM36m: Often shows better accuracy in protein loop and membrane simulations. |

| CHARMM36m | 2017 | Bromodomain-inhibitor complexes | ~1.8 - 2.8 | Less accurate for certain ionic interactions compared to refined water models. | AMBER ff19SB: Improved backbone and sidechain torsions. |

| OPLS3/4 | 2017, 2018 | JAK2 kinase inhibitors | ~1.5 - 2.2 (OPLS4) | Proprietary parameterization; performance varies with ligand entropy estimation. | Open Force Fields (OpenFF): Iteratively improved via open-source, quantum-chemistry driven refitting. |

Experimental Protocol for Force Field Validation (Alchemical Free Energy Perturbation - FEP):

- System Setup: A protein-ligand complex is solvated in an explicit water box (e.g., TIP3P) with neutralizing ions. A "dual-topology" hybrid molecule representing both the initial (state A) and final (state B) ligand is created.

- Lambda Coupling: The transformation from A to B is divided into a series of non-physical intermediate states (λ windows, e.g., λ=0.0 to 1.0). The potential energy function is a linear mix: V(λ) = (1-λ)*V_A + λ*V_B.

- Molecular Dynamics (MD) Simulation: Each λ window is simulated separately using the tested force field for multiple nanoseconds to sample configurations.

- Free Energy Analysis: The Zwanzig equation or MBAR (Multistate Bennett Acceptance Ratio) method is applied to integrate energy differences across λ windows, yielding ΔΔG_bind.

- Validation: The computed ΔΔG_bind for a series of congeneric ligands is compared to experimentally measured binding affinities (e.g., IC50, Kd) to calculate the mean unsigned error (MUE).
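The free energy analysis and validation steps can be sketched with the Zwanzig (exponential averaging) estimator. This minimal example assumes energy differences between adjacent λ windows are already available in kcal/mol; it covers a single leg of the thermodynamic cycle, whereas ΔΔG_bind is the difference between the complex and solvent legs. Function names and sample values are hypothetical.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)

def zwanzig_delta_g(delta_u, temperature=298.15):
    """dG = -kT * ln< exp(-dU/kT) >, averaged over samples from the reference window."""
    kt = R_KCAL * temperature
    shift = min(delta_u)  # subtract the minimum for numerical stability
    average = sum(math.exp(-(du - shift) / kt) for du in delta_u) / len(delta_u)
    return shift - kt * math.log(average)

def total_delta_g(windows, temperature=298.15):
    """Sum the per-window free energy differences across the lambda schedule."""
    return sum(zwanzig_delta_g(w, temperature) for w in windows)

def mean_unsigned_error(predicted, experimental):
    """MUE between computed and experimental values (same units)."""
    return sum(abs(p - e) for p, e in zip(predicted, experimental)) / len(predicted)

# Hypothetical energy differences (kcal/mol) for three adjacent lambda windows.
windows = [[0.40, 0.55, 0.47], [0.30, 0.28, 0.35], [0.10, 0.15, 0.12]]
print(f"dG for this leg: {total_delta_g(windows):.3f} kcal/mol")
print("MUE:", mean_unsigned_error([1.0, 2.0], [1.5, 1.0]))
```

MBAR generally gives lower-variance estimates than one-directional exponential averaging and is usually run through a dedicated package rather than hand-written code.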

Title: FEP Protocol for Force Field Validation

Solvation Model Deficiencies in Binding Affinity Prediction

Solvation models approximate the solvent's effect. Implicit models (e.g., GB, PBSA) are fast but lack specific solvent interactions, while explicit models are accurate but computationally prohibitive for high-throughput use.

Table 2: Comparison of Solvation Models in Docking & Scoring

| Solvation Model | Type | Typical Use Case | Reported RMSD/Error Increase vs. Explicit Solvent | Key Deficiency |

|---|---|---|---|---|

| Generalized Born (GB) OBC | Implicit | MM-PBSA/GBSA post-processing | ΔG error: ± 2-4 kcal/mol | Poor handling of hydrophobic effects & specific H-bonds. |

| Poisson-Boltzmann (PB) | Implicit | Rigorous electrostatic scoring | Slightly better than GB for charged systems. | High computational cost; sensitive to atomic radii parameters. |

| Explicit TIP3P/SPC/E | Explicit | Alchemical FEP, MD | Gold standard for accuracy. | Not feasible for high-throughput screening. |

| Reference Interaction Site Model (RISM) | Statistical Mechanics | Specialized ligand solvation | Variable; can fail for complex interfaces. | High computational cost and parameter sensitivity. |

Experimental Protocol for Solvation Model Testing (MM-GBSA End-Point Calculation):

- Ensemble Generation: Multiple snapshots are extracted from an explicit-solvent MD trajectory of the protein-ligand complex, as well as separate trajectories for the protein and ligand.

- Energy Decomposition: For each snapshot, the solvation free energy is calculated using the tested implicit model (e.g., GB). The total free energy is:

ΔG_bind = G_complex - (G_protein + G_ligand), where G = E_MM + G_solv - T*S. E_MM is the gas-phase molecular mechanics energy, G_solv is the solvation free energy, and -T*S is the entropy term (often omitted or approximated).

- Averaging: ΔG_bind values are averaged across all snapshots.

- Correlation Analysis: The averaged ΔG_bind for a series of ligands is correlated with experimental binding data. The slope, R², and Kendall's Tau are reported to assess model utility for rank-ordering.
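The averaging and rank-correlation steps above can be sketched in a few lines. The function names and all numbers below are hypothetical and purely illustrative. The per-snapshot G values are assumed to have been computed already by an MM-GBSA tool, and Kendall's Tau is implemented in its simple tau-a form, in which tied pairs count as neither concordant nor discordant.

```python
from statistics import mean


def binding_free_energy(g_complex, g_protein, g_ligand):
    """<G_complex> - (<G_protein> + <G_ligand>), each averaged over its own snapshots."""
    return mean(g_complex) - (mean(g_protein) + mean(g_ligand))


def kendall_tau(x, y):
    """Kendall's tau-a: (concordant - discordant) / total number of pairs."""
    n = len(x)
    score = 0
    for i in range(n):
        for j in range(i + 1, n):
            product = (x[i] - x[j]) * (y[i] - y[j])
            if product > 0:
                score += 1
            elif product < 0:
                score -= 1
    return score / (n * (n - 1) / 2)


# Illustrative per-snapshot free energies (kcal/mol) for one ligand.
dg = binding_free_energy([-100.0, -102.0], [-60.0, -61.0], [-10.0, -11.0])

# Illustrative predicted vs. experimental values for four ligands.
predicted = [-30.0, -27.5, -25.0, -22.0]
experimental = [-10.1, -9.4, -8.2, -8.8]
tau = kendall_tau(predicted, experimental)
print(f"dG_bind = {dg:.1f} kcal/mol, Kendall tau = {tau:.2f}")
```

Because end-point methods often overestimate the magnitude of ΔG_bind, a rank-based statistic such as Kendall's Tau is usually a fairer measure of utility than absolute error.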

Scoring Function Deficiencies in Virtual Screening

Scoring functions are used to predict binding affinity from a single pose. They suffer from approximations in modeling entropy, solvation, and receptor flexibility, leading to high false-positive rates.

Table 3: Performance Comparison of Scoring Function Types in Docking

| Scoring Function Type | Example Software | Average Enrichment Factor (EF1%)* in Benchmarks | Primary Failure Mode |

|---|---|---|---|

| Force Field-Based | AutoDock4, GOLD (GoldScore) | 10-25 | Inaccurate entropic & solvation terms; fixed charges. |

| Empirical | Glide (SP, XP), MOE (London dG) | 15-30 | Parameter overfitting to training sets; poor transferability. |

| Knowledge-Based | ITScore, DrugScore | 10-20 | Dependence on quality and breadth of structural database. |

| Machine Learning | RF-Score, NNScore | 20-40 (Variable) | Black-box nature; performance plummets on novel targets/scaffolds. |

*EF1%: Ratio of true hits found in the top 1% of the screened database vs. a random selection.

Experimental Protocol for Scoring Function Validation (Docking Enrichment Study):

- Dataset Curation: A known active compound set (50-200 molecules) is mixed with a large set of decoy molecules (1000-10,000) presumed to be inactive but with similar physicochemical properties (e.g., from DUD-E or DEKOIS libraries).

- Docking & Scoring: The combined library is docked into a fixed protein binding site. Every pose is scored by the function(s) under evaluation.

- Ranking & Analysis: All molecules are ranked by their best score. The enrichment factor (EF) at a given percentage (e.g., EF1%, EF5%) of the screened database is calculated:

EF_x% = (Hits_found_x% / Total_hits) / (x / 100), where Hits_found_x% is the number of actives in the top x% of the ranked list and Total_hits is the number of actives in the whole library. Receiver Operating Characteristic (ROC) curves are plotted, and the Area Under the Curve (AUC) is computed.

- Pose Prediction Assessment: For known actives with crystallographic poses, the Root-Mean-Square Deviation (RMSD) of the top-scored pose from the experimental pose is calculated.
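The ranking metrics in this protocol are straightforward to compute once each molecule has a best score and an active/decoy label. The following is a minimal sketch, assuming that lower (more negative) scores are better and that labels are 1 for actives and 0 for decoys. The function names and the ten-molecule example are hypothetical.

```python
def enrichment_factor(scores, labels, fraction=0.01, lower_is_better=True):
    """EF = (actives in top fraction / all actives) / (molecules in top fraction / all molecules)."""
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=not lower_is_better)
    n_top = max(1, round(fraction * len(ranked)))
    hits_top = sum(label for _, label in ranked[:n_top])
    total_hits = sum(labels)
    return (hits_top / total_hits) / (n_top / len(ranked))


def roc_auc(scores, labels, lower_is_better=True):
    """AUC as the probability that a random active outranks a random decoy (ties count 0.5)."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    decoys = [s for s, l in zip(scores, labels) if l == 0]
    wins = 0.0
    for a in actives:
        for d in decoys:
            if a == d:
                wins += 0.5
            elif (a < d) == lower_is_better:
                wins += 1.0
    return wins / (len(actives) * len(decoys))


# Toy library: 2 actives among 10 molecules, docking scores in kcal/mol.
scores = [-10, -9, -8, -7, -6, -5, -4, -3, -2, -1]
labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
ef10 = enrichment_factor(scores, labels, fraction=0.10)
auc = roc_auc(scores, labels)
print(f"EF10% = {ef10:.1f}, ROC AUC = {auc:.4f}")
```

Note that the maximum achievable EF depends on the active-to-decoy ratio, so EF values are only comparable between benchmarks with similar composition.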

Title: Scoring Function Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions for CADD Validation

| Item (Software/Database) | Function in CADD Limitation Research |

|---|---|

| AMBER, CHARMM, GROMACS | MD simulation suites for force field and explicit solvation model testing. |

| Schrödinger Suite (FEP+), OpenMM | Platforms for running automated alchemical FEP calculations. |

| DUD-E, DEKOIS 2.0 | Benchmark databases of active compounds and matched decoys for scoring function validation. |

| PDBbind Database | Curated collection of protein-ligand complexes with binding affinity data for force field & scoring training/validation. |

| SAMPL Blind Prediction Challenges | Community-wide challenges providing unbiased datasets for testing CADD methods. |

| GAUSSIAN, ORCA | Quantum chemistry software for generating reference data (e.g., partial charges, torsion profiles) for force field refinement. |

Within the ongoing debate on CADD vs. high-throughput screening (HTS) success rates, the practical optimization of HTS campaigns remains critical. This guide compares methodologies and tools central to three HTS pillars (quality control, hit validation, and library diversity), using current experimental data to illustrate performance.

Quality Control Metrics: Plate Uniformity Assays

A robust QC system is foundational. The Z'-factor is the standard metric, comparing the dynamic range and variability of positive and negative controls.

Table 1: Comparison of Plate QC Assay Performance

| Assay Type | Typical Z'-factor (Mean ± SD) | Signal-to-Noise (S/N) | CV (%) of Controls | Common Artifact Susceptibility |

|---|---|---|---|---|

| Homogeneous FRET (e.g., Kinase) | 0.72 ± 0.08 | 12.5 ± 2.1 | 5.2 | Compound fluorescence, quenching |

| Luminescent (e.g., Viability) | 0.85 ± 0.05 | 25.3 ± 4.5 | 3.8 | Compound luminescence interference |

| Fluorescence Polarization (FP) | 0.65 ± 0.10 | 8.5 ± 1.8 | 7.5 | Inner filter effect, autofluorescence |

| AlphaScreen/ALPHA | 0.78 ± 0.07 | 18.7 ± 3.2 | 4.5 | Photo-bleaching, detergent sensitivity |

Experimental Protocol for Z'-factor Calculation:

- Plate Design: Include 32 positive control wells (e.g., 100% inhibition) and 32 negative control wells (e.g., 0% inhibition) dispersed across the assay plate.

- Assay Execution: Perform the HTS assay protocol under standard conditions.

- Data Acquisition: Read the plate using the appropriate detector (luminescence, fluorescence).

- Calculation: Calculate the mean (µ) and standard deviation (σ) for both positive (p) and negative (n) control sets, then compute Z' = 1 - [ (3σp + 3σn) / |µp - µn| ].

- Acceptance Criterion: Plates with Z' ≥ 0.5 are considered excellent for HTS.
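The calculation and acceptance steps above reduce to a few lines of code. This is a minimal sketch using the sample standard deviation. The control readings are invented for illustration, and a real plate would use the 32 + 32 control wells described in the protocol.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z' = 1 - (3*sd_p + 3*sd_n) / |mean_p - mean_n| for two sets of control wells."""
    spread = 3.0 * stdev(positive) + 3.0 * stdev(negative)
    window = abs(mean(positive) - mean(negative))
    return 1.0 - spread / window


def plate_passes(positive, negative, threshold=0.5):
    """Apply the Z' >= 0.5 acceptance criterion from the protocol."""
    return z_prime(positive, negative) >= threshold


# Illustrative raw signals: positive controls (100% inhibition) read low,
# negative controls (0% inhibition) read high.
pos_wells = [10.0, 12.0, 11.0, 9.0, 8.0]
neg_wells = [100.0, 102.0, 101.0, 99.0, 98.0]
zp = z_prime(pos_wells, neg_wells)
print(f"Z' = {zp:.3f}, pass = {plate_passes(pos_wells, neg_wells)}")
```

Because Z' depends on both the signal window and the control variability, a plate can fail either through a collapsed window (e.g., degraded reagent) or through noisy dispensing, and inspecting the two terms separately helps diagnose which.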

Title: HTS Plate Quality Control Workflow

Hit Validation: Counterscreening Strategies

Initial "hits" must be validated through counterscreens to eliminate non-specific actors like pan-assay interference compounds (PAINS).

Table 2: Efficacy of Counterscreens in Hit Triage

| Counterscreen Target | % of Primary Hits Eliminated (Range) | Assay Format | Key Artifact Detected | Validation Tier |

|---|---|---|---|---|

| Promiscuous Inhibitor (e.g., Aggregators) | 40-60% | Fluorescent detergent sensitivity (e.g., Dye-based) | Compound aggregation | Primary |

| Cytochrome P450 Inhibition | 5-15% | Fluorescent substrate conversion | Off-target metabolism effects | Secondary |

| Fluorescence Interference (at λex/λem) | 20-35% | Compound-only in assay buffer | Signal quenching/autofluorescence | Primary |

| Cytotoxicity (General) | 10-25% | Cell viability (e.g., ATP luminescence) | False positives in cell-based assays | Secondary |

Experimental Protocol for Aggregator Counterscreen (Detergent-Based):

- Sample Preparation: Dilute the primary hit compound in assay buffer with and without a non-ionic detergent (e.g., 0.01% Triton X-100).

- Assay Setup: Run the primary HTS assay protocol in parallel for both sample sets.

- Data Analysis: Plot dose-response curves for both conditions.

- Interpretation: A rightward shift (reduced potency) in the presence of detergent indicates the compound likely acts via aggregation. True inhibitors show no potency shift.
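The interpretation step can be made quantitative by comparing IC50 values with and without detergent. The sketch below estimates IC50 by log-linear interpolation rather than a full four-parameter fit, and it flags compounds using a 3-fold shift threshold. Both the interpolation shortcut and the threshold are assumptions chosen for illustration, not values from the protocol, and the dose-response data are invented.

```python
import math


def ic50_by_interpolation(concs, inhibition):
    """Concentration where % inhibition first crosses 50, interpolated in log10(conc).

    Returns None if the curve never crosses 50% between two tested concentrations.
    """
    points = list(zip(concs, inhibition))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points[:-1], points[1:]):
        if y_lo < 50.0 <= y_hi:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_c = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    return None


def flag_aggregator(concs, inhib_no_det, inhib_det, fold_threshold=3.0):
    """Return (fold shift in IC50, flagged) for a detergent counterscreen."""
    ic50_free = ic50_by_interpolation(concs, inhib_no_det)
    ic50_det = ic50_by_interpolation(concs, inhib_det)
    if ic50_free is None:
        return None, False  # not active without detergent: nothing to triage
    if ic50_det is None:
        return math.inf, True  # activity lost entirely once detergent is added
    fold = ic50_det / ic50_free
    return fold, fold >= fold_threshold


concs_um = [0.1, 1.0, 10.0, 100.0]  # ascending concentrations, micromolar
fold, flagged = flag_aggregator(concs_um, [10, 40, 60, 90], [0, 5, 20, 80])
print(f"IC50 shift = {fold:.1f}-fold, likely aggregator = {flagged}")
```

A compound whose two curves overlap gives a fold shift close to 1 and is not flagged, matching the behavior expected of a true inhibitor.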

Title: Hit Validation Counterscreening Cascade

Library Diversity: Analysis of Screening Collections

Library composition directly affects HTS success. Unlike the focused libraries typical of CADD, HTS collections aim for broad coverage of chemical space. Diversity is assessed through coverage of chemical descriptor space (e.g., pairwise Tanimoto similarity, molecular weight, clogP).
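As a concrete illustration of the Tanimoto measure, the sketch below computes the average pairwise similarity for a handful of fingerprints. It assumes that each fingerprint is represented as a Python set of "on" bit indices. In practice the bits would come from a cheminformatics toolkit (e.g., FP2 fingerprints), and the three toy fingerprints here are invented.

```python
from itertools import combinations


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by on-bits present in either fingerprint."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def mean_pairwise_tanimoto(fingerprints):
    """Average Tanimoto similarity over all unique compound pairs."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


# Toy fingerprints as sets of on-bit indices (illustrative only).
library = [{1, 2, 3}, {2, 3, 4}, {7, 8}]
avg_similarity = mean_pairwise_tanimoto(library)
print(f"Average pairwise Tanimoto similarity: {avg_similarity:.3f}")
```

Lower averages indicate a more diverse collection. The all-pairs loop scales quadratically, so large libraries are usually assessed on a random sample.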

Table 3: Chemical Space Coverage of Representative Library Types

| Library Type | Avg. Pairwise Tanimoto Similarity (FP2) | MW Range (Da) | clogP Range | Predicted PAINS Alerts (%) | Unique Scaffolds per 10k Cpds |

|---|---|---|---|---|---|

| Large Pharma HTS Collection | 0.23 ± 0.02 | 200-600 | -2 to 5 | 4.5 | ~850 |

| Fragment Library | 0.12 ± 0.03 | 120-300 | -3 to 3 | 0.8 | ~1200 |

| Combinatorial Library | 0.65 ± 0.10 | 250-550 | 1 to 8 | 7.2 | ~50 |

| Target-Focused (CADD-informed) | 0.45 ± 0.08 | 300-500 | 2 to 6 | 5.5 | ~150 |

| Natural Product Derivatives | 0.28 ± 0.05 | 250-700 | -1 to 7 | 3.1 | ~950 |

Protocol for Assessing Library Diversity via Principal Component Analysis (PCA):

- Descriptor Calculation: For all library compounds, compute a set of chemical descriptors (e.g., molecular weight, number of rotatable bonds, polar surface area, topological indices).

- Data Standardization: Normalize all descriptor values to have a mean of 0 and standard deviation of 1.

- PCA Execution: Perform PCA on the standardized descriptor matrix.

- Variance & Plot: Calculate the variance captured by the first 2-3 principal components (PCs). Plot compounds in 2D/3D space using these PCs.

- Analysis: A library with broad, even distribution across PC space is considered diverse. Clustered compounds indicate high similarity.
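The PCA protocol above can be sketched without external libraries. The example below standardizes a small descriptor table, builds the covariance matrix, and extracts the first two principal components by power iteration with deflation. The descriptor values are invented, and power iteration is a simple stand-in chosen for this illustration. A production analysis would normally rely on a numerical library's SVD or eigendecomposition.

```python
import math
import random
from statistics import mean, stdev


def standardize(rows):
    """Scale each descriptor column to mean 0 and standard deviation 1."""
    cols = list(zip(*rows))
    mus = [mean(c) for c in cols]
    sds = [stdev(c) for c in cols]
    return [[(v - m) / s for v, m, s in zip(row, mus, sds)] for row in rows]


def covariance(z):
    """Sample covariance matrix of already-centered data."""
    n, d = len(z), len(z[0])
    return [[sum(r[i] * r[j] for r in z) / (n - 1) for j in range(d)] for i in range(d)]


def top_components(cov, k=2, iters=500, seed=0):
    """Leading k (eigenvalue, eigenvector) pairs; assumes the matrix has rank >= k."""
    rng = random.Random(seed)
    d = len(cov)
    cov = [row[:] for row in cov]
    found = []
    for _ in range(k):
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        for _ in range(iters):
            w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
            norm = math.sqrt(sum(x * x for x in w))
            v = [x / norm for x in w]
        eigval = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d)) for i in range(d))
        found.append((eigval, v))
        # Deflate: remove the component just found before searching for the next.
        cov = [[cov[i][j] - eigval * v[i] * v[j] for j in range(d)] for i in range(d)]
    return found


# Illustrative descriptors per compound: MW (Da), rotatable bonds, polar surface area.
descriptors = [[250, 3, 60], [310, 5, 75], [420, 8, 110],
               [180, 1, 40], [365, 6, 95], [500, 4, 140]]
z = standardize(descriptors)
cov = covariance(z)
components = top_components(cov, k=2)
trace = sum(cov[i][i] for i in range(len(cov)))
explained = [eigval / trace for eigval, _ in components]
pc_scores = [[sum(a * b for a, b in zip(row, vec)) for _, vec in components] for row in z]
print("Explained variance ratios:", [round(r, 3) for r in explained])
```

The `pc_scores` list holds the 2D coordinates for plotting, and a large first ratio indicates that most descriptors are correlated and the library varies mainly along one axis.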

Title: Pillars of an Optimized Screening Library

The Scientist's Toolkit: Key Research Reagent Solutions

| Item & Common Example | Function in HTS Optimization |

|---|---|

| Fluorescent/Luminescent Substrate (e.g., ATPlite) | Provides the detectable signal in biochemical assays; choice impacts Z' and S/N. |

| Recombinant Target Protein (e.g., His-tagged Kinase) | Essential for biochemical HTS; purity and activity are critical for low variability. |

| Cell Line with Reporter (e.g., Luciferase under pathway control) | Enables cell-based phenotypic or pathway screening; stability is key for reproducibility. |

| Detection Beads (e.g., AlphaLISA Donor/Acceptor) | Enable no-wash, homogeneous assays like AlphaScreen, improving throughput and robustness. |

| Positive Control Inhibitor/Agonist (e.g., Staurosporine, Forskolin) | Serves as control for 100% inhibition/activation for Z' calculation and plate normalization. |

| Non-ionic Detergent (e.g., Triton X-100) | Used in aggregator counterscreens to disrupt false positives from colloidal aggregates. |

| qHTS Compound Library (e.g., 100k+ diversity set) | The core screening collection; its quality and diversity define the campaign's potential. |

| Automated Liquid Handler (e.g., Echo, Multidrop) | Enables precise, high-speed compound and reagent transfer, minimizing volumetric errors. |

Within the broader research thesis comparing Computer-Aided Drug Design (CADD) and High-Throughput Screening (HTS) success rates, a critical focus is the systematic optimization of CADD methodologies. This guide compares three advanced approaches: ensemble docking, Free Energy Perturbation (FEP), and the integration of bioactivity data, supported by experimental benchmarks.

Performance Comparison of CADD Optimization Methods

The following table summarizes key performance metrics from recent studies comparing these methodologies against standard docking.

Table 1: Comparative Performance of Advanced CADD Techniques

| Method | Primary Use | Key Performance Metric vs. Standard Docking | Typical Required Compute Time | Experimental Validation Source |

|---|---|---|---|---|

| Ensemble Docking | Account for protein flexibility | ~20-40% improvement in hit rate for flexible targets; R² ~0.6-0.7 for pose prediction vs. ~0.4-0.5 for single structure. | 5-50x (scales with # of structures) | Gorgon et al., J. Chem. Inf. Model., 2023 |

| Free Energy Perturbation (FEP) | Binding affinity prediction (lead optimization) | Root Mean Square Error (RMSE) of ~1.0 kcal/mol; R² > 0.8 for relative binding affinity. Superior to scoring functions (RMSE > 3.0 kcal/mol). | 100-1000x per perturbation | Courtial et al., J. Chem. Theory Comput., 2022 |

| Bioactivity Data Integration (e.g., QSAR, ML) | Virtual screening & activity prediction | Enrichment Factor (EF₁%) improved by 30-50% over structure-only methods. | 2-10x (for model training) | Chen et al., Brief. Bioinform., 2024 |

Detailed Experimental Protocols

Protocol 1: Ensemble Docking Workflow

- Target Preparation: Collect multiple protein conformations from: a) Molecular Dynamics (MD) simulation snapshots (e.g., 100 ns simulation, sampled every 10 ns), b) NMR models, or c) crystal structures of homologs/apo forms.

- Ligand Library Preparation: Generate 3D conformers for ligands, applying consistent protonation states and tautomers at pH 7.4 ± 0.5.

- Docking Execution: Dock each ligand into every protein conformation using a defined software (e.g., Glide SP, AutoDock Vina) with standardized grid parameters.

- Pose Consensus & Scoring: Apply a consensus scoring strategy. Rank ligands by the best docking score across the ensemble or by average score. Select top-ranked compounds for experimental testing.
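The consensus step of this workflow can be sketched as follows. The example assumes that docking has already produced one score per ligand per protein conformation and that more negative scores are better. The ligand names and scores are hypothetical.

```python
from statistics import mean


def consensus_rank(scores_by_ligand, strategy="best"):
    """Rank ligands by their best (minimum) or average score across the ensemble."""
    if strategy == "best":
        aggregated = {lig: min(s) for lig, s in scores_by_ligand.items()}
    elif strategy == "mean":
        aggregated = {lig: mean(s) for lig, s in scores_by_ligand.items()}
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    # More negative docking scores are assumed to indicate better predicted binding.
    return sorted(aggregated.items(), key=lambda item: item[1])


# One docking score (kcal/mol) per protein conformation, for three ligands.
ensemble_scores = {
    "lig_a": [-7.0, -9.5, -6.0],
    "lig_b": [-8.5, -8.4, -8.6],
    "lig_c": [-5.0, -5.5, -6.2],
}
by_best = consensus_rank(ensemble_scores, strategy="best")
by_mean = consensus_rank(ensemble_scores, strategy="mean")
print("Best-score ranking:", [lig for lig, _ in by_best])
print("Mean-score ranking:", [lig for lig, _ in by_mean])
```

The two strategies can disagree: best-score ranking rewards a ligand that fits one conformation very well, while mean-score ranking favors ligands that score consistently across the ensemble.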

Protocol 2: Free Energy Perturbation (FEP) Calculation

- System Setup: From a co-crystal structure, build a solvated system (TIP3P water, 10 Å buffer) with neutralizing ions. Use the OPLS4 or CHARMM36 force field.