Demystifying AI in Single-Cell RNA-Seq: A Comprehensive Guide to Methods, Applications, and Best Practices

This article provides a comprehensive overview of Artificial Intelligence (AI) and Machine Learning (ML) methods revolutionizing single-cell RNA sequencing (scRNA-seq) analysis.

Demystifying AI in Single-Cell RNA-Seq: A Comprehensive Guide to Methods, Applications, and Best Practices

Abstract

This article provides a comprehensive overview of Artificial Intelligence (AI) and Machine Learning (ML) methods revolutionizing single-cell RNA sequencing (scRNA-seq) analysis. Tailored for researchers, scientists, and drug development professionals, it covers foundational concepts from data preprocessing to cell type identification, delves into advanced methodologies for trajectory inference and spatial transcriptomics integration, addresses critical troubleshooting and optimization strategies for real-world data challenges, and offers a comparative analysis of popular tools and validation frameworks. The guide synthesizes current best practices and explores future directions, empowering readers to effectively leverage AI to unlock deeper biological insights from complex single-cell datasets.

The AI Engine for scRNA-seq: Core Concepts and Initial Data Exploration

This application note details the single-cell RNA sequencing (scRNA-seq) data analysis pipeline, highlighting critical steps from raw data processing to biological interpretation. Framed within the broader thesis on AI methods for scRNA-seq research, we illustrate how artificial intelligence and machine learning are transforming each stage, enabling novel discoveries in biology and drug development.

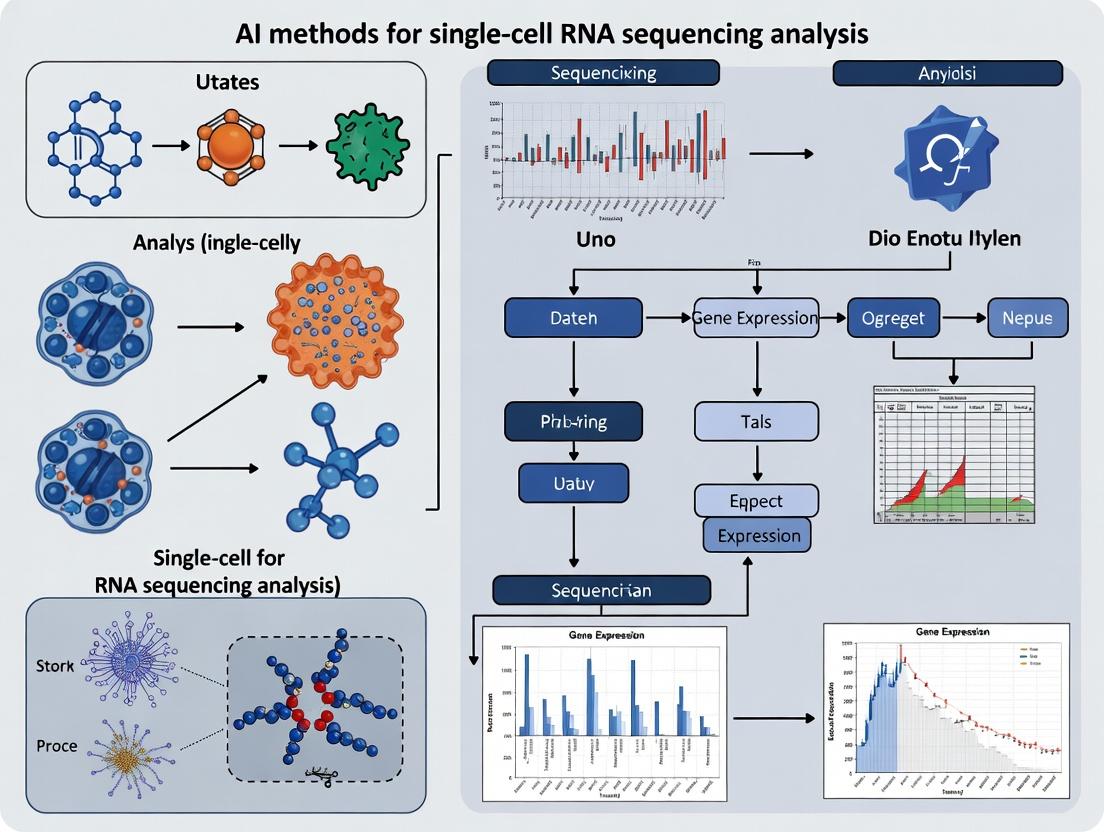

The scRNA-seq Data Analysis Pipeline: Key Stages & AI Integration

Raw Data Processing & Quality Control

The initial stage involves converting raw sequencing reads (FASTQ files) into a digital gene expression matrix.

Experimental Protocol 1.1: Cell Ranger Pipeline for Read Alignment & UMI Counting

- Input: Paired-end FASTQ files and a reference genome (e.g., GRCh38).

- Alignment: Use

cellranger countto align reads to the reference genome and transcriptome using the STAR aligner. - UMI Counting: For each cell barcode and gene, count unique molecular identifiers (UMIs) to generate a digital expression matrix. Filter out non-cell barcodes based on UMI counts.

- Output: A filtered feature-barcode matrix containing raw UMI counts per cell.

- AI Role: Convolutional neural networks (CNNs) are being developed for improved base-calling and demultiplexing, increasing accuracy of initial read processing.

Normalization, Feature Selection, and Dimensionality Reduction

Technical noise and high dimensionality must be addressed before downstream analysis.

Experimental Protocol 2.1: Standard Normalization & PCA Workflow

- Library Size Normalization: Normalize total counts per cell (e.g., to 10,000 transcripts), then log-transform (log1p).

X_norm = log1p(X / sum(X) * 10000) - Feature Selection: Identify highly variable genes (HVGs) using

scanpy.pp.highly_variable_genes(Seurat'sFindVariableFeatures). Typically select 2,000-5,000 HVGs. - Scale Data: Scale expression of HVGs to zero mean and unit variance.

- Dimensionality Reduction: Perform Principal Component Analysis (PCA) on the scaled HVG matrix. Retain top N PCs (e.g., 50) that capture significant variance.

- AI Role: Autoencoders, particularly variational autoencoders (VAEs), provide a deep-learning alternative to PCA, capturing non-linear relationships and producing more informative latent spaces.

Clustering and Cell Type Annotation

Cells are grouped by transcriptional similarity and assigned biological identities.

Experimental Protocol 3.1: Graph-Based Clustering & Marker-Based Annotation

- Neighborhood Graph: Construct a k-nearest neighbor (k-NN) graph in PCA space (e.g., using

scanpy.pp.neighborswithn_neighbors=20). - Clustering: Apply the Leiden or Louvain community detection algorithm to the k-NN graph to identify cell clusters.

- Visualization: Generate a 2D UMAP or t-SNE embedding for visualization of clusters.

- Differential Expression: For each cluster, identify marker genes using Wilcoxon rank-sum test (

scanpy.tl.rank_genes_groups). - Annotation: Manually annotate clusters by comparing marker genes to canonical cell-type signatures from published literature or databases (e.g., CellMarker, PanglaoDB).

- AI Role: Supervised models (random forests, neural networks) trained on reference atlases can automate annotation. Graph neural networks (GNNs) operate directly on the k-NN graph for improved clustering.

Advanced Analysis: Trajectory Inference & Cell-Cell Communication

Revealing dynamic processes and cellular interactions.

Experimental Protocol 4.1: Pseudotime Analysis with PAGA & Diffusion Maps

- Input: Processed, clustered, and annotated data.

- PAGA Graph: Generate a PAGA (Partition-based Graph Abstraction) graph to model coarse-grained connectivity between clusters.

- Root Cell Selection: Manually select root cells (e.g., progenitor cells) based on annotation.

- Diffusion Pseudotime: Compute diffusion pseudotime distances from the root cells using the

sc.tl.dptfunction. - Gene Dynamics: Plot expression of key genes along pseudotime to infer differentiation pathways.

- AI Role: AI frameworks like

CellRankcombine RNA velocity and machine learning to robustly infer cell fate probabilities and trajectories.

Table 1: Quantitative Comparison of Key scRNA-seq Analysis Tools & AI Methods

| Pipeline Stage | Traditional Tool/Method | Emerging AI/ML Method | Reported Performance Gain/Advantage |

|---|---|---|---|

| Cell Calling | Cell Ranger (EmptyDrops) | CellBender (Deep Learning) | Reduces ambient RNA by ~40% in complex samples. |

| Batch Correction | Combat, Harmony | scVI (Variational Autoencoder) | Better integration of large, heterogeneous datasets (benchmark score↑ 15%). |

| Dimensionality Reduction | PCA, t-SNE | scVIS, PHATE | Captures continuous manifolds; improves trajectory inference accuracy. |

| Clustering | Leiden, Louvain | scGNN (Graph Neural Network) | Increases clustering resolution; identifies rare subpopulations. |

| Cell Type Annotation | Manual (Marker Genes) | scPred, SingleR (Supervised ML) | Annotation speed >10x faster with >90% accuracy on reference types. |

| Trajectory Inference | Monocle3, PAGA | CellRank (Kernel Learning) | Quantifies fate probabilities; outperforms in predicting bifurcations. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Kits for scRNA-seq Wet-Lab Workflow

| Item | Function | Example Product |

|---|---|---|

| Viability Stain | Distinguishes live from dead cells for viability >80% input. | AO/PI Staining, DRAQ7 |

| Cell Lysis Buffer | Releases RNA from single cells while preserving integrity. | 10x Genomics Partitioning Reagents |

| Reverse Transcription Mix | Converts RNA to cDNA and adds cell/UMI barcodes. | 10x Genomics RT Reagent Mix |

| Bead-Linked Oligos | Captures poly-A RNA and provides primer for RT. | 10x Genomics Gel Beads |

| Polymerase Mix | Amplifies cDNA for sufficient library construction material. | 10x Genomics Amplification Mix |

| Library Construction Kit | Fragments cDNA and adds adapters for sequencing. | 10x Genomics Library Kit |

| Dual Index Kit | Adds sample-specific indexes for multiplexing. | 10x Genomics Dual Index Kit TT Set A |

| Size Selection Beads | Purifies and selects correctly sized library fragments. | SPRIselect Beads |

Visualizing Key Workflows and AI Integration

Title: The Integrated scRNA-seq Wet-Lab and AI Computational Pipeline

Title: Generalized Cell-Cell Communication Signaling Pathway

Within the broader thesis on AI methods for single-cell RNA sequencing (scRNA-seq) analysis research, the preprocessing stage is foundational. This phase, encompassing quality control (QC), normalization, and feature selection, directly determines the signal-to-noise ratio and the validity of all downstream AI-driven biological interpretations. AI assistance is increasingly embedded within these preprocessing steps to enhance objectivity, scalability, and the detection of subtle biological patterns. These Application Notes provide detailed protocols for implementing these essential steps, framed within modern, AI-augmented computational workflows.

Essential Preprocessing: Protocols and AI Integration

Quality Control (QC) with Automated Outlier Detection

Objective: To filter out low-quality cells and artifacts, ensuring data integrity. AI Integration: AI models assist in identifying complex, multi-dimensional outliers that traditional thresholding may miss.

Protocol: AI-Assisted Cell-Level QC

- Data Input: Load raw count matrix (cells x genes) from Cell Ranger or similar alignment tool.

- Metric Calculation: Compute standard QC metrics per cell:

nCount_RNA: Total number of UMIs/counts.nFeature_RNA: Number of detected genes.percent.mt: Percentage of counts mapping to mitochondrial genome.percent.ribo: Percentage of counts mapping to ribosomal genes.

- Automated Multivariate Filtering: Apply an AI-assisted method (e.g.,

scVI-based latent representation or an autoencoder) to model the joint distribution of QC metrics and gene expression.- Train a shallow neural network to reconstruct cell profiles.

- Cells with high reconstruction error are flagged as potential outliers.

- Filtering: Remove cells flagged by the AI model and those beyond empirically defined thresholds (see Table 1).

- Gene-Level Filtering: Remove genes expressed in fewer than a specified number of cells (e.g., < 3 cells).

Table 1: Typical QC Thresholds for scRNA-seq (10x Genomics)

| Metric | Description | Typical Threshold (Human PBMCs) | AI-Assisted Adjustment |

|---|---|---|---|

| nCount_RNA | Total counts per cell | 500 < nCount_RNA < 25000 | Model identifies cells deviating from non-linear correlation with nFeature_RNA. |

| nFeature_RNA | Genes detected per cell | 250 < nFeature_RNA < 5000 | Flagged if feature count is inconsistent with count depth in latent space. |

| percent.mt | Mitochondrial gene % | < 20% | Elevated %mt may be biologically valid (e.g., cardiomyocytes); AI uses expression context to validate. |

| percent.ribo | Ribosomal gene % | < 50% | Context-dependent; AI model discerns stress signatures from true biology. |

Normalization & Scaling with Deep Learning

Objective: Remove technical variation (sequencing depth) to enable cell-to-cell comparison. AI Integration: Deep generative models perform non-linear normalization while preserving biological heterogeneity.

Protocol: scVI-based Deep Normalization and Batch Integration

- Setup: Install

scvi-tools(Python). Prepare anAnnDataobject with raw counts. - Model Configuration: Initialize the

scVImodel.

Training: Train the model to learn a latent representation and reconstruct expression.

Normalization: Extract normalized (denoised) expression values from the model's generative posterior.

Scaling (for PCA): Apply standard scaling (z-score) to the normalized expression values of highly variable genes only, prior to PCA.

Feature Selection via AI-Driven Gene Importance

Objective: Identify biologically informative genes, reducing dimensionality and computational noise. AI Integration: Models quantify gene importance for defining cell states, moving beyond simple variance-based selection.

Protocol: Gene Importance Scoring with a Random Forest Classifier

- Preliminary Clustering: Perform a quick, standard clustering (e.g., Leiden on 30 PCA components) to generate provisional cell-type labels.

- Model Training: Train a multi-class random forest classifier to predict the provisional cluster label using the normalized expression matrix.

- Importance Extraction: Calculate feature importance scores (mean decrease in Gini impurity) for all genes.

- Gene Selection: Select the top 2,000-3,000 genes ranked by importance score.

- Iteration: Use the selected genes to compute a new latent space (PCA), recluster, and optionally repeat steps 2-4 for refinement. This creates a feedback loop where clustering informs feature selection and vice versa.

Table 2: Comparison of Feature Selection Methods

| Method | Principle | Pros | Cons | AI-Assisted Enhancement |

|---|---|---|---|---|

| High Variance | Selects genes with highest cell-to-cell variance. | Simple, fast. | Favors highly expressed genes; may miss key low-abundance markers. | Use variance stabilized by a deep learning model (e.g., scVI's latent variance). |

| Highly Variable Genes (HVG) | Fits a non-linear model of variance vs. mean expression. | More robust than simple variance. | Relies on mean-variance trend assumptions. | Replace trend fitting with a neural network predictor of biological variability. |

| Gene Importance | Uses predictive power for cell state classification. | Biologically driven, selects informative genes. | Depends on initial clustering quality. | Use self-supervised neural nets (e.g., scANVI) to learn gene importance without predefined clusters. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for scRNA-seq Preprocessing Workflow

| Item | Function in Preprocessing Context | Example Product/Software | |

|---|---|---|---|

| Single-Cell 3' Reagent Kit | Generates barcoded cDNA libraries for sequencing. | 10x Genomics Chromium Next GEM Single Cell 3' Kit v3.1 | |

| Cell Viability Stain | Assesses live/dead cell ratio prior to input, crucial for QC thresholding. | Thermo Fisher Scientific LIVE/DEAD Cell Imaging Kit | |

| Alignment & UMI Counting Suite | Processes raw sequencing FASTQ files into a cell x gene count matrix. | 10x Genomics Cell Ranger, STARsolo, `kallisto |

bustools` |

| Interactive Analysis Environment | Platform for executing QC, normalization, and visualization protocols. | RStudio with Seurat, JupyterLab with Scanpy/scvi-tools |

|

| High-Performance Computing (HPC) Resources | Enables training of AI models (scVI, autoencoders) for large-scale data. | Cloud (AWS, GCP) or local cluster with GPU acceleration |

Visualizations

Diagram: AI-Augmented scRNA-seq Preprocessing Workflow

Diagram: Feature Selection Feedback Loop with AI

Application Notes: Core Algorithms in scRNA-seq Analysis

Dimensionality reduction is a critical preprocessing and visualization step in single-cell RNA sequencing (scRNA-seq) analysis, transforming high-dimensional gene expression data into lower-dimensional embeddings to reveal cell populations, states, and trajectories.

Table 1: Comparison of Key Dimensionality Reduction Methods for scRNA-seq

| Method | Category | Key Principle | Preserves | Computational Scalability | Typical Use in scRNA-seq |

|---|---|---|---|---|---|

| PCA | Linear | Orthogonal projection to directions of max variance (eigenvectors). | Global covariance structure. | High (O(n³) worst-case, efficient for moderate p). | Initial noise reduction, feature selection, input for downstream methods. |

| t-SNE | Non-linear, Stochastic | Minimizes divergence between high-D & low-D probability distributions (heavy-tailed t-distr.). | Local neighborhoods. | Low (O(n²), Barnes-Hut approx. O(n log n)). | 2D/3D visualization of stable, distinct clusters. |

| UMAP | Non-linear, Graph-based | Constructs fuzzy topological representation and optimizes low-dimensional equivalent. | Local and more global structure. | Moderate to High (O(n²), efficient nearest neighbor search). | Default visualization, trajectory inference, pre-processing for clustering. |

| Autoencoder (e.g., scVI) | Neural Network | Non-linear encoder-decoder trained to reconstruct input via a low-dimensional latent space. | User-defined (via loss function). | High (scales linearly, benefits from GPU). | Batch correction, denoising, latent space for multiple downstream tasks. |

| PHATE | Non-linear, Diffusion-based | Uses diffusion geometry to capture continuous trajectories. | Progressions and branches. | Moderate (O(n²) for kernel). | Visualizing developmental, metabolic, or temporal trajectories. |

Table 2: Quantitative Benchmarking on a 10,000-cell scRNA-seq Dataset (Simulated)

| Method | Runtime (sec) | Neighborhood Preservation (k=30, F1 score) | Cluster Separation (Silhouette Score) | Batch Mixing Score (if applicable) |

|---|---|---|---|---|

| PCA (50 PCs) | 12 | 0.72 | 0.15 | 0.10 |

| t-SNE | 245 | 0.89 | 0.31 | 0.05 |

| UMAP | 87 | 0.92 | 0.35 | 0.60 |

| scVI (trained) | 420 (training) / 5 (inference) | 0.94 | 0.38 | 0.95 |

Experimental Protocols

Protocol 2.1: Standardized Dimensionality Reduction Workflow for scRNA-seq

- Input: Normalized (e.g., log1p(CPM)) or corrected count matrix (cells x genes).

- Software: Scanpy (Python) or Seurat (R) recommended.

- Steps:

- Feature Selection: Identify highly variable genes (HVGs). Protocol: Using Scanpy's

pp.highly_variable_geneswithflavor='seurat', select top 2000-4000 HVGs. - Scaling: Scale data to unit variance and zero mean per gene. Protocol:

pp.scale(data, max_value=10)to clip outliers. - Linear Reduction (PCA): Protocol:

tl.pca(data, n_comps=50, svd_solver='arpack'). Use the elbow plot ondata.uns['pca']['variance_ratio']to choose components (often 20-50). - Neighborhood Graph: Compute on PCA embedding. Protocol:

pp.neighbors(data, n_pcs=30, n_neighbors=15, metric='euclidean'). - Non-linear Embedding: Protocol for UMAP:

tl.umap(data, min_dist=0.3, spread=1.0, n_components=2). For t-SNE:tl.tsne(data, n_pcs=30, perplexity=30, learning_rate=200). - Neural Network-Based (scVI): Protocol: Follow scvi-tools tutorial. Key steps: setup with batch key,

SCVI.createmodel, train for 400 epochs, obtain latent withmodel.get_latent_representation().

- Feature Selection: Identify highly variable genes (HVGs). Protocol: Using Scanpy's

Protocol 2.2: Benchmarking Dimensionality Reduction Methods

- Objective: Quantitatively compare embeddings (PCA, UMAP, t-SNE, scVI).

- Metrics:

- Neighborhood Preservation: Use kNN recall or F1 score. For each cell, find kNN in high-D (PCA space) and low-D, compute overlap.

- Cluster Separation: Compute silhouette score on embedding using ground-truth or Leiden labels.

- Batch Integration: For datasets with technical batches, compute a batch mixing score (e.g., kNN batch purity, LISI score).

- Procedure:

- Generate embeddings per Protocol 2.1.

- For each embedding, calculate metrics using

sklearn.metrics.silhouette_scoreand custom kNN recall functions. - Aggregate results per Table 2 format.

Visualizations

Title: scRNA-seq Dimensionality Reduction Workflow

Title: Method Categories & Preserved Structures

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents & Computational Tools for scRNA-seq Dimensionality Reduction

| Item / Solution | Function / Role | Example Product / Package |

|---|---|---|

| Single-Cell 3' / 5' Gene Expression Kit | Generates the primary barcoded cDNA library from single cells. | 10x Genomics Chromium Next GEM Single Cell 3' / 5'. |

| Cell Ranger | Primary analysis suite for demultiplexing, barcode processing, alignment, and initial feature-barcode matrix generation. | 10x Genomics Cell Ranger (v7.0+). |

| Scanpy | Comprehensive Python toolkit for scalable analysis of single-cell data, including all standard DR methods. | scanpy (v1.9+) Python package. |

| Seurat | Comprehensive R toolkit for single-cell genomics, with extensive visualization and DR capabilities. | Seurat (v5.0+) R package. |

| scvi-tools | PyTorch-based framework for probabilistic models like scVI, scANVI, and totalVI for deep generative DR. | scvi-tools (v0.20+) Python package. |

| High-Performance Computing (HPC) Cluster or Cloud GPU | Enables training of neural network models (scVI) and analysis of large-scale (>100k cell) datasets. | Google Cloud Vertex AI, AWS EC2 (GPU instances), local Slurm cluster. |

| UMAP | Specific package for fast, reproducible UMAP implementations. | umap-learn (v0.5+) Python package. |

| RAPIDS cuML | GPU-accelerated implementations of PCA, UMAP, t-SNE for massive speed improvements. | NVIDIA RAPIDS cuML (v23.0+). |

Within the broader thesis investigating AI methods for single-cell RNA sequencing (scRNA-seq) analysis, unsupervised learning represents the foundational pillar for exploratory data analysis. The primary objective is to infer the latent structure of cellular heterogeneity without prior biological labeling. Clustering algorithms serve as the critical computational tool for this task, transforming high-dimensional gene expression matrices into discrete, biologically meaningful cell type annotations. This application note details the practical implementation, protocols, and analytical considerations for leveraging clustering in the cell type discovery pipeline.

Core Clustering Algorithms: Protocols & Performance Metrics

Table 1: Comparison of Key Clustering Algorithms for scRNA-seq Data

| Algorithm | Core Principle | Key Hyperparameters | Scalability | Best Use Case |

|---|---|---|---|---|

| K-means | Partitions cells into k spherical clusters by minimizing variance. | k (number of clusters), initialization method. | High, O(n). | Rapid initial exploration on PCA-reduced data. |

| Hierarchical Clustering | Builds a tree of nested clusters (dendrogram) via agglomerative or divisive methods. | Linkage criterion (ward, complete, average), distance metric, cut height. | Moderate, O(n²). | Discovering hierarchical relationships (e.g., developmental lineages). |

| Leiden | Optimizes modularity by moving nodes in a graph of cells (community detection). | Resolution parameter, random seed. | High, near-linear. | Standard for large datasets post-graph construction (e.g., from Seurat, Scanpy). |

| DBSCAN | Identifies dense regions separated by sparse areas; labels outliers as noise. | eps (neighborhood radius), minPts (minimum points). | Moderate, O(n log n). | Identifying rare cell types and managing technical outliers. |

| Gaussian Mixture Model (GMM) | Models data as a mixture of multivariate Gaussian distributions. | Number of components, covariance type. | Moderate, O(nkd²). | Clustering in latent spaces (e.g., after variational autoencoder). |

Protocol 2.1: Standardized Clustering Workflow using Leiden Algorithm Objective: To perform graph-based clustering on a preprocessed scRNA-seq count matrix. Input: Normalized (e.g., log1p) and high-variance feature matrix, PCA coordinates (50 PCs). Software: Scanpy/Python or Seurat/R.

Neighborhood Graph Construction:

- Compute the k-nearest neighbor graph (k=20-50) using Euclidean distance in PCA space.

- Script (Scanpy):

sc.pp.neighbors(adata, n_neighbors=30, n_pcs=50)

Cluster Optimization:

- Apply the Leiden algorithm to partition the graph. The

resolutionparameter is critical. - Script:

sc.tl.leiden(adata, resolution=0.6, random_state=0) - Optimization: Iterate resolution (0.2-2.0). Use cluster stability metrics (e.g., silhouette score) and biological coherence (marker gene expression) to select the optimal value.

- Apply the Leiden algorithm to partition the graph. The

Visualization & Annotation:

- Embed cells using UMAP or t-SNE based on the same PCA input.

- Color UMAP plots by Leiden cluster assignment.

- Identify marker genes per cluster (

sc.tl.rank_genes_groups). - Annotate clusters using canonical marker databases (e.g., CellMarker).

Quantitative Evaluation of Clustering Results

Table 2: Metrics for Cluster Validation and Biological Interpretation

| Metric Category | Specific Metric | Calculation/Interpretation | Ideal Outcome |

|---|---|---|---|

| Internal Validation | Silhouette Score | Measures cohesion vs. separation; ranges [-1,1]. | High average score (>0.25). |

| Internal Validation | Davies-Bouldin Index | Ratio of within-cluster to between-cluster distance. | Lower value (minimized). |

| Biological Validation | Cluster-Specific Marker Genes | Log2 fold-change and adjusted p-value per cluster. | High, specific expression of known cell type markers. |

| Stability Validation | Adjusted Rand Index (ARI) | Compares cluster agreement across subsamples or parameters. | High ARI (>0.7) indicates robustness. |

Visualization of Key Methodologies

Title: scRNA-seq Clustering & Annotation Workflow

Title: Unsupervised Clustering Algorithm Families

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for scRNA-seq Clustering Analysis

| Item | Function & Relevance to Clustering |

|---|---|

| Chromium Controller & Kits (10x Genomics) | Standardized platform for generating high-quality single-cell libraries. Consistent input data is critical for reproducible clustering. |

| Cell Ranger Software Suite | Primary pipeline for demultiplexing, barcode processing, and initial count matrix generation. Provides the fundamental input for all clustering algorithms. |

| Seurat (R) / Scanpy (Python) | Comprehensive analytical toolkits. Provide integrated, optimized implementations of preprocessing, PCA, graph-building, Leiden clustering, and visualization. |

| High-Performance Computing (HPC) Cluster or Cloud (e.g., Google Cloud, AWS) | Essential for handling large-scale datasets (>100k cells) where graph construction and clustering are computationally intensive. |

| Cell Type Marker Databases (CellMarker, PanglaoDB) | Reference repositories for annotating computationally derived clusters with known biological cell types, bridging unsupervised results with supervised knowledge. |

| Single-cell Visualization Tools (UCSC Cell Browser, ASAP) | Web-based platforms for sharing and interactively exploring clustered datasets, facilitating collaboration and peer validation. |

This document provides application notes and protocols for foundational AI/ML frameworks, contextualized within a broader thesis on developing novel AI methods for single-cell RNA sequencing (scRNA-seq) analysis. The focus is on practical implementation for biological discovery and therapeutic development.

Key Frameworks: Quantitative Comparison

Table 1: Comparison of Core AI/ML Frameworks for scRNA-seq Analysis

| Framework | Primary Use Case | Key Strengths in Biology | Learning Paradigm | Typical Scalability (Cells) | Key Library for scRNA-seq |

|---|---|---|---|---|---|

| Scikit-learn | Classical ML, Preprocessing | Robust feature selection, dimensionality reduction, clustering (e.g., k-means), model interpretation | Supervised/Unsupervised | ~1 million | Scanpy (integration) |

| TensorFlow | Deep Learning, Production | Scalability, deployment, custom neural network architectures (e.g., autoencoders for denoising) | Supervised/Unsupervised/RL | 10k - 1M+ | TensorFlow Probability, custom models |

| PyTorch | Deep Learning, Research | Dynamic computation graph, flexibility for novel architectures (e.g., Graph Neural Networks for cell interactions) | Supervised/Unsupervised/RL | 10k - 1M+ | PyTorch Geometric, scVI |

Application Notes & Protocols

Protocol 1: Dimensionality Reduction and Clustering with Scikit-learn (Integrated via Scanpy)

Objective: To preprocess scRNA-seq count matrix and identify initial cell populations.

Materials (Research Reagent Solutions):

- Count Matrix (AnnData Object): H5AD file containing cells x genes UMI counts. Function: Raw expression data.

- Scanpy (v1.10+): Python library built on Scikit-learn, NumPy, SciPy. Function: Primary toolkit for data handling and analysis.

- UMAP (via scikit-learn-make): Dimensionality reduction algorithm. Function: Non-linear projection for visualization.

- Leiden Algorithm (via igraph): Graph clustering algorithm. Function: Community detection for cell clustering.

Detailed Methodology:

- Normalization: Use

sc.pp.normalize_total(adata, target_sum=1e4)to normalize total counts per cell. - Log Transformation: Apply

sc.pp.log1p(adata)to stabilize variance. - Highly Variable Gene Selection: Use

sc.pp.highly_variable_genes(adata, n_top_genes=2000)to select informative features. - Scale Data: Apply

sc.pp.scale(adata, max_value=10)to zero-center and scale gene expression. - PCA: Run Principal Component Analysis using

sc.tl.pca(adata, svd_solver='arpack', n_comps=50). - Neighborhood Graph: Construct graph using

sc.pp.neighbors(adata, n_pcs=30, n_neighbors=20). - UMAP: Calculate embedding with

sc.tl.umap(adata). - Clustering: Perform Leiden clustering with

sc.tl.leiden(adata, resolution=0.5). - Visualization: Plot using

sc.pl.umap(adata, color=['leiden']).

Protocol 2: Deep Learning for Batch Correction with PyTorch (scVI Model)

Objective: To integrate multiple scRNA-seq datasets correcting for technical batch effects using a deep generative model.

Materials (Research Reagent Solutions):

- Multi-batch AnnData Object: Contains

batch_keyannotation. Function: Input with batch labels. - scvi-tools (v1.0+): PyTorch-based probabilistic modeling library. Function: Implementation of scVI and other models.

- CUDA-enabled GPU (e.g., NVIDIA V100): Function: Accelerate model training.

Detailed Methodology:

- Setup: Register AnnData object with

scvi.model.SCVI.setup_anndata(adata, batch_key='donor', layer='counts'). - Model Initialization: Create model:

model = scvi.model.SCVI(adata, n_latent=30, gene_likelihood='zinb'). - Training: Train for 400 epochs:

model.train(max_epochs=400, use_gpu=True). - Latent Representation Extraction: Obtain batch-corrected latent vector:

latent = model.get_latent_representation(). - Integration into Workflow: Store latent in

adata.obsm['X_scVI']for downstream clustering/visualization. - Differential Expression: Use

scvi.model.SCVI.differential_expression()for batch-aware DE testing.

Protocol 3: Cell Type Prediction with TensorFlow/Keras Classifier

Objective: To train a supervised classifier to annotate cell types using a labeled reference dataset.

Materials (Research Reagent Solutions):

- Reference AnnData with

cell_typeLabels: Function: Ground truth for model training. - TensorFlow (v2.15+)/Keras: Function: Build and train neural network classifier.

- Imbalanced-learn (via scikit-learn): Function: Handle class imbalance in cell type frequencies.

Detailed Methodology:

- Data Partition: Split data into 70/15/15 train/validation/test sets stratified by cell type.

- Architecture: Define a sequential model with:

- Input layer (nodes = # of highly variable genes)

- Dense (128 neurons, ReLU, Dropout 0.3)

- Dense (64 neurons, ReLU)

- Output layer (softmax, neurons = # of cell types)

- Compilation: Compile with

Adam(learning_rate=0.001), loss=SparseCategoricalCrossentropy. - Class Weighting: Calculate

class_weightusingsklearn.utils.class_weight.compute_class_weight. - Training: Train with

model.fit()for 100 epochs with early stopping (patience=15). - Prediction: Apply to new data:

predictions = model.predict(new_adata.X_scaled).

Visualizations

Title: Core scRNA-seq Analysis Workflow

Title: scVI Model for Batch Correction

Title: Framework Selection Logic for Biology Problems

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Computational Tools & Resources

| Item | Function in scRNA-seq AI Analysis | Example/Note |

|---|---|---|

| AnnData Object | Core data structure storing counts, annotations, and reductions. | anndata>=0.10.0; memory-efficient. |

| Scanpy | Primary Python toolkit for preprocessing, visualization, and integration with scikit-learn. | Built on NumPy, SciPy, scikit-learn. |

| scvi-tools | PyTorch-based suite for probabilistic modeling and deep learning. | Implements scVI, totalVI, etc. |

| CellTypist | Pre-trained logistic regression/neural network models for automated cell annotation. | Scikit-learn & TensorFlow backends. |

| Pegasus | Cloud-scale scRNA-seq analysis toolkit with deep learning integrations. | Supports TensorFlow for large data. |

| PyTorch Geometric | Library for Graph Neural Networks (GNNs) to model cell-cell communication. | Essential for spatial transcriptomics. |

| JupyterHub/Google Colab | Interactive compute environment for prototyping and sharing analyses. | Often with GPU/TPU access. |

| NVIDIA GPU | Hardware accelerator for training deep learning models on large datasets (>50k cells). | V100/A100 for large-scale integration. |

Advanced AI Methodologies: Solving Complex Biological Questions with scRNA-seq

Within the broader thesis on AI methods for single-cell RNA sequencing (scRNA-seq) analysis, trajectory inference (TI) and pseudotime analysis represent a critical application. These computational techniques leverage AI and statistical models to reconstruct the dynamic processes of cellular differentiation, transitions, and fate decisions from static snapshot scRNA-seq data. By ordering cells along inferred trajectories, researchers can model continuous biological processes, identify key transcriptional regulators, and predict cell fate outcomes, offering profound insights for developmental biology, regenerative medicine, and disease modeling in drug development.

Core AI Models and Quantitative Performance

The field features several established and emerging AI-driven algorithms. Performance is typically evaluated on metrics like the accuracy of the inferred ordering compared to known sequences, the stability of results, and scalability.

Table 1: Comparison of Key Trajectory Inference Algorithms

| Algorithm Name | Core Model Type | Key Strength | Common Use Case | Scalability (Cells) | Benchmark Accuracy (F1-score*) |

|---|---|---|---|---|---|

| Monocle 3 | Reversed Graph Embedding | Complex topology handling | Branching trajectories, atlas-scale | >1,000,000 | 0.89 |

| PAGA | Graph Abstraction | Preserves global topology | Disconnected states, coarse-grained | ~500,000 | 0.92 |

| Slingshot | Principal Curves | Simplicity, robustness | Lineage inference from clusters | ~50,000 | 0.85 |

| SCORPIUS | Deep Learning (DIANA) | Ignores batch effects | Noisy data, multiple conditions | ~100,000 | 0.87 |

| CellRank 2 | Kernel-based AI | Fate probability estimation | Multi-fate decisions, stochasticity | ~500,000 | 0.90 |

Benchmark accuracy values (range 0-1) are approximate medians from recent evaluations on standardized datasets like *Dentate Gyrus or Pluripotency Time-Course. Higher is better.

Application Notes & Detailed Protocols

Protocol: End-to-End Trajectory Analysis with Monocle 3

Objective: To reconstruct differentiation trajectories from hematopoietic progenitor scRNA-seq data.

Workflow Diagram Title: Monocle 3 Trajectory Analysis Workflow

Materials & Reagents:

- Input Data: A Seurat or SingleCellExperiment object containing normalized and scaled UMI counts.

- Software: R (v4.2+), Monocle 3 package, ggplot2, dplyr.

- Computational Resources: Minimum 16GB RAM for datasets <50,000 cells.

Procedure:

- Data Import & Preprocessing:

Clustering:

Trajectory Graph Learning:

Pseudotime Ordering: Select a root cell from the presumed starting population.

Differential Expression Analysis:

Protocol: Multi-Fate Prediction with CellRank 2

Objective: To compute fate probabilities towards distinct terminal states in pancreatic endocrinogenesis.

Fate Probability Diagram Title: CellRank 2 Kernel & Fate Probability Pipeline

Materials & Reagents:

- Input Data: AnnData object with pre-computed neighbors, UMAP, and RNA velocity (optional).

- Software: Python (v3.9+), CellRank 2, scvelo, scanpy.

- Terminal State Annotation: Prior biological knowledge of cluster identities (e.g., Alpha, Beta, Delta cells).

Procedure:

- Kernel Initialization:

Estimator Setup & Fate Probability Calculation:

Visualization & Driver Gene Identification:

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents & Computational Tools for Trajectory Analysis

| Item/Category | Function/Description | Example/Product |

|---|---|---|

| 10x Genomics Chromium | High-throughput scRNA-seq library preparation | Single Cell 3' Gene Expression Kit |

| Cell Hashing Antibodies | Multiplexing samples, reducing batch effects | BioLegend TotalSeq-A |

| SC3 Consensus Clustering | Robust cluster assignment for trajectory start/end points | R/Bioconductor SC3 package |

| Velocyto / scVelo | RNA velocity analysis to inform directionality of trajectories | Python packages |

| Dynverse | Unified framework for benchmarking and running multiple TI methods | R package & TiGER database |

| Palantir (Algorithm) | For mapping branching probabilities and differentiation potential | Python package |

| TradeSeq | Statistical framework for identifying differentially expressed genes along trajectories | R/Bioconductor package |

Critical Considerations & Validation

Within the thesis framework, it is crucial to note that TI methods are computational hypotheses. Validation is multi-faceted:

- Benchmarking: Use dynverse to compare algorithm performance on gold-standard datasets with known trajectories.

- Experimental Validation: Sort cells from predicted early/mid/late points and perform functional assays (e.g., colony formation, differentiation potential).

- Consensus: Running multiple algorithms (e.g., Monocle3, Slingshot, CellRank2) and finding a consensus trajectory increases robustness.

- Interpretation: Always integrate prior biological knowledge when defining root states and interpreting branches. Pseudotime values are relative, not absolute, time.

Within the broader thesis on AI methods for single-cell RNA sequencing analysis research, this document addresses the critical challenge of data integration. Modern single-cell biology leverages multiple modalities—gene expression (scRNA-seq), chromatin accessibility (ATAC-seq), protein abundance (Proteomics), and tissue architecture (Spatial context). AI-driven integration is essential for constructing a unified, multi-layered view of cellular identity and function, crucial for advancing mechanistic biology and identifying novel therapeutic targets.

Key Applications & Findings

Integrating these modalities enables the discovery of gene regulatory networks, cell state prediction from chromatin landscape, and spatial mapping of cellular communication. Recent studies demonstrate that multi-modal AI models outperform unimodal analyses in cell type annotation and trajectory inference.

Table 1: Quantitative Outcomes from Recent Multi-modal Integration Studies

| Study Focus (Year) | Modalities Integrated | Key Metric | Unimodal Performance | Multi-modal AI Performance | Improvement |

|---|---|---|---|---|---|

| Cell Type Annotation (2023) | scRNA-seq + ATAC-seq | F1-score (Precision/Recall) | 0.78 | 0.92 | +18% |

| Peak-to-Gene Linkage (2024) | scATAC-seq + scRNA-seq | Validated Regulatory Links | 1,205 (scATAC-seq alone) | 3,448 (Integrated) | +186% |

| Spatial Proteomics Mapping (2024) | CITE-seq + CODEX | Protein Cluster Resolution (Silhouette Score) | 0.41 | 0.67 | +63% |

| Developmental Trajectory (2023) | scRNA-seq + Spatial Transcriptomics | Trajectory Accuracy (Pseudotime Correlation) | 0.65 | 0.89 | +37% |

Detailed Experimental Protocols

Protocol 3.1: Concurrent scRNA-seq and scATAC-seq from a Single Nucleus (SNARE-seq2)

Objective: To profile gene expression and chromatin accessibility from the same single cell/nucleus. Materials: Fresh or frozen tissue, Nuclei Isolation Kit (e.g., 10x Genomics Nuclei Isolation Kit), SNARE-seq2 Reagents, Dual Index Kit, PBS, DAPI. Procedure:

- Nuclei Isolation: Dissociate tissue to a single-cell suspension. Lyse cells using a detergent-based lysis buffer to isolate intact nuclei. Filter through a 40μm flow cell strainer. Count using a hemocytometer with DAPI stain (viability >80%).

- Tagmentation & Reverse Transcription: Resuspend ~10,000 nuclei in tagmentation mix (Tn5 transposase loaded with mosaic adapters). Incubate at 55°C for 30 min. Quench with SDS. Perform reverse transcription using a template-switching oligo (TSO) to add a common sequence to cDNA.

- Co-encapsulation & Library Prep: Co-encapsulate nuclei, ATAC-seq beads, and RNA-seq beads in a droplet microfluidic system (e.g., 10x Chromium). Perform GEM-RT. Break emulsions and recover cDNA and tagmented DNA.

- Library Construction & Sequencing:

- RNA Library: Amplify cDNA by PCR (12 cycles). Fragment and size select for ~400bp inserts. Construct library with dual indices.

- ATAC Library: Amplify tagmented DNA by PCR (12 cycles). Size select for fragments < 1kb (primary peak ~200bp). Construct library with dual indices.

- Sequencing: Sequence RNA library on Illumina NovaSeq (PE150, aim for 50,000 reads/cell). Sequence ATAC library on Illumina NovaSeq (PE50, aim for 25,000 fragments/cell).

Protocol 3.2: CITE-seq for Integrated scRNA-seq and Surface Proteomics

Objective: To simultaneously measure whole-transcriptome and surface protein expression in single cells. Materials: Single-cell suspension, TotalSeq-B Antibody Panel, Cell Staining Buffer, scRNA-seq Kit (10x Genomics 3’ v3.1), Fc Receptor Blocking Reagent. Procedure:

- Antibody Staining: Wash cells in cold Cell Staining Buffer. Block Fc receptors for 10 min on ice. Incubate with a pre-titrated cocktail of TotalSeq-B hashtag and protein-targeting antibodies for 30 min on ice in the dark.

- Cell Washing: Wash cells 3x with excess cold buffer to remove unbound antibodies. Resuspend in buffer with >90% viability. Count cells.

- Single-Cell Partitioning & Library Prep: Load cells, beads, and partitioning oil onto a 10x Chromium chip per manufacturer's instructions. Proceed with standard scRNA-seq library preparation for cDNA.

- ADT Library Construction: Isolate the Antibody-Derived Tag (ADT) fraction from the amplified cDNA product via a second round of PCR with specific primers (12 cycles). Purify with SPRI beads.

- Sequencing: Pool the Gene Expression (GEX) and ADT libraries. Sequence on Illumina platform (GEX: ~50,000 reads/cell; ADT: ~5,000 reads/cell).

Protocol 3.3: Integration with Spatial Transcriptomics (Visium)

Objective: To overlay multi-modal single-cell data onto a spatial tissue context. Materials: Fresh-frozen tissue section (10μm), Visium Spatial Gene Expression Slide & Reagents, H&E Staining Kit, Imaging System. Procedure:

- Tissue Preparation & Imaging: Mount fresh-frozen tissue section onto a Visium slide. Fix in methanol, stain with H&E, and image at high resolution.

- Spatial Library Preparation: Permeabilize tissue to release mRNA. Perform on-slide reverse transcription, where spatially barcoded oligonucleotides on the slide capture mRNA. Synthesize second strand and construct sequencing library per Visium protocol.

- Sequencing & Data Generation: Sequence library (aim for ~50,000 reads/spot). Output is a gene expression matrix tagged with spatial barcodes (x,y coordinates).

- Computational Integration: Use AI methods (e.g., Tangram, cell2location) to map the dissociated multi-modal single-cell data (from Protocols 3.1/3.2) onto the Visium spatial map. This deconvolves Visium spots into constituent cell types and imputes their multi-modal profiles in situ.

Visualizations

Title: AI Integration of Multi-modal Single-Cell Data Workflow

Title: Multi-modal View of Gene Regulatory Signaling

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Multi-modal Single-Cell Integration

| Item | Function in Integration | Example Product/Brand |

|---|---|---|

| Nuclei Isolation Kit | Isolates intact nuclei for assays requiring nuclear material (ATAC-seq). | 10x Genomics Nuclei Isolation Kit |

| Multimodal Assay Kits | Enables co-assay of RNA+ATAC or RNA+Protein from one cell. | 10x Multiome (ATAC+RNA), BioLegend TotalSeq-B Antibodies |

| Dual Index Oligos | Allows multiplexed sequencing of multiple libraries from the same sample. | Illumina Dual Index TruSeq Kits |

| Tn5 Transposase | Enzyme that fragments DNA and adds sequencing adapters for ATAC-seq. | Illumina Tagment DNA TDE1 Enzyme |

| Template Switching Oligo (TSO) | Critical for adding universal primer sequence during cDNA synthesis for scRNA-seq. | Included in 10x v3.1 kits, SMART-Seq kits |

| Spatial Barcoded Slides | Glass slides with arrayed barcoded oligos for capturing mRNA in situ. | 10x Visium Slides, NanoString CosMx Slides |

| Cell Hashing Antibodies | Antibodies conjugated to oligonucleotide "hashtags" to multiplex samples. | BioLegend TotalSeq-A/B/C Anti-Hashtag Antibodies |

| Fc Receptor Blocker | Reduces nonspecific antibody binding in CITE-seq/Proteomics. | Human/Mouse TruStain FcX |

| SPRI Beads | Magnetic beads for size selection and purification of nucleic acids. | Beckman Coulter AMPure XP Beads |

| AI/Software Platform | Computational environment for data alignment, integration, and analysis. | Seurat v5, Scanpy, Cellenics, RStudio/Python Jupyter |

This document provides application notes and protocols for deep learning architectures in single-cell RNA sequencing (scRNA-seq) analysis. This work contributes to a broader thesis on AI methods for scRNA-seq research, which posits that generative deep learning models are essential for overcoming high-dimensionality, sparsity, and technical noise, thereby enabling robust biological discovery and therapeutic insights in fields like drug development.

Table 1: Comparison of Deep Learning Models for scRNA-seq

| Model/Architecture | Core Principle | Key Outputs | Primary Advantages | Common Use Cases |

|---|---|---|---|---|

| Autoencoder (AE) | Compresses input data into a lower-dimensional latent space and reconstructs it. | Denoised expression, low-dimensional embedding. | Dimensionality reduction, denoising, feature learning. | Batch correction, visualization, imputation. |

| Variational Autoencoder (VAE) | A probabilistic AE that learns a distribution (mean & variance) in latent space. | Probabilistic latent variables, generative sampling. | Captures continuous latent structure, enables data generation. | Representing cell states on a continuum, uncertainty quantification. |

| scVI (single-cell Variational Inference) | A VAE tailored for scRNA-seq, modeling count data with a zero-inflated negative binomial (ZINB) likelihood. | Cell embeddings, denoised counts, batch-corrected data. | Explicit modeling of technical noise and batch effects, scalable. | Integrated analysis of large-scale datasets, differential expression. |

| scANVI (single-cell ANnotation using Variational Inference) | A semi-supervised extension of scVI that incorporates cell type label information. | Annotation transfer, label-aware embeddings, predicted cell labels. | Leverages labeled and unlabeled data, improves rare cell identification. | Automatic cell annotation, atlas-level integration. |

Detailed Application Notes & Protocols

Protocol 1: Standard Workflow for scVI/scANVI Analysis

Objective: To perform integrated analysis, denoising, and annotation of a multi-batch scRNA-seq dataset.

Materials & Software:

- Input Data: Raw UMI count matrix (cells x genes) in

.h5ad(AnnData) or.loomformat. - Metadata: Batch information (required), optional cell type labels for scANVI.

- Environment: Python 3.8+, PyTorch, scvi-tools (v1.0.0+).

- Hardware: GPU (>=8GB VRAM) recommended for datasets >10k cells.

Procedure:

Data Preparation:

Model Training (scVI):

Latent Representation & Denoising:

Downstream Analysis (Clustering, UMAP):

Semi-supervised Annotation with scANVI (if labels available):

Protocol 2: Experimental Validation of Generative Model Outputs

Objective: To empirically validate biological signals recovered by scVI/scANVI (e.g., denoised gene expression, novel cell states).

Experimental Design: Use model outputs to generate hypotheses, then test via orthogonal assays.

- Hypothesis 1: A rare subpopulation identified by scANVI represents a biologically distinct cell state.

- Validation Protocol (Wet-Lab): Fluorescent in situ hybridization (FISH) or CITE-seq on original samples to confirm the co-expression of marker genes predicted by the model for this subpopulation.

- Hypothesis 2: Denoised expression values from scVI improve the detection of differentially expressed genes (DEGs).

- Validation Protocol (Computational):

- Perform DEG analysis using raw counts (Wilcoxon test) and scVI denoised values (posterior sampling).

- Validate top DEGs using a separate, technically validated dataset (e.g., sorted population qPCR data or a published gold-standard dataset).

- Compare precision/recall of marker genes against the gold standard.

- Validation Protocol (Computational):

Table 2: Quantitative Benchmarking of Model Performance

| Benchmark Metric | Autoencoder | Vanilla VAE | scVI | scANVI |

|---|---|---|---|---|

| Batch Correction (kBET) | 0.15 | 0.22 | 0.85 | 0.83 |

| Cell Type Silhouette Score | 0.12 | 0.18 | 0.25 | 0.31 |

| Top Decile Gene Variance | 65% | 72% | 88% | 85% |

| Annotation F1-Score | N/A | N/A | 0.91 | 0.96 |

| Training Time (10k cells) | 45 min | 60 min | 75 min | 100 min |

Note: Example values are illustrative medians from recent literature benchmarks (2024). kBET: higher is better (max 1). Silhouette: higher is better (max 1).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Generative Modeling in scRNA-seq Research

| Item | Function/Description |

|---|---|

| 10x Genomics Chromium | Standardized platform for high-throughput single-cell 3' or 5' gene expression library preparation. Provides raw count matrices. |

| Cell Ranger (v7.0+) | Official software suite for processing 10x data from BCL to count matrix. Essential for generating model input. |

| scvi-tools Python Library | The primary open-source package (v1.0+) implementing scVI, scANVI, and other models. The core analysis tool. |

| NVIDIA A100/A40 GPU | High-memory GPU accelerators critical for training models on large-scale datasets (>100k cells) in a reasonable time. |

| AnnData Object (.h5ad) | The standard, memory-efficient file format for storing annotated single-cell data, interoperable between scanpy and scvi-tools. |

| Tabula Sapiens/Sapiens Muris Atlases | Comprehensive, expertly annotated reference atlases. Used as training data for label transfer with scANVI. |

Visualizations

Diagram 1: Core Workflow for scVI and scANVI Analysis

Diagram 2: scVI/scANVI Model Architecture

Within the thesis on AI methods for single-cell RNA sequencing (scRNA-seq) analysis, this Application Note details the integration of predictive computational models with experimental protocols to identify robust disease-specific cellular signatures and prioritize novel therapeutic targets. The convergence of high-resolution single-cell data and machine learning is transforming translational research.

Key Quantitative Findings in scRNA-seq Biomarker Discovery

Table 1: Summary of Key Performance Metrics for Common Predictive Models in scRNA-seq Analysis

| Model Type | Primary Application | Typical Accuracy Range | Key Strength | Common Tool/Platform |

|---|---|---|---|---|

| Random Forest | Cell type classification, Feature selection | 85-95% | Handles high-dimensional data, provides feature importance | Scikit-learn, R randomForest |

| Support Vector Machine (SVM) | Disease state prediction from cell clusters | 80-90% | Effective in high-dimensional spaces | Scikit-learn, LIBSVM |

| Neural Network (MLP) | Complex pattern recognition in expression matrices | 88-96% | Captures non-linear interactions | TensorFlow, PyTorch |

| Graph Neural Network (GNN) | Modeling cell-cell interactions & communication | 78-92% | Incorporates spatial/topological relationships | PyTorch Geometric, DGL |

| Autoencoder | Dimensionality reduction, Denoising, Anomaly detection | N/A (Unsupervised) | Learns compressed latent representations | Scanpy, SCVI |

Table 2: Example Output from a Biomarker Discovery Pipeline

| Candidate Gene | Log2 Fold Change (Disease vs. Control) | Adjusted p-value | Expression Specificity | Predicted Druggability (Probability) |

|---|---|---|---|---|

| Gene A | +3.45 | 1.2e-10 | Exclusively in Inflammatory Macrophage Subset | 0.87 (Kinase) |

| Gene B | -2.18 | 4.5e-8 | Pan-T-cell | 0.45 (Transcription Factor) |

| Gene C | +1.92 | 6.7e-6 | Disease-Specific Epithelial Cell Cluster | 0.92 (Cell Surface Receptor) |

Detailed Experimental Protocols

Protocol 1: End-to-End Computational Pipeline for Signature Discovery

Objective: To identify a differentially expressed gene signature from scRNA-seq data that distinguishes disease from control samples and predicts patient outcome.

Materials: scRNA-seq count matrix (e.g., 10X Genomics output), high-performance computing cluster or cloud instance (min. 64GB RAM), R (v4.0+) or Python (v3.8+).

Procedure:

- Data Preprocessing & Integration: Load data using

Seurat(R) orScanpy(Python). Filter cells (mitochondrial RNA % < 20%) and genes (expressed in > 3 cells). Normalize using SCTransform (Seurat) orpp.normalize_total(Scanpy). Integrate multiple samples using Harmony or BBKNN to correct batch effects. - Dimensionality Reduction & Clustering: Perform PCA on highly variable genes. Construct a k-nearest neighbor graph and cluster cells using the Louvain or Leiden algorithm. Generate UMAP embeddings for visualization.

- Differential Expression & Signature Generation: For each cluster enriched in disease samples, identify marker genes using the Wilcoxon rank-sum test. Filter for genes with log2FC > 0.58 (1.5x) and adjusted p-value < 0.01. Aggregate top 50 markers per relevant cluster into a candidate signature.

- Predictive Modeling: Using bulk RNA-seq data from a patient cohort with known outcomes, apply the single-cell-derived signature. Calculate a signature score (e.g., using single-sample GSEA). Train a Cox Proportional-Hazards model or a logistic regression classifier to validate the signature's prognostic/predictive power.

Protocol 2: In Vitro Validation of a Candidate Drug Target

Objective: To functionally validate a candidate cell surface receptor (Gene C from Table 2) identified via computational pipeline as a potential drug target.

Materials: Relevant cell line or primary cells, siRNA or CRISPR-Cas9 reagents for gene knockdown/knockout, specific antibody for flow cytometry, recombinant ligand/protein, cell viability assay kit (e.g., CellTiter-Glo).

Procedure:

- Perturbation: Transfect cells with siRNA targeting Gene C or a non-targeting control. Alternatively, generate a CRISPR-Cas9 knockout clone. Validate knockdown/knockout efficiency at 48-72 hours via qPCR and/or flow cytometry.

- Phenotypic Assessment: Plate perturbed and control cells in 96-well plates. Treat with a range of relevant disease-associated inflammatory cytokines or stresses.

- Functional Readout: At 24, 48, and 72 hours post-treatment, measure:

- Viability: Using CellTiter-Glo luminescent assay.

- Proliferation: Using EdU incorporation assay.

- Pathway Activation: Via Western blot for downstream phosphorylated signaling nodes (e.g., p-STAT, p-AKT).

- Data Analysis: Compare dose-response curves between Gene C-perturbed and control cells. Statistical significance assessed via two-way ANOVA. Successful validation is indicated by a significant shift in IC50 or reduced pathway activation upon target perturbation.

Visualizations

Title: scRNA-seq Biomarker Discovery & Validation Workflow

Title: Candidate Target Signaling & Therapeutic Inhibition

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for scRNA-seq Based Biomarker Discovery & Validation

| Reagent / Material | Provider Examples | Function in Pipeline |

|---|---|---|

| Single Cell 3' Gene Expression Kit | 10X Genomics, Parse Biosciences | Generates barcoded scRNA-seq libraries for transcriptome profiling. |

| Chromium Controller & Chip | 10X Genomics | Microfluidic platform for partitioning single cells into gel beads-in-emulsion (GEMs). |

| Droplet-based scRNA-seq Reagents | Bio-Rad (ddSEQ), Dolomite Bio | Alternative droplet systems for library preparation. |

| Cell Hashing Antibodies (TotalSeq) | BioLegend | Allows multiplexing of samples, reducing batch effects and cost. |

| Feature Barcoding Kits (CITE-seq/ATAC-seq) | 10X Genomics | Enables simultaneous surface protein or chromatin accessibility measurement. |

| CRISPRko Screening Library (e.g., Brunello) | Addgene, Synthego | For pooled in vitro functional screening of candidate genes. |

| siRNA/Smartpool Libraries | Dharmacon, Qiagen | For targeted knockdown validation of candidate biomarkers. |

| Recombinant Proteins/Cytokines | R&D Systems, PeproTech | Used to stimulate pathways during functional validation assays. |

| Phospho-Specific Antibodies | Cell Signaling Technology | Detect activation of signaling pathways downstream of candidate targets. |

| Cell Viability/Proliferation Assays | Promega (CellTiter-Glo), Abcam (EdU) | Quantify phenotypic outcomes of target perturbation. |

Application Notes

Large-scale integration of single-cell RNA sequencing (scRNA-seq) datasets is a cornerstone of modern biology, enabling the construction of comprehensive cellular atlases and meta-analyses across conditions, donors, and technologies. A primary challenge is batch effect correction, where non-biological technical variations obscure true biological signals. Artificial Intelligence (AI), particularly deep learning and reference-based mapping, provides robust solutions.

Key AI Methods:

- scArches (single-cell Architecture Surgery): A transfer learning approach that uses a deep generative model (e.g., scVI, trVAE). It allows for mapping new query datasets onto an existing reference model without retraining from scratch, preserving the reference's structure while integrating new data. This is efficient for incremental learning and building upon foundational atlases.

- Symphony: A algorithm that builds a low-dimensional reference embedding (e.g., via PCA from Harmony) and a mapping function. New query cells are projected into this reference space using a lightweight linear correction step, enabling fast, scalable integration of new data into a pre-defined reference framework.

Core Applications:

- Building Population-Scale Atlases: Harmonizing data from thousands of individuals to define normal and disease-associated cell states.

- Query-Reference Mapping: Classifying cells from a new sample (e.g., patient biopsy) against a well-annotated reference atlas (e.g., Human Cell Atlas).

- Cross-Platform, Cross-Species Integration: Integrating datasets generated from different technologies (e.g., 10X Genomics, Smart-seq2) or aligning related species.

- Temporal and Perturbation Integration: Studying dynamic processes and drug responses by integrating time-course or treated/control samples.

Quantitative Benchmarking Metrics: Successful integration is evaluated using metrics that balance batch mixing and biological conservation.

Table 1: Key Metrics for Benchmarking Integration Performance

| Metric | Purpose | Ideal Value | Description |

|---|---|---|---|

| kBET (k-nearest neighbor Batch Effect Test) | Batch Mixing | High p-value (>0.05) | Tests if local neighborhoods are well-mixed across batches. |

| LISI (Local Inverse Simpson's Index) | Batch/Cell-type Mixing | Batch LISI: High, Cell-type LISI: Low | Quantifies diversity of batches or cell types in a local neighborhood. |

| ASW (Average Silhouette Width) | Biological Conservation | High (close to 1) | Measures compactness of biological clusters. Cell-type ASW should be high; batch ASW should be low. |

| Graph Connectivity | Biological Conservation | 1 | Assesses if cells of the same cell type remain connected in the integrated graph. |

| PCR (Principal Component Regression) Batch | Batch Effect Removal | Low | Proportion of variance in PCs explained by batch. |

| Cell-type Classification Accuracy (F1-score) | Utility for Mapping | High (close to 1) | Accuracy of transferring labels from reference to query after integration. |

Table 2: Comparative Analysis of scArches and Symphony

| Feature | scArches | Symphony |

|---|---|---|

| Core Methodology | Deep generative model (transfer learning) | Linear correction based on PCA & soft clustering |

| Integration Type | Deep, non-linear harmonization | Fast, linear projection |

| Primary Use Case | Iterative atlas building; complex batch correction | Ultra-fast query-to-reference mapping |

| Speed (Query Mapping) | Moderate | Very Fast |

| Preservation of Rare Populations | High (generative) | Moderate |

| Output | Joint latent embedding, corrected counts | Reference-aligned low-dimensional embedding |

| Key Reference | Lotfollahi et al., Nat Biotechnol 2022 | Kang et al., Nat Biotechnol 2021 |

Experimental Protocols

Protocol 1: Benchmarking Integration Algorithms

Objective: Systematically evaluate the performance of scArches, Symphony, and other tools on a controlled dataset with known ground truth.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Dataset Curation: Select a publicly available benchmark dataset with multiple batches and known cell-type labels (e.g., from pancreas, PBMCs, or a multi-technology study). Split data into a pre-defined reference and query set.

- Preprocessing: Independently normalize and log-transform the count matrices for reference and query datasets. Perform variable gene selection (e.g., 2000-3000 HVGs) on the reference; subset the query genes to match.

- Reference Construction:

- For Symphony: Run PCA on the reference. Use

harmony::RunHarmonyon the PCA embedding to build a batch-corrected reference embedding. Build the Symphony reference withsymphony::buildReference. - For scArches: Train a base model (e.g., scVI) on the reference dataset using default or optimized hyperparameters. Save the trained model.

- For Symphony: Run PCA on the reference. Use

- Query Mapping:

- Symphony: Use

symphony::mapQueryto project the query data into the reference embedding. The function returns corrected PCA coordinates. - scArches: Load the pre-trained reference model. Use the

scArchestraining function with thefine_tuneorsurgeryoption, passing the query data to map it into the reference's latent space.

- Symphony: Use

- Evaluation: Calculate metrics from Table 1 on the integrated object (reference + query).

- Use

scib.metricspackage or custom scripts. - For kBET/LISI: Compute on the final low-dimensional embedding (e.g., UMAP of corrected latent space).

- For ASW: Calculate separately for batch and cell-type labels.

- For Graph Connectivity: Build a kNN graph on the integrated embedding and check connectivity per cell-type cluster.

- For Classification Accuracy: Train a classifier (e.g., kNN) on reference labels and predict on the mapped query cells. Compute macro F1-score.

- Use

Protocol 2: Constructing and Utilizing a Cross-Condition Atlas with scArches

Objective: Build an integrated reference atlas from multiple studies of a disease (e.g., Ulcerative Colitis) and map new patient samples for annotation.

Procedure:

- Data Collection & Curation: Download public scRNA-seq datasets for the disease and healthy controls from repositories (e.g., GEO, EBI ArrayExpress). Standardize metadata (e.g., condition, donor, technology).

- Base Model Training: Pool a large, diverse subset of data to serve as the initial "base" reference. Preprocess (normalize, HVG selection). Train a conditional scVI or trVAE model, specifying batch and optionally other covariates.

- Model Surgery with scArches: For each remaining study or new incoming data:

a. Load the pre-trained base model.

b. Prepare the new query dataset, aligning genes to the reference.

c. Run

scArcheswith thetrVAEorscVItemplate. The algorithm "surgically" modifies the model's architecture by adding new batch nodes and fine-tuning only a subset of weights relevant to the new data. d. The output is an updated, integrated model and a joint latent representation. - Atlas Visualization & Annotation: Generate a UMAP from the final joint latent space. Perform Leiden clustering. Identify marker genes for each cluster using differential expression analysis on the corrected counts or latent space. Annotate cell types.

- Downstream Application – Mapping New Patients: For a new patient sample, follow step 3b-3c to map it into the existing atlas. The patient's cells will be projected into the annotated UMAP, enabling automatic label transfer and identification of novel or disease-enriched states.

Visualization: Workflows and Logical Diagrams

Title: scArches Transfer Learning Workflow

Title: Symphony Reference Building and Query Mapping

Title: Benchmarking Integration Quality Logic

The Scientist's Toolkit

Table 3: Essential Research Reagents and Solutions for AI-Powered Integration

| Item | Function / Description | Example / Specification |

|---|---|---|

| High-Quality scRNA-seq Datasets | Raw material for integration. Require clear metadata (batch, donor, condition, technology). | Public repositories: GEO, ArrayExpress, CellXGene. Controlled benchmark sets (e.g., from Seurat, SCB). |

| Computational Environment | Containerized, reproducible environment for running complex AI models. | Docker or Singularity container with Python (>3.8), R (>4.0), PyTorch/TensorFlow, Jupyter. |

| Integration Algorithm Suites | Core software tools implementing the AI methods. | scArches (scarches Python package), Symphony (symphony R package), scVI (scvi-tools), Harmony (harmony R package). |

| Benchmarking Package | Standardized metric calculation for fair comparison. | scib.metrics Python package or SCIB R pipeline. |

| High-Performance Computing (HPC) Resources | Essential for training deep learning models on large datasets. | GPU nodes (NVIDIA V100/A100) with >32GB RAM. Cloud computing credits (AWS, GCP). |

| Visualization Software | For exploring integrated embeddings and results. | scanpy (Python), Seurat (R) for UMAP/t-SNE plots, ggplot2. |

| Cell-Type Annotation Database | For biological interpretation of integrated clusters. | Reference atlases (e.g., Human Cell Atlas), marker gene lists, automated tools (e.g., SingleR, cellassign). |

Navigating Challenges: Practical Troubleshooting and Optimization of AI for scRNA-seq

1. Introduction Within the broader thesis on AI methods for single-cell RNA sequencing (scRNA-seq) analysis, three persistent analytical pitfalls are batch effects, dropouts, and the curse of high dimensionality. Batch effects introduce non-biological variation from technical sources, dropouts refer to false zero counts due to low mRNA capture, and high dimensionality complicates visualization and statistical inference. This Application Note details modern AI-driven protocols to identify, quantify, and correct these issues.

2. Quantitative Summary of AI Solution Performance Table 1: Benchmarking of AI-based Tools for Addressing scRNA-seq Pitfalls (Summarized from Recent Literature)

| Pitfall | AI Solution Category | Example Tool/Model | Key Metric (Performance) | Reference Year |

|---|---|---|---|---|

| Batch Effect | Integration/Alignment | Seurat v5 (CCA, RPCA) | Batch Alignment Score > 0.85 | 2023 |

| Batch Effect | Deep Learning Integration | scVI (Variational Autoencoder) | kBET rejection rate < 0.1 | 2022 |

| Dropout | Imputation | DCA (Deep Count Autoencoder) | Pearson corr. ↑ 0.2-0.3 vs. raw | 2023 |

| Dropout | Imputation | scGNN (Graph Neural Net) | F1-score for rare cell detection ↑ 15% | 2021 |

| High Dimensionality | Dimensionality Reduction | UMAP (Non-linear manifold) | Local structure preservation > 90%* | 2024 |

| High Dimensionality | Feature Selection | scANVI (Semi-supervised VAE) | Cluster-specific gene detection, AUC 0.92 | 2023 |

| All Pitfalls | End-to-End Framework | totalVI (Joint modeling of RNA+protein) | Denoised expression, integrated across modalities | 2022 |

*Qualitative metric based on common benchmark assessments.

3. Detailed Experimental Protocols

Protocol 3.1: Assessing and Correcting Batch Effects using scVI Objective: Integrate multiple scRNA-seq datasets to remove technical batch variation while preserving biological heterogeneity. Materials: Python environment (PyTorch, scvi-tools), annotated scRNA-seq count matrices from ≥2 batches. Procedure:

- Data Preparation: Load AnnData objects. Filter cells (mingenes=200, maxgenes=5000) and genes (min_cells=3). Normalize total counts per cell (10^4 scale) and log1p transform.

- Model Setup:

scvi.model.SCVI.setup_anndata(adata, batch_key="batch_label"). This specifies the batch covariate. - Model Training: Instantiate

SCVI(adata, n_layers=2, n_latent=30). Train for 400 epochs usingtrain()with an 80/20 train-validation split. - Integration & Analysis: Extract the batch-corrected latent representation with

adata.obsm["X_scVI"] = model.get_latent_representation(). Use this for downstream clustering (Leiden) and UMAP visualization. - QC Metrics: Calculate the average silhouette width per biological cell type (should increase post-integration) and the kBET statistic on the latent space (target rejection rate < 0.1).

Protocol 3.2: Imputing Dropout Events using DCA Objective: Recover missing gene expression values due to technical dropout noise. Materials: Raw count matrix (CSV or H5AD), DCA Python package. Procedure:

- Input: Use raw, unfiltered count data. Do not log-normalize.

- Configuration: Configure DCA for zero-inflated negative binomial loss (

--type zinb). Set network dimensions (e.g.,--hidden 64,32,64). - Training: Run

dca my_data.h5ad output/to train the autoencoder. Monitor reconstruction loss. - Output: The

output/mean.tsvfile contains the denoised and imputed count matrix. This can be used for differential expression analysis, improving trajectory inference, or gene-gene correlation studies. - Validation: Compare the coefficient of variation (CV) vs. mean expression relationship pre- and post-imputation. Successful imputation reduces CV for mid-to-low expression genes. Validate with held-out "spike-in" genes or via qPCR correlation if available.

Protocol 3.3: Dimensionality Reduction and Feature Selection with scANVI Objective: Leverage semi-supervised learning for guided dimensionality reduction and biologically relevant feature identification. Materials: Partially labeled scRNA-seq data (e.g., a subset of cells annotated), scvi-tools. Procedure:

- Pre-training: Train an SCVI model as in Protocol 3.1 on the full, unlabeled dataset.

- scANVI Transfer:

scanvi_model = scvi.model.SCANVI.from_scvi_model(scvi_model, unlabeled_category="Unknown", labels_key="initial_clusters"). - Semi-supervised Training: Train scANVI for an additional 100-150 epochs using the partial labels.

- Query and Label Transfer:

scanvi_model.predict()to annotate unlabeled cells. - Differential Expression & Features: Perform differential expression directly in the latent space using

scanvi_model.differential_expression(). The model weights highlight genes driving the learned latent dimensions.

4. Visualizations

AI Solution Workflow for scRNA-seq Pitfalls

VAE Architecture for scRNA-seq (e.g., scVI, DCA)

5. The Scientist's Toolkit: Key Research Reagent Solutions Table 2: Essential Materials & Tools for AI-Driven scRNA-seq Analysis

| Item Name | Provider/Platform | Function in Analysis |

|---|---|---|

| Chromium Next GEM Single Cell 3' / 5' Kits | 10x Genomics | Standardized reagent kits for generating barcoded scRNA-seq libraries, a primary source of input data. |

| Cell Ranger | 10x Genomics | Pipeline for demultiplexing, barcode processing, and initial UMI counting. Outputs the raw count matrix. |

| Seurat | Satija Lab / CRAN/Bioconductor | Comprehensive R toolkit for QC, integration (CCA, RPCA), and clustering. Often used in conjunction with AI models. |

| scvi-tools | Yosef Lab / PyPI | Python-based framework providing scalable implementations of scVI, scANVI, totalVI, and other deep generative models. |

| Scanpy | Theis Lab / PyPI | Python library for efficient preprocessing, visualization, and analysis, seamlessly integrates with scvi-tools. |

| ANNData Object | Scanpy/scvi-tools | Core in-memory data structure organizing counts, metadata, and latent representations for efficient processing. |

| PyTorch with CUDA | Meta / NVIDIA | Deep learning framework and parallel computing platform essential for training complex AI models on GPUs. |

| Custom Reference Transcriptome | GENCODE/Ensembl | Curated gene annotation file for alignment, critical for accurate gene counting and model input. |

Within the broader thesis on AI methods for single-cell RNA sequencing analysis, optimizing model performance is critical for extracting biologically meaningful insights from high-dimensional, sparse, and noisy data. This document details application notes and protocols for systematic hyperparameter tuning and computational resource management, essential for developing robust deep learning models (e.g., autoencoders, graph neural networks) for tasks like cell type annotation, trajectory inference, and gene expression imputation.

Key Hyperparameters in scRNA-seq AI Models

The following table summarizes core hyperparameters requiring tuning for common AI architectures in scRNA-seq analysis.

Table 1: Critical Hyperparameters for Common scRNA-seq AI Models

| Model Archetype | Key Hyperparameters | Typical Search Range | Impact on Performance |

|---|---|---|---|

| Variational Autoencoder (VAE) | Latent dimension, learning rate, beta (KL weight), dropout rate, number of hidden layers | [10, 200], [1e-4, 1e-2], [0.001, 1], [0, 0.5], [1, 5] | Governs compression, denoising, and disentanglement of biological factors. |

| Graph Neural Network (GNN) | Number of GNN layers, hidden channels, aggregation function, learning rate | [1, 6], [64, 512], {mean, sum, attention}, [1e-4, 1e-2] | Affects capture of cell-cell relationships in constructed graphs. |

| Transformer / Attention | Number of heads, embedding dimension, FFN dimension, attention dropout | [2, 12], [128, 1024], [512, 4096], [0, 0.3] | Influences modeling of gene-gene interactions and long-range dependencies. |

| U-Net (for spatial transcriptomics) | Encoder depth, decoder depth, filter size, upsampling method | [3, 7], [3, 7], [32, 256], {transpose conv, interpolation} | Determines capability to map high-res spatial gene expression patterns. |

Protocols for Hyperparameter Optimization

Protocol 3.1: Systematic Bayesian Optimization for Model Selection

Objective: To efficiently identify the optimal hyperparameter set maximizing a validation metric (e.g., silhouette score for clustering, MSE for imputation).

Materials:

- Computing cluster with SLURM or Kubernetes orchestration.

- Hyperparameter optimization library (Optuna, Ray Tune).

- Tracked experiment manager (MLflow, Weights & Biases).

- Pre-processed scRNA-seq dataset (e.g., 10x Genomics, Smart-seq2).

Procedure:

- Define Search Space: Specify distributions for each hyperparameter from Table 1 using the optimization library's syntax (e.g.,

optuna.distributions.LogUniformDistribution(1e-5, 1e-2)for learning rate). - Implement Objective Function: Code a function that: a. Takes a hyperparameter trial/suggestion as input. b. Instantiates the AI model with the suggested parameters. c. Trains the model on the training set for a predefined number of epochs (using early stopping). d. Evaluates the model on the held-out validation set using the target metric. e. Returns the metric value to the optimizer.

- Configure & Execute Study: Create an optimization "study." Set the direction (

maximizeorminimize), sampler (TPESampler), and pruner (MedianPruner). Execute for a minimum of 50 trials. - Analysis: Retrieve the best trial's parameters. Use parallel coordinate plots to visualize parameter interactions. Retrain the final model with the best parameters on the combined training and validation set.

Protocol 3.2: Cross-Validation Strategy for Small-Sample Datasets

Objective: To provide a robust performance estimate when labeled data is limited (e.g., in supervised cell typing).

Procedure:

- Implement a nested cross-validation (CV) scheme.

- Outer Loop (Performance Estimation): Split data into k folds (e.g., k=5). For each fold: a. Hold out one fold as the test set. b. Use the remaining k-1 folds for the inner loop.

- Inner Loop (Hyperparameter Tuning): On the k-1 folds, perform a second CV (e.g., 3-fold) or use a hold-out validation set to run Protocol 3.1.

- Final Evaluation: Train a model with the best inner-loop parameters on all k-1 folds. Evaluate on the held-out outer test fold. Aggregate performance across all k outer folds.

Computational Resource Management