DREAM Challenges: The Definitive Guide to Crowdsourced Biomedical Benchmarking for Drug Discovery

This article provides a comprehensive overview of the Dialogue for Reverse Engineering Assessments and Methods (DREAM) Challenges, a pioneering community-driven platform for rigorous benchmarking in computational biology and translational medicine.

Abstract

This article provides a comprehensive overview of the Dialogue for Reverse Engineering Assessments and Methods (DREAM) Challenges, a pioneering community-driven platform for rigorous benchmarking in computational biology and translational medicine. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles of DREAM, detailing its role in establishing gold-standard benchmarks for algorithms in genomics, drug sensitivity prediction, and clinical outcome forecasting. We delve into methodological best practices for participation, common pitfalls and optimization strategies, and frameworks for validating and comparing results. The guide synthesizes how DREAM's open-science model accelerates robust methodology development, fosters collaboration, and directly impacts the pipeline of computational drug discovery and precision medicine.

What Are DREAM Challenges? A Primer on Open-Science Benchmarking in Biomedicine

The Dialogue for Reverse Engineering Assessments and Methods (DREAM) challenges were conceived as a novel framework to benchmark and advance predictive models in systems biology and translational medicine. Originating in 2006, DREAM pioneered a paradigm of "collaborative competition," where participants compete to solve well-defined scientific problems while sharing methodologies, fostering community-wide learning and rigorous assessment of algorithms. This whitepaper details the core philosophical and technical foundations of the DREAM initiative, situating it as a critical engine for robust community research benchmarking in computational biology and drug development.

Origins and Philosophical Framework

The DREAM project was launched to address a critical reproducibility crisis in computational biology. Its founding principle is that the true predictive power of a model is only revealed when tested on blind data not used in its construction. This philosophy is operationalized through a cycle of community challenge design, participation, and assessment.

Guiding Principles:

- Blind Prediction: All challenges are conducted on withheld gold-standard data.

- Objective Benchmarking: Quantitative and unbiased evaluation metrics are defined a priori.

- Collaborative Competition: While competing for accuracy, participants often form consortia and share insights, accelerating collective problem-solving.

- Open Science: Winning methods are dissected and published, providing reusable protocols and benchmarks for the field.

Core Technical Architecture of a DREAM Challenge

A standard DREAM challenge follows a meticulously designed workflow to ensure fairness, rigor, and maximal community utility.

Challenge Workflow Protocol

Protocol Title: Standard DREAM Challenge Execution Workflow

Objective: To define the sequential stages for creating, running, and analyzing a community benchmark challenge.

Methodology:

- Problem Scoping: Domain experts identify a critical, data-rich prediction question with clinical or biological relevance (e.g., predicting drug synergy from genomic features).

- Data Curation & QC: A training dataset (features and a portion of outcomes) is released. A test dataset (features only, with outcomes withheld) is prepared.

- Challenge Launch: The training data, prediction question, and evaluation metric (e.g., AUPRC, RMSD) are publicly announced. Teams register and download data.

- Prediction Period: Participants develop models and submit predictions for the test set to a central platform. Multiple submission rounds may be allowed.

- Evaluation & Scoring: The organizer evaluates all submissions against the withheld gold standard using the pre-defined metric.

- Post-Challenge Analysis: Results are published. Top-performing methods are analyzed to identify best practices, and often, a consensus model outperforming any single submission is created.
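The evaluation and scoring stage can be sketched in a few lines. The example below is a minimal illustration rather than any challenge's actual scoring pipeline: it assumes RMSE as the pre-defined metric, and the sample IDs, team names, and the `score_submission` helper are all hypothetical.

```python
import math

def score_submission(predictions, gold_standard):
    """Return the RMSE of one submission against the withheld gold standard.

    Both arguments map sample ID -> numeric value. A submission that does
    not cover exactly the gold-standard samples is rejected.
    """
    missing = set(gold_standard) - set(predictions)
    extra = set(predictions) - set(gold_standard)
    if missing or extra:
        raise ValueError(
            f"ID mismatch: missing={sorted(missing)}, extra={sorted(extra)}"
        )
    squared_errors = [
        (predictions[s] - gold_standard[s]) ** 2 for s in gold_standard
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical gold standard, held privately by the organizers.
gold = {"s1": 1.0, "s2": 2.0, "s3": 3.0, "s4": 4.0}

# Hypothetical team submissions for the same test samples.
submissions = {
    "team_a": {"s1": 1.0, "s2": 2.0, "s3": 3.0, "s4": 5.0},
    "team_b": {"s1": 2.0, "s2": 3.0, "s3": 4.0, "s4": 5.0},
}

scores = {team: score_submission(p, gold) for team, p in submissions.items()}

# Lower RMSE is better, so the leaderboard is sorted in ascending order.
leaderboard = sorted(scores, key=scores.get)
```

Rejecting submissions with missing or extra IDs, instead of silently scoring the overlap, keeps every team evaluated on the same set of samples.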

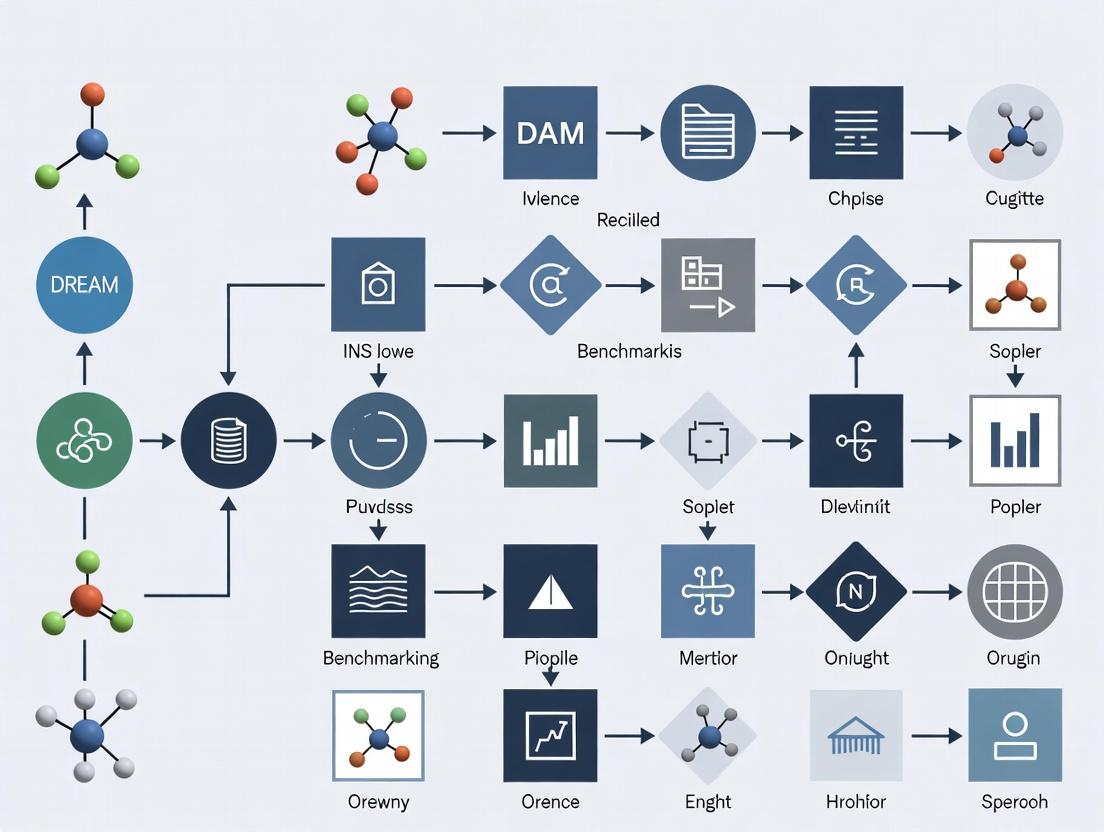

Diagram: DREAM Challenge Workflow

Quantitative Impact & Benchmarking Data

The success of DREAM is quantified by its broad adoption and its role in establishing definitive benchmarks. The table below summarizes key metrics from its foundational period and major challenge categories.

Table 1: DREAM Challenge Metrics and Impact (Representative Data)

| Challenge Category | Example Challenge (Year) | Participating Teams | Key Benchmark Established | Consensus Model Improvement vs. Best Single? |

|---|---|---|---|---|

| Network Inference | DREAM5 Network Inference (2010) | 29 | Rigorous assessment of gene regulatory network algorithms | Yes |

| Drug Sensitivity | NCI-DREAM Drug Sensitivity (2012) | 44 | Framework for predicting cell line response to compounds | Yes |

| Translational Medicine | DREAM-AKI Prognosis (2019) | 105 | Best practices for clinical acute kidney injury prediction models | Yes |

| Single-Cell Analysis | DREAM Single Cell Transcriptomics (2021) | 80+ | Benchmark for cell type identification and trajectory inference | Under Analysis |

The Scientist's Toolkit: Essential Research Reagents for DREAM-Style Benchmarking

Conducting or participating in a DREAM-style challenge requires a suite of conceptual and technical "reagents."

Table 2: Essential Toolkit for DREAM-Style Collaborative Competition

| Item / Solution | Function in the Benchmarking Process |

|---|---|

| Blinded Test Set (Gold Standard) | The ultimate arbiter of model performance; must be meticulously curated and completely withheld during model development. |

| Pre-Defined Scoring Metric | A quantitative, objective function (e.g., MSE, AUROC) used to rank submissions, chosen to reflect the biological/clinical question. |

| Standardized Data Format | A common schema (e.g., CSV, HDF5) for training data and prediction submissions to ensure automated, error-free evaluation. |

| Submission Portal & Leaderboard | A secure web platform for teams to upload predictions and view real-time rankings (on validation data) to foster engagement. |

| Post-Challenge Code/Container | A Docker container or code repository submitted by winners to encapsulate their method, enabling exact reproducibility. |

| Consensus Algorithm | A method (e.g., model stacking, Bayesian integration) to combine top-performing submissions, often yielding a superior community model. |

Experimental Protocol: Implementing a Post-Challenge Consensus Analysis

A hallmark of DREAM is the post-challenge analysis that extracts maximal community knowledge.

Protocol Title: Generation of a Community Consensus Prediction Model

Objective: To integrate top-performing challenge submissions into a single, more robust consensus model.

Methodology:

- Input: Collect the final prediction files from the top N (e.g., 10) performing teams on the full test set.

- Alignment: Ensure all prediction matrices are aligned identically by sample and outcome identifier.

- Consensus Method Selection: Choose an integration strategy. A common, robust method is linear stacking:

  a. Use the gold-standard test data (now unblinded) as a training set for the meta-model.

  b. Treat the predictions from each of the N teams as features in this new dataset.

  c. Train a regularized linear model (e.g., Elastic Net) to weight each team's prediction, or fall back to a simple unweighted average.

  d. Use cross-validation on this set to prevent overfitting of the consensus weights.

- Validation: Assess the consensus model on an optional, entirely held-out validation dataset (if available) to confirm its superior and generalizable performance.

- Dissection: Analyze the weights of the consensus model to infer which methodological approaches contributed most to performance.
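The alignment and integration steps above can be illustrated with the simplest consensus the protocol mentions, an unweighted average. This sketch deliberately uses equal weights instead of linear stacking; the team names, sample IDs, and prediction values are made up for illustration.

```python
import math

def rmse(pred, gold):
    """Root-mean-square error over the gold-standard samples."""
    return math.sqrt(sum((pred[s] - gold[s]) ** 2 for s in gold) / len(gold))

def align(submissions, sample_ids):
    """Reorder every team's predictions to one shared sample order."""
    aligned = {}
    for team, preds in submissions.items():
        if set(preds) != set(sample_ids):
            raise ValueError(f"{team}: sample IDs do not match the test set")
        aligned[team] = [preds[s] for s in sample_ids]
    return aligned

def average_consensus(aligned, sample_ids):
    """Equal-weight consensus: mean prediction across teams per sample."""
    n_teams = len(aligned)
    return {
        s: sum(vector[i] for vector in aligned.values()) / n_teams
        for i, s in enumerate(sample_ids)
    }

# Hypothetical unblinded gold standard and top-team predictions.
gold = {"s1": 1.0, "s2": 2.0, "s3": 3.0, "s4": 4.0}
submissions = {
    "team_a": {"s1": 1.5, "s2": 2.5, "s3": 3.5, "s4": 4.5},  # overestimates
    "team_b": {"s1": 0.4, "s2": 1.4, "s3": 2.4, "s4": 3.4},  # underestimates
    "team_c": {"s1": 1.3, "s2": 1.7, "s3": 3.3, "s4": 3.7},  # mixed errors
}

sample_ids = sorted(gold)
aligned = align(submissions, sample_ids)
consensus = average_consensus(aligned, sample_ids)

single_rmse = {team: rmse(p, gold) for team, p in submissions.items()}
consensus_rmse = rmse(consensus, gold)
```

In this toy case the teams' errors partly cancel, so the averaged prediction has a lower RMSE than any single team. A stacked model would replace the equal weights with weights learned under cross-validation, as described in the protocol.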

Diagram: Consensus Model Generation

The genesis of DREAM established "collaborative competition" as a powerful paradigm for driving scientific discovery. By providing a rigorous, open, and community-driven framework for benchmarking, DREAM challenges have not only produced state-of-the-art predictive models but have also illuminated the strengths and limitations of methodological approaches across biomedicine. For researchers and drug development professionals, engagement in DREAM or adoption of its principles offers a proven path to generate robust, reproducible, and clinically relevant computational findings.

DREAM (Dialogue for Reverse Engineering Assessments and Methods) Challenges are collaborative competitions that benchmark computational and experimental methods in systems biology and medicine. Framed within a broader thesis on benchmarking community research, these challenges provide a rigorous, open-science framework for crowdsourcing solutions to complex biomedical problems, driving innovation in drug development and translational science.

The Core Challenge Lifecycle

The management of a DREAM Challenge follows a structured, phased workflow.

Diagram 1: DREAM Challenge Management Lifecycle (7 phases)

Quantitative Structure & Participation Metrics

Based on recent DREAM Challenges (e.g., AstraZeneca-Sanger Drug Combination Prediction, NCI-CPTAC Multi-omics Cancer Prognosis, ICGC-TCGA DREAM Somatic Mutation Calling), the following metrics are typical.

Table 1: Typical DREAM Challenge Quantitative Metrics

| Metric Category | Typical Range | Example (Specific Challenge) |

|---|---|---|

| Duration | 3 - 6 months | 4 months (Drug Combination Prediction, 2022) |

| Number of Participating Teams | 50 - 200+ | 167 teams (Somatic Mutation Calling, 2020) |

| Number of Submissions | 500 - 10,000+ | ~8,000 submissions (Multi-omics Prognosis, 2021) |

| Data Volume | 10 GB - 10 TB | ~5 TB (Pan-cancer ATAC-seq, 2023) |

| Benchmarking Datasets | 2 - 5 (Train/Test/Validation) | 3 datasets: Public, Private, Final Hold-out |

| Prize Pool (if applicable) | $0 - $100,000 | In-kind compute credits & conference travel |

Experimental Protocol: The Gold-Standard Benchmarking Methodology

A central tenet of DREAM is rigorous, unbiased evaluation. The protocol below is used to assess participant predictions.

Protocol Title: Double-Blinded Evaluation with Hold-Out Validation Sets

- Data Partitioning: The challenge organizers partition the reference dataset into three subsets:

  - Training/Public Test Set: Released to participants at launch. Includes features and a portion of the ground-truth outcomes.

  - Private Validation Set: Used for ongoing leaderboard scoring. Ground truth is withheld from participants.

  - Final Hold-Out Set: Used only for the final assessment to determine winners. Never used in leaderboard updates to prevent overfitting.

- Prediction Submission: Participants submit their algorithm's predictions on the private or final hold-out set features via a standardized format (e.g., CSV, JSON) to a platform like Synapse or CodaLab.

- Automated Scoring: An automated scoring pipeline (often a Docker container) evaluates submissions against the held-out ground truth using pre-specified metrics (e.g., AUROC, RMSE, concordance index).

- Leaderboard Update: For the private phase, scores are posted to a public leaderboard, typically with a limit on daily submissions to prevent brute-force tuning.

- Final Assessment: The final ranking is based solely on performance on the final hold-out set, which is evaluated once after the submission deadline closes.
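As a rough sketch of the partitioning and leaderboard steps above, the code below splits sample IDs into the three subsets and enforces a daily submission cap. The split fractions, the seed, and the `partition_samples` and `SubmissionQuota` names are illustrative assumptions, not values or APIs taken from any specific challenge or platform.

```python
import random

def partition_samples(sample_ids, fractions=(0.6, 0.2, 0.2), seed=0):
    """Split IDs into training, private validation, and final hold-out sets."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    shuffled = sorted(sample_ids)            # fixed starting order
    random.Random(seed).shuffle(shuffled)    # seeded, so the split is repeatable
    n_train = int(len(shuffled) * fractions[0])
    n_valid = int(len(shuffled) * fractions[1])
    return {
        "training": shuffled[:n_train],
        "private_validation": shuffled[n_train:n_train + n_valid],
        "final_holdout": shuffled[n_train + n_valid:],
    }

class SubmissionQuota:
    """Count leaderboard submissions per team per day and enforce a cap."""

    def __init__(self, daily_limit):
        self.daily_limit = daily_limit
        self._counts = {}

    def try_submit(self, team, day):
        """Return True and record the submission if the team is under its cap."""
        key = (team, day)
        if self._counts.get(key, 0) >= self.daily_limit:
            return False
        self._counts[key] = self._counts.get(key, 0) + 1
        return True

ids = [f"sample_{i:03d}" for i in range(100)]
splits = partition_samples(ids)

quota = SubmissionQuota(daily_limit=2)
attempts = [quota.try_submit("team_a", "2024-01-01") for _ in range(3)]
```

The final hold-out IDs never enter leaderboard scoring, and the quota limits how much a team can learn about the private validation set through repeated submissions.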

Diagram 2: Double-blinded evaluation workflow with hold-out sets

The Scientist's Toolkit: Essential Research Reagent Solutions

For a typical DREAM Challenge focused on predictive modeling from molecular data, the key "reagents" are computational.

Table 2: Key Computational Research Reagents for a DREAM Challenge

| Item | Function in Challenge | Example/Format |

|---|---|---|

| Training Dataset | Core input for model development. Contains features (e.g., gene expression, mutations) and partial ground truth. | HDF5, CSV, or MAF files on Synapse. |

| Validation Features | The feature data for private/final sets on which predictions must be made. Ground truth is withheld. | Identically formatted to training data. |

| Docker Container | Standardized environment for local testing of scoring metric and ensuring reproducibility. | Docker image from DockerHub. |

| Submission Template | Predefined file format ensuring participant predictions are machine-readable for automated scoring. | prediction.csv with specific column headers. |

| Scoring Script/Module | The exact implementation of the evaluation metric (e.g., scikit-learn function) for participant use. | Python script or R package. |

| Benchmark Baseline | A simple reference method (e.g., random guess, linear model) performance for comparison. | Published AUROC/RMSE score on leaderboard. |
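To show why a fixed submission template matters for automated scoring, here is a small validator. It is a sketch under stated assumptions: the column headers `sample_id` and `prediction` are hypothetical stand-ins for whatever a given challenge specifies, and `validate_submission` is not part of any platform's API.

```python
import csv
import io
import math

EXPECTED_COLUMNS = ["sample_id", "prediction"]  # hypothetical template headers

def validate_submission(csv_text, expected_ids):
    """Return a list of problems found in a submission; empty means valid."""
    reader = csv.DictReader(io.StringIO(csv_text))
    if reader.fieldnames != EXPECTED_COLUMNS:
        return [f"expected columns {EXPECTED_COLUMNS}, got {reader.fieldnames}"]

    problems = []
    seen = set()
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        sample_id = row["sample_id"]
        if sample_id in seen:
            problems.append(f"line {line_no}: duplicate sample_id {sample_id!r}")
        seen.add(sample_id)
        try:
            value = float(row["prediction"])
        except (TypeError, ValueError):
            problems.append(f"line {line_no}: prediction is not numeric")
            continue
        if not math.isfinite(value):
            problems.append(f"line {line_no}: prediction is not finite")

    expected = set(expected_ids)
    for sample_id in sorted(expected - seen):
        problems.append(f"missing prediction for {sample_id!r}")
    for sample_id in sorted(seen - expected):
        problems.append(f"unexpected sample_id {sample_id!r}")
    return problems

example_csv = "sample_id,prediction\ns1,0.91\ns2,0.13\ns3,0.55\n"
report = validate_submission(example_csv, ["s1", "s2", "s3"])
```

Running a check like this locally before uploading catches format errors that would otherwise use up a limited leaderboard submission.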

Governance, Collaboration, and Post-Challenge Phase

Management involves a consortium of organizers, data providers, and judges. Post-challenge, top methods are often integrated into community resources, and collaborative manuscripts are written.

Table 3: Post-Challenge Outputs and Outcomes

| Output Type | Description | Impact on Benchmarking Thesis |

|---|---|---|

| Methods Publication | A peer-reviewed paper (often in Nature Methods, Cell Systems) detailing challenge design, outcomes, and winning methods. | Establishes a new community benchmark. |

| Open-Source Code | Winning algorithms are released publicly on GitHub or CodeOcean. | Enables method reuse and direct comparison in future research. |

| Consortium Author List | Often includes all successful participants, embodying large-scale collaboration. | Demonstrates crowdsourced benchmarking power. |

| Data Resource | Curated challenge datasets become permanent, citable community resources (e.g., on Synapse). | Provides a standardized test bed for future tool development. |

The Dialogue for Reverse Engineering Assessments and Methods (DREAM) challenges represent a cornerstone in the systematic benchmarking of computational biology and translational research. By framing community-wide critical assessments around specific, high-impact biological problems, DREAM has established a gold standard for evaluating predictive algorithms in genomics, network inference, and clinical outcome prediction. This whitepaper analyzes landmark studies catalyzed by these challenges, detailing their experimental rigor, quantitative outcomes, and enduring methodological contributions to biomedical science and drug development.

Landmark DREAM Challenges and Quantitative Outcomes

Table 1: Key DREAM Challenges and Core Findings

| Challenge Name & Edition | Primary Objective | Key Quantitative Outcome | Impact on Field |

|---|---|---|---|

| DREAM2 Network Inference (2007) | Reverse-engineer transcriptional networks from synthetic and E. coli perturbation data. | Best method (ANOVA-based) achieved an AUPR of 0.61 on in silico data; performance dropped significantly on E. coli data. | Established baseline for network inference accuracy; highlighted gap between in silico and biological data. |

| DREAM7 Drug Sensitivity Prediction (2012) | Predict IC50 values for 28 compounds across 53 breast cancer cell lines using genomic data. | Winning model (Bayesian multitask learning) achieved a Pearson correlation of 0.52 between predicted and observed IC50. | Demonstrated feasibility and limits of in vitro drug response prediction from molecular features. |

| DREAM8 Sage Bionetworks Breast Cancer Prognosis (2012) | Predict patient survival using gene expression data from ~2000 breast tumors. | Top model achieved a Concordance Index (C-index) of 0.67, modestly outperforming clinical-only models. | Showed that complex models offered limited improvement over established clinical markers (e.g., ER status). |

| DREAM9.5 AML Outcome Prediction (2015) | Predict cytogenetic status and survival in Acute Myeloid Leukemia (AML) using multi-omics data. | Winning entry for survival prediction attained a C-index of 0.74, integrating mutations, expression, and clinical data. | Validated the utility of integrating diverse molecular data types for improved clinical risk stratification. |

| DREAM10 Single-Cell Transcriptomics (2016) | Infer gene regulatory networks from single-cell RNA-seq data of differentiating mouse embryonic stem cells. | Top-performing method demonstrated significant improvement over bulk-data methods, but overall accuracy remained low (AUPR < 0.3). | Revealed unique challenges and spurred algorithm development for single-cell network inference. |

Table 2: Comparative Performance Metrics Across Challenge Classes

| Challenge Class | Typical Best Metric Score | Benchmark Dataset | Primary Limitation Uncovered |

|---|---|---|---|

| Network Inference (Transcriptional) | AUPR: 0.40 - 0.65 (on gold standards) | E. coli SOS pathway, in silico networks | Poor generalizability; high false positive rates. |

| Drug Sensitivity Prediction | Pearson r: 0.45 - 0.60 | GDSC, CCLE cell line panels | Context-specificity; poor translation to in vivo models. |

| Clinical Outcome Prediction | C-index: 0.65 - 0.75 | TCGA, METABRIC cohorts | Overfitting; marginal gain over established clinical variables. |

Detailed Experimental Protocols

Protocol: DREAM Network Inference Challenge Workflow

Objective: Infer a directed gene regulatory network from gene expression data.

Input: Steady-state or time-series expression profiles following genetic or environmental perturbations.

- Data Provisioning: Participants receive gene expression matrices (genes x samples) and a description of perturbations. Gold standard networks are withheld.

- Algorithm Application:

  - Correlation/Information Theory: Calculate pairwise mutual information (e.g., ARACNE algorithm) or Pearson correlation.

  - Regression Models: Use LASSO or Bayesian regression to infer causal edges from perturbation states.

  - Model Simulation: For in silico challenges, use ordinary differential equation (ODE) models to fit time-series data.

- Prediction Submission: Teams submit a ranked list of predicted regulatory edges (Regulator → Target).

- Benchmarking: Organizers evaluate submissions against the held-out gold standard using the Area Under the Precision-Recall Curve (AUPR). Precision-Recall is preferred over ROC due to severe class imbalance (few true edges among many possible).
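The benchmarking step can be made concrete with average precision, one common estimator of the area under the precision-recall curve. This is a minimal sketch and not the official challenge scoring code; the gene names and the ranked edge list are toy values.

```python
def average_precision(ranked_edges, gold_edges):
    """Average precision of a ranked edge list against gold-standard edges.

    ranked_edges: list of (regulator, target) tuples, most confident first.
    gold_edges: set of true (regulator, target) tuples.
    Edges are directed, so ("G1", "G2") and ("G2", "G1") are different.
    """
    if not gold_edges:
        raise ValueError("gold standard contains no edges")
    if len(set(ranked_edges)) != len(ranked_edges):
        raise ValueError("ranked list contains duplicate edges")
    hits = 0
    precision_sum = 0.0
    for rank, edge in enumerate(ranked_edges, start=1):
        if edge in gold_edges:
            hits += 1
            precision_sum += hits / rank  # precision at each recovered true edge
    # True edges that were never submitted contribute zero precision.
    return precision_sum / len(gold_edges)

# Toy gold standard with three true regulatory edges.
gold = {("G1", "G2"), ("G1", "G3"), ("G4", "G5")}

# Toy submission: true edges recovered at ranks 1, 3, and 5.
ranked = [
    ("G1", "G2"),
    ("G2", "G1"),  # reversed direction, counted as a false positive
    ("G1", "G3"),
    ("G4", "G6"),
    ("G4", "G5"),
]

ap = average_precision(ranked, gold)
```

Because the score only credits true edges, and credits them more when they appear early in the ranking, it is not inflated by the large number of true negatives that dominate sparse networks.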

Protocol: DREAM Clinical Outcome Prediction Pipeline

Objective: Develop a model to predict patient survival or treatment response from multi-omics data.

Input: Matrices of genomic features (e.g., gene expression, mutations, CNVs) linked to de-identified clinical outcomes.

- Training/Test Split: Data is partitioned into a public training set (with outcomes) and a private test set (outcomes held by organizers).

- Feature Preprocessing & Selection:

  - Normalize expression data (e.g., TPM, RSEM).

  - Perform quality control and batch correction (e.g., using ComBat).

  - Apply dimensionality reduction (e.g., PCA) or feature selection (e.g., univariate Cox regression p-value filtering).

- Model Training: Implement predictive algorithms:

  - Cox Proportional Hazards Model with elastic net regularization (glmnet R package).

  - Random Survival Forests (randomForestSRC package).

  - Deep Learning: Implement a multi-layer perceptron with a Cox loss function.

- Prediction & Validation: Generate risk scores for test set patients. Submissions are evaluated on the Concordance Index (C-index) in the held-out test set, quantifying the model's ability to correctly rank survival times.
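The Concordance Index used in the final step can be written out directly. The sketch below implements a simplified Harrell's C: pairs with tied survival times are skipped, which is a simplifying assumption, and the patient data are invented for illustration.

```python
def concordance_index(times, events, risk_scores):
    """Simplified Harrell's C-index for right-censored survival data.

    times: follow-up time per patient.
    events: 1 if the event (e.g., death) was observed, 0 if censored.
    risk_scores: model output, where higher means higher predicted risk.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when patient i had an observed event
            # strictly before patient j's event or censoring time.
            if events[i] and times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0   # earlier event got the higher risk
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5   # tied risk scores count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Invented test-set outcomes (held by organizers) and submitted risk scores.
times = [5, 10, 15, 20]
events = [1, 0, 1, 1]          # the second patient is censored
risks = [0.9, 0.4, 0.1, 0.2]

c_index = concordance_index(times, events, risks)
```

Here four pairs are comparable and three are ranked correctly, giving a C-index of 0.75. A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking.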

Visualizations

Diagram: DREAM Challenge Generic Workflow

Diagram: Network Inference Challenge Pipeline

Diagram: Clinical Prediction Model Integration

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for DREAM-Style Research

| Item / Resource | Function in Challenge Research | Example/Provider |

|---|---|---|

| Synapse Platform | Secure data hosting, participant registration, and blinded prediction submission. | Sage Bionetworks (Used from DREAM7 onward). |

| R/Bioconductor | Primary environment for statistical analysis, omics data processing, and model building. | Packages: limma, survival, glmnet, impute. |

| Python SciPy Stack | Alternative ecosystem for machine learning and deep learning model development. | Libraries: scikit-learn, pandas, PyTorch/TensorFlow. |

| Gene Expression Omnibus (GEO) / The Cancer Genome Atlas (TCGA) | Primary public data sources for training and validation datasets. | NIH/NCI repositories. |

| Cell Line Encyclopedia (CCLE) & GDSC | Curated pharmacogenomic datasets linking molecular profiles to drug response. | Broad Institute, Wellcome Sanger Institute. |

| Cytoscape | Visualization and analysis of inferred biological networks. | Open-source platform. |

| Docker/Singularity | Containerization for reproducible execution of computational methods. | Used for "containerized challenges" to ensure result reproducibility. |

Within the broader thesis on DREAM challenges as a benchmark for community-driven research, this paper examines the composition of the DREAM (Dialogue for Reverse Engineering Assessments and Methods) community and the mechanisms through which its scientific collaborations are established and function. The DREAM initiative, launched at IBM Research and Columbia University and later run in partnership with Sage Bionetworks, creates a framework for crowdsourcing solutions to complex biomedical questions through open data science challenges. This in-depth guide analyzes the technical and social architecture that enables this community to produce high-impact, reproducible computational research.

Community Participant Demographics and Affiliations

The DREAM community is a multi-stakeholder ecosystem. Challenge participation data (e.g., from Synapse platform publications and challenge summaries) suggest the following approximate breakdown of key participant groups.

Table 1: DREAM Challenge Participant Composition (Representative Data)

| Participant Category | Approximate Percentage | Primary Affiliation Types | Typical Contribution |

|---|---|---|---|

| Academic Researchers | ~45% | Universities, Research Institutes | Algorithm development, novel methodological approaches, fundamental biological insight. |

| Industry Scientists | ~25% | Biotech, Pharma, AI/ML Companies | Applied tool development, translational focus, scalability considerations. |

| Bioinformatics & Data Scientists | ~20% | Core Facilities, CROs, Independent Consultants | Data processing pipelines, benchmarking, implementation expertise. |

| Clinicians & Translational Researchers | ~7% | Hospitals, Medical Schools | Clinical problem framing, validation context, biological/clinical dataset provision. |

| Students & Trainees | ~3% | Graduate Programs, Postdoctoral Fellowships | Method implementation, next-generation researcher training. |

Table 2: Collaboration Metrics Across Challenge Phases

| Phase | Avg. Team Size | Avg. Number of Institutions per Team | Key Collaboration Forging Activity |

|---|---|---|---|

| Pre-Challenge (Problem Scoping) | 5-10 (Organizers) | 3-5 | Workshop-based consensus on question design, data generation protocols. |

| Active Challenge Period | 3-5 (Per submitting team) | 1-2 (Mostly single-institution) | Online forums (e.g., Synapse Discussion), virtual meet-ups, code sharing. |

| Post-Challenge (Consortium Phase) | 15-50+ (Consortium) | 10-20+ | In-person hackathons, manuscript writing groups, shared analysis sprints. |

Protocol for Forging Collaborations: The DREAM Workflow

The process of forming and sustaining collaborations is systematic and integral to the challenge design.

Experimental Protocol 3.1: Challenge Design and Community Engagement

- Problem Identification: Organizers (a consortium of academic/industry scientists) identify a critical, unsolved problem in systems biology or translational medicine where diverse computational approaches are likely beneficial.

- Data Curation & Sandbox Creation: A gold-standard dataset is curated or generated. A "provisional" data sandbox is released on the Synapse platform to allow teams to test ingestion and preprocessing pipelines.

- Launch & Open Registration: The challenge is formally launched. Participants register on Synapse, forming teams or opting to be individually matched based on skillsets listed in profiles.

- Iterative Submission & Leaderboard: Teams submit predictions/code to a blinded validation set. A public leaderboard fosters friendly competition and highlights high-performing strategies.

- Discussion & Code Sharing: Participants are strongly encouraged (and often required for final evaluation) to share code. The associated discussion forum becomes the primary real-time collaboration tool for troubleshooting and method discussion.

- Post-Challenge Consortium Formation: Top-performing teams and key contributors are invited to co-author a flagship manuscript. This group forms an ad-hoc consortium, collaboratively dissecting why methods succeeded/failed, often leading to new, sustained collaborations.

Visualization of Collaboration Pathways and Workflows

Diagram 1: DREAM Challenge Collaboration Lifecycle

Diagram 2: DREAM Team Formation Pathways

The Scientist's Toolkit: Key Research Reagent Solutions

For a typical predictive modeling DREAM challenge (e.g., drug synergy prediction or single-cell transcriptomics analysis), the following "reagent solutions" form the core methodological toolkit.

Table 3: Essential Research Reagents & Platforms for DREAM Participation

| Item / Solution | Category | Function & Rationale |

|---|---|---|

| Synapse Platform | Collaboration Infrastructure | Serves as the central hub for data hosting, team registration, submission tracking, and discussion. Enforces data access controls and provenance tracking. |

| Docker Containers | Reproducibility Tool | Standardizes computational environments across diverse participant systems, ensuring model predictions are reproducible and evaluable. |

| Benchmark Data (e.g., GDSC, LINCS, TCGA) | Reference Data | Provides the curated, often public-domain, training and validation datasets that form the challenge's foundation. |

| Scikit-learn, PyTorch, TensorFlow | Core Software Libraries | Open-source machine learning libraries that represent the most common foundational tools for model building among participants. |

| Jupyter Notebooks / RMarkdown | Analysis & Reporting Tool | Facilitates the creation of executable documents that combine code, results, and narrative, crucial for sharing methods post-challenge. |

| GitHub/GitLab | Code Management & Sharing | The de facto standard for version control and open-source code sharing, enabling collaboration on algorithm development. |

| Standardized Evaluation Metrics (e.g., AUPRC, RMSE) | Assessment Reagent | Pre-defined, objective metrics chosen by organizers to impartially rank methods and focus the community on a unified goal. |

The DREAM community exemplifies a structured, platform-enabled approach to forging large-scale scientific collaboration. It strategically assembles a diverse participant pool from academia and industry around meticulously crafted benchmark problems. The collaboration is not incidental but is engineered through a phased protocol that moves from competitive individual effort to cooperative consortium science. This model, supported by a specific toolkit of digital reagents and infrastructure, successfully benchmarks community research by generating crowdsourced, reproducible solutions while simultaneously creating a durable network of interdisciplinary researchers. This process validates the core thesis that well-designed challenges are powerful engines for both benchmarking methods and catalyzing the formation of new, productive scientific alliances.

The Dialogue for Reverse Engineering Assessments and Methods (DREAM) challenges represent a paradigm shift in benchmarking community-driven research in computational biology. By creating rigorous, blinded, and crowd-sourced competitions, DREAM tackles the pervasive issues of reproducibility, validation, and overfitting that have historically plagued the analysis of complex biological data. This whitepaper details the framework, impact, and methodologies underpinning this critical initiative.

The DREAM Framework: A Community-Based Benchmarking Engine

DREAM challenges are organized around specific, unsolved problems in systems biology and medicine. Participants are provided with standardized training datasets and must generate predictions on a blinded test set, which are then scored against a withheld gold standard. This process eliminates bias and allows for the objective assessment of methodological performance.

Table 1: Impact Metrics of Selected DREAM Challenges

| Challenge Focus (Year) | Number of Participating Teams | Top-Performing Method Performance Gain vs. Baseline | Key Outcome |

|---|---|---|---|

| Network Inference (2010) | >30 | 50-300% (AUC Improvement) | Established consensus on reliable transcriptional network reconstruction methods. |

| Tumor Classification (2012) | 44 | 15% (Accuracy Increase) | Highlighted the critical importance of data preprocessing and batch effect correction. |

| Clinical Outcome Prediction (2017) | 35 | 20% (C-Index Improvement) | Demonstrated the utility of ensemble models integrating diverse molecular data. |

| Single-Cell Transcriptomics (2019) | 50+ | Varied by subtask | Created benchmark datasets and metrics for cell type identification and trajectory inference. |

Core Experimental Protocol: Executing a DREAM Challenge

The workflow of a typical DREAM challenge follows a strict, pre-registered protocol to ensure fairness and reproducibility.

1. Challenge Design & Data Curation:

- Problem Definition: A steering committee of domain experts defines a specific, answerable question (e.g., "Predict drug synergy from genomic features").

- Data Acquisition & Splitting: High-quality experimental or clinical datasets are sourced. Data is partitioned into a publicly released training/validation set and a fully blinded test set. The ground truth for the test set is held privately by the organizers.

2. Participant Engagement & Prediction Phase:

- Challenge Launch: The training data, scoring metrics (e.g., AUC-ROC, MSE), and submission format are published.

- Method Development: Participating teams develop their computational pipelines. While methods are diverse, the use of robust validation on the provided training data is encouraged.

- Blinded Prediction Submission: Teams submit their predictions on the test set via a standardized portal.

3. Evaluation & Synthesis:

- Objective Scoring: Organizers score all submissions against the gold standard test data.

- Consensus Analysis: A key output is often a consensus prediction (e.g., via Bayesian integration or simple averaging) that frequently outperforms any individual submission.

- Manuscript Generation: Results are collated into a consortium paper, co-authored by top performers and organizers, detailing the findings, best practices, and the benchmark dataset for future use.

Diagram: DREAM challenge workflow from conception to publication.

Successful participation in DREAM challenges and reproducible computational biology research requires a suite of methodological "reagents."

Table 2: Key Research Reagent Solutions for Reproducible Analysis

| Item / Resource | Category | Function & Importance for Rigor |

|---|---|---|

| Synapse Platform (Sage Bionetworks) | Data/Workflow Platform | Provides a secure, version-controlled repository for challenge data, code, and submissions, ensuring traceability. |

| Docker / Singularity Containers | Computational Environment | Encapsulates the entire software environment (OS, libraries, code) to guarantee computational reproducibility. |

| Jupyter / RMarkdown Notebooks | Code Documentation | Weaves executable code, results, and narrative explanation into a single document, promoting transparency. |

| scikit-learn / Tidymodels | Machine Learning Libraries | Provide standardized, well-tested implementations of algorithms, reducing implementation errors. |

| Git / GitHub | Version Control System | Tracks all changes to code and manuscripts, enabling collaboration and auditing of the research process. |

| GRCh38 / GENCODE v44 | Genomic Reference | Using a consistent, versioned reference genome for alignment and annotation prevents batch effects from reference differences. |

Visualizing the Reproducibility Crisis & DREAM's Role

The crisis in reproducibility often stems from circular analysis where the same data is used for both training and validation, leading to overoptimistic performance. DREAM breaks this cycle through blinded assessment.

Diagram: Contrasting common overfitting cycles with DREAM's blinded benchmarking.
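
A toy experiment makes the circular-analysis problem concrete. The sketch below assumes pure-noise features and random labels, so no real signal exists; a 1-nearest-neighbour classifier (chosen only because it memorises its training data) still looks perfect when scored on the data it was fitted to, and falls to chance on a held-out split standing in for the blinded test set.

```python
import numpy as np

def one_nn_predict(X_train, y_train, X_query):
    """Label each query point with the label of its nearest training point."""
    d = ((X_query[:, None, :] - X_train[None, :, :]) ** 2).sum(axis=2)
    return y_train[d.argmin(axis=1)]

rng = np.random.default_rng(42)
X = rng.normal(size=(400, 20))       # features carry no information
y = rng.integers(0, 2, size=400)     # labels are unrelated to X

X_train, y_train = X[:200], y[:200]
X_test, y_test = X[200:], y[200:]    # stands in for the organizers' blinded set

# Circular analysis: evaluate on the same samples used for fitting.
circular_acc = (one_nn_predict(X_train, y_train, X_train) == y_train).mean()
# Blinded assessment: evaluate on samples the model has never seen.
blinded_acc = (one_nn_predict(X_train, y_train, X_test) == y_test).mean()

print(f"circular accuracy: {circular_acc:.2f}")
print(f"blinded accuracy:  {blinded_acc:.2f}")
```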

DREAM challenges provide an indispensable infrastructure for establishing rigor in computational biology. By fostering collaborative competition on standardized, blinded problems, they generate not only state-of-the-art solutions but also robust community benchmarks and consensus on best practices. The adoption of DREAM principles—pre-registration, data and code sharing, containerization, and blinded evaluation—is fundamental for translating computational predictions into reliable biological knowledge and clinical applications.

How to Tackle a DREAM Challenge: A Step-by-Step Methodology for Researchers

In the landscape of computational biology and translational medicine, competitive data analysis challenges, such as those organized by the DREAM (Dialogue for Reverse Engineering Assessments and Methods) initiative, serve as a powerful engine for benchmarking community research. These challenges distill complex biological questions into structured problems, fostering innovation and establishing robust benchmarks. For the individual researcher, the critical first step is to effectively decode challenge announcements to identify opportunities that align with one's technical expertise and research goals. This guide provides a technical framework for this assessment process.

The DREAM Framework and Its Role in Benchmarking

DREAM challenges are designed as rigorous, crowd-sourced competitions that address fundamental questions in systems biology and medicine. They provide a neutral ground for benchmarking algorithms and methodologies on gold-standard, often newly generated, datasets. The overarching thesis is that such community-driven benchmarking accelerates research transparency, identifies best-in-class methods, and reduces the "reproducibility crisis" in computational fields.

Table 1: Key Characteristics of DREAM Challenges for Benchmarking

| Characteristic | Description | Benchmarking Impact |

|---|---|---|

| Pre-registration | Protocols and evaluation metrics are defined before data analysis begins. | Eliminates metric hacking and ensures fair comparison. |

| Blinded Validation | Hold-out validation datasets are kept secret by challenge organizers. | Provides unbiased assessment of generalizability. |

| Scalable Evaluation | Automated scoring pipelines assess all submissions uniformly. | Enables large-scale, consistent benchmarking across dozens of methods. |

| Open Science | Winning methods are often published and code is made open-source. | Creates a persistent benchmark for future method development. |

Deconstructing a Challenge Announcement: A Technical Guide

Problem Type Classification

The first task is to categorize the core problem, which dictates the required methodological toolkit.

Diagram 1: Challenge Problem-Type Decision Tree

Evaluation Metric Analysis

The evaluation metric is the ultimate guide for algorithm development. Understanding its mathematical formulation is non-negotiable.

Table 2: Common DREAM Evaluation Metrics and Their Demands

| Metric | Problem Type | Formula (Simplified) | Technical Implication for Participant |

|---|---|---|---|

| Area Under the ROC Curve (AUC-ROC) | Binary Classification | ∫₀¹ TPR(FPR) dFPR | Optimizes ranking of predictions; insensitive to class imbalance. |

| Precision-Recall AUC (AUPRC) | Binary Classification, Imbalanced Data | ∫₀¹ Precision(Recall) dRecall | Focuses on performance on the positive class; preferred for skewed datasets. |

| Concordance Index (C-index) | Survival Analysis/Regression | (∑ᵢ∑ⱼ I[Yᵢ < Yⱼ] · I[Ŷᵢ < Ŷⱼ]) / (∑ᵢ∑ⱼ I[Yᵢ < Yⱼ]) | Measures if predictions correctly order pairs of outcomes. |

| Mean Squared Error (MSE) | Regression | (1/n) ∑ (Yᵢ - Ŷᵢ)² | Heavily penalizes large errors; assumes Gaussian noise. |

| Normalized Mutual Information (NMI) | Clustering/Network | 2 * I(X;Y) / [H(X) + H(Y)] | Quantifies overlap between predicted and true clusters on a 0-1 scale; not adjusted for chance agreement. |
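
Implementing a metric by hand is a quick way to check that you understand it before optimizing for it. The following is a minimal NumPy sketch of three of the metrics in the table, not any challenge's official scorer. It assumes no censoring for the C-index and treats predictions as predicted outcomes, so a pair is concordant when the prediction order matches the outcome order; official scoring scripts may handle ties and censoring differently.

```python
import numpy as np

def auc_roc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    y_true = np.asarray(y_true, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    diff = scores[y_true][:, None] - scores[~y_true][None, :]
    # Tied pairs receive half credit.
    return float(((diff > 0) + 0.5 * (diff == 0)).mean())

def c_index(outcomes, preds):
    """Share of comparable pairs (Y_i < Y_j) that the predictions order the same way."""
    outcomes = np.asarray(outcomes, dtype=float)
    preds = np.asarray(preds, dtype=float)
    comparable = outcomes[:, None] < outcomes[None, :]
    concordant = preds[:, None] < preds[None, :]
    return float((comparable & concordant).sum() / comparable.sum())

def mse(y_true, y_pred):
    """Mean squared error; large residuals dominate because of the square."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

print(auc_roc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
print(c_index([1, 2, 3], [1, 3, 2]))
print(mse([1, 2, 3], [1, 2, 5]))
```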

Data Landscape Assessment

A technical deep-dive into the provided data is essential. The protocol below outlines a systematic approach.

Experimental Protocol 1: Pre-Challenge Data Sufficiency Assessment

Objective: To determine if the provided training data has adequate signal and coverage for the stated problem. Methodology:

- Dimensionality & Sparsity Analysis: Calculate `(n_samples, n_features)` and the matrix sparsity (percentage of zero/non-measured values). For multi-omics, perform per-modality.

- Label Distribution: For classification, compute class balance. For survival data, plot the Kaplan-Meier curve of the training cohort event distribution.

- Batch Effect Detection (if metadata provided): Using known batch covariates (e.g., sequencing run, lab site), perform Principal Component Analysis (PCA). Visualize the first two principal components, colored by batch. A strong batch effect necessitates integration methods.

- Positive Control Analysis: If the challenge provides known positive/negative control pairs (e.g., validated gene-disease links), test if a simple baseline model (e.g., cosine similarity on features) can retrieve them. Failure suggests feature engineering is critical.
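
The first three checks of this protocol can be sketched in a few lines of NumPy. The function name `assess_training_data`, the report keys, and the batch indicator (the share of PC1 variance explained by batch means) are illustrative assumptions rather than a DREAM-provided tool; the simulated data stands in for a real training set.

```python
import numpy as np

def assess_training_data(X, y, batch):
    """Summarise shape, sparsity, class balance, and a crude batch-effect score."""
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    # Zeros and NaNs are both treated as "not measured" for the sparsity estimate.
    sparsity = float(np.mean((X == 0) | np.isnan(X)))
    classes, counts = np.unique(y, return_counts=True)
    class_balance = dict(zip(classes.tolist(), (counts / counts.sum()).tolist()))
    # PCA via SVD of the column-centred matrix (NaNs replaced by column means).
    X_filled = np.where(np.isnan(X), np.nanmean(X, axis=0), X)
    Xc = X_filled - X_filled.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    pcs = U[:, :2] * S[:2]                 # coordinates to plot, coloured by batch
    # Share of PC1 variance explained by batch membership (between-group / total).
    pc1 = pcs[:, 0]
    between = 0.0
    for b in np.unique(batch):
        members = pc1[batch == b]
        between += members.size * (members.mean() - pc1.mean()) ** 2
    batch_r2 = float(between / ((pc1 - pc1.mean()) ** 2).sum())
    return {"shape": X.shape, "sparsity": sparsity,
            "class_balance": class_balance, "pcs": pcs, "batch_r2": batch_r2}

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 30))
batch = np.array(["A"] * 30 + ["B"] * 30)
X[30:] += 3.0                              # simulated batch shift
X[0, 0] = 0.0                              # one zero entry
X[1, 1] = np.nan                           # one missing entry
y = np.tile([0, 1], 30)

report = assess_training_data(X, y, batch)
print(report["shape"], report["sparsity"], report["class_balance"], report["batch_r2"])
```

A `batch_r2` close to 1 means the leading component mostly separates batches, which is the situation where integration methods are needed.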

Diagram 2: Pre-Challenge Data Assessment Workflow

The Scientist's Toolkit: Research Reagent Solutions

Success in DREAM challenges often hinges on the effective use of specific computational tools and biological resources.

Table 3: Essential Toolkit for DREAM Challenge Participation

| Category | Item/Resource | Function & Relevance |

|---|---|---|

| Core Analysis | Scikit-learn (Python) / Caret (R) | Provides standardized implementations of hundreds of machine learning models, ensuring benchmark comparisons start from a common foundation. |

| Deep Learning | PyTorch or TensorFlow/Keras | Essential for designing novel neural architectures for complex data (e.g., sequences, graphs, images). |

| Omics Data Processing | Bioconductor (R) / Scanpy (Python) | Curated packages for normalization, transformation, and analysis of genomic, transcriptomic, and single-cell data. |

| Network Analysis | igraph / NetworkX | Libraries for constructing, visualizing, and analyzing biological networks (e.g., protein-protein interaction). |

| Benchmarking | MLflow / Weights & Biases | Tracks hundreds of hyperparameter experiments, code versions, and resulting metrics, critical for reproducible method development. |

| Biological Prior Knowledge | STRING Database, KEGG, MSigDB | Provide gene-gene interaction networks, pathway maps, and gene sets for incorporating biological constraints into models. |

| Containerization | Docker / Singularity | Ensures computational environment and analysis pipeline are perfectly reproducible for challenge organizers and the community. |

Strategic Alignment: Mapping Your Expertise

The final step is a candid mapping of the challenge's demands against your team's capabilities. This involves assessing requirements in data volume, computational scale, and biological domain knowledge.

Diagram 3: Strategic Expertise Alignment Matrix

Decoding a DREAM challenge announcement is a structured analytical exercise. By deconstructing the problem type, scrutinizing the evaluation metric, rigorously assessing the data landscape, and honestly aligning these demands with your team's toolkit and expertise, you can make an informed decision. This process ensures that your participation is not only competitive but also contributes meaningfully to the broader thesis of community-driven benchmarking, advancing robust and reproducible research in computational biology.

Within the context of benchmarking community-driven biomedical research, the DREAM (Dialogue for Reverse Engineering Assessments and Methods) Challenges provide a critical framework. These challenges rely on standardized datasets and formats to ensure reproducibility, fairness, and rigorous comparison of computational methods across diverse fields like genomics, drug sensitivity prediction, and signaling network inference. This guide details the technical processes for accessing, understanding, and preprocessing these cornerstone resources.

DREAM challenges produce structured, well-annotated datasets, often hosted on Synapse, a collaborative research platform. The table below summarizes key quantitative attributes of recent, representative challenges.

Table 1: Characteristics of Recent DREAM Challenge Datasets

| Challenge Name / Focus Area | Primary Data Types | Typical Sample Size (Range) | Key Preprocessing Needs | Primary Host Platform |

|---|---|---|---|---|

| DREAM SMC 2022 (Single Cell Multi-omics) | scRNA-seq, scATAC-seq, Protein Abundance | 10,000 - 200,000 cells | Batch correction, modality alignment, sparse matrix handling | Synapse, Figshare |

| DREAM NCI-MARCO (Drug Response) | Cell line genomic data (WES, RNA), drug SMILES, IC50 values | 100 - 500 cell lines, 100+ compounds | Missing value imputation, feature scaling, chemical descriptor generation | Synapse |

| DREAM HiRes Spatial Proteomics | Multiplexed imaging (CyCIF, IMC), spatial coordinates | 10 - 50 tissue regions, 40+ protein channels | Image registration, channel normalization, cell segmentation features | Synapse |

Access Protocol:

- Registration: Create an account on the Sage Bionetworks Synapse platform.

- Challenge Location: Navigate to the specific DREAM challenge project page (e.g., `synXYZ1234`).

- Terms of Use: Accept the challenge-specific data use agreement.

- Download: Utilize the Synapse Python or R client for programmatic, version-controlled data access.
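
The download step might look like the sketch below, which uses the Synapse Python client (`synapseclient`). It assumes a personal access token is stored in an environment variable named `SYNAPSE_AUTH_TOKEN` and that the data use agreement has already been accepted in the browser; the helper name `download_challenge_file` is hypothetical, and `synXYZ1234` is the placeholder ID from the list above, not a real entity.

```python
import os

def download_challenge_file(synapse_id, download_dir="data"):
    """Download one Synapse file entity and return its local path."""
    # Imported lazily so this module loads even where the client is not installed.
    import synapseclient

    syn = synapseclient.Synapse()
    # Assumption: a personal access token is available in this environment variable.
    syn.login(authToken=os.environ["SYNAPSE_AUTH_TOKEN"])
    entity = syn.get(synapse_id, downloadLocation=download_dir)
    return entity.path

# Example call (requires network access, a valid token, and a real Synapse ID):
# local_path = download_challenge_file("synXYZ1234")
```

Recording the Synapse ID and entity version alongside your analysis outputs keeps the data provenance traceable.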

Standardized Formats and Schemas

DREAM datasets enforce consistent schemas to enable cross-team comparison.

Table 2: Common DREAM File Formats and Schemas

| File Type | Format | Schema Description | Validation Tool |

|---|---|---|---|

| Phenotype/Response Data | CSV/TSV | Rows: samples (e.g., cell lines, patients). Columns: measured outcomes (e.g., IC50, survival status). Mandatory 'SampleID' column. | Custom challenge-provided validator script |

| Molecular Features | CSV/TSV, HDF5 | Rows: samples. Columns: features (e.g., gene expression, mutation status). Must align with Phenotype file SampleID order. | Pandas/Tidyverse checks |

| Experimental Metadata | JSON, CSV | Describes experimental batches, reagent lots, sequencing platform details. Linked via unique keys to primary data. | JSON schema validators |

| Submission File | CSV | Strict column structure for predictions (e.g., 'SampleID', 'PredictedProbability', 'TeamID'). Essential for scoring. | Official challenge evaluation script |

Preprocessing Workflows and Experimental Protocols

The following methodologies are essential for preparing DREAM data for analysis.

Protocol: Normalization and Batch Effect Correction for Genomic Data

Aim: Remove technical variation while preserving biological signal. Reagents/Materials: Raw count matrix (RNA-seq), sample metadata file. Procedure:

- Filtering: Remove genes with zero counts across all samples.

- Normalization: Apply a scaling method (e.g., DESeq2's median of ratios, or TMM for bulk RNA-seq; scran for single-cell).

- Batch Correction: If metadata indicates multiple batches, apply ComBat (via `sva` package in R) or Harmony (for single-cell data) using batch as a covariate.

- Validation: Perform PCA pre- and post-correction; batch clusters should integrate.
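
The filtering and normalization steps can be sketched in NumPy as follows. This is a simplified re-implementation of the median-of-ratios idea for illustration, not DESeq2 itself, and it assumes a genes x samples raw count matrix. Batch correction is left out on purpose because it depends on the R or Python packages named above.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """One size factor per sample from a genes x samples count matrix."""
    counts = np.asarray(counts, dtype=float)
    # Only genes with non-zero counts in every sample have a finite log geometric mean.
    expressed = (counts > 0).all(axis=1)
    log_counts = np.log(counts[expressed])
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)   # per-gene reference
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))

def filter_and_normalize(counts):
    """Drop all-zero genes, then divide each sample by its size factor."""
    counts = np.asarray(counts, dtype=float)
    kept = counts.sum(axis=1) > 0
    filtered = counts[kept]
    size_factors = median_of_ratios_size_factors(filtered)
    return filtered / size_factors, size_factors, kept

# Toy matrix: sample 2 was sequenced twice as deeply as sample 1.
raw = np.array([[10, 20],
                [100, 200],
                [0, 0],
                [5, 10]])
normalized, size_factors, kept = filter_and_normalize(raw)
print(size_factors)
print(normalized)
```

After normalization the two toy samples have identical values, since their only difference was sequencing depth.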

Protocol: Handling Drug Response Data (IC50/EC50)

Aim: Generate consistent, comparable dose-response metrics. Reagents/Materials: Raw dose-response measurements (e.g., fluorescence values across concentrations), drug concentration log file. Procedure:

- Curve Fitting: For each sample-drug pair, fit a 4-parameter logistic (4PL) model: `y = Bottom + (Top-Bottom)/(1+10^((LogIC50-x)*HillSlope))`.

- Outlier Capping: Limit the range of fitted IC50 values to the tested concentration range (e.g., minimum and maximum log concentration).

- Transform: Convert final IC50 values to -log10(IC50) (pIC50) for use in linear models.
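
The three steps can be sketched with `scipy.optimize.curve_fit`. The example assumes that `x` is log10 of the molar concentration (so pIC50 is simply the negated, capped LogIC50) and that the response decreases with dose, which is why the initial Hill slope guess is negative. The synthetic data and starting values are illustrative choices.

```python
import numpy as np
from scipy.optimize import curve_fit

def four_pl(x, bottom, top, log_ic50, hill_slope):
    """4PL model from the protocol; x is log10(concentration)."""
    return bottom + (top - bottom) / (1.0 + 10.0 ** ((log_ic50 - x) * hill_slope))

def fit_pic50(log_conc, response):
    """Fit the 4PL curve, cap LogIC50 to the tested range, and return pIC50."""
    log_conc = np.asarray(log_conc, dtype=float)
    response = np.asarray(response, dtype=float)
    # Starting values: observed plateau estimates, mid-range potency, inhibitory slope.
    p0 = [response.min(), response.max(), np.median(log_conc), -1.0]
    params, _ = curve_fit(four_pl, log_conc, response, p0=p0, maxfev=10000)
    # Outlier capping: extrapolated potencies are clipped to the tested range.
    log_ic50 = float(np.clip(params[2], log_conc.min(), log_conc.max()))
    return -log_ic50, params

rng = np.random.default_rng(1)
log_conc = np.linspace(-9.0, -4.0, 11)            # 1 nM to 100 uM, in log10 M
true_params = (5.0, 100.0, -6.2, -1.2)            # bottom, top, LogIC50, HillSlope
response = four_pl(log_conc, *true_params) + rng.normal(0.0, 1.0, log_conc.size)

pic50, fitted = fit_pic50(log_conc, response)
print(f"estimated pIC50: {pic50:.2f}")
```

For flat or very noisy curves the fit may not converge or may hit the caps; such pairs are usually flagged rather than silently kept.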

Protocol: Generating a Machine Learning-Ready Feature Matrix

Aim: Integrate heterogeneous data types into a single, clean numerical matrix. Procedure:

- Genomic Features: Use normalized, batch-corrected expression values. Apply variance filtering (keep top N genes by variance).

- Mutation Data: Encode as binary (1/0 for mutated/not) or as oncogenic impact scores (e.g., from OncoKB).

- Chemical Descriptors: For drug features, use RDKit to compute molecular fingerprints (e.g., Morgan fingerprints) from SMILES strings.

- Alignment: Ensure all feature matrices share a common set of samples (SampleID). Concatenate features horizontally.

- Imputation: For missing numeric values, use k-nearest neighbors (KNN) imputation (e.g., `knnImpute` from R's `caret`).

- Scaling: Standardize all features to have zero mean and unit variance (StandardScaler in sklearn).
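
A compact version of this protocol is sketched below using scikit-learn's `KNNImputer` (standing in for the R `knnImpute` mentioned above) and `StandardScaler`. Chemical descriptors are omitted to avoid an RDKit dependency; the function name, the toy arrays, and the values of `top_n_genes` and `k` are illustrative assumptions.

```python
import numpy as np
from sklearn.impute import KNNImputer
from sklearn.preprocessing import StandardScaler

def build_feature_matrix(expr, expr_ids, mut, mut_ids, top_n_genes=3, k=2):
    """Align, filter, encode, impute, and scale samples x features inputs."""
    # Alignment: keep samples present in both tables, in one fixed order.
    shared = sorted(set(expr_ids) & set(mut_ids))
    expr = np.asarray(expr, dtype=float)[[expr_ids.index(s) for s in shared]]
    mut = np.asarray(mut, dtype=float)[[mut_ids.index(s) for s in shared]]
    # Variance filter: keep the top-N most variable expression features.
    top = np.sort(np.argsort(np.nanvar(expr, axis=0))[::-1][:top_n_genes])
    expr = expr[:, top]
    # Mutation encoding: 1 if mutated, 0 otherwise.
    mut_binary = (mut > 0).astype(float)
    X = np.hstack([expr, mut_binary])
    X = KNNImputer(n_neighbors=k).fit_transform(X)   # fill missing values
    X = StandardScaler().fit_transform(X)            # zero mean, unit variance
    return X, shared

rng = np.random.default_rng(0)
expr_ids = ["s1", "s2", "s3", "s4", "s5"]
mut_ids = ["s5", "s4", "s3", "s2", "s0"]             # different order and coverage
expr = rng.normal(size=(5, 6)) * np.arange(1, 7)     # columns with growing spread
expr[2, 5] = np.nan                                  # one missing measurement
mut = np.array([[1, 0], [0, 0], [1, 1], [0, 1], [1, 0]])

X, sample_ids = build_feature_matrix(expr, expr_ids, mut, mut_ids)
print(sample_ids, X.shape)
```

In a real pipeline the imputer and scaler would be fitted on training samples only and then applied to held-out samples, so that no information leaks across the split.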

Title: DREAM Data Preprocessing Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools for DREAM Data Handling

| Item | Function | Example/Resource |

|---|---|---|

| Synapse Clients | Programmatic, authenticated access to DREAM datasets. | synapseclient (Python), synapser (R) |

| Data Validation Scripts | Verify submission format compliance and data schema. | Challenge-specific scripts from DREAM organizers. |

| Batch Correction Algorithms | Remove unwanted technical variation from high-throughput data. | ComBat (sva R package), Harmony (harmony R/Python). |

| Curve Fitting Library | Model dose-response relationships to derive IC50/pIC50. | drc R package, scipy.optimize.curve_fit (Python). |

| Chemical Informatics Toolkit | Compute molecular features from drug structures (SMILES). | RDKit (Python/C++). |

| Sparse Matrix Handler | Efficiently manipulate large, sparse single-cell genomics data. | scipy.sparse (Python), Matrix (R). |

| Imputation Package | Address missing data in feature matrices. | fancyimpute (Python), mice (R). |

Pathway Diagram: DREAM's Role in Community Benchmarking

Title: DREAM Challenge Community Benchmarking Cycle

Within the rigorous framework of DREAM (Dialogue for Reverse Engineering Assessments and Methods) challenges, algorithmic performance is not a general property but a specific measure against meticulously designed evaluation metrics. These challenges, serving as a benchmarking cornerstone for the biomedical and systems biology community, establish that success is defined a priori by the metric. This whitepaper details a technical methodology for tailoring model selection and development to these decisive criteria, moving beyond generic accuracy to achieve challenge-specific superiority.

The Primacy of the Evaluation Metric

DREAM challenges define success through quantitative metrics that reflect the biological or clinical question. Optimizing for Mean Squared Error (MSE) versus Area Under the Precision-Recall Curve (AUPRC) can lead to fundamentally different model architectures and outputs.

Table 1: Common DREAM Challenge Metrics and Their Implications for Model Design

| Metric | Primary Use Case | Model Development Implication |

|---|---|---|

| Area Under the ROC Curve (AUC) | Balanced binary classification; overall ranking performance. | Rewards ranking positives above negatives across all thresholds; because it depends only on score order, calibration does not affect it. Less sensitive to class imbalance. |

| Area Under the PR Curve (AUPRC) | Binary classification with high class imbalance (e.g., drug-target interaction). | Focuses model refinement on correct identification of the rare positive class; favors high-precision models. |

| Pearson/Spearman Correlation | Continuous outcome prediction (e.g., gene expression, drug sensitivity). | Drives models to maintain ordinal or linear relationships rather than absolute accuracy. |

| Normalized Mutual Information (NMI) | Clustering tasks (e.g., patient stratification). | Evaluates shared information between clusters, insensitive to label permutation. Guides feature learning for disentangled representations. |

| Probabilistic Concordance Index (C-index) | Survival analysis, time-to-event data. | Requires models to correctly rank event times, not predict them absolutely. |

Methodology: A Two-Phase Development Pipeline

The core protocol involves a feedback loop between metric-aware objective design and rigorous validation.

Phase 1: Metric Integration into the Objective Function

- Direct Optimization: Where differentiable, use the evaluation metric (or a smooth surrogate) as the loss function (e.g., using a differentiable approximation of AUPRC).

- Composite Loss: Combine a standard loss (e.g., BCE) with a metric-specific regularizer (e.g., a penalty for pairwise ranking errors to improve C-index).

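- Post-processing Calibration: Train a secondary model (e.g., Platt scaling, isotonic regression) to calibrate raw outputs. This helps metrics that depend on the score values themselves (e.g., MSE, log loss); a strictly monotone recalibration such as Platt scaling leaves rank-based metrics such as AUC-ROC unchanged.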

Phase 2: Nested Cross-Validation Protocol To prevent overfitting to the public leaderboard, employ a rigorous internal validation schema mirroring the challenge's final evaluation.

Workflow: Nested CV for Metric-Focused Model Selection
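
Nested cross-validation with the challenge metric as the selection criterion can be sketched with scikit-learn. The example assumes AUPRC (scikit-learn's `average_precision` scorer) as the metric, a synthetic imbalanced dataset, and logistic regression as a stand-in model; the fold counts and the grid over `C` are illustrative.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

# Synthetic stand-in for challenge training data with roughly 10% positives.
X, y = make_classification(n_samples=400, n_features=20, n_informative=5,
                           weights=[0.9, 0.1], random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)

# Inner loop: pick the hyperparameter that maximises the challenge metric.
search = GridSearchCV(
    LogisticRegression(max_iter=1000),
    param_grid={"C": [0.01, 0.1, 1.0, 10.0]},
    scoring="average_precision",
    cv=inner_cv,
)

# Outer loop: estimate how the whole tuning procedure generalises.
outer_scores = cross_val_score(search, X, y, scoring="average_precision", cv=outer_cv)
print(f"nested-CV AUPRC: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

Because the outer folds never influence hyperparameter selection, the outer score is a less optimistic estimate than the best inner-loop score or a public leaderboard position.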

Case Study: Optimizing for AUPRC in a Drug Synergy Challenge

A DREAM challenge tasked participants with predicting synergistic drug combinations from molecular features. The evaluation metric was AUPRC due to extreme sparsity of synergistic pairs (<2% positive rate).

Experimental Protocol:

- Data: GENEx drug combination screening data (feature matrices: cell line genomics, drug chemical descriptors).

- Baseline Models: Random Forest (RF), Gradient Boosting (XGB), Multi-Layer Perceptron (MLP) with Binary Cross-Entropy (BCE) loss.

- Tailored Model: A siamese neural network with a custom loss function.

- Custom Loss Function: `Loss = α * BCE + (1-α) * Pairwise Hinge Loss`. The pairwise hinge loss was computed on batches to penalize cases where a negative sample had a higher predicted score than a positive sample, directly targeting the ranking component of AUPRC.

- Validation: Nested 5-fold CV, with the inner loop tuning `α` and architectural hyperparameters.
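
The composite loss can be written out explicitly. The sketch below computes only the forward value in NumPy for one batch; it assumes the model outputs logits and uses a hinge margin of 1.0, which the protocol does not specify. In practice the same expression would be written in a framework with automatic differentiation.

```python
import numpy as np

def bce_loss(logits, labels):
    """Binary cross-entropy on logits, in the stable form log(1 + e^z) - y*z."""
    return float(np.mean(np.logaddexp(0.0, logits) - labels * logits))

def pairwise_hinge_loss(logits, labels, margin=1.0):
    """Penalise every (positive, negative) pair whose score gap is below the margin."""
    pos = logits[labels == 1]
    neg = logits[labels == 0]
    if pos.size == 0 or neg.size == 0:
        return 0.0                        # no pairs can be formed in this batch
    gaps = pos[:, None] - neg[None, :]
    return float(np.mean(np.maximum(0.0, margin - gaps)))

def composite_loss(logits, labels, alpha=0.5, margin=1.0):
    """Loss = alpha * BCE + (1 - alpha) * pairwise hinge."""
    logits = np.asarray(logits, dtype=float)
    labels = np.asarray(labels, dtype=float)
    return (alpha * bce_loss(logits, labels)
            + (1.0 - alpha) * pairwise_hinge_loss(logits, labels, margin))

well_ranked = composite_loss([3.0, -3.0], [1, 0])   # positive scored above negative
mis_ranked = composite_loss([-3.0, 3.0], [1, 0])    # negative scored above positive
print(well_ranked, mis_ranked)
```

With rare positives, many batches contain no positive sample at all, so the hinge term contributes nothing there; batch construction therefore matters as much as the value of `α`.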

Table 2: Model Performance Comparison (Internal Validation)

| Model | Loss Function | Mean AUPRC | Δ vs. Baseline |

|---|---|---|---|

| Random Forest | Gini Impurity | 0.21 | Baseline |

| XGBoost | Logistic Loss | 0.25 | +0.04 |

| Standard MLP | Binary Cross-Entropy | 0.27 | +0.06 |

| Siamese NN | Composite (BCE + Pairwise) | 0.35 | +0.14 |

Mechanism Diagram: Siamese Network for Drug Pair Ranking

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Metric-Driven Algorithm Development

| Item / Resource | Function / Purpose |

|---|---|

| scikit-learn | Provides standard metrics, robust cross-validation splitters, and baseline models for rapid prototyping. |

| TensorFlow / PyTorch | Enables custom loss function implementation, gradient-based optimization of differentiable surrogates for non-differentiable metrics, and complex model architectures. |

| AUPRC / C-index Differentiable Surrogates (e.g., tf.sort, torch.topk) | Libraries or custom code to create differentiable approximations of ranking-based metrics for direct gradient flow. |

| Optuna or Ray Tune | Frameworks for efficient hyperparameter optimization, crucial for tuning composite loss weights and model parameters within nested CV. |

| DREAM Challenge Scaffolds (Synapse) | Provides standardized data ingestion, pre-processing pipelines, and metric calculation scripts to ensure local validation matches final evaluation. |

| SHAP / LIME | Model interpretability tools to ensure metric-optimized models retain biological plausibility in feature importance. |

In the context of DREAM challenge benchmarking, the algorithm is subservient to the metric. Winning solutions systematically integrate the evaluation criterion into every stage of the development pipeline—from loss function design through hyperparameter tuning to final model selection. This guide outlines a reproducible framework for this alignment, emphasizing that true performance is measured not by a model's intrinsic complexity, but by its precise fidelity to the challenge's defined biological question. This metric-first philosophy drives both competitive success and scientifically translatable computational research.

Within the DREAM (Dialogue for Reverse Engineering Assessments and Methods) Challenges framework, the Synapse platform serves as the central hub for collaborative computational research, enabling rigorous benchmarking of community-driven predictions in biomedicine. This technical guide details the operational workflow of the submission portal and the essential validation protocols that underpin the integrity of challenge outcomes. The process ensures reproducibility, fair assessment, and translational relevance for researchers, scientists, and drug development professionals.

The Synapse Submission Ecosystem

Platform Architecture & Access

Synapse is a collaborative, open-source platform for data-intensive research. Access is governed through individual authenticated accounts. All DREAM challenge projects are organized within a structured workspace, containing specific submission "Evaluation Queues."

Table 1: Core Synapse Entities for DREAM Challenges

| Entity Type | Function | Example in DREAM |

|---|---|---|

| Project | Container for a specific challenge. Houses data, wiki, discussion, and queues. | syn1234567 (e.g., NCI-CPTAC Proteogenomic Challenge) |

| Folder | Organizes data and documents within a project. | /training_data, /goldstandard |

| File | Actual data or prediction file submitted. | team_alpha_predictions.csv |

| Evaluation Queue | Managed submission portal. Receives, stores, and triggers scoring on submissions. | Challenge_1_Prediction_Queue |

| Wiki | Central documentation for rules, data schema, and timelines. | Challenge Overview page |

Submission Workflow

The submission process follows a strict sequence to ensure consistency and automated validation.

Diagram Title: DREAM Challenge Submission Workflow

Validation Protocols: Pre-Scoring Integrity Gates

Technical Validation

Automated checks run immediately upon file submission to an Evaluation Queue. These are non-substantive checks focused on format and schema.

Table 2: Technical Validation Checks

| Check Parameter | Purpose | Typical Error Message |

|---|---|---|

| File Format | Ensures correct file type (e.g., .csv, .tsv). | "Invalid file extension." |

| Column Headers | Verifies exact required column names and order. | "Missing required column: 'Patient_ID'." |

| Data Types | Validates that columns contain expected data types (float, int, string). | "Column 'score' contains non-numeric values." |

| Row Count | Confirms submission has the expected number of rows (e.g., one per test sample). | "Submitted row count (950) does not match expected (1000)." |

| Unique IDs | Ensures all identifiers are unique where required. | "Duplicate values in 'SampleID' column." |

| NA Handling | Checks for allowable missing value representations. | "Disallowed NA value found." |
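
Running the same kind of checks locally before submitting avoids wasting submission attempts. The sketch below uses only the standard library and mirrors several rows of the table; the column names `SampleID` and `PredictedProbability` are taken from the example submission schema earlier in this guide, and the function itself is a hypothetical stand-in for a challenge's official validator.

```python
import csv
import io
import math

def validate_submission(csv_text, expected_ids,
                        id_col="SampleID", score_col="PredictedProbability"):
    """Return a list of error messages; an empty list means the file passed."""
    errors = []
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []
    for col in (id_col, score_col):
        if col not in header:
            errors.append(f"Missing required column: '{col}'.")
    if errors:
        return errors                      # later checks need both columns
    rows = list(reader)
    if len(rows) != len(expected_ids):
        errors.append(f"Submitted row count ({len(rows)}) does not match "
                      f"expected ({len(expected_ids)}).")
    ids = [row[id_col] for row in rows]
    if len(set(ids)) != len(ids):
        errors.append(f"Duplicate values in '{id_col}' column.")
    for row in rows:
        value = (row[score_col] or "").strip()
        if value == "" or value.upper() in {"NA", "NAN"}:
            errors.append("Disallowed NA value found.")
            break
        try:
            if not math.isfinite(float(value)):
                raise ValueError(value)
        except ValueError:
            errors.append(f"Column '{score_col}' contains non-numeric values.")
            break
    return errors

good = "SampleID,PredictedProbability\na,0.1\nb,0.9\n"
bad = "SampleID,PredictedProbability\na,0.1\na,high\n"
print(validate_submission(good, ["a", "b"]))
print(validate_submission(bad, ["a", "b"]))
```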

Experimental & Methodological Validation Context

For challenges involving wet-lab data generation (e.g., drug sensitivity, proteomics), the underlying experimental protocols define the biological meaning and noise model of the data, directly impacting benchmark validity.

Example Protocol 1: High-Throughput Drug Sensitivity Screening (e.g., CTD² Dashboard)

- Methodology: Cell viability assay using ATP quantification (CellTiter-Glo).

- Procedure:

- Seed cells in 384-well plates.

- Following incubation, add compound libraries via pin-tool transfer.

- Incubate for 72-120 hours.

- Add CellTiter-Glo reagent, incubate for 10 minutes, and measure luminescence.

- Calculate % viability relative to DMSO (negative control) and bare-well (positive control).

- Key Validation: Z'-factor calculation for each plate (≥ 0.4 is acceptable). Formula: Z' = 1 - [3*(σp + σn) / |μp - μn|], where σ/μ are standard deviation/mean of positive (p) and negative (n) controls.
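
The plate-level quality check and the viability normalization are simple enough to write down directly. This sketch follows the Z' formula above; the use of the sample standard deviation (`ddof=1`) and the toy luminescence values are assumptions.

```python
import numpy as np

def z_prime(pos_controls, neg_controls):
    """Z' = 1 - 3 * (sd_p + sd_n) / |mean_p - mean_n| for one plate."""
    pos = np.asarray(pos_controls, dtype=float)
    neg = np.asarray(neg_controls, dtype=float)
    spread = pos.std(ddof=1) + neg.std(ddof=1)
    return float(1.0 - 3.0 * spread / abs(pos.mean() - neg.mean()))

def percent_viability(signal, neg_mean, pos_mean):
    """Scale raw signal so the negative control is 100% and the positive control is 0%."""
    return 100.0 * (signal - pos_mean) / (neg_mean - pos_mean)

pos_wells = [5.0, 10.0, 15.0]       # positive control: background-level signal
neg_wells = [90.0, 100.0, 110.0]    # negative control: DMSO-treated cells

plate_quality = z_prime(pos_wells, neg_wells)
viability = percent_viability(55.0, neg_mean=100.0, pos_mean=10.0)
print(plate_quality, viability)
```

Plates falling below the chosen Z' threshold are typically excluded or repeated before any dose-response fitting.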

Example Protocol 2: Phosphoproteomics Profiling (e.g., PDC)

- Methodology: Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) with TMT labeling.

- Procedure:

- Lyse cells, digest proteins with trypsin.

- Label peptides with Tandem Mass Tag (TMT) reagents.

- Perform phosphopeptide enrichment using TiO₂ or Fe-IMAC beads.

- Fractionate by high-pH reverse-phase chromatography.

- Analyze by LC-MS/MS on an Orbitrap instrument.

- Identify and quantify phosphosites using search engines (e.g., MaxQuant) against a reference proteome.

- Key Validation: False discovery rate (FDR) estimation via target-decoy search (typically ≤ 1% at peptide-spectrum match level).
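
The target-decoy estimate behind this validation step can be sketched as follows. It assumes each peptide-spectrum match (PSM) has a score where higher is better and a flag marking decoy hits, and it estimates FDR at a cutoff as decoys divided by targets above that cutoff. Search engines apply refinements (for example q-value monotonization) that are omitted here, and the function name is hypothetical.

```python
import numpy as np

def accept_at_fdr(scores, is_decoy, max_fdr=0.01):
    """Return (score cutoff, number of accepted target PSMs) at the given FDR."""
    scores = np.asarray(scores, dtype=float)
    is_decoy = np.asarray(is_decoy, dtype=bool)
    order = np.argsort(-scores)                    # best-scoring PSM first
    decoys = np.cumsum(is_decoy[order])
    targets = np.cumsum(~is_decoy[order])
    fdr = decoys / np.maximum(targets, 1)          # estimated FDR at each cutoff
    passing = np.nonzero(fdr <= max_fdr)[0]
    if passing.size == 0:
        return None, 0
    last = passing[-1]                             # loosest cutoff within max_fdr
    return float(scores[order][last]), int(targets[last])

# Toy data: 300 target PSMs with scores 701..1000 and four decoy hits among them.
target_scores = np.arange(701, 1001, dtype=float)
decoy_scores = np.array([850.5, 720.5, 710.5, 705.5])
all_scores = np.concatenate([target_scores, decoy_scores])
decoy_flags = np.concatenate([np.zeros(300, dtype=bool), np.ones(4, dtype=bool)])

cutoff, n_accepted = accept_at_fdr(all_scores, decoy_flags, max_fdr=0.01)
print(cutoff, n_accepted)
```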

Diagram Title: Phosphoproteomics Data Generation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Featured Experiments

| Item | Function | Example Product/Catalog |

|---|---|---|

| CellTiter-Glo 2.0 | Luminescent ATP assay for quantifying viable cells. | Promega, G9242 |

| TMTpro 16-plex | Isobaric mass tags for multiplexed quantitative proteomics. | Thermo Fisher, A44520 |

| Trypsin, MS-grade | Protease for specific protein digestion into peptides. | Promega, V5280 |

| TiO₂ Magnetic Beads | Enrichment of phosphorylated peptides from complex mixtures. | GL Sciences, 5010-21315 |

| High-pH RP Column | Peptide fractionation to reduce sample complexity pre-MS. | Waters, XBridge BEH C18 |

| Decoy Database | Critical for estimating false discovery rates (FDR) in proteomics. | Generated via software (e.g., MaxQuant) |

Post-Submission: Scoring & Benchmarking

Once a submission passes technical validation, it proceeds to the challenge-specific scoring pipeline. This often involves comparison against a held-out gold standard dataset.

Table 4: Common DREAM Challenge Scoring Metrics

| Challenge Type | Primary Metric(s) | Rationale |

|---|---|---|

| Prediction (Continuous) | Pearson Correlation, RMSE | Measures linear association and error magnitude. |

| Prediction (Binary) | AUC-ROC, AUPRC | Assesses ranking and classification performance independent of threshold. |

| Network Inference | AUPRC (vs. reference network), F-score | Evaluates accuracy of inferred edges against a known ground truth. |

| Segmentation (Imaging) | Dice Coefficient, Jaccard Index | Quantifies spatial overlap between predicted and true region. |
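
Several of these scores are short enough to reimplement locally, which helps confirm that local validation matches the leaderboard. The NumPy sketch below covers the overlap and continuous-prediction metrics; the convention of scoring two empty masks as perfect agreement is an assumption, and official scoring scripts take precedence wherever they differ.

```python
import numpy as np

def dice(mask_a, mask_b):
    """Dice coefficient: 2|A and B| / (|A| + |B|) for binary masks."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    total = a.sum() + b.sum()
    if total == 0:
        return 1.0                  # assumption: two empty masks agree perfectly
    return float(2.0 * np.logical_and(a, b).sum() / total)

def jaccard(mask_a, mask_b):
    """Jaccard index: |A and B| / |A or B| for binary masks."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 1.0                  # same convention as in dice()
    return float(np.logical_and(a, b).sum() / union)

def rmse(y_true, y_pred):
    """Root mean squared error, in the units of the outcome."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))

def pearson(y_true, y_pred):
    """Pearson correlation; insensitive to shifts and rescaling of the predictions."""
    return float(np.corrcoef(y_true, y_pred)[0, 1])

print(dice([1, 1, 0, 0], [1, 0, 1, 0]), jaccard([1, 1, 0, 0], [1, 0, 1, 0]))
print(rmse([1, 2, 3], [1, 2, 5]), pearson([1, 2, 3], [2, 4, 6]))
```

The last line shows why Pearson correlation and RMSE are often reported together: predictions that are exactly twice the truth have perfect correlation but non-zero error.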

The Synapse submission portal, governed by layered validation protocols, is the cornerstone of objective benchmarking in DREAM challenges. Technical validations ensure data integrity, while adherence to detailed experimental protocols underpins the biological validity of the benchmark data itself. This rigorous framework allows the community to accurately gauge methodological progress, directly informing future research and drug development efforts.

The DREAM (Dialogue for Reverse Engineering Assessments and Methods) challenges represent a paradigm for community-driven benchmarking in computational biology and drug development. A core thesis emerging from this ecosystem is that the true value of a research output extends beyond the final prediction or model performance; it lies in the complete transparency and reproducibility of the approach. This guide details best practices for moving from initial code to a formalized publication, ensuring your methodology can be validated, reused, and built upon by fellow researchers and professionals.

The Documentation Pipeline: A Structured Workflow

Effective documentation is not an afterthought but an integrated, parallel process to research development. The following diagram outlines the critical stages and their outputs.

Diagram Title: Research Documentation and Sharing Pipeline

Quantitative Benchmarks: Lessons from Recent DREAM Challenges

The following table summarizes key quantitative outcomes and reproducibility metrics from recent DREAM challenges, highlighting the impact of rigorous methodology sharing.

Table 1: Reproducibility and Performance Metrics from Select DREAM Challenges

| Challenge Focus | Key Metric | Top Performing Method | Methods with Fully Reproducible Code (%) | Median Performance Gap (Reproducible vs. Non-Reproducible) |

|---|---|---|---|---|

| Single-Cell Transcriptomics (SC2, 2021) | Cell-type identification (F1-score) | Ensemble Graph Neural Network | 68% | +0.12 F1-score |

| Drug Synergy Prediction (AstraZeneca-Sanger, 2022) | Synergy Score (Pearson Correlation) | Deep Learning with Attention | 45% | +0.08 Correlation |

| Cancer Proteogenomics (NCI-CPTAC, 2023) | Survival Risk Stratification (C-index) | Multi-modal Integration Model | 72% | +0.05 C-index |

| Gene Network Inference (GRN, 2020) | AUPR (Area Under Precision-Recall) | Context-Specific Regression | 61% | +0.15 AUPR |

Detailed Experimental Protocol: A Template for Sharing

This protocol exemplifies the detail required for sharing a computational analysis, modeled on common tasks in DREAM challenges.

Protocol: Bulk RNA-Seq Differential Expression and Pathway Analysis

Objective: To identify differentially expressed genes (DEGs) between two conditions and perform downstream pathway enrichment analysis in a reproducible manner.

1. Software Environment Specification

- Operating System: Ubuntu 22.04 LTS.

- Package Manager: Conda (miniconda3 v24.1.2).

- Environment File: An `environment.yml` file is mandatory, specifying exact versions.

2. Input Data Curation

- Raw Data: Fastq files with associated SRA run identifiers.

- Metadata: A comma-separated (CSV) sample table with columns: `sample_id`, `condition`, `batch`, `sra_run_id`, `fastq_ftp_link`.

- Reference Genome: Specify Ensembl release (e.g., Homo_sapiens.GRCh38.dna.primary_assembly.fa) and annotation GTF file.

3. Core Analysis Steps

- Step 3.1 - Quality Control & Alignment:

  - Tool: `fastp` (v0.23.4) for adapter trimming, `STAR` (v2.7.11b) for alignment.

  - Command Template: `STAR --genomeDir /ref/index --readFilesIn $R1 $R2 --outFileNamePrefix $SAMPLE`.

  - Output: BAM files, read count matrices.

- Step 3.2 - Differential Expression:

  - Tool: `DESeq2` (R package).

  - Key Parameters: `fitType='parametric'`, `test='Wald'`, `alpha=0.05`.

  - Output: DEG list with columns: `gene_id`, `baseMean`, `log2FoldChange`, `lfcSE`, `stat`, `pvalue`, `padj`.

- Step 3.3 - Pathway Enrichment:

  - Tool: `clusterProfiler` (R package).

  - Parameters: `ont='BP'` (Biological Process), `pvalueCutoff=0.01`, `qvalueCutoff=0.05`.

  - Gene Set Database: `org.Hs.eg.db`.

  - Output: Enriched pathways table with `ID`, `Description`, `GeneRatio`, `BgRatio`, `pvalue`, `p.adjust`, `geneID`.

4. Workflow Automation

- Implement using a workflow manager (e.g., Snakemake, Nextflow).

- The Snakemake rule for DESeq2 is shown below, depicting the logical relationships.

Diagram Title: Snakemake Rule for DESeq2 Analysis

The Scientist's Toolkit: Essential Research Reagent Solutions

For the computational experiments typified in DREAM challenges, the "reagents" are software, data, and platforms.

Table 2: Key Research Reagent Solutions for Computational Benchmarking

| Item | Category | Function & Explanation |

|---|---|---|

| Conda/Bioconda | Environment Management | Creates isolated, reproducible software environments with version-pinned dependencies for Python and R bioinformatics packages. |

| Docker | Containerization | Packages code, runtime, system tools, and libraries into a portable image that runs uniformly on any infrastructure, guaranteeing consistency. |

| Snakemake/Nextflow | Workflow Management | Defines and executes scalable, reproducible data analysis pipelines, managing dependencies and parallelization across clusters/cloud. |

| Git/GitHub | Version Control & Collaboration | Tracks all changes to code and documentation, facilitates collaboration, and serves as the primary distribution point for research software. |

| Zenodo | Research Artifact Archive | Provides a DOI for and permanently archives snapshots of code, data, and software releases, linking them to publications. |

| Synapse | Collaborative Platform | (Used by many DREAM challenges) A secure repository for sharing challenge data and code and for communicating with participants, while tracking provenance. |

| Jupyter Book/Quarto | Executable Documentation | Creates interactive, publication-quality websites from computational notebooks (Jupyter/R Markdown) that combine narrative, code, and results. |

Pathway to Publication: Integrating Documentation into the Manuscript

The final publication must seamlessly integrate with the shared artifacts.

- Methods Section: Reference the versioned repository (e.g., a GitHub release tag such as v1.0.2, or the exact commit hash) and container image (e.g., Docker Hub digest).

- Supplementary Materials: Include the executable version of key analysis scripts, not just static code snippets.

- Data Availability Statement: Provide accession codes for all input and final output data in public archives (e.g., GEO, Zenodo).

- Code Availability Statement: Use a persistent link (e.g., a Zenodo DOI linked to the GitHub release) for the core analysis code.

By adopting this comprehensive framework for documentation and sharing, researchers contribute not just a result to the community benchmark, but a fully realized, reproducible approach that accelerates validation, fosters innovation, and strengthens the collective thesis of open, collaborative science embodied by the DREAM challenges.

Overcoming Common Hurdles in DREAM Challenges: Troubleshooting and Performance Tips

In the context of the DREAM (Dialogue for Reverse Engineering Assessments and Methods) challenges, a cornerstone of community-driven benchmarking in computational biology, the paramount challenge is not merely building predictive models but ensuring they generalize robustly to independent, held-out test data. This is especially critical in drug development, where models predicting drug response, biomarker status, or protein-ligand interactions must perform reliably in novel clinical cohorts or experimental settings. Overfitting—where a model learns spurious patterns, noise, or cohort-specific biases from the training data—remains the primary obstacle to translational utility. This whitepaper outlines proven, actionable strategies to mitigate overfitting, ensuring model predictions are both statistically sound and biologically actionable.

Core Principles and Quantitative Evidence

The following table summarizes key strategies and their quantitative impact on model generalization, as evidenced by meta-analyses of DREAM challenge outcomes and contemporary machine learning literature.

Table 1: Anti-Overfitting Strategies and Empirical Performance Impact

| Strategy | Primary Mechanism | Typical Measured Impact on Held-Out AUC/Accuracy | Key Considerations in DREAM Context |

|---|---|---|---|

| Nested Cross-Validation | Isolates model selection & tuning from final performance estimation. | Reduces optimistic bias by 5-15% compared to simple hold-out. | Mandatory for rigorous challenge participation; ensures no data leakage. |

| Regularization (L1/L2) | Penalizes model complexity via weight shrinkage. | Can improve generalization by 3-10% for high-dimensional omics data. | L1 (Lasso) promotes sparsity, aiding biomarker identification. |

| Dropout (for NNs) | Randomly omits units during training, simulating an ensemble. | 2-8% improvement on noisy, small-N biological datasets. | Applied only during training and disabled at inference; the dropout rate must be tuned. |

| Feature Selection / Dimensionality Reduction | Reduces hypothesis space and noise. | Improvement highly variable (0-15%); depends on method. | Univariate filtering can leak information; must be performed inside CV. |

| Data Augmentation | Artificially expands training set via label-preserving transforms. | 4-12% gain in image-based or sequence-based tasks. | Must be biologically plausible (e.g., adding realistic noise). |

| Early Stopping | Halts training when validation performance plateaus. | Prevents rapid performance degradation (often >10% loss). | Requires a large-enough validation set to be a reliable signal. |

| Ensemble Methods (Bagging, Stacking) | Averages predictions from diverse models. | Consistently adds 2-7% over best single model. | Increases computational cost but is a hallmark of winning DREAM entries. |
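The table's warning that univariate filtering can leak information is easiest to appreciate on data with no signal at all. The following sketch, assuming scikit-learn and NumPy are available, compares feature selection done once on the full dataset with selection refit inside each training fold. The matrix sizes, k=20, and the random seed are arbitrary illustrative choices.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 5000))  # pure-noise "omics" matrix, p >> n
y = np.repeat([0, 1], 30)        # labels are unrelated to X

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Leaky: features are chosen using ALL samples, including future test folds.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(
    LogisticRegression(max_iter=1000), X_leaky, y, cv=cv
).mean()

# Honest: the pipeline refits the selector on each training fold only.
honest_model = make_pipeline(
    SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000)
)
honest_acc = cross_val_score(honest_model, X, y, cv=cv).mean()

print(f"leaky CV accuracy:  {leaky_acc:.2f}")
print(f"honest CV accuracy: {honest_acc:.2f}")
```

Because the labels carry no information, any accuracy well above chance in the leaky variant is an artifact of selecting features with knowledge of the test folds.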

Experimental Protocols for Robust Validation

Protocol 1: Nested Cross-Validation for Model Development

- Partition Data: Split the full dataset into K outer folds (e.g., K=5).

- Outer Loop: For each outer fold i:

  a. Designate fold i as the held-out test set.

  b. The remaining K-1 folds constitute the model development set.

  c. Perform an inner L-fold cross-validation (e.g., L=10) on the development set.

  d. Within the inner CV, for each split, fit all pre-processing and feature selection on the inner training portion only, then tune hyperparameters against the inner validation portion.

  e. Train a final model on the entire development set using the optimal hyperparameters, refitting pre-processing and feature selection on that set.

  f. Evaluate this model on the outer held-out test set (fold i).

- Aggregate: The final performance is the average across all K outer test folds. The model for deployment is then retrained on the entire dataset using the same hyperparameter search process.
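Protocol 1 maps fairly directly onto scikit-learn, where a GridSearchCV object plays the role of the inner loop and cross_val_score plays the role of the outer loop. The sketch below assumes scikit-learn is installed and uses a synthetic dataset from make_classification. The pipeline steps, the parameter grid, and the seeds are placeholders rather than recommended settings.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a challenge training set.
X, y = make_classification(
    n_samples=120, n_features=200, n_informative=10, random_state=0
)

# All pre-processing and feature selection live inside the pipeline,
# so they are refit on the training portion of every split.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif)),
    ("clf", LogisticRegression(max_iter=1000)),
])
param_grid = {"select__k": [10, 50], "clf__C": [0.01, 0.1, 1.0]}

inner_cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=1)  # L = 10
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)   # K = 5

# Inner loop: hyperparameter search on each development set.
search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="roc_auc")

# Outer loop: each fold is held out once and scored with the tuned model.
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")
print(f"nested CV AUC: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")

# Deployment model: rerun the same search on the entire dataset.
final_model = search.fit(X, y).best_estimator_
```

The mean of outer_scores is the performance estimate to report. The best score from a single search over all the data is not, since it was used for selection.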

Protocol 2: Regularized Regression with Embedded Feature Selection (L1)

- Pre-process: Within the training set of each CV split, standardize features (zero mean, unit variance).

- Optimization: Perform a grid search over a logarithmic range of regularization strength (λ) values (e.g., np.logspace(-4, 2, 20)).

- Training: For each λ, fit a logistic or Cox regression model with an L1 penalty on the training fold.

- Validation: Evaluate the model on the corresponding validation fold using the preferred metric (e.g., partial likelihood for Cox).

- Selection: Choose the λ that yields the best average validation performance across inner CV folds.

- Interpretation: Features with non-zero coefficients in the final model constitute the selected biomarker panel.
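As a rough illustration of Protocol 2, the sketch below tunes an L1-penalized logistic regression with scikit-learn on synthetic data. Two assumptions are worth flagging: scikit-learn parameterizes the penalty through C, treated here as 1/λ (the exact correspondence depends on how the loss is scaled), and the liblinear solver is just one of the solvers that support an L1 penalty. A Cox model would need a survival analysis library and is not shown.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data: 5 informative features hidden among 50.
X, y = make_classification(
    n_samples=100, n_features=50, n_informative=5, n_redundant=0, random_state=0
)

lambdas = np.logspace(-4, 2, 20)  # grid from the protocol
pipe = Pipeline([
    ("scale", StandardScaler()),  # standardized within each training fold
    ("clf", LogisticRegression(penalty="l1", solver="liblinear")),
])

search = GridSearchCV(
    pipe,
    {"clf__C": 1.0 / lambdas},  # assumption: C treated as inverse of lambda
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    scoring="roc_auc",
)
search.fit(X, y)

# Features with non-zero coefficients form the candidate biomarker panel.
coefs = search.best_estimator_.named_steps["clf"].coef_.ravel()
selected = np.flatnonzero(coefs)
print(f"best C: {search.best_params_['clf__C']:.4g}")
print(f"{selected.size} of {X.shape[1]} features retained")
```

For an unbiased performance estimate, this search would itself sit inside an outer loop as in Protocol 1. Here it only demonstrates the selection of λ and the resulting sparse panel.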

Visualizing the Workflow

Diagram: Nested CV vs Data Leakage

Diagram: Regularization Conceptual Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Robust Model Generalization

| Item / Solution | Function in Anti-Overfitting Workflow | Example/Note |

|---|---|---|

| Scikit-learn (Python) | Provides unified API for nested CV, regularization, feature selection, and ensemble methods. | Use Pipeline with GridSearchCV and the preprocessing module to prevent data leakage. |

| MLflow or Weights & Biases | Tracks hyperparameters, metrics, and model artifacts across hundreds of experiments. | Critical for reproducible model selection and comparing generalization performance. |

| SHAP or LIME | Model-agnostic interpretation tools to ensure selected features are biologically plausible, not artifacts of overfitting. | High variance in explanations across similar models can signal instability/overfitting. |

| Synthetic Data Generators (e.g., CTGAN) | Creates artificial training samples for data augmentation in low-N settings, with privacy preservation. | Must be used cautiously; evaluate whether synthetic samples improve validation, not just training, performance. |

| Docker or Singularity | Containerization ensures the exact computational environment (library versions, OS) used for training is replicated for validation and deployment. | Eliminates "it worked on my machine" variability, a subtle form of overfitting to a specific system state. |

| Causal Discovery Toolkits (e.g., CausalNex, DoWhy) | Moves beyond correlation to infer causal structures, leading to models more invariant to dataset shifts. | Aligns with the DREAM goal of learning foundational biological mechanisms. |

Dealing with Noisy, High-Dimensional, or Sparse Biomedical Data

The Dialogue for Reverse Engineering Assessments and Methods (DREAM) challenges have established a critical framework for benchmarking computational methods in systems biology and medicine. A persistent, core theme across these challenges is the rigorous evaluation of algorithms designed to extract biological insights from data that is characteristically noisy, high-dimensional, and sparse. This whitepaper provides an in-depth technical guide on methodologies to manage these intrinsic data properties, contextualized by lessons learned from the DREAM community. The benchmarking ethos of DREAM—emphasizing reproducibility, unbiased validation, and community-driven standards—directly informs the protocols and best practices discussed herein.

Characterization of Data Challenges

Biomedical data from modern high-throughput technologies (e.g., single-cell RNA-seq, mass spectrometry proteomics, digital pathology imaging) presents a triad of interconnected challenges:

- Noise: Includes technical variation (batch effects, instrument drift) and biological heterogeneity not pertinent to the studied phenotype.

- High-Dimensionality: Features (p, e.g., genes, proteins) vastly outnumber samples (n), leading to the "curse of dimensionality" and model overfitting.

- Sparsity: Many feature measurements are zero or missing (e.g., dropout events in single-cell sequencing, absent peptides in proteomics).

These properties are quantified and benchmarked in DREAM challenges to test algorithm robustness.

Table 1: Quantitative Characterization of Data Challenges in Common Assays

| Data Type | Typical Dimensions (Samples x Features) | Estimated Noise Level (Technical Variance) | Typical Sparsity (% Zero/Missing Values) | Exemplary DREAM Challenge |

|---|---|---|---|---|

| Bulk RNA-Seq | 10² - 10⁴ x 10⁴ - 2x10⁴ | Moderate (CV: 10-40%) | Low (<5%) | NCI-DREAM Drug Sensitivity Prediction |

| Single-Cell RNA-Seq | 10³ - 10⁶ x 2x10⁴ | High (CV: 30-80%) | Very High (70-95% dropout) | Single-Cell Transcriptomics Challenge |

| Mass Spectrometry Proteomics | 10¹ - 10² x 10³ - 10⁴ | Moderate-High (CV: 20-60%) | High (40-80% missing) | Prostate Cancer Prognosis Challenge |

| Metagenomic Profiles | 10² - 10³ x 10³ - 10⁵ (OTUs) | High (CV: 25-70%) | High (50-90%) | Microbiome Network Inference |

| High-Content Imaging | 10² - 10³ x 10² - 10³ (morphologic features) | Low-Moderate (CV: 5-25%) | Low (<1%) | Cell Painting Morphology Prediction |

CV: Coefficient of Variation; OTU: Operational Taxonomic Unit
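The quantities in Table 1 can be estimated for any dataset before modeling begins. The sketch below, assuming NumPy, computes the feature-to-sample ratio, the percentage of zero entries, and the per-feature coefficient of variation on a simulated count matrix. The Poisson rate and matrix size are arbitrary, and this CV reflects total observed variation. Isolating technical variance would require replicate measurements.

```python
import numpy as np

rng = np.random.default_rng(42)
# Hypothetical count matrix (samples x features) with many zeros.
counts = rng.poisson(lam=0.3, size=(200, 1000)).astype(float)

n_samples, n_features = counts.shape

# Sparsity: percentage of entries that are exactly zero.
sparsity_pct = 100.0 * np.mean(counts == 0)

# Coefficient of variation per feature: standard deviation divided by mean.
means = counts.mean(axis=0)
stds = counts.std(axis=0, ddof=1)
# CV is undefined for all-zero features, so those are left as NaN.
cv = np.divide(stds, means, out=np.full(n_features, np.nan), where=means > 0)

print(f"dimensions: {n_samples} samples x {n_features} features")
print(f"p/n ratio: {n_features / n_samples:.1f}")
print(f"sparsity: {sparsity_pct:.1f}% zeros")
print(f"median feature CV: {100 * np.nanmedian(cv):.0f}%")
```

Reporting these three numbers alongside a submission makes it easier to judge whether a method's performance is likely to transfer to a differently shaped dataset.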

Detailed Methodological Protocols

Protocol for Denoising Single-Cell RNA-Seq Data (Benchmarked in DREAM)

This protocol is based on top-performing methods from the DREAM Single-Cell Transcriptomics Challenge.

Objective: Impute biologically meaningful gene expression values while mitigating technical dropout noise.

Input: Raw UMI count matrix (Cells x Genes).

Software: Python (Scanpy, scVI) or R (Seurat).

Steps:

- Quality Control & Normalization:

- Remove cells with fewer than 500 detected genes and genes expressed in fewer than 10 cells.

- Calculate size factors (total counts per cell) and normalize counts to 10,000 per cell (CP10k).

- Log-transform: X_norm = log2(CP10k + 1).

- Highly Variable Gene (HVG) Selection: Select 2,000-5,000 genes with highest dispersion-to-mean ratio to reduce dimensionality.

- Denoising/Imputation (Choose one):

- Method A (Deep Generative - scVI): Train a variational autoencoder conditioned on batch. Use the model's generative posterior mean as denoised expression. Key Parameters: n_latent=10, gene_likelihood='zinb'.

- Method B (k-NN Smoothing - MAGIC): Construct a k-nearest neighbor graph in PCA space (n_pcs=50). Diffuse expression values across the graph via a Markov transition matrix. Key Parameters: k=30, t=6 (diffusion time).

- Validation (Following DREAM Schema):

- Hold-out Validation: Mask 10% of non-zero entries as pseudo-ground truth.