From Networks to Novel Therapies: A Comprehensive Guide to Multi-Omics Integration for Accelerated Drug Discovery

Abstract

This article provides a comprehensive resource for researchers and drug development professionals on the application of network-based multi-omics integration in modern drug discovery. It begins by establishing the foundational principles, exploring why traditional single-omics approaches fall short and how network biology provides the necessary framework for integrating genomics, transcriptomics, proteomics, and metabolomics data. The piece then delves into the core methodologies and practical applications, detailing computational pipelines and strategies for target identification, drug repurposing, and biomarker discovery. To address real-world challenges, it offers troubleshooting guidance for common pitfalls in data integration, normalization, and network construction. Finally, it provides a critical evaluation of leading tools and platforms, comparing validation frameworks and benchmarking studies to empower informed methodological choices. This article synthesizes current best practices and future directions, highlighting how this integrative paradigm is transforming the identification and validation of therapeutic candidates.

The Blueprint of Complexity: Foundational Principles of Network Biology and Multi-Omics Data

Complex diseases such as Alzheimer's, cancer, and metabolic syndromes are not driven by a single molecular aberration but arise from dynamic, multi-layered interactions across the genome, epigenome, transcriptome, proteome, and metabolome. Single-omics approaches, which analyze one layer in isolation, provide a fragmented and often misleading view. This application note details the quantitative and mechanistic limitations of single-omics in disease modeling and provides protocols for basic multi-omics integration as a foundation for network-based drug discovery.

Quantitative Evidence of Single-Omics Limitations

Table 1: Concordance Rates Between Omics Layers in Disease Studies

| Omics Layer Comparison | Typical Concordance Range | Implication for Disease Modeling |

|---|---|---|

| Genomic Variants -> Transcriptomic (eQTLs) | 20-40% | Most genetic risk loci do not directly alter gene expression in a measurable, linear way. |

| Transcriptomic -> Proteomic Abundance | 30-50% | mRNA levels are poor predictors of protein abundance due to post-transcriptional regulation. |

| Proteomic -> Metabolomic Activity | 10-30% | Protein activity and metabolic flux are modulated by PTMs, localization, and allostery. |

| Epigenomic -> Transcriptomic (Promoter Methylation) | 40-60% | Methylation status is context-dependent and not a simple on/off switch for gene expression. |

Table 2: Success Rates of Single-Omics Biomarkers in Clinical Translation

| Omics Source | Reported Discovery Success | FDA-Approved Biomarker Success Rate | Primary Reason for Attrition |

|---|---|---|---|

| Genomics (SNP-based) | High (1000s of associations) | < 5% | Lack of functional validation and mechanistic insight. |

| Transcriptomics (RNA-seq) | High (100s of signatures) | ~ 2% | Tumor heterogeneity, technical noise, and poor proteomic correlation. |

| Proteomics (Mass Spectrometry) | Moderate (10s of candidates) | ~ 1.5% | Dynamic range challenges, sample variability, and cost. |

Experimental Protocols for Validating Multi-Layer Discordance

Protocol 1: Discrepancy Analysis Between Transcriptome and Proteome in a Disease Cell Model

Objective: To empirically demonstrate the limitation of relying solely on mRNA data.

Materials: Diseased cell line (e.g., cultured cancer cells), appropriate growth media, RNA extraction kit, protein extraction RIPA buffer, LC-MS/MS system, RNA-seq platform.

Procedure:

- Culture cells under standardized conditions. Harvest in triplicate at 80% confluence.

- RNA-seq: Extract total RNA, check quality (RIN > 8). Prepare libraries using a stranded mRNA kit. Sequence on an Illumina platform (30M paired-end reads per sample). Align reads to the reference genome (e.g., with STAR), quantify gene-level expression (e.g., with featureCounts or RSEM), and test for differential expression (e.g., with DESeq2).

- Shotgun Proteomics: Lyse cells in RIPA buffer with protease inhibitors. Digest proteins with trypsin. Desalt peptides and analyze by LC-MS/MS on a Q-Exactive HF platform. Identify and quantify proteins using MaxQuant against a human UniProt database.

- Integration & Discrepancy Analysis:

- Normalize both datasets (TPM for RNA, LFQ intensity for protein).

- Perform pairwise correlation (Pearson) for matched genes/proteins.

- Identify significant outliers: genes with >4-fold change in RNA but <1.5-fold change in protein (and vice versa). Perform pathway enrichment (KEGG, GO) on outlier groups.
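The integration step above can be sketched in a few lines of Python. This is a minimal illustration, assuming log2 fold changes between two conditions have already been computed upstream for matched genes and proteins; the gene names and values are made up, and the function names `pearson` and `discordant_genes` are hypothetical helpers rather than part of any package.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def discordant_genes(rna_log2fc, prot_log2fc, high=2.0, low=math.log2(1.5)):
    """Split matched genes into RNA-only and protein-only responders.

    high = log2(4)   -> the protocol's >4-fold threshold
    low  = log2(1.5) -> the protocol's <1.5-fold threshold
    """
    shared = sorted(set(rna_log2fc) & set(prot_log2fc))
    rna_only = [g for g in shared
                if abs(rna_log2fc[g]) > high and abs(prot_log2fc[g]) < low]
    prot_only = [g for g in shared
                 if abs(prot_log2fc[g]) > high and abs(rna_log2fc[g]) < low]
    return rna_only, prot_only

# Toy, made-up log2 fold changes for illustration only
rna = {"GENE_A": 3.1, "GENE_B": 0.2, "GENE_C": -2.5, "GENE_D": 1.0}
prot = {"GENE_A": 0.3, "GENE_B": 2.6, "GENE_C": -2.2, "GENE_D": 0.9}

genes = sorted(set(rna) & set(prot))
r = pearson([rna[g] for g in genes], [prot[g] for g in genes])
rna_only, prot_only = discordant_genes(rna, prot)
print(f"Pearson r = {r:.2f}; RNA-only: {rna_only}; protein-only: {prot_only}")
```

The two outlier lists are the inputs for the pathway enrichment step; with real data the thresholds would normally be tuned to the noise level of each platform.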

Protocol 2: Network Perturbation Analysis Using Single vs. Multi-Omics Input

Objective: To show that network models built from multi-omics data are more resilient to perturbation and identify more therapeutically relevant targets.

Materials: Publicly available multi-omics dataset (e.g., from CPTAC or TCGA), network analysis software (Cytoscape), statistical computing environment (R/Python).

Procedure:

- Data Acquisition: Download matched genomic (mutations), transcriptomic, and proteomic data for a disease cohort (e.g., TCGA breast cancer).

- Build Two Interaction Networks:

- Network A (Single-Omic): Construct a Protein-Protein Interaction (PPI) network seeded with differentially expressed genes (DEGs) only (p<0.01, |FC|>2).

- Network B (Multi-Omic): Construct a PPI network seeded with: a) Mutated genes, b) DEGs, c) Differentially abundant proteins. Integrate edges from known signaling databases (Reactome, STRING).

- Topological & Functional Analysis:

- Calculate robustness: simulate random node removal (10% increments) and measure network fragmentation.

- Identify network hubs (high-degree nodes) in each network.

- Perform in silico perturbation (e.g., simulate knocking down a hub) and predict downstream impact on network signaling activity (using tools like HotNet2 or PHONEMeS).

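- Validation: Compare hub genes against known essential genes from CRISPR screens (e.g., DepMap). If the multi-omics network captures disease biology more completely, Network B hubs are expected to show higher overlap with essential genes and approved drug targets than Network A hubs.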
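The robustness calculation in the topological analysis step can be prototyped without any network library. The sketch below assumes fragmentation is measured as the size of the largest connected component relative to the original node count (one common choice, not the only one); the two toy edge lists are made up, and `robustness_curve` is a hypothetical helper name.

```python
import random
from collections import deque

def build_adjacency(edges):
    """Undirected adjacency sets from an edge list."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj

def largest_component_fraction(adj, removed):
    """Largest surviving component as a fraction of the original node count."""
    seen, best = set(removed), 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:  # breadth-first search over surviving nodes
            node = queue.popleft()
            size += 1
            for nb in adj[node]:
                if nb not in seen:
                    seen.add(nb)
                    queue.append(nb)
        best = max(best, size)
    return best / len(adj)

def robustness_curve(edges, steps=10, seed=0):
    """Remove nodes in a fixed random order, in equal increments (10% by default)."""
    adj = build_adjacency(edges)
    order = sorted(adj)
    random.Random(seed).shuffle(order)
    curve = []
    for i in range(steps):
        k = round(len(order) * i / steps)
        curve.append((i / steps, largest_component_fraction(adj, order[:k])))
    return curve

# Toy networks with made-up edges: a hub-and-spoke star vs. a ring with chords
star = [("hub", f"n{i}") for i in range(9)]
ring = [(f"n{i}", f"n{(i + 1) % 10}") for i in range(10)]
ring += [(f"n{i}", f"n{(i + 5) % 10}") for i in range(5)]

curve_star = robustness_curve(star)
curve_ring = robustness_curve(ring)
```

In the protocol, the same function would be run on the edge lists of Network A and Network B, ideally averaged over many random seeds, and the two curves compared.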

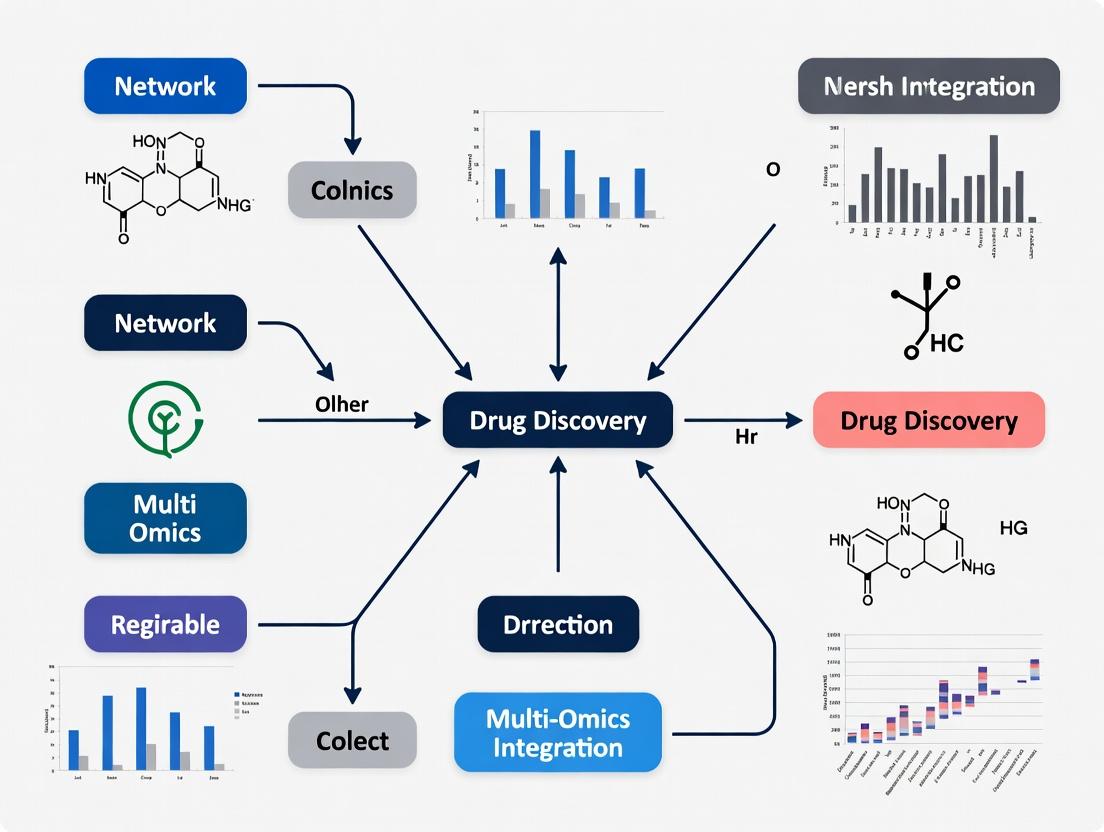

Visualizing the Multi-Omics Integration Workflow

Figure: From Sample to Network: A Multi-Omics Integration Workflow

Figure: Single vs. Multi-Omics Disease Mechanism Mapping

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Multi-Omics Integration Studies

| Item / Reagent | Function in Multi-Omics Research | Example Vendor/Product |

|---|---|---|

| PAXgene Blood RNA Tube | Simultaneous stabilization of RNA, DNA, and proteins from a single blood sample, enabling matched multi-omics from one vial. | Qiagen, BD |

| Triple-SILAC Kits | Metabolic labeling for quantitative proteomics, allowing precise mixing of up to three cell states for deep, comparative analysis. | Thermo Fisher Scientific |

| Chromatin Immunoprecipitation (ChIP) Seq Kits | For epigenomic profiling of histone modifications or transcription factor binding, linking genotype to regulatory phenotype. | Cell Signaling Technology, Active Motif |

| Isobaric Tagging Reagents (TMTpro 18-plex) | Enable high-throughput, multiplexed quantitative proteomics from many samples, crucial for cohort studies. | Thermo Fisher Scientific |

| CellenONE X1 or similar | Automated single-cell dispenser for generating single-cell multi-omics libraries (e.g., CITE-seq, ATAC-seq), addressing heterogeneity. | Cellenion |

| Multi-Omic Integration Software Suites | Platforms for statistical and network-based integration (e.g., MOFA, mixOmics, Cytoscape with Omics Visualizer). | Bioconductor, Cytoscape App Store |

Network biology provides a framework to represent and analyze biological systems as complex networks. In network-based multi-omics integration for drug discovery, this paradigm is essential for identifying novel therapeutic targets and understanding polypharmacology.

Core Tenets:

- Nodes: Represent biological entities (e.g., proteins, genes, metabolites, diseases).

- Edges: Represent physical, functional, or associative interactions between nodes (e.g., protein-protein binding, gene regulation, metabolic conversion).

- Interactome: The comprehensive map of all molecular interactions within a cell or organism, serving as the foundational scaffold for multi-omics data integration.

Application Notes: Network Construction & Analysis in Drug Discovery

The following resources are critical for constructing prior-knowledge networks.

Table 1: Key Public Interactome Databases (Updated 2023-2024)

| Database | Interaction Type | Species | Number of Interactions (Curated) | Primary Use in Drug Discovery |

|---|---|---|---|---|

| STRING v12.0 | Functional associations, PPIs | >14,000 | ~67 million (for human: ~12 million) | Context-aware pathway analysis, target prioritization |

| BioGRID v4.4 | Physical & genetic PPIs | Multiple (Human focus) | ~2.6 million (Human: ~1.2 million) | High-quality reference for validation, CRISPR screen follow-up |

| Human Reference Interactome (HuRI) v1.0 | Binary PPIs (systematic map) | Human (H. sapiens) | ~53,000 high-confidence binary pairs | Building a gold-standard, low-noise scaffold network |

| STITCH v5.0 | Chemical-Protein | Multiple | ~1.6 million (for 500,000 compounds) | Drug-target interaction prediction, side-effect analysis |

| OmniPath | Integrated signaling pathways | Human | ~116,000 curated interactions | Multi-omics pathway modeling and signaling analysis |

Key Network Topological Metrics for Target Identification

Analysis of network structure reveals critical nodes (potential drug targets).

Table 2: Network Metrics for Target Prioritization

| Metric | Definition | Biological Interpretation in Drug Discovery | Typical Threshold (High Value) |

|---|---|---|---|

| Degree Centrality | Number of connections a node has. | High-degree "hub" proteins may be essential but can have more side effects. | >50 (Depends on network size) |

| Betweenness Centrality | Fraction of shortest paths passing through a node. | "Bottleneck" proteins control information flow; potent disruptors of pathways. | >0.01 |

| Closeness Centrality | Reciprocal of the average shortest path length to all other nodes. | Proteins that can quickly influence the entire network. | >0.5 |

| Eigenvector Centrality | Measure of influence based on connection quality. | Proteins connected to other influential proteins (e.g., in key complexes). | >0.1 |

| Local Clustering Coefficient | How connected a node's neighbors are to each other. | Identifies functional modules or protein complexes. | >0.7 |
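Three of the metrics in the table are simple enough to compute by hand, which helps when sanity-checking library output. The sketch below is a standard-library illustration on a made-up four-node graph; closeness is computed as (reachable nodes - 1) divided by the sum of shortest-path distances, and the helper names are hypothetical. Betweenness and eigenvector centrality are omitted for brevity and are better taken from igraph or NetworkX.

```python
from collections import deque
from itertools import combinations

def build_adjacency(edges):
    """Undirected adjacency sets from an edge list."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj

def closeness(adj, source):
    """Reciprocal of the mean shortest-path length to all reachable nodes."""
    dist = {source: 0}
    queue = deque([source])
    while queue:  # breadth-first search gives unweighted shortest paths
        node = queue.popleft()
        for nb in adj[node]:
            if nb not in dist:
                dist[nb] = dist[node] + 1
                queue.append(nb)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0

def local_clustering(adj, node):
    """Fraction of a node's neighbor pairs that are themselves connected."""
    nbrs = adj[node]
    k = len(nbrs)
    if k < 2:
        return 0.0
    links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
    return 2 * links / (k * (k - 1))

# Toy graph (made up): triangle A-B-C with a pendant node D attached to C
adj = build_adjacency([("A", "B"), ("B", "C"), ("A", "C"), ("C", "D")])
metrics = {
    n: {
        "degree": len(adj[n]),
        "closeness": closeness(adj, n),
        "clustering": local_clustering(adj, n),
    }
    for n in adj
}
```

Here node C has the highest degree and closeness but a low clustering coefficient, the typical profile of a connector between a module and its periphery.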

Experimental Protocols

Protocol: Constructing a Context-Specific Protein-Protein Interaction (PPI) Network for a Disease of Interest

Objective: Integrate a generic human interactome with transcriptomic (RNA-seq) data to build a disease-relevant subnetwork.

Materials & Reagents:

- Input Data: Human interactome (e.g., from STRING or OmniPath), RNA-seq differential expression results (DEGs with p-value & log2FC).

- Software: R (igraph, tidygraph, dplyr) or Python (NetworkX, pandas).

- Compute Environment: Standard desktop or HPC cluster.

Procedure:

- Data Retrieval:

- Download a comprehensive human PPI network. Filter for high-confidence interactions (e.g., STRING combined score > 700).

- Load the DEG list. Define "active" nodes (e.g., |log2FC| > 1, adjusted p-value < 0.05).

- Network Pruning:

- Subset the master PPI network to include only interactions where both interacting partners are present in the DEG list. This creates a DEG-core network.

- Optionally, add first neighbors of the DEG-core nodes from the master network to capture key regulators. This creates an expanded disease network.

- Network Annotation & Analysis:

- Calculate topological metrics (Table 2) for all nodes in the pruned network.

- Annotate nodes with their differential expression status (up/down-regulated).

- Perform community detection (e.g., using the Louvain algorithm) to identify functional modules.

- Target Prioritization:

- Rank nodes by a composite score combining high betweenness centrality, significant differential expression, and known druggability (from databases like DrugBank).

- Visualize the top subnetworks for hypothesis generation.
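The retrieval and pruning steps of this protocol reduce to a handful of set operations. The following is a minimal sketch, assuming the interactome is available as (gene, gene, score) triples on a STRING-style 0-1000 scale and the DEG table maps each gene to a (log2FC, adjusted p-value) pair; all gene names and numbers are placeholders, and the function names are hypothetical.

```python
def active_nodes(deg_table, lfc_cut=1.0, padj_cut=0.05):
    """Genes passing the protocol's |log2FC| > 1 and adjusted p < 0.05 filters."""
    return {g for g, (lfc, padj) in deg_table.items()
            if abs(lfc) > lfc_cut and padj < padj_cut}

def high_confidence(ppi, min_score=700):
    """Keep edges above the confidence cutoff (STRING-style 0-1000 scale)."""
    return [(u, v) for u, v, score in ppi if score > min_score]

def deg_core(edges, active):
    """Edges whose two endpoints are both active (the DEG-core network)."""
    return [(u, v) for u, v in edges if u in active and v in active]

def expanded(edges, active):
    """DEG-core plus first neighbors: edges touching at least one active node."""
    return [(u, v) for u, v in edges if u in active or v in active]

# Placeholder inputs for illustration only
deg_table = {
    "GENE_A": (-1.8, 0.001),
    "GENE_B": (2.3, 0.0004),
    "GENE_C": (1.4, 0.20),   # fails the adjusted p-value filter
    "GENE_D": (0.1, 0.90),   # fails both filters
}
ppi = [
    ("GENE_A", "GENE_B", 950),
    ("GENE_B", "GENE_E", 990),
    ("GENE_A", "GENE_D", 400),  # dropped: low confidence
    ("GENE_C", "GENE_F", 980),
]

active = active_nodes(deg_table)
edges = high_confidence(ppi)
core = deg_core(edges, active)
disease_net = expanded(edges, active)
```

The resulting edge lists can be handed directly to igraph or NetworkX for the centrality and community-detection steps.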

Protocol: Multi-Omics Integration via Network Propagation

Objective: Prioritize genes underlying a disease phenotype by propagating genomic (GWAS) signals through a PPI network.

Materials & Reagents:

- Input Data: GWAS summary statistics (p-values per SNP), SNP-to-gene mapping file, PPI network.

- Software: R (dnet, igraph) or dedicated tools like NetWAS or DAMON.

- Compute Environment: Requires significant memory for large networks.

Procedure:

- Seed Gene Definition:

- Map GWAS SNPs to genes using genomic proximity or eQTL data. Assign each gene a seed score (e.g., -log10(GWAS p-value)).

- Network Preparation:

- Construct a symmetric, normalized adjacency matrix from the PPI network.

- Signal Propagation:

- Apply a network propagation algorithm (e.g., Random Walk with Restart - RWR). This smooths the seed scores across the network, assigning high scores not only to seed genes but also to their well-connected neighbors.

- The propagation follows the formula:

$$F = (1 - r)\,W F + r\,S$$

where $F$ is the final score vector, $W$ is the normalized adjacency matrix, $S$ is the seed score vector, and $r$ is the restart probability (typically 0.5-0.7).

- Output & Validation:

- Rank all genes by their propagated score.

- Validate the top-ranked genes against independent datasets (e.g., knockout phenotypes, differential expression in independent cohorts).
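The propagation step can be illustrated with a small fixed-point iteration. This sketch assumes symmetric degree normalization, W_ij = A_ij / sqrt(d_i * d_j), an unweighted network, and an L1 convergence criterion; the function name `random_walk_with_restart` and the toy path graph are illustrative, and a production run on a genome-scale network would use sparse matrices instead of dictionaries.

```python
import math

def random_walk_with_restart(edges, seeds, r=0.5, tol=1e-6, max_iter=1000):
    """Iterate F <- (1 - r) * W * F + r * S until the L1 change drops below tol."""
    nodes = sorted({n for edge in edges for n in edge})
    adj = {n: set() for n in nodes}
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    deg = {n: len(adj[n]) for n in nodes}
    s = {n: float(seeds.get(n, 0.0)) for n in nodes}  # seed vector S
    f = dict(s)                                       # start from the seeds
    for _ in range(max_iter):
        new = {
            n: (1 - r) * sum(f[m] / math.sqrt(deg[n] * deg[m]) for m in adj[n])
               + r * s[n]
            for n in nodes
        }
        diff = sum(abs(new[n] - f[n]) for n in nodes)
        f = new
        if diff < tol:
            break
    return f

# Toy path graph A - B - C - D with a single seed (e.g., -log10 p = 5 for gene A)
scores = random_walk_with_restart(
    [("A", "B"), ("B", "C"), ("C", "D")], seeds={"A": 5.0}, r=0.5
)
ranking = sorted(scores, key=scores.get, reverse=True)
```

On this toy graph the score decays with distance from the seed, which is the smoothing behavior the protocol relies on; a larger r keeps scores closer to the original seeds.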

Diagrams

Network-Based Multi-Omics Integration Workflow

Network Propagation Algorithm Schematic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Experimental Network Validation

| Item | Function in Network Biology Context | Example/Supplier |

|---|---|---|

| Co-Immunoprecipitation (Co-IP) Kit | Validate predicted binary protein-protein interactions (edges) from computational networks. | Thermo Fisher Scientific Pierce Co-IP Kit, Abcam. |

| Proximity Ligation Assay (PLA) Reagents | Detect and visualize endogenous PPIs in situ with high specificity and spatial resolution. | Sigma-Aldrich Duolink PLA. |

| CRISPR-Cas9 Knockout/Knockin Systems | Functionally validate the role of high-priority network nodes (genes) in disease phenotypes. | Synthego synthetic gRNAs, IDT Alt-R. |

| Phospho-Specific Antibody Panels | Probe dynamic signaling network states (edges) under drug treatment or perturbation. | Cell Signaling Technology Phospho-Antibody Sampler Kits. |

| Luminescent/ Fluorescent Biosensor Cell Lines | Monitor activity of network nodes (e.g., kinase activity, second messengers) in live cells. | ATCC Bioassay-Relevant Cell Lines. |

| Biotinylated Small-Molecule Probes | Chemically validate predicted drug-target interactions from networks like STITCH. | Custom synthesis services (e.g., Click Chemistry Tools). |

| Next-Generation Sequencing Reagents | Generate transcriptomic/epigenomic data to build context-specific networks (RNA-seq, ChIP-seq). | Illumina NovaSeq Kits, 10x Genomics Chromium. |

Within the framework of network-based multi-omics integration for drug discovery, each molecular layer provides a unique and complementary view of biological systems. Genomics defines the static blueprint, transcriptomics the dynamic regulatory state, proteomics the functional effectors, and metabolomics the phenotypic readout of cellular processes. Integrating these layers into unified networks is crucial for identifying robust, disease-relevant pathways and viable drug targets, moving beyond single-layer reductionism.

Layer Definitions & Key Applications in Drug Discovery

| Omics Layer | Core Definition | Primary Analytical Technologies | Key Drug Discovery Applications |

|---|---|---|---|

| Genomics | The study of the complete set of DNA (genome), including genes and non-coding sequences, and their variations. | NGS (Whole Genome, Exome Sequencing), SNP/Array Genotyping. | Target identification (Mendelian diseases), pharmacogenomics (predicting drug response/toxicity), patient stratification biomarkers. |

| Transcriptomics | The study of the complete set of RNA transcripts (transcriptome) produced by the genome under specific conditions. | RNA-Seq, Single-Cell RNA-Seq, Microarrays, qRT-PCR. | Understanding disease mechanisms, identifying differentially expressed pathways, biomarker discovery for disease subtyping and treatment response. |

| Proteomics | The study of the complete set of proteins (proteome), including their structures, modifications, interactions, and abundances. | Mass Spectrometry (LC-MS/MS), Affinity-Based Arrays (e.g., Olink), RPPA. | Target validation, mode-of-action studies, pharmacodynamic biomarker identification, assessing post-translational modifications critical for signaling. |

| Metabolomics | The study of the complete set of small-molecule metabolites (metabolome) within a biological system. | Mass Spectrometry (GC-MS, LC-MS), Nuclear Magnetic Resonance (NMR). | Discovery of phenotypic biomarkers, understanding drug efficacy/toxicity mechanisms, revealing metabolic vulnerabilities in diseases like cancer. |

Detailed Application Notes & Protocols

Application Note 1: Identifying a Candidate Oncogenic Network in Colorectal Cancer

- Objective: Integrate multi-omics data to identify a dysregulated signaling network driving proliferation.

- Workflow:

- Genomics: Perform whole-exome sequencing on tumor/normal pairs to identify somatic mutations (e.g., in APC, KRAS, TP53).

- Transcriptomics: Conduct RNA-Seq on the same samples. Perform differential expression and pathway (e.g., GSEA) analysis.

- Proteomics/Phosphoproteomics: Use LC-MS/MS on tissue lysates to quantify protein and phospho-site abundances, highlighting activated pathways (e.g., MAPK, PI3K).

- Metabolomics: Perform LC-MS to profile polar metabolites, identifying upregulated glycolytic or nucleotide synthesis intermediates.

- Integration: Use network inference tools (e.g., Integrative Multi-Omics Association, IOMA) to merge the datasets into a single network. In this example, the network is centered on mutated KRAS and captures downstream transcript overexpression (MYC), protein hyperphosphorylation (ERK1/2), and metabolite accumulation (lactate). The consolidated network pinpoints co-dependencies for combinatorial targeting.

Protocol 3.1: LC-MS/MS-Based Label-Free Quantitative Proteomics

- Sample Preparation: Lyse cells/tissue in RIPA buffer with protease/phosphatase inhibitors. Reduce with DTT, alkylate with IAA, and digest with trypsin overnight. Desalt peptides using C18 solid-phase extraction tips.

- LC Conditions: Load peptides onto a C18 nano-trap column. Separate on a 75 μm x 25 cm analytical C18 column with a 60-120 minute gradient from 2% to 35% solvent B (0.1% formic acid in acetonitrile) at 300 nL/min.

- MS Analysis: Use a Q-Exactive HF or Orbitrap Eclipse mass spectrometer. Acquire data in data-dependent acquisition (DDA) mode: full MS scan (350-1500 m/z, R=120,000) followed by MS2 scans of the top 20 precursors (R=15,000, NCE=28).

- Data Processing: Process raw files with MaxQuant (v2.0+). Search against the UniProt human database. Use LFQ algorithm for quantification. Apply filters: FDR < 1% at PSM and protein levels. Statistical analysis (t-test/ANOVA) in Perseus or R.

Protocol 3.2: Untargeted Metabolomics via HILIC LC-MS

- Metabolite Extraction: Add 400 μL of cold 80% methanol/water (-80°C) to 1e6 cells. Vortex, incubate at -80°C for 1 hour, then centrifuge at 21,000 × g for 15 min at 4°C. Transfer the supernatant to an MS vial.

- LC Conditions: Use a ZIC-pHILIC column (150 x 2.1 mm, 5 μm). Solvent A: 20 mM ammonium carbonate in water, pH 9.2. Solvent B: Acetonitrile. Gradient: 20% A to 80% A over 15 min, hold 5 min, re-equilibrate.

- MS Analysis: Use a high-resolution mass spectrometer (e.g., Q-TOF) in both positive and negative electrospray ionization modes. Scan range: 50-1000 m/z.

- Data Processing: Use XCMS or MS-DIAL for peak picking, alignment, and annotation. Annotate using accurate mass (±5 ppm) and MS/MS spectral libraries (e.g., HMDB, MassBank). Normalize to internal standards (e.g., D-Camphorsulfonic acid) and sample protein content.

Visualization of Multi-Omics Integration Workflow

Workflow for Network-Based Multi-Omics Integration

Integrated Oncogenic Signaling Network Example

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Material | Vendor Examples | Function in Multi-Omics Protocols |

|---|---|---|

| RIPA Lysis Buffer | Thermo Fisher, MilliporeSigma | Comprehensive cell/tissue lysis for protein and nucleic acid co-extraction or dedicated proteomic analysis. |

| TRIzol / TRI Reagent | Thermo Fisher, Zymo Research | Simultaneous extraction of RNA, DNA, and proteins from a single sample for parallel omics analysis. |

| Phase Lock Gel Tubes | Quantabio, 5 PRIME | Facilitates clean separation of organic and aqueous phases during nucleic acid or metabolite extraction, improving yield/purity. |

| Trypsin, Sequencing Grade | Promega, Thermo Fisher | High-purity protease for specific digestion of proteins into peptides for LC-MS/MS analysis. |

| SP3 Beads (Magnetic) | Cytiva, Thermo Fisher | Enable single-tube, detergent-free protein cleanup, digestion, and post-translational modification enrichment for proteomics. |

| HILIC & C18 LC Columns | Waters, Thermo Fisher, MilliporeSigma | Critical for separating polar metabolites (HILIC) and peptides/non-polar metabolites (C18) prior to mass spectrometry. |

| Stable Isotope-Labeled Internal Standards | Cambridge Isotopes, Sigma-Isotec | Essential for absolute quantification and quality control in targeted metabolomics and proteomics (SILAC, AQUA peptides). |

| Multi-Omics Data Integration Software (Cloud/On-Prem) | Terra (Broad/Verily), IPA (Qiagen), GenePattern | Platforms providing computational workflows, databases, and network analysis tools for integrated multi-omics data. |

Public repositories are fundamental for acquiring the large-scale, multi-omics data required for network-based integration in drug discovery. The following table summarizes the core characteristics of four pivotal resources.

Table 1: Core Characteristics of Key Multi-omics Repositories

| Repository | Primary Data Type | Scope & Organisms | Key Access Method(s) | Typical Data Format(s) | Relevance to Drug Discovery |

|---|---|---|---|---|---|

| TCGA (The Cancer Genome Atlas) | Genomics, Transcriptomics, Epigenomics, Clinical | Human (Cancer-focused, 33+ types) | GDC Data Portal, TCGAbiolinks (R), API | BAM, VCF, MAF, TSV, XML | Identifies oncogenic drivers, biomarkers, and therapeutic targets. |

| GEO (Gene Expression Omnibus) | Transcriptomics, Epigenomics, Genomics | All organisms (Array & NGS) | Web browser, GEOquery (R), geofetch (Python), FTP | SOFT, MINiML, Series Matrix, RAW files | Discovers disease signatures, drug response profiles, and mechanism of action. |

| ProteomicsDB | Proteomics, Quantitative Mass Spectrometry | Human, Mouse, M. tuberculosis | Web browser, REST API, direct SQL download | JSON, XML, TSV (via export) | Maps protein expression, localization, and interaction networks for target validation. |

| HMDB (Human Metabolome Database) | Metabolomics | Human | Web browser, REST API, Data Downloads page | XML, TSV, SDF | Links metabolites to pathways and diseases for biomarker discovery and toxicology. |

Detailed Access Protocols and Application Notes

Protocol 2.1: Programmatic Bulk Download of TCGA Data via the GDC API

Application Note: This protocol is optimal for integrating genomic alterations and gene expression from a specific cancer cohort into a patient-specific network model.

Materials & Reagents:

- Computing system with ≥8 GB RAM and stable internet.

- Python 3.7+ environment with the requests package (json is part of the standard library).

- GDC API token (optional, for controlled-access data).

Procedure:

- Define Query: Identify files using the GDC Data Portal interface or API. For example, to find RNA-Seq counts for Lung Adenocarcinoma (LUAD):

- Submit Query and Retrieve Manifest:

- Download Data: Use the manifest with the GDC Data Transfer Tool or via the Data endpoint:
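The three steps above can be sketched as follows. This is a hedged illustration, not the protocol's original code: the endpoint URLs are the public GDC API endpoints, but the exact filter field names and the "STAR - Counts" workflow label are assumptions that should be checked against the current GDC data release, and the helper names are hypothetical. Only the query construction runs at import time; the two network functions are defined but not called.

```python
import json

GDC_FILES = "https://api.gdc.cancer.gov/files"
GDC_DATA = "https://api.gdc.cancer.gov/data"

def build_luad_rnaseq_query(size=100):
    """Step 1: query parameters for open-access LUAD RNA-Seq count files."""
    filters = {
        "op": "and",
        "content": [
            {"op": "in", "content": {"field": "cases.project.project_id",
                                     "value": ["TCGA-LUAD"]}},
            {"op": "in", "content": {"field": "files.data_type",
                                     "value": ["Gene Expression Quantification"]}},
            # Assumed workflow label; verify in the GDC Data Portal
            {"op": "in", "content": {"field": "files.analysis.workflow_type",
                                     "value": ["STAR - Counts"]}},
        ],
    }
    return {
        "filters": json.dumps(filters),
        "fields": "file_id,file_name,cases.submitter_id",
        "format": "JSON",
        "size": str(size),
    }

def fetch_file_ids(params):
    """Step 2: submit the query and collect file UUIDs (requires network access)."""
    import requests  # imported lazily so the query builder has no dependencies
    response = requests.get(GDC_FILES, params=params, timeout=60)
    response.raise_for_status()
    return [hit["file_id"] for hit in response.json()["data"]["hits"]]

def download_files(file_ids, out_path, token=None):
    """Step 3: POST the UUIDs to the data endpoint; multiple files arrive as one archive."""
    import requests
    headers = {"Content-Type": "application/json"}
    if token:  # only needed for controlled-access data
        headers["X-Auth-Token"] = token
    response = requests.post(GDC_DATA, data=json.dumps({"ids": file_ids}),
                             headers=headers, timeout=600)
    response.raise_for_status()
    with open(out_path, "wb") as handle:
        handle.write(response.content)

params = build_luad_rnaseq_query(size=5)
# Example usage (not executed here):
# ids = fetch_file_ids(params)
# download_files(ids, "luad_counts.tar.gz")
```

For cohorts of more than a few dozen files, the GDC Data Transfer Tool with a manifest is usually more reliable than a single POST to the data endpoint.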

Protocol 2.2: Retrieving and Normalizing GEO Datasets with GEOquery

Application Note: Essential for acquiring transcriptomic datasets to build condition-specific gene co-expression networks.

Materials & Reagents:

- R environment (≥4.0).

- Bioconductor package GEOquery installed.

Procedure:

- Install and Load Package:

- Download Series Matrix File: For dataset GSE12345 (hypothetical):

- Extract Expression Matrix and Phenotype Data:

- Perform Basic Normalization (if needed): For log2 transformation:
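For readers working outside R, the extraction and normalization steps can be approximated in Python. The sketch below is not GEOquery; it assumes a series matrix file has already been downloaded and decompressed, that the expression table sits between the `!series_matrix_table_begin` and `!series_matrix_table_end` markers, and that there are no missing values. The log2 decision rule (transform only when the maximum exceeds 50) is a crude, hypothetical heuristic, and the sample text is made up.

```python
import csv
import io
import math

def parse_series_matrix(text):
    """Return (sample_ids, {probe_id: [values]}) from series-matrix text."""
    in_table, rows = False, []
    for line in text.splitlines():
        if line.startswith("!series_matrix_table_begin"):
            in_table = True
        elif line.startswith("!series_matrix_table_end"):
            break
        elif in_table:
            rows.append(line)
    # csv handles the tab delimiter and strips the double quotes around IDs
    reader = csv.reader(io.StringIO("\n".join(rows)), delimiter="\t")
    header = next(reader)
    samples = header[1:]
    expr = {row[0]: [float(v) for v in row[1:]] for row in reader if row}
    return samples, expr

def log2_if_needed(expr, threshold=50.0):
    """Apply log2(x + 1) when the values look untransformed (heuristic)."""
    peak = max(v for values in expr.values() for v in values)
    if peak <= threshold:
        return expr
    return {k: [math.log2(v + 1) for v in values] for k, values in expr.items()}

# Made-up miniature series matrix for illustration
toy = "\n".join([
    '!Series_title\t"toy dataset"',
    "!series_matrix_table_begin",
    '"ID_REF"\t"GSM1"\t"GSM2"',
    '"probe_1"\t1023\t255',
    '"probe_2"\t7\t3',
    "!series_matrix_table_end",
])

samples, raw = parse_series_matrix(toy)
normalized = log2_if_needed(raw)
```

Phenotype data live in the `!Sample_` metadata lines above the table and can be collected in the same loop if needed.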

Protocol 2.3: Extracting Proteomic Data from ProteomicsDB via API

Application Note: Used to obtain tissue-specific protein abundance for constraining or annotating networks.

Materials & Reagents:

- Python environment with requests and pandas.

- ProteomicsDB tissue or protein ID.

Procedure:

- Query by Tissue: To get protein list for 'Kidney':

- Query Protein-Specific Quantification: For protein P00533 (EGFR):

Protocol 2.4: Accessing HMDB Metabolite and Pathway Data

Application Note: Crucial for mapping metabolomic perturbations onto integrated networks.

Materials & Reagents:

- Web browser or command-line tool (e.g., curl).

- HMDB ID (e.g., HMDB0000056 for beta-alanine).

Procedure:

- Batch Download (Recommended): Download the "All Metabolites" XML or CSV file from the HMDB Downloads page.

- Programmatic Query via REST API:
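A minimal sketch of retrieving and parsing a single metabolite record is shown below. It rests on two assumptions that should be verified against the current HMDB site: that a record is available as XML at `https://hmdb.ca/metabolites/<ID>.xml`, and that the record uses `accession`, `name`, and nested `pathway`/`name` elements. The inline XML is a hand-written, heavily simplified stand-in for a real record, and the function names are hypothetical. The fetch function is defined but not called.

```python
import urllib.request
import xml.etree.ElementTree as ET

def fetch_metabolite_xml(hmdb_id):
    """Download one record as XML text (assumed URL pattern; requires network)."""
    url = f"https://hmdb.ca/metabolites/{hmdb_id}.xml"
    with urllib.request.urlopen(url, timeout=60) as response:
        return response.read().decode("utf-8")

def local(tag):
    """Drop any XML namespace prefix such as '{http://www.hmdb.ca}'."""
    return tag.rsplit("}", 1)[-1]

def parse_metabolite(xml_text):
    """Extract accession, name, and pathway names from a metabolite record."""
    root = ET.fromstring(xml_text.strip())
    record = {"accession": None, "name": None, "pathways": []}
    for child in root:  # top-level fields only, to avoid nested <name> tags
        if local(child.tag) in ("accession", "name"):
            record[local(child.tag)] = child.text
    for elem in root.iter():
        if local(elem.tag) == "pathway":
            for sub in elem:
                if local(sub.tag) == "name":
                    record["pathways"].append(sub.text)
    return record

# Hand-written, simplified sample record for illustration
sample_xml = """
<metabolite xmlns="http://www.hmdb.ca">
  <accession>HMDB0000056</accession>
  <name>beta-Alanine</name>
  <biological_properties>
    <pathways>
      <pathway><name>Beta-Alanine Metabolism</name></pathway>
      <pathway><name>Pyrimidine Metabolism</name></pathway>
    </pathways>
  </biological_properties>
</metabolite>
"""

record = parse_metabolite(sample_xml)
# Example usage (not executed here):
# record = parse_metabolite(fetch_metabolite_xml("HMDB0000056"))
```

The same parser can be applied element by element to the bulk "All Metabolites" XML download, which avoids repeated requests when many metabolites are needed.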

Integration Workflow for Network-Based Drug Discovery

Table 2: Example Multi-omics Data Integration Workflow

| Step | Objective | Input Data (Source) | Key Tool/Action | Output for Network Analysis |

|---|---|---|---|---|

| 1. Target Identification | Find genes/proteins dysregulated in disease. | RNA-Seq (TCGA), Proteomics (ProteomicsDB) | Differential expression analysis (DESeq2, limma) | List of significantly altered nodes (genes/proteins). |

| 2. Network Construction | Model molecular interactions. | Altered nodes, Reference interactome (STRING, BioGRID) | Network inference (Cytoscape, igraph) | Disease-associated interaction network. |

| 3. Pharmacological Perturbation | Identify drugs that reverse disease signature. | Drug-induced gene expression (GEO, LINCS), Metabolite changes (HMDB) | Connectivity mapping (CMap), Enrichment analysis | Ranked list of candidate drugs/compounds. |

| 4. Validation & Prioritization | Assess candidate viability. | Clinical correlates (TCGA), Protein abundance (ProteomicsDB) | Survival analysis, Correlation analysis | Prioritized drug targets with prognostic evidence. |

Visualization of Workflows and Relationships

Figure: Multi-omics data integration workflow for drug discovery

Figure: Network-based integration of multi-omics data reveals drug targets

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for Multi-omics Data Access and Integration

| Item | Function in Workflow | Example/Specification |

|---|---|---|

| GDC Data Transfer Tool | High-performance, reliable bulk download of TCGA data. | Command-line tool from the NCI GDC. Supports restartable transfers. |

| TCGAbiolinks R/Bioc Package | Integrated analysis of TCGA data, from query to differential expression. | Version ≥ 2.30.0. Provides standardized preprocessing pipelines. |

| GEOquery R/Bioc Package | Parses GEO SOFT/MINiML files into R data structures for analysis. | Essential for converting GEO metadata and expression data into usable formats. |

| requests Python Library | Simplifies HTTP requests to REST APIs (e.g., ProteomicsDB, HMDB, GDC). | Enables programmatic, scriptable data retrieval without web browser interaction. |

| Cytoscape with omics plugins | Visualizes and analyzes integrated biological networks. | Use plugins stringApp, clueGO, and CyTargetLinker for multi-omics enrichment. |

| igraph / NetworkX Library | Programmatic construction, manipulation, and analysis of networks in R/Python. | Performs centrality calculations, community detection, and graph-based modeling. |

| Jupyter / RStudio Environment | Interactive computational notebook for reproducible analysis workflows. | Combines code execution, visualization, and narrative documentation in one place. |

| SQLite / PostgreSQL Database | Local storage for large, integrated datasets queried repeatedly. | Useful for caching HMDB or ProteomicsDB data for rapid local querying. |

Application Notes: Network-Based Identification of Disease Modules

Network medicine posits that disease phenotypes arise from perturbations to interconnected functional modules within the cellular interactome. The central hypothesis is that proteins associated with a specific disease (disease genes) are not randomly distributed but cluster into localized neighborhoods—"disease modules"—within large-scale molecular networks. These modules, once identified, reveal dysregulated biological pathways and highlight potential druggable targets. This approach is integral to network-based multi-omics integration, where genomic, transcriptomic, and proteomic data are mapped onto protein-protein interaction (PPI) networks to derive mechanistic insights.

Key Quantitative Findings from Recent Studies (2023-2024):

Table 1: Performance Metrics of Network-Based Disease Module Detection Algorithms

| Algorithm Name | Type of Network Used | Avg. Module Recall (Disease Genes) | Avg. Pathway Enrichment (p-value) | Reference (Preprint/Journal) |

|---|---|---|---|---|

| DIAMOnD | Human Reference PPI | 0.32 | < 1e-10 | Nat. Commun. 2023 |

| MOdule-based | Tissue-Specific PPI | 0.41 | < 1e-12 | Cell Syst. 2024 |

| Hierarchical HotNet | Multi-omics Integrated | 0.38 | < 1e-15 | Sci. Adv. 2023 |

Table 2: Druggability Analysis of Predicted Modules in Oncology

| Disease | Identified Module Hub | Approved or Investigational Drug (Example) | Clinical Trial Phase for New Candidates |

|---|---|---|---|

| Triple-Negative Breast Cancer | PLK1 | Volasertib (Inhibitor) | Phase II (3 agents) |

| Glioblastoma | EGFR/PDGFR Co-module | Erlotinib, Imatinib | Phase I/II (5 combination trials) |

| Colorectal Cancer | WNT/β-catenin module | PRI-724 (Inhibitor) | Phase II (2 agents) |

Experimental Protocols

Protocol 2.1: Construction of an Integrated Multi-Omics Network for Module Detection

Objective: To build a heterogeneous network integrating genetic associations, transcriptomic co-expression, and physical protein interactions for a disease of interest.

Materials & Reagents:

- High-performance computing cluster (>= 32 GB RAM).

- Disease gene list from DisGeNET, OMIM, or GWAS catalog.

- RNA-seq expression dataset (e.g., from GEO, TCGA) for relevant tissue.

- Reference Protein-Protein Interaction database (e.g., STRING, BioGRID, HuRI).

- Software: Cytoscape 3.10+, R packages igraph, WGCNA, biomaRt.

Procedure:

- Data Retrieval:

- Download the latest human PPI data from STRING (score >= 700) and filter for physical interactions.

- Obtain a seed list of known disease-associated genes from DisGeNET (v7.0 or later).

- Download RNA-seq FPKM data from a relevant patient cohort in TCGA or GTEx.

- Network Layer Construction:

- PPI Layer: Create an adjacency matrix from the filtered PPI list.

- Co-expression Layer: Using the RNA-seq data, calculate pairwise Pearson correlation coefficients for all genes. Apply the WGCNA algorithm to construct a signed co-expression network, choosing the soft-thresholding power by scale-free topology fit, and retain edges with a correlation p-value < 0.001.

- Genetic Interaction Layer: Compile genes from GWAS loci and connect genes within the same linkage disequilibrium block.

Network Integration:

- Use a weighted sum method: Integrated Edge Weight = (w1 * PPIscore) + (w2 * |Co-exprCorrelation|) + (w3 * GeneticLinkScore). Default weights (w1=0.5, w2=0.3, w3=0.2) can be optimized.

- Construct the final integrated network graph using the `igraph` library in R.
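The weighted-sum rule above can be sketched in a few lines. This is a minimal illustration, not the protocol's reference implementation: it uses Python rather than R/`igraph`, the function name `integrate_edges` and the toy scores are hypothetical, and every layer score is assumed to be pre-scaled to [0, 1] (e.g., STRING score divided by 1000).

```python
def integrate_edges(ppi, coexpr, genetic, w1=0.5, w2=0.3, w3=0.2):
    """Combine three edge-score layers into one weight per gene pair.

    Each layer maps a gene pair to a score assumed to lie in [0, 1]
    (co-expression may be negative; its absolute value is used).
    Pairs absent from a layer contribute 0 for that layer.
    """
    def canon(layer):
        # Store pairs as sorted tuples so (A, B) and (B, A) are the same edge.
        return {tuple(sorted(pair)): score for pair, score in layer.items()}

    ppi, coexpr, genetic = canon(ppi), canon(coexpr), canon(genetic)
    integrated = {}
    for pair in set(ppi) | set(coexpr) | set(genetic):
        integrated[pair] = (
            w1 * ppi.get(pair, 0.0)
            + w2 * abs(coexpr.get(pair, 0.0))
            + w3 * genetic.get(pair, 0.0)
        )
    return integrated


# Toy layers (hypothetical scores, for illustration only).
ppi = {("TP53", "MDM2"): 0.9, ("EGFR", "GRB2"): 0.8}
coexpr = {("MDM2", "TP53"): -0.6, ("EGFR", "ERBB2"): 0.7}
genetic = {("TP53", "MDM2"): 1.0}

weights = integrate_edges(ppi, coexpr, genetic)
for pair, weight in sorted(weights.items()):
    print(pair, round(weight, 3))
```

Treating a missing edge as a score of 0 is one possible design choice; it means an edge supported by a single layer can never exceed that layer's weight.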

Disease Module Detection:

- Apply a random walk with restart (RWR) algorithm seeded with the known disease genes.

- Parameters: Restart probability r = 0.7; run until convergence (L1-norm difference < 1e-6).

- Rank all genes in the network by their steady-state probability. The top 100 genes constitute the candidate disease module.
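As a rough illustration of the random walk with restart step, the sketch below iterates the update p ← (1 - r)·W·p + r·p0 until the L1-norm change falls below the tolerance. The function name, the dictionary-based graph representation, and the four-node toy network are assumptions made for this example; a genome-scale network would normally use sparse matrices.

```python
def random_walk_with_restart(adj, seeds, r=0.7, tol=1e-6, max_iter=1000):
    """Return steady-state visiting probabilities on an undirected graph.

    adj   : dict mapping node -> {neighbor: edge weight}; assumed symmetric.
    seeds : known disease genes; the walker restarts uniformly among them.
    """
    nodes = list(adj)
    seeds = {s for s in seeds if s in adj}  # ignore seeds missing from the network
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    strength = {n: sum(adj[n].values()) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {}
        for n in nodes:
            # Probability flowing into n from each neighbor, split by edge weight.
            inflow = sum(p[m] * w / strength[m] for m, w in adj[n].items())
            nxt[n] = (1 - r) * inflow + r * p0[n]
        diff = sum(abs(nxt[n] - p[n]) for n in nodes)  # L1-norm change
        p = nxt
        if diff < tol:
            break
    return p


# Toy path graph A - B - C - D with a single seed gene (hypothetical).
adj = {
    "A": {"B": 1.0},
    "B": {"A": 1.0, "C": 1.0},
    "C": {"B": 1.0, "D": 1.0},
    "D": {"C": 1.0},
}
scores = random_walk_with_restart(adj, seeds=["A"])
ranked = sorted(scores, key=scores.get, reverse=True)
print(ranked)
```

With a restart probability as high as 0.7 the scores decay quickly with distance from the seeds, so the ranking stays close to the seed neighborhood.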

Validation:

- Perform pathway enrichment analysis (Reactome, KEGG) on the module genes using hypergeometric test (FDR correction, q < 0.05).

- Compare module genes with known drug targets from DrugBank.

Protocol 2.2: In Silico Prioritization of Druggable Pathways from a Module

Objective: To rank pathways within a disease module based on druggability and experimental evidence.

Procedure:

- Pathway Extraction:

- Input the disease module gene list into the Enrichr API.

- Retrieve enriched pathways from KEGG 2021, Reactome 2022, and WikiPathways databases. Filter for terms with adjusted p-value < 0.01.

Druggability Scoring:

- For each enriched pathway, calculate a Druggability Index (DI):

- DI = (Number of genes in pathway that are known drug targets in DrugBank / Total genes in pathway) * 100.

- Add a penalty for essential genes (from OGEE database) to account for potential toxicity.

- Incorporate a score for the availability of chemical probes (from ChEMBL) for non-targeted genes in the pathway.
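The base Druggability Index is simple arithmetic, sketched below. Only the DI ratio itself comes from the protocol; the essential-gene penalty and chemical-probe score are not specified in enough detail to reproduce, so they are left out. The gene sets are toy inputs and do not reflect actual DrugBank annotations.

```python
def druggability_index(pathway_genes, drug_targets):
    """DI = 100 * (pathway genes that are known drug targets) / (pathway size)."""
    pathway = set(pathway_genes)
    if not pathway:
        return 0.0
    return 100.0 * len(pathway & set(drug_targets)) / len(pathway)


# Toy inputs (hypothetical target annotations, for illustration only).
known_targets = {"PIK3CA", "AKT1", "MTOR", "EGFR"}
pathways = {
    "Pathway_1": ["PIK3CA", "AKT1", "MTOR", "PTEN"],
    "Pathway_2": ["CTNNB1", "APC", "AXIN1", "GSK3B", "EGFR"],
}

ranking = sorted(
    ((name, druggability_index(genes, known_targets)) for name, genes in pathways.items()),
    key=lambda item: item[1],
    reverse=True,
)
for name, di in ranking:
    print(name, di)
```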

Prioritization Output:

- Generate a ranked list of pathways based on DI.

- Create a sub-network visualization highlighting the top pathway and its connections to the disease seed genes.

Visualizations

Workflow for Network-Based Disease Module Detection & Prioritization

Example Druggable Pathway (PI3K-AKT-mTOR) with Inhibitors

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Network-Based Multi-Omics Research

| Item Name | Vendor/Provider | Function in Research |

|---|---|---|

| STRING Database | EMBL | Provides comprehensive, scored protein-protein interaction data for network construction. |

| DisGeNET | GRIB (IMIM-UPF) | Curated platform of gene-disease associations for seeding disease modules. |

| Cytoscape Software | Open Source | Primary platform for network visualization, analysis, and plugin deployment (e.g., CytoHubba). |

| igraph R Library | CRAN | Core library for efficient graph theory computations and algorithm implementation. |

| DrugBank Database | University of Alberta | Annotated database of drug targets and drug-like compounds for druggability assessment. |

| Enrichr Web Tool | Ma'ayan Lab | Integrated resource for gene set enrichment analysis across hundreds of pathway libraries. |

| GTEx/TCGA Portals | NIH | Primary sources for tissue-specific and disease-specific transcriptomic data. |

| ChEMBL Database | EMBL-EBI | Database of bioactive molecules with drug-like properties for target chemistry assessment. |

Building the Integrated Model: Core Methodologies and Translational Applications

Data Preprocessing and Normalization Strategies for Heterogeneous Omics Datasets

Within the framework of a thesis on network-based multi-omics integration for drug discovery research, harmonizing disparate omics datasets is a foundational step. Heterogeneous data from genomics, transcriptomics, proteomics, and metabolomics present distinct technical and statistical challenges, including variations in scale, dynamic range, measurement noise, and batch effects. Effective preprocessing and normalization are prerequisites for constructing robust biological networks and deriving actionable insights for therapeutic target identification and biomarker discovery.

Core Preprocessing Challenges in Heterogeneous Omics

Each omics layer has specific data characteristics that necessitate tailored preprocessing prior to integration.

Table 1: Characteristics and Primary Challenges of Major Omics Data Types

| Omics Layer | Typical Data Form | Primary Preprocessing Challenges |

|---|---|---|

| Genomics (e.g., SNP, WGS) | Discrete counts, allele frequencies | Population stratification, sequencing depth bias, GC-content bias, rare variant handling. |

| Transcriptomics (e.g., RNA-seq) | High-dimensional count data | Library size differences, composition bias, gene length dependence, zero inflation. |

| Proteomics (e.g., LC-MS/MS) | Continuous intensity/spectral counts | Missing values (MNAR), dynamic range compression, batch effects, peptide-to-protein rollup. |

| Metabolomics (e.g., NMR, MS) | Continuous spectral intensities | Peak alignment, strong batch/run-order effects, heterogeneous variance, normalization to internal standards. |

| Epigenomics (e.g., ChIP-seq) | Read coverage/peak calls | Background noise, regional biases, input control normalization. |

Foundational Normalization Strategies

Normalization aims to remove unwanted technical variation to make samples comparable.

Transcriptomics-Specific Normalization

Protocol 3.1.1: TMM Normalization for Bulk RNA-seq Data

- Input: Raw gene-level read count matrix (genes x samples).

- Filtering: Remove genes with low counts (e.g., CPM < 1 in >90% of samples).

- Reference Sample: Select a reference sample (e.g., the one with upper quartile closest to the mean across all samples).

- Calculate Scaling Factors: For each sample k, compute the Trimmed Mean of M-values (TMM) relative to the reference.

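- M-value: M_g = log₂( (count_gk / N_k) / (count_gr / N_r) ), where count_gk is the read count of gene g in sample k, N_k is the library size of sample k, and r denotes the reference sample.

- A-value: A_g = 0.5 * log₂( (count_gk / N_k) * (count_gr / N_r) )

- Trim M-values by 30% and A-values by 5%.

- The scaling factor for sample k is `2^(weighted mean of trimmed M-values)`.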

- Adjust Libraries: Multiply each sample's total library size (N_k) by its scaling factor to obtain an "effective library size."

- Output: Normalized counts per million (CPM) or counts for downstream differential expression (using effective library sizes in statistical models).
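The TMM steps above can be sketched for a single sample against a reference. This is a simplified illustration, not edgeR's `calcNormFactors`: the trimming uses plain rank cut-offs, the precision weights use the usual delta-method approximation, and the final rescaling of factors across samples is omitted. The function name and toy counts are hypothetical.

```python
import math


def tmm_factor(obs, ref, m_trim=0.30, a_trim=0.05):
    """Simplified TMM scaling factor of sample `obs` relative to `ref`.

    obs, ref : raw read counts per gene, in the same gene order.
    """
    n_obs, n_ref = sum(obs), sum(ref)
    genes = []
    for y_k, y_r in zip(obs, ref):
        if y_k == 0 or y_r == 0:
            continue  # M and A are undefined when either count is zero
        m = math.log2((y_k / n_obs) / (y_r / n_ref))
        a = 0.5 * math.log2((y_k / n_obs) * (y_r / n_ref))
        # Precision weight: inverse of the approximate variance of M.
        w = 1.0 / ((n_obs - y_k) / (n_obs * y_k) + (n_ref - y_r) / (n_ref * y_r))
        genes.append((m, a, w))

    n = len(genes)

    def kept_after_trim(column, fraction):
        # Indices that survive trimming `fraction` of genes from each tail.
        order = sorted(range(n), key=lambda i: genes[i][column])
        cut = int(n * fraction)
        return set(order[cut:n - cut])

    keep = kept_after_trim(0, m_trim) & kept_after_trim(1, a_trim)
    total_w = sum(genes[i][2] for i in keep)
    mean_m = sum(genes[i][0] * genes[i][2] for i in keep) / total_w
    return 2 ** mean_m


# Toy counts (hypothetical): one gene is strongly up-regulated in the sample.
reference = [100] * 10
sample = [100] * 9 + [1000]

factor = tmm_factor(sample, reference)
effective_library_size = sum(sample) * factor
print(round(factor, 4), round(effective_library_size, 1))
```

In the toy data the single up-regulated gene inflates the raw library size, and trimming removes it from the estimate, so the effective library size falls back to that of the unchanged genes.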

Proteomics-Specific Normalization

Protocol 3.2.1: Median Centering with Imputation for Label-Free Quantification (LFQ) Data

- Input: Protein abundance matrix (proteins x samples), often as log₂-transformed intensities.

- Missing Value Imputation (if required for normalization method): Apply a two-step approach:

- MNAR Imputation: For data Missing Not At Random (low-abundance dropout), impute with values drawn from a down-shifted distribution (e.g., normal distribution with mean = 2.5 SDs below the sample mean).

- MAR Imputation: For data Missing At Random, impute with values from a randomized but reasonable distribution (e.g., normal distribution around the sample mean).

- Median Normalization: For each sample i, calculate the median protein abundance `M_i` across all proteins. Compute the global median `M_global` across all sample medians. The adjustment factor for sample i is `M_global - M_i`. Add this factor to all abundances in sample i.

- Output: Median-centered, imputed log₂ intensity matrix ready for statistical testing.
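The median-centering step can be sketched as follows. This example covers only the normalization, not the imputation: missing values are represented as `None` and simply skipped when computing medians, which is an assumption of this sketch rather than part of the protocol. The sample names and intensities are made up.

```python
from statistics import median


def median_center(samples):
    """Shift each sample so its median equals the global median.

    samples : dict mapping sample name -> list of log2 intensities
              (None marks a missing value and is left untouched).
    """
    sample_medians = {
        name: median(v for v in values if v is not None)
        for name, values in samples.items()
    }
    global_median = median(sample_medians.values())
    return {
        name: [
            None if v is None else v + (global_median - sample_medians[name])
            for v in values
        ]
        for name, values in samples.items()
    }


# Toy log2 LFQ intensities (hypothetical).
raw = {
    "s1": [20.0, 21.0, 22.0],
    "s2": [22.0, 23.0, 24.0],
    "s3": [18.0, None, 20.0],
}
centered = median_center(raw)
print(centered)
```

Because the data are on a log₂ scale, adding the adjustment factor corresponds to multiplying the raw intensities by a constant per sample.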

Cross-Omic Scaling and Batch Effect Correction

After platform-specific normalization, data must be co-scaled for integration.

Protocol 4.1: ComBat for Empirical Bayes Batch Correction

- Prerequisite: Perform omics-specific normalization first. Data should be in a continuous scale (e.g., log-transformed).

- Model Specification: Define the model matrix for biological covariates of interest (e.g., disease state). Specify the batch variable (e.g., sequencing run, processing date).

- Parameter Estimation: For each feature (gene, protein), use linear regression to estimate batch-associated shifts, borrowing information across features via empirical Bayes.

- Adjustment: Remove the estimated batch effect from the data, preserving biological variation associated with the covariates of interest.

- Validation: Inspect PCA plots before and after correction. Batch clustering should be diminished.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Multi-omics Preprocessing

| Item | Function in Preprocessing/Normalization |

|---|---|

| UMI (Unique Molecular Identifier) Kits (e.g., from 10x Genomics, SMART-seq) | Labels individual mRNA molecules pre-amplification to correct for PCR duplicate bias in transcriptomics. |

| SIS/SILAC/AQUA Peptide Standards | Spike-in known quantities of isotopically labeled peptides/proteins for absolute quantification and normalization control in targeted proteomics. |

| Pooled Quality Control (QC) Samples | A sample created by pooling aliquots from all experimental samples, run repeatedly across batches to monitor and correct for technical drift. |

| Internal Standards (for Metabolomics) | Chemical compounds (e.g., deuterated analogs) added to all samples to correct for sample preparation and instrument variation. |

| Reference RNA/DNA Samples (e.g., ERCC, MAQC) | Synthetic spike-ins with known concentrations used to construct calibration curves and assess dynamic range. |

| Batch-aware Analysis Software (e.g., `sva`/`ComBat` in R, Harmony) | Computational tools specifically designed to diagnose and statistically remove batch effects while preserving biological signal. |

Visualization of Workflows and Relationships

Diagram 1: Multi-omics preprocessing and normalization workflow.

Diagram 2: Strategy mapping for heterogeneous omics data normalization.

Within the paradigm of network-based multi-omics integration for drug discovery, constructing robust, biologically interpretable networks is the foundational step. These networks model interactions between molecular entities (e.g., genes, proteins, metabolites) across genomic, transcriptomic, proteomic, and metabolomic layers. The choice of algorithm—correlation, Bayesian, or machine learning (ML)-based—directly influences the network's topology, predictive power, and ultimate utility in identifying druggable targets and biomarkers. This document provides application notes and standardized protocols for implementing these key network construction approaches.

Correlation-Based Network Construction

Correlation networks infer relationships based on co-expression or co-abundance patterns across samples. They are undirected, with edge weights representing the strength of linear or non-linear association.

Protocol 1.1: Weighted Gene Co-expression Network Analysis (WGCNA)

Application: Identifies modules of highly correlated genes from transcriptomic data (e.g., RNA-seq from disease vs. normal tissues).

Materials & Workflow:

- Input Data: `N x M` matrix of normalized gene expression values (N genes, M samples).

- Similarity Matrix: Calculate pairwise biweight midcorrelation or Spearman correlation for all gene pairs → `S[i,j]`.

- Adjacency Matrix: Transform similarity into adjacency using a power function. The unsigned form is `A[i,j] = |S[i,j]|^β`; the signed form is `A[i,j] = ((1 + S[i,j]) / 2)^β`. The soft-thresholding power (β) is chosen based on the scale-free topology criterion.

- Topological Overlap Matrix (TOM): Compute TOM to minimize spurious connections: `TOM[i,j] = (Σ_u A[i,u]A[u,j] + A[i,j]) / (min(k_i, k_j) + 1 - A[i,j])`, where `k` is node connectivity.

- Module Detection: Perform hierarchical clustering on TOM-based dissimilarity (`1-TOM`). Dynamic tree cut identifies gene modules.

- Module Trait Association: Correlate module eigengenes (1st principal component of module expression) with phenotypic traits to identify biologically relevant modules.
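The adjacency and TOM formulas can be checked on a tiny matrix. The sketch below is a pure-Python illustration of the unsigned variant, not the WGCNA package itself; the function names, the 3 x 3 similarity matrix, and the choice of β = 2 are assumptions chosen to keep the numbers readable.

```python
def soft_threshold(S, beta=6):
    """Unsigned adjacency A[i][j] = |S[i][j]|^beta, with a zero diagonal."""
    n = len(S)
    return [
        [0.0 if i == j else abs(S[i][j]) ** beta for j in range(n)]
        for i in range(n)
    ]


def topological_overlap(A):
    """TOM[i][j] = (sum_u A[i][u]*A[u][j] + A[i][j]) / (min(k_i, k_j) + 1 - A[i][j])."""
    n = len(A)
    k = [sum(row) for row in A]  # node connectivity (diagonal is zero)
    tom = [[1.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            shared = sum(A[i][u] * A[u][j] for u in range(n) if u != i and u != j)
            tom[i][j] = (shared + A[i][j]) / (min(k[i], k[j]) + 1 - A[i][j])
    return tom


# Toy similarity matrix for three genes (hypothetical correlations).
S = [
    [1.0, 0.9, 0.1],
    [0.9, 1.0, 0.2],
    [0.1, 0.2, 1.0],
]
A = soft_threshold(S, beta=2)
TOM = topological_overlap(A)
dissimilarity = [[1 - TOM[i][j] for j in range(3)] for i in range(3)]
for row in TOM:
    print([round(x, 3) for x in row])
```

The `1 - TOM` matrix is what would be passed to hierarchical clustering in the module-detection step.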

Key Research Reagent Solutions:

| Reagent/Resource | Function in Protocol |

|---|---|

| Normalized RNA-seq Count Matrix | Primary input; ensures comparability across samples. |

| `WGCNA` R Package | Implements core algorithms for correlation, adjacency, TOM, and module detection. |

| High-Performance Computing Cluster | Enables computation of large similarity matrices (e.g., >20,000 genes). |

| Phenotypic Trait Data Table | Essential for correlating network modules to clinical/disease outcomes. |

Table 1: Example Module-Trait Associations in a Disease Cohort

| Module (Color) | # Genes | Correlation with Disease Severity | p-value | Putative Hub Gene |

|---|---|---|---|---|

| Blue | 1205 | 0.82 | 3.2e-12 | STAT3 |

| Turquoise | 892 | -0.75 | 8.5e-09 | PPARG |

| Brown | 650 | 0.41 | 0.003 | MYC |

Diagram Title: WGCNA Workflow for Module Discovery

Bayesian Network Inference

Bayesian Networks (BNs) infer directed, probabilistic dependency graphs, representing potential causal relationships. They are powerful for integrating heterogeneous data and modeling regulatory hierarchies.

Protocol 2.1: Bayesian Network Learning with Multi-Omics Priors

Application: Reconstructs directed regulatory networks from integrated multi-omics data (e.g., SNP, methylation, expression).

Materials & Workflow:

- Input Data: `N x M` matrix of continuous molecular data (discretized for some algorithms). Priors: Integrate known interactions from databases (e.g., STRING, KEGG) as prior probabilities.

- Structure Learning: Use score-based (e.g., Bayesian Information Criterion - BIC) or constraint-based (e.g., PC algorithm) methods to learn the DAG structure (`G`).

- Score-based (Greedy Search): Maximize a score function: `Score(G) = log P(Data | G) - f(N) * |G|`, where `f(N)` is a penalty term.

- Hybrid (MMHC): Runs a constraint-based step to draft a skeleton, followed by score-based optimization.

- Parameter Learning: For a fixed structure `G`, estimate conditional probability distributions (CPDs) for each node given its parents (e.g., using Maximum Likelihood Estimation).

- Bootstrapping & Robustness: Repeat learning on resampled data (n=100-500 bootstraps). Calculate edge confidence as the frequency of its appearance.

- Validation: Use held-out data for likelihood evaluation or compare predicted causal targets with siRNA/CRISPR screening results.
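The bootstrap edge-confidence calculation reduces to counting how often each directed edge is recovered. The sketch below assumes the structure learning has already been run on each resample (for example with `bnlearn`) and that each learned network is available as a set of (parent, child) pairs; the four toy networks are invented for illustration.

```python
from collections import Counter


def edge_confidence(bootstrap_networks):
    """Fraction of bootstrap networks in which each directed edge appears.

    bootstrap_networks : list of iterables of (parent, child) tuples,
                         one per resampled dataset.
    """
    counts = Counter(
        edge for network in bootstrap_networks for edge in set(network)
    )
    n = len(bootstrap_networks)
    return {edge: count / n for edge, count in counts.items()}


# Toy output of four bootstrap runs (hypothetical learned structures).
runs = [
    {("TP53", "CDKN1A"), ("EGFR", "MAPK1")},
    {("TP53", "CDKN1A"), ("EGFR", "MAPK1")},
    {("TP53", "CDKN1A"), ("MAPK1", "EGFR")},
    {("TP53", "CDKN1A")},
]
confidence = edge_confidence(runs)
high_confidence = {e: c for e, c in confidence.items() if c >= 0.85}
print(confidence)
print(high_confidence)
```

Edge direction is kept as-is here, so an edge learned in the reverse orientation counts as a different edge; whether to pool both orientations is a separate design decision.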

Key Research Reagent Solutions:

| Reagent/Resource | Function in Protocol |

|---|---|

| `bnlearn` R Package / PyMC3 Python Library | Provides algorithms for structure and parameter learning. |

| Prior Knowledge Database (e.g., STRING, TRRUST) | Supplies biologically plausible edges to constrain search space. |

| High-Memory Workstation (≥128 GB RAM) | Necessary for bootstrap analyses on large node sets. |

| Discretization Tool (e.g., `discretize` in bnlearn) | Preprocesses continuous omics data for certain BN algorithms. |

Table 2: High-Confidence Bayesian Network Edges in a Cancer Pathway

| Source Node (Parent) | Target Node (Child) | Edge Confidence (%) | Data Type (Source) |

|---|---|---|---|

| TP53 (Mutation) | CDKN1A (Expression) | 98 | Genomic -> Transcriptomic |

| EGFR (Phosphorylation) | MAPK1 (Phosphorylation) | 95 | Phosphoproteomic |

| Promoter Methylation | BRCA1 (Expression) | 87 | Epigenomic -> Transcriptomic |

Diagram Title: Bayesian Network Learning with Multi-Omics Integration

Machine Learning-Based Network Construction

ML approaches, particularly graph neural networks (GNNs) and regularized regression, can model complex, non-linear interactions and integrate diverse feature sets.

Protocol 3.1: Network Inference via Graph Neural Network Autoencoder

Application: Learns latent representations for nodes (genes/proteins) to predict missing links, especially effective for heterogeneous multi-omics graphs.

Materials & Workflow:

- Graph Representation: Define initial graph: Nodes = molecular entities. Initial edges = prior knowledge (e.g., PPI). Node features = concatenated multi-omics profiles (e.g., expression, copy number, mutation status).

- Model Architecture: Implement a Graph Autoencoder (GAE) or Variational GAE.

- Encoder (GNN Layers): Uses message passing (e.g., Graph Convolutional Networks - GCN) to generate node embeddings `Z = GNN(X, A)`, where `X` is the feature matrix and `A` is the initial adjacency.

- Decoder: Reconstructs the adjacency matrix via inner product: `Â = σ(Z * Z^T)`, where `σ` is the logistic sigmoid.

- Training: Minimize reconstruction loss between the predicted `Â` and a target adjacency matrix (e.g., derived from known pathways). Use negative sampling for non-edges.

- Prediction & Validation: Rank potential new edges by decoder scores. Validate top predictions with external experimental databases (e.g., BioGRID) or functional enrichment analysis (e.g., GO term over-representation).
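The decoder and ranking steps can be illustrated without a deep learning framework. The sketch below assumes the node embeddings `Z` have already been produced by a trained encoder; the embedding values, node names, and helper functions are hypothetical, and the encoder and training loop are not shown.

```python
import math


def decode_adjacency(Z):
    """Inner-product decoder: A_hat[i][j] = sigmoid(z_i . z_j)."""
    def sigmoid(x):
        return 1.0 / (1.0 + math.exp(-x))

    return [
        [sigmoid(sum(a * b for a, b in zip(z_i, z_j))) for z_j in Z]
        for z_i in Z
    ]


def rank_candidate_edges(Z, names, known_edges):
    """Rank node pairs that are not already known edges by decoder score."""
    A_hat = decode_adjacency(Z)
    known = {frozenset(edge) for edge in known_edges}
    candidates = [
        ((names[i], names[j]), A_hat[i][j])
        for i in range(len(names))
        for j in range(i + 1, len(names))
        if frozenset((names[i], names[j])) not in known
    ]
    return sorted(candidates, key=lambda item: item[1], reverse=True)


# Toy two-dimensional embeddings (hypothetical; a real encoder would learn these).
names = ["G1", "G2", "G3"]
Z = [[1.0, 0.0], [2.0, 0.0], [-1.0, 0.5]]

predictions = rank_candidate_edges(Z, names, known_edges=[("G1", "G2")])
for pair, score in predictions:
    print(pair, round(score, 3))
```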

Key Research Reagent Solutions:

| Reagent/Resource | Function in Protocol |

|---|---|

| PyTorch Geometric or Deep Graph Library | Frameworks for building and training GNN models. |

| Multi-Omics Feature Matrix (Aligned by Sample ID) | Provides rich, heterogeneous node features. |

| GPU (e.g., NVIDIA A100/A6000) | Accelerates training of deep GNN models. |

| Gold-Standard Interaction Set (e.g., pathway members) | Serves as positive training labels and validation set. |

Table 3: GNN-Predicted Novel Interactions for Target PIK3CA

| Predicted Interactor | Decoder Score (Probability) | Supporting Evidence (External DB) | Functional Relevance |

|---|---|---|---|

| IRS2 | 0.96 | Co-complex in BioGRID | Insulin signaling crosstalk |

| RPTOR | 0.91 | None (novel) | mTOR pathway regulation |

| AKT1S1 | 0.88 | Genetic interaction in yeast | AKT signaling modulation |

Diagram Title: Graph Autoencoder for Link Prediction

Integrated Application in Drug Discovery

The constructed networks serve as scaffolds for multi-omics integration. Key downstream analyses include:

- Identification of Driver Nodes: Using centrality measures (degree, betweenness) on integrated networks to pinpoint key regulatory genes.

- Module-Based Biomarker Discovery: Correlating network module activity with patient stratification or treatment response.

- Drug Target Prioritization: Proximity analysis in the network between disease modules and known drug targets.

- Mechanism of Action Elucidation: Inferring signaling pathways from directed edges in Bayesian or causal networks.

Protocol 4.1: Target Prioritization via Network Proximity

- Inputs: A background network (G) from any method above; a set of disease genes (D) from GWAS or differential expression; a set of known drug targets (T).

- Distance Calculation: For each drug target `t` in `T`, compute the average shortest path distance to all disease genes `d` in `D` within network `G`.

- Proximity Metric: Calculate a `z-score` by comparing the observed average distance to a null distribution generated by randomly sampling degree-matched nodes.

- Prioritization: Rank drugs/targets by proximity `z-score` (more negative = closer in network). Validate top candidates via in silico docking or literature mining for known efficacy.

Table 4: Network Proximity of Approved Drugs to an Alzheimer's Disease Module

| Drug (Target) | Proximity z-score to AD Module | Clinical Trial Phase (for AD)* | Network Source |

|---|---|---|---|

| Liraglutide (GLP1R) | -3.21 | Phase 3 | Integrated Multi-Omics BN |

| Metformin (PRKAAs) | -2.87 | Phase 2 | WGCNA Co-expression |

| Sirolimus (mTOR) | -2.45 | Preclinical | GNN-Predicted Network |

*Clinical trial phase as listed on ClinicalTrials.gov.

1. Introduction

Network-based multi-omics integration is a cornerstone of modern drug discovery. It enables the construction of holistic models of disease by connecting molecular layers (genomics, transcriptomics, proteomics, metabolomics) within their biological context. This document provides application notes and detailed protocols for the spectrum of integration techniques, from foundational to cutting-edge, within a thesis focused on identifying novel drug targets and biomarkers.

2. Data Integration Techniques: A Comparative Overview

The choice of integration method depends on data complexity, the biological question, and the desired output model.

Table 1: Comparison of Multi-omics Integration Techniques

| Technique | Principle | Advantages | Limitations | Ideal Use Case |

|---|---|---|---|---|

| Early Fusion (Concatenation) | Feature vectors from each omics layer are simply joined. | Simple, fast, preserves all input data. | Assumes feature independence; prone to "curse of dimensionality"; ignores network structure. | Preliminary analysis with few, correlated omics datasets. |

| Kernel/Matrix Fusion | Datasets are transformed into similarity matrices (kernels) and combined. | Handles non-linear relationships; can integrate heterogeneous data types. | Kernel choice is critical; result can be hard to interpret biologically. | Integrating sequence, expression, and clinical data for patient stratification. |

| Network Diffusion | Propagates information (e.g., gene scores) across a prior biological network (PPI, pathways). | Leverages known biology; robust to noise in individual datasets. | Reliant on quality of prior network; can dilute specific signals. | Prioritizing disease genes from GWAS or differential expression lists. |

| Graph Neural Networks (GNNs) | Learns low-dimensional representations of nodes (genes/proteins) by aggregating features from network neighbors. | Captures network topology and node features; powerful for prediction and clustering. | Requires substantial data; risk of overfitting; "black box" nature. | Predicting novel drug-target interactions or protein functions in a cellular interactome. |

3. Detailed Experimental Protocols

Protocol 3.1: Early Fusion for Patient Subtyping

Objective: To identify distinct patient subtypes by concatenating mRNA expression and DNA methylation data.

- Data Preprocessing: For N patients, normalize mRNA expression (from RNA-Seq) to TPM and log2-transform. For methylation data (from arrays/seq), use beta values. Perform per-feature standardization (z-score) on each dataset separately.

- Feature Selection: Reduce dimensionality. Select the top 5,000 most variable genes and the top 5,000 most variable CpG sites (by standard deviation).

- Concatenation: For each patient i, create a combined feature vector: `Patient_i = [Gene1_z, ..., Gene5000_z, CpG1_z, ..., CpG5000_z]`. This results in a matrix of size N x 10,000.

- Clustering: Apply non-linear dimensionality reduction (UMAP, t-SNE) followed by density-based clustering (e.g., HDBSCAN) on the concatenated matrix.

- Validation: Assess cluster robustness via silhouette score and validate against clinical outcomes (e.g., survival analysis).

Protocol 3.2: GNN-based Drug Target Prediction

Objective: To predict novel protein targets for a disease using a multi-omics-informed biological network.

- Graph Construction:

- Nodes: Proteins/genes.

- Edges: Protein-protein interactions from consensus databases (STRING, BioGRID). Prune for high-confidence (combined score > 0.7).

- Node Features: Create a feature vector for each gene node by concatenating:

- Normalized differential expression score (log2FC) from relevant transcriptomics.

- Genetic association score (e.g., -log10(p-value) from GWAS).

- Essentiality score (e.g., CRISPR knockout effect from DepMap).

- Model Setup: Implement a Graph Convolutional Network (GCN) or Graph Attention Network (GAT).

- Input: Adjacency matrix (A) and node feature matrix (X).

- Architecture: Two graph convolutional layers with ReLU activation, followed by a dropout layer and a final linear layer with sigmoid activation for binary classification (target/non-target).

- Training: Use known drug-target pairs (from DrugBank, ChEMBL) as positive labels. Generate negative labels by random sampling from non-interacting pairs. Split data into train/validation/test sets (70/15/15). Use binary cross-entropy loss and Adam optimizer.

- Prediction & Validation: Rank predicted novel targets by model confidence. Validate top candidates through in silico docking studies and literature mining for pathway relevance.

4. Visualizations

Title: Network-Based Multi-Omics Integration Workflow

Title: GNN Message Passing Between Two Nodes

5. The Scientist's Toolkit: Key Research Reagents & Resources

Table 2: Essential Resources for Network-based Multi-omics Integration

| Resource | Function | Example/Tool |

|---|---|---|

| High-Confidence Interaction Database | Provides the foundational biological network (edges) for graph construction. | STRING, BioGRID, Human Protein Reference Database (HPRD). |

| Omics Data Repository | Source of node features (genomic variants, expression, epigenetic marks). | The Cancer Genome Atlas (TCGA), Gene Expression Omnibus (GEO), ProteomicsDB. |

| Curated Drug-Target Database | Provides gold-standard labels for supervised GNN training and validation. | DrugBank, ChEMBL, Therapeutic Target Database (TTD). |

| Graph Deep Learning Framework | Libraries for building, training, and evaluating GNN models. | PyTorch Geometric (PyG), Deep Graph Library (DGL), Spektral (TensorFlow). |

| Biological Network Analysis Suite | For network diffusion, centrality analysis, and module detection. | Cytoscape, igraph, NetworkX. |

| High-Performance Computing (HPC) Cluster | Essential for training complex GNN models on large, genome-scale networks. | Local SLURM cluster, cloud computing (AWS, GCP). |

The integration of genomics, transcriptomics, proteomics, and metabolomics data into biological networks provides a systems-level framework for understanding disease. A core objective of this thesis is to leverage this network-based, multi-omics integration to identify and prioritize novel therapeutic targets. This application note details a methodology that combines two powerful network-based metrics—Network Proximity and Centrality Analysis—to rank candidate proteins or genes from integrated omics data based on their predicted efficacy and essentiality within the disease network.

Core Principles & Definitions

- Network Proximity: Measures the topological distance between a set of candidate targets and a known disease module (a set of proteins/genes associated with the disease). Shorter aggregate distances suggest the target is likely to perturb the disease mechanism more effectively.

- Centrality Analysis: Identifies nodes (e.g., proteins) that are topologically "important" within the integrated network. High-centrality nodes are often essential for network integrity and function.

- Integrated Multi-Omics Network: A heterogeneous network constructed by combining:

- Nodes: Proteins/genes from protein-protein interaction (PPI) databases, enriched with differentially expressed genes (transcriptomics), altered proteins (proteomics), and key metabolites (metabolomics).

- Edges: Physical/molecular interactions, functional associations, and metabolic reactions.

Experimental Protocol: Target Prioritization Workflow

Protocol 1: Construction of the Integrated Multi-Omics Network

Objective: To build a comprehensive, context-specific biological network.

Materials & Input Data:

- Disease-associated gene list (from GWAS, DisGeNET, OMIM).

- Differentially expressed genes (DEGs) from RNA-seq.

- Altered protein abundances from mass spectrometry.

- Significantly changed metabolites from metabolomics profiling.

- A high-quality reference interaction network (e.g., from STRING, BioGRID, HumanBase).

Procedure:

- Data Mapping: Map all omics-derived entities (genes, proteins, metabolites) to their corresponding gene symbols or UniProt IDs.

- Network Extraction: Query the reference interaction network to extract the union of all first-neighbor interactions for the mapped entities. This creates a "seed network."

- Network Integration & Pruning:

- Retain only interactions with a confidence score ≥ 0.7 (or species/context-specific cutoff).

- Annotate nodes with omics data labels (e.g., "DEG", "Disease Gene").

- For metabolite nodes, connect them to their enzyme-encoding genes.

- Output: A connected, undirected graph `G(V, E)`, where V is the set of nodes and E is the set of edges.

Protocol 2: Calculation of Network Proximity

Objective: To quantify the closeness of candidate targets T to the disease module D.

Procedure:

- Define Sets:

- Disease Module `D`: List of confirmed disease-associated genes.

- Candidate Targets `T`: List of candidate genes/proteins from your analysis (e.g., upstream regulators, novel hits from CRISPR screen).

- Compute Shortest Path Lengths: For every pair of nodes `(t, d)` where `t ∈ T` and `d ∈ D`, calculate the shortest path distance `d(t, d)` in network `G`.

- Calculate Network Proximity `d_{TD}`: Use the metric defined by Guney et al. (2016): `d_{TD} = (1/|T|) * Σ_{t∈T} min_{d∈D} d(t, d)`

- Interpretation: The average shortest distance from each candidate target to its nearest disease gene. Lower `d_{TD}` indicates higher proximity.

- Statistical Significance: Generate an empirical null distribution by randomly selecting `|T|` nodes from the network 1000 times and calculating their proximity to `D`. Compute a Z-score and p-value for the observed `d_{TD}`.
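The closest-distance proximity and its empirical significance can be sketched with a breadth-first search. The example follows the protocol's uniform random sampling of `|T|` nodes; it assumes a connected, unweighted graph stored as a dictionary of neighbor sets. All function names and the eight-node path graph are hypothetical.

```python
import random
from collections import deque
from statistics import mean, stdev


def bfs_distances(adj, source):
    """Unweighted shortest-path distances from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adj[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def closest_proximity(adj, targets, disease):
    """d_TD: mean over targets of the distance to the nearest disease gene."""
    total = 0
    for t in targets:
        dist = bfs_distances(adj, t)
        total += min(dist[d] for d in disease if d in dist)
    return total / len(targets)


def proximity_significance(adj, targets, disease, n_random=1000, seed=0):
    """Observed d_TD with a Z-score and empirical p-value from random node sets."""
    rng = random.Random(seed)
    nodes = sorted(adj)
    observed = closest_proximity(adj, targets, disease)
    null = [
        closest_proximity(adj, rng.sample(nodes, len(targets)), disease)
        for _ in range(n_random)
    ]
    z = (observed - mean(null)) / stdev(null)
    p = sum(x <= observed for x in null) / n_random
    return observed, z, p


# Toy path graph n0 - n1 - ... - n7 (hypothetical network).
names = [f"n{i}" for i in range(8)]
adj = {n: set() for n in names}
for a, b in zip(names, names[1:]):
    adj[a].add(b)
    adj[b].add(a)

observed, z, p = proximity_significance(adj, targets=["n2"], disease=["n0", "n1"])
print(observed, round(z, 2), round(p, 3))
```

Sampling uniformly ignores the fact that hub nodes are closer to everything on average; replacing `rng.sample` with degree-matched sampling is a common refinement.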

Protocol 3: Centrality Analysis

Objective: To identify topologically central nodes within the integrated network G.

Procedure:

- Calculate Multiple Centrality Metrics for each node `v` in `G`:

- Betweenness Centrality: `C_B(v) = Σ_{s≠v≠t} (σ_{st}(v) / σ_{st})`, where `σ_{st}` is the total number of shortest paths from node s to t, and `σ_{st}(v)` is the number of those paths passing through v.

- Degree Centrality: `C_D(v) = deg(v) / (n-1)`, where `deg(v)` is the number of connections of node v and `n` is the number of nodes in `G`.

- Eigenvector Centrality: A measure of a node's influence based on the centrality of its neighbors.

- Rank Aggregation: Rank nodes separately by each centrality metric. Use a rank aggregation method (e.g., Robust Rank Aggregation) to generate a final, consolidated centrality rank for each node.

- Output: A ranked list of all nodes by their aggregated centrality score.

Protocol 4: Integrated Prioritization Score

Objective: To generate a final priority score combining Proximity and Centrality.

Procedure:

- Normalize Scores: For each candidate target `t` in `T`:

- Normalize proximity: `NP(t) = 1 - (d_{TD}(t) / max(d_{TD}(all T)))`. (Higher is better).

- Normalize aggregated centrality rank: `NC(t) = 1 - (rank(t) / |V|)`. (Higher is better).

- Compute Combined Score: Apply a weighted sum. `Priority Score(t) = w * NP(t) + (1-w) * NC(t)`, where `w` is a weight (typically 0.6-0.7 to favor proximity).

- Final Ranking: Sort candidate targets in `T` by their descending Priority Score.
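The normalization and weighted sum can be worked through on a small example. The target names, distances, ranks, and network size below are invented for illustration and are unrelated to any table in this document; the function assumes at least one target has a non-zero distance so that the maximum is not zero.

```python
def priority_scores(proximity, centrality_rank, n_nodes, w=0.65):
    """Combine normalized proximity and centrality rank into a priority score.

    proximity       : dict target -> distance to the nearest disease gene
    centrality_rank : dict target -> aggregated centrality rank (1 = most central)
    n_nodes         : |V|, number of nodes in the integrated network
    """
    d_max = max(proximity.values())  # assumed to be greater than zero
    scores = {}
    for target, d in proximity.items():
        np_t = 1 - d / d_max                          # normalized proximity
        nc_t = 1 - centrality_rank[target] / n_nodes  # normalized centrality
        scores[target] = w * np_t + (1 - w) * nc_t
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Toy inputs (hypothetical).
proximity = {"T1": 1, "T2": 2, "T3": 4}
centrality_rank = {"T1": 10, "T2": 1, "T3": 5}

ranking = priority_scores(proximity, centrality_rank, n_nodes=100)
for target, score in ranking:
    print(target, round(score, 4))
```

Note that the target with the largest distance always receives `NP = 0`, so its score depends on centrality alone.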

Data Presentation

Table 1: Example Output of Target Prioritization for Hypothetical Disease X

| Candidate Target (Gene Symbol) | Network Proximity (d_{TD}) | Proximity Z-Score | Proximity p-value | Aggregated Centrality Rank (1=Highest) | Final Priority Score (w=0.65) | Final Rank |

|---|---|---|---|---|---|---|

| GENE_A | 1.2 | -3.45 | 0.0003 | 5 | 0.891 | 1 |

| GENE_B | 1.8 | -2.10 | 0.018 | 2 | 0.872 | 2 |

| GENE_C | 3.1 | -0.85 | 0.198 | 1 | 0.655 | 3 |

| GENE_D | 2.5 | -1.65 | 0.049 | 45 | 0.523 | 4 |

| GENE_E | 4.2 | +0.90 | 0.815 | 3 | 0.410 | 5 |

Note: Lower d_{TD} and Z-score, and higher centrality rank (lower number) are favorable.

Visualization

Diagram Title: Workflow for Network-Based Target Prioritization

Diagram Title: Network Proximity of Targets T1 & T2 to Disease Module

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Example Product/Resource | Primary Function in Protocol |

|---|---|---|

| Interaction Database | STRING, BioGRID, HumanBase | Provides the foundational protein-protein and functional association network for constructing the integrated network (G). |

| Network Analysis Suite | Cytoscape with plugins (NetworkAnalyzer, CytoNCA), igraph (R/Python) | Performs graph operations, calculates shortest paths (for proximity), and computes all centrality metrics. |

| Statistical Software | R, Python (SciPy/NumPy) | Used for generating null distributions, calculating Z-scores and p-values for proximity, and rank aggregation. |

| Disease Gene Database | DisGeNET, OMIM, GWAS Catalog | Provides the curated set of known disease-associated genes to define the Disease Module (D). |

| Omics Data Repository | GEO, PRIDE, MetaboLights | Source of context-specific transcriptomic, proteomic, and metabolomic datasets for network annotation and filtering. |

| Rank Aggregation Tool | RobustRankAggreg (R package) | Integrates ranked lists from multiple centrality measures into a single, robust aggregated rank. |

Application Notes

Within the framework of a thesis on network-based multi-omics integration for drug discovery, computational drug repurposing via disease module mapping offers a powerful, systems-level strategy. It operates on the principle that disease phenotypes arise from perturbations in localized, interconnected regions (modules) within comprehensive molecular interaction networks. The core hypothesis is that a therapeutic compound can counteract a disease if its protein targets significantly intersect with, or lie close to, the corresponding disease module within the network.

Key Quantitative Data

Table 1: Representative Databases for Network-Based Drug Repurposing

| Database Name | Primary Content Type | Key Use in Pipeline | Estimated Size (Representative) |

|---|---|---|---|

| STRING | Protein-protein interactions (physical, functional) | Constructing background interactome | ~24.6 million proteins, 3.1 billion interactions (v11.0) |

| DrugBank | Drug-target associations, drug info | Mapping compound profiles | ~16,000 drug entries, ~5,500 protein targets |

| DisGeNET | Gene-disease associations (variant, curated) | Defining disease seed genes | ~1.8 million associations (v7.0) |

| GWAS Catalog | SNP-trait associations | Prioritizing disease-associated genes | ~350,000 associations (2024 release) |

| LINCS L1000 | Gene expression signatures post-perturbation | Connectivity mapping | ~1.3 million signatures for 42,000 compounds |

Table 2: Common Network Proximity & Enrichment Metrics

| Metric | Formula (Conceptual) | Interpretation for Repurposing |

|---|---|---|

| Nearest Distance (d) | Mean, over drug targets t in T, of the shortest path length from t to its nearest disease gene in D | d well below the random expectation (often d < ~2) suggests potential efficacy; d at or above the random expectation gives no network-level support. |

| Separation (s) | s = ⟨d(T→D)⟩ - ½[⟨d(T→T)⟩ + ⟨d(D→D)⟩] | s < 0 indicates that the target and disease neighborhoods overlap in the network, a positive repurposing signal. |

| Module Overlap (MO) | MO = n(T ∩ D) / sqrt(n(T) * n(D)), where n(X) is the number of genes in set X | MO > random expectation indicates direct mechanistic overlap. |

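| Z-score of Proximity | Z = (⟨d⟩_actual - ⟨d⟩_random) / σ_random, where ⟨d⟩_random and σ_random are the mean and standard deviation of d over the random gene sets | Z < -1.65 (one-sided p < 0.05) indicates significant proximity. |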
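
To make the separation and module overlap metrics in Table 2 concrete, the sketch below computes both on a toy network. It makes several assumptions not stated in the table: each ⟨d⟩ term is taken as a mean of nearest-node distances (the convention commonly used for network separation), the network is unweighted and connected, and each set contains at least two nodes. The helper names and the toy path graph are hypothetical.

```python
from collections import deque
from math import sqrt


def bfs_distances(adj, source):
    """Unweighted shortest-path lengths from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def closest_distances(adj, from_set, to_set, exclude_self=False):
    """For each node in from_set, the distance to its nearest node in to_set."""
    result = []
    for a in from_set:
        dist = bfs_distances(adj, a)
        options = [dist[b] for b in to_set
                   if b in dist and not (exclude_self and a == b)]
        result.append(min(options))
    return result


def _mean(values):
    return sum(values) / len(values)


def separation(adj, T, D):
    """s = <d_TD> - (<d_TT> + <d_DD>) / 2, using nearest-node distances."""
    d_tt = closest_distances(adj, T, T, exclude_self=True)
    d_dd = closest_distances(adj, D, D, exclude_self=True)
    # Between-set term: every node in T and in D looks at the other set.
    d_td = closest_distances(adj, T, D) + closest_distances(adj, D, T)
    return _mean(d_td) - 0.5 * (_mean(d_tt) + _mean(d_dd))


def module_overlap(T, D):
    """Shared genes divided by the geometric mean of the two set sizes."""
    return len(T & D) / sqrt(len(T) * len(D))


# Hypothetical toy network: a path a - b - c - d - e - f
edges = [("a", "b"), ("b", "c"), ("c", "d"), ("d", "e"), ("e", "f")]
adj = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)

s_far = separation(adj, {"a", "b"}, {"e", "f"})   # sets at opposite ends
s_near = separation(adj, {"b", "c"}, {"c", "d"})  # sets sharing node c
mo = module_overlap({"b", "c"}, {"c", "d"})
print(s_far, s_near, mo)
```

On this toy graph the distant sets give a positive separation and the overlapping sets give a negative one, matching the interpretation column of the table.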

Experimental Protocols

Protocol 1: Constructing a Disease-Specific Module

Objective: To define a connected sub-network representing the molecular context of a disease.

- Seed Gene Collection: Compile a high-confidence gene set for the target disease (e.g., "Idiopathic Pulmonary Fibrosis"). Use DisGeNET (score > 0.3) and GWAS Catalog (p < 5 × 10⁻⁸). Result: Seed Set D.

- Background Interactome: Download a comprehensive, integrated interaction network (e.g., from STRING, combining physical and functional edges). Keep only edges with a combined confidence score > 700 (on STRING's 0-1000 scale).

- Module Expansion: Use a network diffusion algorithm (e.g., Random Walk with Restart) starting from D across the background interactome. Set restart probability (r) = 0.7. Run until convergence.

- Module Definition: Rank all genes by their steady-state probability. Select the top 200 genes or those with probability > 1e-5. This forms the Disease Module M_d.
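
The module expansion and definition steps can be illustrated with a small power-iteration version of Random Walk with Restart. This is a sketch under stated assumptions, not the implementation of any particular package: the network is unweighted and has no isolated nodes, the walker restarts uniformly over the seed genes, and the function names, tolerance, and toy graph are hypothetical.

```python
def random_walk_with_restart(adj, seeds, r=0.7, tol=1e-10, max_iter=1000):
    """Iterate p <- (1 - r) * W p + r * p0 until the L1 change falls below tol.

    adj:   dict node -> set of neighbours (undirected, no isolated nodes)
    seeds: set of seed genes; p0 is uniform over them
    r:     restart probability (0.7 in the protocol)
    """
    nodes = list(adj)
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: r * p0[n] for n in nodes}
        for u in nodes:
            # Each node passes its walking mass equally to its neighbours.
            share = (1.0 - r) * p[u] / len(adj[u])
            for v in adj[u]:
                nxt[v] += share
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p


def top_module(steady_state, k):
    """Return the k nodes with the highest steady-state probability."""
    ranked = sorted(steady_state, key=steady_state.get, reverse=True)
    return ranked[:k]


# Hypothetical toy network: a path a - b - c - d with a single seed gene "a"
adj = {"a": {"b"}, "b": {"a", "c"}, "c": {"b", "d"}, "d": {"c"}}
p = random_walk_with_restart(adj, seeds={"a"}, r=0.7)
module = top_module(p, k=2)
print(module, {n: round(v, 4) for n, v in p.items()})
```

With a high restart probability such as 0.7, most of the probability mass stays on and immediately around the seeds, so the resulting module remains tightly local; lowering r spreads the signal further into the network.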

Protocol 2: Calculating Network Proximity for a Drug

Objective: To quantitatively assess the relationship between a drug's targets and the disease module.

- Drug Target Profile: For a candidate drug (e.g., "Bosentan"), retrieve its known protein targets from DrugBank. Result: Target Set T.

- Compute Shortest Paths: For each target t in T and each disease seed gene d in D, calculate the shortest path distance in the background interactome (from Protocol 1, Step 2).

- Calculate Proximity Metrics:

- Compute the nearest distance ⟨d⟩ = mean( min(dist(t, D)) for all t in T ).

- Compute the separation s (see Table 2).

- Statistical Validation: Generate 1000 random gene sets of size |T|, each matched to the degree distribution of the real targets so that hub-rich target sets are compared against equally hub-rich random sets. Re-calculate ⟨d⟩ for each. Derive an empirical p-value = (number of random sets with ⟨d⟩_random ≤ ⟨d⟩_actual) / 1000.

- Interpretation: A significantly low ⟨d⟩, negative s, and p < 0.05 constitute a positive computational prediction for repurposing.
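
The proximity calculation and its permutation test can be sketched as follows. The sketch simplifies the protocol in ways that should be read as assumptions: random nodes are matched to each target by exact degree (real interactomes usually need degree bins so that every bin holds enough nodes), the network is unweighted and connected, and the function names and the ring-shaped toy network are hypothetical.

```python
import random
from collections import defaultdict, deque
from statistics import mean, pstdev


def distance_to_nearest(adj, sources):
    """Multi-source BFS: distance from every node to its nearest source node."""
    dist = {s: 0 for s in sources}
    queue = deque(sources)
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def proximity_test(adj, targets, disease_genes, n_random=1000, seed=0):
    """Closest distance <d> with a degree-matched permutation test."""
    rng = random.Random(seed)
    to_disease = distance_to_nearest(adj, disease_genes)
    d_actual = mean(to_disease[t] for t in targets)

    # Group nodes by exact degree so random sets mirror the targets' degrees.
    by_degree = defaultdict(list)
    for node, nbrs in adj.items():
        by_degree[len(nbrs)].append(node)

    d_random = []
    for _ in range(n_random):
        chosen = set()
        for t in sorted(targets):
            pool = [n for n in by_degree[len(adj[t])] if n not in chosen]
            chosen.add(rng.choice(pool))
        d_random.append(mean(to_disease[n] for n in chosen))

    z = (d_actual - mean(d_random)) / pstdev(d_random)
    p = sum(d <= d_actual for d in d_random) / n_random
    return d_actual, z, p


# Hypothetical toy network: a ring of 30 nodes (every node has degree 2)
adj = {i: {(i - 1) % 30, (i + 1) % 30} for i in range(30)}
d_actual, z, p = proximity_test(adj, targets={3, 4}, disease_genes={0, 1, 2})
print(f"<d> = {d_actual}, z = {z:.2f}, empirical p = {p:.3f}")
```

Running one multi-source search from the disease genes, rather than one search per target, keeps the 1000 randomizations cheap because every random set reuses the same distance table.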

Mandatory Visualization

Diagram 1: Core workflow for drug repurposing via disease modules

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Protocol |

|---|---|

| Cytoscape with stringApp | Desktop software for network visualization, analysis, and direct query of STRING database. Used for module inspection and manual curation. |

| igraph (R/Python) | Powerful network analysis library for calculating shortest paths, degree distributions, and running randomizations at scale. |

| NetworkX (Python) | Standard library for creating, manipulating, and studying complex networks. Core for building custom analysis pipelines. |

| RandomWalkRestartMH & diffuStats (R) | Specialized packages for performing Random Walk with Restart and other network diffusion algorithms on biological networks. |

| LINCS L1000 Signature Search | Tool for validating predictions by checking if drug-induced gene expression signatures oppose disease-associated signatures. |

| Gene Set Enrichment Analysis (GSEA) | Method to test if drug targets show significant overlap with disease module genes beyond random expectation. |

Within the framework of network-based multi-omics integration for drug discovery, identifying robust biomarkers is critical for stratifying patient populations into clinically relevant subgroups. This enables precision medicine by predicting disease progression, therapeutic response, and patient prognosis. This document outlines a standardized protocol for discovering and validating predictive multi-omics biomarkers.

1.0 Experimental Workflow for Biomarker Discovery and Validation

The following table summarizes the key phases and their quantitative outputs.

Table 1: Phases of Predictive Multi-Omics Biomarker Development

| Phase | Primary Objective | Key Data Types | Typical Cohort Size (N) | Success Metrics |

|---|---|---|---|---|

| 1. Discovery | Identify candidate features & signatures | Genomics, Transcriptomics, Proteomics, Metabolomics | 100 - 500 patients | P-value < 0.05 (adjusted); AUC > 0.75 |

| 2. Prioritization | Filter via biological networks & pathways | Multi-omics data + prior knowledge (e.g., PPI, pathways) | N/A | Network centrality score; Pathway enrichment FDR < 0.1 |

| 3. Technical Validation | Confirm measurement accuracy | Targeted assays (qPCR, MS, immunoassays) | 50 - 100 patients | Correlation R² > 0.8; CV < 20% |

| 4. Clinical Validation | Assess predictive power in independent cohorts | Clinical endpoints + validated assays | 200 - 1000+ patients | Hazard Ratio (HR) with 95% confidence interval excluding 1; Kaplan-Meier log-rank p < 0.01; AUC > 0.7 |

2.0 Detailed Experimental Protocols

Protocol 2.1: Network-Based Multi-Omics Integration for Biomarker Prioritization

Objective: To move beyond individual omics features by integrating data into molecular networks to identify robust, functionally coherent biomarker modules.

Materials: Multi-omics datasets (e.g., RNA-Seq counts, LC-MS protein intensities), high-performance computing cluster, bioinformatics software (R, Python).

Procedure:

- Data Preprocessing: Independently normalize and scale each omics dataset. Perform quality control and batch correction.

- Differential Analysis: For each omics layer, identify features significantly associated with the clinical outcome (e.g., responder vs. non-responder) using appropriate statistical tests (e.g., DESeq2 for RNA-Seq, limma for proteomics).

- Network Construction: Construct a multi-omics interaction network.

- Use a prior knowledge network (e.g., STRING PPI, pathway databases) as a scaffold.

- Map significant features from all omics layers onto this scaffold.

- Optionally, refine edges using data-driven correlation measures (e.g., WGCNA for co-expression).

- Module Detection & Scoring: Apply community detection algorithms (e.g., Louvain, Infomap) to identify densely connected multi-omics modules. Score each module based on:

- Statistical Enrichment: Aggregated p-values of constituent features.

- Topological Importance: Average centrality (e.g., betweenness) of features.

- Functional Coherence: Enrichment of pathways relevant to the disease mechanism (using tools like g:Profiler).

- Biomarker Signature Derivation: Extract the top-ranked multi-omics module(s). Represent it as a signature, which could be the first principal component (PC1) of the module's features or a risk score calculated from a multivariate model.
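
The module scoring step can be illustrated with a short sketch that ranks predefined modules by statistical enrichment and topological importance. Several choices here are assumptions made for brevity: p-values are aggregated with Fisher's method (which assumes independent tests, so correlated features within a module will make the combined value optimistic), degree centrality stands in for betweenness, community detection and pathway enrichment are omitted, and all names and toy values are hypothetical.

```python
from math import exp, log


def fisher_combined_p(pvalues):
    """Fisher's method: X = -2 * sum(ln p) follows chi-square with 2k d.o.f.

    For even degrees of freedom the chi-square survival function has the
    closed form exp(-x/2) * sum_{i<k} (x/2)^i / i!, so only the standard
    library is needed. All p-values must be > 0.
    """
    k = len(pvalues)
    half_x = -sum(log(p) for p in pvalues)
    term, total = 1.0, 1.0
    for i in range(1, k):
        term *= half_x / i
        total += term
    return exp(-half_x) * total


def mean_degree_centrality(adj, members):
    """Average of degree / (n - 1) over the module's features."""
    n = len(adj)
    return sum(len(adj[m]) / (n - 1) for m in members) / len(members)


def score_modules(adj, modules, pvalues):
    """Return one row per module, most significant module first."""
    rows = []
    for name, members in modules.items():
        rows.append({
            "module": name,
            "combined_p": fisher_combined_p([pvalues[m] for m in members]),
            "mean_centrality": mean_degree_centrality(adj, members),
        })
    return sorted(rows, key=lambda row: row["combined_p"])


# Hypothetical toy network, module assignment, and per-feature p-values
edges = [("g1", "g2"), ("g2", "g3"), ("g1", "g3"),
         ("g3", "g4"), ("g4", "g5"), ("g5", "g6")]
adj = {}
for u, v in edges:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)
modules = {"M1": ["g1", "g2", "g3"], "M2": ["g4", "g5", "g6"]}
pvalues = {"g1": 0.001, "g2": 0.01, "g3": 0.02,
           "g4": 0.2, "g5": 0.5, "g6": 0.04}

ranked = score_modules(adj, modules, pvalues)
for row in ranked:
    print(row)
```

In practice the two scores would be combined with the functional coherence term before selecting the top module, and the weighting between them is a study-specific choice.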

Protocol 2.2: Validation of a Proteomic Biomarker Panel via Immunoassays

Objective: To technically validate a shortlist of protein biomarkers identified from discovery-phase proteomics.

Materials: Patient serum/plasma samples (independent from discovery cohort), validated ELISA or multiplex immunoassay (e.g., Luminex) kits, plate reader, liquid handling robot.

Procedure:

- Sample Preparation: Thaw frozen serum/plasma samples on ice. Centrifuge at 10,000 × g for 10 minutes at 4°C to remove precipitates.

- Assay Execution: Perform the immunoassay in duplicate according to manufacturer's protocol. Include a standard curve of known concentrations on every plate.

- Data Acquisition & Quantification: Read plates. Generate a 4- or 5-parameter logistic curve from the standards to interpolate sample concentrations.

- Analytical Validation: Assess the assay's performance:

- Calculate the intra- and inter-assay Coefficient of Variation (%CV) for quality control samples. Accept if <15-20%.

- Determine the lower limit of quantification (LLOQ).

- Correlate the immunoassay measurements with the original discovery (e.g., mass spectrometry) intensities for the same samples. Require Pearson R > 0.7.
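
The quantification and analytical validation calculations can be sketched with the standard library alone. The sketch assumes the common 4-parameter logistic form y = d + (a - d) / (1 + (x / c)^b) and uses hypothetical, already-fitted curve parameters; the curve fitting itself (for example with a least-squares routine) is not shown, and all function names and numeric values are illustrative only.

```python
from math import sqrt
from statistics import mean, stdev


def four_pl(x, a, b, c, d):
    """4PL standard curve: a = signal at zero concentration, d = signal at
    saturating concentration, c = inflection point, b = slope factor."""
    return d + (a - d) / (1.0 + (x / c) ** b)


def inverse_four_pl(y, a, b, c, d):
    """Interpolate a concentration from a signal lying strictly between a and d."""
    return c * ((a - d) / (y - d) - 1.0) ** (1.0 / b)


def percent_cv(replicates):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * stdev(replicates) / mean(replicates)


def pearson_r(xs, ys):
    """Pearson correlation between paired measurements."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# Hypothetical fitted curve parameters and measurements
params = dict(a=0.05, b=1.2, c=250.0, d=2.5)
signal = four_pl(100.0, **params)              # signal of a 100 pg/mL standard
recovered = inverse_four_pl(signal, **params)  # should return ~100 pg/mL

cv = percent_cv([100.0, 110.0])                # duplicate wells of one QC sample
r = pearson_r([1.0, 2.0, 3.0, 4.0],            # discovery MS intensities
              [2.1, 3.9, 6.2, 7.8])            # immunoassay concentrations

print(f"recovered = {recovered:.1f}, CV = {cv:.2f}%, Pearson R = {r:.3f}")
print("CV acceptable:", cv < 20, "| concordance acceptable:", r > 0.7)
```

Signals outside the range spanned by the fitted asymptotes cannot be inverted, which is one practical reason samples above or below the standard curve are usually re-run at a different dilution rather than extrapolated.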

3.0 Visualizations

Title: Multi-omics biomarker discovery and validation workflow.

Title: Multi-omics biomarker network in a signaling pathway.

4.0 The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Multi-Omics Biomarker Studies

| Reagent / Material | Supplier Examples | Primary Function in Biomarker Workflow |

|---|---|---|

| TRIzol / QIAzol | Thermo Fisher, Qiagen | Simultaneous extraction of RNA, DNA, and proteins from precious tissue samples for multi-omics analysis. |

| TruSeq RNA/DNA Library Prep Kits | Illumina | Prepare high-quality, indexed sequencing libraries from nucleic acids for genomics and transcriptomics discovery. |

| TMTpro 16-plex Isobaric Labels | Thermo Fisher | Enable multiplexed, quantitative analysis of up to 16 samples in a single LC-MS/MS run for high-throughput proteomics. |

| Human XL Cytokine Luminex Discovery Assay | R&D Systems, Bio-Rad | Multiplex immunoassay for validating dozens of protein biomarkers simultaneously in serum/plasma with high sensitivity. |

| Seahorse XF Cell Mito Stress Test Kit | Agilent Technologies | Functional metabolic assay to validate biomarker hypotheses related to cellular energetics and mitochondrial function. |

| CITE-seq Antibodies (TotalSeq) | BioLegend | Allow simultaneous measurement of cell surface protein expression and transcriptomics in single-cell studies for deep stratification. |

| IPA (Ingenuity Pathway Analysis) / Metascape | Qiagen / Free Web Tool | Software for pathway and network analysis to prioritize biomarker candidates and interpret biological context. |

Navigating Computational Challenges: Troubleshooting Data and Network Pitfalls

Solving Batch Effects and Platform-Specific Artifacts in Integrated Datasets