Horizontal vs Vertical Multi-Omics Integration: Choosing the Right Strategy for Precision Medicine Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals navigating the two primary paradigms of multi-omics data integration. We explore the foundational concepts of horizontal (sample-matched) and vertical (feature-matched) integration, detail state-of-the-art methodologies and their applications in biomarker discovery and disease subtyping, address common computational and biological challenges, and offer a comparative validation framework. The goal is to empower scientists to select and optimize the appropriate integration strategy for robust, translatable biological insights in biomedical research.

Demystifying Multi-Omics Integration: A Primer on Horizontal and Vertical Strategies

Application Notes: Paradigm Definitions in Multi-Omics

Multi-omics integration is a cornerstone of systems biology, aiming to construct a comprehensive view of biological systems. Two principal paradigms govern the approach: Horizontal and Vertical Integration.

Horizontal Integration (HI): Also called "data-level" or "meta-omics" integration, HI involves the simultaneous analysis of multiple different omics datasets (e.g., genomics, transcriptomics, proteomics, metabolomics) acquired from the same set of biological samples. The goal is to identify correlated patterns and interactions across different molecular layers within a defined cohort, building network-level understanding.

Vertical Integration (VI): Also termed "feature-level" or "multi-scale" integration, VI focuses on tracing a biological signal or relationship (e.g., a genetic variant's effect) across multiple molecular levels for the same biological entity (e.g., a single gene or pathway). It connects causal chains from one molecular layer to the next (e.g., SNP → Gene Expression → Protein Abundance → Metabolite Level).

Quantitative Comparison of Integration Paradigms

Table 1: Core Characteristics of Horizontal vs. Vertical Integration

| Aspect | Horizontal Integration | Vertical Integration |
|---|---|---|
| Primary Goal | Discover coordinated patterns & networks across omics layers. | Establish causal, mechanistic flows across omics layers. |
| Sample Relationship | Multiple omics measured in the same cohort of samples. | Relationships traced for specific features across linked assays. |
| Temporal Dimension | Often cross-sectional (single time point). | Can incorporate longitudinal or perturbation time-series data. |
| Typical Methods | Multivariate statistics, similarity-based fusion, graph networks. | Bayesian networks, structural equation modeling, mechanistic models. |
| Key Challenge | Aligning technical noise and batch effects; heterogeneous data scales. | Requires a priori biological knowledge or linkage models. |
| Primary Output | Molecular subtypes, predictive biomarkers, inter-omics networks. | Mechanistic hypotheses, driver identification, pathway causality. |

Table 2: Common Computational Tools & Their Applications (2024)

| Tool/Package | Primary Paradigm | Key Function | Language |
|---|---|---|---|
| MOFA+ | Horizontal | Factor analysis for multi-view data. | R/Python |
| mixOmics | Horizontal | Multivariate exploration & integration. | R |
| DIABLO | Horizontal | Multi-omics data integration for classification (part of mixOmics). | R |
| MONGREL | Vertical | Multi-omics hierarchical regression for causal inference. | R/Stan |
| Multi-Omic Graphical Model | Both | Bayesian network learning across omics. | Python |
| CausalPath | Vertical | Infer causal signaling from phosphoproteomics & other data. | Web/Java |

Experimental Protocols

Protocol for a Horizontally Integrated Multi-Omics Cohort Study

Objective: To identify molecular subtypes of a disease (e.g., breast cancer) by integrating genomic, transcriptomic, and metabolomic data from the same patient tumor samples.

Workflow Summary:

- Sample Collection & Preparation: Collect tumor tissue biopsies from N=200 patients under standard operating procedures (SOPs). Aliquot tissue for DNA, RNA, and metabolite extraction.

- Multi-Omic Data Generation:

- Genomics (DNA): Perform Whole Exome Sequencing (WES) to identify somatic mutations and copy number variations. Use a platform like Illumina NovaSeq. Process with GATK best practices.

- Transcriptomics (RNA): Perform RNA-Seq (poly-A selected) on the same samples using an Illumina platform. Align reads to the reference genome (STAR) and quantify gene-level counts (featureCounts).

- Metabolomics: Perform untargeted Liquid Chromatography-Mass Spectrometry (LC-MS) on tissue lysates. Use both positive and negative ionization modes.

- Data Preprocessing & Normalization:

- Genomics: Create a binary mutation matrix (1/0 for presence/absence of non-silent mutations in driver genes) and a segmented copy number matrix.

- Transcriptomics: TMM normalization, log2-CPM transformation, and batch correction (e.g., using ComBat).

- Metabolomics: Peak alignment, missing value imputation (minimum value), log-transformation, and Pareto scaling.

- Horizontal Integration Analysis: Use the MOFA+ framework.

- Input: Genomic matrix (mutations), Transcriptomic matrix (log-CPM), Metabolomic matrix (scaled intensities).

- Train the MOFA model to decompose variation into a set of common latent factors.

- Cluster patients based on their factor values to define molecular subtypes.

- Interpret factors by identifying heavily weighted features (e.g., Factor 1 driven by TP53 mutations, immune gene expression, and lactate levels).
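MOFA+ fits a Bayesian group factor analysis model, which is more than a short example can reproduce. As a rough stand-in that yields the same kinds of outputs (shared sample factors, per-view feature weights, and per-view variance explained), the sketch below applies block-weighted PCA, in the spirit of multiple factor analysis, to sample-aligned views. It is not the MOFA+ algorithm, and the function names are hypothetical. The resulting factor scores could then be clustered with any standard method to define subtypes.

```python
import numpy as np

def standardize(X):
    """Center each feature and scale it to unit variance (constant features stay 0)."""
    X = np.asarray(X, dtype=float)
    sd = X.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0
    return (X - X.mean(axis=0)) / sd

def shared_factors(views, n_factors=3):
    """Block-weighted PCA over sample-aligned omics views.

    views: dict of view name -> samples x features array (identical row order).
    Returns sample factor scores, per-view feature weights (features x factors),
    and the fraction of each view's variance captured by each factor.
    """
    names = list(views)
    # Divide each standardized view by sqrt(#features) so wide views do not dominate.
    blocks = [standardize(views[v]) / np.sqrt(np.shape(views[v])[1]) for v in names]
    U, s, Vt = np.linalg.svd(np.hstack(blocks), full_matrices=False)
    factors = U[:, :n_factors] * s[:n_factors]
    weights, var_explained = {}, {}
    start = 0
    for name, B in zip(names, blocks):
        stop = start + B.shape[1]
        V_block = Vt[:n_factors, start:stop]              # factors x features
        weights[name] = V_block.T
        # ||s_k * u_k * v_k,block^T||^2 / ||B||^2 for each factor k
        var_explained[name] = s[:n_factors] ** 2 * (V_block ** 2).sum(axis=1) / (B ** 2).sum()
        start = stop
    return factors, weights, var_explained

def top_features(weights, factor, n=5):
    """Indices of the n features with the largest absolute weight on one factor."""
    return np.argsort(-np.abs(weights[:, factor]))[:n]
```

Ranking features by absolute weight mirrors the interpretation step above; the sign of a factor is arbitrary in both PCA and factor analysis, so only relative signs within a factor are meaningful.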

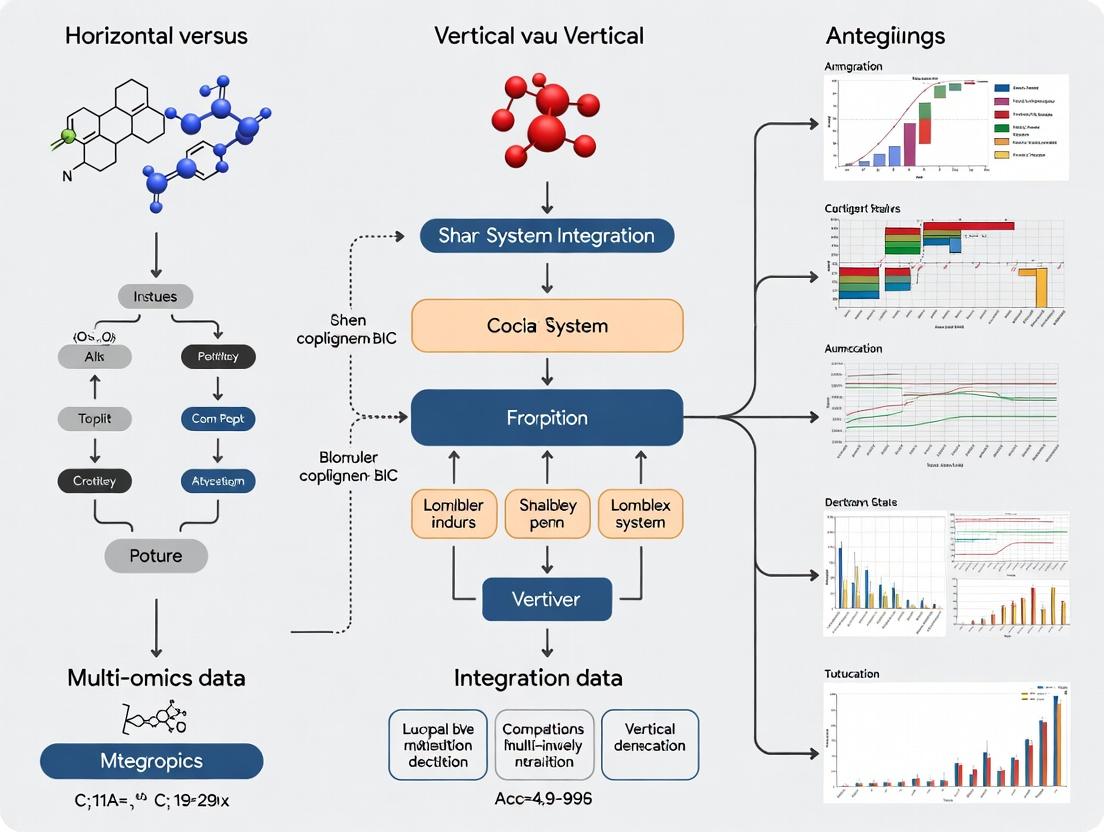

Title: Workflow for Horizontal Multi-Omics Integration

Protocol for a Vertical Integration Study on a Genetic Perturbation

Objective: To mechanistically trace the effects of a specific gene knockout (e.g., MYC) across the transcriptome, proteome, and phosphoproteome in a cell line model.

Workflow Summary:

- Perturbation & Experimental Design: Generate isogenic MYC knockout (KO) and wild-type (WT) control cell lines using CRISPR-Cas9. Culture biological replicates (n=6) for each condition.

- Multi-Layer Profiling from Same Culture:

- Transcriptome: Harvest cells for total RNA extraction and perform RNA-Seq (poly-A selected), preparing libraries with a kit such as Illumina TruSeq Stranded mRNA.

- Proteome & Phosphoproteome: From the same cell pellet, lyse the cells, digest the lysate with trypsin, and label peptides with Tandem Mass Tag (TMT) reagents for multiplexing.

- Global Proteome: Fractionate one aliquot of labeled peptides by high-pH reverse-phase HPLC and analyze by LC-MS/MS.

- Phosphoproteome: Enrich phosphorylated peptides from another aliquot using TiO2 or Fe-IMAC beads, then fractionate and analyze by LC-MS/MS.

- Data Processing:

- RNA-Seq: Differential expression analysis (e.g., DESeq2). Output: Log2 fold changes (KO vs WT) for genes.

- Proteomics: Process MS data with MaxQuant or SearchGUI and test differential abundance (e.g., limma). Output: Log2 fold changes for proteins and phosphosites.

- Vertical Integration Analysis: Construct a Bayesian Network or use CausalPath.

- Map features: MYC gene → MYC transcript → MYC protein.

- Input differential data into CausalPath with a prior knowledge network (e.g., SIGNOR, KEGG). The tool statistically tests for consistent downstream effects.

- Output: A candidate causal cascade consistent with the data, for example MYC KO leading to reduced E2F target transcripts, subsequently altered cell-cycle protein abundance, and finally changed phosphorylation of key CDK substrates.
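CausalPath's own algorithm is more involved than this, but the core idea of checking measured changes against the direction of prior-knowledge relations can be illustrated with a small, hypothetical sketch. Each prior edge carries a sign (+1 for activation or upregulation, -1 for inhibition), and an edge is kept when both endpoints change and the sign of the target's change equals the source's sign multiplied by the edge sign. A simple fold-change cut-off stands in here for proper significance testing; the function and parameter names are illustrative.

```python
from typing import Dict, List, Tuple

# (source, target, sign): +1 activates/increases, -1 inhibits/decreases
Edge = Tuple[str, str, int]

def _sign(x: float) -> int:
    return 1 if x > 0 else -1

def consistent_edges(edges: List[Edge], log2fc: Dict[str, float],
                     min_abs_fc: float = 1.0) -> List[Edge]:
    """Return prior edges whose observed KO-vs-WT changes match the edge sign."""
    hits = []
    for src, tgt, sign in edges:
        if src not in log2fc or tgt not in log2fc:
            continue                      # one endpoint was not measured
        fs, ft = log2fc[src], log2fc[tgt]
        if abs(fs) < min_abs_fc or abs(ft) < min_abs_fc:
            continue                      # change too small to interpret
        if _sign(ft) == _sign(fs) * sign:
            hits.append((src, tgt, sign))
    return hits
```

Chaining the kept edges (gene to transcript to protein to phosphosite) gives a cascade of the kind described above; edges that fail the check point to relations that are inactive or context-specific in this model.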

Title: Vertical Integration Tracing a Perturbation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Kits for Multi-Omics Integration Studies

| Item | Function & Application |
|---|---|
| AllPrep DNA/RNA/Protein Mini Kit (Qiagen) | Simultaneous co-isolation of genomic DNA, total RNA, and protein from a single tissue or cell sample. Critical for minimizing sample variance in HI studies. |
| Tandem Mass Tag (TMT) 16/18-plex (Thermo Fisher) | Isobaric labeling reagents for multiplexed quantitative proteomics. Allows combined analysis of up to 18 samples in one MS run, enabling robust VI across conditions with high precision. |
| TruSeq Stranded mRNA Library Prep Kit (Illumina) | Standardized library preparation for RNA-Seq. Ensures high-quality transcriptomic data, a foundational layer for both HI and VI. |
| KAPA HyperPrep Kit (Roche) | Flexible library prep for WES/WGS. Provides uniform coverage for genomic variant detection, a key input for integration. |
| TiO2 Mag Sepharose (Cytiva) or Fe-IMAC Beads | Magnetic beads for highly efficient phosphopeptide enrichment. Enables deep phosphoproteome coverage for vertical signaling studies. |
| Seahorse XFp / XFe96 Analyzer (Agilent) | Measures cellular metabolic fluxes (OCR, ECAR) in live cells. Functional metabolomic data for validating/grounding integrated molecular findings. |
| Single-Cell Multiome ATAC + Gene Exp. (10x Genomics) | Emerging technology allowing simultaneous assay of chromatin accessibility (ATAC) and gene expression in single nuclei. Represents the next frontier in horizontal integration. |

The integrative analysis of multi-omics data is a cornerstone of modern systems biology, pivotal for unraveling complex biological mechanisms in disease and therapeutics. The prevailing strategies are categorized as horizontal (sample-matched) and vertical (feature-matched) integration. Horizontal integration correlates multiple omics layers (e.g., transcriptomics, proteomics, metabolomics) across a common set of biological samples. Vertical integration connects different molecular layers along the central dogma (e.g., genomic variant to gene expression to protein abundance) for shared biological features or genes across potentially different sample cohorts. This application note details the experimental design, protocols, and analytical considerations for generating and utilizing these two distinct data structures.

Comparative Framework: Definitions and Applications

Table 1: Core Characteristics of Sample-Matched vs. Feature-Matched Designs

| Characteristic | Sample-Matched (Horizontal) Integration | Feature-Matched (Vertical) Integration |
|---|---|---|
| Primary Aim | Understand coordinated multi-layer changes across a cohort (e.g., patient stratification). | Establish causal or regulatory chains from genome to phenome for specific genes/pathways. |
| Sample Requirement | Identical samples subjected to multiple omics assays. | Samples can differ but must share relevant features (e.g., specific genetic variants). |
| Typical Data Structure | Multi-assay matrix: Samples (rows) x Multi-omics features (columns). | Linked datasets via feature anchors (e.g., gene ID, genomic coordinate). |
| Key Analytical Challenge | Batch effect correction across assay platforms, data scaling. | Harmonizing annotations, resolving context-specific (e.g., tissue) discordance. |
| Primary Application | Biomarker discovery, molecular subtyping, phenotypic prediction. | Mechanistic disease modeling, understanding GWAS hits, identifying drug targets. |
| Common Tools | MOFA+, DIABLO, mixOmics, Integrative NMF. | Multi-omics QTL mapping, PRIORitizE, NetWAS, linear mixed models. |
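The two data structures in the table can be contrasted with a small, hypothetical sketch. Sample-matched data are aligned by intersecting sample IDs so every view shares one row order, while feature-matched data are linked through a shared feature anchor (here a gene symbol), even when each layer comes from a different cohort. The function names and example keys are invented for illustration.

```python
from typing import Dict, List, Tuple

def align_samples(views: Dict[str, Dict[str, List[float]]]
                  ) -> Tuple[List[str], Dict[str, List[List[float]]]]:
    """Horizontal: keep samples present in every view, in one shared row order."""
    shared = sorted(set.intersection(*(set(v) for v in views.values())))
    return shared, {name: [rows[s] for s in shared] for name, rows in views.items()}

def link_features(layers: Dict[str, Dict[str, float]]) -> Dict[str, Dict[str, float]]:
    """Vertical: join per-gene summaries from each layer on the gene anchor."""
    genes = set.intersection(*(set(layer) for layer in layers.values()))
    return {g: {name: layer[g] for name, layer in layers.items()} for g in sorted(genes)}
```

In the horizontal case the patient is the join key and features stay layer-specific; in the vertical case the gene is the join key and each value may summarize a different study (for example an eQTL effect from one cohort and a pQTL effect from another).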

Experimental Protocols

Protocol 3.1: Generating a Sample-Matched Multi-Omics Dataset from Tumor Tissue

Objective: To extract DNA, RNA, and protein from the same tumor tissue sample for genomic, transcriptomic, and proteomic profiling.

Materials:

- Fresh-frozen tissue, or tissue stabilized in a preservation reagent such as RNAlater.

- AllPrep DNA/RNA/Protein Mini Kit (Qiagen).

- RNeasy MinElute Cleanup Kit (Qiagen).

- BCA Protein Assay Kit.

- DNase I.

- Platform-specific library prep kits (e.g., Illumina for WES/RNA-seq).

Procedure:

Tissue Homogenization:

- Weigh 10-30 mg of tissue. Place in a tube with lysis buffer and homogenize using a rotor-stator homogenizer. Keep lysate cool.

Simultaneous Extraction (AllPrep):

- Follow manufacturer's protocol. Lysate is loaded onto an AllPrep DNA spin column. Flow-through (contains RNA and protein) is saved.

- DNA Column: Wash and elute genomic DNA. Proceed to Whole Exome Sequencing (WES) library prep.

- RNA from Flow-Through: Add ethanol to flow-through, apply to RNeasy column. Perform on-column DNase I digestion. Wash and elute RNA. Assess integrity (RIN > 7). Proceed to RNA-seq library prep.

- Protein from Flow-Through: Precipitate protein from the RNA extraction flow-through using acetone. Resuspend pellet. Quantify via BCA assay. Proceed to proteomic preparation (e.g., tryptic digestion for LC-MS/MS).

Quality Control & Sequencing/Mass Spec:

- QC DNA (Fragment Analyzer), RNA (Bioanalyzer), and protein yield.

- Perform WES (150 bp paired-end), RNA-seq (e.g., 100M reads), and LC-MS/MS (e.g., TMT-labeled, data-dependent acquisition) using platform-standard protocols.

Protocol 3.2: Establishing a Feature-Matched Linkage from GWAS to Proteomics

Objective: To validate and characterize the functional protein-level consequences of a genetic variant identified in a Genome-Wide Association Study (GWAS).

Materials:

- GWAS summary statistics for a trait of interest.

- Genotyping data (e.g., SNP array) from a cohort with plasma proteomic data (e.g., SomaScan or Olink).

- PLINK software.

- R with the coloc and MendelianRandomization packages.

Procedure:

Variant Selection and Cohort Identification:

- Identify the lead SNP from the GWAS (p < 5e-8). Define its linkage disequilibrium (LD) block using a reference panel (e.g., 1000 Genomes).

- Identify an independent cohort where subjects have been genotyped (for the same SNP/region) and have quantified plasma protein levels (feature match = genomic region & gene).

Proteomic Quantitative Trait Locus (pQTL) Mapping:

- For each protein quantified on the proteomic platform, perform linear regression of protein abundance (normalized, log-transformed) on SNP genotype (additive model, coded as 0/1/2 copies of the effect allele), adjusting for covariates (age, sex, genetic principal components).

- A pQTL is considered significant if p < 0.05 / (number of proteins tested on the platform), i.e., a Bonferroni correction.
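The pQTL test above is an ordinary least-squares regression per protein followed by a Bonferroni threshold. A minimal NumPy sketch, assuming genotype dosages coded 0/1/2, a covariate matrix that has already been assembled (age, sex, principal components), and a normal approximation to the t distribution for the p-value (adequate at cohort scale; use the exact t distribution for small samples). The function name is hypothetical.

```python
import math
import numpy as np

def pqtl_scan(genotype, proteins, covariates, alpha=0.05):
    """Regress each protein on an additive SNP genotype plus covariates.

    genotype:   (n,) allele dosages 0/1/2
    proteins:   (n, p) normalized, log-transformed abundances
    covariates: (n, c) e.g. age, sex, genetic principal components
    Returns per-protein beta, SE, two-sided p-value and a Bonferroni flag.
    """
    g = np.asarray(genotype, dtype=float)
    Y = np.asarray(proteins, dtype=float)
    n = len(g)
    X = np.column_stack([np.ones(n), g, np.asarray(covariates, dtype=float)])
    XtX_inv = np.linalg.inv(X.T @ X)
    coef = XtX_inv @ X.T @ Y                     # (k, p) OLS coefficients
    resid = Y - X @ coef
    dof = n - X.shape[1]
    sigma2 = (resid ** 2).sum(axis=0) / dof      # residual variance per protein
    beta = coef[1]                               # genotype (additive) effect
    se = np.sqrt(sigma2 * XtX_inv[1, 1])
    z = beta / se
    # two-sided p-value from a normal approximation to the t distribution
    pvals = np.array([math.erfc(abs(t) / math.sqrt(2)) for t in z])
    threshold = alpha / Y.shape[1]               # Bonferroni over proteins tested
    return beta, se, pvals, pvals < threshold
```

Fitting all proteins in one matrix solve keeps the design matrix shared, which is why the covariates only need to be assembled once per SNP.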

Colocalization Analysis:

- Use coloc in R to assess whether the GWAS signal and the pQTL signal share a common causal variant. A high posterior probability (PP.H4 > 0.8) suggests colocalization.
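To make the PP.H4 criterion concrete, the sketch below reimplements, as we understand it, the single-causal-variant approximate Bayes factor calculation behind coloc's coloc.abf: Wakefield ABFs per SNP, combined into posterior probabilities for hypotheses H0 to H4. It assumes both studies report effect sizes and standard errors for the same SNPs in the same order, uses a prior effect SD of 0.15 for both traits (coloc scales this by the trait SD for quantitative traits; here traits are assumed standardized), and uses p1 = p2 = 1e-4 and p12 = 1e-5 as priors. It is an illustration only; use the coloc package for real analyses.

```python
import numpy as np

def log_abf(beta, se, prior_sd=0.15):
    """Wakefield log approximate Bayes factor for each SNP."""
    beta, se = np.asarray(beta, dtype=float), np.asarray(se, dtype=float)
    V = se ** 2
    r = prior_sd ** 2 / (prior_sd ** 2 + V)
    return 0.5 * np.log(1 - r) + 0.5 * r * (beta / se) ** 2

def logsumexp(x):
    m = np.max(x)
    return m + np.log(np.sum(np.exp(x - m)))

def coloc_abf(beta1, se1, beta2, se2, p1=1e-4, p2=1e-4, p12=1e-5):
    """Posterior probabilities [PP.H0, ..., PP.H4], one causal variant per trait."""
    l1, l2 = log_abf(beta1, se1), log_abf(beta2, se2)
    s1, s2, s12 = logsumexp(l1), logsumexp(l2), logsumexp(l1 + l2)
    # H3 sums over pairs of *different* SNPs: (sum1 * sum2) - (same-SNP terms)
    m = max(s1 + s2, s12)
    diff = max(np.exp(s1 + s2 - m) - np.exp(s12 - m), 0.0)
    with np.errstate(divide="ignore"):           # log(0) -> -inf is intended
        l_h3 = np.log(p1) + np.log(p2) + m + np.log(diff)
    lH = np.array([0.0, np.log(p1) + s1, np.log(p2) + s2, l_h3, np.log(p12) + s12])
    pp = np.exp(lH - lH.max())
    return pp / pp.sum()
```

A strong association at the same SNP in both studies drives PP.H4 toward 1, while strong associations at different SNPs drive PP.H3 toward 1 instead.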

Mendelian Randomization (MR):

- If a significant cis-pQTL is found and colocalizes, use the SNP as an instrumental variable in MR to test whether the protein has a causal effect on the GWAS trait, using the TwoSampleMR R package or the MR-Base platform.
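With a single cis-pQTL as the instrument, the MR estimate reduces to the Wald ratio: the SNP's effect on the trait divided by its effect on the protein. A minimal sketch, assuming both effects are expressed per copy of the same effect allele and using the common first-order standard error, which ignores uncertainty in the SNP-protein effect. The function name is illustrative.

```python
import math

def wald_ratio(beta_exposure, beta_outcome, se_outcome):
    """Single-instrument MR estimate of the protein's effect on the trait.

    beta_exposure: SNP effect on protein abundance (pQTL beta)
    beta_outcome:  SNP effect on the GWAS trait
    se_outcome:    standard error of beta_outcome
    """
    estimate = beta_outcome / beta_exposure
    se = se_outcome / abs(beta_exposure)                # first-order (delta-method) SE
    p = math.erfc(abs(estimate / se) / math.sqrt(2))    # two-sided normal p-value
    return estimate, se, p
```

For example, a pQTL beta of 0.5 and a GWAS beta of 0.1 (SE 0.02) give an estimate of 0.2 with a standard error of 0.04.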

Visualizing Integration Strategies and Workflows

Sample-Matched (Horizontal) Integration Workflow

Feature-Matched (Vertical) Integration Logic

The Scientist's Toolkit: Key Research Reagents & Platforms

Table 2: Essential Solutions for Multi-Omics Sample Processing

| Item | Function | Example Product/Brand |
|---|---|---|
| All-in-One Nucleic Acid/Protein Kits | Co-extraction of DNA, RNA, and protein from a single tissue lysate, preserving sample integrity. | Qiagen AllPrep, Norgen's All-in-One Purification Kit. |
| Single-Cell Multi-Omic Kits | Enable simultaneous profiling of transcriptome and epigenome from the same single cell. | 10x Genomics Multiome ATAC + Gene Expression, Parse Biosciences Single Cell Multiome. |
| High-Multiplex Immunoassays | Quantify thousands of proteins from minute sample volumes for large cohort proteomics. | SomaScan (SomaLogic), Olink Explore, Proximity Extension Assay. |
| Isobaric Mass Tag Kits | Multiplex samples for quantitative proteomics, increasing throughput and reducing batch effects. | TMT (Thermo Fisher), iTRAQ (SCIEX). |
| Spatial Multi-omics Platforms | Map transcriptomic and proteomic data within tissue architecture from the same section. | 10x Visium, NanoString GeoMx DSP, Akoya CODEX. |
| Cell-Free DNA/RNA Collection Tubes | Stabilize blood samples for downstream plasma-based genomic and transcriptomic assays. | Streck cfDNA BCT, PAXgene Blood ccfDNA Tube. |

Within the broader thesis on horizontal versus vertical multi-omics data integration, the choice of approach is a fundamental strategic decision. This document provides application notes and experimental protocols to guide researchers in selecting and implementing the appropriate methodology.

- Horizontal (Patient-Centric) Integration: Integrates multiple omics layers (e.g., genomics, transcriptomics, proteomics) across a cohort of patients or samples. The primary axis of integration is the biological subject, aiming to build a comprehensive, cross-omic profile for each individual to stratify populations, identify biomarkers, or understand inter-individual variability.

- Vertical (Pathway-Centric) Integration: Integrates multiple omics layers within a specific biological pathway, process, or system. The primary axis of integration is the biological mechanism, aiming to reconstruct detailed, causal flow of information (e.g., from genetic variant to mRNA to protein to metabolite) for a defined pathway.

Decision Framework: When to Use Each Approach

The following table summarizes the key objectives, applications, and data requirements that dictate the choice of approach.

Table 1: Decision Framework for Horizontal vs. Vertical Integration

| Aspect | Horizontal (Patient-Centric) Approach | Vertical (Pathway-Centric) Approach |
|---|---|---|
| Primary Objective | Identify patient subtypes, predictive/prognostic biomarkers, or comprehensive molecular signatures correlated with phenotype. | Elucidate mechanistic drivers, causal relationships, and regulatory dynamics within a specific biological system. |
| Core Question | "What are the multi-omic differences between patient groups A and B?" | "How does a genetic perturbation in Pathway X alter the transcriptome, proteome, and metabolome downstream?" |
| Ideal Use Case | Cohort studies (e.g., TCGA, clinical trials), population health, precision oncology, complex disease stratification. | Functional validation studies, pathway pharmacology, toxicology, understanding drug mechanism of action (MoA). |
| Typical Study Design | Many subjects/samples (n > 100), fewer omics layers (2-3), matched samples per subject. | Fewer experimental units (n < 20), deeper omics layers (3+), often with controlled perturbations (e.g., knock-out, inhibition). |
| Data Structure | Wide: Samples as rows, multi-omic features (e.g., mutations, genes, proteins) as columns. | Deep: Features linked to a pathway as rows, multi-omic measurements across conditions/time as columns. |
| Key Analytical Methods | Multi-omic clustering, supervised classification, multivariate regression, network-based stratification. | Pathway enrichment, multi-omic Bayesian networks, time-series integration, kinetic modeling. |
| Main Output | Patient clusters, multi-omic signatures, biomarker panels for diagnosis/stratification. | Annotated pathway maps with multi-omic measurements, predictive models of pathway flux. |

Application Notes & Experimental Protocols

Protocol 3.1: Implementing a Horizontal (Patient-Centric) Study

Objective: To identify multi-omic subtypes of a disease (e.g., breast cancer) from a cohort of patient tumors.

Workflow Summary: Sample Collection → Multi-omic Data Generation → Data Alignment & Preprocessing → Horizontal Integration & Clustering → Subtype Characterization & Validation.

Title: Horizontal Integration Workflow for Patient Stratification

Detailed Protocol Steps:

- Cohort & Sample Selection: Select a well-annotated cohort (e.g., n=200 patients) with matched tumor tissue. Ensure appropriate IRB approval and informed consent.

- Multi-Omic Data Generation:

- Genomics (WES): Extract tumor and matched germline DNA. Perform exome capture and sequencing on an Illumina platform (150bp paired-end, >100x coverage). Call variants (SNVs, Indels) using GATK best practices.

- Transcriptomics (RNA-Seq): Extract total RNA, assess RIN >7. Prepare stranded mRNA-seq libraries. Sequence to a depth of ~50 million reads per sample. Align to reference genome (STAR) and quantify gene expression (featureCounts).

- Proteomics (LC-MS/MS): Perform tissue lysis, protein digestion (trypsin), and peptide cleanup. Use TMTpro 16-plex labeling for multiplexing. Fractionate by high-pH reverse-phase HPLC. Analyze fractions by LC-MS/MS on an Orbitrap Eclipse. Identify and quantify proteins using SequestHT in Proteome Discoverer.

- Data Preprocessing: For each omics layer, perform quality control, normalization (e.g., VSN for proteomics, TMM for RNA-Seq), batch correction (ComBat), and feature reduction (e.g., removing low-variance features).

- Horizontal Integration: Use the patient ID as the primary key. Align the data into a list of matrices in which matrix i is an omics dataset with m patients (rows) and n_i features (columns). All matrices share the same row order (patients).

- Clustering Analysis: Apply the Similarity Network Fusion (SNF) algorithm.

- Calculate a patient similarity matrix W^(i) for each omic using Euclidean distance and a patient similarity kernel.

- Fuse all W^(i) into a single integrated patient network W.

- Apply spectral clustering on W to obtain patient cluster assignments (k=3-5).

- Subtype Characterization: Perform differential analysis (DESeq2 for RNA-Seq, limma for proteomics) between clusters. Conduct pathway enrichment (GSEA, MsigDB) on differential features. Correlate clusters with clinical outcomes (survival analysis).
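The SNF steps can be sketched in NumPy. This is a simplified reading of the published SNF algorithm rather than the SNFtool (R) or snfpy (Python) implementations: a scaled exponential kernel on Euclidean distances, K-nearest-neighbour local kernels, a fixed number of cross-diffusion iterations (renormalized and symmetrized each round, a choice made here for numerical stability), and normalized spectral clustering with a basic, deterministically initialized k-means. Views are assumed to be already normalized; K, mu, and t are illustrative defaults.

```python
import numpy as np

def affinity(X, K=10, mu=0.5):
    """Scaled exponential similarity kernel on pairwise Euclidean distances."""
    X = np.asarray(X, dtype=float)
    D = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    knn_mean = np.sort(D, axis=1)[:, 1:K + 1].mean(axis=1)   # column 0 is self
    eps = (knn_mean[:, None] + knn_mean[None, :] + D) / 3
    return np.exp(-D ** 2 / (mu * eps))

def full_kernel(W):
    """Row-normalized kernel with self-similarity fixed at 1/2."""
    P = W.copy()
    np.fill_diagonal(P, 0.0)
    P = P / (2 * P.sum(axis=1, keepdims=True))
    np.fill_diagonal(P, 0.5)
    return P

def local_kernel(W, K=10):
    """Keep each patient's K most similar entries (self included), row-normalized."""
    S = np.zeros_like(W)
    idx = np.argsort(-W, axis=1)[:, :K]
    rows = np.arange(W.shape[0])[:, None]
    S[rows, idx] = W[rows, idx]
    return S / S.sum(axis=1, keepdims=True)

def snf(views, K=10, mu=0.5, t=20):
    """Fuse per-view patient networks (at least two views) by cross-diffusion."""
    Ws = [affinity(X, K, mu) for X in views]
    Ss = [local_kernel(W, K) for W in Ws]
    Ps = [full_kernel(W) for W in Ws]
    for _ in range(t):
        updated = []
        for v, S in enumerate(Ss):
            others = np.mean([P for u, P in enumerate(Ps) if u != v], axis=0)
            P_v = S @ others @ S.T          # diffuse the other views through view v
            updated.append(full_kernel((P_v + P_v.T) / 2))
        Ps = updated
    fused = np.mean(Ps, axis=0)
    return (fused + fused.T) / 2

def spectral_clusters(W, k, n_iter=100):
    """Normalized spectral clustering with a deterministic k-means step."""
    W = (W + W.T) / 2
    d = W.sum(axis=1)
    L_sym = np.eye(len(W)) - W / np.sqrt(np.outer(d, d))
    _, vecs = np.linalg.eigh(L_sym)          # eigenvalues in ascending order
    U = vecs[:, :k]
    U = U / np.linalg.norm(U, axis=1, keepdims=True)
    centers = [U[0]]
    for _ in range(1, k):                    # farthest-point initialization
        dist = np.min([((U - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(U[np.argmax(dist)])
    centers = np.array(centers)
    for _ in range(n_iter):
        labels = np.argmin(((U[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1), axis=1)
        centers = np.array([U[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                            for j in range(k)])
    return labels
```

Cluster labels are arbitrary integers, so downstream comparisons (differential analysis, survival) should treat them as categories.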

The Scientist's Toolkit: Key Reagents for Protocol 3.1

| Item | Function | Example (Vendor) |
|---|---|---|
| AllPrep DNA/RNA/miRNA Kit | Simultaneous purification of genomic DNA and total RNA from a single tissue sample, ensuring matched multi-omic material. | Qiagen #80204 |
| TMTpro 16plex Label Reagent Set | Isobaric chemical tags for multiplexing up to 16 samples in a single LC-MS/MS run, reducing quantitative variability. | Thermo Fisher Scientific #A44520 |
| TruSeq DNA Exome & Stranded mRNA Prep Kits | Standardized library preparation kits for WES and RNA-Seq, ensuring reproducibility across large cohorts. | Illumina #20020616 / #20020595 |
| Sera-Mag Magnetic Beads | For PCR cleanup and library size selection; critical for efficient NGS library preparation. | Cytiva #29343052 |
| Trypsin, Sequencing Grade | High-purity protease for consistent and complete protein digestion prior to MS analysis. | Promega #V5111 |

Protocol 3.2: Implementing a Vertical (Pathway-Centric) Study

Objective: To delineate the multi-omic impact of inhibiting the MAPK/ERK signaling pathway in a cancer cell line model.

Workflow Summary: Pathway Selection & Perturbation → Multi-Omic Time-Course → Vertical Data Alignment → Causal Network Inference → Mechanistic Model.

Title: Vertical Integration Workflow for Pathway Analysis

Detailed Protocol Steps:

- Pathway Definition & Perturbation: Select a well-annotated pathway (e.g., KEGG MAPK signaling). Treat a sensitive cell line (e.g., A375 melanoma) with a specific MEK inhibitor (e.g., Trametinib, 100 nM). Include a DMSO vehicle control.

- Multi-Omic Time-Course Sampling: Harvest cells at multiple time points post-treatment (e.g., 0, 15min, 1h, 6h, 24h) in biological triplicate for each omic.

- Multi-Omic Data Generation:

- Phospho-Proteomics: Lyse cells, digest proteins, enrich phosphopeptides using Fe-NTA or TiO2 magnetic beads. Analyze by LC-MS/MS (Orbitrap). Quantify phosphosite levels (MaxQuant).

- Transcriptomics: Extract RNA and prepare sequencing libraries as in Protocol 3.1.

- Metabolomics: Perform methanol extraction of polar metabolites. Analyze by Hydrophilic Interaction Liquid Chromatography (HILIC) coupled to a high-resolution mass spectrometer (e.g., Q Exactive HF). Process with XCMS.

- Vertical Data Alignment: Map all measured entities (phosphosites, transcripts, metabolites) to the KEGG MAPK pathway map (hsa04010). Create a unified data table where rows are pathway components (e.g., "MAPK1", "ELK1") and columns are multi-omic measurements across time points and conditions.

- Causal Network Inference: Construct a Dynamic Bayesian Network (DBN) using the time-series data.

- Discretize the continuous multi-omic data.

- Use the bnlearn R package (structure learning with the dtabc algorithm) to infer probabilistic relationships between entities across time lags, constrained by prior pathway knowledge.

- This infers directional edges (e.g., "Phospho-ERK at t-1 → FOS mRNA at t").

- Model Building & Validation: Integrate DBN output with literature knowledge to refine a mechanistic model. Validate predictions (e.g., of a key downstream transcription factor) using orthogonal methods like ChIP-qPCR or a CRISPRi knockdown experiment.
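The alignment and discretization steps can be illustrated with a small sketch. It does not reproduce bnlearn's structure learning. Instead it shows the data preparation a DBN needs (pathway-indexed time courses per replicate, discretized into levels) and scores candidate lagged edges (source at t-1, target at t) by mutual information, keeping only edges allowed by a prior pathway edge list. Entity names, rank-based tertile discretization, and mutual-information scoring are illustrative assumptions.

```python
import math
from collections import Counter

def discretize(values, n_bins=3):
    """Rank-based discretization into n_bins roughly equal-frequency levels."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    levels = [0] * len(values)
    for rank, i in enumerate(order):
        levels[i] = rank * n_bins // len(values)
    return levels

def mutual_information(x, y):
    """Plug-in mutual information (nats) between two discrete sequences."""
    n = len(x)
    pxy, px, py = Counter(zip(x, y)), Counter(x), Counter(y)
    return sum(c / n * math.log(c * n / (px[a] * py[b])) for (a, b), c in pxy.items())

def score_lagged_edges(series, prior_edges, n_bins=3):
    """Score prior-allowed edges by MI between source at t-1 and target at t.

    series: {entity: {replicate: [value at t0, t1, ...]}}, keyed by pathway entity
    prior_edges: iterable of allowed (source, target) pairs from the pathway map
    """
    scores = {}
    for src, tgt in prior_edges:
        if src not in series or tgt not in series:
            continue                                  # entity not measured
        xs, ys = [], []
        for rep, src_vals in series[src].items():
            tgt_vals = series[tgt][rep]
            xs.extend(src_vals[:-1])                  # source at t-1
            ys.extend(tgt_vals[1:])                   # target at t
        scores[(src, tgt)] = mutual_information(discretize(xs, n_bins),
                                                discretize(ys, n_bins))
    return scores
```

With only a handful of time points and replicates, such scores are noisy; restricting candidates to prior pathway edges, as the protocol does, is what keeps the search tractable.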

The Scientist's Toolkit: Key Reagents for Protocol 3.2

| Item | Function | Example (Vendor) |
|---|---|---|
| Phosphoprotein Enrichment Kits (Fe-NTA/TiO2) | Selective enrichment of phosphopeptides from complex digests, essential for phosphoproteomics. | Thermo Fisher Scientific #88807 / GL Sciences #5010-21309 |
| Trametinib (MEK Inhibitor) | High-potency, selective tool compound for perturbing the MAPK/ERK pathway. | Selleckchem #S2673 |
| HILIC Chromatography Columns | Stationary phase for separating polar metabolites in LC-MS based metabolomics. | Waters #186004742 |
| KAPA mRNA HyperPrep Kit | Efficient, rapid library prep from low RNA inputs, suitable for time-course experiments. | Roche #08098140702 |
| MetaXpress Software | For high-content image analysis if pathway validation includes immunofluorescence assays. | Molecular Devices |

Application Notes: Horizontal vs. Vertical Integration in Disease Research

In the thesis comparing horizontal (multi-assay across a cohort) and vertical (deep, multi-layered on a single sample) multi-omics integration, the choice of strategy is fundamentally dictated by the biological question. This note details their application across three therapeutic areas.

Table 1: Integration Strategy Selection Based on Research Question

| Disease Area | Exemplary Research Question | Optimal Integration Strategy | Primary Rationale & Data Types |
|---|---|---|---|
| Oncology | Identifying robust molecular subtypes and prognostic biomarkers across a heterogeneous patient population. | Horizontal Integration | Enables discovery of consensus patterns (e.g., immune-hot vs. -cold tumors) by clustering across many patients. Data: Bulk RNA-seq, DNA methylation, somatic mutations from TCGA/ICGC cohorts. |
| Oncology | Unraveling the complete mechanism of action of a targeted therapy in a specific in vitro model. | Vertical Integration | Connects the drug's primary target to downstream functional effects within the same biological system. Data: Proteomics (target engagement), phospho-proteomics (signaling), RNA-seq (transcriptional response). |
| Neurology | Discovering peripheral biomarkers (e.g., in blood) for central nervous system pathology in Alzheimer's disease. | Horizontal Integration | Correlates diverse molecular features from an accessible tissue (blood) with clinical imaging/outcomes across a cohort. Data: Plasma proteomics, metabolomics, miRNA-seq from longitudinal studies like ADNI. |
| Neurology | Modeling the cell-type-specific dysregulation in a post-mortem brain sample from a Parkinson's disease patient. | Vertical Integration | Builds a causal, layer-by-layer understanding within a single, critically relevant tissue sample. Data: snRNA-seq (cell type), paired snATAC-seq (chromatin accessibility), and spatial transcriptomics from adjacent section. |
| Complex Diseases (e.g., RA, IBD) | Stratifying patients into endotypes for targeted clinical trial recruitment. | Horizontal Integration | Identifies clusters of patients sharing multi-omics profiles, predicting drug response. Data: Serum metabolomics, synovial tissue RNA-seq, immunophenotyping from trial baseline data. |
| Complex Diseases | Deconstructing the immune-stroma interactome in a single rheumatoid arthritis synovial biopsy. | Vertical Integration | Maps the local cellular crosstalk and signaling networks driving inflammation in a specific tissue microenvironment. Data: CITE-seq (transcriptome + surface proteins), secretome analysis from the same biopsy culture. |

Detailed Experimental Protocols

Protocol 1: Horizontal Integration for Oncology Subtyping

Objective: To identify integrative molecular subtypes in breast cancer using public cohort data.

- Data Acquisition: Download matched tumor data (RNA-seq, DNA methylation 450k array, somatic copy number alterations) for ~1000 samples from The Cancer Genome Atlas (TCGA) BRCA cohort via the Genomic Data Commons (GDC) API.

- Preprocessing & Dimensionality Reduction:

- RNA-seq: Fragments Per Kilobase of transcript per Million mapped reads (FPKM) normalization, log2 transformation. Perform principal component analysis (PCA) and retain the top 50 PCs.

- Methylation: M-value calculation from beta values, ComBat batch correction. Retain top 50 PCs.

- CNAs: Segment log2 ratios, summarize to gene-level GISTIC scores. Retain top 50 PCs.

- Multi-Omics Clustering: Use the MoCluster method (from the MOVICS R package) on the concatenated PCA matrices (150 features total) to define clusters over a range of k (k=2-10). Non-negative matrix factorization (NMF) cannot be applied directly to PCA scores, which contain negative values; if NMF-based clustering is preferred, apply it to non-negative input matrices instead. Select the optimal k via consensus clustering metrics (cophenetic correlation, silhouette width).

- Subtype Characterization: For each cluster, perform differential analysis per platform. Annotate subtypes using known pathways (e.g., PI3K/AKT, immune response), PAM50 classification, and survival analysis (Kaplan-Meier, log-rank test).
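Two of the preprocessing steps above can be sketched with NumPy: converting methylation beta values to M-values, M = log2(beta / (1 - beta)), and reducing each platform to a fixed number of principal components before concatenation. The clipping offset for beta values of exactly 0 or 1 and the function names are illustrative assumptions; data download and GISTIC scoring are not shown.

```python
import numpy as np

def beta_to_m(beta, offset=1e-6):
    """Convert methylation beta values to M-values, clipping away 0 and 1."""
    b = np.clip(np.asarray(beta, dtype=float), offset, 1 - offset)
    return np.log2(b / (1 - b))

def top_pcs(X, n_pcs=50):
    """Sample scores on the leading principal components of one platform."""
    Xc = np.asarray(X, dtype=float)
    Xc = Xc - Xc.mean(axis=0)                  # center features before SVD
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    k = min(n_pcs, len(s))                     # cannot exceed the matrix rank bound
    return U[:, :k] * s[:k]

def concatenate_platforms(platforms, n_pcs=50):
    """Reduce each samples x features matrix to n_pcs PCs and concatenate."""
    return np.hstack([top_pcs(X, n_pcs) for X in platforms])
```

With three platforms and 50 PCs each, concatenate_platforms returns the 150-column matrix described in the protocol, provided each platform has at least 50 samples and features.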

Protocol 2: Vertical Integration for Drug Mechanism of Action

Objective: To delineate the signaling cascade induced by a KRAS G12C inhibitor in a lung adenocarcinoma cell line.

- Cell Treatment & Lysis: Culture NCI-H358 cells. Treat with 1 µM MRTX849 (adagrasib) or DMSO for 1, 6, and 24 hours (n=4). Wash with PBS and lyse cells in a denaturing buffer.

- Multi-Layer Protein Extraction:

- Phospho-Proteomics: Enrich phosphopeptides from 1 mg of total protein lysate using Fe-NTA magnetic beads. Desalt and dry.

- Global Proteomics: Use the flow-through from phospho-enrichment, followed by clean-up.

- LC-MS/MS Analysis: Reconstitute peptides and analyze on a timsTOF Pro with a NanoElute UHPLC, acquiring data in dia-PASEF mode. Process the DIA data with DIA-NN, using spectral libraries generated from parallel deep DDA runs.

- Vertical Data Integration:

- Kinase-Substrate Mapping: Input significantly changing phosphosites (p<0.01, |log2FC|>1) into the Kinase-Substrate Enrichment Analysis (KSEA) tool. Identify activated/inhibited upstream kinases (e.g., ERK1/2).

- Pathway Linking: Integrate KSEA results with significant changes from global proteomics (e.g., downregulation of the ERK target DUSP6 and of Cyclin D1) using causal network tools (CausalPath). Validate top predictions (e.g., p-ERK/ERK ratio) by western blot.
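KSEA scores each kinase by comparing the mean log2 fold change of its annotated substrate sites with the mean over all sites supplied, scaled as z = (mean_substrates - mean_all) * sqrt(m) / sd_all, where m is the number of measured substrate sites. The sketch below implements that z-score under stated assumptions: the sample standard deviation is used, p-values come from the normal distribution, and the kinase-substrate map (normally taken from a curated resource) and site IDs are hypothetical. It takes whichever site set is passed in, whether all quantified sites or only those passing the filter above.

```python
import math
import statistics

def ksea_scores(site_fc, kinase_substrates, min_substrates=3):
    """KSEA-style z-scores per kinase.

    site_fc:           {phosphosite_id: log2 fold change}
    kinase_substrates: {kinase: [phosphosite_id, ...]} prior annotations
    Returns {kinase: (z, two-sided p, m)} for kinases with enough measured substrates.
    """
    all_fc = list(site_fc.values())
    mean_all = statistics.mean(all_fc)
    sd_all = statistics.stdev(all_fc)          # sample standard deviation
    results = {}
    for kinase, sites in kinase_substrates.items():
        measured = [site_fc[s] for s in sites if s in site_fc]
        m = len(measured)
        if m < min_substrates:
            continue                           # too few substrates to score
        z = (statistics.mean(measured) - mean_all) * math.sqrt(m) / sd_all
        p = math.erfc(abs(z) / math.sqrt(2))
        results[kinase] = (z, p, m)
    return results
```

A negative z-score indicates that a kinase's substrates are dephosphorylated relative to the background, as expected for ERK1/2 after effective KRAS G12C inhibition.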

Visualizations

Diagram 1: Horizontal vs Vertical Integration Workflow

Diagram 2: Vertical MoA Analysis for KRASi

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Featured Multi-Omics Protocols

| Item | Function in Protocol | Example Product/Catalog |
|---|---|---|
| Phosphopeptide Enrichment Beads | Selective isolation of phosphorylated peptides from complex digests for phosphoproteomics. | Fe-NTA Magnetic Agarose Beads (Thermo Fisher, 78601) |
| CITE-seq Antibody Conjugation Kit | Enables labeling of antibodies with oligonucleotide barcodes for simultaneous protein and RNA detection at single-cell level. | TotalSeq-C Antibody Labeling Kit (BioLegend, 688102) |
| Single-Nucleus ATAC-seq Kit | Provides reagents for nuclei isolation, transposition, and library prep for chromatin accessibility profiling. | Chromium Next GEM Single Cell ATAC Kit (10x Genomics, 1000175) |
| DIA-MS Spectral Library Kit | Contains standardized HeLa digests for generating comprehensive spectral libraries for Data-Independent Acquisition proteomics. | Pierce HeLa Protein Digest Standard (Thermo Fisher, PCO001) |
| Multi-Omics Integration Software | Platform for performing horizontal (NMF, iCluster) and vertical (causal inference) integration analyses. | MOVICS R package; CausalPath Web Tool |
| Cohort Data Portal Access | Source for matched, clinically annotated multi-omics data from large patient cohorts (e.g., TCGA, ADNI). | GDC Data Portal; ADNI LONI Image & Data Archive |

Within the ongoing research discourse comparing horizontal (across samples) and vertical (across molecular layers within a single sample) multi-omics integration, the foundational step is the rigorous definition and preparation of input data. The choice of integration approach is fundamentally constrained by the nature, scale, and quality of the omics data types available. This application note delineates the essential prerequisites for each paradigm, providing protocols for initial data assessment and curation to ensure robust downstream integration and biological inference.

The following tables summarize the core data requirements for horizontal and vertical multi-omics integration strategies.

Table 1: Data Type Suitability and Scale

| Omics Data Type | Typical Assay | Horizontal Integration (Across Samples) | Vertical Integration (Across Layers) |
|---|---|---|---|
| Genomics | Whole Genome Sequencing (WGS), SNP arrays | Essential. Requires consistent variant calling across a large cohort (typically hundreds of samples or more). | Foundation layer. Serves as a static reference for regulatory or functional variation. |
| Transcriptomics | RNA-Seq, microarrays | Core data. Expression matrices (genes x samples) for correlation/prediction. | Core layer. Dynamic layer linking genotype to phenotype. Requires matched samples. |
| Epigenomics | ChIP-Seq, ATAC-Seq, methylation arrays | Cohort-wide histone mark, accessibility, or methylation profiles across samples. | Regulatory layer. Explains transcriptomic variation. Must come from the same biological system. |
| Proteomics | Mass spectrometry (LC-MS/MS), RPPA | Highly valuable. Protein abundance or post-translational modification data. | Functional effector layer. Critical for mechanistic models; sample matching is essential. |
| Metabolomics | LC-MS, GC-MS, NMR | Phenotypic anchor. End-point small-molecule profiles across cohorts. | Phenotypic output layer. Captures final biochemical activity; technical variability is high. |

Table 2: Minimum Quality and Replication Requirements

| Prerequisite | Horizontal Integration | Vertical Integration |
|---|---|---|
| Sample Size | Large cohorts (100s-1000s) for statistical power. | Can be a deep dive on smaller N (e.g., 10-50), but requires perfect matching. |
| Sample Matching | Can be a meta-analysis of disparate studies with batch correction. | Absolute mandate. All omics layers must derive from the same biological specimen (or aliquots of it). |
| Data Completeness | Tolerates missing data per layer if sample N is large. | Missing data in any layer for a sample can severely compromise the integrated model. |
| Technical Replication | Important for assessing assay robustness within the cohort. | Crucial for verifying measurement accuracy within the same sample. |
| Minimum Sequencing Depth/Coverage | RNA-Seq: >20M reads/sample; WGS: >30X; proteomics: depth to identify 5,000+ proteins. | Often requires greater depth per sample to detect low-abundance, layer-crossing signals. |
| Key QC Metric | Batch effect assessment (PCA, surrogate variable analysis). | Pairwise correlation of measurements from the same sample across platforms (e.g., RNA-protein). |
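The vertical QC metric in the last row can be computed per gene as a rank correlation between mRNA and protein levels across matched samples. A minimal sketch, assuming both layers are pandas DataFrames (rows = sample IDs, columns = gene symbols) that are already log-transformed; the `min_pairs` threshold is an illustrative assumption.

```python
import pandas as pd
from scipy.stats import spearmanr

def rna_protein_concordance(rna: pd.DataFrame, protein: pd.DataFrame,
                            min_pairs: int = 5) -> pd.Series:
    """Per-gene Spearman rho between mRNA and protein across matched samples."""
    samples = rna.index.intersection(protein.index)   # matched specimens only
    genes = rna.columns.intersection(protein.columns)  # genes measured in both layers
    rho = {}
    for g in genes:
        x = rna.loc[samples, g]
        y = protein.loc[samples, g]
        ok = x.notna() & y.notna()  # proteomics often has missing values
        if ok.sum() < min_pairs:
            continue
        r, _ = spearmanr(x[ok], y[ok])
        rho[g] = r
    return pd.Series(rho, name="spearman_rho", dtype=float).sort_values()
```

Summarizing the distribution (e.g., its median) per study gives a single concordance number; an unusually low value can flag mismatched aliquots before any integration is attempted.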

Experimental Protocols for Foundational Data Generation

Protocol 1: Generating a Vertically Integrated Multi-omics Sample from Tissue

Objective: To obtain genomic, transcriptomic, proteomic, and metabolomic data from a single tissue specimen.

Materials:

- Fresh-frozen tissue sample (≥50 mg)

- AllPrep DNA/RNA/Protein Mini Kit (Qiagen)

- Methanol, acetonitrile, water (LC-MS grade)

- RIPA lysis buffer with protease/phosphatase inhibitors

- DNase I, RNase inhibitors

Procedure:

- Cryopulverization: Under liquid N2, pulverize frozen tissue using a mortar and pestle or cryomill. Keep powder frozen.

- Aliquoting: Rapidly weigh and aliquot powder for (a) DNA/RNA, (b) protein, (c) metabolomics.

- Nucleic Acid Co-extraction: Process aliquot (a) per the AllPrep protocol. Elute DNA and RNA into separate tubes. Quantify via Qubit and Bioanalyzer/TapeStation.

- Protein Extraction: Homogenize aliquot (b) in RIPA buffer. Centrifuge at 14,000 × g for 20 min at 4°C. Collect the supernatant. Quantify via BCA assay. Aliquot for LC-MS/MS.

- Metabolite Extraction: To aliquot (c), add 500 µL of 80% methanol (-80°C). Vortex, sonicate, and incubate at -80°C for 1 h. Centrifuge at 21,000 × g for 15 min at 4°C. Collect the supernatant for LC-MS.

- Sequencing/Profiling: Process DNA for WGS, RNA for RNA-Seq (stranded, poly-A selected). Process protein extracts for tryptic digestion and LC-MS/MS. Analyze metabolites on HRAM LC-MS.

Protocol 2: Cohort-Level (Horizontal) Multi-omics Data Curation and QC

Objective: To aggregate and quality-control omics data from multiple public or in-house studies for horizontal integration.

Procedure:

- Metadata Harmonization: Map all sample metadata to a common ontology (e.g., NCBI BioSample attributes).

- Batch Detection: For each omics data type (e.g., gene expression matrix), perform PCA. Color-code by study of origin, sequencing batch, or processing date.

- Batch Correction (if needed): Apply a method like ComBat (parametric empirical Bayes) to adjust for non-biological technical variation, preserving biological signal.

- Missing Data Imputation: For proteomics or metabolomics data with missing values, use methods like k-nearest neighbors (KNN) or MissForest, only within assays, not across layers.

- Platform/Gene ID Alignment: Map all molecular features (genes, proteins, metabolites) to common identifiers (e.g., gene symbol, UniProt ID, InChIKey). Use resources like HGNC, UniProt, HMDB.
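Batch detection (step 2) and within-assay imputation (step 4) can be sketched with scikit-learn. Assumptions: one omics layer at a time as a samples x features array with NaN for missing values, and a silhouette score on the top principal components as a numerical complement to color-coding PCA plots by batch. ComBat itself is not reproduced here.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.impute import KNNImputer
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

def batch_pca_check(X, batch, n_neighbors=5, n_components=2):
    """Impute within one assay, project onto top PCs, and score batch clustering.

    X: samples x features matrix for a single omics layer (NaN = missing).
    batch: per-sample study/batch labels.
    Returns PC coordinates and a silhouette score of samples grouped by batch;
    values near 1 mean samples cluster by batch (a likely technical effect).
    """
    X = np.asarray(X, dtype=float)
    X_imp = KNNImputer(n_neighbors=n_neighbors).fit_transform(X)  # never across layers
    X_std = StandardScaler().fit_transform(X_imp)
    pcs = PCA(n_components=n_components).fit_transform(X_std)
    return pcs, silhouette_score(pcs, batch)
```

Re-running the check after batch correction should give a score closer to zero if the correction removed the batch structure.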

Visualizing Data Integration Workflows

Title: Horizontal integration workflow across a cohort.

Title: Vertical integration workflow for a single sample.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Multi-omics Research |
|---|---|
| AllPrep DNA/RNA/Protein Mini Kit (Qiagen) | Enables simultaneous isolation of genomic DNA, total RNA, and total protein from a single sample aliquot, crucial for vertical integration. |
| MTBE/Methanol/Water Solvent System | A robust metabolite extraction protocol for untargeted metabolomics, offering broad coverage of polar and non-polar metabolites. |
| TMTpro 16plex (Thermo Fisher) | Isobaric labeling reagents for high-throughput proteomics, allowing multiplexing of up to 16 samples in one LC-MS run, reducing batch effects in horizontal studies. |
| DNase I (RNase-free) | Essential for removing genomic DNA contamination during RNA extraction, ensuring pure RNA for transcriptomics. |
| Phase Lock Gel Tubes | Improve recovery and purity during phenol-chloroform extractions, commonly used in proteomics and metabolomics workflows. |
| ERCC RNA Spike-In Mix (Thermo Fisher) | Synthetic RNA controls added before RNA-Seq library preparation to monitor technical variability and enable normalization across horizontal study batches. |
| Pierce Quantitative Colorimetric Peptide Assay | Accurate quantification of peptide yield prior to LC-MS/MS, ensuring equal loading and improving quantitative reproducibility. |
| Sera-Mag Magnetic Beads (Cytiva) | Used for SPRI-based clean-up and size selection in NGS library prep, ensuring consistent yield and fragment size across genomics/transcriptomics samples. |

Tools & Techniques: Implementing Horizontal and Vertical Multi-Omics Integration in Practice

This protocol details a horizontal (sample-wise) multi-omics integration workflow, framed within a comparative thesis investigating horizontal versus vertical (feature-wise) data fusion strategies. Horizontal integration correlates the same set of samples across multiple omics layers (genomics, transcriptomics, proteomics), seeking a unified sample representation. This contrasts with vertical integration, which models biological relationships across different molecular levels for a given feature set. The presented workflow progresses from classical statistical learning (Multi-Kernel Learning) to modern deep learning architectures (Deep Neural Networks) for robust, non-linear sample fusion, enabling advanced biomarker discovery and patient stratification in translational research and drug development.

Core Methodological Frameworks

Multi-Kernel Learning (MKL) for Late Integration

MKL combines multiple kernel matrices, each representing similarity between samples for one omics data type, into an optimal composite kernel for downstream prediction (e.g., disease subtyping).

Key Equation: K_combined = ∑_{m=1}^{M} β_m K_m, where K_m is the kernel matrix for omics modality m, β_m is its learned weight (β_m ≥ 0, ∑β_m = 1), and M is the total number of omics types.

Table 1: Common Kernel Functions for Omics Data

| Kernel Type | Function | Best For | Key Parameter |
|---|---|---|---|
| Linear | K(x_i, x_j) = x_i^T x_j | Dense, normalized data (e.g., gene expression) | None |
| Radial Basis Function (RBF) | K(x_i, x_j) = exp(-γ‖x_i - x_j‖²) | Capturing complex, non-linear similarities | γ (inverse bandwidth; larger γ gives more local similarity) |
| Polynomial | K(x_i, x_j) = (x_i^T x_j + c)^d | Modeling feature interactions | Degree (d), coefficient (c) |
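The three kernels in Table 1 and the weighted sum K_combined = ∑ β_m K_m can be written directly in NumPy. A minimal sketch; the `normalize_kernel` helper (cosine normalization to a unit diagonal, so a modality with many features does not dominate the sum) is an added assumption, not part of the key equation.

```python
import numpy as np

def linear_kernel(X):
    return X @ X.T

def rbf_kernel(X, gamma):
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * (X @ X.T)  # squared Euclidean distances
    return np.exp(-gamma * np.maximum(d2, 0.0))        # clip round-off negatives

def polynomial_kernel(X, c=1.0, d=2):
    return (X @ X.T + c) ** d

def normalize_kernel(K):
    """K_ij / sqrt(K_ii * K_jj); assumes no sample has a zero self-similarity."""
    diag = np.sqrt(np.diag(K))
    return K / np.outer(diag, diag)

def combine_kernels(kernels, betas):
    """Weighted sum of per-omics kernels with weights on the simplex."""
    betas = np.asarray(betas, dtype=float)
    if np.any(betas < 0) or not np.isclose(betas.sum(), 1.0):
        raise ValueError("betas must be non-negative and sum to 1")
    return sum(b * K for b, K in zip(betas, kernels))
```

Each X passed in is one sample-aligned omics matrix (rows = the same N samples in the same order), so every kernel is N x N and the sum is well defined.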

Deep Neural Network (DNN) for Early Integration

DNNs enable early fusion by concatenating raw or reduced feature vectors from each omics type at the input layer, allowing high-level representations to be learned through non-linear transformations in hidden layers.

Table 2: Comparison of MKL vs. DNN Fusion Approaches

| Aspect | Multi-Kernel Learning (MKL) | Deep Neural Network (DNN) |
|---|---|---|
| Integration Stage | Late (kernel-level) | Early (input-level) or Intermediate |
| Interpretability | High (kernel weights β_m) | Lower (black-box) |
| Data Requirements | Lower (works well with smaller N) | High (requires large N to avoid overfitting) |
| Handles Non-linearity | Yes (via kernel choice) | Excellently (via activation functions) |
| Feature Interaction | Limited to kernel definition | Complex, learned interactions across omics |

Experimental Protocols

Protocol 3.1: Standardized Multi-Omics Data Preprocessing for Horizontal Fusion

Goal: Prepare coherent sample-matched datasets from diverse omics sources.

Input: Raw data matrices (samples x features) for Genomics (e.g., SNPs), Transcriptomics (RNA-seq counts), Proteomics (Abundance).

Reagents/Software: R/Python, sva/ComBat (R), scikit-learn (Python).

Steps:

- Sample Alignment: Ensure a common set of N samples exists across all M omics datasets. Log and document any exclusions.

- Per-Omics Normalization:

- Genomics (SNPs): Standardize to mean=0, variance=1 per SNP.

- Transcriptomics: TMM normalization (edgeR) followed by log2(CPM+1) transformation.

- Proteomics: Quantile normalization and log2 transformation.

- Batch Effect Correction: Apply ComBat (or similar) within each omics dataset to adjust for technical batches, using the sample-wise omics matrix as input.

- Feature Filtering: Retain the top k features per omics layer based on variance or association with phenotype to reduce dimensionality (k = 5000 is typical). If phenotype association is used, perform this selection inside the cross-validation training folds to avoid information leakage.

- Output: M cleaned, sample-aligned, and scaled matrices X_m ∈ R^{N × k_m}.
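For the transcriptomics branch, a minimal NumPy sketch of log2(CPM+1), variance-based filtering, and per-feature standardization. It assumes a samples x genes count matrix whose rows are already in the common sample order, and it uses plain library-size CPM: edgeR's TMM scaling factors are not reproduced. Filtering happens before z-scoring because every feature has unit variance afterwards.

```python
import numpy as np

def log_cpm(counts):
    """log2(CPM + 1) using raw library sizes (no TMM factors)."""
    counts = np.asarray(counts, dtype=float)
    lib = counts.sum(axis=1, keepdims=True)
    return np.log2(counts / lib * 1e6 + 1.0)

def top_k_by_variance(X, k):
    """Column indices of the k most variable features, in original order."""
    k = min(k, X.shape[1])
    return np.sort(np.argsort(X.var(axis=0))[::-1][:k])

def zscore_columns(X):
    mu = X.mean(axis=0)
    sd = X.std(axis=0)
    sd[sd == 0] = 1.0  # constant features stay at 0 instead of dividing by zero
    return (X - mu) / sd

def prepare_rnaseq(counts, k=5000):
    logged = log_cpm(counts)
    idx = top_k_by_variance(logged, k)
    return zscore_columns(logged[:, idx]), idx
```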

Protocol 3.2: Implementing SimpleMKL for Classification

Goal: Fuse omics datasets to predict a binary clinical outcome.

Input: Preprocessed matrices X_1...X_M from Protocol 3.1, binary phenotype vector y ∈ {0,1}^N.

Reagents/Software: SimpleMKL toolbox, SHOGUN toolbox, or scikit-learn with custom MKL.

Steps:

- Kernel Computation: For each omics matrix X_m, compute a kernel matrix K_m of size N × N. For continuous data, an RBF kernel is recommended; tune its γ parameter by cross-validation.

- Model Training: Implement the SimpleMKL algorithm:

a. Initialize all kernel weights β_m = 1/M.

b. Solve the standard SVM dual problem using the current combined kernel K_combined.

c. Compute the gradient of the SVM objective with respect to each β_m.

d. Update β_m via reduced gradient descent, keeping the weights on the simplex (β_m ≥ 0, ∑β_m = 1).

e. Iterate steps b-d until the objective converges.

- Validation: Perform nested cross-validation. The outer loop splits the data into train/test sets; the inner loop on the training set tunes the SVM C parameter and RBF γ, after which the β_m are learned by MKL on the full training fold.

- Output: Optimal kernel weights β_m, the final classifier, and cross-validated performance metrics (AUC, accuracy).
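A simplified MKL sketch, assuming scikit-learn's `SVC` with a precomputed kernel. It follows steps a-e but swaps SimpleMKL's reduced-gradient update with line search for a fixed-step projected gradient on the simplex, so its convergence will differ from the SimpleMKL toolbox. The gradient is dJ/dβ_m = -1/2 ∑_ij α_i α_j y_i y_j K_m(i, j), using the fact that `dual_coef_` stores y_i α_i for the support vectors; `step` and `n_iter` are illustrative defaults.

```python
import numpy as np
from sklearn.svm import SVC

def project_to_simplex(v):
    """Euclidean projection onto {b : b >= 0, sum(b) = 1} (sort-based algorithm)."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u)
    rho = np.nonzero(u * np.arange(1, len(v) + 1) > (css - 1.0))[0][-1]
    theta = (css[rho] - 1.0) / (rho + 1.0)
    return np.maximum(v - theta, 0.0)

def simple_mkl_pg(kernels, y, C=1.0, step=0.1, n_iter=50, tol=1e-6):
    """Learn kernel weights by projected gradient; returns (beta, fitted SVC)."""
    M = len(kernels)
    beta = np.full(M, 1.0 / M)                             # step a
    for _ in range(n_iter):
        K = sum(b * Km for b, Km in zip(beta, kernels))
        svm = SVC(C=C, kernel="precomputed").fit(K, y)      # step b
        a, sv = svm.dual_coef_.ravel(), svm.support_
        grad = np.array([-0.5 * a @ Km[np.ix_(sv, sv)] @ a  # step c
                         for Km in kernels])
        new_beta = project_to_simplex(beta - step * grad)   # step d (simplified)
        converged = np.max(np.abs(new_beta - beta)) < tol
        beta = new_beta
        if converged:                                       # step e
            break
    K = sum(b * Km for b, Km in zip(beta, kernels))
    return beta, SVC(C=C, kernel="precomputed").fit(K, y)
```

At prediction time the test kernel must be built as the same β-weighted sum of test-versus-training kernels before calling `predict`.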

Protocol 3.3: Deep Neural Network for Early Integration & Classification

Goal: Use a feedforward DNN to integrate omics data at the input layer.

Input: Preprocessed matrices X_1...X_M from Protocol 3.1, phenotype vector y.

Reagents/Software: PyTorch or TensorFlow/Keras, scikit-learn, Hyperopt or Optuna for tuning.

Steps:

- Input Concatenation: For each sample i, concatenate the feature vectors from all M omics layers into a unified input vector: z_i = concat(x_i^1, x_i^2, ..., x_i^M).

- Network Architecture Design:

a. Input Layer: Size = ∑ k_m (total features from all omics).

b. Hidden Layers: 2-4 fully connected (dense) layers with decreasing width (e.g., 1024 → 512 → 256). Use ReLU activation and batch normalization.

c. Output Layer: A single neuron with sigmoid activation for binary classification.

d. Regularization: Add dropout (rate = 0.5) after each hidden layer and L2 weight regularization.

- Model Training: Use the Adam optimizer and binary cross-entropy loss. Hold out a 10% validation split for early stopping (patience = 20 epochs). Train for a maximum of 200 epochs.

- Hyperparameter Tuning: Use Bayesian optimization (Hyperopt) to search learning rate, dropout rate, L2 coefficient, and layer sizes.

- Output: Trained DNN model, test set performance metrics, and sample-level learned representations from the penultimate layer.
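A lightweight early-fusion sketch using scikit-learn's `MLPClassifier` instead of PyTorch or Keras. It maps the concatenated input, ReLU hidden layers, Adam, L2 penalty (`alpha`), 10% validation split, patience of 20, and 200-epoch cap onto `MLPClassifier` arguments (for binary labels it uses a single logistic output with log-loss), but it has no dropout or batch normalization, so treat it as an approximation of the architecture above. The penultimate-layer representation is recomputed from the fitted `coefs_` and `intercepts_`.

```python
import numpy as np
from sklearn.neural_network import MLPClassifier

def early_fusion_mlp(blocks, y, hidden=(1024, 512, 256), alpha=1e-4, seed=0):
    """Concatenate sample-aligned omics blocks (each N x k_m) and fit a feedforward net."""
    Z = np.hstack(blocks)  # z_i = concat(x_i^1, ..., x_i^M)
    clf = MLPClassifier(
        hidden_layer_sizes=hidden,
        activation="relu",
        solver="adam",
        alpha=alpha,               # L2 penalty
        early_stopping=True,
        validation_fraction=0.1,   # 10% validation split
        n_iter_no_change=20,       # patience
        max_iter=200,
        random_state=seed,
    )
    clf.fit(Z, y)
    return clf, Z

def penultimate_embedding(clf, Z):
    """Forward pass through the hidden layers (ReLU); returns last hidden activations."""
    h = Z
    for W, b in zip(clf.coefs_[:-1], clf.intercepts_[:-1]):
        h = np.maximum(h @ W + b, 0.0)
    return h
```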

Visualizations

Title: Multi-Kernel Learning (MKL) Fusion Workflow

Title: Deep Neural Network for Early Omics Fusion

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Multi-Omics Fusion Studies

| Item/Category | Example Product/Software | Primary Function in Workflow |
|---|---|---|
| Batch Effect Correction | sva/ComBat (R), Harmony (R/Py) | Removes non-biological technical variation within each omics dataset, critical for valid horizontal integration. |
| Kernel Computation Library | scikit-learn (Python), kernlab (R) | Provides optimized functions to compute diverse kernel matrices (Linear, RBF, Polynomial) from feature matrices. |
| MKL Solver | SimpleMKL (MATLAB), SHOGUN (C++/Py) | Implements optimization algorithm to learn optimal kernel weights (β_m) for combining omics-specific kernels. |
| Deep Learning Framework | PyTorch, TensorFlow with Keras | Enables flexible design, training, and evaluation of DNN architectures for early integration of omics data. |
| Hyperparameter Optimization | Optuna, Hyperopt, Weights & Biases | Automates the search for optimal model parameters (e.g., learning rate, network depth, dropout) for MKL/DNN. |
| Unified Data Structure | MultiAssayExperiment (R), MuData (Python) | Provides a standardized container for sample-aligned multi-omics data, ensuring consistency across analysis steps. |
| Omics-Specific Normalization | edgeR/DESeq2 (RNA-seq), limma (Proteomics) | Performs appropriate, statistically sound normalization for raw count or abundance data before integration. |

1. Introduction & Thesis Context

Within the broader thesis contrasting horizontal (across cohorts) and vertical (across omics layers within the same sample) integration, this protocol details a robust vertical integration workflow. It links multi-omic features from disparate molecular layers (e.g., genome, epigenome, transcriptome, proteome) derived from the same biological specimen, moving beyond correlation toward testable hypotheses about the regulatory mechanisms driving phenotype.

2. Overall Workflow Protocol

- Input: Matched multi-omics datasets (e.g., Whole Genome Sequencing (WGS)/Whole Exome Sequencing (WES), DNA methylation, RNA-Seq, Proteomics) from the same set of samples (N > 50 recommended).

- Stage 1: Unsupervised Vertical Integration & Dimensionality Reduction.

- Aim: Identify latent structures and sample clusters driven by coherent cross-omic patterns.

- Protocol: Multi-Omics Factor Analysis (MOFA+).

- Data Preparation: Format each omics dataset as a samples-by-features matrix. Perform omics-specific normalization (e.g., VST for RNA-Seq, beta-mixture quantile normalization for methylation). Handle missing data via MOFA+'s internal probabilistic framework.

- Model Training: Set the number of Factors (K) using automatic relevance determination or cross-validation. Train the model to decompose data into Factors (latent variables) and corresponding weights per omics view.

- Output Interpretation: Correlate Factors with sample covariates (e.g., disease status) to annotate. Analyze top-weighted features for each Factor in each omics layer to generate biological hypotheses.

- Stage 2: Supervised Vertical Linking for Candidate Driver Identification.

- Aim: Statistically link specific "driver" features from one layer (e.g., genetic variant, methylated CpG) to "target" features in a downstream layer (e.g., gene expression, protein abundance).

- Protocol: Sparse Multi-Block Partial Least Squares (sMBPLS) Regression.

- Block Definition: Define blocks: X1 (genetic variants in cis-regions), X2 (methylation in promoter/enhancers), Y (gene expression/protein levels of the target gene).

- Model Fitting: Use k-fold cross-validation to tune sparsity parameters (λ) for each block to select non-redundant, predictive features. Fit sMBPLS to extract latent components that maximally covary between combined X blocks and Y.

- Significance Testing: Perform permutation testing (≥1000 permutations) on the extracted component's covariance to calculate a p-value. Apply false discovery rate (FDR) correction across all tested gene loci.

- Stage 3: Mechanistic Validation & Network Construction.

- Aim: Integrate prior biological knowledge to construct testable pathway models from statistically linked features.

- Protocol: Knowledge-Primed Causal Network Mapping.

- Seed Network Generation: Use Stage 2 results (e.g., a significant SNP-CpG-Gene triplet) as seeds in a knowledge graph (e.g., STRING, KEGG, Reactome) via APIs.

- Contextual Pruning: Prune the extended network using tissue-specific expression and eQTL data (e.g., from GTEx) and chromatin interaction data (e.g., Hi-C) to retain biologically and spatially plausible edges.

- Hypothesis Output: The final sub-network proposes a mechanistic chain (e.g., SNP→TF binding site alteration→methylation change→expression change→protein activity change).
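The Stage 2 significance test can be prototyped without the sparse machinery. A minimal NumPy sketch, assuming the X blocks for one target gene (e.g., cis-SNP dosages and CpG beta-values) have been scaled and concatenated column-wise: for a single response the first PLS weight is w = X^T y / ‖X^T y‖, so the component covariance reduces to ‖X^T y‖ / (n - 1). This non-sparse, single-block PLS is a stand-in for sMBPLS (no λ tuning), followed by Benjamini-Hochberg FDR across loci.

```python
import numpy as np

def pls1_cov_stat(X, y):
    """cov(Xw, y) for the first (non-sparse) PLS component of centred X, y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    return np.linalg.norm(Xc.T @ yc) / (len(y) - 1)

def permutation_pvalue(X, y, n_perm=1000, seed=0):
    """Permute y to build the null distribution of the component covariance."""
    rng = np.random.default_rng(seed)
    obs = pls1_cov_stat(X, y)
    null = np.array([pls1_cov_stat(X, rng.permutation(y)) for _ in range(n_perm)])
    return (1 + np.sum(null >= obs)) / (n_perm + 1)

def benjamini_hochberg(pvals):
    """BH-adjusted p-values (q-values), returned in the input order."""
    p = np.asarray(pvals, dtype=float)
    n = len(p)
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)
    q = np.minimum.accumulate(ranked[::-1])[::-1]  # enforce monotonicity
    out = np.empty(n)
    out[order] = np.minimum(q, 1.0)
    return out
```

In practice `permutation_pvalue` is run once per tested gene locus and the resulting vector is passed to `benjamini_hochberg`.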

3. Data Tables

Table 1: Comparison of Vertical Integration Methods Applied in Workflow

| Method | Type | Primary Objective | Key Output | Software/Package |
|---|---|---|---|---|
| MOFA+ | Unsupervised | Dimensionality reduction; identify latent factors | Factors explaining variance across omics; sample clustering | R/Python MOFA2 |
| sMBPLS | Supervised | Predictive linking of blocks of features | Sparse model of cross-omic predictors for an outcome; p-values | R sgPLS |
| mixOmics | Both | Multi-block classification via the DIABLO framework | Integrated signature for sample discrimination | R mixOmics |

Table 2: Example Results from a sMBPLS Analysis Linking Genotype to Expression

| Target Gene (Y) | Top SNP Predictor (X1) | Beta (X1) | Top Methylation Predictor (X2) | Beta (X2) | Model p-value (FDR-corrected) | Explained Variance (R²Y) |
|---|---|---|---|---|---|---|
| EGFR | rs17337023 | -0.87 | cg02801887 | 0.42 | 2.1e-05 | 0.31 |
| TP53 | rs1042522 | 0.91 | cg11073992 | -0.38 | 4.7e-04 | 0.26 |
| VEGFA | rs699947 | 0.45 | cg16785077 | 0.51 | 1.3e-03 | 0.22 |

4. Visualization Diagrams

Diagram 1: Vertical vs Horizontal Integration Context

Diagram 2: Multi-Stage Vertical Integration Workflow

Diagram 3: sMBPLS Model for Cross-Omic Feature Linking

5. The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Vertical Integration Workflow |
|---|---|
| PAXgene Tissue System | Stabilizes RNA, DNA, and proteins simultaneously from a single tissue biopsy, ensuring matched multi-omic input. |
| Single-Cell Multiome ATAC + Gene Expression Kit | Enables vertical integration at single-cell resolution by capturing chromatin accessibility and transcriptome from the same cell. |
| TMTpro 16plex Isobaric Label Reagents | Allows multiplexed quantitative proteomics of up to 16 samples, crucial for profiling matched sample cohorts cost-effectively. |
| CETSA & PTMscan Kits | Provide functional readouts (protein thermal stability, post-translational modifications) to validate proteomic predictions from upstream omics. |
| CRISPR Screening Libraries (e.g., Kinome) | Enable functional validation of predicted driver genes or regulatory elements identified in the integration workflow. |
| MOFA2 R/Bioconductor Package | Core tool for unsupervised factor analysis across heterogeneous omics data types. |
| Cytoscape with STRING/Reactome Apps | Platform for visualizing and enriching knowledge-primed causal networks from linked feature lists. |

Multi-omics integration strategies are fundamentally categorized as horizontal (integration across different omics layers from the same samples) or vertical (integration across different levels of biological information, from molecular to phenotypic, often for the same entity). This review critically assesses four prominent frameworks within this dichotomy, guiding researchers in tool selection for their specific integration paradigm.

Framework Comparison & Application Notes

Quantitative Comparison Table

| Framework | Primary Integration Type | Core Algorithm/Method | Key Output | Scalability (Samples/Features) | Language/Platform | Best For |
|---|---|---|---|---|---|---|
| MOFA+ | Horizontal | Statistical Bayesian Factor Analysis | Latent factors, feature weights | ~1,000s samples, 10,000s features | R/Python | Unsupervised discovery of shared & unique variation across omics. |
| mixOmics | Horizontal & Vertical | Projection-based (PCA, PLS, DIABLO) | Component plots, variable selection | ~100s samples, 1,000s features | R | Supervised & unsupervised integration with strong visualization. |
| netDx | Vertical | Patient similarity networks, machine learning | Diagnostic models, feature importance | ~1,000s samples, 10,000s+ features | R/Bioconductor | Building interpretable predictive models from multi-modal data. |
| iCluster | Horizontal | Joint latent variable model (penalized regression) | Integrated clusters, subtype discovery | ~100s-1,000 samples, 10,000s features | R | Integrative clustering for discrete subgroup identification. |

Detailed Application Notes

MOFA+: A Bayesian framework for horizontal integration. It decomposes multi-omics data into a set of latent factors that capture the shared and dataset-specific sources of variation. Its probabilistic model handles missing values, including samples that lack an entire assay, natively, making it well suited to large-scale cohort studies such as TCGA. It does not directly incorporate phenotypic outcomes (vertical integration).

mixOmics: Provides a versatile suite for both horizontal (e.g., DIABLO for multi-omics classification) and vertical (e.g., PLS for linking omics to clinical traits) integration. Its strength lies in powerful visualizations (e.g., circos plots, relevance networks) to interpret complex associations.

netDx: A vertically-oriented framework that builds patient-specific similarity networks for each data type (e.g., mRNA, methylation, clinical) and integrates them to predict clinical outcomes. It generates highly interpretable models, showing which data types and features drive predictions.

iCluster: A horizontal integration tool specifically designed for integrative clustering. It uses a joint latent variable model with lasso-type penalties to identify coherent multi-omics subtypes, crucial for cancer classification and biomarker discovery.

Experimental Protocols

Protocol 1: Multi-omics Subtype Discovery using iCluster (Horizontal Integration)

Objective: Identify integrated molecular subtypes from mRNA expression, DNA methylation, and copy number variation data.

- Data Preprocessing: Independently normalize each omics dataset. Features are typically centered and scaled. Filter low-variance features.

- Data Formatting: Create a list object in R where each element is a sample-by-feature matrix for one omics type. Ensure identical sample ordering.

- Parameter Tuning: Use the `tune.iCluster()` function to perform cross-validation and select the optimal lambda (penalty) parameters and number of latent components (K).

- Model Fitting: Run the `iCluster()` function with the optimal K and lambda values.

- Cluster Assignment: Extract the cluster assignments for each sample from the fitted model.

- Validation: Assess cluster stability via bootstrapping. Perform survival analysis (Kaplan-Meier) or correlate with known clinical phenotypes to validate biological relevance.
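Bootstrap stability can be estimated by re-clustering resampled subjects and comparing the result with the reference assignment using the adjusted Rand index (ARI). A minimal sketch, assuming the per-sample latent variables from the fitted iCluster model are available as a samples x latent-dimension matrix, and using scikit-learn's KMeans on them as a cheap stand-in for refitting iCluster on every resample. It therefore measures the stability of the clustering step, not of the latent-variable fit itself.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import adjusted_rand_score

def bootstrap_stability(Z, reference_labels, k, n_boot=50, seed=0):
    """Mean and SD of ARI between reference labels and re-clustered bootstrap samples.

    Z: samples x latent dimensions (hypothetical export from the fitted model).
    reference_labels: cluster assignment for every sample from the full fit.
    """
    rng = np.random.default_rng(seed)
    reference_labels = np.asarray(reference_labels)
    n = Z.shape[0]
    aris = []
    for _ in range(n_boot):
        idx = np.unique(rng.choice(n, size=n, replace=True))  # distinct resampled subjects
        km = KMeans(n_clusters=k, n_init=10, random_state=0).fit(Z[idx])
        aris.append(adjusted_rand_score(reference_labels[idx], km.labels_))
    return float(np.mean(aris)), float(np.std(aris))
```

ARI is invariant to label permutation, so cluster IDs do not need to be matched between runs.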

Protocol 2: Building a Predictive Diagnostic Model with netDx (Vertical Integration)

Objective: Integrate gene expression, histopathology images, and clinical data to predict patient survival groups.

- Define Patient Similarity: For each data type, design a custom similarity metric. Examples:

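- Gene Expression: Pearson correlation between patient profiles (equivalently, 1 - Pearson correlation distance).

- Clinical Data: A similarity derived from normalized Euclidean distance (e.g., 1 minus the rescaled distance).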

- Build Similarity Networks: For each data type, create a patient similarity network (graph) where nodes are patients and edge weights are defined by the similarity metric.

- Feature Selection: Use a supervised approach (e.g., iterative feature pruning) to select features within each data type that best correlate with the outcome label.

- Integrated Model Training: Use the integrated network (combined from selected features across data types) within a machine learning classifier (e.g., support vector machine on graphs) to predict the outcome.

- Model Interpretation: Use pathway analysis on selected gene networks and examine weights from clinical data networks to interpret the model's decision logic.
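Steps 1-2 can be illustrated in NumPy: one patient x patient similarity matrix per data type, then a sparse network that keeps each patient's strongest edges. This is not netDx's API (netDx provides its own R functions for building and integrating networks); the min-max scaling of clinical variables and the top-k sparsification in the hypothetical `knn_network` helper are illustrative assumptions.

```python
import numpy as np

def expression_similarity(expr):
    """Patient x patient Pearson correlation (= 1 - correlation distance); rows = patients."""
    return np.corrcoef(expr)

def clinical_similarity(clin):
    """1 - Euclidean distance on min-max scaled variables, rescaled to [0, 1]."""
    clin = np.asarray(clin, dtype=float)
    span = clin.max(axis=0) - clin.min(axis=0)
    span[span == 0] = 1.0  # constant variables contribute nothing
    scaled = (clin - clin.min(axis=0)) / span
    d = np.sqrt(((scaled[:, None, :] - scaled[None, :, :]) ** 2).sum(axis=-1))
    return 1.0 - d / d.max() if d.max() > 0 else np.ones_like(d)

def knn_network(S, k):
    """Keep each patient's k most similar neighbours; negative weights clipped to 0."""
    n = S.shape[0]
    A = np.zeros_like(S)
    for i in range(n):
        sims = S[i].copy()
        sims[i] = -np.inf  # exclude self-similarity
        nbrs = np.argsort(sims)[::-1][:k]
        A[i, nbrs] = np.clip(S[i, nbrs], 0.0, None)
    return np.maximum(A, A.T)  # symmetric, undirected network
```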

Visualizations

Title: iCluster Workflow for Horizontal Integration

Title: Horizontal vs. Vertical Multi-omics Integration

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function/Application in Multi-omics Integration |
|---|---|
| R/Bioconductor | Primary computational environment for statistical analysis and execution of MOFA+, mixOmics, netDx, and iCluster. |
| Single-cell RNA-seq Kit (e.g., 10x Genomics) | Generates transcriptomic data for one omics layer, often integrated with surface protein (CITE-seq) or ATAC-seq data horizontally. |
| DNA Methylation Array (e.g., Illumina EPIC) | Provides genome-wide methylation profiles for integration with gene expression data to study regulatory mechanisms. |
| Proteomics Reagents (e.g., TMT Isobaric Labels) | Enable multiplexed quantitative proteomics, creating a protein abundance layer for integration with mRNA data. |
| High-Quality DNA/RNA Extraction Kits | Foundational step to ensure high-integrity, multi-omic data from the same biological sample (critical for horizontal integration). |
| Clinical Data Management System (CDMS) | Source of curated phenotypic and outcome data essential for vertical integration models (e.g., in netDx). |

Within the broader thesis comparing horizontal (multi-omics per sample) versus vertical (single-omics across large cohorts) data integration strategies for biomarker discovery, this protocol focuses on a hybrid approach. This method leverages vertical cohort-derived multi-omics features to build and validate horizontal, patient-specific composite signatures. The goal is to move beyond single-molecule biomarkers to robust, systems-level signatures that enhance diagnostic accuracy and prognostic prediction.

Core Experimental Protocol: A Hybrid Integration Workflow

Protocol 2.1: Multi-Omic Data Acquisition and Pre-processing

- Objective: To generate consistent, analysis-ready datasets from publicly available cohorts (vertical data).

- Steps:

- Cohort Selection: Identify relevant disease cohorts from repositories (TCGA, GEO, EGA). Prioritize studies with matched mRNA-seq, DNA methylation (e.g., Illumina EPIC array), and proteomic (e.g., RPPA or mass spectrometry) data.

- Data Download: Use genomic data portals (e.g., UCSC Xena, cBioPortal), `GEOquery` in R/Bioconductor, or the SRA Toolkit (command line).

- Uniform Pre-processing:

- RNA-seq: Align to the reference genome (STAR), quantify gene-level counts (featureCounts), and normalize (TPM, DESeq2's median of ratios).

- Methylation: Process IDAT files (`minfi` package), perform functional normalization, and filter probes (detection p-value, SNP-overlapping, cross-reactive). Compute beta-values.

- Proteomics: Normalize to internal controls/median polish, log2-transform.

- Sample Matching: Retain only samples with data across all omics layers. Annotate with clinical variables (diagnosis, stage, survival).
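The TPM normalization named in the RNA-seq step and the sample-matching rule can be sketched in a few lines. Assumptions: a samples x genes count matrix, effective gene lengths in base pairs in the same column order, and sample IDs supplied as one list per omics layer.

```python
import numpy as np

def counts_to_tpm(counts, lengths_bp):
    """Transcripts per million: length-normalize, then scale each sample to 1e6."""
    counts = np.asarray(counts, dtype=float)
    rate = counts / (np.asarray(lengths_bp, dtype=float) / 1e3)  # reads per kilobase
    return rate / rate.sum(axis=1, keepdims=True) * 1e6

def common_samples(*id_lists):
    """Sample IDs present in every omics layer, sorted for a stable row order."""
    return sorted(set.intersection(*map(set, id_lists)))
```

For counts [[10, 20]] and gene lengths [1000, 2000], both genes have 10 reads per kilobase, so each receives 500,000 TPM.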

Protocol 2.2: Vertical Integration for Feature Selection

- Objective: To identify candidate features from each omic layer associated with the clinical outcome.

- Steps:

- Univariate Screening: For each omic layer separately, perform statistical testing (e.g., Cox regression for survival, limma for diagnosis) against the clinical endpoint.

- Multi-Omic Network Integration: Construct a knowledge-guided multi-omics network.

- Nodes: Include significant features from Step 1.

- Edges: Define relationships (e.g., gene-protein identity, cis-gene methylation-expression correlation, protein-protein interaction from STRING DB).

- Module Detection: Use community detection algorithms (e.g., Louvain, Walktrap) on the integrated network to identify tightly connected modules spanning omics types.

- Representative Feature Selection: From each significant module, select the top-ranked feature per omic layer (based on initial p-value and network centrality) as a candidate for the composite signature.
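Module detection and representative selection (steps 2-4) can be sketched with NetworkX, assuming version 2.8 or later for `louvain_communities`. Node names, the `layer` attribute, and the ranking rule in `representative_features` (smallest univariate p-value, ties broken by degree centrality within the module) are illustrative choices; every node referenced in `edges` must also appear in `features`.

```python
import networkx as nx
from networkx.algorithms.community import louvain_communities

def multiomics_modules(features, edges, seed=0, min_layers=2):
    """features: dict node -> omics layer; edges: iterable of (u, v, weight)."""
    G = nx.Graph()
    for node, layer in features.items():
        G.add_node(node, layer=layer)
    G.add_weighted_edges_from(edges)
    modules = louvain_communities(G, weight="weight", seed=seed)
    # keep only modules that span at least `min_layers` omics layers
    keep = [m for m in modules
            if len({G.nodes[n]["layer"] for n in m}) >= min_layers]
    return G, keep

def representative_features(G, module, pvals):
    """Per omics layer, pick the node with the smallest p-value (ties: higher centrality)."""
    centrality = nx.degree_centrality(G.subgraph(module))
    best = {}
    for n in module:
        layer = G.nodes[n]["layer"]
        key = (pvals[n], -centrality[n])
        if layer not in best or key < best[layer][0]:
            best[layer] = (key, n)
    return {layer: node for layer, (key, node) in best.items()}
```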

Protocol 2.3: Composite Signature Construction & Validation

- Objective: To build a single, sample-level (horizontal) prognostic index from the selected multi-omic features.

- Steps:

- Signature Training: In a designated training cohort (e.g., 70% of main cohort), fit a multivariate model (Coxnet/Lasso-Cox for survival, logistic regression for diagnosis) using the candidate features from Protocol 2.2.

- Compute Prognostic/Diagnostic Index: For any new patient (horizontal data), apply the model to feature values scaled with the training-set parameters: PI = Σ_i (Feature_Value_i × Model_Coefficient_i). This PI is the composite signature score.

- Threshold Determination: In the training set, use maximally selected rank statistics (survival) or Youden's index (diagnosis) to define the optimal PI cut-off for risk/status stratification.

- Validation: Test the locked signature in the held-out test cohort (30%) and independent, publicly available validation cohorts. Assess performance via time-dependent ROC (prognosis) or standard AUC (diagnosis).
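The index and the diagnostic cut-off reduce to a dot product and a search along the ROC curve. A minimal sketch, assuming feature values are already scaled with training-set parameters and using scikit-learn's `roc_curve`; the maximally selected rank statistic for survival cut-offs is not covered here.

```python
import numpy as np
from sklearn.metrics import roc_curve

def prognostic_index(X, coefs):
    """PI for each patient (row of X): sum_j x_ij * beta_j."""
    return np.asarray(X, dtype=float) @ np.asarray(coefs, dtype=float)

def youden_threshold(y_true, scores):
    """Cut-off maximizing sensitivity + specificity - 1 (Youden's J)."""
    fpr, tpr, thr = roc_curve(y_true, scores)
    return thr[np.argmax(tpr - fpr)]
```

The threshold is fixed on the training set and then applied unchanged (PI ≥ threshold as the positive call) to the held-out and external cohorts, which is what "locked" means in the validation step.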

Data Tables

Table 1: Performance Comparison of Signature Types in a Simulated Validation Cohort

| Signature Type | # Features | Diagnosis AUC (95% CI) | Prognostic C-index (95% CI) | Data Integration Strategy |
|---|---|---|---|---|
| Transcript-only | 12 | 0.82 (0.78-0.86) | 0.65 (0.60-0.70) | Vertical (single-omic) |
| Methylation-only | 10 | 0.79 (0.75-0.83) | 0.68 (0.63-0.73) | Vertical (single-omic) |
| Composite Multi-omic | 8 | 0.91 (0.88-0.94) | 0.76 (0.72-0.80) | Hybrid (Vertical → Horizontal) |

Table 2: Example Composite Signature for Breast Cancer Prognosis

| Feature | Omic Layer | Model Coefficient | Biological Interpretation |
|---|---|---|---|
| ESR1 | Gene Expression | -0.52 | Luminal differentiation marker |
| AKT1 | Protein (Phospho) | +0.31 | Activated PI3K pathway signal |
| BRCA1 CpG Island | Methylation (Beta) | +0.48 | Epigenetic silencing |
| miR-21-5p | microRNA Expression | +0.23 | Oncogenic miRNA, therapy resistance |

Visualizations

Title: Hybrid Multi-Omic Integration Workflow for Biomarker Discovery

Title: Example Composite Signature Biological Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Example Product/Technology | Function in Protocol |
|---|---|---|
| Nucleic Acid & Protein Extraction | Qiagen AllPrep Kit | Simultaneous purification of DNA, RNA, and protein from a single tissue sample, preserving horizontal sample integrity. |
| Methylation Profiling | Illumina Infinium MethylationEPIC v2.0 BeadChip | Array-based methylation quantification at over 900,000 predefined CpG sites, each measured at single-CpG resolution, for vertical cohort analysis. |
| Proteomic Assay | Olink Target 96/384 Panels | High-specificity, multiplex immunoassay for relative protein quantification in serum/plasma, suitable for large cohorts. |
| Multi-Omic Data Portal | UCSC Xena Browser | Platform for downloading and visually exploring pre-processed vertical cohort data (TCGA, GTEx, etc.). |
| Network Analysis | Cytoscape with STRING App | Visualization and analysis of feature interaction networks for integrated module detection. |
| Statistical Modeling | R glmnet package | Implementation of Lasso and Elastic-Net regression for building parsimonious composite signature models. |

Modern drug development is fundamentally a data integration challenge. The thesis contrasting horizontal (across-sample) and vertical (within-sample) multi-omics integration provides a critical framework. Horizontal integration, analyzing one omics layer (e.g., genomics) across many patients, excels in patient stratification and identifying population-level targets. Vertical integration, profiling multiple omics layers (genomics, transcriptomics, proteomics) within the same sample/patient, is paramount for elucidating complete mechanistic pathways and understanding the functional consequences of genetic alterations. Effective drug development requires a strategic synthesis of both approaches: horizontal to define cohorts and validate targets across populations, and vertical to deconvolute causal biology within a defined system.

Application Notes & Protocols

Target Identification: Integrating GWAS with Functional Genomics

Application Note: Target identification leverages horizontal integration of large-scale genomic datasets (e.g., GWAS summary statistics across hundreds of thousands of individuals) with vertical integration of functional omics from model systems to prioritize causal genes and druggable pathways.

Protocol 1.1: Computational Prioritization of Causal Genes from GWAS Loci

- Objective: To move from a GWAS-associated genomic locus to a high-confidence, druggable target gene.

- Materials: GWAS summary statistics, reference epigenomic annotations (e.g., ENCODE, ROADMAP), expression/protein Quantitative Trait Locus (eQTL/pQTL) data, druggable genome database (e.g., DGIdb).

- Methodology:

- Locus Definition: Around each lead GWAS variant, define a locus window (e.g., ±500 kb) and use statistical fine-mapping to derive a credible set of potentially causal variants (e.g., the smallest set of variants capturing 95% of the posterior probability).

- Functional Annotation Overlay: Annotate variants using epigenomic data (chromatin accessibility, histone marks) from relevant cell types/tissues to highlight regulatory regions.

- Colocalization Analysis: Perform statistical colocalization (e.g., using the coloc R package) between GWAS signals and eQTL/pQTL datasets to identify genes whose expression or protein abundance is likely influenced by the same causal variant.

- Pathway & Network Enrichment: Input prioritized genes into pathway (KEGG, Reactome) and protein-protein interaction network analyses to identify enriched, druggable modules.

- Druggability Assessment: Cross-reference final gene list with databases of known drug targets, bioactive compounds, and protein structures to assess tractability.
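The fine-mapping and colocalization steps can both be built on Wakefield approximate Bayes factors (ABFs). The sketch below is a minimal illustration, assuming a single causal variant per trait, a uniform prior across variants in the window, and the same SNP order in both datasets; `prior_sd=0.15` is an assumed prior effect-size SD, and the priors `p1`, `p2`, `p12` match the defaults commonly used with `coloc.abf`. Function names, SNP IDs and summary statistics are hypothetical; real analyses should use the coloc package itself.

```python
import math


def log_abf(beta, se, prior_sd=0.15):
    """Wakefield approximate Bayes factor (natural log): association vs. no association."""
    v, w = se ** 2, prior_sd ** 2
    r = w / (v + w)
    z = beta / se
    return 0.5 * math.log(1.0 - r) + 0.5 * r * z * z


def logsumexp(xs):
    m = max(xs)
    return m + math.log(sum(math.exp(x - m) for x in xs))


def logdiff(a, b):
    """log(exp(a) - exp(b)); returns -inf when a <= b numerically."""
    d = -math.expm1(b - a)
    return a + math.log(d) if d > 0 else -math.inf


def credible_set(snps, labfs, coverage=0.95):
    """Single-causal-variant fine-mapping: PIP_i = ABF_i / sum_j ABF_j (uniform prior)."""
    total = logsumexp(labfs)
    ranked = sorted(((math.exp(l - total), s) for s, l in zip(snps, labfs)), reverse=True)
    chosen, cum = [], 0.0
    for pip, snp in ranked:
        chosen.append((snp, pip))
        cum += pip
        if cum >= coverage:
            break
    return chosen


def coloc_posteriors(labf1, labf2, p1=1e-4, p2=1e-4, p12=1e-5):
    """Posterior probabilities of H0..H4; H4 = one shared causal variant."""
    l1, l2 = logsumexp(labf1), logsumexp(labf2)
    l12 = logsumexp([a + b for a, b in zip(labf1, labf2)])
    lh = [
        0.0,                                        # H0: no association
        math.log(p1) + l1,                          # H1: GWAS trait only
        math.log(p2) + l2,                          # H2: molecular trait only
        math.log(p1 * p2) + logdiff(l1 + l2, l12),  # H3: two distinct causal variants
        math.log(p12) + l12,                        # H4: shared causal variant
    ]
    total = logsumexp(lh)
    return [math.exp(x - total) for x in lh]


# Hypothetical summary statistics (beta, se) for five SNPs in one locus window.
snps = ["rs_a", "rs_b", "rs_c", "rs_d", "rs_e"]
gwas = [(0.02, 0.01), (0.10, 0.01), (0.03, 0.01), (0.01, 0.01), (0.09, 0.01)]
eqtl = [(0.05, 0.04), (0.40, 0.04), (0.10, 0.04), (0.02, 0.04), (0.30, 0.04)]
labf_gwas = [log_abf(b, s) for b, s in gwas]
labf_eqtl = [log_abf(b, s) for b, s in eqtl]

cs = credible_set(snps, labf_gwas)
pp = coloc_posteriors(labf_gwas, labf_eqtl)
print(cs, [round(x, 4) for x in pp])
```

A high posterior for H4 supports a shared causal variant between the GWAS signal and the molecular QTL, which is the evidence used to nominate the linked gene.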

Table 1: Key Data Sources for Genomic Target Identification

| Data Type | Example Source | Primary Use in Target ID |
|---|---|---|
| GWAS Summary Stats | UK Biobank, GWAS Catalog | Identify disease-associated genomic loci (Horizontal) |
| Epigenomic Maps | ENCODE, ROADMAP Epigenomics | Annotate regulatory potential of variants (Vertical) |
| eQTL/pQTL Data | GTEx, PancanQTL, UKB-PPP | Link variants to gene/protein expression (Vertical) |
| Druggable Genome | DGIdb, ChEMBL, Pharos (Target Central Resource Database) | Assess pharmacological tractability |
| CRISPR Screens | DepMap, Project Score | Identify essential genes in disease models (Vertical) |

Title: Target ID workflow combining horizontal GWAS and vertical functional omics.

Mechanism of Action (MoA) Elucidation: Vertical Multi-Omics Profiling

Application Note: Deconvoluting MoA requires deep vertical integration, measuring the molecular cascade from genetic perturbation or drug treatment through transcriptome, proteome, and phosphoproteome in relevant cellular or tissue samples.

Protocol 2.1: Multi-Omics Profiling for Drug MoA Deconvolution

- Objective: To comprehensively characterize the molecular effects of a drug candidate in a relevant cell line model.

- Materials: Target cell line, drug compound and vehicle control, multi-omics profiling platforms (RNA-seq, LC-MS/MS for proteomics/phosphoproteomics).

- Methodology:

- Experimental Design: Treat cells with three concentrations of drug (IC10, IC50, IC90) and vehicle control in biological triplicate. Harvest cells at multiple time points (e.g., 2h, 8h, 24h).

- Sample Processing:

- RNA: Extract total RNA, perform poly-A selection, and prepare stranded RNA-seq libraries.

- Protein/Phosphoprotein: Lyse cells in urea-based buffer with phosphatase/protease inhibitors. Digest proteins with trypsin. Enrich phosphopeptides using TiO2 or Fe-IMAC columns.

- Data Acquisition: Sequence RNA libraries (minimum 30M reads/sample). Analyze peptides via LC-MS/MS on a high-resolution mass spectrometer.

- Vertical Data Integration:

- Perform differential analysis for each omics layer individually (DESeq2 for RNA, limma for proteomics).

- Apply multi-omics integration tools (e.g., MOFA+, integrative NMF) to identify latent factors representing coordinated changes across molecular layers.

- Perform joint pathway analysis (e.g., using multiGSEA) on integrated factor loadings.

- Mechanistic Inference: Overlay significantly changing phosphosites on kinase-substrate networks (e.g., from PhosphoSitePlus) to infer kinase activity. Integrate with transcriptomic changes to map upstream regulators and downstream effectors.
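Kinase activity inference from phosphosite changes can be illustrated with a kinase-substrate enrichment analysis (KSEA)-style score. The sketch below is a minimal version, assuming drug-vs-vehicle log2 fold changes per phosphosite and a kinase-to-substrate map (which in practice would come from a resource such as PhosphoSitePlus); each kinase gets z = (mean substrate log2FC minus mean log2FC of all sites) times the square root of the number of measured substrates, divided by the SD of all sites. The site names, kinase names, values and `min_substrates` threshold are hypothetical.

```python
import math
import statistics


def ksea(site_log2fc, kinase_substrates, min_substrates=3):
    """KSEA-style kinase scores; returns {kinase: (z, two-sided normal p-value)}."""
    all_fc = list(site_log2fc.values())
    mu = statistics.fmean(all_fc)
    sd = statistics.stdev(all_fc)
    out = {}
    for kinase, sites in kinase_substrates.items():
        fcs = [site_log2fc[s] for s in sites if s in site_log2fc]
        m = len(fcs)
        if m < min_substrates:  # too few measured substrates to score
            continue
        z = (statistics.fmean(fcs) - mu) * math.sqrt(m) / sd
        out[kinase] = (z, math.erfc(abs(z) / math.sqrt(2.0)))
    return out


# Toy phosphosite log2 fold changes (drug vs. vehicle) and a toy kinase-substrate map.
site_log2fc = {
    "s1": -2.0, "s2": -1.5, "s3": -1.8, "s4": 0.1, "s5": 0.2,
    "s6": -0.1, "s7": 0.0, "s8": 0.3, "s9": 0.1, "s10": -0.2,
}
kinase_substrates = {
    "kinase_A": ["s1", "s2", "s3"],
    "kinase_B": ["s4", "s5", "s8"],
    "kinase_C": ["s6", "s99"],  # only one measured substrate, so it is skipped
}
scores = ksea(site_log2fc, kinase_substrates)
for kinase, (z, p) in sorted(scores.items()):
    print(f"{kinase}: z={z:.2f}, p={p:.3g}")
```

A strongly negative z (kinase_A here) is read as reduced kinase activity after treatment; the p-values should still be corrected for the number of kinases tested.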

Table 2: Multi-Omics MoA Study Quantitative Results (Example)

| Molecular Layer | Total Features Measured | Significantly Altered Features (vs. Control, 24h) | Top Enriched Pathway (FDR < 0.05) |
|---|---|---|---|
| Transcriptomics (RNA-seq) | ~20,000 genes | 1,542 up, 1,187 down | mTORC1 signaling (p=3.2e-09) |
| Proteomics (LC-MS/MS) | ~8,000 proteins | 210 up, 310 down | Autophagy (p=1.7e-05) |
| Phosphoproteomics | ~25,000 phosphosites | 890 up, 1,450 down | AGC kinase substrates (p=5.4e-12) |

Title: Vertical multi-omics workflow for drug mechanism of action.

Patient Stratification: Horizontal Integration for Biomarker Discovery

Application Note: Stratifying patients likely to respond to a therapy relies on horizontal integration of clinical data with molecular profiling (often a single dominant omics layer) across a large, heterogeneous patient cohort to identify predictive biomarkers.

Protocol 3.1: Development of a Transcriptomic-Based Predictive Biomarker Signature

- Objective: To identify and validate an RNA expression signature that predicts response to a targeted therapy from pre-treatment tumor biopsies.

- Materials: Archived FFPE tumor biopsies from a completed Phase II/III trial with documented clinical response (Responders vs. Non-Responders). RNA-seq or NanoString nCounter platform.

- Methodology:

- Cohort Definition: Select matched responder (R) and non-responder (NR) samples (e.g., n=50 each) from the trial population. Ensure balanced clinical covariates (age, sex, prior therapy).

- Profiling: Extract RNA and perform whole-transcriptome RNA-seq or profile using a targeted oncology-focused gene expression panel.

- Signature Discovery (Training Set):

- Using 2/3 of samples, perform differential expression analysis (R vs. NR).

- Apply regularized regression (LASSO or Elastic Net) to identify a minimal gene set predictive of response, using cross-validation to prevent overfitting.

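- Generate a continuous signature score (e.g., linear combination of normalized gene expression) and fix the score cutoff in the training set (e.g., by maximizing Youden's index).

- Signature Validation (Test Set):

- Apply the locked model and locked cutoff to the held-out 1/3 of samples.

- Assess performance: Calculate the Area Under the ROC Curve (AUC), and sensitivity and specificity at the locked cutoff. Re-optimizing the cutoff on the test set would inflate these estimates.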

- Clinical Assay Development: Translate the discovered signature into a clinically deployable assay (e.g., RT-qPCR panel or diagnostic NanoString cartridge).
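The regularized-regression step of signature discovery can be sketched as L1-penalized (LASSO) logistic regression fitted by proximal gradient descent (ISTA). This is a minimal illustration, assuming standardized expression values and a fixed penalty `lam`; the cross-validation used to choose `lam` is not shown, and in practice a tested implementation such as R's `cv.glmnet` or scikit-learn's `LogisticRegression(penalty="l1")` would be used. Function names and the toy data are hypothetical.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def soft_threshold(x, t):
    return math.copysign(max(abs(x) - t, 0.0), x)


def lasso_logistic(X, y, lam, lr=0.1, n_iter=2000):
    """L1-penalized logistic regression by proximal gradient descent (ISTA).

    Minimizes mean log-loss + lam * sum(|beta_j|); the intercept is not penalized.
    X: samples x genes (standardized); y: 1 = responder, 0 = non-responder.
    """
    n, p = len(X), len(X[0])
    b0, beta = 0.0, [0.0] * p
    for _ in range(n_iter):
        g0, grad = 0.0, [0.0] * p
        for xi, yi in zip(X, y):
            resid = sigmoid(b0 + sum(b * x for b, x in zip(beta, xi))) - yi
            g0 += resid / n
            for j in range(p):
                grad[j] += resid * xi[j] / n
        b0 -= lr * g0
        # Gradient step followed by soft-thresholding (the proximal step for the L1 penalty).
        beta = [soft_threshold(b - lr * g, lr * lam) for b, g in zip(beta, grad)]
    return b0, beta


def signature_score(xi, b0, beta):
    """Continuous signature score: linear predictor of the locked model."""
    return b0 + sum(b * x for b, x in zip(beta, xi))


# Toy training data: gene 1 separates R from NR, genes 2-3 are noise.
X = [
    [1.2, 0.3, -0.5], [0.9, -0.4, 0.2], [1.5, 0.1, 0.4], [0.8, -0.2, -0.3],      # R
    [-1.1, 0.2, 0.5], [-0.7, -0.3, -0.4], [-1.4, 0.4, 0.1], [-1.2, -0.1, -0.2],  # NR
]
y = [1, 1, 1, 1, 0, 0, 0, 0]
b0, beta = lasso_logistic(X, y, lam=0.1)
print([round(b, 3) for b in beta])
```

The soft-threshold step sets uninformative coefficients exactly to zero, which is what yields the minimal gene set the protocol asks for.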

Table 3: Performance Metrics of a Hypothetical Predictive Biomarker Signature

| Metric | Training Set (n=67) | Held-out Test Set (n=33) | Acceptable Threshold |
|---|---|---|---|
| AUC (95% CI) | 0.89 (0.82-0.95) | 0.85 (0.72-0.96) | >0.75 |
| Sensitivity | 88% | 83% | >80% |
| Specificity | 82% | 79% | >75% |
| Signature Size | 12 genes | 12 genes (locked) | Minimized |

Title: Horizontal integration workflow for predictive biomarker development.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents & Kits for Multi-Omics in Drug Development

| Item | Function & Application | Example Vendor/Product |
|---|---|---|
| Poly(A) RNA Selection Beads | Isolate mRNA from total RNA for RNA-seq library prep, reducing ribosomal RNA background. | NEBNext Poly(A) mRNA Magnetic Isolation Module |
| Phosphopeptide Enrichment Kits | Selective enrichment of phosphorylated peptides from complex digests for phosphoproteomics. | Thermo Fisher Titanium Dioxide (TiO2) Spin Tips |
| Isobaric Mass Tag Kits (TMT/IBT) | Enable multiplexed quantitative proteomics, allowing parallel analysis of 6-18 samples in one MS run. | Thermo Fisher TMTpro 16plex |
| Single-Cell RNA-seq Kit | Profile gene expression in individual cells for patient stratification in heterogeneous tissues (e.g., tumors). | 10x Genomics Chromium Next GEM Single Cell 3' |
| CRISPR Screening Library | Genome-wide or targeted gRNA libraries for functional genomics and target identification/validation. | Horizon Discovery DECIPHER pooled library |
| Multiplex Immunoassay Panels | Simultaneously quantify dozens of proteins (cytokines, chemokines, phospho-proteins) in serum/tissue lysates for MoA/PD studies. | Meso Scale Discovery (MSD) U-PLEX Assays |
| Cell Viability/Proliferation Assay | High-throughput measurement of drug response (IC50) in cell lines or primary cells. | Promega CellTiter-Glo Luminescent Assay |

Overcoming Challenges: Practical Solutions for Multi-Omics Data Integration Pitfalls

Tackling Technical Noise and Batch Effects in Horizontal Cohort Studies

Horizontal multi-omics integration involves the analysis of multiple molecular layers (e.g., genomics, transcriptomics, proteomics) across a single, often large, cohort of individuals. This approach is central to systems biology in population-scale studies, such as those in epidemiology or clinical trial biomarker discovery. In contrast, vertical integration focuses on deep multi-omics from a single subject or small sample set. The primary challenge in horizontal studies is the confounding of true biological signals with non-biological technical variation introduced by batch effects, platform differences, reagent lots, and personnel shifts. This Application Note provides detailed protocols for identifying, diagnosing, and mitigating these artifacts to ensure robust biological inference.
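A simple first diagnostic is the share of each feature's variance that tracks processing batch. The sketch below is a minimal illustration using one-way ANOVA eta-squared (between-batch sum of squares divided by total sum of squares), summarized as a median across features. It assumes batch labels are known and not confounded with biological groups; if they are confounded, the number mixes technical and biological variance. Function names and toy values are hypothetical.

```python
from statistics import fmean, median


def batch_eta_squared(values, batches):
    """Share of one feature's variance explained by batch: SS_between / SS_total."""
    grand = fmean(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    if ss_total == 0:
        return 0.0
    groups = {}
    for v, b in zip(values, batches):
        groups.setdefault(b, []).append(v)
    ss_between = sum(len(g) * (fmean(g) - grand) ** 2 for g in groups.values())
    return ss_between / ss_total


def median_batch_effect(matrix, batches):
    """Median eta-squared across features; matrix is features x samples."""
    return median(batch_eta_squared(row, batches) for row in matrix)


batches = ["A", "A", "A", "B", "B", "B"]
matrix = [
    [5.0, 5.2, 4.8, 7.0, 7.2, 6.8],  # strong batch shift
    [3.0, 4.0, 5.0, 3.1, 4.1, 4.9],  # little batch structure
    [1.0, 1.5, 0.5, 2.0, 2.5, 1.5],  # moderate batch shift
]
per_feature = [round(batch_eta_squared(r, batches), 3) for r in matrix]
print(per_feature, median_batch_effect(matrix, batches))
```

This per-feature view complements PCA colored by batch: PCA shows whether batch dominates the leading axes, while eta-squared shows how widespread the effect is across features.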

Table 1: Quantitative Impact of Common Technical Confounders in Horizontal Omics Studies

| Technical Confounder | Typical Measurement (e.g., Transcriptomics) | Estimated % Variance Explained (Range) | Primary Diagnostic Method |

|---|---|---|---|

| Processing Batch | Samples processed in different weeks | 10-40% | PCA, colored by batch |

| Sequencing Lane/Library Prep Batch | Different Illumina lanes or prep kits | 5-25% | Correlation matrix, batch-wise PCA |