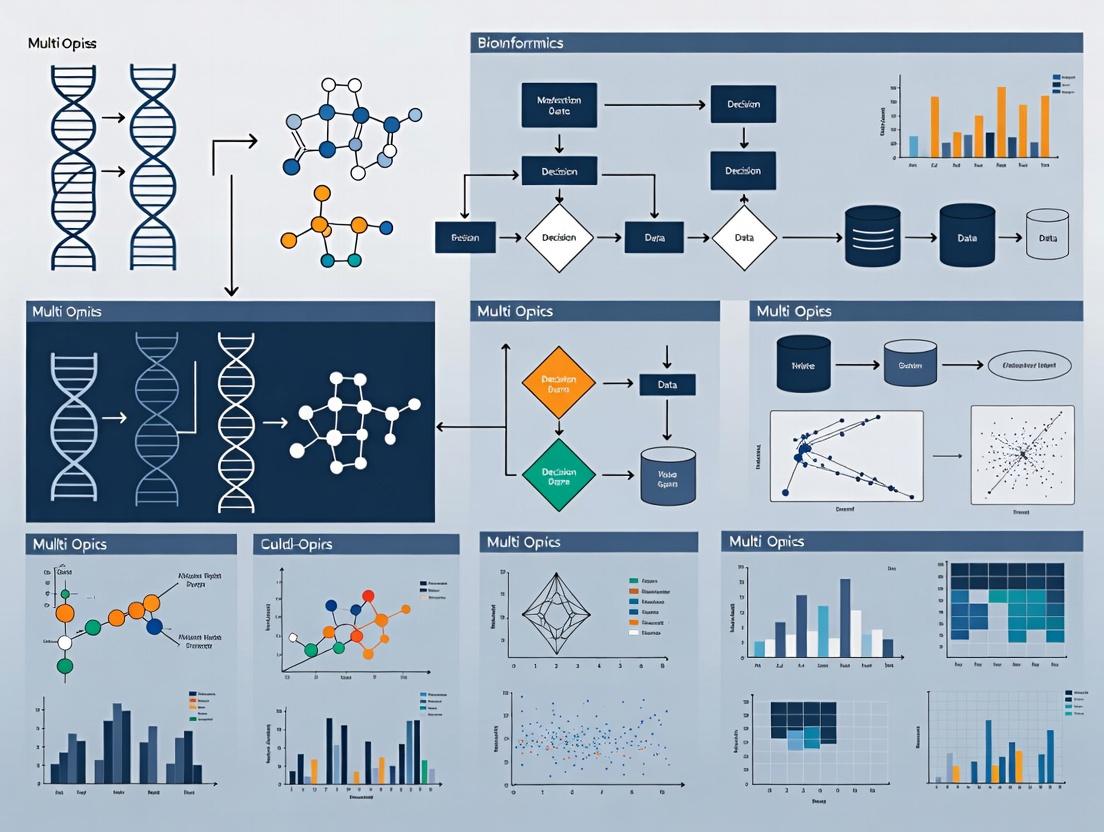

Missing Values in Multi-Omics Data: A Comprehensive Guide to Handling, Imputation, and Best Practices for Biomedical Researchers

Missing values are an inevitable and critical challenge in multi-omics data analysis, directly impacting downstream discovery and reproducibility.


Abstract

Missing values are an inevitable and critical challenge in multi-omics data analysis, directly impacting downstream discovery and reproducibility. This article provides a structured guide for biomedical researchers and drug development professionals. We first explore the origins and mechanisms behind missing data in genomics, transcriptomics, proteomics, and metabolomics. We then detail a spectrum of methodological approaches, from simple deletion to advanced machine learning-based imputation, with practical application guidance. The guide addresses common troubleshooting scenarios and optimization strategies for robust analysis. Finally, we present a framework for validating imputation performance and comparing methods, concluding with future directions for enhancing data integrity in translational research.

Understanding the Missing Data Problem: Why Multi-Omics Datasets Are Incomplete

Technical Support Center: Troubleshooting Missing Data

FAQ 1: My proteomics data has many values missing in low-abundance proteins. What mechanism is this likely to be, and how can I test it?

- Answer: This pattern is strongly indicative of Missing Not At Random (MNAR), specifically due to limits of detection. The instrument cannot quantify signals below a certain threshold. You can test this by:

- Density Plot Analysis: Plot the distribution of recorded intensities. A sharp cutoff or a pile-up of values just above a certain threshold suggests MNAR.

- Signal-to-Noise Check: Compare the missing values against the associated noise or background signal for each run; values missing where the background is high confirm MNAR.

- Protocol: Perform a "spike-in" experiment using known, low-concentration standards. If these standards are systematically missing or unreliable, it confirms MNAR related to instrument sensitivity.

FAQ 2: In my transcriptomics dataset, samples from a specific patient cohort have missing values for a whole batch of genes. Is this MCAR or MAR?

- Answer: This is most likely Missing At Random (MAR). The missingness is related to the observed variable "patient cohort" (or an associated clinical variable like poor RNA quality). The missing gene expression values themselves are not the cause.

- Troubleshooting Guide:

- Step 1: Check the sample metadata. Correlate the missingness pattern with `Sample_Group`, `RNA_Integrity_Number` (RIN), `Sequencing_Batch`, or `Library_Prep_Date`.

- Step 2: Create a missingness indicator matrix (1=missing, 0=present).

- Step 3: Use a statistical test (e.g., chi-square) to determine whether the missingness depends on the metadata variable. A significant p-value is evidence against MCAR and is consistent with MAR, although it cannot by itself rule out MNAR.
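Steps 2 and 3 can be sketched as follows. This is a minimal illustration, assuming one binary missingness flag per sample (for the affected gene batch) and a two-level metadata variable; the function name and the toy data are hypothetical. The Pearson chi-square statistic is computed by hand without continuity correction, and the p-value uses the closed form for one degree of freedom.

```python
import math

def chi_square_2x2(missing, group):
    """Pearson chi-square test (no continuity correction) on a 2x2 table.

    missing: list of 0/1 flags per sample (1 = value missing).
    group:   list of 0/1 labels per sample (e.g., 1 = affected cohort).
    Returns (statistic, p_value) with 1 degree of freedom.
    Assumes every row and column total is non-zero.
    """
    table = [[0, 0], [0, 0]]
    for m, g in zip(missing, group):
        table[g][m] += 1
    n = sum(sum(row) for row in table)
    row_tot = [sum(row) for row in table]
    col_tot = [table[0][j] + table[1][j] for j in range(2)]
    stat = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_tot[i] * col_tot[j] / n
            stat += (table[i][j] - expected) ** 2 / expected
    # Chi-square survival function for 1 df: P(X > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value

# Hypothetical toy data: 20 samples per cohort, cohort 1 has far more missing values.
group = [0] * 20 + [1] * 20
missing = [1] * 2 + [0] * 18 + [1] * 14 + [0] * 6
stat, p = chi_square_2x2(missing, group)
print(f"chi2 = {stat:.2f}, p = {p:.4g}")
```

With small expected counts, an exact test would be the safer choice than this approximation.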

FAQ 3: How can I distinguish between technical MAR (due to experiment) and biological MNAR (due to biology) in metabolomics?

- Answer: This requires careful experimental design and analysis.

- For Technical MAR (e.g., sample processing error):

- The pattern will align with batch, run order, or specific technician.

- Protocol: Use Quality Control (QC) samples. If the same metabolite is missing in all study samples but present in interspersed QC samples within the same batch, the cause is technical (MAR related to sample condition).

- For Biological MNAR (e.g., metabolite not produced):

- The pattern aligns with a biological condition (e.g., all diseased samples lack a metabolite).

- Protocol: Re-extract and re-run a subset of the original biological specimens for the affected group. If the metabolite remains absent after repeated technical analysis, evidence for biological MNAR is strong.


Data Summary: Common Missingness Patterns by Omics Layer

| Omics Layer | Primary Source of Missingness | Typical Mechanism | Estimated Frequency in Public Datasets* |

|---|---|---|---|

| Genomics (SNP arrays) | Low-quality DNA, calling algorithms | MAR (dependent on sample quality) | 2-5% |

| Transcriptomics (RNA-seq) | Low expression, dropouts in scRNA-seq | MNAR (limit of detection) | 10-30% (up to 90% in scRNA-seq) |

| Proteomics (LC-MS) | Low-abundance peptides, instrument sensitivity | MNAR (limit of detection) | 15-40% |

| Metabolomics (NMR/LC-MS) | Compound below detection, sample degradation | Mix of MNAR (detection) & MAR (batch) | 20-50% |

| Epigenomics (ChIP-seq) | Region-specific antibody efficiency | MAR/MNAR | 5-20% |

*Frequency estimates are illustrative aggregates from recent literature surveys.

Experimental Protocols for Mechanism Diagnosis

Protocol 1: Testing for MCAR using Little's Test

- Objective: Statistically test if the missing data pattern is completely random.

- Procedure:

a. Format your data matrix (features x samples).

b. Create a missingness indicator matrix.

c. Implement Little's MCAR test (available in R package `naniar` or `BaylorEdPsych`).

d. Interpretation: A non-significant p-value (> 0.05) fails to reject the null hypothesis that the data are MCAR. A significant p-value indicates the data are not MCAR (i.e., they are MAR or MNAR).

Protocol 2: Pattern Visualization for MAR vs. MNAR

- Objective: Visually identify if missingness is related to observed values (MAR) or to the value itself (MNAR).

- Procedure:

a. For a given feature, split the samples into two groups: `missing` and `observed`.

b. Plot the distribution of another, highly correlated observed feature across these two groups.

c. Interpretation: If the distributions differ significantly (t-test), it suggests MAR (missingness depends on other observed data). If they are similar, the pattern is consistent with either MCAR or MNAR; the observed data alone cannot separate these two, so combine this check with knowledge of the detection limit.
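The comparison in this procedure can be sketched as below. Note that the sketch swaps the t-test for a two-sided permutation test on the difference in means, which avoids a distributional assumption and needs only NumPy. The function name and the simulated data are hypothetical.

```python
import numpy as np

def permutation_mean_diff(aux, is_missing, n_perm=5000, seed=0):
    """Two-sided permutation test for a difference in the mean of `aux`
    between samples where the target feature is missing vs. observed."""
    rng = np.random.default_rng(seed)
    aux = np.asarray(aux, dtype=float)
    is_missing = np.asarray(is_missing, dtype=bool)
    observed_diff = aux[is_missing].mean() - aux[~is_missing].mean()
    count = 0
    for _ in range(n_perm):
        shuffled = rng.permutation(is_missing)
        diff = aux[shuffled].mean() - aux[~shuffled].mean()
        if abs(diff) >= abs(observed_diff):
            count += 1
    # Add-one correction keeps the p-value strictly positive.
    return observed_diff, (count + 1) / (n_perm + 1)

# Hypothetical example: the target is missing when the correlated feature is low.
rng = np.random.default_rng(1)
aux = rng.normal(10.0, 1.0, size=200)
is_missing = aux < np.quantile(aux, 0.25)
diff, p_value = permutation_mean_diff(aux, is_missing)
print(f"mean difference = {diff:.2f}, permutation p = {p_value:.4f}")
```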

Diagram: Missing Data Mechanism Decision Logic

Title: Decision Logic for Identifying Missing Data Mechanisms

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Missing Data Analysis |

|---|---|

| Internal Standards (Isotope-Labeled) | Spiked into samples pre-processing to distinguish technical MNAR (loss) from biological MNAR (true absence) in MS-based proteomics/metabolomics. |

| Pooled Quality Control (QC) Samples | Created by combining aliquots of all samples; run repeatedly throughout the analytical batch to monitor drift and identify MAR due to instrument performance. |

| Process Blank Samples | Contain no biological material; used to identify contamination or carryover causing false signals or masking true MNAR. |

| RNA Integrity Number (RIN) Standards | Used to calibrate and assign a quality score to RNA samples, a critical observed variable for diagnosing MAR in transcriptomics. |

| Serial Dilution Spike-Ins | A dilution series of known compounds to empirically map the limit of detection (LOD) and quantify the MNAR threshold for a given assay. |

Technical Support Center: Troubleshooting Multi-Omics Data Integration

FAQs & Troubleshooting Guides

Q1: My multi-omics dataset has over 20% missing values in the proteomics layer. Will imputation introduce more bias than simply removing those features? A: This is a critical threshold. While feature removal preserves data integrity for remaining features, it drastically reduces statistical power and can bias biological interpretation if missingness is non-random (e.g., low-abundance proteins). Imputation is generally recommended but requires caution.

- Protocol: Conduct a Missing Completely At Random (MCAR) test (e.g., Little's test) on your proteomics data. If MCAR is rejected, missingness is likely informative.

- Action: For non-MCAR data, use model-based imputation (e.g., `missForest` in R, which handles non-linear relationships) rather than mean/median. Validate by simulating missingness in a complete subset and comparing imputed to known values.
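The validation step (mask known values, impute, compare) can be sketched as follows. To keep the example dependency-light it does not call `missForest`; it compares two simple stand-ins, a feature-mean baseline and an iterative low-rank SVD imputer, on simulated data. All function names are hypothetical, and any imputer that maps a matrix with `NaN` to a filled matrix could be passed in instead.

```python
import numpy as np

def mask_and_score(complete, impute_fn, frac=0.2, seed=0):
    """Hide a random fraction of entries, impute them, and return the RMSE
    between imputed and true values on the hidden entries only."""
    rng = np.random.default_rng(seed)
    mask = rng.random(complete.shape) < frac
    masked = complete.copy()
    masked[mask] = np.nan
    imputed = impute_fn(masked)
    return float(np.sqrt(np.mean((imputed[mask] - complete[mask]) ** 2)))

def impute_feature_mean(x):
    """Baseline: replace each NaN with its column (feature) mean."""
    out = x.copy()
    col_means = np.nanmean(out, axis=0)
    rows, cols = np.where(np.isnan(out))
    out[rows, cols] = col_means[cols]
    return out

def impute_low_rank(x, rank=2, n_iter=50):
    """Iterative SVD imputation: start from column means, then repeatedly
    overwrite the missing entries with a rank-`rank` reconstruction."""
    nan_mask = np.isnan(x)
    filled = impute_feature_mean(x)
    for _ in range(n_iter):
        u, s, vt = np.linalg.svd(filled, full_matrices=False)
        approx = (u[:, :rank] * s[:rank]) @ vt[:rank]
        filled[nan_mask] = approx[nan_mask]
    return filled

# Simulated "complete subset": 60 samples x 30 features with rank-2 structure plus noise.
rng = np.random.default_rng(0)
complete = rng.normal(size=(60, 2)) @ rng.normal(size=(2, 30)) + 0.1 * rng.normal(size=(60, 30))
rmse_mean = mask_and_score(complete, impute_feature_mean)
rmse_low_rank = mask_and_score(complete, impute_low_rank)
print(f"RMSE mean imputation: {rmse_mean:.3f}, low-rank imputation: {rmse_low_rank:.3f}")
```

Random masking like this only mimics MCAR; for MNAR data the mask should be drawn from low-intensity values instead, otherwise the benchmark will look more optimistic than the real problem.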

Q2: After integrating transcriptomics and metabolomics data, my joint pathway analysis results are unstable. Could missing values be the cause? A: Yes. Missing values in either dataset weaken correlation estimates, which are foundational for integration methods like MOFA or sparse Canonical Correlation Analysis (sCCA), leading to unstable feature weights and pathway mappings.

- Protocol: Apply a multi-omics imputation framework prior to integration.

- Perform individual layer imputation (e.g., SVD for transcripts, KNN for metabolites).

- Use the imputed matrices to initialize a joint imputation algorithm like `IntegrImpute` or `MIBDC`.

- Re-run the integration and pathway analysis (e.g., with `multiGSEA`).

- Validation: Bootstrap your dataset (with missing values) 100 times, apply the above protocol, and check the consistency of top-ranked pathways.

Q3: Which missing value handling method best preserves statistical power for differential analysis in integrated cohorts? A: The method that retains the largest number of complete samples per test. Pairwise deletion during integration destroys the matched sample structure. The table below compares the effective sample size (N_eff) for a cohort of 100 matched samples.

Table 1: Effective Sample Size After Common Missing Data Handling Strategies

| Strategy | Description | N_eff for Integrated Analysis | Risk |

|---|---|---|---|

| Complete-Case (Listwise) | Discard any sample with a missing value in ANY omics layer. | Often < 50% of original cohort. | Severe loss of power, introduces bias if samples are not MCAR. |

| Pairwise Deletion | Use all available data for each separate omics analysis. | Variable; integration becomes invalid. | Correlations across omics layers are computed on different sample subsets, breaking integration. |

| Imputation (MICE) | Multivariate Imputation by Chained Equations. | ~90-100% of original cohort. | Can distort covariance if the imputation model is mis-specified. Requires careful diagnostic checks. |

| Bayesian Factorization | (e.g., BMF) Models data and missingness jointly. | ~100% of original cohort. | Computationally intensive. Assumes a low-rank structure. |

Q4: How do I choose an imputation method for my specific mix of continuous (metabolomics) and count (single-cell RNA-seq) data? A: You need a method capable of handling mixed data types. A two-step protocol is recommended.

- Protocol: Mixed-Data Imputation

- Layer-Specific Pre-Imputation: For count data, use `scImpute` or `ALRA` (adapted for dropouts). For continuous data, use `MICE` with predictive mean matching.

- Joint Model Refinement: Feed pre-imputed matrices into a joint model like `iNMF` (integrative Non-negative Matrix Factorization), which can handle different distributions and refine imputations via shared factor matrices.

Experimental Workflow for Diagnosing & Mitigating Missing Data Impact

The following diagram outlines a systematic workflow for diagnosing the nature of missing data and selecting an appropriate mitigation strategy within an integrative analysis pipeline.

Title: Workflow for Missing Data Diagnosis and Mitigation in Multi-Omics

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Handling Missing Values in Multi-Omics Research

| Tool/Reagent | Function in Context | Application Note |
|---|---|---|
| R package `mice` | Implements Multivariate Imputation by Chained Equations for continuous, binary, and categorical data. | Critical for flexible, model-based imputation of individual omics layers. Use the `pred` matrix to control which variables inform each imputation. |
| R package `missForest` | Non-parametric imputation using random forests. Handles complex interactions and non-linearities. | Preferred for omics data where linear assumptions fail. Computationally heavy for very large feature sets (>10k). |
| Python package `IterativeImputer` (sklearn) | Python equivalent of MICE. Supports different estimators. | Integrates seamlessly into Python-based ML pipelines for multi-omics. |
| R package `MOFA2` | Bayesian group factor analysis for multi-omics integration. Handles missing values naturally in its model. | Can be used directly on data with missing entries; treats them as latent variables. A powerful integration-and-imputation combo. |
| `bnstruct` R package | Performs Missing Not At Random (MNAR) hypothesis testing and imputation. | Use `test.MNAR` to check for informative missingness before choosing a strategy. |
| Simulation Code Template | Custom R/Python script to artificially inject missing values at known rates/mechanisms. | Essential for validating any chosen pipeline. Compare recovered results from imputed data against the original complete truth. |

Key Experimental Protocol: Sensitivity Analysis via Simulation

Title: Protocol to Quantify the Impact of Missing Data on Integration Results. Objective: To empirically assess how missing values affect the stability and power of a multi-omics integration model. Steps:

- Create a Gold Standard: From your full dataset, identify a subset of samples that are complete across all omics layers (N_complete).

- Simulate Missingness: Artificially introduce missing values into this complete subset under different scenarios:

- MCAR: Randomly remove X% (e.g., 10%, 20%, 30%) of values.

- MAR (informative): Remove values in one omics layer based on quantiles of values in another layer (e.g., "remove low-metabolite values for samples with high transcript expression").

- Apply Mitigation: Run your chosen imputation and integration pipeline (e.g., `mice` -> `MOFA2`) on each simulated dataset.

- Benchmark Output: Compare the key output (e.g., latent factors from MOFA, pathway enrichment scores) from the imputed-integrated data against the results from the original complete gold standard. Calculate metrics like correlation of factor loadings or Jaccard index of top pathways.

- Quantify Power Loss: Perform a differential analysis (e.g., on MOFA factors) between groups using both the original and imputed data. Compare the p-values and effect sizes; the inflation of p-values indicates power loss.
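The "Simulate Missingness" step can be sketched as below. The function names, matrix shapes, and parameters are hypothetical. One design choice to note: the MAR helper drops values based only on the driver variable from the other layer, never on the removed values themselves, so the simulated mechanism stays strictly MAR.

```python
import numpy as np

def inject_mcar(x, rate, rng):
    """Remove a fraction `rate` of entries uniformly at random."""
    out = x.astype(float).copy()
    out[rng.random(x.shape) < rate] = np.nan
    return out

def inject_mar(target, driver, quantile, rate, rng):
    """Remove entries of `target` only for samples whose `driver` value
    (observed, from another omics layer) lies above the given quantile.
    Within those samples each entry is dropped with probability `rate`."""
    out = target.astype(float).copy()
    high = driver > np.quantile(driver, quantile)
    drop = rng.random(target.shape) < rate
    drop[~high, :] = False
    out[drop] = np.nan
    return out

rng = np.random.default_rng(42)
metabolites = rng.normal(size=(100, 20))   # complete gold-standard layer (samples x features)
transcript = rng.normal(size=100)          # one observed value per sample from another layer

mcar = {rate: inject_mcar(metabolites, rate, rng) for rate in (0.1, 0.2, 0.3)}
mar = inject_mar(metabolites, transcript, quantile=0.75, rate=0.5, rng=rng)
high = transcript > np.quantile(transcript, 0.75)

for rate, data in mcar.items():
    print(f"MCAR target {rate:.0%}: realized {np.isnan(data).mean():.1%}")
print(f"MAR: {np.isnan(mar[high]).mean():.1%} missing in high-driver samples, "
      f"{np.isnan(mar[~high]).mean():.1%} elsewhere")
```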

This protocol provides concrete, quantitative evidence to support your choice of missing data strategy in your thesis research.

Troubleshooting Guides & FAQs

Q1: My missing data heatmap is too dense/unreadable when visualizing a large multi-omics cohort. What can I do? A: For large datasets (e.g., >100 samples x >10,000 features), a full-resolution heatmap is often impractical. Use the following approach:

- Aggregate: Plot missingness by sample or by feature group (e.g., by proteomics platform, chromosome region).

- Subsample: For an initial view, randomly subsample features.

- Use Interactive Tools: Employ libraries like `plotly` in R/Python to create zoomable heatmaps.
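The "Aggregate" option can be sketched as follows: instead of plotting every cell, summarize missingness per sample and per feature group, then plot those much smaller summaries. The function name and the group labels are hypothetical.

```python
import numpy as np

def missingness_summary(x, feature_groups):
    """Return the per-sample missing fraction and a {group: fraction} dict
    with the missing fraction of each feature group."""
    nan_mask = np.isnan(x)
    per_sample = nan_mask.mean(axis=1)
    groups = np.asarray(feature_groups)
    per_group = {
        str(g): float(nan_mask[:, groups == g].mean()) for g in np.unique(groups)
    }
    return per_sample, per_group

# Toy matrix: 4 samples x 4 features, two features per (hypothetical) platform.
x = np.ones((4, 4))
x[0, 2] = np.nan
x[1, 2] = np.nan
x[1, 3] = np.nan
per_sample, per_group = missingness_summary(
    x, ["proteomics", "proteomics", "metabolomics", "metabolomics"]
)
print(per_sample)
print(per_group)
```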

Q2: When performing a statistical test for Not Missing at Random (NMAR, the same mechanism called MNAR elsewhere in this guide) patterns, I get a significant result. Does this mean I must use NMAR-specific imputation methods? A: Not necessarily. A significant test (e.g., using logistic regression to test whether missingness depends on a proxy for the underlying value) suggests NMAR is a possibility. However, such tests often have low power and cannot prove NMAR. Proceed as follows:

- Triangulate: Check for correlations between missingness and other observed variables (indicating MAR).

- Sensitivity Analysis: Perform imputation under both MAR and MNAR assumptions and compare the stability of your downstream analysis results. If conclusions differ substantially, NMAR must be seriously considered.

Q3: My missingness pattern heatmap shows a clear block pattern. What does this indicate, and how should I proceed? A: Block patterns typically indicate a technical or batch effect. For example, all metabolites in a specific batch failed detection.

- Action: Investigate experimental logs to confirm the batch effect. Do not impute across these blocks blindly. Consider imputing within batches or using batch-aware imputation methods after the initial diagnosis.
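A minimal sketch of within-batch imputation, assuming a samples x features matrix and one batch label per sample. It uses the within-batch feature mean as a deliberately simple stand-in for a real imputer, and the function name is hypothetical. A feature that failed in an entire batch is left as `NaN` rather than filled from other batches, so the block stays visible for follow-up.

```python
import numpy as np

def impute_within_batch(x, batches):
    """Replace each NaN with the mean of the same feature within the same batch.
    Entries stay NaN when a feature is entirely missing in a batch."""
    out = x.astype(float).copy()
    batches = np.asarray(batches)
    for b in np.unique(batches):
        rows = np.where(batches == b)[0]
        block = out[rows]                          # copy of this batch's rows
        observed_count = (~np.isnan(block)).sum(axis=0)
        sums = np.nansum(block, axis=0)
        means = np.full(block.shape[1], np.nan)
        np.divide(sums, observed_count, out=means, where=observed_count > 0)
        r, c = np.where(np.isnan(block))
        block[r, c] = means[c]
        out[rows] = block                          # write the batch back
    return out

x = np.array([[1.0, np.nan],
              [3.0, np.nan],
              [10.0, 5.0],
              [np.nan, 7.0]])
result = impute_within_batch(x, ["A", "A", "B", "B"])
print(result)
```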

Q4: How do I choose between using Missingno in Python or naniar in R for my initial diagnostics?

A: Both are excellent for initial exploration. The choice often depends on your primary analysis ecosystem.

- `missingno` (Python): Excellent for quick matrix heatmaps, nullity correlation heatmaps, and dendrograms. Highly integrated with `pandas`.

- `naniar` (R): Provides a tidyverse-consistent grammar for missing data exploration. Excellent for creating `ggplot2`-style visualizations like `geom_miss_point()` and for summarizing missingness across factors.

- Recommendation: Use the one that matches your team's workflow. For a comprehensive view, consider the comparative table below.

Comparative Analysis of Diagnostic Tools

Table 1: Key Software Tools for Initial Missing Data Diagnostics

| Tool / Package | Language | Primary Visualization Strength | NMAR Testing Capability | Best For |
|---|---|---|---|---|
| `missingno` | Python | Matrix heatmap, dendrogram | No | Quick, intuitive overview of large matrix patterns. |
| `naniar` | R | Scatter plots with missingness (`gg_miss_case`), histograms | Via model supplements | Tidy, integrated exploratory data analysis (EDA). |
| `VIM` | R | Aggregated plots (bar, box), `marginplot` | Yes (via `testMAR`) | In-depth statistical exploration & hypothesis testing. |
| `ggplot2` (custom) | R | Custom heatmaps, histograms | No (requires coding) | Full customization for publication. |
| `seaborn` (custom) | Python | Custom heatmaps, histograms | No (requires coding) | Full customization for publication. |
| `MCARTest` | R | Statistical test output | Yes (tests MCAR) | Formal hypothesis testing for Missing Completely at Random. |

Table 2: Common Missing Data Patterns in Multi-Omics & Initial Interpretations

| Pattern (Visualized) | Likely Mechanism | Suggested Next Diagnostic Step |

|---|---|---|

| Random scattered missingness | Stochastic detection failure, low abundance. | Check for correlation with low signal intensity (MNAR test). |

| Missing by sample group | Batch effect, poor sample quality. | Compare with sample metadata (e.g., extraction date, cohort). |

| Missing by feature type/block | Platform failure, analyte-specific protocol issue. | Review platform-specific QC logs. |

| Monotone pattern | Sequential assays where failure in one step halts the next. | Treat as a structured missingness problem; consider pattern-specific imputation. |

Experimental Protocol: Testing for NMAR Patterns in Proteomics Data

Objective: To statistically assess if missing protein intensities are dependent on the (unobserved) true protein abundance level (a sign of NMAR).

Materials:

- A normalized protein intensity matrix (log-transformed).

- A corresponding binary missingness indicator matrix.

- R or Python statistical environment.

Procedure:

- Hypothesis: For a target protein with missing values, its probability of being missing depends on its underlying true abundance.

- Proxy Variable: Use the population average of the observed values for that protein across samples as a proxy for the unobserved true abundance. Note: This is a strong assumption.

- Model: Fit a logistic regression model for each protein with substantial missingness.

- Response Variable: Missingness indicator for the protein (1=missing, 0=observed).

- Predictor Variable: The mean observed intensity of that protein (your proxy).

- Interpretation: A statistically significant (p < 0.05 after multiple-testing correction) coefficient for the predictor suggests evidence against the Missing at Random (MAR) mechanism and potential NMAR.

- Sensitivity Analysis: Repeat the test using different proxies (e.g., intensity from a correlated protein measured without missingness) to check robustness.
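One way to make the proxy test concrete is sketched below. Because a protein's mean observed intensity is constant within that protein, this sketch pools all protein-sample cells into a single logistic regression of the missingness indicator on the per-protein proxy; that pooling is an assumption of the sketch, not something the protocol specifies. The model is fitted with Newton-Raphson in NumPy, the data are simulated, and all names are hypothetical. Cells from the same protein are not independent, so the reported standard error is optimistic.

```python
import numpy as np

def logistic_fit(x, y, n_iter=25):
    """Fit y ~ intercept + x by Newton-Raphson.
    Returns (coefficients, standard_errors)."""
    design = np.column_stack([np.ones_like(x), x])
    beta = np.zeros(2)
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-design @ beta))
        w = p * (1.0 - p)
        hessian = design.T @ (design * w[:, None])
        beta = beta + np.linalg.solve(hessian, design.T @ (y - p))
    p = 1.0 / (1.0 + np.exp(-design @ beta))
    w = p * (1.0 - p)
    cov = np.linalg.inv(design.T @ (design * w[:, None]))
    return beta, np.sqrt(np.diag(cov))

# Simulated log-intensities (proteins x samples) with intensity-dependent dropout.
rng = np.random.default_rng(0)
n_proteins, n_samples = 200, 30
true_mean = rng.normal(20.0, 2.0, size=n_proteins)
intensity = true_mean[:, None] + rng.normal(0.0, 0.5, size=(n_proteins, n_samples))
p_missing = 1.0 / (1.0 + np.exp(1.5 * (intensity - 18.0)))
intensity[rng.random(intensity.shape) < p_missing] = np.nan

observed = ~np.isnan(intensity)
keep = observed.sum(axis=1) > 0                    # drop proteins never observed
proxy = np.nansum(intensity[keep], axis=1) / observed[keep].sum(axis=1)
x = np.repeat(proxy - proxy.mean(), n_samples)     # centered proxy, one value per cell
y = (~observed[keep]).astype(float).ravel()        # 1 = missing, 0 = observed
beta, se = logistic_fit(x, y)
print(f"slope = {beta[1]:.2f}, z = {beta[1] / se[1]:.1f}")
```

A clearly negative slope means that proteins with lower observed intensity are missing more often, which is the pattern expected under detection-limit censoring.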

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Kits for QC in Multi-Omics Experiments

| Item | Function in Context of Missing Data Prevention |

|---|---|

| Internal Standard Spike-ins (e.g., SPLASH for proteomics, MetaboRec for metabolomics) | Distinguishes true biological absence from technical dropout. Abnormal standard signals indicate platform issues causing systematic missingness. |

| Reference Quality Control (QC) Pools | A homogenized sample run repeatedly throughout the batch. High missingness in the QC pool indicates technical batch failure. |

| Process Blanks / Solvent Blanks | Identifies carryover or background contamination, which can cause false non-missing values, informing data filtering before missingness analysis. |

| Commercial "HeLa" or "NAD" Standard Extracts | Provides a benchmark for expected detection rates. Significantly lower feature detection in these standards flags protocol deviations. |

| Multi-Omics Lysis/Stabilization Buffers (e.g., AllPrep, TRIzol) | Ensures maximal concurrent extraction of DNA, RNA, protein, etc. Poor lysis is a primary source of sample-level missingness across omics layers. |

Diagnostic Workflow & Logical Diagrams

Diagram 1: Initial Diagnostic Workflow for Missing Data

Diagram 2: NMAR Test Logic Using a Proxy Variable

Technical Support Center

Troubleshooting Guides & FAQs

Q1: In my TCGA RNA-seq analysis, many genes show "NA" or zero counts. What causes this, and how should I differentiate technical zeros from biological zeros? A: Missingness in TCGA often stems from low expression below detection limits (technical) or genomic alterations like deletions (biological). First, map zeros to genomic coordinates. Zeros co-occurring with copy number loss events in the same sample suggest biological absence. For low-expression technical zeros, consider imputation methods like SAVE or DrImpute that leverage gene-gene correlations, but only after removing biological zeros identified via genomic data integration.

Q2: My LC-MS proteomics dataset has a high proportion of missing values, especially in early fractions or for low-abundance peptides. What is the primary mechanism and the best pre-processing fix?

A: This is typical of "Missing Not At Random" (MNAR) due to censoring. Low-abundance ions fail to trigger MS/MS identification or fall below the intensity threshold. Apply a two-step filter: 1) Remove proteins with >50% missingness across all samples. 2) Use algorithms like impSeqRob or bpca for the remaining data, as they are robust to MNAR. Always perform imputation after normalization and log2 transformation.

Q3: For metabolomics (GC-MS), I see missing values that seem random across runs. Could this be an instrument issue, and what QC step did I likely miss? A: Random missingness often points to inconsistent peak picking or alignment errors in data preprocessing. Re-process your raw files with stringent QC steps: 1) Use a quality control (QC) sample injected regularly to correct for drift. 2) Apply a retention time alignment algorithm (e.g., in XCMS or MZmine). 3) Use a relative standard deviation (RSD) filter (<20% in QC samples) to remove unreliable features before considering any biological missingness.
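The RSD filter in step 3 can be sketched as follows, assuming a matrix of raw (not log-transformed) intensities for the QC injections only, with injections as rows and features as columns. The function name and the toy values are hypothetical.

```python
import numpy as np

def rsd_filter(qc_intensities, max_rsd=20.0):
    """Return (keep_mask, rsd), where rsd = 100 * sd / mean per feature across
    QC injections and keep_mask marks features with rsd below `max_rsd` percent."""
    mean = np.nanmean(qc_intensities, axis=0)
    sd = np.nanstd(qc_intensities, axis=0, ddof=1)
    rsd = 100.0 * sd / mean
    return rsd < max_rsd, rsd

# Three QC injections x two features: the first is stable, the second is not.
qc = np.array([[100.0, 50.0],
               [102.0, 80.0],
               [98.0, 20.0]])
keep, rsd = rsd_filter(qc)
print(rsd)    # percent RSD per feature
print(keep)   # features retained for downstream analysis
```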

Q4: When integrating multiple omics from TCGA, how do I handle samples with missing data in one or more platforms?

A: Do not default to complete-case analysis (deleting samples). For multi-omics integration, use methods designed for block-wise missingness. Consider: 1) Matrix completion: Tools like MISSING or iClusterPlus can impute at the integrated matrix level. 2) Multi-view learning: Methods like Multi-Omics Factor Analysis (MOFA) model all views simultaneously and handle missing observations naturally.

Experimental Protocols

Protocol 1: Differentiating Biological vs. Technical Zeros in TCGA.

- Data Download: Download Level 3 RNA-seq (gene counts) and segmented copy number variation (CNV) data for your cohort of interest from the GDC Data Portal.

- Coordinate Mapping: Using GENCODE annotations, map each gene's genomic coordinates.

- Overlap Analysis: For each sample-gene pair with zero expression, check if the gene's coordinates overlap with a segment having a CNV log2 ratio < -0.5 (indicating deletion). Use BEDTools or custom R scripts.

- Annotation: Flag zeros overlapping deletions as "biological." All other zeros are initially considered "technical."

- Validation: Cross-reference flagged biological zeros with mutation (e.g., truncating mutations) and methylation (e.g., promoter hypermethylation) data for the same sample.
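The overlap and annotation steps can be sketched in plain Python as below. The data structures, gene names, sample IDs, and coordinates are hypothetical placeholders; real inputs would come from the GENCODE annotation and the segmented CNV files.

```python
def flag_zero_counts(zero_calls, gene_coords, cnv_segments, threshold=-0.5):
    """Label each zero-count (sample, gene) pair as 'biological' when the gene
    overlaps a segment with log2 ratio below `threshold` in that sample,
    otherwise 'technical'.

    zero_calls:   iterable of (sample_id, gene_id)
    gene_coords:  {gene_id: (chrom, start, end)}
    cnv_segments: {sample_id: [(chrom, start, end, log2_ratio), ...]}
    """
    labels = {}
    for sample, gene in zero_calls:
        chrom, g_start, g_end = gene_coords[gene]
        deleted = any(
            seg_chrom == chrom
            and seg_start <= g_end
            and g_start <= seg_end
            and ratio < threshold
            for seg_chrom, seg_start, seg_end, ratio in cnv_segments.get(sample, [])
        )
        labels[(sample, gene)] = "biological" if deleted else "technical"
    return labels

# Hypothetical toy inputs.
gene_coords = {"GENE_A": ("chr1", 1000, 2000), "GENE_B": ("chr2", 5000, 6000)}
cnv_segments = {
    "S1": [("chr1", 500, 1500, -1.2), ("chr2", 0, 9000, 0.1)],
    "S2": [("chr1", 0, 9000, -0.2)],
}
zero_calls = [("S1", "GENE_A"), ("S1", "GENE_B"), ("S2", "GENE_A")]
labels = flag_zero_counts(zero_calls, gene_coords, cnv_segments)
print(labels)
```

For a full cohort, an interval-tree or BEDTools-based overlap would scale better than this nested scan.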

Protocol 2: MNAR-Responsive Imputation for LC-MS Proteomics.

- Preprocessing: Normalize raw protein intensities using quantile normalization, followed by log2 transformation.

- Binary Matrix Creation: Create a binary matrix where 1 indicates a detected value and 0 indicates missing.

- Probabilistic Model: Use the `impSeqRob` R package. It models the missingness probability using a logistic regression on the binary matrix and peptide intensity.

- Iterative Imputation: The algorithm iteratively imputes missing values using a robust model, down-weighting the influence of potential outliers.

- Convergence Check: Run for a preset number of iterations (default 20) or until the imputed values stabilize.

Table 1: Prevalence and Causes of Missing Data Across Omics Platforms

| Platform | Typical % Missing | Primary Cause | Missingness Mechanism | Recommended Imputation Method |

|---|---|---|---|---|

| TCGA RNA-seq | 5-15% | Low expression, genomic deletions | MNAR & MAR | SAVE, DrImpute (after filtering biological zeros) |

| LC-MS Proteomics | 20-50% | Low-abundance censoring, stochastic selection | MNAR | impSeqRob, BPCA, QRILC |

| GC/LC-MS Metabolomics | 10-30% | Peak misalignment, low signal | MAR & MNAR | RF, k-NN (after rigorous peak re-alignment) |

Visualizations

Diagram 1: TCGA Zero-Value Diagnostic Workflow

Diagram 2: LC-MS MNAR Imputation Logic

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Missing Data Analysis

| Item | Function | Application Note |

|---|---|---|

| SAVE R Package | Imputation using a stratified averaging of gene correlations. | Optimal for RNA-seq data where missingness is largely MAR. |

| impSeqRob R Package | Probabilistic, iterative imputation robust to outliers and MNAR. | Primary choice for censored LC-MS proteomics data. |

| MetaboAnalyst 5.0 | Web platform with multiple imputation modules (RF, k-NN, QRILC). | Quick, GUI-based solution for metabolomics data preprocessing. |

| MICE R Package | Multiple Imputation by Chained Equations for flexible multivariate imputation. | Useful for clinical covariate imputation in integrated datasets. |

| MOFA2 R/Python | Multi-Omics Factor Analysis for integration with missing views. | Directly models missing data in multi-omics integration projects. |

| XCMS Online | Cloud-based peak picking and alignment for MS data. | Reduces missing values from technical misalignment at the raw data stage. |

From Deletion to Imputation: A Practical Guide to Multi-Omics Missing Value Strategies

Troubleshooting Guides & FAQs

Q1: Why is complete-case analysis (listwise deletion) often discouraged in multi-omics research? A: Complete-case analysis discards any sample (row) with a missing value in any variable. In multi-omics studies, where measurements from genomics, transcriptomics, proteomics, and metabolomics are integrated, missingness is pervasive due to technical limitations (e.g., low-abundance proteins, detection limits). Removing all incomplete cases typically leads to a catastrophic loss of sample size and statistical power. More critically, it can introduce severe bias if the missing data is not Missing Completely At Random (MCAR), distorting downstream biological conclusions.

Q2: Are there any valid scenarios for using filtering or complete-case analysis? A: Yes, but they are limited and require careful justification. Valid scenarios include:

- Preliminary Quality Control (QC): Filtering out entire omics features (columns) where missingness exceeds a high threshold (e.g., >50% of samples) before imputation, as these provide little information.

- MCAR with Negligible Loss: When you have strong evidence (via tests like Little's MCAR test) that data is MCAR and the proportion of removed samples is very small (e.g., <5%).

- Specific, Complete Sub-Analyses: When a specific hypothesis test requires a perfectly aligned subset of omics layers for a small, targeted set of features, and the sample loss is acceptable for that sub-question.

Q3: I filtered my data, and my p-values became "better" (more significant). Is this a good sign? A: No, this is a major red flag. It often indicates that the filtering process has systematically biased your dataset. For example, removing samples with missing values in a low-abundance metabolic pathway may inadvertently remove all samples from a certain patient subgroup or treatment response category. This artificially inflates effect sizes and leads to false-positive discoveries. You must investigate the characteristics of the removed samples versus the retained ones.

Q4: How do I decide between filtering a feature (column) versus imputing it? A: The decision is based on the extent and likely mechanism of missingness. Use the following workflow:

Q5: What are the key diagnostic steps before considering any omission of data? A: Follow this diagnostic protocol:

- Quantify Missingness: Generate a table of missingness percentages per sample and per feature across all omics layers.

- Visualize Pattern: Use heatmaps or missingness diagrams to visualize if patterns exist (e.g., all missing in a specific group).

- Test for MCAR: Apply statistical tests (e.g., Little's test) to assess if the null hypothesis of MCAR can be rejected.

- Correlate with Phenotypes: Test if the presence/absence of missing data is correlated with key clinical or experimental covariates (e.g., tumor stage, batch, survival outcome). If a correlation exists, the data is not MCAR, and omission is dangerous.

Key Diagnostic Data Table

Table 1: Sample Missingness Analysis in a Hypothetical Multi-Omics Cohort (N=100 Samples)

| Omics Layer | Features Measured | Samples with 0% Missing | Samples with >20% Missing | Mean Missingness per Sample |

|---|---|---|---|---|

| Genomics | 500,000 SNPs | 100 (100%) | 0 (0%) | 0.0% |

| Transcriptomics | 20,000 Genes | 85 (85%) | 5 (5%) | 2.1% |

| Proteomics | 5,000 Proteins | 15 (15%) | 30 (30%) | 18.7% |

| Metabolomics | 500 Metabolites | 10 (10%) | 45 (45%) | 25.4% |

Conclusion from Table 1: Proteomics and metabolomics have substantial missing data. Because only 10 samples are complete in metabolomics, complete-case analysis across all layers would cut the usable sample size from 100 to at most 10, which supports advanced handling over omission.
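The sample-size arithmetic behind this conclusion can be sketched as follows, assuming one boolean missingness mask per omics layer with matched sample order. The function name and the toy masks are hypothetical. The number of samples complete across all layers can never exceed the smallest per-layer count.

```python
import numpy as np

def complete_case_count(layer_masks):
    """layer_masks: {layer: boolean array (samples x features), True = missing}.
    Returns (per-layer counts of complete samples, count complete in all layers)."""
    per_layer = {name: ~mask.any(axis=1) for name, mask in layer_masks.items()}
    all_layers = np.logical_and.reduce(list(per_layer.values()))
    return {k: int(v.sum()) for k, v in per_layer.items()}, int(all_layers.sum())

# Toy cohort of 5 matched samples.
proteomics = np.zeros((5, 3), dtype=bool)
proteomics[0, 1] = True
proteomics[1, 2] = True
metabolomics = np.zeros((5, 2), dtype=bool)
metabolomics[[1, 2, 3], 0] = True

per_layer, overall = complete_case_count(
    {"proteomics": proteomics, "metabolomics": metabolomics}
)
print(per_layer, overall)
```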

Experimental Protocol: Validating the Impact of Complete-Case Analysis

Objective: To empirically demonstrate the bias and power loss induced by complete-case analysis in a multi-omics dataset with simulated missing not at random (MNAR).

Materials: A publicly available multi-omics dataset (e.g., from TCGA or CPTAC) with clinical phenotyping.

Methodology:

- Data Preparation: Start with a clean, complete subset of the data (this may require prior mild imputation on a source dataset).

- Simulate MNAR: Introduce missing values into one omics layer (e.g., proteomics) under an MNAR mechanism. For example, set values below the 1st quartile of abundance to be missing with a 90% probability.

- Create Analysis Pipelines:

- Pipeline A (Complete-Case): Remove any sample with a missing value in the integrated dataset.

- Pipeline B (Advanced Imputation): Apply an appropriate MNAR-aware imputation method (e.g., `missForest` with a missingness indicator, or `QRILC` for left-censored data).

- Perform Differential Analysis: Using both processed datasets, perform a differential abundance/expression test for features between two clinical groups (e.g., tumor vs. normal).

- Compare Outcomes: Calculate and compare:

- Number of samples retained for analysis.

- The log2 fold change and p-value distribution of the identified significant features.

- The overlap in significant features identified by both pipelines.

- Bias assessment: Check if the removed samples in Pipeline A are disproportionately from one clinical group.
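The MNAR simulation step in the methodology above can be sketched as follows. This is a minimal plain-Python illustration under stated assumptions: the helper name `simulate_mnar` and the toy Gaussian "log2 intensities" are hypothetical, and a real run would apply the same masking to each feature of the chosen omics layer.

```python
import random
import statistics

def simulate_mnar(values, p_missing=0.9, seed=0):
    """Mask values below the first quartile with probability p_missing."""
    rng = random.Random(seed)
    threshold = statistics.quantiles(values, n=4)[0]  # first quartile
    masked = [
        None if (v < threshold and rng.random() < p_missing) else v
        for v in values
    ]
    return masked, threshold

rng = random.Random(42)
abundances = [rng.gauss(20.0, 2.0) for _ in range(400)]  # toy log2 intensities
masked, threshold = simulate_mnar(abundances)

n_missing = sum(v is None for v in masked)
observed = [v for v in masked if v is not None]
# Low values were removed preferentially, so the observed mean is shifted upward.
bias = statistics.mean(observed) - statistics.mean(abundances)
```

The positive `bias` is the point of the exercise: any analysis restricted to observed values inherits this upward shift.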

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Handling Missing Data in Multi-Omics

| Item (Software/Package) | Primary Function | Application Context |

|---|---|---|

| R: naniar & visdat | Visualization and exploration of missing data patterns. | Critical first step in diagnostics. Generates summaries and plots to understand the structure of missingness. |

| R: mice (Multivariate Imputation by Chained Equations) | Flexible imputation of missing data for mixed data types (continuous, categorical). | Workhorse for Multiple Imputation (MI) under the Missing at Random (MAR) assumption. |

| R: missForest | Non-parametric imputation using random forests. | Handles complex interactions and non-linearities; can be robust to some MNAR deviations. |

| R: imputeLCMD | Imputation methods for left-censored data (e.g., MNAR from detection limits). | Specifically designed for proteomics/metabolomics data where low signals are missing. |

| Perseus (standalone) / Python: scikit-learn | Contains various simple imputation functions (mean, median, KNN). | Useful for baseline imputation or within a larger preprocessing pipeline. |

| Python: Autoimpute | Advanced statistical imputation with analysis pooling for multiple imputation. | Streamlines the MI workflow, from imputation to result aggregation. |

| Little's MCAR Test | Statistical test for the null hypothesis that data is Missing Completely at Random. | Formal testing to justify (or more often, reject) the use of complete-case analysis. |

Troubleshooting Guides & FAQs

Q1: My k-NN imputation in R (VIM/DMwR2 packages) is extremely slow on my large multi-omics dataset. What can I do?

A: This is common with high-dimensional omics data. First, ensure you are using the Gower distance metric (often default) as it handles mixed data types common in multi-omics. For acceleration:

- Subset Features: Use variance filtering or correlation-based feature selection prior to imputation.

- Approximate Methods: Use the `impute` package's `impute.knn` function, which speeds up the neighbor search on large matrices by first splitting the features into smaller blocks.

- Parallelization: scikit-learn's `KNNImputer` does not take an `n_jobs` argument, so fit it once and call `transform` on chunks of samples in parallel workers (e.g., with `joblib`). In R, consider the `parallel` package with `parLapply`.

- Sample Wisely: If computationally feasible, transpose your matrix so samples are neighbors, not features.

Q2: After SVD-based imputation (e.g., softImpute in R), my resulting dataset has introduced negative values for protein abundance or gene expression, which is biologically impossible. How do I fix this?

A: SVD is a linear algebra method unaware of biological constraints.

- Post-hoc Truncation: Simply set all negative values to 0 or a small positive value (e.g., the detection limit). This is common but can bias downstream analysis.

- Use a Constrained Algorithm: Opt for a non-negative matrix factorization (NMF)-based imputation, which prevents negative values by construction.

- Log-Transform: Perform imputation on log-transformed data, then back-transform. The log scale reduces the impact of extreme values, and because the back-transformation is an exponential, the imputed values are strictly positive.

Q3: MissForest in R (missForest package) fails with the error "Error in randomForest.default(...) : NAs in foreign function call" on my metabolomics data with block-wise missingness.

A: MissForest requires an initial imputation to start its iterative process. The default mean/mode imputation can fail if entire rows/columns are missing.

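- Solution: Drop features or samples that are entirely missing before calling `missForest()`, since the mean/mode start cannot fill them. For the remaining block-wise gaps, a simple pre-imputation such as `knn` (with a small k) or `mice` with a simple model (e.g., `pmm`) for a few iterations can produce a gap-free starter matrix. Check your installed version before relying on an `ximp.init` argument to seed MissForest with this matrix, as that argument does not appear in the documented `missForest()` signature.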

Q4: When using MICE for imputing multi-omics data (mixed continuous and categorical), how do I choose the right method (e.g., pmm, norm, rf) for each variable in Python's statsmodels or R's mice?

A: The choice is critical and should be guided by data type and distribution.

- Continuous (Normal): Use `norm` or `norm.nob`.

- Continuous (Non-Normal): Use `pmm` (Predictive Mean Matching); it is robust and preserves the original data distribution.

- Binary Categorical: Use `logreg` (logistic regression).

- Multi-class Categorical: Use `polyreg` (polytomous regression).

- High-dimensional/Complex Interactions: Use `rf` (random forest), but it is computationally intensive.

Q5: How do I evaluate the performance of these imputation methods on my real multi-omics dataset where the true values are unknown?

A: Use an "Amputation and Validation" protocol.

- Amputate: Artificially introduce missingness (e.g., MCAR at 10% rate) into a complete subset of your data.

- Impute: Apply each method (k-NN, SVD, MissForest, MICE) to this dataset with artificial gaps.

- Validate: Compare the imputed values against the known, original values.

- Metric: Use Normalized Root Mean Square Error (NRMSE) for continuous data and Proportion of Falsely Classified (PFC) for categorical data. Lower values indicate better performance.
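The two metrics named above can be written down in a few lines. The sketch below is illustrative only: NRMSE is normalized here by the standard deviation of the held-out true values, which is one common convention and an assumption of this example, and the toy vectors are made up.

```python
import math
import statistics

def nrmse(true_vals, imputed_vals):
    """RMSE over the masked cells divided by the SD of the true values."""
    mse = statistics.fmean([(t - i) ** 2 for t, i in zip(true_vals, imputed_vals)])
    return math.sqrt(mse) / statistics.pstdev(true_vals)

def pfc(true_labels, imputed_labels):
    """Proportion of falsely classified categorical entries."""
    wrong = sum(t != i for t, i in zip(true_labels, imputed_labels))
    return wrong / len(true_labels)

# Held-out true values and their imputations for the artificially masked cells.
true_expr = [2.0, 4.0, 6.0, 8.0]
imputed_expr = [2.0, 4.0, 6.0, 6.0]
true_mut = ["wt", "mut", "wt", "wt"]
imputed_mut = ["wt", "wt", "wt", "wt"]

expr_error = nrmse(true_expr, imputed_expr)
mut_error = pfc(true_mut, imputed_mut)
```

Both functions should be fed only the cells that were artificially masked, so that originally observed values do not dilute the error.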

Table 1: Comparative Performance of Imputation Methods (Simulated Multi-Omics Data)

| Method | Package (R/Python) | Average NRMSE (Gene Expression) | Average PFC (Mutation Status) | Relative Speed | Handles Mixed Data |

|---|---|---|---|---|---|

| k-NN Imputation | VIM (R), KNNImputer (Py) | 0.154 | 0.021 | Fast | Yes (with Gower dist.) |

| SVD (softImpute) | softImpute (R) | 0.121 | N/A | Very Fast | No (Continuous only) |

| MissForest | missForest (R) | 0.098 | 0.012 | Slow | Yes |

| MICE (norm/pmm) | mice (R), statsmodels (Py) | 0.113 | 0.015 | Medium | Yes |

Experimental Protocol: Amputation-Validation for Imputation Benchmarking

Objective: To empirically evaluate the accuracy of four imputation methods within a multi-omics context.

- Data Preparation: Identify a complete-case subset (no missing values) from your integrated omics matrix (e.g., 100 samples x 500 features from genomics, proteomics).

- Amputation: Simulate a Missing Completely At Random (MCAR) mechanism. Remove 10%, 20%, and 30% of values across the dataset using R's `prodNA` function (`missForest` package) or custom Python code.

- Imputation Execution:

- Apply k-NN (k=10), SVD (`softImpute`, rank=5), MissForest (ntree=100), and MICE (m=5, maxit=10, method='pmm').

- Use default parameters unless specified by prior knowledge.

- Validation: For each artificially missing cell, calculate the difference between the imputed value and the true (held-out) value.

- Analysis: Compute aggregate NRMSE per method and missingness rate. Perform a repeated measures ANOVA to determine if differences in method performance are statistically significant.
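The "custom Python code" route for amputation and scoring might look like the sketch below. It is a minimal plain-Python illustration with hypothetical helper names, and it uses column-mean imputation purely as a stand-in baseline; the four methods in the protocol would be plugged in where `impute_column_mean` is called.

```python
import math
import random
import statistics

def ampute_mcar(matrix, rate, rng):
    """Return a copy with about `rate` of cells set to None, plus the masked positions."""
    cells = [(r, c) for r in range(len(matrix)) for c in range(len(matrix[0]))]
    masked = rng.sample(cells, int(round(rate * len(cells))))
    amputed = [row[:] for row in matrix]
    for r, c in masked:
        amputed[r][c] = None
    return amputed, masked

def impute_column_mean(matrix):
    """Baseline imputer: replace None with the mean of the observed column values."""
    means = []
    for c in range(len(matrix[0])):
        means.append(statistics.fmean([row[c] for row in matrix if row[c] is not None]))
    return [[means[c] if v is None else v for c, v in enumerate(row)] for row in matrix]

def rmse_on_masked(truth, imputed, masked):
    """RMSE restricted to the artificially masked cells."""
    return math.sqrt(statistics.fmean([(truth[r][c] - imputed[r][c]) ** 2 for r, c in masked]))

rng = random.Random(1)
complete = [[rng.gauss(0.0, 1.0) for _ in range(20)] for _ in range(50)]  # samples x features

results = {}
for rate in (0.1, 0.2, 0.3):
    amputed, masked = ampute_mcar(complete, rate, rng)
    results[rate] = rmse_on_masked(complete, impute_column_mean(amputed), masked)
```

Repeating the loop over several random seeds gives the per-method, per-rate replicates that the ANOVA step needs.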

Visualizations

Imputation Method Benchmarking Workflow

Iterative Model-Based Imputation Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Multi-Omics Imputation

| Item | Function in Research | Example (R/Python) |

|---|---|---|

| Data Wrangling Suite | Handles heterogeneous data formats from sequencers, mass specs, etc. | tidyverse (R), pandas (Py) |

| Parallel Processing Library | Accelerates computationally intensive methods like MissForest/MICE. | parallel, future (R), multiprocessing (Py) |

| High-Performance Computing (HPC) Scheduler | Manages batch jobs for large-scale imputation experiments. | Slurm, Sun Grid Engine |

| Containerization Platform | Ensures reproducibility of the software environment. | Docker, Singularity |

| Imputation-Specific Packages | Core implementations of the algorithms. | mice, missForest, softImpute (R); scikit-learn, fancyimpute (Py) |

| Visualization Library | Diagnoses missingness patterns and imputation results. | ggplot2 (R), VIM (R), matplotlib/seaborn (Py) |

Troubleshooting Guides & FAQs

Q1: After applying a k-NN imputation to my single-cell RNA-seq data, I observe artificially homogenized clusters. What went wrong and how can I fix it?

A: This is a common issue when using global k-NN on sparse, high-dimensional scRNA-seq data. The algorithm imputes values based on nearest neighbors in expression space, which can blur biologically distinct populations if k is too large or the distance metric is inappropriate.

- Solution: Switch to a method that leverages the data structure, such as scImpute or SAVER. These methods first identify "dropout" genes likely to be technical zeros vs. truly unexpressed genes using a probabilistic model, then perform imputation only on the predicted technical zeros. This preserves the sparsity structure of true biological zeros.

- Protocol: For scImpute:

- Install the R package from GitHub, for example with `devtools::install_github("Vivianstats/scImpute")`.

- Prepare your count matrix (genes x cells).

- Run: `scimpute(count_path, infile="csv", outfile="csv", out_dir, type="count", Kcluster=#)`, where `out_dir` is the directory for the output files and `#` is your estimated number of cell types. The function automatically estimates dropout probability and imputes.

Q2: When performing cross-modal imputation (e.g., predicting missing methylation from gene expression), my model performs well on training data but fails on a new batch. How do I address batch effects? A: Cross-modal models are highly susceptible to batch effects. The failure indicates the model learned batch-specific technical correlations rather than robust biological relationships.

- Solution: Integrate batch correction before or within the imputation pipeline.

- Pre-process each omics layer individually using a method like Harmony or ComBat to remove batch effects.

- Or, use a cross-modal framework that explicitly accounts for batch, such as a Multi-omics Autoencoder with a batch-adversarial loss. Here, the encoder learns a shared representation that predicts the missing modality while a discriminator tries to guess the batch from this representation; the encoder is trained to "fool" the discriminator.

- Protocol (Simplified Pre-processing with ComBat):

- Using the `sva` package in R, for each modality matrix (e.g., `mat_exp`), run: `corrected_exp <- ComBat(mat_exp, batch=batch_vector)`.

- Use the `corrected_exp` matrix to train your cross-modal predictor (e.g., a ridge regression model) for methylation.

Q3: My multi-omics dataset has missingness in entire samples for some modalities (block-wise missingness). Which imputation approach should I avoid, and what is recommended? A: Avoid using vanilla matrix factorization (e.g., standard SVD) on a concatenated omics matrix. It cannot handle entire missing blocks and will bias the latent factors.

- Solution: Use a joint matrix factorization or multi-view learning method designed for block-missing data, such as MOGONET or Joint Non-negative Matrix Factorization (jNMF). These methods factorize each omics matrix into a common sample-factor matrix and a modality-specific feature-factor matrix, allowing information transfer across modalities even when some are entirely missing for a subset of samples.

- Protocol (High-level jNMF workflow):

- For `m` omics matrices `X1...Xm`, the model seeks matrices `W` (common sample factors) and `H1...Hm` (modality-specific loadings) such that `Xi ≈ W * Hi` for all i.

- The objective function `∑_i || Xi - W * Hi ||^2` is minimized iteratively, updating only the non-missing parts of each `Xi`.

- Once trained, the product `W * Hi` gives the imputed values for missing entries (or entire blocks) in modality `i`.
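Written out, "updating only the non-missing parts" amounts to placing a binary mask inside the norm. The formulation below is a sketch of that idea rather than the exact objective of any particular jNMF implementation; the mask $M_i$ (1 where $X_i$ is observed, 0 where it is missing) is notation introduced here for illustration.

$$
\min_{W \ge 0,\; H_1,\dots,H_m \ge 0} \;\; \sum_{i=1}^{m} \left\| M_i \odot \left( X_i - W H_i \right) \right\|_F^2 ,
\qquad
\hat{X}_i = W H_i
$$

Because $W$ is shared across modalities, a sample with an entirely missing block in modality $i$ (a row of zeros in $M_i$) still receives a row of $W$ estimated from its other modalities, and $\hat{X}_i$ fills the block.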

Q4: I used a deep learning model (Autoencoder) for imputation, but the results are non-reproducible and show high variance. What are the key stabilization steps? A: Deep learning for imputation requires careful regularization due to the risk of overfitting to noise and the stochastic nature of training.

- Solution:

- Regularization: Enforce dropout layers during training, L2 weight decay, and a low-dimensional bottleneck in the autoencoder.

- Loss Function: Use a masked loss function (e.g., Mean Squared Error) calculated only on observed values. The model learns to reconstruct the input, but error is penalized only where data truly exists, forcing it to generalize to missing regions.

- Ensemble: Train multiple autoencoders with different random seeds and average their imputation outputs. This reduces variance.

- Protocol (Masked MSE Loss in PyTorch):
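A framework-agnostic sketch of the masked loss is shown below in plain Python so that the arithmetic is explicit. The function name `masked_mse` and the convention that `NaN` marks a missing target are assumptions of this example. In PyTorch the same computation is typically expressed with a boolean mask tensor, for instance by averaging the squared error over the masked-in entries only.

```python
import math

def masked_mse(reconstruction, target):
    """Mean squared error over observed entries only (NaN marks a missing target)."""
    total, n_observed = 0.0, 0
    for rec_row, tgt_row in zip(reconstruction, target):
        for rec, tgt in zip(rec_row, tgt_row):
            if math.isnan(tgt):
                continue  # missing cells contribute nothing to the loss
            total += (rec - tgt) ** 2
            n_observed += 1
    if n_observed == 0:
        raise ValueError("no observed entries to score")
    return total / n_observed

nan = float("nan")
target = [[1.0, nan, 3.0], [nan, 2.0, 4.0]]
reconstruction = [[1.5, 9.9, 3.0], [7.7, 2.0, 3.0]]
loss = masked_mse(reconstruction, target)
```

Note that the reconstructions at the two missing positions (9.9 and 7.7) do not affect the loss, which is exactly the behavior the masked formulation is meant to give.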

Summarized Quantitative Data

Table 1: Performance Comparison of Selected Imputation Methods on a Benchmark scRNA-seq Dataset (PBMC)

| Method | Type | RMSE (↓) | Cell-type Cluster Silhouette Score (↑) | Runtime (min, ↓) |

|---|---|---|---|---|

| Mean Imputation | Naive | 1.45 | 0.12 | <0.5 |

| k-NN Imputation (k=10) | Global | 0.98 | 0.41 | 5.2 |

| scImpute | Omics-Specific | 0.61 | 0.65 | 12.7 |

| SAVER | Omics-Specific | 0.58 | 0.68 | 22.3 |

| DeepImpute | DL-Based | 0.63 | 0.62 | 8.5 |

Table 2: Cross-Modal Imputation Accuracy for Predicting 20% Missing DNA Methylation from Gene Expression (TCGA-BRCA)

| Model | Pearson Correlation (↑) | Mean Absolute Error (↓) | Handles Block Missing? |

|---|---|---|---|

| Ridge Regression | 0.72 | 0.085 | No |

| Random Forest | 0.69 | 0.091 | No |

| Multi-omics AE (w/ Adversarial Batch) | 0.78 | 0.072 | Yes |

| MOGONET | 0.75 | 0.079 | Yes |

Experimental Protocols

Protocol 1: Benchmarking Imputation Methods for scRNA-seq Data Objective: Evaluate the impact of imputation on downstream clustering.

- Data Acquisition: Download a public scRNA-seq dataset with known cell types (e.g., from 10x Genomics).

- Data Preprocessing: Filter cells and genes. Normalize using library size and log-transform (e.g., log1p(CPM)).

- Induce Missingness: For a ground truth comparison, artificially mask 20% of non-zero entries uniformly at random to simulate dropouts.

- Imputation: Apply each candidate method (Mean, k-NN, scImpute, etc.) to the masked matrix.

- Evaluation:

- RMSE: Calculate between imputed values and the held-out true values.

- Clustering: Perform PCA and Leiden clustering on the imputed data. Compute silhouette score against known cell labels.

- Analysis: Compare metrics across methods.

Protocol 2: Cross-Modal Imputation with a Multi-omics Autoencoder Objective: Impute missing proteomics data using matched transcriptomics.

- Data Preparation: Obtain a paired transcriptomics (T) and proteomics (P) matrix (samples x features). Standardize each modality (z-score).

- Architecture: Build a dual-input autoencoder.

- Encoder: Two separate dense branches for T and P, concatenated, then fed into a joint bottleneck layer.

- Decoder: From the bottleneck, two separate dense branches reconstruct T and P.

- Training: Use only samples with both T and P present. Input (T, P) and train to reconstruct (T, P) using a combined reconstruction loss (MSE).

- Imputation: For a sample with missing P but present T, input (T, zeros). The decoder's proteomics output is the imputation.

- Validation: Hold out a subset of P values in training samples to validate imputation accuracy (Pearson correlation).

Visualization Diagrams

Diagram 1: scImpute Workflow for scRNA-seq

Diagram 2: Multi-omics Autoencoder for Cross-Modal Imputation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Multi-omics Imputation Research

| Item | Function in Imputation Research | Example/Note |

|---|---|---|

| Benchmark Datasets | Provide ground truth for inducing missingness and evaluating methods. | TCGA (cancer), GTEx (tissue), 10x PBMC (single-cell). |

| High-Performance Computing (HPC) / Cloud Credits | Enables training of complex models (deep learning, matrix factorization) on large omics matrices. | AWS, Google Cloud, or local GPU cluster access. |

| Omics Integration Software | Frameworks that often include or facilitate imputation modules. | MOFA+ (R/Python), Scanpy (scRNA-seq, Python). |

| Containerization Tools | Ensures reproducibility of computational environments and pipelines. | Docker, Singularity. |

| Missingness Pattern Simulator | Custom scripts to artificially generate Missing Completely at Random (MCAR), At Random (MAR), or Block-wise missing data for controlled experiments. | Essential for rigorous benchmarking. |

Troubleshooting Guides & FAQs

FAQ 1: Why does my imputed dataset show unexpected batch effects after running MICE?

Answer: This is often due to the algorithm incorporating batch-specific patterns into the imputation model. Always stratify your MICE imputation by known batch variables or include batch as a fixed effect in the predictive model. Pre-process to remove major batch effects before imputation if the missingness is not believed to be batch-related.

FAQ 2: I get convergence errors when using MissForest. What should I check?

Answer: MissForest stops when the difference between successive imputed matrices increases for the first time, or when maxiter is reached. First, increase maxiter (e.g., to 50). If errors persist, check for features with an extremely high proportion (>80%) of missing values; consider removing them prior to imputation. Feature scaling is unlikely to help, because random forests are insensitive to monotone rescaling of the predictors.

FAQ 3: After k-NN imputation, my downstream clustering results are overly "tight" with no outliers. Is this expected?

Answer: Yes, k-NN imputation can reduce legitimate biological variance by borrowing information from neighbors, artificially inflating sample similarity. This is a known limitation of donor-based methods. Consider using a method like SVD-based imputation or Bayesian PCA, which better preserves global data structure, for clustering-focused analyses.

FAQ 4: How do I choose an imputation method for data missing not at random (MNAR) in proteomics?

Answer: For MNAR (e.g., missing due to abundance below detection), simple mean/median imputation is strongly discouraged as it biases results. Use methods designed for left-censored data: for protein-level data, consider impSeqRob in R or use a left-shifted Gaussian distribution (as in PYMUVI). A common practice is to use a QRILC (Quantile Regression Imputation of Left-Censored data) approach.
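The left-shifted Gaussian idea can be sketched in a few lines of plain Python. This is an illustration, not the implementation of any named tool: the function name is hypothetical, and the down-shift of 1.8 standard deviations and width of 0.3 standard deviations are values often quoted as defaults in proteomics software and are treated here as assumptions to tune.

```python
import random
import statistics

def impute_left_shifted_gaussian(values, shift=1.8, width=0.3, seed=0):
    """Fill None with draws from N(mean - shift*sd, (width*sd)^2) of the observed values."""
    rng = random.Random(seed)
    observed = [v for v in values if v is not None]
    mu = statistics.fmean(observed)
    sd = statistics.stdev(observed)
    return [rng.gauss(mu - shift * sd, width * sd) if v is None else v for v in values]

# Toy log2 intensities for one sample; None marks proteins below detection.
values = [25.1, 24.3, None, 26.0, 23.8, None, 24.9, 25.5]
filled = impute_left_shifted_gaussian(values)

observed_mean = statistics.fmean([v for v in values if v is not None])
imputed_only = [f for f, v in zip(filled, values) if v is None]
```

Imputed values land in the low tail of the observed distribution, which matches the assumption that the missing proteins were below the detection limit.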

Table 1: Performance Comparison of Common Imputation Methods on a Simulated Multi-Omics Dataset (n=100 samples)

| Method | RMSE (Metabolomics) | RMSE (Transcriptomics) | Computation Time (s) | % Variance Preserved |

|---|---|---|---|---|

| Mean Imputation | 1.45 | 0.89 | <1 | 67% |

| k-NN (k=10) | 0.92 | 0.61 | 12 | 82% |

| MICE (5 iterations) | 0.88 | 0.58 | 85 | 88% |

| MissForest (100 trees) | 0.71 | 0.52 | 210 | 91% |

| SVD (rank=5) | 0.95 | 0.55 | 8 | 85% |

Table 2: Impact of Missing Value Rate on Imputation Accuracy

| Initial Missing Rate | Best Method (Metabolomics) | RMSE Increase vs. 5% Baseline |

|---|---|---|

| 5% (Baseline) | MissForest | 0.00 |

| 10% | MissForest | +0.08 |

| 20% | MICE | +0.21 |

| 30% | SVD | +0.45 |

Experimental Protocols

Protocol 1: Benchmarking Imputation Methods for Proteomics Data

- Data Simulation: Start with a complete proteomics matrix (e.g., from a public repository like PRIDE). Introduce missing values under two mechanisms: MCAR (randomly remove 15% of values) and MNAR (remove the lowest 15% of abundances in each row).

- Imputation Execution: Apply each candidate method (Mean, k-NN, MICE, QRILC) to the datasets with introduced missingness. Use default parameters as a starting point.

- Validation: Calculate the Root Mean Square Error (RMSE) between the imputed matrix and the original complete matrix for the artificially missing values only.

- Downstream Validation: Perform a differential expression analysis pipeline on both the original complete data and the imputed data. Compare the lists of significant proteins (e.g., using Jaccard index).

Protocol 2: Integrated Multi-Omics Imputation Using MOFA2

- Data Preparation: Format your transcriptomics, methylation, and proteomics datasets into a list of matrices, aligned by common samples. Log-transform and scale each view appropriately.

- Model Training: Use the `create_mofa()` function to build an object. Set the convergence threshold (`convergence_mode`). Train the model, which inherently learns a latent representation that accounts for missing values.

- Imputation: Extract the imputed data from the trained model using the `impute()` function. This generates a complete data list for each view, based on the shared factor model.

- Evaluation: Use cross-validation (holding out additional random values) to assess the imputation accuracy per data view.

Visualizations

Title: Decision Workflow for Multi-Omics Imputation Method Selection

Title: Experimental Design for Benchmarking Imputation Performance

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Multi-Omics Imputation Pipeline

| Item | Function | Example (R/Python) |

|---|---|---|

| Missingness Pattern Visualizer | Diagnose MCAR vs. MNAR patterns before method selection. | naniar::gg_miss_upset(), missingno matrix plot |

| Scalable k-NN Imputer | Efficient nearest-neighbor imputation for large matrices. | impute::impute.knn(), sklearn.impute.KNNImputer |

| Flexible MICE Package | Gold-standard for multiple imputation with customizable models. | mice package in R |

| Random Forest Imputer | Non-parametric imputation for complex interactions. | missForest package in R |

| MNAR-Specific Imputer | Handles left-censored data common in proteomics/ metabolomics. | imputeLCMD::impute.QRILC() |

| Multi-View Integration Tool | Jointly imputes multiple omics layers using shared factors. | MOFA2 package |

| Benchmarking Framework | Systematically compares methods on your specific data. | benchmark_imputation() custom script |

Solving Imputation Pitfalls: Optimization Strategies for Robust Multi-Omics Analysis

Technical Support Center

Troubleshooting Guide & FAQs

Q1: My imputation model performs excellently on training data but fails to generalize to unseen biological replicates. What is happening and how do I fix it? A: This is a classic sign of overfitting. The model has learned noise or specific patterns in your training multi-omics set that do not represent the broader biological population.

- Diagnostic Steps: Compare training and validation loss curves. A diverging gap indicates overfitting.

- Solutions:

- Increase Regularization: Apply stronger L1/L2 penalties or increase dropout rates in neural network-based imputers (e.g., GAIN).

- Simplify the Model: Reduce the number of neighbors in KNN, decrease the depth of a random forest, or lower the latent dimensions in a matrix factorization model.

- Expand/Amplify Training Data: Use data augmentation techniques specific to omics (e.g., adding small Gaussian noise, subsampling) or incorporate publicly available datasets from similar experiments.

- Implement Early Stopping: Halt training when validation loss plateaus or begins to increase.

Q2: After tuning, my imputation model is stable but consistently produces high error rates across all datasets. What does this indicate? A: This suggests underfitting. The model is too simplistic to capture the complex, non-linear relationships within and between your genomics, transcriptomics, and proteomics layers.

- Diagnostic Steps: Both training and validation errors are high and closely aligned.

- Solutions:

- Reduce Regularization: Lower weight decay parameters or remove dropout layers.

- Use a More Complex Model: Switch from simple mean/mode imputation to a model capable of capturing interactions (e.g., MICE with random forest chained equations, a deep autoencoder).

- Add Informative Features: Incorporate prior biological knowledge as features (e.g., pathway membership, protein-protein interaction networks) to guide the imputation.

- Increase Training Iterations: Allow the optimization algorithm more epochs to converge, ensuring the learning rate is not too low.

Q3: How do I determine the optimal number of iterations for an iterative imputation method like MICE or EM? A: Running for too few iterations causes underfitting; too many can lead to overfitting and increased computational cost.

- Protocol:

- Artificially introduce missingness (e.g., 10-20% MCAR) into a complete subset of your data.

- Run the iterative algorithm and record the imputed values for the introduced missing entries at each iteration.

- Calculate the deviation (e.g., RMSE) between the imputed and true known values at each iteration.

- Plot iteration number against error. The optimal point is where the error curve flattens (convergence). Typically, 10-20 iterations are sufficient for MICE, but this must be empirically validated.

Q4: When using a K-Nearest Neighbors (KNN) imputer, how do I select 'K' to avoid local overfitting? A: A very low 'K' is sensitive to noise (overfitting local structure), while a very high 'K' smooths out meaningful biological variation (underfitting).

- Methodology:

- Perform a grid search over a range of `k` values (e.g., 5, 10, 15, 20, 25, 30).

- For each `k`, use cross-validation on data with artificially introduced missingness.

- Calculate the aggregate imputation error (e.g., NRMSE for continuous, proportion of falsely classified for categorical).

- Plot `k` against error. Choose the `k` at the elbow of the curve, balancing bias and variance.
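The grid search can be sketched with a toy KNN imputer in plain Python. Everything here is illustrative: the imputer is a simplified stand-in for a library implementation, the data are simulated as two sample groups, the grid is sized for 30 toy samples, and a single hold-out mask replaces full cross-validation to keep the example short.

```python
import math
import random

def knn_impute(matrix, k):
    """Fill None cells with the mean of that feature over the k nearest samples.

    Distance is the mean squared difference over features observed in both samples.
    """
    def distance(a, b):
        shared = [(x - y) ** 2 for x, y in zip(a, b) if x is not None and y is not None]
        return sum(shared) / len(shared) if shared else math.inf

    imputed = [row[:] for row in matrix]
    for i, row in enumerate(matrix):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Candidate donors must have feature j observed.
            donors = sorted(
                (distance(row, other), other[j])
                for n, other in enumerate(matrix)
                if n != i and other[j] is not None
            )[:k]
            imputed[i][j] = sum(v for _, v in donors) / len(donors)
    return imputed

def masked_rmse(truth, imputed, masked):
    return math.sqrt(sum((truth[r][c] - imputed[r][c]) ** 2 for r, c in masked) / len(masked))

rng = random.Random(7)
# Toy data: two sample groups with different feature means (30 samples x 8 features).
truth = [[rng.gauss(0.0 if s < 15 else 5.0, 1.0) for _ in range(8)] for s in range(30)]
cells = [(r, c) for r in range(30) for c in range(8)]
masked = rng.sample(cells, 36)  # hold out 15% of cells
amputed = [row[:] for row in truth]
for r, c in masked:
    amputed[r][c] = None

errors = {k: masked_rmse(truth, knn_impute(amputed, k), masked) for k in (1, 3, 5, 10, 20, 29)}
best_k = min(errors, key=errors.get)
```

With `k=29` every other sample becomes a donor, so the two groups are averaged together and the error rises sharply; that is the oversmoothing end of the curve described above.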

Table 1: Impact of Hyperparameters on Common Imputation Models in Multi-Omics

| Model | Key Hyperparameter | Risk if Too High | Risk if Too Low | Typical Search Range (Multi-Omics) | Optimal Tuning Method |

|---|---|---|---|---|---|

| MICE (Random Forest) | `max_iter` | Overfitting, high compute time | Underfitting, non-convergence | 5 - 30 | Convergence diagnostic plots |

| KNN Imputer | `n_neighbors` (k) | Underfitting (oversmoothing) | Overfitting (noise capture) | 5 - 30 (scales with sample size) | Cross-validation on NRMSE |

| MissForest | `max_depth` of trees | Overfitting | Underfitting | 10 - 50 | Out-of-bag error estimate |

| Autoencoder (DNN) | `latent_dim` | Overfitting (memorization) | Underfitting (poor representation) | 32 - 256 | Validation loss monitoring |

| Matrix Factorization | `rank` (latent features) | Overfitting to spurious correlations | Underfitting, poor recovery | 10 - 100 | Bayesian optimization |

Table 2: Example Convergence Metrics for Iterative Imputation (Synthetic Proteomics Dataset)

| Iteration | Training Loss (MSE) | Validation Loss (MSE) | Delta (Val - Train) | Status |

|---|---|---|---|---|

| 1 | 0.452 | 0.481 | 0.029 | Underfitting |

| 5 | 0.198 | 0.211 | 0.013 | Learning |

| 10 | 0.101 | 0.108 | 0.007 | Optimal (Converged) |

| 15 | 0.092 | 0.115 | 0.023 | Early Overfitting |

| 20 | 0.085 | 0.132 | 0.047 | Overfitting |
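The reading of the table above can be automated with two small rules: pick the checkpoint with the lowest validation loss, and flag the first checkpoint where validation loss rises while training loss keeps falling. The sketch below applies both to the table's values; the function names are hypothetical.

```python
# (iteration, training MSE, validation MSE), copied from the table above.
history = [
    (1, 0.452, 0.481),
    (5, 0.198, 0.211),
    (10, 0.101, 0.108),
    (15, 0.092, 0.115),
    (20, 0.085, 0.132),
]

def best_iteration(history):
    """Return the checkpoint with the lowest validation loss."""
    return min(history, key=lambda row: row[2])[0]

def overfitting_onset(history):
    """First checkpoint where validation loss rises while training loss still falls."""
    for prev, cur in zip(history, history[1:]):
        if cur[2] > prev[2] and cur[1] < prev[1]:
            return cur[0]
    return None

stop_at = best_iteration(history)
onset = overfitting_onset(history)
```

On these values the first rule selects iteration 10 and the second flags iteration 15, matching the "Optimal (Converged)" and "Early Overfitting" labels in the table.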

Experimental Protocol: Cross-Validation for Imputation Parameter Tuning

Title: Nested Cross-Validation Protocol for Imputation Model Tuning

Objective: To robustly select hyperparameters for an imputation model without data leakage, ensuring generalizable performance on multi-omics data.

Procedure:

- Outer Loop (Performance Evaluation): Split the complete-case subset of data (where no values are missing) into K folds (e.g., 5).

- Inner Loop (Hyperparameter Tuning): For each outer training set:

a. Artificially impose a missing-at-random (MAR) pattern (e.g., 15%).

b. For each candidate hyperparameter set (e.g., `k=5,10,15` for KNN):

i. Perform L-fold cross-validation (e.g., 3-fold) on this outer training set.

ii. Impute the artificial missing values in each inner fold.

iii. Compare imputed values to the known original values, calculating an error metric (e.g., Normalized Root Mean Square Error - NRMSE).

c. Select the hyperparameter set yielding the lowest average inner-loop error.

- Assessment: Train a model with the selected optimal parameters on the entire outer training set, impute the held-out outer test set, and compute the final generalization error.

- Aggregation: Repeat for all K outer folds and average the generalization errors for a final performance estimate.

Diagram: Workflow for Tuning and Validating Imputation Models

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Imputation Model Development

| Item / Software Package | Function in Imputation Research | Key Application Note |

|---|---|---|

| Scikit-learn | Provides benchmark imputers (KNN, IterativeImputer/MICE) and essential tools for cross-validation, grid search, and metrics calculation. | Use sklearn.impute.IterativeImputer with a RandomForestRegressor as the estimator for non-linear multi-omics relationships. IterativeImputer is experimental and must be enabled first with `from sklearn.experimental import enable_iterative_imputer`. |

| R MICE Package | Gold-standard implementation of Multiple Imputation by Chained Equations (MICE), allowing different models per variable type. | Critical for mixed data types (continuous metabolomics & count-based microbiome). Use mice() with method = "rf" for robust performance. |

| TensorFlow / PyTorch | Frameworks for building custom deep learning imputation models (e.g., GAIN, VAE, Denoising Autoencoders). | Essential for capturing complex, high-dimensional interactions across omics layers. Requires significant tuning to avoid overfitting. |

| MissForest (R) | Non-parametric imputation using a random forest model. Often performs well on heterogeneous omics data. | Handles mixed data types and complex interactions out-of-the-box. Tune mtry and maxiter parameters. |

| Hyperopt / Optuna | Libraries for advanced hyperparameter optimization (Bayesian optimization) beyond grid search. | More efficient than exhaustive search for tuning complex models like deep neural networks over large parameter spaces. |

| SciPy / NumPy | Foundational libraries for handling large matrices, performing statistical tests, and custom algorithm development. | Used for creating synthetic missingness patterns, calculating convergence metrics, and custom loss functions. |

FAQs & Troubleshooting Guides

Q1: At what threshold should I remove a feature (e.g., gene, protein) with excessive missing data from my multi-omics analysis? A: There is no universal threshold; it is experiment-dependent. However, a common starting point in literature is to remove features with >20% missingness. The acceptable threshold varies by omics layer (e.g., proteomics often tolerates higher missingness than transcriptomics) and downstream analysis. For a drug target screening experiment, a stricter threshold (e.g., <10% missing) may be applied to ensure reliability. Always perform a sensitivity analysis by testing multiple thresholds (e.g., 10%, 20%, 30%) and comparing the stability of your key results.
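The sensitivity analysis over thresholds can be sketched as below. This is a minimal plain-Python illustration with hypothetical helper names; it assumes rows are samples, columns are features, and `None` marks a missing value.

```python
def feature_missingness(matrix):
    """Fraction of missing (None) values per feature; rows are samples."""
    n_samples = len(matrix)
    return [sum(row[j] is None for row in matrix) / n_samples for j in range(len(matrix[0]))]

def features_kept(matrix, max_missing):
    """Indices of features whose missing fraction does not exceed max_missing."""
    return [j for j, frac in enumerate(feature_missingness(matrix)) if frac <= max_missing]

# Toy matrix: 10 samples x 4 features with 0%, 10%, 20% and 50% missingness.
matrix = [[1.0, 1.0, 1.0, 1.0] for _ in range(10)]
matrix[0][1] = None
matrix[0][2] = matrix[1][2] = None
for i in range(5):
    matrix[i][3] = None

# Which features survive each candidate threshold?
sensitivity = {t: features_kept(matrix, t) for t in (0.10, 0.20, 0.30)}
```

Rerunning the downstream analysis on each retained feature set, and checking whether the key findings persist, is the stability comparison the answer recommends.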

Q2: I have applied a 20% missingness filter, but my dataset lost over 40% of its proteins. What are my options? A: This is common in mass spectrometry-based proteomics. Instead of discarding the data, consider these steps:

- Investigate the Cause: Determine if missingness is Missing Completely At Random (MCAR) or biologically relevant (Missing Not At Random, MNAR). MNAR, common in proteomics, suggests the protein is absent or below detection in a sample.

- Use MNAR-specific Imputation: Apply methods designed for left-censored (MNAR) data like `MinProb` (from the `imp4p` or `DEP` R packages) or `QRILC`.

- Data Augmentation via Imputation: Use multiple imputation (e.g., `mice` package) to create several complete datasets, analyze each, and pool results.

- Alternative Approach: Binary Conversion: For hypothesis-driven studies, you may convert the protein abundance matrix to a binary "detected/not-detected" matrix and use statistical tests suitable for binary data (e.g., Fisher's exact test).

Q3: My multi-omics integration failed after imputing each layer separately. What went wrong? A: Separate imputation breaks the cross-omics relationships. You need a joint imputation method that models all omics layers simultaneously.

- Troubleshooting Step: Use a multi-omics aware method like MOGONET (for classification tasks with imputation) or Multi-Omics Factor Analysis (MOFA2), which handles missing values intrinsically during its factor decomposition.

- Protocol for MOFA2-based Imputation:

- Input your multi-omics data matrices into the MOFA2 model, with missing entries encoded as NA. Check the orientation your interface expects: the R package takes features in rows and samples in columns.

- Train the model. It will infer latent factors that explain variance across all omics types.

- Use the impute function in MOFA2 to predict the missing values based on the shared latent factors and the observed data structure.

- Validate by artificially introducing missingness into a complete portion of your data and assessing imputation accuracy.
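The validation step at the end of this protocol can be sketched independently of MOFA2. The example below hides a known fraction of observed values, imputes them, and scores the result. The `column_mean_impute` function is only a placeholder for whichever model is under test, and the simulated data, seed, and function names are all assumptions made for illustration. Here rows are samples and columns are features.

```python
import math
import random

def mask_values(matrix, fraction, seed):
    """Hide a random fraction of observed entries; return masked copy and positions."""
    rng = random.Random(seed)
    observed = [(i, j) for i, row in enumerate(matrix)
                for j, v in enumerate(row) if not math.isnan(v)]
    hidden = rng.sample(observed, max(1, round(fraction * len(observed))))
    masked = [row[:] for row in matrix]
    for i, j in hidden:
        masked[i][j] = float("nan")
    return masked, hidden

def column_mean_impute(matrix):
    """Placeholder imputer (feature means); swap in the model under test."""
    means = []
    for j in range(len(matrix[0])):
        col = [row[j] for row in matrix if not math.isnan(row[j])]
        means.append(sum(col) / len(col))
    return [[means[j] if math.isnan(v) else v for j, v in enumerate(row)]
            for row in matrix]

def nrmse(truth, imputed, positions):
    """RMSE over hidden positions, normalised by the SD of the hidden true values."""
    true_vals = [truth[i][j] for i, j in positions]
    mse = sum((imputed[i][j] - truth[i][j]) ** 2 for i, j in positions) / len(positions)
    mean_true = sum(true_vals) / len(true_vals)
    sd = math.sqrt(sum((t - mean_true) ** 2 for t in true_vals) / len(true_vals))
    return math.sqrt(mse) / sd

# Simulated complete block: 20 samples x 5 features (hypothetical data).
data_rng = random.Random(1)
complete = [[data_rng.gauss(10 + j, 1.0) for j in range(5)] for _ in range(20)]

masked, hidden = mask_values(complete, fraction=0.10, seed=2)
imputed = column_mean_impute(masked)
score = nrmse(complete, imputed, hidden)
print(f"hidden entries: {len(hidden)}, NRMSE: {score:.3f}")
```

Repeating the masking with several seeds and fractions gives a more stable picture than a single run.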

Q4: Are there robust experimental protocols to reduce missing values in proteomics sample preparation? A: Yes, wet-lab strategies can significantly reduce missingness.

- Protocol: Enhanced Protein/Peptide Fractionation:

- Objective: Reduce sample complexity to improve MS/MS identification rates.

- Reagents: High-pH Reverse-Phase (RP) columns, Strong Cation Exchange (SCX) resins.

- Steps: After digestion, split the peptide sample. Fractionate using high-pH RP chromatography (e.g., into 8-12 fractions) or SCX. Desalt each fraction separately and analyze via LC-MS/MS. Use spectral matching software (MaxQuant, DIA-NN) to consolidate identifications across fractions, dramatically increasing coverage and reducing missingness.

Table 1: Common Imputation Methods for High Missingness Scenarios

| Method (Package) | Best For Missingness Type | Typical Use Case | Key Consideration |
|---|---|---|---|
| k-Nearest Neighbors (impute R) | MCAR, MAR | Transcriptomics, Metabolomics | Computationally heavy for large p (features). |
| MinProb (imputeLCMD R) | MNAR | Proteomics (abundance data) | Assumes data is log-normally distributed. |
| Random Forest (missForest R) | MCAR, MAR | Multi-omics, Mixed data types | Can capture complex interactions but is slow. |
| Multiple Imputation (mice R) | MCAR, MAR | Any layer, for downstream stats | Provides uncertainty estimates. |
| MOFA2 (Python/R) | MCAR, MAR, MNAR | Multi-omics integration | Directly models shared structure for imputation. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Mitigating Missing Values in Proteomics

| Item | Function | Example Product/Brand |
|---|---|---|
| Phase Transfer Surfactant (PTS) | Enhances protein solubilization and digestion efficiency, increasing peptide yield and MS detection. | ProteaseMAX |
| Tandem Mass Tag (TMT) Reagents | Multiplex samples (e.g., 16-plex) in one MS run, reducing run-to-run variation and missing data from label-free workflows. | Thermo Fisher TMTpro 16plex |
| High-pH RP Fractionation Kit | Reduces sample complexity pre-MS, increasing proteome depth and lowering missingness per run. | Pierce High pH Reversed-Phase Peptide Fractionation Kit |
| Phosphatase/Protease Inhibitor Cocktails | Preserves post-translational modification states and prevents protein degradation during prep, maintaining data completeness. | Halt Protease & Phosphatase Inhibitor Cocktails |
| Internal Standard Spike-ins | Provides a retention time and abundance reference for alignment and imputation in DIA/SWATH-MS. | Biognosys iRT Kit |

Experimental Workflow for Handling High Missingness

Signaling Pathway Impacted by Missing Value Handling

Troubleshooting Guides & FAQs

Q1: After imputing missing values in my proteomics dataset, the integrated transcriptomics-proteomics analysis shows biologically implausible correlations. What went wrong? A: This is a classic sign of imputation-induced bias. Batch-specific missingness patterns were likely treated uniformly. First, diagnose using the Batch-Imputation Consistency Check:

- Calculate the coefficient of variation (CV) for each protein/transcript within each batch pre- and post-imputation.

- Create a summary table:

| Metric | Batch A (Pre-Imputation) | Batch A (Post-Imputation) | Batch B (Pre-Imputation) | Batch B (Post-Imputation) |
|---|---|---|---|---|
| Mean CV across features | 0.21 | 0.15 | 0.29 | 0.16 |
| % Features with CV change >50% | 12% | N/A | 9% | N/A |
| Avg. correlation shift vs. gold-standard | - | +0.41 | - | +0.38 |

If post-imputation CVs converge artificially (e.g., both become ~0.15) and correlations shift uniformly, the imputation method has over-harmonized, removing batch-specific biological signal. Solution: Apply a batch-aware imputation protocol: Use a method like bpca or missForest separately within each batch, then apply ComBat or Harmony for batch effect correction on the imputed data, using the missingness pattern as a covariate.
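The first row of the summary table (mean CV per batch, before and after imputation) can be computed along the following lines. This is a minimal sketch on a single made-up feature; the data, the batch labels, and the helper names `cv` and `mean_cv_by_batch` are hypothetical, and the post-imputation values were filled with the pooled mean purely to show how a batch-blind fill narrows the gap between batches.

```python
import math
from statistics import mean, stdev

def cv(values):
    """Coefficient of variation (sample SD / mean) of the non-missing values."""
    obs = [v for v in values if not math.isnan(v)]
    return stdev(obs) / mean(obs)

def mean_cv_by_batch(features, batches):
    """Mean CV across features, computed separately within each batch.

    features: dict feature -> list of values; batches: batch label per sample.
    """
    result = {}
    for label in sorted(set(batches)):
        idx = [k for k, b in enumerate(batches) if b == label]
        result[label] = mean(cv([vals[k] for k in idx])
                             for vals in features.values())
    return result

nan = float("nan")
batches = ["A", "A", "A", "A", "B", "B", "B", "B"]
pre = {"X": [10.0, 12.0, nan, 8.0, 20.0, nan, 26.0, 14.0]}
# Missing entries filled with the pooled observed mean (15.0), ignoring batch.
post = {"X": [10.0, 12.0, 15.0, 8.0, 20.0, 15.0, 26.0, 14.0]}

cv_pre = mean_cv_by_batch(pre, batches)
cv_post = mean_cv_by_batch(post, batches)
for label in cv_pre:
    print(f"batch {label}: CV pre={cv_pre[label]:.3f}, post={cv_post[label]:.3f}")
```

A shrinking difference between the batch CVs after imputation is the convergence pattern the check is designed to expose.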

Q2: My imputed metabolomics data passes QC, but downstream multi-omics clustering fails to separate known biological groups. How can I troubleshoot? A: The issue likely stems from inconsistency between the distribution of imputed values and the assay's technical noise profile. Perform the Imputation-Verification Workflow:

- Spike-in Validation: Prior to imputation, artificially remove values from a complete metabolomic assay (5-20% MCAR). Impute using your chosen method.

- Quantify Error: For each metabolite, calculate the Root Mean Square Error (RMSE) between the true known values and the imputed values.

- Assess Consistency: Compare the distribution of imputed values to the distribution of truly measured low-abundance metabolites by computing the Kullback-Leibler divergence between them. A divergence >0.5 suggests the imputation model is generating unrealistic values that distort the latent space.
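One way to compute the divergence in the consistency step is to bin both sets of values on a shared grid and compare the resulting histograms. The sketch below assumes that approach; the bin edges, the pseudocount, and the example values are illustrative choices, and the resulting number depends on them, so the 0.5 cutoff should be read as a rule of thumb rather than a calibrated threshold.

```python
import math

def histogram(values, edges):
    """Counts per bin [edges[k], edges[k+1]); values outside the grid are ignored."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for k in range(len(counts)):
            if edges[k] <= v < edges[k + 1]:
                counts[k] += 1
                break
    return counts

def kl_divergence(p_counts, q_counts, pseudocount=0.5):
    """KL(P || Q) in nats; the pseudocount keeps empty bins from giving infinities."""
    p = [c + pseudocount for c in p_counts]
    q = [c + pseudocount for c in q_counts]
    p_total, q_total = sum(p), sum(q)
    return sum((pi / p_total) * math.log((pi / p_total) / (qi / q_total))
               for pi, qi in zip(p, q))

edges = [0.0, 1.0, 2.0, 3.0, 4.0]   # shared bins on the log-intensity scale (assumed)
measured_low = [0.4, 0.8, 1.1, 1.2, 1.4, 1.5, 1.7, 1.9,
                2.0, 2.2, 2.3, 2.5, 2.6, 2.8, 3.1, 3.6]
imputed_realistic = [0.3, 0.6, 0.9, 1.0, 1.3, 1.5, 1.6, 1.8,
                     2.1, 2.2, 2.4, 2.5, 2.7, 2.9, 3.2, 3.5]
imputed_constant = [0.5] * 16       # e.g. every gap filled with one small value

reference = histogram(measured_low, edges)
kl_realistic = kl_divergence(histogram(imputed_realistic, edges), reference)
kl_constant = kl_divergence(histogram(imputed_constant, edges), reference)
print(f"KL realistic={kl_realistic:.3f}, KL constant fill={kl_constant:.3f}")
```

With these toy values the constant fill lands well above 0.5 while the realistic imputation stays close to zero.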

Protocol: Imputation Consistency Assessment

- Input: Matrix with artificial missingness.

- Step 1: Impute using your standard pipeline.

- Step 2: Compute per-feature RMSE.

- Step 3: For each feature, perform a two-sample Kolmogorov-Smirnov test between the distribution of imputed values and the distribution of measured low-abundance values (those close to the assay's limit of detection).

- Step 4: Flag features where RMSE > 1.5 * median RMSE and KS test p-value < 0.01. These features require assay-specific re-imputation or exclusion.
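Steps 2 to 4 of this protocol can be sketched as follows. The KS p-value here uses a standard asymptotic approximation written out by hand so the example has no dependencies; a statistics library would normally be used instead. The feature names and values are hypothetical.

```python
import math
from statistics import median

def ks_two_sample(x, y):
    """Two-sample KS statistic D and an approximate (asymptotic) p-value."""
    n, m = len(x), len(y)
    d = 0.0
    for v in list(x) + list(y):
        cdf_x = sum(1 for a in x if a <= v) / n
        cdf_y = sum(1 for b in y if b <= v) / m
        d = max(d, abs(cdf_x - cdf_y))
    en = math.sqrt(n * m / (n + m))
    lam = (en + 0.12 + 0.11 / en) * d
    if lam < 0.3:                      # series converges poorly here; p is ~1
        return d, 1.0
    p = 2 * sum((-1) ** (k - 1) * math.exp(-2 * k * k * lam * lam)
                for k in range(1, 101))
    return d, min(max(p, 0.0), 1.0)

def flag_features(truth, imputed, reference, rmse_factor=1.5, alpha=0.01):
    """Flag features with RMSE > rmse_factor * median RMSE and KS p-value < alpha.

    truth / imputed: dict feature -> values at the artificially masked positions.
    reference: dict feature -> measured low-abundance values for that feature.
    """
    rmse = {f: math.sqrt(sum((i - t) ** 2 for i, t in zip(imputed[f], truth[f]))
                         / len(truth[f]))
            for f in truth}
    cutoff = rmse_factor * median(rmse.values())
    flagged = [f for f in truth
               if rmse[f] > cutoff and ks_two_sample(imputed[f], reference[f])[1] < alpha]
    return rmse, flagged

base = [1.0 + 0.1 * k for k in range(10)]          # hypothetical true values
truth = {"M1": base, "M2": base, "M3": base}
imputed = {"M1": [v + 0.05 for v in base],
           "M2": [v - 0.05 for v in base],
           "M3": [v + 3.0 for v in base]}          # grossly over-imputed feature
reference = {f: [v + 0.02 for v in base] for f in truth}

rmse, flagged = flag_features(truth, imputed, reference)
print("flagged for re-imputation or exclusion:", flagged)
```

Requiring both conditions, as the protocol does, avoids flagging features that are merely noisy but still distributed like real low-abundance measurements.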

Q3: When integrating imputed data across sequencing batches, my differential expression analysis yields inflated false discovery rates (FDR). How do I correct this? A: Standard DE models assume error randomness, which is violated by systematic imputation error. You must adjust your model. Solution: Implement an Imputation Uncertainty Weighted (IUW) model.

- For each gene i in batch j, estimate the imputation uncertainty score U_ij as (1 - prediction confidence from the imputation algorithm). If using kNN, this can be the variance of the donor values.

- Incorporate this score into the weights of your DE tool (e.g., in limma or DESeq2). In a linear model Y_ij = β0 + β1*Condition + ε_ij, where Var(ε_ij) is proportional to U_ij, the corresponding observation weight is proportional to 1/U_ij.

- This down-weights the contribution of highly uncertain imputed values to the DE statistic.
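The weighting idea can be illustrated with a plain weighted least-squares fit for a single gene. This is not limma or DESeq2, only a sketch of the underlying arithmetic; in limma the equivalent step would be supplying a weights matrix to lmFit. The floor `u_min` applied to measured values (whose uncertainty would otherwise be zero) is an assumption introduced here, as are the example data.

```python
def weighted_two_group_fit(y, condition, uncertainty, u_min=0.05):
    """Weighted least squares for y = b0 + b1 * condition.

    Weights are 1 / max(U, u_min), i.e. Var(eps) is taken as proportional to U,
    with u_min as an assumed floor so measured values get a finite weight.
    """
    w = [1.0 / max(u, u_min) for u in uncertainty]
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, condition)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar)
              for wi, xi, yi in zip(w, condition, y))
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, condition))
    b1 = sxy / sxx
    return y_bar - b1 * x_bar, b1

# One gene, six samples (hypothetical log-expression); the last value was imputed.
y = [5.0, 5.2, 4.8, 7.0, 7.2, 9.0]
condition = [0, 0, 0, 1, 1, 1]
uncertainty = [0.0, 0.0, 0.0, 0.0, 0.0, 0.95]   # U = 1 - prediction confidence

b0_w, b1_w = weighted_two_group_fit(y, condition, uncertainty)
b0_u, b1_u = weighted_two_group_fit(y, condition, [0.0] * 6)   # equal weights
print(f"effect estimate: unweighted={b1_u:.3f}, uncertainty-weighted={b1_w:.3f}")
```

The uncertain imputed value of 9.0 pulls the unweighted estimate upward; with the weights in place its influence on the condition effect is much smaller.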

Protocol: IUW for limma

Key Experimental Protocols Cited

Protocol 1: Cross-Batch Imputation Validation

Objective: To evaluate the robustness of an imputation method across technical batches.

- Data Partitioning: Start with a complete dataset (e.g., RNA-seq counts). Split it into two batches, simulating batch effect via a random mean shift (δ ~ N(0, 0.5*sd)).

- Induce Missingness: In each batch, randomly remove values under Missing Completely at Random (MCAR, 10%) and Missing at Random (MAR, 15%) dependent on expression level.

- Imputation: Apply the chosen imputation algorithm (e.g., SAVER, missForest) in three strategies: (A) on each batch separately, (B) on pooled data, (C) on pooled data with batch as a covariate.

- Evaluation: Calculate normalized RMSE (NRMSE) per gene per batch. Successful imputation will have low NRMSE and a low inter-batch NRMSE difference (<0.1).
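A compact simulation of this protocol for a single gene might look like the sketch below. Several simplifications are assumptions: mean imputation stands in for the real algorithm, the expression-dependent removal is implemented as extra dropout among below-median values, and strategy (C) is omitted because it needs a model-based imputer that can take batch as a covariate.

```python
import math
import random
from statistics import mean, pstdev

rng = random.Random(42)   # fixed seed so the simulation is repeatable

def induce_missing(values, mcar=0.10, mar=0.15):
    """Copy with MCAR removal plus extra removal among below-median values."""
    cut = sorted(values)[len(values) // 2]
    out = []
    for v in values:
        drop = rng.random() < mcar or (v < cut and rng.random() < 2 * mar)
        out.append(float("nan") if drop else v)
    return out

def observed_mean(values):
    return mean(v for v in values if not math.isnan(v))

def impute_with(values, fill):
    return [fill if math.isnan(v) else v for v in values]

def nrmse(truth, imputed, masked):
    """RMSE at the removed positions, normalised by the SD of the true values."""
    idx = [k for k, v in enumerate(masked) if math.isnan(v)]
    if not idx:
        return 0.0
    return math.sqrt(mean((imputed[k] - truth[k]) ** 2 for k in idx)) / pstdev(truth)

# Step 1: one gene, two batches, with a random mean shift as the batch effect.
true_1 = [rng.gauss(8.0, 1.0) for _ in range(200)]
delta = rng.gauss(0.0, 0.5 * pstdev(true_1))
true_2 = [rng.gauss(8.0, 1.0) + delta for _ in range(200)]

# Step 2: induce missingness in each batch.
miss_1, miss_2 = induce_missing(true_1), induce_missing(true_2)

# Steps 3-4: impute per batch (A) and on pooled data (B), then score each batch.
pooled_fill = observed_mean(miss_1 + miss_2)
results = {}
for name, truth, masked in (("batch1", true_1, miss_1), ("batch2", true_2, miss_2)):
    separate = impute_with(masked, observed_mean(masked))
    pooled = impute_with(masked, pooled_fill)
    results[name] = (nrmse(truth, separate, masked), nrmse(truth, pooled, masked))

for name, (err_a, err_b) in results.items():
    print(f"{name}: NRMSE separate={err_a:.3f}, pooled={err_b:.3f}")
```

The inter-batch NRMSE difference for a given strategy is then simply the absolute difference between the two batch scores.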

Protocol 2: Multi-Omics Consistency Pipeline Post-Imputation

Objective: To ensure imputed data from different omics layers cohere biologically.

- Anchor Selection: Identify a set of well-measured, biologically correlated anchor features across assays (e.g., a transcription factor and its known protein product).

- Correlation Preservation Test: Calculate the Spearman correlation (ρpre) between anchors in the original data with missing values. Calculate correlation (ρpost) after separate imputation of each assay.

- Thresholding: Flag anchor pairs where |ρpost - ρpre| > 0.3. If >20% of anchors are flagged, re-impute using a multi-omics aware method (e.g., MOGSA, multi-omics factor analysis) that models the joint space.
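The correlation preservation test and the thresholding step can be sketched as below. The anchor names and values are hypothetical, the pre-imputation correlation is computed on samples where both assays are observed, and Spearman's rho is implemented by hand only to keep the example dependency-free.

```python
import math

def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda k: values[k])
    r = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            r[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return r

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / math.sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))

def spearman(x, y):
    """Spearman rho using only samples where both values are observed."""
    pairs = [(a, b) for a, b in zip(x, y) if not (math.isnan(a) or math.isnan(b))]
    xs, ys = zip(*pairs)
    return pearson(ranks(list(xs)), ranks(list(ys)))

def flag_anchor_pairs(pre, post, max_shift=0.3):
    """pre/post: dict anchor name -> (x, y) value lists before/after imputation."""
    return [name for name in pre
            if abs(spearman(*post[name]) - spearman(*pre[name])) > max_shift]

nan = float("nan")
rna = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]              # transcript, fully observed
pre = {"TF1": (rna, [1.1, 2.0, nan, 4.2, nan, 6.1]),
       "TF2": (rna, [1.0, nan, 3.0, nan, 5.0, nan])}
post = {"TF1": (rna, [1.1, 2.0, 3.1, 4.2, 5.0, 6.1]),   # plausible imputation
        "TF2": (rna, [1.0, 0.5, 3.0, 0.5, 5.0, 0.5])}   # constant low-value fill

flagged = flag_anchor_pairs(pre, post)
fraction = len(flagged) / len(pre)
print(f"flagged anchors: {flagged} ({fraction:.0%})")
if fraction > 0.20:
    print("re-impute with a multi-omics aware method")
```

In practice the anchor set should be large enough that the 20% rule is not decided by one or two pairs.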

Visualizations

Title: Batch-Consistent Imputation & Integration Workflow

Title: Diagnostic Logic for Post-Imputation Inconsistency

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Ensuring Post-Imputation Consistency |
|---|---|
| Synthetic Spike-in Controls (e.g., SIRMs for metabolomics) | Provides a known, constant signal across batches to calibrate and assess the impact of imputation on absolute values. |
| Benchmarking Datasets (e.g., complete cell line multi-omics data) | A gold-standard resource with minimal missingness to artificially induce missing patterns and validate imputation accuracy across simulated batches. |
| Batch Effect Correction Software (ComBat-Seq, Harmony) | Critical for removing technical variation after imputation, allowing the use of missingness patterns as covariates to protect biological signal. |
| Multi-omics Integration Suites (MOFA2, mixOmics) | Enable joint dimensionality reduction and factor analysis, which can provide prior information for more consistent, cross-assay imputation. |
| Imputation Uncertainty Estimators (e.g., bootstrap in softImpute) | Generate per-value confidence metrics essential for weighting in downstream analysis and flagging low-confidence imputations. |
| Containerized Pipeline Tools (Nextflow, Snakemake) | Ensure the exact same imputation algorithm, parameters, and random seeds are applied across all batches for reproducible results. |

This technical support center addresses common challenges in ensuring reproducible data preprocessing, particularly for imputation in multi-omics studies.

FAQs & Troubleshooting Guides

Q1: My imputation results are slightly different every time I run my script, even with the same data. What is the cause? A: This is almost certainly due to the use of a stochastic imputation method (e.g., MICE, missForest, or randomly initialized matrix completion) without setting the seed for the random number generator (RNG). Each execution then starts from a different random state, so the imputed values vary from run to run.
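The effect is easy to reproduce with any imputer that draws random numbers. The sketch below uses a simple random-donor ("hot deck") fill as a stand-in for a real stochastic method; the function name and data are hypothetical.

```python
import math
import random

def hot_deck_impute(values, seed=None):
    """Fill each missing entry with a randomly drawn observed value."""
    rng = random.Random(seed)   # seed=None takes fresh entropy on every call
    donors = [v for v in values if not math.isnan(v)]
    return [rng.choice(donors) if math.isnan(v) else v for v in values]

nan = float("nan")
intensities = [4.1, nan, 3.8, 5.0, nan, 4.4, nan, 3.9]

run_1 = hot_deck_impute(intensities, seed=8675309)
run_2 = hot_deck_impute(intensities, seed=8675309)
print("seeded runs identical:", run_1 == run_2)

# Without a seed, repeated runs are free to differ.
unseeded = hot_deck_impute(intensities)
print("unseeded run:", unseeded)
```

Passing an explicit generator object, rather than seeding global state, also keeps the imputation reproducible when other parts of the script consume random numbers.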

Q2: How do I properly document my imputation choices for a journal or for my thesis?

A: Your documentation should be precise enough for another researcher to exactly recreate your imputed data table. Include: 1) The name and version of the software/library used, 2) The specific algorithm (e.g., mice with method='pmm'), 3) All non-default parameters (see Table 1), 4) A clear statement of how the RNG seed was set, and 5) The rationale for choosing the method (e.g., "MissForest was selected for its ability to handle non-linear relationships in the metabolomics data").

Q3: I set a random seed, but my colleague gets different results on a different machine. Why?

A: Differences can arise from: 1) Different versions of the programming language (e.g., Python 3.11 vs 3.12) or key packages (e.g., scikit-learn), which may have updated algorithms, 2) Different operating systems or hardware architectures affecting low-level numerical computations, or 3) The use of parallel/threaded computations where the order of operations is non-deterministic. Always document your exact computational environment.
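Recording the environment can be automated at the start of an analysis script. The following is a minimal sketch for a Python workflow using only the standard library; the function name, the package list, and the decision to print JSON are illustrative assumptions.

```python
import json
import platform
from importlib import metadata

def environment_record(packages):
    """Collect interpreter, OS, and package versions for the methods log."""
    record = {
        "python": platform.python_version(),
        "platform": platform.platform(),
        "machine": platform.machine(),
        "packages": {},
    }
    for name in packages:
        try:
            record["packages"][name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            record["packages"][name] = None   # not installed in this environment
    return record

# Package names below are examples; list whatever your pipeline imports.
env = environment_record(["numpy", "scikit-learn"])
print(json.dumps(env, indent=2))
```

Saving this record alongside the imputed data makes it possible to tell later whether a discrepancy between machines comes from a version difference.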

Q4: What is the risk of seeding the RNG with a common integer like 0 or 123? A: Any fixed seed ensures reproducibility within your study, and a commonly used seed is no less random than any other. The risk is that a single seed, common or not, may happen to interact poorly with the algorithm and give suboptimal or unrepresentative results. For robust reporting, run a sensitivity analysis with multiple seeds and report how much the key results vary.

Key Experimental Protocols for Reproducible Imputation

Protocol: Reproducible Multiple Imputation by Chained Equations (MICE)

- Environment Setup: Record package versions (e.g., R 4.3.2, mice 3.16.0).

- Seed Initialization: At the start of the script, set the seed using set.seed(8675309) (R) or np.random.seed(8675309); random.seed(8675309) (Python).

- Imputation Execution: Run MICE, specifying the number of imputations (m=5), iterations (maxit=10), and method per variable (method=c('pmm', 'norm', ...)).

- Output & Log: Save the completed mids object and the console log containing the seed and all function calls.

Protocol: Sensitivity Analysis for Imputation Method Selection