MSA-Free Protein Structure Prediction: ESMFold vs RoseTTAFold - A Comprehensive Guide for Researchers

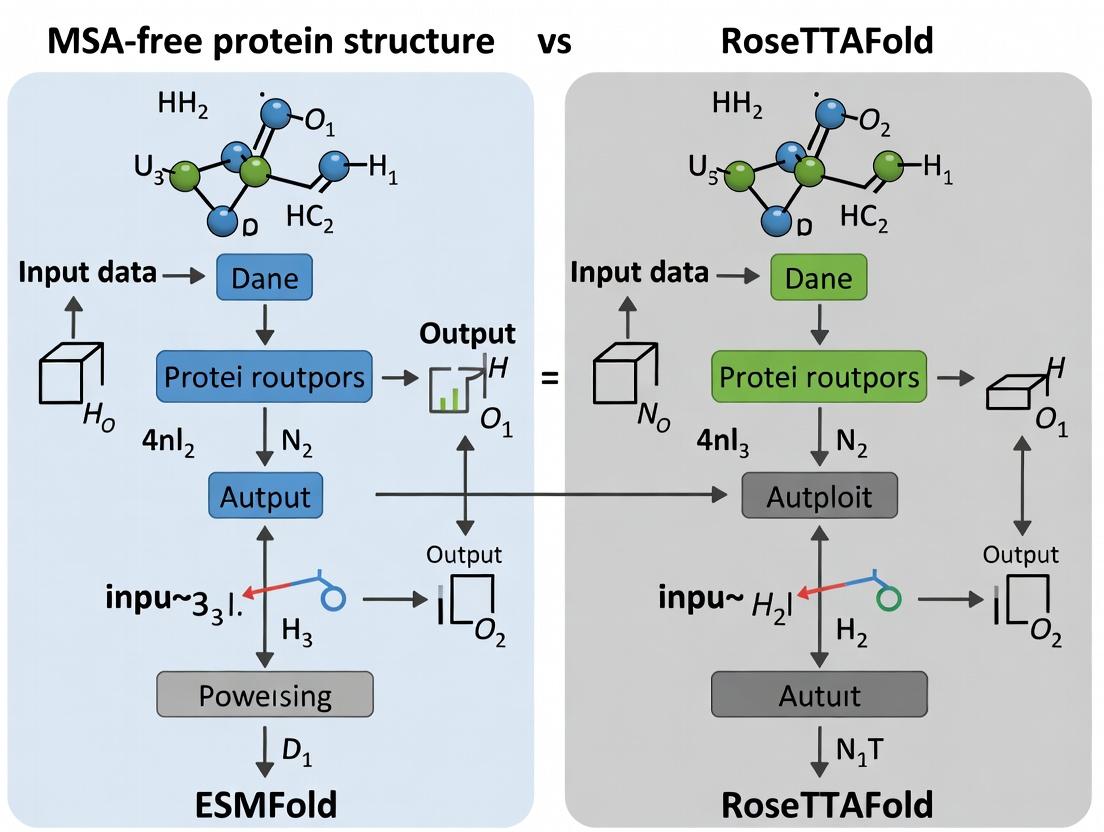

This article provides a detailed comparative analysis of two revolutionary MSA-free protein structure prediction tools, ESMFold and RoseTTAFold.


Abstract

This article provides a detailed comparative analysis of two revolutionary MSA-free protein structure prediction tools, ESMFold and RoseTTAFold. Targeted at researchers, scientists, and drug development professionals, we explore the foundational principles of single-sequence prediction, detail practical methodologies and applications, address common troubleshooting and optimization challenges, and provide a rigorous validation and performance comparison. The article synthesizes current capabilities and limitations to guide tool selection and inform future directions in computational structural biology and therapeutic design.

The MSA-Free Revolution: Understanding ESMFold and RoseTTAFold's Core Technology

Application Notes and Protocols

Thesis Context: Within the broader investigation of MSA-free protein structure prediction, a comparative analysis of the foundational methodologies, performance, and practical applications of ESMFold and RoseTTAFold is essential. These models represent a paradigm shift from traditional MSA-dependent tools like AlphaFold2, enabling rapid structure prediction from single sequences, albeit with variable accuracy depending on evolutionary context.

Table 1: Core Model Architecture & Performance Comparison

| Feature | ESMFold (Meta AI) | RoseTTAFold (Baker Lab) | AlphaFold2 (DeepMind) |
|---|---|---|---|
| Primary Input | Single protein sequence | Single sequence (can integrate MSA/paired distances) | Multiple Sequence Alignment (MSA) & templates |
| Core Architecture | ESM-2 language model trunk + folding head | 3-track network (1D seq, 2D distance, 3D coord) | Evoformer trunk + structure module |
| Speed (approx.) | ~10-60 seconds per sequence | ~1-10 minutes per sequence | ~minutes to hours (MSA generation) |
| Typical TM-score (CASP14)* | 0.65-0.70 (on high-confidence predictions) | 0.70-0.75 | 0.80+ |
| Key Strength | Unprecedented speed; no MSA computation. | Balance of accuracy & speed; can use optional MSA. | Highest accuracy, especially with deep MSAs. |
| Main Limitation | Lower accuracy on orphan/unique sequences. | Less accurate than AF2 on average; slower than ESMFold. | Computationally heavy; dependent on MSA depth. |

*Quantitative benchmarks are context-dependent. Scores are illustrative, based on published evaluations (e.g., CASP14), and vary with the target set and any confidence filter applied.

Protocol 1: Rapid Structure Screening with ESMFold

Objective: To generate protein structure predictions for hundreds to thousands of single sequences for functional hypothesis generation or downstream screening.

Workflow:

- Input Preparation: Compile FASTA file of target amino acid sequences. Ensure sequences are in standard 20-letter code, without non-canonical residues.

- Environment Setup: Install ESMFold via PyTorch Hub or a local pip installation (`pip install "fair-esm[esmfold]"`).

- Prediction Execution: Run the Python script. GPU acceleration is strongly recommended.

- Output Analysis: Extract the predicted atomic coordinates (`.pdb` file) and the per-residue pLDDT confidence metric. Predictions with average pLDDT > 70-75 are generally considered high-confidence.

- Downstream Processing: Use predicted structures for visualization, binding site analysis, or as input for molecular docking protocols.
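The input-preparation step above can be sketched in a few lines of Python. This is a minimal illustration rather than part of ESMFold: the helper names `read_fasta` and `split_by_alphabet` and the two example records are hypothetical, and only the 20-letter alphabet check comes from the protocol.

```python
CANONICAL = set("ACDEFGHIKLMNPQRSTVWY")  # the 20 standard amino acids

def read_fasta(text):
    """Parse FASTA text into a {record_id: sequence} dict."""
    records, header = {}, None
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            header = line[1:].split()[0]  # keep only the ID before the first space
            records[header] = ""
        elif header is not None:
            records[header] += line.upper()
    return records

def split_by_alphabet(records):
    """Separate sequences using only standard residues from those that do not."""
    ok, rejected = {}, {}
    for name, seq in records.items():
        bad = sorted(set(seq) - CANONICAL)
        if seq and not bad:
            ok[name] = seq
        else:
            rejected[name] = bad  # offending letters (empty list for an empty sequence)
    return ok, rejected

# Hypothetical two-record FASTA used only for demonstration.
example = """>prot_a demo
MKTAYIAKQR
QISFVKSHFS
>prot_b
MKXUAB
"""
ok, rejected = split_by_alphabet(read_fasta(example))
print("accepted:", ok)
print("rejected:", rejected)
```

Rejected records can be inspected by hand (for example, to decide whether `X` or `U` residues should be substituted) before the accepted set is passed to the prediction step.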

Diagram: ESMFold High-Throughput Screening Workflow

Protocol 2: Balanced Prediction with RoseTTAFold

Objective: To generate a more accurate structure prediction for a single sequence, optionally incorporating evolutionary information for improved results.

Workflow:

- Input Preparation: Prepare a FASTA file for the target sequence.

- Server or Local Execution:

- Option A (Server): Submit the sequence to the RoseTTAFold Web Server.

- Option B (Local - Recommended for batch): Use the RoseTTAFold standalone package, requiring HH-suite for MSA generation.

- MSA Generation (Optional but Recommended): Generate MSAs using `hhblits` against a sequence database (e.g., UniClust30). This step resembles the AlphaFold2 pipeline computationally but is not strictly required.

- Prediction Execution: Run the `run_pyrosetta_ver.sh` script.

- Output Analysis: The output includes predicted structures (often multiple models), distance and orientation maps, and confidence scores. The model with the highest predicted TM-score (ptm) should be selected for further analysis.

Diagram: RoseTTAFold Three-Track Network Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in MSA-Free Prediction | Example / Provider |
|---|---|---|
| Pre-trained Models | Core inference engines for structure prediction. | ESMFold (Meta AI), RoseTTAFold (Baker Lab) |
| Hardware (GPU) | Accelerates neural network inference, essential for throughput. | NVIDIA A100, V100, or consumer-grade RTX 4090. |
| Sequence Databases | For optional MSA generation with RoseTTAFold or benchmarking. | UniRef30, BFD, MGnify (for AF2/RF training). |
| HH-suite | Software suite for rapid MSA generation from sequence databases. | Used in RoseTTAFold local pipeline. |
| PDB Format Files | Standard output format for 3D coordinate representation. | Direct output from ESMFold/RoseTTAFold. |
| Visualization Software | Critical for analyzing predicted structures, domains, and confidence. | PyMOL, ChimeraX, UCSF Chimera. |
| pLDDT / ptm Scores | Built-in confidence metrics for evaluating prediction reliability. | Integral output of the models. |
| Benchmarking Datasets | For objective performance evaluation (e.g., orphan vs. conserved proteins). | CASP/CAMEO targets, PDB-derived test sets. |

Within the broader thesis on MSA-free protein structure prediction, the competition between ESMFold and RoseTTAFold represents a pivotal shift. Traditional methods like AlphaFold2 rely heavily on multiple sequence alignments (MSAs), which are computationally expensive to build and can become a bottleneck for high-throughput applications. ESM-2, the evolutionary-scale language model developed by Meta AI, forms the foundation of ESMFold, which predicts protein structures end-to-end directly from a single sequence and bypasses MSA generation. ESM-3, a later generative model from EvolutionaryScale, extends the same family toward design rather than serving as ESMFold's backbone. The single-sequence approach offers significant speed advantages, making it suitable for large-scale proteome exploration and de novo protein design. It contrasts with RoseTTAFold, which is also fast but employs a three-track architecture integrating sequence, distance, and coordinate information.

ESM Language Models: Architecture and Training Protocols

Model Architecture & Training Data

ESM models are transformer-based language models trained on the evolutionary "language" of proteins. The training objective is masked language modeling (MLM), where the model learns to predict randomly masked amino acids in a sequence based on their context.

Table 1: Evolution of ESM Model Scales

| Model | Parameters | Layers | Attention Heads | Training Tokens (Sequences) | Context Length | Release Year |
|---|---|---|---|---|---|---|
| ESM-1b | 650M | 33 | 20 | ~86M (Uniref50) | 1,024 | 2019 |
| ESM-2 | 8M to 15B | 6 to 48 | 20 to 40 | ~138M (Uniref50 + other sources) | 1,024 | 2022 |
| ESM-3 | - | - | - | - | - | 2024* |

*Note: ESM-3 is a generative model announced in 2024; its architectural details are not tabulated here and are summarized below.

ESM-3 is a generative language model trained on sequences from over 1 billion proteins. It can jointly reason across sequence, structure, and function. A key protocol is prompt-based protein generation, in which a researcher supplies a partial specification (for example, a sequence fragment, structural constraints, or function keywords such as those describing a PET-degrading enzyme) and the model generates a novel, plausible protein sequence together with a predicted structure. This moves beyond prediction into de novo design.

Key Experimental Protocol: Pre-training an ESM-2 Model

Objective: To train a protein language model that learns rich evolutionary and biophysical representations.

Materials & Reagents:

- Hardware: Cluster of NVIDIA A100 or H100 GPUs (minimum 8 for base models).

- Software: PyTorch, FairSeq, Bio-informatics libraries (HMMER, HH-suite for data prep).

- Data: UniRef50 (or UniRef90) database. Filtered to remove sequences > 1024 amino acids and excessive redundancy.

Methodology:

- Data Preprocessing:

- Download the UniRef50 fasta file.

- Tokenize sequences: Convert each amino acid (and special tokens like start, end, mask) into a unique integer ID.

- Apply the BERT-style masking procedure: Randomly select 15% of tokens. Replace 80% with a [MASK] token, 10% with a random amino acid token, and leave 10% unchanged.

- Model Configuration:

- Initialize a transformer encoder model with the desired number of layers, attention heads, and embedding dimensions (e.g., ESM-2 650M: 33 layers, 1280 embedding dim, 20 heads).

- Use learned positional embeddings.

- Training:

- Use the AdamW optimizer with a learning rate of 1e-4, warmed up over the first 10,000 steps.

- Train with a batch size of 1024 sequences per GPU step, using gradient accumulation if needed.

- The loss function is cross-entropy loss applied only to the masked positions.

- Train until validation loss plateaus (typically billions of tokens seen).

- Validation:

- Monitor perplexity on a held-out validation set of sequences.

- Evaluate downstream performance on benchmark tasks such as remote homology detection (e.g., SCOP-derived fold recognition sets) or variant effect prediction to ensure the learned representations are meaningful.
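The masking step and the masked-position loss described above can be illustrated without any deep learning framework. The sketch below is a toy under stated assumptions, not the ESM training code: `bert_mask` and `masked_cross_entropy` are hypothetical names, each position is selected independently with probability 0.15 (an approximation of "select 15% of tokens"), and the "model" is a uniform distribution standing in for real predictions.

```python
import math
import random

AMINO_ACIDS = list("ACDEFGHIKLMNPQRSTVWY")
MASK = "<mask>"

def bert_mask(tokens, rng, select_prob=0.15):
    """Corrupt a token list with the 80/10/10 rule; return (corrupted, target_positions)."""
    corrupted, targets = list(tokens), []
    for i in range(len(tokens)):
        if rng.random() >= select_prob:
            continue                      # position not selected, left untouched
        targets.append(i)
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK           # 80%: replace with the mask token
        elif roll < 0.9:
            corrupted[i] = rng.choice(AMINO_ACIDS)  # 10%: random residue
        # remaining 10%: keep the original residue
    return corrupted, targets

def masked_cross_entropy(probs, tokens, targets):
    """Mean negative log-likelihood of the true residue, over selected positions only."""
    if not targets:
        return 0.0
    return -sum(math.log(probs[i][tokens[i]]) for i in targets) / len(targets)

rng = random.Random(0)                    # fixed seed so the demo is reproducible
seq = list("MKTAYIAKQRQISFVKSHFSRQLEERLGLIEVQ")
corrupted, targets = bert_mask(seq, rng)

# Stand-in "model": a uniform distribution over residues at every position.
uniform = [{aa: 1.0 / len(AMINO_ACIDS) for aa in AMINO_ACIDS} for _ in seq]
loss = masked_cross_entropy(uniform, seq, targets)
print("selected positions:", targets)
print("loss under a uniform model:", loss)
```

A uniform predictor gives a loss of ln(20) whenever at least one position is selected, which is a useful reference point: a trained model should fall well below it.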

From ESM to ESMFold: Structure Prediction Protocol

ESMFold Architecture Workflow

ESMFold attaches a folding "head" to the representations of a frozen, pre-trained ESM-2 model (the publicly released `esmfold_v1` checkpoint uses the 3B-parameter model). The head predicts 3D coordinates directly.

Diagram Title: ESMFold End-to-End Prediction Workflow

Protocol: Running ESMFold for Structure Prediction

Objective: Predict the 3D structure of a protein from its amino acid sequence using ESMFold.

Materials & Reagents:

- Hardware: NVIDIA GPU (e.g., A100). Memory needs grow with sequence length, so ≥40GB VRAM is a comfortable target for long sequences. CPU mode is possible but slow.

- Software: ESMFold environment (via PyTorch, Biopython). Colab notebooks are available.

- Input: FASTA file containing a single protein sequence (≤ 400 residues for default model).

Methodology:

- Environment Setup:

- `pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118`

- `pip install biopython "fair-esm[esmfold]"`

- Clone the ESM repository: `git clone https://github.com/facebookresearch/esm`

- Load Model and Predict:

- Use the provided Python API. The model is automatically downloaded.

- Output Processing:

- The `output` contains predicted 3D coordinates (atom37 or atom14 format), per-residue pLDDT confidence scores, and a predicted aligned error (PAE) matrix.

- Save the structure as a PDB file.

- Analysis:

- Visualize the PDB file in PyMOL or ChimeraX.

- Analyze pLDDT (confidence > 70 is generally good) and PAE to assess model confidence and domain packing.
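The load, predict, and analyze steps above can be sketched as follows. The call pattern (`esm.pretrained.esmfold_v1()` followed by `infer_pdb`) follows the usage documented for the `fair-esm` package; the helper names `predict_pdb` and `mean_plddt` are hypothetical, and the assumption that the B-factor column carries pLDDT on a 0-100 scale should be checked against the installed version. Heavy imports are kept inside the function so the parsing helper can be used on machines without a GPU stack.

```python
def predict_pdb(sequence):
    """Fold one sequence with ESMFold and return PDB-format text.

    Requires torch and fair-esm[esmfold]; weights are downloaded on first use.
    """
    import torch
    import esm  # provided by the fair-esm package

    model = esm.pretrained.esmfold_v1().eval()
    if torch.cuda.is_available():
        model = model.cuda()
    with torch.no_grad():
        return model.infer_pdb(sequence)

def mean_plddt(pdb_text):
    """Average the B-factor column (PDB columns 61-66) over C-alpha atoms.

    Assumes the predictor writes per-residue pLDDT into the B-factor field.
    """
    values = [
        float(line[60:66])
        for line in pdb_text.splitlines()
        if line.startswith("ATOM") and line[12:16].strip() == "CA"
    ]
    return sum(values) / len(values) if values else float("nan")

# Example usage (not executed here because it needs the model weights):
#   pdb_text = predict_pdb("MKTAYIAKQRQISFVKSHFSRQLEERLGLIEVQ")
#   with open("prediction.pdb", "w") as handle:
#       handle.write(pdb_text)
#   print("mean pLDDT:", mean_plddt(pdb_text))
```

Reading confidence back from the saved PDB file, rather than from in-memory tensors, keeps the analysis step independent of the prediction environment.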

Performance Comparison: ESMFold vs. RoseTTAFold

Table 2: MSA-Free Model Performance & Efficiency Benchmark

| Metric | ESMFold | RoseTTAFold (No MSA) | Notes |
|---|---|---|---|
| CASP14 Average TM-score | ~0.72 (on high-confidence) | ~0.70-0.75 | Both below AlphaFold2's ~0.85, but without MSA. |
| Speed (per protein) | ~14 seconds (GPU) | ~1-2 minutes (GPU) | ESMFold is significantly faster due to single forward pass. |
| MSA Dependency | None (single sequence) | Optional; can run on a single sequence at reduced accuracy. | Core distinction enabling speed. |
| Typical Input Limit | ~400-500 residues | ~400 residues (for no-MSA mode) | Longer sequences require chunking. |
| Key Innovation | Unified LM + Folding Head | Three-track (1D, 2D, 3D) network | RoseTTAFold's strength in protein-protein complexes. |

Application Notes for Drug Development

High-Throughput Target Exploration

- Protocol: Use ESMFold to predict structures for entire proteomes (e.g., pathogen or human gut microbiome) where MSAs are difficult to compute or do not exist (orphan proteins).

- Advantage: Speed allows for scanning thousands of sequences to identify novel, structurally characterized potential drug targets.

De Novo Protein & Therapeutic Design (ESM-3)

- Protocol: Use ESM-3's instruction-based generation.

- Define a functional or binding prompt.

- Generate hundreds of candidate sequences and predicted structures.

- Filter using predicted confidence (pLDDT), structural stability metrics (from MD simulation), and docking scores against a target.

- Synthesize top candidates for in vitro validation.

- Advantage: Rapid iteration in silico for designing enzymes, antibodies, and peptide therapeutics.
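The filtering step in this design loop amounts to a threshold followed by a ranking. The sketch below is purely illustrative: the `Candidate` fields, the 70-point pLDDT cutoff, the "lower docking score is better" convention, and all numbers are assumptions made for the example, not outputs of ESM-3 or any docking program.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    mean_plddt: float     # 0-100 confidence, higher is better
    docking_score: float  # assumed convention: lower (more negative) is better

def shortlist(candidates, min_plddt=70.0, top_n=3):
    """Drop low-confidence designs, then rank the rest by docking score."""
    confident = [c for c in candidates if c.mean_plddt >= min_plddt]
    return sorted(confident, key=lambda c: c.docking_score)[:top_n]

# Hypothetical design pool.
pool = [
    Candidate("design_01", 88.2, -9.1),
    Candidate("design_02", 64.0, -11.5),  # best docking score but low confidence
    Candidate("design_03", 75.5, -7.3),
    Candidate("design_04", 91.0, -10.2),
]
for cand in shortlist(pool, top_n=2):
    print(cand.name, cand.mean_plddt, cand.docking_score)
```

Applying the confidence filter before ranking prevents a poorly folded design from being promoted on the strength of a docking score computed against an unreliable structure.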

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for ESMFold Research

| Item | Function/Description | Example/Provider |
|---|---|---|
| Pre-trained ESM Models | Frozen foundational models for feature extraction or ESMFold. | ESM-2 (8M to 15B) via esm.pretrained; ESM-3 (API access). |
| ESMFold Python Package | Software package containing model definitions, weights, and inference scripts. | pip install "fair-esm[esmfold]" (Meta AI GitHub). |
| GPU Computing Resource | Essential for reasonable inference and training times. | NVIDIA A100/H100; Cloud services (AWS, GCP, Lambda Labs). |
| Structure Visualization | Software to visualize and analyze predicted PDB files. | PyMOL, UCSF ChimeraX, NGLview in Jupyter. |
| Confidence Metrics | Built-in outputs for validating prediction quality. | pLDDT (per-residue), Predicted Aligned Error (PAE) matrix. |
| Benchmark Datasets | Standardized data to evaluate model performance. | CASP14 targets, PDB structures released after model training date. |
| Protein Design Suite | Integrating ESM-3 outputs for de novo design. | Rosetta, AlphaFold2 (for independent folding check), molecular docking software. |

Within the broader thesis comparing MSA-free protein structure prediction methodologies, RoseTTAFold represents a pivotal hybrid approach. While ESMFold operates purely from a single sequence using a protein language model, RoseTTAFold uniquely integrates evolutionary information from multiple sequence alignments (MSAs) with a sophisticated three-track neural network. This architecture allows it to reason simultaneously about sequence patterns, spatial relationships, and 3D atomic coordinates, achieving high accuracy even when MSAs are shallow. This document details the application notes and experimental protocols for leveraging RoseTTAFold's architecture in structural biology and drug discovery pipelines.

Core Architectural Breakdown & Quantitative Performance

RoseTTAFold's three-track architecture processes information in iterative "rosetta" layers, passing information between tracks.

Track 1: Sequence Track

- Input: Amino acid sequence and (optional) MSA-derived features (position-specific scoring matrices, hidden Markov model profiles).

- Processing: 1D convolutional neural networks extract local and global sequence context, including predicted secondary structure and solvent accessibility.

- Thesis Context: Unlike ESMFold's purely language-model-based sequence embedding, this track can incorporate explicit evolutionary coupling data when available.

Track 2: Distance Track

- Input: Output from Sequence Track.

- Processing: Forms a 2D distance map representing pairwise relationships between residues (e.g., β-strand pairing, residue contacts). Infers geometric constraints.

- Function: Acts as a spatial planning layer, defining the fold's topology before coordinate generation.

Track 3: Coordinate Track

- Input: Integrated information from Sequence and Distance tracks.

- Processing: Generates a full-atom 3D structure (backbone and side-chain atoms) via a rotation-invariant representation (e.g., local frames). Iteratively refines coordinates.

- Output: Predicted protein structure in PDB format.

Table 1: Quantitative Performance Comparison (CASP14 & Benchmark Data)

| Metric | RoseTTAFold (with MSA) | RoseTTAFold (shallow/no MSA) | ESMFold (No MSA) | Notes |
|---|---|---|---|---|
| TM-score (avg) | 0.85 - 0.92 | 0.70 - 0.80 | 0.70 - 0.82 | Higher TM-score indicates better topological accuracy. |
| GDT_TS (avg) | 80 - 85 | 65 - 75 | 65 - 78 | Global Distance Test; >50 generally correct fold. |
| Inference Speed | Minutes to hours | Minutes to hours | Seconds to minutes | ESMFold is significantly faster due to single-sequence input. |
| MSA Dependency | High (but robust to shallow MSAs) | Moderate to Low | None | Key differentiator in thesis context. |
| Typical Use Case | High-accuracy prediction when alignments exist | Prediction for orphan sequences or shallow families | Ultra-fast screening, metagenomic proteins | |
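To make the GDT_TS row concrete, the score is the average, over the cutoffs 1, 2, 4, and 8 Å, of the percentage of residues whose Cα atom lies within that cutoff of its native position. The sketch below evaluates this for a single, already superimposed set of per-residue distances; reference tools such as LGA additionally search over superpositions, so this simplified version (with the hypothetical name `gdt_ts`) can underestimate the reported score.

```python
def gdt_ts(distances, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """GDT_TS (0-100) from per-residue C-alpha distances in angstroms.

    `distances` must cover every residue of the native structure and come
    from one fixed superposition (no search over alignments is performed).
    """
    if not distances:
        raise ValueError("at least one residue distance is required")
    n = len(distances)
    fractions = [sum(d <= c for d in distances) / n for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)

# Hypothetical distances for a five-residue toy example.
print(gdt_ts([0.5, 1.5, 3.0, 6.0, 10.0]))
```

Because the loosest cutoff is 8 Å, a model can score above 50 while still having locally misplaced loops, which is why GDT_TS is usually read alongside TM-score and RMSD.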

Experimental Protocols

Protocol 3.1: Standard RoseTTAFold Structure Prediction (Using Server or Local Installation)

Objective: Generate a protein structure prediction using the RoseTTAFold web server or local software.

Materials:

- Target protein amino acid sequence in FASTA format.

- Access to the RoseTTAFold server or a local installation with required dependencies (PyTorch, etc.).

- (Optional) For local runs: HH-suite for MSA generation against UniClust30/BFD databases.

Methodology:

- Input Preparation: Provide the target sequence. For local runs, generate an MSA using `hhblits` or `jackhmmer`.

- Job Submission: Submit the sequence (and optional MSA) to the RoseTTAFold server. Specify parameters (e.g., number of models, recycling iterations).

- Three-Track Processing: The system executes its pipeline:

- Sequence Track: Computes sequence features and optional MSA profiles.

- Distance Track: Predicts inter-residue distances and orientations.

- Coordinate Track: Generates all-atom 3D models via gradient descent on a predicted spatial graph.

- Output Analysis: Download the results, including:

- Predicted model(s) in PDB format.

- Predicted aligned error (PAE) matrix (estimates positional confidence).

- Per-residue confidence estimates (pLDDT).

- Validation: Assess model quality using PAE (confident domains appear as low-error blocks) and pLDDT. Compare the distance map computed from the 3D model against the network's predicted distance map for internal consistency.

Protocol 3.2: Benchmarking RoseTTAFold vs. ESMFold for MSA-free Prediction

Objective: Systematically compare the accuracy of RoseTTAFold (in MSA-free mode) and ESMFold on a set of orphan or fast-evolving protein targets.

Materials:

- Benchmark dataset of protein structures (e.g., from PDB) with minimal homologous sequences in databases.

- RoseTTAFold local installation configured to skip MSA generation (`msa_mode=single_sequence`).

- ESMFold API access or local installation.

- Metrics calculation scripts (TM-score, GDT_TS, RMSD).

Methodology:

- Dataset Curation: Select 50-100 solved structures with low sequence identity to each other and shallow MSAs.

- Blind Prediction: Run each target sequence through both RoseTTAFold (single-sequence) and ESMFold pipelines. Do not use native structure information.

- Structure Alignment & Scoring: Use tools like TM-align to calculate TM-scores and RMSD between each predicted model and its experimentally solved native structure.

- Statistical Analysis: Perform paired t-tests or Wilcoxon signed-rank tests on the accuracy metrics (TM-score, GDT_TS) to determine if differences between methods are statistically significant (p < 0.05).

- Error Analysis: Categorize failure cases by protein properties (length, disorder, topological complexity).
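The statistical-analysis step can be sketched with the standard library alone. The block below computes a paired t statistic and, as a swapped-in stand-in for the Wilcoxon signed-rank test named above, a Monte Carlo sign-flip permutation p-value; in practice `scipy.stats.ttest_rel` and `scipy.stats.wilcoxon` would normally be used instead. The function names and the TM-score lists are hypothetical and serve only as an example.

```python
import random
import statistics

def paired_t_statistic(a, b):
    """t statistic for paired samples: mean difference over its standard error."""
    diffs = [x - y for x, y in zip(a, b)]
    std_err = statistics.stdev(diffs) / len(diffs) ** 0.5
    return statistics.mean(diffs) / std_err

def sign_flip_p_value(a, b, n_perm=10000, seed=0):
    """Two-sided permutation p-value obtained by randomly flipping difference signs."""
    rng = random.Random(seed)
    diffs = [x - y for x, y in zip(a, b)]
    observed = abs(statistics.mean(diffs))
    hits = 0
    for _ in range(n_perm):
        flipped = [d if rng.random() < 0.5 else -d for d in diffs]
        if abs(statistics.mean(flipped)) >= observed - 1e-12:
            hits += 1
    return (hits + 1) / (n_perm + 1)  # add-one correction avoids p = 0

# Hypothetical per-target TM-scores for two methods on the same eight targets.
method_a = [0.82, 0.75, 0.91, 0.68, 0.77, 0.85, 0.73, 0.88]
method_b = [0.78, 0.70, 0.85, 0.66, 0.70, 0.80, 0.70, 0.81]
print("paired t statistic:", paired_t_statistic(method_a, method_b))
print("sign-flip p-value:", sign_flip_p_value(method_a, method_b))
```

Pairing matters here because target difficulty varies far more than the gap between methods; an unpaired test on the same data would have much less power.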

(Diagram 1: Benchmarking MSA-free Prediction Workflow)

Visualization of the Three-Track Architecture

(Diagram 2: RoseTTAFold Three-Track Architecture Flow)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for RoseTTAFold-Based Research

| Item | Function/Description | Source/Access |
|---|---|---|
| RoseTTAFold Server | Web-based interface for easy structure prediction without local compute. | Robetta Server |
| RoseTTAFold GitHub Repository | Local installation for custom pipelines, batch processing, and modified analysis. | GitHub: RosettaCommons/RoseTTAFold |
| UniRef30 or BFD Databases | Large sequence databases for generating deep MSAs via HH-suite, enhancing accuracy. | UniProt, BFD |
| AlphaFold DB / PDB | Source of experimental structures for benchmarking predictions and training. | PDB, AlphaFold DB |
| PyMOL / ChimeraX | Molecular visualization software to analyze predicted models, confidence metrics, and compare structures. | PyMOL, UCSF ChimeraX |
| TM-align / LGA | Tools for structural alignment and scoring to quantify prediction accuracy against a native structure. | Zhang Lab |
| pLDDT & PAE Plots | Integrated output of RoseTTAFold; per-residue confidence (pLDDT) and inter-domain confidence (PAE). | Generated automatically by RoseTTAFold. |
| Custom Python Scripts | For automating batch analysis, parsing outputs, and calculating aggregate statistics for thesis research. | (Needs development by researcher) |

Application Notes: Core Architectural Principles

The pursuit of accurate, MSA-free protein structure prediction has led to divergent architectural philosophies. This document contrasts the language-model-based transformer approach, exemplified by ESMFold, with the hybrid, iteratively refined multi-track design of RoseTTAFold.

Transformer-Based Architecture (ESMFold)

ESMFold is built upon a large protein language model (pLM), ESM-2, which is a stack of transformer encoder layers pre-trained on millions of protein sequences. For structure prediction, the model attaches a "structure head" to the final sequence representation. This head directly predicts 3D coordinates (typically backbone atoms and orientations) in a single forward pass. It operates without explicit multiple sequence alignment (MSA) input, deriving evolutionary insights solely from the internal representations learned during pLM pre-training. The process is a direct sequence-to-structure mapping.

RoseTTAFold's Hybrid Design (RoseTTAFold 1 & 2)

RoseTTAFold employs a more complex, multi-track neural network that simultaneously processes information at three levels: 1D sequence, 2D distance/geometry, and 3D spatial coordinates. The tracks are coupled via attention mechanisms, so each block lets information flow between them (e.g., 1D features inform 2D contact maps, which guide 3D folding). Rather than committing to a structure in one step, RoseTTAFold starts from an initial state and refines its prediction over a series of such updates. This iterative refinement is loosely analogous to a denoising process that moves from an initial guess toward a high-probability structure, although RoseTTAFold itself is not a diffusion model (diffusion is used in the separate RFdiffusion design method).

Table 1: High-Level Architectural Comparison

| Feature | Transformer-Based (ESMFold) | Hybrid Design (RoseTTAFold 2) |
|---|---|---|
| Core Paradigm | Single-pass; encoder-only language model plus structure head. | Multi-track, iterative refinement. |
| Primary Input | Single protein sequence (MSA-free). | Single sequence or optional MSA/templates. |
| Information Tracks | Implicitly combined in latent representations. | Explicit 1D (seq), 2D (dist), 3D (coord) tracks. |
| Key Mechanism | Self-attention across sequence positions. | Cross-attention between different tracks. |
| Output Process | Direct coordinate prediction via a structure head. | Iterative updates converging on final structure. |
| Speed | Very Fast (~seconds per prediction). | Moderate (~minutes, depends on iterations). |
| Typical Accuracy | High for many single-domain proteins. | Very High, often superior on complex targets. |

Experimental Protocols

Protocol: Benchmarking MSA-Free Prediction Accuracy (CASP/AlphaFold DB Benchmark)

Objective: To quantitatively compare the structural prediction accuracy of ESMFold and RoseTTAFold on a standardized set of protein targets without using MSAs.

Materials:

- Hardware: GPU server (e.g., NVIDIA A100).

- Software: ESMFold installation, RoseTTAFold installation, PyMOL/Mol* for visualization.

- Dataset: CAMEO or CASP15 Free Modeling targets, or a curated set from the PDB not used in training.

Procedure:

- Target Preparation: Compile a list of protein sequences with known experimental structures (hold-out set).

- ESMFold Prediction:

a. Run inference: `python esmfold_protein.py --sequence <FASTA> --output_dir ./esmfold_output`.

b. The model outputs a PDB file and predicted confidence metrics (pLDDT).

- RoseTTAFold Prediction (MSA-free mode):

a. Generate input files: `python ./network/preprocess.py <FASTA>`.

b. Run iterative prediction: `python ./network/run_predict.py --input <preprocessed> --output ./rft_output`.

c. The final model (`model_00.pdb`) is selected from the last refinement iteration.

- Accuracy Assessment:

a. Align predicted structure to experimental ground truth using TM-align.

b. Record TM-score (structural similarity) and RMSD (Cα) for each prediction.

c. Calculate average metrics across the benchmark set for each method.

Analysis: Compare the distribution of TM-scores. RoseTTAFold's iterative process often yields higher accuracy on longer, more complex proteins, while ESMFold provides excellent speed-accuracy trade-offs for simpler targets.
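Collecting scores across a benchmark set usually means parsing the text that TM-align prints for each pair. The sketch below extracts the aligned length, RMSD, and both TM-scores with regular expressions. The sample text is an assumption about the tool's output layout (label wording and spacing can differ between versions), and `parse_tmalign` is a hypothetical helper name.

```python
import re

def parse_tmalign(stdout):
    """Extract aligned length, RMSD, and TM-scores from TM-align text output."""
    tm_scores = [float(x) for x in re.findall(r"TM-score=\s*([0-9.]+)", stdout)]
    rmsd = re.search(r"RMSD=\s*([0-9.]+)", stdout)
    aligned = re.search(r"Aligned length=\s*(\d+)", stdout)
    return {
        "aligned_length": int(aligned.group(1)) if aligned else None,
        "rmsd": float(rmsd.group(1)) if rmsd else None,
        "tm_scores": tm_scores,  # one value per normalization reported by the tool
    }

# Assumed excerpt of TM-align output, used only to exercise the parser.
sample = """
Aligned length=  139, RMSD=   2.39, Seq_ID=n_identical/n_aligned= 0.338
TM-score= 0.61436 (if normalized by length of Chain_1)
TM-score= 0.63210 (if normalized by length of Chain_2)
"""
result = parse_tmalign(sample)
print(result)
```

When comparing methods, use the TM-score normalized by the experimental structure's length for every prediction, so that truncated models are not rewarded.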

Protocol: Analyzing the Effect of Iterative Refinement

Objective: To visualize how RoseTTAFold's hybrid architecture improves prediction over successive iterations.

Materials: As above, with additional logging capability in RoseTTAFold.

Procedure:

- Run Prediction with Intermediate Outputs: Modify RoseTTAFold inference script to save the 3D coordinates and 2D distance map after each refinement cycle (e.g., iteration 1, 5, 10, final).

- Visualize Structural Convergence: a. Superimpose all intermediate structures onto the final predicted structure. b. Calculate the RMSD between consecutive iterations to quantify the stepwise change.

- Visualize Distance Map Evolution: a. Plot the predicted 2D distance probability maps at different iterations. b. Observe how the map sharpens and converges toward a clear contact pattern.
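The convergence check in this protocol reduces to an RMSD between consecutive coordinate snapshots. The sketch below assumes the intermediate structures are available as lists of (x, y, z) tuples for the same atoms in the same order and already share a common frame; no Kabsch superposition is performed, so rigid-body drift between iterations would inflate the values. The function names and the three-frame trajectory are hypothetical.

```python
import math

def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equally sized coordinate lists."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    total = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(total / len(coords_a))

def stepwise_rmsd(trajectory):
    """RMSD between each pair of consecutive frames in a refinement trajectory."""
    return [rmsd(trajectory[i], trajectory[i + 1]) for i in range(len(trajectory) - 1)]

# Hypothetical two-atom trajectory over three refinement iterations.
frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(3.0, 4.0, 0.0), (4.0, 4.0, 0.0)],
    [(3.0, 4.0, 1.0), (4.0, 4.0, 1.0)],
]
print(stepwise_rmsd(frames))
```

A sequence of stepwise RMSD values that shrinks toward zero indicates that refinement has converged; a plateau at a nonzero value suggests the network is oscillating between alternatives.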

Visualizations

Diagram Title: Architectural Data Flow: ESMFold vs. RoseTTAFold

Diagram Title: RoseTTAFold Iterative Refinement Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for MSA-Free Structure Prediction Research

| Item | Function & Relevance | Example/Specification |
|---|---|---|
| Pre-trained Model Weights | Core of the prediction system. Required for inference and fine-tuning. | ESMFold (ESM-2 650M/3B params), RoseTTAFold 2 (published weights). |
| Benchmark Datasets | For fair evaluation and comparison of model performance. | CASP Competition targets, CAMEO weekly releases, PDB100/AlphaFold DB hold-out sets. |
| Structure Assessment Tools | To quantify prediction accuracy against ground truth. | TM-align (TM-score), LGA (RMSD), Mol* or PyMOL for visualization. |
| High-Performance Computing | Enables practical model inference and training. | NVIDIA GPU (e.g., A100, V100) with sufficient VRAM (>16GB). |
| Protein Data Bank (PDB) | Source of ground truth experimental structures for training and testing. | Downloaded via RCSB API or local mirror. |
| Sequence Databases | For MSA generation (if used in hybrid mode) and pLM training. | UniRef90, BFD, MGnify. |
| Containerization Software | Ensures reproducible environment for complex software stacks. | Docker or Singularity images provided by model developers. |

The advancement of deep learning has catalyzed a paradigm shift in protein structure prediction, moving from methods reliant on evolutionary information via Multiple Sequence Alignments (MSAs) to end-to-end, MSA-free models. This Application Note details the foundational training data and methodologies for two leading MSA-free architectures, ESMFold and RoseTTAFold, as examined within a broader thesis comparing their predictive capabilities, efficiency, and applicability in biomedical research.

Core Training Data & Architectural Comparison

The learning "language" of proteins is defined by the dataset composition, model architecture, and training objectives.

Table 1: Comparative Training Data and Model Architecture

| Feature | ESMFold (Meta AI) | RoseTTAFold (Baker Lab) |
|---|---|---|
| Primary Training Data | UniRef50 (≈ 30 million sequences) filtered with MMseqs2. | Same core set as ESMFold (UniRef50), supplemented with structural data from PDB. |
| Data Curation | Clustered at 50% identity. Trained on single sequences without explicit evolutionary couplings. | Utilizes both single sequences and (optionally) generated MSAs or homologs during inference. |
| Core Architecture | Single, integrated language model (ESM-2) with a folding head. | A three-track neural network (1D sequence, 2D distance, 3D coordinates) operating simultaneously. |
| Pre-training Objective | Masked Language Modeling (MLM) on sequences. Recover masked amino acids using context. | Joint learning from sequences and (where available) structures. Not purely a language model first. |
| Folding Mechanism | Linear projection from sequence embeddings (from the final layer of ESM-2) to residue pairs, then to 3D coordinates via a Structure Module. | Iterative refinement through the three-track network, integrating information across scales. |
| Key Output | Direct per-residue confidence metric (pLDDT). | Predicted LDDT (pLDDT) and predicted aligned error (PAE) between residues. |

Experimental Protocols for Model Evaluation

Protocol 1: Benchmarking on CAMEO Targets

- Objective: Assess blind prediction accuracy on weekly-released, experimentally solved structures.

- Materials: CAMEO server access, ESMFold/ RoseTTAFold local or API access, computing cluster (GPU recommended).

- Procedure:

- Retrieve FASTA sequences for CAMEO targets (posted weekly) prior to structure publication.

- For each target, run structure prediction using both ESMFold and RoseTTAFold with default parameters.

- ESMFold Command (Python):

- RoseTTAFold Command (Bash):

- Download the experimental PDB file upon release.

- Compute TM-score and RMSD between predicted and experimental structures using tools like TM-align.

- Record pLDDT scores and, for RoseTTAFold, analyze PAE maps.
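For reference, the TM-score used in this protocol sums 1 / (1 + (d_i / d0)^2) over aligned residue pairs and divides by the target length L, with d0 = 1.24 (L - 15)^(1/3) - 1.8. The sketch below evaluates that expression for one fixed superposition; TM-align additionally searches for the superposition that maximizes it. The function names are hypothetical, and the 0.5 Å floor on d0 for short chains is an assumption added here to keep the formula defined when L is small.

```python
def tm_d0(target_length):
    """Length-dependent distance scale d0 (angstroms) of the TM-score."""
    if target_length <= 15:
        return 0.5  # assumed floor; the closed form is undefined for L <= 15
    return max(0.5, 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8)

def tm_score(distances, target_length):
    """TM-score for one superposition.

    `distances` holds C-alpha distances (angstroms) for aligned residue pairs;
    unaligned target residues contribute zero because the sum is divided by
    the full target length.
    """
    d0 = tm_d0(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length

# Hypothetical case: 100-residue target, 80 aligned residues each 2 angstroms off.
print(tm_d0(100))
print(tm_score([2.0] * 80, 100))
```

Normalizing by the target length rather than the aligned length is what makes the score comparable across proteins of different sizes and penalizes partial models.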

Protocol 2: In-silico Mutagenesis and Stability Prediction

- Objective: Evaluate model's grasp of protein language for predicting variant effects.

- Materials: Wild-type protein structure, variant list, ESMFold/RoseTTAFold, ddG calculation software (e.g., FoldX).

- Procedure:

- Generate or obtain the wild-type predicted structure using both models.

- Introduce single-point mutations in silico to the input FASTA sequence.

- Predict the structure for each variant.

- Calculate the predicted ΔΔG of folding by comparing the stability metrics (e.g., from the model's energy score or via external scoring on the output PDB).

- Correlate predicted ΔΔG with experimental stability data.
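The final correlation step is commonly reported as both a Pearson coefficient (linear agreement) and a Spearman coefficient (rank agreement). The sketch below implements both with the standard library; `scipy.stats.pearsonr` and `scipy.stats.spearmanr` are the usual choices in practice. The function names and the ΔΔG values are hypothetical and carry no experimental meaning.

```python
def pearson(x, y):
    """Pearson correlation coefficient of two equally long numeric lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average
        i = j + 1
    return result

def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

# Hypothetical predicted vs. experimental ddG values (kcal/mol) for five variants.
predicted = [0.4, 1.9, -0.3, 2.8, 1.1]
experimental = [0.2, 1.5, 0.1, 3.5, 0.9]
print("Pearson:", pearson(predicted, experimental))
print("Spearman:", spearman(predicted, experimental))
```

Spearman is often the more informative number for stability prediction, since a method that ranks variants correctly is useful for prioritization even when its absolute ΔΔG scale is off.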

Visualizing Methodological Workflows

Diagram 1: ESMFold Training and Inference Pipeline

Diagram 2: RoseTTAFold Three-Track Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for MSA-Free Model Research

| Item | Function/Description | Example/Source |
|---|---|---|
| Protein Sequence Database | Source of primary language data for training and homology searches. | UniProt/UniRef, BFD, MGnify. |
| Structure Database | Ground truth data for training and validation. | Protein Data Bank (PDB), AlphaFold DB. |
| Model Implementations | Codebases for running predictions and fine-tuning. | ESMFold (GitHub: facebookresearch/esm), RoseTTAFold (GitHub: RosettaCommons/RoseTTAFold). |
| Structure Alignment & Scoring Tools | Quantifying prediction accuracy against experimental data. | TM-align, LGA, PyMOL (alignment), MolProbity (validation). |
| High-Performance Compute (HPC) | GPU resources for model inference and training. | NVIDIA A100/V100 GPUs, Cloud platforms (AWS, GCP, Azure). |
| Containerization Software | Ensures reproducible environment for complex dependencies. | Docker, Singularity, Conda environments. |
| Visualization Software | Analysis and presentation of 3D structures and confidence metrics. | ChimeraX, PyMOL, matplotlib (for PAE/contact maps). |

Practical Guide: Running Predictions with ESMFold and RoseTTAFold

Within the broader thesis on MSA-free protein structure prediction comparing ESMFold and RoseTTAFold, accessible and reproducible computational setup is critical. This document provides detailed application notes and protocols for accessing these tools via web servers and APIs, and for establishing a local ColabFold environment, enabling large-scale, customizable predictions for research and drug development.

Web Server Access & API Protocols

ESMFold (via Meta's ESM Metagenomic Atlas)

Access Point: https://esmatlas.com

Primary Function: High-speed, single-sequence structure prediction.

API Protocol (Programmatic Access):
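A minimal sketch of programmatic access using only the Python standard library. The endpoint URL below is an assumption based on the publicly advertised ESM Atlas fold API and should be checked against the current server documentation. The helper names are hypothetical.

```python
import urllib.request

# Assumed endpoint; verify against the ESM Atlas documentation before use.
ESMFOLD_API_URL = "https://api.esmatlas.com/foldSequence/v1/pdb/"


def build_fold_request(sequence, url=ESMFOLD_API_URL):
    """Build a POST request whose body is the bare amino acid sequence."""
    cleaned = "".join(sequence.split()).upper()  # drop whitespace/newlines
    return urllib.request.Request(
        url,
        data=cleaned.encode("ascii"),
        method="POST",
        headers={"Content-Type": "text/plain"},
    )


def fold_sequence(sequence, timeout=120):
    """Send the request and return the response body (expected: PDB text)."""
    with urllib.request.urlopen(build_fold_request(sequence),
                                timeout=timeout) as response:
        return response.read().decode("utf-8")
```

Since the server does not accept batch submissions, a loop over `fold_sequence` with a short pause between calls is the simplest way to process several sequences.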

RoseTTAFold (via Robetta Server)

Access Point: https://robetta.bakerlab.org

Primary Function: Advanced three-track network prediction; can integrate an MSA but also functions in MSA-free mode.

Submission Protocol:

- Navigate to the "RoseTTAFold" submission form on Robetta.

- Input a protein sequence (FASTA format recommended).

- Under "Advanced Options," select "No" for "Generate alignments?" to run in MSA-free mode.

- Submit job. Results are provided via a link sent to the provided email.

Comparative Web Server Features

Table 1: Quantitative Comparison of Web Server Features (limits change over time; confirm current values on each server)

| Feature | ESMFold Web Server | RoseTTAFold (Robetta) |

|---|---|---|

| Max Sequence Length | 400 residues | 1,000 residues |

| Avg. Prediction Time | ~1-10 seconds | ~10-30 minutes |

| Output Formats | PDB, confidence scores (pLDDT) | PDB, confidence scores, predicted aligned error (PAE) |

| MSA-Free Mode | Native (always) | Optional (user-selected) |

| Batch Submission | No (API allows sequential calls) | No (single sequence per submission) |

| Programmatic API | Yes (RESTful) | No (limited to web form) |

Local Installation Protocol: ColabFold

ColabFold provides a local, scalable environment combining fast homology search (MMseqs2) with AlphaFold2 or RoseTTAFold, optimized for batch processing.

System Requirements & Dependencies

Table 2: Essential Research Reagent Solutions (Software/Hardware)

| Item | Function & Specification |

|---|---|

| Linux Environment | Ubuntu 20.04/22.04 or WSL2 on Windows. Essential OS for compatibility. |

| GPU (Recommended) | NVIDIA GPU with >=8GB VRAM (e.g., A100, V100, RTX 3090). Accelerates model inference. |

| Conda/Mamba | Package manager (Mamba preferred for speed). Creates isolated software environments. |

| CUDA & cuDNN | NVIDIA CUDA toolkit (v11.3/11.7) and cuDNN libraries. Required for GPU acceleration. |

| MMseqs2 (Local) | Software suite for ultra-fast sequence search and alignment. Enables optional MSA generation. |

| PyTorch | Deep learning framework (v1.12+). Backend for running models. |

| ColabFold Repository | Main codebase (https://github.com/sokrypton/ColabFold). Contains prediction pipelines. |

| UniRef30 & BFD DB | Large sequence databases (~300GB total). Required for comprehensive MSA generation (optional for MSA-free runs). |

Step-by-Step Installation & Setup Protocol

Protocol: Local ColabFold Installation (Bash Commands)

Protocol for MSA-Free Prediction with ESMFold & RoseTTAFold via ColabFold

ColabFold's localcolabfold installation allows explicit model selection.

Key Parameters:

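- `--model-type`: Specifies the prediction model (`alphafold2`, `esmfold`, `rosettafold`).

- `--msa-mode`: Set to `single_sequence` to disable homology search.

- `--num-recycle`: Number of refinement cycles (typically 3-12).

- `--num-models`: Number of models to predict (1-5).

Accepted `--model-type` values differ between ColabFold releases; confirm with `colabfold_batch --help` that your installation exposes `esmfold` and `rosettafold` before scripting around them.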
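As an illustration of how these parameters combine, the sketch below assembles a `colabfold_batch` command line for an MSA-free run. The helper name and default values are assumptions. `--model-type` is deliberately left out, so the tool's default model is used unless you add it yourself.

```python
import shlex


def colabfold_command(fasta, out_dir, num_recycle=3, num_models=1,
                      single_sequence=True):
    """Return the argument list for one colabfold_batch invocation."""
    cmd = ["colabfold_batch"]
    if single_sequence:
        # Disables the homology search, i.e. MSA-free mode.
        cmd += ["--msa-mode", "single_sequence"]
    cmd += ["--num-recycle", str(num_recycle),
            "--num-models", str(num_models),
            fasta, out_dir]
    return cmd


# Print a shell-safe command string; run it with subprocess.run(cmd) instead
# if you want to launch the job directly from Python.
print(shlex.join(colabfold_command("target.fasta", "results")))
```

Returning a list rather than a string keeps file names with spaces intact when the command is passed to `subprocess.run`.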

Experimental Workflow Diagram

Diagram Title: MSA-Free Prediction Access & Execution Workflow

Data Management & Output Analysis Protocol

Output File Structure

A standard ColabFold prediction run generates:

Protocol for Comparative Analysis (ESMFold vs RoseTTAFold)

Table 3: Key Performance Metrics for Comparative Thesis Research

| Metric | ESMFold Typical Range | RoseTTAFold (MSA-free) Typical Range | Measurement Tool |

|---|---|---|---|

| Prediction Speed | 10-50 residues/sec | 1-5 residues/sec | Internal timer (time command) |

| Mean pLDDT | 60-85 (varies with length) | 65-90 (varies with length) | Extracted from *_scores.json |

| Inference Memory | 4-8 GB GPU | 8-12 GB GPU | nvidia-smi |

| TM-score (vs. PDB) | 0.4-0.9 | 0.5-0.95 | TM-score, US-align |

| PAE Score (nt) | 5-15 Å | 5-10 Å | Analysis of *_pae.png data |
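The pLDDT and PAE rows can be filled from the score files with a short script. This sketch assumes the JSON layout commonly written by ColabFold (`plddt` as a per-residue list, `pae` as a square matrix, `ptm` as a scalar). Adjust the keys if your files differ.

```python
import json


def summarize_scores(scores):
    """Condense one parsed *_scores.json dictionary into summary metrics."""
    plddt = scores["plddt"]
    summary = {
        "mean_plddt": sum(plddt) / len(plddt),
        "frac_plddt_above_70": sum(p > 70 for p in plddt) / len(plddt),
    }
    if "ptm" in scores:
        summary["ptm"] = scores["ptm"]
    if "pae" in scores:
        flat = [value for row in scores["pae"] for value in row]
        summary["mean_pae"] = sum(flat) / len(flat)
    return summary


def summarize_file(path):
    """Load a score file from disk and summarize it."""
    with open(path) as handle:
        return summarize_scores(json.load(handle))
```

Running `summarize_file` over every score file of both tools yields rows that can be compared directly.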

Troubleshooting & Optimization Notes

Common Issues:

- Out-of-Memory (OOM) Error: Reduce sequence length, use a `--model-type` with a lower memory footprint (ESMFold), or decrease `--num-recycle`.

- Slow Performance: Ensure the GPU is being utilized (`nvidia-smi` shows the process). For a CPU-only install, expect a 10-100x slowdown.

- Database Errors for MSA runs: Verify that the UniRef30/BFD database paths are correctly set in the `colabfold_db_path` environment variable.

Optimization for High-Throughput:

- Use the `--cpu` flag for preprocessing if GPU memory is limited.

- For large batches, script multiple `colabfold_batch` jobs using a job scheduler (e.g., SLURM).

- Disable relaxation (`--amber 0`) and use fewer models (`--num-models 1`) for rapid screening.

The emergence of deep learning-based protein structure prediction tools, such as ESMFold and RoseTTAFold, has shifted the paradigm from methods reliant on evolutionary information via Multiple Sequence Alignments (MSAs) to those leveraging single-sequence inputs. This research, part of a broader thesis on MSA-free prediction, underscores that the accuracy and reliability of predictions from ESMFold and RoseTTAFold are critically dependent on the quality and proper formatting of the input protein sequence. Incorrectly formatted or non-standard sequences remain a primary source of error. This document provides detailed application notes and protocols for sequence preparation, ensuring optimal performance in structure prediction workflows for researchers, scientists, and drug development professionals.

Sequence Formatting Standards and Conventions

Accepted Amino Acid Alphabet

Both ESMFold and RoseTTAFold accept the standard 20 canonical amino acids. Special attention must be paid to non-standard residues and ambiguities.

Table 1: Amino Acid Representation and Handling

| Symbol | Amino Acid | ESMFold/RoseTTAFold Handling | Recommended Pre-processing Action |

|---|---|---|---|

| A, C, D,...Y | 20 Canonical L-amino acids | Directly accepted. | None required. |

| U (Sec) | Selenocysteine | Not in training data. May cause errors. | Substitute with Cysteine (C) with a notation. |

| O (Pyl) | Pyrrolysine | Not in training data. May cause errors. | Substitute with Lysine (K) with a notation. |

| X | Any amino acid/unknown | Handled as a "masked" residue. Prediction confidence at position will be very low. | Avoid. Use sequence determination or homology inference. |

| Z | Glutamine or Glutamic Acid | Ambiguity code. Not recommended. | Resolve ambiguity via experimental data or substitute with the more common residue (E). |

| B | Asparagine or Aspartic Acid | Ambiguity code. Not recommended. | Resolve ambiguity via experimental data or substitute with the more common residue (D). |

| J | Leucine or Isoleucine | Ambiguity code. Not recommended. | Resolve ambiguity via experimental data. Arbitrary substitution is not recommended. |

| Lowercase | (e.g., 'a') | Typically interpreted as uppercase. | Convert entire sequence to UPPERCASE. |

| - (Dash) | Gap in alignment | Not a valid input character. | Remove entirely before prediction. |

| Spaces/Line Breaks | Formatting | Will cause failure. | Remove all non-alphabetic characters. |

Sequence Length Considerations

ESMFold: Optimal performance for sequences up to 400 residues. It can process up to ~1000 residues, but memory and time increase quadratically, and accuracy may drop for very long sequences.

RoseTTAFold: Similar constraints, with a recommended limit of 400-500 residues for the standard model.

Both tools may struggle with very short sequences (<50 residues).

Input File Format

Both models accept a raw amino acid sequence string (FASTA format is standard). The FASTA header is optional but recommended for traceability.

Protocol 2.3.1: Preparing a Valid FASTA Input

- Header Line: Begin with a `>` character, followed by a unique identifier (e.g., `>sp|P12345|PROT_HUMAN`).

- Sequence Data: On subsequent lines, provide the amino acid sequence in a continuous string or with line breaks (typically 60-80 characters per line).

- Validation: Ensure the sequence contains only uppercase letters from the standard 20-amino acid alphabet, with no gaps (`-`), numbers, or spaces within the sequence block.

Example of Correct Formatting:
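A minimal sketch of a helper that writes a record in this layout: one header line followed by the sequence wrapped at 60 characters. The identifier and the sequence are arbitrary placeholders chosen for illustration, not a real database entry.

```python
def format_fasta(identifier, sequence, width=60):
    """Return a FASTA record: '>' header plus sequence lines of `width`."""
    if not identifier or any(ch.isspace() for ch in identifier):
        raise ValueError("identifier must be non-empty and free of whitespace")
    lines = [sequence[i:i + width] for i in range(0, len(sequence), width)]
    return ">" + identifier + "\n" + "\n".join(lines) + "\n"


# Placeholder record (hypothetical identifier, made-up sequence).
example = format_fasta(
    "example_protein_1",
    "MKTAYIAKQRQISFVKSHFSRQLEERLGLIEVQAPILSRVGDGTQDNLSGAEKAVQVKVKALPDAQFEVV",
)
print(example, end="")
```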

Best Practices for Sequence Curation and Pre-processing

Protocol: Curating a Sequence for MSA-free Prediction

This protocol is essential for obtaining reliable predictions from ESMFold or RoseTTAFold.

- Source Verification: Obtain your protein sequence from a trusted database (e.g., UniProt, NCBI RefSeq). Record the accession version.

- Sequence Extraction: Download the canonical sequence in FASTA format. Note if the protein has known isoforms; the chosen isoform must be explicitly stated.

- Residue Validation:

  - Scan the sequence for any characters not in `ACDEFGHIKLMNPQRSTVWY`.

  - Use a script or text editor to find and replace all lowercase letters with uppercase.

  - Remove all gaps (`-`), asterisks (`*`), numbers, and spaces.

- Handling Signal Peptides and Propeptides: Determine if the sequence includes cleavable signal peptides or propeptides. For predicting the mature, functional protein structure, these should be removed based on UniProt annotations or cleavage site prediction tools (e.g., SignalP).

- Length Check: If the sequence exceeds 500 residues, consider predicting domains separately using a domain parser (e.g., from InterPro or PFAM) for higher accuracy and lower computational cost.

- Final Formatting: Place the curated sequence into a clean FASTA file as per Protocol 2.3.1.
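The residue-validation step of this protocol can be scripted as follows. This is a sketch under the handling rules of Table 1: `U` and `O` are substituted with a recorded note, formatting characters are dropped, and any other letter is rejected so that ambiguity codes are resolved by hand. The function name and the note format are assumptions.

```python
CANONICAL = set("ACDEFGHIKLMNPQRSTVWY")
# Substitutions recommended in Table 1 (selenocysteine, pyrrolysine).
SUBSTITUTIONS = {"U": "C", "O": "K"}


def curate_sequence(raw):
    """Return (cleaned_sequence, notes) for one raw sequence string."""
    cleaned, notes = [], []
    for char in raw.upper():
        if char in CANONICAL:
            cleaned.append(char)
        elif char in SUBSTITUTIONS:
            position = len(cleaned) + 1  # 1-based index in the cleaned sequence
            notes.append(f"position {position}: {char}->{SUBSTITUTIONS[char]}")
            cleaned.append(SUBSTITUTIONS[char])
        elif char.isalpha():
            # X, B, Z, J and any other letter need a manual decision.
            raise ValueError(
                f"unresolved residue code {char!r} at position {len(cleaned) + 1}")
        # Gaps, asterisks, digits and whitespace are dropped silently.
    return "".join(cleaned), notes
```

Raising on ambiguity codes rather than silently replacing them keeps the curation decision explicit and traceable.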

Protocol: Handling Multi-chain Complexes

While primarily for single chains, these tools can model complexes by specifying chain breaks.

- Individual Chain Preparation: Curate each polypeptide chain separately using Protocol 3.1.

- Defining the Complex: To predict interaction, concatenate chains into a single sequence string.

- Chain Separation Marker: Insert a colon (`:`) between chains. ESMFold and ColabFold-style front ends treat this as a chain break; confirm that your RoseTTAFold interface accepts the same convention. Example for a heterodimer A-B: `[Sequence_A]:[Sequence_B]`

- Input: Provide the concatenated sequence with colons in a FASTA file. The model will predict the structure of the entire complex.
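A short sketch of the concatenation step, together with a helper that recovers each chain's residue range in the combined numbering. That range is useful later when reading inter-chain blocks of a PAE matrix. Both function names are hypothetical.

```python
def join_chains(chains, separator=":"):
    """Concatenate curated chain sequences with the chain-break marker."""
    for chain in chains:
        if not chain or separator in chain:
            raise ValueError("chains must be non-empty and free of the separator")
    return separator.join(chains)


def chain_boundaries(complex_sequence, separator=":"):
    """Return 1-based inclusive (start, end) residue ranges per chain.

    Numbering counts residues only; the separator itself is not a residue.
    """
    boundaries, start = [], 1
    for chain in complex_sequence.split(separator):
        end = start + len(chain) - 1
        boundaries.append((start, end))
        start = end + 1
    return boundaries
```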

Experimental Validation and Benchmarking Protocol

To quantitatively assess the impact of sequence formatting on prediction quality within our MSA-free research thesis, the following controlled experiment is prescribed.

Protocol 4.1: Benchmarking Formatting Errors

Objective: To measure the degradation in prediction accuracy (pLDDT, TM-score) caused by common sequence formatting errors.

Materials: A benchmark set of 50 high-resolution (<2.0 Å) X-ray crystal structures from the PDB, with lengths between 100-350 residues.

Procedure:

- Prepare the "Gold Standard" input using the canonical sequence from the PDB file, formatted per Protocol 3.1.

- Create five variant input sequences for each protein:

  - V1: Contains 3 randomly inserted gap characters (`-`).

  - V2: Contains 3 ambiguous `X` residues replacing known residues.

  - V3: Sequence in lowercase letters.

  - V4: Contains the signal peptide (if applicable, based on UniProt).

  - V5: Incorrectly includes a 20-residue non-native C-terminal tag (e.g., GS-linker).

- Run ESMFold and RoseTTAFold on all inputs (Gold Standard + 5 variants per protein) using default parameters.

- For each prediction, record the pLDDT (predicted confidence) reported by the model and compute the TM-score against the experimental structure using TM-score or TM-align.

- Compile results and perform statistical analysis (paired t-test) comparing each variant's metrics to the Gold Standard.
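The variant inputs can be generated reproducibly with a seeded script. In this sketch the function name, the `(GS)x10` tag, and the convention of passing the signal peptide as a separate string are illustrative assumptions. The gold-standard sequence is expected to be curated already.

```python
import random


def make_variants(sequence, signal_peptide="", tag="GS" * 10, seed=0):
    """Return the gold-standard input plus the five error variants V1-V5."""
    if len(sequence) < 4:
        raise ValueError("sequence too short to place three edits")
    rng = random.Random(seed)  # fixed seed keeps the benchmark reproducible

    # V1: three gap characters inserted at random internal positions.
    v1 = list(sequence)
    for pos in sorted(rng.sample(range(1, len(sequence)), 3), reverse=True):
        v1.insert(pos, "-")

    # V2: three known residues replaced by the ambiguity code X.
    v2 = list(sequence)
    for pos in rng.sample(range(len(sequence)), 3):
        v2[pos] = "X"

    return {
        "gold": sequence,
        "V1": "".join(v1),
        "V2": "".join(v2),
        "V3": sequence.lower(),           # lowercase input
        "V4": signal_peptide + sequence,  # uncleaved signal peptide retained
        "V5": sequence + tag,             # 20-residue non-native C-terminal tag
    }
```

Inserting gaps from the highest position downward keeps the earlier insertion points valid while the list grows.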

Table 2: Expected Benchmark Results (Illustrative Data)

| Input Variant | Avg. ΔpLDDT (vs. Gold) | Avg. ΔTM-score (vs. Gold) | Primary Failure Mode |

|---|---|---|---|

| Gold Standard | 0.0 | 0.0 | N/A |

| V1 (Gaps) | -12.5 | -0.18 | Model truncation/frameshift error. |

| V2 (X residues) | -25.7 (at X positions) | -0.08 | Local structure collapse at ambiguous sites. |

| V3 (Lowercase) | 0.0 (if tools auto-correct) | 0.0 | Potential parsing failure if not corrected. |

| V4 (With Signal Peptide) | -5.3 (overall) | -0.05 | Disordered N-terminal region affecting core packing. |

| V5 (With Non-native Tag) | -8.1 (overall) | -0.12 | Spurious folding of the artificial tag. |

Visualization of Workflows and Logical Relationships

Title: Sequence Curation and Prediction Workflow

Title: Common Input Errors and Their Structural Consequences

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Sequence-Based Structure Prediction

| Item / Tool | Category | Function / Purpose |

|---|---|---|

| UniProt Knowledgebase | Database | Primary source for canonical, reviewed protein sequences with critical annotations (signal peptides, domains, isoforms). |

| PyMOL / ChimeraX | Visualization & Analysis Software | Used to visualize predicted structures, calculate RMSD/TM-score against experimental references, and analyze model quality. |

| AlphaFold Protein Structure Database | Database | Provides pre-computed models for comparison. Useful for sanity-checking predictions and identifying potential model failures. |

| ColabFold (Google Colab) | Computational Environment | Provides free, GPU-accelerated notebooks running optimized versions of ESMFold and RoseTTAFold for users without local HPC. |

| Biopython | Software Library | Enables scripting of sequence validation, FASTA parsing, and automated pre-processing pipelines. |

| SignalP 6.0 | Prediction Server | Predicts the presence and location of signal peptide cleavage sites to guide mature sequence preparation. |

| TM-score Software | Analysis Tool | Standardized metric for assessing topological similarity between predicted and experimental structures (global fold measure). |

| Local GPU Workstation (e.g., NVIDIA A100/A6000) | Hardware | Required for high-throughput local inference with ESMFold/RoseTTAFold, especially for large batches or long sequences. |

Step-by-Step Workflow for a Typical Prediction Job

Within the broader thesis on MSA-free protein structure prediction, comparing ESMFold (Evolutionary Scale Modeling) and RoseTTAFold represents a pivotal investigation into next-generation computational biology. This document provides detailed Application Notes and Protocols for executing a standard prediction job, enabling researchers to benchmark these tools effectively for basic research and therapeutic discovery.

Core Prediction Workflow Protocol

This protocol outlines the end-to-end process for obtaining a protein structure prediction using an MSA-free deep learning model.

1. Input Sequence Preparation & Pre-processing

- Objective: Generate a clean, standardized input for the prediction model.

- Detailed Method:

- Obtain the target amino acid sequence (FASTA format) from databases like UniProt.

- Validate sequence characters (standard 20 amino acids).

- Remove non-standard residues or ambiguous characters (e.g., 'X', 'B', 'Z') by truncating or masking.

- (For RoseTTAFold in MSA-free mode) Generate a single-sequence MSA in .a3m format, either by running the `hhblits` command with a single iteration against a limited database or by using a placeholder a3m that contains only the target sequence.

- Save the final input as a `.fasta` file.

2. Model Selection & Environment Configuration

- Objective: Establish a reproducible software environment.

- Detailed Method:

- Choose the prediction software (ESMFold or RoseTTAFold).

- For ESMFold: Install via PyPI (`pip install "fair-esm[esmfold]"`, plus the OpenFold dependency listed in the repository README) or use the official Docker container. Ensure CUDA drivers are compatible.

- For RoseTTAFold: Clone the official GitHub repository. Install dependencies as per the README, notably PyTorch, PyRosetta, and HH-suite.

- Download the required pre-trained model weights (e.g., `ESMFold_v1.model`, `RoseTTAFold_weights.tar.gz`).

3. Execution of the Prediction Job

- Objective: Run the model to generate predicted structures.

- Detailed Method (CLI Examples):
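As one way to script this step from Python, the sketch below wraps any model object that exposes an `infer_pdb(sequence)` method returning PDB text. The ESMFold model in the `fair-esm` package provides such a method. The wrapper name and the record dictionary are assumptions, and loading the real model requires the package, the weights, and ideally a GPU.

```python
def predict_structures(model, records):
    """Run `model.infer_pdb` on each sequence; return {name: PDB text}.

    `records` maps a record name to a curated amino acid sequence.
    """
    results = {}
    for name, sequence in records.items():
        results[name] = model.infer_pdb(sequence)
    return results


# Typical use with fair-esm (assumed installed; not executed here):
#   import torch, esm
#   model = esm.pretrained.esmfold_v1().eval().cuda()
#   with torch.no_grad():
#       pdbs = predict_structures(model, {"target": "MKT..."})
#   for name, text in pdbs.items():
#       with open(f"{name}.pdb", "w") as handle:
#           handle.write(text)
```

Keeping the model behind a one-method interface lets the same loop be dry-run with a stub object before committing GPU time.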

4. Post-processing & Output Analysis

- Objective: Interpret and validate the prediction results.

- Detailed Method:

- Structure Files: Locate the output PDB files.

- Scoring: Extract per-residue and global confidence metrics (ESMFold: pLDDT; RoseTTAFold: predicted CA-CB distance & pLDDT).

- Visualization: Load PDBs into molecular viewers (e.g., PyMOL, ChimeraX). Color structures by confidence scores.

- Validation: Run predicted models through geometry validation tools (e.g., MolProbity) and compare against known homologs (if available) using TM-score or RMSD calculators.
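Per-residue confidence can be read straight from the output PDB when the predictor stores pLDDT in the B-factor column, as is common for these tools. This sketch parses fixed-width `ATOM` records with the standard library only. Whether the values are on a 0-100 or a 0-1 scale depends on the tool and version, so check before thresholding.

```python
def plddt_from_pdb(pdb_text):
    """Return {(chain_id, residue_number): B-factor} taken from CA atoms."""
    scores = {}
    for line in pdb_text.splitlines():
        # Fixed-width PDB columns: atom name 13-16, chain 22,
        # residue number 23-26, temperature factor 61-66.
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            chain_id = line[21]
            residue_number = int(line[22:26])
            scores[(chain_id, residue_number)] = float(line[60:66])
    return scores


def mean_plddt(scores):
    """Average confidence over all residues found."""
    if not scores:
        raise ValueError("no CA atoms found")
    return sum(scores.values()) / len(scores)
```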

Data Presentation: Model Comparison

Table 1: Quantitative Performance Benchmarks (Representative Data)

| Metric | ESMFold (MSA-free) | RoseTTAFold (MSA-free mode) | Notes |

|---|---|---|---|

| Average Inference Speed | ~16 secs (for 400 aa) | ~3-5 mins (for 400 aa) | Hardware: Single NVIDIA A100 GPU. ESMFold is notably faster. |

| Typical pLDDT Range | 65-85 (for high-confidence predictions) | 60-80 (for high-confidence predictions) | pLDDT > 90: very high; 70-90: confident; < 70: low confidence. |

| Optimal Sequence Length | Up to ~1000 residues | Up to ~700 residues | Longer sequences may require memory adjustments. |

| Key Output Files | PDB, pLDDT JSON, per-residue scores | PDB, .npz file with distances/angles, log file | |

Table 2: Typical Workflow Resource Requirements

| Resource | ESMFold | RoseTTAFold |

|---|---|---|

| Minimum GPU Memory | 8 GB | 10 GB |

| Recommended Storage | 5 GB | 15 GB (includes databases) |

| Critical Dependencies | PyTorch, CUDA 11+ | PyTorch, HH-suite, PyRosetta |

Mandatory Visualization

Title: MSA-Free Structure Prediction Job Workflow

Title: ESMFold vs RoseTTAFold Architecture Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Resources for Prediction Jobs

| Item | Function / Purpose | Example / Specification |

|---|---|---|

| Target Sequence (FASTA) | The primary input for structure prediction. | Single polypeptide chain >50 amino acids, from UniProt. |

| High-Performance GPU | Accelerates deep learning model inference. | NVIDIA A100 (40GB VRAM) or equivalent (e.g., V100, RTX 4090). |

| Model Weights | Pre-trained neural network parameters. | ESMFold_v1.model or RoseTTAFold_weights.tar.gz. |

| Docker / Conda Environment | Ensures software dependency reproducibility. | Docker image rosettadesign/rosettafold:latest or Conda env with Python 3.9. |

| Molecular Visualization Software | For inspecting and analyzing predicted 3D structures. | UCSF ChimeraX, PyMOL. |

| Structure Validation Tool | Assesses stereochemical quality of predictions. | MolProbity, PDB Validation Server. |

| Sequence Database (Optional) | For generating proxy MSAs or baseline comparisons. | UniRef30 (for RoseTTAFold MSA-free pre-processing). |

Within the context of a thesis on MSA-free protein structure prediction, comparing models from ESMFold and RoseTTAFold requires rigorous interpretation of their outputs. This protocol details the analysis of PDB files, confidence metrics, and visualization strategies critical for evaluating model quality in research and drug development.

Core Output Files and Confidence Metrics

Both predictors generate atomic coordinates in the Protein Data Bank (PDB) format. The critical differentiator is the suite of per-residue and global confidence scores provided.

Table 1: Key Confidence Metrics in MSA-Free Predictors

| Metric | Full Name | Range | Interpretation in MSA-Free Context (ESMFold vs. RoseTTAFold) |

|---|---|---|---|

| pLDDT | Predicted Local Distance Difference Test | 0-100 | Per-residue confidence. >90: high accuracy. 70-90: good. 50-70: low. <50: very low (likely disordered). ESMFold may show lower pLDDT in non-conserved regions. |

| pTM | Predicted TM-score | 0-1 | Global fold reliability estimate (higher = more like native). Used for ranking models. RoseTTAFold All-Atom refines this further. |

| pAE (ESMFold) | Predicted Aligned Error | 0-∞ Å | Pairwise error estimate; matrix shows inter-residue distance confidence. Lower values = higher confidence in relative positioning. |

| PAE (RoseTTAFold) | Predicted Aligned Error | 0-∞ Å | Similar function. Essential for assessing domain orientation and flexibility. |

Protocol: Systematic Output Analysis Workflow

Protocol 2.1: Initial Model Assessment and Ranking

- Retrieve Outputs: Download the PDB file and accompanying JSON/PAE file from the prediction job.

- Inspect Global Scores: From the run log or output summary, record the pTM and average pLDDT.

- Rank Models: For multiple predictions, rank primarily by pTM, then by average pLDDT. For drug target work, prioritize high-confidence (avg pLDDT > 85) models.
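The ranking rule translates into a two-key sort. In this sketch each model is a dictionary with hypothetical keys `name`, `ptm`, and `mean_plddt`. The 85 cutoff for drug-target work is the one stated in the protocol.

```python
def rank_models(models, drug_target_cutoff=85.0):
    """Sort by pTM, then mean pLDDT (both descending), and flag confident models."""
    ranked = sorted(models,
                    key=lambda m: (m["ptm"], m["mean_plddt"]),
                    reverse=True)
    return [
        {**model, "drug_target_ready": model["mean_plddt"] > drug_target_cutoff}
        for model in ranked
    ]
```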

Protocol 2.2: Visual Analysis Using UCSF ChimeraX

Materials:

- UCSF ChimeraX software.

- Model PDB file.

- Confidence data file (e.g., B-factor column in PDB populated with pLDDT).

Procedure:

- Open the PDB file: `open model.pdb`

- Color by pLDDT (stored in the B-factor column), with low confidence in red, medium in yellow, and high in green.

- Visualize the Predicted Aligned Error (PAE) matrix:

  - Load the PAE JSON file.

  - Plot using the `plot` command. High-confidence domain pairs appear blue (low error), low-confidence pairs red.

- Identify and select low-confidence regions (pLDDT < 70) for potential exclusion from downstream analysis.
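Selecting low-confidence regions is easier when they are reported as contiguous residue ranges. A minimal sketch, assuming a per-residue pLDDT list on the 0-100 scale and 1-based residue numbering. The `min_length` filter is an added convenience, not part of the protocol.

```python
def low_confidence_segments(plddt, threshold=70.0, min_length=1):
    """Return 1-based inclusive (start, end) runs where pLDDT < threshold."""
    segments, start = [], None
    for index, score in enumerate(plddt, start=1):
        if score < threshold:
            if start is None:
                start = index          # a new low-confidence run begins
        elif start is not None:
            if index - start >= min_length:
                segments.append((start, index - 1))
            start = None
    # Close a run that extends to the C-terminus.
    if start is not None and len(plddt) - start + 1 >= min_length:
        segments.append((start, len(plddt)))
    return segments
```

The resulting ranges can be pasted into a viewer's residue-selection syntax or used to mask residues in downstream scripts.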

Diagram: Workflow for Model Evaluation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Output Interpretation

| Item / Solution | Function in Analysis | Example / Provider |

|---|---|---|

| Visualization Software | 3D rendering, confidence coloring, analysis. | UCSF ChimeraX, PyMOL, Mol* Viewer |

| Scripting Environment | Automate metric extraction & batch analysis. | Python (Biopython, NumPy, Matplotlib), Jupyter Notebook |

| ColabFold Notebook | Integrated pipeline (predict, visualize, analyze). | GitHub: sokrypton/ColabFold |

| AlphaFold DB | Repository of precomputed predicted structures for comparison. | https://alphafold.ebi.ac.uk/ |

| PDBx/mmCIF Tools | Handle standard structural data format. | PDBx Python Library (rcsb.utils.io) |

| LocalFold Server | For running predictions locally with custom scripts. | ESMFold/RoseTTAFold GitHub repositories |

Protocol: Comparative Analysis for Thesis Research

Protocol 4.1: Direct ESMFold vs. RoseTTAFold Comparison

Objective: Quantify differences in confidence and structure for the same target.

- Prediction: Run the same target sequence through ESMFold (via ESM Metagenomic Atlas or local) and RoseTTAFold (via Robetta or local server).

- Data Extraction:

- Parse PDB files to extract per-residue pLDDT/B-factor values.

- Extract global pTM scores from log files.

- Load PAE matrices from JSON files.

- Create Comparison Table:

Table 3: Example Comparative Output Analysis

| Target: Protein XYZ (UniProt ID) | ESMFold Model | RoseTTAFold Model | Notes |

|---|---|---|---|

| pTM | 0.78 | 0.82 | RoseTTAFold suggests higher global confidence. |

| Avg pLDDT | 81.4 | 84.2 | RoseTTAFold shows higher mean local confidence. |

| % Residues pLDDT>70 | 87% | 92% | ESMFold may predict more low-confidence loops. |

| PAE Plot Pattern | Sharp inter-domain boundaries | Smooth inter-domain gradient | Suggests differences in domain packing confidence. |

- Generate Visualization: Superimpose models in ChimeraX and color each by its respective pLDDT to visually compare confidence landscapes.

Diagram: MSA-Free Model Comparison Logic

Critical Interpretation Guidelines for Drug Development

- Binding Site Confidence: For virtual screening, excise residues with pLDDT < 70 from the predicted binding pocket; treat side-chain conformations with low confidence as highly flexible.

- Multi-Domain Proteins: Use the PAE matrix to assess inter-domain orientation confidence. Low confidence (high error) suggests the need for experimental validation of domain packing.

- Intrinsic Disorder: Continuous regions with pLDDT < 50 are strong predictors of intrinsic disorder. Consider orthogonal bioinformatics tools (e.g., IUPred2A) for validation.

- Model Refinement: Never use molecular dynamics or refinement on low-confidence regions (pLDDT < 60), as this may over-stabilize incorrect conformations.
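For the multi-domain guideline, inter-domain confidence can be summarized as the mean PAE over the two off-diagonal blocks that pair residues of one domain with the other. In this sketch the PAE matrix is a list of lists in Å. The domain boundaries (1-based, inclusive) are supplied by the user and are not inferred.

```python
def mean_interdomain_pae(pae, domain_a, domain_b):
    """Mean PAE between two residue ranges, averaged over both directions.

    PAE is not symmetric, so both pae[i][j] and pae[j][i] are included.
    """
    (a_start, a_end), (b_start, b_end) = domain_a, domain_b
    values = []
    for i in range(a_start - 1, a_end):
        for j in range(b_start - 1, b_end):
            values.append(pae[i][j])
            values.append(pae[j][i])
    if not values:
        raise ValueError("empty domain range")
    return sum(values) / len(values)
```

A high mean relative to the within-domain blocks indicates that the relative orientation of the two domains is poorly determined, even if each domain is individually confident.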

Real-World Applications in Drug Discovery and Protein Design

Application Note 1: Rapid Hit Identification with ESMFold

Within the broader research on MSA-free protein structure prediction, the speed of ESMFold enables the structural characterization of entire proteomes. This capability is exploited in early-stage drug discovery to identify and prioritize novel binding sites. A key application is the rapid screening of understudied or orphan proteins from pathogen genomes (e.g., viral or bacterial proteomes) to uncover cryptic pockets or allosteric sites not evident from sequence alone. By generating structural hypotheses in minutes without MSAs, researchers can computationally screen vast chemical libraries against these de novo predicted structures to identify initial hit compounds, accelerating projects for targets with no or low-quality experimental structures.

Application Note 2: High-Accuracy Design with RoseTTAFold

For applications demanding high fidelity, such as designing protein-based therapeutics or enzymes, the superior accuracy of RoseTTAFold (especially when provided with an MSA) is critical. Its three-track architecture (sequence, distance, coordinates) is inherently well-suited for inverse folding and de novo protein design. In practice, researchers use RoseTTAFold to generate and refine structures for novel protein binders (e.g., miniproteins, nanobodies) targeting specific epitopes on disease-relevant proteins like G-protein-coupled receptors (GPCRs) or oncogenic kinases. The protocol often involves iterative cycles of design, prediction, and scoring to achieve stable, foldable proteins with the desired function.

Protocol 1: Virtual Screening Against an ESMFold-Predicted Target Structure

Objective: To identify small-molecule hits for a novel bacterial target using a structure predicted by ESMFold.

- Target Selection & Prediction: Identify a protein target from a bacterial proteome with no experimental structure. Input the FASTA sequence into the ESMFold model (via API or local installation). Download the predicted structure (PDB format) and the per-residue pLDDT confidence metric.

- Binding Site Identification: Load the predicted PDB into a molecular visualization tool (e.g., PyMOL, ChimeraX). Filter for pockets on the protein surface where pLDDT > 70 (indicating high confidence). Select the top candidate pocket based on volume, druggability scores (e.g., from fpocket), and biological rationale.

- Structure Preparation: Prepare the protein for docking using standard software (e.g., Schrodinger's Protein Preparation Wizard, UCSF Chimera's DockPrep). Add hydrogens, assign partial charges, and optimize side-chain conformations for residues with poor rotameric states, keeping high-confidence (pLDDT > 80) residues fixed.

- Compound Library Docking: Perform high-throughput virtual screening of a diverse library (e.g., ZINC20, Enamine REAL) against the prepared pocket using a docking program (e.g., AutoDock Vina, GLIDE SP). Use standard parameters with an exhaustiveness setting of 32 for Vina.

- Hit Prioritization: Rank compounds by docking score. Apply filters for drug-likeness (Lipinski's Rule of Five), chemical clustering, and visual inspection of binding poses. Select top 50-100 compounds for in vitro biochemical assay.
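The ranking and drug-likeness filters of the last step can be sketched as below. It assumes each hit is a dictionary carrying a docking score (more negative is better, as in AutoDock Vina) and precomputed descriptors. The key names are hypothetical, and clustering and visual inspection remain manual steps.

```python
def lipinski_violations(hit):
    """Count Rule-of-Five violations from precomputed descriptors."""
    return sum([
        hit["mol_weight"] > 500,     # molecular weight in Da
        hit["logp"] > 5,             # octanol-water partition coefficient
        hit["h_bond_donors"] > 5,
        hit["h_bond_acceptors"] > 10,
    ])


def prioritize_hits(hits, top_n=100, max_violations=1):
    """Keep drug-like compounds and return the best-scoring `top_n`."""
    drug_like = [h for h in hits if lipinski_violations(h) <= max_violations]
    # Ascending sort: the most negative docking score comes first.
    return sorted(drug_like, key=lambda h: h["docking_score"])[:top_n]
```

Allowing at most one violation follows the usual reading of the rule. Set `max_violations=0` for a stricter filter.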

Protocol 2: De Novo Miniprotein Design with RoseTTAFold

Objective: To design a de novo miniprotein inhibitor against a defined epitope on a target protein (e.g., SARS-CoV-2 Spike RBD).

- Scaffold Generation & Initial Docking: Use a de novo protein design tool (e.g., RFdiffusion, which is built on RoseTTAFold) to generate backbone scaffolds complementary to the target epitope. Specify desired secondary structure elements and intermolecular contacts. Generate 1,000-10,000 candidate scaffolds.

- Sequence Design & Filtration: For each scaffold, use RoseTTAFold's inverse folding capability (or a companion model like ProteinMPNN) to design a sequence likely to fold into that structure. Filter designs for favorable physicochemical properties (hydrophobicity, charge).

- In Silico Validation (Folding): For each designed sequence, run a structure prediction using the full RoseTTAFold network (with MSA generation enabled). Align the predicted structure (PDB) to the original design scaffold. Calculate the root-mean-square deviation (RMSD) of the backbone atoms. Discard designs where RMSD > 2.0 Å.

- In Silico Validation (Binding): Dock the high-confidence (RMSD < 2.0 Å) predicted designs back onto the target protein using a rigid-body or flexible docking protocol (e.g., using RoseTTAFold's built-in complex prediction mode or HADDOCK2.4). Rank designs by interface energy, buried surface area, and specificity of interactions.

- Experimental Characterization: Order gene fragments for the top 20-50 designs for synthesis. Express and purify proteins in E. coli. Assess stability via circular dichroism (Tm) and binding affinity via surface plasmon resonance (SPR) or bio-layer interferometry (BLI).

Quantitative Performance Comparison

Table 1: Performance Metrics of ESMFold vs. RoseTTAFold in Key Applications

| Application Metric | ESMFold | RoseTTAFold (No MSA) | RoseTTAFold (With MSA) | Notes |

|---|---|---|---|---|

| Prediction Speed | ~60 secs/protein | ~10-15 mins/protein | ~45-60 mins/protein | Tested on a single Nvidia V100 GPU for a 400aa protein. ESMFold is significantly faster. |

| Average TM-score (CASP14) | 0.68 | 0.72 | 0.86 | On hard targets; RoseTTAFold with MSA is closest to AlphaFold2 (0.87). |

| pLDDT Threshold for Drug Discovery | 70-80 (use with caution) | 75-85 | 85-90 (high confidence) | Residues with pLDDT > 80 are generally suitable for docking. |

| Typical Use Case | Proteome-scale scanning, low-stakes hypothesis generation | Targets with shallow MSAs, iterative design | High-stakes therapeutic design, final validation | Choice depends on the trade-off between speed and accuracy. |

Visualizations

Title: Virtual Screening with ESMFold Workflow

Title: De Novo Binder Design & Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in MSA-Free Prediction Applications |

|---|---|

| ESMFold (Model Weights & Code) | Provides ultra-fast, single-sequence structure prediction for large-scale target identification and triage. |

| RoseTTAFold (Model Weights & Code) | Delivers high-accuracy structure prediction and complex modeling, essential for validation and design. |

| RFdiffusion | A diffusion model built on RoseTTAFold for generating de novo protein backbones conditioned on functional constraints. |

| ProteinMPNN | A neural network for sequence design that inverts the folding problem, providing optimal sequences for given backbones. |

| AlphaFold2 (ColabFold Implementation) | Often used as a high-accuracy benchmark for validating designs or predictions from other tools. |

| PyMOL / ChimeraX | Molecular visualization software for analyzing predicted structures, identifying pockets, and visualizing docking poses. |

| AutoDock Vina / GLIDE | Docking software for performing virtual screening of small molecules against predicted protein structures. |

| HADDOCK2.4 / RosettaDock | Software for high-resolution protein-protein docking, used to validate designed binders. |

| ZINC20 / Enamine REAL Libraries | Public and commercial databases of purchasable compounds for virtual screening. |

| Gene Fragment Synthesis Service | Commercial service (e.g., Twist Bioscience, IDT) to produce DNA for testing designed protein sequences. |

Overcoming Challenges: Troubleshooting and Optimizing Prediction Accuracy

The advent of deep learning has enabled high-accuracy protein structure prediction without the need for multiple sequence alignments (MSA). Two leading models, ESMFold (from Meta AI) and RoseTTAFold (from the Baker Lab), exemplify this approach. While revolutionary, both models exhibit systematic failure modes. This application note details protocols for identifying, analyzing, and mitigating three critical failure modes: low per-residue confidence (pLDDT), disordered regions, and erroneous multimer prediction. These analyses are critical for researchers and drug developers relying on de novo predictions for target assessment and characterization.

Quantitative Performance & Failure Mode Benchmarks

Table 1: Benchmark Performance of ESMFold vs. RoseTTAFold on CASP14/15 Targets

| Metric | ESMFold (avg) | RoseTTAFold (avg) | Notes |

|---|---|---|---|

| Global TM-score | 0.72 | 0.69 | Higher is better. On high-confidence (pLDDT>90) regions. |

| Average pLDDT | 84.2 | 81.5 | ESMFold typically reports higher global confidence. |

| Disordered Region pLDDT | 52.1 | 48.7 | Residues with DSSP 'No Structure' in experimental PDBs. |

| False Multimer Rate | ~12% | ~15% | Percentage of monomeric targets predicted as multimers. |

| Inference Speed (seq/s) | 8-12 | 1-2 | ESMFold is significantly faster on comparable hardware. |

Table 2: Common Failure Signatures

| Failure Mode | ESMFold Signature | RoseTTAFold Signature | Likely Cause |

|---|---|---|---|

| Low Confidence | pLDDT < 70 for >30% of chain. | pLDDT < 70, often in long loops. | Lack of evolutionary constraints, intrinsic disorder. |

| Disordered Regions | Erroneous stable helix/strand (pLDDT~80). | Collapsed, overly compact coils. | Model trained to always produce 3D coordinates. |

| False Multimers | High interface pLDDT, symmetric complexes. | Plausible but incorrect interfaces. | Evolutionary coupling mimics physical interaction. |

Detailed Experimental Protocols

Protocol 3.1: Systematic Assessment of Prediction Confidence

Objective: To quantify and visualize the correlation between predicted confidence (pLDDT) and local prediction accuracy.

Materials:

- Query protein sequence(s) in FASTA format.

- ESMFold (via API: `esm.pretrained.esmfold_v1()` or web server) and RoseTTAFold (via web server or local installation).

- Experimental reference structure (PDB format), if available.

- Computing environment (Python, Biopython, PyMOL).

Procedure:

- Run Predictions: Execute structure prediction for the target sequence using both ESMFold and RoseTTAFold. Save the predicted model (`.pdb`) and per-residue confidence scores.

- Extract Confidence Data: For ESMFold, pLDDT is in the B-factor column of the output PDB. For RoseTTAFold, extract confidence from the `model.conf` file.

- Align & Calculate Error: If an experimental structure exists, align the predicted model to it using `TM-align` or `PyMOL align`. Calculate the local distance difference test (lDDT) for each residue.

- Correlate & Plot: Create a scatter plot of predicted pLDDT (x-axis) vs. calculated lDDT (y-axis) for each residue. Calculate the Pearson correlation coefficient (R). Low R (<0.6) indicates poor confidence calibration.
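The confidence-extraction and correlation steps can be sketched in plain Python. This is a minimal illustration rather than part of either tool: `plddt_from_pdb` and `pearson_r` are hypothetical helper names, the parser assumes standard fixed-column PDB `ATOM` records, and the per-residue lDDT values are assumed to come from a separate lDDT calculation against the experimental structure.

```python
import math


def plddt_from_pdb(pdb_text):
    """Read per-residue confidence from the B-factor column (CA atoms only).

    Assumes fixed-column PDB ATOM records; returns {(chain, resseq): value}.
    """
    scores = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            chain = line[21]
            resseq = int(line[22:26])
            scores[(chain, resseq)] = float(line[60:66])
    return scores


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with >= 2 values")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sd_x * sd_y)
```

In use, the pLDDT values returned by `plddt_from_pdb` would be paired residue by residue with the calculated lDDT values before calling `pearson_r`. B-factor values may be written on a 0-1 or a 0-100 scale depending on the build, so the range is worth confirming before applying any threshold.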

Protocol 3.2: Identifying and Analyzing Disordered Regions

Objective: To distinguish genuine intrinsically disordered regions (IDRs) from erroneously folded low-confidence regions.

Materials:

- Prediction outputs (PDB + confidence).

- Disorder prediction tools (e.g., `IUPred3`, `AlphaFold2 per-residue pLDDT`).

- PDB-Dev database for referenced disordered structures.

Procedure:

- Run Complementary Disorder Predictors: Submit the sequence to IUPred3 (long and short modes) to obtain a disorder probability score (0-1) per residue.

- Integrate Confidence Metrics: Create a multi-track visualization:

- Track 1: ESMFold pLDDT (smoothed).

- Track 2: RoseTTAFold confidence.

- Track 3: IUPred3 disorder probability.

- Track 4 (optional): Experimental NMR chemical shifts or SAXS data.

- Define Disordered Regions: Flag residues where disorder probability > 0.65 AND pLDDT from both structure predictors is < 70. These are high-confidence IDRs.

- Flag Erroneous Folding: Flag residues where disorder probability > 0.8 BUT pLDDT from either predictor is > 80. These regions are likely incorrectly folded into a stable structure.
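The two flagging rules above can be written as a small per-residue classifier. This is a sketch under stated assumptions: `classify_residues` and its label strings are hypothetical names, the three inputs are assumed to be equal-length per-residue lists, and pLDDT is assumed to be on a 0-100 scale. The thresholds are the ones given in the procedure.

```python
def classify_residues(disorder, plddt_esmfold, plddt_rosettafold):
    """Label each residue using the Protocol 3.2 thresholds.

    disorder: disorder probability per residue (0-1), e.g. from IUPred3.
    plddt_*:  per-residue confidence (0-100) from each structure predictor.
    """
    if not (len(disorder) == len(plddt_esmfold) == len(plddt_rosettafold)):
        raise ValueError("all per-residue tracks must have the same length")

    labels = []
    for d, p_esm, p_rf in zip(disorder, plddt_esmfold, plddt_rosettafold):
        if d > 0.8 and (p_esm > 80 or p_rf > 80):
            # Strongly predicted disorder, yet confidently folded by a predictor.
            labels.append("erroneous_fold")
        elif d > 0.65 and p_esm < 70 and p_rf < 70:
            # Disorder predictor and both structure predictors agree.
            labels.append("idr")
        else:
            labels.append("unflagged")
    return labels
```

The two rules cannot both fire for the same residue, since one requires both pLDDT values below 70 and the other requires at least one above 80, so the order of the checks does not affect the result.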

Protocol 3.3: Validation and Correction of Multimer Predictions

Objective: To assess the biological plausibility of predicted protein complexes and minimize false positives.

Materials:

- Predicted multimer PDB file.

- Sequence database (e.g., UniProt).

- Tools: `PISA` (Proteins, Interfaces, Structures and Assemblies), `HADDOCK`, `ClusPro`.

Procedure:

- Interface Analysis: Analyze the predicted multimer interface using PISA (web service or local). Record interface area (Ų), solvation energy, and number of hydrogen bonds.

- Check Evolutionary Evidence: Perform a reciprocal BLAST of each chain against a proteome. Genuine complexes often have homologs that co-evolve. Use `DeepMSA2` or `HMMER` to check for co-occurrence.

- Energy Minimization & Refinement: Subject the predicted complex to short MD simulation or energy minimization (e.g., with `GROMACS` or `Rosetta relax`). A false interface often rapidly destabilizes.

- Consensus Prediction: Run the individual chains through a dedicated complex predictor like `AlphaFold-Multimer v3` or `ColabFold (complex mode)`. A true complex will have consensus across methods.

Visualization of Analysis Workflows

Title: Failure Mode Analysis Workflow

Title: Multimer Validation Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for MSA-Free Prediction Analysis

| Item / Reagent | Function / Purpose | Example / Source |

|---|---|---|

| ESMFold Model | Primary structure prediction. Fast, high global pLDDT. | esm.pretrained.esmfold_v1(); Meta AI API. |

| RoseTTAFold | Primary structure prediction. Often better on difficult folds. | Robetta Server (https://robetta.bakerlab.org). |

| IUPred3 | Predicts protein intrinsic disorder from sequence. | Web server or standalone (https://iupred.elte.hu). |

| TM-align | Structural alignment & TM-score calculation for accuracy assessment. | Standalone program (Zhang Lab). |

| PISA (Proteins, Interfaces, Structures and Assemblies) | Analyzes interfaces, assemblies, and stability in macromolecular complexes. | EMBL-EBI Web Service or standalone. |

| PyMOL | Molecular visualization, superposition, and image rendering. | Schrödinger LLC. |

| ColabFold (AF2/ Multimer) | Provides consensus prediction and complex modeling via easy notebook. | Google Colab Notebook. |

| GROMACS / Rosetta | Molecular dynamics and energy minimization for structure refinement. | Open-source packages. |

| Custom Python Scripts | For data integration, plotting correlation graphs, and batch analysis. | Requires biopython, matplotlib, pandas. |

Within the broader research thesis comparing the MSA-free protein structure prediction methods ESMFold and RoseTTAFold, the challenge of "difficult targets" remains pivotal. These targets, often characterized by low sequence complexity, intrinsically disordered regions, or a lack of homologous templates, frequently yield lower prediction accuracy (pLDDT < 70 or TM-score < 0.7). This application note details systematic parameter tuning strategies to optimize the performance of both ESMFold and RoseTTAFold on such recalcitrant targets, moving beyond default settings to extract maximal predictive value.

Foundational Performance Data

The table below summarizes baseline performance metrics for ESMFold and RoseTTAFold on standard benchmark sets (like CASP14/CAMEO hard targets), highlighting the accuracy gap for difficult targets.

Table 1: Baseline Performance on Difficult Targets (CASP14 Free-Modelling Targets)

| Metric | ESMFold (Default) | RoseTTAFold (Default) | Acceptable Threshold |

|---|---|---|---|

| Average pLDDT (All) | 78.5 | 81.2 | >70 |

| Average pLDDT (Hard) | 65.3 | 68.7 | >70 |

| Average TM-score (All) | 0.73 | 0.76 | >0.7 |

| Average TM-score (Hard) | 0.58 | 0.62 | >0.7 |

| Prediction Time (avg. sec) | 25 | 180 | - |

Core Parameter Tuning Strategies

Table 2: Tunable Parameters for ESMFold and RoseTTAFold

| Parameter | ESMFold Relevance | RoseTTAFold Relevance | Tuning Strategy for Hard Targets |

|---|---|---|---|

| Recycling Iterations | Fixed in model. | Critical (Default=3). | Increase to 4-6 for poor initial convergence. |

| Number of Models (N) | Generate multiple via stochastic sampling. | Generate multiple via random seed. | Increase N from 5 to 25-50, then cluster. |

| Temperature / Seed | `temperature` for logit sampling. | Random seed for MSA/trunk. | Sample diverse seeds; adjust temperature (0.1-1.0). |

| MSA Depth (if used) | Not applicable (MSA-free). | Can be fed externally (`max_msa`). | Provide shallow, diverse MSA (UniRef30) as input. |

| Structure Truncation | Limited control. | Model defined regions (`len`). | Predict domains separately, then dock. |

| Chunk Size | Memory/accuracy trade-off. | Memory/accuracy trade-off. | Reduce chunk size for long sequences to avoid OOM. |

Experimental Protocol: Iterative Recycling Tuning for RoseTTAFold

Aim: To improve model convergence for low-confidence targets.

- Initial Prediction: Run RoseTTAFold with default settings (`-n 1`, `--num_iter 3`). Record per-residue pLDDT from the `*model*.pdb` file.

- Identify Poor Regions: Isolate contiguous regions with pLDDT < 60.

- Parameter Adjustment: Re-run prediction for the full sequence, increasing the `--num_iter` flag to 6.

- Analysis: Compare per-residue pLDDT profiles and global TM-scores (using `TM-align`) between 3-iteration and 6-iteration models against any available experimental structure.

- Validation: Execute on a set of 5 known difficult targets. Report the average improvement in TM-score and pLDDT for the low-confidence regions.
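Isolating the poor regions and reporting the pLDDT change within them can be sketched as follows. The helper names `low_confidence_regions` and `mean_gain` are hypothetical, and the per-residue pLDDT lists are assumed to have already been read from the 3-iteration and 6-iteration model files. The cutoff of 60 is the one used in the protocol.

```python
def low_confidence_regions(plddt, cutoff=60.0, min_len=1):
    """Return (start, end) index pairs (0-based, inclusive) of contiguous
    runs where pLDDT is below the cutoff."""
    regions = []
    start = None
    for i, score in enumerate(plddt):
        if score < cutoff:
            if start is None:
                start = i  # a new low-confidence run begins here
        elif start is not None:
            if i - start >= min_len:
                regions.append((start, i - 1))
            start = None
    # Close a run that extends to the end of the chain.
    if start is not None and len(plddt) - start >= min_len:
        regions.append((start, len(plddt) - 1))
    return regions


def mean_gain(before, after, regions):
    """Average per-residue pLDDT change (after - before) inside the regions."""
    idx = [i for start, end in regions for i in range(start, end + 1)]
    if not idx:
        return 0.0
    return sum(after[i] - before[i] for i in idx) / len(idx)
```

The regions are defined on the 3-iteration model only and then reused for the 6-iteration model, so the comparison covers the same residues in both runs.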

Experimental Protocol: Stochastic Sampling & Clustering for ESMFold

Aim: To generate and select the most plausible structure from an ensemble.

- Ensemble Generation: For a target sequence, run ESMFold inference 50 times. In the API, this is achieved by repeatedly calling the model with `num_ensembles=1` and allowing stochasticity, or by varying the `temperature` parameter in the sampling head.

- Structure Clustering: Cluster all generated Cα traces with a simple RMSD-based hierarchical clustering or a dedicated structure-clustering tool. Sequence-clustering tools such as MMseqs2 `cluster` are not suitable here, because every ensemble member shares the same sequence.

- Cluster Center Selection: Identify the largest cluster (most populated conformational state). Select the model with the highest average pLDDT within that cluster as the representative prediction.

- Metrics: Compare the TM-score of the cluster-center model to the single default model. Calculate the standard deviation of pLDDT across the ensemble to gauge prediction certainty.
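The clustering and selection steps can be illustrated with a small, dependency-free sketch. It assumes a pairwise Cα RMSD matrix has already been computed after superposition; the function names and the 2.0 Å cutoff are illustrative assumptions, not values from either tool. Single-linkage grouping is used here as one simple form of RMSD-based hierarchical clustering.

```python
def cluster_by_rmsd(rmsd, cutoff):
    """Single-linkage clustering on a symmetric pairwise RMSD matrix.

    Two models share a cluster if a chain of pairs, each within the
    cutoff, connects them. Returns clusters sorted largest first.
    """
    n = len(rmsd)
    parent = list(range(n))

    def find(i):
        # Union-find lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(n):
        for j in range(i + 1, n):
            if rmsd[i][j] <= cutoff:
                parent[find(i)] = find(j)

    clusters = {}
    for i in range(n):
        clusters.setdefault(find(i), []).append(i)
    return sorted(clusters.values(), key=len, reverse=True)


def pick_representative(rmsd, mean_plddt, cutoff=2.0):
    """Index of the highest mean-pLDDT model within the largest cluster."""
    largest = cluster_by_rmsd(rmsd, cutoff)[0]
    return max(largest, key=lambda i: mean_plddt[i])
```

Single linkage can chain loosely related conformations into one cluster, so for broad ensembles a stricter cutoff or average-linkage clustering may be a better choice.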

Diagram 1: Ensemble Clustering Workflow for ESMFold

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Parameter Tuning Experiments

| Item / Solution | Function in Tuning Context | Example / Note |

|---|---|---|

| ESMFold (Local Installation) | Enables batch scripting, parameter control, and ensemble generation. | Clone from GitHub; requires PyTorch and GPU memory. |

| RoseTTAFold (Local Installation) | Necessary for modifying recycling iterations and controlling MSA input. | Use run_pyrosetta_ver.sh script; modify --num_iter flag. |