Multi-Omics in Translation: 4 Key Objectives to Bridge the Gap Between Bench and Bedside

This article provides a comprehensive roadmap for researchers, scientists, and drug development professionals on the strategic objectives of multi-omics studies in translational medicine.

Abstract

This article provides a comprehensive roadmap for researchers, scientists, and drug development professionals on the strategic objectives of multi-omics studies in translational medicine. We move beyond technical descriptions to detail the core intents driving the integration of genomics, transcriptomics, proteomics, and metabolomics. The scope covers the foundational discovery of molecular mechanisms, methodological frameworks for patient stratification and biomarker identification, critical troubleshooting of data integration and biological interpretation, and essential validation strategies for clinical utility. The synthesis offers a clear guide for designing and executing robust multi-omics studies that directly impact diagnostics, therapeutic development, and personalized patient care.

From Molecules to Mechanisms: Foundational Multi-Omics for Disease Deconstruction

Defining the Multi-Omics Mandate in Translational Research

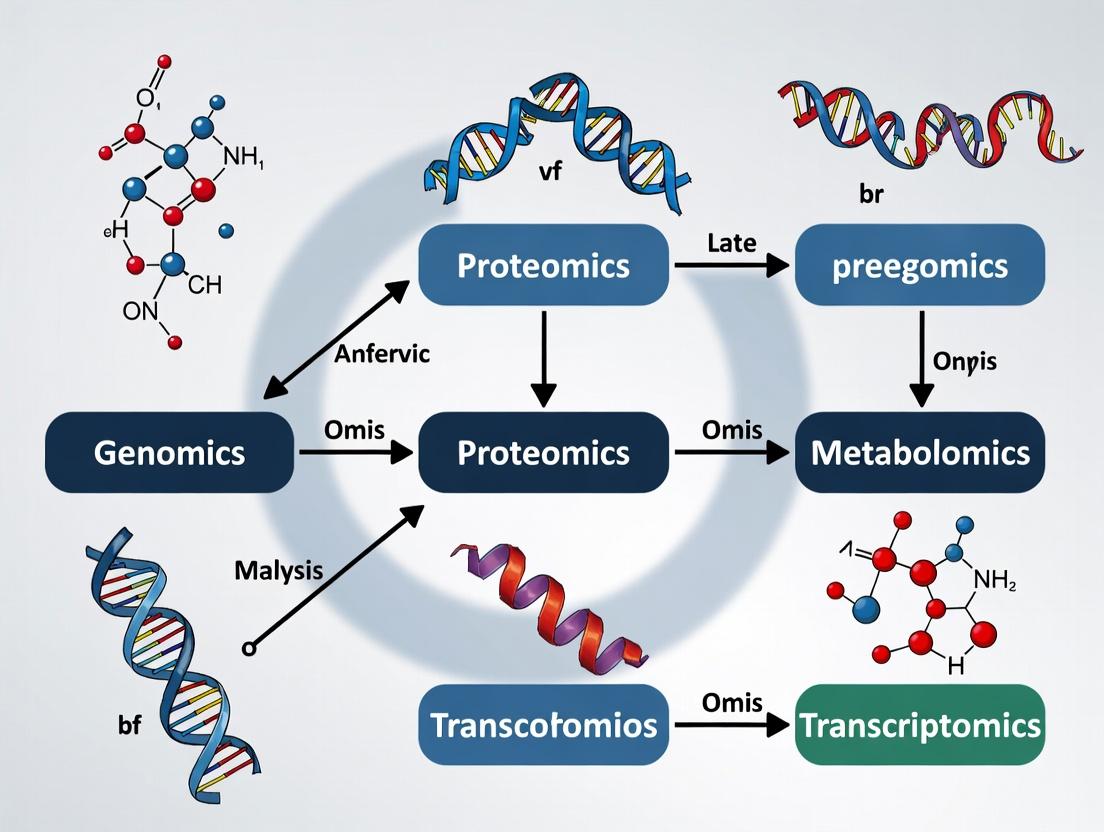

The mandate for multi-omics in translational research is clear: to systematically integrate diverse molecular data layers (genomics, transcriptomics, proteomics, metabolomics) to bridge the gap between bench-side discovery and bedside application. This whitepaper posits that the core objective of multi-omics in translational medicine is not merely data generation but the construction of causal, predictive models of disease that can identify novel therapeutic targets, stratify patient populations, and monitor intervention efficacy with greater precision than any single molecular layer can provide.

The Translational Imperative: From Silos to Integration

Traditional single-omics approaches have provided foundational insights but often fail to capture the complex, dynamic interactions within biological systems. Translational research demands a holistic view. The multi-omics mandate addresses this by requiring the concurrent analysis of multiple molecular tiers to map the flow of information from genotype to phenotype, thereby revealing actionable mechanisms in human disease.

Foundational Omics Layers & Technologies

A core suite of technologies enables this mandate. The table below summarizes quantitative outputs and key platforms for each layer.

Table 1: Core Omics Layers in Translational Research

| Omics Layer | Molecular Target | Key Quantitative Outputs | Primary High-Throughput Technologies |
|---|---|---|---|
| Genomics | DNA Sequence & Variation | Single Nucleotide Polymorphisms (SNPs), Copy Number Variations (CNVs), Mutational Burden | Whole Genome/Exome Sequencing (WGS/WES), SNP Arrays |
| Transcriptomics | RNA Expression & Splicing | Gene Expression Levels (FPKM/TPM), Isoform Usage, Fusion Genes | RNA-Sequencing (RNA-Seq), Single-Cell RNA-Seq (scRNA-Seq) |
| Proteomics | Protein Abundance & Modification | Protein Abundance, Post-Translational Modifications (PTMs), Protein-Protein Interactions | Mass Spectrometry (LC-MS/MS), Reverse Phase Protein Arrays (RPPA) |
| Metabolomics | Small-Molecule Metabolites | Metabolite Concentrations, Pathway Flux | Mass Spectrometry (GC-MS, LC-MS), Nuclear Magnetic Resonance (NMR) |

Experimental & Computational Workflow

Executing a multi-omics study requires a stringent, coordinated pipeline from sample to insight.

Core Protocol: Integrated Multi-Omics Cohort Study

- Sample Collection & Biobanking: Collect matched tissue (e.g., tumor biopsy) and biofluid (blood, urine) samples from a well-phenotyped clinical cohort. Aliquot and store at appropriate conditions (-80°C for tissue/plasma, liquid N₂ for single-cell preparations).

- Parallel Multi-Omics Profiling:

  - DNA/Genomics: Extract genomic DNA; perform WGS or targeted panel sequencing. Align to the reference genome (GRCh38) using BWA-MEM; call variants with GATK.

  - RNA/Transcriptomics: Extract total RNA; assess quality (RIN > 7). Prepare stranded libraries for bulk RNA-Seq or use the 10x Genomics platform for scRNA-Seq. Align bulk reads with STAR and quantify with featureCounts.

  - Proteomics: Perform tissue lysis and protein digestion. Analyze peptides via data-dependent acquisition (DDA) LC-MS/MS on a Q-Exactive HF instrument. Identify and quantify proteins using MaxQuant or Spectronaut.

  - Metabolomics: Deproteinize plasma samples with cold methanol. Analyze supernatants via hydrophilic interaction liquid chromatography (HILIC) coupled to a high-resolution mass spectrometer. Process with XCMS or Compound Discoverer.

- Data Integration & Modeling: Employ computational frameworks:

  - Concatenation-Based: Merge features from all omics layers into a single matrix for multivariate analysis (e.g., PCA, PLS-DA).

  - Model-Based: Use Multi-Omics Factor Analysis (MOFA) to identify latent factors driving variation across data types, or Similarity Network Fusion (SNF) to fuse per-omics patient similarity networks into a single network.

  - Network-Based: Construct causal networks using tools like CausalPath or integrate with prior knowledge (e.g., KEGG, Reactome) to infer signaling pathways.
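The concatenation-based strategy can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a prescribed pipeline: each omics block is assumed to be a samples x features matrix with rows in the same sample order, every feature is z-scored, and each block is additionally divided by the square root of its feature count (a simple block-weighting heuristic assumed here) so that a large transcriptomic block does not swamp a small metabolomic one. PCA is then obtained from a singular value decomposition of the merged matrix. The function names and simulated data are hypothetical.

```python
import numpy as np


def zscore_block(x: np.ndarray) -> np.ndarray:
    """Standardize each feature (column) of one omics block to mean 0 and SD 1."""
    mu = x.mean(axis=0)
    sd = x.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # avoid division by zero for constant features
    return (x - mu) / sd


def concat_pca(blocks, n_components=2):
    """Concatenation-based integration followed by PCA (via SVD).

    blocks: list of samples x features arrays, rows aligned to the same samples.
    Each block is scaled by 1/sqrt(n_features) (assumed heuristic) before merging.
    """
    scaled = [zscore_block(b) / np.sqrt(b.shape[1]) for b in blocks]
    merged = np.hstack(scaled)  # samples x (all features from all layers), already centered
    u, s, _ = np.linalg.svd(merged, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]  # sample coordinates on the PCs
    explained = s**2 / np.sum(s**2)                  # fraction of variance per PC
    return scores, explained[:n_components]


rng = np.random.default_rng(0)
rna = rng.normal(size=(20, 500))   # simulated transcript features
prot = rng.normal(size=(20, 80))   # simulated protein features
met = rng.normal(size=(20, 40))    # simulated metabolite features
scores, explained = concat_pca([rna, prot, met], n_components=3)
print(scores.shape, explained.round(3))
```

Without the block scaling, the 500 transcript features would contribute most of the total variance simply by outnumbering the other layers.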

Diagram Title: Integrated Multi-Omics Translational Workflow

Pathway & Network Visualization

Multi-omics integration reveals perturbed signaling axes. Below is a generalized pathway diagram highlighting how different omics layers inform a consolidated disease mechanism.

Diagram Title: Multi-Omics Informed Signaling Pathway

The Scientist's Toolkit: Essential Research Reagents & Platforms

Successful execution depends on high-quality, reproducible reagents and tools.

Table 2: Key Research Reagent Solutions for Multi-Omics

| Category | Item/Kit | Primary Function in Workflow |
|---|---|---|
| Nucleic Acid Isolation | Qiagen AllPrep DNA/RNA/miRNA Universal Kit | Simultaneous purification of genomic DNA and total RNA from a single tissue sample, preserving molecular integrity for parallel sequencing. |
| Single-Cell Profiling | 10x Genomics Chromium Next GEM Single Cell 5' Kit | Enables high-throughput barcoding of transcripts from thousands of individual cells for scRNA-Seq, critical for tumor heterogeneity studies. |
| Mass Spec Sample Prep | Thermo Fisher Pierce High pH Reversed-Phase Peptide Fractionation Kit | Fractionates complex peptide digests to reduce complexity and increase proteome coverage in LC-MS/MS analysis. |
| Metabolite Standards | Biocrates AbsoluteIDQ p400 HR Kit | Provides a standardized mass spectrometry kit for the targeted quantification of up to 400 metabolites, ensuring inter-study comparability. |
| Data Integration Software | AltAnalyze / Jupyter Notebooks with MOFA2 | Open-source bioinformatics platforms for the normalization, integration, and joint visualization of multi-omics data sets. |

The multi-omics mandate is a predictive framework. Its fulfillment in translational research lies in moving beyond correlation to establish causality, thereby delivering on the core objectives of translational medicine: de-risking drug development through mechanistic understanding, enabling precision patient stratification, and accelerating the delivery of effective therapies.

The advent of high-throughput technologies has enabled the comprehensive measurement of biological molecules at multiple levels. In translational medicine research, the primary objective of multi-omics studies is to integrate data from these distinct yet interconnected molecular layers to construct a holistic, systems-level understanding of disease mechanisms. This integrated approach aims to discover robust biomarkers for early diagnosis, stratify patient populations, identify novel therapeutic targets, and predict treatment responses, thereby accelerating the development of personalized medical interventions. This guide details the four core omics layers foundational to this paradigm.

Genomics

Genomics is the study of an organism's complete set of DNA, including all genes and their intergenic regions. It provides a largely static blueprint, detailing the sequence variants and structural variations that may predispose an individual to disease or influence drug metabolism; epigenetic modifications such as DNA methylation add a more dynamic regulatory layer on top of this sequence.

Key Objectives in Translational Medicine:

- Identification of germline and somatic mutations driving disease.

- Pharmacogenomics: Understanding genetic variants affecting drug efficacy and toxicity.

- Epigenomic profiling (e.g., DNA methylation, chromatin accessibility) for regulatory insights.

Experimental Protocol: Whole Genome Sequencing (WGS)

- Sample Prep & Library Construction: Isolate genomic DNA and fragment it by sonication or enzymatic digestion. Repair ends, add 'A' overhangs, and ligate platform-specific adapters.

- Library Amplification: Perform PCR to amplify adapter-ligated fragments.

- Sequencing: Load the library onto a sequencing platform (e.g., Illumina NovaSeq). Bridge amplification on a flow cell creates clusters, followed by sequencing-by-synthesis with fluorescently labeled nucleotides.

- Data Analysis: Demultiplex reads, align to a reference genome (e.g., using BWA-MEM), call variants (GATK best practices), and annotate functional impact (SnpEff, VEP).

WGS Experimental Workflow

Transcriptomics

Transcriptomics profiles the complete set of RNA transcripts (mRNA, non-coding RNA) in a cell or tissue at a given time point. It reflects the dynamic expression of the genome and responds to environmental and disease states.

Key Objectives in Translational Medicine:

- Uncover disease-associated gene expression signatures and pathways.

- Identify alternative splicing events and fusion genes.

- Characterize non-coding RNA roles in regulation.

Experimental Protocol: Bulk RNA-Sequencing

- RNA Extraction & QC: Isolate total RNA (e.g., TRIzol, column kits). Assess integrity (RIN > 7 via Bioanalyzer).

- Library Prep: Deplete rRNA or enrich poly-A mRNA. Fragment RNA, synthesize cDNA, ligate adapters, and amplify.

- Sequencing & Analysis: Sequence on Illumina platform. Align reads (STAR, HISAT2), quantify gene/isoform expression (featureCounts, Salmon), perform differential expression analysis (DESeq2, edgeR).
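Table 1 lists FPKM/TPM among the transcriptomic outputs. As a hedged illustration, the TPM calculation can be written in NumPy as below, assuming a genes x samples matrix of raw counts and a vector of gene (or effective transcript) lengths in base pairs. Note that DESeq2 and edgeR expect raw counts for differential expression; TPM is mainly useful for within-sample comparison and visualization. The function name and toy numbers are hypothetical.

```python
import numpy as np


def counts_to_tpm(counts: np.ndarray, lengths_bp: np.ndarray) -> np.ndarray:
    """Convert a genes x samples count matrix to transcripts per million (TPM).

    TPM_i = (count_i / length_kb_i) / sum_j(count_j / length_kb_j) * 1e6
    """
    length_kb = lengths_bp[:, None] / 1e3                 # one length per gene (row)
    rate = counts / length_kb                             # length-normalized read rate
    return rate / rate.sum(axis=0, keepdims=True) * 1e6   # each sample sums to 1e6


counts = np.array([[100, 200],
                   [300, 300],
                   [600, 500]])
lengths = np.array([1000, 2000, 3000])
tpm = counts_to_tpm(counts, lengths)
print(tpm.round(1))
```

Because length normalization happens before scaling to one million, TPM values sum to the same total in every sample, which is what makes them comparable across samples in a way raw FPKM totals are not.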

Central Dogma with Transcriptomics

Proteomics

Proteomics characterizes the full complement of proteins, including their abundances, post-translational modifications (PTMs), interactions, and structures. It directly reflects functional cellular machinery and drug target landscapes.

Key Objectives in Translational Medicine:

- Discover diagnostic serum or tissue protein biomarkers.

- Map protein-signaling networks dysregulated in disease.

- Assess drug-target engagement and mechanism of action.

Experimental Protocol: Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS)

- Sample Lysis & Digestion: Lyse cells/tissue in appropriate buffer. Reduce, alkylate, and digest proteins with trypsin.

- LC Separation: Desalt and separate peptides via reversed-phase nanoLC.

- MS Analysis: Ionize peptides (ESI) and analyze in mass spectrometer (e.g., Q-Exactive). Full MS scan followed by data-dependent MS/MS fragmentation of top ions.

- Data Processing: Database search (MaxQuant, Proteome Discoverer) against a proteome database. Quantify via label-free (LFQ) or isobaric labeling (TMT, iTRAQ) methods.
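The digestion and database-search steps both rely on trypsin's cleavage specificity. A minimal in silico sketch of the classic rule (cleave C-terminal to K or R, but not before P) is shown below. It is a simplification: database search tools let the user configure the enzyme rule and the allowed number of missed cleavages, and the function name, missed-cleavage handling, and minimum peptide length of 6 here are illustrative assumptions.

```python
import re

# Cleavage site: after K or R, unless the next residue is P (classic trypsin rule).
TRYPSIN_SITE = re.compile(r"(?<=[KR])(?!P)")


def tryptic_digest(protein: str, missed_cleavages: int = 0, min_len: int = 6) -> list:
    """Return peptides from an in silico tryptic digest of a protein sequence."""
    fragments = [f for f in TRYPSIN_SITE.split(protein) if f]  # drop empty tail after a final K/R
    peptides = []
    for i in range(len(fragments)):
        # Join up to `missed_cleavages` additional consecutive fragments starting at i.
        for j in range(i, min(i + missed_cleavages + 1, len(fragments))):
            peptide = "".join(fragments[i:j + 1])
            if len(peptide) >= min_len:
                peptides.append(peptide)
    return peptides


seq = "PEPTIDEK" + "AAAAAARPGGGGGGK" + "LLLLLLL"  # toy sequence; the R before P is not cleaved
print(tryptic_digest(seq))
# ['PEPTIDEK', 'AAAAAARPGGGGGGK', 'LLLLLLL']
```

Allowing one missed cleavage (`missed_cleavages=1`) adds the two joined peptides, which mirrors why search engines expand the candidate peptide space when incomplete digestion is permitted.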

Metabolomics

Metabolomics identifies and quantifies small-molecule metabolites (<1.5 kDa) within a biological system. It represents the ultimate downstream readout of cellular processes and is highly sensitive to phenotypic changes.

Key Objectives in Translational Medicine:

- Reveal metabolic pathway perturbations in disease (e.g., Warburg effect in cancer).

- Identify metabolic biomarkers for rapid diagnostics.

- Understand drug metabolism and pharmacometabolomics.

Experimental Protocol: Untargeted Metabolomics by LC-MS

- Metabolite Extraction: Use cold methanol/water/chloroform to quench metabolism and extract metabolites.

- Chromatography: Separate metabolites on HILIC (polar) or C18 (non-polar) columns.

- MS Analysis: Analyze using high-resolution MS (e.g., Q-TOF) in both positive and negative ionization modes.

- Data Analysis: Process raw data (XCMS, MS-DIAL) for peak picking, alignment, and annotation against spectral libraries (HMDB, METLIN).
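Annotation against HMDB or METLIN typically starts with an accurate-mass match within a ppm tolerance (Protocol 2.2 uses 5 ppm). The sketch below, assuming singly protonated [M+H]+ ions, shows why high resolution matters: glutamine and lysine share a nominal mass of 147 but differ by roughly 250 ppm at [M+H]+. The two-entry library is a hypothetical stand-in for a real database, with m/z values computed from monoisotopic masses plus one proton; the function names are also hypothetical.

```python
def ppm_error(observed_mz: float, theoretical_mz: float) -> float:
    """Mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def annotate(observed_mz: float, library: dict, tol_ppm: float = 5.0) -> list:
    """Return (name, ppm error) for library entries within tol_ppm of observed_mz."""
    hits = []
    for name, mz in library.items():
        err = ppm_error(observed_mz, mz)
        if abs(err) <= tol_ppm:
            hits.append((name, round(err, 2)))
    return hits


# Hypothetical mini-library: [M+H]+ m/z = monoisotopic mass + 1.007276 (proton)
LIBRARY = {
    "glutamine [M+H]+": 147.0764,
    "lysine [M+H]+": 147.1128,
}

print(annotate(147.0766, LIBRARY))  # only glutamine falls within 5 ppm
```

An accurate-mass hit is only a candidate annotation; MS/MS spectral matching, as noted in the protocol, is what raises confidence in the identity.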

Comparative Analysis of Core Omics Layers

Table 1: Core Omics Layers - A Comparative Summary

| Layer | Molecule Class | Key Technology | Temporal Dynamics | Primary Translational Output |
|---|---|---|---|---|
| Genomics | DNA (Variants, Modifications) | NGS (WGS, WES) | Largely static (somatic changes accumulate over a lifetime) | Risk prediction, Pharmacogenomics, Target ID |
| Transcriptomics | RNA (mRNA, ncRNA) | RNA-Seq, Microarrays | Fast (Minutes-Hours) | Pathway Activity, Expression Signatures, Subtyping |
| Proteomics | Proteins & PTMs | LC-MS/MS, Arrays | Medium (Hours-Days) | Functional Effectors, Biomarkers, Drug Targets |
| Metabolomics | Metabolites | LC/GC-MS, NMR | Very Fast (Seconds-Minutes) | Functional Phenotype, Diagnostic Biomarkers |

Table 2: Multi-Omics Integration Objectives in Translational Research

| Integration Type | Integration Objective | Example Application |
|---|---|---|
| Genome + Transcriptome | Identify functional regulatory variants (eQTLs) | Linking a SNP to altered oncogene expression in cancer. |
| Transcriptome + Proteome | Assess post-transcriptional regulation & correlation | Discordant mRNA-protein levels revealing translational control. |
| Proteome + Metabolome | Map active enzymatic pathways | Elevated kinase abundance together with accumulation of downstream metabolites, indicating pathway activation. |
| All Layers | Construct predictive models of disease phenotype | Molecular subtyping of patients for targeted therapy selection. |
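The genome + transcriptome row can be made concrete with the simplest form of an eQTL test: a linear regression of a gene's expression on genotype dosage (0, 1, or 2 copies of the alternate allele). The sketch below uses simulated data and SciPy's `linregress`; it is illustrative only, since production eQTL pipelines also adjust for covariates such as ancestry principal components and correct for testing many SNP-gene pairs. The function name is hypothetical.

```python
import numpy as np
from scipy import stats


def eqtl_test(dosage: np.ndarray, expression: np.ndarray):
    """Additive-model eQTL test: expression ~ slope * dosage + intercept."""
    res = stats.linregress(dosage, expression)
    return res.slope, res.pvalue


rng = np.random.default_rng(1)
dosage = rng.integers(0, 3, size=500)               # simulated genotypes: 0, 1, 2
expression = 0.5 * dosage + rng.normal(0, 1, 500)   # simulated effect of 0.5 per allele
slope, pvalue = eqtl_test(dosage, expression)
print(f"slope={slope:.2f}, p={pvalue:.1e}")
```

The slope is the estimated change in expression per copy of the alternate allele, which is the effect size typically reported for an eQTL.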

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for Core Omics Workflows

| Reagent / Kit | Function | Primary Omics Application |
|---|---|---|
| TRIzol / QIAzol | Monophasic solution for simultaneous RNA/DNA/protein extraction from a single sample. | Multi-omics sample splitting for Transcriptomics, Genomics, Proteomics. |
| Poly(A) Magnetic Beads | Enrichment of messenger RNA via poly-A tail binding for RNA-Seq library prep. | Transcriptomics (RNA-Seq). |
| Nextera / TruSeq DNA Library Prep Kit | Library construction for next-generation sequencing: transposase-based fragmentation and adapter tagging (Nextera) or fragmentation followed by adapter ligation (TruSeq). | Genomics (WGS, WES). |
| Trypsin (Sequencing Grade) | Proteolytic enzyme that cleaves proteins C-terminal to lysine/arginine for bottom-up proteomics. | Proteomics (sample digestion for LC-MS/MS). |
| TMTpro 16plex Isobaric Labels | Chemical tags for multiplexed quantitative comparison of up to 16 samples in one MS run. | Proteomics (high-throughput quantification). |
| Methanol (LC-MS Grade) | Used for metabolite extraction; quenches enzymatic activity to preserve the metabolic profile. | Metabolomics (sample preparation). |
| C18 Solid-Phase Extraction Columns | Purification and desalting of peptides or metabolites prior to LC-MS analysis. | Proteomics & Metabolomics. |
| ERCC RNA Spike-In Mix | Synthetic RNA controls added to samples for normalization and quality control. | Transcriptomics (QC for RNA-Seq). |

Within the overarching thesis on multi-omics in translational medicine, this objective addresses the fundamental challenge of moving beyond descriptive disease classifications. It posits that integrating genome, epigenome, transcriptome, proteome, and metabolome data is essential to deconvolute the multifactorial origins and diverse clinical manifestations of complex diseases (e.g., cancer, neurodegenerative, metabolic, and autoimmune disorders). This guide details the technical frameworks for achieving this objective.

Core Conceptual Framework and Current Data Landscape

Complex diseases arise from dynamic interactions between genetic predisposition, environmental exposures, and lifestyle factors, leading to significant molecular and clinical heterogeneity. The following table summarizes key quantitative insights from recent multi-omics studies.

Table 1: Quantitative Insights from Recent Multi-Omics Studies in Complex Diseases

| Disease Area | Typical Study Sample Size | Key Heterogeneity Metric Identified | Estimated No. of Molecular Subtypes | Primary Omics Layers Integrated |
|---|---|---|---|---|
| Colorectal Cancer | 500-1000 patients | Consensus Molecular Subtypes (CMS) | 4 | Genomics, Transcriptomics, Epigenomics |
| Alzheimer's Disease | 300-800 post-mortem brains | Tauopathy and neuroinflammation scores | 3-5 distinct trajectories | Transcriptomics, Proteomics, Metabolomics |
| Type 2 Diabetes | 1000-5000 participants (cohort) | Insulin secretion vs. resistance clusters | 5+ endotypes | Genomics, Metabolomics, Proteomics |
| Rheumatoid Arthritis | 200-500 synovial biopsies | Myeloid- vs. lymphoid-rich pathotypes | 3-4 | Single-cell Transcriptomics, Proteomics, Cytometry |
| Major Depressive Disorder | 500-1000 patients | Inflammation-associated metabolic profiles | 2-3 biotypes | Metabolomics, Transcriptomics, Genomics |

Detailed Experimental Methodologies

Protocol 1: Integrated Multi-Omics Cohort Profiling Workflow

This protocol outlines a standard pipeline for generating and integrating data from a patient cohort.

- Sample Collection & Biobanking: Collect matched primary tissue (e.g., tumor biopsy), biofluids (e.g., blood-derived plasma/serum, saliva), and peripheral blood mononuclear cells (PBMCs). Aliquot and store at -80°C or in liquid nitrogen.

- DNA Extraction & Whole Genome Sequencing (WGS): Use a kit-based method (e.g., Qiagen DNeasy) for genomic DNA. Perform WGS (30-40x coverage) to identify single nucleotide variants (SNVs), copy number variations (CNVs), and structural variants (SVs).

- DNA Methylation Profiling: Treat extracted DNA with bisulfite conversion (e.g., using the EZ DNA Methylation Kit). Hybridize to an Illumina EPIC array or perform whole-genome bisulfite sequencing (WGBS).

- RNA Extraction & Sequencing: Extract total RNA (e.g., using TRIzol reagent). Perform poly-A selection and strand-specific library prep. Sequence (RNA-seq) to a depth of 50-100 million paired-end reads.

- Proteomics (LC-MS/MS): Homogenize tissue or lyse cells. Digest proteins with trypsin. Desalt peptides and analyze via liquid chromatography-tandem mass spectrometry (LC-MS/MS) on a high-resolution instrument (e.g., Orbitrap). Use isobaric labeling (TMT) for multiplexed quantification.

- Metabolomics (NMR & LC-MS): Extract metabolites from plasma/tissue using methanol/water/chloroform. Analyze via: a) Nuclear Magnetic Resonance (NMR) for broad quantification, and b) targeted LC-MS for specific pathways.
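The 30-40x WGS coverage target translates into sequencing output through the standard relationship C = N × L / G (mean coverage = number of reads × read length / genome size). The helper below is a sketch that assumes a ~3.1 Gb human genome and 2 × 150 bp paired-end reads (both assumptions, not protocol parameters) and estimates the read pairs needed before accounting for duplicate or unmapped reads. The function name is hypothetical.

```python
def read_pairs_for_coverage(target_x: float,
                            genome_bp: float = 3.1e9,
                            read_len: int = 150,
                            paired: bool = True) -> float:
    """Read pairs (or single reads) needed for a mean coverage of target_x.

    Rearranges C = N * L / G to N = C * G / L, where L is bases per fragment.
    Ignores duplicates, unmapped reads, and uneven coverage.
    """
    bases_per_fragment = read_len * (2 if paired else 1)
    return target_x * genome_bp / bases_per_fragment


for x in (30, 40):
    pairs = read_pairs_for_coverage(x)
    total_gb = pairs * 2 * 150 / 1e9  # total bases sequenced, in gigabases
    print(f"{x}x -> {pairs / 1e6:.0f} M read pairs ({total_gb:.0f} Gb)")
```

In practice the raw output is padded above this estimate, because duplicates and unmapped reads do not contribute usable coverage.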

Protocol 2: Single-Cell Multi-Omics for Cellular Heterogeneity

This protocol details the use of droplet-based single-cell RNA sequencing (scRNA-seq) with surface protein detection (CITE-seq).

- Tissue Dissociation: Prepare a single-cell suspension from fresh tissue using a validated enzymatic dissociation kit (e.g., Miltenyi Biotec gentleMACS).

- Cell Viability & Counting: Stain with Trypan Blue or Acridine Orange/Propidium Iodide. Use an automated cell counter. Aim for >90% viability.

- CITE-seq Antibody Staining: Conjugate antibodies against surface proteins (CD45, CD3, CD19, etc.) with unique oligonucleotide barcodes. Incubate live cells with the antibody cocktail for 30 min on ice. Wash thoroughly to remove unbound antibodies.

- Library Preparation (10x Genomics Platform): Load the antibody-stained cells, gel beads, and partitioning oil into a Chromium chip. Generate Gel Beads-in-emulsion (GEMs). Perform reverse transcription, cDNA amplification, and library construction per the manufacturer's protocol.

- Sequencing & Data Processing: Pool libraries and sequence on an Illumina NovaSeq. Use Cell Ranger (10x Genomics) for demultiplexing, alignment, and UMI counting. Use Seurat (R package) for downstream integration of RNA and ADT (antibody-derived tag) data, clustering, and differential expression.
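ADT counts from CITE-seq are usually normalized differently from RNA counts; Seurat offers a centered log-ratio (CLR) option for this. The NumPy sketch below implements a textbook CLR with a pseudocount of 1, assuming a cells x antibodies count matrix normalized within each cell. It is not Seurat's exact implementation, whose formula and margin settings differ in detail, and the function name and counts are hypothetical.

```python
import numpy as np


def clr_normalize(adt_counts: np.ndarray) -> np.ndarray:
    """Centered log-ratio transform, computed within each cell (row).

    clr(x)_k = log(x_k + 1) - mean_j log(x_j + 1); each row then sums to zero.
    """
    log_counts = np.log1p(adt_counts)
    return log_counts - log_counts.mean(axis=1, keepdims=True)


# Rows: cells; columns: hypothetical antibodies (e.g. CD3, CD19, CD45)
adt = np.array([[250, 3, 900],
                [4, 310, 850]])
print(clr_normalize(adt).round(2))
```

Expressing each antibody relative to the cell's own mean log count removes cell-to-cell differences in total ADT capture, which is the main motivation for CLR over simple library-size scaling.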

Protocol 3: Spatial Transcriptomics for Contextual Heterogeneity

Protocol for the 10x Genomics Visium platform.

- Fresh Frozen Tissue Sectioning: Embed tissue in Optimal Cutting Temperature (OCT) compound. Cryosection at 10µm thickness onto Visium slides. Store at -80°C.

- Fixation, Staining & Imaging: Fix sections with methanol. Stain with H&E and image at 20x magnification.

- Permeabilization Optimization: Perform a tissue optimization test to determine optimal permeabilization time for mRNA release.

- On-Slide Reverse Transcription: Permeabilize tissue to release mRNA, which binds to spatially barcoded primers on the slide. Synthesize cDNA.

- cDNA Harvesting & Library Prep: Denature and collect cDNA. Construct sequencing libraries via amplification and index addition.

- Data Alignment & Integration: Use Space Ranger for alignment to a reference genome and assignment of transcripts to spatial barcodes. Integrate with H&E image using Loupe Browser.

Visualizing the Analytical Workflow and Pathways

Diagram 1: Multi-Omics Integrative Analysis Pipeline

Diagram 2: Core Signaling Pathway in Cancer Heterogeneity

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents for Multi-Omics Studies in Disease Heterogeneity

| Reagent / Solution | Vendor Examples | Primary Function in Protocol |
|---|---|---|
| QIAGEN DNeasy Blood & Tissue Kit | QIAGEN | High-quality genomic DNA extraction from diverse sample types for WGS and methylation studies. |
| Illumina TruSeq DNA PCR-Free Library Prep Kit | Illumina | Preparation of high-complexity, unbiased genomic libraries for whole-genome sequencing. |
| EZ-96 DNA Methylation-Gold Kit | Zymo Research | Reliable bisulfite conversion of DNA for downstream methylation array or sequencing analysis. |
| TRIzol Reagent | Thermo Fisher Scientific | Simultaneous extraction of total RNA, DNA, and proteins from a single sample. |
| 10x Genomics Chromium Next GEM Single Cell 3' Kit v3.1 | 10x Genomics | Droplet-based partitioning and barcoding for single-cell RNA-seq library construction. |
| Bio-Plex Pro Human Cytokine Screening Panel | Bio-Rad | Multiplex immunoassay for quantifying up to 48 cytokines/chemokines in serum/lysates (proteomic phenotyping). |
| TMTpro 16plex Label Reagent Set | Thermo Fisher Scientific | Isobaric labeling for multiplexed quantitative proteomics using LC-MS/MS. |
| C18 Solid Phase Extraction (SPE) Plates | Waters Corporation | Clean-up and concentration of metabolites prior to LC-MS analysis in metabolomics workflows. |
| CellTrace Violet Cell Proliferation Kit | Thermo Fisher Scientific | Fluorescent dye to track cell division and proliferation in functional assays of heterogeneous populations. |
| Recombinant Human TGF-β1 Protein | R&D Systems | Key cytokine for in vitro stimulation experiments to model tumor microenvironment signaling. |

Within the multi-omics thesis framework, this objective is the translational engine. It leverages integrated genomic, transcriptomic, proteomic, and metabolomic data to move from correlative observations to causative biological insights. The goal is to identify measurable indicators (biomarkers) of disease state, progression, or treatment response, and to pinpoint molecular entities (targets) whose modulation is expected to have therapeutic benefit. This bridges the gap between descriptive omics and clinical application.

Foundational Strategies and Quantitative Landscape

The discovery pipeline employs both hypothesis-driven and unbiased screening approaches. Key strategies include differential expression analysis, network biology, and machine learning-based pattern recognition.

Table 1: Core Multi-Omics Strategies for Biomarker/Target Discovery

| Strategy | Primary Omics Layer | Key Output | Typical Validation Rate* |
|---|---|---|---|
| Genome-Wide Association Study (GWAS) | Genomics | Susceptibility loci, SNP associations | < 5% (to functional target) |
| Differential Expression (Bulk/Single-Cell) | Transcriptomics/Proteomics | Up/Down-regulated genes/proteins | 10-20% |
| Phosphoproteomic Profiling | Proteomics | Dysregulated signaling kinases & pathways | 15-25% |
| Metabolomic Phenotyping | Metabolomics | Disease-associated metabolic fluxes & biomarkers | 10-15% |
| Multi-Omics Data Integration | All layers | Prioritized, context-specific candidate networks | 20-30% (higher confidence) |

*Validation rate refers to the approximate percentage of initial candidates that are subsequently confirmed in independent cohorts or functional models.

Table 2: Current High-Throughput Platforms Enabling Discovery

| Platform Technology | Throughput Scale | Primary Application in Discovery |
|---|---|---|
| Next-Generation Sequencing (NGS) | 1-1000s of genomes/transcriptomes | Mutation calling, expression QTLs, novel isoforms |
| Mass Spectrometry (LC-MS/MS) | 1000s of proteins across 100s of samples | Quantitative proteomics, post-translational modifications |
| High-Resolution Metabolomics (HRM) | 100s-1000s of metabolites | Mapping metabolic pathway dysregulation |
| Multiplex Immunoassays (e.g., Olink) | 10s-1000s of proteins from µL sample volumes | Validation of proteomic candidates in large cohorts |
| Spatial Transcriptomics/Proteomics | Multi-cell spots to single-cell resolution in tissue context (platform-dependent) | Identifying biomarker localization and tumor microenvironments |

Detailed Experimental Protocols

Protocol 2.1: Integrated Proteogenomic Analysis for Target Identification

This protocol outlines the steps to identify novel therapeutic targets by correlating genomic alterations with proteomic/phosphoproteomic changes.

1. Sample Preparation:

- Tissue/Cell Lysate: Snap-frozen tissue or cell pellets are homogenized in lysis buffer (8M Urea, 75mM NaCl, 50mM Tris pH 8.2, protease/phosphatase inhibitors). Protein concentration is determined by BCA assay.

- Genomic DNA/RNA Extraction: Parallel aliquots are used for DNA (for WES/WGS) and RNA (for RNA-seq) extraction using silica-column based kits.

2. Multi-Omics Data Generation:

- Whole Exome Sequencing (WES): Libraries prepared using hybrid capture (e.g., Illumina TruSeq). Sequence on NovaSeq, aiming for >100x mean coverage.

- RNA Sequencing: Poly-A selected libraries sequenced on Illumina platform for >50 million paired-end reads per sample.

- Proteomics: 100µg protein per sample is reduced, alkylated, and digested with trypsin. Peptides are labeled with TMTpro 16-plex, fractionated by basic pH reversed-phase HPLC. LC-MS/MS is performed on an Orbitrap Eclipse Tribrid MS with a 180min gradient. Phosphopeptides are enriched using Fe-NTA magnetic beads prior to labeling.

3. Data Integration & Analysis:

- Genomic Analysis: Mutations (SNVs/Indels) called using GATK. Copy number variations (CNVs) derived from WES using tools like FACETS.

- Transcriptomic Analysis: Differential expression (DE) analysis using DESeq2. Fusion genes detected with STAR-Fusion.

- Proteomic Analysis: Protein/phosphosite identification and quantitation using Sequest-HT in Proteome Discoverer 3.0. Normalize to median protein abundance.

- Integration: Use tools like cBioPortal or custom R scripts (ggplot2, pheatmap) to:

  - Overlay mutations/CNVs on protein abundance/phosphorylation.

  - Identify cis- (genomic alteration correlates with protein change in same gene) and trans- (e.g., kinase mutation correlates with phosphosite changes in downstream substrates) effects.

  - Perform pathway enrichment (Reactome, KEGG) on concordantly altered genes/proteins.
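Two steps from this protocol, median normalization of log2 protein abundances and the cis-effect test (does a gene's copy number track its own protein level?), can be sketched as follows. Assumptions: genes x samples log2 matrices whose rows are already matched gene-for-gene between the CNV and protein data, and Spearman correlation as the cis statistic. The data are simulated, and the function names are hypothetical rather than the API of Proteome Discoverer or cBioPortal.

```python
import numpy as np
from scipy import stats


def median_normalize(log2_abundance: np.ndarray) -> np.ndarray:
    """Center each sample (column) on its median, then restore the overall median."""
    col_medians = np.nanmedian(log2_abundance, axis=0)
    return log2_abundance - col_medians + np.nanmedian(col_medians)


def cis_correlation(cnv: np.ndarray, protein: np.ndarray) -> np.ndarray:
    """Spearman rho between copy number and protein abundance of the same gene (row)."""
    rhos = np.empty(cnv.shape[0])
    for i in range(cnv.shape[0]):
        rho, _pval = stats.spearmanr(cnv[i], protein[i])
        rhos[i] = rho
    return rhos


rng = np.random.default_rng(7)
n_genes, n_samples = 50, 30
cnv = rng.normal(size=(n_genes, n_samples))        # simulated log2 copy ratios
loading_bias = rng.normal(0, 1, size=n_samples)    # simulated per-sample loading offsets
protein = 20 + 0.8 * cnv + rng.normal(0, 0.5, size=(n_genes, n_samples)) + loading_bias
protein_norm = median_normalize(protein)
print("median cis rho:", np.median(cis_correlation(cnv, protein_norm)).round(2))
```

Normalizing first matters here: a per-sample loading offset shifts every protein in that sample and would otherwise add noise to each gene's correlation across samples.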

Protocol 2.2: Serum Biomarker Discovery using Untargeted Metabolomics

This protocol details the process for discovering circulating metabolic biomarkers.

1. Serum Sample Collection and Pre-processing:

- Collect blood in serum separator tubes, allow to clot for 30 min, and centrifuge at 2,000 × g for 10 min. Aliquot and store at -80°C.

- Thaw samples on ice. Precipitate proteins by adding 300 µL cold methanol to 100 µL serum. Vortex, incubate at -20°C for 1 h, and centrifuge at 15,000 × g for 15 min at 4°C.

- Transfer supernatant to a fresh vial, dry in a speed vacuum concentrator.

2. LC-HRMS Analysis:

- Reconstitution: Reconstitute dried extract in 100µL 50:50 water:acetonitrile.

- Chromatography: Use a HILIC column (e.g., Waters BEH Amide, 2.1x150mm, 1.7µm). Mobile phase A: 10mM ammonium acetate in 95:5 water:acetonitrile (pH 9.0). B: 10mM ammonium acetate in 95:5 acetonitrile:water. Gradient: 0-2min 100% B, 2-17min to 70% B, 17-20min 70% B.

- Mass Spectrometry: Analyze on a Q-TOF MS (e.g., Agilent 6546) in both positive and negative ESI modes. Data acquired in full scan mode (m/z 50-1200).

3. Data Processing and Statistical Analysis:

- Convert raw files to mzML using MSConvert (ProteoWizard).

- Perform peak picking, alignment, and annotation using XCMS and CAMERA in R.

- Annotate metabolites by matching accurate mass (within 5 ppm) and MS/MS spectra (if available) against databases (HMDB, METLIN).

- Use multivariate statistics (PLS-DA via mixOmics package) to identify discriminatory features. Apply univariate tests (Wilcoxon) with FDR correction (Benjamini-Hochberg). Calculate AUC for top candidates.
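The univariate step (Wilcoxon rank-sum tests, Benjamini-Hochberg FDR correction, and AUC for top candidates) can be sketched as below. Assumptions: each feature is tested independently with SciPy's `mannwhitneyu` (equivalent to the Wilcoxon rank-sum test), the Benjamini-Hochberg adjustment is written out explicitly rather than taken from a library, and AUC is computed from ranks as the probability that a case value exceeds a control value. The data are simulated and the function names are hypothetical.

```python
import numpy as np
from scipy import stats


def benjamini_hochberg(pvals) -> np.ndarray:
    """Benjamini-Hochberg adjusted p-values (q-values), returned in input order."""
    p = np.asarray(pvals, dtype=float)
    n = p.size
    order = np.argsort(p)
    scaled = p[order] * n / np.arange(1, n + 1)          # p_(i) * n / i
    scaled = np.minimum.accumulate(scaled[::-1])[::-1]   # enforce monotone q-values
    q = np.empty(n)
    q[order] = np.minimum(scaled, 1.0)
    return q


def auc(cases, controls) -> float:
    """Rank-based AUC: P(case > control), counting ties as 1/2."""
    ranks = stats.rankdata(np.concatenate([cases, controls]))
    n1, n2 = len(cases), len(controls)
    return (ranks[:n1].sum() - n1 * (n1 + 1) / 2) / (n1 * n2)


rng = np.random.default_rng(3)
cases = rng.normal(size=(40, 100))     # 40 case sera x 100 metabolite features
controls = rng.normal(size=(40, 100))
cases[:, :5] += 1.5                    # simulate 5 truly discriminatory features
pvals = [stats.mannwhitneyu(cases[:, k], controls[:, k], alternative="two-sided").pvalue
         for k in range(100)]
qvals = benjamini_hochberg(pvals)
top = np.argsort(qvals)[:5]
print([(int(k), round(float(qvals[k]), 4), round(auc(cases[:, k], controls[:, k]), 2)) for k in top])
```

The rank-based AUC equals the Mann-Whitney U statistic divided by n1 × n2, so the same ranks that drive the test also give the discrimination metric.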

Visualizing the Discovery Pipeline

Discovery & Validation Workflow

Mechanism to Biomarker & Target

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for Biomarker and Target Discovery Experiments

| Reagent/Material | Supplier Examples | Function in Discovery Pipeline |
|---|---|---|
| TMTpro 16-plex Label Reagents | Thermo Fisher Scientific | Isobaric tags for multiplexed, quantitative comparison of up to 16 proteomic samples in a single MS run, reducing batch effects. |
| Fe-NTA Magnetic Beads | Thermo Fisher, Qiagen | High-affinity enrichment of phosphopeptides from complex digests for phosphoproteomic studies. |
| SMARTer Single-Cell RNA-seq Kits | Takara Bio | Enable full-length transcriptome amplification from single cells for discovering cell-type-specific biomarkers. |
| Olink Target 96/384 Panels | Olink Proteomics | Validate protein biomarker candidates using highly specific, multiplexed immunoassays requiring minimal sample volume. |
| CRISPR-Cas9 Knockout Libraries | Horizon Discovery | Perform genome-wide or pathway-focused loss-of-function screens to validate the essentiality of candidate therapeutic targets. |
| Phenotypic Screening Assay Kits (e.g., CellTiter-Glo) | Promega | Measure cell viability/proliferation in high-throughput format during functional validation of targets. |
| Patient-Derived Xenograft (PDX) Models | Jackson Laboratory, Champions Oncology | In vivo validation of biomarkers and therapeutic targets in a clinically relevant human tissue microenvironment. |
| Stable Isotope-Labeled Metabolites (e.g., 13C-Glucose) | Cambridge Isotope Labs | Enable flux analysis to map dynamic metabolic pathway activity and identify druggable metabolic dependencies. |

Integrative Data as a Hypothesis-Generating Engine

In the context of translational medicine, the primary objective of multi-omics studies is to bridge the gap between molecular discoveries and clinical application, thereby accelerating the development of diagnostics, prognostics, and therapeutics. Integrative data analysis serves as the critical engine for generating actionable biological hypotheses from these complex datasets, moving beyond single-layer descriptions to uncover the multi-scale mechanisms of disease.

The Hypothesis-Generation Framework in Translational Multi-Omics

The traditional hypothesis-driven research model is increasingly complemented by data-driven discovery in translational medicine. Integrative analysis of genomics, transcriptomics, proteomics, metabolomics, and epigenomics data generates novel hypotheses about disease drivers, therapeutic targets, and biomarker signatures. This process typically follows a structured workflow:

- Data Generation & Curation: Acquisition of multiple omics data types from well-phenotyped clinical cohorts.

- Pre-processing & Quality Control: Normalization, batch correction, and imputation for each data layer.

- Multi-Omics Integration: Application of statistical and machine learning models to fuse datasets.

- Pattern & Network Discovery: Identification of cross-omic modules, pathways, and interaction networks associated with phenotypes.

- Prioritization & Hypothesis Formulation: Ranking of candidate drivers, biomarkers, or mechanisms for experimental validation.
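The pre-processing step can be illustrated for a single data layer. The sketch below assumes a samples-by-features NumPy array with `np.nan` marking missing values. The function name `preprocess_layer` and the missingness cutoff are illustrative choices, not a standard. Batch correction (e.g., ComBat) is deliberately left out.

```python
import numpy as np

def preprocess_layer(X, max_missing=0.2):
    """Per-layer QC sketch: drop sparse features, median-impute, then z-score each feature.

    X is a samples x features array with np.nan marking missing values.
    """
    missing_frac = np.isnan(X).mean(axis=0)
    X = X[:, missing_frac <= max_missing]          # filter features with too many NaNs
    medians = np.nanmedian(X, axis=0)
    X = np.where(np.isnan(X), medians, X)          # median imputation per feature
    sd = X.std(axis=0, ddof=1)
    keep = sd > 0                                  # constant features carry no signal
    X = X[:, keep]
    return (X - X.mean(axis=0)) / sd[keep]         # z-score so layers share a common scale

# Toy layer: 3 samples x 4 features (hypothetical values).
layer = np.array([[1.0, np.nan, 5.0, np.nan],
                  [2.0, 4.0,    5.0, np.nan],
                  [3.0, 6.0,    5.0, 1.0]])
Z = preprocess_layer(layer, max_missing=0.4)
```

With `max_missing=0.4`, the last feature (two of three values missing) is dropped, the constant third feature is removed, and the remaining two are imputed and standardized.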

Core Methodologies for Integrative Analysis

Similarity-Based Integration

Methods like Multiple Kernel Learning (MKL) combine different omics data types by constructing separate similarity matrices (kernels) for each modality and then fusing them to predict a clinical outcome.

Protocol: Multiple Kernel Learning for Patient Stratification

- Input: Matrices for mRNA expression, DNA methylation, and protein abundance from tumor samples (n=200) with associated progression-free survival (PFS) data.

- Kernel Construction: Generate Gaussian kernels for each omics data type, optimizing the bandwidth parameter via cross-validation.

- Kernel Fusion: Employ a weighted linear combination, e.g., $K_{\text{combined}} = w_1 K_{\text{mRNA}} + w_2 K_{\text{methyl}} + w_3 K_{\text{protein}}$. Use non-negative weights so that the fused matrix remains a valid (positive semidefinite) kernel. Weights can be fixed or optimized.

- Model Training: Use the combined kernel with a kernel-based algorithm (e.g., Support Vector Regression for continuous outcomes, Cox regression for survival) to predict PFS.

- Output: A predictive model and the contribution weights of each omics layer, highlighting which data type is most informative for the phenotype. Patients can be clustered based on the fused kernel similarity to identify novel subtypes.
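The kernel construction and fusion steps can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions:

- The weights are fixed by hand rather than optimized.
- `gamma = 1 / n_features` is a common default, whereas the protocol tunes the bandwidth by cross-validation.
- Kernel ridge regression is swapped in for SVR/Cox for brevity. It ignores censoring, so it is not appropriate for real PFS data.

All function names and the simulated data are hypothetical.

```python
import numpy as np

def gaussian_kernel(X, gamma):
    """RBF kernel K[i, j] = exp(-gamma * ||x_i - x_j||^2) over the rows (patients) of X."""
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    return np.exp(-gamma * np.maximum(d2, 0.0))  # clip tiny negatives from rounding

def fuse_kernels(kernels, weights):
    """Weighted sum of kernels; weights must be non-negative and are rescaled to sum to 1."""
    w = np.asarray(weights, dtype=float)
    if np.any(w < 0):
        raise ValueError("kernel weights must be non-negative")
    w = w / w.sum()
    return sum(wi * K for wi, K in zip(w, kernels))

def kernel_ridge_predict(K, y, train_idx, test_idx, lam=1.0):
    """Fit kernel ridge regression on train_idx and predict outcomes for test_idx."""
    K_tr = K[np.ix_(train_idx, train_idx)]
    alpha = np.linalg.solve(K_tr + lam * np.eye(len(train_idx)), y[train_idx])
    return K[np.ix_(test_idx, train_idx)] @ alpha

# Simulated stand-ins for mRNA, methylation and protein matrices (patients x features).
rng = np.random.default_rng(0)
n = 40
views = {"mRNA": rng.normal(size=(n, 50)),
         "methyl": rng.normal(size=(n, 30)),
         "protein": rng.normal(size=(n, 20))}
y = rng.normal(size=n)  # placeholder continuous outcome

kernels = [gaussian_kernel(X, gamma=1.0 / X.shape[1]) for X in views.values()]
K_combined = fuse_kernels(kernels, weights=[0.5, 0.3, 0.2])
train, test = np.arange(30), np.arange(30, n)
pred = kernel_ridge_predict(K_combined, y, train, test, lam=1.0)
```

Because every Gaussian kernel has ones on its diagonal and the weights sum to 1, the fused kernel also has a unit diagonal. This keeps the layers on a comparable scale before weighting.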

Matrix Factorization-Based Integration

Methods such as Multi-Omics Factor Analysis (MOFA) decompose multiple data matrices into a set of common latent factors that capture the shared variance across omics types.

Protocol: MOFA for Identifying Latent Biological Processes

- Input: Centered and scaled matrices for gene expression, chromatin accessibility (ATAC-seq), and metabolite levels from a cohort of rheumatoid arthritis synovial tissue biopsies (n=150).

- Model Setup: Specify the number of factors (e.g., 10-15). Keep the default sparsity priors, which encourage each factor to load on a small subset of features.

- Training: Fit the model by variational inference until the evidence lower bound (ELBO) converges. MOFA+ also offers stochastic variational inference, which mainly pays off for very large cohorts.

- Interpretation: Correlate factors with clinical metadata (e.g., disease activity score). Visualize factor loadings to identify which genes, genomic regions, and metabolites define each latent process (e.g., "Factor 1: Inflammatory Response").

- Hypothesis Output: Formulate a hypothesis such as: "Latent Factor 3, characterized by high loadings on GLUT1 expression and lactate abundance, represents a hypoxic metabolic program that correlates with severe radiographic progression."
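The interpretation step can be illustrated without the MOFA software itself. The sketch below is a deliberately simplified stand-in, not MOFA. It runs a joint SVD on column-standardized, concatenated views, with each view down-weighted by the square root of its feature count. This captures shared linear variance but has none of MOFA's view-specific likelihoods or sparsity priors. Factors are then correlated with a clinical score, and loadings are inspected per view.

The data are simulated: a hidden "program" drives 10 genes, 5 metabolites, and a disease-activity score. All function and variable names are hypothetical.

```python
import numpy as np

def joint_svd_factors(views, n_factors):
    """Simplified MOFA stand-in: SVD of standardised, concatenated views.

    Each view is divided by sqrt(n_features) so that large views do not dominate.
    Returns per-sample factor scores and a dict of per-view loading matrices.
    """
    blocks, sizes = [], []
    for X in views.values():
        Z = (X - X.mean(axis=0)) / X.std(axis=0)
        blocks.append(Z / np.sqrt(X.shape[1]))
        sizes.append(X.shape[1])
    U, S, Vt = np.linalg.svd(np.hstack(blocks), full_matrices=False)
    scores = U[:, :n_factors] * S[:n_factors]
    splits = np.cumsum(sizes)[:-1]
    loadings = dict(zip(views, np.split(Vt[:n_factors].T, splits, axis=0)))
    return scores, loadings

def factor_clinical_correlation(scores, clinical):
    """Pearson correlation of each factor with one clinical covariate."""
    return np.array([np.corrcoef(scores[:, k], clinical)[0, 1]
                     for k in range(scores.shape[1])])

rng = np.random.default_rng(1)
n = 150
program = rng.normal(size=n)                      # simulated latent biological process
rna = rng.normal(size=(n, 200)); rna[:, :10] += 3 * program[:, None]
atac = rng.normal(size=(n, 300))
metab = rng.normal(size=(n, 40)); metab[:, :5] += 3 * program[:, None]
das = program + rng.normal(scale=0.3, size=n)     # simulated disease activity score

scores, loadings = joint_svd_factors({"rna": rna, "atac": atac, "metab": metab}, n_factors=5)
r = factor_clinical_correlation(scores, das)
top = int(np.argmax(np.abs(r)))
top_metabolites = np.argsort(-np.abs(loadings["metab"][:, top]))[:5]
```

Factor signs are arbitrary in SVD, as in MOFA. Interpretation therefore relies on the magnitude of correlations and loadings rather than on their sign.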

Network-Based Integration

This approach builds molecular interaction networks (e.g., protein-protein interaction) and overlays multi-omics differential data to identify dysregulated subnetworks or communities.

Protocol: Prize-Collecting Steiner Forest for Driver Subnetwork Discovery

- Input: 1) A comprehensive protein-protein interaction (PPI) network. 2) Differential expression p-values for genes (from RNA-seq) and differential phosphorylation scores for proteins (from phospho-proteomics) between drug-resistant vs. sensitive cancer cell lines.

- Node Prize Assignment: Convert p-values/scores to "prizes" for each molecular entity (e.g., $\text{prize} = -\log_{10}(p)$).

- Network Construction: Use the PPI network as the underlying graph, with edge costs typically based on confidence scores.

- Algorithm Execution: Run the Prize-Collecting Steiner Forest algorithm to find a forest (one or more trees) that maximizes the total collected prizes (highly dysregulated nodes) while minimizing the cost of included edges. Low-prize "Steiner" nodes may be included when they connect high-prize nodes.

- Output: A compact subnetwork of interacting genes and proteins hypothesized to be coordinately involved in driving drug resistance. Central hubs within this subnetwork become high-priority candidates for functional validation.
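The prize/cost trade-off can be made concrete on a toy graph. The sketch below solves the prize-collecting Steiner tree, the single-tree special case of the forest problem, by exhaustive search. For every connected node set, it computes the minimum spanning tree cost of the induced subgraph and keeps the set with the largest "prizes minus edge costs".

This is exponential in the number of nodes, so it only illustrates the objective. Real PPI networks require dedicated approximate solvers. The gene names, p-values, and edge costs are hypothetical.

```python
import itertools
import math

def prizes_from_pvalues(pvalues):
    """Node prize = -log10(p): the smaller the p-value, the larger the prize."""
    return {node: -math.log10(p) for node, p in pvalues.items()}

def mst_cost(nodes, edges):
    """Kruskal MST cost over the subgraph induced by `nodes`; None if it is disconnected."""
    parent = {n: n for n in nodes}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    total, used = 0.0, 0
    for (u, v), cost in sorted(edges.items(), key=lambda kv: kv[1]):
        if u in parent and v in parent:
            ru, rv = find(u), find(v)
            if ru != rv:
                parent[ru] = rv
                total += cost
                used += 1
    return total if used == len(parent) - 1 else None

def exhaustive_pcst(prizes, edges):
    """Brute-force prize-collecting Steiner tree: maximise sum(prizes) - sum(edge costs).

    Zero-prize nodes can enter the tree as Steiner (connector) nodes.
    """
    nodes = sorted({n for e in edges for n in e} | set(prizes))
    best_set, best_score = frozenset(), 0.0
    for k in range(1, len(nodes) + 1):
        for subset in itertools.combinations(nodes, k):
            cost = mst_cost(subset, edges)
            if cost is None:
                continue
            score = sum(prizes.get(n, 0.0) for n in subset) - cost
            if score > best_score:
                best_set, best_score = frozenset(subset), score
    return best_set, best_score

# Hypothetical differential p-values and PPI edge costs, for illustration only.
pvals = {"EGFR": 1e-6, "ERBB2": 1e-4, "AXL": 1e-5, "TP53": 0.5}
ppi = {("EGFR", "GRB2"): 1.0, ("GRB2", "AXL"): 1.0, ("EGFR", "ERBB2"): 0.5,
       ("TP53", "MDM2"): 1.0, ("MDM2", "EGFR"): 3.0}
tree, score = exhaustive_pcst(prizes_from_pvalues(pvals), ppi)
```

The selected tree includes GRB2 although it carries no prize, because it is the cheapest bridge between AXL and EGFR. TP53 is left out because its small prize does not pay for the edges needed to reach it.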

Table 1: Comparison of Key Multi-Omics Integration Methods

| Method | Category | Primary Use Case | Key Output | Example Tool/Package |
|---|---|---|---|---|
| Multiple Kernel Learning (MKL) | Similarity-based | Supervised prediction, patient stratification | Outcome prediction model, kernel weights | Pykernels, mixKernel |
| Multi-Omics Factor Analysis (MOFA) | Factorization | Unsupervised discovery of latent factors | Latent factors & loadings, data imputation | MOFA2 (R/Python) |
| Similarity Network Fusion (SNF) | Network-based | Patient clustering using multiple data types | Fused patient similarity network | SNFtool (R) |
| Integrative NMF (iNMF) | Factorization | Joint clustering across omics, biomarker identification | Consensus clusters, feature weights | LIGER (R) |
| Multi-omics Master Regulator Analysis | Network-based | Identifying upstream causal regulators | Master regulator protein activity | MARINa / VIPER |

Table 2: Quantitative Outcomes from Recent Integrative Multi-Omics Studies in Translational Medicine

| Disease Area | Omics Layers Integrated | Cohort Size (n) | Key Hypothesis Generated | Validation Outcome |
|---|---|---|---|---|
| Alzheimer's Disease | GWAS, Transcriptomics, Proteomics, Methylomics | Post-mortem brains: 1,200 | The SPI1 transcription factor orchestrates a microglial network influencing TREM2 expression and amyloid phagocytosis. | Confirmed in iPSC-derived microglial models; SPI1 reduction ameliorated pathology in vitro. |
| Triple-Negative Breast Cancer | WGS, RNA-seq, Methylomics, Histopathology | Patients: 465 | Recurrent RASAL2 silencing (methylation) activates a MAPK/MEK signaling circuit independent of KRAS mutations. | In vivo xenograft model showed sensitivity to MEK inhibitors in RASAL2-silenced tumors. |
| Severe COVID-19 | Single-cell RNA-seq, Proteomics (plasma), CyTOF | Blood samples: 128 | Monocyte inflammasome activation and cytosolic DNA sensing pathways converge to drive IL-1β/IL-18 release. | Blocking the AIM2 inflammasome axis reduced cytokine release in ex vivo whole-blood assays. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Multi-Omics Hypothesis Validation

| Item | Function in Validation | Example Vendor/Catalog | Key Consideration |
|---|---|---|---|
| CRISPR-Cas9 Knockout Kits | Functional validation of candidate driver genes identified from integrative networks. | Synthego (sgRNA kits), Horizon Discovery | Ensure high editing efficiency in your cell model; include multiple sgRNAs per target. |
| Phospho-Specific Antibodies | Confirm predicted activity states of signaling pathways (e.g., p-ERK, p-AKT). | Cell Signaling Technology, Abcam | Validate antibody specificity for the modified epitope via peptide competition or genetic knockout. |
| Recombinant Cytokines/Growth Factors | Perturb systems to test predicted network responses (e.g., add TNF-α to test NF-κB module). | PeproTech, R&D Systems | Use carrier-free versions at biologically relevant concentrations (pg/mL to ng/mL). |
| Activity-Based Protein Profiling (ABPP) Probes | Directly measure enzymatic activity changes predicted from metabolomic-proteomic integration. | ActivX, Merck | Requires specialized mass spectrometry readouts; use with appropriate vehicle controls. |
| LC-MS Grade Solvents & Columns | Essential for reproducible metabolomics and proteomics validation experiments. | Fisher Chemical, Waters Corp | Bulk solvents should be from a single lot; column chemistry must match the analyte class. |
| Stable Isotope Tracers (e.g., 13C-Glucose) | Trace metabolic fluxes through pathways highlighted by integrative analysis (e.g., glycolysis, TCA cycle). | Cambridge Isotope Laboratories | Purity (>99% 13C) is critical. Design time-course experiments to capture flux dynamics. |

Visualizing the Workflow and Pathways

Multi-omics Hypothesis Generation Workflow

Hypothetical Integrated Pathway from Multi-Omics

Blueprint for Integration: Methodologies and Direct Clinical Applications

Strategic Frameworks for Multi-Omics Study Design

The primary objective of multi-omics studies in translational medicine is to bridge the gap between molecular discoveries and clinical applications. This involves integrating disparate molecular data layers—genomics, transcriptomics, proteomics, metabolomics—to construct comprehensive biological networks that elucidate disease mechanisms, identify novel therapeutic targets, and discover predictive biomarkers for patient stratification.

Core Strategic Frameworks

Based on current literature, three predominant strategic frameworks guide multi-omics study design in translational research.

Table 1: Comparison of Primary Multi-Omics Study Frameworks

| Framework | Primary Objective | Key Advantage | Major Challenge | Typical Use Case in Translational Medicine |
|---|---|---|---|---|
| Horizontal Integration | Analyze multiple omics layers from the same set of biological samples. | Captures a simultaneous, systems-level snapshot of biological state. | High cost; complex data integration. | Biomarker discovery for disease classification. |
| Vertical Integration | Trace the flow of biological information from one molecular layer to the next (e.g., genome → transcriptome → proteome). | Elucidates causal mechanisms and regulatory relationships. | Requires sophisticated longitudinal or perturbation study designs. | Understanding drug mechanism of action or resistance. |
| Temporal/Dynamic Integration | Profile omics layers across multiple time points or in response to a perturbation. | Reveals dynamic relationships and the order of pathway activation, which supports causal inference. | Extremely resource-intensive; requires advanced computational modeling. | Monitoring treatment response or disease progression. |

Experimental Design Methodologies

Protocol for a Horizontal Integration Study (Biomarker Discovery)

Aim: To identify a multi-omics signature distinguishing responders from non-responders to a cancer immunotherapy.

- Cohort Selection: Because response is only known after treatment, select retrospectively from a treated cohort: 50 responders and 50 matched non-responders (N=100) with an available pre-treatment tumor biopsy (FFPE or fresh-frozen) and plasma.

- Sample Processing:

- DNA Sequencing (WES): Extract tumor and germline DNA. Perform Whole Exome Sequencing (Illumina NovaSeq) to identify somatic mutations and neoantigen load.

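- RNA Sequencing (Bulk): Extract total RNA. Perform poly-A selected RNA-seq (Illumina) for gene expression and immune cell deconvolution (using CIBERSORTx). For FFPE material, where RNA is fragmented, rRNA-depletion or exome-capture library preparation is generally preferred over poly-A selection.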

- Proteomics (Mass Spectrometry): From adjacent tissue, perform liquid chromatography-tandem mass spectrometry (LC-MS/MS) with TMT labeling for quantitative proteomics.

- Metabolomics (LC-MS): From plasma, perform untargeted LC-MS to profile global metabolites.

- Data Integration: Use multi-omics factor analysis (MOFA+) to identify latent factors that explain variance across all data types and correlate with clinical response.
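Because TMT labeling multiplexes a fixed number of samples per LC-MS/MS run, the 100 biopsies must be split across several plexes. Confounding plex with response status would bias the signature. The sketch below assumes a 16-channel kit (as in the TMTpro 16plex reagent listed in Table 2) with one channel per plex reserved for a pooled bridge/reference sample; that reservation is an assumption, not part of the protocol above. It shuffles each group and deals samples round-robin so that responders and non-responders are spread evenly. The function name is hypothetical.

```python
import math
import random

def allocate_tmt_plexes(sample_groups, channels=16, reference_channels=1, seed=0):
    """Assign samples to TMT plexes, balancing groups across plexes.

    sample_groups maps sample ID -> group label (e.g. "R" or "NR").
    `reference_channels` per plex are reserved for a pooled bridge sample.
    """
    per_plex = channels - reference_channels
    n_plexes = math.ceil(len(sample_groups) / per_plex)
    rng = random.Random(seed)
    order = []
    for group in sorted(set(sample_groups.values())):
        members = sorted(s for s, g in sample_groups.items() if g == group)
        rng.shuffle(members)            # randomise order within each group
        order.extend(members)
    plexes = [[] for _ in range(n_plexes)]
    for i, sample in enumerate(order):
        plexes[i % n_plexes].append(sample)  # round-robin deal keeps groups balanced
    return plexes

groups = {f"R{i:02d}": "R" for i in range(50)}
groups.update({f"NR{i:02d}": "NR" for i in range(50)})
plexes = allocate_tmt_plexes(groups)
```

With 15 sample channels per plex, 100 samples need 7 plexes. Round-robin dealing yields plexes of 14 or 15 samples, each with near-equal numbers of responders and non-responders.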

Protocol for a Vertical Integration Study (Mechanistic Elucidation)

Aim: To determine the functional impact of a genetic risk variant on a disease phenotype.

- Cohort with Genotype: Recruit carriers and non-carriers of the variant (N=30 each). Obtain primary cells (e.g., fibroblasts) or establish iPSC-derived cell lines.

- Perturbation: Use CRISPR/Cas9 to isogenically correct the variant in carrier-derived cells, creating a matched control.

- Multi-Omics Profiling: For both the uncorrected (variant-carrying) and corrected lines:

- ATAC-seq: Profile chromatin accessibility.

- RNA-seq: Profile gene expression.

- Phospho-proteomics: Enrich for phosphorylated peptides followed by LC-MS/MS to profile signaling pathway activity.

- Integration: Construct a regulatory network linking the variant locus (via ATAC-seq peaks) to differentially expressed genes (RNA-seq) and altered signaling pathways (phospho-proteomics).
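A first pass at linking differential ATAC-seq peaks to differentially expressed genes is often a simple distance rule. The sketch below assigns each peak to genes whose transcription start site (TSS) lies within a fixed window of the peak midpoint. It then keeps only links whose gene is also differentially expressed.

The ±50 kb window, the data layout, the function names, and the coordinates and gene names are all hypothetical. More refined approaches use peak-gene correlation or chromatin-conformation data.

```python
def link_peaks_to_genes(peaks, tss, window=50_000):
    """Assign each peak to genes whose TSS lies within +/- window bp of the peak midpoint.

    peaks: list of (chrom, start, end, peak_id); tss: dict gene -> (chrom, position).
    Returns dict peak_id -> sorted list of linked genes.
    """
    links = {}
    for chrom, start, end, pid in peaks:
        mid = (start + end) // 2
        links[pid] = sorted(g for g, (c, pos) in tss.items()
                            if c == chrom and abs(pos - mid) <= window)
    return links

def candidate_regulatory_links(peak_links, de_genes):
    """Keep peak-gene pairs where the linked gene is also differentially expressed."""
    return {pid: [g for g in genes if g in de_genes]
            for pid, genes in peak_links.items()
            if any(g in de_genes for g in genes)}

# Hypothetical differential peaks and TSS coordinates.
peaks = [("chr1", 1_000_000, 1_000_500, "peak1"), ("chr2", 5_000, 5_400, "peak2")]
tss = {"GENE_A": ("chr1", 1_030_000), "GENE_B": ("chr1", 1_200_000),
       "GENE_C": ("chr2", 60_000)}
links = link_peaks_to_genes(peaks, tss)
candidates = candidate_regulatory_links(links, de_genes={"GENE_A", "GENE_C"})
```

Here `peak1` links to GENE_A (about 30 kb away) but not to GENE_B, while `peak2` lies just outside the window of GENE_C and drops out.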

Visualizing Workflows and Pathways

Diagram 1: Horizontal multi-omics workflow for biomarker discovery.

Diagram 2: Vertical integration strategy to establish mechanism.

The Scientist's Toolkit: Key Reagent Solutions

Table 2: Essential Research Reagents for a Core Multi-Omics Study

| Category | Specific Reagent/Solution | Function in Multi-Omics Workflow |
|---|---|---|
| Nucleic Acid Isolation | AllPrep DNA/RNA/miRNA Universal Kit (Qiagen) | Simultaneous co-extraction of high-quality genomic DNA and total RNA from a single tissue sample, preserving sample integrity for parallel sequencing. |
| Protein Digestion & Labeling | TMTpro 16plex Label Reagent Set (Thermo Fisher) | Isobaric chemical tags for multiplexed quantitative proteomics, enabling simultaneous LC-MS/MS analysis of up to 16 samples, improving throughput and reducing run-to-run variation. |
| Metabolite Extraction | Methanol:Acetonitrile:Water (2:2:1, v/v) Solvent System | A common, standardized solvent for global metabolite extraction from plasma or tissues, ensuring broad coverage of polar and semi-polar metabolites for LC-MS. |
| Chromatin Profiling | Illumina Tagmentase (Tn5) Enzyme | Engineered transposase used in ATAC-seq to simultaneously fragment DNA and add sequencing adapters, mapping open chromatin regions from low cell inputs. |
| Single-Cell Partitioning | 10x Genomics Chromium Single Cell Multiome ATAC + Gene Expression Kit | Enables coupled profiling of chromatin accessibility and gene expression from the same single nucleus, crucial for vertical integration at single-cell resolution. |
| Data Integration Software | MOFA+ (R/Python Package) | A statistical framework for multi-omics integration that decomposes data into a set of latent factors, identifying shared and specific sources of variation across omics layers. |

In the pursuit of translational medicine, multi-omics studies aim to create a holistic, systems-level understanding of disease biology by integrating data from genomics, transcriptomics, proteomics, and metabolomics. The fidelity and depth of this integration are fundamentally dependent on the robustness, scalability, and standardization of the underlying data generation pipelines. This guide provides a technical deep dive into the core pipelines for Next-Generation Sequencing (NGS) and Mass Spectrometry (MS), the two pillars of modern omics, while framing their objectives within the translational research thesis.

Next-Generation Sequencing (NGS) Pipelines

NGS pipelines generate data for genome, exome, and transcriptome sequencing, enabling the discovery of genetic variants, gene expression profiles, and epigenetic modifications.

Key Experimental Protocol: Illumina Short-Read Whole Genome Sequencing

- Library Preparation: Genomic DNA is fragmented (e.g., via sonication or enzymatic digestion) to ~350 bp. Fragments are end-repaired, A-tailed, and ligated to platform-specific adapters that carry unique dual indices (UDIs) for sample multiplexing and binding sites for the sequencing primers.

- Size Selection & Purification: Libraries are size-selected using SPRI beads to ensure uniform fragment length.

- Library QC: Quantification is performed via qPCR (e.g., KAPA Library Quant Kit) and fragment size distribution is analyzed on a Bioanalyzer or TapeStation.

- Cluster Amplification: Libraries are loaded onto a flow cell. In a bridge amplification process on the Illumina platform, each fragment is isothermally amplified into a clonal cluster.

- Sequencing-by-Synthesis (SBS): In each cycle, all fluorescently labeled, reversibly terminated nucleotides are supplied together, and each strand incorporates a single base. A laser excites the fluorophores, and the flow cell is imaged to read each cluster's signal and identify the incorporated base. The fluorophore and terminator are then cleaved before the next cycle. Paired-end sequencing (e.g., 2x150 cycles) is standard.

- Primary Data Analysis (On-instrument): The sequencer's software performs base calling and demultiplexing, converting raw images into sequence reads (FASTQ files) assigned to individual samples.
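The FASTQ files produced by this step store one 4-line record per read, with base qualities encoded as ASCII characters. The sketch below parses one record and decodes qualities assuming the Phred+33 offset used by current Illumina software. It uses the Phred definition $Q = -10 \log_{10} P_{\text{error}}$. The function names are illustrative.

```python
def parse_fastq_record(lines):
    """Split one 4-line FASTQ record into (read_id, sequence, quality_string)."""
    header, seq, plus, qual = (line.rstrip("\n") for line in lines)
    if not header.startswith("@") or not plus.startswith("+") or len(seq) != len(qual):
        raise ValueError("malformed FASTQ record")
    return header[1:], seq, qual

def phred_scores(quality_string, offset=33):
    """Decode a Phred+33 quality string into integer Q scores."""
    return [ord(ch) - offset for ch in quality_string]

def error_probability(q):
    """Invert Q = -10 * log10(P_error), giving P_error = 10 ** (-Q / 10)."""
    return 10 ** (-q / 10)

def mean_error_rate(quality_string):
    """Expected fraction of miscalled bases in a read."""
    scores = phred_scores(quality_string)
    return sum(error_probability(q) for q in scores) / len(scores)

read_id, seq, qual = parse_fastq_record(["@read1\n", "ACGT\n", "+\n", "I5+!\n"])
```

For example, `I` encodes Q40 (1 error in 10,000 base calls), whereas Q30 corresponds to a 0.1% error probability.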

Table 1: Core NGS Applications in Translational Omics

| Modality | Typical Coverage/Depth | Key Output | Primary Translational Application |
|---|---|---|---|
| Whole Genome Seq (WGS) | 30x - 100x | Germline & somatic SNVs, Indels, CNVs, SVs | Genetic disease diagnosis, cancer biomarker discovery, pharmacogenomics. |
| Whole Exome Seq (WES) | 100x - 200x | Coding region variants (SNVs, Indels) | Mendelian disorder gene identification, tumor driver mutation profiling. |
| RNA Sequencing | 20-50 million reads/sample | Gene/isoform expression counts, fusion genes | Molecular subtyping, pathway activity inference, biomarker discovery. |
| ChIP-Seq / ATAC-Seq | 20-50 million reads/sample | Peaks (genomic regions of protein binding/open chromatin) | Understanding regulatory mechanisms and epigenetic drivers of disease. |
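The coverage targets in the table translate directly into sequencing output through the Lander-Waterman relation $C = N L / G$. Here $C$ is mean depth, $N$ the number of reads, $L$ the read length, and $G$ the genome size. The sketch below solves for the number of read pairs, assuming a human genome size of about 3.1 Gb. The `usable_fraction` parameter, a hypothetical allowance for duplicates and unmapped reads, is an illustrative addition. The Poisson term gives the expected fraction of bases covered at least once.

```python
import math

def read_pairs_for_coverage(target_depth, genome_size_bp, read_length_bp, usable_fraction=1.0):
    """Solve C = N * L / G for N, where each paired-end read pair yields 2 * read_length bases."""
    bases_needed = target_depth * genome_size_bp / usable_fraction
    return math.ceil(bases_needed / (2 * read_length_bp))

def fraction_covered(depth):
    """Poisson approximation: expected fraction of bases with at least one read = 1 - e^-C."""
    return 1 - math.exp(-depth)

pairs_30x = read_pairs_for_coverage(30, 3_100_000_000, 150)
```

At 2x150 bp, 30x WGS therefore requires roughly 310 million read pairs before any allowance for duplicates or unmapped reads.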

Mass Spectrometry-Based Proteomics & Metabolomics Pipelines

MS pipelines characterize and quantify proteins and metabolites, providing direct functional readouts of cellular state.

Key Experimental Protocol: Data-Dependent Acquisition (DDA) Liquid Chromatography-Tandem MS (LC-MS/MS) for Proteomics

- Sample Preparation: Proteins are extracted from tissue or biofluids, reduced, alkylated, and digested with trypsin into peptides. Peptides may be fractionated (e.g., via high-pH reverse-phase) or labeled with isobaric tags (TMT) for multiplexing. SILAC, by contrast, introduces heavy amino acids metabolically during cell culture, before extraction.

- Liquid Chromatography (LC): Peptides are loaded onto a reverse-phase C18 column (nanoflow scale) and separated via a gradient of increasing organic solvent (acetonitrile) over 60-120 minutes.

- Ionization: Eluting peptides are ionized via electrospray ionization (ESI) and introduced into the mass spectrometer.

- Mass Spectrometry Analysis (DDA Cycle):

- MS1 Survey Scan: The Orbitrap or TOF mass analyzer measures the m/z and intensity of all intact peptide ions.

- Peptide Selection: The most intense ions (e.g., top 20) from the MS1 scan are selected for fragmentation.

- Fragmentation: Selected precursor ions are fragmented via Collision-Induced Dissociation (CID) or Higher-Energy C-trap Dissociation (HCD).

- MS2 Scan: The fragment ions are measured to produce a product-ion (MS2) spectrum, which is used to determine the peptide sequence.

- Data Processing: Raw files are searched against a protein sequence database for identification and quantification using tools such as MaxQuant or FragPipe. DIA-NN is the corresponding choice for DIA data.
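Database search engines start from the same two computations. They digest the protein database in silico, then predict the precursor m/z of each peptide so that it can be matched against MS1 measurements.

The sketch below uses the classic trypsin rule (cleave after K or R unless the next residue is P) and standard monoisotopic residue masses. It assumes fixed carbamidomethylation of cysteine from iodoacetamide alkylation, which is an assumption about the protocol. Missed cleavages and variable modifications are ignored, and the function names are illustrative.

```python
RESIDUE_MASS = {  # monoisotopic residue masses (Da)
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276, "V": 99.06841,
    "T": 101.04768, "C": 103.00919, "L": 113.08406, "I": 113.08406, "N": 114.04293,
    "D": 115.02694, "Q": 128.05858, "K": 128.09496, "E": 129.04259, "M": 131.04049,
    "H": 137.05891, "F": 147.06841, "R": 156.10111, "Y": 163.06333, "W": 186.07931,
}
WATER = 18.010565           # added once per peptide (N- and C-termini)
PROTON = 1.007276           # mass of the charge-carrying proton
CARBAMIDOMETHYL = 57.02146  # fixed Cys modification, assuming iodoacetamide alkylation

def tryptic_digest(seq):
    """Cleave C-terminal to K/R unless followed by P; no missed cleavages."""
    peptides, start = [], 0
    for i, aa in enumerate(seq):
        next_aa = seq[i + 1] if i + 1 < len(seq) else ""
        if aa in "KR" and next_aa != "P":
            peptides.append(seq[start:i + 1])
            start = i + 1
    if start < len(seq):
        peptides.append(seq[start:])
    return peptides

def peptide_mass(peptide, carbamidomethyl_cys=True):
    """Neutral monoisotopic mass of a peptide."""
    mass = WATER + sum(RESIDUE_MASS[aa] for aa in peptide)
    if carbamidomethyl_cys:
        mass += CARBAMIDOMETHYL * peptide.count("C")
    return mass

def precursor_mz(peptide, charge):
    """m/z of the [M + zH]^z+ ion observed in the MS1 survey scan."""
    return (peptide_mass(peptide) + charge * PROTON) / charge

peptides = tryptic_digest("MAGKPLSRDEKAR")
```

In this example, the K in `KP` is not cleaved, so the digest yields `MAGKPLSR`, `DEK`, and `AR`.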

Table 2: Core Mass Spectrometry Modalities in Translational Omics

| Modality | Typical Study Scale | Key Output | Primary Translational Application |
|---|---|---|---|
| DDA Proteomics | 10s-100s of samples | Protein identification & label-free/TMT quantification | Biomarker discovery, signaling pathway analysis, target engagement. |
| Data-Independent Acquisition (DIA/SWATH) | 10s-100s of samples | Highly reproducible quantification of 1000s of proteins | Large-scale cohort studies requiring high reproducibility. |
| Targeted Proteomics (PRM/SRM) | 100s of samples | Highly sensitive, precise quantification of pre-defined proteins | Validation of biomarker panels, clinical assay development. |
| Untargeted Metabolomics | 10s-100s of samples | Relative abundance of 1000s of metabolite features | Discovery of metabolic dysregulation, mechanistic toxicology. |
| Targeted Metabolomics | 100s-1000s of samples | Absolute concentration of defined metabolite panels | Validation of metabolic biomarkers, clinical chemistry applications. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for NGS and MS Pipelines

| Item | Function | Example Product/Category |
|---|---|---|
| NGS Library Prep Kit | Fragments, repairs, and adapts DNA/RNA for sequencing. | Illumina DNA Prep, KAPA HyperPrep, NEBNext Ultra II. |
| Unique Dual Indexes (UDIs) | Enable multiplexing of many samples; because each sample carries a unique i7/i5 index pair, reads affected by index hopping can be identified and discarded. | Illumina IDT for Illumina UDIs, Twist Unique Dual Indexes. |
| SPRI Beads | Magnetic beads for DNA/RNA size selection and purification. | Beckman Coulter AMPure XP, KAPA Pure Beads. |
| Trypsin, MS-Grade | Protease for specific digestion of proteins into peptides for LC-MS/MS. | Promega Trypsin Gold, Roche Trypsin, Sequencing Grade. |
| Tandem Mass Tags (TMT) | Isobaric labels for multiplexed quantitative proteomics (up to 18 samples). | Thermo Scientific TMTpro 18plex. |
| C18 LC Column | Nanoflow column for separation of peptides prior to MS injection. | Waters nanoEase M/Z HSS C18, PepSep ReproSil C18. |
| Internal Standards (Metabolomics) | Stable isotope-labeled compounds for normalization & quantification in MS. | Cambridge Isotope Laboratories MRM standards, Avanti SPLASH LipidoMix. |
| QC Reference Material | Standardized sample (e.g., HeLa digest, NIST plasma) for monitoring pipeline performance. | Pierce HeLa Protein Digest Standard, NIST SRM 1950 Plasma. |

Visualizing Core Workflows and Relationships

NGS Data Generation and Analysis Pipeline

DDA LC-MS/MS Proteomics Workflow

Omics Pipelines Feed Translational Objectives

Within the broader thesis on the objectives of multi-omics studies in translational medicine, Objective 3 focuses on moving beyond broad disease classifications to identify discrete, mechanistically defined patient subgroups. This precision stratification, or endotyping, is foundational for developing targeted therapies and allocating them to patients most likely to respond, thereby improving clinical trial success rates and patient outcomes.

Core Multi-Omics Data Types for Stratification

Endotyping requires the integration of multiple layers of molecular data to capture the full complexity of disease pathophysiology.

Table 1: Core Omics Modalities for Patient Stratification

| Omics Layer | Measured Entities | Key Technology Platforms | Primary Insight for Stratification |
|---|---|---|---|
| Genomics | DNA sequence variants (SNPs, indels, CNVs), structural variations | Whole-genome sequencing, SNP arrays, MLPA | Inherited risk, germline mutations, pharmacogenomic markers. |
| Transcriptomics | RNA expression levels (coding, non-coding), splicing variants | RNA-Seq, single-cell RNA-Seq, microarrays | Active biological pathways, cellular states, immune infiltration signatures. |
| Epigenomics | DNA methylation, histone modifications, chromatin accessibility | Bisulfite sequencing, ChIP-Seq, ATAC-Seq | Regulatory landscape, gene silencing/activation, environmental influences. |
| Proteomics | Protein abundance, post-translational modifications, protein complexes | Mass spectrometry (LC-MS/MS), multiplex immunoassays, RPPA | Functional effectors, signaling pathway activity, drug targets. |
| Metabolomics | Small-molecule metabolites (lipids, sugars, amino acids) | LC-MS, GC-MS, NMR | Metabolic pathway activity, real-time physiological state, biomarkers. |

Experimental Protocols for Multi-Omics Endotyping

Integrated Multi-Omics Cohort Profiling Protocol

This protocol outlines a standard workflow for generating data for stratification studies.

A. Patient Cohort & Biospecimen Collection:

- Cohort Design: Recruit a well-characterized patient cohort (n≥500) with deep phenotypic data (clinical history, imaging, lab values, outcomes). Include healthy controls.

- Sample Types: Collect matched primary tissue or local biofluid (e.g., tumor biopsy, synovial fluid), blood (for plasma/serum and PBMCs), and, if possible, stool for microbiome profiling.

- Processing: Immediately flash-freeze tissue in liquid nitrogen. Separate blood components via density gradient centrifugation. Store all samples at -80°C.

B. Parallel Multi-Omics Assaying:

- DNA/Genomics: Extract genomic DNA from tissue or blood. Perform Whole Genome Sequencing (WGS, 30x coverage) using Illumina NovaSeq. Align to GRCh38 reference genome. Call variants using GATK Best Practices pipeline.

- RNA/Transcriptomics: Extract total RNA (RIN > 7). Prepare poly-A selected libraries and sequence on Illumina platform (minimum 50M paired-end reads per sample). Align with STAR, quantify with featureCounts.

- DNA Methylation/Epigenomics: Perform bisulfite conversion on 500 ng DNA. Hybridize to Infinium MethylationEPIC v2.0 BeadChip or use whole-genome bisulfite sequencing.

- Proteomics: For discovery, perform tissue lysis, tryptic digestion, TMT labeling, and LC-MS/MS on an Orbitrap Eclipse. For validation, use Olink Target 96 or 384 plex panels.

C. Data Integration & Analysis:

- Preprocessing: Normalize each dataset separately (e.g., DESeq2 for RNA-Seq, ssNoob for methylation, limma for proteomics).

- Dimensionality Reduction & Per-Layer Clustering: Reduce each data layer (e.g., PCA), visualize it (t-SNE, UMAP), and explore its structure with unsupervised clustering (e.g., k-means, hierarchical).

- Integrative Clustering: Use multi-view or consensus clustering algorithms (e.g., iClusterBayes, or clustering of MOFA+ latent factors) to identify patient subgroups robust across omics layers.

- Validation: Confirm clusters in an independent validation cohort using a minimal classifier (e.g., Random Forest on top 50 features).
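The validation step trains a compact classifier on the discovery cohort and transfers endotype labels to the validation cohort. The sketch below swaps in a nearest-centroid classifier for the Random Forest named above, because it needs only NumPy. Features are ranked by a simple between-/within-endotype variance ratio computed on the discovery cohort only.

The data are simulated, the "true" validation labels exist only because of the simulation, and all function names are hypothetical.

```python
import numpy as np

def top_features(X, labels, k):
    """Rank features by variance of endotype means over mean within-endotype variance."""
    classes = np.unique(labels)
    means = np.vstack([X[labels == c].mean(axis=0) for c in classes])
    within = np.mean([X[labels == c].var(axis=0) for c in classes], axis=0)
    return np.argsort(-(means.var(axis=0) / (within + 1e-12)))[:k]

def fit_centroids(X, labels):
    """Mean feature profile of each endotype in the discovery cohort."""
    classes = np.unique(labels)
    return classes, np.vstack([X[labels == c].mean(axis=0) for c in classes])

def predict_endotype(X_new, classes, centroids):
    """Assign each new sample to the endotype with the nearest centroid (Euclidean)."""
    d = ((X_new[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[d.argmin(axis=1)]

rng = np.random.default_rng(3)

def simulate(n):
    """Two simulated endotypes that differ in the first 10 of 50 features."""
    labels = np.repeat([0, 1], n // 2)
    X = rng.normal(size=(n, 50))
    X[labels == 1, :10] += 5.0
    return X, labels

X_disc, y_disc = simulate(100)
X_val, y_val = simulate(60)
feats = top_features(X_disc, y_disc, k=10)          # selection uses discovery data only
classes, cents = fit_centroids(X_disc[:, feats], y_disc)
pred = predict_endotype(X_val[:, feats], classes, cents)
accuracy = float((pred == y_val).mean())
```

Restricting feature selection to the discovery cohort matters. Selecting features on the pooled data would leak validation information and inflate agreement.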

Diagram Title: Multi-Omics Endotyping Workflow

Single-Cell RNA-Seq for Cellular Subtyping Protocol

Critical for resolving cellular heterogeneity within tissues.

A. Single-Cell Suspension Preparation:

- Mechanically dissociate and enzymatically digest fresh tissue (e.g., with collagenase IV/DNase I) to create a single-cell suspension. For frozen tissue, use a dedicated nuclei isolation protocol.

- Pass cells through a 40 µm cell strainer. Stain with a viability dye (DAPI or propidium iodide) and use FACS to sort live singlets. Alternatively, deplete dead cells with a magnetic (MACS) dead-cell removal kit; note that this does not remove doublets.

B. Library Preparation & Sequencing:

- Load cells onto the 10x Genomics Chromium Controller to generate single-cell Gel Beads-in-Emulsion (GEMs).

- Perform reverse transcription, cDNA amplification, and library construction per the Chromium Next GEM Single Cell 3' Kit v3.1 protocol.

- Pool libraries and sequence on an Illumina NovaSeq 6000 aiming for ≥20,000 reads per cell.

C. Bioinformatic Analysis for Stratification:

- Process raw data using Cell Ranger to generate a feature-barcode matrix.

- Use Seurat (R) or Scanpy (Python) for downstream analysis: QC filtering, normalization, highly variable gene selection, PCA, graph-based clustering, and UMAP visualization.

- Assign cell type identity using reference atlases (e.g., Azimuth) or marker genes.

- Perform differential expression between patients or conditions within each cell type to identify patient-specific dysregulation patterns.
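The QC filtering and normalization steps can be sketched directly on a cells-by-genes UMI matrix. The normalization mirrors what Scanpy's `sc.pp.normalize_total(adata, target_sum=1e4)` followed by `sc.pp.log1p(adata)` does. The thresholds below (minimum detected genes, maximum mitochondrial fraction) are illustrative assumptions that must be tuned per tissue. The toy matrix and function names are hypothetical.

```python
import numpy as np

def qc_filter(counts, gene_names, min_genes=200, max_mito_frac=0.2):
    """Keep cells with enough detected genes and a low mitochondrial read fraction.

    counts: cells x genes raw UMI matrix; mitochondrial genes assumed to start with "MT-".
    """
    mito = np.array([g.startswith("MT-") for g in gene_names])
    n_genes = (counts > 0).sum(axis=1)
    totals = counts.sum(axis=1)
    mito_frac = counts[:, mito].sum(axis=1) / np.maximum(totals, 1)
    keep = (n_genes >= min_genes) & (mito_frac <= max_mito_frac)
    return counts[keep], keep

def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to target_sum total counts, then apply log(1 + x)."""
    totals = counts.sum(axis=1, keepdims=True)
    return np.log1p(counts / totals * target_sum)

genes = ["CD3E", "MS4A1", "LYZ", "MT-CO1"]
counts = np.array([[10, 0, 5, 1],
                   [0, 0, 0, 8],     # few genes and mostly mitochondrial: likely a damaged cell
                   [3, 3, 3, 1]], dtype=float)
filtered, keep = qc_filter(counts, genes, min_genes=2)
norm = normalize_log1p(filtered)
```

Library-size scaling removes differences in sequencing depth between cells, and the log transform dampens the influence of very highly expressed genes before PCA and clustering.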

Diagram Title: Single-Cell RNA-Seq Stratification Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Kits for Multi-Omics Stratification Studies

| Item Name | Supplier Examples | Function in Endotyping Studies |
|---|---|---|
| PAXgene Blood RNA Tube | Qiagen, BD | Stabilizes intracellular RNA in whole blood for consistent transcriptomic profiles from patient blood draws. |
| AllPrep DNA/RNA/miRNA Universal Kit | Qiagen | Simultaneously isolates high-quality DNA, total RNA, and miRNA from a single tissue sample, preserving multi-omic linkage. |
| TruSeq Stranded Total RNA Library Prep Kit | Illumina | Prepares RNA-Seq libraries for bulk transcriptomics, preserving strand information for accurate expression quantification. |
| Chromium Next GEM Single Cell 3' Kit v3.1 | 10x Genomics | Enables high-throughput single-cell transcriptomic profiling for dissecting cellular heterogeneity within patient samples. |
| Infinium MethylationEPIC BeadChip Kit | Illumina | Provides comprehensive, cost-effective profiling of >935,000 methylation sites across the genome for epigenomic stratification. |
| TMTpro 16plex Label Reagent Set | Thermo Fisher | Allows multiplexed quantitative proteomic analysis of up to 16 samples in one LC-MS/MS run, enhancing throughput and reducing batch effects. |
| Olink Target 96 or 384 Panels | Olink | Enables high-specificity, high-sensitivity multiplex immunoassay profiling of 92-384 proteins in minute sample volumes for validation. |
| Cell Hashing Antibodies (TotalSeq Hashtags) | BioLegend | Allows multiplexing of samples in single-cell experiments by labeling cells from different patients with unique barcoded antibodies prior to pooling. |

Data Integration & Analytical Pathways

The core challenge is the integrative analysis of heterogeneous, high-dimensional datasets.

Table 3: Multi-Omics Integration Methods for Patient Stratification

| Method Category | Example Algorithm | Key Principle | Output for Stratification |
|---|---|---|---|
| Early Integration | Concatenation + PCA | Datasets are merged at the feature level prior to analysis. | Combined latent variables for clustering. |
| Intermediate Integration | MOFA+ (Multi-Omics Factor Analysis) | Learns a set of common (and unique) latent factors that explain variance across all omics datasets. | Factor values per patient used as features for clustering and endotype characterization. |
| Late Integration | Consensus Clustering | Clustering is performed on each dataset independently, and results are combined to find a consensus partition. | A robust consensus patient cluster assignment. |
| Supervised Multiblock Integration | mixOmics (DIABLO) | Finds correlated latent components across omics blocks that also discriminate predefined groups, selecting a sparse set of features from each block. | A weighted multi-omics signature that discriminates endotypes. |
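Late integration can be illustrated with a simple evidence-accumulation scheme. The sketch below proceeds in two steps:

1. For every pair of patients, compute the fraction of omics layers in which they share a cluster (a co-association matrix).
2. Link pairs above a threshold and report connected components as the consensus partition.

This is equivalent to single-linkage clustering of the co-association matrix. It is a swapped-in illustration, not the resampling-based procedure of tools such as ConsensusClusterPlus, and it is prone to chaining on noisy data. The per-layer labels and function names are hypothetical.

```python
from itertools import combinations

def coassociation(labelings):
    """Fraction of omics layers in which each pair of patients shares a cluster."""
    n = len(labelings[0])
    M = [[0.0] * n for _ in range(n)]
    for i, j in combinations(range(n), 2):
        share = sum(lab[i] == lab[j] for lab in labelings) / len(labelings)
        M[i][j] = M[j][i] = share
    return M

def consensus_partition(labelings, threshold=0.5):
    """Link patients co-clustered in more than `threshold` of layers; clusters = components."""
    M = coassociation(labelings)
    n = len(M)
    labels, next_label = [-1] * n, 0
    for start in range(n):
        if labels[start] != -1:
            continue
        labels[start] = next_label
        stack = [start]
        while stack:                       # depth-first search over linked patients
            i = stack.pop()
            for j in range(n):
                if labels[j] == -1 and M[i][j] > threshold:
                    labels[j] = next_label
                    stack.append(j)
        next_label += 1
    return labels

# Cluster labels for 6 patients from three layers (e.g., RNA, methylation, proteomics).
layers = [[0, 0, 1, 1, 2, 2],
          [0, 0, 1, 1, 1, 2],
          [1, 1, 0, 0, 2, 2]]
consensus = consensus_partition(layers)
```

Label values need not match across layers, because only within-layer co-membership is compared. Patients 4 and 5 stay together because they agree in two of three layers.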

Diagram Title: Multi-Omics Data Integration Pathways

Precision patient stratification and endotyping, as enabled by integrated multi-omics, represents a paradigm shift from symptom-based to mechanism-based disease classification. The technical workflows, reagents, and computational methods outlined here provide a roadmap for researchers to deconvolve patient heterogeneity. Successful implementation directly feeds into the subsequent translational objectives: identifying novel drug targets, discovering predictive biomarkers, and understanding therapeutic resistance, thereby closing the loop from bench to bedside and back.

Within the multi-objective framework of translational medicine, multi-omics studies serve distinct, synergistic goals: defining disease endotypes, identifying predictive biomarkers, and mapping therapeutic targets. Objective 4: Accelerating Drug Discovery and Pharmaco-omics is the translational engine that integrates these insights to de-risk and accelerate therapeutic development. It applies pharmacogenomics, pharmacotranscriptomics, pharmacoproteomics, and pharmacometabolomics (collectively, pharmaco-omics) to elucidate mechanisms of drug action, predict variable drug response, and identify novel repurposing opportunities. This guide details the technical execution of Objective 4.

Core Pharmaco-omics Methodologies

Protocol: Multi-omic Profiling for Drug Response Prediction

This protocol integrates baseline omics data with post-treatment phenotypic response to build predictive models.

- Cohort & Treatment: Enroll patient cohort (n≥150) in a Phase II clinical trial or well-controlled observational study. Administer the drug of interest at a standardized dose.

- Biospecimen Collection: Collect pre-treatment tissue (e.g., tumor biopsy, peripheral blood mononuclear cells) and plasma/serum. Collect matched post-treatment samples at a defined early time point (e.g., 24-72 hours).

- Multi-omics Data Generation:

- Genomics: Perform whole-exome or targeted sequencing on pre-treatment DNA to identify germline and somatic variants.

- Transcriptomics: Conduct RNA-Seq on pre- and post-treatment tissue.

- Proteomics & Phosphoproteomics: Utilize liquid chromatography-tandem mass spectrometry (LC-MS/MS) on pre- and post-treatment tissue and plasma.

- Metabolomics: Apply LC-MS/MS-based untargeted metabolomics on pre- and post-treatment plasma.

- Phenotypic Anchoring: Quantify primary clinical response endpoint (e.g., % tumor shrinkage, change in disease activity score, drug clearance rate).

- Data Integration & Modeling: Use multi-omics factor analysis (MOFA) or a similar method to derive latent factors. Then train machine learning models (e.g., random forest, elastic net) on pre-treatment omics features to predict continuous or categorical response.
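
The elastic-net step above can be sketched in a few lines. This is a minimal, illustrative sketch, assuming a small matrix of pre-treatment features (rows = patients, columns = omics features) and a continuous response such as % tumor shrinkage. It implements glmnet-style cyclic coordinate descent in plain Python for transparency; the function names, the `lam`/`alpha` values, and the synthetic data are hypothetical, and a real study would use an established library with cross-validated tuning and an independent validation cohort.

```python
import math
import random


def standardize(X):
    """Center each feature to mean 0 and scale to unit (population) variance."""
    n, p = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(p)]
    sds = []
    for j in range(p):
        var = sum((row[j] - means[j]) ** 2 for row in X) / n
        sds.append(math.sqrt(var) if var > 0 else 1.0)  # guard constant features
    Z = [[(row[j] - means[j]) / sds[j] for j in range(p)] for row in X]
    return Z, means, sds


def soft_threshold(z, gamma):
    """Lasso shrinkage operator: move z toward zero by gamma, clipping at zero."""
    if z > gamma:
        return z - gamma
    if z < -gamma:
        return z + gamma
    return 0.0


def fit_elastic_net(X, y, lam=0.1, alpha=0.5, n_iter=200):
    """Cyclic coordinate descent for the elastic-net objective
    (1/2n)||y - b0 - Z beta||^2 + lam * (alpha*||beta||_1 + (1-alpha)/2*||beta||^2)
    on standardized features Z. Coefficients are on the standardized scale."""
    Z, means, sds = standardize(X)
    n, p = len(Z), len(Z[0])
    y_mean = sum(y) / n
    resid = [yi - y_mean for yi in y]  # residual while all coefficients are zero
    beta = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            # Correlation of feature j with the partial residual (feature j added back).
            rho = sum(Z[i][j] * resid[i] for i in range(n)) / n + beta[j]
            new_bj = soft_threshold(rho, lam * alpha) / (1.0 + lam * (1.0 - alpha))
            delta = new_bj - beta[j]
            if delta != 0.0:
                for i in range(n):
                    resid[i] -= Z[i][j] * delta
                beta[j] = new_bj
    return beta, means, sds, y_mean


def predict(x, beta, means, sds, y_mean):
    """Predict the response for one patient's raw (unstandardized) feature vector."""
    return y_mean + sum(b * (xj - m) / s for b, xj, m, s in zip(beta, x, means, sds))


# Hypothetical demo: 60 patients, 5 baseline features; only the first two drive response.
rng = random.Random(0)
X = [[rng.gauss(0, 1) for _ in range(5)] for _ in range(60)]
y = [2 * x[0] - x[1] + 0.1 * rng.gauss(0, 1) for x in X]
beta, means, sds, y_mean = fit_elastic_net(X, y, lam=0.05, alpha=0.5)
print([round(b, 3) for b in beta])
```

The L1 part of the penalty drives uninformative features to exactly zero, while the L2 part keeps correlated omics features (common across proteins in one pathway) from being selected arbitrarily.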

Protocol: Mechanistic Deconvolution of Drug Action via Perturbation Omics

This in vitro protocol delineates the direct signaling and regulatory impacts of a compound.

- Cell Model: Use a physiologically relevant cell model (primary cells if possible). Establish vehicle and treatment groups in triplicate.

- Perturbation: Treat cells with the drug at multiple concentrations (IC10, IC50, IC90) and time points (15 min, 1 h, 6 h, 24 h). Include a known inhibitor of the target as a positive control.

- Multi-layer Profiling: Harvest cells for concurrent omics analyses:
  - Transcriptomics: Bulk or single-cell RNA-Seq.
  - Epigenomics: ATAC-Seq or ChIP-Seq for relevant histone marks.
  - Proteomics: Global proteomics and phosphoproteomics via LC-MS/MS.

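- Causal Network Inference: Integrate the temporal data to reconstruct drug-induced signaling cascades and transcriptional regulatory networks, using tools such as CausalPath (signaling relations from phosphoproteomic changes) and DoRothEA regulons (transcription factor activity from expression data). Validate key edges via siRNA knockdown.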
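
Choosing the IC10 and IC90 doses requires more than the IC50 alone. A minimal sketch, assuming the dose-response curve follows a simple Hill model with 0% bottom and 100% top inhibition (real curves often need a four-parameter fit with free plateaus), shows how both follow from the IC50 and the Hill slope. The function names and the IC50 value are hypothetical.

```python
def concentration_for_inhibition(ic50, fraction, hill=1.0):
    """Concentration giving `fraction` inhibition under a Hill model with
    0% bottom and 100% top: fraction = C**h / (IC50**h + C**h).
    Solving for C gives C = IC50 * (fraction / (1 - fraction)) ** (1 / h)."""
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must lie strictly between 0 and 1")
    if ic50 <= 0 or hill <= 0:
        raise ValueError("ic50 and hill must be positive")
    return ic50 * (fraction / (1.0 - fraction)) ** (1.0 / hill)


def fraction_inhibited(conc, ic50, hill=1.0):
    """Forward Hill model, useful for checking the inversion above."""
    return conc ** hill / (ic50 ** hill + conc ** hill)


# Hypothetical compound: IC50 = 0.5 uM, Hill slope 1.
ic50 = 0.5
levels = {"IC10": 0.1, "IC50": 0.5, "IC90": 0.9}
doses = {name: concentration_for_inhibition(ic50, f) for name, f in levels.items()}
print(doses)  # with a Hill slope of 1, IC10 = IC50 / 9 and IC90 = 9 * IC50
```

Steeper curves (larger Hill slopes) compress the IC10 to IC90 window, which is worth checking before fixing a dose series.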

Data Synthesis and Tables

Table 1: Representative Quantitative Outcomes from Pharmaco-omics Studies

| Omics Layer | Application Example | Key Metric | Typical Result Range | Impact on Drug Discovery |
|---|---|---|---|---|
| Pharmacogenomics | Warfarin dosing algorithm | Prediction accuracy (R²) of stable dose | R² = 0.55-0.65 | Reduces time to stable INR by ~30%. |
| Pharmacotranscriptomics | PD-1 inhibitor response in melanoma | AUC of baseline gene signature | AUC = 0.70-0.85 | Identifies non-responders, sparing toxicity. |
| Pharmacoproteomics | PARP inhibitor sensitivity in BRCA1/2-wt tumors | Phosphosite change (Log2 FC) post-treatment | FC of pH2AX > 2.0 indicates sensitivity | Expands patient population for targeted therapy. |
| Pharmacometabolomics | Statin-induced myopathy risk | Serum metabolite odds ratio (OR) | OR for carnitine precursors > 3.0 | Enables pre-emptive mitigation of adverse events. |
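
The key metrics in Table 1 have simple definitions that are easy to misapply. The sketch below computes three of them from first principles: a rank-based ROC AUC (the Mann-Whitney probability that a responder outscores a non-responder), a 2x2 odds ratio, and a log2 fold change of mean intensities. All inputs are hypothetical toy values, not data from the studies summarized above.

```python
import math
from statistics import mean


def auc(responder_scores, nonresponder_scores):
    """ROC AUC as the probability that a randomly chosen responder scores
    higher than a randomly chosen non-responder (ties count one half)."""
    wins = 0.0
    for r in responder_scores:
        for q in nonresponder_scores:
            if r > q:
                wins += 1.0
            elif r == q:
                wins += 0.5
    return wins / (len(responder_scores) * len(nonresponder_scores))


def odds_ratio(a, b, c, d):
    """2x2 odds ratio: a/b = events/non-events among exposed (e.g., high metabolite),
    c/d = events/non-events among unexposed. Assumes no zero cells."""
    return (a * d) / (b * c)


def log2_fold_change(treated, control):
    """log2 ratio of mean intensities, post-treatment versus baseline."""
    return math.log2(mean(treated) / mean(control))


# Hypothetical values for illustration only.
print(auc([0.9, 0.8, 0.7, 0.4], [0.6, 0.3, 0.2]))  # 11 of 12 pairs ranked correctly
print(odds_ratio(12, 20, 6, 40))                   # (12 * 40) / (20 * 6)
print(log2_fold_change([8, 10, 12], [2, 2.5, 3]))  # log2(10 / 2.5)
```

A log2 FC of 2.0 corresponds to a 4-fold change on the linear scale, so it matters which scale a threshold such as "FC > 2.0" refers to.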

Table 2: Essential Research Reagent Solutions for Pharmaco-omics

| Reagent / Solution | Function in Pharmaco-omics | Critical Specification |
|---|---|---|
| Stable Isotope Labeling by Amino Acids in Cell Culture (SILAC) reagents | Enables quantitative tracking of de novo protein synthesis and degradation rates post-drug treatment. | >98% isotopic enrichment; heavy lysine and arginine variants. |
| Isobaric Mass Tags (e.g., TMTpro 18-plex) | Multiplexed, high-throughput quantitative proteomics across multiple drug doses/time points in a single MS run. | Batch-to-batch consistency in labeling efficiency. |
| Single-Cell Barcoding Kits (e.g., 10x Genomics) | Profiles drug response heterogeneity and identifies rare resistant subpopulations at the transcriptome level. | High cell viability input; controlled partition efficiency. |
| Magnetic Bead-based Metabolite Extraction Kits | Rapid, reproducible purification of polar/non-polar metabolites from plasma/tissue for LC-MS. | Broad metabolite coverage; low protein carryover. |
| Phosphatase/Protease Inhibitor Cocktails | Preserves the in vivo phosphoproteome and proteome state at the moment of cell lysis post-perturbation. | Broad-spectrum, compatible with MS analysis. |
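
To make the "single MS run" claim concrete: the perturbation protocol yields 16 conditions per replicate (vehicle, IC10, IC50, IC90 at 15 min, 1 h, 6 h, and 24 h), or 48 samples in triplicate. A minimal sketch, assuming one TMTpro 18-plex per biological replicate with two channels reserved for a pooled reference that bridges plexes (a common but not mandatory design choice), lays these out as follows. Channels are numbered 1 to 18 here rather than by vendor tag names, and the function and constant names are hypothetical.

```python
import random
from itertools import product

# Design from the perturbation protocol: vehicle plus three doses, four time points.
ARMS = ["vehicle", "IC10", "IC50", "IC90"]
TIMEPOINTS = ["15min", "1h", "6h", "24h"]
REPLICATES = 3
CHANNELS_PER_PLEX = 18  # TMTpro 18-plex
N_REFERENCE = 2         # pooled "bridge" channels per plex (assumed design choice)


def plan_plexes(seed=0):
    """Assign each replicate's conditions plus pooled references to one plex."""
    conditions = [f"{arm}_{t}" for arm, t in product(ARMS, TIMEPOINTS)]
    n_sample_channels = CHANNELS_PER_PLEX - N_REFERENCE
    if len(conditions) > n_sample_channels:
        raise ValueError("design does not fit in one plex per replicate")
    rng = random.Random(seed)
    plexes = []
    for rep in range(1, REPLICATES + 1):
        order = conditions[:]
        rng.shuffle(order)  # randomize channel positions so dose is not tied to one tag
        plex = {ch: f"{cond}_rep{rep}" for ch, cond in enumerate(order, start=1)}
        for k in range(N_REFERENCE):
            plex[n_sample_channels + k + 1] = f"pooled_reference_{k + 1}"
        plexes.append(plex)
    return plexes


for i, plex in enumerate(plan_plexes(), start=1):
    print(f"plex {i}: {len(plex)} channels")
```

Keeping every condition of a replicate in the same plex means within-replicate dose and time comparisons are not affected by run-to-run variation; the pooled reference channels support normalization across the three plexes.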

Visualized Workflows and Pathways

Diagram Title: Pharmaco-omics Data Integration Workflow

Diagram Title: Drug-Induced mTOR Signaling & Omics Output

Within the overarching thesis on "Objectives of multi-omics studies in translational medicine research," this technical guide presents three detailed case studies. The core objective of multi-omics in translational contexts is to deconvolve disease heterogeneity, identify predictive biomarkers, and discover actionable therapeutic targets by integrating genomic, transcriptomic, proteomic, metabolomic, and other data layers. This approach moves beyond single-omics analyses to construct a systems-level understanding of pathophysiology, directly informing diagnostic development and therapeutic intervention strategies.

Case Study 1: Oncology - Breast Cancer Subtyping and Treatment Resistance

Context: A primary objective in translational oncology is to stratify patients based on molecular drivers and to understand mechanisms of therapy resistance. This case study details an integrative analysis of triple-negative breast cancer (TNBC) to identify resistance pathways to immune checkpoint inhibitors (ICI).

Experimental Protocol: Longitudinal Multi-Omics Profiling

- Cohort: Pre- and post-treatment (anti-PD-1) tumor biopsies and matched plasma from 50 TNBC patients (responders vs. non-responders).

- DNA Sequencing (WES): Performed on tumor and germline DNA using the Illumina NovaSeq 6000 platform (150 bp paired-end). Somatic variants were called using GATK Mutect2 and annotated for driver status.

- RNA Sequencing (bulk): Total RNA from tumor tissue was sequenced (Illumina). Transcript quantification (RSEM) and pathway analysis (GSEA) were conducted.

- Proteomics & Phosphoproteomics: Liquid chromatography-tandem mass spectrometry (LC-MS/MS) on tissue lysates using TMT labeling. Phosphopeptides were enriched using TiO2 beads.

- Data Integration: Somatic mutations, differentially expressed genes/proteins, and activated phospho-pathways were integrated using multi-omics factor analysis (MOFA) to derive latent factors associated with resistance.
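
MOFA summarizes each latent factor by the fraction of variance (R²) it explains in each omics view, which is how statements such as "Factor 1 explains 82% of variance" should be read. The sketch below is a simplified, non-Bayesian illustration of that quantity, not MOFA's inference: given fixed factor scores, it fits one least-squares loading per feature and reports R² = 1 - SS_residual / SS_total for the whole view. The function names and toy matrices are hypothetical.

```python
def center_columns(Y):
    """Subtract each feature's mean (Y is samples x features)."""
    n, d = len(Y), len(Y[0])
    means = [sum(row[j] for row in Y) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in Y]


def variance_explained(Y, z):
    """Fraction of total variance in one omics view captured by factor scores z."""
    Yc = center_columns(Y)
    n, d = len(Yc), len(Yc[0])
    z_mean = sum(z) / n
    zc = [zi - z_mean for zi in z]
    zz = sum(v * v for v in zc)
    ss_tot = sum(v * v for row in Yc for v in row)
    if zz == 0 or ss_tot == 0:
        raise ValueError("factor and view must both have nonzero variance")
    ss_res = 0.0
    for j in range(d):
        w = sum(zc[i] * Yc[i][j] for i in range(n)) / zz  # least-squares loading
        ss_res += sum((Yc[i][j] - w * zc[i]) ** 2 for i in range(n))
    return 1.0 - ss_res / ss_tot


# Hypothetical toy data: 4 tumors, factor scores z, and two small "views".
z = [1.0, 2.0, 3.0, 4.0]
phospho = [[2 * zi, -zi] for zi in z]                        # fully driven by the factor
rna = [[zi, s] for zi, s in zip(z, [1.0, -1.0, -1.0, 1.0])]  # second gene unrelated
print(variance_explained(phospho, z), variance_explained(rna, z))
```

Reporting R² per view, rather than one pooled number, shows whether a resistance-associated factor is driven mainly by, for example, phosphoproteomic or transcriptomic variation.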

Table 1: Key Multi-Omics Features Associated with ICI Resistance in TNBC

| Omics Layer | Analytical Method | Feature Associated with Resistance | Frequency or Effect Size in Non-Responders | p-value |
|---|---|---|---|---|
| Genomics | Whole Exome Sequencing | Mutational Signature SBS42 (platin-like) | 65% | 0.007 |
| Transcriptomics | RNA-Seq | Upregulation of VEGFA gene | 4.2-fold increase | 1.5e-5 |
| Proteomics | LC-MS/MS (TMT) | Downregulation of MHC-I complex proteins | 70% of cases | 0.003 |
| Phosphoproteomics | LC-MS/MS (TiO2) | Hyperphosphorylation of STAT3 (Tyr705) | 3.8-fold increase | 4.2e-4 |
| Integrative | MOFA Factor | Factor 1 (Driven by VEGFA, p-STAT3) | 82% variance explained | < 0.001 |

Multi-omics workflow for uncovering ICI resistance in TNBC.

Research Reagent Solutions (Oncology)

| Reagent/Material | Supplier Example | Function in Protocol |
|---|---|---|
| Illumina DNA Prep Kit | Illumina | Library preparation for WES. |
| TMTpro 16plex Label Reagent Set | Thermo Fisher | Isobaric labeling for multiplexed quantitative proteomics. |
| TiO2 Phosphopeptide Enrichment Kit | GL Sciences | Enrichment of phosphorylated peptides for MS analysis. |
| MOFA2 R/Bioconductor Package | GitHub (bioFAM) | Statistical tool for multi-omics data integration. |
| anti-pSTAT3 (Tyr705) Antibody | Cell Signaling Technology | Validation of phosphoproteomic findings via IHC/WB. |

Case Study 2: Neurology - Biomarker Discovery in Alzheimer's Disease

Context: A key translational objective in neurology is to identify robust, early diagnostic and prognostic biomarkers for complex diseases like Alzheimer's Disease (AD). This case integrates cerebrospinal fluid (CSF) proteomics with brain imaging and cognitive data.

Experimental Protocol: CSF Proteomics with Validation

- Cohort: CSF samples from 200 participants: Cognitively Normal (CN), Mild Cognitive Impairment (MCI), and AD dementia.

- Discovery Proteomics: CSF proteins were quantified using the Olink Explore 1536 platform (inflammation and neurology panels). Data were normalized and analyzed on Olink's log2-scaled NPX (Normalized Protein eXpression) units.

- Validation: Top candidate proteins were validated using an orthogonal method: LC-MS/MS with parallel reaction monitoring (PRM) in an independent cohort (n=100).

- Integration with Clinical Phenotypes: Protein levels were correlated with:
  - Neuroimaging: Amyloid-PET SUVR and Tau-PET SUVR values.
  - Cognition: Longitudinal change in Preclinical Alzheimer Cognitive Composite (PACC) scores over 36 months.

- Modeling: A multi-omics (protein + imaging) Cox proportional hazards model was built to predict progression from MCI to AD.
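
A progression model of this kind is usually judged by how well its risk scores rank participants by time to conversion. The sketch below computes Harrell's concordance index (C-index) for right-censored data. It assumes the risk score is something like the linear predictor of an already-fitted Cox model (fitting is not shown), skips pairs with tied event times for simplicity, and uses hypothetical follow-up data.

```python
def concordance_index(times, events, risk):
    """Harrell's C-index for right-censored data.

    A pair (i, j) is comparable when subject i had the event and subject j was
    still event-free at a later time. It is concordant when the model gave i the
    higher risk score; tied risks count one half. Pairs with tied times are skipped."""
    concordant = 0.0
    comparable = 0
    for i in range(len(times)):
        if not events[i]:
            continue  # censored subjects cannot anchor a comparison
        for j in range(len(times)):
            if times[j] > times[i]:
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical MCI participants: months of follow-up, conversion to AD (1) or
# censored (0), and a model risk score (e.g., a Cox linear predictor).
months = [12, 24, 30, 36, 36]
converted = [1, 1, 0, 1, 0]
risk_score = [2.5, 1.8, 0.4, 2.0, 0.3]
print(concordance_index(months, converted, risk_score))  # 6 of 7 comparable pairs concordant
```

A C-index of 0.5 means ranking no better than chance; comparing the C-index of a protein-only model against the protein + imaging model is one way to quantify the added value of integration.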

Table 2: CSF Proteomic Biomarkers for AD Progression

| Protein Biomarker | Olink Panel | Fold Change (AD vs CN) | Correlation with Tau-PET (r) | Hazard Ratio for Progression (95% CI) |
|---|---|---|---|---|
| GFAP | Neurology | 2.1 | 0.72 | 2.5 (1.8-3.4) |
| NEFL | Neurology | 1.8 | 0.65 | 2.1 (1.5-2.9) |
| YKL-40 (CHI3L1) | Inflammation | 1.6 | 0.58 | 1.8 (1.3-2.5) |
| sTREM2 | Inflammation | 1.4 | 0.41 | 1.5 (1.1-2.0) |
| Multi-Protein Panel | Combined | - | - | 3.2 (2.2-4.6) |

Multi-modal biomarker discovery workflow for Alzheimer's Disease.

Research Reagent Solutions (Neurology)

| Reagent/Material | Supplier Example | Function in Protocol |
|---|---|---|
| Olink Explore 1536 | Olink Proteomics | High-plex, high-sensitivity proximity extension assay for protein quantification. |
| PRM Calibration Kit (Hi3) | Waters Corporation | Provides heavy-labeled peptide standards for absolute quantification in PRM-MS. |
| CSF Aβ42/Aβ40 and p-Tau181 Immunoassays | Fujirebio (Lumipulse) | Core AD CSF biomarkers for cohort stratification. |
| Amyloid-PET Tracer ([18F]Flutemetamol) | GE Healthcare | In vivo imaging of amyloid plaque burden. |

Case Study 3: Immunology - Mapping the Immune Response in Rheumatoid Arthritis

Context: The translational objective is to delineate cell-type-specific molecular networks driving pathogenesis in autoimmune disease to enable targeted therapy. This study employs single-cell multi-omics on synovial tissue.

Experimental Protocol: Single-Cell Multi-Omics (CITE-seq)

- Sample Processing: Synovial tissue from 10 rheumatoid arthritis (RA) patients and 5 osteoarthritis (OA) patients (controls) was digested to a single-cell suspension.

- CITE-seq: Cells were processed using the 10x Genomics Chromium Next GEM Single Cell 5' Kit v2 with Feature Barcoding. A panel of 50 antibodies against surface proteins (TotalSeq-B) was included.

- Sequencing & Primary Analysis: Libraries were sequenced on NovaSeq. Cell Ranger was used for alignment, demultiplexing, and feature counting.

- Bioinformatics: Analyses were performed primarily with the Seurat R package:
  - Clustering: Based on integrated RNA + ADT (antibody-derived tag) data.
  - Differential Analysis: Identifying marker genes and proteins for RA-expanded cell populations.
  - Cell-Cell Communication: Inferring ligand-receptor interactions with NicheNet.

- Validation: Fibroblast subsets sorted by flow cytometry were cultured for functional assays (cytokine release upon stimulation).
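
Before RNA and ADT data can be clustered jointly, ADT counts are typically normalized with a centered log-ratio (CLR) transform. The sketch below applies CLR per cell: each cell's counts are divided by exp(mean(log1p(counts))) across its antibodies and then log1p-transformed, so zero counts map to 0 and per-cell differences in ADT sequencing depth are reduced. It is modeled on our understanding of Seurat's CLR option applied per cell (`margin = 2`, as in its CITE-seq vignette); check the Seurat documentation before assuming exact equivalence. The function name and counts are hypothetical.

```python
import math


def clr_per_cell(adt_counts):
    """Centered log-ratio transform of ADT counts (cells x antibodies), per cell."""
    normalized = []
    for cell in adt_counts:
        # Per-cell scale: exp of the mean log1p count across this cell's antibodies.
        scale = math.exp(sum(math.log1p(x) for x in cell) / len(cell))
        normalized.append([math.log1p(x / scale) for x in cell])
    return normalized


# Hypothetical counts for three antibodies (e.g., FAP, CD86, ICOS) in two cells.
cells = [[0, 40, 400], [5, 5, 5]]
for row in clr_per_cell(cells):
    print([round(v, 3) for v in row])
```

Because the transform is computed within each cell, a cell with uniformly low ADT capture is not mistaken for one that lacks the surface markers altogether.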

Table 3: Single-Cell Characterization of RA Synovial Tissue

| Cell Cluster (Subset) | % of Cells (RA vs OA) | Key Transcriptomic Marker | Key Surface Protein (ADT) | Putative Pathogenic Role |
|---|---|---|---|---|
| PDGFRA+ FAPα+ Fibroblasts | 22% vs 5% | MMP3, IL6 | FAPα (high) | Tissue invasion, inflammation |
| HLA-DRhi CD86+ Macrophages | 18% vs 8% | TNF, CXCL10 | CD86 (high) | Antigen presentation, T cell activation |
| CD4+ Tph Cells | 12% vs 2% | CXCL13, PDCD1 | ICOS (high) | B cell help, ectopic lymphoneogenesis |
| Plasmablasts | 8% vs 1% | XBP1, JCHAIN | CD138 (high) | Autoantibody production |

Single-cell multi-omics workflow for dissecting RA synovial pathology.

Research Reagent Solutions (Immunology)

| Reagent/Material | Supplier Example | Function in Protocol |
|---|---|---|
| Chromium Next GEM Single Cell 5' Kit v2 | 10x Genomics | Enables simultaneous capture of single-cell transcriptome and surface protein data. |
| TotalSeq-B Antibody Cocktail | BioLegend | Oligo-tagged antibodies for CITE-seq surface protein detection. |
| Seurat R Toolkit | Satija Lab / CRAN | Comprehensive package for single-cell RNA-seq data analysis and integration with ADT data. |
| NicheNet R Package | GitHub | Predicts ligand-receptor interactions and downstream signaling from scRNA-seq data. |
| Anti-human FAPα Antibody (Sorting) | R&D Systems | Fluorescence-activated cell sorting (FACS) of pathogenic fibroblast subset for functional validation. |

These case studies exemplify the core objectives of multi-omics in translational medicine: to achieve deep molecular stratification (Oncology), to discover mechanism-informed biomarkers (Neurology), and to resolve cellular drivers and interactions (Immunology). The integration of disparate data types through structured workflows and advanced computational models generates actionable biological insights, accelerating the path from bench-scale discovery to bedside application in diagnostics and therapeutics.

Navigating the Complexity: Troubleshooting Data Integration and Biological Meaning

Common Pitfalls in Multi-Omics Experimental Design and Cohort Selection