Multi-Omics Integration Mastery: A Comprehensive Guide to Preprocessing Techniques for Robust Biological Discovery


Abstract

This comprehensive guide details the critical preprocessing pipeline for successful multi-omics data integration, tailored for researchers, scientists, and drug development professionals. We first establish the foundational principles and unique challenges of diverse omics datasets (genomics, transcriptomics, proteomics, metabolomics). We then delve into methodological approaches for normalization, batch effect correction, feature selection, and dimensionality reduction, highlighting key tools and workflows. A dedicated troubleshooting section addresses common pitfalls, data heterogeneity, and optimization strategies for computational efficiency. Finally, we review validation frameworks and comparative analyses of leading integration methods (early, intermediate, late fusion) to guide selection for specific biological questions. This article provides a complete roadmap from raw data to integrated, analysis-ready matrices for downstream discovery in translational research.

The Multi-Omics Landscape: Understanding Data Sources, Challenges, and Preprocessing Imperatives

Omics Technical Support Center

Troubleshooting Guides & FAQs

FAQ Category: Genomics (DNA Sequencing)

Q: Why is my whole-genome sequencing data showing low coverage in specific regions (e.g., GC-rich areas)?

- A: This is a common issue related to PCR amplification bias during library preparation. To mitigate:

- Protocol: Use a PCR-free library preparation kit for high-input DNA (>100ng). For low-input samples, employ kits with high-fidelity polymerases and limit PCR cycles.

- Troubleshooting: Check the GC content plot from your alignment tool. Consider using a library prep kit with specialized fragmentation (e.g., enzymatic shearing) and buffers optimized for balanced amplification.

Q: How do I handle high levels of unmapped reads in my RNA-seq experiment?

- A: High unmapped reads can stem from several sources. Follow this diagnostic protocol:

- Check Sequence Quality: Use FastQC. Trim adapters and low-quality bases with Trimmomatic or Cutadapt.

- Identify Contaminants: Align unmapped reads to databases of ribosomal RNA (Silva), phiX (common spike-in), or host genome (if working with xenografts).

- Protocol - Ribodepletion: For total RNA-seq, ensure effective ribosomal RNA depletion using probe-based kits (e.g., Ribo-Zero). Validate depletion with a Bioanalyzer.

FAQ Category: Proteomics (Mass Spectrometry)

Q: My LC-MS/MS proteomics run shows a sudden drop in peptide identifications over time. What is the cause?

- A: This typically indicates instrument or column performance issues.

- Troubleshooting Guide:

- Step 1: Check chromatography – peak shape broadening suggests column degradation. Flush and/or replace the LC column.

- Step 2: Inspect the MS source for contamination. Clean the ion transfer tube and sprayer.

- Step 3: Calibrate the mass spectrometer with standard calibration solution.

- Protocol for Column Maintenance: Perform weekly backflushes with 80% acetonitrile, 0.1% formic acid. Store columns in 90% acetonitrile.

Q: How can I improve the identification of post-translational modifications (PTMs) in a discovery experiment?

- A: PTM identification requires specialized data acquisition and processing.

- Protocol: Use enrichment strategies prior to MS. For phosphorylation, use TiO2 or IMAC beads. For acetylation, use immunoprecipitation with specific antibodies.

- Data Processing: Utilize search engines (e.g., MaxQuant, MSFragger) with open PTM searches or specify variable modifications. Manually validate spectra for low-scoring PTM sites.

FAQ Category: Metabolomics

Q: I observe significant batch effects in my untargeted metabolomics dataset. How can I correct for this during preprocessing?

- A: Correcting batch effects is critical for multi-omics integration. Implement this workflow:

- Experimental Protocol: Randomize sample injection order. Use pooled quality control (QC) samples injected at regular intervals. Include internal standards in every sample.

- Data Preprocessing Protocol: Use the MetaboAnalystR package. Perform QC-based signal correction (e.g., LOESS) first, then apply a statistical batch correction method such as ComBat.

Q: How do I handle missing values in my metabolomics intensity matrix?

- A: Missing values can be biological (true absence) or technical (below detection).

- Decision Guide: For >50% missing in a group, consider removing the feature. For lower rates, apply imputation.

- Imputation Protocol: Use k-nearest neighbors (KNN) imputation when values are missing at random and the data have a strong correlation structure. For values missing because they fall below the detection limit (technical, left-censored), use minimum value or half-minimum imputation. Never use imputation for multi-omics integration without documenting the method.
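The decision guide above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `half_min_impute`, the dictionary layout, and the use of `None` for missing values are assumptions made for the example, and the 50% threshold is applied across all samples rather than per group.

```python
def half_min_impute(matrix, max_missing_frac=0.5):
    """Drop sparse features, then fill remaining gaps with half the feature minimum.

    `matrix` maps a feature name to a list of intensities; None marks a missing value.
    """
    imputed = {}
    for feature, values in matrix.items():
        observed = [v for v in values if v is not None]
        missing_frac = 1 - len(observed) / len(values)
        if not observed or missing_frac > max_missing_frac:
            continue  # too sparse to impute reliably; feature is removed
        fill = min(observed) / 2  # half-minimum value for left-censored data
        imputed[feature] = [fill if v is None else v for v in values]
    return imputed


# Hypothetical intensity table: met_B is 75% missing and is dropped.
intensities = {
    "met_A": [120.0, None, 80.0, 100.0],
    "met_B": [None, None, None, 50.0],
}
result = half_min_impute(intensities)
print(result)
```

Whatever method is used, record the threshold and fill rule alongside the output so the imputation step is documented.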

FAQ Category: Multi-Omics Integration (Thesis Context)

Q: What is the first critical step before integrating genomic variant data with proteomic data?

- A: The critical step is identifier harmonization. You must map genomic feature IDs (e.g., Ensembl Gene IDs) to the same identifier system used in your proteomics results (e.g., UniProt accessions). Use biomaRt (R) or MyGene.info (Python) for reliable, current mapping.
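Once a mapping table has been exported from such a service, the harmonization itself is a lookup that must cope with one-to-many and missing mappings. The sketch below assumes the table is a list of (gene ID, protein ID) pairs; the IDs shown are placeholders and `harmonize` is a hypothetical helper, not part of biomaRt or MyGene.info.

```python
from collections import defaultdict


def harmonize(gene_ids, mapping_pairs):
    """Split gene IDs into uniquely mapped, ambiguously mapped, and unmapped."""
    gene_to_proteins = defaultdict(set)
    for gene, protein in mapping_pairs:
        gene_to_proteins[gene].add(protein)

    mapped, ambiguous, unmapped = {}, {}, []
    for gene in gene_ids:
        proteins = gene_to_proteins.get(gene, set())
        if len(proteins) == 1:
            mapped[gene] = next(iter(proteins))
        elif proteins:
            ambiguous[gene] = sorted(proteins)  # needs a manual or rule-based choice
        else:
            unmapped.append(gene)
    return mapped, ambiguous, unmapped


# Placeholder identifiers standing in for Ensembl and UniProt IDs.
pairs = [
    ("GENE_1", "PROT_1"),
    ("GENE_2", "PROT_2A"),
    ("GENE_2", "PROT_2B"),
]
mapped, ambiguous, unmapped = harmonize(["GENE_1", "GENE_2", "GENE_3"], pairs)
print(mapped, ambiguous, unmapped)
```

Reporting the ambiguous and unmapped sets explicitly, rather than silently dropping them, makes it easier to see how many features are lost at this step.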

Q: When normalizing different omics datasets for integration, should I use the same method for all?

- A: No. Each data type requires its own biologically appropriate normalization before integration.

- Protocol Summary: Normalize RNA-seq counts with TMM (edgeR) or DESeq2's median-of-ratios. Normalize proteomics LFQ intensities by median centering. Normalize metabolomics data by sum or probabilistic quotient normalization (PQN).

- Post-Normalization: Scale all datasets (e.g., z-score) to make them comparable for integrative algorithms like MOFA+.
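As a rough illustration of two of these steps, the following sketch median-centers log2 proteomics intensities per sample and then z-scores each feature. The function names and the toy matrix (rows are samples, columns are proteins) are assumptions for the example; in practice these steps are usually done with the packages named above.

```python
import statistics


def median_center(samples):
    """Subtract each sample's median from its log2 intensities."""
    return [[v - statistics.median(sample) for v in sample] for sample in samples]


def zscore_features(samples):
    """Scale each feature (column) to mean 0 and unit sample standard deviation."""
    scaled_cols = []
    for col in zip(*samples):
        mean, sd = statistics.mean(col), statistics.stdev(col)
        # Constant features carry no information; map them to 0 instead of dividing by 0.
        scaled_cols.append([(v - mean) / sd if sd > 0 else 0.0 for v in col])
    return [list(row) for row in zip(*scaled_cols)]


# Toy log2 LFQ intensities: 3 samples x 3 proteins.
log2_lfq = [
    [20.0, 22.0, 24.0],
    [21.0, 23.0, 26.0],
    [19.0, 22.0, 23.0],
]
centered = median_center(log2_lfq)
scaled = zscore_features(centered)
print(centered)
print(scaled)
```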

Summarized Quantitative Data

Table 1: Common Technical Challenges and Success Metrics Across Omics Fields

| Omics Layer | Common Issue | Typical Target Metric | Acceptable Range |

|---|---|---|---|

| Genomics (WGS) | Uneven Coverage | Coverage Uniformity (≥90% of target bases at >20x depth) | >0.95 |

| Transcriptomics (RNA-seq) | Mapping Rate | Percentage of Reads Aligned to Transcriptome | >70% (Human) |

| Proteomics (LC-MS/MS) | Identification Reproducibility | CV of Protein IDs across QC Samples | <20% |

| Metabolomics (LC-MS) | Instrument Drift | Retention Time Drift in QC Samples | <0.1 min |

Table 2: Recommended Data Preprocessing Tools for Multi-Omics Integration

| Data Type | Preprocessing Step | Recommended Tool/Package | Key Function for Integration |

|---|---|---|---|

| Genomics (VCF) | Variant Annotation | SnpEff / Ensembl VEP | Adds gene context for matching to other layers. |

| Transcriptomics | Normalization | DESeq2 / edgeR | Generates stable, comparable log2 expression values. |

| Proteomics | Protein Intensity Processing | MaxQuant / DIA-NN | Outputs normalized, imputed intensity matrices. |

| Metabolomics | Peak Alignment & Missing Value Imputation | XCMS / MetaboAnalystR | Creates a consistent feature-intensity table. |

Experimental Protocols

Protocol 1: Cross-Omics Sample Preparation for Paired Genomics/Transcriptomics

- Objective: Extract high-quality DNA and RNA from the same biological sample (e.g., tissue, cells).

- Materials: AllPrep DNA/RNA/miRNA Universal Kit (Qiagen), RNase-free reagents, liquid nitrogen.

- Method:

1. Lyse sample in AllPrep lysis buffer with β-mercaptoethanol. Homogenize.

2. Pass lysate through an AllPrep DNA spin column. RNA flows through; DNA binds.

3. Wash DNA column, elute DNA. Store at -20°C.

4. Add ethanol to the flow-through from step 2 to bind RNA to an RNeasy column.

5. Wash RNA column, perform on-column DNase digest, wash again, elute RNA. Store at -80°C.

- Quality Control: Assess DNA integrity by agarose gel, RNA integrity by RIN >7.0 (Bioanalyzer).

Protocol 2: Preparation of TMT-Labeled Peptides for Multiplexed Proteomics

- Objective: Label peptides from 10 different conditions for relative quantification.

- Materials: TMTpro 16plex Kit, High-pH Reversed-Phase Peptide Fractionation Kit, C18 Spin Columns.

- Method:

- Digest 100µg of protein from each sample to peptides using trypsin.

- Reconstitute each peptide sample in 100µL of 100mM TEAB buffer.

- Labeling: Add one channel of TMTpro reagent to each sample, incubate at room temperature for 1 hour.

- Quenching: Add 5% hydroxylamine, incubate for 15 minutes.

- Pooling: Combine all 10 labeled samples at a 1:1 ratio.

- Clean-up: Desalt the pooled sample using a C18 spin column.

- Fractionation: Fractionate the pooled sample into 12 fractions using high-pH reversed-phase chromatography to reduce complexity.

Visualizations

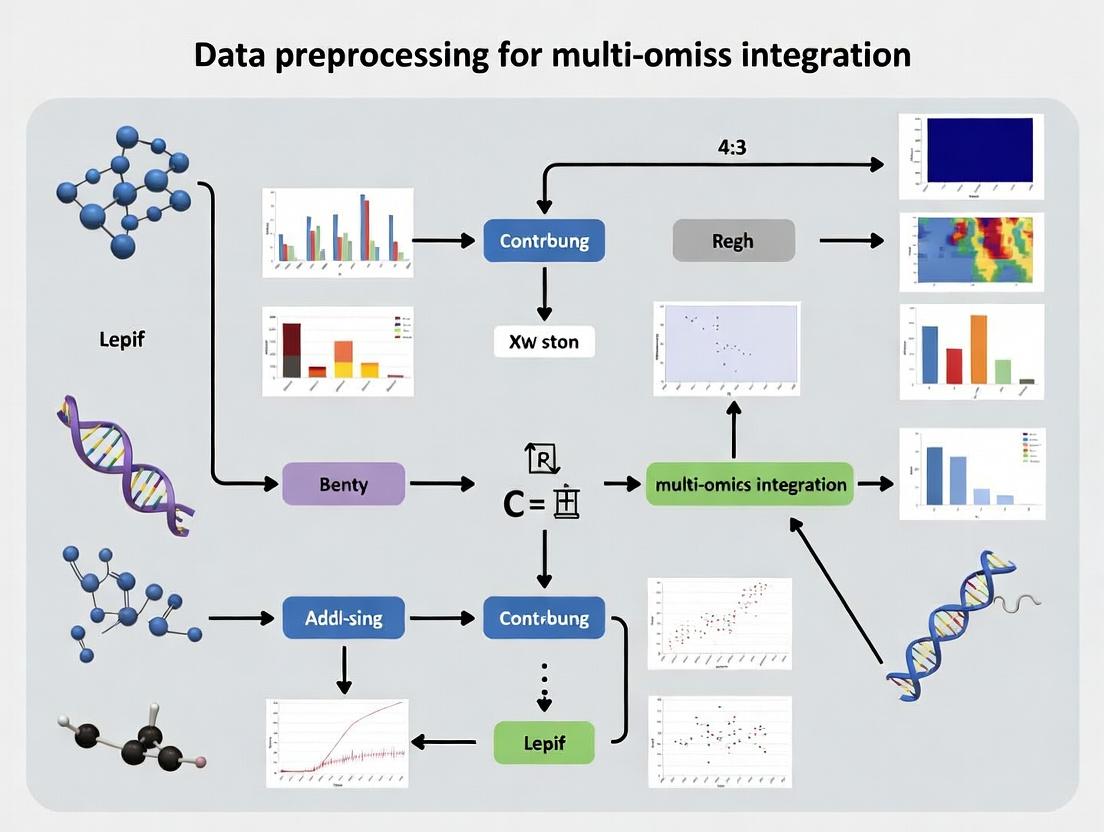

Multi-Omics Data Generation & Preprocessing Workflow

Preprocessing Pathway for Multi-Omics Integration

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Multi-Omics Sample Preparation

| Item | Function | Example Product (Supplier) |

|---|---|---|

| AllPrep DNA/RNA Kit | Simultaneous purification of genomic DNA and total RNA from a single sample. Minimizes cross-contamination. | AllPrep DNA/RNA/miRNA Universal Kit (Qiagen) |

| Mass Spectrometry Grade Trypsin | Protease for digesting proteins into peptides for LC-MS/MS analysis. High specificity for lysine/arginine. | Trypsin Platinum, MS Grade (Promega) |

| TMTpro Isobaric Labels | Set of 16 chemical tags for multiplexing up to 16 samples in a single LC-MS/MS run, enabling precise relative quantification. | TMTpro 16plex Label Reagent Set (Thermo Fisher) |

| Ribo-Zero rRNA Removal Kit | Removes cytoplasmic and mitochondrial ribosomal RNA from total RNA samples to enrich for mRNA and non-coding RNA in RNA-seq. | Ribo-Zero Plus rRNA Depletion Kit (Illumina) |

| PBS (Phosphate-Buffered Saline) | Isotonic, non-toxic buffer for washing cells and tissues to preserve native state before omics analysis. | DPBS, no calcium, no magnesium (Gibco) |

| Internal Standard Mix (Metabolomics) | A cocktail of stable isotope-labeled metabolites added to every sample for quality control and correction of ionization efficiency drift. | MSK-CAFC-005 (Cambridge Isotope Labs) |

Troubleshooting & FAQs

Q1: After initial integration of raw transcriptomics and proteomics data, my principal component analysis (PCA) plot shows clear batch effects by technology platform, not biological group. What is the primary cause and how do I fix it?

A1: The primary cause is technical variation (e.g., different dynamic ranges, detection limits, and noise profiles) overwhelming biological signal. To fix this, you must apply platform-specific normalization before integration. For RNA-Seq counts, use a method like DESeq2's median-of-ratios or edgeR's TMM. For mass spectrometry proteomics, use variance-stabilizing normalization (VSN) or quantile normalization. Never integrate raw counts or raw intensities directly.

Q2: My multi-omics clustering results are inconsistent. Metabolomics data often clusters samples separately from genomics data. Is this a technical artifact or a real biological discrepancy?

A2: It is most likely a technical artifact stemming from differing data distributions. Metabolomics data (e.g., from LC-MS) is often compositional and log-normally distributed, while other layers follow different distributions (methylation beta values, for example, are bounded between 0 and 1 and approximately beta-distributed). Direct integration treats these as comparable, which they are not. Apply probabilistic (e.g., MOFA+) or kernel-based integration methods that can model these distinct distributions, or transform each omics layer to a compatible scale (e.g., rank-based or quantile transformation).

Q3: When attempting correlation analysis between mRNA expression and protein abundance from matched samples, I find consistently low correlation coefficients (Pearson r < 0.3). Does this mean there is little biological relationship?

A3: Not necessarily. Low direct correlation often results from: 1) Temporal delays: mRNA changes precede protein changes. 2) Post-transcriptional regulation: translation efficiency and protein turnover are not captured by either dataset. 3) Technical limitations: different sample aliquots, missing value thresholds, and depth of coverage. Implement lagged correlation analyses or use dynamic Bayesian networks to model time-series data. Ensure matched samples are from the same aliquot and impute missing values appropriately per platform.

Q4: I have missing values for >50% of metabolites in my dataset. Can I simply remove these features before integration with more complete genomics data?

A4: No. Aggressive removal will cause significant bias, as missingness is often non-random (e.g., lower abundance metabolites fall below detection). Imputation is required but must be omics-specific. For metabolomics, use methods like Random Forest (RF) or Bayesian PCA (BPCA) imputation, which exploit the correlation structure between features. Do not use mean/median imputation. After separate imputation, integrate with other layers.

Q5: My integrated model fails to validate on an independent dataset. Are the initial raw data preprocessing steps likely culprits?

A5: Yes. Inconsistent preprocessing between discovery and validation cohorts is a major culprit. Ensure every normalization, batch correction, and transformation step is applied identically, using parameters learned from the training data or robust, standardized pipelines (e.g., SAMtools for WES, MaxQuant for proteomics). Never re-estimate preprocessing parameters on the validation data alone.
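The point about reusing parameters learned from the training data can be illustrated with a small scaler. This is a sketch under simple assumptions (rows are samples, columns are features, z-score scaling only); `fit_scaler` and `apply_scaler` are hypothetical names.

```python
import statistics


def fit_scaler(train_rows):
    """Learn per-feature mean and standard deviation from the discovery cohort only."""
    return [(statistics.mean(col), statistics.stdev(col)) for col in zip(*train_rows)]


def apply_scaler(rows, params):
    """Apply previously learned parameters without re-estimating them."""
    return [
        [(v - mean) / sd if sd > 0 else 0.0 for v, (mean, sd) in zip(row, params)]
        for row in rows
    ]


discovery = [[1.0, 10.0], [3.0, 14.0]]
validation = [[2.0, 12.0]]

params = fit_scaler(discovery)                      # estimated once, then frozen
validation_scaled = apply_scaler(validation, params)
print(validation_scaled)
```

Scaling the validation cohort with its own mean and standard deviation would hide any real shift between cohorts, which is one way a model can appear to fail or succeed for the wrong reason.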

Key Experimental Protocols for Preprocessing

Protocol 1: RNA-Seq Read Normalization and Batch Correction

- Alignment & Quantification: Align reads to reference genome using STAR or HISAT2. Generate gene-level counts using featureCounts.

- Normalization: Load raw count matrix into R/Bioconductor. Use `DESeq2` to calculate size factors (median-of-ratios method) and generate variance-stabilized transformed data.

- Batch Effect Assessment: Perform PCA on the transformed data. Color samples by known technical batches (sequencing run, library prep date).

- Correction (if needed): Apply a linear model-based method like `limma::removeBatchEffect()` to the transformed data, or use `ComBat-seq` on the raw counts. Neither method can separate batch from biology when batch is fully confounded with biological group.
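To make the median-of-ratios idea concrete, here is a minimal pure-Python sketch. It follows the general principle (each sample's size factor is the median ratio of its counts to the per-gene geometric mean) but is not DESeq2's implementation; the function name and input layout are assumptions.

```python
import math
import statistics


def median_of_ratios_size_factors(counts):
    """counts: list of genes, each a list of raw counts (one per sample)."""
    n_samples = len(counts[0])
    log_ratios = [[] for _ in range(n_samples)]
    for gene_counts in counts:
        if any(c == 0 for c in gene_counts):
            continue  # a zero count gives a zero geometric mean, so the gene is skipped
        log_geo_mean = sum(math.log(c) for c in gene_counts) / n_samples
        for j, c in enumerate(gene_counts):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    # Assumes at least one gene has non-zero counts in every sample.
    return [math.exp(statistics.median(r)) for r in log_ratios]


# Toy data: sample 2 was sequenced twice as deeply as sample 1.
raw_counts = [
    [10, 20],
    [100, 200],
    [5, 10],
    [0, 3],   # skipped because of the zero
]
size_factors = median_of_ratios_size_factors(raw_counts)
normalized = [[c / sf for c, sf in zip(gene, size_factors)] for gene in raw_counts]
print(size_factors)
```

Dividing each sample's counts by its size factor puts the two samples on a common scale; the first three genes end up with equal normalized counts in both samples.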

Protocol 2: LC-MS Metabolomics Data Preprocessing

- Peak Picking & Alignment: Process raw `.raw` or `.d` files with XCMS or MS-DIAL for peak detection, alignment, and integration.

- Missing Value Imputation: Filter features with >80% missingness in QC samples. For remaining missing values, apply RF imputation using the `missForest` R package, a nonparametric method that makes no distributional assumptions.

- Normalization: Perform probabilistic quotient normalization (PQN) to account for dilution effects, followed by log-transformation (generalized log, glog) to stabilize variance.

- Batch Correction: Use quality control-based robust LOESS signal correction (QC-RLSC) or `ComBat` on the log-transformed data.
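The PQN step can be sketched as follows. This is a simplified illustration: it uses the feature-wise median across all samples as the reference spectrum, omits the total-area normalization that often precedes PQN, and assumes a complete matrix of positive intensities. The function name is hypothetical.

```python
import statistics


def pqn_normalize(samples):
    """samples: list of samples, each a list of positive feature intensities."""
    # Reference spectrum: median intensity of each feature across samples.
    reference = [statistics.median(col) for col in zip(*samples)]
    normalized = []
    for sample in samples:
        quotients = [v / ref for v, ref in zip(sample, reference) if ref > 0]
        dilution = statistics.median(quotients)  # most probable dilution factor
        normalized.append([v / dilution for v in sample])
    return normalized


# Toy data: the same profile measured at 1x, 0.5x, and 2x dilution.
peak_areas = [
    [100.0, 200.0, 300.0],
    [50.0, 100.0, 150.0],
    [200.0, 400.0, 600.0],
]
pqn = pqn_normalize(peak_areas)
print(pqn)
```

Because the dilution factor is a median of quotients, a handful of genuinely changing metabolites has little influence on it, which is the main reason PQN is preferred over simple sum normalization when a few features dominate the total signal.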

Protocol 3: Multi-Omics Integration via MOFA+

- Input Preparation: Prepare each omics dataset as a matrix (samples x features). Apply omics-specific normalization and scaling (center and scale unit variance for continuous data).

- Model Setup: Create the MOFA object in R (`create_mofa()`). Define the data structure and set data, model, and training options with `prepare_mofa()`.

- Model Training: Run `run_mofa()` with default options to decompose variation into factors. Start with a generous number of factors; MOFA+ can drop factors that explain negligible variance during training.

- Downstream Analysis: Extract factors (`get_factors()`) and weights (`get_weights()`) to interpret drivers of variation across omics layers.

Table 1: Common Technical Disparities in Raw Multi-Omics Data

| Omics Layer | Typical Raw Format | Dynamic Range | Missing Value Rate | Primary Source of Noise |

|---|---|---|---|---|

| Genomics (WES) | FASTA/FASTQ, VCF | High (allele fractions) | Low (<5%) | Sequencing errors, coverage bias |

| Transcriptomics (RNA-Seq) | FASTQ, raw counts | Very High (>10⁵) | Low | Library prep bias, GC content |

| Proteomics (LC-MS/MS) | .raw, peak intensities | Moderate (10⁴) | High (15-40%) | Ion suppression, stochastic sampling |

| Metabolomics (LC-MS) | .raw, peak areas | Moderate (10⁴) | Very High (30-60%) | Matrix effects, detection limits |

| Methylation (Array) | .idat, beta values | Fixed (0-1) | Very Low | Probe design bias, type I/II shift |

Table 2: Impact of Normalization on Correlation Between Paired mRNA-Protein

| Preprocessing Step Applied to Both Layers | Median Pearson Correlation (Simulated Dataset) | Key Improvement |

|---|---|---|

| None (Raw Data) | 0.18 | Baseline |

| Platform-Specific Normalization | 0.42 | Reduces technical variance |

| Normalization + Batch Correction | 0.51 | Removes systematic bias |

| Normalization + Batch Correction + Log-Transform | 0.55 | Stabilizes variance across range |

Visualizations

Title: Multi-Omics Preprocessing Workflow for Integration

Title: Core Challenges Blocking Raw Multi-Omics Integration

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Primary Function in Preprocessing | Key Consideration |

|---|---|---|

| UMI (Unique Molecular Identifiers) | Attached during cDNA library prep to correct for PCR amplification bias in RNA-Seq, improving quantification accuracy. | Essential for single-cell RNA-Seq; becoming standard for bulk low-input RNA-Seq. |

| SILAC (Stable Isotope Labeling by Amino acids in Cell culture) | Metabolic labeling for proteomics; creates a reference channel for highly accurate relative quantification, reducing technical variance. | Requires cell culture, not suitable for tissue/clinical samples. Alternative: TMT/iTRAQ. |

| Internal Standard Mix (Metabolomics) | A cocktail of stable isotope-labeled metabolites added pre-extraction to correct for ion suppression, matrix effects, and recovery losses in LC-MS. | Should cover multiple chemical classes; critical for absolute quantification. |

| Bisulfite Conversion Reagents | Converts unmethylated cytosines to uracil for DNA methylation analysis. Efficiency and completeness of conversion is critical for data quality. | Incomplete conversion is a major source of bias; requires careful optimization and controls. |

| ERCC (External RNA Controls Consortium) Spike-Ins | Synthetic RNA molecules of known concentration added to samples pre-RNA-Seq to assess technical sensitivity, dynamic range, and for normalization. | Useful for cross-study integration and assessing platform performance. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My gene expression and DNA methylation data are on vastly different scales, making integration impossible. What's the first preprocessing step I should take?

A: Apply feature-wise scaling. For RNA-seq count data, perform a variance-stabilizing transformation (VST) or convert to log2(CPM+1). For methylation beta values (0-1 range), consider M-values for statistical analyses. This achieves comparability by placing both datasets into a similar, continuous numerical space suitable for multivariate analysis.
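Both transformations are short formulas. The sketch below shows log2(CPM+1) for one sample's counts and the beta-to-M-value conversion, M = log2(beta / (1 - beta)); the clipping constant `eps` is an assumption added to avoid infinite values at exactly 0 or 1.

```python
import math


def log2_cpm(counts):
    """Convert one sample's raw counts to log2(counts per million + 1)."""
    library_size = sum(counts)
    return [math.log2(c / library_size * 1e6 + 1) for c in counts]


def beta_to_m(beta, eps=1e-6):
    """Convert a methylation beta value (0-1) to an M-value."""
    beta = min(max(beta, eps), 1 - eps)  # keep the logit finite
    return math.log2(beta / (1 - beta))


sample_counts = [1, 999_999]
print(log2_cpm(sample_counts))                      # first gene: 1 CPM, so log2(2) = 1
print([beta_to_m(b) for b in (0.2, 0.5, 0.8)])
```

M-values are unbounded and roughly symmetric around 0, which makes them better suited than beta values to methods that assume approximately Gaussian input.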

Q2: After integrating my proteomics and transcriptomics datasets, the results are dominated by technical batch effects, not biology. How can I reduce this noise?

A: Identify and correct for batch effects using statistical models. For known batch variables (e.g., sequencing run, sample plate), use ComBat or limma's removeBatchEffect. For unknown latent factors, use tools like SVA or RUVSeq. Always apply these methods within each omics layer before integration.

Q3: When I fuse metabolomics and microbiome data, missing values cause models to fail. What are the standard imputation strategies?

A: The strategy depends on the missing data mechanism. See the protocol below and consult the table for guidance.

Experimental Protocol: Handling Missing Values in Metabolomics Data for Integration

- Assessment: Calculate the percentage of missing values per feature (metabolite) and per sample.

- Filtering: Remove features with >20% missing values; missingness at this level is likely Missing Not At Random (MNAR). Remove samples with >30% missing values.

- Imputation:

- For values assumed MNAR (missing due to low abundance), use minimum value imputation (e.g., half the minimum detected value) or `imputeLCMD`'s QRILC method, which is designed for left-censored data.

- For values assumed Missing At Random (MAR), use a model-based approach. For metabolomics, the `mice` R package with predictive mean matching is recommended.

- For large datasets, random forest imputation (`missForest`) is robust but computationally intensive.

- Validation: Post-imputation, perform a PCA and compare the variance structure before and after to ensure major biological patterns are not artificially created.
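The assessment and filtering steps of this protocol can be sketched as below. The order (features first, then samples) is an assumption, since the protocol does not specify one; `None` marks a missing value and the function names are hypothetical.

```python
def missing_fraction(values):
    """Fraction of entries that are missing (None)."""
    return sum(v is None for v in values) / len(values) if values else 0.0


def filter_by_missingness(matrix, feature_names,
                          max_feature_missing=0.2, max_sample_missing=0.3):
    """matrix: rows are samples, columns are features."""
    keep_features = [
        j for j in range(len(feature_names))
        if missing_fraction([row[j] for row in matrix]) <= max_feature_missing
    ]
    reduced = [[row[j] for j in keep_features] for row in matrix]
    keep_samples = [
        i for i, row in enumerate(reduced)
        if missing_fraction(row) <= max_sample_missing
    ]
    return (
        [reduced[i] for i in keep_samples],
        [feature_names[j] for j in keep_features],
        keep_samples,
    )


# Toy matrix: 5 samples x 3 metabolites.
data = [
    [1.0, None, 3.0],
    [2.0, None, 1.0],
    [1.5, 4.0, 2.0],
    [2.5, None, 2.5],
    [None, 5.0, None],
]
filtered, kept_features, kept_samples = filter_by_missingness(data, ["a", "b", "c"])
print(kept_features, kept_samples)
```

Here metabolite `b` (60% missing) is removed first, after which the last sample has no observed values left and is removed as well.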

Table 1: Missing Value Imputation Methods by Omics Type and Mechanism

| Omics Type | Likely Mechanism | Recommended Method | Software/Package | Key Parameter |

|---|---|---|---|---|

| Metabolomics | MNAR (below LOD) | Half-minimum imputation | In-house script | imp_val = min(feature)/2 |

| Metabolomics | MNAR (left-censored) | QRILC | imputeLCMD R | method = "QRILC" |

| Proteomics | MNAR | MinProb imputation | DEP R | fun = "MinProb" |

| Transcriptomics | Low (MAR) | k-Nearest Neighbour | impute R | k = 10 |

Q4: How do I normalize single-cell RNA-seq data before fusing it with ATAC-seq data from the same cells?

A: This is a multi-modal single-cell problem. Use a pipeline designed for multi-modal assays such as CITE-seq or SHARE-seq. For scRNA-seq, normalize using SCTransform. For scATAC-seq, use term frequency-inverse document frequency (TF-IDF) normalization. Key enabling fusion step: use weighted nearest neighbor (WNN) analysis via Seurat's FindMultiModalNeighbors, or tools like MOFA+, to learn a shared representation that aligns the two omics spaces.

Q5: My multi-omics clustering yields inconsistent sample groupings across platforms. How can I diagnose the issue?

A: This often stems from inadequate comparability preprocessing. Follow this diagnostic workflow:

Title: Diagnostic Workflow for Inconsistent Multi-Omics Clustering

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Multi-Omics Preprocessing Benchwork

| Item | Function in Preprocessing Context | Example Product/Kit |

|---|---|---|

| RNA Stabilization Reagent | Preserves transcriptomic profile at collection, reducing technical noise from degradation. | RNAlater, PAXgene |

| Methylation-Specific Enzymes | Enables bisulfite conversion for DNA methylation analysis, defining the measurable feature set. | EZ DNA Methylation Kit (Zymo) |

| Stable Isotope Standards | Spike-in controls for mass spectrometry (proteomics/metabolomics) for normalization and comparability. | SPLASH Lipidomix, Proteome Dynamics Std |

| UMI Adapters (NGS) | Introduces Unique Molecular Identifiers during library prep to correct PCR amplification noise. | TruSeq UMI Adapters (Illumina) |

| Cell Hashing Antibodies | Tags cells with multiplexing barcodes, allowing batch effect identification/correction post-sequencing. | BioLegend TotalSeq Antibodies |

| Bench-top QC Instrument | Provides initial quantitative data (conc., RIN, DV200) to guide pre-processing filtering decisions. | Bioanalyzer/TapeStation (Agilent), Qubit (Thermo) |

Q6: What's a standard workflow to preprocess LC-MS metabolomics data for fusion with miRNA data?

A: The goal is noise reduction and comparability. The metabolomics pipeline is critical.

Title: Parallel Preprocessing Workflow for Metabolomics and miRNA Data Fusion

Troubleshooting Guides & FAQs

FAQ 1: Why do I encounter extreme value differences (scale issues) when trying to integrate RNA-seq counts with microarray intensity data?

- Answer: This is a fundamental characteristic of the assays. RNA-seq yields discrete count data (e.g., 0, 15, 2245), while microarrays produce continuous fluorescence intensities (e.g., 4.562, 12.891). Direct integration without normalization leads to technical bias overwhelming biological signal. The recommended protocol is to perform within-assay transformation (e.g., log2 for RNA-seq counts using a pseudocount; quantile normalization for microarrays) followed by cross-assay scaling (e.g., z-score standardization per feature across the integrated dataset) to place all measurements on a comparable, unitless scale.

FAQ 2: My multi-omics dataset has a high proportion of zeros. How do I determine if they are biological zeros (true absence) or technical missing values (dropouts)?

- Answer: Distinguishing these is critical for downstream analysis. For single-cell RNA-seq or metabolomics, zeros are often a mix of both. Implement the following experimental and computational protocol:

- Spike-in Controls: Use external spike-in RNAs (for scRNA-seq) or standards (for metabolomics) to model the technical dropout rate relative to expression/abundance.

- Detection Pattern Analysis: Correlate zero occurrences with low sequencing depth (for scRNA-seq) or low ion intensity (for mass-spec). Zeros correlated with low signal-to-noise are likely technical.

- Imputation Testing: Apply assay-specific imputation tools (e.g., MAGIC for scRNA-seq, NN-based for metabolomics) only to features suspected of technical dropout, and validate imputed values with orthogonal biological knowledge.

FAQ 3: What is the best method to handle missing values (NAs) in a combined proteomics and transcriptomics dataset where >20% of values are missing?

- Answer: The method depends on the missingness mechanism, as summarized in the table below. A standard protocol is:

- Characterize Missingness: Use statistical tests (e.g., Little's test) or visualization to classify NAs as Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR).

- Apply Stratified Strategy:

- For MCAR/MAR: Use k-Nearest Neighbors (k-NN) imputation within each assay separately, as correlations are stronger within than across assays.

- For MNAR (common in proteomics due to limits of detection): Use left-censored imputation methods (e.g., `MinProb` from the `imputeLCMD` R package) or replace with a minimal value derived from the assay's detection limit.

- Benchmark: Always compare the stability of your downstream integration model (e.g., clustering results) with and without imputation.

Table 1: Characteristic Comparison of Major Omics Assays

| Assay Type | Typical Scale | Data Distribution | Expected Sparsity (%) | Common Missingness Cause |

|---|---|---|---|---|

| Bulk RNA-seq | Counts (0 to 10^6+) | Negative Binomial | Low (<5%) | Low expression, sequencing artifacts |

| Single-Cell RNA-seq | UMI Counts (0 to 10^4+) | Zero-inflated Negative Binomial | High (50-90%) | Technical dropout, biological absence |

| Microarray | Continuous Intensity | Log-normal | Very Low (<1%) | Probe failure, image artifact |

| Shotgun Proteomics (LC-MS) | Peak Intensity/Count | Log-normal with heavy tail | Moderate-High (10-40%) | Low abundance, detection limit (MNAR) |

| Metabolomics (LC-MS) | Peak Area | Log-normal | Moderate (10-30%) | Detection limit, ion suppression |

| Methylation Array (450k/EPIC) | Beta-value (0-1) | Bimodal (0 & 1 peaks) | Very Low (<1%) | Probe binding failure |

Experimental Protocols

Protocol A: Assessing and Normalizing Data Scale Across Assays

- Load Data: Import feature matrices for each omics layer (e.g., genes, proteins).

- Visualize Spread: Generate boxplots of raw values per assay.

- Apply Assay-Specific Transform:

- RNA-seq counts: `log2(count + 1)`.

- Microarray/Proteomics intensities: `log2(intensity)`.

- Methylation Beta-values: `logit2(Beta)` (M-values).

- Cross-Assay Scaling: Apply robust z-score scaling, `(value - median(feature)) / mad(feature)`, for each feature across samples in the combined dataset.
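A minimal sketch of the robust z-score for a single feature follows. The `constant` argument is an assumption added for the example: R's `mad()` multiplies the raw median absolute deviation by 1.4826 by default so that it estimates the standard deviation for normal data, and the same default is used here.

```python
import statistics


def robust_zscore(values, constant=1.4826):
    """Scale one feature by its median and (scaled) median absolute deviation."""
    med = statistics.median(values)
    mad = constant * statistics.median([abs(v - med) for v in values])
    if mad == 0:
        return [0.0 for _ in values]  # constant feature: nothing to scale
    return [(v - med) / mad for v in values]


feature = [1.0, 2.0, 3.0, 4.0, 100.0]   # one extreme outlier
print(robust_zscore(feature))
print(robust_zscore(feature, constant=1.0))
```

Unlike the mean and standard deviation, the median and MAD are barely moved by the outlier, so the other four samples keep a sensible spread after scaling.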

Protocol B: Diagnosing Missing Value Mechanisms

- Create Missingness Indicator Matrix: Binary matrix (1=missing, 0=present).

- Correlate with Observed Data: For each feature, test if the mean of present values in other assays differs between groups where the target feature is missing vs. present (t-test). A significant p-value suggests MAR.

- Test for MCAR: Perform Little's statistical test on a random subset of features. A non-significant result (p > 0.05) is consistent with MCAR.

- Analyze Detection Limits: Plot missing value frequency vs. average signal intensity in related assays. A sharp increase below a threshold suggests MNAR.
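The detection-limit check can be approximated numerically. The sketch below is a simplification of the step above: it uses each feature's own mean observed intensity rather than signal from related assays, and summarizes the relationship with a Pearson correlation instead of a plot. Function names and the toy data are assumptions.

```python
import math


def pearson(x, y):
    """Plain Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def missingness_vs_intensity(features):
    """Correlate each feature's missing rate with its mean observed intensity."""
    rates, means = [], []
    for values in features.values():
        observed = [v for v in values if v is not None]
        if not observed:
            continue  # no observed signal to relate missingness to
        rates.append(1 - len(observed) / len(values))
        means.append(sum(observed) / len(observed))
    return pearson(means, rates)


# Toy log2 intensities: weaker features are missing more often.
log_intensity = {
    "f1": [10.0, None, None, 11.0],
    "f2": [15.0, 16.0, None, 15.5],
    "f3": [20.0, 21.0, 20.5, 19.5],
}
r = missingness_vs_intensity(log_intensity)
print(round(r, 3))
```

A strongly negative correlation is consistent with MNAR (values lost below a detection limit); a correlation near zero does not prove MCAR, so it should be read together with the other checks in this protocol.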

Visualizations

Title: Multi-Omics Data Preprocessing Workflow

Title: Decision Pathway for Missing Value Mechanism & Imputation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Tools for Multi-Omics Preprocessing Validation

| Item | Function in Preprocessing Context |

|---|---|

| External RNA Controls Consortium (ERCC) Spike-in Mix | Added to RNA-seq samples pre-extraction to create a standard curve. Used to calibrate technical noise, estimate transcript abundance, and identify the limit of detection for distinguishing low expression from dropout. |

| Equimolar Protein Standard (e.g., MassPrep Mix) | A known mixture of proteins used in proteomics. Helps calibrate mass spectrometer response, identify technical missingness (MNAR) due to low ionizability, and normalize runs. |

| Synthetic Metabolite Standards (Isotope-labeled) | Spiked into samples for metabolomics. Essential for peak identification, correcting for ion suppression effects, and assessing technical variation that contributes to sparsity. |

| Control Cell Lines (e.g., HEK293, NA12878) | Profiled across all assays in parallel with experimental samples. Provides a baseline to disentangle assay-specific technical batch effects from biological variation during integration. |

| Bioinformatics Pipelines (Nextflow/Snakemake) | Workflow managers that encapsulate preprocessing steps (normalization, transformation, imputation) for reproducibility and consistent application across all assay data types. |

| Benchmarking Datasets (e.g., SEQC, MAQC) | Public, well-characterized multi-omics datasets with known outcomes. Used to validate that your preprocessing pipeline preserves biological signal and does not introduce artifacts. |

Troubleshooting Guides & FAQs

Q1: During multi-omics integration, my PCA/MDS plots show strong batch separation instead of biological groups. What is the primary cause and how can I diagnose it?

A: This is a classic symptom of Batch Effect dominance. Batch effects are technical variations introduced by processing date, reagent lot, instrument, or personnel. They can be stronger than the biological signal of interest.

- Diagnosis: Create a PCA plot colored by `Batch ID` and another colored by `Treatment Group`. If samples cluster more tightly by batch than by treatment group, you have a confirmed batch effect.

- Primary Solution: Account for batch explicitly during preprocessing. Use ComBat (sva package in R) or similar batch correction tools after normalization but before downstream integration. Critical Note: Batch correction should be applied separately to each omics dataset before integration, not to the integrated matrix.

Q2: I have missing metadata for some legacy samples. Can I still include them in my integrated analysis?

A: Proceed with extreme caution. Samples with missing critical metadata (e.g., Sample Type, Collection Date, Batch) are high-risk and can confound the entire analysis.

- Recommended Protocol:

- Isolate: Initially, analyze the dataset with and without the legacy samples.

- Correlate: Check if the legacy samples form an outlier cluster in an unsupervised analysis. Use hierarchical clustering or PCA.

- Impute with Caution: Only non-critical, descriptive metadata (e.g., `Patient Height`) can be imputed using mean/median values from the cohort. Never impute core experimental design metadata (`Batch`, `Group`).

- Flag: If included, clearly flag these samples in all results and figures.

Q3: How do I align samples correctly when each omics dataset (e.g., RNA-seq, Proteomics) has a different sample ID format or some mismatches?

A: Sample misalignment is a major source of failed integration. A rigorous alignment protocol is required.

- Alignment Protocol:

- Create a Master Metadata Table: Start with a central table that has one row per unique biological subject/sample.

- Use a Universal Key: Create a `Universal_Sample_ID` (e.g., `PatientID_Timepoint_Tissue`).

- Cross-Reference Table: Build a separate "Alignment Table" that maps each platform-specific sample ID (e.g., `SeqID_123`, `Plex_456`) to the `Universal_Sample_ID`.

- Validation Script: Write a script in R or Python to verify that every ID in each data matrix has exactly one match in the master metadata table. Discard samples without a match.
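The validation step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the function name `validate_alignment`, the report layout, and the example IDs are hypothetical, and the alignment table is assumed to be a list of `(platform_id, universal_id)` pairs.

```python
from collections import Counter

def validate_alignment(matrix_ids, alignment_table, master_ids):
    """Classify each platform-specific ID as matched, unmatched, or ambiguous.

    matrix_ids: sample IDs taken from one omics data matrix.
    alignment_table: list of (platform_id, universal_id) pairs (assumed layout).
    master_ids: Universal_Sample_ID values from the master metadata table.
    """
    hits = Counter(pid for pid, _ in alignment_table)
    mapping = dict(alignment_table)
    master = set(master_ids)
    report = {"matched": {}, "unmatched": [], "ambiguous": []}
    for pid in matrix_ids:
        if hits[pid] > 1:
            # The same platform ID maps to several universal IDs.
            report["ambiguous"].append(pid)
        elif hits[pid] == 1 and mapping[pid] in master:
            report["matched"][pid] = mapping[pid]
        else:
            # No mapping, or the mapped universal ID is absent from the master table.
            report["unmatched"].append(pid)
    return report

alignment = [
    ("SeqID_123", "PT-001_Day7_Plasma"),
    ("Plex_456", "PT-001_Day7_Plasma"),
    ("SeqID_124", "PT-002_Day7_Plasma"),
    ("SeqID_124", "PT-003_Day7_Plasma"),  # deliberate duplicate mapping
]
master = ["PT-001_Day7_Plasma", "PT-002_Day7_Plasma"]
report = validate_alignment(["SeqID_123", "SeqID_124", "SeqID_999"], alignment, master)
print(report)
```

Reporting ambiguous and unmatched IDs separately, rather than silently dropping them, makes it easier to resolve discrepancies with the lab before any sample is discarded.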

Q4: What is the minimum essential metadata required for any multi-omics experiment to ensure reproducibility?

A: The following table categorizes the minimum essential metadata. Failure to document these can render a study irreproducible.

Table 1: Minimum Essential Metadata for Multi-Omics Studies

| Category | Field | Example | Purpose in Preprocessing |
|---|---|---|---|
| Sample Identity | SampleID, SubjectID, Timepoint, Tissue/Cell Type | PT-001, Day 7, Plasma | Core alignment of measurements across platforms. |
| Experimental Design | Treatment_Group, Dose, Phenotype | Control, 10uM_DrugA, Responder | Defines the biological question and comparison groups. |
| Batch Information | ProcessingDate, SequencingRun, LC-MSBatch, ReagentLot | 2023-10-27, SNSX_2305 | Critical for diagnosing and correcting technical noise. |
| Technical Parameters | RNAIntegrityNumber (RIN), LibraryPrepKit, MS_Instrument | RIN=8.5, TMT_16plex, Orbitrap_Fusion | Assesses data quality and identifies platform-specific biases. |

Q5: My differential analysis results are inconsistent between omics layers. Could this be caused by metadata issues?

A: Yes, inconsistencies often originate in metadata, not the algorithms.

- Troubleshooting Steps:

- Verify Group Alignment: Ensure the `Treatment_Group` label for each sample is identical and correct across the metadata for RNA-seq, proteomics, etc. A single mislabeled sample (e.g., `Control` vs `Ctrl`) can skew results.

- Check for Confounding: Create a contingency table to see if `Batch` is confounded with `Group`. For example, if all `Treatment` samples were sequenced in one batch and all `Controls` in another, the batch effect is inseparable from the biological effect. The study design is fundamentally flawed.

- Subset Analysis: Re-run analysis using only the perfectly aligned, non-confounded subset of samples. If results become consistent, the problem was metadata alignment.
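The confounding check described above can be illustrated with a small Python sketch. The helper names `batch_group_table` and `is_fully_confounded` are hypothetical, and the definition of "fully confounded" used here (every batch contains samples from only one group) is an assumption made for the example.

```python
from collections import Counter

def batch_group_table(batches, groups):
    """Count samples for every (batch, group) combination."""
    return Counter(zip(batches, groups))

def is_fully_confounded(batches, groups):
    """Return True when every batch contains samples from only one group."""
    groups_per_batch = {}
    for batch, group in zip(batches, groups):
        groups_per_batch.setdefault(batch, set()).add(group)
    return all(len(members) == 1 for members in groups_per_batch.values())

# Confounded design: each batch holds a single group.
bad_batches = ["B1", "B1", "B2", "B2"]
bad_groups = ["Treatment", "Treatment", "Control", "Control"]

# Balanced design: both groups appear in both batches.
ok_batches = ["B1", "B1", "B2", "B2"]
ok_groups = ["Treatment", "Control", "Treatment", "Control"]

print(batch_group_table(bad_batches, bad_groups))
print(is_fully_confounded(bad_batches, bad_groups))
print(is_fully_confounded(ok_batches, ok_groups))
```

Partial confounding (strongly unbalanced but not empty cells) is not flagged by this sketch; inspecting the printed table remains necessary in that case.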

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Kits for Multi-Omics Sample Preparation

| Item | Function in Multi-Omics Workflow | Critical Metadata to Record |

|---|---|---|

| PAXgene Blood RNA Tube | Stabilizes intracellular RNA profile at collection for transcriptomics. | Collection Tube Lot, Time_to_Stabilization |

| TMTpro 18plex Isobaric Label | Enables multiplexed quantitative proteomics of up to 18 samples in one MS run. | Label Kit Lot, Channel-to-Sample Assignment (crucial!). |

| AllPrep DNA/RNA/Protein Kit | Simultaneous isolation of multiple molecular species from a single sample aliquot. | Kit Lot, Elution Buffer Volume (impacts concentration). |

| DNase I (RNase-free) | Removes genomic DNA contamination from RNA preparations. | Enzyme Lot, Incubation Time. |

| Trypsin (Sequencing Grade) | Digests proteins into peptides for LC-MS/MS analysis. | Enzyme Lot, Protease-to-Protein Ratio. |

| PCR Barcoding Primers (for scRNA-seq) | Adds unique sample barcodes during library prep for single-cell multiplexing. | Primer Set ID, Barcode Sequence for each sample. |

Experimental Protocols

Protocol 1: Systematic Metadata Collection and Validation

Objective: To establish an error-free metadata table for multi-omics integration.

Materials: Sample list, experimental design notes, lab notebooks, electronic records.

Methodology:

- Design Phase: Before sample collection, create a metadata spreadsheet template with enforced vocabulary (e.g., `Control`, `Treated`) for key fields.

- Centralized Entry: Assign one person to be the metadata curator. All data (sample condition, storage location, processing dates) is reported to the curator.

- Cross-Validation: When each omics dataset is received, the curator matches the provided sample list against the master metadata table. Discrepancies are resolved with the lab technician immediately.

- Version Control: Save the metadata table as a new, dated version after every major update (e.g., `Metadata_ProjectX_v2.3_20231027.csv`). Use a changelog.
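An enforced vocabulary can also be checked programmatically whenever the metadata table is updated. The following is a minimal sketch; the `ALLOWED` dictionary, the function name, and the example rows are illustrative assumptions rather than part of the protocol.

```python
# Hypothetical controlled vocabulary for key fields.
ALLOWED = {"Treatment_Group": {"Control", "Treated"}}

def find_vocabulary_violations(rows, allowed=ALLOWED):
    """Return (row_index, field, value) for each value outside the vocabulary.

    A missing field is reported with value None.
    """
    violations = []
    for idx, row in enumerate(rows):
        for field, vocab in allowed.items():
            value = row.get(field)
            if value not in vocab:
                violations.append((idx, field, value))
    return violations

rows = [
    {"SampleID": "PT-001", "Treatment_Group": "Control"},
    {"SampleID": "PT-002", "Treatment_Group": "Ctrl"},  # non-standard label
    {"SampleID": "PT-003"},                             # field missing
]
violations = find_vocabulary_violations(rows)
print(violations)
```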

Protocol 2: Batch Effect Diagnosis Using PCA

Objective: To visually and statistically assess the impact of batch effects.

Materials: Normalized omics data matrix (e.g., gene expression), associated metadata table.

Software: R with ggplot2 and stats packages.

Methodology:

- Perform PCA on the normalized data matrix (e.g., using `prcomp()` on transposed log-counts).

- Extract the first two principal components (PC1, PC2).

- Plot PC1 vs PC2. Create two separate plots:

- Plot A: Color points by `Experimental_Group` (e.g., Disease vs Healthy).

- Plot B: Color points by `Batch_ID` (e.g., Sequencing Run 1, 2, 3).

- Interpretation: If samples cluster more distinctly by color in Plot B than in Plot A, a significant batch effect is present and must be addressed prior to integration.

Visualizations

Diagram 1: Metadata-Driven Multi-Omics Preprocessing Workflow

Diagram 2: Logic Flow for Batch Effect Diagnosis via PCA

The Preprocessing Pipeline: Step-by-Step Methods and Practical Application for Each Data Type

Troubleshooting Guides & FAQs

Q1: Why do I need to apply different QC thresholds for RNA-seq vs. ATAC-seq data during trimming?

A: Different sequencing technologies and assay types generate distinct error profiles and artifacts. RNA-seq adapters and primers differ from those used in ATAC-seq. Furthermore, ATAC-seq fragments are often shorter than the read length, so reads commonly run into adapter sequence at their 3' ends, and Tn5 insertion bias skews base composition at read starts. Applying one universal threshold can be too lenient, retaining excessive technical noise, or too strict, discarding genuine biological signal, especially from open chromatin regions with lower coverage.

Q2: My post-trimming FASTQC report still shows "Per base sequence content" failures. What should I do?

A: This is expected for certain omics types. For example, in ATAC-seq, the Tn5 transposase has a known sequence bias (preferring insertion at certain motifs), leading to uneven nucleotide distribution at the very start of reads. Do not over-trim to correct this. Instead, note the bias for downstream analysis (e.g., during peak calling). For RNA-seq, persistent uneven composition may indicate residual adapter contamination; consider using a more aggressive adapter-scanning algorithm like cutadapt in multiple rounds.

Q3: How do I choose the correct quality scoring system (Phred+33 vs. Phred+64) for my platform?

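A: This depends on the software version that produced the FASTQ files rather than on the instrument alone. Data generated with Illumina pipeline version 1.8 or later, which covers current instruments such as NovaSeq, NextSeq, and MiSeq, use Phred+33 encoding (Sanger format). Older Illumina data (pipeline versions before 1.8) may use Phred+64. If unsure, use a tool like FastQC, which reports the detected encoding, or examine the range of quality score ASCII characters yourself. Incorrect assignment will lead to erroneous trimming.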
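Examining the ASCII range of the quality strings can be sketched as follows. This is a heuristic under stated assumptions: the function name `guess_phred_offset` is hypothetical, the cutoff at ASCII 59 assumes that Phred+64-family encodings never use lower characters, and the cutoff at ASCII 74 assumes Phred+33 Illumina scores do not exceed Q41, which does not hold for every platform.

```python
def guess_phred_offset(quality_lines):
    """Guess the quality offset (33 or 64) from FASTQ quality strings.

    Returns None when the observed range is compatible with both encodings.
    """
    codes = [ord(ch) for line in quality_lines for ch in line.strip()]
    if not codes:
        raise ValueError("no quality characters supplied")
    lowest, highest = min(codes), max(codes)
    if lowest < 59:
        # Characters below ';' are assumed impossible in Phred+64 data.
        return 33
    if highest > 74:
        # Characters above 'J' are assumed unusual for Phred+33 Illumina data.
        return 64
    return None

print(guess_phred_offset(["IIIIHHH#", "FFFF,,,:"]))
print(guess_phred_offset(["hhhhggfe", "ffffeedd"]))
print(guess_phred_offset(["AAAA"]))
```

Inspecting only a few reads can be misleading, so in practice a larger sample of quality lines gives a more reliable guess.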

Q4: After trimming my single-cell RNA-seq (scRNA-seq) data, my UMI counts dropped drastically. What went wrong?

A: A common error is trimming the UMI or cell barcode sequences, which are typically located at the start of Read 1. Leave those bases untouched: hard-clipping options such as `--clip_r1` in Trim Galore remove bases from the 5' end of Read 1 and will delete the barcode/UMI if applied to that read. Restrict quality and adapter trimming to the cDNA portion of the read.

Quantitative Filtering Thresholds by Platform

Table 1: Recommended Default Trimming Parameters for Major Sequencing Platforms

| Omics Assay | Platform (Typical) | Recommended Quality Threshold (Phred Score) | Minimum Read Length Post-Trim | Adapter Removal Priority | Special Note |

|---|---|---|---|---|---|

| Bulk RNA-seq | Illumina NovaSeq | Q20-30 (Sliding window) | 35-50 bp | TruSeq, Nextera | PolyG tails common in NovaSeq. Use polyG trimming (e.g., `--trim_poly_g` in fastp). |

| scRNA-seq (10x) | Illumina NovaSeq | Q20 (3' end) | Keep full length for alignment* | Read 1: Nextera | Do not quality-trim cell/UMI bases (first 16-28 bp). |

| WGS (Whole Genome) | Illumina, MGI | Q15-20 (Sliding window) | 50-70 bp | Platform-specific | MGI data may have high dup rates; QC is critical. |

| ATAC-seq | Illumina HiSeq/NovaSeq | Q15 (Sliding window) | 20-30 bp (for peak calling) | Nextera (Tn5 compatible) | Very short reads can be valid. Be cautious with min length. |

| ChIP-seq | Illumina | Q20 | 25-30 bp | Standard Illumina | Similar to ATAC-seq but less extreme length variation. |

| Metagenomics | Illumina, PacBio | Q20 (Illumina) | 50-100 bp | Multiple adapter sets | Host removal is a prior, crucial QC step. |

| Methyl-seq (WGBS) | Illumina | Q20 | 40 bp | RRBS adapters are specific | Avoid non-directional alignment by preserving start. |

Experimental Protocols

Protocol 1: Standardized QC & Trimming Workflow for Multi-Omic Data

Objective: To uniformly assess raw sequence quality and perform adapter/quality trimming across diverse omics datasets (RNA-seq, ATAC-seq) for integrated analysis.

Materials:

- Raw FASTQ files.

- High-performance computing (HPC) cluster or server with ≥ 8 GB RAM per core.

- Conda environment manager.

Methodology:

- Environment Setup:

Initial Quality Assessment (Pre-Trim):

Platform-Specific Trimming with Trim Galore (Automated Adapter Detection): For standard RNA-seq (Illumina):

For ATAC-seq (Nextera Adapters):

Post-Trimming QC Verification:

Metrics Compilation: Compare `pre_trim_multiqc_report.html` and `post_trim_multiqc_report.html`. Focus on changes in "Per sequence quality scores", "Adapter content", and "Sequence length distribution".

Visualizations

Multi-Omic Preprocessing QC Workflow

Relationship Between Read Quality and Downstream Multi-Omic Integration

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software Tools for QC & Trimming in Multi-Omics Research

| Tool Name | Primary Function | Key Parameter to Adjust per Platform | Application in Multi-Omics |
|---|---|---|---|
| FastQC | Quality control visualization. | `--kmers`; `--limits` (custom limits file to relax modules with expected biases, e.g., ATAC-seq start bias). | Initial diagnostic across all omics data types. |
| MultiQC | Aggregate QC reports. | N/A | Critical for comparing QC metrics from RNA-seq, ATAC-seq, etc., in one view. |
| Trim Galore! | Wrapper for Cutadapt & FastQC. | `--quality`, `--adapter`, `--length`, `--clip_r1` (5' clipping of Read 1; avoid on reads carrying barcode/UMI). | Simplifies uniform trimming application. |
| Cutadapt | Precise adapter removal. | `-a`, `-g` (adapter sequences); `-q` (quality cutoff); `--minimum-length`. | Gold standard for adapter trimming. Essential for custom protocols. |
| Fastp | All-in-one QC & trimming. | `--trim_front1` (for barcodes), `--detect_adapter_for_pe`, `--cut_mean_quality`. | High-speed, integrated tool for large-scale projects. |
| Trimmomatic | Flexible read trimming. | `ILLUMINACLIP` (adapter file), `SLIDINGWINDOW`, `MINLEN`. | Widely used, robust for WGS and RNA-seq. |
| Picard Tools | Broad QC metrics post-alignment. | `CollectMultipleMetrics`, `CollectRnaSeqMetrics`. | Assesses the impact of trimming on mapping. |

Troubleshooting Guides & FAQs

Q1: My TPM values are all zeros or extremely low for a sample that should have high expression. What went wrong?

A: This is typically a raw read count issue, not a TPM calculation error. First, verify the quality of your raw FASTQ files using FastQC. Low sequencing depth or high adapter contamination can result in few reads mapping to genes. Ensure your alignment step (using STAR or HISAT2) had a high mapping rate (>70%). If using featureCounts, confirm the GTF annotation file matches your genome build. Recalculate TPM only after confirming robust raw counts.

Q2: When applying Median Polish to my microarray data, the algorithm fails to converge. How do I fix this?

A: Non-convergence often indicates an issue with the data matrix. First, check for and replace any NA or Inf values. Excessive outliers in a few probes can also prevent convergence. Implement a pre-filtering step to remove probes with consistently low signal across all arrays (e.g., in the bottom 5th percentile). You can also try increasing the maximum number of iterations (default is often 10) in the medpolish() function in R.

Q3: After VSN transformation, my proteomics data still shows variance heterogeneity across intensity levels. Is this normal?

A: VSN aims to stabilize the variance across the dynamic range. Perfect homogeneity is rare. Assess the meanSdPlot of the transformed data. A flat line is ideal, but a low-slope trend is often acceptable. If strong heteroscedasticity persists, it may indicate issues upstream: check for incomplete sample labeling (for TMT/iTRAQ), low peptide counts, or batch effects that need to be addressed before VSN. VSN is not a substitute for batch correction.

Q4: Can I directly compare TPM values from RNA-seq with microarray data normalized by RMA (which uses Median Polish)?

A: No, not directly. While both are normalized, they are on different scales and have different technical biases. For integration, you must perform cross-platform normalization. Common strategies include:

- Quantile Normalization: Applied to both datasets after combining rank-invariant genes.

- ComBat or other Batch Correction: Treat the platform as a "batch effect."

- Using a Common Reference: Transform both datasets to a z-score relative to control samples present in both platforms.

Table 1: Comparison of Key Normalization Techniques Across Omics Layers

| Technique | Primary Omics Layer | Core Function | Key Assumption | Output Interpretation |

|---|---|---|---|---|

| TPM (Transcripts Per Million) | Transcriptomics (RNA-seq) | Normalizes for sequencing depth and gene length. | Total mRNA output per cell is constant. | Proportional expression level; comparable across genes and samples. |

| Median Polish (e.g., in RMA) | Transcriptomics (Microarrays) | Fits an additive model to remove probe-specific and array-specific effects. | Multiplicative noise can be transformed to additive via log2. | Log2-transformed, background-corrected, and normalized probe intensities. |

| VSN (Variance Stabilizing Normalization) | Proteomics (Mass Spec) | Stabilizes variance across intensity ranges and normalizes arrays. | Technical variance follows a quadratic relationship with mean intensity. | Intensity values with stable variance across the mean, enabling parametric tests. |

| Cyclic LOESS | Multi-omics (General) | Removes intensity-dependent biases between sample pairs. | Systematic biases are smooth functions of intensity. | Normalized intensities where sample distributions are aligned. |

Table 2: Common Error Indicators and Solutions

| Symptom | Likely Cause | Diagnostic Check | Solution |
|---|---|---|---|
| Skewed TPM distribution in one sample | Failed library prep or outlier sample. | Check total mapped reads; view PCA plot of raw counts. | Exclude sample or use robust scaling (e.g., TMM from edgeR) before integration. |
| High residual variance after Median Polish | Presence of strong single-probe outliers. | Inspect residuals matrix from `medpolish` output. | Apply a mild log2(x+1) transform before polishing or winsorize extreme values. |
| VSN transformation fails (error) | Negative or zero values in input. | `min(exprs_data)`. | Replace zeros with small imputed values (e.g., from left-censored distribution) or use `na.replace=TRUE`. |

Experimental Protocols

Protocol 1: Calculating TPM from RNA-seq Read Counts

Objective: Generate length-normalized, comparable expression values.

- Input: A matrix of raw gene counts (`counts`) and a vector of corresponding gene lengths in kilobases (`lengths_kb`).

- Calculate Reads Per Kilobase (RPK): `RPK = counts / lengths_kb`

- Calculate Per-Million Scaling Factor (per sample): `scale_factor = sum(RPK) / 1,000,000`

- Calculate TPM: `TPM = RPK / scale_factor`

- Verification: The sum of TPM values for all genes in each sample should equal 1,000,000.
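The protocol above can be sketched for a single sample in plain Python. The function name `tpm` and the toy counts are illustrative assumptions; a real pipeline would apply the same arithmetic column by column to the count matrix.

```python
def tpm(counts, lengths_kb):
    """Convert raw counts for one sample to TPM.

    counts: raw read counts per gene.
    lengths_kb: gene lengths in kilobases, in the same gene order.
    """
    # Reads per kilobase: length-normalize each gene.
    rpk = [c / length for c, length in zip(counts, lengths_kb)]
    scale_factor = sum(rpk) / 1_000_000
    if scale_factor == 0:
        raise ValueError("sample has no counts; TPM is undefined")
    return [value / scale_factor for value in rpk]

counts = [100, 200, 300]
lengths_kb = [1.0, 2.0, 3.0]
values = tpm(counts, lengths_kb)
print(values)
print(sum(values))  # verification step: should be close to 1,000,000
```

In this toy example the three genes have identical counts per kilobase, so they receive identical TPM values even though their raw counts differ.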

Protocol 2: Applying Median Polish via RMA for Microarrays

Objective: Obtain normalized, summarized expression values from probe-level data.

- Background Correction: Apply the RMA convolution model to raw CEL file probe intensities to correct for optical noise.

- Quantile Normalization: Force the distribution of probe intensities to be identical across all arrays.

- Log2 Transformation: Transform the background-corrected, normalized intensities.

- Median Polish Summarization: For each probe set: a. Arrange probes (rows) vs samples (columns) in a matrix. b. Iteratively subtract row medians and column medians until convergence. c. The overall effect plus the fitted column (sample) effects gives the normalized, probe-set summarized expression values.
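The median polish summarization step can be illustrated with a small pure-Python sketch. It follows the usual alternating row/column sweep; the function name, the convergence rule, and the toy probe set are assumptions for this example and are not taken from any particular RMA implementation.

```python
from statistics import median

def median_polish(matrix, max_iter=10, tol=1e-6):
    """Fit overall + row effect + column effect to a probes x samples matrix."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    resid = [row[:] for row in matrix]
    overall = 0.0
    row_eff = [0.0] * n_rows
    col_eff = [0.0] * n_cols
    for _ in range(max_iter):
        # Sweep out row (probe) medians.
        row_med = [median(row) for row in resid]
        for i in range(n_rows):
            row_eff[i] += row_med[i]
            resid[i] = [value - row_med[i] for value in resid[i]]
        shift = median(col_eff)
        overall += shift
        col_eff = [effect - shift for effect in col_eff]
        # Sweep out column (sample) medians.
        col_med = [median(resid[i][j] for i in range(n_rows)) for j in range(n_cols)]
        for j in range(n_cols):
            col_eff[j] += col_med[j]
            for i in range(n_rows):
                resid[i][j] -= col_med[j]
        shift = median(row_eff)
        overall += shift
        row_eff = [effect - shift for effect in row_eff]
        # Stop once a full sweep no longer changes the fit.
        if max(abs(m) for m in row_med + col_med) < tol:
            break
    return overall, row_eff, col_eff, resid

# Toy probe set on the log2 scale: 3 probes (rows) x 3 samples (columns).
probe_set = [
    [6.5, 7.0, 7.5],
    [7.5, 8.0, 8.5],
    [8.5, 9.0, 9.5],
]
overall, row_eff, col_eff, resid = median_polish(probe_set)
summary = [overall + effect for effect in col_eff]
print(summary)
```

The per-sample summary is the overall effect plus each column effect; the residual matrix is what one would inspect for single-probe outliers.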

Protocol 3: Normalizing Proteomics Data with VSN

Objective: Transform protein/peptide intensity data to stabilize variance.

- Input Preparation: Load a matrix of raw intensities (proteins/peptides x samples). Filter out proteins with >50% missing values.

- Imputation: Impute remaining missing values using a method appropriate for your data (e.g., MinProb for MNAR data).

- VSN Transformation: Apply the `vsn2()` function (from the `vsn` package in R/Bioconductor) to the entire matrix. The function fits a per-sample offset `a` and scaling factor `b` and applies an arsinh-based (generalized log) transformation that stabilizes the variance-mean relationship.

- Validation: Use `meanSdPlot()` to visualize the stabilized standard deviation across the mean intensity rank. A horizontal best-fit line indicates success.

Visualizations

Title: Multi-Omics Normalization Workflow for Integration

Title: Median Polish Algorithm Steps

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Normalization Experiments

| Item | Function in Normalization Context | Example/Note |

|---|---|---|

| Spike-in Controls (External) | Distinguish technical from biological variation. Used to fit normalization models (e.g., in VSN). | ERCC RNA Spike-ins (RNA-seq), Proteomics Spike-in Peptides (e.g., Thermo Pierce). |

| Housekeeping Gene Panel | Provide a stable biological reference for relative normalization (qPCR, WB). Crucial for validating global methods. | ACTB, GAPDH, HPRT1. Must be validated per tissue/condition. |

| Reference Sample / Pool | A consistent technical sample run across all batches/platforms to align distributions. | Commercial universal reference RNA (e.g., Stratagene) or a master patient sample pool. |

| Normalization Software Package | Implements statistical algorithms for robust scaling and transformation. | R/Bioconductor: edgeR (TMM), DESeq2 (Median of Ratios), vsn, limma (Cyclic LOESS), affy/oligo (RMA). |

| Quality Control Metric Suite | Quantifies success of normalization prior to integration. | RSeQC (RNA-seq), arrayQualityMetrics (Microarrays), msqrob2 QC (Proteomics). |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: My data shows strong batch effects after integration. How do I choose between ComBat, SVA, and RUV?

- Answer: The choice depends on your experimental design and whether you have known batch variables.

- Use ComBat (from the `sva` package) when you have explicitly known batch variables (e.g., processing date, sequencing lane). It uses an empirical Bayes framework to adjust for these known batches while preserving biological variation.

- Use SVA (Surrogate Variable Analysis) when you suspect unknown sources of variation or hidden confounders (e.g., sample quality, latent environmental factors). It estimates these surrogate variables for use in downstream models.

- Use RUV (Remove Unwanted Variation) when you have "negative control" genes/features known not to be influenced by the biological variables of interest. RUV uses these controls to estimate and remove unwanted factors.

FAQ 2: After running ComBat, my batch-corrected data shows inflated or reduced variance. What went wrong and how can I fix it?

- Answer: This is often due to the `mean.only` setting or to model over-adjustment.

- Troubleshooting Steps:

- Check `mean.only`: By default, ComBat adjusts both mean and variance. If your batches differ primarily in mean, set `mean.only=TRUE`. Compare PCA plots of the data before and after correction to judge the effect.

- Review Model Formula: Ensure the model matrix passed as `mod` correctly specifies your biological condition of interest. An incorrect model can remove biological signal.

- Use `prior.plots`: Run ComBat with `prior.plots=TRUE` to compare the empirical distribution of the batch effect estimates with the fitted parametric prior. A poor match suggests the parametric assumptions do not suit your data.

- Consider ComBat-seq: For RNA-seq count data, use ComBat-seq (`ComBat_seq` in the `sva` package), which works directly on counts and avoids log-transformation artifacts.

FAQ 3: When using SVA, how do I determine the correct number of surrogate variables (SVs) to estimate?

- Answer: The number of SVs (`n.sv`) is critical. Using too many can remove biological signal; too few leaves unwanted variation.

- Protocol: Use the `num.sv` function from the `sva` package with one of its two estimation methods.

- BE method (`method="be"`): The default and often recommended. It is based on a permutation procedure (Buja and Eyuboglu).

- Leek method (`method="leek"`): Based on an asymptotic approach. Can be more robust in some cases.

- Code Example: A typical call is `n.sv <- num.sv(dat, mod, method = "be")`, where `dat` is the features-by-samples expression matrix and `mod` is the full model matrix.

FAQ 4: For RUV, I don't have established negative control genes. How can I proceed?

- Answer: You can empirically derive negative controls. Common Strategies:

- RUVg (using control genes): Use genes with the lowest variation across samples (e.g., bottom 10% by standard deviation) or genes that are least significantly associated with your phenotype via a preliminary differential analysis.

- RUVr (using residuals): Use residuals from a first-fit model of your data against biological variables of interest. This does not require predefined controls.

- RUVs (using replicate/negative control samples): If you have technical replicates or pooled samples, use them directly as controls.

- Warning: Empirically derived controls are less reliable and may remove some biological signal. Validate results with positive control genes known to be differential.

FAQ 5: My PCA plot still shows batch clustering after correction. Is the correction failing?

- Answer: Not necessarily. Follow this diagnostic workflow:

- Check the scale: Ensure the PCA is performed on the corrected data matrix, not the original.

- Quantify improvement: Calculate metrics like the Percent Variance Explained by the batch before and after correction. A reduction indicates success.

- Use Silhouette Width: Measure how similar samples are to their biological group vs. their batch group. Correction should decrease batch silhouette and increase biological silhouette.

- Biological Validation: Check the expression of known biologically relevant markers or pathways. Their signal should be preserved or enhanced post-correction.
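The silhouette comparison can be sketched without external libraries. This is a minimal illustration, assuming each sample is represented by its coordinates on the first principal components; the function name `mean_silhouette` and the toy coordinates are hypothetical.

```python
from math import dist  # Python 3.8+

def mean_silhouette(points, labels):
    """Average silhouette width of `points` for the given labelling."""
    n = len(points)
    scores = []
    for i in range(n):
        distances = {}
        for j in range(n):
            if j != i:
                distances.setdefault(labels[j], []).append(dist(points[i], points[j]))
        own = distances.pop(labels[i], None)
        if not own or not distances:
            scores.append(0.0)  # singleton cluster or only one label present
            continue
        a = sum(own) / len(own)  # mean distance within the sample's own cluster
        b = min(sum(d) / len(d) for d in distances.values())  # nearest other cluster
        scores.append(0.0 if max(a, b) == 0 else (b - a) / max(a, b))
    return sum(scores) / n

# Toy PC1/PC2 coordinates after correction: samples separate by biology, not batch.
pcs = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
biology = ["Disease", "Disease", "Healthy", "Healthy"]
batch = ["Run1", "Run2", "Run1", "Run2"]

bio_width = mean_silhouette(pcs, biology)
batch_width = mean_silhouette(pcs, batch)
print(round(bio_width, 3), round(batch_width, 3))
```

Computing both widths on the same coordinates before and after correction gives the two numbers needed for the comparison described in this FAQ.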

Table 1: Comparison of Batch Effect Correction Tools

| Feature | ComBat | SVA | RUV |
|---|---|---|---|
| Core Input Requirement | Known batch variables | Known biological variables; No batch needed | Negative control features/samples or residuals |
| Handles Unknown Factors | No | Yes (estimates SVs) | Yes (estimates k factors) |
| Underlying Method | Empirical Bayes | Surrogate Variable Analysis | Factor Analysis (on controls/residuals) |
| Key Parameter | `batch`, `mod` (model) | `n.sv` (# of surrogate variables) | `k` (# of unwanted factors), `ctl` (control indices) |
| Best For | Adjusting explicit, documented technical batches | Discovering & adjusting for hidden confounders | Situations with reliable negative controls or replicates |
| Risk | Over-adjustment if model is wrong | Over-fitting if `n.sv` is too high | Removing biology if controls are not truly null |

Table 2: Diagnostic Metrics for Correction Success

| Metric | Formula/Interpretation | Ideal Outcome Post-Correction |
|---|---|---|
| PVE by Batch | (Variance explained by batch PC) / Total Variance | Decreased substantially |
| Average Silhouette Width (Batch) | Measures cluster cohesion/separation for batch labels. Range: -1 to 1. | Approaches 0 or negative value |
| Average Silhouette Width (Biology) | Measures cluster cohesion/separation for biological labels. | Increased or maintained |
| DEG Recovery (Positive Controls) | Number of known true differentially expressed genes detected. | Increased sensitivity & specificity |

Experimental Protocols

Protocol 1: Implementing ComBat Correction for Transcriptomic Data

- Input Preparation: Generate a log2-transformed, normalized expression matrix (e.g., from `limma::voom` or `DESeq2::vst`). Define the `batch` vector and the biological `mod` matrix.

- Run ComBat: Execute the `ComBat` function from the `sva` package.

- Diagnostics: Generate PCA plots colored by batch and condition before/after correction. Calculate the Percent Variance Explained (PVE) for the first principal component associated with batch.

Protocol 2: Surrogate Variable Analysis (SVA) Workflow

- Initial Model: Create a full model matrix (`mod`) for your biological variables and a null model matrix (`mod0`) containing only the intercept or known covariates (not the primary condition).

- Estimate SVs: Use the `sva` function to estimate surrogate variables.

- Incorporate into Analysis: Add the surrogate variables (`svobj$sv`) as covariates in your downstream differential expression model (e.g., in `limma` or `DESeq2`).

Protocol 3: RUV Correction Using Empirical Negative Controls

- Define Controls: Perform an initial differential expression analysis. Select the least significant genes (e.g., highest p-values) as your empirical control set.

- Apply RUVg: Use the `RUVg` function from the `RUVSeq` package.

- Optimize k: Test different values of `k` (1, 2, 3...). Choose the `k` that maximizes the improvement in your diagnostic metrics (e.g., PVE by batch).

Visualizations

Title: ComBat Empirical Bayes Correction Workflow

Title: SVA Discovers and Adjusts for Hidden Factors

Title: Decision Tree for Selecting a Batch Correction Method

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Batch-Corrected Multi-Omics

| Item | Function in Batch Correction Context |

|---|---|

| Reference RNA/DNA Samples (e.g., ERCC Spike-Ins, UHRR) | Acts as a technical control across batches. Used to monitor and normalize for technical variability. Essential for RUV if used as negative controls. |

| Pooled Sample Aliquots | A homogeneous sample run across all batches. Serves as a perfect technical replicate to assess and correct for inter-batch variation using methods like RUVs. |

| Sample Preservation Reagent (e.g., RNAlater) | Ensures consistent pre-processing biological state, reducing a major source of unwanted variation before sequencing/assay. |

| Automated Nucleic Acid Extraction System | Standardizes the extraction step, reducing a major technical batch effect tied to manual protocol differences. |

| Multiplexed Library Preparation Kits | Allows barcoding and pooling of samples early in the workflow, ensuring they are processed together in downstream steps, minimizing batch effects. |

| Vendor-Validated & Lot-Numbered Reagents | Critical for documentation. Batch variables often correspond to reagent lot changes. Precise tracking enables proper modeling in ComBat. |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: In my proteomics dataset, over 20% of values are missing Not At Random (MNAR), likely due to low-abundance proteins falling below detection limits. Should I use listwise deletion or imputation?

A: Listwise deletion is strongly discouraged as it will remove the majority of your proteins (features), crippling downstream analysis. For MNAR data in proteomics or metabolomics, use imputation methods designed for left-censored data.

- Recommended Protocol (Minimum Intensity Imputation):

- Normalize your data (e.g., quantile normalization).

- For each sample, calculate the minimum observed non-missing value.

- Impute all missing values with a random number drawn from a uniform distribution between zero and the sample-specific minimum. Related left-censored methods are available in the R `imputeLCMD` package: `impute.MinDet` substitutes a deterministic low value taken from each sample's observed intensities, while `impute.MinProb` draws random values around it. Similar tools exist in Python.

- Troubleshooting: If imputed values create an artificial "floor" that distorts statistical testing, consider using a tool such as NAguideR, which evaluates and recommends optimal strategies for your specific data structure.
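Minimum-intensity imputation as described in this answer can be sketched in plain Python. The function name `impute_left_censored` is hypothetical, missing values are assumed to be encoded as `None`, and intensities are assumed to be positive so that a draw between zero and the sample minimum is meaningful. Without a random generator the sketch falls back to the deterministic sample minimum.

```python
import random

def impute_left_censored(matrix, rng=None):
    """Fill None entries in a features x samples matrix.

    With rng=None each gap receives the sample (column) minimum; with a
    random.Random instance it receives a uniform draw in [0, column minimum].
    """
    n_cols = len(matrix[0])
    floors = []
    for j in range(n_cols):
        observed = [row[j] for row in matrix if row[j] is not None]
        if not observed:
            raise ValueError(f"sample {j} has no observed values")
        floors.append(min(observed))

    def fill(j):
        return floors[j] if rng is None else rng.uniform(0.0, floors[j])

    return [[fill(j) if value is None else value for j, value in enumerate(row)]
            for row in matrix]

intensities = [
    [20.0, None, 22.0],
    [15.0, 14.0, None],
    [None, 18.0, 19.0],
]
deterministic = impute_left_censored(intensities)
randomized = impute_left_censored(intensities, rng=random.Random(42))
print(deterministic)
```

Passing a seeded generator keeps the random variant reproducible, which matters when the imputed matrix feeds into downstream statistics.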

Q2: After imputing missing values in my transcriptomics data, my differential expression analysis yields hundreds of false-positive hits. What went wrong?

A: This often results from using an overly simplistic imputation method (e.g., mean imputation) that severely underestimates variance, making statistical tests overly sensitive. Use variance-aware imputation.

- Recommended Protocol (K-Nearest Neighbors - KNN Imputation):

- Normalize and scale your gene expression matrix (features as rows, samples as columns).

- Select a distance metric (e.g., Euclidean). Use cross-validation on a subset of artificially introduced missing values to choose an optimal `k` (typically k=10-20).

- For each gene G with a missing value in a given sample, find the `k` genes with the most similar expression profiles across the samples in which G is observed.

- Impute the missing value using the average of those `k` neighbor genes' values in that sample. The `impute.knn` function in the R `impute` package is standard and searches for neighbors among genes (rows) in this way.

- Critical Check: Always perform a Principal Component Analysis (PCA) pre- and post-imputation. The sample clustering should not be artificially tightened due to imputation.
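A simplified KNN imputation can be sketched as follows. This is an illustrative version that looks for neighboring genes (rows), as `impute.knn` does, but it is not a reimplementation of that function: the name `knn_impute`, the `None` encoding of missing values, and the distance normalization over shared columns are assumptions made for the example.

```python
from math import sqrt

def knn_impute(matrix, k=2):
    """Impute None entries in a genes x samples matrix from the k nearest genes."""
    def distance(a, b):
        # Root-mean-square difference over samples observed in both genes.
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return float("inf")
        return sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))

    imputed = [row[:] for row in matrix]
    for i, row in enumerate(matrix):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Candidate neighbours must have an observed value in sample j.
            candidates = sorted(
                (distance(row, other), other[j])
                for m, other in enumerate(matrix)
                if m != i and other[j] is not None
            )[:k]
            if not candidates:
                raise ValueError(f"no neighbour has a value for sample {j}")
            imputed[i][j] = sum(v for _, v in candidates) / len(candidates)
    return imputed

genes = [
    [1.0, 2.0, 3.0, None],
    [1.1, 2.1, 3.1, 4.1],
    [0.9, 1.9, 2.9, 3.9],
    [9.0, 8.0, 7.0, 6.0],
]
filled = knn_impute(genes, k=2)
print(filled[0])
```

In the toy matrix the first gene borrows from the two genes with nearly identical profiles and ignores the dissimilar fourth gene, which is the behavior that protects variance structure better than a global mean.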

Q3: When integrating multiple omics layers (e.g., methylation and gene expression), should I handle missing data separately for each layer or on the combined dataset?

A: Handle missing data separately for each omics modality before integration. Different technologies have unique missingness mechanisms (e.g., MNAR for proteomics, MCAR for transcriptomics). Applying a unified method risks introducing modality-specific artifacts into the joint analysis.

- Workflow Protocol:

- Modality-Specific Imputation: Apply the optimal method (see Table 1) to each omics dataset independently.

- Quality Control: Validate imputation for each layer using modality-specific metrics (e.g., coefficient of variation distribution for proteomics).

- Integration: Perform downstream integration (e.g., via MOFA+, or DIABLO) using the complete matrices from Step 1.

Q4: What is the maximum percentage of missing data per feature for which imputation is still reliable?

A: There is no universal threshold, but empirical studies provide guidelines. Exceed these with extreme caution.

Table 1: Imputation Performance Guidelines Based on Missing Data Percentage

| Missingness Rate (Per Feature) | Recommended Action | Typical Algorithm Performance (NRMSE*) | Best-suited Method Examples |
|---|---|---|---|
| < 10% | Imputation is reliable. | NRMSE < 0.1 | KNN, SVD (Matrix Factorization) |
| 10% - 30% | Impute with caution. Validate rigorously. | NRMSE 0.1 - 0.3 | Random Forest (MissForest), SVD |
| > 30% | Consider deletion of the feature. Imputation is high-risk. | NRMSE > 0.3 (High Uncertainty) | Advanced deep learning (e.g., DAE) or removal |

*Normalized Root Mean Square Error: Lower is better. Performance is dataset-dependent; these are general benchmarks.
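Table 1 reports NRMSE without stating how it is normalized. One common convention, assumed here for illustration (other benchmarks normalize by the range or mean of the true values instead), divides the mean squared error over the set $M$ of masked entries by the variance of the true values:

$$
\mathrm{NRMSE} = \sqrt{\frac{\frac{1}{|M|}\sum_{(i,j)\in M}\left(\hat{x}_{ij} - x_{ij}\right)^{2}}{\operatorname{Var}_{(i,j)\in M}\left(x_{ij}\right)}}
$$

where $x_{ij}$ is the true value and $\hat{x}_{ij}$ the imputed value. Under this convention, replacing every masked entry with the overall mean of the true values gives an NRMSE of 1, so values well below 1 indicate that the method recovers real structure.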

Experimental Protocols for Evaluation

Protocol: Benchmarking Imputation Methods for Your Dataset

Objective: To empirically select the optimal missing data handling strategy.

- Create a Ground Truth Matrix: From your original dataset (`X_original`), identify a subset of features with no missing values.

- Introduce Artificial Missingness: Randomly remove values from this complete subset (e.g., 5%, 10%, 20%) following a Missing Completely At Random (MCAR) pattern. This creates `X_corrupted`.

- Apply Candidate Methods: Impute `X_corrupted` using multiple methods (Mean, KNN, SVD, MissForest, etc.) to generate `X_imputed`.

- Calculate Performance Metrics: For each method, compute the error between the imputed values and the true values from `X_original` using NRMSE and Pearson correlation.

- Select Best Performer: Choose the method with the lowest NRMSE and highest correlation for the missingness pattern most similar to your real data.
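The benchmarking steps above can be sketched as follows. This is a minimal example under stated assumptions: the helper names (`mask_mcar`, `mean_impute`, `median_impute`, `benchmark`) are hypothetical, NRMSE is normalized by the variance of the true values (one convention among several), and only two trivial baseline imputers are included; real candidates such as KNN or MissForest would be added to the `methods` dictionary.

```python
import numpy as np

def nrmse(true_vals, imputed_vals):
    """RMSE normalized by the variance of the true values (assumed convention)."""
    mse = np.mean((true_vals - imputed_vals) ** 2)
    return float(np.sqrt(mse / np.var(true_vals)))

def mask_mcar(X, rate, rng):
    """Return a copy of X with a random fraction `rate` of entries set to NaN."""
    mask = rng.random(X.shape) < rate
    X_corrupted = X.copy()
    X_corrupted[mask] = np.nan
    return X_corrupted, mask

def mean_impute(X):
    """Baseline: replace NaNs with the per-feature (row) mean."""
    return np.where(np.isnan(X), np.nanmean(X, axis=1, keepdims=True), X)

def median_impute(X):
    """Baseline: replace NaNs with the per-feature (row) median."""
    return np.where(np.isnan(X), np.nanmedian(X, axis=1, keepdims=True), X)

def benchmark(X_original, methods, rate=0.1, seed=0):
    """Score each imputer on artificially masked entries of a complete matrix."""
    X_original = np.asarray(X_original, dtype=float)
    rng = np.random.default_rng(seed)
    X_corrupted, mask = mask_mcar(X_original, rate, rng)
    results = {}
    for name, impute in methods.items():
        X_imputed = impute(X_corrupted)
        truth, guess = X_original[mask], X_imputed[mask]
        results[name] = {
            "nrmse": nrmse(truth, guess),
            "pearson": float(np.corrcoef(truth, guess)[0, 1]),
        }
    return results
```

Calling `benchmark(X_complete, {"mean": mean_impute, "median": median_impute}, rate=0.1)` returns one NRMSE and one Pearson correlation per method. Repeating the call over several seeds and missingness rates gives a more stable ranking than a single run.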

Protocol: Evaluating Impact on Differential Expression (DE) Analysis

- Generate Datasets: Create three versions of your data: a) With missing values deleted (complete-case), b) Imputed with Method A, c) Imputed with Method B.

- Run DE Analysis: Perform identical DE analysis (e.g., limma-voom for RNA-seq) on all three datasets.

- Compare Results: Assess the concordance in the top 100 significant genes (using rank correlation) and the false discovery rate (FDR) distribution between the methods. A good imputation method should preserve biological signal without inflating FDR.
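The comparison step can be sketched as a small function that takes the per-gene p-values from two DE runs. The function name `top_n_concordance` is hypothetical, the DE analysis itself is assumed to have been run elsewhere (e.g., in limma-voom), and Spearman correlation is computed here as Pearson correlation on ranks with ties broken arbitrarily.

```python
import numpy as np

def top_n_concordance(pvals_a, pvals_b, n=100):
    """Compare two DE result sets given per-gene p-values in the same gene order.

    Returns (overlap, rho): the fraction of top-n genes shared by both
    analyses, and the rank correlation of p-values over the union of
    both top-n lists.
    """
    pvals_a = np.asarray(pvals_a, dtype=float)
    pvals_b = np.asarray(pvals_b, dtype=float)
    top_a = set(np.argsort(pvals_a)[:n].tolist())
    top_b = set(np.argsort(pvals_b)[:n].tolist())
    overlap = len(top_a & top_b) / n
    union = np.array(sorted(top_a | top_b))
    # Double argsort converts values to ranks (ties are not averaged).
    rank_a = np.argsort(np.argsort(pvals_a[union]))
    rank_b = np.argsort(np.argsort(pvals_b[union]))
    rho = float(np.corrcoef(rank_a, rank_b)[0, 1])
    return overlap, rho
```

A low overlap or a low rank correlation between the complete-case and imputed analyses suggests that the imputation, rather than the biology, is driving the gene ranking.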

Visualizations

Title: Decision Workflow for Handling Missing Data in Omics

Title: Impact of Missing Data Strategies on Downstream Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Packages for Missing Data Handling

| Tool/Reagent | Function | Typical Use Case |
|---|---|---|
| R `impute` package | Provides KNN imputation (`impute.knn`). | General-purpose imputation for microarray or RNA-seq data assumed to be MCAR/MAR. |
| R `missForest` package | Non-parametric imputation using Random Forests. | Handles complex interactions and non-linearities in mixed data types. Robust to various missingness patterns. |
| R `imputeLCMD` package | Offers methods for left-censored data (MNAR). | Imputation for proteomics/metabolomics data where missing = below detection limit. |
| Python scikit-learn `IterativeImputer` | Multivariate imputation by chained equations (MICE). | Flexible, model-based imputation for integrative analysis pipelines built in Python. |
| NAguide (Web/Python tool) | Performs evaluation and recommendation of >10 imputation methods. | Benchmarking suite to select the best method for your specific dataset before commitment. |
| Simulated Missingness Datasets | Artificially created validation sets from complete data. | Essential for objectively testing and tuning imputation performance in a controlled manner. |

Troubleshooting Guides & FAQs

Q1: My dataset loses all predictive power after aggressive filtering. What went wrong? A: This is often caused by using a single, overly stringent filter (e.g., a high variance threshold) that removes biologically relevant but low-abundance features. Omics data (e.g., metabolites, rare transcripts) often contain low-variance but high-signal features. Solution: Implement a multi-criteria, rank-based filtering approach. Combine variance with statistical tests (e.g., ANOVA p-value against a phenotype) and domain knowledge (e.g., known pathways). Retain features that score well on any single criterion.
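The "retain features that score well on any single criterion" rule can be sketched as a union of per-criterion top lists. This is an illustrative sketch: the function name `multi_criteria_keep` and the convention that every score is oriented so that higher is better (for example, variance and -log10 of an ANOVA p-value) are assumptions made for the example.

```python
import numpy as np

def multi_criteria_keep(scores, top_fraction=0.2):
    """Return a boolean mask keeping features in the top fraction of ANY criterion.

    scores: dict mapping a criterion name to a 1D array with one score per
    feature, oriented so that higher means more informative.
    """
    n_features = len(next(iter(scores.values())))
    n_top = max(1, int(top_fraction * n_features))
    keep = np.zeros(n_features, dtype=bool)
    for values in scores.values():
        order = np.argsort(-np.asarray(values, dtype=float))
        keep[order[:n_top]] = True
    return keep
```

A low-variance metabolite that is strongly associated with the phenotype survives this filter because it ranks highly on the association score, even though a variance-only threshold would have removed it.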

Q2: How do I choose between filter, wrapper, and embedded methods for my multi-omics project? A: The choice depends on your integration goal and computational resources.

- Filter Methods: Use first. They are fast, scalable, and independent of the classifier. Best for initial drastic reduction.

- Wrapper Methods: Use if you have a specific, well-defined predictive model and ample computational power. They evaluate feature subsets by model performance but risk overfitting.

- Embedded Methods: Use for a balanced approach. Methods like LASSO or Random Forest importance perform feature selection as part of the model training.

Q3: I have missing values in my features. Should I impute before or after feature selection? A: Impute before selection for filter methods. Most statistical filters (variance, correlation) cannot handle missing values. Use a cautious imputation method (e.g., k-NN, missForest) suitable for your data type. For wrapper/embedded methods using specific algorithms, follow the algorithm's native missing data handling guidelines.

Q4: How many features should I retain before proceeding to integration? A: There is no universal rule. The goal is to remove noise, not signal. Common strategies include:

- Percentage: Retain top 10-20% of features ranked by your chosen metric.

- Absolute Threshold: Keep features with variance above a chosen percentile (e.g., the Xth percentile) or with p-value < 0.05.

- Elbow Plot: Plot ranked feature metric (e.g., variance) and look for the "knee" point. Retain features above it.

Q5: When filtering features from different omics layers (e.g., RNA-seq and Proteomics), should I use the same criteria? A: No. Apply layer-specific criteria tuned to each data type's noise characteristics, then integrate the filtered sets.

Table 1: Recommended Initial Filtering Criteria by Omics Layer

| Omics Layer | Recommended Primary Filter | Typical Threshold | Rationale |
|---|---|---|---|
| Transcriptomics | Low expression filter | Counts > 10 in ≥ 20% of samples | Removes very lowly expressed genes likely from technical noise. |
| Proteomics | Detected in samples | Present in ≥ 50-70% of samples per group | Proteins with many missing values are unreliable. |
| Metabolomics | Relative Standard Deviation (RSD) in QCs | RSD < 20-30% in pooled QC samples | Removes metabolites with poor analytical reproducibility. |
| Methylation | Detection p-value & variance | p-value < 0.01 & top 50k by sd | Removes poorly detected probes and invariant sites. |
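Three of the layer-specific filters in the table can be sketched as boolean masks over a features-by-samples matrix. The function names are hypothetical and the default thresholds simply pick one value from the ranges in the table. The proteomics filter assumes a protein must pass the detection threshold in every group; some workflows instead require it in at least one group.

```python
import numpy as np

def low_expression_mask(counts, min_count=10, min_fraction=0.2):
    """Transcriptomics: keep genes with counts > min_count in enough samples."""
    counts = np.asarray(counts)
    return (counts > min_count).mean(axis=1) >= min_fraction

def detection_mask(intensities, groups, min_fraction=0.7):
    """Proteomics: keep proteins observed (non-NaN) in at least min_fraction
    of the samples of every group (assumption: every group, not any group)."""
    intensities = np.asarray(intensities, dtype=float)
    groups = np.asarray(groups)
    keep = np.ones(intensities.shape[0], dtype=bool)
    for g in np.unique(groups):
        observed = ~np.isnan(intensities[:, groups == g])
        keep &= observed.mean(axis=1) >= min_fraction
    return keep

def qc_rsd_mask(qc_intensities, max_rsd=0.3):
    """Metabolomics: keep features whose RSD (sd / mean) across pooled QC
    injections is below max_rsd; features with mean <= 0 are dropped."""
    qc = np.asarray(qc_intensities, dtype=float)
    mean = qc.mean(axis=1)
    sd = qc.std(axis=1, ddof=1)
    rsd = np.divide(sd, mean, out=np.full_like(mean, np.inf), where=mean > 0)
    return rsd < max_rsd
```

Each mask is applied to its own layer (for example, `counts[low_expression_mask(counts)]`) before the filtered matrices are passed to integration.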

Experimental Protocols

Protocol 1: Variance-Stability Based Filtering for Transcriptomics Data

Objective: To remove non-informative genes while preserving biological signal.

- Data Input: Normalized count matrix (e.g., from DESeq2 or edgeR).

- Calculate Variance: Compute the variance (or standard deviation) for each gene across all samples.

- Rank & Plot: Rank genes by descending variance. Generate a plot of variance vs. rank.

- Set Threshold: Identify the "elbow" point where variance plateaus. Alternatively, retain the top N genes (e.g., top 5000) or genes above a percentile (e.g., top 20%).

- Subset Matrix: Create a new expression matrix with only the retained high-variance genes.

- Validation: Check PCA plots pre- and post-filtering. Biological group separation should be maintained or improved.
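Protocol 1 can be sketched with two small helpers. Both names are hypothetical. The elbow heuristic shown here (the point farthest from the straight line joining the first and last points of the ranked curve) is one simple choice among several and should be checked against the plot rather than trusted blindly.

```python
import numpy as np

def top_variance_filter(X, n_top=5000):
    """Keep the n_top highest-variance features of X (features x samples).

    Returns the filtered matrix and the retained row indices (ascending).
    """
    X = np.asarray(X, dtype=float)
    variances = np.var(X, axis=1, ddof=1)
    order = np.argsort(variances)[::-1]
    keep = np.sort(order[:n_top])
    return X[keep], keep

def elbow_index(sorted_desc):
    """Index of the elbow in a descending curve with at least two distinct values.

    Both axes are rescaled to [0, 1]; the elbow is the point farthest from
    the chord joining the first and last points.
    """
    y = np.asarray(sorted_desc, dtype=float)
    x = np.arange(y.size, dtype=float)
    xn = x / x[-1]
    yn = (y - y[-1]) / (y[0] - y[-1])
    # The chord runs from (0, 1) to (1, 0); distance is proportional to |xn + yn - 1|.
    return int(np.argmax(np.abs(xn + yn - 1.0)))
```

Passing the descending variances to `elbow_index` gives a candidate cut-off rank; features ranked at or above it are retained.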

Protocol 2: Redundancy Reduction via Correlation Filtering

Objective: To remove highly correlated features, reducing multicollinearity.

- Compute Correlation: Calculate pairwise correlation matrix (e.g., Pearson, Spearman) for all features after initial variance filtering.

- Define Threshold: Set a high absolute correlation coefficient threshold (e.g., |r| > 0.95).

- Cluster & Select: For each group of highly correlated features, retain one representative feature. Choose the feature with the highest variance or highest association with the outcome of interest.

- Iterate: Repeat until no feature pairs exceed the threshold.
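Protocol 2 can be sketched as a greedy pass over features in order of decreasing variance. This is one simple way to realize the "cluster and select" step, not the only one; the function name `correlation_filter` is hypothetical, and zero-variance features are assumed to have been removed already, since their correlations are undefined.

```python
import numpy as np

def correlation_filter(X, threshold=0.95):
    """Greedy redundancy filter on X (features x samples).

    Features are visited from highest to lowest variance; a feature is kept
    only if its absolute Pearson correlation with every feature kept so far
    does not exceed the threshold. Returns sorted retained row indices.
    """
    X = np.asarray(X, dtype=float)
    variances = np.var(X, axis=1, ddof=1)
    corr = np.abs(np.corrcoef(X))
    kept = []
    for i in np.argsort(variances)[::-1]:
        if all(corr[i, j] <= threshold for j in kept):
            kept.append(int(i))
    return sorted(kept)
```

Because every retained feature is checked against all previously retained ones, a single pass already guarantees that no retained pair exceeds the threshold. The full correlation matrix grows quadratically with the number of features, so this is only practical after the initial variance filter.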

Visualizations

Feature Selection Workflow for Multi-Omics

Multi-Criteria Feature Ranking Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Feature Selection in Multi-Omics Preprocessing

| Tool / Reagent | Function in Feature Selection | Example / Note |
|---|---|---|
| R/Bioconductor (sva, genefilter) | Provides statistical filters (variance, mean) & ComBat for batch correction prior to selection. | genefilter::varFilter for variance-based filtering. |
| Python (scikit-learn, SciPy) | Implements filter (VarianceThreshold, SelectKBest), wrapper (RFE), and embedded (LASSO) methods. | sklearn.feature_selection.VarianceThreshold. |
| BIOMART / Ensembl API | Provides gene/protein annotations to filter features based on biological knowledge (e.g., location, type). | Filter to keep only protein-coding genes. |
| Pathway Databases (KEGG, Reactome) | Enables pathway-based filtering; retain features belonging to relevant biological pathways. | Used in over-representation analysis post-filtering. |
| High-Performance Computing (HPC) Cluster | Essential for computationally intensive wrapper methods or filtering on large-scale datasets. | Needed for permutation-based testing on large feature sets. |
| Pooled Quality Control (QC) Samples | Critical for metabolomics/lipidomics to calculate RSD and filter out analytically noisy features. | Run QC samples intermittently throughout the analytical batch. |

Troubleshooting Guides & FAQs

Q1: My integrated analysis is dominated by my proteomics data. The clustering appears to be driven only by protein abundance, ignoring my transcriptomics data. What went wrong in preprocessing?

A: This is a classic symptom of inadequate scaling between datasets with different native ranges. Proteomics data (e.g., mass spectrometry intensities) often have a much higher absolute numerical range than transcriptomics data (e.g., RNA-seq counts). Without proper scaling, algorithms like MOFA or iCluster will assign disproportionate weight to the dataset with the largest variance.

- Solution: Apply per-dataset scaling after per-dataset normalization but before integration. The Z-score (standardization) method is highly recommended here. For each feature (gene/protein) in each omics dataset independently, subtract the mean and divide by the standard deviation. This centers all datasets around zero with unit variance, ensuring equal contribution during integration.
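A minimal sketch of per-feature Z-scoring applied to each layer independently is shown below. The function name `zscore_features` is hypothetical, the matrices are assumed to be features by samples, and every feature is assumed to have non-zero variance.

```python
import numpy as np

def zscore_features(X):
    """Standardize each feature (row) of X to mean 0 and unit sample variance."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=1, keepdims=True)
    sd = X.std(axis=1, ddof=1, keepdims=True)
    return (X - mean) / sd

# Toy layers on very different numeric scales.
layers = {
    "transcriptomics": np.array([[1.0, 2.0, 3.0, 4.0], [10.0, 20.0, 30.0, 45.0]]),
    "proteomics": np.array([[1e6, 2e6, 3e6, 4e6], [5e7, 1e7, 3e7, 2e7]]),
}
# Each layer is scaled on its own, after its own normalization.
scaled = {name: zscore_features(X) for name, X in layers.items()}
```

After this step every feature in both layers has unit variance, so neither layer dominates an integration method simply because of its measurement scale.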

Q2: After log-transforming my metabolomics abundance data, the distribution still looks highly skewed (right-tailed). How does this affect integration and what can I do?

A: Log transformation (usually log2 or log10) compresses the dynamic range and helps normalize data where the difference between high and low values spans several orders of magnitude. However, it may not be sufficient for all metabolomic data, which can contain extreme outliers or technical artifacts.

- Troubleshooting Protocol:

- Visualize: Create density plots or boxplots of a sample's data before and after log transformation.

- Diagnose: If skewness persists, consider if Pareto scaling is more appropriate. Pareto scaling (dividing by the square root of the standard deviation) reduces the relative importance of large values but preserves data structure better than full standardization.

- Action: Apply Pareto scaling (`x_pareto = (x - mean) / sqrt(sd)`). Re-plot. For extreme outliers, investigate whether they are biologically plausible or potential artifacts warranting removal.
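The Pareto formula above translates directly into NumPy. The function name `pareto_scale` is hypothetical; the matrix is assumed to be features by samples, already log-transformed, with no zero-variance features.

```python
import numpy as np

def pareto_scale(X):
    """Center each feature (row) and divide by the square root of its SD."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=1, keepdims=True)
    sd = X.std(axis=1, ddof=1, keepdims=True)
    return (X - mean) / np.sqrt(sd)
```

After Pareto scaling, a feature whose original standard deviation was `sd` has standard deviation `sqrt(sd)`, so high-variance metabolites are shrunk but still carry more weight than low-variance ones, unlike Z-scoring, which makes all features equal.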

Q3: When I apply Z-score scaling to my sparse single-cell RNA-seq data for integration with bulk ATAC-seq data, I get many NaN/Infinite values. Why?

A: Z-score scaling requires calculating the standard deviation (SD). In sparse data, many features (genes) have zero expression in most cells, and some are zero (or otherwise constant) in every cell. The SD of such a constant feature is zero, and dividing by zero during Z-scoring produces NaN or infinite values.

- Step-by-Step Fix:

- Pre-filter: Prior to scaling, filter out features with zero variance, as well as features with zero expression in >99% of samples.

- Alternative Scaling: Use a modified approach like "centering-only" (subtract mean only) for the sparse dataset, as the variance structure is still informative.

- Algorithm Choice: Ensure your chosen multi-omics integration tool (e.g., Seurat's CCA, SCOT) is explicitly designed to handle sparse matrix inputs natively.
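The pre-filter and scaling steps can be combined in one guarded function. This is a sketch under stated assumptions: the name `safe_zscore` and the 1% detection cut-off are illustrative, and the input is treated as a dense features-by-cells array, which would not scale to large single-cell matrices stored in sparse format.

```python
import numpy as np

def safe_zscore(X, min_detect_fraction=0.01):
    """Drop undetected or constant features, then Z-score the rest.

    Returns the scaled matrix and the boolean mask of retained features.
    """
    X = np.asarray(X, dtype=float)
    detected = (X != 0).mean(axis=1)   # fraction of cells with non-zero signal
    sd = X.std(axis=1, ddof=1)
    keep = (sd > 0) & (detected >= min_detect_fraction)
    Xk = X[keep]
    Z = (Xk - Xk.mean(axis=1, keepdims=True)) / sd[keep][:, None]
    return Z, keep
```

Returning the mask alongside the scaled matrix makes it easy to keep feature annotations aligned with the filtered rows.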

Q4: Does the order of operations (Normalization -> Transformation -> Scaling) matter? What is the correct sequence?

A: Yes, the order is critical and follows a strict logic to prepare data for downstream integration algorithms.

- Correct Workflow Order:

- Normalization: Correct for technical biases (e.g., sequencing depth, sample loading). This is dataset-specific.