Multi-Omics Reproducibility Crisis: Strategies for Robust, Standardized, and Clinically Actionable Data


Abstract

This article addresses the critical challenge of reproducibility in multi-omics studies, a foundational bottleneck in translational research and drug development. It provides a comprehensive guide for researchers and scientists, moving from defining the core sources of variability (batch effects, platform differences, bioinformatic pipelines) to implementing standardized best practices. The content explores methodological frameworks for integrated data generation, practical troubleshooting for common pitfalls, and validation strategies using reference materials and benchmarking. By synthesizing current standards and emerging solutions, it aims to equip professionals with the knowledge to generate reliable, comparable, and clinically relevant multi-omics data, thereby accelerating biomarker discovery and therapeutic development.

Why Multi-Omics Studies Fail to Replicate: Defining the Reproducibility Crisis

Multi-Omics Reproducibility Support Center

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Our LC-MS/MS proteomics runs show high technical variance in protein quantification between replicates. What are the primary sources and how can we mitigate them? A: High technical variance often stems from sample preparation inconsistency, LC column degradation, or instrument calibration drift.

- Troubleshooting Guide:

- Check Sample Prep: Implement a robust protein assay (e.g., BCA) and ensure consistent digestion time/temperature using a thermomixer. Add stable isotope-labeled standard (SIS) peptides early to correct for preparation losses.

- Monitor LC System: Perform blank runs and check pressure profiles. Replace or recondition the column if peak shape deteriorates. Use a consistent column washing protocol.

- Calibrate Instrument: Perform mass calibration before each batch. Include a standardized quality control (QC) sample (e.g., HeLa digest) in every run to monitor retention time stability and signal intensity drift. Normalize data using total peptide amount or QC-based algorithms (e.g., LOESS).
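As a rough illustration of the "total peptide amount" normalization mentioned above, the sketch below scales every run so that its summed intensity matches the median total across runs. The function name, peptide labels, and intensity values are hypothetical, and this simple scaling is not a substitute for QC-based LOESS correction, which models drift over injection order.

```python
from statistics import median


def normalize_total_intensity(runs):
    """Scale each run so its summed intensity equals the median run total."""
    totals = {name: sum(values.values()) for name, values in runs.items()}
    target = median(totals.values())
    return {
        name: {pep: inten * target / totals[name] for pep, inten in values.items()}
        for name, values in runs.items()
    }


# Hypothetical peptide intensities from three injections of the same QC digest.
runs = {
    "qc_01": {"PEPTIDE_A": 100.0, "PEPTIDE_B": 300.0},
    "qc_02": {"PEPTIDE_A": 80.0, "PEPTIDE_B": 240.0},  # uniform 20% signal loss
    "qc_03": {"PEPTIDE_A": 110.0, "PEPTIDE_B": 330.0},
}

normalized = normalize_total_intensity(runs)
for name, values in normalized.items():
    print(name, values)
```

Total-intensity scaling assumes that most peptides do not change between runs; if a large fraction of the proteome shifts, a reference-based approach (SIS peptides or QC injections) is the safer choice.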

Q2: In RNA-Seq, we get different differential expression results from the same samples processed at different centers. How do we align our workflows? A: Disparities arise from differences in RNA extraction kits, rRNA depletion vs. poly-A selection, library prep protocols, sequencers, and bioinformatic pipelines.

- Troubleshooting Guide:

- Wet-Lab Harmonization: Agree on a specific RNA integrity number (RIN) threshold (e.g., >7). Use the same approved commercial kit across sites. Implement spike-in RNA controls (e.g., External RNA Controls Consortium, ERCC) for normalization.

- Dry-Lab Standardization: Use a common, version-controlled pipeline (e.g., nf-core/rnaseq). Agree on key parameters: aligner (STAR), quantification (featureCounts), and differential expression tool (DESeq2/edgeR). Share all code and containerized environments (Docker/Singularity).

Q3: Our metabolomics study identifies potential biomarkers, but they fail validation in an independent cohort. What could be the reason? A: This is a classic reproducibility failure, often due to batch effects, inadequate statistical power, or overfitting in the discovery phase.

- Troubleshooting Guide:

- Design & Power: Ensure sample size is calculated a priori based on expected effect size. Randomize sample processing order across groups to avoid confounding.

- Batch Correction: Process discovery and validation cohorts with identical methods. Use internal standards and pooled QC samples. Apply batch correction algorithms (e.g., ComBat, SVA) carefully and document the process.

- Avoid Overfitting: Use separate samples for discovery, tuning, and validation. Apply false discovery rate (FDR) correction. Validate findings with a targeted MS/MS assay in the independent cohort.
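The FDR correction step can be sketched with the Benjamini-Hochberg procedure. This is a minimal standard-library version for illustration; the p-values are made up, and in practice an established statistics package would normally be used.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


# Hypothetical raw p-values for five candidate metabolite features.
p_values = [0.001, 0.008, 0.039, 0.041, 0.20]
q_values = benjamini_hochberg(p_values)
significant = [q <= 0.05 for q in q_values]

for p, q, keep in zip(p_values, q_values, significant):
    print(f"p={p:.3f}  q={q:.5f}  significant={keep}")
```

Note that two features with raw p < 0.05 no longer pass after adjustment, which is the kind of candidate that tends to fail in an independent cohort.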

Q4: How can we ensure our single-cell RNA-seq clustering is reproducible? A: Reproducibility is challenged by ambient RNA, cell doublets, and algorithmic stochasticity.

- Troubleshooting Guide:

- Experimental Controls: Use cell hashing or multiplexing to label samples. Include empty wells/droplets to assess ambient RNA. Use doublet detection tools (e.g., DoubletFinder, scrublet).

- Computational Stability: Set random seeds in all analysis scripts (e.g., `set.seed(42)` in R). Use ensemble clustering or consensus methods. Benchmark parameters on public benchmark datasets. Report all software versions.
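The same seeding idea applies outside R. The sketch below is a Python analogue under stated assumptions: the function name and cell identifiers are hypothetical, and the random draw stands in for any stochastic step such as centroid initialization. It also records the interpreter version alongside the seed.

```python
import platform
import random


def pick_initial_centroids(cell_ids, k, seed):
    """Draw k starting cells with a local, explicitly seeded generator."""
    rng = random.Random(seed)  # local generator; global random state is untouched
    return rng.sample(cell_ids, k)


cells = [f"cell_{i:03d}" for i in range(100)]

run_a = pick_initial_centroids(cells, 5, seed=42)
run_b = pick_initial_centroids(cells, 5, seed=42)

# Record what is needed to repeat the run: the seed and the software version.
run_record = {"seed": 42, "python": platform.python_version()}

print(run_a == run_b)  # same seed, same draw
print(run_record)
```

Passing the seed as an argument, rather than seeding global state once at the top of a script, keeps each stochastic step reproducible even when steps are reordered or run in isolation.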

Table 1: Common Sources of Irreproducibility Across Omics Layers

| Omics Layer | Primary Technical Variance Source | Typical Impact on CV* | Key Corrective Action |

|---|---|---|---|

| Genomics (WES) | Capture kit bias, coverage uniformity | 15-25% | Use same kit lot; target coverage >100x |

| Transcriptomics (RNA-Seq) | Library prep method, sequencing depth | 20-30% | Standardize prep; use spike-ins; depth >30M reads |

| Proteomics (LC-MS/MS) | Sample digestion efficiency, LC drift | 25-40% | Use SIS peptides; implement QC injections |

| Metabolomics (LC-MS) | Ion suppression, column aging, batch effects | 30-50% | Use pooled QCs; randomize run order; apply batch correction |

*CV: Coefficient of Variation. Data synthesized from recent literature and reproducibility initiatives.

Table 2: Estimated Economic Impact of Irreproducible Biomedical Research

| Stage Impacted | Estimated Annual Cost (US) | Primary Omics-Related Cause |

|---|---|---|

| Preclinical Biomarker Discovery | ~$10-15 Billion | Poor experimental design, lack of SOPs, underpowered studies |

| Early Drug Development (Phase I/II) | ~$20-28 Billion | Failed validation of omics-based targets or pharmacodynamic markers |

| Total (Biomedical Research) | ~$50 Billion+ | Cumulative effect of irreproducible data across disciplines |

Sources: Freedman et al., *PLOS Biology*, 2015; recent industry analyst reports.

Detailed Experimental Protocols

Protocol 1: Reproducible Plasma Metabolite Extraction for LC-MS

Objective: To obtain consistent, high-quality metabolite extracts from human plasma for untargeted metabolomics.

Reagents: Human plasma (EDTA), cold methanol (-20°C, LC-MS grade), cold acetonitrile (-20°C, LC-MS grade), internal standard mix (e.g., L-valine-d8, caffeine-d9), water (LC-MS grade).

Procedure:

- Thaw plasma samples slowly on ice. Vortex for 10 seconds.

- Aliquot 50 µL of plasma into a pre-chilled 1.5 mL Eppendorf tube.

- Add 10 µL of internal standard mix. Vortex 10 seconds.

- Add 200 µL of cold methanol. Vortex vigorously for 30 seconds. Incubate at -20°C for 1 hour to precipitate proteins.

- Centrifuge at 14,000 x g for 15 minutes at 4°C.

- Transfer 200 µL of the supernatant to a new LC-MS vial.

- Dry the supernatant under a gentle stream of nitrogen gas at room temperature.

- Reconstitute the dried extract in 100 µL of 50:50 acetonitrile:water. Vortex for 1 minute.

- Centrifuge at 14,000 x g for 5 minutes at 4°C. Transfer 80 µL to an LC-MS vial with insert for analysis.

Critical Notes: Process samples in a randomized order to avoid batch effects. Include pooled QC samples created by combining equal aliquots from all study samples.

Protocol 2: Robust RNA-Seq Library Preparation with Spike-in Controls

Objective: To generate sequencing libraries with minimal technical noise for accurate gene expression quantification.

Reagents: High-quality total RNA (RIN > 7), ERCC ExFold RNA Spike-In Mix (Thermo Fisher), poly(A) mRNA selection beads, strand-specific library prep kit (e.g., Illumina TruSeq Stranded mRNA), RNase inhibitors.

Procedure:

- Quantify RNA using fluorometry (e.g., Qubit). Dilute all samples to the same concentration (e.g., 50 ng/µL).

- Spike-in Addition: For each 500 ng of total RNA, add 1 µL of a 1:100,000 dilution of ERCC ExFold Mix. Mix thoroughly by pipetting.

- Proceed with poly(A) selection per kit instructions. Elute mRNA in nuclease-free water.

- Perform fragmentation, first and second strand cDNA synthesis, adapter ligation, and PCR amplification according to the stranded mRNA kit protocol. Use a defined, minimal PCR cycle number (e.g., 12 cycles).

- Clean up final libraries using double-sided size selection beads (e.g., SPRIselect) to remove adapter dimers and large fragments.

- Quantify libraries by fluorometry and assess size distribution on a Bioanalyzer or TapeStation.

Critical Notes: Process all samples in a single batch if possible. If multiple batches are required, include the same positive control RNA sample (e.g., Universal Human Reference RNA) in each batch.
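The dilution in the first step of this protocol is a C1·V1 = C2·V2 calculation. A small helper such as the one below can reduce arithmetic slips at the bench; the function name, the 180 ng/µL stock, and the 20 µL final volume are illustrative assumptions, while the 50 ng/µL target and 500 ng input come from the protocol.

```python
def dilution_volumes(stock_ng_per_ul, target_ng_per_ul, final_volume_ul):
    """Return (stock volume, diluent volume) in µL using C1*V1 = C2*V2."""
    if stock_ng_per_ul < target_ng_per_ul:
        raise ValueError("stock is more dilute than the target concentration")
    stock_ul = target_ng_per_ul * final_volume_ul / stock_ng_per_ul
    return stock_ul, final_volume_ul - stock_ul


# Hypothetical sample measured at 180 ng/µL, diluted to 50 ng/µL in 20 µL.
stock_ul, water_ul = dilution_volumes(180.0, 50.0, 20.0)

# Volume of the diluted sample that carries the 500 ng input.
input_volume_ul = 500.0 / 50.0

print(f"RNA stock: {stock_ul:.2f} µL, water: {water_ul:.2f} µL")
print(f"Volume for 500 ng input: {input_volume_ul:.1f} µL")
```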

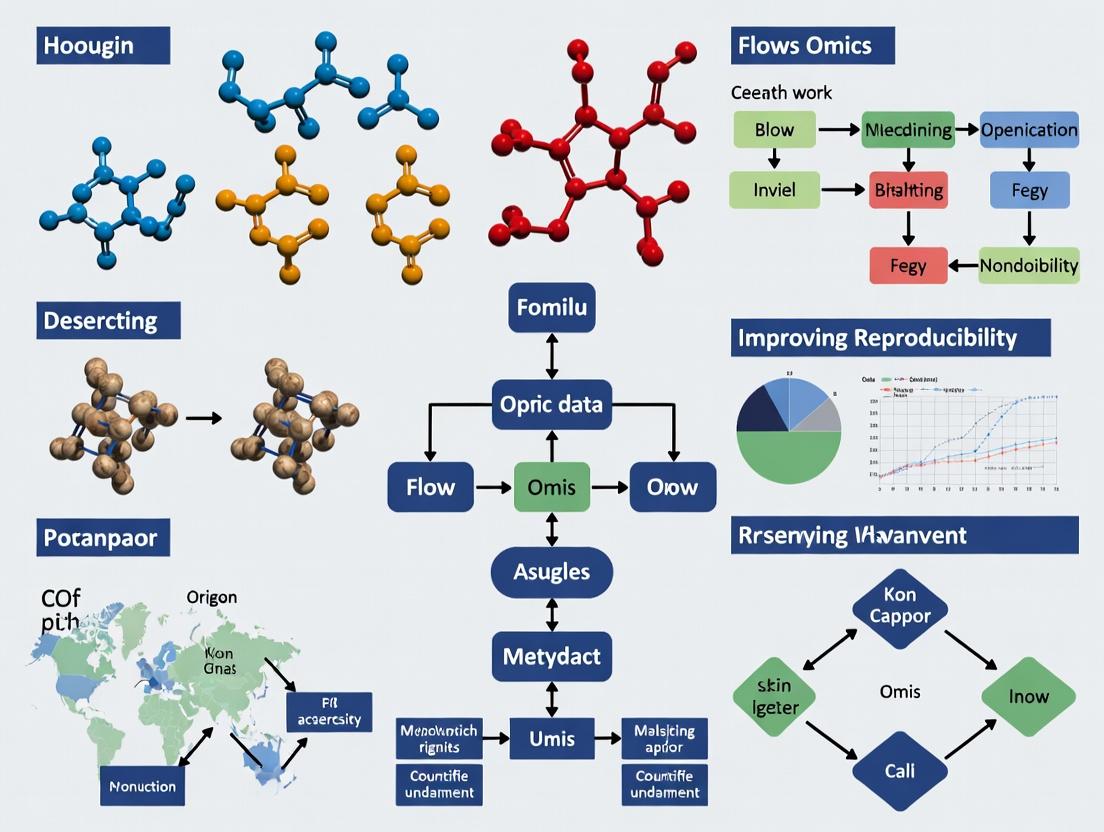

Pathway & Workflow Visualizations

Title: Reproducible Omics Research Workflow

Title: From Signaling to Omics Readouts

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Kits for Reproducible Multi-Omics

| Item | Function & Rationale | Example Product/Catalog |

|---|---|---|

| Spike-In RNA Controls (ERCC) | Added to RNA samples before library prep to monitor technical variation, normalize data, and detect cross-contamination. | Thermo Fisher Scientific, 4456740 |

| Stable Isotope-Labeled Standards (SIS) | Synthetic peptides/proteins/metabolites with heavy isotopes. Added pre-digestion/extraction for absolute quantification & process control in MS. | Sigma-Aldrich (various), Cambridge Isotopes |

| Pooled Quality Control (QC) Sample | A homogeneous mixture of aliquots from all study samples. Run repeatedly throughout sequence/batch to monitor and correct instrument drift. | Prepared in-house from study samples. |

| Universal Human Reference RNA | Standardized RNA from multiple cell lines. Used as an inter-laboratory control for transcriptomics assay performance. | Agilent, 740000 |

| Mass Spec Grade Solvents | Ultra-pure solvents (water, acetonitrile, methanol) with minimal contaminants to reduce background noise and ion suppression in LC-MS. | Fisher Chemical, LC-MS Grade |

| SPRI (Solid Phase Reversible Immobilization) Beads | Magnetic beads for consistent, automatable size selection and clean-up of NGS libraries or nucleic acids. | Beckman Coulter, SPRIselect |

| DNase/RNase Inactivation Reagent | To remove contaminating nucleases from work surfaces and equipment, protecting sample integrity. | Thermo Fisher Scientific, RNaseZap |

Troubleshooting Guides & FAQs

FAQ 1: My RNA-seq replicates show high variability. How can I determine if it's technical or biological noise? Answer: High inter-replicate variability can stem from either source. To diagnose, follow this protocol:

- Technical Replicates: Re-prepare libraries from the same biological sample RNA extract. High variability here indicates technical noise from library prep or sequencing.

- Biological Replicates: Prepare libraries from RNA extracted from independently collected biological samples. High variability here indicates true biological noise.

- Analysis: Use PCA plots; technical replicates should cluster tightly, while biological replicates show more spread. Calculate the Coefficient of Variation (CV) for each gene/feature across replicates.

Experimental Protocol: RNA-seq Noise Partitioning

- Materials: Cultured cells or tissue samples (minimum n=6 biological replicates per condition).

- Step 1: For 3 biological replicates, split the total RNA post-extraction into three aliquots. These become your technical replicates for library prep.

- Step 2: Use the remaining 3 independent biological samples as separate biological replicates.

- Step 3: Prepare sequencing libraries using an identical, calibrated kit (e.g., Illumina Stranded mRNA Prep) for all samples in a single batch if possible.

- Step 4: Sequence all libraries on the same flow cell lane to minimize batch effects.

- Step 5: Map reads (using STAR) and quantify gene counts (using featureCounts). Perform PCA and calculate CVs per gene group.

FAQ 2: In my proteomics experiment, how do I distinguish batch effects from true biological signal? Answer: Batch effects are systematic technical noise. Implement a randomized block design.

- Troubleshooting Step: Process samples from ALL experimental conditions in EVERY sample preparation batch. Do not process all controls in one batch and all treatments in another.

- Solution: Use internal standards (e.g., stable isotope-labeled standard peptides) spiked into each sample at the start of processing. Normalize sample peak areas to these standards. Post-acquisition, use software (e.g., ComBat, limma) for batch correction, but only if the experimental design is balanced.
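One way to build the randomized block layout described above is to shuffle each condition separately and deal its samples across batches in turn. This is a minimal sketch: the function name, sample labels, and batch count are hypothetical, and the layout is only perfectly balanced when each group size is a multiple of the number of batches.

```python
import random
from collections import defaultdict


def assign_blocks(samples_by_group, n_batches, seed=42):
    """Spread each group's samples evenly across preparation batches."""
    rng = random.Random(seed)
    batches = defaultdict(list)
    for group, samples in samples_by_group.items():
        shuffled = samples[:]
        rng.shuffle(shuffled)
        # Deal samples round-robin so every batch receives every condition.
        for i, sample in enumerate(shuffled):
            batches[i % n_batches].append((group, sample))
    # Randomize the processing order inside each batch as well.
    for members in batches.values():
        rng.shuffle(members)
    return dict(batches)


samples = {
    "control": [f"C{i}" for i in range(1, 11)],
    "treated": [f"T{i}" for i in range(1, 11)],
}
plan = assign_blocks(samples, n_batches=5)

for batch_id in sorted(plan):
    print(batch_id, plan[batch_id])
```

Saving the seed and the resulting table with the study records makes the allocation itself auditable.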

Experimental Protocol: LC-MS/MS Proteomics with Batch Randomization

- Materials: Cell lysates (n=10 per group), TMTpro 16plex kit, internal standard peptides (e.g., Biognosys' PQ500).

- Step 1: Denature, reduce, alkylate, and digest each sample individually.

- Step 2: Label each sample with a unique TMTpro channel according to a pre-defined randomization table that distributes conditions across labeling sets.

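- Step 3: Combine the labeled samples within each labeling set into a single multiplexed pool. Each pool is fractionated and analyzed as one unit, which removes run-to-run variation among the samples it contains. Because 20 samples exceed the 16 channels of one TMTpro set, at least two sets are needed; reserve a channel in each set for a common reference sample so that the sets can be compared.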

- Step 4: For LC-MS/MS, inject the pooled sample multiple times in a randomized run order across the instrument queue.

- Step 5: Analyze data with search software (e.g., MaxQuant) and subsequent statistical analysis in Perseus or R, including a "batch" factor in the ANOVA model.

FAQ 3: For metabolomics, what are the best practices to control pre-analytical technical variability? Answer: Pre-analytical steps are the largest source of technical noise in metabolomics. Strict SOPs are critical.

- Issue: Metabolite levels change rapidly post-sampling.

- Solution: Implement immediate quenching (e.g., liquid nitrogen snap-freezing for cells/tissues, cold methanol for biofluids). Use pre-chilled collection tubes. Store all samples at -80°C without freeze-thaw cycles. For LC-MS, use a pooled quality control (QC) sample injected repeatedly throughout the run sequence to monitor and correct for instrumental drift.

Experimental Protocol: Plasma Metabolite Extraction for LC-MS

- Materials: Blood collection tubes (EDTA, pre-chilled), cold methanol (-20°C), internal standards (e.g., Cambridge Isotope Laboratories' MSK-CAF-1).

- Step 1: Collect blood in pre-chilled tubes. Centrifuge at 4°C within 15 minutes to isolate plasma.

- Step 2: Aliquot plasma into cryovials and snap-freeze in liquid nitrogen. Store at -80°C.

- Step 3: For extraction, thaw samples on ice. Pipette 50 µL plasma into a precooled tube.

- Step 4: Add 200 µL of cold methanol containing a mixture of deuterated internal standards. Vortex vigorously for 1 minute.

- Step 5: Incubate at -20°C for 1 hour to precipitate proteins.

- Step 6: Centrifuge at 14,000 x g at 4°C for 15 minutes. Transfer supernatant to a fresh vial for analysis.

- Step 7: Create a pooled QC by combining a small aliquot from every sample. Inject this QC every 4-6 experimental samples.
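The injection scheme in Step 7 can be generated programmatically so that randomization and QC spacing are not left to manual editing of the sequence table. The sketch below assumes a QC every 5 samples (within the 4-6 range given above) plus a QC at the start and end of the sequence; the function name and labels are hypothetical.

```python
import random


def build_run_order(sample_ids, qc_every=5, seed=42, qc_label="pooled_QC"):
    """Randomize samples and insert a pooled QC at regular intervals."""
    rng = random.Random(seed)
    shuffled = sample_ids[:]
    rng.shuffle(shuffled)
    sequence = [qc_label]  # open the sequence with a QC injection
    for i, sample in enumerate(shuffled, start=1):
        sequence.append(sample)
        # Add a QC after every block and once more after the final sample.
        if i % qc_every == 0 or i == len(shuffled):
            sequence.append(qc_label)
    return sequence


samples = [f"S{i:02d}" for i in range(1, 13)]
run_order = build_run_order(samples, qc_every=5)

for position, injection in enumerate(run_order, start=1):
    print(position, injection)
```

Many labs also inject several conditioning QCs before the first sample; that step is omitted here for brevity.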

Table 1: Estimated Variance Contributions by Omics Layer

| Omics Layer | Primary Source of Technical Noise | Typical Technical CV Range | Typical Biological CV Range | Key Mitigation Strategy |

|---|---|---|---|---|

| Genomics (WGS) | Library Prep, Coverage Depth | 5-15% | 0.1% (SNPs) to >100% (CNVs) | Uniform coverage >30x, PCR-free prep |

| Transcriptomics (RNA-seq) | Library Prep, RNA Integrity | 10-25% | 30-100%+ | RIN >8, Unique Molecular Identifiers (UMIs) |

| Proteomics (LC-MS/MS) | Sample Prep, Ionization Efficiency | 15-30% (Label-free); 5-15% (Multiplexed) | 20-200%+ | Isobaric labeling (TMT), Internal standards |

| Metabolomics (LC-MS) | Sample Extraction, Instrument Drift | 20-40% | 30-300%+ | Standardized quenching, Pooled QC samples |

Table 2: Replicate Number Guidance for 80% Statistical Power

| Omics Assay | Detecting 2-Fold Change | Detecting 1.5-Fold Change | Major Driver of Replicate Need |

|---|---|---|---|

| Bulk RNA-seq | 3-4 Biological Replicates | 6-8 Biological Replicates | Biological Variation |

| Single-Cell RNA-seq | 3-4 Samples, 2000+ cells/sample | 4-6 Samples, 5000+ cells/sample | Biological & Technical (Dropout) |

| Shotgun Proteomics | 4-5 Biological Replicates (Label-free) | 8-10 Biological Replicates (Label-free) | Technical Variation in Prep |

| Targeted Metabolomics | 5-6 Biological Replicates | 10-12 Biological Replicates | Pre-analytical Technical Variation |
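For intuition about how replicate numbers scale with effect size, the sketch below applies the textbook normal-approximation formula for a two-group comparison on the log2 scale, n = 2(z_{1-α/2} + z_{power})² σ² / δ², where δ = log2(fold change). The standard deviation of 0.4 log2 units is an assumed value, not taken from the table, and the calculation treats a single feature with no multiple-testing adjustment, so it should be read as a lower bound rather than a study design.

```python
import math
from statistics import NormalDist


def replicates_per_group(fold_change, sd_log2, alpha=0.05, power=0.80):
    """Approximate per-group n for a two-sided, two-sample comparison."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    delta = math.log2(fold_change)  # effect size on the log2 scale
    n = 2 * (z_alpha + z_power) ** 2 * sd_log2 ** 2 / delta ** 2
    return math.ceil(n)


# Assumed within-group standard deviation of log2 abundance.
SD_LOG2 = 0.4

n_two_fold = replicates_per_group(2.0, SD_LOG2)
n_one_and_half_fold = replicates_per_group(1.5, SD_LOG2)

print("2-fold change:", n_two_fold, "replicates per group")
print("1.5-fold change:", n_one_and_half_fold, "replicates per group")
```

Because n grows with the square of σ/δ, halving the detectable log2 effect roughly quadruples the replicates needed, which is why the 1.5-fold column demands so many more samples than the 2-fold column.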

Visualizations

Title: Sources of Variance in Omics Data

Title: RNA-seq Workflow for Noise Assessment

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Noise Control

| Reagent/Material | Function & Role in Noise Reduction | Example Product |

|---|---|---|

| Unique Molecular Identifiers (UMIs) | Short random nucleotide tags added to each mRNA molecule before PCR amplification. Allows bioinformatic correction for amplification bias and noise, distinguishing technical duplicates from biological reads. | Illumina's TruSeq UMI Adaptors |

| Isobaric Mass Tags (TMT, iTRAQ) | Chemical labels that allow multiplexing of up to 18 samples in a single MS run. Dramatically reduces quantitative noise from instrument run-to-run variation by comparing reporter ions from co-eluted peptides. | Thermo Fisher TMTpro 16plex |

| Stable Isotope-Labeled Internal Standards (SIL/SIS) | Synthetic peptides or metabolites with heavy isotopes (13C, 15N) spiked into samples at known concentrations before processing. Enables precise normalization for sample recovery and ionization efficiency variances. | Biognosys PQ500 kit (proteomics), Cambridge Isotope Labs standards (metabolomics) |

| Pooled Quality Control (QC) Sample | A homogenous mixture created from a small aliquot of every experimental sample. Injected at regular intervals during MS acquisition to monitor and correct for temporal instrument drift (signal intensity, retention time). | Lab-created from study samples |

| RNA Integrity Number (RIN) Standards | Used to calibrate bioanalyzers. Accurate RIN assessment (>8 is typically required) is critical for controlling pre-analytical noise in transcriptomics, as degraded RNA is a major source of technical variation. | Agilent RNA 6000 Nano Kit |

Technical Support Center

Welcome to the Reproducibility Support Hub. This center provides targeted troubleshooting guidance for common, critical issues that undermine reproducibility in multi-omics studies.

Troubleshooting Guide: Identifying and Mitigating Key Culprits

Issue Category 1: Batch Effects

- Symptom: Clustering of samples by processing date, technician, or reagent kit in PCA plots, rather than by biological condition.

- Root Cause: Technical variation introduced when samples are processed in different groups (batches).

- Diagnostic Tool: Generate a PCA plot colored by batch ID. If batch explains significant variance, correction is needed.

- Solution Protocol:

- Design: Randomize samples across batches.

- Correction: Apply statistical methods after data acquisition. For genomic data, use ComBat (from the `sva` R package) or limma's `removeBatchEffect`. Always apply correction within a single study; never use it to merge public datasets without extreme caution.
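To make the idea behind batch-mean removal concrete, the sketch below centers one feature by subtracting each batch's mean and adding back the overall mean. It is a deliberately simplified stand-in, not the ComBat or limma implementation: it ignores biological covariates, so it will also erase real signal whenever batch is confounded with condition. All names and values are hypothetical.

```python
from statistics import mean


def center_batches(values, batches):
    """Subtract each batch's mean from its samples, then restore the overall mean."""
    overall = mean(values)
    batch_means = {
        b: mean(v for v, vb in zip(values, batches) if vb == b)
        for b in set(batches)
    }
    return [v - batch_means[b] + overall for v, b in zip(values, batches)]


# Hypothetical log-intensities of one feature measured in two batches.
log_intensity = [10.0, 10.4, 9.8, 11.1, 11.5, 10.9]
batch = ["A", "A", "A", "B", "B", "B"]

corrected = center_batches(log_intensity, batch)

print("before:", mean(log_intensity[:3]), mean(log_intensity[3:]))
print("after: ", mean(corrected[:3]), mean(corrected[3:]))
```

The within-batch spread is untouched; only the offset between batches is removed, which is why a balanced design is a precondition for this kind of correction.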

Issue Category 2: Platform-Specific Biases

- Symptom: Inconsistent gene expression or metabolite quantification when the same sample is run on different platforms (e.g., Illumina vs. Affymetrix arrays, different LC-MS instruments).

- Root Cause: Differences in probe design, sequencing chemistry, detection sensitivity, and proprietary algorithms.

- Diagnostic Tool: Correlation analysis of measurements for a set of shared standards or spike-in controls across platforms.

- Solution Protocol:

- Calibration: Use universal reference standards (e.g., SEQC/MAQC consortium samples for transcriptomics, NIST SRM 1950 for metabolomics).

- Normalization: Use platform-agnostic methods like Quantile Normalization or cross-platform normalization (CPN) for microarray data.

- Metadata: Always record the exact platform model, software version, and assay kit lot number.

Issue Category 3: Sample Preparation Inconsistencies

- Symptom: High technical variability in yield, purity, or integrity metrics (e.g., RIN scores for RNA, protein degradation profiles) within the same biological group.

- Root Cause: Lack of standardized SOPs for collection, lysis, storage, and extraction.

- Diagnostic Tool: Monitor QC metrics in a control chart. Establish acceptable thresholds (see Table 1: Acceptable QC Thresholds for Common Omics Assays).

- Solution Protocol:

- SOPs: Develop and validate detailed, step-by-step protocols for every sample type.

- Automation: Use liquid handlers for reproducible pipetting in high-throughput settings.

- Internal Standards: Add spike-ins early in the protocol (e.g., ERCC RNA spikes, stable isotope-labeled metabolites/proteins) to track technical recovery and efficiency.

Frequently Asked Questions (FAQs)

Q1: How can I tell if my observed variance is due to a batch effect or real biology?

A: Perform a PERMANOVA or similar statistical test using the adonis2 function (R package vegan) to partition variance. If the "Batch" variable explains a statistically significant portion (p < 0.05) of the total variance in a distance matrix, you have a batch effect. The key is to see if biological factors remain significant after accounting for batch.
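The calculation underlying a single-factor PERMANOVA can be sketched from a distance matrix alone. The code below is a standard-library illustration of the sums-of-squares partition and permutation test, not a replacement for `adonis2`: it handles one factor only, so it cannot test biology after accounting for batch. The data are hypothetical one-dimensional values.

```python
import random
from itertools import combinations


def sum_sq(dist, members):
    """Sum of squared pairwise distances among members, divided by their count."""
    return sum(dist[i][j] ** 2 for i, j in combinations(members, 2)) / len(members)


def permanova(dist, labels, n_perm=999, seed=42):
    """One-factor PERMANOVA: returns (R2, pseudo-F, permutation p-value)."""
    n = len(labels)
    ss_total = sum_sq(dist, range(n))

    def stats(lab):
        groups = {}
        for idx, g in enumerate(lab):
            groups.setdefault(g, []).append(idx)
        ss_within = sum(sum_sq(dist, m) for m in groups.values())
        ss_between = ss_total - ss_within
        a = len(groups)
        f = (ss_between / (a - 1)) / (ss_within / (n - a))
        return ss_between / ss_total, f

    r2, f_obs = stats(labels)
    rng = random.Random(seed)
    shuffled = list(labels)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break the link between samples and labels
        if stats(shuffled)[1] >= f_obs:
            hits += 1
    return r2, f_obs, (hits + 1) / (n_perm + 1)


# Hypothetical data: one summary value per sample, two processing batches.
values = [0.0, 0.2, 0.1, 0.3, 0.15, 2.0, 2.3, 2.1, 1.9, 2.2]
batch = ["A"] * 5 + ["B"] * 5
dist = [[abs(x - y) for y in values] for x in values]

r2, f_stat, p_value = permanova(dist, batch)
print(f"R2={r2:.3f}  pseudo-F={f_stat:.1f}  p={p_value:.3f}")
```

Here batch explains nearly all of the variance, the pattern that signals a batch effect needing correction.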

Q2: We must process samples over several weeks. What is the best experimental design to handle this? A: Use a balanced block design. Do not process all "Control" samples in one batch and all "Treatment" in another. Instead, distribute samples from each biological group evenly across all batches. Include at least one pooled reference sample (a mix from all groups) in every batch to monitor and later correct for inter-batch drift.

Q3: Our proteomics core switched LC-MS columns mid-study. How should we handle the data? A: This is a severe platform bias incident. Process a subset of previous samples (if available) on the new column to assess the bias magnitude. Do not simply merge the data. Treat data from the old and new columns as two separate "batches" and apply appropriate batch correction methods validated for proteomics (e.g., ComBat). Clearly document this in all publications.

Q4: What is the single most important step to improve reproducibility in sample prep? A: The implementation and strict adherence to a single, validated Standard Operating Procedure (SOP) by all personnel, coupled with the use of identical, calibrated equipment and reagent lots. Document any and all deviations.

Table 1: Acceptable QC Thresholds for Common Omics Assays

| Assay Type | QC Metric | Optimal Range | Minimum Acceptable | Tool/Method |

|---|---|---|---|---|

| RNA-Seq | RNA Integrity Number (RIN) | RIN ≥ 9.0 | RIN ≥ 7.0 | Bioanalyzer/TapeStation |

| WGS/WES | DNA Concentration | ≥ 15 ng/µL | ≥ 2.5 ng/µL | Qubit dsDNA HS Assay |

| LC-MS Metabolomics | Pooled QC Sample RSD* | RSD < 20% | RSD < 30% | Injected every 5-10 samples |

| Shotgun Proteomics | Protein Yield | Protocol-dependent | Consistent across batches | BCA or Bradford Assay |

*RSD: Relative Standard Deviation of peak intensities in repeated injections of an identical, pooled quality control sample.
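The pooled-QC RSD criterion in the table can be applied per feature as in the sketch below. The 20% and 30% cut-offs come from the table; the function names and intensity values are hypothetical.

```python
from statistics import mean, stdev


def qc_rsd_percent(intensities):
    """Relative standard deviation (%) across repeated QC injections."""
    return 100.0 * stdev(intensities) / mean(intensities)


def classify_feature(intensities, optimal=20.0, acceptable=30.0):
    """Label a feature by its pooled-QC RSD against the two thresholds."""
    rsd = qc_rsd_percent(intensities)
    if rsd < optimal:
        return rsd, "optimal"
    if rsd < acceptable:
        return rsd, "acceptable"
    return rsd, "fail"


# Hypothetical peak intensities from four pooled-QC injections.
qc_features = {
    "feature_001": [1000.0, 1050.0, 980.0, 1020.0],
    "feature_002": [500.0, 700.0, 400.0, 560.0],
    "feature_003": [200.0, 350.0, 120.0, 280.0],
}

results = {name: classify_feature(vals) for name, vals in qc_features.items()}
for name, (rsd, status) in results.items():
    print(f"{name}: RSD={rsd:.1f}%  {status}")
```

Features that fail the threshold are usually removed before statistical analysis rather than corrected.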

Table 2: Common Batch Correction Algorithms

| Algorithm | Best For | Key Principle | Software/Package | Considerations |

|---|---|---|---|---|

| ComBat | Microarray, RNA-Seq, Methylation | Empirical Bayes adjustment for known batches | `sva` (R) | Can over-correct if batch is confounded with biology. |

| limma removeBatchEffect | Any matrix-based data | Linear model to remove batch means | `limma` (R) | Simpler than ComBat, good for mild effects. |

| Harmony | Single-cell RNA-Seq, CyTOF | Iterative clustering and integration | `harmony` (R/Python) | Designed for complex, high-dimensional data. |

| SERRF | Metabolomics | Uses QC samples to model & correct drift | Online Tool / `serrf` (R) | QC-sample dependent; requires systematic QC injection. |

Experimental Protocols

Protocol 1: Diagnostic PCA for Batch Effect Detection (RNA-Seq Count Data)

- Input: Normalized count matrix (e.g., from DESeq2's `varianceStabilizingTransformation` or log2(CPM + 1)).

- Compute PCA: Use the `prcomp()` function in R on the transposed matrix (samples as rows, genes as columns).

- Visualize: Plot PC1 vs. PC2 using `ggplot2`. Color points by `Batch` (e.g., processing date) and shape by `Condition` (e.g., disease state).

- Interpret: If samples cluster primarily by color (Batch), a significant batch effect is present. If they cluster by shape (Condition), biological signal is strong.

Protocol 2: Implementing Spike-In Controls for Proteomics Sample Prep

- Selection: Choose a stable isotope-labeled (SIL) protein or peptide standard mix that does not interfere with endogenous analytes (e.g., SpikeTides TQL for targeted proteomics).

- Addition Point: Add the spike-in standard immediately after cell lysis, or to the intact protein mixture before digestion, to control for losses during digestion and cleanup.

- Quantification: In downstream MS analysis, normalize the peak areas of endogenous peptides to the peak areas of their corresponding spike-in standards. This yields a ratio that corrects for preparation inefficiencies.
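The ratio described in the quantification step can be computed per peptide as shown below. This is a minimal sketch with hypothetical peptide names and peak areas; it assumes each endogenous ("light") peptide has a matching labeled ("heavy") standard and skips peptides where the standard is missing or has zero signal.

```python
def light_to_heavy_ratios(endogenous, spike_in):
    """Divide each endogenous peak area by its labeled standard's peak area."""
    ratios = {}
    for peptide, light_area in endogenous.items():
        heavy_area = spike_in.get(peptide)
        if not heavy_area:  # missing or zero standard signal: cannot normalize
            continue
        ratios[peptide] = light_area / heavy_area
    return ratios


# Hypothetical peak areas: two preparations of the same lysate,
# where prep_b lost 30% of material during cleanup.
prep_a_light = {"PEPTIDE_A": 2_000_000.0, "PEPTIDE_B": 500_000.0}
prep_a_heavy = {"PEPTIDE_A": 1_000_000.0, "PEPTIDE_B": 1_000_000.0}
prep_b_light = {"PEPTIDE_A": 1_400_000.0, "PEPTIDE_B": 350_000.0}
prep_b_heavy = {"PEPTIDE_A": 700_000.0, "PEPTIDE_B": 700_000.0}

ratios_a = light_to_heavy_ratios(prep_a_light, prep_a_heavy)
ratios_b = light_to_heavy_ratios(prep_b_light, prep_b_heavy)

print(ratios_a)
print(ratios_b)
```

Raw areas differ by 30% between the two preparations, but the ratios agree, because the loss affects the endogenous peptide and its standard equally once the standard is in the sample.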

Visualizations

Diagram 1: Multi-omics Workflow with Critical Control Points

Diagram 2: Logic for Addressing Reproducibility Culprits

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function | Example Product/Tool |

|---|---|---|

| Universal Reference Standards | Provides a benchmark for cross-platform and cross-lab calibration. | SEQC RNA reference samples (Horizon), NIST SRM 1950 Metabolites in Plasma. |

| External RNA Controls (ERCC) | Spike-in RNA mixes with known concentrations to assess technical sensitivity, dynamic range, and for normalization. | ERCC Spike-In Mix (Thermo Fisher). |

| Stable Isotope-Labeled Standards | Internal standards for mass spectrometry that correct for sample prep losses and ionization efficiency. | SILAC kits (proteomics), CIL LC-MS kits (metabolomics). |

| Pooled Quality Control (QC) Sample | An identical sample injected repeatedly throughout a run to monitor and correct for instrumental drift. | A pooled aliquot of all study samples. |

| Automated Liquid Handler | Eliminates pipetting variability, a major source of sample prep inconsistency. | Hamilton STAR, Beckman Coulter Biomek. |

| Validated Extraction Kits | Pre-optimized, consistent reagents and protocols for nucleic acid, protein, or metabolite isolation. | Qiagen RNeasy, Michrom MAGIC HPLC column. |

| Sample Tracking LIMS | Laboratory Information Management System to meticulously track sample provenance, handling, and metadata. | LabArchives, BaseSpace Clarity LIMS. |

Technical Support Center: Troubleshooting Reproducibility in Multi-Omics Analysis

Frequently Asked Questions (FAQs)

Q1: My differential expression analysis results change drastically when I use a different alignment tool (e.g., STAR vs. HISAT2). Why does this happen, and how can I stabilize my findings? A: Different aligners use distinct algorithms for handling mismatches, splicing, and multi-mapping reads, leading to varying counts. To stabilize findings:

- Validate: Use a spike-in control RNA-seq experiment to benchmark aligners for your specific organism and sequencing protocol.

- Consensus: Employ multiple aligners and intersect the resulting gene lists for high-confidence candidates.

- Parameter Documentation: Pre-register and meticulously document all alignment parameters (e.g., `--outFilterMismatchNmax`, `--alignSJoverhangMin`).

Q2: I am getting different biological interpretations from the same dataset when using different gene set enrichment analysis (GSEA) tools (GSEA, GSVA, g:Profiler). How should I proceed? A: Discrepancies arise from distinct null hypotheses, statistical models, and background corrections.

- Troubleshooting Step: Run a standardized positive control dataset (e.g., a well-characterized cell line perturbation) through each pipeline to understand tool-specific biases.

- Solution: Do not rely on a single tool's p-value. Report results from at least two methods and focus on pathways consistently enriched across tools with consistent directionality. Always specify the version of the gene set database used.

Q3: How do choices in normalization and batch effect correction tools (ComBat, sva, RUV) affect the integration of multi-omic datasets, and how can I audit this? A: Over- or under-correction can artificially create or erase biological signals.

- Protocol for Audit:

- Perform PCA on the data before and after correction.

- Color plots by known technical batches (sequencing run, preparation date) and biological groups (disease state). Successful correction should minimize cluster separation by batch while preserving biological separation.

- Use negative controls (e.g., housekeeping genes, non-differential features) to verify that correction does not introduce spurious variance.

Q4: In metabolomics, my significance findings shift when I change the peak picking/alignment algorithm in my preprocessing software (e.g., XCMS vs. MS-DIAL). What is the best practice? A: Peak picking is highly algorithm-dependent. Best practice involves:

- Manual Verification: Randomly select a subset of significant features and manually inspect their raw chromatograms and mass spectra across samples in software like MZmine.

- Benchmark with Standards: If available, process a set of samples spiked with known metabolite standards at known concentrations. Calculate the recall and precision of each pipeline for detecting these standards.

Q5: For microbiome analysis, how does the choice of 16S rRNA reference database (Greengenes, SILVA, RDP) and clustering threshold affect alpha and beta diversity metrics? A: Database taxonomy and clustering thresholds (97% vs. 99% identity) directly define Operational Taxonomic Unit (OTU) or Amplicon Sequence Variant (ASV) calls.

- Actionable Guide: Re-run your analysis using two major databases (e.g., SILVA and RDP) at the same clustering threshold. Report concordance. For a robust conclusion, the primary finding (e.g., "Group A has higher Shannon diversity than Group B") should hold across these parameter choices, even if exact p-values vary.

Table 1: Variability in Differential Gene Detection from Simulated RNA-seq Data (n=6/group)

| Analysis Step | Tool/Parameter Option A | Tool/Parameter Option B | % Overlap in Significant Genes (FDR<0.05) | Key Parameter Responsible for Divergence |

|---|---|---|---|---|

| Alignment & Quantification | STAR (--outFilterMismatchNmax 10) | HISAT2 (default) | 72% | Spliced alignment sensitivity |

| Differential Expression | DESeq2 (LFC shrinkage: apeglm) | edgeR (robust=TRUE) | 89% | Dispersion estimation method |

| P-value Adjustment | Benjamini-Hochberg | Independent Hypothesis Weighting (IHW) | 81% | Procedure for leveraging covariate (mean count) |

Table 2: Effect of Clustering Threshold on 16S Microbiome Metrics

| Metric | 97% Identity Clustering | 99% Identity Clustering | Notes |

|---|---|---|---|

| Average Number of OTUs/Sample | 245 | 310 | Higher threshold splits populations. |

| Median Shannon Diversity Index | 3.8 | 4.1 | Artificially inflates with more units. |

| PERMANOVA R² (Group Effect) | 0.15 (p=0.001) | 0.11 (p=0.012) | Effect size and significance can diminish. |
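The note that Shannon diversity inflates with more units follows directly from the formula H = -Σ pᵢ ln pᵢ. The sketch below uses hypothetical read counts in which the only change is that one OTU at the 97% threshold is split into two at 99%.

```python
import math


def shannon_index(counts):
    """Shannon diversity H = -sum(p * ln p) over non-zero taxon counts."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


# Hypothetical read counts for one sample.
otus_97 = [50, 30, 20]      # three OTUs at 97% identity
otus_99 = [25, 25, 30, 20]  # the first OTU splits in two at 99% identity

h_97 = shannon_index(otus_97)
h_99 = shannon_index(otus_99)

print(f"H at 97%: {h_97:.4f}")
print(f"H at 99%: {h_99:.4f}")
```

The community is unchanged, yet the index rises, so diversity values are only comparable between studies that used the same threshold and database.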

Experimental Protocol: Benchmarking Bioinformatics Pipelines

Title: Protocol for Systematic Pipeline Impact Assessment on Multi-Omic Data

Objective: To empirically quantify how algorithmic choices at each step of a bioinformatics workflow influence final biological conclusions.

Materials:

- A well-characterized public dataset with validated ground truth (e.g., SEQC/MAQC consortium data for transcriptomics) or an in-house positive control sample set.

- Access to high-performance computing cluster.

Methodology:

- Design a Parameter Grid: For each analysis step (QC, alignment, quantification, statistical testing), define 2-3 commonly used software tools or critical parameters.

- Execute Workflow Combinations: Run the analysis using all possible combinations of choices (a full factorial design). Containerize each pipeline using Docker/Singularity for reproducibility.

- Define Outcome Metrics: Calculate, for each pipeline:

- Recall/Sensitivity: % of known true positives detected.

- Precision: % of reported positives that are true positives.

- Effect Size Correlation: Correlation between measured fold-change and known fold-change.

- Rank Stability: Jaccard index of top-N candidate lists between pipelines.

- Visualize & Report: Create UpSet plots for gene list overlaps and heatmaps of outcome metrics across the parameter grid. The pipeline yielding the best balance of metrics for your specific data type should be adopted and pre-registered for future studies.
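The parameter grid and outcome metrics above can be prototyped before any heavy computation is launched. The sketch below is a minimal illustration: the tool names are taken from Table 1, while the gene identifiers and the `truth`, `calls_x`, and `calls_y` sets are hypothetical placeholders for real pipeline output.

```python
from itertools import product

# Two options per step; a full factorial design runs every combination.
grid = {
    "aligner": ["STAR", "HISAT2"],
    "de_tool": ["DESeq2", "edgeR"],
    "p_adjust": ["BH", "IHW"],
}
pipelines = [dict(zip(grid, combo)) for combo in product(*grid.values())]


def recall(called, truth):
    """Fraction of known true positives that were detected."""
    return len(called & truth) / len(truth) if truth else 0.0


def precision(called, truth):
    """Fraction of reported positives that are true positives."""
    return len(called & truth) / len(called) if called else 0.0


def jaccard(a, b):
    """Rank stability between two candidate lists, as set overlap."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Hypothetical ground truth and significant-gene calls from two pipelines.
truth = {"g1", "g2", "g3", "g4"}
calls_x = {"g1", "g2", "g3", "g9"}
calls_y = {"g1", "g2", "g5"}

print(len(pipelines), "pipeline combinations")
print("recall:", recall(calls_x, truth), "precision:", precision(calls_x, truth))
print("jaccard:", jaccard(calls_x, calls_y))
```

Note that the grid grows multiplicatively: three steps with two options each already give eight pipelines, so the number of options per step should be kept small.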

Visualizations

Title: Key Analysis Steps Where Choices Skew Results

Title: Stages of Multi-Omics Analysis Prone to Bias

The Scientist's Toolkit: Research Reagent Solutions for Reproducibility

Table 3: Essential Materials & Tools for Reproducible Bioinformatics

| Item | Function in Ensuring Reproducibility | Example / Specification |

|---|---|---|

| Spike-in Control RNAs | External RNA controls of known concentration added to samples pre-library prep. Allows benchmarking of alignment, quantification, and differential expression tools for accuracy and precision. | ERCC (External RNA Controls Consortium) Spike-In Mixes, SIRVs (Spike-in RNA Variants). |

| Synthetic Metabolite Standards | Known compounds spiked into biological samples pre-processing. Used to validate and compare peak detection, alignment, and quantification algorithms in metabolomics pipelines. | IROA (Isotope Ratio Outlier Analysis) Mass Spec Standards, MSQC (Metabolomics Standards QC) mix. |

| Mock Microbial Community DNA | Genomic DNA from a defined mix of known bacterial strains. Provides ground truth for evaluating 16S/ITS amplicon and shotgun metagenomics bioinformatics pipelines (taxonomic assignment, abundance estimation). | ZymoBIOMICS Microbial Community Standards. |

| Version-Controlled Code Repository | Documents every step of the analysis, allowing exact recreation of the computational environment and workflow. | GitHub, GitLab, or Bitbucket with detailed README. Use of renv, conda, or Docker containers. |

| Workflow Management System | Automates execution of multi-step pipelines, ensuring consistency and capturing all parameters and software versions in a report. | Nextflow, Snakemake, or WDL (Workflow Description Language). |

| Electronic Lab Notebook (ELN) | Provides the link between wet-lab sample provenance, experimental metadata, and the inception of computational analysis. Critical for audit trails. | Benchling, LabArchives, or open-source options. |

Technical Support Center: Troubleshooting Multi-Omics Reproducibility

FAQs & Troubleshooting Guides

Q1: My transcriptomics and proteomics data from the same samples show poor correlation. What are the primary technical causes? A: This is a common integration failure. Key issues include:

- Sample Processing Lag: Delays between RNA and protein extraction can degrade labile transcripts.

- Platform-Specific Bias: Poly-A selection vs. ribosomal RNA depletion in RNA-seq can yield different profiles.

- Protein vs. mRNA Half-Life Discrepancy: The measured mRNA level may not reflect the current protein abundance due to post-transcriptional regulation.

- Troubleshooting Protocol: Implement a synchronized quenching and lysis protocol. Use a single, homogenized aliquot split for parallel nucleic acid and protein isolation. Validate with spike-in controls (e.g., SIRVs for RNA, UPS2 for proteomics) to assess platform technical variance separately from biological variance.

Q2: How can I validate batch effect correction in my multi-omics dataset before integration? A: Follow this diagnostic workflow:

- Pre-Correction PCA: Perform Principal Component Analysis (PCA) on each omics layer, colored by batch ID.

- Apply Correction: Use a method like ComBat, limma's removeBatchEffect, or Harmony per platform.

- Post-Correction PCA: Repeat PCA to visualize if batch clusters are removed.

- Positive Control: Include a replicated reference sample (e.g., a pooled sample) across all batches. These replicates should cluster tightly post-correction.

- Negative Control: Ensure batch correction does not artificially remove strong biological signal (e.g., case vs. control separation). See Diagnostic Diagram below.
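Visual inspection of PCA plots can be supplemented with a number. One option, sketched here as an assumption rather than a prescribed method, is the fraction of variance in a principal component's scores that is explained by batch (eta-squared from a one-way layout). The PC1 scores below are hypothetical; in practice they would come from the PCA run before and after correction.

```python
from collections import defaultdict
from statistics import mean


def variance_explained_by_batch(scores, batches):
    """Eta-squared: between-batch sum of squares over total sum of squares."""
    grand = mean(scores)
    ss_total = sum((x - grand) ** 2 for x in scores)
    if ss_total == 0:
        return 0.0
    groups = defaultdict(list)
    for x, b in zip(scores, batches):
        groups[b].append(x)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups.values())
    return ss_between / ss_total


# Hypothetical PC1 scores for six samples from two batches.
batches = ["b1", "b1", "b1", "b2", "b2", "b2"]
pc1_before = [-2.1, -1.9, -2.0, 2.0, 1.9, 2.1]
pc1_after = [-0.5, 0.4, 0.1, 0.3, -0.4, 0.1]

print("before:", round(variance_explained_by_batch(pc1_before, batches), 3))
print("after:", round(variance_explained_by_batch(pc1_after, batches), 3))
```

The same function applied to the biological grouping (e.g., case vs. control) gives a matching check for the negative control: that value should not collapse after correction.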

Q3: What are critical steps for reproducible microbiome metagenomics linked to host metabolomics? A: Failures often stem from contamination and inconsistent processing.

- Issue: Host metabolite degradation and microbial overgrowth post-sampling.

- Solution: Use a standardized stabilization buffer (e.g., DNA/RNA Shield) immediately upon collection. For stool samples, employ a dual-filter system to separate microbial cells (for DNA) from supernatants (for metabolomics) simultaneously.

- Critical Control: Include blank extraction controls and process them identically through DNA sequencing and LC-MS. All contaminant species/features identified in blanks must be subtracted from experimental samples.

Q4: My chromatin accessibility (ATAC-seq) and RNA-seq data from single-cell multi-omics are conflicting. How to troubleshoot? A: This often points to cell-specific data quality issues or incorrect matching.

- Check Cell Filtering: Apply consistent, stringent doublet detection (e.g., using DoubletFinder or scDblFinder) before integration. Doublets create false co-accessibility/expression.

- Verify Peak-Gene Linkage: Do not assume distal peaks link to the nearest gene. Use a computational tool like Cicero or Signac to calculate co-accessibility correlations to define likely gene targets.

- Confirm Data Completeness: For each cell barcode, check the count depth for both assays. Filter out cells where one assay has near-zero counts. See Workflow Diagram below.
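The data-completeness check amounts to keeping only barcodes that have adequate depth in both modalities. A minimal sketch follows; the barcodes, counts, and the `min_rna` and `min_atac` cutoffs are hypothetical and should be set from the depth distributions of the actual dataset.

```python
def cells_passing_both(rna_counts, atac_counts, min_rna=500, min_atac=1000):
    """Return barcodes present in both assays with sufficient depth in each.

    The default thresholds are illustrative placeholders, not recommendations.
    """
    shared = rna_counts.keys() & atac_counts.keys()
    return sorted(
        bc for bc in shared
        if rna_counts[bc] >= min_rna and atac_counts[bc] >= min_atac
    )


# Hypothetical per-barcode total counts (RNA UMIs and ATAC fragments).
rna = {"AAAC": 2500, "AAAG": 3, "AACT": 1800}
atac = {"AAAC": 6000, "AAAG": 5200, "AACT": 12, "AAGG": 4000}

kept = cells_passing_both(rna, atac)
print(kept)
```

Barcodes seen in only one assay are dropped as well, since they cannot contribute to a paired analysis.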

Table 1: Technical Variance Introduced at Key Pre-Analytical Steps

| Step | Omics Type | Typical Coefficient of Variation (CV%) Impact | Mitigation Strategy |

|---|---|---|---|

| Sample Quenching/Lysis | Metabolomics | 25-40% | Snap-freeze in liquid N₂ within 30s. Use cold methanol-based lysis. |

| Sample Quenching/Lysis | Phosphoproteomics | >50% | Add phosphatase inhibitors instantly. Use standardized lysis buffer. |

| Nucleic Acid Extraction | Transcriptomics | 10-20% | Use automated, bead-based platforms. Add external RNA controls. |

| Nucleic Acid Extraction | Metagenomics | 30-60% (bias) | Use mock community controls. Standardize cell lysis method (bead-beating). |

| Data Acquisition Batch | Proteomics (DIA) | 15-25% | Use staggered reference pools. Normalize by interpolating between reference-pool runs. |

| Data Acquisition Batch | Lipidomics | 20-35% | Randomize sample order. Use pooled quality control samples. |

Table 2: Reported Discrepancy Rates in High-Profile Multi-Omics Studies

| Study Focus (Example) | Reported Gene/Pathway Overlap | Post-Hoc Review Identified Cause | Reference Correction Step Implemented |

|---|---|---|---|

| Cancer Biomarker Discovery (TCGA proteogenomics) | mRNA-protein correlation (r) varied 0.1-0.6 across cancer types. | Use of archival FFPE blocks for protein vs. fresh-frozen for RNA. | Mandated matched sample type for all future collections. |

| Microbial Community Function | <30% of enriched KEGG pathways shared between metatranscriptome & metabolome. | Unsynchronized sampling; rapid metabolite turnover. | Implemented immediate in situ metabolite stabilization. |

| Single-Cell Multiome (ATAC + GEX) | Only ~60% of cells passed QC for both modalities in early protocols. | Nuclear permeabilization efficiency varied, degrading RNA. | Optimized commercial kit lysis buffer incubation time. |

Detailed Experimental Protocols

Protocol 1: Synchronized Quenching & Splitting for Transcriptomics & Proteomics

Objective: Obtain paired, biologically representative RNA and protein from the same cell population.

- Rapid Quenching: Aspirate culture media swiftly. Immediately add 5mL of ice-cold PBS. Place culture dish directly on a metal plate chilled to -20°C.

- Simultaneous Lysis: Aspirate PBS. Add 1mL of TRIzol LS reagent to the plate. Lyse cells directly by scraping.

- Phase Separation & Splitting:

- Transfer homogenate to a Phase Lock Gel Heavy tube.

- Add 200µl chloroform, shake vigorously, centrifuge (12,000g, 15min, 4°C).

- Aqueous (RNA) Phase: Carefully transfer the upper aqueous phase to a new tube for RNA purification.

- Organic (Protein) Phase: Remove and retain the interphase and lower organic phase. Precipitate proteins with isopropanol, wash with guanidine HCl in ethanol.

- Parallel Processing: Purify RNA using a column-based kit with DNase I treatment. Wash and solubilize protein pellet in 1% SDS buffer for downstream trypsin digestion and LC-MS/MS.

Protocol 2: Blank-Subtraction for Microbiome-Metabolome Studies

Objective: Identify and remove contaminating features from sequencing and LC-MS data.

- Prepare Blanks: Include at least 3 "blank" samples per extraction batch. These contain only the stabilization buffer or an unused collection swab, processed identically to biological samples.

- DNA Sequencing:

- Sequence blanks alongside samples.

- Using decontam (R package), identify contaminant ASVs (Amplicon Sequence Variants): those with a higher prevalence in blanks than in true samples (prevalence method), or those whose frequency is inversely correlated with sample DNA concentration (frequency method).

- Remove contaminant ASVs from the feature table.

- Metabolomics LC-MS:

- Process blank runs through the same feature detection pipeline (e.g., XCMS, MS-DIAL).

- For each feature, calculate the median peak area in blanks vs. biological samples.

- Discard any feature where the median blank intensity is >20% of the median sample intensity or >10x the average in solvent blanks.
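The blank-to-sample ratio rule for LC-MS features can be expressed as a simple filter. The sketch below implements only the 20% median-ratio criterion from the step above; the peak areas and the `keep_feature` helper are hypothetical, and the solvent-blank criterion is left out because it depends on how solvent blanks are recorded in a given study.

```python
from statistics import median


def keep_feature(sample_areas, blank_areas, max_blank_ratio=0.20):
    """Keep a feature only if median blank area is <= 20% of median sample area."""
    sample_med = median(sample_areas)
    if sample_med <= 0:
        # No signal in biological samples: nothing worth keeping.
        return False
    return median(blank_areas) / sample_med <= max_blank_ratio


# Hypothetical peak areas for two features.
features = {
    "feat_001": {"samples": [1000, 1200, 900], "blanks": [50, 60, 40]},
    "feat_002": {"samples": [1000, 1200, 900], "blanks": [300, 250, 400]},
}

retained = [name for name, d in features.items()
            if keep_feature(d["samples"], d["blanks"])]
print(retained)
```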

Visualizations

Diagram 1: Batch Effect Diagnosis Workflow

Diagram 2: Single-Cell Multiome QC & Filtering Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-Omics Reproducibility | Example Product/Brand |

|---|---|---|

| Universal DNA/RNA/Protein Stabilizer | Immediately halts degradation and biomolecular activity upon sample contact, preserving the in vivo state across analytes. | DNA/RNA Shield (Zymo), RNAlater Stabilizer |

| Process Control Spike-Ins | Adds known, non-biological molecules to track technical variance from extraction through sequencing/MS. Distinguishes technical noise from biological signal. | SIRVs (Spike-In RNA Variants), UPS2 (Universal Proteomics Standard), ISTD Mixes (Metabolomics) |

| Phase Lock Gel Tubes | Enables clean, reproducible, and complete separation of aqueous and organic phases during TRIzol-based parallel RNA/protein extraction, maximizing yield for both. | Phase Lock Gel Heavy (Quantabio) |

| Mock Microbial Community | Defined mix of known microbial genomes. Serves as a positive control for metagenomic/metatranscriptomic workflow efficiency and bias assessment. | ZymoBIOMICS Microbial Community Standard |

| Multimodal Lysis Buffer | A single buffer formulation optimized for the simultaneous release and stabilization of DNA, RNA, protein, and metabolites from limited samples (e.g., biopsies). | AllPrep DNA/RNA/Protein Mini Kit (Qiagen) |

| Indexed Reference Pool | A pooled sample of all experimental samples, aliquoted and run at intervals throughout the MS acquisition sequence. Enables robust signal correction for instrument drift. | Prepared in-house from study samples. |

Building a Reproducible Pipeline: Best Practices for Multi-Omics Study Design and Execution

Technical Support Center: Troubleshooting & FAQs

FAQ 1: Our metabolomics data shows high intra-group variability. What are the most likely pre-analytical culprits?

- Answer: High variability often originates from inconsistent sample collection and quenching. Key culprits include:

- Variable Time-to-Quench: Delays in halting metabolism after sample extraction (e.g., from blood or cell culture) cause significant metabolite turnover. Standardize the interval between collection and flash-freezing or immersion in cold quenching solvent.

- Inconsistent Storage Temperature: Fluctuations in freezer temperature, even during brief access, degrade labile metabolites. Use monitored, dedicated -80°C freezers and limit freeze-thaw cycles.

- Hemolysis in Blood Samples: Hemolyzed samples release intracellular metabolites, altering the plasma/serum profile. Follow strict phlebotomy and centrifugation protocols and visually inspect samples.

FAQ 2: We are preparing an MIAME-compliant submission for a microarray dataset to a journal. What metadata is absolutely required beyond the raw data files?

- Answer: Per the latest MIAME guidelines, you must provide:

- Raw Data Files (e.g., .CEL files).

- Final Processed Data (normalized expression matrix).

- Annotation File linking each sample to its experimental factors and protocols.

- Essential Experimental Metadata: This includes the complete sample annotation (genotype, disease state, treatment), experimental design (sample relationships, replicates), hybridization protocol, scanning parameters, and data processing steps (normalization, transformation methods). Using an ISA-Tab structure to organize this is now the industry-recommended practice.

FAQ 3: Our protein yields from cell lysates are inconsistent, affecting downstream MIAPE-compliant proteomics. How can we improve homogenization and storage?

- Answer: Inconsistent lysis and post-lysis handling are common issues. Follow this detailed protocol:

- Homogenization: Keep cells on ice. Use a consistent lysis buffer volume-to-cell count ratio. For adherent cells, scrape on ice. Use mechanical homogenization (sonication or bead-beating) with controlled, timed bursts. Centrifuge to remove debris immediately after lysis.

- Aliquoting: Aliquot the clarified lysate into single-use volumes to avoid repeated freeze-thaws.

- Storage: Flash-freeze aliquots in liquid nitrogen before transferring to -80°C. Use storage tubes resistant to protein adsorption (e.g., low-bind polypropylene).

- Additives: Include appropriate protease and phosphatase inhibitor cocktails, specific to your sample type, in the lysis buffer. Confirm buffer compatibility with your downstream assay (e.g., MS-compatible detergents).

FAQ 4: What is the practical difference between ISA-Tab and MIAME/MIAPE formats for metadata, and which should we use?

- Answer: MIAME and MIAPE are minimum information standards—they define what metadata to report. ISA-Tab is a structured format and framework to organize and report that metadata in a machine-readable way. You should use ISA-Tab to structure the metadata required by MIAME (for transcriptomics) or MIAPE (for proteomics). ISA-Tab is assay-agnostic and facilitates linking sample metadata across multi-omics studies.

Table 1: Impact of Pre-Analytical Variables on Multi-Omics Data Quality

| Pre-Analytical Variable | Primary Omics Affected | Typical Consequence | Recommended Mitigation |

|---|---|---|---|

| Time-to-Freezing/Quenching | Metabolomics, Lipidomics | Altered metabolite profiles; enzyme activity. | Standardize to <2 mins; use automated plungers. |

| Number of Freeze-Thaw Cycles | Proteomics, Metabolomics | Protein aggregation; metabolite degradation. | Single-use aliquots; never thaw >3x. |

| Collection Tube Anticoagulant | Transcriptomics, Proteomics | Gene expression artifacts; protein modifications. | Use study-wide consistent type (e.g., EDTA, Citrate). |

| Hemolysis (>0.5%, visual assessment) | Metabolomics, Proteomics | Release of erythrocyte biomolecules. | Train phlebotomists; centrifuge promptly; use visual hemolysis index. |

| RNase Contamination | Transcriptomics | RNA degradation (low RIN). | Use RNase-free reagents/consumables; dedicated workspace. |

Detailed Experimental Protocols

Protocol 1: Standardized Plasma Collection for Multi-Omics (Metabolomics & Proteomics)

- Objective: To collect human plasma with minimal pre-analytical variation.

- Materials: Tourniquet, safety needle, blood collection tubes (EDTA, pre-chilled), timer, centrifuge (pre-cooled to 4°C), low-bind cryovials, labels.

- Method:

- Perform venipuncture using a safety needle and draw blood into pre-chilled K2EDTA tubes.

- Invert tube gently 8-10 times for immediate mixing.

- Start timer. Place tube in slurry of ice and water.

- Within 30 minutes of draw, centrifuge at 2000 x g for 10 minutes at 4°C.

- Visually inspect for hemolysis. Aliquot 200µL of the top plasma layer (avoiding buffy coat) into pre-labeled cryovials.

- Flash-freeze aliquots in liquid nitrogen for ≥5 minutes, then store at -80°C in a dedicated, monitored freezer.

- Critical Steps: Timing (steps 3-4), temperature control (steps 1, 3, 4, 6), and aliquot integrity (step 5).

Protocol 2: MIAME-Compliant RNA Extraction & Quality Control for Microarray

- Objective: To extract high-quality RNA and document key parameters for MIAME.

- Materials: TRIzol Reagent, Chloroform, Isopropanol, 75% Ethanol (DEPC-treated), RNase-free water, Bioanalyzer or TapeStation (for RIN).

- Method:

- Homogenize tissue/cells in TRIzol (1mL per 50-100mg tissue). Document homogenization method and time.

- Add 0.2mL chloroform per 1mL TRIzol. Shake vigorously for 15 sec. Incubate 2-3 mins at RT.

- Centrifuge at 12,000 x g for 15 min at 4°C.

- Transfer aqueous phase to new tube. Add 0.5mL isopropanol per 1mL TRIzol. Incubate 10 min at RT.

- Centrifuge at 12,000 x g for 10 min at 4°C. Remove supernatant.

- Wash pellet with 1mL 75% ethanol. Vortex. Centrifuge at 7,500 x g for 5 min at 4°C.

- Air-dry pellet 5-10 min. Dissolve in RNase-free water.

- Measure concentration (ng/µL) and purity (A260/A280, A260/A230) via spectrophotometry.

- Assess integrity via RIN (RNA Integrity Number) on Bioanalyzer. Document all steps, instrument models, and QC results for MIAME.

- Critical Steps: Speed and conditions of homogenization (step 1), clean transfer of the aqueous phase without disturbing the interphase (step 4), and accurate RIN documentation (step 9).

Visualizations

Diagram 1: Pre-Analytical Workflow for Biofluid Multi-Omics

Diagram 2: ISA-Tab Framework for Metadata Organization

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| Inhibitor Cocktails (Protease/Phosphatase) | Added to lysis buffers to prevent protein degradation and preserve post-translational modification states during sample preparation for proteomics. |

| RNase Inhibitors & RNase-Free Consumables | Critical for preserving RNA integrity from collection through extraction for transcriptomics (RNA-Seq, microarrays). |

| Pre-Chilled, Additive-Specific Blood Collection Tubes | Standardizes the anti-coagulant (EDTA, Citrate, Heparin) and initiation of cold temperature for metabolomics and proteomics of blood plasma/serum. |

| Low-Bind Microcentrifuge & Cryogenic Tubes | Minimizes adsorption of proteins, peptides, or metabolites to tube walls, preventing loss of low-abundance analytes. |

| Certified, MS-Grade Solvents & Water | Ensures minimal background chemical interference and ion suppression in mass spectrometry-based metabolomics and proteomics. |

| Internal Standard Mixes (Stable Isotope Labeled) | Added at the earliest possible step (e.g., during quenching) to correct for losses during sample preparation and analysis in targeted metabolomics/proteomics. |

| Validated, Pre-Cast Gel Electrophoresis Systems | Provides consistent protein separation for western blot or top-down proteomics, reducing gel-to-gel variability. |

| Commercial DNA/RNA/Protein Stabilization Tubes | Allows ambient temperature transport/storage for specific sample types by chemically inhibiting nucleases and proteases. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: My multi-omics batch effects are obscuring biological signals despite randomization. What went wrong?

- Answer: Randomization aims to distribute batch effects randomly across groups, but it cannot eliminate strong, systematic batch noise common in multi-omics (e.g., LC-MS run day, RNA-seq library prep). If your sample size is small, randomization may fail to balance these technical factors. Solution: Implement blocking. Treat each major technical batch (e.g., a processing day) as a block. Within each block, randomize your experimental conditions. Crucially, include a reference QC sample (a pooled aliquot of all samples or a commercial standard) in every block. This allows for direct measurement and correction of inter-block variation.

FAQ 2: How do I determine the correct frequency for running QC samples in my longitudinal study?

- Answer: The frequency depends on the estimated drift of your measurement system. A standard protocol is to use an interleaved QC design. For every N experimental samples, analyze one QC reference sample. Start with N=5-10. Use the data to calculate precision metrics like the coefficient of variation (CV) for the QC samples.

Table 1: QC Sampling Frequency Based on System Stability

| Measured QC CV (% over 24h) | System Status | Recommended QC Frequency (per experimental samples) |

|---|---|---|

| < 10% | Stable | 1 QC per 10 samples |

| 10% - 20% | Moderate Drift | 1 QC per 5 samples |

| > 20% | High Instability | 1 QC per sample; pause and recalibrate instrument |
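The CV calculation and the lookup in Table 1 can be automated. This is a minimal sketch in which the QC intensities are hypothetical and the function names are illustrative; the CV bands are taken directly from the table above.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (%) of repeated QC measurements."""
    return 100 * stdev(values) / mean(values)


def samples_per_qc(cv):
    """Map a QC CV (%) to the number of experimental samples per QC injection."""
    if cv < 10:
        return 10   # stable system
    if cv <= 20:
        return 5    # moderate drift
    return 1        # high instability: also pause and recalibrate


# Hypothetical intensities of one feature in the pooled QC over 24 h.
qc_values = [100, 102, 98, 101, 99]
cv = cv_percent(qc_values)
print(f"CV = {cv:.2f}% -> 1 QC per {samples_per_qc(cv)} samples")
```

In practice the CV would be summarized over many features (for example, the median CV across all detected features) rather than taken from a single one.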

FAQ 3: What is the specific protocol for using blocking and reference samples in a multi-site proteomics study?

- Answer: Follow this detailed methodology to ensure reproducibility across sites:

- Pre-study Pooled Reference Creation: Generate a large, homogeneous QC pool from a representative sample aliquot. Aliquot and freeze at -80°C.

- Blocking by Site and Batch: Define the primary block as "Site". Within each site, block further by "Processing Week".

- Randomization within Blocks: Randomize the order of all experimental samples and the assigned pooled reference QCs within each week's run list.

- Run Order: Use a balanced run order. Example for one block: [QC, Sample A, Sample B, QC, Sample C, Sample D, QC].

- Data Normalization: Use the signal from the interspersed QC samples to perform linear or LOESS regression-based normalization within and across sites.
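The run order in the example above can be generated programmatically so that it is documented and repeatable. The sketch below is one possible approach under stated assumptions: QC positions are fixed at regular intervals as in the example, only the experimental samples are shuffled, and the `build_run_order` name, the `qc_every` parameter, and the seed are hypothetical.

```python
import random


def build_run_order(samples, qc_every=2, seed=0):
    """Shuffle samples and bracket them with QC injections.

    A QC is placed first, after every `qc_every` samples, and last.
    A fixed seed makes the randomized order reproducible and auditable.
    """
    rng = random.Random(seed)
    shuffled = list(samples)
    rng.shuffle(shuffled)
    order = ["QC"]
    for i, sample in enumerate(shuffled, start=1):
        order.append(sample)
        if i % qc_every == 0 or i == len(shuffled):
            order.append("QC")
    return order


order = build_run_order(["Sample A", "Sample B", "Sample C", "Sample D"])
print(order)
```

Recording the seed alongside the run list lets another site regenerate the same order for an audit.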

FAQ 4: My randomized block design results are still inconsistent. How do I diagnose the issue?

- Answer: Perform an Analysis of Variance (ANOVA) or Principal Component Analysis (PCA) with technical factors as covariates.

- Step 1: Plot PCA scores colored by Batch. If batches cluster separately, batch effect is strong.

- Step 2: Plot PCA scores colored by Block. If blocks are distributed, blocking was effective.

- Step 3: Check QC sample values across runs. Use the following table to guide troubleshooting:

Table 2: Troubleshooting Guide Based on QC Sample Analysis

| Observation in QC Data | Likely Cause | Corrective Action |

|---|---|---|

| Steady trend (drift) over time | Instrument degradation | Perform system maintenance & recalibration. |

| Sudden shift in QC values between batches | Major reagent lot change | Model lot as a fixed effect; use bridge samples. |

| High variability within a single run | Sample preparation inconsistency | Audit and standardize sample prep SOPs. |

| QC values are stable, but experimental samples show high group variance | Insufficient biological replication | Increase sample size (n); re-check power calculation. |

Experimental Protocol: Implementing a Randomized Block Design with Interspersed QC for LC-MS Metabolomics

Title: Protocol for Reproducible Multi-Batch Metabolomics Profiling

Objective: To minimize technical variance and enable robust batch correction in a case-control metabolomics study spanning multiple instrument runs.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Block Definition: Assign each LC-MS/MS continuous run day (max 24 samples) as one block.

- Sample Allocation: Ensure each block contains an equal number of case and control biological replicates. Randomly assign the sample position within the block using a random number generator.

- QC Integration: Thaw one aliquot of the pre-prepared pooled QC sample for each block. Inject the QC sample at the beginning of the run for column conditioning, then after every 4-6 experimental samples in a randomized position within that sequence.

- Blank Runs: Run a blank solvent sample (e.g., 80:20 Water:Acetonitrile) at the end of each block to monitor carryover.

- Data Acquisition: Use data-dependent acquisition (DDA) or targeted SRM methods as required.

- Pre-processing: Use the QC samples to perform quality control-based filtering: remove metabolic features with a CV > 30% in the pooled QC samples. Apply batch correction algorithms (e.g., ComBat, SVA) using the block ID and QC drift profile.
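The Sample Allocation step of this procedure (equal cases and controls per block, random position within the block) can be sketched as follows. The sample identifiers, the `allocate_blocks` helper, and its parameters are hypothetical; the sketch assumes equal numbers of cases and controls that divide evenly into blocks and raises an error otherwise.

```python
import random


def allocate_blocks(cases, controls, per_group_per_block, seed=0):
    """Split samples into blocks with equal cases and controls, then shuffle
    the positions within each block."""
    if len(cases) != len(controls) or len(cases) % per_group_per_block:
        raise ValueError("need equal group sizes divisible by the block size")
    rng = random.Random(seed)
    cases, controls = list(cases), list(controls)
    rng.shuffle(cases)
    rng.shuffle(controls)
    blocks = []
    for start in range(0, len(cases), per_group_per_block):
        stop = start + per_group_per_block
        block = cases[start:stop] + controls[start:stop]
        rng.shuffle(block)  # randomize run position within the block
        blocks.append(block)
    return blocks


cases = ["case1", "case2", "case3", "case4"]
controls = ["ctrl1", "ctrl2", "ctrl3", "ctrl4"]
blocks = allocate_blocks(cases, controls, per_group_per_block=2)
for i, block in enumerate(blocks, start=1):
    print(f"block {i}: {block}")
```

Unbalanced designs need a different allocation scheme; failing loudly is preferable to silently producing a block with only cases or only controls.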

Visualizations

Title: Randomization vs. Randomized Block Design Workflow

Title: QC-Based Batch Correction Data Flow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents for Reproducible Multi-Omics Experimental Design

| Item | Function & Rationale |

|---|---|

| Pooled Reference QC Material | A homogeneous sample injected repeatedly to monitor and correct for technical variation across the study. |

| Process Blanks | Solvent or buffer taken through the entire prep protocol. Identifies background contamination. |

| Internal Standards (IS) | Stable isotope-labeled compounds spiked into every sample pre-extraction. Corrects for extraction efficiency and ion suppression in MS. |

| Quality Control Standards | Commercial certified reference materials (e.g., NIST SRM) to assess absolute accuracy and inter-lab comparability. |

| Bridge Samples | A subset of biological samples split and analyzed across all batches/studies to enable direct alignment. |

| Standard Operating Procedure (SOP) Document | Detailed, step-by-step protocol for all processes. The single most critical tool for reproducibility. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQs on Platform Selection & Experimental Design

Q1: For a multi-omics reproducibility study, how do I decide between RNA-Seq and a microarray for transcriptomics? A: The choice hinges on your study's specific goals, budget, and sample characteristics. Use the decision table below.

| Criterion | NGS (e.g., RNA-Seq) | Microarray | Recommendation for Reproducibility Focus |

|---|---|---|---|

| Discovery Power | High (detects novel transcripts/isoforms) | Limited to predefined probes | Choose NGS for exploratory, hypothesis-generating work. |

| Dynamic Range | Very High (5-6 orders of magnitude) | Moderate (3-4 orders) | Choose NGS for samples with extreme expression differences. |

| Input RNA Quality | Sensitive to degradation (RIN >7 ideal) | More tolerant of partial degradation | Choose microarrays for archived, partially degraded samples (e.g., FFPE). |

| Quantitative Precision | High at higher expression levels | Excellent at mid-to-low expression levels | Microarrays can offer superior reproducibility for low-abundance transcripts. |

| Cost per Sample | Higher | Lower | Choose microarrays for large-scale, targeted studies with hundreds of samples. |

| Cross-Platform Concordance | Moderate; varies by platform & pipeline | High among major vendors | For meta-analysis, standardized microarray platforms may show better inter-lab reproducibility. |

| Key Reproducibility Step | Critical: Standardized bioinformatics pipeline (aligner, counter). | Critical: Consistent normalization method (RMA, PLIER). | Document all parameters and use publicly available pipelines (e.g., nf-core/rnaseq). |

Q2: My mass spectrometry proteomics results are highly variable between technical replicates. What are the main culprits? A: Variability in LC-MS/MS often stems from sample preparation and instrument performance. Follow this troubleshooting guide.

| Symptom | Possible Cause | Diagnostic Check | Corrective Action |

|---|---|---|---|

| High CVs in peptide abundances | Inconsistent digestion | Run SDS-PAGE to check digestion efficiency. | Use standardized protein assay, precise pH strips, fixed digestion time/temp, and a validated protease (e.g., sequencing-grade trypsin). |

| Retention time drift | LC column degradation or mobile phase issues | Monitor retention time of spiked-in standards over runs. | Use pre-column filters, fresh mobile phases, scheduled column washing, and a quality control (QC) sample run periodically. |

| Inconsistent protein IDs/quant | Variable electrospray ionization | Inspect total ion chromatogram (TIC) baseline and noise. | Clean ion source, calibrate instrument, use an internal standard spike-in (e.g., iRT peptides), and normalize to total protein or median intensity. |

| Missing values in label-free quant | Stochastic data-dependent acquisition (DDA) | Check if low-abundance peptides are randomly selected. | Switch to data-independent acquisition (DIA/SWATH) or use higher sample loading and fractionation. |

Q3: How should I cross-validate findings between a discovery (NGS) and a validation (array) platform? A: A systematic, statistical approach is required, not just overlapping top hits.

- Protocol for Cross-Platform Validation:

- Design: Perform discovery analysis on a subset of samples using NGS (e.g., Whole Exome Seq for DNA, RNA-Seq for transcripts).

- Target Selection: From NGS data, identify a prioritized list of targets (e.g., top 500 differentially expressed genes, somatic mutations in >10% of samples).

- Validation Assay: Design or select a targeted array (e.g., TaqMan array, NanoString nCounter, genotyping array) that includes your targets plus housekeeping/control genes.

- Experimental Execution: Run the validation assay on all samples (including those used for discovery and an independent cohort if available).

- Statistical Concordance Analysis:

- For continuous data (e.g., gene expression): Calculate correlation coefficients (Pearson/Spearman) for measurements of the same gene across overlapping samples. Use Bland-Altman plots to assess agreement.

- For categorical data (e.g., mutation calls): Calculate Cohen's Kappa statistic to measure agreement beyond chance.

- Key: Assess if the biological conclusion (e.g., pathway enrichment, patient stratification) is consistent across platforms.
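Two of the agreement statistics named above, Cohen's kappa and Bland-Altman limits of agreement, are simple enough to compute directly. The sketch below uses only the standard library; the mutation calls and expression values are hypothetical, and the 1.96 multiplier assumes approximately normally distributed differences.

```python
from statistics import mean, stdev


def cohens_kappa(calls_a, calls_b):
    """Agreement between two sets of categorical calls, corrected for chance."""
    n = len(calls_a)
    labels = set(calls_a) | set(calls_b)
    observed = sum(a == b for a, b in zip(calls_a, calls_b)) / n
    expected = sum((calls_a.count(l) / n) * (calls_b.count(l) / n) for l in labels)
    if expected == 1:
        return 1.0
    return (observed - expected) / (1 - expected)


def bland_altman(x, y):
    """Return (bias, lower limit, upper limit) of agreement between platforms."""
    diffs = [a - b for a, b in zip(x, y)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)
    return bias, bias - spread, bias + spread


# Hypothetical mutation calls (1 = mutated) for ten samples on two platforms.
ngs_calls = [1, 1, 0, 0, 1, 0, 1, 0, 1, 1]
array_calls = [1, 1, 0, 0, 1, 0, 0, 0, 1, 1]
kappa = cohens_kappa(ngs_calls, array_calls)

# Hypothetical log2 expression of one gene in four samples on two platforms.
bias, lower, upper = bland_altman([5.0, 6.0, 7.0, 8.0], [4.5, 6.5, 6.5, 8.5])
print(f"kappa = {kappa:.2f}; bias = {bias:.2f}, limits = ({lower:.2f}, {upper:.2f})")
```

A high correlation alone does not imply agreement, since a constant offset between platforms leaves the correlation unchanged; the Bland-Altman bias is what exposes such an offset.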

Key Experimental Protocols

Protocol 1: Standardized Pre-Analysis Sample QC for Multi-Omics

- Purpose: Ensure sample integrity before committing to costly omics assays.

- Materials: Bioanalyzer/Tapestation, Qubit/Quantus fluorometer, spectrophotometer (Nanodrop).

- Steps:

- Nucleic Acids (for NGS/Arrays):

- Measure concentration using a fluorescence-based assay (Qubit) for accuracy.

- Assess integrity via electrophoretic trace (RIN for RNA, DIN for DNA). Acceptance: RIN ≥ 8 for RNA-Seq, RIN ≥ 7 for arrays; DIN ≥ 7 for WGS.

- Do not rely on Nanodrop A260/280 alone.

- Proteins (for Mass Spec):

- Quantify via colorimetric assay (e.g., BCA assay).

- Check integrity and purity by SDS-PAGE (Coomassie stain).

- Confirm absence of high-abundance contaminants (e.g., albumin in plasma preps).

- Documentation: Record all QC metrics in a sample manifest for downstream covariate analysis.


Protocol 2: Implementing a Cross-Platform QC Sample

- Purpose: Monitor technical variation across all runs and platforms.

- Methodology:

- Create or Purchase a QC Pool: Generate a homogeneous pool of material relevant to your study (e.g., cell line lysate, pooled patient serum, synthetic oligonucleotide mix).

- Aliquot and Store: Create single-use aliquots at -80°C to avoid freeze-thaw cycles.

- Integration into Runs: Include the QC pool as an anonymous sample in every:

- NGS sequencing batch.

- Microarray hybridization batch.

- Mass spectrometry injection batch.

- Data Monitoring: Track QC sample metrics (e.g., median intensity, number of detected features) on control charts, and check where the QC samples fall in PCA plots. Investigate any batch in which the QC sample is an outlier.
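
One simple way to monitor a QC metric is a Shewhart-style control chart. The sketch below assumes limits of mean ± 3 SD computed from a set of baseline batches; the function names, batch labels, and feature counts are hypothetical.

```python
import statistics

def control_limits(baseline_values, n_sd=3.0):
    """Lower and upper control limits as mean +/- n_sd sample standard deviations."""
    mean = statistics.mean(baseline_values)
    sd = statistics.stdev(baseline_values)
    return mean - n_sd * sd, mean + n_sd * sd

def flag_batches(metric_by_batch, baseline_batches, n_sd=3.0):
    """Return the batches whose QC metric falls outside the baseline control limits."""
    baseline = [metric_by_batch[b] for b in baseline_batches]
    low, high = control_limits(baseline, n_sd)
    return [b for b, value in metric_by_batch.items() if not low <= value <= high]

# Number of features detected in the QC pool per batch (invented values).
detected_features = {
    "B01": 5120, "B02": 5080, "B03": 5150,
    "B04": 5100, "B05": 5110, "B06": 4300,
}

flagged = flag_batches(detected_features, ["B01", "B02", "B03", "B04", "B05"])
print("Batches to investigate:", flagged)
```

The baseline should come from runs known to be in control; recomputing limits from every new batch would let gradual drift go unnoticed.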

Diagrams

Title: Multi-Omics Platform Selection & Validation Workflow

Title: Mass Spectrometry Troubleshooting Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-Omics Reproducibility |
|---|---|
| Universal RNA/DNA Spike-in Controls (e.g., ERCC RNA, SIRV) | Added to samples pre-extraction or pre-library prep to monitor technical variation, identify batch effects, and enable cross-platform normalization. |
| Mass Spectrometry Internal Standard Kits (iRT peptides, TMT/Isobaric Tags) | Provide a fixed reference for retention time alignment and enable multiplexed quantification, reducing run-to-run variability. |
| Pre-Defined, Lyophilized QC Pool Materials | Ready-to-use reference materials (e.g., Coriell cell lines, NIST SRM 1950 plasma) for inter-laboratory benchmarking and longitudinal performance tracking. |
| Automated Liquid Handlers (e.g., Echo, Hamilton) | Minimize human error and variability in pipetting during high-throughput sample preparation for arrays and plate-based NGS library prep. |
| Validated, Lot-Controlled Enzymes (e.g., TruSeq enzyme mix) | Ensure consistent library preparation efficiency and bias profile across multiple experimental batches and over time. |
| Commercial Stabilization Buffers (e.g., RNAlater, PAXgene) | Standardize sample collection and immediate stabilization, preserving molecular profiles from the moment of collection. |
| Bioinformatics Pipeline Containers (Docker/Singularity) | Package the entire analysis workflow (tools, versions, dependencies) to guarantee identical computational environments and result reproducibility. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During a multi-omics time-series experiment, my sample aliquots for RNA and protein extraction from the same biological source yield mismatched cell count data. What could be the cause and how can I resolve it?

A: This is a common issue in split-sample designs. The primary cause is often inconsistent homogenization or lysis efficiency prior to aliquot splitting.

- Solution: Implement a standardized pre-splitting homogenization protocol. Use a validated mechanical homogenizer (e.g., bead mill) for a fixed duration and ensure the initial sample suspension is thoroughly mixed before creating aliquots for RNA, protein, and other assays. Document the exact volume and mixing steps. Verify consistency using a portable cell counter on a pilot split aliquot before proceeding with the full experiment.

Q2: When aligning temporal metabolomics and proteomics data, I encounter significant batch effects that correlate with the day of sample processing rather than the biological time point. How can I mitigate this?

A: Temporal workflow synchronization is critical. Batch effects here confound the time-series analysis.

- Solution: Restructure your workflow using a randomized block design. Instead of processing each time point on the day it is collected, store stabilized samples and process all time points for a given modality together in a single batch with a randomized run order. If instrument time is limiting and samples must span several batches, distribute the time points evenly across batches and inject a reference sample (e.g., a pooled sample from all time points) at regular intervals across the run for later normalization (see Table 1 below for common normalization strategies).
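
A randomized run order with a pooled reference injected at regular intervals can be generated as follows. This is a sketch under assumptions: the sample naming scheme, the QC label, and the injection interval are all hypothetical, and a fixed seed is used only so the order can be documented and regenerated.

```python
import random

def build_run_order(sample_ids, qc_every=5, seed=42, qc_label="POOLED_QC"):
    """Shuffle samples and insert a pooled QC injection at the start,
    after every `qc_every` samples, and at the end of the run."""
    rng = random.Random(seed)          # fixed seed makes the order reproducible
    shuffled = list(sample_ids)
    rng.shuffle(shuffled)

    run = [qc_label]
    for position, sample in enumerate(shuffled, start=1):
        run.append(sample)
        if position % qc_every == 0:
            run.append(qc_label)
    if run[-1] != qc_label:
        run.append(qc_label)
    return run

# Four time points with three replicates each (hypothetical labels).
samples = [f"T{t}_rep{r}" for t in (0, 15, 30, 60) for r in (1, 2, 3)]
run_order = build_run_order(samples, qc_every=4)

for index, name in enumerate(run_order, start=1):
    print(index, name)
```

Shuffling across time points prevents processing order from tracking biological time, while the bracketing QC injections give the normalization step a reference at both ends of the run.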

Q3: My integrated analysis of chromatin accessibility (ATAC-seq) and transcriptomics (RNA-seq) data from sequentially split samples shows poor correlation. What experimental steps should I audit?

A: Focus on the nuclear integrity during the initial split. ATAC-seq requires intact nuclei, while RNA-seq can be sensitive to cytoplasmic contamination or immediate lysis.

- Protocol for Synchronized Nuclei & RNA Isolation:

- Cell Harvest: Use a gentle dissociation method. Count cells accurately.

- Nuclei Isolation (for ATAC-seq): Resuspend 2/3 of the cell pellet in pre-chilled, non-ionic lysis buffer (e.g., 10mM Tris-HCl, pH 7.4, 10mM NaCl, 3mM MgCl2, 0.1% IGEPAL CA-630). Incubate on ice for 5 minutes. Centrifuge to pellet nuclei. Keep ice-cold.

- RNA Stabilization (for RNA-seq): Immediately after removing the aliquot for nuclei, add the remaining 1/3 of the cell pellet directly to an RNA stabilization reagent (e.g., TRIzol) and homogenize. This ensures RNA integrity is locked in at the same moment nuclei are isolated.

- Documentation: Record the exact time delay between the split and the addition of each stabilization buffer.

Key Data & Normalization Strategies

Table 1: Common Batch Effect Correction Methods for Multi-Layer Temporal Data

| Method Name | Best For | Key Principle | Software/Tool |
|---|---|---|---|
| ComBat | Larger studies (>20 samples) | Empirical Bayes framework to adjust for batch. | sva (R), combat (Python) |
| Remove Unwanted Variation (RUV) | Studies with negative control features (e.g., spike-ins, housekeeping genes) | Uses factor analysis on control genes/sites. | ruv (R) |
| Quality Control (QC) Reference Normalization | LC-MS based metabolomics/proteomics | Normalizes sample abundances to a pooled QC sample. | Most vendor software |
| Cyclic Loess | High-density arrays (e.g., methylation) | Normalizes intensity differences between samples. | limma (R) |
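
To make the QC reference normalization row concrete, here is a minimal sketch for a single feature. It assumes the simplest variant, in which each intensity is divided by the median pooled-QC intensity of its own batch; vendor software may implement more elaborate, drift-aware versions. The function name and all values are hypothetical.

```python
import statistics

def qc_reference_normalize(intensities, batches, is_qc):
    """Divide each intensity by the median pooled-QC intensity of its batch.

    All three arguments are parallel lists describing one feature.
    Raises statistics.StatisticsError if a batch contains no QC injection.
    """
    qc_median = {}
    for batch in set(batches):
        qc_values = [v for v, b, q in zip(intensities, batches, is_qc) if b == batch and q]
        qc_median[batch] = statistics.median(qc_values)
    return [v / qc_median[b] for v, b in zip(intensities, batches)]

# One feature measured in two batches; batch 2 reads about twice as high (invented values).
intensities = [100, 110, 90, 200, 220, 180]
batches = [1, 1, 1, 2, 2, 2]
is_qc = [True, False, False, True, False, False]

normalized = qc_reference_normalize(intensities, batches, is_qc)
print(normalized)
```

After normalization the two batches are on the same relative scale, because the pooled QC sample is expected to be identical in every batch.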

Table 2: Impact of Sample Splitting Delay on Assay Quality Metrics

| Delay to Stabilization (Minutes) | RNA Integrity Number (RIN) | % of Nuclei Intact (by microscopy) | Metabolite Degradation Score* |
|---|---|---|---|
| 0 (Immediate) | 9.8 ± 0.1 | 98% ± 1% | 1.0 |
| 5 | 9.5 ± 0.3 | 92% ± 3% | 1.8 |
| 15 | 8.1 ± 0.7 | 85% ± 5% | 3.5 |
| 30 | 6.5 ± 1.2 | 70% ± 8% | 6.2 |

*Lower score indicates better preservation. Score based on relative levels of labile metabolites (e.g., ATP, NADH).

Experimental Protocol: Synchronized Multi-Omics Time-Course

Title: Protocol for Integrated Transcriptomic and Proteomic Analysis from a Single Temporal Sample Split.

Objective: To generate matched RNA-seq and LC-MS/MS proteomics data from the same biological sample across multiple time points.

Materials: See "The Scientist's Toolkit" below.

Method:

- Stimulation & Harvest: Apply perturbation to cell culture. At each time point (T0, T15, T30, T60...), quickly wash cells with cold PBS.

- Single-Cell Suspension: Detach cells using a gentle, non-enzymatic method (e.g., cell scraper in cold PBS). Pass through a 40µm filter. Perform a precise total cell count.

- Primary Split: Immediately split the cell suspension into two pre-chilled, labeled tubes: Tube A (60% for Proteomics) and Tube B (40% for Transcriptomics).

- Parallel Processing:

- Tube A (Proteomics): Pellet cells. Lyse in 100µL of strong detergent lysis buffer (e.g., RIPA with protease inhibitors). Sonicate on ice. Centrifuge, collect supernatant. Flash freeze in liquid N₂. Store at -80°C.

- Tube B (Transcriptomics): Pellet cells. Lyse directly in 500µL of RNA lysis buffer (e.g., from a kit). Immediately vortex and process through column-based RNA purification or store lysate at -80°C.

- Downstream Processing: Process all RNA samples in a single batch for library prep and sequencing. Process all protein lysates in a single batch for tryptic digestion, TMT labeling, and LC-MS/MS analysis.

- Data Integration: Map RNA and protein data to a common gene identifier. Use correlation analysis (e.g., WGCNA) or joint factor analysis (e.g., Multi-Omics Factor Analysis, MOFA).
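
As a first sanity check before network or factor analysis, the data integration step can be sketched as a per-gene rank correlation between RNA and protein profiles across time points. This is a simplified illustration and not a substitute for WGCNA or similar tools; the gene names and values are hypothetical.

```python
import math

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def pearson(x, y):
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def spearman(x, y):
    """Spearman correlation computed as the Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

# Profiles across T0, T15, T30, T60, keyed by a common gene identifier (invented values).
rna = {"GENE_A": [1.0, 2.5, 4.0, 6.0], "GENE_B": [5.0, 4.0, 3.0, 1.0], "GENE_C": [2.0, 2.1, 2.2, 2.0]}
protein = {"GENE_A": [10.0, 12.0, 19.0, 30.0], "GENE_B": [2.0, 2.5, 3.5, 4.0]}

shared = sorted(set(rna) & set(protein))   # only genes quantified in both layers
rho = {gene: spearman(rna[gene], protein[gene]) for gene in shared}
print(rho)
```

Only genes present in both layers are compared, so the size of `shared` is itself worth reporting. A profile that is constant across time points has zero variance and would raise a division error here; such genes need to be filtered out first.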

Diagrams

Diagram Title: Multi-Layer Assay Temporal Workflow

Diagram Title: Troubleshooting Logic for Sample Split Issues

The Scientist's Toolkit

Table 3: Essential Research Reagents for Synchronized Multi-Omics Workflows

| Item | Function in Workflow | Key Consideration for Reproducibility |
|---|---|---|
| Non-enzymatic Cell Dissociation Solution | Generates single-cell suspension without degrading surface proteins or inducing stress-response genes. | Use a standardized, chemically defined solution across all time points and experiments. |
| RNA Stabilization Reagent (e.g., TRIzol) | Immediately halts RNase activity upon sample splitting, preserving the transcriptomic snapshot. | Aliquot reagent to avoid freeze-thaw cycles. Use the same batch for an entire study. |
| Mass-Spectrometry Grade Lysis Buffer | Efficiently extracts proteins while maintaining compatibility with downstream digestion and LC-MS. | Include a consistent cocktail of protease and phosphatase inhibitors. Pre-mix large batches. |
| Internal Standard Spike-Ins (for metabolomics) | Added immediately upon splitting to correct for technical variation in extraction and analysis. | Use isotopically labeled compounds that cover key metabolic pathways. |
| Pooled QC Reference Sample | A homogeneous sample created from a small aliquot of all experimental samples. | Used to monitor and correct for instrument drift across long temporal analysis runs. |
| Cryogenic Vials & Labels | For snap-freezing and long-term storage of aliquots at -80°C or in liquid N₂. | Use vial types validated for low biomolecule adhesion. Implement a robust, barcoded labeling system. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our multi-omics dataset (e.g., RNA-seq and Proteomics) has been deposited in a public repository, but other researchers report they cannot find it using standard keyword searches. What might be wrong?

A: This is a Findability issue, often related to incomplete or non-standard metadata.

- Common Cause: Missing persistent identifiers (PIDs) like a Digital Object Identifier (DOI) or using local, uncontrolled keywords instead of standardized ontologies.

- Solution:

- Ensure your dataset has a registered, resolvable DOI from the repository.

- Annotate all sample and data files using community-agreed ontologies (e.g., NCBI Taxonomy for organisms, UBERON for anatomy, GO for functions). Use tools like the Ontology Lookup Service (OLS) to find correct terms.

- Provide a rich, machine-readable README file with the complete experimental design.
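
A machine-readable metadata record might look like the sketch below. The field names and the required-field list are assumptions rather than a repository standard, and the DOI is a placeholder; the ontology identifiers shown (NCBITaxon:9606 for Homo sapiens, UBERON:0002107 for liver) should still be confirmed in the Ontology Lookup Service before use.

```python
import json

# Hypothetical minimum set of fields; align with your repository's own schema.
REQUIRED_FIELDS = ("dataset_doi", "organism", "tissue", "assays", "experimental_design")

metadata = {
    "dataset_doi": "10.XXXX/placeholder",  # placeholder, replace with the registered DOI
    "organism": {"label": "Homo sapiens", "ontology_id": "NCBITaxon:9606"},
    "tissue": {"label": "liver", "ontology_id": "UBERON:0002107"},
    "assays": ["RNA-seq", "LC-MS/MS proteomics"],
    "experimental_design": "Time course, 4 time points, 3 biological replicates",
}

def missing_fields(record):
    """Return the required fields that are absent or empty in a metadata record."""
    return [field for field in REQUIRED_FIELDS if not record.get(field)]

metadata_json = json.dumps(metadata, indent=2, sort_keys=True)
print(metadata_json)
print("Missing fields:", missing_fields(metadata))
```

Pairing each free-text label with an ontology identifier keeps the record readable for people while letting search tools match on the controlled term.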

Q2: When attempting to re-analyze a published metabolomics dataset, I encounter a proprietary data format that requires expensive, non-standard software to open. How can this be avoided?

A: This is an Accessibility & Interoperability issue.

- Common Cause: Data saved in closed, vendor-specific formats.

- Solution:

- For Data Producers: Always deposit data in open, non-proprietary, and standardized formats alongside any raw files. For mass spectrometry, use mzML; for NMR, use nmrML. Provide conversion scripts if possible.

- For Data Users: Contact the corresponding author and the hosting repository to request data in an open format. Cite this communication to promote transparency in your reproduction attempt.
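
Before deposition, a quick check can list vendor-format files that have no open-format counterpart. The sketch below matches files by base name; the vendor extension list is an illustrative assumption and is not exhaustive, and the file names are hypothetical.

```python
from pathlib import PurePath

OPEN_FORMATS = {".mzml", ".nmrml"}          # open formats named in the text
VENDOR_FORMATS = {".raw", ".wiff", ".d"}    # illustrative vendor extensions (assumption)

def vendor_files_without_open_copy(filenames):
    """Return vendor-format files that lack an open-format file with the same base name."""
    paths = [PurePath(name) for name in filenames]
    open_stems = {p.stem for p in paths if p.suffix.lower() in OPEN_FORMATS}
    return [
        str(p) for p in paths
        if p.suffix.lower() in VENDOR_FORMATS and p.stem not in open_stems
    ]

# Hypothetical submission folder contents.
submission = ["run01.raw", "run01.mzML", "run02.raw", "spectrum01.nmrML"]
print(vendor_files_without_open_copy(submission))
```

Compressed files such as `run01.mzML.gz` are not recognized by this simple suffix check and would need extra handling.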

Q3: I have downloaded a re-usable transcriptomics dataset, but the gene identifiers are from an outdated genome build, making integration with my data impossible. What steps should I take?

A: This is an Interoperability challenge.

- Solution Protocol:

- Identify the source genome build and annotation used in the original study (check metadata).

- Use a reliable identifier mapping service (e.g., Ensembl BioMart, g:Profiler, UniProt ID Mapping).

- Perform the mapping and document all steps, including software versions and parameters.

- Critical Step: Validate the mapping by checking a subset of well-known genes for correct annotation in the new build.
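
The mapping and validation steps can be sketched as follows, assuming the mapping table has already been exported from a service such as BioMart into a dictionary of old ID to list of new IDs. All identifiers, values, and function names here are hypothetical.

```python
def remap_ids(expression, id_map):
    """Translate old gene IDs to new ones.

    Returns (remapped, unmapped, ambiguous). An old ID is ambiguous if it maps
    to several new IDs, or to a new ID already claimed by an earlier old ID.
    """
    remapped, unmapped, ambiguous = {}, [], []
    for old_id, value in expression.items():
        targets = id_map.get(old_id, [])
        if not targets:
            unmapped.append(old_id)
        elif len(targets) > 1 or targets[0] in remapped:
            ambiguous.append(old_id)
        else:
            remapped[targets[0]] = value
    return remapped, unmapped, ambiguous

def failed_sentinels(id_map, sentinels):
    """Return well-known genes whose mapping differs from the expected new ID."""
    return [old for old, expected in sentinels.items() if id_map.get(old) != [expected]]

# Hypothetical mapping table and expression values.
id_map = {
    "OLD_0001": ["NEW_0001"],
    "OLD_0002": ["NEW_0002", "NEW_0003"],   # one-to-many
    "OLD_0003": [],                          # retired identifier
    "OLD_0004": ["NEW_0001"],                # collides with OLD_0001
}
expression = {"OLD_0001": 12.5, "OLD_0002": 3.1, "OLD_0003": 8.0, "OLD_0004": 1.2, "OLD_0005": 4.4}

remapped, unmapped, ambiguous = remap_ids(expression, id_map)
bad = failed_sentinels(id_map, {"OLD_0001": "NEW_0001"})
print(remapped, unmapped, ambiguous, bad)
```

Reporting the unmapped and ambiguous identifiers, rather than silently dropping them, is what makes the mapping auditable later.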

Q4: The workflow and computational code provided with a reusable dataset fail to run on my system. How can I troubleshoot this?

A: This is a Reusability (Computational Reproducibility) problem.

- Troubleshooting Guide:

- Check Containerization: Was the analysis packaged using Docker or Singularity? If so, use the provided container.

- Check Dependency Management: Are precise software versions and environment files (e.g., Conda environment.yml, requirements.txt) provided? Recreate the exact environment.

- Check Paths and Parameters: Hard-coded file paths are a common failure point. Adjust configuration files to point to your local directory structure.

- If issues persist, use platforms like CodeOcean or Binder that can launch executable research capsules.
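
When comparing a failing local setup with the environment the authors describe, it helps to capture the local versions in a structured form. The sketch below uses only the Python standard library; the package names passed in are examples, and the second one is deliberately a name assumed not to be installed.

```python
import json
import platform
from importlib import metadata

def environment_snapshot(packages):
    """Record the Python version, OS platform, and installed versions of the named packages.

    Packages that are not installed are recorded as None.
    """
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return {
        "python": platform.python_version(),
        "platform": platform.platform(),
        "packages": versions,
    }

# Example package names; replace with the dependencies listed by the dataset authors.
snapshot = environment_snapshot(["pip", "this-package-should-not-exist-xyz"])
print(json.dumps(snapshot, indent=2))
```

Saving this snapshot next to the analysis output makes version mismatches visible when the provided environment file cannot be recreated exactly.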

Key Data and Metrics for FAIR Assessment

Table 1: Quantitative Metrics for Assessing FAIRness in Multi-Omics Repositories

| FAIR Principle | Key Metric | Target Benchmark (Current) | Measurement Tool Example |

|---|---|---|---|

| Findable | % of datasets with a resolvable PID | >95% | FAIR Data Object Assessment |