Navigating the Maze: A Comprehensive Guide to Multi-Omics Data Integration Challenges and Solutions in 2024

This article provides a comprehensive overview of the central challenges in multi-omics data integration for researchers, scientists, and drug development professionals.


Abstract

This article provides a comprehensive overview of the central challenges in multi-omics data integration for researchers, scientists, and drug development professionals. We explore the foundational complexities of diverse, high-dimensional data types and their biological context. We then examine current methodological approaches, from early to late integration and AI-driven techniques, and their applications in disease subtyping and biomarker discovery. The guide also addresses critical troubleshooting steps for data harmonization, noise reduction, and computational bottlenecks. Finally, we cover validation frameworks and comparative analyses of tools to ensure biological robustness and reproducibility. This roadmap equips professionals to effectively leverage integrated multi-omics for transformative biomedical insights.

Understanding the Multi-Omics Landscape: Core Challenges and Data Complexity

The advent of high-throughput technologies has ushered in the era of multi-omics, a holistic approach to biological investigation that integrates multiple layers of molecular information. This guide defines the core omics layers and their associated technologies, framed by the central thesis that the primary challenge in modern systems biology is not data generation, but the meaningful integration of heterogeneous, multi-scale, and noisy omics datasets to derive actionable biological insights. Successful integration is critical for researchers and drug development professionals aiming to understand complex disease mechanisms and identify robust biomarkers.

Core Omics Layers: Definitions and Technologies

Each omics layer captures a distinct dimension of biological state and function, each with its own data characteristics and noise profiles that complicate integration.

Table 1: The Core Omics Layers

| Omics Layer | Analysed Molecule | Key Technologies | Provides Insight Into | Primary Challenges for Integration |

|---|---|---|---|---|

| Genomics | DNA | Whole-Genome Sequencing (WGS), Whole-Exome Sequencing (WES), SNP arrays | Genetic blueprint, variants, predispositions | Static data; requires functional interpretation via other layers. |

| Epigenomics | Chromatin modifications, DNA methylation | ChIP-seq, ATAC-seq, Bisulfite sequencing | Gene regulation, heritable phenotypic changes without DNA sequence alteration. | Tissue/cell-type specific; dynamic; complex correlation with expression. |

| Transcriptomics | RNA (coding & non-coding) | RNA-seq (bulk & single-cell), Microarrays | Gene expression levels, alternative splicing, regulatory RNAs. | mRNA levels correlate only moderately with protein abundance (reported r ≈ 0.4-0.7), so transcript changes cannot be read directly as protein changes. |

| Proteomics | Proteins & Peptides | Mass Spectrometry (LC-MS/MS), Affinity-based arrays (e.g., Olink), RPPA | Protein abundance, post-translational modifications (PTMs), protein-protein interactions. | Dynamic range (>10^6); lack of amplification; PTM complexity. |

| Metabolomics | Small molecule metabolites (<1,500 Da) | LC-MS, GC-MS, NMR | Metabolic activity, endpoints of cellular processes, closest to phenotype. | High chemical diversity; rapid turnover; database coverage is incomplete. |

Key Methodologies and Experimental Protocols

Detailed workflows are essential for understanding the source of technical variance in each dataset.

Protocol 2.1: Bulk RNA-Sequencing (Transcriptomics)

- Objective: Profile the transcriptome (mRNA) of a tissue or cell population.

- Steps:

- Total RNA Extraction: Use guanidinium thiocyanate-phenol-chloroform (e.g., TRIzol) to isolate RNA, followed by DNase I treatment.

- Poly-A Selection: Isolate mRNA using oligo(dT) magnetic beads.

- Library Preparation: Fragment RNA, synthesize cDNA, add adapters, and amplify via PCR.

- Sequencing: Perform paired-end sequencing on an Illumina platform (e.g., NovaSeq).

- Bioinformatics: Align reads to a reference genome (STAR, HISAT2), quantify gene counts (featureCounts), and perform differential expression analysis (DESeq2, edgeR).

Protocol 2.2: Label-Free Quantitative Proteomics (LC-MS/MS)

- Objective: Identify and quantify proteins in a complex lysate.

- Steps:

- Protein Extraction & Digestion: Lyse cells in RIPA buffer, reduce disulfide bonds (DTT), alkylate (iodoacetamide), and digest with trypsin (overnight, 37°C).

- Desalting: Clean up peptides using C18 solid-phase extraction tips.

- LC-MS/MS Analysis: Inject peptides onto a C18 nanoLC column coupled online to a high-resolution tandem mass spectrometer (e.g., Thermo Orbitrap).

- Data Acquisition: Use Data-Dependent Acquisition (DDA): full MS scan followed by fragmentation (MS2) of the most intense ions.

- Data Processing: Identify proteins by searching MS2 spectra against a protein database (MaxQuant, Proteome Discoverer). Quantify based on precursor ion intensity.

Protocol 2.3: Untargeted Metabolomics (LC-MS)

- Objective: Detect a broad range of metabolites in a biofluid (e.g., plasma).

- Steps:

- Sample Preparation: Deproteinize plasma with cold methanol (1:4 ratio), vortex, incubate at -20°C, and centrifuge to pellet proteins.

- Chromatography: Separate metabolites using reversed-phase (C18) and hydrophilic interaction liquid chromatography (HILIC) columns in separate runs.

- Mass Spectrometry: Analyze using a high-resolution Q-TOF or Orbitrap mass spectrometer in both positive and negative electrospray ionization modes.

- Feature Extraction: Use software (XCMS, MZmine) to detect chromatographic peaks, align samples, and create a feature-intensity table.

- Identification: Annotate features by matching exact mass and MS/MS spectra to libraries (e.g., HMDB, METLIN).
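
The exact-mass part of the identification step can be sketched as follows. This is a minimal illustration, not the matching logic of HMDB or METLIN: the three-entry `LIBRARY`, the `annotate` helper, and the 5 ppm tolerance are assumptions made for the example, and only the protonated and deprotonated adducts are considered.

```python
PROTON_MASS = 1.007276  # Da

# Tiny illustrative library of neutral monoisotopic masses (Da).
# A real workflow would query a full database instead.
LIBRARY = {
    "D-glucose": 180.06339,
    "L-lactic acid": 90.03169,
    "L-alanine": 89.04768,
}


def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def annotate(observed_mz, mode="positive", tol_ppm=5.0):
    """Return (name, ppm error) pairs whose [M+H]+ or [M-H]- m/z is within tol_ppm."""
    shift = PROTON_MASS if mode == "positive" else -PROTON_MASS
    hits = []
    for name, neutral_mass in LIBRARY.items():
        err = ppm_error(observed_mz, neutral_mass + shift)
        if abs(err) <= tol_ppm:
            hits.append((name, round(err, 2)))
    return hits


positive_hits = annotate(181.0707)                   # feature from positive ESI mode
negative_hits = annotate(89.0244, mode="negative")   # feature from negative ESI mode
```

A mass match alone is only a candidate annotation, since isomers share the same exact mass; the MS/MS spectral comparison named in the protocol is what narrows the candidates.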

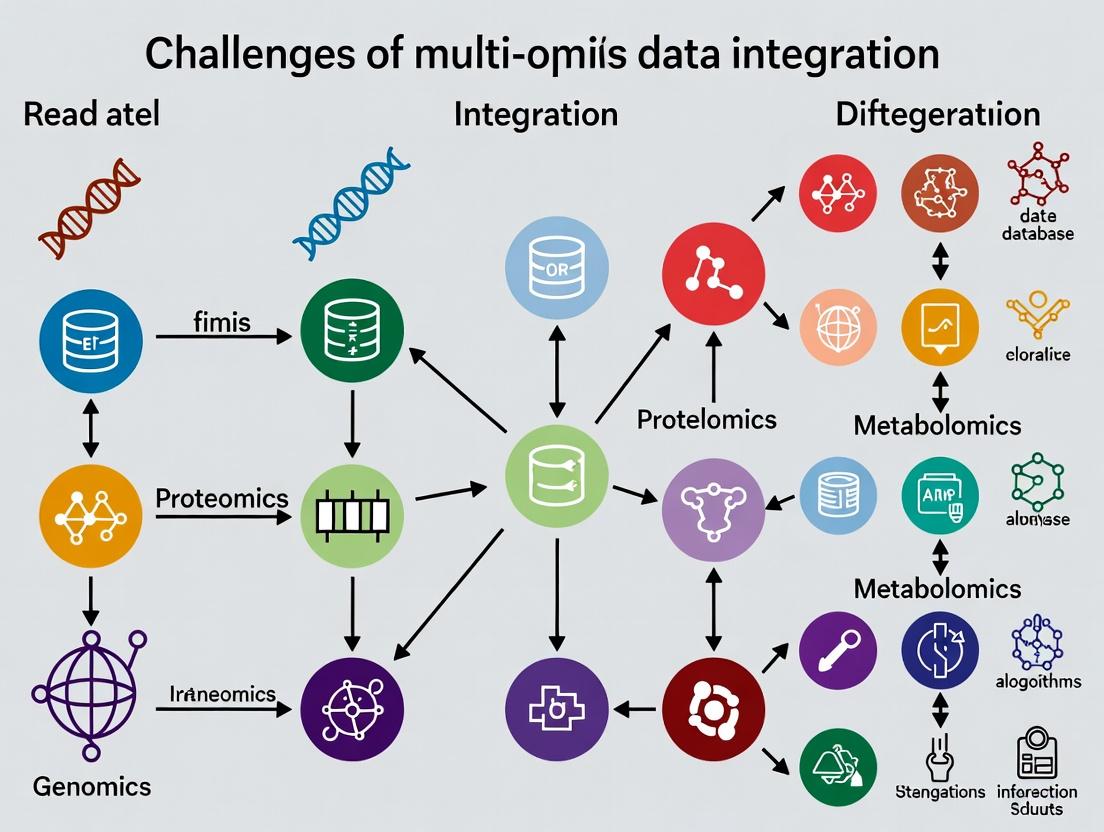

Visualization of Multi-Omics Workflow and Integration Challenge

Title: Multi-Omics Data Generation and Integration Pipeline

Title: Biological Information Flow and Integration Hurdles

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Kits for Multi-Omics Research

| Reagent/Kits | Supplier Examples | Function in Multi-Omics Workflow |

|---|---|---|

| TRIzol Reagent | Thermo Fisher (Invitrogen); Qiagen offers the comparable QIAzol | Simultaneous extraction of RNA, DNA, and proteins from a single sample, minimizing sample-to-sample variation. |

| DNase I (RNase-free) | New England Biolabs, Roche | Removal of genomic DNA contamination from RNA preparations for accurate transcriptomics. |

| Nextera DNA Flex Library Prep Kit | Illumina | Preparation of sequencing libraries from low-input or degraded DNA for genomics/epigenomics. |

| NEBNext Ultra II Directional RNA Library Prep Kit | New England Biolabs | High-efficiency preparation of strand-specific RNA-seq libraries. |

| Trypsin, Sequencing Grade | Promega, Thermo Fisher | Proteolytic enzyme for digesting proteins into peptides for bottom-up proteomics. |

| TMTpro 16plex Isobaric Label Reagents | Thermo Fisher | Multiplexing up to 16 samples in a single MS run for high-throughput, quantitative proteomics. |

| Bio-Rad Protein Assay Dye Reagent | Bio-Rad | Colorimetric quantification of total protein concentration for normalizing proteomics sample input. |

| Methanol (Optima LC/MS Grade) | Fisher Chemical | High-purity solvent for metabolite extraction and mobile phase in LC-MS metabolomics. |

| Pierce Quantitative Colorimetric Peptide Assay | Thermo Fisher | Accurate measurement of peptide concentration prior to LC-MS/MS injection. |

| Single-Cell Multiome ATAC + Gene Expression Kit | 10x Genomics | Enables simultaneous profiling of chromatin accessibility (epigenomics) and transcriptome from the same single cell. |

Beyond the Core: Expanding the Omics Universe

Integration challenges multiply as the universe expands:

- Microbiomics: Analysis of microbial communities, introducing a separate genome and metabolome.

- Lipidomics: Subset of metabolomics focused on lipids, requiring specialized separation.

- Glycomics: Study of glycans, with complex, non-templated structures.

- Spatial Omics: Technologies (e.g., Visium, MIBI) that retain tissue-context information, adding a spatial coordinate dimension to integration.

- Single-Cell & Multiome Assays: Resolve cellular heterogeneity but generate ultra-high-dimensional, sparse data.

Defining the multi-omics universe is the first step toward conquering its central challenge: integration. Each layer—genomics, transcriptomics, proteomics, metabolomics—provides a unique but incomplete snapshot of a complex, dynamic system. The future of biomedical research and drug development lies in developing robust computational and statistical frameworks that can reconcile these disparate data types, moving from simple correlation to causal, mechanistic models of health and disease.

The central challenge in modern multi-omics research is the systematic integration of diverse data modalities—genomics, transcriptomics, proteomics, metabolomics—to construct a unified model of biological systems. This integration is fundamentally obstructed by heterogeneity, which manifests in three primary dimensions: divergent measurement scales (e.g., counts, intensities, concentrations), incompatible data formats (FASTQ, BAM, mzML, .raw), and batch-specific technical noise. This technical guide deconstructs this hurdle and provides actionable methodologies for overcoming it within the broader thesis that data heterogeneity is the primary rate-limiting step in translational multi-omics discovery.

Quantitative Characterization of Heterogeneity

The table below summarizes the core quantitative disparities across major omics layers, based on current literature and typical experimental outputs.

Table 1: Characteristic Scales and Formats of Major Omics Data Types

| Omics Layer | Typical Measurement Scale | Dynamic Range | Primary File Formats | Common Technical Noise Sources |

|---|---|---|---|---|

| Genomics (WGS/WES) | Read Counts / Allele Fractions | 0-100% (VAF) | FASTQ, BAM, VCF | PCR duplicates, sequencing depth bias, GC-content bias |

| Transcriptomics (RNA-seq) | Read Counts (integer) | ~5 orders of magnitude | FASTQ, BAM, Gene Count Matrix | Batch effects, library prep bias, 3’ bias, rRNA contamination |

| Proteomics (LC-MS/MS) | Ion Intensity / Spectral Counts | ~4-5 orders of magnitude | .raw, .mzML, .mgf | Ion suppression, batch/column drift, peptide identification error |

| Metabolomics (LC-MS) | Ion Intensity | ~4-6 orders of magnitude | .raw, .mzML, .cdf | Matrix effects, instrument drift, peak misalignment |

| Epigenomics (ChIP-seq/ATAC-seq) | Read Counts / Enrichment Scores | ~3 orders of magnitude | FASTQ, BAM, bedGraph | Antibody specificity, chromatin accessibility bias, PCR artifacts |
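
To make the scale problem concrete, the sketch below puts count-like and intensity-like data on a comparable footing with a log2 transform followed by per-feature z-scoring. It is one common choice rather than a prescribed method; the simulated matrices, the `log_zscore` helper, and the pseudocount of 1 are assumptions for illustration.

```python
import numpy as np


def log_zscore(matrix, pseudocount=1.0):
    """Log2-transform a samples x features matrix, then z-score each feature."""
    logged = np.log2(np.asarray(matrix, dtype=float) + pseudocount)
    mean = logged.mean(axis=0)
    std = logged.std(axis=0, ddof=1)
    std[std == 0] = 1.0  # constant features map to zero instead of dividing by zero
    return (logged - mean) / std


rng = np.random.default_rng(0)
# Simulated data for 6 samples: RNA-seq-like counts and MS-like ion intensities
rna_counts = rng.poisson(lam=50, size=(6, 4))
ms_intensity = rng.lognormal(mean=15, sigma=1.0, size=(6, 3))

rna_z = log_zscore(rna_counts)
ms_z = log_zscore(ms_intensity)
```

After this step every feature has mean 0 and unit variance, so no layer dominates a joint analysis purely because of its measurement units. Layer-specific normalization (for example library-size correction for counts) would normally come first.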

Experimental Protocols for Cross-Omics Data Generation

Robust integration requires standardized, parallelized data generation. Below are detailed protocols for a coordinated multi-omics study from a single tissue sample.

Protocol: Coordinated Nucleic Acid Extraction for Genomics & Transcriptomics

Objective: Isolate high-quality DNA and RNA from the same biological sample (e.g., flash-frozen tissue).

Reagents: AllPrep DNA/RNA/miRNA Universal Kit (Qiagen), RNase Away, liquid nitrogen.

Steps:

- Homogenization: Cryogrind ≤30 mg tissue in liquid nitrogen. Transfer powder to lysis buffer.

- Simultaneous Lysis: Thoroughly homogenize using a vortex adapter. Centrifuge to clear lysate.

- DNA Binding: Pass the cleared lysate through an AllPrep DNA spin column, which retains genomic DNA. Keep the flow-through for RNA purification.

- RNA Phase: Add ethanol to the flow-through and transfer it to an RNeasy spin column. Perform on-column DNase I digestion. Elute RNA in nuclease-free water. Assess integrity (RIN > 8.0, Bioanalyzer).

- DNA Phase: Wash the AllPrep DNA column and elute the DNA. Assess purity (A260/280 ~1.8).

Output: Paired DNA (for WGS/WES) and RNA (for RNA-seq) aliquots.

Protocol: Serial Protein & Metabolite Extraction from Cell Pellet

Objective: Sequentially extract proteins and metabolites from the same cell pellet.

Reagents: Methanol, acetonitrile, water (for metabolomics); RIPA buffer with protease inhibitors (for proteomics).

Steps:

- Quenching & Metabolite Extraction: Suspend 1x10⁷ cells in 1 mL cold 40:40:20 MeOH:ACN:H₂O at -20°C. Vortex 10 min, centrifuge (15,000g, 10 min, 4°C).

- Supernatant Collection: Transfer supernatant (metabolite fraction) to a new tube. Dry in a speed vacuum. Store at -80°C for LC-MS metabolomics.

- Protein Pellet Processing: Re-suspend the remaining pellet in 200 µL RIPA buffer. Sonicate on ice (3x 10s pulses). Centrifuge (14,000g, 15 min, 4°C).

- Protein Collection: Transfer supernatant (protein fraction) to a new tube. Quantify via BCA assay. Aliquot for LC-MS/MS proteomics.

Output: Paired metabolite and protein extracts from an identical cell population.

Computational Workflows for Harmonization

Diagram: Multi-Omics Data Harmonization Pipeline

Title: Multi-Omics Data Harmonization and Integration Pipeline

Detailed Method: Multi-Omics Factor Analysis (MOFA+)

Objective: Identify latent factors that explain variance across multiple omics datasets.

Input: Normalized matrices (samples x features) for each omics layer.

Steps:

- Data Preparation: Ensure all matrices are aligned by sample ID. Center and scale features within each view.

- Model Training: Run MOFA+ with default variational inference. Set convergence tolerance (0.001). Use 10-15 factors as initial guess.

- Factor Interpretation: Correlate factors with sample metadata (e.g., disease status). Annotate factors by loading weights on original features (genes, proteins).

- Downstream Analysis: Use factor values as covariates in survival or differential expression models.

Output: Latent factors representing shared and unique variation across omics types.
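
The data preparation step can be sketched without touching the MOFA2 API itself. The example below only aligns views on shared sample IDs and then centers and scales each feature; the `align_and_scale` helper and the toy `views` dictionary are hypothetical names introduced for illustration, and restricting to shared samples is an assumption of this sketch.

```python
import numpy as np


def align_and_scale(views):
    """views: dict name -> (sample_ids, samples x features array).

    Returns the shared sample order and centered, unit-variance matrices.
    """
    shared = sorted(set.intersection(*(set(ids) for ids, _ in views.values())))
    prepared = {}
    for name, (ids, data) in views.items():
        row_of = {sample_id: i for i, sample_id in enumerate(ids)}
        sub = np.asarray(data, dtype=float)[[row_of[s] for s in shared]]
        std = sub.std(axis=0, ddof=1)
        std[std == 0] = 1.0  # avoid division by zero for constant features
        prepared[name] = (sub - sub.mean(axis=0)) / std
    return shared, prepared


# Toy input: the protein view lacks sample s2 and lists samples in a different order
views = {
    "rna": (["s1", "s2", "s3", "s4"],
            np.array([[1.0, 5.0], [2.0, 3.0], [4.0, 1.0], [8.0, 2.0]])),
    "protein": (["s3", "s1", "s4"],
                np.array([[10.0, 0.5], [12.0, 0.1], [9.0, 0.9]])),
}
samples, scaled = align_and_scale(views)
```

The resulting matrices share one row order, which is the property the model training step relies on when it links observations across views.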

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Kits and Reagents for Multi-Omics Sample Preparation

| Product Name | Vendor | Function in Multi-Omics Workflow | Key Benefit for Integration |

|---|---|---|---|

| AllPrep DNA/RNA/miRNA Universal Kit | Qiagen | Co-isolation of DNA, RNA, and small RNA from a single sample. | Eliminates biological variation from using separate samples for different assays. |

| PreOmics iST Kit | PreOmics | Single-pot, solid-phase-enhanced sample preparation for proteomics. | Highly reproducible protein extraction and digestion, reducing technical noise. |

| Matched Tissue & Plasma DNA/RNA Kits | Norgen Biotek | Parallel purification from matched tissue and liquid biopsy samples. | Enables direct comparison of solid tumor and circulating omics profiles. |

| Cellular Indexing of Transcriptomes & Epitopes by Sequencing (CITE-seq) Antibodies | BioLegend | Oligo-tagged antibodies for simultaneous protein surface marker and transcriptome measurement in single cells. | Direct, paired measurement of two modalities at single-cell resolution. |

| mTOR Signaling Multiplex ELISA Array | RayBiotech | Quantifies phospho-proteins in key signaling pathways (PI3K/AKT/mTOR). | Provides calibrated, quantitative protein-level data to complement phospho-proteomics. |

| Seahorse XFp FluxPak | Agilent | Measures live-cell metabolic parameters (glycolysis, OXPHOS). | Provides functional metabolic data to ground-truth metabolomics findings. |

Pathway Visualization: Impact of Heterogeneity on Biological Inference

Title: Noise Sources Obscuring Signal in Multi-Omics Pathway Inference

Overcoming the heterogeneity hurdle is not a single-step process but a rigorous, end-to-end framework encompassing coordinated wet-lab protocols, systematic normalization, and robust statistical integration. By adopting the experimental and computational guidelines detailed here, researchers can transform disparate, noisy data layers into coherent systems-level models, thereby unlocking the true potential of multi-omics for mechanistic discovery and therapeutic development.

The integration of multi-omics data—genomics, transcriptomics, proteomics, metabolomics—represents the frontier of systems biology and precision medicine. The core thesis is that a holistic, multi-layered view of biological systems will unlock profound insights into disease mechanisms and therapeutic targets. However, this promise is critically undermined by the High-Dimension, Low-Sample-Size (HDLSS) conundrum. Each omics layer can yield tens of thousands of features (p), while cohort sizes (n) often number in the hundreds or fewer. This p >> n regime leads to the "dimensionality disaster," where traditional statistical methods fail, models overfit, and spurious correlations dominate.

This whitepaper provides a technical guide to navigating the HDLSS landscape within multi-omics research, detailing current mitigation strategies, experimental validation protocols, and essential computational toolkits.

The Core Statistical Problem

In HDLSS settings, the data matrix is ill-conditioned. With p > n the sample covariance matrix is singular (its rank is at most n - 1), so statistics that require its inverse are undefined. The curse of dimensionality also means that pairwise distances concentrate: data points become nearly equidistant, and every sample looks like an outlier. This results in:

- Overfitting: Models memorize noise rather than learning generalizable biological signals.

- Unstable Feature Selection: Small perturbations in data lead to wildly different selected biomarkers.

- Loss of Power: The multiple testing burden from high-dimensional hypotheses is immense.
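
These effects are easy to reproduce on pure noise. The sketch below uses a hypothetical cohort of 20 samples and 500 features (both sizes chosen only to keep the example fast) and checks three things: the rank of the sample covariance, the largest correlation between any feature and a random outcome, and how similar the smallest and largest pairwise distances are.

```python
import numpy as np

rng = np.random.default_rng(42)
n, p = 20, 500                       # hypothetical cohort: 20 samples, 500 features
X = rng.standard_normal((n, p))      # pure noise, no real signal

# 1) The p x p sample covariance has rank at most n - 1, so it cannot be inverted.
cov_rank = np.linalg.matrix_rank(np.cov(X, rowvar=False))

# 2) Spurious correlation: some noise feature tracks a random outcome surprisingly well.
y = rng.standard_normal(n)
Xs = (X - X.mean(axis=0)) / X.std(axis=0)
ys = (y - y.mean()) / y.std()
max_abs_corr = np.abs(Xs.T @ ys / n).max()

# 3) Distance concentration: nearest and farthest sample pairs are almost equally far apart.
sq = (X ** 2).sum(axis=1)
d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
dist = np.sqrt(np.clip(d2[np.triu_indices(n, k=1)], 0.0, None))
contrast = dist.min() / dist.max()

print(f"covariance rank: {cov_rank} (p = {p})")
print(f"largest |r| with a random outcome: {max_abs_corr:.2f}")
print(f"min/max pairwise distance ratio: {contrast:.2f}")
```

Because the data contain no signal at all, any strong correlation found in step 2 is an artifact of testing many features on few samples, which is the motivation for the filtering and regularization strategies that follow.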

Table 1: Quantitative Landscape of Multi-Omics Dimensionality

| Omics Layer | Typical Feature Range (p) | Typical Cohort Size (n) | Representative p/n Ratio |

|---|---|---|---|

| Whole Genome Sequencing (WGS) | ~3-5 million variants | 100s - 10,000s | 1,000:1 to 10,000:1 |

| Transcriptomics (RNA-seq) | ~20,000 genes | 10s - 100s | 200:1 to 2,000:1 |

| Proteomics (Mass Spectrometry) | ~5,000 - 10,000 proteins | 10s - 100s | 100:1 to 1,000:1 |

| Metabolomics | ~500 - 5,000 metabolites | 10s - 100s | 50:1 to 500:1 |

| Multi-Omics Integration | > 30,000 aggregated features | 10s - 100s | > 300:1 |

Methodological Frameworks for Navigating HDLSS

Dimensionality Reduction & Feature Selection

Prior to integration, aggressive dimensionality reduction is required.

- Experimental Protocol for Unsupervised Feature Filtering:

- Variance Stabilization: For each omics dataset, apply appropriate transformations (e.g., log2(CPM+1) for RNA-seq, log2(imputed intensity) for proteomics).

- Filter Low-Variance Features: Calculate the coefficient of variation (CV) or interquartile range (IQR) for each feature across samples. Remove features in the bottom X percentile (e.g., 20%).

- Correlation-Based Pruning: For remaining features, calculate pairwise correlation (e.g., Pearson's r). Within highly correlated clusters (|r| > 0.95), retain only the feature with the highest median absolute deviation.

- Output: A filtered feature matrix for each omics modality ready for integration.
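
The filtering and pruning steps above can be sketched as follows, assuming the input has already been variance-stabilized. The `filter_features` helper is a hypothetical name, and the pruning is a greedy approximation of cluster-wise selection: features are visited from highest to lowest median absolute deviation and dropped if they correlate above the cutoff with one already kept.

```python
import numpy as np


def filter_features(X, var_percentile=20.0, corr_cutoff=0.95):
    """X: samples x features. Returns the sorted indices of retained columns."""
    X = np.asarray(X, dtype=float)
    # Drop features whose IQR lies in the bottom percentile
    iqr = np.percentile(X, 75, axis=0) - np.percentile(X, 25, axis=0)
    keep = np.flatnonzero(iqr > np.percentile(iqr, var_percentile))
    Xk = X[:, keep]
    # Greedy correlation pruning, highest-MAD features first
    mad = np.median(np.abs(Xk - np.median(Xk, axis=0)), axis=0)
    corr = np.abs(np.corrcoef(Xk, rowvar=False))
    selected = []
    for j in np.argsort(-mad):
        if all(corr[j, k] <= corr_cutoff for k in selected):
            selected.append(j)
    return sorted(int(keep[j]) for j in selected)


rng = np.random.default_rng(1)
base = rng.standard_normal((30, 5))
X = np.column_stack([
    base,                                                # columns 0-4: independent features
    base[:, 0] * 2.0 + 0.01 * rng.standard_normal(30),   # column 5: near-copy of column 0, wider spread
    0.001 * rng.standard_normal(30),                     # column 6: almost constant
])
kept = filter_features(X)
```

In this toy matrix the near-constant column is removed by the variability filter, and of the two almost perfectly correlated columns only the one with the larger spread survives.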

Title: Workflow for Unsupervised Feature Filtering

Sparse Modeling and Regularization

Penalized regression models introduce constraints to prevent overfitting.

- Experimental Protocol for Sparse Multi-Omics Regression (e.g., for predicting clinical outcome):

- Pre-integration Concatenation: Merge filtered feature matrices from different omics platforms column-wise, using a shared sample ID. Standardize each feature (mean=0, variance=1).

- Model Training with Elastic Net: Apply an Elastic Net regression (combining L1/Lasso and L2/Ridge penalties) via cross-validation. Use `glmnet` in R or `sklearn.linear_model.ElasticNetCV` in Python.

- Hyperparameter Tuning: Perform a nested 10-fold cross-validation grid search over the mixing parameter (α, between 0 and 1) and the regularization strength (λ). The inner loop selects α and λ; the outer loop evaluates performance. Note that scikit-learn names these differently: its `l1_ratio` is the mixing parameter α, and its `alpha` is the strength λ.

- Feature Importance: Extract non-zero coefficients from the final model as the selected, integrative biomarker signature.

- Validation: Apply the trained model to a completely held-out test set. Report performance metrics (AUC-ROC for classification, R² for regression).
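
A minimal sketch of this protocol with scikit-learn is shown below. The two omics blocks and the outcome are simulated, the grid of `l1_ratio` values is an arbitrary choice, and 5 folds are used instead of 10 only to keep the example quick; none of these settings come from a specific study.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 60
rna = rng.standard_normal((n, 200))       # simulated filtered transcript block
protein = rng.standard_normal((n, 100))   # simulated filtered protein block
X = np.hstack([rna, protein])             # column-wise concatenation on shared samples
# Synthetic outcome driven by two transcripts and one protein
y = 2.0 * rna[:, 0] - 1.5 * rna[:, 1] + 1.0 * protein[:, 0] + 0.5 * rng.standard_normal(n)

# Inner loop: ElasticNetCV picks l1_ratio (mixing) and alpha (strength) by cross-validation.
# Standardization sits inside the pipeline so it is refit on each training fold.
model = make_pipeline(
    StandardScaler(),
    ElasticNetCV(l1_ratio=[0.2, 0.5, 0.8, 1.0], cv=5, max_iter=10000),
)

# Outer loop: performance estimate on folds the inner loop never saw.
outer = KFold(n_splits=5, shuffle=True, random_state=0)
outer_r2 = cross_val_score(model, X, y, cv=outer, scoring="r2")

# Final fit on all data; non-zero coefficients form the candidate signature.
model.fit(X, y)
coef = model.named_steps["elasticnetcv"].coef_
selected = np.flatnonzero(coef)
print(f"outer-loop R^2: {outer_r2.mean():.2f}, features selected: {selected.size}")
```

Keeping the scaler inside the pipeline matters in HDLSS settings: standardizing on the full matrix before splitting would leak information from the held-out folds into training.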

Title: Sparse Modeling for Feature Selection

Multi-Block Data Integration Methods

These methods model the joint structure of multiple omics datasets without concatenation.

- Experimental Protocol for DIABLO (Data Integration Analysis for Biomarker discovery using Latent cOmponents) using mixOmics in R:

- Data Input: Prepare a list of filtered, pre-processed data matrices (X1: mRNA, X2: miRNA, X3: proteomics, etc.) and a response vector Y (e.g., disease subtype).

- Design Matrix: Specify a between-omics design matrix (e.g., full design = all omics connected) to guide the model on which datasets should be correlated.

- Tuning Component Number: Use `tune.block.splsda()` to perform 10-fold cross-validation and determine the optimal number of components and the number of features to select per component per dataset.

- Model Fitting: Run the final `block.splsda()` model with tuned parameters.

- Evaluation & Visualization: Assess performance via `perf()` with repeated cross-validation. Visualize sample clustering on the first two components via `plotIndiv()`. Generate a circos plot (`circosPlot()`) to visualize correlations between selected features across omics layers.

Table 2: Key Multi-Block Integration Methods

| Method | Underlying Algorithm | Key Strength | Software Package |

|---|---|---|---|

| DIABLO | Sparse Generalized Canonical Correlation Analysis | Supervised; finds correlated biomarkers predictive of an outcome. | mixOmics (R) |

| MOFA/MOFA+ | Bayesian Factor Analysis | Unsupervised; learns latent factors capturing shared/private variation. | MOFA2 (R/Python) |

| sMBPLS | Sparse Multi-Block Partial Least Squares | Handles highly collinear data; good for predictive modeling. | Custom sMBPLS R package |

| iClusterBayes | Joint Latent Variable Model | Integrative clustering for subtype discovery. | iClusterPlus (R) |

Title: Multi-Block Integration Conceptual Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for HDLSS Multi-Omics Research

| Item / Reagent | Function & Relevance |

|---|---|

| High-Quality, Annotated Biospecimens | The foundational input. Paired, high-integrity tissue/plasma samples with deep clinical phenotyping are irreplaceable. Small n makes sample quality paramount. |

| Multiplex Assay Kits (e.g., Olink, Luminex) | Allows measurement of 10s-1000s of proteins/cytokines from a single low-volume sample, maximizing data yield per precious sample. |

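| Single-Cell RNA-seq Reagents (10x Genomics) | Transforms a bulk tissue sample (n=1) into data from thousands of cells, increasing the number of observations for cellular-resolution analyses. Cells from one donor are not independent biological replicates, so the cohort-level 'n' is unchanged. |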

| Stable Isotope Labeling Reagents (SILAC, TMT) | Enables multiplexed proteomics, where multiple samples are pooled and run in one MS batch, drastically reducing technical noise—a critical confounder in HDLSS. |

| CRISPR Screening Libraries (e.g., Brunello) | Functional validation tool. After computational biomarker identification from HDLSS data, pooled CRISPR screens can test hundreds of gene targets in parallel for causal roles. |

| Cloud Computing Credits (AWS, GCP) | Essential for scalable computation of resource-intensive integration algorithms and repeated cross-validation. |

| R/Python with Key Libraries (mixOmics, MOFA2, glmnet, scikit-learn) | The computational workbench for implementing all described statistical and ML strategies. |

A fundamental and pervasive obstacle in multi-omics data integration is the confounding of true biological signal with non-biological technical noise. This whitepaper provides an in-depth technical guide to disentangling biological variation from technical variation, with a focus on identifying and correcting for batch effects. As datasets from high-throughput genomics, transcriptomics, and proteomics grow larger and more complex, so does the risk that batch effects (systematic technical variations introduced during experimental processing) obscure or mimic biological phenomena.

Biological Variation is the true signal of interest, arising from differences in genotype, phenotype, disease state, treatment response, or developmental stage between samples. It is the variation we seek to measure and interpret.

Technical Variation (Batch Effects) is non-biological noise introduced by factors such as:

- Different experiment dates or times.

- Multiple personnel or technicians.

- Reagent lot changes.

- Sequencing lane or instrument differences.

- Sample preparation protocol drifts.

Batch effects are often systematic, not random, and can be severe enough to cause false conclusions, such as clustering samples by processing date instead of disease subtype.

Quantitative Impact of Batch Effects

The following table summarizes common quantitative metrics used to assess the relative contribution of biological and technical variance in omics studies.

Table 1: Metrics for Assessing Sources of Variation in Omics Data

| Metric | Purpose | Interpretation in Context of Batch Effects |

|---|---|---|

| Principal Variance Component Analysis (PVCA) | Partitions total variance into contributions from biological factors (e.g., disease) and technical factors (e.g., batch). | A batch factor explaining >10% of total variance is often considered a major confounder requiring correction. |

| Median Coefficient of Variation (CV) | Measures dispersion of data relative to its mean. | High median CV within a biologically homogeneous group suggests high technical noise. |

| Intraclass Correlation Coefficient (ICC) | Quantifies reliability of measurements across batches. Ranges from 0 (no reliability) to 1 (perfect reliability). | ICC < 0.5 indicates that technical (between-batch) variance exceeds the variance between biological samples, limiting reproducibility. |

| Silhouette Width | Measures how well samples cluster by biological class vs. technical batch. | Negative average silhouette width indicates samples are better clustered by batch than by biological class. |
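
As an illustration of the last metric, the sketch below computes the average silhouette width twice on the same simulated matrix, once with batch labels and once with disease labels. The `mean_silhouette` helper, the effect sizes, and the balanced 12-sample design are assumptions made for the example.

```python
import numpy as np


def mean_silhouette(X, labels):
    """Average silhouette width of the rows of X under the given labels."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    scores = []
    for i in range(len(X)):
        same = labels == labels[i]
        same[i] = False                       # exclude the sample itself
        if not same.any():
            scores.append(0.0)                # singleton group: silhouette is defined as 0
            continue
        a = dist[i, same].mean()              # mean distance to its own group
        b = min(dist[i, labels == g].mean()   # mean distance to the nearest other group
                for g in np.unique(labels) if g != labels[i])
        scores.append((b - a) / max(a, b))
    return float(np.mean(scores))


rng = np.random.default_rng(3)
batch = np.array([0] * 6 + [1] * 6)
disease = np.array([0, 0, 0, 1, 1, 1] * 2)
X = rng.standard_normal((12, 50))
X += 3.0 * batch[:, None]             # strong technical shift on every feature
X[:, :5] += 1.0 * disease[:, None]    # weaker biological shift on five features

sil_batch = mean_silhouette(X, batch)
sil_disease = mean_silhouette(X, disease)
print(f"silhouette by batch: {sil_batch:.2f}, by disease: {sil_disease:.2f}")
```

A clearly higher silhouette for batch labels than for biological labels is the numerical counterpart of samples clustering by processing date instead of disease subtype.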

Experimental Protocols for Identification and Control

Protocol 1: Prospective Experimental Design to Minimize Batch Effects

Title: Randomized Block Design for Sample Processing

- Sample Randomization: Do not process all samples from one biological group together. Randomize sample assignment to processing batches (e.g., sequencing lanes, MS runs) using a validated random number generator.

- Balancing: Ensure each batch contains a balanced representation of all biological groups (e.g., equal numbers of case and control samples per batch).

- Replication: Include both technical replicates (same biological sample processed multiple times) and biological replicates.

- Control Samples: Incorporate reference standards or "pooled" samples (an equal mixture of all samples) in every batch. These serve as longitudinal anchors to track technical drift.
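
The randomization and balancing steps can be sketched with the standard library alone. The `assign_batches` helper below is a hypothetical illustration: it shuffles samples within each biological group, deals them round-robin across batches, and then shuffles the run order inside each batch. Pooled reference samples are left out for brevity and would be added to every batch afterwards.

```python
import random
from collections import defaultdict


def assign_batches(samples, n_batches, seed=0):
    """samples: list of (sample_id, group). Returns dict batch_index -> list of sample_ids."""
    rng = random.Random(seed)                 # fixed seed so the layout can be reproduced
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)

    batches = defaultdict(list)
    offset = 0
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)                      # randomize order within the group
        for i, sample_id in enumerate(ids):
            batches[(i + offset) % n_batches].append(sample_id)
        offset += len(ids)                    # continue the rotation so batch sizes stay even

    for members in batches.values():
        rng.shuffle(members)                  # randomize run order inside each batch
    return dict(batches)


samples = [(f"case_{i}", "case") for i in range(8)] + \
          [(f"ctrl_{i}", "control") for i in range(8)]
layout = assign_batches(samples, n_batches=4)
for batch_index in sorted(layout):
    print(batch_index, layout[batch_index])
```

Recording the seed alongside the sample sheet makes the assignment auditable, which helps when a batch effect has to be diagnosed after the fact.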

Protocol 2: Retrospective Assessment Using Principal Component Analysis (PCA)

Title: Diagnostic PCA for Batch Effect Detection

- Perform PCA on the normalized, but not batch-corrected, feature-by-sample matrix (e.g., gene expression).

- Generate PCA score plots for the top principal components (PCs), typically PC1 vs. PC2 and PC3 vs. PC4.

- Color samples by biological group and by technical batch in separate plots.

- Interpretation: If samples cluster more strongly by batch than by biological condition in the leading PCs, a significant batch effect is present. The visual impression can be tested formally, for example with PERMANOVA on the sample-to-sample distance matrix.
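
The diagnostic can also be expressed numerically. The sketch below runs PCA via SVD on a simulated samples x features matrix (the transpose of the feature-by-sample layout named above) and reports how much of PC1 is explained by batch versus biological group. The helper names and the simulated effect sizes are assumptions for illustration, and the one-way R² used here is a simple stand-in for plot inspection, not a replacement for PERMANOVA.

```python
import numpy as np


def pca_scores(X, n_components=4):
    """PCA via SVD; returns sample scores and explained-variance ratios."""
    Xc = X - X.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    explained = (S ** 2) / np.sum(S ** 2)
    return U[:, :n_components] * S[:n_components], explained[:n_components]


def r_squared(score, labels):
    """Fraction of a component's variance explained by a categorical label."""
    labels = np.asarray(labels)
    grand = score.mean()
    between = sum((labels == g).sum() * (score[labels == g].mean() - grand) ** 2
                  for g in np.unique(labels))
    return float(between / np.sum((score - grand) ** 2))


rng = np.random.default_rng(7)
batch = np.array([0] * 8 + [1] * 8)
group = np.array([0, 1] * 8)              # balanced across both batches
X = rng.standard_normal((16, 100))
X[:, :40] += 2.0 * batch[:, None]         # batch shifts 40 features
X[:, 40:50] += 1.0 * group[:, None]       # biology shifts 10 features

scores, explained = pca_scores(X)
pc1_batch_r2 = r_squared(scores[:, 0], batch)
pc1_group_r2 = r_squared(scores[:, 0], group)
print(f"PC1 explains {explained[0]:.0%} of variance; "
      f"R^2 with batch = {pc1_batch_r2:.2f}, with group = {pc1_group_r2:.2f}")
```

When the leading component is almost entirely attributable to batch, as in this simulation, correction or explicit modeling of batch is warranted before any biological interpretation.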

Protocol 3: Implementation of ComBat for Batch Correction

Title: ComBat Algorithm for Empirical Bayes Batch Adjustment

- Input: A `p x n` matrix of normalized data, where `p` is features (genes) and `n` is samples. A model matrix for biological covariates of interest. A batch covariate vector.

- Model Fitting: For each feature, model the data as a linear combination of biological covariates and a batch-specific additive (shift) and multiplicative (scale) effect.

- Empirical Bayes Estimation: Shrink each feature's batch effect parameters toward the values pooled across all features. Borrowing information in this way stabilizes the estimates, which matters most when batches contain few samples.

- Adjustment: Remove the estimated additive and multiplicative batch effects for each feature in each batch.

- Output: A `p x n` batch-corrected matrix, ready for downstream biological analysis.
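
The additive and multiplicative adjustment can be illustrated with a deliberately simplified sketch. The function below is not ComBat: it omits the empirical Bayes shrinkage and the biological covariates, and simply standardizes each feature within each batch before restoring the pooled mean and standard deviation. The `location_scale_adjust` name and the simulated data are assumptions for the example.

```python
import numpy as np


def location_scale_adjust(data, batch):
    """data: features x samples (p x n); batch: length-n labels.

    Simplified location-scale correction. No empirical Bayes shrinkage and no
    biological covariates, so this is NOT a full ComBat implementation.
    """
    data = np.asarray(data, dtype=float)
    batch = np.asarray(batch)
    levels = np.unique(batch)
    grand_mean = data.mean(axis=1, keepdims=True)

    # Pooled within-batch standard deviation per feature
    within_ss = np.zeros((data.shape[0], 1))
    for b in levels:
        block = data[:, batch == b]
        within_ss += ((block - block.mean(axis=1, keepdims=True)) ** 2).sum(axis=1, keepdims=True)
    pooled_sd = np.sqrt(within_ss / (data.shape[1] - len(levels)))

    adjusted = np.empty_like(data)
    for b in levels:
        cols = batch == b
        mu = data[:, cols].mean(axis=1, keepdims=True)           # additive batch effect
        sd = data[:, cols].std(axis=1, ddof=1, keepdims=True)    # multiplicative batch effect
        sd[sd == 0] = 1.0
        adjusted[:, cols] = (data[:, cols] - mu) / sd * pooled_sd + grand_mean
    return adjusted


rng = np.random.default_rng(5)
batch = np.array(["A"] * 5 + ["B"] * 5)
raw = rng.standard_normal((20, 10))
raw[:, 5:] = raw[:, 5:] * 2.0 + 4.0       # batch B is shifted and scaled
corrected = location_scale_adjust(raw, batch)

gap_before = abs(raw[:, :5].mean() - raw[:, 5:].mean())
gap_after = abs(corrected[:, :5].mean() - corrected[:, 5:].mean())
print(f"batch mean gap before: {gap_before:.2f}, after: {gap_after:.2e}")
```

Because this version ignores biological covariates, it would also erase any real group difference that happens to be confounded with batch. Including the model matrix, as the full algorithm does, is what protects the biological signal.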

Visualization of Concepts and Workflows

Diagram Title: Disentangling Biological and Technical Variation

Diagram Title: Batch Effect Management Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Batch Effect Control Experiments

| Item | Function & Rationale |

|---|---|

| Universal Human Reference RNA (UHRR) | A standardized pool of RNA from diverse cell lines. Serves as an inter-batch calibration standard in transcriptomic studies to monitor technical performance. |

| Mass Spectrometry Grade Enzymes (Trypsin/Lys-C) | For proteomics. Using the same lot number across all batches minimizes variability in protein digestion efficiency, a major source of technical variance. |

| Indexed Adapters (Unique Dual Indexes - UDIs) | For next-generation sequencing. UDIs allow pooling of multiple samples per lane while uniquely identifying each sample, enabling robust demultiplexing and detection of cross-batch contamination. |

| Internal Standard Spike-Ins (e.g., S. pombe RNA, UPS2 Proteomic Standard) | Known quantities of exogenous molecules added to each sample. Used to distinguish technical variation (which affects spike-ins and endogenous molecules equally) from biological variation. |

| Single-Cell Multiplexing Kits (e.g., CellPlex, Hashtag Antibodies) | For single-cell genomics. Allows pooling of samples from multiple biological conditions into a single processing batch, virtually eliminating wet-lab batch effects for those samples. |

| Automated Nucleic Acid/Protein Purification Systems | Minimizes variation introduced by manual handling differences between technicians or labs, standardizing extraction efficiency and purity. |

In multi-omics data integration research, combining datasets from genomics, transcriptomics, proteomics, and metabolomics is fundamental for constructing comprehensive biological models. However, a pervasive and critical challenge is the presence of missing values across these datasets. This incompleteness arises from technical limitations, such as limits of detection in mass spectrometry, sample degradation, or bioinformatic processing errors. Systematic missingness, where certain compounds cannot be detected under specific experimental conditions, is particularly common in metabolomics and proteomics. Left unaddressed, missing data introduces bias, reduces statistical power, and can lead to erroneous conclusions in downstream integrative analyses, jeopardizing the translational potential in drug development.

Quantifying the Problem in Multi-Omics Studies

The prevalence and patterns of missing data vary significantly by omics layer and technology.

Table 1: Typical Missing Data Rates by Omics Technology

| Omics Layer | Technology | Typical Missing Rate | Primary Cause of Missingness |

|---|---|---|---|

| Metabolomics | LC-MS (untargeted) | 20-40% | Ion suppression, low abundance below LOD. |

| Proteomics | Shotgun LC-MS/MS | 15-30% | Stochastic sampling, low-abundance proteins. |

| Transcriptomics | RNA-Seq | 1-5% | Low expression, pipeline filtering. |

| Genomics | Whole-Genome Sequencing | <1% | Coverage gaps, ambiguous mapping. |

Table 2: Missing Data Patterns

| Pattern | Description | Implication for Analysis |

|---|---|---|

| Missing Completely at Random (MCAR) | Missingness independent of observed/unobserved data. | Complete-case analysis stays unbiased but loses sample size and statistical power. |

| Missing at Random (MAR) | Missingness depends on observed data. | Can be corrected with model-based methods. |

| Missing Not at Random (MNAR) | Missingness depends on the unobserved value itself (e.g., low abundance). | Most problematic; requires specific models. |

Experimental Protocols for Assessing Missingness

Before imputation, a rigorous assessment of the missing data pattern is required.

Protocol 1: Pattern Analysis via Heatmap and Logistic Regression

- Data Preparation: Create a binary matrix (0 = observed, 1 = missing) matching your omics data matrix (features x samples).

- Visualization: Generate a clustered heatmap of the binary matrix to identify sample- or feature-wise patterns.

- Statistical Testing: For each feature, perform a univariate logistic regression (missing/observed status as outcome) against potential covariates (e.g., sample group, total ion count, batch). A significant association suggests MAR.

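- MNAR Inference: The abundance of a missing value cannot be observed directly, so MNAR is inferred indirectly. If missingness concentrates in features or samples whose observed abundances sit close to the estimated detection limit (low-abundance features are missing more often), MNAR is likely.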
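
A minimal sketch of the first and last steps of Protocol 1, assuming a features x samples numpy array with `np.nan` for missing values. The function names are illustrative, and the rank correlation is a lightweight stand-in for the per-feature logistic regression described above, not a replacement for it.

```python
import numpy as np

def missingness_summary(X):
    """X: features x samples array with np.nan marking missing values."""
    mask = np.isnan(X).astype(int)      # 1 = missing, 0 = observed
    feature_rate = mask.mean(axis=1)    # fraction of samples missing per feature
    sample_rate = mask.mean(axis=0)     # fraction of features missing per sample
    return mask, feature_rate, sample_rate

def _ordinal_ranks(v):
    # Ranks 0..n-1; ties are broken by position, adequate for a rough screen.
    return np.argsort(np.argsort(v)).astype(float)

def abundance_missingness_correlation(X):
    """Rank correlation between mean observed abundance and missing rate per feature."""
    _, feature_rate, _ = missingness_summary(X)
    keep = feature_rate < 1.0           # skip features that were never observed
    mean_observed = np.nanmean(X[keep], axis=1)
    return float(np.corrcoef(_ordinal_ranks(mean_observed),
                             _ordinal_ranks(feature_rate[keep]))[0, 1])

# Toy matrix: the lower the abundance, the more values are missing.
X = np.array([
    [1.0, np.nan, np.nan, 1.2],
    [5.0, 5.5, np.nan, 5.2],
    [9.0, 9.5, 9.1, 9.3],
])
mask, feature_rate, sample_rate = missingness_summary(X)
rho = abundance_missingness_correlation(X)
print(feature_rate, round(rho, 2))
```

A strongly negative correlation is consistent with left-censoring (MNAR), but it does not prove it; covariates such as batch or run order still need to be checked separately.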

Protocol 2: Benchmarking Imputation Performance (Spike-in Experiment)

- Generate Ground Truth: Start with a complete, high-quality omics dataset.

- Introduce Missing Values: Artificially remove values under MCAR (random) and MNAR (remove low-intensity values) schemes at rates of 10%, 20%, and 30%.

- Apply Imputation: Run candidate imputation methods on each corrupted dataset.

- Evaluate: Calculate the Root Mean Square Error (RMSE) between the imputed matrix and the ground truth. Assess the preservation of biological variance (e.g., PCA structure) and differential analysis outcomes.
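
The benchmarking loop can be sketched as follows. The simulated log-normal matrix and the feature-mean imputer are assumptions made purely for illustration; in practice the ground truth would be a real complete dataset and the imputer would be each candidate method in turn.

```python
import numpy as np

def mask_mcar(X, rate, rng):
    """Remove entries uniformly at random (MCAR scheme)."""
    X_miss = X.copy()
    X_miss[rng.random(X.shape) < rate] = np.nan
    return X_miss

def mask_mnar(X, rate):
    """Remove the lowest-intensity entries (left-censored MNAR scheme)."""
    X_miss = X.copy()
    X_miss[X < np.quantile(X, rate)] = np.nan
    return X_miss

def impute_feature_mean(X_miss):
    """Baseline imputer: replace each missing value by its feature (row) mean."""
    row_means = np.nanmean(X_miss, axis=1, keepdims=True)
    return np.where(np.isnan(X_miss), row_means, X_miss)

def rmse_on_masked(X_true, X_miss, X_imputed):
    """RMSE restricted to the entries that were artificially removed."""
    held_out = np.isnan(X_miss)
    return float(np.sqrt(np.mean((X_true[held_out] - X_imputed[held_out]) ** 2)))

rng = np.random.default_rng(0)
X_true = rng.lognormal(mean=2.0, sigma=0.5, size=(50, 20))   # features x samples

results = {}
for rate in (0.1, 0.2, 0.3):
    schemes = (("MCAR", mask_mcar(X_true, rate, rng)),
               ("MNAR", mask_mnar(X_true, rate)))
    for name, X_miss in schemes:
        results[(name, rate)] = rmse_on_masked(
            X_true, X_miss, impute_feature_mean(X_miss))

for key, value in sorted(results.items()):
    print(key, round(value, 3))
```

RMSE is computed only on the held-out entries, since including observed values would dilute the error and flatter every method equally.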

Key Imputation Methodologies: Workflows and Applications

Imputation strategies range from simple to complex, with suitability dependent on the missingness pattern and data structure.

Title: Decision Workflow for Selecting an Imputation Strategy

Detailed Methodologies

Method 1: k-Nearest Neighbors (KNN) Imputation (for MCAR/MAR)

- For each sample containing missing values, calculate its distance (e.g., Euclidean) to all other samples using only commonly observed features.

- Identify the k samples with the smallest distance (nearest neighbors).

- For each missing feature in the target sample, compute the imputed value as the mean (or weighted mean) of that feature's values in the k neighbors.

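- Optionally, in iterative KNN variants, repeat the procedure with the imputed values included in the distance calculation until the imputations stabilize or a pre-set number of cycles is reached. Standard KNN imputation is a single pass.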
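
A single-pass sketch of the KNN steps above, assuming a samples x features numpy array with `np.nan` for missing values. It uses an unweighted neighbour mean, and distances are normalised by the number of shared features, which is one reasonable choice among several.

```python
import numpy as np

def knn_impute(X, k=3):
    """X: samples x features, np.nan = missing. Returns an imputed copy."""
    X_imp = X.copy()
    n = X.shape[0]
    for i in range(n):
        miss_i = np.isnan(X[i])
        if not miss_i.any():
            continue
        # Distance to every other sample, using only features observed in both.
        dists = np.full(n, np.inf)
        for j in range(n):
            if j == i:
                continue
            shared = ~miss_i & ~np.isnan(X[j])
            if shared.any():
                diff = X[i, shared] - X[j, shared]
                dists[j] = np.sqrt(np.mean(diff ** 2))
        order = np.argsort(dists)
        for f in np.where(miss_i)[0]:
            # Nearest neighbours that actually observed feature f.
            donors = [j for j in order
                      if np.isfinite(dists[j]) and not np.isnan(X[j, f])][:k]
            if donors:
                X_imp[i, f] = np.mean(X[donors, f])
    return X_imp

X = np.array([
    [1.0, 2.0, 3.0],
    [1.1, 2.1, 3.1],
    [0.9, 1.9, np.nan],
    [10.0, 20.0, 30.0],
])
X_imp = knn_impute(X, k=2)
print(X_imp[2, 2])
```

The distant fourth sample is ignored for the missing entry because only the two closest donors contribute.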

Method 2: Multiple Imputation by Chained Equations (MICE) (for MAR)

- Initialization: Fill missing values with simple imputation (e.g., mean).

- Iteration: For each feature with missing data (`Feature_m`), fit a regression model (linear, logistic, etc.) using all other features as predictors, based on samples where `Feature_m` is observed.

- Imputation: Draw predicted values for `Feature_m` from the fitted model (incorporating error) to fill the missing entries.

- Cycling: Repeat steps 2-3 for each incomplete feature, cycling through the dataset for multiple rounds (typically 5-10) to create one imputed dataset.

- Repetition: Repeat the entire process to generate m multiple imputed datasets (e.g., m=5).

- Analysis & Pooling: Perform the intended downstream analysis (e.g., regression) on each of the m datasets and pool the results using Rubin's rules to obtain final estimates and confidence intervals.
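
The pooling step can be illustrated with Rubin's rules for a single scalar estimate. The input numbers below are made up; in practice each estimate and its variance come from fitting the same model on one of the m imputed datasets.

```python
import math

def pool_rubin(estimates, variances):
    """Pool m per-dataset estimates and their squared standard errors."""
    m = len(estimates)
    q_bar = sum(estimates) / m                               # pooled estimate
    u_bar = sum(variances) / m                               # within-imputation variance
    b = sum((q - q_bar) ** 2 for q in estimates) / (m - 1)   # between-imputation variance
    total = u_bar + (1 + 1 / m) * b                          # total variance
    return q_bar, total, math.sqrt(total)

estimates = [1.0, 1.2, 0.8, 1.1, 0.9]        # e.g. a regression coefficient, m = 5
variances = [0.04, 0.04, 0.04, 0.04, 0.04]   # squared standard errors
pooled, total_var, pooled_se = pool_rubin(estimates, variances)
print(pooled, total_var, pooled_se)
```

The between-imputation term is what single imputation ignores: it inflates the standard error to reflect uncertainty about the missing values themselves.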

Method 3: Left-Censored MNAR Imputation (e.g., MinProb)

- For each feature, estimate a global detection limit or a minimum observed value.

- Calculate a small imputation value: `min_value * r`, where `r` is a user-defined factor (e.g., 0.65).

- Add normally distributed random noise proportional to the data variance to avoid creating artificial peaks.
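
A sketch of the scaled-minimum recipe described in the steps above, assuming a features x samples numpy array of positive intensities. The `noise_scale` parameter and the cap at the observed minimum are illustrative choices, and this is not the MinProb implementation from any particular package.

```python
import numpy as np

def impute_left_censored(X, r=0.65, noise_scale=0.1, seed=0):
    """X: features x samples, np.nan = missing. Returns an imputed copy."""
    rng = np.random.default_rng(seed)
    X_imp = X.copy()
    for f in range(X.shape[0]):
        missing = np.isnan(X[f])
        if not missing.any() or missing.all():
            continue                          # nothing to impute, or nothing to learn from
        observed = X[f, ~missing]
        center = observed.min() * r           # small value below the observed range
        sd = noise_scale * observed.std()     # noise tied to the feature's spread
        draws = rng.normal(center, sd, size=int(missing.sum()))
        # Never impute above the smallest value that was actually detected.
        X_imp[f, missing] = np.minimum(draws, observed.min())
    return X_imp

X = np.array([
    [10.0, 12.0, np.nan, 11.0, np.nan],
    [5.0, np.nan, 6.0, 5.5, 5.8],
])
X_imp = impute_left_censored(X)
print(np.round(X_imp, 2))
```

Because the imputed values are deliberately low, this approach suits only features where missingness is believed to reflect abundance below the detection limit.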

The Scientist's Toolkit: Research Reagent Solutions for Multi-Omics

Table 3: Essential Tools for Managing Missing Data in Multi-Omics Experiments

| Item | Function | Example/Note |

|---|---|---|

| Internal Standards (IS) | Corrects for technical variation and signal drift in MS; aids in distinguishing MNAR from technical zeros. | Stable Isotope-Labeled Compounds (e.g., 13C, 15N). |

| Quality Control (QC) Samples | Pooled sample run repeatedly; monitors instrument stability, identifies run-order dependent missingness (MAR). | Technical replicates for precision assessment. |

| Blank Samples | Distinguishes true missing data (analyte absent) from background noise or contamination. | Process blanks, solvent blanks. |

| Standard Reference Materials | Provides a known ground truth for benchmarking imputation accuracy in spike-in experiments. | NIST SRM 1950 (Metabolites), HeLa cell protein digests. |

| Sample Multiplexing Kits | Reduces batch effects and missing data due to inter-run variation by pooling samples for simultaneous processing. | TMT (Tandem Mass Tag), iTRAQ reagents for proteomics. |

Title: Data Processing Pipeline with Imputation Step

Effective handling of missing data is not a mere preprocessing step but a critical, foundational component of robust multi-omics data integration. The choice of imputation strategy must be guided by a diligent assessment of the missingness mechanism, which is itself influenced by experimental design and the judicious use of standards and controls. For drug development professionals, transparent reporting of missing data rates and imputation methods is essential to ensure the validity of identified biomarkers or therapeutic targets. As multi-omics studies increase in scale and complexity, the development of novel, integrated imputation frameworks that leverage the correlations across omics layers represents a vital frontier for improving the fidelity of systems-level biological insights.

From Theory to Practice: Key Integration Strategies and Real-World Applications

Within the broader thesis on the challenges of multi-omics data integration research, the selection of an integration paradigm is a fundamental architectural decision. The vast heterogeneity, scale, and noise inherent in genomics, transcriptomics, proteomics, and metabolomics datasets necessitate strategic frameworks to combine information effectively. The three primary paradigms (Early, Intermediate, and Late Fusion) differ in the stage at which data from different omics layers are combined, each with distinct implications for addressing challenges like dimensionality, modality-specific noise, and biological interpretability.

Technical Definitions and Methodologies

Early Fusion (Feature-Level Integration)

Early fusion concatenates raw or pre-processed features from multiple omics modalities into a single, unified feature vector before model training.

- Workflow: Individual omics datasets (e.g., SNP arrays, RNA-seq counts, protein abundances) are normalized and scaled independently. These matrices are then concatenated along the feature axis, with rows matched by sample, to create a combined matrix `X_combined` of dimensions `[n_samples, (n_features_genomics + n_features_transcriptomics + ...)]`. This monolithic matrix is input to a downstream machine learning model (e.g., PCA, Random Forest, Deep Neural Network).

- Core Challenge: This approach directly confronts the "curse of dimensionality" (p ≫ n problem) and can be severely affected by modality-specific batch effects if not corrected prior to concatenation.
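
A minimal sketch of the concatenation step, assuming each modality is a samples x features numpy array whose rows are already in the same sample order. The modality names and random data are placeholders.

```python
import numpy as np

def zscore(X):
    """Standardise each feature (column) of a samples x features matrix."""
    sd = X.std(axis=0)
    sd[sd == 0] = 1.0     # constant features become all zeros instead of dividing by 0
    return (X - X.mean(axis=0)) / sd

def early_fusion(blocks):
    """blocks: dict name -> samples x features array with matched rows."""
    if len({X.shape[0] for X in blocks.values()}) != 1:
        raise ValueError("all modalities must contain the same matched samples")
    return np.hstack([zscore(X) for X in blocks.values()])

rng = np.random.default_rng(1)
blocks = {
    "genomics": rng.integers(0, 3, size=(6, 4)).astype(float),   # e.g. SNP dosages
    "transcriptomics": rng.normal(5, 2, size=(6, 10)),
    "proteomics": rng.normal(20, 5, size=(6, 3)),
}
X_combined = early_fusion(blocks)
print(X_combined.shape)   # (6, 17)
```

Scaling each block before concatenation keeps a modality with large raw values from dominating distance-based or variance-based downstream models; it does not address the imbalance in feature counts between modalities.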

Intermediate Fusion (Joint Representation Learning)

Intermediate fusion integrates data within the model itself, learning a joint latent representation that captures shared and complementary information across modalities.

- Workflow: Each omics type is processed through separate, modality-specific input layers or encoders (e.g., CNNs for sequences, MLPs for numerical data). The model then combines these encoded representations at a hidden layer via operations such as concatenation, tensor fusion, or attention-based mechanisms. A central neural network further processes this joint representation for the final prediction.

- Core Strength: This paradigm is designed to model complex, non-linear interactions between omics layers, potentially capturing biological mechanisms that are not apparent in single-omics analyses.

Late Fusion (Decision-Level Integration)

Late fusion trains separate models on each omics dataset independently and integrates their predictions at the final decision stage.

- Workflow: A predictive model (e.g., an SVM or another classifier) is trained exclusively on each omics modality, yielding a set of modality-specific predictions or prediction probabilities. These individual results are then combined using a fixed rule (e.g., voting or a weighted average) or a trained meta-learner (another classifier) to produce a final, consensus prediction.

- Core Strength: It naturally accommodates asynchronous data availability (common in clinical settings) and allows for the use of modality-specific best-practice models.
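
The decision-level combination can be sketched as a weighted average that skips unavailable modalities. The per-modality probabilities are hard-coded here as an assumption; they would normally come from separately trained models.

```python
import numpy as np

def late_fusion(probabilities, weights=None):
    """probabilities: dict modality -> P(class = 1) per sample,
    with np.nan where that modality was not measured for a sample."""
    names = list(probabilities)
    P = np.vstack([probabilities[m] for m in names]).astype(float)  # modalities x samples
    if weights is None:
        w = np.ones(len(names))
    else:
        w = np.array([weights[m] for m in names], dtype=float)
    W = np.where(np.isnan(P), 0.0, w[:, None])    # zero weight where a modality is missing
    total = W.sum(axis=0)
    if np.any(total == 0):
        raise ValueError("at least one sample has no modality available")
    return (np.nan_to_num(P) * W).sum(axis=0) / total

probabilities = {
    "rna": [0.9, 0.2, 0.6],
    "methylation": [0.7, np.nan, 0.4],
    "protein": [0.8, 0.4, np.nan],
}
fused = late_fusion(probabilities)
print(fused)
```

Renormalising by the sum of available weights is what lets a sample with a missing modality still receive a prediction, which is the robustness property highlighted above.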

Comparative Analysis of Fusion Paradigms

Table 1: Qualitative and Quantitative Comparison of Fusion Approaches

| Criterion | Early Fusion | Intermediate Fusion | Late Fusion |

|---|---|---|---|

| Integration Stage | Raw/Pre-processed Data Level | Hidden/Latent Representation Level | Decision/Prediction Level |

| Handles High Dimensionality | Poor (requires strong feature selection/dimension reduction) | Good (via encoder networks) | Excellent (per-modality modeling) |

| Models Inter-Modality Interactions | Limited (relies on downstream model) | Excellent (explicitly designed for it) | None (integrated post-hoc) |

| Robustness to Missing Modalities | Poor (entire sample may be excluded) | Moderate (can be designed with masking) | Excellent (only missing model is skipped) |

| Interpretability | Low (black-box on combined features) | Moderate (potential via attention weights) | High (per-modality contributions clear) |

| Typical Model Complexity | Low to Moderate | High (complex multi-branch architectures) | Moderate (ensemble of simpler models) |

| Reported Performance Gain (Example Range)* | 2-8% AUC increase over single-omics baselines | 5-15% AUC increase over single-omics baselines | 3-10% AUC increase over single-omics baselines |

*Performance gains are highly context-dependent and based on reviewed benchmarking studies (e.g., on TCGA pan-cancer or multi-omics drug response datasets).

Experimental Protocol: A Benchmarking Study

To empirically compare these paradigms, a standard benchmarking protocol is employed.

1. Data Preparation:

- Source: Use a public multi-omics cohort (e.g., TCGA-LIHC: methylation (450K array), RNA-seq (FPKM-UQ), and RPPA proteomics).

- Pre-processing: For each modality per sample: log-transformation (RNA-seq), beta-value normalization (methylation), Z-score normalization (RPPA). Perform sample-wise matching.

- Task: Binary classification of a clinical endpoint (e.g., tumor vs. normal, or survival risk group).

2. Paradigm Implementation:

- Early Fusion: Concatenate all three processed feature matrices. Apply PCA for dimensionality reduction to 100 components. Train a Random Forest classifier.

- Intermediate Fusion: Implement a multi-modal deep learning model (e.g., MOMA-Net). Use separate fully-connected encoder branches for each omics type (2 hidden layers each), concatenate their 64-dim outputs, and process through a final classifier head.

- Late Fusion: Train three independent XGBoost models—one per modality. Combine their output class probabilities using a logistic regression meta-learner.

3. Evaluation:

- 5-fold nested cross-validation (to tune hyperparameters within the training folds).

- Primary Metric: Area Under the ROC Curve (AUC).

- Secondary Metrics: Precision-Recall AUC, F1-Score.
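
Two building blocks of the evaluation step are sketched below under simple assumptions: a rank-based AUC (equivalent to the Mann-Whitney U statistic) and a stratified fold assignment. Both function names are illustrative; established libraries provide tested equivalents.

```python
import numpy as np

def roc_auc(y_true, scores):
    """AUC from ranks; tied scores receive their average rank."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    ranks = np.empty(len(scores))
    ranks[np.argsort(scores)] = np.arange(1, len(scores) + 1)
    for s in np.unique(scores):
        tied = scores == s
        ranks[tied] = ranks[tied].mean()
    n_pos = int((y_true == 1).sum())
    n_neg = len(y_true) - n_pos
    return (ranks[y_true == 1].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

def stratified_folds(y, k=5, seed=0):
    """Assign each sample to one of k folds, balancing class labels across folds."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y)
    fold = np.empty(len(y), dtype=int)
    for label in np.unique(y):
        idx = rng.permutation(np.where(y == label)[0])
        fold[idx] = np.arange(len(idx)) % k
    return fold

auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
print(auc)   # 0.75

y = np.array([0] * 10 + [1] * 10)
fold = stratified_folds(y, k=5)
```

For nested cross-validation, the same fold assignment would be applied a second time inside each outer training set so that hyperparameters never see the outer test fold.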

Visualization of Workflows and Pathways

Diagram 1: Multi-Omics Fusion Workflow Comparison

Diagram 2: Conceptual Pathway of Multi-Omics Integration Impact

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools and Resources for Multi-Omics Integration Research

| Item / Resource | Function / Purpose |

|---|---|

| R/Bioconductor (omicade4, MOFA2) | Statistical packages for multi-omics factor analysis and early/intermediate fusion. |

| Python Libraries (PyTorch, TensorFlow with tf.keras) | Essential frameworks for building custom intermediate fusion deep learning architectures. |

| Singularity/Apptainer Containers | Ensures reproducibility of complex software stacks and dependency management across HPC clusters. |

| Benchmark Datasets (e.g., TCGA, CPTAC) | Curated, clinically-annotated multi-omics datasets essential for training and benchmarking fusion models. |

| Multi-Omics Benchmarking Suites (e.g., multiBench, PIMKL) | Pre-built pipelines for fair comparison of integration methods across standard tasks. |

| High-Performance Computing (HPC) Cluster | Provides necessary computational power for training large intermediate fusion models and permutation testing. |

| Secure Data Storage (e.g., encrypted NAS) | Required for storing large volumes of sensitive genomic and clinical patient data compliant with regulations. |

No single fusion paradigm is universally optimal; the choice among early, intermediate, and late fusion is dictated by the specific multi-omics integration challenge at hand. Early fusion, while simple, often falters under high-dimensional data. Late fusion offers robustness and flexibility, particularly for clinical translation. Intermediate fusion holds the greatest promise for novel biological discovery due to its capacity to learn complex cross-modal relationships but demands large sample sizes and significant computational resources. Navigating this trade-off space is central to advancing the field and overcoming the fundamental challenges in multi-omics data integration research.

The integration of multi-omics data (e.g., genomics, transcriptomics, proteomics, metabolomics) presents significant challenges, including technical noise, high dimensionality, disparate scales, and biological heterogeneity. Effective frameworks must address these to extract coherent biological signals and drive discoveries in systems biology and precision medicine.

Core Framework Analysis

MOFA+ (Multi-Omics Factor Analysis)

MOFA+ is a Bayesian statistical framework for the unsupervised integration of multiple omics assays. It uses a factor analysis model to disentangle the shared and specific sources of variation across data modalities.

Key Technical Specifications:

- Core Model: Bayesian group factor analysis with automatic relevance determination priors.

- Input Handling: Accepts both continuous (Gaussian) and discrete (Bernoulli, Poisson) data.

- Output: A set of latent factors, each with a weight (loading) per feature in every view and a factor value per sample, explaining variance across omics layers.

Experimental Protocol for a Typical MOFA+ Analysis:

- Data Preprocessing: Normalize and scale each omics dataset individually. Handle missing values explicitly.

- Model Training: Create a `MOFA` object and train the model specifying the number of factors (can be inferred). Use stochastic variational inference for scalability.

- Variance Decomposition: Analyze the percentage of variance explained (R²) per factor per view.

- Factor Interpretation: Correlate factors with sample metadata (e.g., clinical phenotypes) and perform feature set enrichment analysis on factor loadings.

- Downstream Analysis: Use factors as reduced-dimension representations for clustering, classification, or regression tasks.
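
The variance decomposition step can be illustrated outside of any package. The sketch below assumes that a factor matrix `Z` (samples x factors) and per-view weight matrices (features x factors) have already been estimated, and computes R² per factor per view as one minus the residual sum of squares over the total sum of squares. The simulated data and names are placeholders; this does not fit a MOFA+ model.

```python
import numpy as np

def variance_explained(views, Z, weights):
    """views: dict name -> samples x features (centred);
    Z: samples x factors; weights: dict name -> features x factors.
    Returns dict name -> array of R2 values, one per factor."""
    r2 = {}
    for name, Y in views.items():
        W = weights[name]
        ss_total = np.sum(Y ** 2)
        r2[name] = np.array([
            1.0 - np.sum((Y - np.outer(Z[:, k], W[:, k])) ** 2) / ss_total
            for k in range(Z.shape[1])
        ])
    return r2

# Simulated example: factor 0 drives the RNA view, factor 1 drives the protein view.
rng = np.random.default_rng(0)
Z = rng.normal(size=(100, 2))
w_rna = np.array([1.0, -0.5, 0.8, 1.2, 0.3])
w_prot = np.array([0.7, -1.1, 0.4])
weights = {
    "rna": np.column_stack([w_rna, np.zeros(5)]),
    "protein": np.column_stack([np.zeros(3), w_prot]),
}
views = {
    "rna": np.outer(Z[:, 0], w_rna) + rng.normal(scale=0.1, size=(100, 5)),
    "protein": np.outer(Z[:, 1], w_prot) + rng.normal(scale=0.1, size=(100, 3)),
}
r2 = variance_explained(views, Z, weights)
print({name: np.round(v, 3) for name, v in r2.items()})
```

A factor with high R² in several views captures shared variation, while a factor with high R² in a single view captures modality-specific variation.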

mixOmics

mixOmics is a versatile R toolkit for the exploration and integration of multiple omics datasets using multivariate statistical methods, with a focus on discriminant analysis and variable selection.

Key Technical Specifications:

- Core Methods: Includes Projection to Latent Structures (PLS), sparse PLS Discriminant Analysis (sPLS-DA), and DIABLO (Data Integration Analysis for Biomarker discovery using Latent variable approaches) for multi-class multi-omics integration.

- Focus: Supervised and unsupervised integration with strong visualisation and variable selection capabilities.

Experimental Protocol for a DIABLO-based Multi-Omics Classification:

- Data Preparation: Format data into a list of matched omics matrices (`X`) and a response factor vector (`Y`). Tune the number of components and the number of features to select per dataset per component via cross-validation.

- Model Building: Run the `block.splsda` function to integrate datasets and predict the sample class `Y`.

- Model Evaluation: Assess performance via repeated cross-validation and permutation tests. Generate a `circosPlot` to visualise correlations of selected features across omics layers.

- Biomarker Identification: Extract the selected variables and examine their consensus across components.

Integrated Biosciences Tools (Emerging Platforms)

This category includes newer, often cloud-based or commercial platforms offering end-to-end workflows for multi-omics integration, such as Nextflow-based pipelines, Terra.bio, or Partek Flow.

Key Technical Specifications:

- Core Approach: Provide unified environments that link data management, reproducible pipeline execution (e.g., via Common Workflow Language), pre-built analysis modules, and interactive visualization.

- Focus: Usability, reproducibility, and scalability for large consortium-scale projects.

Quantitative Framework Comparison

Table 1: Comparative Analysis of Multi-Omics Integration Frameworks

| Feature | MOFA+ | mixOmics (DIABLO) | Integrated Platforms (e.g., Partek Flow) |

|---|---|---|---|

| Primary Approach | Unsupervised Factor Analysis | Supervised/Unsupervised Multivariate (PLS) | GUI-driven, Modular Workflows |

| Statistical Core | Bayesian Group Factor Analysis | Projection to Latent Structures (PLS) | Varies (Often includes PCA, regression, ML) |

| Key Strength | Decomposing shared & specific variation; Handles missing data | Powerful for classification & biomarker discovery; Excellent viz | Accessibility; Reproducibility; Scalability |

| Data Type Handling | Continuous & Discrete | Primarily Continuous | Broad (via modules) |

| Scalability | High (approx. 10^4 samples, 10^5 features) | Moderate to High | Cloud-scalable |

| Typical Use Case | Exploratory analysis, identifying latent factors of heterogeneity | Predicting clinical outcome, multi-omics biomarker panels | Collaborative, standardized analysis for non-specialists |

| Learning Curve | Moderate | Moderate | Low to Moderate |

Visualization of Workflows and Relationships

Title: Multi-Omics Integration Workflow Comparison

Title: MOFA+ Factor Model Schematic

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Multi-Omics Integration Studies

| Item / Solution | Function / Role in Multi-Omics Integration | Example Vendor/Product |

|---|---|---|

| Reference Standards (Multi-Omics) | Provides a known, uniform biological sample for technical validation and batch correction across different omics platforms. | NIST SRM 1950 (Metabolites in Human Plasma), Horizon Discovery Multiplex IMC Cell Line |

| Single-Cell Multi-Omics Kits | Enables simultaneous measurement of multiple molecular layers (e.g., RNA + ATAC, RNA + protein) from the same single cell, providing inherently matched data. | 10x Genomics Multiome (ATAC + Gene Exp.), BD AbSeq (RNA + Protein) |

| Barcoded Isotope Tags | Allows multiplexed sample pooling for proteomic/metabolomic LC-MS, reducing technical variation and enabling precise quantitation across many samples. | TMT (Tandem Mass Tag), iTRAQ |

| Spatial Transcriptomics/Proteomics Kits | Captures gene expression or protein data within tissue architecture, adding a spatial dimension for integration with histopathology. | 10x Genomics Visium, Nanostring GeoMx DSP |

| Cell Hashing/Oligo-Conjugated Antibodies | Labels cells from different samples with unique barcodes, allowing sample multiplexing in single-cell assays and improving throughput/reducing costs. | BioLegend TotalSeq-B/C Antibodies |

| Automated Nucleic Acid/Protein Extraction Systems | Ensures high-quality, consistent input material for downstream omics assays from diverse sample types (tissue, blood, cells). | Qiagen Qiacube, Promega Maxwell RSC |

| Cross-Linking Reagents | For ChIP-seq and related assays to capture protein-DNA interactions, generating data on regulatory mechanisms for integration with transcriptomics. | Formaldehyde, DSG (Disuccinimidyl glutarate) |

| Internal Standard Spike-Ins | Synthetic RNAs, proteins, or metabolites added to samples prior to processing to monitor technical performance and enable absolute quantitation. | ERCC RNA Spike-In Mix (Thermo Fisher), SILAC Spike-In Standards (Sigma) |

1. Introduction: The Multi-Omics Integration Challenge

The integration of multi-omics data (genomics, transcriptomics, proteomics, metabolomics) represents a central challenge in systems biology and precision medicine. The core thesis posits that the high dimensionality, heterogeneity, noise, and complex, non-linear relationships inherent in such datasets render traditional statistical methods insufficient. This whitepaper details how deep learning (DL) architectures are uniquely equipped to overcome these barriers by discovering latent, non-linear patterns that drive biological insight and therapeutic discovery.

2. Core Deep Learning Architectures for Non-Linear Discovery

Table 1: Key DL Architectures for Multi-Omics Pattern Discovery

| Architecture | Core Strength | Typical Application in Multi-Omics | Key Advantage Over Linear Models |

|---|---|---|---|

| Autoencoders (AEs) | Unsupervised dimensionality reduction & feature learning. | Integrating layers by learning a joint latent representation. | Captures non-linear correlations for robust data compression. |

| Variational AEs (VAEs) | Generative modeling of latent distributions. | Probabilistic integration and generation of synthetic omics profiles. | Models data uncertainty and continuous latent spaces. |

| Multi-Modal Deep Neural Networks | Supervised integration of heterogeneous inputs. | Predicting clinical outcomes from combined genomic & image data. | Learns complex, cross-modal feature interactions end-to-end. |

| Graph Neural Networks (GNNs) | Modeling relational/network data. | Incorporating PPI networks with gene expression for subtyping. | Propagates information non-linearly through biological networks. |

| Attention/Transformer Models | Context-aware, weighted data integration. | Prioritizing impactful genomic variants across long sequences. | Dynamically focuses on salient features across disparate inputs. |

3. Experimental Protocol: A Standardized Workflow for Multi-Omics DL

A reproducible protocol for non-linear integration using a deep learning framework is outlined below.

3.1. Data Preprocessing & Harmonization

- Source Data: Obtain raw or normalized counts from platforms (e.g., RNA-seq, LC-MS proteomics, NMR metabolomics).

- Normalization: Apply omics-specific normalization (e.g., DESeq2 for transcriptomics, quantile for proteomics).

- Missing Value Imputation: Use DL-based methods (e.g., deep denoising autoencoders) or k-nearest neighbors.

- Batch Effect Correction: Apply ComBat or a DL-based alternative (e.g., using an AE to learn batch-invariant features).

- Feature Scaling: Scale each feature to a [0,1] range or standardize to zero mean and unit variance.
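
As one concrete instance of the normalization step, the sketch below implements quantile normalization with numpy, assuming a features x samples matrix without missing values. Ties are broken by position rather than averaged, which is a simplification compared with standard implementations.

```python
import numpy as np

def quantile_normalize(X):
    """X: features x samples. Maps every sample (column) onto one shared distribution."""
    ranks = np.argsort(np.argsort(X, axis=0), axis=0)   # rank of each entry within its column
    reference = np.sort(X, axis=0).mean(axis=1)         # mean of the k-th smallest values
    return reference[ranks]

rng = np.random.default_rng(0)
# Four samples with different scales, as might arise from loading differences.
X = rng.normal(size=(30, 4)) * np.array([1.0, 2.0, 0.5, 3.0]) + np.array([0.0, 1.0, -1.0, 5.0])
X_norm = quantile_normalize(X)
print(np.round(X_norm.mean(axis=0), 3))
```

After normalization every sample has an identical set of values, and only the ordering of features within each sample differs. Imputation should be completed first, since the ranking step cannot handle `np.nan`.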

3.2. Model Implementation: Multi-modal Deep Autoencoder

- Objective: Learn a shared latent representation (`Z`) from two omics layers (e.g., Transcriptomics `X_t` and Proteomics `X_p`).

- Architecture:

  - Input: Concatenated vector `[X_t, X_p]` or separate encoding paths.

  - Encoder: Multiple fully-connected (dense) layers with non-linear activations (ReLU, LeakyReLU). Layer sizes decrease progressively (e.g., 1024 → 512 → 128 → 32 neurons).

  - Bottleneck (Z): The deepest layer (e.g., 10-50 neurons) represents the integrated latent space.

  - Decoder: Symmetric layers reconstructing the original inputs `[X'_t, X'_p]`.

- Training: Minimize reconstruction loss (Mean Squared Error) using Adam optimizer. Regularize with dropout and L2 penalty to prevent overfitting.
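
To make the data flow concrete, the sketch below runs one forward pass of a small two-branch autoencoder in plain numpy, using the separate-encoding-path option. Layer sizes, initialisation, and the random inputs are illustrative assumptions, the weights are untrained, and training (Adam, dropout, L2) would be handled by a framework such as PyTorch or TensorFlow.

```python
import numpy as np

rng = np.random.default_rng(0)

def dense(n_in, n_out):
    """He-initialised weight matrix and zero bias for one fully-connected layer."""
    return rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out)), np.zeros(n_out)

def relu(a):
    return np.maximum(a, 0.0)

def forward(X_t, X_p, params):
    """Returns the shared latent representation Z and the summed reconstruction MSE."""
    h_t = relu(X_t @ params["enc_t"][0] + params["enc_t"][1])   # transcriptomics encoder
    h_p = relu(X_p @ params["enc_p"][0] + params["enc_p"][1])   # proteomics encoder
    h = np.hstack([h_t, h_p])                                   # fuse the two branches
    Z = h @ params["bottleneck"][0] + params["bottleneck"][1]   # shared bottleneck
    X_t_hat = Z @ params["dec_t"][0] + params["dec_t"][1]       # decoder, transcriptomics
    X_p_hat = Z @ params["dec_p"][0] + params["dec_p"][1]       # decoder, proteomics
    loss = np.mean((X_t - X_t_hat) ** 2) + np.mean((X_p - X_p_hat) ** 2)
    return Z, float(loss)

n, d_t, d_p, d_h, d_z = 8, 200, 50, 32, 10
params = {
    "enc_t": dense(d_t, d_h),
    "enc_p": dense(d_p, d_h),
    "bottleneck": dense(2 * d_h, d_z),
    "dec_t": dense(d_z, d_t),
    "dec_p": dense(d_z, d_p),
}
X_t = rng.normal(size=(n, d_t))
X_p = rng.normal(size=(n, d_p))
Z, loss = forward(X_t, X_p, params)
print(Z.shape, round(loss, 3))
```

Summing the per-modality mean squared errors, rather than averaging over the concatenated output, keeps the modality with fewer features from being drowned out in the loss.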

3.3. Downstream Analysis & Validation

- Latent Space Interpolation: Use the VAE variant to generate novel, plausible omics profiles.

- Clustering: Apply k-means or hierarchical clustering on `Z` to identify novel disease subtypes.

- Survival Analysis: Use a Cox proportional hazards model with `Z` as input to predict patient outcomes.

- Validation: Employ rigorous cross-validation (nested CV) and hold-out testing. Compare against PCA, PLS, and other linear baselines using metrics like silhouette score (clustering) or C-index (survival).
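
For the survival metric, a straightforward implementation of Harrell's concordance index is sketched below. The toy follow-up times, event indicators, and risk scores are invented for illustration; the quadratic loop is fine for small cohorts but slow for large ones.

```python
def concordance_index(times, events, risk):
    """Harrell's C: share of comparable pairs in which the patient with the
    shorter observed survival also has the higher predicted risk.
    events: 1 = event observed, 0 = censored."""
    concordant, comparable = 0.0, 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when i had an observed event strictly before time j.
            if events[i] == 1 and times[i] < times[j]:
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

times = [5, 10, 15, 20]
events = [1, 1, 0, 1]
risk = [0.9, 0.6, 0.7, 0.1]
c_index = concordance_index(times, events, risk)
print(c_index)   # 0.8
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, which makes the C-index directly comparable across latent representations and linear baselines.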

4. Visualization of Key Concepts

Title: DL Addresses Multi-Omics Integration Challenges

Title: Multi-Modal Autoencoder Architecture for Integration

5. The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagent Solutions for Multi-Omics DL Experiments

| Item/Category | Example Product/Platform | Function in the Workflow |

|---|---|---|

| Nucleic Acid Isolation Kits | Qiagen AllPrep, TRIzol Reagent | Simultaneous extraction of high-quality DNA, RNA, and protein from single samples. |

| Next-Gen Sequencing Library Prep | Illumina TruSeq, KAPA HyperPrep | Prepare transcriptomic (RNA-seq) and genomic (WES, WGS) libraries for sequencing. |

| Mass Spectrometry Ready Kits | Thermo Fisher TMTpro, PreOmics iST | Multiplex protein sample preparation, digestion, and labeling for quantitative proteomics. |

| Metabolite Extraction Solvents | Methanol:Acetonitrile:Water (2:2:1) | Standardized solvent system for broad-coverage untargeted metabolomics. |

| Single-Cell Multi-Omics Platforms | 10x Genomics Multiome (ATAC + Gene Exp.) | Generate paired, co-assayed data from the same single cell for intrinsic integration. |

| DL Framework & Environment | PyTorch or TensorFlow with CUDA, Google Colab | Open-source libraries and compute environments for building and training custom DL models. |

| Bioinformatics Pipelines | nf-core (Nextflow), Snakemake workflows | Reproducible, containerized pipelines for raw data processing and feature extraction. |

| Benchmarking Datasets | The Cancer Genome Atlas (TCGA), UK Biobank | Publicly available, clinically annotated multi-omics data for model training and validation. |

6. Conclusion

Deep learning provides a transformative toolkit for tackling the fundamental challenge of non-linear pattern discovery in multi-omics data integration. By moving beyond linear assumptions, architectures like autoencoders, GNNs, and transformers enable the construction of unified biological models that more accurately reflect the complexity of living systems, thereby accelerating biomarker discovery and therapeutic development. Continued advancement requires close collaboration between computational scientists and experimentalists to ground these discovered patterns in biological mechanism.

Within the broader thesis on the challenges of multi-omics data integration, the application in precision oncology represents both a paramount goal and a significant test case. The core challenge lies in harmonizing disparate, high-dimensional data layers—genomics, transcriptomics, epigenomics, proteomics, and metabolomics—to define clinically actionable cancer subtypes and to nominate robust therapeutic targets. This technical guide outlines the current methodologies, workflows, and reagent solutions essential for advancing this integrative research.

Multi-Omics Data Acquisition and Preprocessing

The foundational step involves the systematic generation and quality control of omics data from tumor biospecimens.

Key Experimental Protocols

Protocol 1: Multi-Omic Profiling from a Single Tumor Sample (FFPE or Frozen)

- Sample Preparation: Macro-dissect or laser-capture microdissect to ensure >70% tumor purity. Split aliquots for nucleic acid and protein extraction.

- DNA Sequencing (WES/WGS): Extract DNA (e.g., QIAamp DNA FFPE Kit). For WES, use hybridization-based capture (e.g., IDT xGen Exome Research Panel). Sequence on Illumina NovaSeq X, targeting >100x mean coverage for tumor, >30x for matched normal.

- RNA Sequencing (Transcriptomics): Extract total RNA, assess RIN (RNA Integrity Number). Perform poly-A selection or ribosomal RNA depletion. Prepare libraries (e.g., KAPA mRNA HyperPrep Kit). Sequence to a depth of ≥50 million paired-end reads.

- DNA Methylation (Epigenomics): Treat DNA with sodium bisulfite (e.g., Zymo EZ DNA Methylation Kit). Hybridize to Illumina Infinium MethylationEPIC v2.0 BeadChip or perform whole-genome bisulfite sequencing.

- Proteomics & Phosphoproteomics: Extract proteins, digest with trypsin. For global proteomics, use data-independent acquisition (DIA) mass spectrometry (e.g., on a timsTOF Pro 2). For phosphoproteomics, enrich phosphopeptides using TiO2 or Fe-IMAC magnetic beads prior to LC-MS/MS.

Protocol 2: Single-Cell Multi-Omics (CITE-seq)

- Cell Suspension: Generate a single-cell suspension from fresh tumor tissue using a validated enzymatic dissociation kit (e.g., Miltenyi Biotec Tumor Dissociation Kit).

- Cell Staining: Stain with a panel of ~100 oligonucleotide-tagged antibodies (TotalSeq from BioLegend).

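- Library Preparation: Load cells onto a 10x Genomics Chromium Controller for GEM generation and barcoding. Prepare Gene Expression (GEX) and Antibody-Derived Tag (ADT) libraries with a single-cell gene expression kit that supports Feature Barcode capture. V(D)J or ATAC-seq libraries are optional and depend on the chemistry chosen; the Chromium Next GEM Single Cell Multiome ATAC + Gene Expression kit yields paired ATAC and GEX libraries from the same nuclei.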

- Sequencing: Pool libraries and sequence on an Illumina NovaSeq 6000.

Table 1: Representative Data Outputs per Multi-Omics Modality from a Solid Tumor Sample

| Modality | Platform | Key Metrics | Typical Output per Sample | Primary Use in Subtyping/Target ID |

|---|---|---|---|---|

| Whole Exome Seq (WES) | Illumina NovaSeq X | Mean Coverage: Tumor >100x, Normal >30x | ~5-8 GB | Somatic mutations (SNVs, indels), Copy Number Variations (CNVs), Tumor Mutational Burden (TMB) |

| RNA-Seq (Bulk) | Illumina NovaSeq 6000 | Paired-end, Depth: ≥50M reads | ~15-20 GB | Gene expression signatures, Fusion genes, Pathway activity |

| DNA Methylation | Illumina MethylationEPIC v2.0 | >900,000 CpG sites (>850,000 on EPIC v1) | ~0.5 GB | Methylation clusters, Regulatory element activity |

| Global Proteomics | timsTOF Pro 2 (DIA) | Protein Groups Identified: ~8,000-10,000 | ~50-100 GB | Protein abundance, Pathway activation states |

| Single-Cell Multiome | 10x Genomics + Illumina | Cells Recovered: 5,000-10,000; Reads/Cell: 20,000 (GEX) | ~200-500 GB | Cellular taxonomy, Cell-state transitions, Surface protein markers |

Multi-Omics Data Generation Workflow

Integrative Analysis for Subtype Identification

The core computational challenge is the integration of the data layers from Table 1.

Methodological Framework

Workflow: Multi-Omics Clustering for Subtype Discovery

- Modality-Specific Processing: Align sequences, call mutations (GATK), quantify gene expression (STAR, featureCounts), normalize protein abundance (MaxQuant, DIA-NN), calculate beta-values for methylation.

- Dimensionality Reduction: Perform PCA (numeric data) or MDS on each data type separately.

- Similarity Network Fusion (SNF): Construct patient similarity networks for each omics layer. Fuse these networks into a single integrated network using SNF.

- Clustering: Apply spectral clustering on the fused network to identify patient subgroups (subtypes).

- Validation: Assess cluster robustness via consensus clustering. Annotate subtypes using survival analysis (Kaplan-Meier, log-rank test) and differential analysis across all omics layers.
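
The network-based steps can be illustrated with a deliberately simplified stand-in. The sketch below builds one Gaussian-kernel patient similarity network per omics layer, fuses them by plain averaging (not SNF's iterative cross-diffusion), and splits patients into two groups using the sign of the Fiedler vector of the graph Laplacian. The simulated layers and all function names are assumptions for illustration.

```python
import numpy as np

def affinity(X, sigma=None):
    """Gaussian-kernel patient similarity from a samples x features matrix."""
    sq_dist = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=2)
    if sigma is None:
        sigma = np.sqrt(np.median(sq_dist[sq_dist > 0]))   # data-driven bandwidth
    return np.exp(-sq_dist / (2.0 * sigma ** 2))

def fuse_by_averaging(affinities):
    """Simplified fusion: element-wise mean of the per-layer networks."""
    return np.mean(np.stack(affinities), axis=0)

def spectral_bipartition(W):
    """Two-way spectral split using the second-smallest Laplacian eigenvector."""
    laplacian = np.diag(W.sum(axis=1)) - W
    _, vectors = np.linalg.eigh(laplacian)    # eigenvalues returned in ascending order
    return (vectors[:, 1] > 0).astype(int)

# Two simulated omics layers for 10 patients in two latent groups.
rng = np.random.default_rng(0)
group = np.repeat([0, 1], 5)
layers = [
    rng.normal(loc=3.0 * group[:, None], scale=0.5, size=(10, 20)),
    rng.normal(loc=2.0 * group[:, None], scale=0.5, size=(10, 15)),
]
W_fused = fuse_by_averaging([affinity(X) for X in layers])
labels = spectral_bipartition(W_fused)
print(labels)
```

Real SNF additionally sparsifies each network to its nearest neighbours and iteratively diffuses information between layers, which tends to suppress layer-specific noise better than averaging; more than two subtypes require clustering on several eigenvectors rather than a sign split.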

Key Pathway Analysis for Therapeutic Inference

Once subtypes are defined, pathway analysis identifies dysregulated biology. A recurrently altered pathway in oncology is the PI3K-AKT-mTOR axis.

PI3K-AKT-mTOR Pathway Dysregulation & Targeted Inhibitors

Table 2: Example Integrative Subtype Analysis Output in Breast Cancer (TNBC)

| Identified Subtype | Genomic Hallmark | Transcriptomic Signature | Proteomic/Phospho Feature | Putative Target(s) | Associated Therapeutic Agent(s) |

|---|---|---|---|---|---|

| Luminal-Androgen Receptor (LAR) | High PIK3CA mut, Low TP53 mut | AR-signaling, Luminal gene expression | High AR protein, p-AKT | AR, PI3K | Bicalutamide, Alpelisib |

| Basal-Like Immune-Suppressed (BLIS) | High TP53 mut, RB1 loss | Cell cycle, DNA repair, Low immune infiltration | High Cyclin E1, p-RB | CDK4/6, PARP | Palbociclib, Olaparib |

| Mesenchymal (MES) | High copy number alterations | EMT, Growth factor pathways | High Vimentin, p-FAK | FAK, AXL | Defactinib (FAKi) |

| Immunomodulatory (IM) | High TMB, 9p24.1 amp | Immune cell signaling, Cytokine pathways | High PD-L1, p-STAT1 | PD-1/PD-L1, JAK/STAT | Pembrolizumab, Ruxolitinib |

Experimental Validation of Targets

Nomination of targets from integrative analysis requires functional validation.

Protocol for CRISPR-Cas9 Screening in Subtype-Specific Models

Protocol 3: Pooled CRISPR Knockout Screen for Target Gene Validation

- Cell Models: Establish patient-derived organoid (PDO) lines representing distinct molecular subtypes.

- Viral Transduction: Transduce PDOs at low MOI with a lentiviral pooled sgRNA library (e.g., Brunello library, ~75,000 sgRNAs targeting ~19,000 genes). Include non-targeting control sgRNAs.

- Selection & Passaging: Select transduced cells with puromycin for 5-7 days. Passage cells for a total of ~14 population doublings, maintaining a minimum of 500x library coverage.

- Genomic DNA Extraction & Sequencing: Harvest cells at Day 0 (post-selection) and Day 14. Extract gDNA, amplify sgRNA regions via PCR, and sequence on an Illumina NextSeq 500.

- Analysis: Align reads to the sgRNA library reference. Using MAGeCK or similar, calculate sgRNA depletion/enrichment between Day 0 and Day 14. Genes whose targeting sgRNAs are significantly depleted represent subtype-specific essential genes (potential therapeutic targets).
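Two pieces of arithmetic in this protocol can be made concrete: the number of cells needed to hold library coverage, and a gene-level depletion score. The sketch below is a deliberately simplified stand-in for the analysis step. It uses depth-normalized log2 fold changes summarized by the median across sgRNAs, not MAGeCK's statistical model, and the guide names, gene names, and read counts are hypothetical.

```python
import math
from statistics import median

def cells_for_coverage(n_sgrnas, coverage):
    """Minimum number of transduced cells to maintain the given library coverage."""
    return n_sgrnas * coverage

def gene_scores(day0, day14, guide_to_gene, pseudocount=1.0):
    """Median log2 fold change (Day 14 vs Day 0) of the sgRNAs targeting each gene."""
    def cpm(counts):
        total = sum(counts.values())
        return {guide: c * 1e6 / total for guide, c in counts.items()}

    start, end = cpm(day0), cpm(day14)
    lfc_by_gene = {}
    for guide, gene in guide_to_gene.items():
        lfc = math.log2((end[guide] + pseudocount) / (start[guide] + pseudocount))
        lfc_by_gene.setdefault(gene, []).append(lfc)
    return {gene: median(lfcs) for gene, lfcs in lfc_by_gene.items()}

# ~75,000 sgRNAs at 500x coverage
min_cells = cells_for_coverage(75_000, 500)

# Hypothetical read counts for two guides per gene.
guide_to_gene = {"a1": "GENE_A", "a2": "GENE_A", "c1": "NON_TARGETING", "c2": "NON_TARGETING"}
day0 = {"a1": 500, "a2": 500, "c1": 500, "c2": 500}
day14 = {"a1": 50, "a2": 100, "c1": 900, "c2": 950}
scores = gene_scores(day0, day14, guide_to_gene)
```

A strongly negative score for `GENE_A` relative to the non-targeting controls is the pattern the protocol describes as depletion. In a real screen, significance would come from a dedicated tool with replicate-aware statistics.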

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Kits for Multi-Omics Validation Studies

| Item | Supplier Examples | Function in Precision Oncology Research |

|---|---|---|

| FFPE DNA/RNA Co-Extraction Kit | Qiagen (AllPrep), Zymo Research (Quick-DNA/RNA) | Simultaneous recovery of nucleic acids from precious, archived clinical specimens. |

| Multiplex IHC/IF Antibody Panels | Akoya Biosciences (Opal, Phenoptics), Cell Signaling Technology | Spatial profiling of multiple protein targets and immune cells in a single tissue section. |

| Patient-Derived Organoid (PDO) Culture Media | STEMCELL Technologies (IntestiCult), Trevigen (Cultrex) | Enables expansion of patient tumor cells in 3D, preserving original tumor heterogeneity. |

| Pooled CRISPR sgRNA Libraries | Addgene (Brunello, Dolcetto), Horizon Discovery | Genome-wide or pathway-focused screening for gene essentiality in subtype-specific models. |

| Phospho-Specific Antibodies for Flow/Mass Cytometry | CST, BD Biosciences, Fluidigm | High-throughput profiling of signaling pathway activation at single-cell resolution. |

| Isobaric Labeling Reagents for Proteomics (TMTpro) | Thermo Fisher Scientific | Enables multiplexed (up to 18-plex) quantitative comparison of proteomes across many samples/conditions. |

| Targeted NGS Panels (DNA/RNA) | Illumina (TruSight Oncology 500), Foundation Medicine (FoundationOne CDx) | Clinically validated, focused profiling of actionable mutations, fusions, and biomarkers. |

The successful application of multi-omics integration in precision oncology hinges on rigorous, standardized experimental protocols, sophisticated computational fusion algorithms, and systematic functional validation. By navigating the challenges of data heterogeneity, noise, and biological interpretation, researchers can move beyond single-omics classifiers to define multi-dimensional subtypes anchored in coherent biology. This integrative approach is critical for discovering resilient therapeutic targets that address the complex, adaptive nature of cancer, ultimately translating the promise of precision medicine into improved patient outcomes.

A central thesis in modern bioinformatics posits that the primary challenge of multi-omics research is no longer data generation, but the integration of disparate, high-dimensional data types into a coherent, biologically interpretable model. This whitepaper details how overcoming these integration challenges—specifically semantic heterogeneity, batch effects, and disparate data scales—is critical for advancing target identification and biomarker development in pharmaceutical research.

The Multi-Omics Integration Imperative

Effective drug discovery requires a systems-level understanding of disease. Individual omics layers (genomics, transcriptomics, proteomics, metabolomics) provide limited, often correlative insights. Integrating them can move the analysis toward mechanistic and, when combined with perturbation data, causal understanding. Key integration challenges align with the broader thesis:

- Technical Variation: Batch effects confound biological signals.

- Dimensionality: Each omics dataset has thousands to millions of features.

- Temporal & Spatial Disconnect: Data captured from different samples, time points, and cellular compartments.

- Data Type Heterogeneity: Continuous (e.g., protein abundance), count (e.g., RNA-seq), and binary (e.g., mutation) data require specialized statistical integration methods.
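One simple way to keep a large layer from dominating a small one before concatenation is block scaling: z-score each feature, then divide each layer by the square root of its feature count so every layer contributes the same total variance. The sketch below illustrates that idea under stated assumptions. The simulated matrices, their sizes, and the choice to treat binary mutation calls as numeric are all illustrative. Likelihood-based methods that model each data type directly are the more principled alternative.

```python
import numpy as np

def block_scale(layers):
    """Z-score each feature, then down-weight each layer by sqrt(n_features)."""
    scaled = []
    for X in layers:
        X = np.asarray(X, dtype=float)
        sd = X.std(axis=0)
        keep = sd > 0  # constant features carry no information and are dropped
        Z = (X[:, keep] - X[:, keep].mean(axis=0)) / sd[keep]
        scaled.append(Z / np.sqrt(Z.shape[1]))
    return scaled

rng = np.random.default_rng(0)
n_samples = 8
rna = np.log2(rng.poisson(50, size=(n_samples, 200)) + 1.0)  # count data, log-transformed
protein = rng.normal(20.0, 2.0, size=(n_samples, 30))         # continuous abundances
mutation = np.array([[1, 0], [0, 1], [1, 1], [0, 0],
                     [1, 0], [0, 1], [1, 1], [0, 0]])          # binary calls (hypothetical)

blocks = block_scale([rna, protein, mutation])
joint = np.hstack(blocks)  # samples x all retained features, ready for a joint model

# After scaling, every layer has the same total sum of squares (= n_samples).
block_energy = [float((b ** 2).sum()) for b in blocks]
```

Without the final division, the 200-feature layer would carry 100 times the total variance of the 2-feature layer in any variance-driven joint analysis.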

Quantitative Landscape of Multi-Omics in Pharma

Recent studies quantify the impact of integrated multi-omics approaches.

Table 1: Impact of Multi-Omics Integration on Drug Discovery Metrics

| Metric | Single-Omics Approach | Integrated Multi-Omics Approach | Data Source (Year) |

|---|---|---|---|

| Target Validation Success Rate | ~25% | Increases by 1.5-2x | Industry Benchmark (2023) |

| Preclinical Biomarker Accuracy (AUC) | 0.65 - 0.75 | 0.82 - 0.92 | Nature Reviews Drug Disc. (2024) |

| Time to Target Identification (Months) | 18-24 | Reduced by ~30% | Pharma R&D Report (2024) |

| Candidate Attrition Rate (Phase II) | ~70% | Potentially reduced by ~15% | Analysis of Clinical Trials (2023) |

Core Methodologies & Experimental Protocols

Protocol 1: Multi-Omics Guided Target Discovery in Oncology

- Objective: Identify novel therapeutic targets by integrating genomic, transcriptomic, and phosphoproteomic data from patient-derived tumor samples.

- Sample Preparation: Fresh-frozen tumor tissue is cryo-pulverized and aliquoted for parallel analysis.

- Experimental Workflow:

  - DNA-Seq (Genomics): Isolate DNA; perform whole-exome sequencing to identify somatic mutations and copy number variations.

  - RNA-Seq (Transcriptomics): Isolate total RNA; perform stranded mRNA-seq to quantify gene expression and fusion transcripts.

  - LC-MS/MS (Phosphoproteomics): Lyse aliquoted tissue; digest proteins with trypsin; enrich phosphopeptides using TiO2 or IMAC columns; analyze via liquid chromatography-tandem mass spectrometry (LC-MS/MS).

- Data Integration & Analysis:

  - Individual Layer Processing: Call variants (GATK), quantify expression (STAR/DESeq2), identify phosphosites (MaxQuant).

  - Factor-Based Integration: Use Multi-Omics Factor Analysis (MOFA+) to jointly model the feature matrices of all layers and identify latent factors that explain disease-related variance across them.

  - Knowledge-Based Integration: Map altered genes, overexpressed mRNAs, and hyperphosphorylated proteins to signaling pathways (KEGG, Reactome) using tools like PathwayMapper. A candidate target is prioritized if it appears across all three layers (mutated gene, overexpressed transcript, and its protein product with activating phosphorylation).
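The prioritization rule in this protocol (support in all three layers) amounts to counting, per gene, how many layers flag it. A minimal sketch follows. It assumes the per-layer hit lists have already been reduced to gene symbols and that phosphosites have been mapped to their parent genes upstream. The function name and the example gene sets are hypothetical.

```python
def rank_candidates(mutated, overexpressed, hyperphosphorylated):
    """Rank genes by how many omics layers support them (3 = all layers)."""
    layers = {
        "genomic": set(mutated),
        "transcriptomic": set(overexpressed),
        "phosphoproteomic": set(hyperphosphorylated),
    }
    support = {}
    for layer, genes in layers.items():
        for gene in genes:
            support.setdefault(gene, []).append(layer)
    # Most-supported genes first; ties broken alphabetically for reproducibility.
    return sorted(support.items(), key=lambda kv: (-len(kv[1]), kv[0]))

ranked = rank_candidates(
    mutated={"PIK3CA", "TP53"},
    overexpressed={"PIK3CA", "AKT1"},
    hyperphosphorylated={"PIK3CA", "AKT1"},
)
top_tier = [gene for gene, layers in ranked if len(layers) == 3]
```

Keeping the full ranked list, not only the three-layer intersection, makes it easy to review genes with partial support when a layer has missing data for some samples.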

Diagram Title: Multi-Omics Target Discovery Workflow

Protocol 2: Longitudinal Multi-Omics for Pharmacodynamic Biomarkers

- Objective: Develop dynamic biomarkers of drug response by profiling the same subject over time.

- Study Design: Collect serial blood (plasma) and, if accessible, tissue biopsies from patients in a Phase I trial at pre-dose, 24h, 1 week, and 1 month post-treatment.

- Experimental Workflow:

  - Plasma Analysis: Perform untargeted metabolomics (NMR, LC-MS) and proteomics (SOMAscan or Olink) on plasma samples.

  - Tissue Analysis (Biopsy): Perform single-nucleus RNA-seq (snRNA-seq) to capture cell-type-specific transcriptional responses.

- Data Integration & Analysis:

  - Temporal Alignment: Use mixed-effects models to account for within-subject correlations across time points.

  - Vertical Integration: Correlate plasma metabolite/protein levels with cell-type-specific gene expression modules from snRNA-seq using tools like WGCNA (Weighted Gene Co-expression Network Analysis). A robust pharmacodynamic biomarker is a plasma metabolite whose abundance change correlates with the drug-induced transcriptional module in the target cell population (e.g., tumor-infiltrating T-cells).
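The core of the biomarker criterion is a correlation between two per-subject changes: the shift in a plasma analyte and the shift in a tissue module score. The sketch below shows only that step, using change from baseline and a Pearson correlation in plain Python. It is not a substitute for the mixed-effects modelling described above, since it uses a single follow-up time point. The subject IDs, time-point labels, and values are invented for illustration.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def delta(profiles, baseline="pre_dose", followup="1_week"):
    """Per-subject change from baseline, in a fixed (sorted) subject order."""
    return [profiles[s][followup] - profiles[s][baseline] for s in sorted(profiles)]

# Hypothetical plasma metabolite abundances (arbitrary units).
plasma_metabolite = {
    "S1": {"pre_dose": 1.0, "1_week": 2.0},
    "S2": {"pre_dose": 1.0, "1_week": 3.0},
    "S3": {"pre_dose": 2.0, "1_week": 5.0},
    "S4": {"pre_dose": 2.0, "1_week": 6.0},
}
# Hypothetical module scores from snRNA-seq in the target cell population.
tcell_module = {
    "S1": {"pre_dose": 0.0, "1_week": 0.4},
    "S2": {"pre_dose": 0.0, "1_week": 1.1},
    "S3": {"pre_dose": 0.5, "1_week": 1.9},
    "S4": {"pre_dose": 0.5, "1_week": 2.6},
}

r = pearson(delta(plasma_metabolite), delta(tcell_module))
```

With only a handful of subjects, as is typical in Phase I, such a coefficient is best read as a screening statistic for ranking candidates, not as evidence of a validated biomarker.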

Diagram Title: Longitudinal Biomarker Development Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents for Multi-Omics Experiments

| Item | Function in Multi-Omics Workflow | Key Consideration |

|---|---|---|

| Cryo-Pulverizer | Homogenizes frozen tissue into fine powder for equitable aliquoting across omics assays. | Preserves nucleic acid and protein integrity; prevents thawing. |

| Poly(A) Magnetic Beads | Isolates polyadenylated mRNA from total RNA for RNA-seq library prep. | Critical for transcriptome specificity; bead quality impacts yield. |

| Phosphopeptide Enrichment Kits (TiO2/IMAC) | Selectively binds phosphorylated peptides from complex protein digests for MS analysis. | Choice depends on peptide characteristics; the two chemistries have complementary selectivity (IMAC tends to favor multiply phosphorylated peptides, TiO2 singly phosphorylated ones). |

| Single-Cell/Nuclei Isolation Kit | Generates viable single-cell or nuclear suspensions from tissue for snRNA-seq. | Optimization needed for tissue type (e.g., fibrous vs. soft tumor). |

| Multiplex Immunoassay Panels (e.g., Olink) | Quantifies dozens to thousands of proteins simultaneously from low-volume biofluids. | Bridges gap between discovery proteomics and high-throughput validation. |

| Stable Isotope-Labeled Internal Standards | Spike-in controls for absolute quantification in metabolomics and proteomics LC-MS. | Essential for batch correction and cross-study data integration. |

| Cell Line/Organoid Co-Culture Systems | Models tissue-tissue interactions (e.g., tumor-immune) for perturbational multi-omics. | Enables causal inference beyond patient observational data. |

Pathway Visualization: Integrated Multi-Omics Insights

The power of integration is exemplified in elucidating oncogenic signaling. Genomic data identifies an activating PIK3CA mutation. Transcriptomics shows downstream AKT and mTOR overexpression. Phosphoproteomics confirms hyperphosphorylation of AKT (S473) and S6K. Taken together, the three layers provide convergent evidence that the PI3K-AKT-mTOR axis is active and therefore a candidate druggable pathway.

Diagram Title: Multi-Omics Integration Validates PI3K-AKT-mTOR Axis

Successfully navigating the inherent challenges of multi-omics data integration—as framed by the overarching thesis—is the linchpin for its application in drug discovery. By implementing robust experimental protocols, specialized analytical tools, and purpose-built reagent solutions, researchers can transform multi-layered complexity into actionable biological insight, driving the identification of novel, druggable targets and the development of mechanistically grounded biomarkers.

Solving Integration Pitfalls: A Troubleshooting Guide for Robust Analysis

Within the complex landscape of multi-omics data integration research, the challenge of deriving biologically meaningful signals from heterogeneous, high-dimensional datasets is paramount. A core thesis posits that technical and biological noise often obscures true signals, making robust pre-processing—specifically normalization, scaling, and batch correction—a critical, non-negotiable first step. This guide details the technical best practices for these procedures, framing them as essential solutions to the fundamental challenges of integrating genomics, transcriptomics, proteomics, and metabolomics data.

Normalization: Accounting for Compositional & Technical Biases

Normalization adjusts data to account for systematic technical variations, such as differences in sequencing depth, library preparation, or total ion current, enabling fair comparisons across samples.
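As a concrete case of correcting for sequencing depth, the sketch below scales raw counts to counts per million (CPM) and applies a log transform. It is the simplest depth correction and is shown only to illustrate the principle. It does not address compositional bias, and the two count vectors and the `prior` offset are assumptions for the example.

```python
import math

def counts_per_million(counts):
    """Scale raw feature counts by the sample's total count (library size)."""
    total = sum(counts)
    return [c * 1_000_000 / total for c in counts]

def log_cpm(counts, prior=1.0):
    """log2(CPM + prior); the prior avoids taking the log of zero."""
    return [math.log2(v + prior) for v in counts_per_million(counts)]

# Same relative composition, sequenced at 10x different depth (hypothetical).
shallow = [10, 30, 60]
deep = [100, 300, 600]

cpm_shallow = counts_per_million(shallow)
cpm_deep = counts_per_million(deep)
```

The raw counts differ tenfold between the two samples, but their CPM values coincide, which is the fair comparison across samples that normalization is meant to provide.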

Key Methods & Quantitative Comparison

The choice of normalization method is omics-type and technology-specific. The table below compares prevalent techniques.

Table 1: Comparison of Common Normalization Methods Across Omics Types

| Omics Type | Method | Core Principle | Best For | Key Assumption |

|---|---|---|---|---|

| Transcriptomics (RNA-seq) | TMM (Trimmed Mean of M-values) | Scales library sizes based on a trimmed mean of log expression ratios (ref vs sample). | Bulk RNA-seq, most sample comparisons. | Most genes are not differentially expressed. |