Optimizing Biomarker Discovery: A Practical Guide to Genetic Algorithms in Systems Biology


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the application of Genetic Algorithms (GAs) for biomarker identification within the high-dimensional data landscape of systems biology. We first establish the foundational principles of GAs and their unique suitability for navigating complex biological networks and omics datasets. We then detail methodological workflows, from data encoding to fitness function design for real-world applications in cancer, neurodegenerative, and metabolic disease research. To address practical challenges, we explore strategies for troubleshooting common pitfalls and optimizing algorithm performance, including hyperparameter tuning and handling data imbalance. Finally, we present robust frameworks for validating and benchmarking GA-derived biomarker panels against other machine learning techniques, assessing their clinical translatability and biological relevance. This guide synthesizes current best practices to empower the development of robust, interpretable, and clinically actionable biomarkers.

Genetic Algorithms Decoded: Core Principles for Biomarker Discovery in Complex Biological Systems

Application Notes for Biomarker Identification in Systems Biology

Genetic Algorithms (GAs) are evolutionary computation techniques applied to biomarker discovery to navigate the high-dimensional, complex search spaces typical of omics data (genomics, proteomics, metabolomics). Their strength lies in identifying parsimonious, high-performance biomarker panels from thousands of candidate features.

Core Application Table:

| Application Area | Primary Objective | Typical Fitness Function Components | Reported Performance Gains (Recent Benchmarks) |

|---|---|---|---|

| Diagnostic Signature Discovery | Identify minimal gene/protein sets for disease classification. | Classification accuracy (AUC-ROC), panel size penalty. | GA-selected 12-gene panel for early-stage ovarian cancer achieved AUC of 0.94 vs. 0.87 for full 500-gene expression array. |

| Prognostic Model Optimization | Evolve models predicting patient survival or treatment response. | Concordance index (C-index), statistical significance (p-value). | GA-optimized Cox model using proteomic data improved C-index by 0.12 over LASSO-based models. |

| Multi-Omics Data Integration | Fuse disparate data types (e.g., mRNA, methylation) into unified signatures. | Balanced accuracy across data types, redundancy reduction. | Integrated 8-feature signature (4 mRNA, 3 methylation, 1 protein) increased diagnostic specificity to 96% from 89% (single-omics). |

| Pathway-Centric Biomarker Identification | Select biomarkers representing dysregulated functional pathways. | Enrichment score for known pathways (e.g., KEGG, Reactome). | GA-identified 15-gene panel covered 3 key inflammatory pathways, explaining 40% more phenotypic variance than top differentially expressed genes. |

Protocol: GA for Serum Proteomic Biomarker Panel Discovery

Objective: To identify a minimal, high-performance panel of serum protein biomarkers for distinguishing Alzheimer’s Disease (AD) from Mild Cognitive Impairment (MCI) and controls.

Phase 1: Problem Encoding & Initialization

- Feature Pool: Start with normalized intensity data for 1,200 candidate proteins from a mass spectrometry-based discovery cohort (n=300: 100 AD, 100 MCI, 100 Control).

- Encoding: Use binary encoding. Each chromosome is a bit string of length 1,200. A '1' at position i indicates the inclusion of protein i in the panel.

- Population: Initialize a population of 200 random chromosomes. Set panel size constraints between 5 and 20 proteins.
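
The encoding and initialization in Phase 1 can be sketched as follows. This is a minimal illustration using only the Python standard library, not the protocol's reference implementation; the helper names (`random_chromosome`, `repair`) and the fixed seed are assumptions introduced here, and the repair step is one possible way to enforce the 5-20 protein constraint after later crossover or mutation.

```python
import random

N_FEATURES = 1200            # candidate proteins in the feature pool
MIN_PANEL, MAX_PANEL = 5, 20 # panel size constraints from the protocol
POP_SIZE = 200

def random_chromosome(rng):
    """Return a 0/1 list of length N_FEATURES with MIN_PANEL..MAX_PANEL ones."""
    k = rng.randint(MIN_PANEL, MAX_PANEL)
    chosen = set(rng.sample(range(N_FEATURES), k))
    return [1 if i in chosen else 0 for i in range(N_FEATURES)]

def repair(chromosome, rng):
    """Hypothetical helper: push a chromosome back inside the size bounds."""
    on = [i for i, bit in enumerate(chromosome) if bit]
    off = [i for i, bit in enumerate(chromosome) if not bit]
    fixed = list(chromosome)
    if len(on) > MAX_PANEL:
        # Too many proteins selected: switch off a random surplus.
        for i in rng.sample(on, len(on) - MAX_PANEL):
            fixed[i] = 0
    elif len(on) < MIN_PANEL:
        # Too few proteins selected: switch on random extra positions.
        for i in rng.sample(off, MIN_PANEL - len(on)):
            fixed[i] = 1
    return fixed

rng = random.Random(0)  # fixed seed only for reproducibility of the sketch
population = [random_chromosome(rng) for _ in range(POP_SIZE)]
```

Sampling the panel size first and then the positions keeps every initial chromosome inside the constraint, which a purely independent per-bit draw would not guarantee.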

Phase 2: Fitness Evaluation

- Feature Subset Extraction: For each chromosome, extract the corresponding protein intensity data from the cohort.

- Model Training: Train a Random Forest classifier using 5-fold cross-validation (80/20 split) on the selected subset.

- Fitness Score Calculation: Compute a composite fitness function F:

  F = (0.7 * Mean AUC-ROC) + (0.3 * (1 - (Panel_Size / Max_Size))) - (0.001 * Redundancy_Score)

  where Max_Size is the maximum allowed panel size (20 under the constraints above) and Redundancy_Score is the average pairwise correlation between selected proteins.
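
A minimal sketch of the composite score is given below, assuming the mean cross-validated AUC has already been computed by the embedded classifier. The function names are hypothetical, and taking the absolute value of each pairwise Pearson correlation is an assumption made here (the protocol only says "average pairwise correlation"); without it, positive and negative correlations would cancel.

```python
import math
from itertools import combinations

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)

def redundancy_score(columns):
    """Mean absolute pairwise correlation across the selected protein columns."""
    pairs = list(combinations(columns, 2))
    if not pairs:
        return 0.0
    return sum(abs(pearson(a, b)) for a, b in pairs) / len(pairs)

def composite_fitness(mean_auc, panel_size, redundancy, max_size=20):
    """F = 0.7*AUC + 0.3*(1 - size/max_size) - 0.001*redundancy."""
    return 0.7 * mean_auc + 0.3 * (1 - panel_size / max_size) - 0.001 * redundancy
```

For example, `composite_fitness(0.9, 10, 0.5)` combines 0.63 from accuracy, 0.15 from parsimony, and a 0.0005 redundancy penalty. With a coefficient of 0.001 the redundancy term acts mostly as a tie-breaker between otherwise similar panels.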

Phase 3: Evolutionary Cycle

- Selection: Perform tournament selection (size=3) to choose parents.

- Crossover: Apply uniform crossover between selected parent chromosomes with a probability (Pc) of 0.8.

- Mutation: Apply bit-flip mutation to each bit in the offspring with a low probability (Pm) of 0.01.

- Elitism: Preserve the top 10 chromosomes from the parent generation unchanged.

- Iteration: Repeat the evaluation-selection-crossover-mutation cycle for 150 generations or until fitness plateau.
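
One generation of the cycle above might look like the following sketch. It uses the stated settings (tournament size 3, Pc = 0.8, Pm = 0.01, 10 elites) but is only an illustration: the function names are hypothetical, fitness values are assumed to be precomputed, and the panel-size constraint is not enforced here and would need a separate repair or penalty step.

```python
import random

def tournament(population, fitnesses, rng, size=3):
    """Pick `size` random individuals and return the fittest one."""
    contenders = rng.sample(range(len(population)), size)
    best = max(contenders, key=lambda i: fitnesses[i])
    return population[best]

def uniform_crossover(a, b, rng, pc=0.8):
    """With probability pc, swap each bit between parents with chance 0.5."""
    if rng.random() >= pc:
        return list(a), list(b)
    child1, child2 = [], []
    for x, y in zip(a, b):
        if rng.random() < 0.5:
            x, y = y, x
        child1.append(x)
        child2.append(y)
    return child1, child2

def bit_flip(chromosome, rng, pm=0.01):
    """Flip each bit independently with probability pm."""
    return [1 - bit if rng.random() < pm else bit for bit in chromosome]

def next_generation(population, fitnesses, rng, n_elite=10):
    """Build the next population: elites first, then mutated offspring."""
    ranked = sorted(range(len(population)),
                    key=lambda i: fitnesses[i], reverse=True)
    new_population = [list(population[i]) for i in ranked[:n_elite]]
    while len(new_population) < len(population):
        p1 = tournament(population, fitnesses, rng)
        p2 = tournament(population, fitnesses, rng)
        for child in uniform_crossover(p1, p2, rng):
            if len(new_population) < len(population):
                new_population.append(bit_flip(child, rng))
    return new_population
```

Copying the elites before any crossover or mutation is what guarantees that the best fitness in the population never decreases from one generation to the next.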

Phase 4: Validation & Interpretation

- Panel Selection: Select the highest-fitness chromosome from the final generation.

- Independent Validation: Test the selected protein panel on a held-out validation cohort (n=150) using a predefined classifier (e.g., SVM).

- Pathway Analysis: Subject the final protein list to over-representation analysis using the Reactome database.

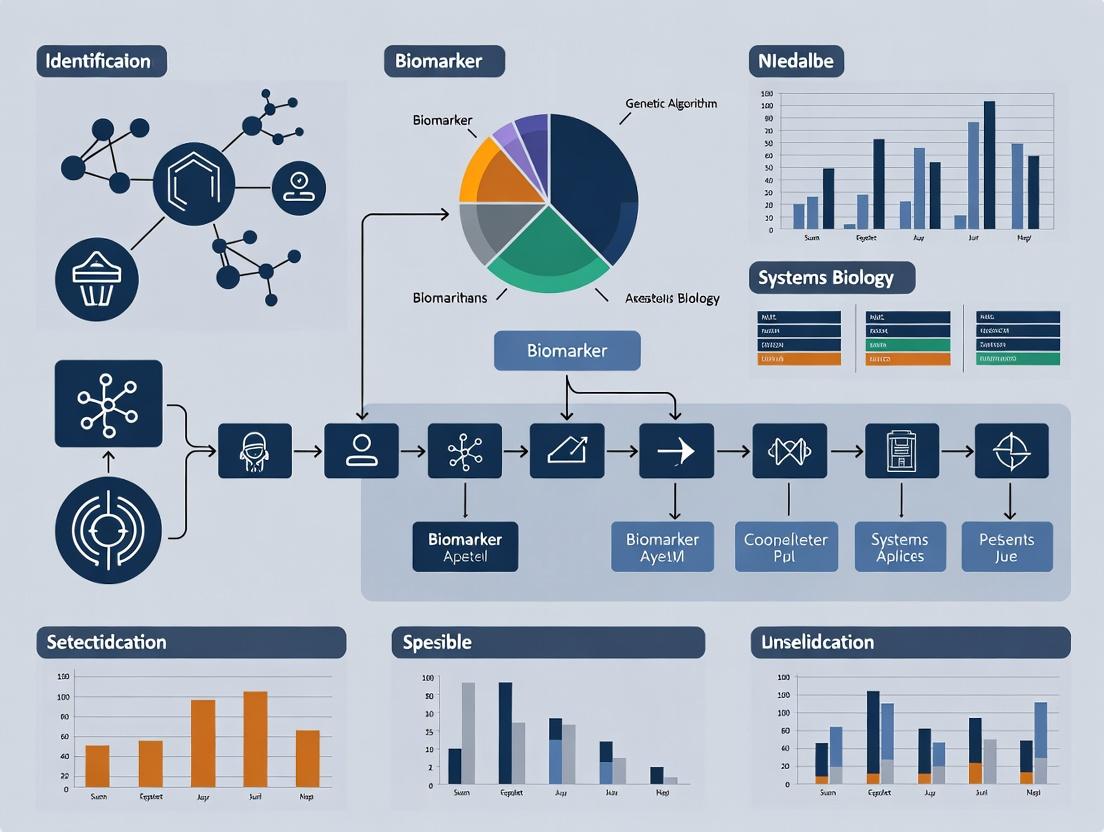

Visualizations

GA Workflow for Biomarker Discovery

Biomarker Validation & Analysis Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in GA-Driven Biomarker Research |

|---|---|

| High-Throughput Proteomic Platform (e.g., Olink, SomaScan) | Provides the initial high-dimensional protein intensity data (feature pool) from patient serum/plasma samples. |

| Normalized & Curated Omics Database (e.g., GEO, CPTAC) | Serves as source for discovery and independent validation cohorts. Essential for algorithm training and testing. |

| Machine Learning Library (e.g., scikit-learn, caret in R) | Provides the embedded classifiers (Random Forest, SVM) used within the GA's fitness evaluation function. |

| Genetic Algorithm Framework (e.g., DEAP, PyGAD) | Offers flexible, pre-coded modules for implementing selection, crossover, and mutation operators, speeding up development. |

| Pathway Analysis Suite (e.g., g:Profiler, Enrichr) | Used for biological interpretation of the final GA-evolved biomarker panel, assessing pathway enrichment. |

| Statistical Computing Environment (R or Python with NumPy/pandas) | The core computational environment for data preprocessing, algorithm execution, and result visualization. |

The identification of robust, clinically actionable biomarkers from high-dimensional omics data (genomics, transcriptomics, proteomics, metabolomics) represents a central challenge in systems biology and precision medicine. Traditional statistical methods often struggle with the "small n, large p" problem, where the number of features (p) vastly exceeds the number of samples (n), leading to overfitting and poor generalizability. This Application Note argues for the integration of evolutionary computation, specifically Genetic Algorithms (GAs), into the biomarker discovery pipeline. GAs provide a powerful framework for feature selection and model optimization, effectively navigating the vast combinatorial search space to identify parsimonious, high-performance biomarker signatures.
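
To give a sense of the combinatorial search space mentioned above, the short calculation below counts how many distinct panels of 5 to 20 features can be drawn from 20,000 candidates. The feature count and panel-size range are illustrative values chosen to match figures used elsewhere in this guide, not results from a specific dataset.

```python
import math

n_features = 20_000          # e.g., genes in a transcriptomic dataset
panel_sizes = range(5, 21)   # candidate panel sizes 5..20

# Number of distinct feature subsets across all allowed panel sizes.
n_panels = sum(math.comb(n_features, k) for k in panel_sizes)

# Report the order of magnitude rather than the full integer.
print(f"about 10^{len(str(n_panels)) - 1} candidate panels")
```

Exhaustive evaluation at this scale is not feasible, which is the practical motivation for a stochastic search such as a GA.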

Core Challenges in High-Dimensional Biomarker Discovery

The table below summarizes key quantitative hurdles in omics-based biomarker discovery.

Table 1: Scale and Challenges in Omics Data Analysis

| Omics Layer | Typical Feature Dimension (p) | Key Challenge for Biomarker ID | Common False Discovery Rate |

|---|---|---|---|

| Genomics (GWAS) | 500,000 - 10M SNPs | Multiple testing correction, polygenic effects | High without stringent p-value thresholds (e.g., 5x10^-8) |

| Transcriptomics (RNA-seq) | 20,000 - 60,000 genes | Technical noise, batch effects, low concordance across platforms | Elevated in underpowered studies (n < 20 per group) |

| Proteomics (LC-MS/MS) | 3,000 - 10,000 proteins | Dynamic range, missing data, cost of validation | Can exceed 30% in discovery-phase studies |

| Metabolomics | 500 - 5,000 metabolites | Spectral overlap, database limitations, high variability | Highly variable due to platform and pre-processing |

Genetic Algorithm Protocol for Biomarker Signature Identification

This protocol outlines a standard GA workflow for identifying a minimal biomarker panel from transcriptomic data.

Protocol: GA-Driven Feature Selection for a Diagnostic Signature

1. Objective: To evolve a subset of k genes (where k is small, e.g., 5-20) that maximizes the predictive accuracy for a disease state (e.g., Cancer vs. Normal) while maintaining robustness.

2. Initialization (Population Generation):

- Population Size (N): 100-500 candidate solutions.

- Representation: Each candidate (chromosome) is a binary vector of length equal to the total number of features (e.g., 20,000 genes). A '1' indicates the gene is selected; '0' indicates it is not.

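- Initialization Method: Randomly set a small number of bits to '1' per chromosome, on the order of the target panel size k, so that each chromosome starts as a sparse subset. (Setting 5-10% of 20,000 bits would select 1,000-2,000 genes, far above the 5-20 gene target.)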

3. Fitness Evaluation:

- For each candidate chromosome, extract the selected features (genes) from the training dataset.

- Train a lightweight classifier (e.g., Support Vector Machine with linear kernel, Random Forest) using only these features on a defined training set (e.g., 70% of samples).

- Calculate the fitness score on a held-out validation set (e.g., 30% of samples):

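Fitness = 0.7 * (Balanced Accuracy) + 0.3 * (1 - (number_of_selected_genes / total_genes))

This penalizes overly large gene sets, promoting parsimony. Note that with total_genes around 20,000 the penalty barely distinguishes a 5-gene panel from a 20-gene panel; normalizing by a maximum panel size instead applies stronger pressure toward small panels.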

4. Selection (Tournament Selection):

- Randomly select 3-5 candidate solutions from the population.

- The candidate with the highest fitness score in this group is selected as a parent.

- Repeat until a mating pool of size N is formed.

5. Crossover (Single-Point Crossover):

- Randomly pair parents from the mating pool.

- For each pair, generate a random crossover point along the binary vector.

- Create two offspring by swapping the segments of the parents beyond this point.

- Apply crossover with a high probability (Pc = 0.8).

6. Mutation (Bit-Flip Mutation):

- For each bit in each offspring, with a low probability (Pm = 0.01), flip the bit (1→0 or 0→1).

- This introduces new features and maintains genetic diversity.

7. Elitism:

- Directly copy the top 2-5% of highest-fitness candidates from the current generation to the next generation unchanged, preserving top solutions.

8. Termination:

- Iterate steps 3-7 for 100-500 generations or until the average fitness plateaus (no improvement for 50 generations).

- The final solution is the chromosome with the highest fitness score across all generations.

9. Validation:

- Apply the final selected gene signature to a completely independent test cohort not used during any GA training.

- Assess performance using AUC-ROC, sensitivity, specificity, and positive predictive value.
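
Steps 5 and 8 of this protocol can be sketched as below. The code is a hedged illustration: `single_point_crossover` and `run_until_plateau` are hypothetical names, and `step` is an assumed callback that runs one full generation and returns the average fitness, the best fitness, and the best chromosome of that generation.

```python
import random

def single_point_crossover(p1, p2, rng, pc=0.8):
    """Swap the tails of two parents beyond one random cut point."""
    if len(p1) != len(p2):
        raise ValueError("parents must have equal length")
    if len(p1) < 2 or rng.random() >= pc:
        return list(p1), list(p2)
    point = rng.randint(1, len(p1) - 1)  # cut strictly inside the vector
    child1 = list(p1[:point]) + list(p2[point:])
    child2 = list(p2[:point]) + list(p1[point:])
    return child1, child2

def run_until_plateau(step, max_generations=500, patience=50, tol=1e-9):
    """Stop when average fitness has not improved for `patience` generations.

    Returns the best chromosome seen in any generation, its fitness,
    and the number of generations actually run.
    """
    best_avg = float("-inf")
    best_fit, best_chrom = float("-inf"), None
    stale = 0
    generations_run = 0
    for gen in range(max_generations):
        avg_fit, gen_best_fit, gen_best_chrom = step(gen)
        generations_run += 1
        if gen_best_fit > best_fit:
            best_fit, best_chrom = gen_best_fit, list(gen_best_chrom)
        if avg_fit > best_avg + tol:
            best_avg, stale = avg_fit, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_chrom, best_fit, generations_run
```

Tracking the best chromosome separately from the plateau test matches the protocol's wording: termination depends on average fitness, while the reported solution is the best individual across all generations. Single-point crossover tends to keep neighboring positions together, so the ordering of genes in the vector can influence the search.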

Workflow Visualization: GA for Biomarker Discovery

Title: Genetic Algorithm Workflow for Biomarker Discovery

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for Omics Biomarker Validation

| Item | Function in Biomarker Pipeline | Example Product/Kit |

|---|---|---|

| Nucleic Acid Extraction Kits | High-quality, inhibitor-free DNA/RNA isolation from diverse biospecimens (blood, tissue, FFPE) for genomic/transcriptomic profiling. | Qiagen DNeasy/RNeasy, Roche MagNA Pure. |

| Multiplex Immunoassay Panels | Validate protein biomarker candidates in many samples simultaneously. Crucial for translating proteomic discoveries. | Luminex xMAP, Olink Target 96/384, MSD U-PLEX. |

| CRISPR/Cas9 Editing Systems | Functional validation of biomarker genes by knock-out/knock-in in cell models to establish causal links. | Synthego sgRNA, Invitrogen TrueCut Cas9 Protein. |

| Synthetic Biology Standards | Spike-in controls for metabolomics and proteomics to enable absolute quantification and inter-lab reproducibility. | Biognosys iRT Kit, Cambridge Isotope Lab SIL/SID standards. |

| Single-Cell Sequencing Reagents | Deconvolute biomarker expression at cellular resolution from bulk tissue data. | 10x Genomics Chromium, Parse Biosciences WT Kit. |

| High-Fidelity Polymerase | Accurately amplify biomarker regions for sequencing or digital PCR validation without introducing errors. | NEB Q5, Takara PrimeSTAR GXL. |

| Digital PCR Master Mix | Absolute, sensitive quantification of biomarker copy number or expression without a standard curve for validation. | Bio-Rad ddPCR Supermix, Thermo Fisher QuantStudio. |

Signaling Pathway Analysis of an Evolved Biomarker Signature

A common outcome of GA-based discovery is a signature implicating a coherent biological pathway. Below is a diagram for a hypothetical evolved signature related to PI3K-Akt-mTOR signaling, a frequent pathway in cancer biomarkers.

Title: PI3K-Akt-mTOR Pathway with GA-Identified Biomarkers

Evolutionary approaches, particularly Genetic Algorithms, offer a robust and flexible solution to the feature selection problem inherent in omics-based biomarker discovery. By optimizing for both predictive power and signature parsimony, GAs can identify biologically interpretable, translatable biomarker panels that outperform those derived from univariate filtering or standard multivariate methods. Integrating these protocols into systems biology research pipelines enhances the likelihood of discovering validated, clinically useful biomarkers.

This document details the application of Genetic Algorithm (GA) core components—chromosomes, genes, and fitness functions—within a thesis framework focused on biomarker identification for systems biology and drug development. GAs provide a robust computational method for navigating the high-dimensional, nonlinear search spaces typical of omics data (genomics, proteomics, metabolomics) to identify robust, multi-analyte biomarker signatures.

Core GA Components: Biological & Computational Analogies

Table 1: Mapping Between Biological and GA Components

| Biological Component | GA Computational Component | Function in Biomarker Identification |

|---|---|---|

| Chromosome | Candidate Solution | A single, complete set of proposed biomarkers (e.g., a combination of 20 genes/proteins). |

| Gene | Feature | An individual biological entity (e.g., expression level of gene BRCA1, concentration of protein IL-6). Represents a single parameter in the solution. |

| Allele | Parameter Value | The specific value or state of a feature (e.g., "overexpressed", "underexpressed", or a normalized numerical value). |

| Genome/Population | Solution Set | A collection of many candidate biomarker panels being evaluated in parallel. |

| Fitness (Biological) | Fitness Function | A quantitative metric evaluating the diagnostic, prognostic, or predictive utility of the candidate biomarker panel. |

| Selection | Selection Operator | Prioritizes high-performing biomarker panels for "reproduction" into the next generation. |

| Crossover | Recombination Operator | Combines subsets of biomarkers from two parent panels to create a novel offspring panel. |

| Mutation | Mutation Operator | Randomly adds, removes, or alters a biomarker within a panel to maintain diversity and explore new regions of the search space. |

Defining the Fitness Function in a Biological Context

The fitness function is the critical link between the computational algorithm and biological relevance. It must encapsulate the clinical or research objective.

Protocol 3.1: Constructing a Multi-Objective Fitness Function for Biomarker Identification

Objective: To evolve a biomarker panel that maximizes diagnostic accuracy while minimizing panel size and cost.

Materials: Normalized omics dataset (e.g., RNA-seq, mass spectrometry), clinical outcome labels, computational environment (Python/R).

Procedure:

- Encode Candidate Solution: Represent a chromosome as a binary vector of length N (total measured features), where '1' indicates inclusion of the feature in the panel.

- Train Predictive Model:

- Subset the full dataset to only include features marked '1' in the chromosome.

- Split data into training (70%) and validation (30%) sets using stratified sampling.

- Train a classifier (e.g., Support Vector Machine, Random Forest) on the training set.

- Calculate Fitness Score: Compute a composite score. Example:

Fitness = w1*AUC_ROC + w2*Accuracy + w3*(1 - Panel_Size/Max_Size)

where w1 + w2 + w3 = 1.0.

- AUC_ROC: Area Under the Receiver Operating Characteristic curve (validation set).

- Accuracy: Balanced accuracy (validation set).

- Panel_Size: Number of features in the panel.

- Weights (e.g., w1=0.5, w2=0.3, w3=0.2) are set by the researcher based on priority.

- Iterate: The GA will maximize this fitness score over generations.
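
The stratified 70/30 split and the weighted score from this procedure can be sketched as follows. This is an illustrative, standard-library-only version; the function names and the default seed are assumptions, and in practice a library routine for stratified splitting would typically be used instead.

```python
import random
from collections import defaultdict

def stratified_split(labels, train_fraction=0.7, seed=0):
    """Return (train_indices, validation_indices), split within each class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train, valid = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        cut = round(train_fraction * len(idxs))
        train.extend(idxs[:cut])
        valid.extend(idxs[cut:])
    return sorted(train), sorted(valid)

def weighted_fitness(auc_roc, balanced_acc, panel_size, max_size,
                     weights=(0.5, 0.3, 0.2)):
    """Fitness = w1*AUC_ROC + w2*Accuracy + w3*(1 - Panel_Size/Max_Size)."""
    w1, w2, w3 = weights
    if abs(w1 + w2 + w3 - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return w1 * auc_roc + w2 * balanced_acc + w3 * (1 - panel_size / max_size)
```

Splitting within each class keeps the case/control ratio the same in both sets, which matters when the validation metrics are later compared across candidate panels.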

Table 2: Common Fitness Metrics for Biomarker Discovery

| Metric | Formula / Description | Biological/Clinical Relevance |

|---|---|---|

| Area Under Curve (AUC) | Integral of the ROC curve. | Overall diagnostic power across all classification thresholds. |

| Balanced Accuracy | (Sensitivity + Specificity) / 2 | Robust performance metric for imbalanced datasets. |

| Positive Predictive Value (PPV) | True Positives / (True Positives + False Positives) | Probability that subjects with a positive test truly have the disease. |

| Cox Proportional Hazards p-value | p-value from univariate/multivariate Cox regression. | Association strength of the panel with patient survival time. |

| Panel Cost Score | Σ(CostperAssay for each selected biomarker) | Encourages economically viable biomarker translation. |
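
For reference, three of the metrics in the table can be computed from labels, hard predictions, and scores as in the sketch below. It assumes binary labels coded 1 (disease) and 0 (control); the AUC is computed with the pairwise (Mann-Whitney) formulation, which is simple but quadratic in sample count, and all function names are hypothetical.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels coded 1/0."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn

def balanced_accuracy(y_true, y_pred):
    """(Sensitivity + Specificity) / 2."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("both classes must be present")
    return (tp / (tp + fn) + tn / (tn + fp)) / 2

def ppv(y_true, y_pred):
    """True Positives / (True Positives + False Positives)."""
    tp, fp, _, _ = confusion_counts(y_true, y_pred)
    if tp + fp == 0:
        raise ValueError("no positive predictions")
    return tp / (tp + fp)

def auc_roc(y_true, scores):
    """Probability that a random positive outscores a random negative."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```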

Experimental Protocol: Applying GA for Proteomic Biomarker Discovery

Protocol 4.1: GA-Driven SRM/MRM Assay Development

Aim: To identify a minimal protein panel from a discovery-phase proteomics dataset that distinguishes metastatic from non-metastatic cancer.

Workflow Summary:

- Input Data: LC-MS/MS spectral library of ~500 differentially expressed candidate proteins.

- GA Initialization: Generate a population of 200 random chromosomes (binary vectors, length=500).

- Fitness Evaluation: For each chromosome (protein panel):

  a. Perform feature selection (if panel > 10 proteins, use LASSO).

  b. Train a logistic regression model.

  c. Compute Fitness = 0.7 * AUC + 0.3 * (1 - sqrt(Panel_Size/500)).

- GA Evolution: Run for 100 generations using tournament selection, uniform crossover (rate=0.8), and bit-flip mutation (rate=0.02).

- Output: Top 5 protein panels for in vitro validation using targeted mass spectrometry (SRM/MRM).
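
The square-root term in the fitness above penalizes growth of small panels more strongly than a linear term would. The sketch below compares the two forms; `linear_fitness` is a hypothetical comparison function added here and is not part of Protocol 4.1.

```python
import math

N_CANDIDATES = 500  # candidate proteins in the discovery dataset

def sqrt_fitness(auc, panel_size, n_candidates=N_CANDIDATES):
    """Fitness = 0.7*AUC + 0.3*(1 - sqrt(Panel_Size / n_candidates))."""
    return 0.7 * auc + 0.3 * (1 - math.sqrt(panel_size / n_candidates))

def linear_fitness(auc, panel_size, n_candidates=N_CANDIDATES):
    """Comparison only: the same score with a linear size penalty."""
    return 0.7 * auc + 0.3 * (1 - panel_size / n_candidates)

# Fitness lost when a panel grows from 5 to 20 proteins at fixed AUC.
sqrt_cost = sqrt_fitness(0.9, 5) - sqrt_fitness(0.9, 20)
linear_cost = linear_fitness(0.9, 5) - linear_fitness(0.9, 20)
```

At a fixed AUC, growing from 5 to 20 proteins costs 0.03 fitness units under the square-root form and 0.009 under the linear form, so the larger panel must gain roughly 0.043 AUC to break even in the first case and about 0.013 in the second.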

Diagram 1: GA Biomarker Discovery Workflow

Diagram 2: Chromosome Encoding for Biomarker Panels

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Platforms for GA-Informed Biomarker Validation

| Reagent / Platform | Function in Validation | Example Product/Supplier |

|---|---|---|

| PCR Assays (qRT-PCR) | Validate gene expression levels of mRNA biomarkers identified by GA from RNA-seq data. | TaqMan Assays (Thermo Fisher), SYBR Green (Bio-Rad). |

| ELISA Kits | Quantify concentration of candidate protein biomarkers in serum/plasma/tissue lysates. | DuoSet ELISA (R&D Systems), V-PLEX (Meso Scale Discovery). |

| Multiplex Immunoassay Panels | Simultaneously validate multiple protein biomarkers from a panel. | Luminex xMAP, Olink Explore, Antibody Arrays (RayBiotech). |

| SRM/MRM Assay Kits | High-specificity, quantitative mass spectrometry validation of proteomic biomarkers. | Pre-designed Assay Kits (Biognosys, SISCAPA). |

| IHC/IF Antibodies | Spatial validation of protein biomarkers in tissue sections; assess cellular localization. | Validated Primary Antibodies (Cell Signaling Technology, Abcam). |

| CRISPR/Cas9 Editing Tools | Functional validation of gene biomarkers via knockout in cell line models. | sgRNAs, Cas9 expression vectors (Horizon Discovery, Synthego). |

| Organoid/Co-culture Systems | Test biomarker relevance in a more physiologically relevant ex vivo model. | Matrigel, Defined Media Kits (STEMCELL Technologies). |

Application Notes

This document details protocols for integrating multi-omics data within a systems biology framework, specifically to generate optimized input feature sets for Genetic Algorithm (GA)-driven biomarker discovery pipelines. The core challenge addressed is the reduction of high-dimensional, heterogeneous biological data into coherent network-based features that guide GA fitness evaluation towards robust, biologically interpretable biomarker panels.

Key Application 1: Constructing a Multi-Omics Contextual Network for GA Feature Pruning

A priori biological knowledge is used to constrain the GA search space. Proteins or genes from transcriptomic (RNA-seq) and proteomic (LC-MS/MS) datasets are mapped onto integrated physical interaction (PPI) and signaling pathway databases. This creates a constrained network where only interactions supported by multiple data layers are retained. The GA is then initialized with candidate biomarkers (individuals) that are subgraphs of this constrained network, significantly improving convergence and biological relevance.

Key Application 2: Pathway Activity Scoring as a Fitness Function Component

A GA’s fitness function must evaluate candidate biomarker panels beyond simple statistical separation. Here, pathway dysregulation scores are calculated for each patient sample using multi-omics data. A candidate biomarker panel's fitness is augmented by its ability to stratify samples based on these pathway activities, ensuring selected markers have coherent biological impact. This integrates the "hallmarks of cancer" or disease-specific pathways directly into the computational search.

Key Quantitative Data Summary

Table 1: Exemplar Multi-Omics Dataset Dimensions for GA-based Discovery

| Data Layer | Technology | Typical Features (Pre-filter) | Common Post-Integration Features | Key Database for Integration |

|---|---|---|---|---|

| Genomics | WES | 20,000-25,000 genes | ~500 non-synonymous mutations | COSMIC, dbSNP |

| Transcriptomics | RNA-seq | ~60,000 transcripts | ~8,000 differentially expressed genes | STRING, KEGG, Reactome |

| Proteomics | TMT-LC-MS/MS | ~10,000 proteins | ~1,500 differentially abundant proteins | STRING, PhosphoSitePlus |

| Metabolomics | LC-MS | ~1,000 metabolites | ~150 significant metabolites | HMDB, KEGG |

Table 2: Impact of Network Integration on GA Performance Metrics

| GA Initialization Strategy | Mean Generations to Convergence | Biological Coherence Score* (1-10) | Validation AUC (Independent Cohort) |

|---|---|---|---|

| Random Feature Selection | 120 | 3.2 | 0.72 |

| PPI-Network Constrained | 85 | 7.8 | 0.81 |

| Multi-Omics Pathway Constrained | 65 | 8.5 | 0.89 |

*Expert-curated score based on known pathway membership and functional connectivity.

Experimental Protocols

Protocol 1: Construction of a Multi-Omics Integrated Network for GA Initialization

Objective: To generate a biologically constrained network from heterogeneous omics data for seeding the GA population.

Materials & Reagents:

- Multi-omics datasets (e.g., RNA-seq count matrix, proteomic abundance table).

- High-performance computing cluster or workstation (≥ 32GB RAM).

- Software: R (igraph, limma, clusterProfiler), Python (NetworkX, Pandas), Cytoscape for visualization.

Procedure:

- Differential Analysis: For each omics layer, perform condition-specific (e.g., Tumor vs. Normal) differential analysis. Retain features with FDR < 0.05 and |log2FC| > 1.

- Identifier Harmonization: Map all retained gene and protein features (e.g., Ensembl IDs, UniProt IDs) to canonical gene symbols using Bioconductor annotation packages or the UniProt API. Keep metabolite features under their HMDB IDs, since they have no gene symbol.

- Core Network Fetch: Query the STRING database (confidence score > 0.7) for physical interactions among all differentially expressed genes/proteins. Download the interaction list.

- Pathway Overlay: Use the Reactome or KEGG API to retrieve pathway membership for the differential features. Create a bipartite graph linking features to pathways.

- Network Integration: Merge the PPI network (Step 3) and the feature-pathway graph (Step 4) into a single heterogeneous network using graph union operations in `igraph`.

- Filter & Simplify: Extract the largest connected component. Collapse pathway nodes by retaining only those enriched (FDR < 0.01) in the differential features. The resulting network of molecular features is the "GA Search Network."

- GA Seeding: For the initial GA population, randomly sample connected subgraphs of size n (where n is the desired biomarker panel size) from the GA Search Network.
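
The GA seeding step can be illustrated with a simple neighbor-expansion sampler over an adjacency dictionary. This is a sketch under stated assumptions: the toy network and the function name are hypothetical, the graph is assumed undirected, and the procedure does not sample uniformly over all connected subgraphs (hub-adjacent nodes are favored), which may or may not be acceptable for a given study.

```python
import random

def sample_connected_subgraph(adjacency, size, rng):
    """Grow a connected node set by adding random neighbors of the current set."""
    start = rng.choice(sorted(adjacency))
    selected = {start}
    frontier = set(adjacency[start]) - selected
    while len(selected) < size and frontier:
        node = rng.choice(sorted(frontier))
        selected.add(node)
        frontier |= set(adjacency[node])
        frontier -= selected
    if len(selected) < size:
        raise ValueError("connected component smaller than requested size")
    return selected

# Toy undirected network; the edges are illustrative, not curated interactions.
toy_network = {
    "EGFR": ["PIK3CA", "GRB2"],
    "GRB2": ["EGFR"],
    "PIK3CA": ["EGFR", "AKT1", "PTEN"],
    "PTEN": ["PIK3CA"],
    "AKT1": ["PIK3CA", "MTOR"],
    "MTOR": ["AKT1"],
}

rng = random.Random(0)
seed_panels = [sample_connected_subgraph(toy_network, 4, rng) for _ in range(5)]
```

Each sampled node set would then be converted to a binary chromosome over the full feature list, so the standard crossover and mutation operators can still be applied.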

Protocol 2: Calculating Pathway Dysregulation Scores for GA Fitness Evaluation

Objective: To compute a sample-specific score representing the activity level of a canonical pathway, for integration into the GA fitness function.

Materials & Reagents:

- Normalized transcriptomic or proteomic abundance matrix (samples x features).

- Pre-defined gene sets (e.g., MSigDB Hallmarks, Reactome Pathways).

- Software: R (GSVA, singscore packages).

Procedure:

- Gene Set Preparation: Select relevant gene sets (e.g., "HALLMARK_INFLAMMATORY_RESPONSE," "REACTOME_APOPTOSIS"). Ensure gene identifiers match the abundance matrix.

- Single-Sample Scoring: Apply the Gene Set Variation Analysis (GSVA) algorithm to the abundance matrix using the selected gene sets.

- Score Matrix: The output `gsva_scores` is a matrix (pathways x samples). Each value represents the relative activity of a pathway in a single sample.

- Fitness Integration: For a candidate biomarker panel in the GA, define an additional fitness term. For example:

  Fitness_addon = abs(t-test_statistic(panel_predictions vs pathway_scores))

  This term rewards panels whose predictions track key biological processes; panels whose predictions are independent of pathway activity gain little from it.
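
One possible reading of the add-on term is sketched below: samples are grouped by the panel's predicted class, and a Welch t-statistic compares one pathway's scores between the two groups. This interpretation, the function names, and the choice of the Welch (unequal variance) form are assumptions made for illustration; the pathway scores are taken as given (for example, one row of the GSVA output).

```python
import math
from statistics import mean, variance

def welch_t(a, b):
    """Welch t-statistic for two samples with at least two values each."""
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    if se == 0:
        # Degenerate case: no within-group spread in either group.
        return 0.0 if mean(a) == mean(b) else float("inf")
    return (mean(a) - mean(b)) / se

def pathway_addon(predicted_labels, pathway_scores):
    """abs(t) of pathway scores between predicted-positive and predicted-negative samples."""
    pos = [s for lab, s in zip(predicted_labels, pathway_scores) if lab == 1]
    neg = [s for lab, s in zip(predicted_labels, pathway_scores) if lab == 0]
    if len(pos) < 2 or len(neg) < 2:
        return 0.0  # too few samples in a group to estimate variance
    return abs(welch_t(pos, neg))
```

Because an unbounded t-statistic can dominate a fitness function whose other terms lie between 0 and 1, it would usually be rescaled or capped before being added; the appropriate scaling is study-specific.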

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Multi-Omics Integration Workflows

| Item / Solution | Provider Example | Function in Workflow |

|---|---|---|

| TMTpro 16plex Label Reagent Set | Thermo Fisher Scientific | Multiplexed isobaric labeling for quantitative proteomics of up to 16 samples simultaneously, enabling cohort-wide profiling. |

| Chromium Single Cell 3' Reagent Kits | 10x Genomics | Enables generation of single-cell transcriptomic data, building cell-type-specific networks for refined biomarker discovery. |

| Human Phospho-Kinase Array Kit | R&D Systems | Multiplexed immunoblotting to profile activity/phosphorylation of key signaling pathway nodes, validating computational predictions. |

| Cell Signaling Pathway Antibody Sampler Kits | Cell Signaling Technology | Collections of validated antibodies for Western blot analysis of proteins in a specific pathway (e.g., AKT/mTOR, apoptosis). |

| Metabolon Discovery HD4 Platform | Metabolon | Standardized, global metabolomics profiling service, providing quantitative data for integration with other omics layers. |

| STRING Database & API | EMBL | Source of known and predicted protein-protein interactions, critical for building prior-knowledge networks. |

| Reactome Knowledgebase & API | OICR, NYU, EBI | Curated pathway database used for functional annotation and pathway activity analysis. |

Visualizations

Diagram 1: Multi-omics data integration workflow for GA biomarker discovery.

Diagram 2: Key PI3K-AKT-mTOR signaling pathway with common genomic alterations.

Within systems biology research for biomarker discovery, Genetic Algorithms (GAs) have evolved from niche optimization tools to critical components in deciphering high-dimensional omics data. This application note details current protocols leveraging GAs for identifying predictive biomarker panels and modeling therapeutic response within precision medicine initiatives.

Application Note 1: GA-Driven Multi-Omics Biomarker Panel Optimization

Objective: To identify a minimal, highly predictive biomarker panel from integrated transcriptomics and proteomics data for patient stratification in non-small cell lung cancer (NSCLC).

Background: The integration of disparate, high-dimensional data sources presents a combinatorial challenge. GAs efficiently navigate this search space to find optimal feature subsets that maximize predictive accuracy while minimizing panel size for clinical translation.

Table 1: Performance Metrics of GA-Optimized vs. Conventional Biomarker Panels

| Panel Type | Number of Features (Biomarkers) | Cross-Validated AUC (Mean ± SD) | Computational Time (Hours) |

|---|---|---|---|

| GA-Optimized Integrated Panel | 12 | 0.94 ± 0.03 | 4.5 |

| Transcriptomics-Only (T-test filter) | 48 | 0.87 ± 0.05 | 0.2 |

| Proteomics-Only (LASSO) | 32 | 0.89 ± 0.04 | 1.1 |

| Random Forest Feature Importance (Top 20) | 20 | 0.91 ± 0.04 | 3.8 |

Protocol 1: GA Workflow for Multi-Omics Feature Selection

- Data Preprocessing & Encoding: Independently normalize RNA-seq (FPKM) and mass spectrometry proteomics (log2 intensity) data from matched patient samples (n=250). Encode a candidate solution (chromosome) as a binary vector of length d (total unique features), where '1' indicates feature selection.

- Fitness Function Definition: Define fitness F as: F(S) = 0.7 * AUC(S) + 0.3 * (1 - (|S| / d)) where S is the selected feature subset, AUC(S) is the area under the ROC curve from a support vector machine (SVM) classifier using 5-fold cross-validation, and |S| is the subset size. This balances accuracy and parsimony.

- Algorithm Execution:

- Population: Initialize 100 random binary chromosomes.

- Selection: Perform tournament selection (size=3).

- Crossover: Apply uniform crossover with a probability of 0.8.

- Mutation: Apply bit-flip mutation with a probability of 0.01 per gene.

- Termination: Run for 100 generations or until fitness plateau (no improvement for 20 gens).

- Validation: Apply the final selected feature subset to a held-out independent validation cohort (n=80). Perform statistical analysis (e.g., Kaplan-Meier survival curves) based on GA-derived patient clusters.

Visualization 1: GA for Biomarker Discovery Workflow

Application Note 2: GA-Informed Boolean Network Modeling of Drug Response

Objective: To reconstruct a patient-specific Boolean network model of the PI3K/AKT/mTOR signaling pathway that predicts sensitivity to targeted inhibitors.

Background: GAs optimize the structure (logical rules) of Boolean networks to fit dynamic phosphoproteomics data, creating executable models for in silico drug testing.

Protocol 2: Calibrating Patient-Specific Logic Models with GAs

- Network Initialization: Define a prior knowledge network (PKN) with key signaling entities (nodes: e.g., EGFR, PI3K, AKT, mTOR, PTEN) and known activating/inhibiting interactions (edges).

- Rule Encoding & Fitness: Encode a chromosome as a concatenated string defining the Boolean logic rule (AND/OR/NOT combinations) for each node. Define fitness as the negative mean squared error between simulated node activity (after perturbation) and time-course phospho-protein data (RPPA) from primary tumor cells.

- GA Execution for Model Fitting:

- Use a steady-state GA with a population of 50 candidate rule sets.

- Employ two-point crossover (P=0.7) and a custom mutation operator that swaps logic gates (P=0.05).

- Introduce an elitism strategy, preserving the top 5 solutions each generation.

- In Silico Perturbation & Prediction: Run the top-fitted model with key nodes clamped (e.g., `mTOR = OFF`) to simulate drug action. Predict the phenotypic outcome (e.g., `Apoptosis = ON`). Validate predictions via in vitro dose-response assays in matched cell lines.
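
The simulation and fitness pieces of this protocol can be sketched with a small synchronous Boolean network. The rules below are deliberately simplified toy logic chosen so the example runs, not a validated model of PI3K/AKT/mTOR signaling; the function names and the use of a fixed step limit are also assumptions. In a GA, each chromosome would decode to one such rule set, and the negative mean squared error would serve as its fitness.

```python
def simulate(rules, initial_state, clamp=None, max_steps=10):
    """Synchronously update all nodes until a fixed point or max_steps.

    `clamp` holds nodes at fixed values, mimicking an inhibitor or stimulus.
    """
    clamp = clamp or {}
    state = {**initial_state, **clamp}
    for _ in range(max_steps):
        updated = {node: rule(state) for node, rule in rules.items()}
        new_state = {**state, **updated, **clamp}
        if new_state == state:
            break
        state = new_state
    return state

def neg_mse(simulated, measured):
    """Fitness: negative mean squared error over the measured nodes."""
    errors = [(float(simulated[n]) - measured[n]) ** 2 for n in measured]
    return -sum(errors) / len(errors)

# Toy rule set (illustrative only); EGFR and PTEN are inputs with no rule.
toy_rules = {
    "PI3K": lambda s: s["EGFR"] and not s["PTEN"],
    "AKT": lambda s: s["PI3K"],
    "mTOR": lambda s: s["AKT"],
    "Apoptosis": lambda s: not s["mTOR"],
}

start = {"EGFR": True, "PTEN": False, "PI3K": False,
         "AKT": False, "mTOR": False, "Apoptosis": False}

untreated = simulate(toy_rules, start)
treated = simulate(toy_rules, start, clamp={"mTOR": False})  # mTOR = OFF
```

In this toy model the untreated network settles with mTOR active and Apoptosis off, while clamping mTOR off switches Apoptosis on. Networks with feedback can cycle instead of reaching a fixed point, in which case the step limit simply returns the last state.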

Visualization 2: Boolean Network Calibration with GA

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for GA-Driven Biomarker Research

| Item | Function in Protocol | Example Vendor/Catalog |

|---|---|---|

| Multi-Omics Data Source | Provides integrated transcriptomic & proteomic input for GA feature selection. | TCGA (public), CPTAC (public), or commercial biobank datasets. |

| High-Throughput Sequencing Reagents | Generate transcriptomics input data (RNA-seq). | Illumina TruSeq Stranded mRNA Kit. |

| TMTpro 18-Plex Mass Tag Kit | Enables multiplexed, quantitative proteomics for cohort analysis. | Thermo Fisher Scientific, Cat# A44520. |

| Phospho-AKT (Ser473) ELISA Kit | Validates pathway activity predictions from Boolean network models. | Cell Signaling Technology, Cat# 7160. |

| Cell Viability Assay (ATP-based) | Measures in vitro drug response to validate GA model predictions. | Promega CellTiter-Glo, Cat# G7571. |

| GA/ML Software Library | Provides optimized algorithms for implementing custom fitness functions. | Python: DEAP, PyGAD; R: GA package. |

| Boolean Network Simulation Tool | Executes logic models for simulation and in silico perturbation. | PyBoolNet, CellCollective. |

GAs offer a practical computational strategy for precision medicine, enabling the distillation of complex biological data into actionable insights. By providing protocols for biomarker panel optimization and dynamic network modeling, they address the challenges of patient stratification and therapy prediction and can help translate systems biology research into clinical applications.

From Code to Biology: A Step-by-Step Workflow for Implementing Genetic Algorithms in Biomarker Studies

Application Notes

In the context of a Genetic Algorithm (GA) for biomarker discovery within systems biology, the initial and critical step is the accurate and efficient representation of complex biological entities—genes, proteins, and metabolites—as computational chromosomes. This encoding must preserve biological meaning while enabling evolutionary operators like crossover and mutation.

Key Challenges: Heterogeneity of data types (sequences, concentrations, network positions), varying scales, missing values, and high dimensionality.

Core Principles:

- Standardized Identifiers: Use curated databases (e.g., Ensembl for genes, UniProt for proteins, HMDB for metabolites) to map entities to unique IDs, ensuring reproducibility.

- Normalization: Apply techniques like Z-score or Min-Max scaling to concentration/expression data to prevent bias from magnitude differences.

- Dimensionality Pre-processing: Prior to chromosome encoding, techniques like Principal Component Analysis (PCA) or feature selection based on variance can reduce search space complexity for the GA.

- Chromosome Structure: A hybrid or multi-part chromosome is often required, with distinct segments for different entity types or feature representations.
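The normalization principle can be made concrete with a short sketch of Z-score and Min-Max scaling for a single feature measured across samples. The function names are illustrative, the Z-score here uses the population standard deviation, and constant features are mapped to zero by assumption.

```python
from statistics import mean, pstdev

def z_score(values):
    """Center to mean 0 and scale to unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # constant feature: no information to scale
    return [(v - mu) / sigma for v in values]

def min_max(values):
    """Rescale linearly so the smallest value maps to 0 and the largest to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]
```

Scaling parameters should be estimated on training samples only and then reused on validation samples, so that the GA's fitness evaluation is not informed by held-out data.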

Table 1: Common Data Types and Encoding Strategies for Biomarker Candidates

| Biological Entity | Primary Data Type | Typical Source | Recommended Encoding for GA Chromosome | Normalization Method |

|---|---|---|---|---|

| Gene | Expression Level (RNA-seq, microarray) | NCBI GEO, ArrayExpress | Real-valued vector (expression per sample) | TPM (Transcripts Per Million), then Z-score |

| Protein | Abundance (Mass Spectrometry) | PRIDE Archive | Real-valued vector (intensity per sample) | Log2 transformation, then Median Centering |

| Metabolite | Concentration (NMR, LC-MS) | MetaboLights | Real-valued vector (peak area per sample) | Pareto Scaling, Auto-scaling |

| Genetic Variant | SNP Presence/Absence | dbSNP, 1000 Genomes | Binary bit (0=ref, 1=alt) or integer (for dosage) | Not applicable |

| Pathway Membership | Binary / Participation Score | KEGG, Reactome | Binary string (1=member, 0=non-member) or weighted real value | Not applicable |

Table 2: Example Chromosome Encoding Schemes

| Scheme Name | Structure | Description | Best For |

|---|---|---|---|

| Simple Concatenated | `[Gene1][Gene2]...[Protein1][Protein2]...[Metab1]...` | All features encoded as real numbers and concatenated. | Small, homogeneous datasets. |

| Multi-Part (Segmented) | `[Gene Vector] \| [Protein Vector] \| [Metabolite Vector]` | Distinct chromosome segments for each data type. Allows type-specific genetic operators. | Integrative multi-omics studies. |

| Bitmask Selection | `[1001101011]` | Each bit represents inclusion (1) or exclusion (0) of a pre-defined biomarker candidate from a master list. | Large-scale screening and feature selection. |

| Weighted Graph-Based | `[Node_ID_1][Weight_1][Node_ID_2][Weight_2]...` | Represents a sub-network. Genes/proteins as nodes, interaction weights as alleles. | Network-based biomarker discovery. |
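The bitmask scheme in the table is the simplest to implement. The sketch below converts between a bit string and a named panel; the helper names and the five-gene master list used in the usage note are illustrative assumptions.

```python
def decode_bitmask(mask, master_list):
    """Return the names of candidates whose bit is set to 1."""
    if len(mask) != len(master_list):
        raise ValueError("mask and master list must have the same length")
    return [name for bit, name in zip(mask, master_list) if bit == 1]

def encode_panel(panel, master_list):
    """Return the bitmask that selects exactly the candidates named in `panel`."""
    selected = set(panel)
    return [1 if name in selected else 0 for name in master_list]
```

For example, with a master list `["TP53", "AKT1", "MYC", "EGFR", "CTNNB1"]`, the mask `[1, 0, 0, 1, 0]` decodes to the panel `["TP53", "EGFR"]`. Because the master list fixes the bit order, it must be stored alongside any saved chromosome.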

Experimental Protocols

Protocol 1: Pre-processing RNA-Seq Data for GA Encoding

Objective: Transform raw RNA-Seq count data into a normalized real-valued vector suitable for a GA chromosome.

Materials: High-performance computing environment (e.g., Linux server), R/Python, raw FASTQ or count matrix data.

Procedure:

- Quality Control & Alignment: Use FastQC for quality assessment. Align reads to a reference genome (e.g., GRCh38) using STAR aligner.

- Generate Count Matrix: Use featureCounts to summarize gene-level counts.

- Normalization: Load count matrix into R using `DESeq2` or `edgeR`.

- Apply `varianceStabilizingTransformation` (DESeq2) or calculate logCPM (edgeR) to stabilize variance across the mean.

- Gene Identifier Mapping: Use `biomaRt` (R) or `mygene` (Python) to map Ensembl IDs to official gene symbols. Resolve duplicates by keeping the highest expressed variant.

- Final Vector Creation: For each sample, the chromosome segment is a vector V = [N_gene1, N_gene2, ..., N_geneN], where N is the normalized, transformed expression value. This vector constitutes the "gene expression" segment of the GA chromosome for that sample or population.

Protocol 2: Encoding a Multi-Omics Biomarker Panel as a Segmented Chromosome

Objective: Create a unified chromosome representing a candidate biomarker panel derived from transcriptomic, proteomic, and metabolomic assays on the same cohort.

Materials: Normalized datasets (as per Protocol 1 for RNA-seq, with analogous steps for proteomics/metabolomics), a master list of integrated features.

Procedure:

- Feature Selection (Pre-GA): Perform univariate statistical testing (t-test, ANOVA) on each omics dataset separately to identify top k candidate features (e.g., genes, proteins, metabolites) associated with the phenotype. Combine to form a master list of M features.

- Segmented Encoding:

- Segment 1 (Genes): Extract normalized expression values for the selected k_genes from the RNA-seq matrix. Encode as a real-valued vector of length k_genes.

- Segment 2 (Proteins): Extract normalized abundance values for the selected k_proteins. Encode as a real-valued vector.

- Segment 3 (Metabolites): Extract normalized concentrations for the selected k_metabolites. Encode as a real-valued vector.

- Chromosome Assembly: Concatenate the three segments into a single chromosome: Chromosome = [Segment1][Segment2][Segment3].

- GA Initialization: A population of such chromosomes is created, where each chromosome represents a potential multi-omics biomarker signature, with values randomly perturbed within biologically plausible ranges around the mean observed value.
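The segmented encoding and a type-specific operator can be sketched as follows. This is one possible reading of the protocol: `assemble` records where each segment sits in the flat chromosome, and `segment_crossover` applies one-point crossover separately inside each segment so that gene, protein, and metabolite blocks never mix. Both helper names and the choice of one-point crossover are assumptions.

```python
import random

def assemble(segments):
    """Concatenate named segments; return the flat chromosome and each segment's slice."""
    chromosome, bounds, start = [], {}, 0
    for name, values in segments.items():
        chromosome.extend(values)
        bounds[name] = slice(start, start + len(values))
        start += len(values)
    return chromosome, bounds

def segment_crossover(parent_a, parent_b, bounds, rng=random):
    """One-point crossover applied independently within each segment."""
    child_a, child_b = list(parent_a), list(parent_b)
    for seg in bounds.values():
        length = seg.stop - seg.start
        if length < 2:
            continue  # nothing to exchange in a single-value segment
        cut = seg.start + rng.randint(1, length - 1)
        child_a[cut:seg.stop] = parent_b[cut:seg.stop]
        child_b[cut:seg.stop] = parent_a[cut:seg.stop]
    return child_a, child_b
```

Calling `assemble({"genes": [...], "proteins": [...], "metabolites": [...]})` yields the concatenated chromosome together with the slice boundaries that later operators can use.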

Visualization

Title: Biomarker Data Encoding for Genetic Algorithm Workflow

Title: Multi-Part Chromosome Structure with Crossover Point

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Multi-Omics Data Generation

| Item | Function in Biomarker Discovery | Example Product/Kit |

|---|---|---|

| Total RNA Isolation Kit | Extracts high-quality, intact RNA from tissue or biofluids for transcriptomic profiling (RNA-seq). | Qiagen RNeasy Mini Kit, TRIzol Reagent. |

| Protein Lysis Buffer | Efficiently lyses cells/tissues while maintaining protein integrity and activity for downstream mass spectrometry. | RIPA Buffer with protease/phosphatase inhibitors. |

| Metabolite Extraction Solvent | Quenches metabolism and extracts a broad range of polar and non-polar metabolites for LC-MS/NMR. | 80% Methanol/Water (v/v, -20°C). |

| Next-Generation Sequencing Library Prep Kit | Prepares RNA or DNA libraries for sequencing, enabling gene expression or variant detection. | Illumina TruSeq Stranded mRNA Kit. |

| Isobaric Label Reagents (TMT/iTRAQ) | Allows multiplexed quantitative proteomics by labeling proteins from different samples with mass tags. | Thermo Scientific TMTpro 16plex. |

| Internal Standard Mix for Metabolomics | A set of stable isotope-labeled metabolites added to samples for normalization and quantification in MS. | Cambridge Isotope Laboratories MSK-CUSTOM. |

| Quality Control Reference Sample | A pooled sample from all study groups run repeatedly to monitor instrument performance and data reproducibility. | Commercially available human reference plasma (e.g., NIST SRM 1950). |

Within Genetic Algorithm (GA) frameworks for biomarker discovery, the fitness function is the critical optimization engine. It must quantitatively evaluate candidate biomarker panels against a triad of often-competing criteria: robust statistical performance, mechanistic biological relevance, and tangible clinical utility. Failure to balance these elements results in panels that are either statistically overfit, biologically uninterpretable, or clinically impractical. This protocol details the construction and implementation of a multi-objective fitness function for GA-driven biomarker identification in systems biology.

Core Fitness Function Components & Quantitative Benchmarks

A weighted multi-objective function is recommended: F = w₁S + w₂B + w₃C, where S=Statistical Power, B=Biological Relevance, and C=Clinical Utility. Weights (w₁, w₂, w₃) are tuned per project goals.

Table 1: Quantitative Metrics for Fitness Function Components

| Component | Primary Metrics | Target Benchmarks (Typical) | Measurement Protocol |

|---|---|---|---|

| Statistical Power (S) | AUC-ROC; Matthews Correlation Coefficient (MCC); p-value (corrected). | AUC > 0.85; MCC > 0.6; p < 0.01. | See Protocol 3.1. |

| Biological Relevance (B) | Pathway enrichment score; Protein-protein interaction density; Known gene-disease association score. | Enrichment FDR < 0.05; PPI density > 75th percentile. | See Protocol 3.2. |

| Clinical Utility (C) | Assay cost index; Analytical time score; FDA/EMA biomarker classification alignment. | Cost < \$500/sample; Turnaround < 8hrs. | See Protocol 3.3. |
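The weighted sum F = w₁S + w₂B + w₃C is straightforward to express in code. The sketch below assumes that S, B, and C have already been scaled to [0, 1] and that the weights sum to 1; both conventions, the function name, and the default weights are assumptions rather than requirements stated in the text.

```python
def weighted_fitness(s, b, c, weights=(0.5, 0.3, 0.2)):
    """Combine statistical (s), biological (b), and clinical (c) scores into one value."""
    w1, w2, w3 = weights
    if abs(w1 + w2 + w3 - 1.0) > 1e-9:
        raise ValueError("weights are assumed to sum to 1")
    for score in (s, b, c):
        if not 0.0 <= score <= 1.0:
            raise ValueError("component scores are assumed to lie in [0, 1]")
    return w1 * s + w2 * b + w3 * c
```

Keeping the components on a common scale makes the weights interpretable as relative priorities. A project focused on early discovery might raise w₁, while one approaching clinical deployment might raise w₃.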

Detailed Experimental & Computational Protocols

Protocol 3.1: Assessing Statistical Power

Objective: Quantify the diagnostic/prognostic performance of a candidate biomarker panel. Materials: Hold-out validation cohort dataset (RNA-seq, proteomics, etc.), clinical phenotype labels. Procedure:

- Model Training: Train a classifier (e.g., Random Forest, SVM) using the candidate biomarkers on the training set.

- Validation: Apply the model to the independent hold-out validation set.

- Performance Calculation:

- Calculate AUC-ROC using the `pROC` R package or `scikit-learn` in Python.

- Compute MCC from the confusion matrix: MCC = (TP×TN - FP×FN) / √((TP+FP)(TP+FN)(TN+FP)(TN+FN)).

- Perform permutation testing (1000 iterations) to obtain a false-discovery-rate (FDR) corrected p-value for the observed AUC.

- Score Integration: Convert AUC, MCC, and -log10(FDR) to Z-scores and combine into a composite S score.
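The performance calculations in Protocol 3.1 can be illustrated without external libraries. The sketch below computes MCC from the formula given above, AUC as the fraction of positive/negative pairs ranked correctly, and an empirical permutation p-value for the AUC. It returns the raw p-value; any FDR correction across multiple candidate panels would be a separate step. Function names are illustrative, and in practice `pROC` or `scikit-learn` would normally be used.

```python
import math
import random

def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient; returns 0.0 when the denominator is zero."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0

def auc_roc(labels, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def permutation_p_value(labels, scores, n_perm=1000, seed=0):
    """Share of label shufflings whose AUC is at least as large as the observed AUC."""
    rng = random.Random(seed)
    observed = auc_roc(labels, scores)
    shuffled = list(labels)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if auc_roc(shuffled, scores) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)  # add-one correction avoids p = 0
```

Shuffling labels preserves the class balance, so the null distribution reflects the same prevalence as the validation cohort.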

Protocol 3.2: Assessing Biological Relevance

Objective: Evaluate the mechanistic plausibility of the biomarker panel. Materials: Candidate gene/protein list, pathway databases (KEGG, Reactome), PPI networks (STRING, BioGRID). Procedure:

- Pathway Enrichment Analysis:

- Use `clusterProfiler` (R) or the `g:Profiler` API to test for over-representation in curated pathways.

- Record the combined enrichment score (-log10(FDR) × enrichment ratio) for the top 3 significant pathways.

- Network Coherence Analysis:

- Submit the candidate list to the STRING DB API to retrieve interaction scores.

- Calculate the PPI density: (observed interactions) / (possible interactions) within the list.

- Compare this density to 1000 randomly drawn same-sized lists from the background genome to obtain a percentile rank.

- Score Integration: Combine normalized enrichment score and PPI density percentile into a composite B score.
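The network coherence step reduces to two small computations: the PPI density of the candidate list, and its percentile rank against random same-sized lists. The sketch below assumes interactions have already been retrieved (for example from STRING) and stored as a set of `frozenset` pairs; that data layout and the function names are assumptions.

```python
import random
from itertools import combinations

def ppi_density(genes, interactions):
    """Observed interactions divided by possible pairs within the gene list."""
    genes = list(genes)
    possible = len(genes) * (len(genes) - 1) // 2
    if possible == 0:
        return 0.0
    observed = sum(
        1 for a, b in combinations(genes, 2) if frozenset((a, b)) in interactions
    )
    return observed / possible

def density_percentile(genes, background, interactions, n_random=1000, seed=0):
    """Percentage of random same-sized lists whose density does not exceed the observed one."""
    rng = random.Random(seed)
    observed = ppi_density(genes, interactions)
    k = len(genes)
    null = [
        ppi_density(rng.sample(background, k), interactions) for _ in range(n_random)
    ]
    return 100.0 * sum(d <= observed for d in null) / n_random
```

Here `background` is a list of all assayed genes, matching the background used for enrichment analysis so that both components of B are judged against the same universe.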

Protocol 3.3: Assessing Clinical Utility

Objective: Gauge the translational feasibility of the biomarker panel. Materials: Assay cost models, regulatory guideline documents (FDA-NIH Biomarker Working Group BEST definitions). Procedure:

- Assay Feasibility Scoring:

- Map each biomarker to a detection modality (e.g., ELISA, qPCR, LC-MS/MS).

- Using a predefined cost matrix, calculate a total estimated cost per sample.

- Assign a cost score: Cost Score = 1 - (cost / costmax) where costmax is a threshold (e.g., \$1000).

- Regulatory Alignment Check:

- Classify the primary intended use of the panel (e.g., Diagnostic, Prognostic, Predictive, Safety).

- Verify alignment with BEST definitions. Assign a three-level score (1 for full alignment, 0.5 for partial, 0 for misalignment).

- Turnaround Time Estimate: Based on the chosen assay platform, estimate total hands-on time. Generate a time score similar to the cost score.

- Score Integration: Compute a weighted average of Cost Score, Time Score, and Regulatory Score to yield C.
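A compact sketch of the clinical utility score follows. It uses the cost formula from the protocol (1 - cost / costmax) and applies the same form to turnaround time. Flooring scores at zero, the 24-hour time ceiling, and the default weights are assumptions introduced for illustration.

```python
def capped_score(value, maximum):
    """1 - value/maximum, floored at 0 for values above the threshold (assumption)."""
    return max(0.0, 1.0 - value / maximum)

def clinical_utility(cost, hours, regulatory, cost_max=1000.0, hours_max=24.0,
                     weights=(0.4, 0.3, 0.3)):
    """Weighted average of cost, time, and regulatory alignment scores."""
    if regulatory not in (0.0, 0.5, 1.0):
        raise ValueError("regulatory score must be 0, 0.5, or 1")
    parts = (capped_score(cost, cost_max), capped_score(hours, hours_max), regulatory)
    return sum(w * p for w, p in zip(weights, parts)) / sum(weights)
```

For a panel costing \$500 per sample with a 6-hour turnaround and full regulatory alignment, the three component scores are 0.5, 0.75, and 1.0 before weighting.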

Visualization of the Fitness Evaluation Workflow

Title: Fitness Function Evaluation Workflow for Biomarker Panels

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Resources for Fitness Function Implementation

| Item / Resource | Function in Protocol | Example Product / Database |

|---|---|---|

| Validation Cohort Biospecimens | Independent sample set for unbiased statistical validation (Protocol 3.1). | Commercial Biobanks (e.g., Discovery Life Sciences), INDI/ADNI for neuro. |

| Pathway Analysis Software | Perform over-representation and gene set enrichment analysis (Protocol 3.2). | clusterProfiler (R), g:Profiler (web), Ingenuity Pathway Analysis (IPA). |

| Protein-Protein Interaction Database | Retrieve network data for coherence scoring (Protocol 3.2). | STRING database, BioGRID, Human Protein Reference Database (HPRD). |

| Clinical Assay Cost Model Matrix | Pre-built spreadsheet mapping biomarkers to assay costs and timelines (Protocol 3.3). | Custom-built based on vendor quotes (e.g., Thermo Fisher, Roche, Qiagen). |

| BEST (Biomarkers, EndpointS, Tools) Glossary | Reference for consistent biomarker classification and regulatory alignment (Protocol 3.3). | FDA-NIH Biomarker Working Group "BEST" Resource. |

| Multi-Objective Optimization Library | Algorithmic implementation of the weighted fitness function and GA. | DEAP (Python), GA (R package), custom Python/Matlab scripts. |

Application Notes

In GA-based biomarker identification for systems biology, the selection, crossover, and mutation operators must be tailored to the particular challenges of biological feature sets. These datasets are characterized by high dimensionality, small sample sizes (n << p), significant noise, and complex, non-linear interactions among features (e.g., genes, proteins, metabolites).

Key Challenges & Tailored Solutions:

- High Dimensionality & Sparsity: Standard operators risk losing critical, weakly expressed but informative features. Solutions include fitness-aware operators and specialized encodings.

- Epistasis & Redundancy: Biological features often function in pathways. Operators must preserve potentially useful combinations of features that exhibit synergistic effects.

- Interpretability & Biological Relevance: The final feature subset must be biologically interpretable, not just statistically predictive. Operators should integrate pathway or protein-protein interaction knowledge.

Data Presentation

Table 1: Comparison of Tailored GA Operators for Biological Feature Selection

| Operator Type | Standard Form | Tailored Form for Biological Features | Rationale & Impact on Biomarker Discovery |

|---|---|---|---|

| Selection | Roulette Wheel, Tournament | Elitist-Conscious Ranked Selection: Combines strict elitism (top 10-15% pass automatically) with ranked selection for the rest. | Preserves high-fitness candidates (potentially optimal biomarker panels) from generation to generation, accelerating convergence in a noisy search space. |

| Crossover | Single-point, Uniform | Mask-Based Crossover with Interaction Preservation: Uses a randomly generated mask to swap feature blocks. Weighted towards preserving features co-expressed in known pathways (e.g., KEGG, Reactome). | Increases the probability that biologically relevant feature combinations (e.g., genes in a signaling cascade) are inherited together, promoting more interpretable solutions. |

| Mutation | Bit-flip (fixed prob.) | Adaptive, Two-Tier Mutation: 1) Global Adaptive Rate: Decreases as generations increase. 2) Feature-Specific Toggle: Lower probability for features in high-scoring pathways; higher for isolated features with moderate importance. | Balances exploration and exploitation. Helps escape local optima early while fine-tuning promising biomarker sets later. Respects biological module structure. |

| Fitness Function | Classification Accuracy | Composite Fitness: `F = α*(AUC) + β*(1 - \|S\|/N) + γ*(Pathway Enrichment Score)`, where α, β, γ are weights, \|S\| is subset size, and N is total features. | Explicitly optimizes for predictive power (AUC), parsimony, and biological coherence simultaneously, leading to more robust and translatable biomarker signatures. |

Experimental Protocols

Protocol 1: Implementing and Testing a Tailored GA for Transcriptomic Biomarker Discovery

Objective: To identify a minimal, biologically coherent gene signature predictive of metastatic progression in breast cancer RNA-seq data.

Materials & Input Data:

- Dataset: TCGA-BRCA RNA-seq dataset (normalized counts) with metastatic relapse labels.

- Pathway Database: Curated KEGG signaling pathways (e.g., PI3K-Akt, p53 signaling) as an adjacency matrix.

- Pre-processing: Variance-stabilizing transformation, removal of low-count genes.

Procedure:

- Encoding: Initialize a population of 100 individuals. Each individual is a binary vector of length N (all genes), where '1' indicates the gene is selected.

- Fitness Evaluation: For each individual (gene subset):

a. Perform 5-fold cross-validation using a Support Vector Machine (SVM) classifier. Calculate the mean AUC.

b. Calculate parsimony term: 1 - (subset size / 500).

c. Calculate Pathway Enrichment Score using a hypergeometric test against the KEGG matrix.

d. Compute composite fitness: F = 0.7*AUC + 0.2*Parsimony + 0.1*Enrichment.

- Selection: Select the top 10 individuals as elites. Use ranked selection (linear ranking) to choose 90 parents from the entire population for breeding.

- Crossover: Pair parents randomly. For each pair, generate a crossover mask. If two '1's in the mask correspond to genes known to interact in the pathway database, extend the mask to include the entire interacting partner set with 80% probability. Perform uniform crossover using the modified mask.

- Mutation: Apply adaptive mutation. Initial mutation rate = 0.05, decaying by 5% per generation. If a gene selected for mutation belongs to a pathway highly enriched in the current population, its mutation probability is halved.

- Replacement: Form the new generation from the 10 elites and 90 offspring. Run for 100 generations.

- Validation: Take the final best gene set and evaluate its performance on a held-out validation set (e.g., METABRIC dataset).
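Several pieces of Protocol 1 can be sketched compactly: the composite fitness, the decaying mutation rate, pathway-aware bit-flip mutation, and linear ranking selection. The sketch reads "decaying by 5% per generation" as multiplicative decay and assumes the enrichment score has been scaled to [0, 1]; both readings, and all function names, are assumptions.

```python
import random

def tailored_fitness(auc, subset_size, enrichment, size_cap=500):
    """F = 0.7*AUC + 0.2*Parsimony + 0.1*Enrichment, with Parsimony = 1 - size/size_cap."""
    parsimony = 1 - subset_size / size_cap
    return 0.7 * auc + 0.2 * parsimony + 0.1 * enrichment

def mutation_rate(generation, initial=0.05, decay=0.05):
    """Mutation rate after a multiplicative 5% decay per generation (assumed reading)."""
    return initial * (1 - decay) ** generation

def adaptive_mutation(individual, generation, protected, rng=random):
    """Bit-flip mutation; gene indices in `protected` mutate at half the current rate."""
    rate = mutation_rate(generation)
    mutated = []
    for i, gene in enumerate(individual):
        p = rate / 2 if i in protected else rate
        mutated.append(1 - gene if rng.random() < p else gene)
    return mutated

def linear_rank_selection(population, fitnesses, n_parents, rng=random):
    """Draw parents with probability proportional to rank (worst = 1, best = population size)."""
    order = sorted(range(len(population)), key=lambda i: fitnesses[i])
    ranks = list(range(1, len(order) + 1))
    chosen = rng.choices(order, weights=ranks, k=n_parents)
    return [population[i] for i in chosen]
```

Note that with a chromosome spanning all genes, a subset larger than 500 makes the parsimony term negative, which acts as a penalty rather than an error in this sketch.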

Mandatory Visualization

Diagram Title: Tailored Genetic Algorithm Workflow for Biomarker ID

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Implementing Tailored GA Biomarker Discovery

| Item | Function in the Protocol | Example Product/Resource |

|---|---|---|

| High-Dimensional Omics Data | The core input for feature selection. Provides the quantitative feature matrix (genes, proteins, etc.). | TCGA/ GEO Datasets (Public Repositories), In-house RNA-seq/ Proteomics Data. |

| Biological Network Database | Provides prior knowledge on feature interactions (e.g., pathways, PPI) to guide crossover and mutation. | KEGG, Reactome, STRING, MSigDB. Used to create the interaction mask. |

| Machine Learning Library | Enables the fitness evaluation via model training and validation (e.g., calculating AUC). | scikit-learn (Python), caret (R). For implementing the SVM/classifier in cross-validation. |

| High-Performance Computing (HPC) Cluster or Cloud Service | Facilitates the computationally intensive evaluation of thousands of candidate subsets across generations. | AWS EC2, Google Cloud Compute Engine, SLURM-based HPC cluster. |

| Specialized GA/Evolutionary Computation Framework | Provides the foundation for implementing custom selection, crossover, and mutation operators. | DEAP (Python), GA (R package), custom Python code using NumPy. |

Application Notes

The integration of multi-omics data with Genetic Algorithms (GAs) provides a powerful, non-hypothesis-driven approach for identifying robust biomarker panels. This step moves beyond theoretical optimization to solve pressing challenges in translational medicine.

Case Study 1: Breast Cancer Subtyping via Transcriptomic Data

GAs can outperform conventional clustering methods by simultaneously selecting gene subsets and optimizing cluster boundaries. A recent application analyzed TCGA RNA-seq data to redefine subtypes beyond the classic PAM50 classification. The GA-identified 75-gene signature stratified patients into groups with significant differences in 5-year overall survival, uncovering a high-risk subgroup within the traditionally "low-risk" Luminal A cohort. This allows for more personalized adjuvant therapy decisions.

Case Study 2: Prognosis in Alzheimer’s Disease Using Proteomic & Imaging Data

Predicting progression from Mild Cognitive Impairment (MCI) to Alzheimer’s Disease (AD) is critical. A GA-based model integrated CSF proteomics (e.g., Aβ42, p-tau) and MRI hippocampal volumetry. The algorithm identified a minimal panel of 6 biomarkers, which, when combined into a weighted score, yielded a superior prognostic AUC compared to clinical assessments alone. This facilitates earlier intervention and cohort enrichment for clinical trials.

Case Study 3: Predicting Response to EGFR Inhibitors in Lung Cancer

Resistance to EGFR tyrosine kinase inhibitors (e.g., Osimertinib) remains a hurdle. A GA was applied to genomic mutation data and baseline clinical variables from patients. The evolved rule set highlighted co-mutations in TP53 and specific tumor mutational burden (TMB) ranges as key negative predictors of progression-free survival (PFS). This model is being validated prospectively to guide combination therapy strategies.

Quantitative Data Summary

Table 1: Performance Metrics of GA-Driven Biomarker Models Across Case Studies

| Case Study | Data Type | GA-Identified Panel Size | Key Performance Metric | Comparative Advantage (vs. Standard) |

|---|---|---|---|---|

| Breast Cancer Subtyping | RNA-seq (TCGA) | 75 genes | Hazard Ratio (HR) = 2.3 [95% CI: 1.7-3.1] for high-risk group | Identified high-risk subset within Luminal A (PAM50 missed) |

| AD Prognosis | CSF Proteomics, MRI | 6 biomarkers | AUC = 0.89 for MCI-to-AD conversion | Outperformed clinical score (AUC=0.72) |

| EGFRi Response | WGS, Clinical Vars | 3-feature rule set | Median PFS: 16 vs. 8 months (Predicted Sensitive vs. Resistant) | Integrated TP53 status and TMB into actionable rule |

Experimental Protocols

Protocol 1: GA for Multi-Omic Cancer Subtype Discovery

Objective: To identify a minimal gene expression signature for novel cancer subtyping.

- Data Preprocessing: Download RNA-seq (FPKM) data and clinical survival metadata from a repository (e.g., TCGA). Perform log2 transformation, quantile normalization, and batch correction.

- GA Initialization: Encode a chromosome as a binary vector of length N (total genes), where '1' indicates gene selection. Initialize a population of 200 random chromosomes.

- Fitness Evaluation: For each chromosome (gene subset):

- Perform k-means clustering (k=4) on patients using the selected genes.

- Calculate the fitness function: F = C-index (survival) + (1 - Davies-Bouldin Index) - (λ * number of selected genes). Optimize for prognostic separation and cluster compactness.

- Evolution: Run for 1000 generations. Apply tournament selection, uniform crossover (rate=0.8), and bit-flip mutation (rate=0.01).

- Validation: Apply the final gene panel to an independent validation cohort (e.g., METABRIC). Confirm subtype reproducibility and survival differences using Kaplan-Meier log-rank test.
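Two elements of this protocol lend themselves to a short sketch: the subtype fitness formula and tournament selection. The fitness helper takes a precomputed C-index and Davies-Bouldin index rather than computing them, and the penalty λ = 0.001 and tournament size k = 3 are illustrative assumptions, since the protocol does not specify them.

```python
import random

def subtype_fitness(c_index, db_index, n_genes, lam=0.001):
    """F = C-index + (1 - Davies-Bouldin index) - lam * number of selected genes."""
    return c_index + (1 - db_index) - lam * n_genes

def tournament_select(population, fitnesses, k=3, rng=random):
    """Pick k distinct individuals at random and return the fittest of them."""
    contenders = rng.sample(range(len(population)), k)
    winner = max(contenders, key=lambda i: fitnesses[i])
    return population[winner]
```

Larger tournaments increase selection pressure. The Davies-Bouldin index is unbounded above, so poorly separated clusterings can push the middle term below zero.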

Protocol 2: GA for Integrative Prognostic Biomarker Panel Identification

Objective: To derive a weighted prognostic score from heterogeneous data types.

- Feature Cohort Assembly: For each patient, collate continuous (CSF protein levels, imaging measures) and categorical (APOE ε4 status) data into a unified feature matrix. Handle missing values using KNN imputation.

- Chromosome Encoding: Use a real-valued encoding where each gene represents the weight of a specific biomarker. Include an additional gene for the score threshold.

- Fitness Function: The fitness is the Area Under the ROC Curve (AUC) for predicting the clinical endpoint (e.g., AD conversion in 24 months) using the simple rule: IF (weighted sum of selected biomarkers > threshold) THEN "Progressor".

- GA Execution: Evolve a population of 500 individuals for 500 generations using rank-based selection, simulated binary crossover, and polynomial mutation.

- Panel Finalization: Select the highest-fitness chromosome. Retain only biomarkers with an absolute weight > 0.1. Recalculate the optimal threshold on the training set.
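The decision rule and the panel finalization step can be illustrated as below. The biomarker names and weights in the usage note are hypothetical, features are assumed to be standardized already, and the "Stable" label for non-progressors is an assumption (the protocol names only "Progressor").

```python
def prune_panel(weights, min_abs_weight=0.1):
    """Keep only biomarkers whose absolute weight exceeds the cutoff."""
    return {name: w for name, w in weights.items() if abs(w) > min_abs_weight}

def classify(features, weights, threshold):
    """Apply the rule: weighted sum of selected biomarkers > threshold means 'Progressor'."""
    score = sum(weights[name] * features[name] for name in weights)
    return "Progressor" if score > threshold else "Stable"
```

For example, pruning `{"p_tau": 0.8, "abeta42": -0.6, "hippocampal_volume": -0.05}` drops the last entry, and a patient with `p_tau = 1.2` and `abeta42 = -0.5` then scores 1.26 against the threshold. After pruning, the threshold should be re-estimated because the score distribution shifts.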

Visualizations

GA Biomarker Discovery Workflow

GA Links Biomarkers to Drug Response

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Biomarker Validation

| Reagent / Material | Function in Validation Pipeline |

|---|---|

| Multiplex Immunoassay Panels (e.g., Olink, MSD) | Validates protein biomarker candidates from discovery phases in serum/CSF with high sensitivity and minimal sample volume. |

| Targeted RNA-seq Panels (e.g., Illumina TruSeq) | Enables cost-effective, deep sequencing of GA-identified RNA biomarker panels across large patient cohorts. |

| CRISPR Screening Libraries (e.g., Kinome-wide) | Functionally validates the role of candidate genetic biomarkers in disease-relevant cellular models of drug response/resistance. |

| Digital PCR Assays (ddPCR) | Provides absolute quantification of low-abundance transcriptional biomarkers or circulating tumor DNA with high precision for clinical translation. |

| Patient-Derived Organoid (PDO) Models | Serves as an ex vivo platform to test drug response predictions generated by the GA model on living patient-derived tissue. |

| Cloud Computing Credits (AWS, GCP) | Essential for running computationally intensive GA iterations on large, multi-omic datasets without local infrastructure limits. |

Application Notes

The integration of Genetic Algorithm (GA)-derived biomarker panels into downstream biological interpretation is a critical validation step in a systems biology study. This phase translates computationally identified features (e.g., gene/protein expression levels) into actionable biological insights, connecting algorithmic performance to mechanistic understanding. The primary challenge lies in overcoming the "black box" nature of GA outputs by rigorously testing their functional coherence and relevance to disease pathophysiology.

Successful integration requires a multi-layered analytical approach. First, the feature panel must be mapped onto established biological databases to identify over-represented pathways and functions. Subsequently, constructing interaction networks reveals the relational context of biomarkers, distinguishing between central drivers and peripheral correlates. This process not only validates the GA results but also generates novel hypotheses for experimental follow-up, creating a closed-loop between computation and wet-lab research. For drug development professionals, this step is paramount for prioritizing targets and understanding potential mechanism-of-action or off-target effects.

Protocols

Protocol 1: Functional Enrichment Analysis of GA-Derived Biomarker Panels

Objective: To identify statistically over-represented biological pathways, Gene Ontology (GO) terms, and disease associations within the feature panel identified by the Genetic Algorithm.

Materials:

- GA-optimized feature list (e.g., 150 gene Entrez IDs).

- High-performance computing workstation with R (v4.3+) or Python 3.10+.

- Enrichment analysis software: clusterProfiler R package or g:Profiler web tool/g:Profiler API.

Procedure:

- Data Preparation: Format the GA feature list as a plain text file of official gene symbols or stable identifiers (Ensembl, Entrez).

- Background Definition: Compile a comprehensive background list representing all genes/proteins assayed in the original omics study (e.g., all ~20,000 genes on the microarray or in the mass spectrometry database).

- Statistical Testing:

- Using clusterProfiler (R): Execute the `enrichGO`, `enrichKEGG`, and `enrichDO` functions (`enrichDO` is provided by the companion DOSE package). Set `pvalueCutoff = 0.05`, `qvalueCutoff = 0.1`, and `pAdjustMethod = "BH"` (Benjamini-Hochberg).

- Using g:Profiler (Web/API): Submit the feature and background lists. Select sources: GO:MF, GO:BP, GO:CC, KEGG, Reactome, WikiPathways. Set significance threshold to `g:SCS` (algorithmic).

- Result Interpretation: Filter results for terms with adjusted p-value (FDR) < 0.05. Sort by enrichment ratio (Gene Ratio / Background Ratio). Manually review top 20 terms for biological plausibility in the disease context.
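The statistics behind over-representation analysis can be written out directly. The sketch below computes the one-sided hypergeometric p-value, the Benjamini-Hochberg adjustment, and the enrichment ratio (Gene Ratio / Background Ratio) as defined above. It is meant to clarify the calculation, not to replace clusterProfiler or g:Profiler; the function names are illustrative.

```python
from math import comb

def hypergeom_p(k, n, K, N):
    """P(X >= k): chance of at least k pathway genes in a panel of n,
    given K pathway genes among N background genes."""
    upper = min(n, K)
    total = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return total / comb(N, n)

def benjamini_hochberg(p_values):
    """Return BH-adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from largest to smallest p-value
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

def enrichment_ratio(k, n, K, N):
    """(k / n) divided by (K / N)."""
    return (k / n) / (K / N)
```

The background size N strongly affects both the p-value and the ratio, which is why the background list should contain only genes that were actually assayed.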

Quantitative Output Example: Table 1: Top Enriched Pathways from a Hypothetical GA-Derived Gene Panel (n=150) in Colorectal Cancer.

| Term Source | Pathway/Term Name | Gene Count | Background Count | Enrichment Ratio | Adjusted p-value |

|---|---|---|---|---|---|

| KEGG | Pathways in cancer | 18 | 530 | 4.8 | 3.2e-08 |

| Reactome | Cell Cycle Mitotic | 15 | 320 | 6.5 | 1.5e-07 |

| GO:BP | Wnt signaling pathway | 12 | 150 | 11.2 | 4.8e-06 |

| WikiPathways | PI3K-Akt signaling | 10 | 350 | 4.0 | 0.0012 |

Protocol 2: Protein-Protein Interaction (PPI) Network Construction and Analysis

Objective: To visualize and analyze the interconnectivity of the GA-identified biomarker panel, identifying hub genes and functional modules.

Materials:

- GA feature list.

- STRING database (string-db.org) or BioGRID API.

- Network analysis tools: Cytoscape (v3.10+), igraph R package, or NetworkX Python library.

Procedure:

- Network Fetching: Input the feature list into the STRING database. Set a minimum interaction score (e.g., 0.700, high confidence). Download the network file (TSV format) and the corresponding STRING identifiers.

- Network Import and Pruning: Import the TSV file into Cytoscape. Remove disconnected nodes (optional, based on the research question). Apply a force-directed layout (e.g., Prefuse Force Directed or edge-weighted Spring-Electric).

- Topological Analysis: Use the Cytoscape `NetworkAnalyzer` tool to calculate key node metrics: Degree, Betweenness Centrality, and Closeness Centrality. Export this attribute table.

- Module Detection: Apply the `MCODE` app in Cytoscape to identify densely connected clusters (parameters: Degree Cutoff=2, Node Score Cutoff=0.2, K-Core=2, Max Depth=100). Annotate each cluster by performing separate enrichment analyses on its member genes (see Protocol 1).
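For readers who want to see what two of the node metrics in the topological analysis step measure, the following is a minimal standard-library sketch of degree and closeness centrality on an undirected network. The edge list is a toy example with placeholder node names, not STRING output, and the closeness definition used here (reachable nodes divided by summed shortest-path length) is one common convention that may differ in normalization from a given tool's output.

```python
from collections import deque

def build_graph(edges):
    """Build an undirected adjacency map from (node, node) pairs."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph

def degree(graph):
    """Number of direct interaction partners per node."""
    return {node: len(neighbors) for node, neighbors in graph.items()}

def closeness(graph, source):
    """(reachable nodes) / (sum of shortest-path lengths), via breadth-first search."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0

# Toy edge list with placeholder names (hypothetical, for illustration only).
edges = [("G1", "G2"), ("G1", "G3"), ("G1", "G4"), ("G2", "G4"), ("G3", "G5")]
graph = build_graph(edges)
degrees = degree(graph)
hub = max(degrees, key=degrees.get)
closeness_by_node = {node: closeness(graph, node) for node in graph}
```

In this toy network `G1` has the highest degree and the highest closeness, which is the pattern the hub-gene table is meant to surface.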

Quantitative Output Example: Table 2: Top Hub Genes from GA-Derived PPI Network Analysis.

| Gene Symbol | Degree | Betweenness Centrality | Closeness Centrality | MCODE Cluster |

|---|---|---|---|---|

| TP53 | 42 | 0.215 | 0.588 | 1 |

| AKT1 | 38 | 0.187 | 0.562 | 1 |

| MYC | 35 | 0.152 | 0.545 | 2 |

| EGFR | 33 | 0.121 | 0.531 | 2 |

| CTNNB1 | 28 | 0.088 | 0.512 | 3 |

Visualization

Diagram: Downstream Analysis Workflow after GA Biomarker Discovery

Diagram: Wnt/β-Catenin Signaling Pathway (Simplified)

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Downstream Biomarker Validation.

| Reagent / Tool | Provider Examples | Primary Function in Analysis |

|---|---|---|

| clusterProfiler R Package | Bioconductor | Statistical analysis and visualization of functional profiles for genes and gene clusters. |

| g:Profiler Tool Suite | University of Tartu | Web service for functional enrichment analysis across multiple namespace databases (GO, pathways, diseases). |

| STRING Database | ELIXIR | Resource of known and predicted protein-protein interactions, with confidence scoring. |

| Cytoscape Platform | Cytoscape Consortium | Open-source software platform for complex network visualization and integrative analysis. |

| Enrichment Analysis Kits (e.g., qPCR Arrays) | Qiagen, Bio-Rad | Pre-configured assays for experimental validation of pathway-focused gene expression changes. |

| Pathway-Specific Inhibitors/Activators | Selleckchem, MedChemExpress | Chemical probes for perturbing identified pathways in vitro/in vivo to test causal biomarker roles. |

| Commercial Antibody Panels | Cell Signaling Technology, Abcam | High-specificity antibodies for Western blot or IHC validation of protein-level biomarker changes. |

Overcoming Pitfalls: Expert Strategies for Optimizing Genetic Algorithm Performance in Biomarker Research

1. Introduction

Within the broader thesis on applying Genetic Algorithms (GAs) to biomarker identification in systems biology, three interconnected challenges critically impact the robustness and feasibility of research: premature convergence, overfitting, and high computational cost. These challenges are magnified in large-scale omics studies (e.g., genomics, proteomics) where the feature space (p) vastly exceeds the sample number (n), creating a "curse of dimensionality." Addressing these issues is paramount for deriving biologically valid and clinically actionable biomarkers.

2. Quantitative Data Summary

Table 1: Common Challenges & Their Impact in GA-driven Biomarker Discovery

| Challenge | Typical Manifestation | Quantitative Impact Example | Primary Consequence |

|---|---|---|---|

| Premature Convergence | Loss of population diversity early in evolution (≤50 generations). | >80% of population shares identical top 10% of features by generation 40. | Sub-optimal biomarker panel, trapped in local fitness maxima. |

| Overfitting | High classification accuracy on training data (>95%) vs. low accuracy on validation set (<65%). | Model performance drop of >30% when moving from training to independent test cohort. | Non-generalizable biomarkers, poor clinical translation. |

| Computational Cost | Fitness evaluation of a single candidate solution on full dataset. | Time per evaluation: ~2 hours (WGS data on n=10,000). At a population of 200, 500 generations require ~100,000 evaluations, i.e., ~200,000 CPU-hours (over 20 years) on a single CPU. | Limited exploration of solution space, impractical for iterative research. |

Table 2: Mitigation Strategies & Associated Computational Trade-offs

| Strategy | Targeted Challenge | Reduction in Validation Error (Typical Range) | Increase in Computational Overhead |

|---|---|---|---|

| Niching/Crowding | Premature Convergence | 5-15% | Low (10-20%) |

| Regularized Fitness Functions | Overfitting | 10-25% | Negligible |

| Wrapper-Feature Filtering Hybrid | Overfitting & Cost | 15-30% | Medium (Varies with filter) |

| Parallel & Distributed GAs | Computational Cost | (Enables larger searches) | Set-up cost high, then near-linear speedup with nodes. |

| Fitness Approximation (Surrogates) | Computational Cost | Must be controlled (<5% drop vs. full eval) | Up to 70% reduction in core computation time. |

3. Application Notes & Protocols

Application Note 1: Protocol for Mitigating Premature Convergence via Deterministic Crowding

Objective: To maintain population diversity and delay convergence in a GA for selecting a 50-gene biomarker panel from a 20,000-gene expression dataset.

- Initialization: Generate initial population of 200 candidate solutions (chromosomes), each a binary vector of length 20,000.

- Parent Selection: Use tournament selection (size=3) to select 100 parent pairs.

- Crossover & Mutation: Apply uniform crossover (rate=0.8) to each pair. Apply bit-flip mutation (rate=0.001 per gene).

- Crowding Replacement: For each parent pair (P1, P2) and their offspring (C1, C2): a. Calculate the Hamming distances d(P1,C1), d(P2,C2), d(P1,C2), and d(P2,C1). b. Pair each parent with its more similar offspring: use [P1,C1] and [P2,C2] if d(P1,C1) + d(P2,C2) ≤ d(P1,C2) + d(P2,C1); otherwise use [P1,C2] and [P2,C1]. c. Within each pair, compare fitness (e.g., AUC from an SVM). The higher-fitness individual enters the next generation.

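- Iteration: Repeat the Parent Selection, Crossover & Mutation, and Crowding Replacement steps (steps 2-4) for 500 generations or until a diversity metric (e.g., mean pairwise Hamming distance) stabilizes.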
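The protocol above can be sketched as follows. This is a minimal standard-library illustration under stated assumptions: the crossover rate is read as the probability of applying uniform crossover to a pair, ties in fitness keep the parent, and the `fitness` callable is a placeholder for the classifier-based score (e.g., AUC from an SVM) that a real run would use.

```python
import random

def hamming(a, b):
    """Number of positions at which two binary chromosomes differ."""
    return sum(x != y for x, y in zip(a, b))

def uniform_crossover(p1, p2, rng):
    """Swap each gene between the two copies with probability 0.5."""
    c1, c2 = list(p1), list(p2)
    for i in range(len(c1)):
        if rng.random() < 0.5:
            c1[i], c2[i] = c2[i], c1[i]
    return c1, c2

def bit_flip(chrom, rate, rng):
    """Flip each bit independently with the given per-gene rate."""
    return [1 - g if rng.random() < rate else g for g in chrom]

def crowding_replacement(p1, p2, c1, c2, fitness):
    """Pair each parent with its more similar child; the fitter of each pair survives."""
    if hamming(p1, c1) + hamming(p2, c2) <= hamming(p1, c2) + hamming(p2, c1):
        pairs = [(p1, c1), (p2, c2)]
    else:
        pairs = [(p1, c2), (p2, c1)]
    # Assumption: on a tie the parent is kept.
    return [child if fitness(child) > fitness(parent) else parent for parent, child in pairs]

def tournament(population, fitness, rng, size=3):
    """Return the fittest of `size` randomly drawn individuals."""
    return max(rng.sample(population, size), key=fitness)

def next_generation(population, fitness, rng, cx_rate=0.8, mut_rate=0.001):
    """One generation: selection, crossover, mutation, crowding replacement."""
    new_population = []
    for _ in range(len(population) // 2):
        p1 = tournament(population, fitness, rng)
        p2 = tournament(population, fitness, rng)
        if rng.random() < cx_rate:
            c1, c2 = uniform_crossover(p1, p2, rng)
        else:
            c1, c2 = list(p1), list(p2)
        c1, c2 = bit_flip(c1, mut_rate, rng), bit_flip(c2, mut_rate, rng)
        new_population.extend(crowding_replacement(p1, p2, c1, c2, fitness))
    return new_population
```

Because each offspring competes only against the parent it most resembles, a fit individual in one region of the search space cannot displace individuals in a different region, which is how the scheme preserves diversity.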

Application Note 2: Protocol for Preventing Overfitting with Regularized Fitness Evaluation

Objective: To evolve biomarker models that generalize well to unseen data.

- Data Partition: Split dataset into Training (70%), Validation (15%), and Hold-out Test (15%) sets. Only Training data is used for fitness evaluation during evolution.

- Fitness Function Definition: For a candidate biomarker set, the fitness F is calculated as:

$$F = \mathrm{AUC}_{\mathrm{train}} - \lambda \, |S|$$

where $\mathrm{AUC}_{\mathrm{train}}$ is the 5-fold cross-validated AUC on the Training set, $|S|$ is the number of selected features, and $\lambda$ is a regularization strength (e.g., 0.001).

- GA Run: Execute GA (using protocol from Note 1) for 300 generations, maximizing F.

- Validation Check: Every 20 generations, evaluate the best solution from the population on the Validation set. Terminate if validation performance plateaus or decreases for 5 consecutive checks (early stopping).

- Final Assessment: Apply the best overall solution to the held-out Test set for final performance reporting.
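The regularized fitness and the early-stopping rule from this protocol can be sketched in a few lines. This is an illustrative sketch only: the rank-based AUC helper stands in for a cross-validated classifier score, and "plateaus or decreases" is interpreted here as no validation check in the last `patience` checks beating the best earlier value, which is an assumption about the intended rule.

```python
def auc(scores, labels):
    """Rank-based AUC: probability that a positive outscores a negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def regularized_fitness(auc_train, n_selected, lam=0.001):
    """F = AUC_train - lambda * |S|."""
    return auc_train - lam * n_selected

def should_stop(validation_history, patience=5):
    """True when none of the last `patience` checks improved on the best earlier check."""
    if len(validation_history) <= patience:
        return False
    best_before = max(validation_history[:-patience])
    return all(v <= best_before for v in validation_history[-patience:])

# Worked example with hypothetical numbers: a smaller panel can win despite lower AUC.
f_large = regularized_fitness(0.90, 50)  # 0.90 - 0.001 * 50 = 0.85
f_small = regularized_fitness(0.88, 20)  # 0.88 - 0.001 * 20 = 0.86
```

With λ = 0.001, every additional feature must buy at least 0.001 AUC to be worth keeping, so the 20-feature panel in the worked example outranks the 50-feature one.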

Application Note 3: Protocol for Managing Cost via Surrogate Model-Assisted GA

Objective: To reduce the time of fitness evaluation by building a surrogate model.

- Initial Sampling: Randomly sample 500 candidate solutions from the search space. Perform full, expensive fitness evaluation (e.g., SVM with cross-validation) on each.

- Surrogate Model Construction: Train a machine learning model (e.g., Random Forest regressor) using the sampled solutions as input (feature subset encoded) and their full-evaluation fitness scores as output.

- Surrogate-Assisted Evolution: a. Run the standard GA in cycles of 50 generations, using the surrogate model to predict fitness for all new candidates; repeat the cycle until 200 surrogate-assisted generations have elapsed. b. Every 10 generations, select the top 20 novel candidates from the GA population and perform a full fitness evaluation on them. c. Add these newly evaluated candidates to the training set and update/retrain the surrogate model.

- Final Phase: After 200 surrogate-assisted generations, run 20 final generations using only the full fitness evaluation to refine the best solutions.
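The control flow of the surrogate-assisted phase can be sketched as below. To stay dependency-free, this sketch swaps in a k-nearest-neighbour surrogate (by Hamming distance) for the Random Forest regressor named in the protocol, uses simple truncation selection with mutation instead of a full GA, and omits the final full-evaluation phase. The `expensive_fitness` function and all sizes are hypothetical placeholders.

```python
import random

def hamming(a, b):
    return sum(x != y for x, y in zip(a, b))

class KNNSurrogate:
    """Predicts fitness as the mean over the k nearest fully evaluated chromosomes."""
    def __init__(self, k=3):
        self.k = k
        self.archive = {}  # chromosome tuple -> fully evaluated fitness

    def add(self, chrom, fitness):
        self.archive[tuple(chrom)] = fitness

    def predict(self, chrom):
        nearest = sorted(self.archive.items(), key=lambda kv: hamming(kv[0], chrom))[: self.k]
        return sum(f for _, f in nearest) / len(nearest)

def expensive_fitness(chrom):
    """Hypothetical stand-in for a costly evaluation such as SVM with cross-validation."""
    return sum(chrom[i] for i in range(5)) - 0.05 * sum(chrom)

def evolve_with_surrogate(n_genes=20, pop_size=30, generations=50,
                          refresh_every=10, top_n=5, n_initial=40, seed=0):
    rng = random.Random(seed)
    surrogate = KNNSurrogate()
    # Initial sampling: full evaluation of random candidates.
    for _ in range(n_initial):
        chrom = [rng.randint(0, 1) for _ in range(n_genes)]
        surrogate.add(chrom, expensive_fitness(chrom))
    full_evals = n_initial
    population = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]
    for gen in range(1, generations + 1):
        # Rank by predicted fitness; keep the better half, refill with mutated copies.
        population.sort(key=surrogate.predict, reverse=True)
        survivors = population[: pop_size // 2]
        children = [[1 - g if rng.random() < 0.05 else g for g in rng.choice(survivors)]
                    for _ in range(pop_size - len(survivors))]
        population = survivors + children
        if gen % refresh_every == 0:
            # Fully evaluate the top novel candidates and add them to the archive.
            novel = []
            for chrom in sorted(population, key=surrogate.predict, reverse=True):
                key = tuple(chrom)
                if key not in surrogate.archive and key not in novel:
                    novel.append(key)
                if len(novel) == top_n:
                    break
            for key in novel:
                surrogate.add(key, expensive_fitness(key))
                full_evals += 1
    best_chrom, best_fit = max(surrogate.archive.items(), key=lambda kv: kv[1])
    return best_chrom, best_fit, full_evals

best_chrom, best_fit, full_evals = evolve_with_surrogate()
```

The point of the design is visible in `full_evals`: 50 generations of 30 individuals would need up to 1,500 full evaluations without a surrogate, whereas this loop performs at most 65.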

4. Diagrams

Diagram 1: GA Workflow with Deterministic Crowding

Diagram 2: Preventing Overfitting with Validation & Regularization

5. The Scientist's Toolkit

Table 3: Research Reagent Solutions for GA-driven Biomarker Discovery

| Item/Category | Function in the Workflow | Example/Notes |

|---|---|---|

| High-Performance Computing (HPC) Cluster | Enables parallel fitness evaluation and distributed GA populations to tackle computational cost. | Cloud-based (AWS Batch, Google Cloud Life Sciences) or on-premise SLURM cluster. |

| Machine Learning Libraries (Scikit-learn, TensorFlow) | Provides algorithms for fitness evaluation (e.g., SVM, RF) and for building surrogate models. | Scikit-learn for standard models; TensorFlow/PyTorch for deep learning surrogates. |

| GA/Evolutionary Computation Frameworks | Offers pre-built operators (selection, crossover) and population management. | DEAP (Python), JGAP (Java), or custom code in R/Python. |

| Bioconductor/R Bioinformatics Packages | Handles omics data preprocessing, normalization, and integration prior to GA analysis. | limma, DESeq2 for RNA-seq; BiocParallel for parallelization. |

| Containerization Software (Docker/Singularity) | Ensures reproducibility of the computational environment across HPC and cloud platforms. | Container image includes OS, all software, and dependency versions. |

| Curated Public Omics Databases | Source for training data and independent validation cohorts. | TCGA, GEO, ProteomicsDB, UK Biobank. |

Within a thesis on Genetic Algorithms (GAs) for biomarker identification in systems biology, hyperparameter tuning is critical for developing robust, predictive models. This guide details the optimization of three core GA hyperparameters—population size, mutation rate, and termination criteria—to efficiently search high-dimensional omics data (e.g., transcriptomics, proteomics) for clinically relevant biomarker signatures.

Population Size: Balancing Diversity and Computational Cost

Population size dictates genetic diversity and search space exploration. In biomarker discovery, the search space comprises combinations of genes, proteins, or metabolites.

Application Notes

- Small Populations (<50): Risk premature convergence on local optima, potentially missing complex, multi-feature biomarker panels.

- Large Populations (>200): Increase computational cost per generation significantly; may slow convergence unnecessarily.

- Guideline: Population size should scale with the complexity of the feature selection problem. A common heuristic is to set it proportional to the logarithm of the total number of features in the omics dataset.

Table 1: Empirical Recommendations for Population Size in Omics Data

| Omics Data Type | Typical Feature Space Size | Recommended Population Size Range | Rationale |

|---|---|---|---|

| Targeted Metabolomics | 50 - 500 metabolites | 50 - 100 | Moderate diversity suffices for smaller search spaces. |

| Transcriptomics (Gene Expression) | 10,000 - 60,000 genes | 100 - 300 | Larger size needed to navigate vast combinatorial space. |

| Proteomics (LC-MS) | 1,000 - 10,000 proteins | 100 - 200 | Balances coverage of protein networks with compute time. |

Experimental Protocol: Determining Optimal Population Size

- Initialization: Fix mutation rate (e.g., 0.01) and termination criterion (e.g., 100 generations).

- Iterative Run: Execute the GA 10 times for each candidate population size (e.g., 50, 100, 150, 200, 300).

- Evaluation: For each run, record: a) Best fitness (e.g., AUC of biomarker panel) per generation, b) Generation at convergence, c) Total compute time.

- Analysis: Plot mean best fitness vs. generation for each size. Select the smallest size that achieves consistent, high final fitness without premature convergence.
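The selection rule in the analysis step can be made explicit with a short sketch. The tolerance and variability thresholds are illustrative assumptions rather than recommended values, and the run results are hypothetical numbers (three runs per size instead of the ten the protocol calls for, to keep the example short).

```python
from statistics import mean, pstdev

def select_population_size(results, tolerance=0.02, max_std=0.02):
    """Pick the smallest population size whose mean final fitness is within
    `tolerance` of the best mean and whose run-to-run spread is at most `max_std`.

    results: {population_size: [final best fitness of each repeated run]}
    """
    summary = {size: (mean(vals), pstdev(vals)) for size, vals in results.items()}
    best_mean = max(m for m, _ in summary.values())
    eligible = [size for size, (m, s) in summary.items()
                if m >= best_mean - tolerance and s <= max_std]
    # Fall back to the size with the highest mean if nothing is consistent enough.
    return min(eligible) if eligible else max(summary, key=lambda size: summary[size][0])

# Hypothetical final AUC values from repeated GA runs.
runs = {
    50: [0.78, 0.84, 0.80],
    100: [0.89, 0.90, 0.90],
    200: [0.90, 0.90, 0.91],
    300: [0.90, 0.91, 0.90],
}
chosen = select_population_size(runs)
```

Here a population of 100 is chosen: it reaches nearly the same final fitness as 200 or 300 at a fraction of the per-generation cost, while 50 is rejected for both lower and less consistent fitness.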

Diagram 1: Impact of population size on GA search in biomarker discovery.

Mutation Rate: Introducing Novelty for Feature Exploration

Mutation randomly alters individuals (biomarker candidate panels), maintaining population diversity and enabling escape from local optima.

Application Notes

- Low Rate (<0.005): Limits exploration, may cause stagnation.

- High Rate (>0.1): Turns search into random walk, disrupting useful gene co-expression patterns.

- Adaptive Strategies: Mutation rate can decrease over generations (analogous to a simulated-annealing cooling schedule) or increase when population diversity drops.
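Both adaptive strategies can be sketched in a few lines. The start and end rates, the diversity threshold, and the boost factor below are hypothetical values chosen for illustration; the 0.1 cap mirrors the upper bound discussed in the notes above.

```python
from itertools import combinations

def decayed_rate(generation, total_generations, start=0.05, end=0.005):
    """Linearly decrease the mutation rate from `start` to `end` over the run."""
    frac = min(generation / total_generations, 1.0)
    return start + (end - start) * frac

def mean_pairwise_hamming(population):
    """Average fraction of differing genes over all pairs (0 = identical, 1 = opposite)."""
    length = len(population[0])
    pairs = list(combinations(population, 2))
    total = sum(sum(x != y for x, y in zip(a, b)) for a, b in pairs)
    return total / (len(pairs) * length)

def diversity_triggered_rate(base_rate, diversity, threshold=0.1, boost=4.0, cap=0.1):
    """Raise the mutation rate (up to `cap`) when diversity falls below `threshold`."""
    if diversity < threshold:
        return min(base_rate * boost, cap)
    return base_rate

# Example: a nearly converged toy population triggers the boost.
population = [[1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0]] * 3 + [[1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 1]]
diversity = mean_pairwise_hamming(population)
rate = diversity_triggered_rate(0.01, diversity)
```

The two rules can be combined, for example by using the decayed rate as `base_rate`, so that the schedule favors exploitation late in the run but still reacts if the population collapses.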

Table 2: Mutation Rate Effects & Tuning Protocols

| Rate Range | Effect on Biomarker Search | Suggested Tuning Protocol |

|---|---|---|

| Very Low (0.001-0.005) | Exploitation dominant. Converges fast but may yield suboptimal, simplistic signatures. | Use for fine-tuning late-stage, high-fitness candidate panels. |

| Moderate (0.01-0.05) | Balanced exploration/exploitation. Suitable for most omics feature selection tasks. | Start at 0.02. Use a grid search, evaluating final panel cross-validation accuracy. |

| High (0.1+) | Excessive randomness. May disrupt biologically relevant multi-gene modules. | Generally avoid. Can be tested briefly in initial exploration phases. |

Experimental Protocol: Grid Search for Mutation Rate

- Setup: Fix population size (from prior step) and termination criteria.

- Grid: Define mutation rates to test: e.g., [0.005, 0.01, 0.02, 0.04, 0.08].