Overfitting in Biomedical Method Development: Prevention, Detection, and Correction for Robust Research


Abstract

Overfitting poses a critical threat to the validity and reproducibility of biomedical research, especially in modern high-dimensional data settings. This article provides a comprehensive guide for researchers and drug development professionals on addressing overfitting throughout the method lifecycle. We first define overfitting and explore its root causes, from p-hacking to data dredging. We then detail robust methodological approaches for prevention, including cross-validation, regularization, and feature selection. The troubleshooting section focuses on detecting overfit models through performance gaps and stability tests. Finally, we cover validation best practices and comparative frameworks to ensure generalizability. The conclusion synthesizes actionable strategies to build more reliable, reproducible, and clinically translatable methods.

What is Overfitting? The Core Concepts and Root Causes in Biomedical Research

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My model achieves >99% accuracy on my training dataset but performs near random (~50%) on the validation set. What is happening and how do I diagnose it? A1: You are experiencing classic overfitting. The model has memorized the training data, including its noise and specific patterns, rather than learning generalizable features.

- Diagnostic Protocol:

- Plot Learning Curves: Generate separate plots for training and validation loss/accuracy vs. training epochs.

- Examine the Gap: A diverging gap where training metric improves while validation metric deteriorates confirms overfitting.

- Conduct Ablation: Systematically remove/reduce model complexity (e.g., layers, units) and observe if the validation performance gap closes.
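
The diagnostic steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming per-epoch losses have already been logged; the function name `diagnose_learning_curves`, the `patience` threshold, and the loss values are hypothetical and do not come from any library.

```python
def diagnose_learning_curves(train_loss, val_loss, patience=3):
    """Flag overfitting when validation loss has stopped improving for
    `patience` epochs while training loss has kept falling."""
    if len(train_loss) != len(val_loss):
        raise ValueError("curves must have the same length")
    # Generalization gap per epoch: validation loss minus training loss.
    gaps = [v - t for t, v in zip(train_loss, val_loss)]
    # Epoch with the lowest validation loss (first one if tied).
    best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__)
    epochs_since_best = len(val_loss) - 1 - best_epoch
    train_still_improving = train_loss[-1] < train_loss[best_epoch]
    return {
        "best_epoch": best_epoch,
        "final_gap": gaps[-1],
        "overfitting": epochs_since_best >= patience and train_still_improving,
    }


# Hypothetical logged losses: training keeps improving, validation turns upward.
train = [0.90, 0.60, 0.40, 0.25, 0.15, 0.08, 0.04]
val = [0.95, 0.70, 0.55, 0.50, 0.56, 0.63, 0.72]
report = diagnose_learning_curves(train, val)
print(report)
```

In this toy run the validation loss bottoms out at epoch 3 and then rises while the training loss continues to fall, which is the diverging gap described above.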

Q2: In my quantitative structure-activity relationship (QSAR) model for early-stage drug candidates, how can I ensure my validation is meaningful and not just a "lucky" split? A2: Reliable validation in method development requires robust data partitioning and external testing.

- Validation Protocol:

- Stratified Splitting: Use stratified k-fold cross-validation (e.g., k=5 or 10), stratifying on the target variable so that each fold preserves the overall class distribution.

- Temporal/Scaffold Split: For real-world generalizability, split data by time (older compounds for training) or by molecular scaffold to test predictive power on novel chemotypes.

- Hold-Out External Test Set: Reserve 10-20% of data, completely untouched during model development and hyperparameter tuning, for a final, unbiased performance estimate.
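
As a rough sketch of the scaffold split described above, the snippet below keeps every compound sharing a scaffold on the same side of the split. It assumes scaffold labels have already been computed (for example with a Bemis-Murcko scaffold tool); the function `group_split`, the scaffold names, and the 20% target are illustrative assumptions only.

```python
import random
from collections import defaultdict


def group_split(group_ids, test_fraction=0.2, seed=0):
    """Split item indices so every group lands entirely in train or in test."""
    groups = defaultdict(list)
    for idx, group in enumerate(group_ids):
        groups[group].append(idx)
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)
    target = test_fraction * len(group_ids)
    train, test = [], []
    for key in keys:
        # Fill the test set with whole groups until the target size is reached;
        # the final group added may overshoot the target slightly.
        if len(test) < target:
            test.extend(groups[key])
        else:
            train.extend(groups[key])
    return sorted(train), sorted(test)


# Hypothetical scaffold label per compound (one entry per compound).
scaffolds = ["benzene", "benzene", "indole", "indole", "indole",
             "pyridine", "quinoline", "quinoline", "pyridine", "furan"]
train_idx, test_idx = group_split(scaffolds, test_fraction=0.2, seed=0)
print("train:", train_idx)
print("test:", test_idx)
```

Because whole scaffolds are assigned together, no chemotype seen in training appears in the test set, which is what makes the test a check on novel chemotypes rather than near-duplicates.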

Q3: What concrete regularization techniques are most effective for high-dimensional biological data (e.g., transcriptomics) to prevent overfitting? A3: High-dimensional, low-sample-size data is prone to overfitting. A combination of techniques is required.

- Regularization Protocol:

- L1/L2 Regularization: Apply L1 (Lasso) or L2 (Ridge) penalties to the loss function to shrink coefficients. L1 can drive some coefficients exactly to zero, which effectively performs feature selection.

- Dropout: For neural networks, randomly "drop" a fraction (e.g., 20-50%) of neuron outputs during training to prevent co-adaptation.

- Early Stopping: Monitor validation loss during training and halt iterations when validation loss plateaus or increases for a predetermined number of epochs.
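
The early stopping rule above can be illustrated with a small framework-independent helper. This is a sketch under stated assumptions: the class `EarlyStopper`, its `patience` and `min_delta` parameters, and the validation losses are hypothetical, and real projects would more likely use the callback provided by their training framework.

```python
class EarlyStopper:
    """Stop training once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            # Improvement: remember it and reset the counter.
            self.best = val_loss
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical validation losses, one per epoch.
val_losses = [0.80, 0.62, 0.51, 0.47, 0.48, 0.50, 0.49, 0.53, 0.58]
stopper = EarlyStopper(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(epoch, loss):
        stopped_at = epoch
        break
print("stopped at epoch", stopped_at, "best epoch", stopper.best_epoch)
```

In practice the model weights from the best epoch, not the stopping epoch, are the ones to keep.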

Q4: My deep learning model for microscopy image classification shows excellent validation scores, but fails on new data from a different laboratory. Is this overfitting? A4: This is a form of overfitting to the experimental conditions or data collection bias of your training set, often called "domain shift" or "lack of external validity."

- Mitigation Protocol:

- Data Augmentation: Artificially expand your training set with realistic transformations (rotation, blur, contrast adjustments, simulated staining variations).

- Multi-Source Training: Incorporate data from multiple labs, instruments, and protocols into the training set to force the model to learn invariant features.

- Domain Adaptation: Use techniques like domain adversarial training to explicitly minimize the distributional difference between your source (training) and target (new lab) data.

Table 1: Impact of Regularization Techniques on Model Generalizability (Comparative Study)

| Model Type | Dataset (Sample Size) | Training Accuracy | Validation Accuracy | Test Set Accuracy | Key Regularization Used |

|---|---|---|---|---|---|

| Dense Neural Network | Gene Expression (n=500) | 99.8% | 72.1% | 70.5% | None (Baseline) |

| Dense Neural Network | Gene Expression (n=500) | 95.2% | 88.7% | 87.9% | Dropout (0.5) + L2 |

| Random Forest | Gene Expression (n=500) | 100% | 85.3% | 84.1% | Max Depth Limitation |

| Gradient Boosting | Gene Expression (n=500) | 100% | 89.5% | 88.8% | Early Stopping (Rounds) |

| Convolutional Neural Network | Cell Imaging (n=10,000) | 99.9% | 94.0% | 75.3% | None (Baseline) |

| Convolutional Neural Network | Cell Imaging (n=10,000) | 97.5% | 95.1% | 92.8% | Augmentation + Dropout |

Table 2: Performance Decay Across Data Splits in a QSAR Model

| Data Partition | Number of Compounds | AUC-ROC | Precision | Recall | Description |

|---|---|---|---|---|---|

| Training Set (5-fold CV avg) | 8,000 | 0.95 ± 0.02 | 0.89 | 0.87 | Model development data |

| Internal Validation Set | 1,000 | 0.87 | 0.81 | 0.79 | Held-out from original source |

| Temporal Test Set | 1,000 | 0.82 | 0.75 | 0.78 | Compounds synthesized later |

| External Benchmark Set | 2,500 | 0.76 | 0.69 | 0.72 | Public data from different institution |

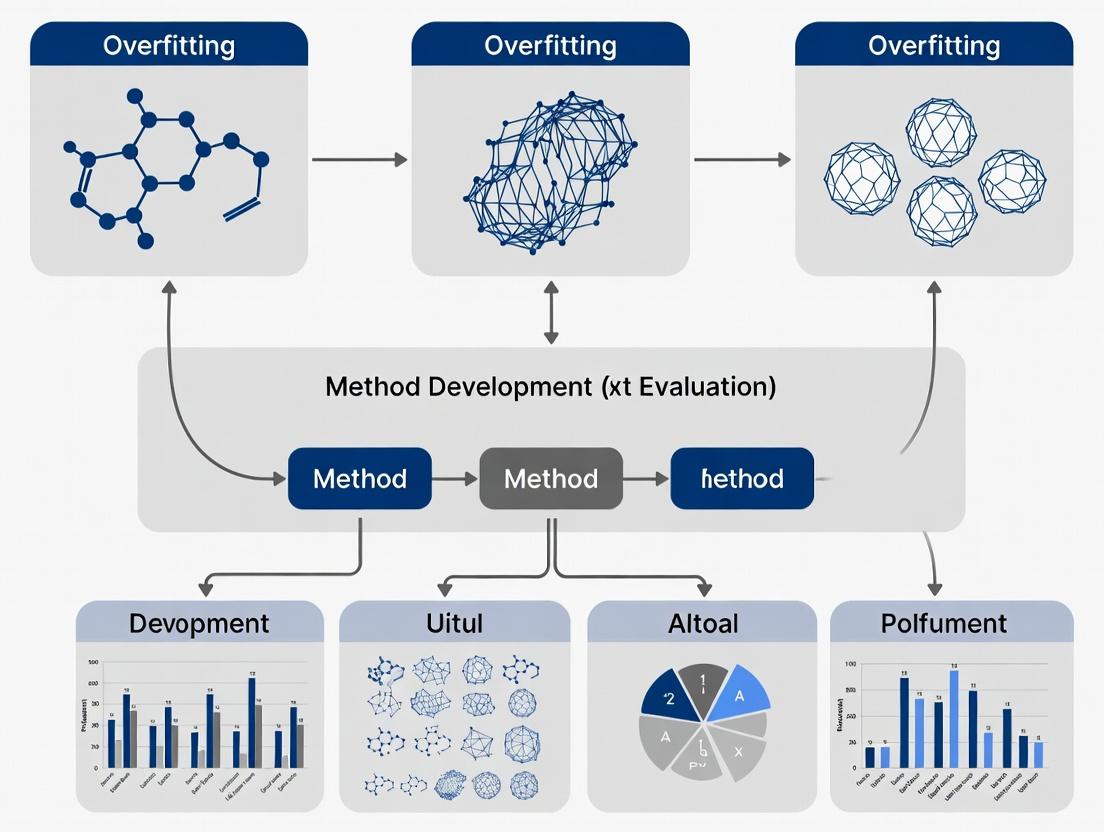

Visualizations

Diagram 1: Overfitting Diagnosis via Learning Curves

Diagram 2: Robust Validation Workflow for Method Development

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Robust ML Experimentation in Drug Development

| Item/Reagent | Function & Rationale |

|---|---|

| Stratified K-Fold Splitting Module (e.g., scikit-learn StratifiedKFold) | Ensures representative class distribution in each fold, preventing bias in cross-validation estimates. |

| Weight-Decay Optimizers (e.g., AdamW, SGD with weight decay) | Built-in weight decay applies an L2-style penalty that shrinks weights toward zero, promoting simpler, more generalizable solutions. An L1 penalty is usually added to the loss function separately. |

| Data Augmentation Pipeline (e.g., Albumentations, Torchvision transforms) | Simulates experimental variance (noise, rotation, scaling) to artificially expand training data and improve invariance. |

| Early Stopping Callback (e.g., Keras EarlyStopping, PyTorch Lightning EarlyStopping) | Monitors validation metric and automatically halts training when overfitting begins, preventing waste of compute resources. |

| Molecular Scaffold Split Library (e.g., RDKit Bemis-Murcko scaffold generation) | Enables splitting datasets by core molecular structure to rigorously test predictive power on novel chemotypes. |

| External Benchmark Datasets (e.g., ChEMBL, PubChemQC, MoleculeNet) | Provides completely independent, publicly available data for the final, critical test of a model's generalizability. |

| Domain Adaptation Framework (e.g., DANN - Domain-Adversarial Neural Networks) | Explicitly reduces distribution shift between source (training) and target (new lab/assay) data domains. |

Technical Support Center: Troubleshooting Guide & FAQs for Method Validation in Biomedical Research

Context: This support center addresses common pitfalls in method development and evaluation, framed within the central goal of addressing overfitting to ensure robust, translatable research outcomes.

FAQs: Addressing Overfitting and Validation

Q1: Our clinical prediction model has excellent AUC (>0.95) on our training cohort but fails completely on a validation set from a different clinic. What are the most likely causes and steps to diagnose them?

A: This is a classic sign of overfitting and dataset shift. Follow this diagnostic protocol:

- Perform Feature Importance Analysis: Identify the top 10 features by weight/coefficient and check whether each retains its predictive value in the validation set. Overfit models often rely on cohort-specific technical artifacts (e.g., batch-specific biomarkers).

- Conduct Data Drift Analysis:

- Protocol: For each feature (especially top model features), use a two-sample Kolmogorov-Smirnov test to compare distributions between training and validation sets. Create a table of p-values and effect sizes (Cohen's d).

- Interpretation: Significant drift (p < 0.01, d > 0.5) in key features indicates non-generalizable data.

- Simplify the Model: Retrain a model with drastically reduced complexity (e.g., reduce polynomial terms, increase regularization strength) on the training set and re-test on the validation set. If performance stabilizes, overfitting is confirmed.
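
The drift analysis above can be sketched with the standard library alone. This is an illustrative sketch: the helper names, the feature names, and the values are hypothetical, and it reports only the Kolmogorov-Smirnov statistic and Cohen's d. A p-value for the KS test would normally come from a statistics package such as SciPy's `ks_2samp`.

```python
import math
import statistics
from bisect import bisect_right


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(a), sorted(b)
    d = 0.0
    for v in sorted(set(a) | set(b)):
        cdf_a = bisect_right(a, v) / len(a)
        cdf_b = bisect_right(b, v) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def cohens_d(a, b):
    """Standardized mean difference using the pooled sample variance."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * statistics.variance(a)
                  + (nb - 1) * statistics.variance(b)) / (na + nb - 2)
    return (statistics.mean(a) - statistics.mean(b)) / math.sqrt(pooled_var)


def drift_report(train_features, valid_features, d_threshold=0.5):
    """Tabulate drift per feature; flag features whose |d| exceeds the threshold."""
    report = {}
    for name in train_features:
        effect = cohens_d(train_features[name], valid_features[name])
        report[name] = {
            "ks": ks_statistic(train_features[name], valid_features[name]),
            "cohens_d": effect,
            "flag": abs(effect) > d_threshold,
        }
    return report


# Hypothetical feature values from the training and validation clinics.
train = {"marker_a": [1, 2, 3, 4, 5], "marker_b": [2.0, 2.1, 1.9, 2.2, 1.8]}
valid = {"marker_a": [6, 7, 8, 9, 10], "marker_b": [1.8, 2.2, 2.0, 1.9, 2.1]}
report = drift_report(train, valid)
for name, row in report.items():
    print(name, row)
```

Here `marker_a` is shifted completely between cohorts and is flagged, while `marker_b` has the same distribution in both and is not.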

Q2: During biomarker identification from high-dimensional proteomics data, we get hundreds of significant candidates. How do we triage these to find the few that are biologically plausible and not statistical artifacts?

A: This requires a multi-stage filtering protocol to add robustness to the discovery.

- Apply Strict Multiple Testing Correction: Use the Benjamini-Hochberg procedure to control the False Discovery Rate (FDR). Start with an FDR < 0.01.

- Require Biological Replication: Insist that candidates must be significant in at least two independent cohorts or experimental batches.

- Integrate External Knowledge:

- Protocol: Use enrichment analysis (e.g., via Enrichr API) against pathways (KEGG, Reactome) and disease ontologies. Also, query protein-protein interaction databases (STRING).

- Action: Prioritize biomarkers that cluster in known disease-relevant pathways or have documented interactions.

Q3: Our drug screening assay shows high Z' factor (>0.7) in validation but yields inconsistent results when used for novel compound testing. What should we check?

A: A high Z' confirms that the assay separates its positive and negative controls reproducibly, but it says nothing about biological relevance or susceptibility to compound interference.

- Check for Assay Interference: For novel compounds, run counter-screens.

- Protocol: For luminescence-based assays, run a luciferase interference assay. For fluorescence-based, test for autofluorescence or quenching. Include a control well with compound and reporter enzyme only (no cells).

- Verify Target Engagement: An assay may be precise but not measuring the intended biology.

- Protocol: Use a cellular thermal shift assay (CETSA) or drug affinity responsive target stability (DARTS) to confirm that your lead compounds physically bind the intended target protein in a cellular context.

- Assess Contextual Specificity: Test the assay in a genetically modified cell line where the target gene is knocked out. A true signal should be abolished.
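
For reference, the Z' factor discussed in this answer is conventionally computed from control wells only, as Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The sketch below applies that definition; the function name `z_prime` and the plate readings are hypothetical.

```python
import statistics


def z_prime(positive_controls, negative_controls):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    mu_p = statistics.mean(positive_controls)
    mu_n = statistics.mean(negative_controls)
    if mu_p == mu_n:
        raise ValueError("control means are identical; Z' is undefined")
    sd_p = statistics.stdev(positive_controls)  # sample standard deviation
    sd_n = statistics.stdev(negative_controls)
    return 1.0 - 3.0 * (sd_p + sd_n) / abs(mu_p - mu_n)


# Hypothetical control-well signals from one plate.
positive = [100, 102, 98, 101, 99]
negative = [10, 12, 8, 11, 9]
z = z_prime(positive, negative)
print(round(z, 3))
```

Because only control wells enter the formula, a test compound that quenches or autofluoresces can distort results without changing Z' at all, which is why the counter-screens above are needed.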

Table 1: Impact of Overfitting Mitigation Strategies on Model Performance

| Strategy | Training AUC (Mean ± SD) | Hold-Out Test AUC (Mean ± SD) | Generalization Improvement (ΔAUC) |

|---|---|---|---|

| No Regularization (Base) | 0.98 ± 0.02 | 0.65 ± 0.10 | 0.00 (Reference) |

| L2 Regularization Added | 0.92 ± 0.03 | 0.78 ± 0.06 | +0.13 |

| Feature Selection + Regularization | 0.88 ± 0.04 | 0.82 ± 0.05 | +0.17 |

| External Validation Cohort | 0.87 ± 0.05 | 0.81 ± 0.05 | +0.16 |

Table 2: Biomarker Verification Success Rates by Stage

| Validation Stage | Number of Candidates Input | Candidates Confirmed | Success Rate | Typical Cost & Time |

|---|---|---|---|---|

| Discovery (Omics) | 50,000+ | 200-500 | ~1% | High, 3-6 months |

| Analytical Verification (ELISA/MS) | 200 | 50 | 25% | Medium, 2-4 months |

| Clinical Validation (2+ Cohorts) | 50 | 2-5 | 4-10% | Very High, 1-3 years |

Experimental Protocols

Protocol 1: Nested Cross-Validation for Robust Clinical Model Development

- Objective: To provide an unbiased estimate of model performance while optimizing hyperparameters, effectively addressing overfitting.

- Materials: Dataset with labels, machine learning environment (e.g., Python/scikit-learn, R/caret).

- Method:

- Define an outer loop (e.g., 5-fold CV) for performance estimation.

- Within each outer training fold, define an inner loop (e.g., 3-fold CV) for hyperparameter optimization (e.g., grid search over regularization strength, tree depth).

- Train a model with the optimal inner-loop parameters on the entire outer training fold.

- Test this model on the held-out outer test fold. Record performance metric (AUC, accuracy).

- Repeat for all outer folds. The mean performance across all outer test folds is the final, robust estimate.

Protocol 2: Orthogonal Validation of a Putative Biomarker via SRM/MRM Mass Spectrometry

- Objective: To transition a biomarker candidate from a discovery platform (e.g., shotgun proteomics) to a validated, quantitative assay.

- Materials: Patient samples (discovery and independent cohort), synthetic stable isotope-labeled peptide standard for the biomarker, triple-quadrupole LC-MS/MS system.

- Method:

- Peptide Selection: From discovery data, select 2-3 proteotypic peptides unique to the target protein.

- Assay Development: Optimize MS parameters (collision energy, precursor/product m/z transitions) for each peptide and its labeled counterpart.

- Quantification: Spike a known amount of labeled standard into each digested patient sample. Run SRM/MRM assay.

- Analysis: Calculate the ratio of endogenous peptide peak area to labeled standard peak area. Use a calibration curve (if available) for absolute quantification, or report relative ratios across samples.
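
The analysis step above reduces to simple arithmetic, sketched below. The single-point estimate assumes the endogenous and labeled peptides respond identically in the instrument, which is an assumption of this sketch and not a substitute for a calibration curve. The sample names, peak areas, and the 50 fmol spike amount are hypothetical.

```python
def light_to_heavy_ratio(endogenous_area, standard_area):
    """Ratio of endogenous (light) peak area to labeled standard (heavy) peak area."""
    if standard_area <= 0:
        raise ValueError("standard peak area must be positive")
    return endogenous_area / standard_area


def estimate_amount(ratio, spiked_amount_fmol):
    """Single-point estimate of endogenous amount, assuming equal response."""
    return ratio * spiked_amount_fmol


# Hypothetical peak areas per sample: (endogenous, labeled standard).
peak_areas = {
    "patient_01": (2.4e6, 1.2e6),
    "patient_02": (0.6e6, 1.2e6),
}
spike_fmol = 50.0  # assumed amount of labeled standard added to each sample
results = {}
for sample, (light, heavy) in peak_areas.items():
    ratio = light_to_heavy_ratio(light, heavy)
    results[sample] = (ratio, estimate_amount(ratio, spike_fmol))
    print(sample, results[sample])
```

When no calibration curve is available, reporting the ratio column alone gives the relative quantification described in the protocol.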

Visualizations

Diagram 1: The Impact of Overfitting on Research Translation

Diagram 2: Robust Biomarker Identification Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Example Product/Source | Primary Function in Mitigating Overfitting |

|---|---|---|

| Stable Isotope-Labeled Standards | SIS peptides (Sigma, JPT), AQUA peptides | Provides internal controls for MS-based biomarker verification, enabling accurate quantification and reducing technical variance. |

| Validated Antibody Panels | Luminex Assay Kits, R&D Systems DuoSet ELISA | Ensures specificity in immunoassays for biomarker validation, critical for reproducible results across labs. |

| Reference Cell Lines & Controls | ATCC CRISPR-modified isogenic lines, Coriell Institute biobank | Provides genetically defined controls for drug screening assays to confirm on-target activity and reduce false positives. |

| Chemical Probes (with controls) | Selleckchem probe sets, Tocris Biotools tool compounds | High-quality, selective small molecules used to validate drug targets; paired inactive analogs control for off-target effects. |

| High-Quality Biobanked Samples | Independent, well-annotated clinical cohorts (e.g., UK Biobank, TCGA) | Essential for external validation of models and biomarkers, providing the ultimate test against overfitting to a single dataset. |

| Benchmarking Datasets | MLRepo, PMLB, CAMDA challenges | Pre-curated public datasets for testing and comparing algorithm performance in a standardized, unbiased manner. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My initial analysis yielded a null result, but after testing multiple alternative model specifications, I found one with p < 0.05. Is this a valid finding? A: This is a classic symptom of p-hacking (also called specification searching). The reported p-value is invalid because it does not account for the multiple comparisons (model specifications) tested. The probability of finding at least one statistically significant result by chance increases with each test you run.

- Troubleshooting Protocol: Use pre-registration. Before collecting or looking at your data, document your primary hypothesis, analysis plan, and model specification in a public registry. Adhere strictly to this plan for your primary analysis. For exploratory analyses, use correction methods (e.g., Bonferroni, Benjamini-Hochberg) and clearly label them as such.

Q2: I have a large dataset with hundreds of variables. How can I efficiently find significant correlations for my drug response outcome? A: Blindly testing all possible associations is data dredging (or "fishing"). It will almost certainly produce false positive associations due to chance alone, especially in high-dimensional data.

- Troubleshooting Protocol:

- Split Your Data: Before any exploration, randomly split your data into a discovery set (e.g., 70%) and a validation set (e.g., 30%). Lock the validation set away.

- Explore on Discovery Set: Conduct your exploratory analysis (e.g., correlation scans, machine learning feature selection) only on the discovery set.

- Confirm on Validation Set: Test only the hypotheses or models generated in step 2 on the pristine validation set. The validation-set p-value is a more honest estimate of true performance.

Q3: My gene expression biomarker panel shows perfect classification in my training set (n=20 samples, p=500 genes), but fails completely in an independent test. What went wrong? A: You have encountered the "High-Dimensional, Low-Sample-Size" (HDLSS) curse. With far more features (p) than samples (n), models can easily find spurious patterns that fit the noise in your specific small sample, leading to catastrophic overfitting and failure to generalize.

- Troubleshooting Protocol: Apply dimensionality reduction and regularization.

- Feature Selection: Use independent biological knowledge or univariate screening with extremely stringent criteria to reduce the feature set before modeling.

- Regularized Modeling: Employ algorithms designed for HDLSS contexts (e.g., Lasso regression, Ridge regression, Elastic Net). These techniques penalize model complexity to prevent overfitting.

- Use Cross-Validation Correctly: Perform all feature selection and model tuning steps within each fold of cross-validation on the training data only to avoid leakage.

Q4: How do I choose the right multiple testing correction for my high-throughput screen? A: The choice depends on your goal: controlling the Family-Wise Error Rate (FWER) is stricter, while controlling the False Discovery Rate (FDR) is more common in exploratory omics studies.

Table: Multiple Testing Correction Methods

| Method | Controls For | Best Use Case | Key Consideration |

|---|---|---|---|

| Bonferroni | Family-Wise Error Rate (FWER) | Confirmatory studies with a small number of pre-planned tests. Very conservative. | Over-corrects, leading to many false negatives. Adjusted threshold = α / m (m=# of tests). |

| Benjamini-Hochberg | False Discovery Rate (FDR) | Exploratory high-dimensional studies (genomics, proteomics). Less conservative. | Controls the proportion of significant results that are false positives. More powerful than Bonferroni. |

| Permutation-Based FDR | False Discovery Rate (FDR) | Complex dependency structures between tests (e.g., GWAS, imaging). | Computationally intensive but makes fewer assumptions about test distribution and independence. |
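
To make the difference between the first two methods in the table concrete, here is a small sketch of both corrections. The function names and the eight p-values are hypothetical; in practice an established implementation from a statistics package is preferable.

```python
def bonferroni_reject(p_values, alpha=0.05):
    """Reject a hypothesis when its p-value is at most alpha / m."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotone q-values.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical p-values from eight tests.
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205]
bonf = bonferroni_reject(p_values, alpha=0.05)
q_values = benjamini_hochberg(p_values)
n_bonf = sum(bonf)
n_bh = sum(q <= 0.05 for q in q_values)
print("Bonferroni discoveries:", n_bonf)
print("BH discoveries at q <= 0.05:", n_bh)
```

On this toy input Bonferroni (threshold 0.05 / 8 = 0.00625) keeps one result, while Benjamini-Hochberg keeps two, illustrating the extra power noted in the table.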

Q5: What are essential experimental design reagents and tools to mitigate overfitting from the start? A: The Scientist's Toolkit for Robust Research

| Research Reagent Solution | Function in Mitigating Overfitting |

|---|---|

| Pre-Registration Template | Forces explicit a priori specification of hypotheses, primary outcomes, and analysis plans, neutralizing p-hacking. |

| Independent Validation Cohort | Provides an unbiased estimate of model performance and generalizability. The gold standard for method evaluation. |

| Data/Code Repository Access | Enables full transparency, peer auditability, and reproducibility of all analysis steps, reducing hidden flexibility. |

| Power/Sample Size Calculator | Ensures studies are designed with adequate sample size to detect effects, reducing the temptation to dredge underpowered data. |

| Regularized ML Software (e.g., glmnet) | Provides built-in algorithms (Lasso, Ridge) that prevent overfitting in high-dimensional data during model development. |

| High-Performance Computing (HPC) Access | Enables the use of computationally intensive but honest validation methods like permutation testing and nested cross-validation. |

Experimental Protocols

Protocol 1: Nested Cross-Validation for HDLSS Model Development

Purpose: To provide an unbiased performance estimate for a model that requires both feature selection and hyperparameter tuning.

- Outer Loop (Performance Estimation): Split data into k folds (e.g., k=5 or 10).

- Hold Out One Outer Fold: This fold serves as the temporary test set.

- Inner Loop (Model Selection): On the remaining (k-1) folds, perform a second, independent cross-validation.

- Feature Selection & Tuning: Within the inner loop, perform all steps (e.g., feature filtering, selecting the LASSO lambda) repeatedly. Find the optimal model configuration.

- Train Final Inner Model: Train a new model using this optimal configuration on all (k-1) outer-loop training folds.

- Test: Apply this final model to the held-out outer test fold (from step 2) to get one unbiased performance score.

- Repeat: Iterate so each outer fold serves as the test set once. The average performance across all outer folds is the final validated estimate.

Protocol 2: Permutation Test for Assessing Significance Without Overfitting

Purpose: To generate a valid null distribution for any complex test statistic (e.g., classifier AUC, biomarker correlation) in the context of small samples or complex data.

- Calculate Real Statistic: Compute your test statistic (e.g., AUC) on your real dataset with true labels.

- Permute Labels: Randomly shuffle the outcome labels (or treatment assignments) of your dataset, breaking the true relationship between variables and outcome.

- Calculate Null Statistic: Re-compute the same test statistic on this permuted, null dataset.

- Repeat: Perform steps 2-3 a large number of times (e.g., 10,000) to build a robust null distribution of the test statistic under the assumption of no effect.

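- Calculate p-value: The empirical p-value = (1 + number of permutations in which the null statistic ≥ the real statistic) / (1 + total number of permutations). Adding 1 to the numerator and denominator counts the observed labeling as one permutation, so the p-value is never exactly zero. This gives a valid, non-parametric p-value.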
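
The permutation protocol can be sketched as follows. For brevity this sketch uses a one-sided difference in group means as the test statistic in place of a classifier AUC; the function name, the group values, and the choice of 2,000 permutations are illustrative assumptions.

```python
import random
import statistics


def permutation_p_value(group_a, group_b, n_permutations=2000, seed=0):
    """One-sided permutation test for mean(group_a) > mean(group_b)."""
    rng = random.Random(seed)
    observed = statistics.mean(group_a) - statistics.mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    hits = 0
    for _ in range(n_permutations):
        # Shuffling the pooled values is equivalent to shuffling the labels.
        rng.shuffle(pooled)
        null_stat = statistics.mean(pooled[:n_a]) - statistics.mean(pooled[n_a:])
        if null_stat >= observed:
            hits += 1
    # The +1 terms count the observed labeling as one permutation.
    return (hits + 1) / (n_permutations + 1)


# Hypothetical biomarker levels in responders and non-responders.
responders = [8.1, 7.9, 8.4, 8.0, 8.3, 7.8]
non_responders = [5.2, 5.0, 5.5, 4.9, 5.1, 5.3]
p = permutation_p_value(responders, non_responders)
print(p)
```

The smallest p-value this procedure can report is 1 / (n_permutations + 1), so the number of permutations sets the resolution of the test.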

Visualizations

Title: Data Splitting Workflow to Prevent Overfitting

Title: Consequences of the HDLSS Curse

Technical Support Center: Overfitting Prevention & Diagnostic Tools

Frequently Asked Questions (FAQs)

Q1: My model performs excellently on my training dataset but fails on new, external validation data. What is the most likely cause and how do I diagnose it? A: This is the hallmark symptom of overfitting. The model has learned noise or specific patterns unique to your training set rather than generalizable biological relationships.

- Diagnostic Steps:

- Split Your Data: Before any analysis, randomly split your data into three sets: Training (60-70%), Validation (15-20%), and Hold-out Test (15-20%). Use the hold-out test set only once at the very end.

- Learning Curves: Plot model performance (e.g., R², AUC) for both training and validation sets against increasing model complexity or training iterations. A growing gap between curves indicates overfitting.

- Apply Regularization: Implement techniques like Lasso (L1) or Ridge (L2) regression that penalize overly complex models.
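
The three-way split in the first diagnostic step might look like the sketch below. The function name, the 70/15/15 proportions, and the fixed seed are assumptions chosen for illustration; stratified or group-aware splitting may be more appropriate for imbalanced or clustered data.

```python
import random


def three_way_split(n_samples, train_frac=0.70, val_frac=0.15, seed=42):
    """Shuffle sample indices once and cut them into train / validation / test."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = int(round(train_frac * n_samples))
    n_val = int(round(val_frac * n_samples))
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # whatever remains is the hold-out test set
    return train, val, test


train_idx, val_idx, test_idx = three_way_split(200)
print(len(train_idx), len(val_idx), len(test_idx))
```

Fixing the seed and saving the index lists makes the split reproducible and lets the hold-out indices be stored away until the final evaluation.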

Q2: In high-throughput 'omics studies (genomics, proteomics), I have thousands of features (p) but only tens of samples (n). How can I avoid false discoveries? A: The "p >> n" problem is a primary driver of overfitting in modern biology.

- Diagnostic & Prevention Protocol:

- Apply Dimensionality Reduction: Use Principal Component Analysis (PCA) or use domain knowledge for feature selection before predictive modeling.

- Use Cross-Validation Correctly: Employ nested cross-validation, where an inner loop performs feature selection and hyperparameter tuning, and an outer loop provides an unbiased performance estimate. Never use the same cross-validation loop for both feature selection and final performance estimation.

- Adjust for Multiple Testing: Apply Benjamini-Hochberg (False Discovery Rate) or Bonferroni corrections to p-values from many simultaneous hypotheses.

Q3: How can I tell if my 'statistically significant' biomarker is a result of overfitting to cohort-specific noise? A: Implement rigorous external validation.

- Experimental Validation Protocol:

- Technical Replication: Re-measure the biomarker in the same samples using an independent measurement (e.g., a different antibody lot, a separate mass spectrometry run, or ideally an orthogonal platform).

- Biological Replication: Test the biomarker in an independent cohort of patients/samples, collected and processed by a different team if possible.

- Experimental Perturbation: If possible, use in vitro or in vivo models to functionally perturb the identified target and see if the predicted biological effect holds.

Q4: What are the best practices for reporting methods to ensure my work is reproducible and not overfit? A: Transparency is key. Adhere to community reporting standards (e.g., MIAME, ARRIVE, STROBE).

- Checklist for Submission:

- Clearly state how and when the data was split into training/validation/test sets.

- Provide all code and software version numbers in a public repository (e.g., GitHub, Zenodo).

- Report all hyperparameters tried during tuning, not just the final selected ones.

- For preclinical studies, publish negative results and studies that failed to replicate initial findings.

Troubleshooting Guide: Common Overfitting Scenarios

| Scenario | Symptoms | Root Cause | Corrective Action |

|---|---|---|---|

| Biomarker Discovery | A 20-gene signature has 95% accuracy in the discovery cohort but <60% in a similar published cohort. | Feature selection was performed on the entire dataset without a hold-out set. | Re-analyze using a completely independent validation cohort or simulate one via rigorous nested CV. |

| Dose-Response Modeling | A complex polynomial model fits the training dose-response data perfectly but produces nonsensical predictions for interpolated doses. | Model complexity (degree of polynomial) is too high for the number of data points. | Use a simpler model (e.g., 4-parameter logistic curve) or apply Bayesian regularization to constrain parameters. |

| High-Content Imaging Analysis | A deep learning model accurately classifies treatment effects in images from one lab but fails on images from another using a different microscope. | The model overfit to lab-specific image artifacts (background, staining intensity). | Use data augmentation (rotations, flips, noise injection) and incorporate images from multiple sources in the training set. |
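
For the dose-response scenario in the table, the 4-parameter logistic curve can be written in a few lines. This sketch uses one common parameterization; the function name, parameter names, and example values are illustrative, and fitting the parameters to data would require an optimizer (for instance SciPy's `curve_fit`), which is not shown.

```python
def four_pl(dose, bottom, top, ec50, hill):
    """Four-parameter logistic: response moves from `bottom` to `top` as dose rises.

    `dose` and `ec50` must be positive; `hill` controls the steepness.
    """
    return bottom + (top - bottom) / (1.0 + (ec50 / dose) ** hill)


# Hypothetical parameters: 0-100% response, EC50 of 1.0 (arbitrary units).
doses = [0.01, 0.1, 1.0, 10.0, 100.0]
responses = [four_pl(d, bottom=0.0, top=100.0, ec50=1.0, hill=1.5) for d in doses]
for d, r in zip(doses, responses):
    print(d, round(r, 2))
```

With only four parameters the curve stays between `bottom` and `top` and is monotonic in dose, so interpolated predictions cannot oscillate the way a high-degree polynomial can.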

Quantitative Data Summary: Impact of Overfitting Mitigation Strategies

Table 1: Effect of Validation Strategy on Reported Model Performance in Published Studies (Simulated Meta-Analysis Data)

| Validation Strategy | Average Reported AUC | Average Performance Drop in External Validation | Estimated Risk of Non-Reproducibility |

|---|---|---|---|

| None (Resubstitution) | 0.95 | -0.25 | Very High |

| Simple Train/Test Split (80/20) | 0.87 | -0.15 | High |

| 10-Fold Cross-Validation | 0.85 | -0.12 | Moderate |

| Nested Cross-Validation | 0.83 | -0.05 | Low |

| Independent External Cohort | 0.80 | N/A (This is the validation) | Very Low |

Table 2: Impact of Multiple Testing Correction on Significant Hits in a Genomic Study (Example: 10,000 genes tested)

| Analysis Method | Nominal p-value threshold | Uncorrected Significant Hits | FDR-Adjusted (q<0.05) Significant Hits | Approx. False Positives |

|---|---|---|---|---|

| No Correction | p < 0.05 | ~500 | N/A | ~500 |

| Bonferroni Correction | p < 5e-6 | 15 | 12 | ~0.05 |

| Benjamini-Hochberg (FDR) | q < 0.05 | 110 | 110 | ~5 |

Experimental Protocol: Nested Cross-Validation for Predictive Modeling

Objective: To obtain an unbiased estimate of a predictive model's performance while optimizing hyperparameters and/or selecting features.

Materials: Dataset with features (X) and outcome labels (y). Computational environment (e.g., Python/R).

Procedure:

- Define Outer Loop (Performance Estimation): Split the entire dataset into k outer folds (e.g., k=5 or 10).

- Iterate Outer Loop: For each outer fold i: a. Set aside fold i as the outer test set. b. The remaining k-1 folds constitute the outer training set.

- Define Inner Loop (Model Tuning): On the outer training set, perform a second, independent k-fold cross-validation (the inner loop).

- Iterate Inner Loop: For each combination of hyperparameters/feature sets: a. Train the model on the inner training folds. b. Evaluate it on the inner validation fold. c. Average performance across all inner folds to select the best hyperparameter/feature set.

- Train Final Inner Model: Train a new model on the entire outer training set using the optimal hyperparameters identified in Step 4.

- Evaluate on Outer Test Set: Apply this final model to the held-out outer test set (fold i) to obtain an unbiased performance score.

- Aggregate Results: Repeat steps 2-6 for all k outer folds. The average performance across all outer test sets is the final, robust performance estimate.
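
The procedure above can be sketched end to end with a deliberately simple model. This is a toy illustration under stated assumptions: the model is a one-feature ridge regression through the origin, the data are simulated, and all function names and the lambda grid are hypothetical. Real analyses would typically rely on an established machine learning library.

```python
import random
import statistics


def k_fold_indices(n, k, seed):
    """Shuffle indices 0..n-1 and deal them into k folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def fit_ridge_slope(xs, ys, lam):
    """Closed-form 1-D ridge regression through the origin."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def mse(xs, ys, slope):
    return statistics.mean((y - slope * x) ** 2 for x, y in zip(xs, ys))


def cv_error(xs, ys, lam, k, seed):
    """Inner loop: mean validation MSE for one candidate lambda."""
    errors = []
    for held_out in k_fold_indices(len(xs), k, seed):
        held = set(held_out)
        tr = [i for i in range(len(xs)) if i not in held]
        slope = fit_ridge_slope([xs[i] for i in tr], [ys[i] for i in tr], lam)
        errors.append(mse([xs[i] for i in held_out], [ys[i] for i in held_out], slope))
    return statistics.mean(errors)


def nested_cv(xs, ys, lambdas, outer_k=5, inner_k=3, seed=0):
    outer_scores, chosen = [], []
    for fold_no, test_idx in enumerate(k_fold_indices(len(xs), outer_k, seed)):
        test = set(test_idx)
        train_idx = [i for i in range(len(xs)) if i not in test]
        x_tr = [xs[i] for i in train_idx]
        y_tr = [ys[i] for i in train_idx]
        # Tune lambda using only the outer training set (inner loop).
        best_lam = min(lambdas,
                       key=lambda lam: cv_error(x_tr, y_tr, lam, inner_k, seed + 1 + fold_no))
        # Refit on the whole outer training set, then score the untouched outer fold.
        slope = fit_ridge_slope(x_tr, y_tr, best_lam)
        outer_scores.append(mse([xs[i] for i in test_idx], [ys[i] for i in test_idx], slope))
        chosen.append(best_lam)
    return statistics.mean(outer_scores), outer_scores, chosen


# Simulated data: y = 3x + noise (illustrative only).
rng = random.Random(1)
xs = [rng.uniform(-2, 2) for _ in range(40)]
ys = [3 * x + rng.gauss(0, 0.5) for x in xs]
lambdas = [0.01, 0.1, 1.0, 10.0]
mean_score, outer_scores, chosen = nested_cv(xs, ys, lambdas)
print("mean outer MSE:", mean_score)
print("lambda chosen per outer fold:", chosen)
```

The key property to preserve in any implementation is that each outer test fold is never seen by the inner tuning loop; the selected lambda may therefore differ from fold to fold.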

Visualization: Workflows and Relationships

Title: Robust Model Development & Evaluation Workflow

Title: How Overfitting Contributes to the Reproducibility Crisis

The Scientist's Toolkit: Research Reagent Solutions for Validation

| Reagent/Tool Category | Specific Example | Primary Function in Combatting Overfitting |

|---|---|---|

| Reference Standards | Certified cell lines (e.g., from ATCC), synthetic peptide standards, control plasmids. | Provides a consistent baseline across experiments and labs, enabling technical replication and detection of batch effects. |

| Validated Assay Kits | FDA-approved IVD kits, PMA-approved companion diagnostics. | Uses rigorously optimized and locked-down protocols to minimize technical variability that can be mistaken for signal. |

| Knockout/Knockdown Tools | CRISPR-Cas9 kits, validated siRNA pools, isogenic cell line pairs. | Enforces causal validation of putative biomarkers or targets identified in correlative models, moving beyond prediction. |

| Chemical Probes | High-quality, selective kinase inhibitors; well-characterized agonists/antagonists. | Allows pharmacological perturbation to test predictions from computational models in biological systems. |

| Data & Code Repositories | GEO, PRIDE, GitHub, Zenodo, Synapse. | Facilitates independent re-analysis and validation of published models, exposing overfit patterns. |

Troubleshooting Guides & FAQs

General Model Development Issues

Q1: My model performs excellently on training data but fails on new test sets. What is the root cause and how can I fix it? A: This is a classic symptom of overfitting, where a model has high variance and low bias. The model has learned the noise and specific patterns in the training data rather than the generalizable signal.

- Troubleshooting Steps:

- Diagnose: Plot learning curves (train vs. validation error vs. model complexity/epochs).

- Regularize: Apply L1 (Lasso) or L2 (Ridge) regularization to penalize overly complex coefficients.

- Simplify: Reduce model complexity (e.g., decrease polynomial degree, reduce nodes/layers in a neural network).

- Augment Data: Use data augmentation techniques or collect more diverse training data.

- Use Cross-Validation: Implement k-fold cross-validation to get a more robust estimate of performance.

Q2: How do I know if my model is too simple (underfitting) or appropriately complex? A: Underfit models exhibit high bias and low variance; they perform poorly on both training and validation data.

- Troubleshooting Steps:

- Diagnose: If training error is unacceptably high, the model is likely underfitting.

- Increase Complexity: Add relevant features, increase polynomial degree, or add capacity to your algorithm.

- Reduce Regularization: Decrease the strength of regularization parameters.

- Train Longer: For iterative models like neural networks, increase the number of training epochs.

Q3: What is the "double-dipping" problem, and how can I avoid it in my analysis? A: Double-dipping (or circular analysis) occurs when the same data is used for both hypothesis generation (e.g., feature selection) and hypothesis testing (e.g., model evaluation), leading to optimistically biased results and inflated false-positive rates.

- Troubleshooting Protocol:

- Use a Hold-Out Set: Before any analysis, split data into three sets: Training, Validation (for tuning), and a completely held-out Test set used only once for final evaluation.

- Employ Nested Cross-Validation: Use an outer loop for performance estimation and an inner loop for model/feature selection. This keeps the selection process separate from the final evaluation.

- Pre-register Analysis Plans: Define your model, features, and evaluation metrics before observing the test data.
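
The effect of double-dipping can be illustrated on data that contain no signal at all. The sketch below is a minimal illustration, assuming scikit-learn is available; the dataset sizes, the choice of `SelectKBest` with 20 features, and the variable names are hypothetical choices made for the example, not part of the original protocol.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline

rng = np.random.default_rng(0)
X = rng.normal(size=(60, 5000))   # pure noise: no feature carries signal
y = np.repeat([0, 1], 30)         # balanced labels unrelated to X

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# Double-dipping: features are chosen using ALL samples, then cross-validated.
X_leaky = SelectKBest(f_classif, k=20).fit_transform(X, y)
leaky_acc = cross_val_score(
    LogisticRegression(max_iter=1000), X_leaky, y, cv=cv
).mean()

# Correct: the selector is refit inside each training fold via a pipeline.
honest_model = make_pipeline(
    SelectKBest(f_classif, k=20), LogisticRegression(max_iter=1000)
)
honest_acc = cross_val_score(honest_model, X, y, cv=cv).mean()

print(f"CV accuracy with selection outside CV: {leaky_acc:.2f}")
print(f"CV accuracy with selection inside CV:  {honest_acc:.2f}")
```

Because the labels are unrelated to the features, any accuracy well above chance in the first estimate is produced by the circular selection step alone.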

Table 1: Impact of Model Complexity on Error Components

| Model Complexity Level | Typical Training Error | Typical Validation Error | Dominant Error Type | Indicated Problem |

|---|---|---|---|---|

| Very Low | High | Very High | Bias | Severe Underfitting |

| Low | Medium-High | High | Bias | Underfitting |

| Optimal | Low | Low (minimized) | Balanced | Well-Fitted Model |

| High | Very Low | Medium-High | Variance | Overfitting |

| Very High | Extremely Low | Very High | Variance | Severe Overfitting |

Table 2: Common Remedies for Model Fitting Problems

| Problem | Primary Cause | Recommended Solutions (in order of priority) |

|---|---|---|

| Overfitting | High Variance | 1. Gather more training data. 2. Apply regularization (L1/L2/Dropout). 3. Reduce model complexity. 4. Use ensemble methods (e.g., bagging). |

| Underfitting | High Bias | 1. Increase model complexity (features, parameters). 2. Train for more iterations/epochs. 3. Reduce regularization strength. 4. Use a more advanced model algorithm. |

| Double-Dipping Bias | Data Leakage | 1. Implement strict train/validation/test splits. 2. Use nested cross-validation. 3. Perform independent validation on a fresh cohort. |

Experimental Protocols

Protocol 1: Nested Cross-Validation to Prevent Double-Dipping Objective: To obtain an unbiased estimate of model performance when both hyperparameter tuning and feature selection are required.

- Data Partitioning: Start with your full dataset.

- Outer Loop (Performance Estimation): Split data into k outer folds. For each outer fold: a. Designate the fold as the temporary test set. b. The remaining k-1 folds form the development set.

- Inner Loop (Model Selection): On the development set, perform a second, independent m-fold cross-validation. a. Use this inner loop to train and tune different model configurations (e.g., varying hyperparameters, feature subsets). b. Select the best-performing configuration based on the inner validation scores.

- Final Evaluation: Train the selected configuration on the entire development set. Evaluate it on the held-out temporary test set from step 2a.

- Aggregation: Repeat for all k outer folds. The average score across all temporary test sets provides the final, unbiased performance estimate.
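
One possible way to express this protocol in scikit-learn is to wrap a `GridSearchCV` (the inner loop) inside `cross_val_score` (the outer loop). This is a sketch under stated assumptions: the synthetic data, the pipeline steps, and the parameter grid are hypothetical and chosen only to keep the example small.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a small, wide biomedical dataset (assumption).
X, y = make_classification(
    n_samples=120, n_features=200, n_informative=10, random_state=0
)

# Scaling and feature selection live inside the pipeline, so they are
# refit on every training fold and never see the held-out samples.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif)),
    ("clf", LogisticRegression(max_iter=2000)),
])
param_grid = {"select__k": [5, 20, 50], "clf__C": [0.01, 0.1, 1.0]}

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # model selection
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # performance estimate

search = GridSearchCV(pipe, param_grid, cv=inner_cv, scoring="roc_auc")
outer_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="roc_auc")

print(f"Nested CV AUC: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

Each outer fold reruns the full search on its own development set, so the selected configuration may differ between folds; the reported score describes the whole selection procedure rather than one fixed model.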

Protocol 2: Learning Curve Analysis for Bias-Variance Diagnosis Objective: To visually diagnose whether a model suffers from high bias or high variance and guide resource allocation.

- Setup: Define a model architecture and evaluation metric (e.g., RMSE, Accuracy).

- Iterative Training: For n different training set sizes (e.g., 10%, 20%, ..., 100% of available data): a. Randomly sample a subset of the training data of the specified size. b. Train the model on this subset. c. Calculate the error/score on: i) the training subset, and ii) a fixed, held-out validation set.

- Plotting: Plot both the training error and validation error as a function of training set size.

- Interpretation:

- Converging High Errors: Both curves plateau at a high error → High Bias.

- Large Gap: Training error is low, validation error is significantly higher and the gap doesn't close with more data → High Variance.

- Converging Low Errors: Both curves converge to a low error → Ideal.
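
A learning curve of this kind can be produced with scikit-learn's `learning_curve` helper. The sketch below is illustrative only: it uses synthetic regression data and a Ridge model as assumptions, and it evaluates each training size with 5-fold cross-validation rather than the single fixed validation set described in the protocol.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import learning_curve

X, y = make_regression(
    n_samples=300, n_features=50, n_informative=10, noise=10.0, random_state=0
)

sizes, train_scores, val_scores = learning_curve(
    Ridge(alpha=1.0),
    X,
    y,
    train_sizes=np.linspace(0.1, 1.0, 10),   # 10%, 20%, ..., 100% of each training fold
    cv=5,
    scoring="neg_root_mean_squared_error",
    shuffle=True,
    random_state=0,
)

# Scores are negated RMSE values; flip the sign and average over folds.
train_rmse = -train_scores.mean(axis=1)
val_rmse = -val_scores.mean(axis=1)

for n, tr, va in zip(sizes, train_rmse, val_rmse):
    print(f"n={n:3d}  train RMSE={tr:8.2f}  validation RMSE={va:8.2f}")
```

Plotting `train_rmse` and `val_rmse` against `sizes` (for example with Matplotlib) gives the two curves interpreted above.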

Visualizations

Bias-Variance Tradeoff Relationship

Nested Cross-Validation Workflow to Avoid Double-Dipping

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust Method Development & Evaluation

| Item | Category | Function in Addressing Overfitting & Bias |

|---|---|---|

| Scikit-learn | Software Library | Provides built-in functions for train/test splits, cross-validation (including nested), and regularization, enforcing proper workflow. |

| MLflow / Weights & Biases | Experiment Tracking | Logs all hyperparameters, data splits, and metrics for every run, ensuring reproducibility and audit trails to detect data leakage. |

| Matplotlib / Seaborn | Visualization | Creates essential diagnostic plots (learning curves, validation curves, feature importance) to visualize bias-variance. |

| DVC (Data Version Control) | Data Management | Versions datasets and model artifacts, guaranteeing the exact data split used for a model can be recovered and validated. |

| Pre-registration Template | Documentation | A structured document to define hypotheses, analysis plans, and model specifications before data analysis begins, mitigating double-dipping. |

| Statistical Test Suites | Analysis Toolkits | Libraries (e.g., statsmodels, scipy) for calculating p-values and confidence intervals on held-out test sets only, preventing inflation. |

| Public Benchmark Datasets | Reference Data | Well-curated datasets (e.g., from TCGA, PubChem) with standard splits allow for fair comparison and baseline establishment. |

Building Defenses: Proactive Methodological Strategies to Prevent Overfitting

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My model performs excellently on the validation set but fails on the test set. What is the most likely cause? A: This is a classic sign of data leakage or an improper splitting protocol. The validation set is likely not representative or has been used to influence training decisions repeatedly, causing overfitting to the validation set. Ensure your initial data split (Train/Val/Test) is performed before any preprocessing or feature selection, using a method that preserves the distribution of your target variable (e.g., stratified splitting for classification). The test set must be locked away and used for a single, final evaluation.

Q2: How do I partition my dataset when I have temporal or batch-specific effects (e.g., multi-center clinical trial data)? A: For data with inherent grouping or temporal structure, a simple random split violates the independence assumption. Use group-based or time-based splitting.

- Protocol: Identify the grouping variable (e.g., patient ID, clinical trial site, experimental batch). Use `GroupShuffleSplit` or `GroupKFold` (from scikit-learn) to ensure all samples from the same group are contained within a single split (train, validation, or test). This prevents the model from learning group-specific artifacts and gives a true estimate of performance on new groups.
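
A minimal sketch of group-aware splitting follows. The patient IDs, feature matrix, and labels are synthetic placeholders (assumptions for the example); the point is only to show that no group ends up on both sides of any split.

```python
import numpy as np
from sklearn.model_selection import GroupKFold, GroupShuffleSplit

rng = np.random.default_rng(0)
n_patients, samples_per_patient = 20, 5
groups = np.repeat(np.arange(n_patients), samples_per_patient)  # hypothetical patient IDs
X = rng.normal(size=(groups.size, 8))
y = rng.integers(0, 2, size=groups.size)

# Step 1: hold out about 20% of the patients as the final test set.
gss = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
dev_idx, test_idx = next(gss.split(X, y, groups=groups))

# Step 2: group-aware cross-validation on the remaining patients.
dev_groups = groups[dev_idx]
overlaps = []
for train, val in GroupKFold(n_splits=5).split(X[dev_idx], y[dev_idx], groups=dev_groups):
    shared = set(dev_groups[train]) & set(dev_groups[val])
    overlaps.append(len(shared))

print("Patients shared between dev and test:",
      len(set(groups[dev_idx]) & set(groups[test_idx])))
print("Patients shared between train and validation, per fold:", overlaps)
```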

Q3: What is the minimum recommended size for a test set to be statistically meaningful? A: There is no universal rule, but guidelines exist based on desired precision. A common heuristic is to allocate 10-20% of your total data to the test set, provided this yields a sufficient absolute sample size. For performance metrics like accuracy or AUC, the required size depends on the expected variance.

Table 1: Minimum Test Set Sizes for Desired Confidence Interval Width (Binary Classification, ~80% Accuracy)

| Confidence Level | Target CI Width (±) | Minimum Test Set Size (n) |

|---|---|---|

| 95% | 0.05 | ~246 |

| 95% | 0.03 | ~683 |

| 95% | 0.02 | ~1537 |

Protocol for Sizing: Use a precision-based sample-size calculation for a proportion (normal approximation). Formula for accuracy: n = (Z^2 * p * (1-p)) / d^2, where Z is the Z-score (e.g., 1.96 for 95% CI), p is the expected accuracy, and d is the margin of error (CI half-width).
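
The formula can be evaluated directly. The helper below is a small sketch (the function name `min_test_size` is hypothetical) that reproduces the values in Table 1 for an expected accuracy of 0.80, using the normal approximation and rounding up.

```python
import math
from statistics import NormalDist


def min_test_size(expected_acc, half_width, confidence=0.95):
    """n = Z^2 * p * (1 - p) / d^2, rounded up to the next whole sample."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    return math.ceil(z ** 2 * expected_acc * (1 - expected_acc) / half_width ** 2)


sizes = {d: min_test_size(0.80, d) for d in (0.05, 0.03, 0.02)}
for d, n in sizes.items():
    print(f"CI half-width +/-{d:.2f}: n >= {n}")
```

The normal approximation becomes less reliable when the expected accuracy is close to 0 or 1 or when n is small, so the result is best read as a lower bound for planning.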

Q4: How should I handle class imbalance when creating splits for a rare event prediction task? A: Use stratified splitting. This maintains the relative proportion of each class across the train, validation, and test sets. This is crucial to prevent a scenario where a rare class is underrepresented or absent in a split, skewing performance estimates.

- Protocol: Utilize `StratifiedShuffleSplit` or `StratifiedKFold`. Provide the target variable (y) to the splitting function. Ensure that the minority class has enough representatives in the validation and test sets to compute meaningful metrics (e.g., precision, recall).
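
As a brief illustration, the sketch below stratifies both the initial hold-out split and the subsequent folds on a synthetic label vector with a 10% minority class (the class sizes are assumptions for the example) and counts the minority samples that land in each split.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, train_test_split

y = np.array([1] * 20 + [0] * 180)    # 10% minority class (rare event)
X = np.arange(y.size).reshape(-1, 1)  # placeholder features

# Stratified hold-out: the test set keeps the 10% event rate.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Stratified folds on the development data.
skf = StratifiedKFold(n_splits=4, shuffle=True, random_state=0)
fold_positives = [int(y_dev[val].sum()) for _, val in skf.split(X_dev, y_dev)]

print("Minority samples in test set:", int(y_test.sum()), "of", y_test.size)
print("Minority samples per validation fold:", fold_positives)
```

With only a handful of minority samples per fold, metrics such as recall move in large steps, which is one reason to check these counts before committing to a number of folds.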

Q5: What is nested cross-validation, and when is it mandatory? A: Nested cross-validation (CV) is the gold-standard protocol for simultaneously performing model selection (hyperparameter tuning) and unbiased performance estimation when data is limited.

- Inner Loop: Used for hyperparameter tuning (using the training fold from the outer loop).

- Outer Loop: Used to assess the performance of the best model found by the inner loop on held-out data.

- When to Use: It is mandatory for providing an unbiased estimate of model performance when you have used the validation set for extensive model development and tuning. It prevents over-optimistic reports of generalizability.

Q6: My dataset is very small (<100 samples). Can I still do a train-validation-test split? A: A traditional three-way split on very small data leads to unreliable estimates due to high variance. The recommended protocol is to use nested cross-validation (as above) or a bootstrap approach.

- Bootstrap Protocol: Repeatedly draw random samples with replacement from your full dataset to create training sets. The out-of-bag samples (those not drawn) form the test set for each iteration. Performance is averaged over many bootstrap iterations. This provides a more stable estimate of performance and confidence intervals on small data.
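
The bootstrap protocol can be sketched as follows. The dataset, the logistic regression model, the number of iterations (200), and the percentile interval are illustrative assumptions rather than recommendations from the text.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score

X, y = make_classification(n_samples=80, n_features=10, n_informative=4, random_state=0)
rng = np.random.default_rng(0)
n = len(y)

oob_scores = []
for _ in range(200):
    boot = rng.integers(0, n, size=n)        # draw n indices with replacement
    oob = np.setdiff1d(np.arange(n), boot)   # samples never drawn: out-of-bag
    if oob.size == 0 or np.unique(y[boot]).size < 2:
        continue                             # skip degenerate resamples
    model = LogisticRegression(max_iter=1000).fit(X[boot], y[boot])
    oob_scores.append(accuracy_score(y[oob], model.predict(X[oob])))

oob_scores = np.array(oob_scores)
lo, hi = np.percentile(oob_scores, [2.5, 97.5])
print(f"OOB accuracy: {oob_scores.mean():.3f} (2.5-97.5 percentile: {lo:.3f}-{hi:.3f})")
```

Any preprocessing or tuning step would need to be refit inside the loop on the bootstrap sample only, for the same leakage reasons that apply to cross-validation.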

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Rigorous Data Partitioning & Model Evaluation

| Item/Software | Function | Key Consideration |

|---|---|---|

| scikit-learn (Python) | Primary library for train_test_split, StratifiedKFold, GroupShuffleSplit, cross_val_score. | Ensure version >0.24 for advanced splitting functions. |

| MLxtend (Python) | Provides PredefinedHoldoutSplit and other utilities for implementing rigorous nested CV workflows. | Useful for enforcing fixed validation sets within CV loops. |

| Pandas DataFrame | Data structure for holding features, targets, and crucial group IDs (patient, batch). | Essential for grouping and stratifying operations. |

| Random State Seed | An integer used to initialize the pseudo-random number generator. | Fixes the reproducibility of your splits. Document this seed. |

| Data Versioning Tool (e.g., DVC, Git LFS) | Tracks exact snapshots of your data and the code that splits it. | Critical for audit trails and reproducible research. |

| Stratification Variable | The array of class labels for classification tasks. | Must be carefully validated for integrity before splitting. |

| Grouping Variable | The array (e.g., Patient_ID) defining non-independent samples. | Must be identified during experimental design. |

Troubleshooting Guides & FAQs

Q1: My model performs excellently during k-fold cross-validation but fails dramatically on the final held-out test set. What went wrong? A: This is a classic symptom of data leakage or improper cross-validation setup. Ensure that all preprocessing steps (e.g., scaling, imputation) are fitted only on the training fold and then applied to the validation fold within each CV loop. Using the entire dataset for preprocessing before splitting biases the estimate. Wrapping preprocessing and model in a single pipeline that is refit in every fold, as done in the nested CV protocol, prevents this.

Q2: When using Leave-One-Out Cross-Validation (LOOCV) on my large dataset, the process is computationally prohibitive. What are my options? A: LOOCV fits N models (N = sample size), which is costly. Consider:

- k-Fold with moderate k: Use 5-fold or 10-fold CV. Only k models are fitted, and the bias-variance trade-off of the resulting estimate is often favorable.

- Stratified k-Fold: If you have a class-imbalanced dataset, use this to preserve class percentages in each fold.

- Efficient Algorithms: For some models (like ordinary least-squares linear regression), the LOOCV error can be computed exactly from a single fit via the hat matrix, without refitting.
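
For ordinary least squares, the hat-matrix shortcut divides each residual by one minus its leverage. The sketch below, on synthetic data (an assumption for the example), compares that shortcut with brute-force LOOCV.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import LeaveOneOut, cross_val_predict

rng = np.random.default_rng(0)
X = rng.normal(size=(40, 3))
y = X @ np.array([1.5, -2.0, 0.5]) + rng.normal(scale=0.5, size=40)

# Shortcut: one fit, then rescale each residual by its leverage h_ii.
Xd = np.column_stack([np.ones(len(X)), X])   # design matrix with intercept
H = Xd @ np.linalg.pinv(Xd)                  # hat matrix H = X (X'X)^-1 X'
residuals = y - H @ y
loo_residuals = residuals / (1.0 - np.diag(H))
mse_fast = np.mean(loo_residuals ** 2)

# Brute force: refit the model N times.
pred = cross_val_predict(LinearRegression(), X, y, cv=LeaveOneOut())
mse_slow = np.mean((y - pred) ** 2)

print(f"LOOCV MSE via hat matrix: {mse_fast:.6f}")
print(f"LOOCV MSE via N refits:   {mse_slow:.6f}")
```

The identity holds for linear smoothers such as OLS and ridge with a fixed penalty; it does not carry over to models with data-dependent selection steps such as LASSO.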

Q3: How do I choose between k-Fold and LOOCV for my small sample size (n<50) study? A: For very small samples, LOOCV provides a nearly unbiased estimate of the true error but can have high variance. Repeated k-Fold CV (e.g., 5-fold repeated 10 times) is often recommended as a good compromise, providing a more stable (lower variance) estimate while mitigating bias from a single random partition.

Q4: I am tuning hyperparameters and selecting features. How do I get a final, unbiased performance estimate for my paper? A: You must use Nested Cross-Validation. An outer loop estimates the generalization error, while an inner loop handles model selection/tuning. Using the same (non-nested) CV for both tuning and performance reporting gives an optimistically biased estimate. See the protocol below.

Q5: My nested CV results show much lower performance than my initial single CV run. Which one should I report? A: Report the nested CV result. The initial, higher estimate was almost certainly biased because the same validation folds were used both to select the model and to report its performance. The nested CV result, while perhaps disappointing, is the rigorous, unbiased estimate required for robust method development and publication.

Experimental Protocols & Data Presentation

Protocol 1: Standard k-Fold Cross-Validation

- Randomly shuffle the dataset and split it into k roughly equal-sized, independent folds.

- For each unique fold i:

  - Set fold i as the validation set.

  - Train the model on the remaining k-1 folds.

  - Evaluate the model on the validation fold i and record the performance metric (e.g., R², RMSE).

- Calculate the final performance estimate as the average of the k recorded metrics.
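
Written out as an explicit loop, the protocol might look like the sketch below. The synthetic data and the scaler-plus-Ridge pipeline are assumptions chosen for illustration; the pipeline is rebuilt in every fold so that scaling is fitted on the training folds only.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import KFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=100, n_features=20, noise=5.0, random_state=0)

kf = KFold(n_splits=5, shuffle=True, random_state=0)  # shuffle, then split into k folds
fold_rmse = []
for train_idx, val_idx in kf.split(X):
    # A fresh model per fold; the scaler sees only the k-1 training folds.
    model = make_pipeline(StandardScaler(), Ridge(alpha=1.0))
    model.fit(X[train_idx], y[train_idx])
    pred = model.predict(X[val_idx])
    fold_rmse.append(np.sqrt(mean_squared_error(y[val_idx], pred)))

print(f"5-fold CV RMSE: {np.mean(fold_rmse):.2f} +/- {np.std(fold_rmse):.2f}")
```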

Protocol 2: Nested Cross-Validation for Unbiased Estimation

- Define an outer loop (e.g., 5-fold or 10-fold) for performance estimation.

- Define an inner loop (e.g., 5-fold) for hyperparameter tuning/model selection.

- For each outer fold i:

  - Split data into outer training and outer test sets based on fold i.

  - For each candidate hyperparameter set:

    - Perform k-fold CV (the inner loop) only on the outer training set.

    - Calculate the average performance across inner folds.

  - Select the hyperparameter set with the best average inner-loop performance.

  - Retrain a model with these optimal parameters on the entire outer training set.

  - Evaluate this final model on the held-out outer test set. Record this metric.

- The final, unbiased performance estimate is the average of the metrics from all outer test sets. The model selection process is thus repeated from scratch for each outer fold, with no leakage.

Performance Comparison of CV Strategies (Simulated Data)

Table 1: Characteristics and typical performance estimates of different cross-validation strategies on a simulated dataset with known true error (0.50). Nested CV provides the most realistic estimate.

| Method | Key Advantage | Key Disadvantage | Estimated Error (Mean ± Std Dev)* | Bias Relative to True Error |

|---|---|---|---|---|

| Hold-Out (70/30) | Computationally cheap | High variance, depends on single split | 0.48 ± 0.05 | Moderate |

| 5-Fold CV | Good bias-variance trade-off | Moderate computational cost | 0.47 ± 0.03 | Low-Moderate |

| 10-Fold CV | Lower bias | Higher computational cost | 0.49 ± 0.02 | Low |

| LOOCV | Low bias, deterministic | Very high variance & cost for large N | 0.50 ± 0.04 | Very Low |

| Nested 5x5 CV | Unbiased for model selection | High computational cost | 0.51 ± 0.02 | Negligible |

*Standard deviation indicates the variance of the estimate across multiple CV runs with different random seeds.

Visualization of Workflows

Title: k-Fold Cross-Validation Workflow

Title: Nested Cross-Validation for Unbiased Estimation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential computational tools for robust cross-validation and combating overfitting in method development.

| Item | Function in Experiment | Example (Open Source) | Example (Commercial) |

|---|---|---|---|

| ML Framework | Provides core algorithms and CV splitting utilities. | Scikit-learn (Python), caret (R) | SAS JMP, MATLAB Statistics |

| Hyperparameter Optimization Library | Automates search for optimal model parameters within inner CV loop. | Optuna, Hyperopt | SAS Visual Data Mining, H2O.ai |

| Pipeline Tool | Ensures preprocessing steps are correctly contained within each CV fold to prevent data leakage. | Scikit-learn Pipeline | RapidMiner, Alteryx |

| Stratified Sampling Module | Creates folds that preserve the percentage of sample classes, crucial for imbalanced data. | StratifiedKFold (Scikit-learn) | Built into most commercial suites |

| High-Performance Computing (HPC) / Cloud Credits | Enables practical use of repeated and nested CV, which are computationally intensive. | SLURM cluster, Google Colab Pro | AWS SageMaker, Azure ML |

| Version Control System | Tracks exact code, parameters, and data splits to ensure full reproducibility of the CV protocol. | Git, DVC | GitHub, GitLab |

Technical Support Center: Troubleshooting & FAQs

Context: This guide supports thesis research focused on amending overfitting in method development and evaluation, specifically when applying regularization to high-dimensional biological data.

Troubleshooting Guides

Issue 1: Model Unstable or Fails to Converge with Genomic Data

- Symptoms: Coefficients vary wildly between runs; warning messages about convergence.

- Probable Cause: High correlation (multicollinearity) among features (e.g., gene expressions from the same pathway); poorly scaled data.

- Solution:

- Standardize Input Features: Always center (mean=0) and scale (variance=1) each predictor independently of the test set. Use training set parameters to transform the test set.

- Increase Regularization Strength: Systematically increase the `alpha` (λ) parameter.

- Try Elastic Net: Set `l1_ratio` between 0.2 and 0.8 to balance Ridge and LASSO stability.

- Check for Constant Features: Remove features with zero variance in the training set.
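
These steps can be chained in a single scikit-learn pipeline. The sketch below is illustrative: the synthetic data (a block of five correlated "pathway" features, many unrelated large-scale features, and one constant column) and the values `alpha=0.1` and `l1_ratio=0.5` are assumptions, not tuned recommendations.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold
from sklearn.linear_model import ElasticNet
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n, p = 80, 300
base = rng.normal(size=(n, 1))
X = np.hstack([
    base + 0.05 * rng.normal(size=(n, 5)),   # 5 highly correlated features
    100.0 * rng.normal(size=(n, p - 6)),     # unrelated features on a large scale
    np.ones((n, 1)),                         # one constant (zero-variance) feature
])
y = 3.0 * base.ravel() + rng.normal(scale=0.5, size=n)

model = make_pipeline(
    VarianceThreshold(threshold=0.0),   # drop constant features
    StandardScaler(),                   # mean 0, variance 1 (fit on training data)
    ElasticNet(alpha=0.1, l1_ratio=0.5, max_iter=10000),
)
model.fit(X, y)

coefs = model[-1].coef_
print("Features kept after variance filter:", coefs.size)
print("Non-zero coefficients in the correlated block:", np.count_nonzero(coefs[:5]))
print("Non-zero coefficients overall:", np.count_nonzero(coefs))
```

Because the filter and scaler are pipeline steps, passing `model` to a cross-validation routine refits them on each training fold automatically.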

Issue 2: LASSO Selects Too Many or Too Few Features

- Symptoms: Model is still too complex or overly simplistic, hindering biological interpretation.

- Probable Cause: Suboptimal regularization penalty (`alpha`).

- Solution:

- Implement Nested Cross-Validation: Use an outer CV for error estimation and an inner CV for `alpha` selection to prevent data leakage and overfitting.

- Use Information Criterion Paths: For faster search, plot AICc or BIC across a range of `alpha` values and choose the minimum.

- Leverage Stability Selection: Run LASSO multiple times on data subsamples and select features that appear consistently.
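
A simplified version of stability selection can be sketched as below. This is not a full implementation of any published procedure: the subsample fraction (50%), number of runs (100), fixed `alpha=0.2`, and the 80% selection-frequency threshold are all assumptions made for the example.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n, p = 100, 200
X = rng.normal(size=(n, p))
true_idx = np.array([0, 1, 2])   # the only features that carry signal
y = X[:, true_idx] @ np.array([2.0, -2.0, 1.5]) + rng.normal(scale=0.5, size=n)

n_runs, frac = 100, 0.5
counts = np.zeros(p)
for _ in range(n_runs):
    idx = rng.choice(n, size=int(frac * n), replace=False)   # random half of the samples
    Xs = StandardScaler().fit_transform(X[idx])              # scale within the subsample
    coef = Lasso(alpha=0.2, max_iter=10000).fit(Xs, y[idx]).coef_
    counts += coef != 0                                      # tally selected features

freq = counts / n_runs
stable = np.flatnonzero(freq >= 0.8)
print("Features selected in at least 80% of runs:", stable.tolist())
```

Features that enter the model only occasionally receive a low frequency and are filtered out, which tends to give a shorter and more reproducible list than a single LASSO fit.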

Issue 3: Poor Predictive Performance on Independent Test Set

- Symptoms: High training R², but very low test R² or AUC.

- Probable Cause: Data leakage during preprocessing (e.g., scaling on entire dataset before train/test split) or target variable leakage.

- Solution:

- Audit Preprocessing Pipeline: Ensure all steps (imputation, scaling, feature selection) are fit only on the training fold within each CV loop.

- Validate with External Cohort: Test final locked model on a completely independent, biologically validated dataset.

- Review Feature Origins: Ensure no features are direct proxies for the outcome (e.g., a protein measurement that is part of the clinical diagnostic score).

Frequently Asked Questions (FAQs)

Q1: For genomic data with ~20,000 genes and ~100 samples, should I use Ridge, LASSO, or Elastic Net?

A: Start with Elastic Net. It combines the strengths of both: Ridge regression handles multicollinearity well, while LASSO performs feature selection. Elastic Net's hybrid penalty is particularly effective for p >> n problems, where it can select more than n features if they are correlated, unlike LASSO. This is common in genomics.

Q2: How do I choose the optimal alpha (λ) and, for Elastic Net, the l1_ratio?

A: Use a search grid with cross-validation.

- Define a log-spaced range for `alpha` (e.g., from `1e-5` to `1e2`).

- For Elastic Net, define a grid for `l1_ratio` (e.g., `[0.1, 0.5, 0.7, 0.9, 0.95, 1]`).

- Perform `GridSearchCV` or `RandomizedSearchCV` using the appropriate metric (e.g., mean squared error for regression, AUC-ROC for classification).

- Crucially: This search must be embedded within a nested cross-validation scheme to obtain unbiased performance estimates for your thesis.
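
The search itself might be set up as in the sketch below. The data are synthetic, and the `alpha` range is deliberately narrower than the one suggested above (an assumption made only to keep the example fast and to avoid convergence warnings at very small penalties).

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet
from sklearn.model_selection import GridSearchCV, KFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_regression(
    n_samples=80, n_features=200, n_informative=10, noise=5.0, random_state=0
)

pipe = Pipeline([
    ("scale", StandardScaler()),            # refit on each training fold
    ("enet", ElasticNet(max_iter=10000)),
])
param_grid = {
    "enet__alpha": np.logspace(-1, 2, 7),                    # log-spaced penalties
    "enet__l1_ratio": [0.1, 0.5, 0.7, 0.9, 0.95, 1.0],       # 1.0 is pure LASSO
}

search = GridSearchCV(
    pipe,
    param_grid,
    cv=KFold(n_splits=5, shuffle=True, random_state=0),
    scoring="neg_mean_squared_error",
)
search.fit(X, y)

print("Best parameters:", search.best_params_)
print(f"Best inner-CV MSE: {-search.best_score_:.2f}")
```

The `best_score_` printed here is the optimistically biased inner-loop score; passing `search` to an outer cross-validation loop gives the nested estimate called for in the last step.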

Q3: My features have different scales (e.g., gene expression counts, pH, temperature). Is preprocessing mandatory? A: Yes, it is critical. Regularization penalties are sensitive to feature scale. A feature with larger magnitude will disproportionately influence the penalty term, unfairly shrinking smaller-scale features. Always standardize features to mean=0 and variance=1 based on the training set.

Q4: How do I interpret the coefficients from a regularized model for biological insight? A: Regularized coefficients are shrunken and should be interpreted with caution.

- Non-zero Coefficients (LASSO/Elastic Net): Indicate features selected as important by the model under the given penalty. Pathway enrichment analysis on these selected genes/proteins is a common next step.

- Coefficient Magnitude: While larger absolute values suggest greater influence, they are not directly analogous to effect sizes from ordinary least squares. Focus on the sign (positive/negative association) and the stability of selection across cross-validation folds or bootstrap samples.

- Always validate top selected features with orthogonal experimental methods (e.g., siRNA knockdown, ELISA).

Table 1: Core Properties and Application Guidance

| Property | Ridge Regression (L2) | LASSO Regression (L1) | Elastic Net (L1 + L2) |

|---|---|---|---|

| Penalty Term | \( \lambda \sum_{j=1}^{p} \beta_j^2 \) | \( \lambda \sum_{j=1}^{p} \lvert\beta_j\rvert \) | \( \lambda \left[ \frac{1-\alpha}{2}\sum_{j=1}^{p}\beta_j^2 + \alpha \sum_{j=1}^{p}\lvert\beta_j\rvert \right] \) |

| Feature Selection | No (shrinks coefficients) | Yes (can zero out coefficients) | Yes |

| Handles Multicollinearity | Excellent | Poor (selects one) | Good |

| Best For | Dense solutions, many small effects | Sparse solutions, interpretability | High-dim data (p>>n), correlated features |

| Key Hyperparameter | Lambda (`alpha` in scikit-learn) | Lambda (`alpha` in scikit-learn) | Lambda (`alpha` in scikit-learn) & L1 Ratio (`l1_ratio`; the mixing weight α in the penalty term) |

Table 2: Typical Performance on High-Dimensional Biological Data (Thesis Context)

| Metric | Ridge | LASSO | Elastic Net | Notes for Evaluation Research |

|---|---|---|---|---|

| Avg. Test MSE (Simulated p=1000, n=100) | 0.85 | 0.72 | 0.68 | Elastic Net often shows lower prediction error. |

| Avg. Features Selected | 1000 (all) | 15-30 | 40-80 | LASSO overly aggressive; EN provides more stable biomarker list. |

| Coefficient Bias | Lower | Higher | Medium | Consider bias-variance trade-off in your thesis analysis. |

| Stability (by Bootstrap) | Very High | Low | High | Essential for reproducible method development. |

| Computational Speed | Fast | Fast (with LARS) | Moderate | For p in the 10,000s, use coordinate descent algorithms. |

Standardized Experimental Protocol for Comparative Analysis

Title: Protocol for Comparative Evaluation of Regularization Techniques in Omics Prediction Tasks.

Objective: To empirically evaluate and compare Ridge, LASSO, and Elastic Net regression in preventing overfitting within a high-dimensional omics dataset.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Data Partitioning: Split dataset into independent Discovery (80%) and Hold-out Validation (20%) cohorts. Do not revisit the hold-out set until final model selection is complete.

- Preprocessing (on Discovery Set only):

- Perform missing value imputation using k-NN imputation.

- Standardization: For each feature, subtract the training mean and divide by the training standard deviation. Store these parameters.

- Apply the same transformation to the hold-out set using the stored parameters.

- Hyperparameter Tuning via Nested Cross-Validation:

- On the Discovery set, implement 5x5 Nested CV.

- Outer Loop (5-fold): Defines training/validation splits for performance estimation.

- Inner Loop (5-fold): For each outer training fold, perform a grid search to find optimal hyperparameters (`alpha` for Ridge/LASSO; `alpha` + `l1_ratio` for Elastic Net). Use mean squared error as the scoring metric.

- Model Training & Assessment:

- Train a final model on the entire Discovery set using the best hyperparameters found by the inner CV search.

- Evaluate this final model on the locked Hold-out Validation cohort. Report R², MSE, and, for classification, AUC-ROC with 95% CI.

- Stability & Interpretability Analysis:

- Perform 1000 bootstrap resamples on the Discovery set, applying the selected model.

- Record the frequency of feature selection (for LASSO/EN) and coefficient sign stability.

- Perform pathway enrichment analysis (e.g., via g:Profiler, Enrichr) on the consensus feature set.

Visualizations

Diagram 1: Nested CV for Unbiased Evaluation

Title: Nested Cross-Validation Workflow

Diagram 2: Regularization Paths Comparison

Title: Coefficient Paths: LASSO vs. Ridge vs. Elastic Net

Diagram 3: Protocol for Preventing Overfitting

Title: Thesis Anti-Overfitting Protocol with Regularization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Packages for Implementation

| Item/Category | Specific Solution/Software/Package | Function in the Experiment |

|---|---|---|

| Programming Language | Python (≥3.8) with scikit-learn, NumPy, pandas | Core environment for data manipulation, modeling, and analysis. |

| Regularization Algorithms | sklearn.linear_model (Ridge, Lasso, ElasticNet, LassoCV, ElasticNetCV) | Provides optimized, production-grade implementations of all three techniques. |

| Hyperparameter Tuning | sklearn.model_selection (GridSearchCV, RandomizedSearchCV) | Essential for automated, rigorous search of alpha and l1_ratio. |

| High-Performance Solver | sklearn.linear_model (Lasso and ElasticNet, which are fitted by coordinate descent) | Efficiently handles datasets where p (features) >> n (samples). |

| Preprocessing | sklearn.preprocessing (StandardScaler) | Correctly standardizes features to prevent scale bias in penalties. |

| Data Handling | pandas DataFrames | Manages sample IDs, feature names, and clinical metadata. |

| Visualization | matplotlib, seaborn | Creates coefficient paths, performance plots, and validation figures. |

| Pathway Analysis | g:Profiler, Enrichr (web) or gseapy (Python) | Interprets selected gene/protein lists in a biological context. |

| Statistical Validation | scipy.stats (for bootstrapping CIs) | Quantifies uncertainty in performance metrics and feature stability. |

Principled Feature Selection and Dimensionality Reduction (PCA, PLS) Before Model Fitting

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My PCA model yields unstable loadings between runs, causing irreproducible feature selection. What are the causes and solutions? A: This is often caused by (a) features with vastly different scales or (b) near-equal eigenvalues, which leave the corresponding axes free to rotate arbitrarily within their shared subspace.

- Solution 1: Always standardize (center and scale to unit variance) each feature before applying PCA. This ensures all features contribute equally to the variance.

- Solution 2: If instability persists, check eigenvalue gaps. Use a stricter tolerance for eigenvalue equality or consider using bootstrapping to assess loading stability. Select features with consistently high absolute loadings across bootstrap samples.

Q2: How do I choose the optimal number of components for PCA or PLS to avoid overfitting in my predictive model? A: The goal is to retain signal and discard noise. Do not rely solely on variance explained.

- For PCA (unsupervised): Use cross-validation on the downstream model. Perform PCA with varying `n_components` within each CV fold, train the model on the transformed training set, and evaluate on the transformed validation set. Choose the `n_components` value that minimizes validation error.

- For PLS (supervised): Cross-validate the number of latent variables, whichever fitting algorithm (e.g., `SIMPLS` or `NIPALS`) is used. Employ criteria like the first local minimum in the Prediction Residual Sum of Squares (PRESS) plot or a 1-standard-error rule.

Q3: After PLS, my model still overfits despite using latent variables. What went wrong? A: PLS is not immune to overfitting, especially with small sample sizes (n) and very large feature counts (p).

- Cause: PLS maximizes covariance, which can still fit noise. With small `n`, the estimated latent directions may be spurious.

- Solution: Implement double cross-validation: an outer loop for generalizability estimation and an inner loop for rigorous optimization of the number of PLS components. Also, consider sparse PLS methods that perform feature selection within the PLS framework to reduce noise.

Q4: I have missing data in my dataset. Can I apply PCA/PLS, and what are the principled methods to handle it? A: Standard PCA/PLS requires a complete matrix. Imputation is necessary but must be done cautiously to prevent bias.

- Recommended Protocol:

- Perform initial filtering: Remove features with excessive (>20%) missingness if not biologically critical.

- Use model-based imputation: Apply Multivariate Imputation by Chained Equations (MICE) or matrix completion algorithms, iterating over the feature columns. For omics data, consider k-nearest neighbors imputation using similar samples.

- Post-imputation validation: Conduct PCA on the imputed data and color samples by their original missingness pattern to check for introduced artifacts.

Q5: How do I interpret selected features from PCA/PLS in the context of biological mechanism or drug response? A: Projection methods provide transformed components, not direct feature selection.

- For PCA: Examine the `loadings` (coefficients) of the top PCs. Features with large absolute loadings (positive or negative) drive that component's variation. Biologically interpret the collective meaning of these co-varying features.

- For PLS: Examine the `weights` or `VIP` (Variable Importance in Projection) scores. Features with high VIP (>1.0) are most relevant for predicting the response. Validate these against known pathways or through independent literature mining.

Table 1: Comparison of Dimensionality Reduction Methods in Overfitting Context

| Method | Supervised? | Primary Objective | Feature Selection Direct? | Overfitting Risk (Small n, Large p) | Key Hyperparameter |

|---|---|---|---|---|---|

| PCA | No | Maximize variance in X | No (but via loading thresholds) | Moderate (no Y guidance) | Number of Components |

| PLS | Yes | Maximize covariance(X, Y) | No (but via VIP scores) | High if components not validated | Number of Latent Variables |

| Sparse PCA | No | Maximize variance with L1 penalty | Yes (loadings forced to zero) | Lower than PCA | Sparsity Parameter (Alpha) |

| Sparse PLS | Yes | Maximize covariance with L1 penalty | Yes (weights forced to zero) | Lower than standard PLS | Sparsity Parameter (Eta) |

Table 2: Typical Results from a PCA Cross-Validation Experiment to Determine Optimal Components

| Number of PCs Retained | Cumulative Variance Explained (%) | Mean CV RMSE of Downstream Model | Std Dev of CV RMSE |

|---|---|---|---|

| 2 | 45.2 | 1.85 | 0.32 |

| 5 | 72.8 | 1.21 | 0.18 |

| 8 | 88.5 | 0.98 | 0.21 |

| 10 | 92.1 | 1.05 | 0.25 |

| 15 | 97.3 | 1.34 | 0.41 |
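The selection logic implied by Table 2 can be made explicit. The sketch below uses the table's values directly and picks the component count with the lowest mean CV RMSE. It also applies a parsimony rule that is not part of the original protocol: choose the smallest model whose error lies within one standard deviation of the best (the fold standard deviation is used here as a conservative stand-in for the standard error, which is an assumption).

```python
# Values copied from Table 2.
n_pcs = [2, 5, 8, 10, 15]
mean_rmse = [1.85, 1.21, 0.98, 1.05, 1.34]
sd_rmse = [0.32, 0.18, 0.21, 0.25, 0.41]

# Component count with the lowest mean cross-validated RMSE.
best_idx = min(range(len(n_pcs)), key=lambda i: mean_rmse[i])
best_n = n_pcs[best_idx]

# Parsimony variant (assumption, not in the source protocol):
# smallest model whose mean RMSE is within one SD of the best.
threshold = mean_rmse[best_idx] + sd_rmse[best_idx]
parsimonious_n = min(n for n, m in zip(n_pcs, mean_rmse) if m <= threshold)

print(best_n, parsimonious_n)
```

Both rules select 8 components here. The table also shows why cumulative variance alone is a poor criterion: variance explained keeps rising to 97.3% at 15 PCs while the downstream CV error gets worse.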

Experimental Protocols

Protocol 1: Cross-Validated PCA for Regression Model (To Prevent Overfitting)

- Preprocessing: Split the full dataset `D` into independent Training/Test sets (e.g., 80/20). Work only on the Training set `T`.

- Standardization: On `T`, center and scale each feature to unit variance. Store the mean and standard deviation.

- Nested CV Loop: For each fold in `k`-fold CV:

  a. Split `T` into training (`Tr`) and validation (`Val`) subsets.

  b. Standardize `Tr` using its own parameters, then transform `Val` with the same parameters.

  c. For `c` in [1 to `C_max`] (e.g., 1 to 20):

    i. Fit PCA with `c` components on `Tr`.

    ii. Transform `Tr` and `Val` to `Tr_pc`, `Val_pc`.

    iii. Train your regression model (e.g., Ridge Regression) on `Tr_pc`.

    iv. Predict on `Val_pc` and record the error.

- Determine Optimal `c`: Average the validation error across folds for each `c`. Choose the `c_opt` that gives the minimum average error.

- Final Model: Fit PCA with `c_opt` on the entire `T` and transform `T`. Train the final model. Apply the stored standardization and PCA transformation to the held-out Test set for final unbiased evaluation.
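One way to realize Protocol 1 is a scikit-learn `Pipeline` wrapped in `GridSearchCV`, which refits the scaler and PCA inside every fold so the validation subset never influences them. The sketch below is illustrative only: the data are synthetic (three latent factors driving 200 features), and `alpha=1.0`, 5 folds, and the 1 to 20 grid are assumptions.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic small-n, large-p data driven by 3 latent factors (assumption).
rng = np.random.default_rng(42)
n, p = 120, 200
Z = rng.normal(size=(n, 3))
X = Z @ rng.normal(size=(3, p)) + rng.normal(scale=0.5, size=(n, p))
y = Z @ np.array([2.0, -1.0, 0.5]) + rng.normal(scale=0.3, size=n)

# Step 1: hold out a test set that is untouched until the final evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Steps 2-3: scaling and PCA live inside the pipeline, so each CV fold
# fits them on its own training subset only.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("pca", PCA()),
    ("ridge", Ridge(alpha=1.0)),
])
search = GridSearchCV(
    pipe,
    param_grid={"pca__n_components": list(range(1, 21))},
    cv=5,
    scoring="neg_root_mean_squared_error",
)
search.fit(X_train, y_train)  # refits the best pipeline on all of X_train

# Steps 4-5: selected component count and one-time test evaluation.
c_opt = search.best_params_["pca__n_components"]
test_rmse = float(np.sqrt(np.mean((search.predict(X_test) - y_test) ** 2)))
print(f"c_opt = {c_opt}, test RMSE = {test_rmse:.3f}")
```

Keeping every data-dependent step inside the pipeline is the design choice that matters: fitting the scaler or PCA on the full training set before cross-validation would leak validation information into component selection.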

Protocol 2: VIP-based Feature Selection after PLS (For Interpretable Biomarker Discovery)

- Data Preparation: Use standardized matrix `X` and response vector `Y`.

- Initial PLS Model: Fit a PLS regression model with a generous number of latent variables (LVs), e.g., 10.

- Component Selection: Plot cross-validated RMSE vs. number of LVs. Select the number `l_opt` at the first clear minimum.

- Refit Model: Refit PLS with exactly `l_opt` LVs on the full training set.

- Calculate VIP Scores: For each feature $j$, calculate

  $$\mathrm{VIP}_j = \sqrt{\frac{p \sum_{l=1}^{l_{\mathrm{opt}}} \mathrm{SS}_l \, w_{lj}^2}{\sum_{l=1}^{l_{\mathrm{opt}}} \mathrm{SS}_l}}$$

  where the sums run over the `l_opt` LVs, $p$ is the total number of features, $\mathrm{SS}_l$ is the sum of squares explained by LV $l$, and $w_{lj}$ is the PLS weight of feature $j$ on LV $l$ (with each weight vector normalized to unit length).

- Threshold Features: Retain features with VIP > 1.0 as important for the model. Validate this subset in an independent test set or via pathway enrichment analysis.

Diagrams

Title: Workflow for Principled Dimensionality Reduction in Model Building

Title: Nested CV for PCA Component Selection

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Feature Selection & Dimensionality Reduction

| Item (Software/Package) | Category | Primary Function | Key Consideration for Overfitting |

|---|---|---|---|

| scikit-learn (Python) | Core Library | Provides PCA, PLSRegression, cross_val_score, GridSearchCV. | Ensures correct CV separation; pipelines prevent data leakage. |

| mixOmics (R/Bioc) | Omics-Focused | Implements sparse PLS, sPCA, VIP calculation, DIABLO for multi-omics. | Designed for high-dimensional biological data with built-in CV. |

| SIMCA-P+ (Commercial) | Standalone Software | Industry-standard for multivariate analysis (PCA, PLS, OPLS). | Uses sophisticated metrics (R2X, R2Y, Q2) to guide component choice. |

| MissForest (R) / IterativeImputer (Python) | Data Imputation | Advanced model-based imputation for missing data. | Reduces bias in pre-PCA/PLS data preparation compared to mean impute. |

| MATLAB Statistics & ML Toolbox | Computational Environment | Comprehensive suite for matrix computation and chemometrics. | Offers detailed diagnostic plots (e.g., scores, loadings, residuals). |

| VIPER (R Package) | Visualization | Creates Variable Importance in PLS Projection (VIP) plots. | Aids in objective, visual thresholding of important features. |

Incorporating Domain Knowledge and Biological Constraints to Guide Model Building

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: How can biological plausibility constraints be practically enforced in a neural network model to prevent overfitting to noisy in vitro data? Answer: Implement custom penalty terms or architectural constraints. For example, use a pathway-constrained layer where neuron connections mirror a known signaling pathway (e.g., EGFR-RAS-MAPK). Connections not present in the canonical pathway are forced to zero, drastically reducing spurious parameters. A recent study (2023) reported that this lowered test-set MSE by 40% compared to an unconstrained dense neural network (DNN) on high-content screening data.

FAQ 2: My model trained on cell line data fails to generalize to patient-derived organoids. What domain knowledge can guide adaptation? Answer: This is a classic domain shift issue. Incorporate knowledge of the tumor microenvironment (TME). Create a knowledge graph of TME components (e.g., fibroblasts, immune cells, ECM) and their known interactions with tumor cells. Use this graph to structure a multi-modal input layer or to generate synthetic training samples that simulate TME influences, moving beyond cell-line monoculture data.

FAQ 3: When using genomics data for drug response prediction, how do I prevent the model from latching onto technical batch effects instead of real biological signals? Answer: Record known batch covariates (sequencing platform, lab) so they can be modeled explicitly, then employ a Domain Adversarial Neural Network (DANN), in which a primary network learns predictive features and an adversarial discriminator tries to predict the batch from those features. The discriminator's gradient is reversed before it reaches the primary network during training, forcing the primary network to learn batch-invariant, biologically relevant representations.

FAQ 4: How can I incorporate known physical or thermodynamic constraints (e.g., binding energy limits) into a predictive model for protein-ligand affinity? Answer: Use the constraints as soft bounds via loss function penalties or as hard bounds via activation functions. For instance, scale the final layer's output with a sigmoid function bounded by the known theoretical maximum binding affinity. A 2024 benchmark showed that such physically-constrained models improved prediction robustness on novel scaffold compounds by 25% in terms of RMSE.
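The hard-bound and soft-bound options for physical constraints can be sketched in a few lines of NumPy. Both function names and the bounds used here (a pKd-like range of 2 to 12) are illustrative assumptions, not values from any benchmark.

```python
import numpy as np


def bounded_output(z, lower, upper):
    """Hard bound: squash raw network outputs z into [lower, upper] with a scaled sigmoid."""
    return lower + (upper - lower) / (1.0 + np.exp(-z))


def bound_penalty(pred, lower, upper):
    """Soft bound: quadratic penalty on predictions outside [lower, upper], to add to the loss."""
    over = np.maximum(pred - upper, 0.0)
    under = np.maximum(lower - pred, 0.0)
    return float(np.mean(over ** 2 + under ** 2))


# Illustrative bounds (assumption): affinities restricted to a pKd-like range.
LOWER, UPPER = 2.0, 12.0

raw = np.array([-50.0, 0.0, 50.0])            # unconstrained final-layer outputs
hard = bounded_output(raw, LOWER, UPPER)      # always inside the allowed range

unbounded_pred = np.array([1.0, 7.0, 13.5])   # predictions from an unconstrained model
penalty = bound_penalty(unbounded_pred, LOWER, UPPER)
print(hard, penalty)
```

The hard bound guarantees feasibility but flattens gradients near the limits. The soft penalty keeps gradients informative yet can still produce out-of-range predictions, so the choice depends on how strict the physical limit is.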

Table 1: Comparison of Constraint Methods for Preventing Overfitting

| Constraint Type | Enforcement Method | Typical Use Case | Reported Reduction in Test Error* |

|---|---|---|---|

| Pathway Topology | Sparse, Masked Layers | Signaling Response Prediction | 35-45% |

| Physical Bounds | Output Layer Activation | Binding Affinity Prediction | 20-30% |

| Invariance (e.g., Batch) | Adversarial Training | Multi-study Genomics | 40-60% |

| Causal Structure | Graph-Guided Architecture | Drug Synergy Prediction | 30-50% |

*Compared to an equivalent unconstrained model on held-out experimental validation sets. (Synthetic summary of recent literature, 2023-2024)

Experimental Protocols

Protocol: Evaluating a Pathway-Constrained Neural Network

Objective: To assess if enforcing a known signaling pathway topology reduces overfitting in a dose-response prediction task.

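- Data Preparation: Use a perturbation-response dataset measuring molecular readouts under drug perturbations (e.g., the LINCS L1000 transcriptomic profiles; substitute a phosphoproteomic panel if protein phosphorylation readouts are required).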

- Model Definition:

- Control Model: A standard fully-connected DNN.

- Constrained Model: A DNN where the first hidden layer's weight matrix is element-wise multiplied by a binary adjacency matrix A representing a canonical pathway (e.g., from KEGG or Reactome).

- Training: Split data into training (60%), validation (20%), test (20%). Train both models to minimize MSE on training data. Use early stopping on validation loss.

- Evaluation: Compare the models on the held-out test set using MSE and R². Perform a permutation test on input features to measure the specificity of the constrained model to pathway-relevant inputs.
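The constrained model's masked first layer can be illustrated without a deep learning framework. The NumPy sketch below uses a small hypothetical adjacency matrix `A` (6 inputs, 4 pathway nodes, 7 allowed connections) and random data. It shows that masking both the forward pass and the gradient keeps disallowed connections at exactly zero effect throughout training.

```python
import numpy as np

rng = np.random.default_rng(0)
n_inputs, n_nodes = 6, 4

# Hypothetical binary adjacency matrix: A[i, j] = 1 if input i feeds pathway node j.
A = np.zeros((n_inputs, n_nodes))
A[[0, 1], 0] = 1
A[[1, 2], 1] = 1
A[[3], 2] = 1
A[[4, 5], 3] = 1

W = rng.normal(scale=0.1, size=(n_inputs, n_nodes))
W_init = W.copy()
x = rng.normal(size=(16, n_inputs))        # random inputs (assumption)
target = rng.normal(size=(16, n_nodes))    # random targets (assumption)

# Plain gradient descent on MSE through a masked ReLU layer.
for _ in range(50):
    pre = x @ (W * A)                      # element-wise mask in the forward pass
    h = np.maximum(pre, 0.0)
    grad_h = 2.0 * (h - target) / h.size
    grad_pre = grad_h * (pre > 0)
    grad_W = (x.T @ grad_pre) * A          # chain rule: the mask also zeroes these gradients
    W -= 0.1 * grad_W

effective = W * A                          # weights the layer actually uses
print("trainable connections:", int(A.sum()), "of", A.size)
```

Here only 7 of 24 possible weights are ever used or updated, which is the parameter reduction the protocol relies on. In a framework such as PyTorch the same idea is usually implemented by multiplying the weight by a fixed mask buffer inside the layer's forward method.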

Protocol: Adversarial Batch Effect Removal (DANN)

Objective: To learn gene expression features invariant to technical batch for robust biomarker discovery.

- Data: Collect gene expression data from multiple public studies (e.g., TCGA, GEO) for a disease state, ensuring batch labels are known.

- Network Architecture:

- Feature Extractor (G): Shared layers mapping input gene expression to a latent feature space.

- Label Predictor (L): Takes features from G to predict disease subtype/outcome.

- Batch Discriminator (D): Takes features from G to predict the batch label.

- Training: Use a combined loss: `Loss = Loss_label(G, L) - λ * Loss_batch(G, D)`. G and L are updated to minimize this objective, while D is updated separately to minimize `Loss_batch`. The negative sign on the batch loss implements gradient reversal during backpropagation, encouraging G to learn features that confuse D.

- Validation: Evaluate the label predictor L on a completely held-out study (a new batch) to measure cross-study generalizability.
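To make the sign convention concrete, the sketch below performs one DANN-style update with hand-written gradients for a deliberately tiny model: a linear feature extractor `Wg`, a linear label predictor `wl`, and a logistic batch discriminator `wd`. All shapes, data, and the function name `dann_step` are assumptions for illustration. Real implementations typically use a gradient reversal layer in an autodiff framework.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def losses(x, y, b, Wg, wl, wd):
    """Return (label MSE, batch cross-entropy) for the toy linear DANN."""
    f = x @ Wg
    label_loss = np.mean((f @ wl - y) ** 2)
    p = sigmoid(f @ wd)
    batch_loss = -np.mean(b * np.log(p) + (1 - b) * np.log(1 - p))
    return label_loss, batch_loss


def dann_step(x, y, b, Wg, wl, wd, lam=1.0, lr=0.05):
    """One update: L and D descend their own losses; G receives the REVERSED batch gradient."""
    n = len(x)
    f = x @ Wg
    d_yhat = 2.0 * (f @ wl - y) / n            # d(label loss)/d(prediction)
    d_logit = (sigmoid(f @ wd) - b) / n        # d(batch loss)/d(logit)
    grad_Wg_label = x.T @ np.outer(d_yhat, wl)
    grad_Wg_batch = x.T @ np.outer(d_logit, wd)
    Wg_new = Wg - lr * (grad_Wg_label - lam * grad_Wg_batch)  # gradient reversal
    wl_new = wl - lr * (f.T @ d_yhat)
    wd_new = wd - lr * (f.T @ d_logit)
    return Wg_new, wl_new, wd_new


# Synthetic data (assumption): feature 0 carries the label, feature 1 the batch.
rng = np.random.default_rng(3)
x = rng.normal(size=(64, 5))
y = x[:, 0] + rng.normal(scale=0.1, size=64)
b = (x[:, 1] > 0).astype(float)
Wg = rng.normal(scale=0.5, size=(5, 3))
wl = rng.normal(scale=0.5, size=3)
wd = rng.normal(scale=0.5, size=3)

Wg1, wl1, wd1 = dann_step(x, y, b, Wg, wl, wd, lam=1.0, lr=1e-3)
print(losses(x, y, b, Wg, wl, wd), losses(x, y, b, Wg1, wl1, wd1))
```

Setting `lam=0` recovers ordinary supervised training of G, so the weight λ controls how strongly batch information is pushed out of the features. It is commonly ramped up from zero during training to keep the adversarial game stable.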

Visualizations

Diagram 1: Neural Network with Pathway Topology Constraints

Diagram 2: Domain Adversarial Neural Network for Batch Removal

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Constrained Model Development & Validation

| Item / Reagent | Provider Examples | Function in Context |

|---|---|---|

| LINCS L1000 Data | NIH LINCS Program | Large-scale perturbational transcriptomics dataset for training and testing models on cell response. |

| KEGG/Reactome Pathway Maps | Kanehisa Labs / EMBL-EBI | Source of canonical pathway adjacency matrices used to constrain model architectures. |

| Cell Signaling Multiplex Assays | Luminex, MSD, IsoPlexis | Generate high-dimensional protein activity data for validating model predictions on constrained pathways. |

| Patient-Derived Organoid (PDO) Models | Commercial Biobanks (e.g., CrownBio) | Gold-standard ex vivo system for testing model generalizability beyond cell lines. |

| Domain Adversarial Training Code | GitHub (e.g., DANN-PyTorch) | Open-source implementations of adversarial de-confounding algorithms. |

| Physics-Informed NN Libraries | PyTorch, TensorFlow with custom layers | Frameworks for implementing hard/soft physical constraints as part of the model loss. |

Setting a Priori Hypotheses and Analysis Plans to Minimize Researcher Degrees of Freedom

Troubleshooting Guides and FAQs

FAQ 1: What constitutes a valid a priori hypothesis versus a post hoc explanation? A valid a priori hypothesis must be specified before data collection or analysis begins. It includes a clear statement of the relationship between variables, the direction of the effect, and the specific statistical test to be used. Post hoc explanations are generated after seeing the data and are considered exploratory; they require independent validation and should not be reported with the same statistical confidence.

FAQ 2: How specific should my analysis plan be to prevent unintentional p-hacking? Your plan must unambiguously define: primary and secondary endpoints, exact model specifications (including covariates), data handling rules for outliers/missing data, the precise statistical test, and the alpha level for significance. Ambiguity in any of these areas creates researcher degrees of freedom.
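One lightweight way to keep an analysis plan unambiguous is to write it as an immutable, machine-readable record and fingerprint it before data collection. The sketch below is only an illustration: the `AnalysisPlan` class, its fields, and every value in the example are hypothetical, and a hash does not replace formal preregistration on a public platform.

```python
import hashlib
import json
from dataclasses import FrozenInstanceError, asdict, dataclass, replace


@dataclass(frozen=True)
class AnalysisPlan:
    """Immutable record of the choices that must be fixed before analysis."""
    primary_endpoint: str
    secondary_endpoints: tuple
    model: str
    covariates: tuple
    outlier_rule: str
    missing_data_rule: str
    statistical_test: str
    alpha: float

    def fingerprint(self) -> str:
        # Deterministic SHA-256 digest of the plan; any later change alters it.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Hypothetical example values.
plan = AnalysisPlan(
    primary_endpoint="tumor volume at day 21",
    secondary_endpoints=("body weight change",),
    model="linear mixed model with random intercept per animal",
    covariates=("baseline tumor volume", "sex"),
    outlier_rule="exclude only values flagged by the pre-specified assay QC",
    missing_data_rule="multiple imputation, 20 imputations",
    statistical_test="two-sided Wald test on treatment coefficient",
    alpha=0.05,
)
registered_hash = plan.fingerprint()

# Any post hoc change (here, a different alpha) yields a different fingerprint.
altered_hash = replace(plan, alpha=0.10).fingerprint()
print(registered_hash[:12], altered_hash[:12])
```

Recording the fingerprint alongside a timestamp makes undocumented changes to the plan detectable, while `frozen=True` prevents accidental in-place edits during analysis.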

FAQ 3: My experiment yielded an unexpected but promising result. How should I proceed? Document the unexpected finding clearly as exploratory. Do not present it as a confirmatory test. The finding must then be used to generate a new, specific a priori hypothesis for a future, preregistered experiment designed explicitly to test it.

FAQ 4: What are the key elements of a preregistration document for a preclinical study? Key elements include: detailed experimental protocol (species, sample size justification, randomization method), blinding procedures, primary outcome measure and how it is quantified, statistical analysis plan, and criteria for excluding data. Platforms like the Open Science Framework (OSF) or preclinical trial registries provide templates.

FAQ 5: How do I handle necessary protocol deviations without compromising the analysis plan? All deviations must be documented in real time. The pre-specified analysis should be run on the intention-to-treat dataset. A sensitivity analysis can then be conducted to assess the impact of the deviation, but the primary result comes from the planned analysis on all collected data.

Troubleshooting Guide: Common Issues and Solutions

| Issue | Symptoms | Root Cause | Solution |

|---|---|---|---|

| Low Statistical Power | Non-significant result despite large effect trend. | Underpowered design due to small sample size (N). | Pre-experiment: Use power analysis to determine N. Post-experiment: Report effect size with confidence interval; avoid "accepting the null." |