Predicting Cosmetic Fate: A Modern QSPR Guide for Environmental Risk Assessment in Pharmaceutical Research



Abstract

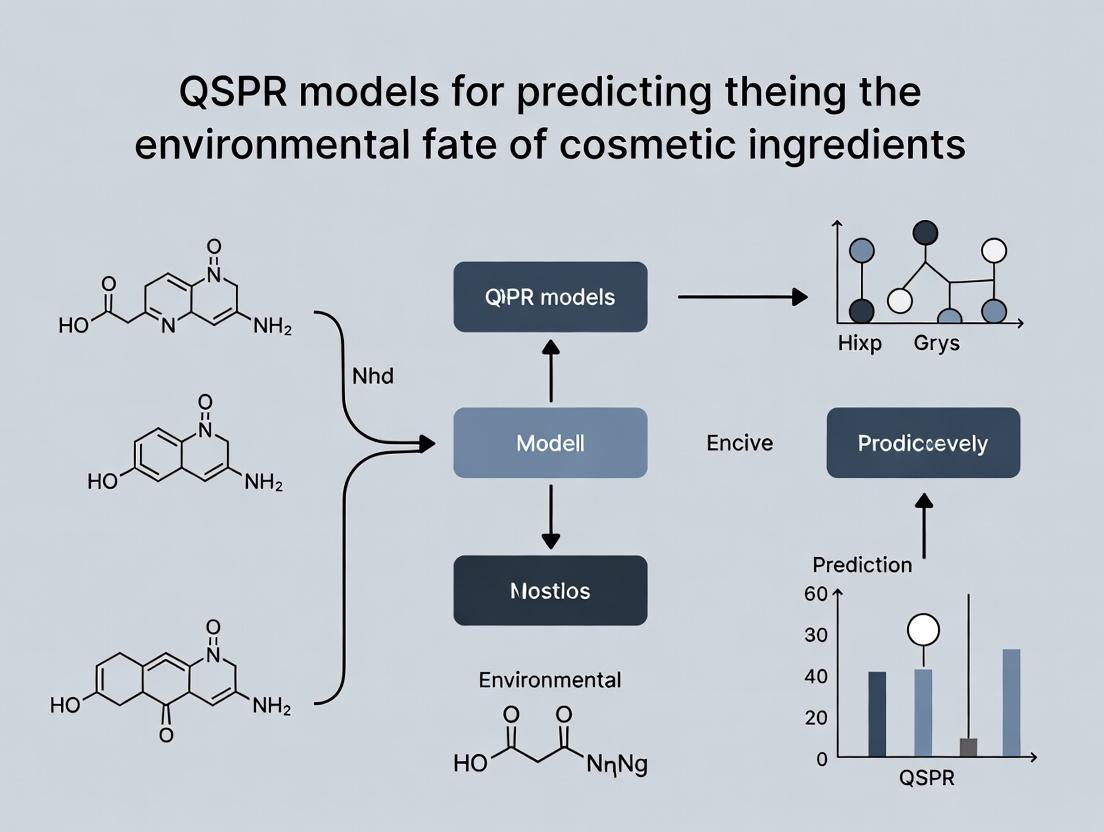

This article provides a comprehensive guide for researchers and drug development professionals on using Quantitative Structure-Property Relationship (QSPR) models to predict the environmental fate of cosmetic and personal care product ingredients. We explore the foundational principles linking molecular structure to environmental behavior, detail current methodologies for model development and application, address common challenges in model troubleshooting and optimization, and present rigorous validation and comparative analysis frameworks. The scope bridges cheminformatics with environmental toxicology, offering practical insights for integrating sustainability assessments into early-stage product development.

From Molecules to Ecosystems: Core QSPR Principles for Cosmetic Ingredient Fate

Within the broader thesis on Quantitative Structure-Property Relationship (QSPR) models for predicting the environmental fate of cosmetic ingredients, defining and measuring the core PBT endpoints is foundational. These endpoints are persistence (assessed through biodegradation testing), bioaccumulation, and toxicity. They provide the empirical data needed to calibrate, validate, and apply QSPR models. This application note details standardized protocols for their experimental determination, ensuring data quality for robust model development.

Key Endpoint Definitions & Quantitative Data

Table 1: Key Environmental Fate Endpoints & Regulatory Thresholds

| Endpoint | Primary Metric | Common Test System | Typical Duration | Regulatory Thresholds (Example) | Relevance to QSPR Modeling |
|---|---|---|---|---|---|
| Biodegradation | % Dissolved Organic Carbon (DOC) Removal or % Theoretical CO₂ (ThCO₂) / % BOD of ThOD | Activated Sludge, River Water | 28 days (Ready) / 60-90 days (Ultimate) | Ready biodegradability: ≥60% ThCO₂/ThOD or ≥70% DOC removal within the 10-day window (OECD 301) | Target property for models predicting mineralization rate. |
| Bioaccumulation | Bioconcentration Factor (BCF) in whole fish | Freshwater fish (e.g., Pimephales promelas, Danio rerio) | Uptake (28 d) + Depuration (14 d) | BCF > 2000 L/kg (B) and > 5000 L/kg (vB) under EU REACH Annex XIII; BCF ≥ 500 L/kg is the CLP classification cut-off | Key endpoint for lipophilicity (log Kow)-based QSPR models. |
| Acute Aquatic Toxicity | Median Lethal/Effect Concentration (LC₅₀/EC₅₀) | Daphnia magna (crustacean), Fish, Algae | 24-96 hours | e.g., L(E)C₅₀ ≤ 1 mg/L (CLP Aquatic Acute 1) | Used to model narcotic toxicity or specific mechanistic effects. |
| Chronic Aquatic Toxicity | No Observed Effect Concentration (NOEC) | Daphnia magna reproduction, Fish early life stage | 21-28 days | e.g., NOEC < 0.01 mg/L (REACH Annex XIII T criterion); also used for PNEC derivation | Critical for low-dose, long-term effect prediction models. |

Detailed Experimental Protocols

Protocol 3.1: Ready Biodegradability, CO₂ Evolution Test (OECD Test Guideline 301B)

Principle: Determination of the ultimate biodegradation of a test substance by measuring the CO₂ evolved from an inoculated mineral medium aerated with CO₂-free air, with the evolved CO₂ trapped in alkali.

Materials:

- Test substance, typically at 10-20 mg/L DOC (or TOC).

- Mineral salts medium (contains N, P, trace elements).

- Activated sludge inoculum (≤ 30 mg/L suspended solids) from a municipal plant.

- CO₂-trapping solution (e.g., barium hydroxide, NaOH).

- Apparatus: Stoppered Erlenmeyer flasks, aerated CO₂ evolution system, titrator or TOC analyzer.

Procedure:

- Prepare test, inoculum blank, and reference substance (sodium acetate) flasks.

- Add mineral medium, inoculum, and test substance to achieve known DOC.

- Continuously pass CO₂-free air through the system; evolved CO₂ is trapped in alkali.

- At intervals (e.g., days 7, 14, 28), titrate the trapped CO₂ or measure inorganic carbon (IC).

- Calculate % biodegradation = [(CO₂(test) − CO₂(blank)) / ThCO₂] × 100, where ThCO₂ is the theoretical CO₂ yield of the test substance, calculated from its carbon content.

- The substance is classified as readily biodegradable if ≥60% of ThCO₂ is reached within the 10-day window. This window starts when biodegradation first reaches 10% and must fall within the 28-day test.
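The ThCO₂ calculation, the blank correction, and the 10-day-window rule can be sketched in Python as follows. This is a minimal illustration, not a validated evaluation tool. It assumes cumulative CO₂ values (mg) per sampling day for the test and blank vessels, and it checks the pass level only at sampled days, without interpolating between them. The numbers are hypothetical.

```python
from typing import List, Optional, Sequence


def theoretical_co2_mg(mass_mg: float, n_carbon: int, mol_weight: float) -> float:
    """ThCO2 (mg) for a mass of test substance: mass * nC * M(CO2) / MW."""
    return mass_mg * n_carbon * 44.01 / mol_weight


def percent_biodegradation(co2_test: Sequence[float], co2_blank: Sequence[float],
                           thco2_mg: float) -> List[float]:
    """Blank-corrected cumulative CO2 expressed as % of ThCO2 at each sampling day."""
    return [100.0 * (t - b) / thco2_mg for t, b in zip(co2_test, co2_blank)]


def passes_ready(days: Sequence[float], pct: Sequence[float], pass_level: float = 60.0,
                 onset: float = 10.0, window: float = 10.0, test_end: float = 28.0) -> bool:
    """True if the pass level is reached within 10 days of the 10% onset and by day 28."""
    start: Optional[float] = next((d for d, p in zip(days, pct) if p >= onset), None)
    if start is None:
        return False  # degradation never reached 10%, so no window opens
    end = min(start + window, test_end)
    return any(p >= pass_level for d, p in zip(days, pct) if start <= d <= end)


# Hypothetical cumulative CO2 (mg) in test and blank vessels
days = [0, 2, 4, 6, 8, 10, 14, 21, 28]
co2_test = [0, 5, 15, 40, 65, 75, 80, 85, 88]
co2_blank = [0, 2, 3, 4, 5, 6, 7, 8, 9]
pct = percent_biodegradation(co2_test, co2_blank, thco2_mg=100.0)
print([round(p) for p in pct], passes_ready(days, pct))
```

With these numbers the 10% onset falls on day 4 and 60% is reached on day 8, so the example passes. A real evaluation also checks the test's validity criteria, for example that the reference substance reaches the pass level by day 14.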

Protocol 3.2: Determination of the Bioconcentration Factor (BCF) in Fish (OECD TG 305)

Principle: The test consists of two phases: uptake (exposure to the substance via water) and depuration (transfer to clean water). The BCF is derived from the rate constants or the ratio of concentration in fish to water at steady-state.

Materials:

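- Juvenile freshwater fish (e.g., fathead minnow, ~1-5 g).

- Test substance, preferably radiolabeled (¹⁴C) for sensitive tracking. Parent-compound analysis is still needed because total radioactivity also includes metabolites.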

- Flow-through or semi-static test system with temperature control.

- Water sampling apparatus, tissue homogenizer, liquid scintillation counter (LSC).

Procedure:

- Uptake Phase (28 days): Expose fish to a constant, sub-lethal concentration of the test substance (e.g., 1-10 µg/L). Maintain at least two treatment tanks and one control.

- Sample water and 4-5 fish at regular intervals (e.g., 1, 4, 7, 14, 21, 28 days).

- Depuration Phase (14 days): Transfer remaining fish to clean, flowing water.

- Sample fish at intervals (e.g., 1, 2, 4, 7, 14 days post-transfer).

- Analysis: Digest or oxidize fish samples. Analyze water and tissue extracts via LSC to determine chemical concentration.

- Calculation: BCF at steady-state (BCFₛₛ) = Cfish (steady-state) / Cwater (steady-state). Alternatively, calculate kinetic BCF = k₁ (uptake rate constant) / k₂ (depuration rate constant).
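The kinetic BCF can be estimated in two steps. First, fit k₂ to the log-linear decline during depuration. Then fit k₁ to the uptake curve. This sketch assumes first-order, one-compartment kinetics, a constant water concentration, and zero initial body burden. It uses NumPy and hypothetical, noise-free data generated with known constants, so the fit should recover them.

```python
import numpy as np


def fit_k2(t_dep, c_fish_dep):
    """Depuration rate constant k2 (1/day) from first-order loss: ln C = ln C0 - k2 * t."""
    slope, _intercept = np.polyfit(np.asarray(t_dep, float), np.log(c_fish_dep), 1)
    return -slope


def fit_k1(t_up, c_fish_up, c_water, k2):
    """Uptake rate constant k1 (L/kg/day) by least squares through the origin of
    C_fish(t) = k1 * Cw * (1 - exp(-k2 * t)) / k2, with k2 held fixed."""
    x = c_water * (1.0 - np.exp(-k2 * np.asarray(t_up, float))) / k2
    y = np.asarray(c_fish_up, float)
    return float(x @ y / (x @ x))


# Hypothetical noise-free data: k1 = 200 L/kg/d, k2 = 0.1 1/d, Cw = 0.005 mg/L
c_water = 0.005
t_up = np.array([1, 4, 7, 14, 21, 28])                # days of exposure
c_up = 200 / 0.1 * c_water * (1 - np.exp(-0.1 * t_up))  # mg/kg in fish
t_dep = np.array([1, 2, 4, 7, 14])                    # days after transfer to clean water
c_dep = c_up[-1] * np.exp(-0.1 * t_dep)

k2 = fit_k2(t_dep, c_dep)
k1 = fit_k1(t_up, c_up, c_water, k2)
print(f"k1 = {k1:.1f} L/kg/d, k2 = {k2:.3f} 1/d, BCF_K = {k1 / k2:.0f} L/kg")
```

OECD TG 305 also calls for a growth-corrected kinetic BCF and for lipid normalisation to 5% lipid. This sketch omits both. With real data, check the depuration fit for non-first-order behaviour before relying on k₂.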

Protocol 3.3: Daphnia sp. Acute Immobilisation Test (OECD TG 202)

Principle: Determination of the EC₅₀ of a substance for Daphnia magna neonates after 24 h and 48 h of exposure.

Materials:

- Cultured Daphnia magna (< 24h old neonates).

- Reconstituted standard freshwater (e.g., ISO or OECD medium).

- Test substance stock solution.

- Multi-well test plates or glass beakers.

Procedure:

- Prepare a geometric series of at least 5 test concentrations and a control.

- Randomly distribute 5 neonates into each test vessel, each vessel containing 10-20 mL of test solution. Use at least 20 animals per concentration (e.g., four replicates of five).

- Incubate without feeding at 20°C with a 16:8 light:dark cycle.

- Record immobilisation at 24 h and 48 h. Animals are considered immobile if they cannot swim within 15 seconds after gentle agitation of the test vessel.

- Calculate the EC₅₀ (immobilisation) using statistical methods (e.g., probit analysis, Spearman-Kärber).
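For the Spearman-Kärber option, the untrimmed estimator is simple enough to sketch directly. This minimal version makes four assumptions:

- concentrations are in increasing order;
- responses are monotonic;
- immobilisation is 0% at the lowest concentration;
- immobilisation is 100% at the highest concentration.

Data that violate these assumptions need the trimmed variant, monotonic smoothing, or probit/logit regression instead. The counts below are hypothetical.

```python
import math


def spearman_karber_ec50(concs, immobile, n_exposed):
    """Untrimmed Spearman-Karber EC50 on a log10 concentration scale.

    log10(EC50) = sum_i (p[i+1] - p[i]) * (x[i] + x[i+1]) / 2, with x = log10(conc).
    """
    p = [k / n for k, n in zip(immobile, n_exposed)]
    if p[0] != 0.0 or p[-1] != 1.0:
        raise ValueError("Untrimmed method needs 0% and 100% responses at the ends")
    if any(b < a for a, b in zip(p, p[1:])):
        raise ValueError("Responses must be monotonic; smooth them or use another method")
    x = [math.log10(c) for c in concs]
    log_ec50 = sum((p[i + 1] - p[i]) * (x[i] + x[i + 1]) / 2 for i in range(len(x) - 1))
    return 10 ** log_ec50


# Hypothetical 48 h results: 20 neonates per concentration (mg/L)
concs = [1.0, 2.0, 4.0, 8.0, 16.0]
immobile = [0, 4, 10, 16, 20]
print(spearman_karber_ec50(concs, immobile, [20] * 5))  # ≈ 4.0 mg/L
```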

Visualization of PBT Assessment within QSPR Research

Figure: QSPR model development integrating empirical PBT data.

Figure: Experimental workflow for the fish bioconcentration factor (BCF) test.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Reagents for PBT Endpoint Assessment

| Item | Function/Benefit | Example/Note |
|---|---|---|
| Activated Sludge Inoculum | Provides a diverse microbial community for realistic biodegradation testing. | Sourced from municipal wastewater treatment plants; pre-conditioned if needed. |
| OECD Standard Freshwater | Reconstituted water with defined hardness and ion composition for aquatic toxicity/BCF tests. | Ensures reproducibility and eliminates variability from natural water sources. |
| Reference/Control Substances | Validates test system performance (positive control) and establishes baseline (negative/solvent control). | e.g., Sodium benzoate (biodegradation), Potassium dichromate (Daphnia toxicity). |
| ¹⁴C- or ³H-Labeled Test Compounds | Enables precise, sensitive tracking of the test compound and its transformation products in BCF and biodegradation studies. | Radiolabel typically on a stable, non-exchangeable part of the molecule. |
| Semi-Static/Flow-Through Test Chambers | Maintains stable exposure concentrations for BCF and chronic toxicity tests. | Flow-through systems are preferred for volatile or unstable compounds. |
| Liquid Scintillation Counter (LSC) | Quantifies radioactivity in water, tissue, and CO₂ traps from labeled compounds. | Essential for mass balance calculations in fate studies. |
| DOC/TOC Analyzer | Measures dissolved or total organic carbon for biodegradation and water quality monitoring. | Key instrument for non-radiolabeled biodegradation tests (e.g., the DOC-based OECD 301A and 301E). |
| In Vitro Toxicity Assay Kits | High-throughput screening for specific toxicity pathways (e.g., estrogenicity, mutagenicity). | Used for mechanistic data to inform in silico models (e.g., QSAR for toxicity). |

This application note supports a doctoral thesis investigating Quantitative Structure-Property Relationship (QSPR) models to predict the environmental fate of cosmetic ingredients. The release of personal care products into aquatic systems necessitates robust tools to forecast parameters like biodegradation, bioaccumulation, and aquatic toxicity. Molecular descriptors serve as the foundational numerical inputs for these models. This document details the most critical descriptors, their physicochemical basis, and standardized protocols for their calculation and application in an environmental QSPR workflow.

Core Molecular Descriptors: Theory and Data

The selection of descriptors is guided by their direct relationship to key environmental fate processes: partitioning, bioavailability, and molecular interactions.

Table 1: Essential Molecular Descriptors for Environmental Fate QSPR

| Descriptor | Abbreviation | Definition | Relevance to Environmental Fate |
|---|---|---|---|
| Octanol-Water Partition Coefficient | Log P (or Log Kow) | Logarithm of the ratio of a compound's concentration in octanol to its concentration in water at equilibrium. | Primary predictor of bioaccumulation (BCF), soil/sediment adsorption (Koc), and baseline aquatic toxicity (narcosis). |
| Topological Polar Surface Area | TPSA | Sum of fragment-based surface contributions of polar atoms (by default N and O with their attached H; S and P optionally). Computed from the 2D structure. | Correlates with cell membrane permeability, bioavailability, and hydrogen-bonding potential in aquatic environments. |
| Water Solubility | Log S (or Log W) | Logarithm of the molar solubility in water (mol/L). | Directly impacts chemical mobility and concentration in aquatic systems. Linked to Log P (together with melting point) via the General Solubility Equation. |
| Molar Refractivity | MR | Measure of the steric bulk and polarizability of a molecule. | Indicates dispersion force interactions, relevant for adsorption to organic carbon and non-specific toxicity. |
| Hydrogen Bond Donor/Acceptor Count | HBD / HBA | Number of OH/NH groups (donors) and O/N atoms (acceptors). | Quantifies hydrogen-bonding capacity, influencing solubility, sorption, and biodegradation kinetics. |
| Molecular Weight | MW | Mass of one mole of the compound (g/mol). | Simple filter for molecular size; related to diffusion rates and membrane penetration. |
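The Log S to Log P link can be illustrated with the General Solubility Equation (GSE) of Yalkowsky and co-workers: log S = 0.5 − 0.01(MP − 25) − log Kow, with S in mol/L and MP in °C. The sketch below assumes that commonly cited form. The GSE targets neutral organic compounds. It is unreliable for ionizable, surface-active, or fully water-miscible ingredients.

```python
def gse_log_s(log_kow: float, melting_point_c: float) -> float:
    """Estimated log S (mol/L) from the General Solubility Equation.

    For liquids (melting point at or below 25 °C) the melting-point term is zero.
    """
    mp_term = max(melting_point_c - 25.0, 0.0)
    return 0.5 - 0.01 * mp_term - log_kow


# Hypothetical solid (MP 125 °C, log Kow 3.0) and liquid (MP 10 °C, log Kow 2.0)
print(gse_log_s(3.0, 125.0), gse_log_s(2.0, 10.0))
```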

Key Quantitative Relationships (empirical linear regressions; coefficients vary with the training data and chemical class):

- Bioconcentration Factor (BCF): Log BCF ≈ 0.85 * Log P - 0.70 (for Log P 1-7).

- Soil Adsorption Coefficient (Log Koc): Log Koc ≈ 0.90 * Log P + 0.20.

- Aquatic Toxicity (Baseline Narcosis): pLC50 ≈ 0.87 * Log P + 2.07.
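The BCF relationship can be turned into a quick screening flag. This sketch hard-codes the slope 0.85 and intercept −0.70 of the widely cited Veith et al. (1979) fish regression; substitute whichever regression your workflow adopts. It compares the estimate with the REACH Annex XIII thresholds of 2000 L/kg (B) and 5000 L/kg (vB). Linear log Kow models overpredict BCF for very hydrophobic substances (log Kow above roughly 6) and for readily metabolized ones. Treat the flag as a prioritization aid only.

```python
def log_bcf_linear(log_kow: float, slope: float = 0.85, intercept: float = -0.70) -> float:
    """Linear log BCF estimate; defaults follow the Veith et al. (1979) regression."""
    return slope * log_kow + intercept


def bcf_flag(log_kow: float, b_threshold: float = 2000.0, vb_threshold: float = 5000.0) -> str:
    """Classify the estimated BCF (L/kg) against REACH Annex XIII B/vB thresholds."""
    bcf = 10 ** log_bcf_linear(log_kow)
    if bcf > vb_threshold:
        return "vB"
    if bcf > b_threshold:
        return "B"
    return "not B"


for log_kow in (4.5, 5.0, 6.0):
    print(log_kow, round(10 ** log_bcf_linear(log_kow)), bcf_flag(log_kow))
```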

Experimental Protocols

Protocol 3.1: In Silico Calculation of Core Descriptors

This protocol standardizes the descriptor calculation pipeline for a chemical dataset.

Input Structure Preparation:

- Obtain or draw chemical structures in SMILES or SDF format.

- Standardization: Use KNIME, OpenBabel, or RDKit to standardize structures: neutralize charges, aromatize rings, generate canonical tautomers, and add explicit hydrogens.

- 3D Geometry Optimization: For descriptors requiring conformation (e.g., 3D PSA), generate an energy-minimized 3D conformation using force fields (MMFF94, UFF) in software like OpenBabel or RDKit.
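A minimal RDKit sketch of the standardization and 3D steps follows. It assumes the `rdMolStandardize` module shipped with current RDKit releases. The helper names `standardize` and `embed_3d` are illustrative, as are the operation order, the MMFF94 force field, and the fixed random seed; this is one reasonable configuration, not a prescribed pipeline. Neutralization converts a salt such as sodium lauryl sulfate to its parent acid, which should be a deliberate choice for ionizable ingredients.

```python
from rdkit import Chem
from rdkit.Chem import AllChem
from rdkit.Chem.MolStandardize import rdMolStandardize


def standardize(smiles: str) -> Chem.Mol:
    """Parse, clean, strip counter-ions, neutralize, and canonicalize the tautomer."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Unparseable SMILES: {smiles}")
    mol = rdMolStandardize.Cleanup(mol)                            # sanitize and normalize groups
    mol = rdMolStandardize.LargestFragmentChooser().choose(mol)    # drop salts/counter-ions
    mol = rdMolStandardize.Uncharger().uncharge(mol)               # neutralize where possible
    mol = rdMolStandardize.TautomerEnumerator().Canonicalize(mol)  # canonical tautomer
    return mol


def embed_3d(mol: Chem.Mol, seed: int = 42) -> Chem.Mol:
    """Add explicit hydrogens, embed one conformer, and minimize it with MMFF94."""
    mol_h = Chem.AddHs(mol)
    if AllChem.EmbedMolecule(mol_h, randomSeed=seed) != 0:
        raise RuntimeError("3D embedding failed")
    AllChem.MMFFOptimizeMolecule(mol_h)
    return mol_h


mol = standardize("CCCCCCCCCCCCOS(=O)(=O)[O-].[Na+]")  # sodium lauryl sulfate
print(Chem.MolToSmiles(mol))
mol3d = embed_3d(mol)
```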

Descriptor Calculation (Batch Mode):

- Utilize chemical informatics suites:

  - RDKit (Python): Use `rdMolDescriptors.CalcCrippenDescriptors()` for Wildman-Crippen Log P (it also returns MR) and `rdMolDescriptors.CalcTPSA()` for TPSA.

  - CDK (Java/KNIME): Employ the "Molecular Properties" or "Descriptor Calculation" nodes.

  - Online Platforms: Use the EPA's CompTox Chemicals Dashboard or OCHEM for batch calculation and validation.
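The RDKit route can be sketched as a small batch function covering the Table 1 descriptors. The helper name `core_descriptors` and the output column names are illustrative. Note that `CalcTPSA` excludes S and P contributions by default, matching the classic N/O definition.

```python
import pandas as pd
from rdkit import Chem
from rdkit.Chem import Descriptors, rdMolDescriptors


def core_descriptors(smiles: str) -> dict:
    """Table 1 descriptors for one structure (2D, no conformer needed)."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Unparseable SMILES: {smiles}")
    logp, mr = rdMolDescriptors.CalcCrippenDescriptors(mol)  # Wildman-Crippen logP and MR
    return {
        "LogP_Crippen": logp,
        "MR": mr,
        "TPSA": rdMolDescriptors.CalcTPSA(mol),
        "HBD": rdMolDescriptors.CalcNumHBD(mol),
        "HBA": rdMolDescriptors.CalcNumHBA(mol),
        "MW": Descriptors.MolWt(mol),
    }


smiles = {"glycerin": "OCC(O)CO", "benzyl salicylate": "O=C(OCc1ccccc1)c1ccccc1O"}
table = pd.DataFrame({name: core_descriptors(s) for name, s in smiles.items()}).T
print(table.round(2))
```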

Descriptor Verification and Curation:

- Compare calculated Log P values from at least two different algorithms (e.g., XLogP, ALogPS, Crippen).

- For a subset of compounds, compare with high-quality experimental data from sources like PubChem or the EPA's DSSTox.

- Flag and investigate outliers (>1.5 log unit difference).
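The outlier check reduces to comparing paired values per compound. This minimal sketch assumes Log P values from two sources are held in dictionaries keyed by a shared compound identifier. The two sources can be two algorithms, or calculated versus experimental values. The 1.5 log-unit tolerance follows the protocol; the values and identifiers are hypothetical.

```python
def flag_discrepancies(values_a: dict, values_b: dict, tolerance: float = 1.5) -> dict:
    """Return {compound: a - b} for compounds whose two Log P values differ by more
    than `tolerance` log units. Compounds missing from either source are skipped."""
    return {
        name: values_a[name] - values_b[name]
        for name in sorted(values_a.keys() & values_b.keys())
        if abs(values_a[name] - values_b[name]) > tolerance
    }


# Hypothetical values: calculated Log P vs. curated experimental Log P
calc = {"cmpd_1": 2.1, "cmpd_2": 6.9, "cmpd_3": 0.4}
exp = {"cmpd_1": 2.4, "cmpd_2": 4.8}
flagged = flag_discrepancies(calc, exp)
print(flagged)
```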

Protocol 3.2: Integrating Descriptors into a QSPR Model Workflow

This protocol details the steps from descriptors to a predictive model for an environmental endpoint (e.g., biodegradation half-life).

Dataset Compilation:

- Source experimental endpoint data from curated databases: ECHA REACH, EPI Suite's BIOWIN training set, PubChem BioAssay.

- Merge structural files (SDF) with endpoint data (CSV) using a unique identifier (e.g., InChIKey, CAS RN).
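When structures and endpoint data come from different sources, computing the key from the structure itself avoids mismatches caused by different SMILES spellings. This minimal sketch uses RDKit and pandas. It assumes RDKit was built with InChI support, as the standard distributions are. The column names and the `add_inchikey` helper are illustrative. Standard InChIKeys treat some tautomers as identical, which is usually acceptable for fate endpoints but worth checking.

```python
import pandas as pd
from rdkit import Chem


def add_inchikey(df: pd.DataFrame, smiles_col: str = "smiles") -> pd.DataFrame:
    """Return a copy of df with an 'inchikey' column (None where SMILES fails to parse)."""
    keys = []
    for smi in df[smiles_col]:
        mol = Chem.MolFromSmiles(smi)
        keys.append(Chem.MolToInchiKey(mol) if mol is not None else None)
    out = df.copy()
    out["inchikey"] = keys
    return out


# The same compound (glycerin) written as two different SMILES strings in two sources
structures = add_inchikey(pd.DataFrame({"name": ["glycerin"], "smiles": ["OCC(O)CO"]}))
endpoints = add_inchikey(pd.DataFrame({"smiles": ["C(C(CO)O)O"],
                                       "endpoint_value": [None]}))  # placeholder value

merged = structures.merge(endpoints.drop(columns="smiles"), on="inchikey",
                          how="inner", validate="one_to_one")
print(merged[["name", "inchikey"]])
```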

Descriptor Selection and Pre-processing:

- Reduce dimensionality using correlation analysis (for each descriptor pair with |r| > 0.95, remove one member) and domain knowledge (e.g., prioritize Table 1 descriptors).

- Split data into training (70-80%) and test (20-30%) sets using systematic or random sampling, ensuring chemical space coverage.

- Scale/normalize descriptor values (e.g., Z-score normalization) for machine learning algorithms. Fit the scaling parameters on the training set only and apply them unchanged to the test set.
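The pre-processing steps can be sketched with pandas and scikit-learn. The data here are synthetic: random descriptors named d1-d4 plus a near-copy of d4. Both the correlation filter and the scaler are fitted on the training split only. This is a slightly stricter ordering than the list above, and it keeps test-set information out of descriptor selection. Random splitting is used for brevity; a systematic split such as Kennard-Stone would need extra code.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler


def drop_correlated(X: pd.DataFrame, threshold: float = 0.95) -> pd.DataFrame:
    """Drop the later column of every pair with |Pearson r| > threshold."""
    corr = X.corr().abs()
    upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))  # each pair once
    to_drop = [col for col in upper.columns if (upper[col] > threshold).any()]
    return X.drop(columns=to_drop)


rng = np.random.default_rng(0)
X = pd.DataFrame(rng.normal(size=(100, 4)), columns=["d1", "d2", "d3", "d4"])
X["d4_copy"] = 2 * X["d4"] + rng.normal(scale=0.01, size=100)  # nearly redundant descriptor
y = rng.normal(size=100)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)
X_train = drop_correlated(X_train)
X_test = X_test[X_train.columns]              # apply the same column selection
scaler = StandardScaler().fit(X_train)        # fit on training data only
X_train_s, X_test_s = scaler.transform(X_train), scaler.transform(X_test)
print(list(X_train.columns))
```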

Model Development and Validation:

- Train multiple algorithms: Multiple Linear Regression (MLR), Partial Least Squares (PLS), Random Forest (RF), or Support Vector Machine (SVM).

- Optimize hyperparameters using cross-validation on the training set.

- Validate using the held-out test set. Report key metrics: R², Q² (cross-validated), RMSE, and MAE.

- Apply the OECD QSAR validation principles, including a defined applicability domain (e.g., leverage approach, Euclidean distance).
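A compact version of the training, validation, and leverage-based applicability-domain steps is sketched below with scikit-learn and NumPy. It uses synthetic data from `make_regression` in place of real descriptors and computes Q² from out-of-fold predictions. It also applies the commonly used warning leverage h* = 3(p + 1)/n. These choices are illustrative, not prescribed by the protocol.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score
from sklearn.model_selection import cross_val_predict, train_test_split

# Synthetic stand-in for a descriptor matrix and a continuous fate endpoint
X, y = make_regression(n_samples=200, n_features=6, noise=10.0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

model = RandomForestRegressor(n_estimators=300, random_state=0)
y_cv = cross_val_predict(model, X_tr, y_tr, cv=5)  # out-of-fold predictions for Q2
model.fit(X_tr, y_tr)
y_pred = model.predict(X_te)

report = {
    "R2_train": r2_score(y_tr, model.predict(X_tr)),
    "Q2_cv": r2_score(y_tr, y_cv),
    "R2_test": r2_score(y_te, y_pred),
    "RMSE_test": float(np.sqrt(mean_squared_error(y_te, y_pred))),
    "MAE_test": mean_absolute_error(y_te, y_pred),
}


def leverages(X_train, X_query):
    """h_i = x_i^T (X^T X)^-1 x_i, with an intercept column added to both matrices."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(A.T @ A)
    return np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)


h_star = 3 * (X_tr.shape[1] + 1) / X_tr.shape[0]  # warning leverage h* = 3(p+1)/n
in_domain = leverages(X_tr, X_te) <= h_star
print({k: round(float(v), 3) for k, v in report.items()},
      f"{in_domain.mean():.0%} of test set inside AD")
```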

Visualization of Workflows and Relationships

Figure: (A) QSPR modeling workflow for environmental fate; (B) key descriptor-property relationships.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Environmental QSPR Research

| Tool / Resource | Type | Primary Function in Research |
|---|---|---|
| RDKit | Open-Source Cheminformatics Library | Core engine for 2D/3D descriptor calculation, fingerprint generation, and molecular manipulation within Python scripts. |
| KNIME Analytics Platform | Data Analytics Workflow Tool | Provides a visual interface (nodes) for integrating descriptor calculation (CDK, RDKit), data processing, and machine learning for QSPR modeling. |
| EPA CompTox Chemicals Dashboard | Database & Tool Suite | Authoritative source for experimental and predicted property data (Log P, toxicity, fate), used for data acquisition and model benchmarking. |
| OCHEM Platform | Online QSAR Modeling Platform | Web-based environment for uploading datasets, calculating numerous descriptors, and training/validating QSPR models collaboratively. |
| OpenBabel | Chemical File Conversion Tool | Converts between >100 chemical file formats, essential for preparing and standardizing structural input data from diverse sources. |
| EPI Suite | Predictive Suite | Industry-standard tool for initial estimates of environmental fate parameters; useful for comparative analysis with new QSPR models. |
| Python (scikit-learn) | Programming Library | Provides robust implementations of regression and machine learning algorithms (Random Forest, SVM) for building predictive models. |
| OECD QSAR Toolbox | Software Application | Facilitates the grouping of chemicals, filling data gaps, and applying OECD validation principles to ensure model regulatory relevance. |

Critical Datasets and Repositories for Cosmetic Ingredient Properties

Within the framework of developing Quantitative Structure-Property Relationship (QSPR) models for predicting the environmental fate of cosmetic ingredients, access to high-quality, curated datasets is paramount. These models rely on accurate experimental data for properties such as Log P, biodegradation rates, aquatic toxicity, and sorption coefficients to train and validate predictive algorithms. This document details the critical public datasets, repositories, and protocols essential for this research domain.

Critical Datasets and Repositories

The following table summarizes the primary repositories containing experimental property data for cosmetic and general chemical ingredients relevant to environmental fate QSPR modeling.

Table 1: Key Repositories for Cosmetic Ingredient Property Data

| Repository / Database Name | Primary Provider / Maintainer | Key Data Types for Environmental Fate | Accessibility | Update Frequency |
|---|---|---|---|---|
| COSMOS Database | COSMOS Project (EU) | Physicochemical properties, (eco)toxicity, environmental fate, use data. | Free, web-based (registration may be required) | Periodically updated |
| ECHA CHEM Database | European Chemicals Agency | Registered substance data incl. physicochemical, environmental fate, toxicity under REACH. | Free, public access | Continuous |
| EPA CompTox Chemicals Dashboard | U.S. Environmental Protection Agency | Extensive chemical properties, bioactivity, exposure, hazard data from multiple sources. | Free, public access | Frequent updates |
| PubChem | National Library of Medicine (NIH) | Chemical structures, physical properties, biological activities, toxicity. | Free, public access | Continuous |
| QsarDB (QSAR DataBank) | University of Tartu (Estonia) | Curated datasets for physicochemical, environmental fate, and toxicity endpoints. | Free, public access via download | Periodically updated |
| OPERA | NIEHS (NICEATM) and U.S. EPA | Curated models and datasets for environmental properties (Log P, biodegradation, etc.). | Open-source, within KNIME | Model-dependent |
| REACH-USE Database | Danish EPA / ECHA | Substance-specific information on use, function, and tonnage. | Free, public access | Periodic |

Table 2: Example Quantitative Data Snapshot for Common Cosmetic Ingredients

| Ingredient (CAS) | Log P (Exp.) | Biodegradation (Ready) | Aquatic Toxicity (LC50 Fish, mg/L) | Melting Point (°C) | Source Database |
|---|---|---|---|---|---|
| Benzyl Salicylate (118-58-1) | 3.63 | 2.43 (Not Readily) | 2.7 | 24-26 | EPI Suite / PubChem |
| Cyclopentasiloxane (541-02-6) | 6.72 | 1.00 (Not Readily) | >1000 | -38 | COSMOS/ECHA |
| Glycerin (56-81-5) | -1.76 | 4.12 (Readily) | >10000 | 17.8 | PubChem/QSARDB |
| Sodium Lauryl Sulfate (151-21-3) | 1.60 (calc) | 3.71 (Readily) | 14 | 206 | EPA CompTox |
| Octocrylene (6197-30-4) | 6.88 | 1.44 (Not Readily) | 0.1 | -10 | ECHA/EPA CompTox |

Application Notes and Experimental Protocols

Protocol for Data Extraction and Curation from Public Repositories

This protocol describes a systematic approach for gathering and standardizing data for QSPR model development.

Objective: To compile a clean, consistent dataset of cosmetic ingredient properties from multiple public repositories.

Materials: Computer with internet access, KNIME Analytics Platform or Python/R environment, chemical structure standardization tool (e.g., RDKit, OpenBabel).

Procedure:

- Compound Identification:

- Define the target list of cosmetic ingredients using INCI names and CAS Registry Numbers.

- Use the EPA CompTox Chemicals Dashboard batch search to map identifiers and obtain DTXSIDs (DSSTox Substance Identifiers) for unambiguous substance tracking.

Data Harvesting:

- For each DTXSID/CASRN, query the following sources via API or manual batch download:

  - EPA CompTox: Extract measured physicochemical properties (Log P, water solubility, vapor pressure) and ecotoxicity summaries.

  - ECHA CHEM: Download registration dossiers (if available) and extract key study summaries for environmental fate (degradation, distribution).

  - PubChem: Collect bioassay and toxicity data via the PUG-REST API.

  - QSAR DataBank: Download pre-curated datasets for specific endpoints (e.g., biodegradation half-lives).
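For the PubChem step, PUG-REST property requests follow a predictable URL pattern. The sketch below uses only the standard library to build such a URL and parse the JSON response. It assumes CAS numbers or names resolve through the `name` namespace, which is not guaranteed for every ingredient. The function names are illustrative. XLogP is PubChem's computed XLogP3 value, not an experimental Log P. PubChem also asks users to keep request rates low (no more than five requests per second).

```python
import json
import urllib.parse
import urllib.request
from typing import List

PUG_REST = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def property_url(identifier: str, properties=("MolecularWeight", "XLogP", "InChIKey"),
                 namespace: str = "name") -> str:
    """Build a PUG-REST compound property request URL returning JSON."""
    ident = urllib.parse.quote(identifier, safe="")
    return f"{PUG_REST}/compound/{namespace}/{ident}/property/{','.join(properties)}/JSON"


def parse_properties(payload: str) -> List[dict]:
    """Extract the list of per-CID property records from a PUG-REST JSON response."""
    return json.loads(payload)["PropertyTable"]["Properties"]


def fetch_properties(identifier: str, timeout: float = 30.0) -> List[dict]:
    """Network call; wrap in retry/rate-limit logic for batch use."""
    with urllib.request.urlopen(property_url(identifier), timeout=timeout) as resp:
        return parse_properties(resp.read().decode("utf-8"))


print(property_url("56-81-5"))  # glycerin, looked up by CAS RN
```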

Data Standardization and Curation:

- Structure Standardization: Generate canonical SMILES for each compound using RDKit. Remove salts, standardize tautomers, and neutralize charges.

- Unit Harmonization: Convert all property values to consistent SI or standard units (e.g., Log P unitless, solubility in mol/L).

- Outlier and Conflict Resolution: Flag conflicting values for the same property from different sources. Apply rules (e.g., prefer experimental over predicted, peer-reviewed over industry report) or calculate the median value.

- Data Table Compilation: Create a master table linking each unique compound ID to all collated property values, with metadata columns for data source, reliability score, and measurement method.
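The conflict-resolution rule can be expressed as a grouped operation in pandas. This minimal sketch assumes a long-format table with hypothetical columns `compound_id`, `property`, `value`, `data_type`, and `source`. It keeps experimental values whenever any exist for a compound-property pair. Otherwise it falls back to predicted values. It then reports the median. Further tiers, such as peer-reviewed versus industry sources or a reliability score, could be added as extra filters.

```python
import pandas as pd


def resolve(records: pd.DataFrame) -> pd.DataFrame:
    """One value per (compound, property): prefer experimental data, then take the median."""
    rec = records.copy()
    rec["is_exp"] = rec["data_type"].eq("experimental")
    has_exp = rec.groupby(["compound_id", "property"])["is_exp"].transform("any")
    kept = rec[rec["is_exp"] | ~has_exp]  # drop predictions where experiments exist
    return (kept.groupby(["compound_id", "property"])
                .agg(value=("value", "median"),
                     n_sources=("source", "nunique"),
                     basis=("data_type", "first"))
                .reset_index())


records = pd.DataFrame({
    "compound_id": ["A", "A", "A", "B", "B"],
    "property": ["logP"] * 5,
    "value": [3.0, 3.4, 5.0, 1.0, 2.0],
    "data_type": ["experimental", "experimental", "predicted", "predicted", "predicted"],
    "source": ["src1", "src2", "src3", "src1", "src3"],
})
out = resolve(records)
print(out)
```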

Descriptor Calculation: Using the standardized SMILES, calculate a suite of 2D and 3D molecular descriptors (e.g., using PaDEL-Descriptor, Mordred) for subsequent QSPR modeling.

Figure: Workflow for cosmetic ingredient data curation.

Protocol for In Silico Prediction of Biodegradation Using OPERA Models

This protocol details the use of the open-source OPERA models within the KNIME platform to predict key environmental fate parameters.

Objective: To predict biodegradation probability and other fate properties for a list of cosmetic ingredients.

Materials: KNIME Analytics Platform (latest LTS version), OPERA nodes installation (via KNIME Community Hub), input file of canonical SMILES.

Procedure:

- Workflow Setup:

- Launch KNIME and install the OPERA nodes from the community extensions.

- Create a new workflow. Use a File Reader node to import a CSV file containing a column of canonical SMILES strings for your target ingredients.

Structure Verification:

- Connect the File Reader to an RDKit From Molecule node to parse the SMILES.

- Follow with a Structure Check node to validate and clean structures.

Property Prediction:

- Connect the structure stream to the relevant OPERA prediction nodes (e.g., Biodegradation_Probability, Bioconcentration_Factor, Log_P).

- Configure each node to append prediction columns and, if available, applicability domain (AD) flags to the data table.

Results Aggregation and Analysis:

- Use a Joiner node, keyed on a compound identifier, to merge predictions from multiple OPERA nodes into a single table.

- Add a Rule Engine node to interpret results (e.g., the rule `$Biodegradation_Probability$ > 0.5 => "Readily Biodegradable"`).

- Visualize predictions using Bar Chart or Scatter Plot nodes. Filter compounds falling outside the AD.

- Export the final table, containing experimental data (where available) alongside the predictions, with a CSV Writer node.
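For readers working outside KNIME, the join, rule, and applicability-domain filtering steps can be mirrored in pandas. The column names below (`Biodegradation_Probability`, `in_AD`, `LogP_pred`) and the compound IDs are hypothetical. Match them to whatever your prediction tool actually writes. The 0.5 cutoff is the example rule from the protocol. Writing the result with `result.to_csv(...)` corresponds to the CSV Writer step.

```python
import pandas as pd


def classify_predictions(preds: pd.DataFrame, prob_col: str = "Biodegradation_Probability",
                         ad_col: str = "in_AD", cutoff: float = 0.5) -> pd.DataFrame:
    """Apply the probability rule, then keep only rows inside the applicability domain."""
    out = preds.copy()
    out["biodeg_call"] = out[prob_col].gt(cutoff).map(
        {True: "Readily Biodegradable", False: "Not Readily Biodegradable"})
    return out[out[ad_col]]


# Hypothetical outputs of two prediction steps, joined on a compound identifier
biodeg = pd.DataFrame({"id": ["c1", "c2", "c3"],
                       "Biodegradation_Probability": [0.8, 0.3, 0.6],
                       "in_AD": [True, True, False]})
logp = pd.DataFrame({"id": ["c1", "c2", "c3"], "LogP_pred": [1.2, 5.1, 3.3]})

merged = biodeg.merge(logp, on="id", how="inner", validate="one_to_one")
result = classify_predictions(merged)
print(result)
```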

Figure: OPERA model prediction workflow in KNIME.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Tools and Resources for Cosmetic Ingredient QSPR Research

| Tool / Resource Name | Type | Function in Research | Access |
|---|---|---|---|
| KNIME Analytics Platform | Workflow Automation | Integrates data access, curation, OPERA models, and machine learning for end-to-end QSPR pipeline. | Open-source |
| RDKit | Cheminformatics Library | Core functions for reading, writing, and standardizing chemical structures, and calculating molecular descriptors. | Open-source (Python/C++) |
| EPA CompTox Dashboard API | Web API | Programmatic access to a vast array of chemical property, toxicity, and exposure data. | Free, public |
| PaDEL-Descriptor | Software | Calculates 1D, 2D, and 3D molecular descriptors and fingerprints from chemical structures. | Free, standalone or library |
| OECD QSAR Toolbox | Software | Identifies analogs, fills data gaps, and profiles chemicals for regulatory endpoints including environmental fate. | Free (registration) |
| R / Python (scikit-learn, tidyverse) | Programming Environment | Statistical analysis, data visualization, and building custom machine learning QSPR models. | Open-source |
| ECHA CHEM Advanced Search | Web Interface | Detailed queries for REACH registration data, including robust study summaries for environmental fate. | Free, public |

Application Note: QSPR Modeling for Regulatory Compliance in Cosmetics

This application note details the integration of Quantitative Structure-Property Relationship (QSPR) models within a research framework aimed at predicting the environmental fate of cosmetic ingredients, as necessitated by major regulatory drivers: the European Union's REACH regulation, the U.S. Environmental Protection Agency's (EPA) frameworks, and Green Chemistry principles.

1. Regulatory Data Requirements & QSPR Model Endpoints

Key physicochemical and environmental fate properties mandated for assessment under REACH and EPA programs are primary targets for QSPR prediction in cosmetic ingredient research. These predictions support early-phase risk screening and reduce vertebrate testing.

Table 1: Key Environmental Fate Parameters for QSPR Prediction

| Regulatory Driver | Target Property | Typical QSPR Endpoint | Significance for Environmental Fate |
|---|---|---|---|
| REACH (EC 1907/2006) | Persistence (P) | Biodegradability (e.g., half-life) | Determines chemical longevity; PBT/vPvB assessment. |
| | Bioaccumulation (B) | Bioconcentration Factor (BCF) | Potential for accumulation in aquatic organisms. |
| | Octanol-Water Partition Coeff. | Log P (Log Kow) | Proxy for lipophilicity & membrane permeability. |
| | (Q)SAR Assessment | Toxicological endpoints (e.g., LC50) | Part of integrated testing strategies. |
| EPA TSCA / DfE | Chemical Safety Assessment | Acute Aquatic Toxicity | Screening-level ecological risk. |
| | Design for the Environment (DfE) | Molecular Functionality | Informs Green Chemistry redesign. |
| Green Chemistry | Atom Economy / MW | Molecular Weight, % Yield | Waste minimization at the molecular design stage. |
| | Innate Hazard | Predicted toxicity profiles | Inherently safer design of cosmetic actives. |

2. Protocol for Developing a QSPR Model for Biodegradation Half-Life

Objective: To develop a validated QSPR model for predicting ready biodegradability (as a proxy for half-life) of organic cosmetic ingredients.

Materials & Reagents:

- Dataset: A curated dataset of chemical structures and corresponding experimental biodegradation data (e.g., from EPA's DSSTox or ECHA database).

- Software: Chemical structure editing (e.g., ChemDraw), molecular descriptor calculation (e.g., PaDEL-Descriptor, DRAGON), statistical/ML platform (e.g., Python/R with scikit-learn, KNIME).

- Computational Environment: Standard workstation or high-performance computing cluster for descriptor calculation.

Procedure:

- Data Curation: Compile a dataset of 500+ organic compounds with reliable, standardized biodegradation metrics (e.g., %BOD in 28 days). Apply stringent criteria for data quality and remove inorganic and organometallic compounds.

- Descriptor Generation: For each SMILES string in the dataset, generate a comprehensive set of 2D and 3D molecular descriptors (e.g., topological, electronic, geometric). Perform pre-processing to remove constant and highly correlated descriptors.

- Model Development: Split data into training (70%) and test (30%) sets. Employ a feature selection algorithm (e.g., Genetic Algorithm, Recursive Feature Elimination) on the training set to identify the most relevant descriptors. Construct a predictive model using a suitable algorithm (e.g., Random Forest, Support Vector Machine, Partial Least Squares).

- Validation & Applicability Domain (AD): Validate the model internally (cross-validation on the training set) and externally (prediction on the held-out test set). For a continuous endpoint such as %BOD, report R², Q², and RMSE. For a ready/not-ready classifier, report accuracy, sensitivity, and specificity instead. Define the model's AD using a leverage- or distance-based method to identify compounds for which predictions are reliable.

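- Regulatory Contextualization: Apply the validated model to predict biodegradability for a library of novel cosmetic UV filters. Categorize results as "readily biodegradable," "inherently biodegradable," or "persistent" using regulatory thresholds. For example, the OECD 301 pass levels for ready biodegradability are ≥60% ThOD/ThCO₂ or ≥70% DOC removal within the 10-day window. Persistence under REACH Annex XIII is judged against degradation half-lives (e.g., > 40 days in fresh water).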
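The feature-selection and validation steps above can be sketched with scikit-learn. Placing RFE inside a `Pipeline` means descriptors are re-selected within every cross-validation fold. As a result, the cross-validated score is not inflated by selecting descriptors on the full training set. The data are synthetic: `make_regression` stands in for a descriptor matrix and a %BOD-like target. Random Forest with RFE is one of the options named in the protocol, not a recommended default.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import RFE
from sklearn.model_selection import KFold, cross_val_score, train_test_split
from sklearn.pipeline import Pipeline

# Synthetic stand-in: 40 candidate descriptors, 8 of them informative
X, y = make_regression(n_samples=300, n_features=40, n_informative=8, noise=5.0, random_state=1)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=1)

pipe = Pipeline([
    # Recursive feature elimination, removing 5 descriptors per round down to 10
    ("rfe", RFE(RandomForestRegressor(n_estimators=100, random_state=1),
                n_features_to_select=10, step=5)),
    ("model", RandomForestRegressor(n_estimators=300, random_state=1)),
])

cv = KFold(n_splits=5, shuffle=True, random_state=1)
fold_r2 = cross_val_score(pipe, X_tr, y_tr, cv=cv, scoring="r2")  # selection redone per fold
pipe.fit(X_tr, y_tr)
print(f"mean CV R2 = {fold_r2.mean():.2f}, external R2 = {pipe.score(X_te, y_te):.2f}")
print("selected descriptor indices:", pipe.named_steps["rfe"].get_support(indices=True))
```

The fold-averaged R² is a close proxy for, but not identical to, a pooled Q² computed from out-of-fold predictions.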

3. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for QSPR-Driven Environmental Fate Research

| Item / Solution | Function | Example / Rationale |
|---|---|---|
| Curated Regulatory Datasets | Provides high-quality experimental data for model training and validation. | EPA CompTox Chemicals Dashboard, ECHA REACH database, OECD QSAR Toolbox. |
| Descriptor Calculation Software | Generates quantitative numerical features from molecular structure. | PaDEL-Descriptor (open-source), DRAGON (commercial), RDKit cheminformatics library. |
| Machine Learning Platform | Enables model building, feature selection, and statistical validation. | Python with scikit-learn & pandas, RStudio, KNIME Analytics Platform. |
| Applicability Domain Tool | Defines the chemical space where model predictions are reliable. | Standalone scripts or integrated functions (e.g., in AMBIT, KNIME) for calculating leverage, distance, or ranges. |
| Chemical Inventory Database | Library of target cosmetic ingredients and potential alternatives. | In-house database of emulsifiers, preservatives, UV filters; linked to predicted properties. |

4. Visualizing the QSPR-Regulatory Integration Workflow

Figure: QSPR workflow for regulatory-driven cosmetic design.

5. Protocol for Applying the OECD Principles for QSAR Validation to Cosmetic Ingredients

Objective: To ensure that developed QSPR models for cosmetic ingredients comply with the OECD Principles for the Validation of (Q)SARs, a requirement for regulatory acceptance under REACH.

Procedure:

- Principle 1: A Defined Endpoint. Explicitly specify the regulatory endpoint being modeled (e.g., "Ready Biodegradability" as a binary classification per REACH Annex VII).

- Principle 2: An Unambiguous Algorithm. Document the exact algorithm (e.g., Random Forest with 500 trees, Gini impurity). Provide all equations, software, and settings.

- Principle 3: A Defined Domain of Applicability. Using the model from Section 2, calculate the Applicability Domain (AD) for each prediction. Report results only for compounds falling within the AD.

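- Principle 4: Appropriate Measures of Goodness-of-Fit, Robustness, and Predictivity. Provide the following for the biodegradation model. R² and RMSE apply to a continuous endpoint such as %BOD. For the binary classification in Principle 1, report the analogous accuracy, sensitivity, and specificity.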

- Goodness-of-fit: R² and RMSE for the training set.

- Robustness: Q² and RMSE from 5-fold cross-validation.

- Predictivity: R² and RMSE for the external test set.

- Principle 5: A Mechanistic Interpretation, If Possible. Interpret the top 3 molecular descriptors in the final model (e.g., "High XLogP correlates with lower biodegradability due to hydrophobic partitioning"). Relate them to known chemical or biological mechanisms.
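For Principle 5, permutation importance on held-out data is one way to rank descriptors before interpreting them mechanistically. The sketch uses scikit-learn's `permutation_importance` on synthetic data. The descriptor labels are placeholders attached to random features, so the printed ranking carries no chemical meaning. A statistical ranking only identifies candidate descriptors; the mechanistic explanation still has to come from chemistry.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

names = ["XLogP", "TPSA", "MR", "HBD", "HBA", "MW"]  # placeholder labels for synthetic features
X, y = make_regression(n_samples=250, n_features=len(names), n_informative=3,
                       noise=5.0, random_state=2)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=2)
model = RandomForestRegressor(n_estimators=300, random_state=2).fit(X_tr, y_tr)

# Drop in test-set R2 when each descriptor column is shuffled, averaged over 20 repeats
result = permutation_importance(model, X_te, y_te, n_repeats=20, random_state=2)
top3 = np.argsort(result.importances_mean)[::-1][:3]
for i in top3:
    print(f"{names[i]}: {result.importances_mean[i]:.3f} ± {result.importances_std[i]:.3f}")
```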

The Role of QSPR in Prioritizing Ingredients for Lab Testing

Within the thesis research on Quantitative Structure-Property Relationship (QSPR) models for predicting the environmental fate of cosmetic ingredients, a critical challenge is the efficient allocation of limited experimental resources. This application note details the protocol for using validated QSPR models as a prioritization tool to identify which cosmetic ingredients warrant further, resource-intensive laboratory testing (e.g., biodegradation, toxicity assays). The primary goal is to minimize unnecessary animal testing and costly experimental campaigns by focusing on compounds predicted to be of high environmental concern.

Core QSPR Models for Environmental Fate Prioritization

The prioritization framework relies on a suite of QSPR models predicting key environmental fate parameters. The following table summarizes the target properties, their significance, and typical model performance metrics based on current literature and our internal validation.

Table 1: Key QSPR Models for Environmental Fate Prioritization of Cosmetic Ingredients

| Target Property | Environmental Significance | Typical QSPR Model Performance (R²) | Prioritization Threshold |
|---|---|---|---|
| Biodegradability (e.g., %BOD) | Persistence in the environment; regulatory trigger (e.g., EU PBT/vPvB). | 0.70 - 0.85 | Compounds with predicted %BOD < 60% are flagged for lab testing. |
| Log P (Octanol-Water Partition Coefficient) | Bioaccumulation potential and aquatic toxicity. | 0.90 - 0.98 | Compounds with predicted Log P > 4.5 are flagged for bioaccumulation testing. |
| pKa (Acid Dissociation Constant) | Speciation and bioavailability in aquatic systems. | 0.85 - 0.95 | Compounds with pKa near environmental pH (6-8) are prioritized for speciation studies. |
| Soil Adsorption Coefficient (Log Koc) | Mobility in groundwater; potential for drinking water contamination. | 0.75 - 0.88 | Compounds with predicted Log Koc < 2.5 (high mobility) are prioritized for leaching studies. |
| Atmospheric OH Radical Reaction Rate (kOH) | Persistence in air (Indirect Greenhouse Gas potential). | 0.65 - 0.80 | Compounds with predicted half-life > 2 days are flagged for atmospheric fate testing. |
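The kOH row is screened on half-life rather than on the rate constant itself. Under pseudo-first-order kinetics, t½ = ln 2 / (kOH·[OH]). The sketch below assumes an average OH concentration of 1.5 × 10⁶ molecules/cm³ acting over a 12-hour day, which is the default in EPI Suite's AOPWIN. Both values are assumptions and should match the convention under which the kOH model was validated.

```python
import math

SECONDS_PER_HOUR = 3600.0


def atmospheric_half_life_days(k_oh, oh_conc=1.5e6, daylight_hours=12.0):
    """Pseudo-first-order half-life (days) for gas-phase reaction with OH radicals.

    k_oh: second-order rate constant, cm^3 molecule^-1 s^-1
    oh_conc: assumed mean OH radical concentration, molecules cm^-3
    daylight_hours: hours per day during which that OH concentration applies
    """
    k_first_order_per_day = k_oh * oh_conc * daylight_hours * SECONDS_PER_HOUR
    return math.log(2) / k_first_order_per_day


def flag_atmospheric_persistence(k_oh, threshold_days=2.0):
    """True if the predicted half-life exceeds the Table 1 threshold."""
    return atmospheric_half_life_days(k_oh) > threshold_days


for k in (1e-11, 1e-12):
    t_half = atmospheric_half_life_days(k)
    print(f"kOH={k:.0e}: t1/2 = {t_half:.2f} d, flagged = {flag_atmospheric_persistence(k)}")
```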

Protocol: Application of QSPR for Prioritization

Protocol 1: High-Throughput In Silico Screening and Risk Flagging

Objective: To computationally screen a library of cosmetic ingredients and assign risk-based flags for laboratory testing.

Materials & Software:

- Input Data: A curated library of cosmetic ingredient structures (e.g., SMILES, MOL files).

- QSPR Software: PaDEL-Descriptor, CORAL, or proprietary QSPR platforms. KNIME or Python (RDKit, scikit-learn) for workflow automation.

- Models: Pre-validated QSPR models for properties in Table 1.

Procedure:

- Descriptor Calculation: For each compound in the library, calculate the molecular descriptors required by the pre-validated QSPR models (e.g., topological, electronic, geometric).

- Property Prediction: Apply the QSPR models to predict the environmental fate properties for each compound.

- Flagging Algorithm: Implement the following decision rules based on predicted values and regulatory thresholds:
  a. Flag for Persistence Testing if (Predicted %BOD < 60%) OR (Predicted Atmospheric Half-life > 2 days).
  b. Flag for Bioaccumulation/Toxicity Testing if (Predicted Log P > 4.5).
  c. Flag for Environmental Mobility Testing if (Predicted Log Koc < 2.5).

- Priority Scoring: Assign a composite priority score. Example: Priority Score = (100 − Predicted %BOD) + 10 × max(0, Predicted Log P − 4.5).

- Output: Generate a ranked list of ingredients, from highest to lowest priority score, with clear flags indicating the recommended type of laboratory testing.
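The flagging rules and the example priority score can be sketched in Python as follows. The Prediction container is a hypothetical structure. The %BOD and Log P inputs are the hypothetical values from Table 2 below, and the Log Koc and half-life inputs are invented placeholders. The thresholds are those of Table 1.

```python
from dataclasses import dataclass, field


@dataclass
class Prediction:
    """Hypothetical container for one ingredient's QSPR predictions."""
    name: str
    bod_pct: float            # predicted % biodegradation (BOD)
    log_p: float              # predicted octanol-water partition coefficient
    log_koc: float            # predicted soil adsorption coefficient
    air_half_life_d: float    # predicted atmospheric half-life, days
    flags: list = field(default_factory=list)
    score: float = 0.0


def flag_and_score(p: Prediction) -> Prediction:
    """Apply the Protocol 1 decision rules and the example priority score."""
    p.flags = []
    if p.bod_pct < 60 or p.air_half_life_d > 2:
        p.flags.append("Persistence")
    if p.log_p > 4.5:
        p.flags.append("Bioaccumulation/Toxicity")
    if p.log_koc < 2.5:
        p.flags.append("Mobility")
    # Priority Score = (100 - %BOD) + 10 * max(0, Log P - 4.5)
    p.score = round((100 - p.bod_pct) + 10 * max(0.0, p.log_p - 4.5), 1)
    return p


def rank(predictions):
    """Return predictions sorted from highest to lowest priority score."""
    return sorted((flag_and_score(p) for p in predictions), key=lambda p: p.score, reverse=True)


library = [
    Prediction("Ingredient A", 25, 5.2, 3.4, 0.8),
    Prediction("Ingredient B", 75, 2.1, 3.0, 0.5),
    Prediction("Ingredient C", 45, 3.8, 3.1, 1.0),
    Prediction("Ingredient D", 80, 4.8, 3.6, 0.7),
]
for p in rank(library):
    print(f"{p.name}: score={p.score}, flags={p.flags or ['none']}")
```

Because the score weights %BOD heavily, a compound flagged only for bioaccumulation (Ingredient D) can rank below an unflagged one (Ingredient B). This is why the recommended action should follow the flags as well as the score.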

Visualization of Workflow: QSPR-Based Prioritization Workflow

Protocol 2: Experimental Validation Protocol for High-Priority Compounds

Objective: To experimentally determine the biodegradability (via OECD 301D) of the top 10 highest-priority compounds identified in Protocol 1.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Relevance |
|---|---|
| OECD 301D Ready Biodegradability Test Kit | Standardized inoculum and mineral medium for closed bottle test, ensuring regulatory compliance. |
| Inoculum (Secondary Effluent) | Microbial consortium from a wastewater treatment plant, essential for simulating environmental degradation. The closed bottle test normally uses secondary effluent, a low-density inoculum that keeps blank oxygen uptake small. |
| Chemical Oxygen Demand (COD) Vials | For measuring the COD of the test compound, used as the reference oxygen demand when the theoretical oxygen demand (ThOD) cannot be calculated from the molecular formula. |
| Dissolved Oxygen (DO) Meter with Stirred Probes | For precise measurement of dissolved oxygen in the sealed bottles at each sampling time over 28 days, from which BOD is calculated. |
| Headspace Vials & GC-MS/FID | Optional complementary analyses: ultimate biodegradation via CO2 evolution, or primary biodegradation via parent compound disappearance. |
| Reference Compounds (Sodium Benzoate, Aniline) | Readily biodegradable reference substances used as procedure controls to confirm inoculum activity. When combined with the test compound in a toxicity control, they reveal inhibition of the inoculum. |

Procedure (Abridged OECD 301D):

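- Preparation: Prepare stock solutions of each high-priority test compound. Collect and condition the inoculum. For the closed bottle test, this is normally secondary effluent from a wastewater treatment plant receiving predominantly domestic sewage.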

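- Bottle Setup: For each compound, fill replicate BOD bottles with aerated mineral medium, inoculum, and the test compound. Use a low concentration (typically 2-5 mg/L) so that the total oxygen demand stays below the dissolved oxygen available in the sealed bottle. Prepare enough bottles to sacrifice replicates at each sampling time. Set up blanks (inoculum only), procedure controls (reference compound), and a toxicity control (test compound plus reference compound).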

- Incubation: Incubate bottles in the dark at 20°C ± 1°C for 28 days.

- Monitoring: Measure dissolved oxygen at least weekly in the replicate bottles withdrawn at each sampling time.

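- Calculation: Calculate the percentage biodegradation = [(DO depletion in test − DO depletion in blank) / (ThOD × test concentration)] × 100. Here DO depletion is in mg O2/L, ThOD is in mg O2 per mg substance, and the test concentration is in mg/L.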

- Model Refinement: Compare experimental results with QSPR predictions. Use data to retrain/refine the QSPR model, improving future prioritization accuracy.
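The ThOD denominator is usually calculated from the molecular formula. The sketch below uses the OECD 301 expression for ThOD when nitrogen is released as ammonia (no nitrification):

ThOD = 16[2C + ½(H − Cl − 3N) + 3S + 5/2 P + ½ Na − O] / MW (mg O2/mg)

It then converts the blank-corrected oxygen depletion to percent biodegradation. The oxygen readings in the example are invented. If nitrification occurs, the nitrate form of ThOD would be needed instead; that case is not covered here.

```python
# Average atomic masses (g/mol) for the elements in the OECD 301 ThOD expression
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "Cl": 35.45, "N": 14.007,
               "Na": 22.990, "O": 15.999, "P": 30.974, "S": 32.06}


def thod_nh4(counts):
    """ThOD in mg O2 per mg substance, assuming nitrogen is released as NH3.

    counts: element symbol -> atoms per molecule, e.g. {"C": 7, "H": 5, "Na": 1, "O": 2}
    """
    n = counts.get
    mw = sum(ATOMIC_MASS[el] * k for el, k in counts.items())
    # oxygen atoms required to oxidise one molecule
    o_atoms = (2 * n("C", 0) + 0.5 * (n("H", 0) - n("Cl", 0) - 3 * n("N", 0))
               + 3 * n("S", 0) + 2.5 * n("P", 0) + 0.5 * n("Na", 0) - n("O", 0))
    return 16 * o_atoms / mw


def percent_biodegradation(do_depletion_test, do_depletion_blank, conc_mg_per_l, thod):
    """Blank-corrected oxygen uptake as a percentage of ThOD.

    do_depletion_*: dissolved-oxygen depletion, mg O2/L
    conc_mg_per_l: test-substance concentration, mg/L
    thod: mg O2 per mg substance
    """
    bod = (do_depletion_test - do_depletion_blank) / conc_mg_per_l  # mg O2 per mg substance
    return 100 * bod / thod


thod = thod_nh4({"C": 7, "H": 5, "Na": 1, "O": 2})   # sodium benzoate reference
print(f"ThOD(sodium benzoate) = {thod:.2f} mg O2/mg")
print(f"Day-28 degradation: {percent_biodegradation(3.0, 0.5, 2.0, thod):.1f} %")
```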

Visualization of Validation Feedback Loop: Prioritization and Model Refinement Cycle

Data Integration and Decision Framework

The final output integrates predictions and flags into a decision matrix.

Table 2: Example Prioritization Output for a Hypothetical Cosmetic Ingredient Library

| Ingredient | Pred. %BOD | Flag: Persist. | Pred. Log P | Flag: Bioacc. | Priority Score | Recommended Action |
|---|---|---|---|---|---|---|
| Ingredient A | 25 | HIGH | 5.2 | HIGH | 82 | IMMEDIATE TESTING (P&B) |
| Ingredient B | 75 | Low | 2.1 | Low | 25 | Low Priority (Archive) |
| Ingredient C | 45 | HIGH | 3.8 | Low | 55 | Schedule Testing (Persistence) |
| Ingredient D | 80 | Low | 4.8 | MEDIUM | 23 | Consider Testing (Bioaccumulation) |

Conclusion: This systematic, QSPR-driven approach provides a rational, data-informed strategy for prioritizing cosmetic ingredients for environmental fate testing. It directly supports the core thesis by bridging in silico predictions with targeted experimental validation. The result is an iterative cycle that enhances model robustness and focuses laboratory resources on compounds of greatest potential concern.

Building Reliable Models: Step-by-Step QSPR Development for Fate Prediction

Data Curation and Preprocessing Strategies for Heterogeneous Cosmetic Datasets

Within a broader thesis on developing Quantitative Structure-Property Relationship (QSPR) models for predicting the environmental fate of cosmetic ingredients, robust data curation and preprocessing form the foundational pillar. The predictive accuracy and regulatory applicability of such models depend entirely on the quality, consistency, and relevance of the underlying data. Cosmetic datasets are inherently heterogeneous. They combine data from regulatory filings (e.g., the EU cosmetic ingredient database CosIng), experimental studies (biodegradation, ecotoxicity), supplier specifications, and chemical databases. This document provides detailed application notes and protocols for transforming these disparate data sources into a unified, model-ready dataset suitable for computational environmental fate prediction.

Application Notes: Key Challenges & Strategic Framework

The primary challenge stems from the multifaceted nature of data relevant to cosmetic ingredient fate.

Table 1: Sources and Types of Heterogeneity in Cosmetic Ingredient Data

| Data Source Type | Example Sources | Nature of Heterogeneity | Key Data Attributes |
|---|---|---|---|
| Regulatory/Lists | CosIng, FDA Voluntary Cosmetic Registration Program (VCRP), INCI | Nomenclature, permissible function, concentration ranges | INCI Name, CAS RN (often missing), function, restrictions |
| Physicochemical Properties | EPA CompTox Dashboard, ECHA, PubChem, experimental literature | Units, measurement conditions, variability, missing values | log P, water solubility, vapor pressure, melting point |
| Environmental Fate Data | REACH dossiers, EFSA opinions, academic literature | Test guidelines, result formats (%, half-lives), organism/systems | Biodegradation (OECD 301), hydrolysis, photolysis |
| Chemical Identifiers | CAS Registry, PubChem, ChemSpider | Multiple CAS RNs per ingredient, differing SMILES representations | CAS RN, SMILES, InChIKey, molecular formula |
| Commercial/Supplier | Manufacturer SDS, technical data sheets | Proprietary mixtures, non-standardized reporting, trade names | Purity, isomer distribution, physical form |

Strategic Framework for Curation

The overarching strategy involves a sequential pipeline: Ingredient Identification → Data Collection → Harmonization → Quality Control → Feature Engineering.

Diagram Title: Data Curation Workflow for Cosmetic Ingredients

Experimental Protocols

Protocol: Chemical Identifier Resolution and Structure Standardization

Objective: To obtain a unique, verified, and standardized molecular representation for each cosmetic ingredient from its common name.

Materials & Reagents:

- Input list (INCI names, common names, possible CAS RN).

- Access to programmatic APIs: PubChem PUG-REST, NIH CACTUS, OPSIN, or commercial tools (ChemAxon, ACD/Labs).

- Chemical structure standardization software (e.g., RDKit, OpenBabel, standardizer modules).

Procedure:

- Query Construction: For each ingredient, generate search queries using the INCI name and any available CAS RN.

- Automated Identifier Fetching: Use a script (Python/R) to query PubChem via PUG-REST. Prioritize results by "C&P Listed" or "Standardized" flags. Extract Canonical SMILES, InChIKey, and molecular weight.

- Ambiguity Resolution: For ingredients with multiple matches (e.g., "Fragrance" mixtures, botanical extracts), flag for manual curation. For isomers, default to the most common or commercially prevalent form, documenting the decision.

- Structure Standardization: Process retrieved SMILES using RDKit's Chem.MolFromSmiles(), followed by standardization:

- Remove solvents and salts.

- Generate canonical tautomer.

- Strip stereochemistry if not relevant to fate (document when kept).

- Aromatize the molecule according to standard rules.

- Verification: Compare molecular formula and weight from the standardized SMILES to other database entries. Flag major discrepancies (>5% difference in MW) for manual review.

- Output: A table with columns: INCI_Name, Resolved_CAS, Standardized_SMILES, InChIKey, Molecular_Formula, Molecular_Weight, Curation_Flag.
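The verification step can be partly automated. Recompute the molecular weight from the molecular formula and compare it with the value reported by another database. This sketch has several deliberate limitations:

- It parses only simple Hill-style formulas (no brackets, charges, or hydrates).
- Its atomic-mass table is deliberately incomplete.
- The function names and flag labels are hypothetical.

It applies the 5% discrepancy threshold.

```python
import re

# Average atomic masses for elements common in cosmetic ingredients (extend as needed)
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999, "S": 32.06,
               "P": 30.974, "Na": 22.990, "K": 39.098, "Cl": 35.45, "Si": 28.085}

TOKEN = re.compile(r"([A-Z][a-z]?)(\d*)")


def formula_mw(formula):
    """Molecular weight of a simple formula such as 'C7H5NaO2'."""
    parsed = TOKEN.findall(formula)
    if "".join(el + n for el, n in parsed) != formula:
        raise ValueError(f"unsupported formula syntax: {formula!r}")
    return sum(ATOMIC_MASS[el] * (int(n) if n else 1) for el, n in parsed)


def curation_flag(formula, reference_mw, tolerance=0.05):
    """Return 'OK' or 'MANUAL_REVIEW' depending on the relative MW difference."""
    mw = formula_mw(formula)
    rel_diff = abs(mw - reference_mw) / reference_mw
    return "OK" if rel_diff <= tolerance else "MANUAL_REVIEW"


print(curation_flag("C7H5NaO2", 144.10))   # sodium benzoate vs. a matching reference MW
print(curation_flag("C7H6O2", 144.10))     # benzoic acid (salt stripped) vs. the salt's MW
```

The second case shows a common source of discrepancy. Salt stripping during standardization changes the molecular weight relative to database entries that describe the salt form, so such entries should be reviewed rather than silently accepted.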

Protocol: Harmonization of Experimental Fate Data

Objective: To convert disparate experimental results for biodegradation into a uniform, continuous variable suitable for QSPR modeling.

Materials & Reagents:

- Compiled literature and database extracts containing biodegradation data.

- Reference list of OECD/ISO test guideline equivalents.

Procedure:

- Data Extraction: Populate a table with columns: Ingredient_InChIKey, Test_Guideline, Result_Type, Result_Value, Duration, Endpoint.

- Guideline Categorization: Map all test guidelines to categories:

- Ready_Biodegradability: OECD 301 A-F, ISO 7827, etc.

- Inherent_Biodegradability: OECD 302 A-C.

- Simulation_Test: OECD 303, 314.

- Result Normalization:

- Convert all results to a "Degradation Half-Life (DT50)" in days where possible.

- For "% Degradation" D after time t: Assume first-order kinetics. Calculate the rate constant \( k = -\ln(1 - D/100)/t \), then \( DT_{50} = \ln 2 / k \).

- For "Pass/Fail" (e.g., >60% in 28 days), assign a binary flag and impute a representative DT50 (e.g., 14 days for pass, 100+ days for fail) with high uncertainty flag.

- Weighting: Assign a data quality weight (1-3) based on test guideline reliability and reporting completeness.

- Output: A unified table with columns: Ingredient_InChIKey, DT50_days, Test_Category, Data_Quality_Weight, Data_Source.

Table 2: Biodegradation Data Harmonization Rules

| Original Result | Test Guideline | Normalization Rule | Output DT50 (Example) |
|---|---|---|---|
| 85% degradation in 28 days | OECD 301 B | \( k = -\ln(1-0.85)/28 \), \( DT_{50} = \ln 2/k \) | 10.2 days |
| "Readily biodegradable" | OECD 301 F | Assign binary = 1; impute DT50 = 14 ± 5 days | 14 days [imputed] |
| 50% removal in 30 days (simulation) | OECD 303 A | Calculate as first-order; document as simulation DT50 | 30.0 days |
| No degradation in 28 days | OECD 301 D | Set binary = 0; impute DT50 = 100 days (lower bound) | >100 days |
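The normalization rules in Table 2 can be expressed as a small function. The sketch makes these assumptions:

- Percentage results follow first-order kinetics.
- A pass is imputed as 14 days.
- A fail is imputed as a 100-day lower bound.
- The result-type labels are hypothetical.

```python
import math


def dt50_from_percent(percent_degraded, days):
    """First-order DT50 (days) from % degradation observed after `days`."""
    if not 0 < percent_degraded < 100:
        raise ValueError("first-order conversion needs 0 < % degraded < 100")
    k = -math.log(1 - percent_degraded / 100) / days  # rate constant, 1/day
    return math.log(2) / k


def harmonize(result_type, value=None, days=None):
    """Return (DT50_days, imputed_flag) following the harmonization rules."""
    if result_type == "percent":
        return dt50_from_percent(value, days), False
    if result_type == "pass":
        return 14.0, True      # imputed, high uncertainty
    if result_type == "fail":
        return 100.0, True     # lower bound, reported as >100 days
    raise ValueError(f"unknown result type: {result_type}")


print(f"{harmonize('percent', 85, 28)[0]:.1f} days")   # 85 % in 28 d
print(f"{harmonize('percent', 50, 30)[0]:.1f} days")   # 50 % in 30 d
```

The guard in dt50_from_percent explains the need for imputation. A result of 0% (or 100%) has no finite first-order DT50, so such results fall back to the pass/fail rules.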

Protocol: Handling Missing Physicochemical Properties

Objective: To impute missing critical properties (log P, Water Solubility) using predictive tools, with uncertainty estimation.

Procedure:

- Identify Critical Gaps: Determine missing values for properties deemed essential for the environmental fate QSPR (e.g., log P, water solubility, vapor pressure).

- Multi-Model Prediction: For each missing property, generate predictions using at least two independent methods:

- log P: Use XLogP3, ALogPS, and RDKit's Crippen contribution method.

- Water Solubility: Use OPERA (QSAR) or general solubility equation (GSE) based on log P and melting point.

- Consensus & Uncertainty: Calculate the mean and standard deviation of the predictions. Flag imputed values where the coefficient of variation (CV) > 30%.

- Documentation: Record the imputed value, the methods used, and the standard deviation as a measure of uncertainty.
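The consensus-and-uncertainty rule can be sketched alongside the general solubility equation:

log S = 0.5 − 0.01(MP − 25) − log Kow

Here S is in mol/L and MP is in °C, and the melting-point term is set to zero for liquids. In the example, the three log P values are placeholders standing in for XLogP3, ALogPS, and Crippen outputs. A CV computed on log-scale values becomes unstable when the mean is close to zero, so the sketch also reports the standard deviation.

```python
import statistics


def consensus(predictions, cv_limit=0.30):
    """Mean, sample SD and CV of independent predictions, with a disagreement flag."""
    mean = statistics.mean(predictions)
    sd = statistics.stdev(predictions)
    cv = sd / abs(mean) if mean != 0 else float("inf")
    return {"mean": mean, "sd": sd, "cv": cv, "flag": cv > cv_limit}


def gse_log_solubility(log_kow, melting_point_c):
    """General solubility equation: log10 S with S in mol/L."""
    mp_term = 0.01 * max(0.0, melting_point_c - 25.0)  # zero for liquids at 25 °C
    return 0.5 - mp_term - log_kow


# Placeholder log P predictions from three independent methods
logp = consensus([3.1, 3.3, 2.9])
print(logp)
print(f"GSE log S = {gse_log_solubility(logp['mean'], 125.0):.2f}")
```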

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Cosmetic Data Curation

| Tool/Resource | Type | Primary Function in Curation | Access |
|---|---|---|---|
| RDKit | Open-source Cheminformatics Library | Chemical structure representation, standardization, descriptor calculation, and substructure searching. | https://www.rdkit.org |
| PubChem PUG-REST API | Programmatic Database Interface | Batch retrieval of chemical structures, identifiers, and properties via INCI name or CAS RN. | https://pubchem.ncbi.nlm.nih.gov |
| EPA CompTox Dashboard | Integrated Data Warehouse | Authoritative source for physicochemical, fate, and toxicity data for chemicals, including many cosmetics-relevant substances. | https://comptox.epa.gov/dashboard |
| OECD QSAR Toolbox | Software Application | Profiling chemicals for relevant properties and metabolic pathways, aiding in data gap filling and read-across. | https://www.oecd.org/chemicalsafety/risk-assessment/oecd-qsar-toolbox.htm |
| CDK (Chemistry Development Kit) | Open-source Library | Complementary cheminformatics functions, especially useful for molecular descriptor calculation and IO handling. | https://cdk.github.io |
| OPSIN | IUPAC Name Interpreter | Converts systematic chemical names to SMILES, useful for ingredients listed with IUPAC names in older literature. | https://opsin.ch.cam.ac.uk |

Diagram Title: Core Curation Goals and Supporting Tools

Within the broader thesis on Quantitative Structure-Property Relationship (QSPR) models for predicting the environmental fate of cosmetic ingredients, descriptor management is a pivotal step. The predictive performance, robustness, and interpretability of a QSPR model are directly contingent on the calculated molecular descriptors and the subsequent selection of the most relevant subset. Cosmetic ingredients present unique challenges, ranging from diverse chemical classes (e.g., surfactants, emollients, UV filters, preservatives) to specific environmental fate endpoints like biodegradation, bioaccumulation factor (BAF), and aquatic toxicity. This document provides application notes and detailed protocols for navigating the descriptor landscape, aiming to balance complex, high-dimensional chemical information with the need for interpretable, regulatory-acceptable models.

Descriptors are numerical representations of molecular structure. The following table categorizes major descriptor classes relevant to environmental fate prediction, along with common software/toolkits for their calculation.

Table 1: Key Descriptor Classes and Calculation Tools for Environmental Fate QSPR

| Descriptor Class | Description | Example Descriptors | Common Calculation Tools |
|---|---|---|---|
| 0D & 1D (Constitutional) | Simple counts and properties based on molecular formula. | Molecular weight, atom counts, bond counts, number of rings. | RDKit, PaDEL-Descriptor, Dragon. |
| 2D (Topological) | Derived from molecular graph connectivity. | Molecular connectivity indices (Chi), Wiener index, Zagreb index, Kier & Hall descriptors. | RDKit, Dragon, ChemDes. |
| 3D (Geometric) | Require 3D optimized molecular geometry. | Principal moments of inertia, radius of gyration, 3D-Wiener index. | Open Babel, RDKit (with conformer generation), Dragon. |
| Quantum Chemical | Derived from quantum mechanical calculations. | HOMO/LUMO energies, dipole moment, polarizability, partial atomic charges. | Gaussian, GAMESS, ORCA, MOPAC. |
| Surface & Volume | Describe molecular shape and interaction fields. | Molecular surface area, molar volume, polar surface area. | RDKit, Dragon, VolSurf+. |
| Hydrophobic | Characterize partitioning behavior. | LogP (octanol-water), LogD, molar refractivity. | RDKit, ChemAxon, ACD/Labs. |
| Environmental Fate Specific | Designed for fate endpoints. | Biodegradation probability fragments, BCF/BAF baselines. | EPI Suite (BIOWIN, BCFBAF), VEGA. |

Workflow Diagram: Descriptor Calculation Workflow

Descriptor Selection Protocols

The raw descriptor matrix is often high-dimensional and noisy. Selection is crucial to avoid overfitting and to enhance model interpretability.

Protocol 3.1: Pre-Selection and Data Cleaning

Objective: Remove non-informative and problematic descriptors.

- Constant/Near-Constant Values: Remove descriptors with standard deviation < 1e-5.

- Missing Values: Remove descriptors with >20% missing values. For others, impute using column median (for robust, skewed data) or KNN imputation.

- Highly Correlated Descriptors: Calculate pairwise correlation (Pearson's r). For |r| > 0.95, remove one of the pair, keeping the simpler or more interpretable descriptor.
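A compact, dependency-free sketch of Protocol 3.1 follows. It makes these simplifications:

- Descriptors are held as a dict of name → list of values, with None marking a missing value.
- Median imputation is used rather than KNN.
- When two descriptors exceed |r| > 0.95, the one appearing later in the input is dropped. This is a simple stand-in for the interpretability-based choice described above.

The descriptor names and values are illustrative.

```python
import math
import statistics


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length lists."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def preselect(descriptors, sd_min=1e-5, max_missing=0.20, r_max=0.95):
    """Drop sparse, constant and highly correlated descriptors; impute the rest."""
    cleaned = {}
    for name, values in descriptors.items():
        present = [v for v in values if v is not None]
        missing_frac = (len(values) - len(present)) / len(values)
        if len(present) < 2 or missing_frac > max_missing:
            continue                                      # too many missing values
        med = statistics.median(present)
        filled = [med if v is None else v for v in values]
        if statistics.pstdev(filled) < sd_min:
            continue                                      # constant or near-constant
        cleaned[name] = filled
    kept = {}
    for name, values in cleaned.items():                  # greedy correlation filter
        if all(abs(pearson(values, other)) <= r_max for other in kept.values()):
            kept[name] = values
    return kept


X = {
    "MW":      [150.0, 210.0, 180.0, 300.0, 250.0],
    "HeavyAt": [10.0, 15.0, 13.0, 21.0, 18.0],           # nearly collinear with MW
    "nHalo":   [0.0, 0.0, 0.0, 0.0, 0.0],                # constant
    "TPSA":    [20.0, None, 40.0, 35.0, 60.0],           # 20 % missing, imputed
    "Sparse":  [1.0, None, None, 3.0, 2.0],              # 40 % missing, dropped
}
print(sorted(preselect(X)))
```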

Protocol 3.2: Filter Methods (Univariate)

Objective: Rank descriptors based on their individual correlation with the target environmental fate endpoint (e.g., log(BAF)). Method: For a dataset of N compounds:

- Calculate a statistical measure between each descriptor (X_i) and the target (Y).

- For continuous targets: Use Pearson's r, mutual information, or the F-statistic from univariate linear regression.

- For categorical targets (e.g., ready/not ready biodegradable): Use ANOVA F-statistic or mutual information.

- Rank all descriptors by the absolute value of the chosen metric.

- Select the top K descriptors (e.g., top 100) for further analysis.
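Ranking by absolute Pearson correlation and keeping the top K can be sketched as below. Only Pearson's r is shown. Mutual information or F-statistics would replace the scoring line without changing the ranking step. The target and descriptor values are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def rank_descriptors(descriptors, target, top_k=100):
    """Return the top_k descriptor names ranked by |Pearson r| with the target."""
    scores = {name: abs(pearson(values, target)) for name, values in descriptors.items()}
    return sorted(scores, key=scores.get, reverse=True)[:top_k]


log_baf = [1.1, 2.0, 2.8, 3.9, 4.4]                 # hypothetical target values
descriptors = {
    "logP": [1.0, 2.2, 2.9, 4.1, 4.6],
    "TPSA": [90.0, 70.0, 75.0, 40.0, 35.0],
    "nRing": [1.0, 0.0, 2.0, 1.0, 1.0],
}
print(rank_descriptors(descriptors, log_baf, top_k=2))
```

Because the absolute value is ranked, a strongly negative correlate such as TPSA is retained alongside the positive one.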

Protocol 3.3: Wrapper Methods (Multivariate)

Objective: Find a subset of descriptors that work well together for a specific machine learning algorithm. Method (Sequential Feature Selection - SFS):

- Choose Algorithm: Select a base model (e.g., Random Forest, SVM).

- Define Objective: Define evaluation metric (e.g., 5-fold cross-validated R² or RMSE).

- Forward Selection:

- Start with an empty set.

- Sequentially add the descriptor that most improves the model's cross-validated performance.

- Stop when adding new descriptors no longer yields significant improvement (e.g., < 1% increase in R² over 5 steps).

- Backward Elimination: Start with the full set and iteratively remove the least important descriptor.
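The forward-selection loop can be written independently of the learning algorithm by passing in a scoring callback. Here cv_score(subset) is a hypothetical function that should return the 5-fold cross-validated R² of the chosen base model on that subset. The toy additive score exists only so the loop runs. The stopping rule is one reading of the criterion above: stop when the last five additions together improve R² by less than 0.01. The best-scoring prefix is then returned.

```python
def forward_select(candidates, cv_score, min_gain=0.01, patience=5):
    """Greedy forward selection; returns (best_subset, best_score)."""
    selected, history = [], []            # history[i] = score after i + 1 additions
    remaining = list(candidates)
    while remaining:
        best = max(remaining, key=lambda d: cv_score(selected + [d]))
        selected.append(best)
        remaining.remove(best)
        history.append(cv_score(selected))
        if len(history) > patience and history[-1] - history[-1 - patience] < min_gain:
            break                          # too little gain over the last `patience` steps
    if not history:
        return [], None
    best_len = max(range(len(history)), key=history.__getitem__) + 1
    return selected[:best_len], history[best_len - 1]


# Toy stand-in for a cross-validated score: additive relevance minus a size penalty
WEIGHTS = {"logP": 0.40, "nEster": 0.20, "TPSA": 0.10, "MW": 0.02, "nRing": 0.005, "Chi1": 0.001}


def toy_cv_score(subset):
    return sum(WEIGHTS[d] for d in subset) - 0.01 * len(subset)


print(forward_select(list(WEIGHTS), toy_cv_score))
```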

Protocol 3.4: Embedded Methods

Objective: Leverage the internal feature importance metrics of certain algorithms. Method (Random Forest-based Selection):

- Train a Random Forest model on the pre-filtered descriptor set.

- Extract descriptor importance scores (e.g., Gini importance or permutation importance).

- Rank descriptors by importance.

- Perform recursive feature elimination (RFE): Iteratively train models and remove the least important descriptors, evaluating performance at each step to find the optimal subset.
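Recursive feature elimination follows the same callback pattern. In this sketch, fit_and_score(subset) is a hypothetical function. It should retrain the Random Forest on the subset and return two things: the cross-validated score and a dict of per-descriptor importances (Gini or permutation). The toy version below returns fixed importances only to make the loop runnable. A real model would recompute importances after each refit.

```python
def recursive_elimination(descriptors, fit_and_score, min_features=1, step=1):
    """Drop the least important descriptors repeatedly; return (best_subset, best_score)."""
    current = list(descriptors)
    best_subset, best_score = None, float("-inf")
    while len(current) >= min_features:
        score, importance = fit_and_score(current)
        if score > best_score:
            best_subset, best_score = list(current), score
        if len(current) == min_features:
            break
        n_drop = min(step, len(current) - min_features)
        least = sorted(current, key=importance.get)[:n_drop]   # lowest importances
        current = [d for d in current if d not in least]
    return best_subset, best_score


# Toy stand-in: fixed importances and an additive score with a size penalty
WEIGHTS = {"logP": 0.40, "nEster": 0.20, "TPSA": 0.10, "MW": 0.02, "nRing": 0.005, "Chi1": 0.001}


def toy_fit_and_score(subset):
    score = sum(WEIGHTS[d] for d in subset) - 0.01 * len(subset)
    return score, {d: WEIGHTS[d] for d in subset}


print(recursive_elimination(list(WEIGHTS), toy_fit_and_score))
```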

Table 2: Comparison of Descriptor Selection Methods

| Method Type | Example | Advantages | Disadvantages | Suitability for Environmental QSPR |
|---|---|---|---|---|
| Filter | Correlation, Mutual Info | Fast, scalable, model-agnostic. | Ignores feature interactions, may select redundant features. | Good for initial screening of 1000s of descriptors. |
| Wrapper | Sequential Feature Selection | Considers feature interactions, optimizes for specific model. | Computationally expensive, high risk of overfitting. | Useful for final tuning of a model with <200 descriptors. |
| Embedded | LASSO, Random Forest Importance | Model-specific, balances efficiency and performance. | Tied to the model's biases. | Highly recommended; LASSO for linear models, RF for non-linear. |

Diagram: Descriptor Selection Strategy Logic

Application: QSPR for Biodegradation of Emollients

Case Study: Predicting ready biodegradability (OECD 301 series) for a set of 150 ester-based emollients.

Experimental Protocol:

- Descriptor Calculation: Generate 2D (RDKit) and logP descriptors for all compounds. Calculate 3 relevant BIOWIN fragments from EPI Suite.

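- Pre-Selection: Start with 500 descriptors. Remove constant descriptors, impute missing values (about 5% of entries) with the column median, and reduce the set to 180 descriptors with a correlation filter that retains only descriptors with pairwise |r| < 0.9.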

- Selection: Use Random Forest (1000 trees) to compute permutation importance. Perform RFE, evaluating model accuracy with 10-fold cross-validation at each step.

- Result: The optimal model uses 8 descriptors. Interpretability analysis of four of them:

- logP: Expected negative correlation with biodegradability (high logP hinders bioavailability).

- Number of ester bonds (-COO-): Positive contribution (ester hydrolysis is a key degradation pathway).

- Topological polar surface area (TPSA): Negative contribution (lower TPSA often correlates with higher membrane permeability for initial microbial uptake).

- BIOWIN5 (linear model): Positive contribution (fragment-based estimate).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Descriptor Workflows

| Tool/Software | Type | Primary Function in Descriptor Pipeline | Key Consideration for Environmental Fate |
|---|---|---|---|
| RDKit | Open-source Cheminformatics Library | Core 2D descriptor calculation, SMILES parsing, molecular normalization. | Essential for batch processing of diverse cosmetic ingredient structures. |
| PaDEL-Descriptor | Standalone Software | Calculates 1,875 1D, 2D, and 3D descriptors plus 12 types of fingerprints. User-friendly. | Good for rapid generation of a comprehensive 2D set. |
| EPI Suite | Suite of Estimation Programs | Provides specifically designed fragment-based descriptors (e.g., BIOWIN, BCFBAF). | Crucial. Industry-standard for environmental fate; descriptors are inherently interpretable. |
| OpenBabel / MOPAC | Open-source Software | 3D conversion, conformation generation, and semi-empirical quantum calculations (for 3D/QC descriptors). | Required for descriptors capturing molecular shape and electronic properties. |
| scikit-learn | Python Library | Implementation of filter, wrapper, and embedded selection methods (VarianceThreshold, RFE, SelectFromModel). | The standard platform for building and evaluating the selection pipeline. |
| KNIME / Orange | Visual Workflow Tools | Visual assembly of descriptor calculation, selection, and modeling nodes. | Excellent for reproducible, documented workflows and collaborative projects. |

This document provides detailed application notes and protocols for the selection and implementation of four key quantitative structure-property relationship (QSPR) modeling algorithms: Multiple Linear Regression (MLR), Partial Least Squares (PLS), Random Forest (RF), and Neural Networks (NN). The context is a thesis focused on predicting the environmental fate (e.g., biodegradation, bioaccumulation, aquatic toxicity) of cosmetic ingredients, such as UV filters, preservatives, and emulsifiers. The goal is to equip researchers with practical guidance for developing robust, interpretable, and predictive models for regulatory and green chemistry applications.

Table 1: Core Characteristics of Selected QSPR Algorithms

| Algorithm | Typical Use Case in Fate Prediction | Key Strengths | Key Limitations | Interpretability |
|---|---|---|---|---|
| Multiple Linear Regression (MLR) | Baseline model; establishing simple, interpretable relationships with few, non-collinear descriptors. | Simple, fast, highly interpretable, provides explicit equation. | Assumes linearity; prone to overfitting with many descriptors; requires careful descriptor selection. | High |
| Partial Least Squares (PLS) | Modeling with many collinear descriptors (e.g., from molecular fingerprints). | Handles multicollinearity well; reduces dimensionality; good for small sample sizes. | Assumes linear latent structure; model interpretation can be less direct than MLR. | Medium-High |
| Random Forest (RF) | Non-linear modeling with complex descriptor interactions; robust performance. | Handles non-linearity and interactions; robust to outliers and overfitting; provides importance metrics. | Can be computationally heavy with many trees; less intuitive than linear models; may extrapolate poorly. | Medium (via feature importance) |
| Neural Networks (NN) | Capturing highly complex, non-linear relationships with large, high-quality datasets. | High predictive power for complex endpoints; flexible architecture. | "Black-box" nature; requires large datasets; extensive hyperparameter tuning; computationally intensive. | Low |

Table 2: Typical Performance Metrics on Cosmetic Ingredient Fate Datasets*

| Algorithm | Avg. R² (Test Set) | Avg. RMSE (Test Set) | Typical Data Size Required | Computational Cost |
|---|---|---|---|---|
| MLR | 0.60 - 0.75 | Varies by endpoint | > 20 compounds per descriptor | Low |
| PLS | 0.65 - 0.80 | Varies by endpoint | > 15 compounds per latent variable | Low-Moderate |
| RF | 0.75 - 0.85 | Varies by endpoint | > 50 compounds | Moderate |
| NN | 0.80 - 0.90 | Varies by endpoint | > 200 compounds | High |

*Metrics are illustrative ranges based on literature for endpoints like logKow, Biodegradation, and LC50. Actual performance depends heavily on data quality and descriptor selection.

Experimental Protocols

Protocol 3.1: Generalized QSPR Workflow for Cosmetic Ingredient Fate Prediction

Objective: To develop a validated QSPR model for predicting an environmental fate parameter (e.g., logBCF) of cosmetic ingredients. Materials: See "The Scientist's Toolkit" (Section 5). Procedure:

- Data Curation: Compile a dataset of cosmetic ingredients with experimentally measured fate property values from reliable sources (e.g., EPA's CompTox, ECHA). Apply stringent quality control.

- Descriptor Calculation: For each compound, compute molecular descriptors (e.g., using PaDEL-Descriptor, RDKit) and/or fingerprints.

- Data Splitting: Split data into training (≈70-80%), validation (≈10-15%, for NN/RF tuning), and external test (≈10-15%) sets using a structure-based design, such as Kennard-Stone selection or cluster-based sampling, to ensure representativeness.

- Descriptor Preprocessing & Selection: a. Remove constant/near-constant descriptors. b. Scale descriptors (standardization is recommended for PLS and NN; tree-based RF is insensitive to feature scaling). c. For MLR: Apply feature selection (e.g., Stepwise, Genetic Algorithm) to reduce collinearity and avoid overfitting.

- Model Training & Internal Validation: a. MLR: Fit model using ordinary least squares on training set. Validate via Leave-One-Out (LOO) or Leave-Many-Out (LMO) cross-validation. b. PLS: Determine optimal number of latent components using cross-validation on the training set to minimize error. c. RF: Optimize hyperparameters (number of trees, mtry) using grid/random search on the validation set. d. NN: Design architecture (layers, nodes). Optimize hyperparameters (learning rate, dropout) using validation set. Use early stopping to prevent overfitting.

- Model Validation: Apply the finalized model to the held-out external test set. Calculate OECD-principle compliant validation metrics (Q²ext, R², RMSE, MAE).

- Applicability Domain (AD) Definition: Define the model's AD using, for example, leverage (Williams plot) or distance-based methods for all models.
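Kennard-Stone selection, named in the data-splitting step, works in two stages. It first picks the two most distant compounds. It then repeatedly adds the compound whose distance to its nearest already-selected compound is largest, so the training set spans descriptor space. Below is a minimal Euclidean-distance sketch on toy vectors. In practice, descriptors would be standardized first, and the unselected compounds would form the validation/test pool.

```python
import math
from itertools import combinations


def kennard_stone(X, n_select):
    """Indices of n_select rows of X chosen by Kennard-Stone (max-min distance) selection."""
    if not 2 <= n_select <= len(X):
        raise ValueError("n_select must be between 2 and len(X)")

    def dist(i, j):
        return math.dist(X[i], X[j])

    # Start with the two mutually most distant compounds
    first, second = max(combinations(range(len(X)), 2), key=lambda pair: dist(*pair))
    selected = [first, second]
    remaining = [i for i in range(len(X)) if i not in selected]
    # Repeatedly add the compound farthest from its nearest already-selected neighbour
    while len(selected) < n_select:
        nxt = max(remaining, key=lambda i: min(dist(i, s) for s in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected


# Toy descriptor vectors (two descriptors per compound)
X = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (10.0, 0.0), (5.0, 0.0)]
train_idx = kennard_stone(X, 4)
test_idx = [i for i in range(len(X)) if i not in train_idx]
print(train_idx, test_idx)
```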

Protocol 3.2: Specific Protocol for Random Forest Model Development

Objective: To train a Random Forest model for predicting ready biodegradability (binary classification) of cosmetic preservatives. Procedure:

- Follow Steps 1-3 from Protocol 3.1.

- Descriptor Processing: Calculate a diverse set of 2D descriptors. Perform min-max scaling. Use a variance threshold (e.g., 0.01) to remove low-variance features.

- Hyperparameter Optimization: Using the training set and 5-fold cross-validation, perform a random search over:

- n_estimators: [100, 500, 1000]

- max_depth: [5, 10, 20, None]

- min_samples_split: [2, 5, 10]

- max_features: ['sqrt', 'log2']

- class_weight: ['balanced', None]

- Final Model Training: Train the RF model on the entire training set using the optimal hyperparameters identified.

- Validation & Analysis: Predict on the external test set. Generate confusion matrix, accuracy, sensitivity, specificity, and ROC-AUC. Analyze feature importance (Gini importance or permutation importance) to identify key structural drivers of biodegradability.
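The classification statistics listed above can be computed directly from predicted labels and probabilities. The sketch assumes that label 1 means "readily biodegradable" and that the decision threshold is 0.5. It derives accuracy, sensitivity, and specificity from the confusion matrix. It computes ROC-AUC with the rank-based (Mann-Whitney) formulation, counting ties as one half. The labels and probabilities are toy values.

```python
def confusion(y_true, y_pred):
    """Return (tp, tn, fp, fn) for binary labels 0/1."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    return tp, tn, fp, fn


def classification_report(y_true, y_prob, threshold=0.5):
    """Confusion matrix, accuracy, sensitivity, specificity and ROC-AUC."""
    y_pred = [1 if p >= threshold else 0 for p in y_prob]
    tp, tn, fp, fn = confusion(y_true, y_pred)
    pos = [p for t, p in zip(y_true, y_prob) if t == 1]
    neg = [p for t, p in zip(y_true, y_prob) if t == 0]
    # AUC = probability that a random positive is scored above a random negative
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in pos for b in neg)
    return {
        "confusion": {"TP": tp, "TN": tn, "FP": fp, "FN": fn},
        "accuracy": (tp + tn) / len(y_true),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "roc_auc": wins / (len(pos) * len(neg)),
    }


print(classification_report([1, 1, 1, 0, 0, 0], [0.9, 0.7, 0.4, 0.6, 0.2, 0.1]))
```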

Visualizations

Diagram 1: QSPR Model Development & Validation Workflow

Diagram 2: Conceptual Decision Flow for Algorithm Selection

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials for QSPR Modeling

| Item / Software | Category | Primary Function in QSPR Workflow | Example/Provider |
|---|---|---|---|
| PaDEL-Descriptor | Software | Calculates a comprehensive set of 1D, 2D, and 3D molecular descriptors and fingerprints directly from chemical structures. | http://www.yapcwsoft.com/dd/padeldescriptor/ |
| RDKit | Software/Cheminformatics Library | Open-source toolkit for cheminformatics, used for descriptor calculation, molecule manipulation, and fingerprint generation within Python. | https://www.rdkit.org/ |
| KNIME Analytics Platform | Software/Workflow | Graphical platform for creating data science workflows, integrating cheminformatics nodes (RDKit, CDK) for easy QSPR model building without extensive coding. | https://www.knime.com/ |
| scikit-learn | Software/Library | Primary Python library for implementing MLR, PLS, RF, and other machine learning algorithms, including tools for preprocessing and validation. | https://scikit-learn.org/ |
| TensorFlow / PyTorch | Software/Library | Deep learning frameworks used for building, training, and deploying sophisticated neural network architectures. | Google / Meta AI |
| OECD QSAR Toolbox | Software/Database | Provides databases, profiling, and grouping tools for filling data gaps and supporting (Q)SAR assessment, crucial for regulatory context. | https://www.oecd.org/chemicalsafety/risk-assessment/oecd-qsar-toolbox.htm |
| CompTox Chemicals Dashboard | Database | Curated database of chemical properties, fate, and toxicity data from EPA, essential for sourcing experimental data for model training and validation. | https://comptox.epa.gov/dashboard/ |
| Python / R | Programming Language | Core programming environments for scripting the entire QSPR pipeline, from data processing to model development and visualization. | PSF / R Foundation |

Within the broader thesis on Quantitative Structure-Property Relationship (QSPR) models for predicting the environmental fate of cosmetic ingredients, this document details the application of validated models to two critical tasks: (1) predicting the environmental fate parameters of novel, previously unsynthesized cosmetic ingredients, and (2) supporting read-across assessments for data-poor substances by identifying suitable analogs based on model-predicted properties. This bridges in silico predictions with regulatory safety evaluation frameworks.

Current Data & Model Landscape

Recent literature and databases emphasize the need for predictive tools for biodegradation, bioaccumulation, and aquatic toxicity of cosmetic chemicals. The following table summarizes key environmental fate endpoints relevant to cosmetics and typical QSPR model performance metrics.

Table 1: Key Environmental Fate Endpoints and Contemporary QSPR Model Performance

| Endpoint | Common Abbreviation | Typical QSPR Model Type | Reported R² (Range) | Key Datasets (Examples) |
|---|---|---|---|---|
| Biodegradability | BIOWIN, BOD | Classification, Regression | 0.70 - 0.85 | BIOWIN, EPI Suite; OECD QSAR Toolbox data |
| Octanol-Water Partition Coefficient | Log Kow/Log P | MLR, ANN, Random Forest | 0.90 - 0.98 | PHYSPROP, LOGKOW |
| Bioconcentration Factor | Log BCF | PLS, Support Vector Machine | 0.75 - 0.82 | BCFT; EPA's BCFBAF database |
| Aquatic Toxicity (Fish) | pLC50 | QSTR, k-NN | 0.65 - 0.80 | ECOTOX, ICE models |
| Soil Adsorption Coefficient | Log Koc | Random Forest, Gradient Boosting | 0.80 - 0.90 | Pesticide Properties Database |

Application Notes

Predicting Novel Ingredients

- Purpose: To estimate the environmental profile of a designed ingredient prior to synthesis.

- Protocol:

- Structure Preparation: Generate a clean 2D/3D molecular structure (e.g., SDF, MOL file) of the novel ingredient using cheminformatics software (e.g., OpenBabel, RDKit).

- Descriptor Calculation: Use the same software (e.g., PaDEL-Descriptor, Dragon), version, and settings that were used to build the model, and calculate exactly the descriptors the model requires; descriptor values from different programs or versions are not interchangeable.

- Applicability Domain (AD) Check: Statistically assess whether the new compound falls within the chemical space of the training set (e.g., using leverage or distance-based methods). Flag any prediction that is an extrapolation.

- Prediction: Input the calculated descriptors into the pre-trained QSPR model (e.g., Random Forest for Log Kow, SVM for toxicity) to obtain predictions for target endpoints (e.g., Log P, BCF, BIOWIN probability).

- Uncertainty Quantification: Report prediction intervals or classification probabilities, not just point estimates.
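
The applicability-domain check in the protocol above can be illustrated with a distance-based approach. This is a minimal NumPy sketch, assuming the descriptors have already been autoscaled with training-set statistics; the function names are hypothetical, and the k-nearest-neighbour averaging with k = 3 and a 95th-percentile cutoff are illustrative choices rather than values from any guideline.

```python
import numpy as np

def knn_ad_threshold(X_train, k=3, percentile=95.0):
    """Derive a distance cutoff from the training set itself.

    For each training compound, take the mean Euclidean distance to its k
    nearest *other* training compounds; the cutoff is a percentile of those
    values (k and percentile are illustrative, not standardized).
    """
    d = np.linalg.norm(X_train[:, None, :] - X_train[None, :, :], axis=-1)
    np.fill_diagonal(d, np.inf)  # exclude each compound's distance to itself
    knn_mean = np.sort(d, axis=1)[:, :k].mean(axis=1)
    return float(np.percentile(knn_mean, percentile))

def in_domain(x_new, X_train, threshold, k=3):
    """Return (inside_domain, mean kNN distance) for one query compound."""
    dist = np.linalg.norm(X_train - x_new, axis=1)
    score = float(np.sort(dist)[:k].mean())
    return score <= threshold, score

# Toy "training set": a 5 x 5 grid standing in for autoscaled descriptors.
X_grid = np.array([[i, j] for i in range(5) for j in range(5)], dtype=float)
thr = knn_ad_threshold(X_grid)
print(in_domain(np.array([2.5, 2.5]), X_grid, thr))    # inside the grid
print(in_domain(np.array([10.0, 10.0]), X_grid, thr))  # flagged as extrapolation
```

Deriving the cutoff from the training set's own neighbour distances keeps the check in the same units as the descriptor space; a leverage-based check is a common alternative for linear models.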

Facilitating Read-Across Scenarios

- Purpose: To identify source analogs for a target substance with data gaps, based on structural similarity and predicted property similarity.

- Protocol:

- Target Characterization: For the target substance, calculate its molecular descriptors and obtain predicted values for the endpoint of interest (e.g., biodegradation half-life).

- Candidate Pool Identification: From a relevant chemical inventory (e.g., CosIng, ECHA database), retrieve a pool of potential analogs using initial structural filters (e.g., same functional groups, similar carbon chain length).

- Similarity Screening: Apply a multi-parameter similarity measure that combines (a) structural similarity from fingerprints (e.g., ECFP4), for which Tanimoto distance is the usual choice with binary fingerprints, and (b) Euclidean or Manhattan distance between scaled predicted environmental fate properties from a consensus of models.

- Analog Selection & Justification: Select the top candidate(s) where both structural and predicted property distances are minimized. The model prediction provides a quantitative, hypothesis-driven basis for the read-across justification, supplementing expert judgment.

- Assessment: Perform a final check to ensure the mechanistic basis for the property is consistent between target and source.
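
The similarity-screening and analog-selection steps above can be sketched as a simple ranking. This standard-library sketch assumes fingerprints are given as sets of on-bit indices (in practice these would come from an ECFP4-type fingerprint generator), uses Tanimoto distance for the structural term and Euclidean distance for the scaled predicted properties, and combines them with an equal weight. The weighting, the property scales, and all compound names and values in the example are hypothetical.

```python
import math

def tanimoto_distance(a: set, b: set) -> float:
    """1 - |A ∩ B| / |A ∪ B| for fingerprints stored as sets of on-bit indices."""
    union = len(a | b)
    return 0.0 if union == 0 else 1.0 - len(a & b) / union

def euclidean(u, v) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(u, v)))

def rank_analogs(target_fp, target_props, candidates, scales, w_struct=0.5):
    """Rank candidates by a weighted sum of structural and property distances.

    candidates: dict name -> (fingerprint set, predicted property tuple)
    scales: per-property scale (e.g., training-set SD) so properties are comparable
    w_struct: weight on the structural term (illustrative default)
    Returns (combined, name, structural distance, property distance), best first.
    """
    scored = []
    for name, (fp, props) in candidates.items():
        d_struct = tanimoto_distance(target_fp, fp)
        d_prop = euclidean([p / s for p, s in zip(target_props, scales)],
                           [p / s for p, s in zip(props, scales)])
        combined = w_struct * d_struct + (1.0 - w_struct) * d_prop
        scored.append((combined, name, d_struct, d_prop))
    return sorted(scored)

# Hypothetical target and candidates; properties = (predicted log Kow, BIOWIN probability).
target_fp = {1, 5, 9, 12, 40}
candidates = {
    "analog_A": ({1, 5, 9, 12, 41}, (4.0, 0.40)),
    "analog_B": ({2, 7, 30}, (1.2, 0.90)),
}
for row in rank_analogs(target_fp, (4.1, 0.35), candidates, scales=(1.5, 0.2)):
    print(row)
```

Because Tanimoto distance is bounded in [0, 1] while the property distance is not, reporting both components alongside the combined score makes it easier to judge whether an analog is close on both criteria, as the protocol requires.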

Visualized Workflows

Diagram 1: QSPR Prediction of Novel Ingredients Workflow

Diagram 2: Read-Across Using QSPR Predictions

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools & Resources

| Item / Software | Primary Function | Application in Protocols |
|---|---|---|
| RDKit | Open-source cheminformatics toolkit. | Structure standardization, descriptor calculation, fingerprint generation. |
| PaDEL-Descriptor | Software for calculating molecular descriptors and fingerprints. | Generating 1D, 2D, and 3D descriptors for QSPR model input. |
| OECD QSAR Toolbox | Integrated workflow application for (Q)SAR assessment. | Profiling, identifying analogs, filling data gaps via read-across. |
| EPI Suite | Suite of physical/chemical property and environmental fate estimation models. | Initial baseline predictions (e.g., Log P, BCF, biodegradation). |
| KNIME / Python (scikit-learn) | Data analytics platforms. | Building, validating, and deploying custom QSPR models (e.g., Random Forest). |
| ECHA CHEM Database | Public database of chemical information. | Source of experimental data and structures for candidate analog pools. |

Within the broader thesis on developing robust Quantitative Structure-Property Relationship (QSPR) models for predicting the environmental fate of cosmetic ingredients, this case study focuses on two critical classes: surfactants and ultraviolet (UV) filters. These substances enter aquatic environments via wastewater, and those that resist biodegradation can persist there and pose environmental risks. Predicting biodegradation half-lives (t₁/₂) with computational models supports green chemistry design and environmental risk assessment before large-scale synthesis and use.

Data Compilation & Descriptor Calculation

Objective: To assemble a curated dataset of experimental biodegradation half-lives for model training and validation.

Protocol 2.1: Data Collection & Curation

- Source Experimental Data: Search peer-reviewed literature and databases (e.g., EPA's CompTox Chemicals Dashboard, NORMAN Network) for experimentally determined biodegradation half-lives (primary or ultimate) for surfactants (e.g., alkyl ethoxylates, alkyl sulfates) and UV filters (e.g., benzophenone-3, octocrylene).

- Standardize Values: Convert all half-life data to a consistent unit (e.g., hours). Record the test system (e.g., river die-away, activated sludge) for each value, because half-lives from different test systems are not directly comparable.

- Criteria for Inclusion: Include only compounds with:

- A well-defined, unambiguous chemical structure.

- Experimentally measured t₁/₂ under aerobic aquatic conditions.

- Reported mean values with standard deviation or standard error.

- Divide Dataset: Split the compiled dataset randomly into a training set (≈70-80%) for model development and a test set (≈20-30%) for external validation.
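
The curation steps in Protocol 2.1 can be sketched in plain Python. The record keys, the unit labels in `TO_HOURS`, and the 75/25 split with a fixed seed are hypothetical choices for illustration; in particular, a non-empty SMILES string is only a placeholder for the "well-defined structure" criterion, which in practice requires parsing and standardizing the structure with a cheminformatics toolkit.

```python
import random

# Conversion factors to hours for the unit labels assumed in the source records.
TO_HOURS = {"h": 1.0, "d": 24.0, "min": 1.0 / 60.0, "wk": 168.0}

def standardize(records):
    """Apply the inclusion criteria and convert t1/2 to hours.

    Each record is a dict with hypothetical keys: smiles, t_half, unit,
    aerobic_aquatic (bool), sd (reported SD/SE or None), system (test system).
    The test system is carried through so it can be reported with the data.
    """
    kept = []
    for r in records:
        if not r.get("smiles"):          # placeholder for a real structure check
            continue
        if not r.get("aerobic_aquatic"):  # aerobic aquatic conditions only
            continue
        if r.get("sd") is None:           # require a reported spread
            continue
        kept.append({**r, "t_half_h": r["t_half"] * TO_HOURS[r["unit"]]})
    return kept

def random_split(items, train_frac=0.75, seed=42):
    """Shuffle reproducibly, then split into training and test sets."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_train = round(train_frac * len(items))
    return items[:n_train], items[n_train:]

records = [
    {"smiles": "CCO", "t_half": 2, "unit": "d", "aerobic_aquatic": True,
     "sd": 0.3, "system": "river die-away"},
    {"smiles": "CCC", "t_half": 10, "unit": "h", "aerobic_aquatic": True,
     "sd": None, "system": "activated sludge"},
]
clean = standardize(records)
train, test = random_split(clean)
print(clean, len(train), len(test))
```

Fixing the random seed makes the split reproducible, which matters when training and external-test statistics are later reported side by side.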

Protocol 2.2: Molecular Structure Preparation & Descriptor Generation

- Software: Use cheminformatics software (e.g., OpenBabel, RDKit, PaDEL-Descriptor).

- Structure Input: Draw or import SMILES strings for each compound into the software.

- Geometry Optimization: Optimize each 3D structure with a molecular mechanics force field or a semi-empirical method (e.g., PM6) before calculating geometry-dependent descriptors.

- Descriptor Calculation: Calculate a comprehensive set of molecular descriptors, including:

- Constitutional: Molecular weight, number of specific atoms/bonds.

- Topological: Connectivity indices, Wiener index.

- Geometrical: Moment of inertia, molecular volume.

- Electronic: HOMO/LUMO energies, dipole moment, partial charges.

- Quantum Chemical: Calculated using DFT (e.g., B3LYP/6-31G*): Gibbs free energy, electrophilicity index.

- 2D/3D Fingerprints: For similarity analysis.
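
Once the DFT calculation has produced frontier orbital energies, the electronic and quantum chemical descriptors listed above can be derived from them. This sketch assumes Koopmans-type approximations (I ≈ −E_HOMO, A ≈ −E_LUMO) and the electrophilicity index ω = μ²/(2η) with η = I − A; some authors define η = (I − A)/2 instead, so one convention should be used consistently across the dataset. The function name and the orbital energies in the example are hypothetical.

```python
def conceptual_dft_descriptors(e_homo: float, e_lumo: float) -> dict:
    """Frontier-orbital descriptors (all in eV) under Koopmans-type approximations.

    I ~ -E_HOMO, A ~ -E_LUMO, chemical potential mu ~ -(I + A) / 2,
    hardness eta ~ I - A (convention assumed here), electrophilicity
    omega = mu**2 / (2 * eta).
    """
    if e_lumo <= e_homo:
        raise ValueError("LUMO energy must lie above HOMO energy")
    ionization = -e_homo
    affinity = -e_lumo
    mu = -(ionization + affinity) / 2.0
    eta = ionization - affinity
    omega = mu ** 2 / (2.0 * eta)
    return {"gap": e_lumo - e_homo, "mu": mu, "eta": eta, "omega": omega}

# Hypothetical orbital energies read from a DFT output file.
print(conceptual_dft_descriptors(e_homo=-6.0, e_lumo=-1.0))
```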

Model Development & Validation

Objective: To construct, statistically validate, and interpret a QSPR model for predicting log(t₁/₂).

Protocol 3.1: Feature Selection & Model Training

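- Pre-process Data: Remove constant and near-constant descriptors. Scale the remaining descriptor matrix (e.g., autoscaling: mean-centering and division by the standard deviation), computing the scaling parameters from the training set only and applying them unchanged to the test set.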

- Reduce Dimensionality: Apply a genetic algorithm (GA) or stepwise selection to identify a small set of relevant, weakly intercorrelated descriptors for log(t₁/₂).

- Model Building: Fit multiple linear regression (MLR), partial least squares regression (PLS-R), or a machine learning method such as support vector regression (SVR) on the training set using the selected descriptors.

- Internal Validation: Assess the model using leave-one-out (LOO) or leave-many-out (LMO) cross-validation. Calculate Q² (cross-validated R²).
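
The pre-processing and internal-validation steps of Protocol 3.1 can be sketched for the MLR case with NumPy alone. This is an illustrative implementation, not a specific package's API: the function names and the near-constant threshold are assumptions, feature selection (GA or stepwise) is omitted, and Q² is computed as 1 − PRESS/SS_tot by refitting the model once per left-out compound.

```python
import numpy as np

def drop_near_constant(X_train, min_std=1e-8):
    """Boolean mask of descriptors whose training-set SD exceeds min_std."""
    return X_train.std(axis=0) > min_std

def autoscale(X_train, X_test):
    """Mean-center and scale to unit variance using training statistics only."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0, ddof=1)
    return (X_train - mean) / std, (X_test - mean) / std

def fit_mlr(X, y):
    """Ordinary least squares with an intercept; returns [b0, b1, ..., bp]."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict_mlr(coef, X):
    return coef[0] + X @ coef[1:]

def q2_loo(X, y):
    """Leave-one-out Q² = 1 - PRESS / SS_tot, refitting the MLR n times."""
    n = len(y)
    press = 0.0
    for i in range(n):
        keep = np.arange(n) != i
        coef = fit_mlr(X[keep], y[keep])
        press += (y[i] - predict_mlr(coef, X[i:i + 1])[0]) ** 2
    return 1.0 - press / np.sum((y - y.mean()) ** 2)

# Synthetic stand-in data: 40 compounds, 5 descriptors (the last is constant).
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 5))
X[:, 4] = 3.0
y = 0.8 * X[:, 0] - 0.5 * X[:, 1] + rng.normal(scale=0.1, size=40)
mask = drop_near_constant(X)
X_tr, _ = autoscale(X[:, mask], X[:5, mask])
print(mask, q2_loo(X_tr, y))
```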

Protocol 3.2: Model Validation & Applicability Domain

- Statistical Evaluation: Calculate key metrics for the training set: the coefficient of determination (R²), adjusted R², and root mean square error (RMSE). Apply the model to the external test set and calculate the predictive R² (R²_pred) and RMSE.

- Define Applicability Domain (AD): Use the leverage approach. Calculate the leverage hᵢ of each compound. Set the warning leverage h* = 3(p+1)/n, where p is the number of model descriptors and n is the number of training compounds. A compound lies inside the AD if hᵢ ≤ h*; for compounds with measured values (training and test sets), its standardized residual should also lie within ±3 standard deviations.

- Interpretation: Analyze the sign and magnitude of each selected descriptor's coefficient (with autoscaled descriptors, magnitudes are directly comparable) to provide a physicochemical interpretation of its influence on biodegradation half-life.
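
The leverage-based applicability domain in Protocol 3.2 can be computed directly from the descriptor matrix. This NumPy sketch assumes a linear model with an intercept, so hᵢ = xᵢᵀ(XᵀX)⁻¹xᵢ is evaluated with a column of ones prepended; residuals are standardized here by the training RMSE, which is one simple choice (studentized residuals are a common alternative). The function names are hypothetical.

```python
import numpy as np

def leverages(X_train, X_query):
    """h_i = x_i^T (X^T X)^-1 x_i, with an intercept column prepended."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    B = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(A.T @ A)
    return np.einsum("ij,jk,ik->i", B, xtx_inv, B)

def warning_leverage(n_train, p):
    """h* = 3(p + 1) / n for p model descriptors and n training compounds."""
    return 3.0 * (p + 1) / n_train

def standardized_residuals(y_obs, y_pred, rmse_train):
    """Residuals divided by the training RMSE (one simple standardization)."""
    return (np.asarray(y_obs, float) - np.asarray(y_pred, float)) / rmse_train

def ad_flags(h, h_star, std_resid=None):
    """True where a compound is inside the AD.

    std_resid is only available for compounds with measured t1/2 values.
    """
    inside = h <= h_star
    if std_resid is not None:
        inside &= np.abs(std_resid) <= 3.0
    return inside

# Synthetic stand-in: 45 training compounds, 4 descriptors.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(45, 4))
h_star = warning_leverage(len(X_train), X_train.shape[1])
X_new = np.vstack([np.zeros(4), np.full(4, 10.0)])
print(h_star, ad_flags(leverages(X_train, X_new), h_star))
```

Plotting standardized residuals against leverage for the training and test compounds (a Williams plot) visualizes both criteria at once.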

Table 1: Example QSPR Model Performance Metrics

| Model Type | Dataset | n | R² | R²_adj | Q²_LOO | RMSE (log t₁/₂) | R²_pred (Test Set) |
|---|---|---|---|---|---|---|---|
| PLS-R | Training | 45 | 0.85 | 0.82 | 0.78 | 0.32 | - |
| PLS-R | Test | 15 | - | - | - | 0.38 | 0.79 |
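
The metrics in the table can be computed with a few short functions. This NumPy sketch is illustrative: R²_pred is written in one common form that references the training-set mean (often called Q²_F1), and for PLS models the p in adjusted R² is ambiguous (descriptors versus latent variables), so the convention used should be stated alongside the value.

```python
import numpy as np

def r2(y, y_hat):
    """Coefficient of determination on the same data the model was fitted to."""
    y, y_hat = np.asarray(y, float), np.asarray(y_hat, float)
    return 1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)

def r2_adj(r2_value, n, p):
    """Adjusted R² for n compounds and p descriptors."""
    return 1.0 - (1.0 - r2_value) * (n - 1) / (n - p - 1)

def rmse(y, y_hat):
    """Root mean square error, in the units of y (here log t1/2)."""
    y, y_hat = np.asarray(y, float), np.asarray(y_hat, float)
    return float(np.sqrt(np.mean((y - y_hat) ** 2)))

def r2_pred(y_test, y_test_hat, y_train_mean):
    """External R² referenced to the training-set mean (Q²_F1 form)."""
    y, y_hat = np.asarray(y_test, float), np.asarray(y_test_hat, float)
    return 1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - y_train_mean) ** 2)

# Toy values only; these are not the results reported in the table.
print(r2([1.0, 2.0, 3.0], [1.1, 1.9, 3.2]), rmse([0.0, 0.0], [3.0, 4.0]))
```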

Table 2: Key Descriptors in an Example Model & Their Interpretation

| Descriptor Name | Category | Coefficient | Probable Physicochemical Meaning |
|---|---|---|---|
| logP (o/w) | Hydrophobicity | +0.72 | Higher lipophilicity correlates with slower biodegradation. |
| EHOMO (eV) | Electronic | -0.51 | Higher HOMO energy (more easily oxidized) may favor certain degradation pathways. |
| MSD | Shape/Size | +0.35 | Larger molecular size/diameter impedes enzymatic attack. |
| ATSC2v | Topological Charge | -0.28 | Reflects electron distribution affecting interaction with biodegrading enzymes. |

Application & Predictive Workflow

Objective: To outline the standard operating procedure for using the validated QSPR model.

Protocol 4.1: Predicting t₁/₂ for a Novel Compound

- Input Structure: Obtain the canonical SMILES string for the target surfactant or UV filter.

- Descriptor Generation: Follow Protocol 2.2 to calculate the full descriptor set for the target compound.

- Descriptor Extraction: Extract the specific descriptors required by the final validated QSPR model.

- Prediction: Input the descriptor values into the model equation to calculate the predicted log(t₁/₂).

- AD Assessment: Calculate the leverage of the target compound. If hᵢ > h*, flag the prediction as an extrapolation and interpret with caution.

- Report: Report the predicted t₁/₂ (back-transformed from log(t₁/₂) to time units) together with its uncertainty estimate and AD status.
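
Protocol 4.1 can be condensed into a single function for an MLR-type model equation. This is a sketch under stated assumptions: the model is taken to predict log10 of t₁/₂ in hours, the descriptor values must already be scaled exactly as in training, and the ±1 RMSE band is a crude spread indicator, not a formal prediction interval. The function name, intercept, and descriptor values in the example are hypothetical.

```python
import numpy as np

def predict_half_life(x_new, coef, X_train, rmse_log):
    """Predict t1/2 (hours) for one compound and report its AD status.

    x_new: the model's p descriptor values, scaled as in training
    coef: [intercept, b1..bp] for log10(t1/2 in hours) (assumed model form)
    X_train: training descriptor matrix, used for the leverage check
    rmse_log: model RMSE in log10 units
    """
    x_new = np.asarray(x_new, float)
    log_t = coef[0] + x_new @ coef[1:]
    # Leverage h = x^T (X^T X)^-1 x with an intercept term.
    A = np.column_stack([np.ones(len(X_train)), X_train])
    b = np.concatenate([[1.0], x_new])
    h = float(b @ np.linalg.pinv(A.T @ A) @ b)
    h_star = 3.0 * len(coef) / len(X_train)  # 3(p + 1) / n
    return {
        "t_half_h": 10 ** log_t,
        "approx_range_h": (10 ** (log_t - rmse_log), 10 ** (log_t + rmse_log)),
        "leverage": h,
        "in_domain": h <= h_star,
    }

# Hypothetical model: intercept 1.5 plus four descriptor coefficients.
rng = np.random.default_rng(3)
X_train = rng.normal(size=(45, 4))
coef = np.array([1.5, 0.72, -0.51, 0.35, -0.28])
print(predict_half_life(np.zeros(4), coef, X_train, rmse_log=0.32))
```

If `in_domain` is False, the prediction should be reported as an extrapolation, as the protocol specifies.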

Visualization of Workflows

QSPR Model Development & Application Workflow

Descriptor Calculation and Selection Process

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for QSPR Modeling of Environmental Fate

| Item | Category | Function & Explanation |
|---|---|---|
| RDKit | Software/Cheminformatics | Open-source toolkit for cheminformatics, used for molecule manipulation, descriptor calculation, and fingerprint generation. |
| PaDEL-Descriptor | Software/Cheminformatics | Software capable of calculating 1D, 2D, and 3D molecular descriptors and fingerprints from chemical structures. |
| Gaussian 16 | Software/Computational Chemistry | Widely used software for performing quantum chemical calculations (e.g., DFT) to obtain electronic structure descriptors. |
| SOLVER Add-in | Software/Optimization | Microsoft Excel add-in for numerical optimization; it can fit custom least-squares models, but it does not itself provide stepwise selection or PLS. |
| OECD QSAR Toolbox | Software/Database | Software designed to fill data gaps for chemical hazard assessment, includes databases and profiling tools for biodegradation. |
| EPA CompTox Dashboard | Database | Publicly accessible database providing experimental and predicted property data for a large number of chemicals, including biodegradation endpoints. |
| SMILES Strings | Data Input | Standardized text representation of molecular structure, serving as the primary input for all computational modeling steps. |
| Curated Experimental t₁/₂ Dataset | Data | The foundational, quality-controlled dataset of biodegradation half-lives for surfactants and UV filters, essential for model training and testing. |

Overcoming Pitfalls: Strategies to Enhance QSPR Model Robustness and Accuracy

Diagnosing and Mitigating Overfitting in Complex Environmental Datasets

Within Quantitative Structure-Property Relationship (QSPR) modeling for predicting the environmental fate of cosmetic ingredients, overfitting represents a critical failure mode. It occurs when a model learns noise, artifacts, and spurious correlations specific to the training dataset, degrading its performance on novel, unseen data. This is exacerbated by the high dimensionality, multicollinearity, and inherent noise of complex environmental datasets (e.g., those combining chemical descriptors, physicochemical properties, and experimental fate data).
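
The effect of high dimensionality described above can be demonstrated on synthetic data. In this NumPy sketch (all data simulated, function name hypothetical), two descriptors carry real signal and the rest are pure noise; as more noise descriptors enter an MLR model, training R² keeps rising while leave-one-out Q² falls behind it. LOO residuals use the hat-matrix identity e₍ᵢ₎ = eᵢ/(1 − hᵢᵢ), which is exact for ordinary least squares.

```python
import numpy as np

def r2_and_q2_loo(X, y):
    """Training R² and leave-one-out Q² for OLS with an intercept.

    Uses the exact OLS shortcut e_loo_i = e_i / (1 - h_ii) instead of refitting.
    """
    A = np.column_stack([np.ones(len(X)), X])
    H = A @ np.linalg.pinv(A.T @ A) @ A.T
    resid = y - H @ y
    ss_tot = np.sum((y - y.mean()) ** 2)
    press = np.sum((resid / (1.0 - np.diag(H))) ** 2)
    return 1.0 - np.sum(resid ** 2) / ss_tot, 1.0 - press / ss_tot

rng = np.random.default_rng(7)
n = 40
signal = rng.normal(size=(n, 2))    # two informative descriptors
noise = rng.normal(size=(n, 30))    # thirty pure-noise descriptors
y = signal @ np.array([1.0, -0.5]) + rng.normal(scale=0.5, size=n)
X_all = np.hstack([signal, noise])

for k in (2, 10, 20, 30):
    r2, q2 = r2_and_q2_loo(X_all[:, :k], y)
    print(f"{k:2d} descriptors: R2 = {r2:.2f}, Q2_LOO = {q2:.2f}")
```

A widening gap between R² and Q² as descriptors are added is a typical symptom of overfitting.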

Quantitative Diagnostics for Overfitting

Effective diagnosis requires multiple quantitative metrics. The following table summarizes key indicators and their interpretation.

Table 1: Key Quantitative Diagnostics for Model Overfitting

| Diagnostic Metric | Formula / Description | Interpretation in Context of Overfitting |
|---|---|---|