QSAR Modeling in Drug Discovery: A Modern Guide to Predicting Bioactivity, Avoiding Pitfalls, and Accelerating Development

This comprehensive guide demystifies Quantitative Structure-Activity Relationship (QSAR) modeling for drug activity prediction, tailored for researchers and drug development professionals.

Abstract

This comprehensive guide demystifies Quantitative Structure-Activity Relationship (QSAR) modeling for drug activity prediction, tailored for researchers and drug development professionals. We explore the fundamental principles of QSAR, from historical context to core concepts like molecular descriptors and the chemoinformatic workflow. The article provides a practical walkthrough of modern methodological approaches, including machine learning algorithms and application pipelines for virtual screening and lead optimization. We address common challenges in model development, such as overfitting and data curation, with proven strategies for troubleshooting and optimization. Finally, we cover critical validation protocols, regulatory considerations (e.g., OECD principles, ICH Q14), and comparative analyses of QSAR against other in silico methods. This resource synthesizes current best practices to empower scientists in building robust, interpretable, and regulatory-compliant models that de-risk and accelerate the drug discovery pipeline.

What is QSAR? Building the Foundation for Predictive Drug Modeling

Application Notes

Quantitative Structure-Activity Relationship (QSAR) modeling is a cornerstone of modern computational drug discovery, enabling the prediction of biological activity, toxicity, and pharmacokinetic properties from molecular structure. Within the broader thesis on QSAR for drug activity prediction, its primary role is to accelerate the early-stage lead identification and optimization pipeline by prioritizing compounds for synthesis and biological testing.

Table 1: Representative Performance Metrics of Recent QSAR Models in Drug Discovery (2022-2024)

| Target / Endpoint | Model Type | Dataset Size (Compounds) | Key Metric | Performance Value | Reference (Example) |

|---|---|---|---|---|---|

| SARS-CoV-2 Mpro Inhibition | Deep Neural Network (DNN) | ~5,000 | AUC-ROC | 0.91 | [Nature Comms, 2023] |

| hERG Cardiotoxicity | Ensemble (RF, XGBoost) | 12,000 | Balanced Accuracy | 0.85 | [J. Chem. Inf. Model., 2024] |

| Cytochrome P450 3A4 Inhibition | Graph Convolutional Network (GCN) | 8,500 | F1-Score | 0.82 | [Bioinformatics, 2023] |

| Aqueous Solubility (logS) | Directed Message Passing Neural Network | 10,000 | RMSE (test set) | 0.68 log units | [J. Chem. Inf. Model., 2022] |

| Antibacterial Activity (E. coli) | QSAR with Mordred Descriptors & SVM | 2,500 | Concordance Index | 0.88 | [ACS Infect. Dis., 2023] |

Key Applications:

- Virtual Screening: QSAR models screen millions of virtual compounds in silico, identifying high-probability hits for experimental validation. This drastically reduces the cost and time of High-Throughput Screening (HTS).

- Lead Optimization: Models guide medicinal chemists by predicting how structural modifications (e.g., adding a methyl group, changing a heterocycle) will affect potency, selectivity, and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties.

- Toxicity and ADMET Prediction: Predictive models for hERG inhibition, hepatotoxicity, and metabolic stability are integral to failing compounds early, improving clinical success rates.

- Polypharmacology & Target Prediction: Multi-task and proteochemometric models predict activity across multiple biological targets, aiding in understanding off-target effects and repurposing opportunities.

Experimental Protocols

Protocol 1: Development of a Robust QSAR Classification Model for Activity Prediction

Objective: To construct a validated QSAR model for predicting active/inactive compounds against a specific target (e.g., Kinase X).

Materials & Reagents (The Scientist's Toolkit):

| Research Reagent / Solution | Function in Protocol |

|---|---|

| Chemical Dataset (e.g., from ChEMBL or PubChem) | Provides structures and associated bioactivity data (IC50/Ki) for model training and testing. |

| KNIME Analytics Platform or Python (RDKit, scikit-learn) | Software environment for data curation, descriptor calculation, and machine learning. |

| Molecular Descriptor Calculation Software (e.g., RDKit, Mordred, PaDEL) | Generates numerical representations (descriptors) of chemical structures (e.g., molecular weight, logP, topological indices). |

| Machine Learning Libraries (e.g., scikit-learn, XGBoost, DeepChem) | Algorithms (Random Forest, SVM, DNN) used to learn the relationship between descriptors and activity. |

| Model Validation Suite (e.g., scikit-learn metrics, Y-Randomization scripts) | Tools to assess model performance, robustness, and chance correlation. |

Procedure:

Data Curation and Preparation:

- Retrieve bioactivity data for target "Kinase X" from a public database (e.g., ChEMBL). Assemble a dataset of SMILES strings and corresponding IC50 values.

- Apply filters: remove inorganic salts, duplicates, and compounds with unreliable measurements.

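- Convert IC50 values to a binary class label (e.g., Active: IC50 ≤ 100 nM; Inactive: IC50 > 1000 nM). Treat the interval between the two thresholds (100 nM - 1000 nM) as a "gray zone" and exclude those compounds from training, since their class is ambiguous.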

- Partition the cleaned dataset into a Training Set (∼70-80%) and an external Test Set (∼20-30%) using stratified sampling to maintain class distribution.
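The labeling rule can be sketched in a few lines. This is a minimal illustration that assumes gray-zone compounds are simply dropped; the compound identifiers and IC50 values are hypothetical, and the thresholds are the example values from the step above.

```python
from typing import Optional

ACTIVE_MAX_NM = 100.0     # IC50 at or below this value counts as active
INACTIVE_MIN_NM = 1000.0  # IC50 above this value counts as inactive


def label_from_ic50(ic50_nm: float) -> Optional[int]:
    """Return 1 (active), 0 (inactive), or None for gray-zone compounds."""
    if ic50_nm <= ACTIVE_MAX_NM:
        return 1
    if ic50_nm > INACTIVE_MIN_NM:
        return 0
    return None


# Hypothetical records: (compound id, IC50 in nM)
records = [("cpd-1", 12.0), ("cpd-2", 100.0), ("cpd-3", 450.0),
           ("cpd-4", 1000.0), ("cpd-5", 25000.0)]

labeled = [(cid, label_from_ic50(ic50)) for cid, ic50 in records]
dataset = [(cid, y) for cid, y in labeled if y is not None]  # drop the gray zone
print(dataset)  # [('cpd-1', 1), ('cpd-2', 1), ('cpd-5', 0)]
```

Note that a compound at exactly 1000 nM falls in the gray zone under these inequalities; a project may reasonably choose different boundaries.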

Descriptor Calculation and Preprocessing:

- For each compound in the training and test sets, calculate a comprehensive set of 2D and 3D molecular descriptors (e.g., 200-5000 descriptors) using RDKit or PaDEL.

- Perform feature preprocessing on the training set descriptors:

- Remove near-zero variance descriptors.

- Remove highly inter-correlated descriptors (|r| > 0.95).

- Scale the remaining descriptors (e.g., StandardScaler).

- Apply the exact same preprocessing steps (using training set parameters) to the test set descriptors.
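The key point of this step is that every filter and scaling parameter is learned from the training set and then reused unchanged on the test set. The sketch below shows that pattern in plain Python under simple assumptions: population standard deviation, a greedy correlation filter that keeps the first descriptor of each correlated pair, and a tiny synthetic descriptor matrix. In practice scikit-learn or pandas would do the same work.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


def fit_preprocessor(train, var_tol=1e-8, r_max=0.95):
    """Learn kept columns and scaling parameters from training rows only."""
    n = len(train)
    cols = list(zip(*train))
    means = [sum(c) / n for c in cols]
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / n) for c, m in zip(cols, means)]
    keep = []
    for j in range(len(cols)):
        if stds[j] ** 2 <= var_tol:
            continue  # near-zero variance descriptor
        if any(abs(pearson(cols[j], cols[k])) > r_max for k in keep):
            continue  # redundant with a descriptor that is already kept
        keep.append(j)
    return {"keep": keep, "mean": means, "std": stds}


def transform(rows, prep):
    """Apply the training-set column selection and standardization."""
    return [[(row[j] - prep["mean"][j]) / prep["std"][j] for j in prep["keep"]]
            for row in rows]


# Synthetic descriptors: column 1 tracks column 0, column 2 is constant
train = [[1.0, 2.0, 5.0, 0.3],
         [2.0, 4.1, 5.0, 0.9],
         [3.0, 6.0, 5.0, 0.1],
         [4.0, 7.9, 5.0, 0.7]]
test = [[2.5, 5.0, 5.0, 0.5]]

prep = fit_preprocessor(train)
print(prep["keep"])           # [0, 3]
print(transform(test, prep))  # test row scaled with training mean and std
```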

Model Training and Validation:

- Using the preprocessed training set, train multiple machine learning algorithms (e.g., Random Forest, Support Vector Machine, Gradient Boosting).

- Optimize hyperparameters for each algorithm using 5-fold Cross-Validation on the training set. Use metrics like AUC-ROC or Balanced Accuracy.

- Select the best-performing algorithm/hyperparameter combination based on cross-validation performance.

Model Evaluation and Validation:

- Apply the final trained model to the held-out external Test Set. Calculate performance metrics: Accuracy, Sensitivity, Specificity, AUC-ROC, and Matthews Correlation Coefficient (MCC).

- Perform Y-Randomization: Shuffle the activity labels in the training set and rebuild the model. A significant drop in cross-validation performance confirms the model is not due to chance correlation.

- Perform Applicability Domain (AD) Analysis using methods like leverage or distance-based measures to define the chemical space where the model's predictions are reliable.
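Y-randomization is easy to misread, so a small sketch may help. It uses a nearest-centroid classifier with leave-one-out accuracy as a stand-in for whichever model and metric the protocol actually selects; the data are synthetic and deliberately well separated, and all function names are illustrative.

```python
import random


def nearest_centroid_loo_accuracy(X, y):
    """Leave-one-out accuracy of a nearest-centroid classifier."""
    correct = 0
    for i in range(len(X)):
        centroids = {}
        for cls in set(y):
            members = [X[j] for j in range(len(X)) if j != i and y[j] == cls]
            if members:
                centroids[cls] = [sum(col) / len(members) for col in zip(*members)]

        def dist2(center):
            return sum((a - b) ** 2 for a, b in zip(X[i], center))

        pred = min(centroids, key=lambda cls: dist2(centroids[cls]))
        correct += pred == y[i]
    return correct / len(X)


def y_randomization(X, y, n_rounds=20, seed=0):
    """Re-evaluate the model on shuffled labels; returns one score per round."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        shuffled = y[:]
        rng.shuffle(shuffled)
        scores.append(nearest_centroid_loo_accuracy(X, shuffled))
    return scores


# Synthetic two-descriptor data with two clearly separated classes
X = [[0.1 * i, 0.05 * i] for i in range(10)] + \
    [[5 + 0.1 * i, 5 + 0.05 * i] for i in range(10)]
y = [0] * 10 + [1] * 10

true_score = nearest_centroid_loo_accuracy(X, y)
random_scores = y_randomization(X, y)
print(true_score, sum(random_scores) / len(random_scores))
```

If the shuffled-label scores approach the true score, the original model is likely fitting noise rather than a structure-activity relationship.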

Model Deployment and Interpretation:

- Use the validated model to screen a virtual library of novel compounds.

- Employ model interpretation techniques (e.g., SHAP analysis, feature importance from Random Forest) to identify key structural features driving activity, providing insights for lead optimization.

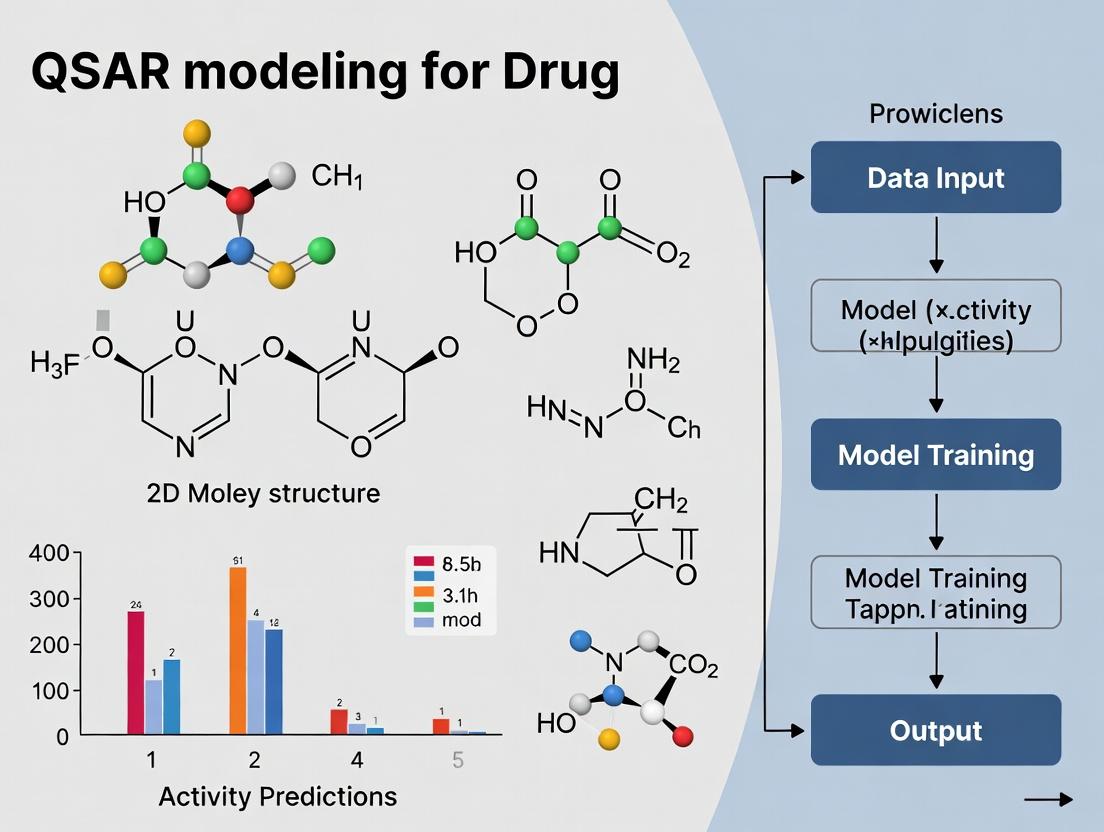

Diagram 1: QSAR Model Development Workflow

Protocol 2: Integrating QSAR Predictions into a Multi-Parameter Lead Optimization Protocol

Objective: To use consensus predictions from multiple QSAR models (activity, solubility, hERG) to rank lead series and select compounds for synthesis.

Procedure:

Generate Virtual Analog Library:

- Based on an initial active compound (Lead A), use a reaction-based enumeration tool (e.g., RDKit’s `EnumerateLibrary`) to generate a focused virtual library of ∼500-1000 analogs via feasible chemical transformations (e.g., R-group variations, scaffold hops).

Run Multi-Target QSAR Predictions:

- Process the virtual library through a suite of pre-validated QSAR models:

- Primary Target Activity Model (from Protocol 1).

- ADMET Models: Predicted aqueous solubility (logS), microsomal stability (% remaining), and hERG inhibition probability.

- Standardize outputs into a consistent scoring scale (e.g., 0-1 probability for classification, scaled values for regression).

Apply Multi-Parameter Optimization (MPO):

- Define an objective function (e.g., `MPO Score = w1*Activity_Prob + w2*Solubility_Score - w3*hERG_Prob`), where w1, w2, and w3 are weights reflecting project priorities.

- Calculate the MPO score for every virtual compound.

- Filter compounds falling outside the Applicability Domain of any critical model.

Rank, Cluster, and Select:

- Rank the filtered library by MPO score.

- Perform structural clustering (e.g., Butina clustering on fingerprints) to ensure structural diversity among top-ranked compounds.

- Select 10-20 compounds from the top of diverse clusters for visual inspection by a medicinal chemist and subsequent synthesis.
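The scoring and filtering steps of this protocol can be sketched as follows. The weights, analog names, and predicted values are hypothetical placeholders, and the `in_domain` flag is assumed to have been computed upstream by each model's applicability domain check.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    name: str
    activity_prob: float     # predicted probability of being active
    solubility_score: float  # scaled to 0-1, higher means more soluble
    herg_prob: float         # predicted probability of hERG inhibition
    in_domain: bool          # inside the AD of every critical model


def mpo_score(p, w1=0.5, w2=0.3, w3=0.2):
    """MPO Score = w1*Activity_Prob + w2*Solubility_Score - w3*hERG_Prob."""
    return w1 * p.activity_prob + w2 * p.solubility_score - w3 * p.herg_prob


library = [
    Prediction("analog-1", 0.90, 0.40, 0.70, True),
    Prediction("analog-2", 0.80, 0.70, 0.10, True),
    Prediction("analog-3", 0.95, 0.90, 0.05, False),  # outside the AD, dropped
    Prediction("analog-4", 0.60, 0.80, 0.20, True),
]

# Drop out-of-domain compounds, then rank by descending MPO score
ranked = sorted((p for p in library if p.in_domain), key=mpo_score, reverse=True)
for p in ranked:
    print(p.name, round(mpo_score(p), 3))
```

Here analog-3 has the best raw predictions but is removed before ranking because those predictions are not considered reliable.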

Diagram 2: Integrated QSAR-Driven Lead Optimization

The evolution of Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone in rational drug design. Beginning with classical linear free-energy relationships, the field has transitioned through computational chemistry techniques to contemporary artificial intelligence (AI) and machine learning (ML) paradigms, fundamentally altering the scale, accuracy, and predictive power of models for drug activity prediction.

The Classical Era: Hansch and Free-Energy Relationships

The foundational work of Corwin Hansch in the 1960s introduced the paradigm of using physicochemical parameters to correlate molecular structure with biological activity. This approach formalized the concept that a drug's activity is a function of its ability to reach the site of action and subsequently bind to its target.

Table 1: Key Physicochemical Parameters in Classical Hansch Analysis

| Parameter | Symbol | Description | Typical Role in Model |

|---|---|---|---|

| Partition Coefficient | logP (π for substituents) | Logarithm of the octanol-water partition coefficient. Measures lipophilicity. | Accounts for transport and membrane permeability. |

| Hammett Constant | σ | Electron-withdrawing or donating power of a substituent. | Accounts for electronic effects on binding. |

| Taft's Steric Constant | Es | Measure of steric bulk of a substituent. | Accounts for steric hindrance in binding. |

| Indicator Variable | I | Binary variable (0 or 1) for presence/absence of a structural feature. | Accounts for specific qualitative effects. |

Protocol 1.1: Classical Hansch Analysis Workflow

- Data Curation: Assay a congeneric series of compounds for a specific biological endpoint (e.g., IC50, ED50).

- Parameterization: For each compound, calculate or obtain experimental values for key physicochemical descriptors (logP, σ, Es).

- Model Formulation: Postulate a linear free-energy relationship equation: log(1/C) = a(logP) + b(σ) + c(Es) + k, where C is the molar concentration producing the biological effect, and a, b, c, k are coefficients.

- Regression Analysis: Perform multiple linear regression (MLR) to fit the coefficients.

- Validation: Assess model quality using statistics (R², s, F-test). Use the model to predict activity of new analogs.
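As a worked illustration of the regression step, the sketch below fits log(1/C) = a(logP) + b(σ) + c(Es) + k by ordinary least squares using the normal equations. The descriptor values are synthetic and the activities are generated from known coefficients, so the fit should simply recover them; a real analysis would use measured data and a statistics package.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def fit_mlr(X, y):
    """Least squares via the normal equations (X^T X) beta = X^T y."""
    Xd = [row + [1.0] for row in X]  # trailing column of ones for the constant k
    p = len(Xd[0])
    XtX = [[sum(r[i] * r[j] for r in Xd) for j in range(p)] for i in range(p)]
    Xty = [sum(r[i] * yi for r, yi in zip(Xd, y)) for i in range(p)]
    return solve_linear(XtX, Xty)


# Synthetic (logP, sigma, Es) values for a six-compound congeneric series
X = [[1.0, 0.0, 0.0], [2.0, 0.0, -1.0], [3.0, 0.0, 0.0],
     [2.0, 0.5, 0.0], [1.0, 0.5, -1.0], [3.0, -0.2, -0.5]]
true_coef = [0.8, 1.2, 0.3, 2.0]  # a, b, c, k used to simulate log(1/C)
y = [sum(c * v for c, v in zip(true_coef, row + [1.0])) for row in X]

a, b, c, k = fit_mlr(X, y)
print(round(a, 3), round(b, 3), round(c, 3), round(k, 3))  # 0.8 1.2 0.3 2.0
```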

The Computational Chemistry Era: 3D-QSAR and Machine Learning

Advancements in computing power enabled the development of 3D-QSAR methods (e.g., CoMFA, CoMSIA) in the 1980s-90s, which considered the three-dimensional arrangement of molecular fields. Concurrently, the application of non-linear machine learning algorithms (e.g., Random Forest, Support Vector Machines) began to address the complexity of biological data.

Protocol 1.2: Standard 3D-QSAR (CoMFA) Protocol

- Molecular Alignment: Superimpose a set of molecules based on a common pharmacophore or alignment rule using molecular modeling software.

- Field Calculation: Place each molecule within a 3D grid. Calculate steric (Lennard-Jones) and electrostatic (Coulombic) interaction energies at each grid point using a probe atom.

- Data Matrix Construction: Assemble a matrix where rows are compounds and columns are the energy values at thousands of grid points. Biological activity is the dependent variable.

- Partial Least Squares (PLS) Analysis: Use PLS regression to correlate the field values with biological activity, handling the high number of correlated descriptors.

- Contour Map Generation: Visualize results as 3D contour maps showing regions where increased steric bulk or specific electrostatic charges favor or disfavor activity.
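To make the field calculation and data matrix steps concrete, here is a rough sketch of how one row of a CoMFA-style matrix could be assembled. The Lennard-Jones parameters (`eps`, `r_min`), the +1 probe charge, the 30 kcal/mol truncation, and the two-atom "molecule" are illustrative assumptions, not a calibrated force field or the settings of any particular software package.

```python
import math

COULOMB_KCAL = 332.06  # converts q1*q2/r (charges in e, r in Å) to kcal/mol


def probe_energies(atoms, point, probe_charge=1.0, eps=0.1, r_min=3.8, cutoff=30.0):
    """Steric (Lennard-Jones 12-6) and electrostatic (Coulomb) energy at one grid point."""
    steric = 0.0
    electro = 0.0
    for x, y, z, q in atoms:
        r = max(math.dist((x, y, z), point), 1e-3)  # avoid division by zero
        steric += eps * ((r_min / r) ** 12 - 2 * (r_min / r) ** 6)
        electro += COULOMB_KCAL * q * probe_charge / r
    # Truncate extreme values so grid points inside atoms do not dominate
    return min(steric, cutoff), max(-cutoff, min(electro, cutoff))


def grid_points(lo, hi, step):
    """Regular cubic grid from lo to hi (inclusive) along each axis."""
    n = int(round((hi - lo) / step)) + 1
    axis = [lo + i * step for i in range(n)]
    return [(x, y, z) for x in axis for y in axis for z in axis]


# Hypothetical aligned molecule: (x, y, z, partial charge)
molecule = [(0.0, 0.0, 0.0, -0.4), (1.5, 0.0, 0.0, 0.4)]
grid = grid_points(-4.0, 4.0, 2.0)  # 5 x 5 x 5 grid with 2 Å spacing

row = []  # one row of the data matrix: steric and electrostatic value per point
for pt in grid:
    s, e = probe_energies(molecule, pt)
    row.extend([s, e])
print(len(grid), len(row))  # 125 250
```

Stacking one such row per aligned compound gives the matrix that PLS then relates to activity.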

Table 2: Evolution of QSAR Modeling Techniques

| Era | Core Methodology | Typical Descriptors | Key Advantage | Primary Limitation |

|---|---|---|---|---|

| Classical (1960s-) | Multiple Linear Regression (MLR) | logP, σ, Es, MR | Simple, interpretable, mechanistic insight. | Limited to congeneric series; assumes linearity. |

| 3D-QSAR (1980s-) | PLS Regression on Grid Fields | Steric & Electrostatic Field Values | Captures 3D molecular interactions; visual output. | Dependent on molecular alignment; descriptor redundancy. |

| ML-QSAR (2000s-) | Random Forest, SVM, ANN | Topological, 2D/3D Physicochemical, Quantum Chemical | Handles large, diverse datasets; non-linear relationships. | Risk of overfitting; "black box" interpretability issues. |

| AI-Driven (2010s-) | Deep Learning (Graph NN, Transformers) | Molecular Graphs, SMILES Strings, 3D Structures | Learns features automatically; models ultra-large chemical spaces. | High computational cost; requires very large datasets. |

The Modern Paradigm: AI-Driven Models

AI-driven QSAR leverages deep neural networks to learn hierarchical feature representations directly from raw molecular representations, such as Simplified Molecular Input Line Entry System (SMILES) strings or molecular graphs, without relying on pre-defined descriptors.

Core Architectures and Applications

Graph Neural Networks (GNNs): Treat molecules as graphs with atoms as nodes and bonds as edges. GNNs (e.g., MPNN, GCN) aggregate and transform information from neighboring atoms to learn a molecular representation.

Transformer Models: Adapted from natural language processing, these models (e.g., ChemBERTa, Molecular Transformer) process SMILES strings as sequences, learning contextual relationships between molecular "tokens."

Protocol 2.1: Building a Graph Neural Network for Activity Prediction

- Graph Representation: Convert each molecule into a graph: Nodes = atoms (featurized with atomic number, hybridization, etc.), Edges = bonds (featurized with bond type, conjugation).

- Model Architecture:

- Message Passing Layers (k iterations): For each node, aggregate feature vectors from its neighbors. Apply a learned update function (e.g., a neural network) to combine the aggregated message with the node's current state.

- Global Readout: After k message-passing steps, aggregate the feature vectors of all nodes into a single, fixed-size molecular representation vector.

- Prediction Head: Feed the molecular representation into a fully connected neural network layer to produce a prediction (e.g., pIC50, classification probability).

- Training: Use a large dataset of (molecule, activity) pairs. Optimize model weights via backpropagation to minimize loss (e.g., Mean Squared Error for regression, Cross-Entropy for classification).

- Validation: Perform rigorous k-fold cross-validation and external testing on a hold-out set. Use metrics like RMSE, MAE, ROC-AUC.
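The forward pass described in the model architecture step can be sketched without any deep learning framework. The example below uses sum aggregation, a ReLU update, a sum readout, and a linear prediction head with random, untrained weights; the three-feature atom encoding is a hypothetical simplification, and training by backpropagation is left to a library such as PyTorch Geometric or DeepChem.

```python
import random


def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def message_passing(h, edges, W_self, W_nbr, k=2):
    """k rounds of sum-aggregation message passing on an undirected graph."""
    nbrs = {i: [] for i in range(len(h))}
    for a, b in edges:
        nbrs[a].append(b)
        nbrs[b].append(a)
    for _ in range(k):
        new_h = []
        for i, hi in enumerate(h):
            # Aggregate: sum the current states of all neighbouring atoms
            msg = [sum(h[j][d] for j in nbrs[i]) for d in range(len(hi))]
            # Update: combine own state and message, then apply ReLU
            upd = [s + m for s, m in zip(matvec(W_self, hi), matvec(W_nbr, msg))]
            new_h.append([max(0.0, u) for u in upd])
        h = new_h
    return h


def predict(h, edges, params):
    W_self, W_nbr, w_out = params
    node_states = message_passing(h, edges, W_self, W_nbr)
    mol_vec = [sum(col) for col in zip(*node_states)]  # global sum readout
    return sum(w * x for w, x in zip(w_out, mol_vec))  # linear prediction head


rng = random.Random(0)
dim = 3
params = ([[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)],
          [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)],
          [rng.uniform(-1, 1) for _ in range(dim)])

# Ethanol heavy atoms C-C-O; features = [is_C, is_O, degree / 4]
atoms = [[1, 0, 0.25], [1, 0, 0.5], [0, 1, 0.25]]
bonds = [(0, 1), (1, 2)]
print(predict(atoms, bonds, params))
```

Because both the aggregation and the readout are sums, the prediction does not depend on the order in which atoms are numbered, which is one reason graph models suit molecular input.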

Table 3: Essential Research Reagent Solutions for Modern AI-QSAR

| Item / Resource | Function & Application |

|---|---|

| ChEMBL / PubChem BioAssay | Primary public repositories for curated bioactivity data (IC50, Ki, etc.) essential for training and benchmarking models. |

| RDKit / Open Babel | Open-source cheminformatics toolkits for molecular descriptor calculation, fingerprint generation, SMILES parsing, and file format conversion. |

| DeepChem Library | An open-source toolkit streamlining the implementation of deep learning models (including GNNs) on chemical data. |

| DGL-LifeSci / PyTorch Geometric | Specialized libraries built on deep learning frameworks (PyTorch) for easy implementation of graph neural networks on molecular graphs. |

| MOE / Schrödinger Suite | Commercial software platforms offering integrated environments for descriptor calculation, classical/3D-QSAR, and recently, AI/ML model integration. |

| GPU Computing Cluster | High-performance computing resources (e.g., NVIDIA GPUs) are critical for training complex deep learning models on large chemical datasets in a feasible time. |

Visualization of QSAR Evolution and Workflows

Diagram 1: Evolution from Hansch to AI-Driven QSAR

Diagram 2: Graph Neural Network Training Workflow

Within the broader thesis on QSAR modeling for drug activity prediction, this document outlines the foundational paradigm. Quantitative Structure-Activity Relationship (QSAR) modeling is a computational methodology that relates a set of predictor variables (molecular descriptors, representing structure) to a response variable (biological activity). The quantitative relationship is established via statistical or machine learning models, enabling the prediction of activities for novel compounds. This Application Note details the core components and provides protocols for building robust QSAR models.

Defining the Core Triad: Structure, Activity, Relationship

Structure Representation (Molecular Descriptors)

Molecular structure is encoded numerically through descriptors. These are categorized as follows:

Table 1: Categories of Molecular Descriptors for QSAR

| Descriptor Category | Description & Examples | Typical Software/Tool | Relevance to Drug Activity |

|---|---|---|---|

| 0D & 1D | Simple counts and properties (e.g., molecular weight, atom count, number of rotatable bonds). | RDKit, Open Babel, Dragon | Pharmacokinetics (e.g., absorption, Rule of 5). |

| 2D | Topological descriptors derived from molecular graph (e.g., connectivity indices, fragment counts, fingerprints like ECFP, MACCS keys). | PaDEL-Descriptor, RDKit, ChemDes | Captures pharmacophoric patterns and bonding environment. |

| 3D | Geometrical descriptors requiring 3D conformation (e.g., molecular surface area, volume, spatial moments, CoMFA fields). | Open3DALIGN, RDKit, Schrödinger Maestro | Crucial for modeling receptor-ligand interactions (e.g., binding affinity). |

| 4D & Beyond | Incorporates ensemble of conformations or induced-fit dynamics. | Custom workflows, MD simulation packages | Accounts for molecular flexibility and dynamic interactions. |

Activity Measurement (The Response Variable)

Biological activity is the measurable biological effect of a compound. Accurate, quantitative data is essential.

Table 2: Common Bioactivity Endpoints in QSAR Studies

| Activity Type | Typical Unit | Experimental Protocol (Example) | Key Assay Technology |

|---|---|---|---|

| Half Maximal Inhibitory Concentration (IC50) | Molar concentration (e.g., nM, µM) | Dose-response curve measuring inhibition of a target enzyme/cell viability. | Fluorescence-based enzymatic assay, CellTiter-Glo luminescent cell viability assay. |

| Half Maximal Effective Concentration (EC50) | Molar concentration (e.g., nM, µM) | Dose-response curve measuring a desired functional effect (e.g., GPCR activation). | cAMP accumulation assay, Calcium flux assay (FLIPR). |

| Inhibition Constant (Ki) | Molar concentration (e.g., nM) | Direct measurement of binding affinity, often competitive binding assays. | Radio-ligand binding, Surface Plasmon Resonance (SPR). |

| Pharmacokinetic Parameters | e.g., Clearance (mL/min), LogP (unitless) | In vivo or in vitro ADME (Absorption, Distribution, Metabolism, Excretion) studies. | Caco-2 permeability assay, Microsomal stability assay, HPLC for LogP determination. |

The Quantitative Relationship (Modeling Algorithms)

The relationship between descriptors (X) and activity (Y) is modeled using various algorithms.

Table 3: Common QSAR Modeling Algorithms

| Algorithm Class | Examples | Key Characteristics | Typical Use Case |

|---|---|---|---|

| Linear Methods | Multiple Linear Regression (MLR), Partial Least Squares (PLS) | Interpretable, less prone to overfitting on small datasets. | Initial exploration, datasets with limited samples (<100). |

| Machine Learning | Random Forest (RF), Support Vector Machines (SVM), Gradient Boosting (XGBoost) | Can model non-linear relationships, often higher predictive power. | Larger, more complex datasets. |

| Deep Learning | Graph Neural Networks (GNNs), Multi-Layer Perceptrons (MLPs) | Can learn features directly from molecular graphs or SMILES. | Very large datasets, aiming for state-of-the-art accuracy. |

Core QSAR Modeling Protocol

Protocol: Development and Validation of a Robust 2D-QSAR Model

Objective: To build a validated QSAR model for predicting pIC50 (-log10 of the molar IC50) values for a series of kinase inhibitors.

I. Data Curation and Preparation

- Compound & Activity Collection: Assemble a consistent dataset of chemical structures (SMILES format) and corresponding experimental IC50 values from published literature or internal screening. Convert IC50 to pIC50.

- Chemical Standardization: Using RDKit or KNIME, standardize all structures: neutralize charges, remove salts, generate canonical tautomers, and assign correct stereochemistry.

- Descriptor Calculation: Use PaDEL-Descriptor or RDKit to compute a comprehensive set of 2D descriptors and fingerprints (e.g., 200-5000 variables).

- Data Preprocessing: Remove constant/near-constant descriptors. For remaining descriptors, handle missing values (impute or remove). Split data into Training Set (~70-80%) and an external Test Set (~20-30%) using a rational selection method (e.g., the Kennard-Stone algorithm or cluster-based sampling) to ensure representative chemical space coverage.
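Two pieces of this stage are often implemented by hand: the pIC50 conversion and the Kennard-Stone selection. The sketch below assumes IC50 values are supplied in nM and uses squared Euclidean distance in descriptor space; the one-descriptor dataset is purely illustrative.

```python
import math


def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L), with the IC50 given in nM."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)


def kennard_stone(X, n_select):
    """Return indices of n_select points chosen by the Kennard-Stone algorithm."""
    def d2(i, j):
        return sum((a - b) ** 2 for a, b in zip(X[i], X[j]))

    n = len(X)
    # Seed with the two most distant points
    i0, j0 = max(((i, j) for i in range(n) for j in range(i + 1, n)),
                 key=lambda pair: d2(*pair))
    selected = [i0, j0]
    remaining = [i for i in range(n) if i not in selected]
    while len(selected) < n_select:
        # Add the candidate whose nearest selected neighbour is farthest away
        nxt = max(remaining, key=lambda i: min(d2(i, s) for s in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected


print(pic50_from_nm(100.0))  # 7.0

# Hypothetical one-descriptor dataset
X = [[0.0], [1.0], [2.0], [5.0], [10.0]]
train_idx = kennard_stone(X, 3)
test_idx = [i for i in range(len(X)) if i not in train_idx]
print(train_idx, test_idx)   # [0, 4, 3] [1, 2]
```

Kennard-Stone places the extremes of descriptor space in the training set, so the test set tends to be interpolative; that is worth keeping in mind when interpreting external validation results.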

II. Model Building and Validation

- Feature Selection: Apply method(s) to the Training Set:

- Variance Threshold: Remove low-variance features.

- Correlation Filter: Remove one of any pair of highly correlated descriptors (absolute Pearson correlation |r| > 0.95).

- Model-Based Selection: Use Random Forest feature importance or LASSO regression to select top ~20-50 most relevant descriptors.

- Model Training: Train multiple algorithm types (e.g., PLS, RF, SVM) on the Training Set using the selected features. Optimize hyperparameters via grid/random search with internal cross-validation (CV) (e.g., 5-fold CV).

- Internal Validation (Training Set Performance): Report CV metrics: Q² (cross-validated R²), RMSEcv.

- External Validation (Test Set Prediction): Apply the finalized model to the held-out Test Set. Report key metrics: R²pred, RMSEpred, and Mean Absolute Error (MAE). This is the gold standard for assessing predictive ability.

- Applicability Domain (AD) Definition: Use leverage (Williams plot) or distance-based methods (e.g., Euclidean distance in descriptor space) to define the chemical space region where the model's predictions are reliable. Flag compounds outside the AD.
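A distance-based applicability domain can be sketched as below. The rule used here (mean distance to the k nearest training compounds, compared against the training mean plus z standard deviations) is one common convention and is an assumption of this example, as are `k=3`, `z=0.5`, and the toy two-descriptor training set.

```python
import math


def knn_mean_distance(x, reference, k):
    """Mean Euclidean distance from x to its k nearest reference points."""
    distances = sorted(math.dist(x, r) for r in reference)
    return sum(distances[:k]) / k


def fit_domain(train, k=3, z=0.5):
    """Threshold = mean + z * std of the training compounds' own kNN distances."""
    scores = []
    for i, x in enumerate(train):
        others = train[:i] + train[i + 1:]  # exclude the compound itself
        scores.append(knn_mean_distance(x, others, k))
    mean = sum(scores) / len(scores)
    std = math.sqrt(sum((s - mean) ** 2 for s in scores) / len(scores))
    return mean + z * std


def in_domain(x, train, threshold, k=3):
    return knn_mean_distance(x, train, k) <= threshold


# Hypothetical scaled descriptors for six training compounds
train = [[0.0, 0.0], [0.5, 0.2], [0.2, 0.6], [0.8, 0.9], [1.0, 0.3], [0.4, 1.0]]
threshold = fit_domain(train)

print(in_domain([0.5, 0.5], train, threshold))  # True: surrounded by training data
print(in_domain([4.0, 4.0], train, threshold))  # False: far from all training data
```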

III. Model Interpretation and Deployment

- Interpretation: For linear models, analyze descriptor coefficients. For tree-based models, analyze feature importance. Use SHAP (SHapley Additive exPlanations) values for complex models to explain individual predictions.

- Deployment: Save the final model (e.g., as a `.pkl` file for scikit-learn). Deploy as a web service or integrate into a cheminformatics pipeline for virtual screening of new compound libraries.

Visualizing the QSAR Workflow and Relationships

Diagram Title: Core QSAR Paradigm Flow

Diagram Title: QSAR Model Development and Validation Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Reagents and Solutions for QSAR-Related Experimental Activity Profiling

| Item | Function in Context | Example Product/Supplier |

|---|---|---|

| Recombinant Target Protein | Purified enzyme or receptor used in biochemical binding/inhibition assays to generate primary activity data (IC50, Ki). | His-tagged Kinase (e.g., from Sigma-Aldrich, BPS Bioscience). |

| Cell Line with Target Expression | Engineered or native cell line for cell-based functional assays (EC50). Provides physiological context. | HEK293 cells overexpressing GPCR (e.g., from ATCC, Thermo Fisher). |

| Fluorogenic/Luminescent Substrate | Enables sensitive, homogeneous detection of enzymatic activity or cell viability in high-throughput screening. | Caspase-Glo 3/7 Assay (Promega), ATP-lite (PerkinElmer). |

| Fluorescent Dye for Binding Assays | Tracer for competitive binding experiments (FP, TR-FRET, SPR). | Fluorescein-labeled ligand, Europium (Eu)-labeled streptavidin (Cisbio). |

| ADME-Tox Screening Kit | Standardized in vitro kits to generate pharmacokinetic and toxicity descriptors for QSAR modeling. | Caco-2 Permeability Assay Kit (Corning), hERG Inhibition Patch Clamp Kit (Charles River). |

| Chemical Library for Training | Diverse set of compounds with known activity to build the initial QSAR model. | NIH Clinical Collection, Enamine HTS Library. |

| QSAR Software Suite | Integrated platform for descriptor calculation, model building, and validation. | Schrödinger Canvas, Open-Source: KNIME + RDKit/CDK extensions. |

Application Notes

Molecular Descriptors

Molecular descriptors are numerical representations of chemical structures, encoding physicochemical, topological, and quantum-chemical properties. In modern QSAR for drug activity prediction, they serve as the fundamental input variables. Recent trends emphasize the integration of 3D conformation-dependent descriptors and quantum mechanical descriptors (e.g., HOMO/LUMO energies, molecular electrostatic potentials) for modeling complex biological interactions. The shift towards AI-driven feature selection from ultra-high dimensional descriptor spaces (e.g., from deep molecular fingerprints) is critical for identifying the most predictive subsets and avoiding overfitting.

Biological Endpoints

Biological endpoints are quantitative measures of biological activity, serving as the target variable in QSAR models. Beyond traditional half-maximal inhibitory concentration (IC50), current research prioritizes more physiologically relevant endpoints. These include binding constants (Ki), cellular toxicity measures (CC50), in vivo pharmacokinetic parameters (bioavailability, clearance), and polypharmacology profiles (multi-target activity scores). The accurate, reproducible, and high-throughput experimental determination of these endpoints is paramount for model reliability. Standardization via guidelines from organizations like the OECD is essential for regulatory acceptance.

Mathematical Models

Mathematical models establish the quantitative relationship between descriptors and endpoints. The field has evolved from linear regression (e.g., Partial Least Squares, PLS) to sophisticated machine learning and deep learning algorithms. Ensemble methods like Random Forest and Gradient Boosting provide robust non-linear modeling. Deep neural networks, particularly graph neural networks (GNNs) that operate directly on molecular graphs, represent the state-of-the-art by learning task-specific descriptors. Model validation—using rigorous external test sets, cross-validation, and applicability domain analysis—remains the cornerstone for assessing predictive power and regulatory readiness.

Experimental Protocols

Protocol 1: High-Throughput Descriptor Calculation and Curation

Objective: To generate a standardized, curated set of molecular descriptors for a chemical library.

Materials: Chemical structures (SDF or SMILES format), high-performance computing cluster or cloud instance, descriptor calculation software (e.g., RDKit, PaDEL-Descriptor, Dragon).

Procedure:

- Structure Standardization: Input structures are standardized using RDKit: salts are removed, molecules are neutralized, and tautomers are enumerated to a canonical form.

- Descriptor Calculation: Execute batch descriptor calculation. A typical suite includes: 1D/2D descriptors (molecular weight, logP, topological indices), 3D descriptors (after conformational optimization using MMFF94), and fingerprint-based descriptors (Morgan fingerprints, MACCS keys).

- Data Curation: Remove descriptors with zero variance or >20% missing values. Impute remaining missing values using k-nearest neighbors (k=5) imputation. Apply range scaling (to [0,1]) or standardization (mean=0, std=1).

- Feature Selection: Perform univariate filtering (e.g., on correlation with the endpoint) to remove non-informative descriptors, followed by a multivariate method (e.g., recursive feature elimination) to reduce dimensionality to 150-300 highly relevant descriptors.

Protocol 2: Determining a Cellular IC50 Endpoint

Objective: To generate a dose-response curve and calculate the half-maximal inhibitory concentration for a compound in a cell-based assay.

Materials: Target cell line, compound dilutions in DMSO (<0.5% final), 96-well cell culture plates, appropriate cell viability/activity assay kit (e.g., MTT, CellTiter-Glo), plate reader.

Procedure:

- Cell Seeding: Seed cells in 96-well plates at an optimized density (e.g., 5,000 cells/well) in 100 µL of complete growth medium. Incubate for 24 hours.

- Compound Treatment: Prepare a 10-point, 1:3 serial dilution of the test compound in medium. Replace medium in cell plates with 100 µL of compound-containing medium. Include DMSO-only vehicle controls and positive control (e.g., staurosporine) wells.

- Incubation: Incubate plates for the determined assay duration (typically 48-72 hours) at 37°C, 5% CO2.

- Viability Measurement: Add 20 µL of MTT reagent (5 mg/mL) per well. Incubate for 4 hours. Carefully aspirate medium and add 100 µL of DMSO to solubilize formazan crystals. Shake gently for 10 minutes.

- Data Acquisition: Measure absorbance at 570 nm (reference 630 nm) using a plate reader.

- Analysis: Normalize absorbance values: $\%\,\text{Inhibition} = 100 \times \left(1 - \frac{\text{Abs}_{\text{sample}} - \text{Abs}_{\text{blank}}}{\text{Abs}_{\text{vehicle control}} - \text{Abs}_{\text{blank}}}\right)$. Fit normalized data to a four-parameter logistic (4PL) curve using software like GraphPad Prism to derive the IC50 value. Report biological replicates (n≥3).
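The analysis step can be illustrated with a small sketch. It normalizes absorbances to percent inhibition and then estimates the IC50 with a coarse grid search over a 4PL curve whose bottom and top are fixed at 0 and 100%. The grid search is a deliberately simple stand-in for the nonlinear regression that dedicated software performs, and the plate readings are simulated from a known curve rather than measured.

```python
def percent_inhibition(abs_sample, abs_vehicle, abs_blank):
    """% Inhibition = 100 * (1 - (sample - blank) / (vehicle - blank))."""
    return 100.0 * (1.0 - (abs_sample - abs_blank) / (abs_vehicle - abs_blank))


def four_pl(conc, bottom, top, ic50, hill):
    """4PL curve: inhibition rises from bottom to top as concentration increases."""
    return bottom + (top - bottom) / (1.0 + (ic50 / conc) ** hill)


def fit_ic50(concs, inhibition, bottom=0.0, top=100.0):
    """Least-squares grid search over log10(IC50) and the Hill slope."""
    best = None
    for i in range(-900, -399):  # log10(IC50 in mol/L) from -9.00 to -4.00
        ic50 = 10 ** (i / 100.0)
        for hill in (0.5, 0.75, 1.0, 1.25, 1.5, 2.0):
            sse = sum((four_pl(c, bottom, top, ic50, hill) - y) ** 2
                      for c, y in zip(concs, inhibition))
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]


# 10-point, 1:3 serial dilution starting at 10 µM (concentrations in mol/L)
concs = [1e-5 / 3 ** i for i in range(10)]
abs_blank, abs_vehicle = 0.05, 1.00

# Simulated readings from a known curve (IC50 = 100 nM, Hill slope = 1)
abs_sample = [abs_blank + (abs_vehicle - abs_blank)
              * (1 - four_pl(c, 0.0, 100.0, 1e-7, 1.0) / 100.0) for c in concs]

inhibition = [percent_inhibition(a, abs_vehicle, abs_blank) for a in abs_sample]
ic50, hill = fit_ic50(concs, inhibition)
print(f"IC50 = {ic50 * 1e9:.0f} nM, Hill = {hill}")
```

With real data the top and bottom plateaus should normally be fitted as well, which is why a proper nonlinear least-squares routine is preferable outside of a demonstration.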

Protocol 3: Developing and Validating a QSAR Model

Objective: To construct and rigorously validate a predictive QSAR model.

Materials: Curated dataset of molecular descriptors and corresponding biological endpoint values (from Protocols 1 & 2). Software: Python/R with scikit-learn, TensorFlow/PyTorch for deep learning.

Procedure:

- Data Splitting: Split the dataset into a training set (70-80%) and a hold-out test set (20-30%), either randomly or with a rational selection method (e.g., the Kennard-Stone algorithm), so that the chemical diversity of the dataset is represented in both sets.

- Model Training on Training Set:

- For a Random Forest model: Perform hyperparameter optimization (number of trees, max depth) via 5-fold cross-validation on the training set.

- Train the final model with the optimal parameters on the entire training set.

- Model Validation:

- Internal Validation: Report cross-validated metrics from the training phase: Q² (coefficient of determination of cross-validation), RMSEcv.

- External Validation: Predict the hold-out test set. Calculate key metrics: R²test, RMSEtest, and MAE (Mean Absolute Error).

- Applicability Domain (AD) Analysis: Define the model's AD using a leverage-based approach (Williams plot). Compounds with leverage above the critical hat value (h* = 3p/n, where p is the number of model parameters, i.e., descriptors plus the intercept, and n is the number of training samples) are considered outside the AD. Compounds with standardized residuals beyond ±3 standard deviation units are flagged as response outliers.

- Model Interpretation: For interpretable models (e.g., Random Forest), analyze feature importance to identify key physicochemical properties driving activity.
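The Kennard-Stone selection mentioned in the data-splitting step can be sketched in a few lines of NumPy. This is an illustrative implementation under simple assumptions (Euclidean distance on an already scaled descriptor matrix, full pairwise distance matrix held in memory); the function name and the random placeholder data are hypothetical.

```python
import numpy as np

def kennard_stone(X, n_select):
    """Return indices of n_select rows of X chosen by the Kennard-Stone rule."""
    X = np.asarray(X, dtype=float)
    # Full pairwise Euclidean distance matrix (fine for small datasets)
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    # Seed the selection with the two most distant samples
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    remaining = [k for k in range(len(X)) if k not in selected]
    while len(selected) < n_select and remaining:
        # Distance from each candidate to its nearest already-selected sample
        min_dist = dist[np.ix_(remaining, selected)].min(axis=1)
        # Add the candidate that is farthest from the selected set
        pick = remaining[int(np.argmax(min_dist))]
        selected.append(pick)
        remaining.remove(pick)
    return selected

rng = np.random.default_rng(0)
X_demo = rng.normal(size=(50, 5))            # placeholder descriptor matrix
train_idx = kennard_stone(X_demo, n_select=40)
test_idx = sorted(set(range(len(X_demo))) - set(train_idx))
```

Because the selected samples span the extremes of descriptor space, the leftover test compounds lie inside the training envelope, which can make external metrics look somewhat optimistic compared with a random or scaffold-based split.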

Data Tables

Table 1: Categories of Common Molecular Descriptors in Modern QSAR

| Category | Examples | Calculation Method/Software | Typical Count per Molecule |

|---|---|---|---|

| Constitutional | Molecular Weight, Atom Count, Bond Count | Direct count from formula/structure | 10-20 |

| Topological | Connectivity Indices (Chi), Wiener Index, Balaban J Index | Graph theory applied to molecular graph (RDKit, Dragon) | 50-100 |

| Electrostatic | Partial Charges, Dipole Moment, Molecular Polarizability | Quantum Mechanics (e.g., DFT via Gaussian) or empirical methods | 5-20 |

| Quantum Chemical | HOMO/LUMO Energy, HOMO-LUMO Gap, Fukui Indices | Quantum Mechanics (DFT, semi-empirical via MOPAC) | 5-15 |

| 3D | Principal Moments of Inertia, Radius of Gyration, 3D-MoRSE | After 3D conformation generation (RDKit, Open Babel) | 50-200 |

Table 2: Statistical Benchmarks for QSAR Model Validation

| Validation Type | Metric | Formula | Acceptability Threshold (Typical) |

|---|---|---|---|

| Internal (Cross-Validation) | Q² (or R²cv) | 1 - Σ(y_obs - y_pred(cv))² / Σ(y_obs - ȳ_train)² | > 0.5 (Good: > 0.6, Excellent: > 0.7) |

| | RMSEcv | √[ (1/n) * Σ(y_obs - y_pred(cv))² ] | As low as possible, relative to data range |

| External (Test Set) | R²test | 1 - Σ(y_obs(test) - y_pred(test))² / Σ(y_obs(test) - ȳ_test)² | > 0.6 (Should be close to R²train) |

| | RMSEtest | √[ (1/n_test) * Σ(y_obs(test) - y_pred(test))² ] | Should be comparable to RMSEcv |

| | MAE | (1/n_test) * Σ \|y_obs(test) - y_pred(test)\| | Never exceeds RMSE; a large gap between the two indicates a few large errors |

Visualizations

Title: QSAR Model Development Workflow

Title: From Molecular Interaction to Biological Endpoint

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for QSAR-Supporting Experiments

| Item/Category | Example Product/Source | Primary Function in Context |

|---|---|---|

| Chemical Library | Enamine REAL, Mcule, SPECS | Provides diverse, purchasable small molecules for virtual screening and experimental validation of QSAR predictions. |

| Descriptor Calculation Software | RDKit (Open Source), Dragon (Commercial) | Computes thousands of molecular descriptors and fingerprints from chemical structures, forming the basis of the model's input matrix (X). |

| Cell-Based Viability Assay | CellTiter-Glo (Promega), MTT Kit (Sigma-Aldrich) | Measures cellular metabolic activity or proliferation to determine the biological endpoint (e.g., IC50) for model training (Target Y). |

| High-Throughput Screening Plates | 384-well Cell Culture Microplates (Corning) | Enables efficient, parallel testing of compound dose-responses, generating the volume of endpoint data required for robust QSAR. |

| Machine Learning Platform | Python scikit-learn, TensorFlow/PyTorch | Provides algorithms (Random Forest, Neural Networks) to learn the mathematical relationship between descriptors (X) and endpoints (Y). |

| Quantum Chemistry Software | Gaussian, OpenMolcas, ORCA | Calculates high-level electronic structure descriptors (HOMO/LUMO, Fukui indices) for QSAR models requiring electronic interaction details. |

| Data Analysis & Visualization | GraphPad Prism, Jupyter Notebooks | Used for curve-fitting (dose-response), statistical analysis of assay results, and visualizing model performance metrics and chemical space. |

Application Notes and Protocols Context: This protocol details the standardized workflow for Quantitative Structure-Activity Relationship (QSAR) modeling, a cornerstone methodology in modern computational drug discovery for predicting biological activity from chemical structure.

Dataset Curation and Preparation

The foundation of a robust QSAR model is a high-quality, chemically diverse, and biologically relevant dataset.

Protocol 1.1: Compound Data Acquisition and Standardization Objective: To gather and standardize molecular structures and associated activity data from public or proprietary sources.

- Source Data: Retrieve structures (e.g., SMILES, SDF) and corresponding quantitative activity data (e.g., IC50, Ki) from curated databases (see Table 1).

- Standardization: Process all structures using cheminformatics toolkits (e.g., RDKit, OpenBabel). Steps include:

- Neutralization of salts.

- Generation of canonical tautomers.

- Removal of duplicates.

- Generation of 3D conformations and energy minimization (if required for 3D descriptors).

- Activity Data: Convert all activity values to a uniform scale (typically pIC50 = -log10(IC50 in M)). Log-transform values if necessary to achieve normal distribution.
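The standardization and unit-conversion steps above might look like the following with RDKit. This is a sketch, assuming RDKit's rdMolStandardize module is installed and that activities arrive as IC50 values in nM; the helper names and the three example records are hypothetical.

```python
import math
import statistics
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize

_fragment_chooser = rdMolStandardize.LargestFragmentChooser()
_uncharger = rdMolStandardize.Uncharger()
_tautomers = rdMolStandardize.TautomerEnumerator()

def standardize_smiles(smiles):
    """Return canonical SMILES of the neutral parent structure, or None if unparsable."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None
    mol = rdMolStandardize.Cleanup(mol)        # sanitize and normalize functional groups
    mol = _fragment_chooser.choose(mol)        # keep the largest fragment (drops counter-ions)
    mol = _uncharger.uncharge(mol)             # neutralize charges where possible
    mol = _tautomers.Canonicalize(mol)         # pick a canonical tautomer
    return Chem.MolToSmiles(mol)

def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L), with the input given in nM."""
    return 9.0 - math.log10(ic50_nm)

def curate(records):
    """records: iterable of (smiles, ic50_nM). Returns {standardized SMILES: median pIC50}."""
    grouped = {}
    for smiles, ic50_nm in records:
        std = standardize_smiles(smiles)
        if std is not None:
            grouped.setdefault(std, []).append(pic50_from_nm(ic50_nm))
    return {smi: statistics.median(vals) for smi, vals in grouped.items()}

# Hypothetical input: a sodium salt, its free acid, and an invalid entry
dataset = curate([("CC(=O)[O-].[Na+]", 1000.0), ("CC(=O)O", 2000.0), ("not_a_smiles", 50.0)])
```

Merging duplicates by median after standardization means salts and free forms of the same parent collapse onto one record rather than appearing as conflicting data points.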

Protocol 1.2: Chemical Space Analysis and Clustering for Dataset Division Objective: To partition the standardized dataset into representative training and test sets.

- Descriptor Calculation: Compute a set of molecular descriptors (e.g., topological, electronic, physicochemical) for all compounds.

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to the descriptor matrix to reduce to 2-3 principal components (PCs).

- Clustering: Perform clustering (e.g., k-Means, Butina) on the PCs to group structurally similar compounds.

- Stratified Split: Allocate compounds from each cluster to training (~70-80%) and test (~20-30%) sets to ensure both sets span the entire chemical space and activity range. A completely independent external validation set should be held back from the beginning if data permits.
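A cluster-stratified split along these lines can be assembled from scikit-learn components. The sketch below assumes k-Means as the clustering method and a random placeholder descriptor matrix; the number of clusters and components are arbitrary illustration values.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
X = rng.normal(size=(200, 30))               # placeholder descriptor matrix

# Scale, project to 3 principal components, then cluster in PC space
X_scaled = StandardScaler().fit_transform(X)
pcs = PCA(n_components=3, random_state=42).fit_transform(X_scaled)
clusters = KMeans(n_clusters=5, n_init=10, random_state=42).fit_predict(pcs)

# Stratify on cluster label so each cluster contributes ~20% of its members to the test set
indices = np.arange(len(X))
train_idx, test_idx = train_test_split(
    indices, test_size=0.2, stratify=clusters, random_state=42
)
```

This stratifies on chemical-space cluster only. To also balance the activity range, one option is to stratify on a combined label (cluster plus an activity bin), provided every combined group still has at least two members.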

Table 1: Common Public Data Sources for QSAR Modeling

| Database Name | Primary Content | Typical Use Case | Access |

|---|---|---|---|

| ChEMBL | Bioactivity data for drug-like molecules, targets, and assays. | Building broad target- or pathway-focused models. | https://www.ebi.ac.uk/chembl/ |

| PubChem | Chemical structures, bioassays, and biological test results. | Large-scale data mining and validation. | https://pubchem.ncbi.nlm.nih.gov/ |

| BindingDB | Measured binding affinities for protein-ligand complexes. | Building high-accuracy binding affinity prediction models. | https://www.bindingdb.org/ |

Diagram 1: Dataset curation and splitting workflow.

Descriptor Calculation and Feature Selection

Molecular descriptors quantify chemical structure, while feature selection identifies the most relevant ones for modeling.

Protocol 2.1: Comprehensive Descriptor Calculation Objective: To generate numerical representations of chemical structures.

- Tool Selection: Use software like RDKit, PaDEL-Descriptor, or Dragon.

- Descriptor Types: Calculate a diverse set:

- 1D/2D: Molecular weight, logP, topological indices, fingerprint bits (ECFP, MACCS).

- 3D (optional): WHIM, GETAWAY descriptors (require optimized 3D conformations).

- Output: Generate a matrix (compounds x descriptors). Pre-process: remove constant/near-constant columns, impute missing values (rarely), and scale features (e.g., standardization).
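The pre-processing in the output step maps naturally onto a scikit-learn pipeline. This is a minimal sketch with a synthetic matrix containing one constant column and one missing value; median imputation and a zero-variance threshold are illustrative defaults rather than recommendations from the protocol.

```python
import numpy as np
from sklearn.feature_selection import VarianceThreshold
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X_raw = rng.normal(size=(100, 6))            # placeholder compounds x descriptors matrix
X_raw[:, 2] = 1.0                            # a constant descriptor column
X_raw[5, 0] = np.nan                         # one missing value

preprocess = Pipeline([
    ("impute", SimpleImputer(strategy="median")),         # fill the rare missing values
    ("drop_constant", VarianceThreshold(threshold=0.0)),  # remove zero-variance columns
    ("scale", StandardScaler()),                          # zero mean, unit variance
])
X_ready = preprocess.fit_transform(X_raw)
```

In a real workflow the pipeline would be fitted on the training set only and then applied to the test set with `preprocess.transform`, so that no test-set statistics leak into the model.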

Protocol 2.2: Recursive Feature Elimination (RFE) for Descriptor Selection Objective: To reduce dimensionality and mitigate overfitting by selecting the most predictive descriptors.

- Base Model: Choose an estimator (e.g., Random Forest, SVM).

- Ranking: Train the model on all features. Rank features by importance (e.g., Gini importance for RF, coefficients for linear models).

- Recursive Elimination: Remove the least important feature(s). Retrain the model. Repeat until a predefined number of features remains.

- Validation: Use cross-validated performance (e.g., R², RMSE) on the training set to determine the optimal number of features. The feature subset yielding the best CV performance is selected.
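scikit-learn bundles the elimination loop and the cross-validated choice of feature count in `RFECV`. The following sketch assumes a Random Forest base estimator and synthetic regression data from `make_regression`; the step size and minimum feature count are arbitrary.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import RFECV

# Synthetic stand-in for a training descriptor matrix and activity vector
X, y = make_regression(n_samples=150, n_features=20, n_informative=5,
                       noise=5.0, random_state=0)

selector = RFECV(
    estimator=RandomForestRegressor(n_estimators=50, random_state=0),
    step=2,                      # drop two lowest-ranked features per iteration
    cv=5,                        # 5-fold CV decides how many features to keep
    scoring="r2",
    min_features_to_select=3,
)
selector.fit(X, y)

selected = np.flatnonzero(selector.support_)   # column indices of retained descriptors
print(f"Kept {selector.n_features_} of {X.shape[1]} descriptors: {selected.tolist()}")
```

Running the selection inside the training set only, as here, keeps the external test set untouched by the feature-selection step.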

Model Building, Validation, and Applicability Domain

This phase involves training predictive algorithms and rigorously evaluating their reliability and scope.

Protocol 3.1: Model Training with Cross-Validation Objective: To build and tune a predictive QSAR model.

- Algorithm Selection: Common algorithms include Random Forest (RF), Support Vector Regression (SVR), and Partial Least Squares (PLS).

- Hyperparameter Tuning: Use grid or random search within k-fold cross-validation (e.g., 5-fold CV) on the training set to optimize parameters (e.g., n_estimators for RF, C and gamma for SVR).

- Model Training: Train the final model with the optimal hyperparameters on the entire training set.
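The tuning and final-fit steps can be expressed with `GridSearchCV`, which refits the best configuration on the whole training set by default. This sketch uses synthetic data and a deliberately small, illustrative parameter grid.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic stand-in for descriptors (X) and activities (y)
X, y = make_regression(n_samples=200, n_features=15, n_informative=6,
                       noise=10.0, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=1)

param_grid = {"n_estimators": [50, 200], "max_depth": [None, 10]}
search = GridSearchCV(
    RandomForestRegressor(random_state=1),
    param_grid,
    cv=5,                 # 5-fold CV on the training set only
    scoring="r2",
)
search.fit(X_train, y_train)

final_model = search.best_estimator_   # already refit on the entire training set
q2_cv = search.best_score_             # mean per-fold R2 of the best configuration
print(search.best_params_, round(q2_cv, 3))
```

Note that `best_score_` is the average of per-fold R² values, which approximates but is not identical to a Q² computed from pooled out-of-fold predictions.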

Protocol 3.2: Rigorous Model Validation Objective: To assess the model's predictive performance and statistical robustness.

- Internal Validation: Report cross-validated metrics (Q², RMSEcv) from the training phase.

- External Test Set Validation: Use the held-out test set. Key metrics include:

- R² (coefficient of determination)

- RMSE (Root Mean Square Error)

- MAE (Mean Absolute Error)

- Y-Randomization Test: Shuffle activity values, rebuild the model. A significant drop in performance confirms the model is not due to chance correlation.
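A Y-randomization check can be sketched as a loop that repeats cross-validation on permuted activities. The example below assumes synthetic data and ten permutations; in practice more permutations give a better picture of the chance-level distribution.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import cross_val_score

X, y = make_regression(n_samples=150, n_features=10, n_informative=5,
                       noise=5.0, random_state=0)
model = RandomForestRegressor(n_estimators=100, random_state=0)

# Cross-validated score with the true activity values
q2_true = cross_val_score(model, X, y, cv=5, scoring="r2").mean()

# Scores after breaking the structure-activity link by shuffling y
rng = np.random.default_rng(0)
q2_shuffled = []
for _ in range(10):
    y_perm = rng.permutation(y)
    q2_shuffled.append(cross_val_score(model, X, y_perm, cv=5, scoring="r2").mean())

print(f"Q2 (true y): {q2_true:.2f}; Q2 (shuffled y), max: {max(q2_shuffled):.2f}")
```

scikit-learn also offers `sklearn.model_selection.permutation_test_score`, which wraps the same idea and returns an empirical p-value.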

Protocol 3.3: Defining the Applicability Domain (AD) Objective: To identify the chemical space region where the model's predictions are reliable.

- Method: Leverage the training set descriptors. Common approaches include:

- Leverage (Hat Matrix): Identifies compounds structurally extreme relative to the training set.

- Distance-Based: Calculates the Euclidean or Mahalanobis distance to the k-nearest neighbors in the training set.

- Threshold: Set a cutoff (e.g., 95% confidence interval). Predictions for compounds outside the AD should be flagged as unreliable.
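For the leverage approach, the hat values of query compounds follow from the training descriptor matrix alone. The sketch below is illustrative: it assumes an intercept column is included and uses the commonly quoted warning threshold h* = 3(p + 1)/n, which is one convention among several; the data are synthetic.

```python
import numpy as np

def leverages(X_train, X_query):
    """h = x (X'X)^-1 x' for each query row, with an intercept column added."""
    Xt = np.column_stack([np.ones(len(X_train)), X_train])
    Xq = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(Xt.T @ Xt)       # pseudo-inverse tolerates collinear descriptors
    return np.einsum("ij,jk,ik->i", Xq, xtx_inv, Xq)

rng = np.random.default_rng(0)
X_train = rng.normal(size=(100, 4))           # placeholder scaled training descriptors
X_new = np.vstack([np.zeros(4),               # a compound at the centre of the training space
                   np.full(4, 8.0)])          # a compound far outside it

n, p = X_train.shape
h_star = 3 * (p + 1) / n                      # assumed warning leverage
h_new = leverages(X_train, X_new)
inside_ad = h_new <= h_star
print(dict(zip(["central", "extreme"], inside_ad.tolist())))
```

A useful sanity check is that the training-set leverages sum to the number of fitted parameters (p + 1) when the descriptor matrix has full rank.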

Table 2: Key Validation Metrics and Their Interpretation

| Metric | Formula | Ideal Value | Interpretation |

|---|---|---|---|

| R² | 1 - (SS_res/SS_tot) | Close to 1 | Proportion of variance explained by the model. |

| Q² (CV) | 1 - (PRESS/SS_tot) | > 0.5 | Predictive ability estimated via cross-validation. |

| RMSE | √[ Σ(Pred_i - Exp_i)² / n ] | As low as possible | Average prediction error in activity units. |

| MAE | Σ \|Pred_i - Exp_i\| / n | As low as possible | Robust average error, less sensitive to outliers than RMSE. |
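As a worked example of the formulas in the table, the metrics can be computed directly with NumPy. The function name and the four observed/predicted values are made up for illustration; the optional `ref_mean` argument is an assumption that lets SS_tot be centred on the training-set mean when a Q²-style statistic is wanted.

```python
import numpy as np

def regression_metrics(y_obs, y_pred, ref_mean=None):
    """Return R2 (or Q2 if y_pred are out-of-fold predictions), RMSE and MAE."""
    y_obs = np.asarray(y_obs, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_obs - y_pred
    center = y_obs.mean() if ref_mean is None else ref_mean
    ss_res = np.sum(resid ** 2)                 # PRESS when predictions come from CV
    ss_tot = np.sum((y_obs - center) ** 2)
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": float(np.sqrt(np.mean(resid ** 2))),
        "mae": float(np.mean(np.abs(resid))),
    }

# Hypothetical pIC50 values: residuals are -0.5, 0, 0.5, 0
metrics = regression_metrics([5.0, 6.0, 7.0, 8.0], [5.5, 6.0, 6.5, 8.0])
print(metrics)
```

Here SS_res = 0.5 and SS_tot = 5.0, giving R² = 0.9, RMSE = √0.125 ≈ 0.354 and MAE = 0.25.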

Diagram 2: Model building, validation, and AD definition.

Model Deployment and Interpretation

Deploying the model for virtual screening and interpreting it for chemical insight are the final, critical steps.

Protocol 4.1: Virtual Screening Pipeline Deployment Objective: To apply the validated QSAR model to screen new, untested compounds.

- Pipeline Construction: Automate the workflow: Standardization → Descriptor Calculation (using the same selected features) → Prediction → AD Assessment.

- Implementation: Deploy as a script (Python/R) or a web application (e.g., using Flask/Streamlit). Ensure it logs predictions and AD flags.

- Screening: Apply to in-house compound libraries or virtual enumerations. Prioritize compounds with high predicted activity and within the AD for synthesis and testing.

Protocol 4.2: Model Interpretation via Feature Importance Objective: To extract chemically meaningful insights from the model.

- Global Importance: For tree-based models (RF), analyze mean decrease in impurity. For linear models, analyze coefficient magnitudes.

- Local Interpretation: For specific predictions, use methods like SHAP (SHapley Additive exPlanations) to identify which structural features contributed most to the prediction.

- Chemical Insight: Map important descriptors back to structural motifs (e.g., high logP → lipophilic groups, presence of a specific fingerprint → a key scaffold).
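Global importance analysis can be sketched with scikit-learn alone. The example below compares mean decrease in impurity with permutation importance on held-out data; permutation importance is used here as a lightweight stand-in and is not the SHAP method named above. The data are synthetic, with only the first two descriptors carrying signal, and the descriptor names are placeholders.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
names = [f"desc_{i}" for i in range(6)]                 # placeholder descriptor names
X = rng.normal(size=(300, 6))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(scale=0.3, size=300)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)
model = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_tr, y_tr)

# Mean decrease in impurity (computed on the training data)
mdi = model.feature_importances_
# Permutation importance (computed on held-out data)
perm = permutation_importance(model, X_te, y_te, n_repeats=10, random_state=0).importances_mean

top_mdi = [names[i] for i in np.argsort(mdi)[::-1][:2]]
top_perm = [names[i] for i in np.argsort(perm)[::-1][:2]]
print("Top by MDI:", top_mdi, "| Top by permutation:", top_perm)
```

Impurity-based importance can be inflated for high-cardinality or correlated descriptors, so agreement between the two rankings is a useful consistency check before drawing chemical conclusions.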

The Scientist's Toolkit: Essential Research Reagents & Software for QSAR

| Item / Solution | Category | Function / Purpose |

|---|---|---|

| RDKit | Open-Source Cheminformatics | Core library for molecule standardization, descriptor calculation, fingerprint generation, and basic modeling. |

| PaDEL-Descriptor | Descriptor Calculation Software | Calculates a comprehensive set (1D, 2D, 3D) of molecular descriptors and fingerprints from structures. |

| scikit-learn | Machine Learning Library | Provides algorithms (RF, SVR, PLS), feature selection tools, cross-validation, and metrics for model building. |

| Jupyter Notebook | Development Environment | Interactive platform for prototyping workflows, analyzing data, and visualizing results. |

| ChEMBL Database | Bioactivity Data | Primary public source for curated, target-associated bioactivity data for model training. |

| Streamlit / Flask | Web Application Framework | Used to create simple, interactive web interfaces for deploying and sharing validated QSAR models. |

| SHAP Library | Model Interpretation | Explains the output of any machine learning model by attributing importance to each input feature. |

| Python/R | Programming Language | The foundational language for scripting the entire QSAR workflow and data analysis. |

Why QSAR? Benefits in Cost, Speed, and Ethical Drug Screening

Within the broader thesis on QSAR modeling for drug activity prediction, this application note delineates the pragmatic rationale for employing Quantitative Structure-Activity Relationship (QSAR) methodologies. QSAR serves as a cornerstone in computer-aided drug design (CADD), enabling the prediction of biological activity from molecular descriptors without immediate recourse to physical or biological assays. This document details the quantifiable advantages, practical protocols, and essential toolkit components for implementing QSAR in early-stage discovery.

Quantitative Benefits Analysis

The adoption of QSAR modeling confers significant, measurable advantages across three critical domains: financial cost, project timeline, and ethical considerations. The following table summarizes representative quantitative data derived from recent industry analyses and peer-reviewed studies.

Table 1: Comparative Analysis of Traditional HTS vs. QSAR-Prioritized Screening

| Metric | Traditional High-Throughput Screening (HTS) | QSAR-Prioritized Virtual Screening | Relative Improvement |

|---|---|---|---|

| Average Cost per Compound Screened | $0.10 - $1.00 (in vitro) | ~$0.001 - $0.01 (in silico) | ~10-1000x cost reduction |

| Time for Primary Screen (1M compounds) | 4-8 weeks (assay-dependent) | 1-7 days (compute-dependent) | ~4-56x faster |

| Animal Use Reduction (Early Lead ID) | Baseline (in vivo confirmation) | 40-70% reduction in initial animal studies | Significant decrease |

| Hit Rate Enrichment | 0.01% - 0.1% (typical HTS) | 5% - 20% (with robust QSAR) | 50-2000x enrichment |

| Resource Footprint | High (lab space, reagents, waste) | Low (computational cluster) | Drastically lower |

Detailed Application Notes

Application Note 1: Cost-Effective Virtual Screening for Hit Identification

Objective: To rapidly identify potential hit compounds from a commercial library of 500,000 molecules against a novel kinase target, minimizing upfront reagent and compound acquisition costs. QSAR Model Basis: A ligand-based approach using a published curated dataset of 2,500 kinase inhibitors with pIC50 values. Molecular descriptors (ECFP6 fingerprints, MOE 2D descriptors) were used to train a Random Forest model validated through 5-fold cross-validation (Q² = 0.72, R²ext = 0.68). Protocol Workflow: See Figure 1. Outcome: The top 5,000 virtual hits (1% of the library) were procured for physical testing. Experimental validation yielded a confirmed hit rate of 12%, compared to an estimated 0.15% from blind screening, resulting in a projected cost saving of >85% for this phase.

Application Note 2: ADMET Profiling for Early-Stage Attrition Mitigation

Objective: Predict Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties to eliminate candidates likely to fail in later, more costly development stages. QSAR Model Basis: Ensemble of multiple models (e.g., for hERG inhibition, CYP450 metabolism, Caco-2 permeability) built using publicly available datasets (e.g., ChEMBL, PubChem). Each model uses optimized descriptor sets (e.g., RDKit descriptors, molecular weight, logP) and algorithms (e.g., SVM, XGBoost). Protocol Workflow: See Figure 2. Outcome: Application to an internal lead series of 200 analogs flagged 35% with high predicted hERG risk and 20% with poor predicted bioavailability. This enabled synthetic efforts to focus on the remaining 45% of the series, avoiding costly late-stage preclinical toxicity failures.

Application Note 3: Ethical Screening via In Silico Toxicity Prediction

Objective: Reduce animal usage in early toxicity screening by employing QSAR models to prioritize compounds for subsequent in vivo testing. QSAR Model Basis: Use of OECD QSAR Toolbox or proprietary models for predicting endpoints like acute oral toxicity (LD50), skin sensitization, and mutagenicity (Ames test) based on structural alerts and read-across methodologies. Protocol Workflow: See Figure 3. Outcome: In a pilot project, applying in silico toxicity filters allowed 60% of candidate molecules to be deprioritized based on predicted toxicity, reducing the number of compounds requiring initial in vivo acute toxicity studies by a corresponding margin, aligning with the 3Rs principles (Replacement, Reduction, Refinement).

Experimental Protocols

Protocol 1: Development and Validation of a QSAR Classification Model for Activity Prediction

- Dataset Curation: From sources like ChEMBL, extract compounds with consistent bioactivity data (e.g., IC50 < 10 µM = "Active", IC50 ≥ 10 µM = "Inactive"). Apply rigorous data cleaning: remove duplicates, inorganic salts, and compounds with ambiguous activity.

- Descriptor Calculation: Use cheminformatics software (e.g., RDKit, PaDEL-Descriptor) to compute a standardized set of 200+ 1D and 2D molecular descriptors (constitutional, topological, electronic). Standardize and normalize descriptor values.

- Dataset Division: Randomly split data into training (70%) and external test (30%) sets. Ensure representative distribution of active/inactive compounds in each set.

- Model Training: Employ a machine learning algorithm (e.g., Support Vector Machine with RBF kernel). Optimize hyperparameters (e.g., C, gamma) via grid search with 5-fold cross-validation on the training set.

- Model Validation: Assess using cross-validation metrics (Accuracy, Sensitivity, Specificity, MCC) and, critically, performance on the held-out external test set. Apply Y-randomization to confirm model robustness.

- Applicability Domain (AD) Definition: Define the chemical space of the model using methods like leverage or distance-based approaches (e.g., Euclidean distance in principal component space). Predictions for compounds outside the AD should be flagged as unreliable.
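The training and validation steps of this classification protocol might be sketched as follows. The example assumes synthetic data from `make_classification`, a small illustrative grid, and MCC as the tuning criterion; none of these choices are prescribed by the protocol.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import confusion_matrix, make_scorer, matthews_corrcoef
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for descriptors (X) and active/inactive labels (y)
X, y = make_classification(n_samples=300, n_features=20, n_informative=6, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, stratify=y, random_state=0)

# Scaling lives inside the pipeline so it is refit within every CV fold
pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
grid = {"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01, 0.1]}
search = GridSearchCV(pipe, grid, cv=5, scoring=make_scorer(matthews_corrcoef))
search.fit(X_tr, y_tr)

# External test-set evaluation
y_pred = search.predict(X_te)
tn, fp, fn, tp = confusion_matrix(y_te, y_pred).ravel()
sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
mcc = matthews_corrcoef(y_te, y_pred)
print(f"Sensitivity {sensitivity:.2f}, specificity {specificity:.2f}, MCC {mcc:.2f}")
```

MCC is a reasonable tuning target for bioactivity data because it stays informative when actives and inactives are imbalanced, where plain accuracy can be misleading.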

Protocol 2: Implementing a Virtual Screening Workflow with a Validated QSAR Model

- Compound Library Preparation: Obtain a virtual compound library (e.g., ZINC, Enamine REAL). Filter using Lipinski's Rule of Five and other lead-like properties. Generate tautomers and protonation states at physiological pH (e.g., using MOE or OpenBabel).

- Descriptor Generation: Calculate the exact same descriptor set used in the trained QSAR model (Protocol 1, Step 2) for all library compounds.

- Activity Prediction & Ranking: Apply the validated model to predict activity class or continuous value for each compound. Rank the entire library by predicted activity (e.g., highest pIC50) or probability of being "Active".

- Applicability Domain Filter: Remove all ranked compounds that fall outside the model's predefined Applicability Domain.

- Visual Inspection & Clustering: Perform chemical clustering on the top-ranked compounds (e.g., 1000) to ensure structural diversity. Visually inspect top representatives from each cluster for undesirable structural features.

- Procurement & Testing: Select a diverse subset (e.g., 100-500) of top-ranked, AD-compliant compounds for purchase and subsequent in vitro biological assay.
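The ranking, AD filtering, and diversity-selection steps reduce to a few table operations once predictions exist. The sketch below uses pandas with a tiny made-up results table; the column names (`pred_pic50`, `in_ad`, `cluster`) are hypothetical and stand for outputs of the earlier steps.

```python
import pandas as pd

# Hypothetical screening output: prediction, AD flag and cluster label per compound
library = pd.DataFrame({
    "compound_id": ["A", "B", "C", "D", "E", "F"],
    "pred_pic50": [7.9, 7.5, 7.2, 6.8, 6.1, 5.0],
    "in_ad": [True, False, True, True, True, True],
    "cluster": [0, 0, 0, 1, 1, 2],
})

# Drop compounds outside the applicability domain, then rank by predicted activity
ranked = library[library["in_ad"]].sort_values("pred_pic50", ascending=False)

# Keep the best-ranked representative of each cluster for a diverse purchase list
picks = ranked.groupby("cluster", sort=False).head(1)
print(picks["compound_id"].tolist())
```

Raising the argument of `head` selects more than one representative per cluster when the purchase budget allows.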

Diagrams

Diagram 1: QSAR-Prioritized Virtual Screening Workflow

Diagram 2: ADMET Prediction Workflow for Lead Optimization

Diagram 3: QSAR in Ethical Screening (3Rs Integration)

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Software & Data Resources for QSAR Modeling

| Item Name | Category | Primary Function | Key Features / Notes |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Calculation of molecular descriptors, fingerprint generation, and basic QSAR model building. | Python-based, widely used, integrates with scikit-learn for ML. |

| PaDEL-Descriptor | Software | Calculates 1D, 2D, and 3D molecular descriptors and fingerprints for large datasets. | Standalone and GUI versions, can process thousands of structures quickly. |

| KNIME Analytics Platform | Data Analytics Workflow | Visual workflow creation for data integration, preprocessing, model training, and validation. | Extensive cheminformatics nodes (RDKit, CDK), no-code/low-code environment. |

| ChEMBL Database | Public Bioactivity Data | Source of curated, standardized bioactivity data for millions of compounds against thousands of targets. | Essential for training set compilation; includes ADMET data. |

| OECD QSAR Toolbox | Software | Supports (Q)SAR assessment by filling data gaps for chemical hazard assessment via read-across and profiling. | Critical for regulatory-focused toxicity prediction and implementing grouping approaches. |

| scikit-learn (sklearn) | Python ML Library | Provides a uniform interface for a wide range of machine learning algorithms for classification and regression. | Essential for building Random Forest, SVM, and other models; integrates with RDKit descriptors. |

| MOE (Molecular Operating Environment) | Commercial Software Suite | Integrated platform for computational chemistry, molecular modeling, and QSAR/QSPR studies. | Comprehensive descriptor calculation, robust SAR analysis tools, and strong visualization. |

| ZINC/Enamine REAL | Virtual Compound Libraries | Publicly (ZINC) and commercially (Enamine) available libraries for virtual screening. | Provide ready-to-dock/directly purchasable compounds in 2D/3D formats. |

Building a QSAR Model: Step-by-Step Methods and Real-World Applications

Within a thesis focused on Quantitative Structure-Activity Relationship (QSAR) modeling for drug activity prediction, the quality and relevance of the underlying bioactivity data are paramount. This application note provides detailed protocols for sourcing and curating high-quality bioactivity data from major public repositories, specifically ChEMBL and PubChem, to construct robust datasets for QSAR model development and validation.

Public databases provide vast amounts of structured bioactivity data. For QSAR, selecting databases with standardized activity measurements, well-annotated targets, and curated chemical structures is critical.

Table 1: Comparison of Key Bioactivity Databases for QSAR Research

| Database | Primary Focus | Key Data Types | Size (Approx.) | Strengths for QSAR | Primary Access Method |

|---|---|---|---|---|---|

| ChEMBL | Drug discovery, bioactive molecules | IC50, Ki, EC50, Kd, Potency | >2.4M compounds, >17M bioactivities | Manually curated, target-oriented, detailed assay info | REST API, Web Interface, Downloads |

| PubChem | Chemical substance information | BioAssay results, LC50, GI50, IC50 | >111M compounds, >1.2M biological assays | Extremely broad, includes HTS data, linked to literature | REST API, FTP, Web Interface |

| BindingDB | Protein-ligand binding affinities | Kd, Ki, IC50 | ~2.5M binding data | Focus on measured binding affinities, detailed protein info | Web Interface, Downloads |

| PDBbind | Protein-ligand complexes in PDB | Binding affinity (Kd, Ki, IC50) | ~24,000 complexes | 3D structural context with affinity data | Manual Download |

Protocol: Systematic Data Extraction from ChEMBL for a Target Class

This protocol details steps to extract a high-confidence dataset for Kinase inhibitors from ChEMBL, suitable for building a QSAR model.

Materials and Software Requirements

- Computer with internet access.

- ChEMBL web interface (https://www.ebi.ac.uk/chembl/) or ChEMBL API (via Python/R packages).

- Data processing software (e.g., Python with pandas, RDKit; or KNIME, Excel).

- Chemical structure standardization tool (e.g., RDKit, Open Babel).

Procedure

Step 1: Define Data Scope and Quality Filters.

- Target Selection: Navigate to "Targets" and search for "Kinase". Filter by organism ("Homo sapiens") and target type ("Single protein").

- Bioactivity Criteria: Select only "IC50", "Ki", or "Kd" as activity types. Set a confidence score filter (e.g., ChEMBL confidence score = 9, indicating a direct single protein target).

- Assay Criteria: Prefer binding assays (assay type "B"; functional assays are coded "F") and measurements with an exact relation (standard relation "=").

Step 2: Execute Search and Bulk Download.

- Using the web interface, apply filters and download the results as a CSV file.

- Alternative API Method (Python):
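One way to script the query is through the ChEMBL REST interface using only the Python standard library. This is a sketch under stated assumptions: the endpoint path, filter names (`target_chembl_id`, `standard_type`, `standard_relation`, `assay_type`, `pchembl_value__isnull`) and the `activities` / `page_meta` response keys reflect the public ChEMBL web services as commonly documented and should be checked against the current API documentation; CHEMBL203 is used purely as an example target ID.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

BASE = "https://www.ebi.ac.uk/chembl/api/data/activity.json"

def build_activity_url(target_chembl_id, standard_type="IC50", limit=1000, offset=0):
    """Build a filtered activity query URL (filter names are assumptions, see lead-in)."""
    params = {
        "target_chembl_id": target_chembl_id,
        "standard_type": standard_type,
        "standard_relation": "=",          # exact measurements only
        "assay_type": "B",                 # binding assays in ChEMBL's coding
        "pchembl_value__isnull": "false",  # require a pChEMBL value
        "limit": limit,
        "offset": offset,
    }
    return f"{BASE}?{urlencode(params)}"

def fetch_activities(target_chembl_id, standard_type="IC50", page_size=1000):
    """Page through all matching records (requires network access)."""
    records, offset = [], 0
    while True:
        url = build_activity_url(target_chembl_id, standard_type, page_size, offset)
        with urlopen(url) as resp:
            page = json.load(resp)
        records.extend(page["activities"])
        if page["page_meta"]["next"] is None:
            break
        offset += page_size
    return records

example_url = build_activity_url("CHEMBL203")
# records = fetch_activities("CHEMBL203")   # uncomment to download
```

The official `chembl_webresource_client` Python package wraps the same service and handles paging automatically, which is usually more convenient for larger extractions.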

Step 3: Data Curation and Standardization.

- Remove Duplicates: Aggregate multiple measurements for the same compound-target pair using the median pChEMBL value (negative log of the molar activity value).

- Standardize Structures: Use RDKit to canonicalize SMILES, remove salts, and neutralize charges to ensure consistent molecular representation.

- Apply Activity Threshold: Define active/inactive labels (e.g., compounds with a pChEMBL value >= 6.0, i.e., IC50/Ki <= 1 µM, as active and all others as inactive).

Step 4: Dataset Finalization.

- Create a final table with columns: Standard_SMILES, Target_CHEMBL_ID, pChEMBL_Value, Activity_Label (Active/Inactive).

- Split the data into training (~80%) and test (~20%) sets, ensuring no identical compounds are present in both sets (scaffold-based splitting is recommended for QSAR).
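The aggregation, labelling, and scaffold-aware split in Steps 3 and 4 can be sketched with pandas and scikit-learn. The table below is made up, and the `scaffold` column is assumed to have been computed beforehand (for example as a Bemis-Murcko scaffold SMILES); column names are hypothetical.

```python
import pandas as pd
from sklearn.model_selection import GroupShuffleSplit

# Hypothetical raw extract: repeated measurements per compound, scaffold precomputed
raw = pd.DataFrame({
    "compound": ["cmpd_1", "cmpd_1", "cmpd_1", "cmpd_2", "cmpd_3",
                 "cmpd_3", "cmpd_4", "cmpd_5", "cmpd_6", "cmpd_7"],
    "scaffold": ["scaf_A", "scaf_A", "scaf_A", "scaf_A", "scaf_B",
                 "scaf_B", "scaf_B", "scaf_C", "scaf_D", "scaf_E"],
    "pchembl_value": [6.2, 6.6, 7.0, 5.1, 8.0, 7.0, 5.9, 6.0, 4.5, 6.9],
})

# Collapse replicate measurements to one median value per compound
curated = raw.groupby(["compound", "scaffold"], as_index=False)["pchembl_value"].median()

# Binary label: pChEMBL >= 6.0 corresponds to 1 uM or better
curated["active"] = (curated["pchembl_value"] >= 6.0).astype(int)

# Scaffold-grouped split: all compounds sharing a scaffold land in the same set
splitter = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
train_i, test_i = next(splitter.split(curated, groups=curated["scaffold"]))
train_set, test_set = curated.iloc[train_i], curated.iloc[test_i]
```

Grouping by scaffold is stricter than only excluding identical compounds: it prevents close analogs of a training compound from appearing in the test set, which gives a more honest estimate of performance on new chemotypes.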

Protocol: Aggregating and Curating Data from PubChem BioAssay

This protocol describes extracting bioactivity data from a specific PubChem AID (Assay ID) related to cytotoxicity.

Materials and Software Requirements

- Computer with internet access.

- PubChem Power User Gateway (PUG) REST API or web interface.

- Data processing software (as above).

Procedure

Step 1: Identify Relevant Assay (AID).

- Search PubChem BioAssay (https://pubchem.ncbi.nlm.nih.gov/) using keywords, e.g., "cancer cell line cytotoxicity".

- Select an assay with a clear summary and sufficient data points (e.g., AID-1259342). Review the assay description for protocol details.

Step 2: Download BioAssay Data.

- Via the web interface: On the assay page, download the CSV data file.

- Alternative API Method:
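A scripted download can go through PUG REST. The sketch below only builds the request URLs and leaves the actual download to the caller; the URL patterns follow the general PUG REST layout (`/assay/aid/<AID>/CSV` and `/compound/cid/<CIDs>/property/<name>/CSV`), and the property name `CanonicalSMILES` is an assumption that may differ between PubChem API versions.

```python
PUG = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"

def assay_csv_url(aid):
    """URL for the data table of one BioAssay (AID) in CSV form."""
    return f"{PUG}/assay/aid/{int(aid)}/CSV"

def smiles_csv_url(cids, prop="CanonicalSMILES"):
    """URL returning a SMILES property for a batch of CIDs (property name is an assumption)."""
    cid_list = ",".join(str(int(c)) for c in cids)
    return f"{PUG}/compound/cid/{cid_list}/property/{prop}/CSV"

assay_url = assay_csv_url(1259342)
structures_url = smiles_csv_url([2244, 3672])   # example CIDs

# With network access, pandas can read either URL directly, e.g.:
#   import pandas as pd
#   assay_table = pd.read_csv(assay_url)
```

For large assays, CIDs should be sent in batches, since very long URLs and large requests may be rejected or throttled by the service.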

Step 3: Parse and Clean Data.

- Load the CSV. Extract relevant columns: CID (Compound ID), PUBCHEMRESULT (e.g., "Inactive", "Active"), PUBCHEM_ACTIVITY_OUTCOME, and any numeric outcome (e.g., inhibition percentage).

- Filter for results with a defined activity outcome. Map outcomes to a binary label (e.g., "Active" -> 1, "Inactive" -> 0).

Step 4: Retrieve and Standardize Associated Structures.

- Use the CIDs to fetch canonical SMILES from PubChem via PUG.

- Standardize the retrieved SMILES using the method described in Step 3 of the ChEMBL extraction protocol above.

Step 5: Create Consolidated Dataset.

- Merge activity labels with standardized structures using CID as the key.

- Final dataset columns: Standard_SMILES, CID, Activity_Label, [Additional_Outcome].

Diagram: Workflow for QSAR-Ready Data Curation

Title: QSAR Data Curation Workflow

Table 2: Key Resources for Bioactivity Data Acquisition and Curation

| Resource Name | Type | Primary Function in Context | Access Link |

|---|---|---|---|

| ChEMBL WebResource Client | Python Library | Programmatic access to ChEMBL data via API for automated querying and retrieval. | https://github.com/chembl/chembl_webresource_client |

| RDKit | Open-Source Cheminformatics Library | Chemical structure standardization, descriptor calculation, and molecular manipulation. | https://www.rdkit.org |

| PubChem PUG REST API | Web API | Programmatic access to download PubChem substance, compound, and bioassay data. | https://pubchem.ncbi.nlm.nih.gov/docs/pug-rest |

| KNIME Analytics Platform | GUI Workflow Tool | Visual pipeline creation for data retrieval, integration, cleaning, and preprocessing without coding. | https://www.knime.com |

| Open Babel | Command-Line Tool | Converting chemical file formats and performing basic structure filtering. | http://openbabel.org |

| Cookbook for Target Prediction | Protocol / Guide | Step-by-step guide for building predictive models from ChEMBL data. | https://chembl.gitbook.io/chembl-interface-documentation/cookbook |

Critical Considerations for QSAR Datasets

- Activity Data Consistency: Use a single activity type (e.g., only Ki) per model when possible. Convert all values to a negative logarithmic scale (e.g., pKi, pIC50).

- Chemical Space Diversity: Ensure the training set adequately represents the chemical space of interest. Use descriptors like molecular weight, logP, and fingerprint similarity to assess.

- Applicability Domain: Document the chemical space covered by your sourced data to define the future applicability domain of the QSAR model.

- Provenance Tracking: Always record the exact source identifiers (ChEMBL Assay ID, PubChem AID, Compound IDs) for every data point to ensure reproducibility and allow for re-curation.

Quantitative Structure-Activity Relationship (QSAR) modeling is a cornerstone of modern computational drug discovery, enabling the prediction of biological activity from chemical structure. The efficacy of any QSAR model is fundamentally dependent on the molecular descriptors used to numerically encode chemical information. These descriptors, categorized by their dimensionality, transform structural features into a quantitative format suitable for machine learning and statistical analysis. This overview details the types, applications, and protocols for generating 1D, 2D, and 3D molecular descriptors within a drug activity prediction research pipeline.

Descriptor Categories: Definitions and Comparative Analysis

Table 1: Comparison of Molecular Descriptor Types

| Descriptor Type | Dimensionality Basis | Data Source | Computational Cost | Example Descriptors | Key Advantages | Primary Limitations |

|---|---|---|---|---|---|---|

| 1D Descriptors | Global molecular properties | Molecular formula, bulk properties | Very Low | Molecular Weight, LogP, Atom Counts, Number of Rotatable Bonds | Fast to compute, easily interpretable, require no geometry. | Low information content, cannot distinguish isomers. |

| 2D Descriptors | Molecular topology (connectivity) | Molecular graph (atoms & bonds) | Low to Moderate | Molecular Fingerprints (ECFP, MACCS), Topological Indices (Wiener Index), Connectivity Indices | Capture connectivity patterns, distinguish isomers, standard for virtual screening. | Ignore 3D stereochemistry and conformational flexibility. |

| 3D Descriptors | Spatial geometry & shape | 3D Molecular Conformation | High | 3D MoRSE descriptors, WHIM descriptors, Radial Distribution Function, Pharmacophore Keys | Encode steric and electronic fields crucial for receptor binding. | Conformation-dependent, require geometry optimization, high computational cost. |

Application Notes for QSAR Modeling

1D Descriptors: Lipinski's Rule of Five

- Application: Early-stage filtering for drug-likeness and oral bioavailability prediction.

- Protocol: For a given compound library, calculate: Molecular Weight (MW), Octanol-Water Partition Coefficient (LogP), Count of Hydrogen Bond Donors (HBD), and Count of Hydrogen Bond Acceptors (HBA). Compounds violating more than one rule (MW ≤ 500, LogP ≤ 5, HBD ≤ 5, HBA ≤ 10) are flagged as potentially poorly bioavailable.
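The Rule of Five check translates directly into a few RDKit descriptor calls. This is a sketch assuming RDKit is installed; Crippen logP (`MolLogP`) is used as the LogP estimate, and the two test molecules (aspirin and a C40 alkane) are illustrative only.

```python
from rdkit import Chem
from rdkit.Chem import Descriptors, Lipinski

def lipinski_violations(smiles):
    """Count Rule-of-Five violations for one molecule given as SMILES."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    checks = [
        Descriptors.MolWt(mol) > 500,        # molecular weight
        Descriptors.MolLogP(mol) > 5,        # Crippen logP estimate
        Lipinski.NumHDonors(mol) > 5,        # hydrogen bond donors
        Lipinski.NumHAcceptors(mol) > 10,    # hydrogen bond acceptors
    ]
    return sum(checks)

aspirin = "CC(=O)Oc1ccccc1C(=O)O"
c40_alkane = "C" * 40                        # large, very lipophilic hydrocarbon

for name, smi in [("aspirin", aspirin), ("C40 alkane", c40_alkane)]:
    n = lipinski_violations(smi)
    print(f"{name}: {n} violation(s), flagged={n > 1}")
```

Flagging only compounds with more than one violation follows the protocol text; stricter or looser cutoffs are a project-level decision.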

2D Descriptors: Similarity Searching & Scaffold Hopping

- Application: Ligand-based virtual screening using Tanimoto similarity.

- Protocol:

- Fingerprint Generation: Encode all compounds in a database (e.g., ChEMBL) and a known active query molecule into Extended Connectivity Fingerprints (ECFP4, radius=2) using RDKit or similar.

- Similarity Calculation: Compute the Tanimoto coefficient between the query fingerprint and every database fingerprint.

- Ranking & Analysis: Rank database compounds by similarity score (1.0 = identical fingerprints, which does not always mean identical molecules). Visually inspect top hits for novel scaffolds with similar topological pharmacophores.
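The similarity and ranking steps can be illustrated without any cheminformatics dependency by treating each fingerprint as the set of its on-bit indices. This is a minimal sketch: the bit indices and database IDs below are hypothetical stand-ins for real ECFP4 fingerprints, which would normally come from a toolkit such as RDKit.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient |A ∩ B| / |A ∪ B| for two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def rank_by_similarity(
    query: Set[int], database: Dict[str, Set[int]]
) -> List[Tuple[str, float]]:
    """Score every database fingerprint against the query, most similar first."""
    scores = [(cid, tanimoto(query, fp)) for cid, fp in database.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


# Hypothetical on-bit indices standing in for ECFP4 fingerprints.
query_fp = {1, 4, 7, 9, 12}
database_fps = {
    "db_001": {1, 4, 7, 9, 12},   # same bits as the query
    "db_002": {1, 4, 7, 20, 31},  # 3 shared bits, 7 bits in the union
    "db_003": {50, 51, 52},       # no shared bits
}

ranking = rank_by_similarity(query_fp, database_fps)
```

For scaffold hopping, the interesting hits are usually in the middle of such a ranking rather than at the very top, since near-1.0 scores tend to be close analogs of the query.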

3D Descriptors: Comparative Molecular Field Analysis (CoMFA)

- Application: 3D-QSAR modeling to understand steric and electrostatic requirements for binding.

- Protocol:

- Conformational Alignment: Generate a biologically relevant conformation for each molecule. Align them based on a common scaffold or pharmacophore.

- Field Calculation: Place each aligned molecule within a 3D grid. Compute steric (Lennard-Jones) and electrostatic (Coulombic) interaction energies at each grid point using a probe atom.

- PLS Modeling: Use Partial Least Squares (PLS) regression to correlate the vast descriptor matrix (grid point energies) with biological activity, producing 3D coefficient contour maps.

Experimental Protocols

Protocol 4.1: Generating a Standard 2D Descriptor Set with RDKit

Objective: To calculate a comprehensive set of 2D descriptors for a SMILES list.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Prepare a text file (input.smi) containing one SMILES string and a compound ID per line.

- Run a Python script that uses RDKit to parse each SMILES string and calculate the descriptor values for each compound.

- Output (2d_descriptors.csv) is ready for use in machine learning models.
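The script for this protocol is not included in the text, so the following is a minimal sketch of what it might look like, not the original. It assumes whitespace-separated lines of the form "SMILES ID" and uses RDKit's built-in descriptor list (`Descriptors.descList`). The helper names `read_smi` and `run` are hypothetical.

```python
import csv

from rdkit import Chem
from rdkit.Chem import Descriptors


def read_smi(path):
    """Read (SMILES, compound ID) pairs from a whitespace-separated file."""
    records = []
    with open(path) as handle:
        for line in handle:
            parts = line.split()
            if len(parts) >= 2:
                records.append((parts[0], parts[1]))
    return records


def run(input_path, output_path):
    """Write one row of RDKit descriptors per parsable compound; return the row count."""
    names = [name for name, _ in Descriptors.descList]
    written = 0
    with open(output_path, "w", newline="") as handle:
        writer = csv.writer(handle)
        writer.writerow(["ID"] + names)
        for smiles, compound_id in read_smi(input_path):
            mol = Chem.MolFromSmiles(smiles)
            if mol is None:  # skip SMILES that RDKit cannot parse
                continue
            values = [func(mol) for _, func in Descriptors.descList]
            writer.writerow([compound_id] + values)
            written += 1
    return written
```

Calling `run("input.smi", "2d_descriptors.csv")` would produce the output file named in the procedure. Unparsable structures are skipped silently here; a production pipeline would more likely log them for curation.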

Protocol 4.2: Calculating 3D Geometry-Dependent Descriptors

Objective: To compute 3D WHIM descriptors for a set of molecules.

Procedure:

- 3D Conformation Generation: For each SMILES string, generate an initial 3D structure using RDKit's EmbedMolecule function. Optimize the geometry using the MMFF94 force field.

- Descriptor Calculation: Use the rdMolDescriptors.CalcWHIM() function on the optimized 3D molecule object to obtain a list of WHIM descriptor values (e.g., size, shape, symmetry).

- Data Collation: Compile descriptors for all molecules into a matrix, ensuring conformational alignment is performed if required by the specific 3D-QSAR method.
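The three steps above might be sketched as follows. This is an illustrative outline under a few assumptions: a single conformer per molecule, explicit hydrogens added before embedding, and a fixed random seed for reproducibility. The function names `whim_from_smiles` and `whim_matrix` are hypothetical.

```python
from rdkit import Chem
from rdkit.Chem import AllChem, rdMolDescriptors


def whim_from_smiles(smiles, seed=42):
    """Return WHIM descriptor values for one molecule, or None on failure."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None
    mol = Chem.AddHs(mol)  # explicit hydrogens give a more realistic 3D geometry
    if AllChem.EmbedMolecule(mol, randomSeed=seed) < 0:
        return None  # embedding failed, no conformer was generated
    AllChem.MMFFOptimizeMolecule(mol, mmffVariant="MMFF94")
    return list(rdMolDescriptors.CalcWHIM(mol))


def whim_matrix(smiles_list):
    """Collate WHIM vectors for all molecules that could be processed."""
    rows = [whim_from_smiles(smiles) for smiles in smiles_list]
    return [row for row in rows if row is not None]
```

Because the values depend on the conformer, flexible molecules may give noticeably different descriptors from different seeds. Averaging over a conformer ensemble is one option in that case.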

Visualizations

Figure: QSAR Modeling Workflow Using Multi-Dimensional Descriptors

Figure: Descriptor Selection Decision Tree for QSAR

The Scientist's Toolkit

Table 2: Essential Research Reagents & Software for Descriptor Calculation

| Item/Category | Specific Tool/Resource | Function in Descriptor Research |

|---|---|---|

| Cheminformatics Toolkit | RDKit (Open Source), Open Babel | Core library for reading molecules, generating 2D/3D coordinates, and calculating a vast array of 1D, 2D, and 3D descriptors. |

| Descriptor Calculation Software | Dragon (Talete), MOE (Chemical Computing Group) | Commercial software offering extremely comprehensive and validated descriptor sets, including 3D fields. |

| 3D Conformer Generator | OMEGA (OpenEye), CONFORD | Specialized software for rapid, accurate generation of representative 3D conformer ensembles for 3D-QSAR. |

| Molecular Modeling Suite | Schrödinger Suite, OpenEye Toolkits | Integrated platforms for advanced structure preparation, conformational analysis, and field-based 3D descriptor calculation. |

| Curated Chemical Database | ChEMBL, PubChem | Source of bioactivity data and structures for building training sets and validating descriptor utility. |

| Programming Language | Python (with pandas, numpy) | Environment for scripting automated descriptor calculation pipelines and integrating with machine learning libraries (e.g., scikit-learn). |

| Data Visualization | Matplotlib, Seaborn, Spotfire | For creating descriptor distribution plots, similarity maps, and CoMFA contour visualization. |

Quantitative Structure-Activity Relationship (QSAR) modeling is a cornerstone of computational drug discovery, enabling the prediction of biological activity from molecular descriptors. A critical challenge in robust QSAR development is the "curse of dimensionality," where datasets contain hundreds or thousands of molecular descriptors (features) for a relatively small number of compounds. This leads to overfitting, reduced model interpretability, and poor generalization to new data. Therefore, identifying the critical chemical drivers—the subset of molecular features that truly govern biological activity—is paramount. This document provides application notes and detailed protocols for feature selection and dimensionality reduction techniques specifically tailored for QSAR modeling in drug activity prediction research.

Core Methodologies: Protocols & Application Notes

Protocol 2.1: Filter-Based Feature Selection using Statistical Metrics

Objective: To rank and filter molecular descriptors based on univariate statistical relationships with the target biological activity.

Materials & Software: Dataset (CSV file of compounds with descriptors and activity), Python 3.8+, scikit-learn, pandas, numpy, SciPy.

Procedure:

- Data Preparation: Load the standardized descriptor matrix (X) and the continuous (e.g., pIC50) or categorical activity vector (y). Pre-process to handle missing values.

- Metric Calculation:

- For Regression (continuous y): Calculate the Pearson correlation coefficient (linear) and Mutual Information (non-linear) between each descriptor in X and y.

- For Classification (categorical y): Calculate ANOVA F-value (for continuous descriptors) and Mutual Information.

- Ranking & Thresholding: Rank all features based on the calculated scores. Select the top k features, or all features above a predefined significance threshold (e.g., p-value < 0.05 for F-test).

- Output: A reduced descriptor matrix containing only the selected critical features.

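Application Note: This method is computationally efficient and model-agnostic. It is best used as an initial screening step to remove clearly irrelevant features. Because each descriptor is scored independently, it cannot capture feature interactions and does not remove redundancy among correlated descriptors.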
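As an illustration of the regression branch of this protocol, the sketch below ranks descriptors by absolute Pearson correlation and keeps the top k, using only NumPy. The data are synthetic, and the function names `pearson_scores` and `select_top_k` are hypothetical. In practice, scikit-learn's `SelectKBest` with `f_regression` or `mutual_info_regression` covers the same ground.

```python
import numpy as np


def pearson_scores(X, y):
    """Absolute Pearson correlation between each column of X and y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    denom = np.sqrt((Xc ** 2).sum(axis=0) * (yc ** 2).sum())
    with np.errstate(divide="ignore", invalid="ignore"):
        r = (Xc * yc[:, None]).sum(axis=0) / denom
    return np.abs(np.nan_to_num(r))  # constant columns receive a score of 0


def select_top_k(X, y, k):
    """Return the column indices of the k highest-scoring descriptors and the reduced matrix."""
    scores = pearson_scores(X, y)
    keep = np.sort(np.argsort(scores)[::-1][:k])
    return keep, X[:, keep]


# Synthetic example: only descriptors 3 and 7 carry signal.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 20))
y = 2.0 * X[:, 3] - 1.5 * X[:, 7] + rng.normal(scale=0.1, size=100)

selected, X_reduced = select_top_k(X, y, k=2)
```

On real descriptor sets the choice of k is itself a hyperparameter. It is usually safer to tune it inside cross-validation than to fix it after looking at the full dataset.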

Protocol 2.2: Embedded Method: LASSO (L1) Regularization for Sparse Feature Identification

Objective: To perform feature selection as an integral part of model construction, penalizing absolute coefficient size to drive non-informative descriptor coefficients to zero.

Procedure:

- Standardization: Standardize all molecular descriptors to have zero mean and unit variance. This is crucial for L1 regularization because the penalty acts on coefficient magnitudes, which depend on descriptor scale.

- Model Training: Fit a linear regression (or logistic regression) model with L1 regularization (LASSO): min_w ( ||y - Xw||_2^2 + α ||w||_1 ), where α is the regularization strength.

- Hyperparameter Tuning: Use 5-fold cross-validation over a grid of α values (e.g., np.logspace(-4, 0, 50)) to find the value that minimizes the cross-validation error.

- Feature Extraction: Extract the model coefficients from the model trained with the optimal α. Features with non-zero coefficients are identified as critical chemical drivers.

- Validation: Retrain a standard linear model using only the selected features to confirm predictive performance is maintained.

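Application Note: LASSO provides a powerful balance between feature selection and model construction. The α parameter controls sparsity; larger α yields fewer features. Because the retained descriptors come with signed coefficients from a single multivariate model, the result is often easier to interpret than a univariate filter ranking. Among groups of highly correlated descriptors, LASSO tends to keep one and discard the rest, so the selected set can change between resampled datasets.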
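The procedure above could be sketched with scikit-learn as follows, using synthetic data in which only three descriptors carry signal. One assumption should be flagged: scikit-learn's `Lasso` scales the squared-error term by 1/(2n), so its `alpha` is not numerically identical to the α in the objective written above, although it plays the same role.

```python
import numpy as np
from sklearn.linear_model import LassoCV, LinearRegression
from sklearn.model_selection import cross_val_score
from sklearn.preprocessing import StandardScaler

# Synthetic data: descriptors 0, 5 and 12 drive the activity.
rng = np.random.default_rng(0)
X = rng.normal(size=(120, 30))
y = 3.0 * X[:, 0] - 2.0 * X[:, 5] + 1.0 * X[:, 12] + rng.normal(scale=0.3, size=120)

# Step 1: standardize descriptors to zero mean and unit variance.
X_std = StandardScaler().fit_transform(X)

# Steps 2-3: fit LASSO and tune alpha by 5-fold cross-validation.
lasso = LassoCV(alphas=np.logspace(-4, 0, 50), cv=5, max_iter=10000).fit(X_std, y)

# Step 4: descriptors with non-zero coefficients are the selected features.
selected = np.flatnonzero(lasso.coef_)

# Step 5: refit an unpenalized linear model on the selected descriptors only.
r2 = cross_val_score(
    LinearRegression(), X_std[:, selected], y, cv=5, scoring="r2"
).mean()
```

For brevity the scaler is fitted on the full dataset. Wrapping the scaler and the model in a scikit-learn `Pipeline` would keep the scaling inside each cross-validation fold and avoid mild information leakage.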

Protocol 2.3: Wrapper Method: Recursive Feature Elimination (RFE) with Random Forest

Objective: To recursively prune features by training a model, evaluating feature importance, and eliminating the least important features.

Procedure:

- Initialize Model: Select a base estimator with a .coef_ or .feature_importances_ attribute (e.g., Random Forest Regressor).

- Recursive Loop: a. Train the model on the current set of n features. b. Rank all features by their importance (for Random Forest, the mean decrease in impurity: variance reduction for regression, Gini impurity for classification). c. Remove the lowest-ranked r features (e.g., bottom 10%).

- Iteration & Selection: Repeat Step 2 until the desired number of features (k) is reached. Use cross-validation at each step to monitor model performance.

- Optimal Subset Choice: Plot model performance (e.g., R²) vs. number of features. Select the smallest feature subset that yields peak or near-peak performance.

Application Note: RFE is computationally intensive but often yields superior feature subsets by considering complex feature interactions. Random Forest as the estimator captures non-linear relationships.
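A compact sketch of this loop using scikit-learn's `RFE` with a Random Forest estimator is shown below. The data are synthetic, and the target of four retained features is an arbitrary choice for illustration. With `step=0.1`, scikit-learn removes a fraction of the features at each iteration, rounded down but never fewer than one.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import RFE

# Synthetic data: descriptors 2 and 9 drive the activity.
rng = np.random.default_rng(0)
X = rng.normal(size=(150, 15))
y = 4.0 * X[:, 2] + 3.0 * X[:, 9] + rng.normal(scale=0.2, size=150)

# Base estimator exposing feature_importances_ after fitting.
forest = RandomForestRegressor(n_estimators=100, random_state=0)

# Recursively drop the least important descriptors until 4 remain.
rfe = RFE(estimator=forest, n_features_to_select=4, step=0.1).fit(X, y)

selected = np.flatnonzero(rfe.support_)  # indices of retained descriptors
ranking = rfe.ranking_                   # 1 = retained, larger = eliminated earlier
```

To choose the subset size from cross-validated performance, as the last step of the procedure suggests, scikit-learn's `RFECV` can be used in place of `RFE`.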

Protocol 2.4: Dimensionality Reduction via t-Distributed Stochastic Neighbor Embedding (t-SNE)

Objective: To visualize high-dimensional descriptor space in 2D/3D to identify clusters, outliers, and assess the separability of compounds based on activity class.

Procedure:

- Input: Use the standardized descriptor matrix X.

- Parameter Setting: Key parameters are perplexity (typically 5-50, related to the number of nearest neighbors) and learning_rate (typically 10-1000). Start with perplexity=30, learning_rate=200.

- Execution: Apply t-SNE to project X into 2 dimensions (n_components=2). Use a fixed random_state for reproducibility.

- Visualization: Create a scatter plot of the 2D embedding, coloring points by the target activity value or class.

- Interpretation: Analyze the plot for clear separation of active/inactive clusters, which suggests the descriptors collectively contain predictive signal. Note: t-SNE is for visualization only, not a pre-processing step for subsequent modeling.

Application Note: t-SNE is excellent for exploratory data analysis. It preserves local structure but not global distances. Do not interpret cluster sizes as meaningful.
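The projection step can be sketched with scikit-learn's `TSNE` using the starting parameters given in the procedure. The two synthetic "active" and "inactive" clusters below are an assumption made purely to provide something to embed.

```python
import numpy as np
from sklearn.manifold import TSNE
from sklearn.preprocessing import StandardScaler

# Synthetic descriptor matrix with two well-separated activity classes.
rng = np.random.default_rng(0)
actives = rng.normal(loc=2.0, size=(40, 25))
inactives = rng.normal(loc=-2.0, size=(40, 25))
X = StandardScaler().fit_transform(np.vstack([actives, inactives]))
labels = np.array([1] * 40 + [0] * 40)  # 1 = active, 0 = inactive

# Project to 2D; perplexity must be smaller than the number of samples.
tsne = TSNE(n_components=2, perplexity=30, learning_rate=200, random_state=0)
embedding = tsne.fit_transform(X)
```

The embedding can then be plotted with, for example, `matplotlib.pyplot.scatter(embedding[:, 0], embedding[:, 1], c=labels)`. Rerunning with a few different perplexity values is a simple check that an apparent cluster is not an artifact of one parameter setting.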

Data Presentation: Comparative Analysis of Methods

Table 1: Performance Comparison of Feature Selection Methods on a Benchmark QSAR Dataset (ChEMBL HIV-1 Integrase Inhibition)

| Method | Number of Selected Descriptors (from 500) | 5-Fold CV R² (Regression) | Model Interpretability | Computational Cost | Key Advantage |

|---|---|---|---|---|---|

| Filter (Pearson Correlation) | 45 | 0.72 | High | Very Low | Fast, simple baseline |

| Embedded (LASSO) | 28 | 0.81 | High | Low | Built-in sparsity, good performance |

| Wrapper (RFE-RF) | 35 | 0.85 | Medium | Very High | Often yields best predictive subset |

| No Selection (Full Set) | 500 | 0.65 (overfit) | Very Low | Reference | Demonstrates overfitting penalty |

Table 2: Key Molecular Descriptor Categories Identified as Critical Chemical Drivers

| Descriptor Category | Example Specific Descriptors | Hypothesized Role in Biological Activity | Frequently Selected By |

|---|---|---|---|

| Topological | Kier-Hall connectivity indices, Wiener index | Encodes molecular branching, size, and shape; influences binding entropy. | Filter, RFE-RF |

| Electronic | Partial charge descriptors, HOMO/LUMO energy | Governs electrostatic interactions, hydrogen bonding, and charge transfer with target. | LASSO, RFE-RF |

| Hydrophobic | LogP, Molar refractivity | Drives desolvation, partitioning into membranes, and hydrophobic pocket binding. | All Methods |

| Geometric | Principal moments of inertia, Jurs descriptors | Related to 3D shape complementarity with the protein binding site. | RFE-RF |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for Feature Selection in QSAR

| Item | Function/Description | Example Vendor/Software |

|---|---|---|

| Molecular Descriptor Calculation Software | Generates quantitative features (e.g., topological, electronic, geometric) from compound structures. | RDKit (Open Source), PaDEL-Descriptor, MOE |

| Statistical & ML Programming Environment | Provides libraries for data manipulation, statistical testing, and machine learning model implementation. | Python (scikit-learn, SciPy), R (caret, glmnet) |

| High-Performance Computing (HPC) Cluster Access | Necessary for computationally intensive wrapper methods (e.g., RFE) on large descriptor sets. | Local University Cluster, Cloud (AWS, GCP) |

| Curated Bioactivity Database | Source of high-quality, structured compound-activity data for model training and validation. | ChEMBL, PubChem BioAssay |

| Chemical Structure Standardization Tool | Ensures consistent representation (tautomers, protonation states, salts) before descriptor calculation. | RDKit, ChemAxon Standardizer |

| Hyperparameter Optimization Framework | Automates the search for optimal model parameters (e.g., α in LASSO). | scikit-learn GridSearchCV, Optuna |

Visualization of Workflows & Relationships

Workflow for QSAR Feature Selection

LASSO vs OLS vs Ridge Mechanics

Within the broader thesis on Quantitative Structure-Activity Relationship (QSAR) modeling for drug activity prediction, this document provides Application Notes and Protocols for implementing a spectrum of modeling algorithms. The evolution from traditional machine learning (ML) to advanced deep learning, particularly Graph Neural Networks (GNNs), reflects the field's shift from handling classical molecular descriptors to directly learning from graph representations of molecular structure.

Algorithm Comparison & Data Presentation

Table 1: Algorithmic Characteristics for QSAR Modeling