Quantifying Model Uncertainty in Drug Development: A Practical Guide to Monte Carlo Simulation

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for applying Monte Carlo simulation to quantify and manage model uncertainty.

Quantifying Model Uncertainty in Drug Development: A Practical Guide to Monte Carlo Simulation

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for applying Monte Carlo simulation to quantify and manage model uncertainty. It progresses from foundational concepts—demystifying what Monte Carlo simulation is and why it's critical for handling parametric, structural, and residual uncertainty in pharmacometric and QSP models—to methodological implementation, including step-by-step guidance on parameter sampling, model execution, and output analysis. The guide addresses common troubleshooting scenarios, optimization techniques for computational efficiency, and strategies for rigorous validation and comparison with alternative methods like bootstrap and Bayesian inference. By synthesizing these intents, the article empowers professionals to robustly characterize uncertainty, leading to more informative risk assessments and decision-making in preclinical and clinical development.

What is Monte Carlo Simulation? Demystifying Uncertainty Quantification for Researchers

In drug development, reliance on single-point estimates for parameters like efficacy, toxicity, and pharmacokinetics introduces significant risk. This application note argues for the systematic quantification of model uncertainty using Monte Carlo simulation, framing it as essential for robust decision-making. By propagating uncertainty through complex models—from early target validation to late-phase clinical trial design—we enable probabilistic forecasting, risk assessment, and more informative regulatory submissions.

Drug development is characterized by complex, multi-stage models predicting biological response and patient outcomes. Traditional approaches often use best-guess parameter estimates, ignoring the uncertainty inherent in in vitro, preclinical, and clinical data. This can lead to failed trials, safety issues, and inefficient resource allocation. Monte Carlo simulation provides a computational methodology to explicitly represent and propagate this uncertainty, transforming point predictions into probability distributions.

Key Applications of Monte Carlo Simulation in Drug Development

Pharmacokinetic/Pharmacodynamic (PK/PD) Modeling

Uncertainty in parameters like clearance (CL), volume of distribution (Vd), and EC50 significantly impacts dose selection.

Protocol 1: Quantifying PK Parameter Uncertainty for First-in-Human (FIH) Dose Prediction

- Data Collection: Compile all preclinical PK data (e.g., from rodent, canine, non-human primate studies) for the candidate molecule.

- Model Fitting: Fit a multi-compartment PK model to the data using non-linear mixed-effects modeling (e.g., NONMEM, Monolix). Extract the estimated population parameters and their variance-covariance matrix.

- Define Parameter Distributions: Assume a multivariate log-normal distribution for PK parameters (CL, Vd, etc.). The mean vector is the point estimate, and the variance-covariance matrix defines the uncertainty and correlations.

- Monte Carlo Simulation:

- Using software (R, Python, SAS), generate 10,000 virtual patients by randomly sampling parameter sets from the defined multivariate distribution.

- For each virtual patient, simulate the concentration-time profile for a range of proposed clinical doses.

- Output Analysis: Calculate the probability that a given dose achieves target exposure (AUC, Cmax) within the therapeutic window for >90% of the virtual population. This informs the safe starting dose and dose escalation scheme.

Table 1: Simulated Probability of Target Attainment for FIH Dose Candidates

| Proposed Dose (mg) | Probability AUC > Target Efficacy (%) | Probability Cmax < Safety Threshold (%) | Composite Success Probability (%) |

|---|---|---|---|

| 5 | 65 | 99 | 64 |

| 10 | 88 | 95 | 84 |

| 20 | 99 | 75 | 74 |

| 30 | >99 | 55 | 55 |

Clinical Trial Simulation (CTS) for Power and Risk Assessment

Trial outcomes depend on uncertain assumptions about placebo response, treatment effect size, and dropout rates.

Protocol 2: Simulating Phase 3 Trial Power Under Uncertainty

- Define Input Distributions: For each key assumption, define a probability distribution based on prior data (Ph2, literature):

- Placebo Response: Normal(μ=2.0, σ=0.5) [change from baseline]

- Drug Effect Size: Normal(μ=3.0, σ=0.75) [additional change]

- Dropout Rate: Beta(α=15, β=85) [~15% expected]

- Build Trial Model: Program a patient-level simulation. For each of 5,000 simulated trials:

- Sample a global scenario from the input distributions.

- Simulate N patients per arm (e.g., 300), generating individual responses with random variation.

- Perform a statistical test (e.g., ANCOVA) at trial end.

- Analyze Outcomes: The result is a distribution of possible trial outcomes. Calculate the probability that the trial achieves statistical significance (p<0.05)—this is the predictive power.

Table 2: Phase 3 Trial Predictive Power Analysis

| Sample Size (N/arm) | Mean Estimated Power (Point Estimate) | 5th Percentile Predictive Power | Probability of Power > 80% |

|---|---|---|---|

| 250 | 85% | 62% | 67% |

| 300 | 90% | 72% | 85% |

| 350 | 93% | 78% | 94% |

Quantitative Systems Pharmacology (QSP) Uncertainty Analysis

QSP models integrate mechanistic pathway data. Uncertainty in rate constants and binding affinities can alter pathway output predictions.

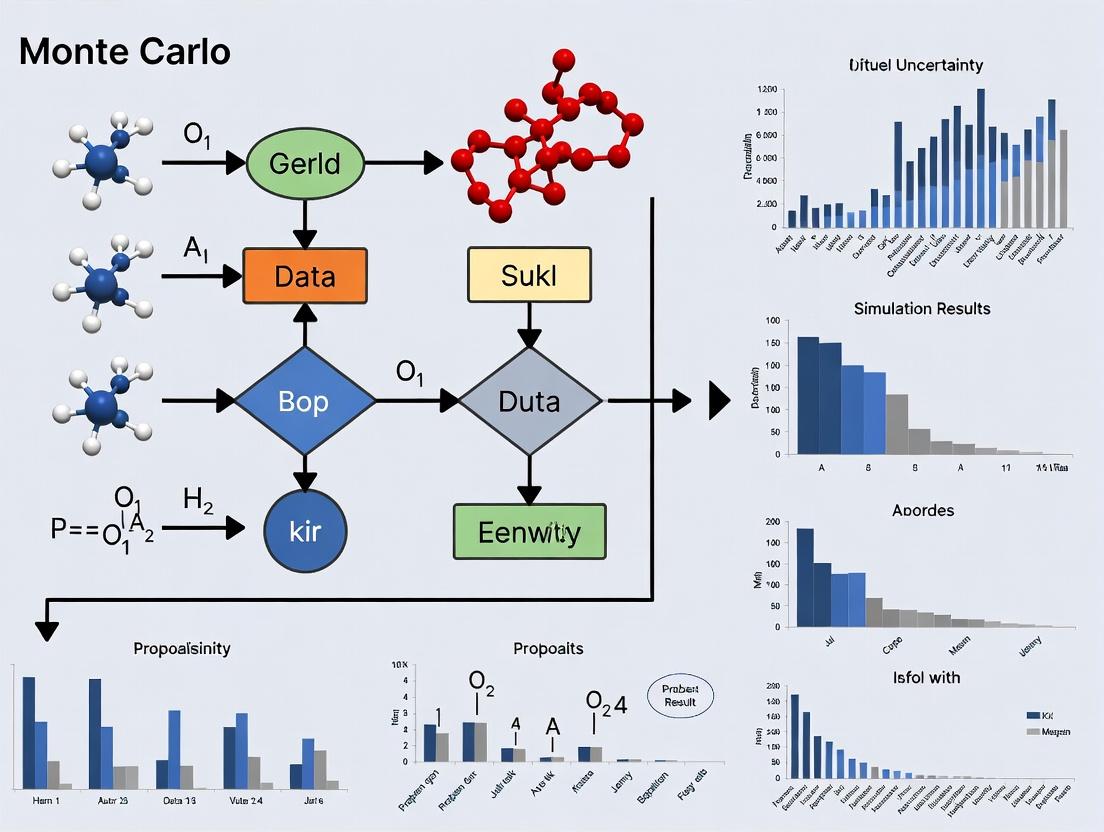

Diagram: QSP Model Uncertainty Propagation Workflow

Title: Monte Carlo Workflow for QSP Model Uncertainty

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents & Software for Uncertainty Quantification Studies

| Item & Vendor Example | Function in Uncertainty Research |

|---|---|

| Non-linear Mixed-Effects Software (e.g., NONMEM, Monolix, Phoenix NLME) | Fits PK/PD models to sparse, hierarchical data, providing essential parameter estimates and their uncertainty (variance-covariance matrix) for defining input distributions. |

Statistical Programming Environment (e.g., R with mrgsolve, dplyr; Python with SciPy, NumPy, PyMC) |

Core platform for scripting Monte Carlo simulations, managing virtual patient cohorts, performing statistical analysis on output, and creating visualizations. |

| Clinical Trial Simulation Software (e.g, East, SAS Clinical Trial Simulation Suite) | Specialized tools for simulating complex trial designs, handling randomization, protocols, and dropout models, integrated with uncertainty analysis. |

| Quantitative Systems Pharmacology Platform (e.g., DDMoRe, SimBiology, TERANODE) | Provides tools to build, validate, and simulate mechanistic QSP models, enabling parameter sensitivity and global uncertainty analysis. |

| High-Performance Computing (HPC) Cluster or Cloud Service (e.g., AWS, GCP) | Enables the running of thousands of computationally intensive model simulations (e.g., QSP, patient-level CTS) in parallel for robust uncertainty quantification. |

Case Study: Dose Optimization for an Oncology Asset

A Phase 2 asset showed a dose-response but with overlapping confidence intervals for efficacy (Objective Response Rate - ORR) and severe toxicity (Grade 3+ Adverse Events).

Protocol 3: Model-Averaging to Account for Structural Uncertainty

- Develop Candidate Models: Create three dose-response models (Emax, Linear, Logistic) describing ORR and Toxicity as functions of dose. Each model fits the Phase 2 data reasonably well.

- Calculate Model Weights: Use Akaike Information Criterion (AIC) to assign a weight (probability) to each model representing its relative support from the data.

- Perform Weighted Simulation:

- For each model, sample its parameters from posterior distributions (incorporating statistical uncertainty).

- Simulate ORR and Toxicity for doses 100mg to 500mg.

- Combine predictions across models using the AIC weights.

- Decision Output: This yields a unified, uncertainty-aware prediction for the benefit-risk profile.

Table 4: Model-Averaged Predictions for Phase 3 Dose Selection

| Dose (mg) | Predicted ORR (95% CI) | Predicted G3+ AE Rate (95% CI) | Probability(ORR >30% & AE <35%) |

|---|---|---|---|

| 200 | 28% (22-35%) | 25% (18-33%) | 78% |

| 300 | 35% (28-43%) | 32% (24-41%) | 65% |

| 400 | 39% (30-49%) | 40% (30-51%) | 42% |

Diagram: Dose Optimization Decision Framework

Title: Model Averaging for Dose Optimization

Integrating Monte Carlo simulation to quantify model uncertainty moves drug development from a deterministic to a probabilistic paradigm. This allows teams to calculate the probability of success, optimize designs robust to parameter uncertainty, and transparently communicate risks to regulators and stakeholders. Adopting these practices is no longer a niche advanced analytics exercise but a fundamental component of resilient development strategy.

Introduction Within Monte Carlo simulation research for model uncertainty, robust inference is predicated on the quality and principles of random sampling. This document provides application notes and protocols for implementing these core principles in pharmacological and clinical research contexts, focusing on techniques that translate random samples into statistically robust conclusions about model parameters and outcomes.

1. Key Quantitative Data Tables

Table 1: Comparison of Sampling Methods for Pharmacokinetic (PK) Model Uncertainty

| Sampling Method | Key Principle | Use Case in PK/PD | Relative Computational Cost (1-5) | Key Metric for Robustness |

|---|---|---|---|---|

| Simple Random Sampling (SRS) | Each parameter set has equal probability of selection. | Preliminary model exploration. | 1 | Standard Error of the Mean |

| Latin Hypercube Sampling (LHS) | Stratified sampling ensuring full coverage of each parameter's distribution. | Global sensitivity analysis, building surrogate models. | 2 | Convergence of distribution tails |

| Markov Chain Monte Carlo (MCMC) | Sampling based on a probabilistic walk through the parameter space. | Bayesian parameter estimation from clinical data. | 5 | Gelman-Rubin convergence diagnostic (R-hat < 1.1) |

| Bootstrapping | Resampling with replacement from original data to create empirical distribution. | Estimating confidence intervals for model-predicted efficacy endpoints. | 3 | 95% Confidence Interval width stability |

Table 2: Impact of Sample Size on Inference Robustness in a Dose-Response Simulation Scenario: Simulating a binary efficacy outcome (response/no response) for a novel compound.

| Sample Size (N) per Trial Simulation | Probability of Type I Error (α) | Power (1-β) to detect true effect | Width of 95% CI for Response Rate | Monte Carlo Standard Error |

|---|---|---|---|---|

| 100 | 0.07 | 0.62 | ± 19.6% | ± 2.1% |

| 500 | 0.055 | 0.89 | ± 8.7% | ± 0.94% |

| 1000 | 0.052 | 0.98 | ± 6.1% | ± 0.66% |

| 5000 | 0.050 | >0.995 | ± 2.7% | ± 0.30% |

2. Experimental Protocols

Protocol 1: Latin Hypercube Sampling for Global Sensitivity Analysis of a Systems Pharmacology Model

Objective: To systematically explore the uncertainty in model outputs (e.g., predicted AUC) arising from uncertainty in 10+ model parameters.

Materials: Defined parameter distributions (e.g., log-normal for clearance, normal for volume), a functioning systems pharmacology model (in MATLAB, R, Python/NumPy), high-performance computing environment.

Procedure:

- Define Input Distributions: For each of k uncertain parameters, specify a probability distribution (e.g., Mean ± CV%).

- Stratify: Divide each parameter's cumulative distribution function into N equiprobable intervals, where N is the desired number of simulations.

- Sample: Randomly select one value from each interval for each parameter.

- Permute: Randomly permute the order of the samples for each parameter independently to break correlations.

- Combine: The i-th simulation uses the i-th sampled value from each of the k parameters, forming the input matrix.

- Execute Simulations: Run the model N times with the generated input matrix.

- Analyze: Perform regression (e.g., Partial Rank Correlation Coefficient - PRCC) between all input parameters and key model outputs to rank parameter influence.

Protocol 2: Non-Parametric Bootstrapping for Confidence Intervals of IC50 Estimates

Objective: To estimate the 95% confidence interval for a compound's IC50 derived from a cell-based assay, accounting for experimental variability.

Materials: Dose-response raw data (e.g., luminescence readings) from n experimental replicates, nonlinear curve-fitting software (e.g., GraphPad Prism, R drc package).

Procedure:

- Original Fit: Fit a 4-parameter logistic (4PL) model to the original n data points to obtain the point estimate of IC50.

- Resample: Generate a bootstrap sample of size n by randomly selecting data points from the original dataset with replacement.

- Refit: Fit the 4PL model to the bootstrap sample and record the new IC50 estimate.

- Repeat: Perform steps 2-3 a large number of times (B = 2000-5000).

- Construct CI: Form the 95% confidence interval by taking the 2.5th and 97.5th percentiles of the distribution of the B bootstrap IC50 estimates.

- Validate: Assess bootstrap distribution shape (symmetrical vs. skewed) to confirm appropriateness.

3. Mandatory Visualization

Title: Bayesian Inference Workflow Using MCMC Sampling

Title: Bootstrap Method for Confidence Interval Estimation

4. The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Sampling & Inference | Example / Specification |

|---|---|---|

| Statistical Software (R/Python) | Provides libraries for advanced sampling (LHS, MCMC), bootstrap, and statistical analysis. | R: boot, lhs, rstan packages. Python: SciPy, NumPy, emcee, Chaospy. |

| High-Performance Computing (HPC) Cluster | Enables execution of thousands of Monte Carlo simulations in parallel, making robust sampling feasible. | Cloud-based (AWS, GCP) or on-premise clusters with job-scheduling software (SLURM). |

| Bayesian Inference Engine | Specialized software to perform efficient MCMC sampling for complex hierarchical models. | Stan (via CmdStanR/CmdStanPy), PyMC, JAGS. |

| Global Sensitivity Analysis Tool | Automates the design and analysis of sampling-based sensitivity studies. | SALib (Python) for Sobol', Morris, and FAST methods. |

| Virtual Population Generator | Creates physiologically plausible, covariate-sampled virtual patients for trial simulation. | Built using demographic data and covariate distributions; tools in R, MATLAB, or specialized PK/PD software. |

| Nonlinear Mixed-Effects Modeling Software | Integrates random sampling of individual parameters (EBEs) within a population framework for inference. | NONMEM, Monolix, nlmixr (R). |

This document provides application notes and detailed protocols for the systematic characterization of uncertainty sources within quantitative systems pharmacology (QSP) and pharmacokinetic/pharmacodynamic (PK/PD) models, as part of a thesis employing Monte Carlo simulation for model uncertainty research.

Uncertainty in mathematical models of biological systems is traditionally decomposed into three interconnected categories. Their quantitative impact is typically assessed via variance decomposition in Monte Carlo simulation outputs.

Table 1: Characterization of Uncertainty Sources in Computational Models

| Source Type | Definition | Typical Manifestation in PK/PD | Primary Quantitative Method |

|---|---|---|---|

| Parametric | Uncertainty in the precise value of fixed model parameters (e.g., clearance, EC₅₀). | Inter-individual variability (IIV), inter-occasion variability, uncertain in vitro-in vivo extrapolation. | Probability distributions (Log-Normal, Normal, Uniform) assigned to parameters; Markov Chain Monte Carlo (MCMC) estimation. |

| Structural | Uncertainty in the mathematical form of the model itself (model misspecification). | Choice of indirect response vs. turnover model; inclusion/exclusion of tolerance mechanisms; target-mediated drug disposition. | Model averaging; bootstrap model selection; super-structure approach with discrete model probability weights. |

| Residual | Unexplained variability, including measurement error, model misspecification error, and intra-individual variability. | Additive, proportional, or combined error models for PK assays; residual unexplained variability in PD endpoints. | Error model fitting (e.g., Yobs = Ypred * (1 + εprop) + εadd), where ε ~ N(0, σ²). |

Table 2: Example Variance Decomposition from a Simulated PK/PD Study (N=1000 Virtual Patients)

| Output Metric | Total Variance | % Attributable to Parametric | % Attributable to Structural (Model Choice) | % Attributable to Residual |

|---|---|---|---|---|

| AUC₀–₂₄ (ng·h/mL) | 1250 | 85% | 10% | 5% |

| C_max (ng/mL) | 98 | 80% | 15% | 5% |

| E_max (Effect Units) | 45 | 60% | 30% | 10% |

Experimental Protocols

Protocol 1: Global Sensitivity Analysis for Parametric Uncertainty Quantification

Objective: To rank influential parameters driving output variance using Sobol indices.

- Model Definition: Implement your base PK/PD model in a suitable environment (e.g., R/

mrgsolve, Python/PySB, NONMEM). - Parameter Distributions: Define plausible probability distributions for all uncertain parameters (e.g., Clearance ~ Lognormal(μ=10, CV=30%)).

- Sampling: Generate a sample matrix of size N (e.g., N=10,000) using a Sobol sequence to ensure uniform space-filling.

- Simulation: Execute the model for each parameter set, recording key outputs (AUC, Cmax, Tmax, E_max).

- Analysis: Calculate first-order (Si) and total-order (STi) Sobol indices using the

saltellimethod (PythonSALiblibrary). S_Ti quantifies a parameter's total contribution, including interactions. - Interpretation: Parameters with S_Ti > 0.1 are considered highly influential and prioritized for refinement.

Protocol 2: Model Averaging to Address Structural Uncertainty

Objective: To generate robust predictions that account for multiple plausible model structures.

- Model Suite Development: Develop a set of M (e.g., M=4) competing models representing distinct structural hypotheses (e.g., linear vs. nonlinear clearance, different PD effector mechanisms).

- Individual Fitting: Calibrate each model (M1...M4) against the same experimental dataset using maximum likelihood or Bayesian estimation.

- Model Weight Calculation: Compute the Akaike Information Criterion (AIC) for each model: AIC = 2k - 2ln(L), where k is parameter count, L is max likelihood. Derive Akaike weights: w_i = exp(-Δ_i/2) / Σ[exp(-Δ_m/2)], where Δi = AICi - min(AIC).

- Averaged Prediction: For any prediction of interest (e.g., predicted tumor size over time), compute the weighted average: Pred_avg(t) = Σ [w_i * Pred_i(t)] across all models.

- Uncertainty Estimation: The weighted variance around Pred_avg provides a confidence interval capturing structural uncertainty.

Protocol 3: Residual Error Model Selection

Objective: To identify the residual error structure that best describes the discrepancy between model predictions and observations.

- Base Model Fixing: Use a single structural model and its final parameter estimates.

- Error Model Testing: Evaluate a series of nested error models: a. Additive: Y_obs = Y_pred + ε_a b. Proportional: Y_obs = Y_pred * (1 + ε_p) c. Combined: Y_obs = Y_pred * (1 + ε_p) + ε_a where ε ~ N(0, σ²).

- Fitting & Comparison: Estimate the variance parameters (σp, σa) for each error model. Use likelihood ratio tests (for nested models) or Bayesian Information Criterion (BIC) for non-nested models to select the best fit.

- Validation: Perform visual predictive checks (VPCs) using the selected error model to confirm it adequately captures the spread of observed data around population predictions.

Visualization

Workflow for Characterizing Model Uncertainty

Model Averaging to Quantify Structural Uncertainty

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Uncertainty Analysis

| Item / Software | Function in Uncertainty Research | Key Application |

|---|---|---|

R with mrgsolve |

Fast simulation of PK/PD models from specified parameter sets. | Protocol 1: High-throughput Monte Carlo simulations. |

Python SALib Library |

Implementation of global sensitivity analysis methods (Sobol, Morris). | Protocol 1: Calculation of Sobol indices for variance decomposition. |

| NONMEM / Monolix | Industry-standard for nonlinear mixed-effects modeling. | Protocol 2 & 3: Parameter estimation, model fitting, and residual error model selection. |

xpose / Pirana |

Diagnostic tool for model evaluation and visualization (VPC, goodness-of-fit). | Protocol 3: Visual Predictive Check generation and diagnosis. |

| High-Performance Computing (HPC) Cluster | Enables large-scale parallel simulations (10,000+ runs). | All Protocols: Managing computational burden of exhaustive uncertainty analyses. |

Bayesian Estimation Software (Stan/brms, NIMBLE) |

Robust quantification of parameter uncertainty via posterior distributions. | Complementary to Protocol 1: Generating full joint parameter distributions for sampling. |

Historical Context and Evolution in Biomedical Research

Application Notes on Model Uncertainty in Drug Development

The quantification of model uncertainty is paramount in translating preclinical research to clinical success. Historically, biomedical research relied on deterministic models, but the inherent biological variability and sparse data in early-stage research necessitated a probabilistic framework. Monte Carlo simulation provides this framework, enabling researchers to propagate uncertainty from in vitro assays through pharmacokinetic/pharmacodynamic (PK/PD) models to predict clinical outcomes. The evolution from qualitative, observational science to quantitative, predictive modeling is underscored by the adoption of these computational techniques, which directly address the "black box" nature of complex biological systems and imperfectly characterized drug candidates.

Table 1: Evolution of Key Quantitative Approaches in Biomedical Research

| Era | Dominant Paradigm | Key Limitation | Introduction of Uncertainty Quantification |

|---|---|---|---|

| 1900-1950 | Descriptive Biology & Biochemistry | Qualitative, Low Throughput | None |

| 1950-1980 | Deterministic Mathematical Modeling (e.g., Michaelis-Menten) | Ignores Parameter Variance | Analytical Error Propagation |

| 1980-2000 | Computational Biology & Early Stochastic Models | Limited Computing Power | Bootstrap Methods, Basic MCMC |

| 2000-Present | Systems Pharmacology & AI/ML | Model Misspecification Risk | Integrated Monte Carlo Simulation, Bayesian Hierarchical Modeling |

Table 2: Impact of Monte Carlo Simulation on Key Drug Development Metrics

| Development Stage | Traditional Approach Success Rate (Est.) | With Integrated Uncertainty Modeling (Est.) | Primary Uncertainty Source Addressed |

|---|---|---|---|

| Preclinical to Phase I Transition | ~60% | Improved Candidate Selection | In vitro to in vivo extrapolation (IVIVE) |

| Phase II to Phase III Transition | ~30% | Improved Dose Selection & Trial Design | Inter-patient variability, PD endpoint variability |

| Overall Approval Rate (Phase I to Launch) | ~10% | Potential for 5-10% relative increase | Integrated model uncertainty across all stages |

Detailed Protocols

Protocol 1: Monte Carlo-Based Uncertainty Propagation in a Preclinical PK/PD Model

Objective: To predict the range of likely clinical drug exposures and target engagement levels from preclinical data, accounting for uncertainty in all model parameters.

Materials & Software:

- In vivo PK data (plasma concentration-time profiles from animal studies).

- In vitro PD data (IC50/EC50 from cellular assays).

- Physiological parameters (e.g., organ weights, blood flow rates).

- Software: R (with

mrgsolveorRxODEpackages), Python (withPyMC3orStan), MATLAB SimBiology.

Procedure:

- Model Structuring: Define a compartmental PK model (e.g., 2-compartment) and a direct-effect PD model (e.g., Emax model).

- Parameter Priors: For each model parameter (e.g., clearance

CL, volumeV, Emax, EC50), define a probability distribution based on preclinical data. For example, if meanCL= 5 L/h with standard error 1.2 L/h, use a Log-Normal(ln(5), 0.24) distribution. - Correlation Specification: Identify correlated parameters (e.g.,

CLand volume of distributionV) and define a covariance matrix using prior knowledge or bootstrap estimates from preclinical data. - Simulation Execution:

a. Using a Monte Carlo engine, draw

N(e.g., 10,000) random sets of parameter values from the defined joint probability distribution. b. For each parameter seti, solve the coupled PK/PD model equations for a proposed human dosing regimen. c. Store key outputs:Cmax,AUC,Time above EC90. - Uncertainty Analysis: Generate histograms and 90% prediction intervals for all outputs. Perform global sensitivity analysis (e.g., Sobol indices) to rank parameters by their contribution to output variance.

Protocol 2: Uncertainty-Aware Virtual Patient Population for Trial Simulation

Objective: To create a virtual cohort reflecting true population heterogeneity and model uncertainties to simulate clinical trial outcomes.

Procedure:

- Base Population PK Model: Start with a published population PK model for a drug class or a relevant candidate.

- Covariate Model Identification: From literature, identify demographic (weight, age, renal function) and genomic (e.g., CYP450 metabolizer status) covariates linked to PK parameters. Define distributions for these covariates in the target population.

- Uncertainty Layering:

a. Level 1 (Between-Subject Variability, BSV): Model parameters for the

j-thvirtual patient:P_j = TVP * exp(η_j), whereTVPis the typical value andη_j ~ N(0, ω²). b. Level 2 (Uncertainty in TVP): TheTVPitself is uncertain. SetTVP ~ N(mean_TVP, SE_TVP²)from the prior model. c. Level 3 (Uncertainty in BSV): The magnitude of variabilityωis uncertain:ω ~ Uniform(low, high). - Virtual Cohort Generation:

a. Sample a value for each

TVPandωfrom their uncertainty distributions (Levels 2 & 3). b. Forn=1toN=1000, generate a virtual patient: sample covariates, then sample individual parametersP_nusing the BSV model from step (a). - Trial Simulation & Analysis: Simulate the drug concentration-time profile for each virtual patient under the trial protocol. Apply a PD/efficacy model (with its own uncertainty) and a safety threshold. Calculate probability of efficacy and toxicity. Repeat the entire process (

K=1000times) to generate a distribution of possible trial outcomes (e.g., probability of success).

Visualizations

Title: Workflow: Monte Carlo for Preclinical to Clinical Translation

Title: Layered Uncertainty in Virtual Patient Generation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents & Tools for Uncertainty-Aware Biomedical Research

| Item / Solution | Function in Context | Relevance to Uncertainty Quantification |

|---|---|---|

| Recombinant Human Enzymes & Cells (e.g., CYP450 isoforms, HEK293 transfected cells) | Provide consistent, defined systems for in vitro ADME and target engagement assays. | Reduces experimental variability in input data, tightening prior distributions for parameters like CLint or EC50. |

| LC-MS/MS Systems | Quantify drug and metabolite concentrations in biological matrices with high sensitivity. | Provides the precise, low-noise PK/PD time-series data essential for robust parameter estimation and variance calculation. |

| Stable Isotope-Labeled Internal Standards | Used in mass spectrometry to correct for matrix effects and ionization efficiency. | Minimizes measurement error, a key component of residual unexplained variability in models. |

| High-Content Screening (HCS) Imaging Platforms | Multiparametric measurement of cellular responses (e.g., translocation, morphology). | Generates multivariate PD data, enabling fitting of complex, systems-level models with quantifiable confidence intervals on parameters. |

| qPCR & NGS Reagents | Quantify gene expression, genotype polymorphisms, or transcriptional responses. | Provides covariate data (e.g., metabolizer status) and dynamic pathway data to inform mechanism-based models and define sub-populations. |

Statistical Software & Libraries (e.g., Stan, NONMEM, Monolix, PyMC) |

Perform Bayesian inference, population modeling, and MCMC sampling. | The core engine for integrating data, defining probability distributions for parameters, and executing Monte Carlo simulations. |

| Cloud Computing Credits | Access to scalable, high-performance computing (HPC) resources. | Makes the thousands of iterations required for robust Monte Carlo simulation and virtual trial campaigns computationally feasible and timely. |

Within Monte Carlo simulation research for model uncertainty in drug development, precise understanding of three core concepts—Distributions, Iterations, and Convergence—is non-negotiable. This application note details their operational definitions, experimental protocols for their analysis, and visualization of their logical interplay, directly supporting the broader thesis that robust uncertainty quantification is foundational for regulatory-grade pharmacometric models.

Core Terminology & Quantitative Data

Table 1: Core Terminology in Monte Carlo Uncertainty Analysis

| Term | Definition in Context | Key Quantitative Descriptors |

|---|---|---|

| Distributions | Probability functions representing the plausible range and likelihood of model input parameters (e.g., PK/PD rates, demographic variables). Input uncertainty is propagated via sampling from these. | Mean (μ), Variance (σ²), Type (e.g., Normal, Log-Normal, Uniform), 95% Credible Interval. |

| Iterations | A single cycle of the simulation where a random set of parameter values is drawn from the specified Distributions and the model output is computed. The set of all iterations forms the output distribution. | Number of Iterations (N), Run Time per Iteration, Total Computational Cost. |

| Convergence | The state where increasing the number of Iterations no longer meaningfully changes the statistics (e.g., mean, percentiles) of the output distribution. Indicates simulation stability and result reliability. | Gelman-Rubin Statistic (R̂ < 1.05), Monte Carlo Standard Error (MCSE < 5% of SD), Stabilization of Percentile Estimates. |

Table 2: Convergence Diagnostics for a Notorious Two-Compartment PK Model Example output from 4 parallel Markov chains, each with 10,000 iterations post-warm-up.

| Parameter | Mean | 2.5% Percentile | 97.5% Percentile | Gelman-Rubin R̂ | MCSE (of Mean) |

|---|---|---|---|---|---|

| CL (L/h) | 5.2 | 4.1 | 6.5 | 1.01 | 0.08 |

| Vd (L) | 42.5 | 35.0 | 52.1 | 1.03 | 0.45 |

| Ka (1/h) | 1.8 | 0.9 | 3.2 | 1.12 | 0.15 |

Experimental Protocols

Protocol 1: Propagation of Input Uncertainty via Distribution Sampling Objective: To generate a robust prediction interval for a pharmacokinetic endpoint (e.g., AUC) by propagating parameter uncertainty.

- Define Input Distributions: For each uncertain model parameter (e.g., Clearance (CL), Volume (Vd)), specify a probability distribution based on prior population PK analysis (e.g., CL ~ LogNormal(μ=1.5, σ=0.2)).

- Initialize Simulation: Set the number of iterations, N (start with N=10,000).

- Sample and Execute: For i = 1 to N:

- Randomly draw one value for each parameter from its defined distribution using a pseudo-random number generator (Mersenne Twister recommended).

- Run the simulation model (e.g., ODE solver) with this parameter set.

- Record the output metric of interest (AUC_i).

- Summarize Output: Compile all AUC_i values into an empirical distribution. Calculate and report the median, 2.5th, and 97.5th percentiles as the 95% prediction interval.

Protocol 2: Assessing Simulation Convergence Objective: To determine the sufficient number of iterations for a stable output distribution.

- Run Multiple Chains: Execute 4 independent simulation runs (chains) from different starting points, each with N=2000 iterations.

- Calculate Within/Between Chain Variance: For a key output (e.g., simulated Cmax at steady state), compute the variance of its mean within each chain and the variance between the means of the four chains.

- Compute Gelman-Rubin Diagnostic (R̂):

- R̂ = sqrt( ( (N-1)/N * W + (1/N) * B ) / W ), where W is average within-chain variance and B is between-chain variance.

- Check Diagnostic:

- If R̂ > 1.05 for any key output, double N (e.g., to 4000) and repeat Protocol from step 1.

- If R̂ ≤ 1.05 for all outputs, declare convergence. Use the pooled samples from all chains for final analysis.

Visualization of Concepts

Title: Monte Carlo Uncertainty Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Monte Carlo Uncertainty Research

| Item / Software | Primary Function | Application in Protocol |

|---|---|---|

| Pseudo-Random Number Generator (Mersenne Twister) | Generates high-quality, reproducible random sequences. | Foundational for sampling from input Distributions in Protocol 1. |

| ODE Solver (e.g., CVODE, LSODA) | Numerically solves systems of differential equations defining PK/PD models. | Executes the model for each sampled parameter set in every Iteration. |

| Convergence Diagnostics (R̂, MCSE) | Statistical functions to assess stability of simulation output. | Core calculations for Convergence assessment in Protocol 2. |

| High-Performance Computing (HPC) Cluster | Provides parallel processing for computationally expensive simulations. | Enables running multiple Iteration chains simultaneously to accelerate Convergence. |

| Bayesian Inference Engine (e.g., Stan, NONMEM) | Software for fitting models to data and generating posterior parameter Distributions. | Often the source of the input Distributions used in Protocol 1. |

Implementing Monte Carlo Simulation: A Step-by-Step Protocol for Pharmacometric Models

Within Monte Carlo simulation frameworks for quantifying model uncertainty in pharmacology and systems biology, the definition of input parameter distributions (priors) is the critical first step. This protocol details the systematic acquisition, curation, and statistical characterization of prior distributions from empirical experimental and clinical data sources. Robust priors directly inform the parameter space explored in subsequent simulations, ensuring that uncertainty analysis is grounded in biological reality.

Quantitative data for prior construction is typically extracted from the following sources:

- In vitro biochemical assays (e.g., IC50, Ki, kcat).

- Pharmacokinetic (PK) studies in animals and humans.

- Biomarker response data from early-phase clinical trials.

- Published literature and structured databases (e.g., ChEMBL, PubChem BioAssay).

- High-throughput screening (HTS) data outputs.

Table 1: Example Prior Data from a Kinase Inhibition Assay

| Parameter | Data Type | N | Mean (μ) | Std Dev (σ) | Min | Max | Reported Source |

|---|---|---|---|---|---|---|---|

| IC50 (nM) | Continuous | 15 | 12.3 | 4.1 | 5.6 | 22.4 | Internal HTS |

| Hill Coefficient | Continuous | 15 | 1.2 | 0.3 | 0.8 | 1.7 | Internal HTS |

| % Inhibition @ 10μM | Continuous | 15 | 98.5 | 1.2 | 95.0 | 99.8 | Internal HTS |

Table 2: Example Prior Data from a Phase I Clinical PK Study

| Parameter | Data Type | N | Geometric Mean | CV% | 95% CI | Source |

|---|---|---|---|---|---|---|

| CL/F (L/hr) | Log-Normal | 30 | 5.2 | 28.5 | [3.8, 7.1] | Trial NCTXYZ123 |

| Vd/F (L) | Log-Normal | 30 | 120 | 25.0 | [85, 170] | Trial NCTXYZ123 |

| t₁/₂ (hr) | Derived | 30 | 16.0 | 15.0 | [12.5, 20.5] | Trial NCTXYZ123 |

Experimental Protocols for Key Assays

Protocol 4.1: In Vitro Kinase Inhibition Assay (FRET-based)

Objective: Generate IC50 and Hill coefficient data for defining inhibitory potency priors.

Materials: See Scientist's Toolkit. Procedure:

- Reconstitute the test compound in DMSO to prepare a 10 mM master stock.

- Prepare 11-point, 1:3 serial dilutions in DMSO in a 96-well compound plate.

- Transfer 50 nL of each dilution to a low-volume 384-well assay plate using an acoustic dispenser. Include control wells (100% inhibition, 0% inhibition).

- Prepare kinase reaction mix containing kinase enzyme, FRET-labeled substrate, and ATP (at Km concentration) in assay buffer.

- Dispense 5 µL of reaction mix to each well of the assay plate to initiate reaction. Final DMSO concentration is 1%.

- Incubate plate at 25°C for 60 minutes.

- Stop the reaction by adding 5 µL of detection buffer containing EDTA and development antibodies.

- Incubate for 60 minutes at 25°C for signal development.

- Read plate on a FRET-compatible microplate reader (excitation 340 nm, emission 495 nm/520 nm).

- Calculate % inhibition relative to controls. Fit dose-response data to a 4-parameter logistic (4PL) model: Response = Bottom + (Top-Bottom) / (1 + 10^((LogIC50 - x) * HillSlope)) to extract IC50 and Hill coefficient.

Protocol 4.2: Population Pharmacokinetic Sampling (Phase I SAD/MAD)

Objective: Obtain plasma concentration-time data for estimating population PK parameter distributions (CL/F, Vd/F).

Procedure:

- Study Design: Single Ascending Dose (SAD) followed by Multiple Ascending Dose (MAD) cohorts. 8 healthy subjects per cohort (6 active, 2 placebo).

- Dosing: Administer pre-defined oral dose under fasting conditions.

- Blood Sampling: Collect venous blood (2-3 mL) into K2EDTA tubes at pre-dose, 0.25, 0.5, 1, 2, 4, 6, 8, 12, 24, 48, and 72 hours post-dose.

- Sample Processing: Centrifuge samples at 1500 x g for 10 min at 4°C within 60 min of collection. Transfer plasma to polypropylene tubes and store at -80°C.

- Bioanalysis: Quantify compound concentration using a validated LC-MS/MS method.

- Population PK Analysis: Use non-linear mixed-effects modeling (e.g., NONMEM, Monolix) to estimate the geometric mean and variance (ω²) of CL/F and Vd/F, assuming a log-normal distribution for inter-individual variability.

The Scientist's Toolkit: Key Reagents & Materials

| Item | Function/Application | Example Product/Catalog |

|---|---|---|

| FRET-based Kinase Assay Kit | Quantifies kinase activity via fluorescence resonance energy transfer; enables IC50 determination. | Invitrogen Z'-LYTE Kinase Assay Kit |

| Low-Volume 384-Well Plate | Minimizes reagent usage for high-throughput screening. | Corning 3820 Low Volume Microplate |

| Acoustic Liquid Dispenser | Contactless, precise transfer of nanoliter compound volumes. | Beckman Coulter Echo 650 |

| LC-MS/MS System | Gold-standard for quantitative bioanalysis of drugs in biological matrices. | Sciex Triple Quad 6500+ |

| Population PK Modeling Software | Performs non-linear mixed-effects modeling to derive parameter distributions. | Monolix Suite 2024R1 |

| Statistical Programming Environment | For data curation, visualization, and distribution fitting (e.g., to Normal, Log-Normal, Beta). | R (fitdistrplus package) |

Visualization of Workflows

Title: Workflow for Defining Prior Distributions

Title: From Assay Signal to Prior Distribution

Within the broader thesis on Monte Carlo simulation for model uncertainty quantification in pharmaceutical research, the choice of input sampling strategy is critical. This document provides detailed application notes and protocols comparing two prevalent techniques: Simple Random Sampling (SRS) and Latin Hypercube Sampling (LHS). The focus is on their application in propagating parameter uncertainty through complex, computationally expensive models common in pharmacometrics and systems pharmacology.

Core Principles and Comparative Analysis

Conceptual Definitions

- Simple Random Sampling (SRS): A probabilistic method where n sample points are independently drawn from a predefined probability distribution for each input parameter. There is no constraint on the placement of points, which can lead to clustering and gaps in the input space.

- Latin Hypercube Sampling (LHS): A stratified, constrained random sampling technique. For n samples and d parameters, the cumulative distribution function for each parameter is divided into n equiprobable intervals. One sample is randomly placed within each interval for each parameter, and these are then randomly paired across parameters to form the sample set, ensuring full stratification of each marginal distribution.

Quantitative Performance Comparison

Theoretical and empirical studies demonstrate key differences in efficiency and coverage.

Table 1: Comparative Performance of SRS vs. LHS

| Metric | Simple Random Sampling (SRS) | Latin Hypercube Sampling (LHS) | Implication for Monte Carlo Studies |

|---|---|---|---|

| Space Filling | Poor to moderate; prone to clusters and voids. | Superior; enforces a more even projection onto each parameter axis. | LHS reduces the risk of missing regions of the input space, crucial for tail behavior. |

| Variance of Mean Estimate | Var(Ȳ) = σ²/n | Typically lower for the same n; effective for deterministic models. | For a fixed computational budget (n model runs), LHS often yields a more precise estimate of the output mean. |

| Convergence Rate | ~O(1/√n) (standard Monte Carlo) | Can approach ~O(1/n) for certain outputs of smooth functions. | LHS achieves desired precision with fewer model evaluations, critical for expensive models. |

| Correlation Control | None inherent. Parameters are sampled independently. | Random pairing can induce spurious correlations. Requires ranking or optimization to minimize. | Uncontrolled correlations in LHS can bias results. Post-processing is often required. |

| Implementation Complexity | Low. Trivial to implement. | Moderate. Requires stratification and often correlation control. | SRS is easier to code and validate, but LHS benefits are substantial for complex models. |

Table 2: Empirical Example - Estimating Model Output Mean

| Sampling Method | Number of Runs (n) | Estimated Output Mean | Standard Error of the Mean | Relative Efficiency vs. SRS |

|---|---|---|---|---|

| SRS | 100 | 12.45 | 0.89 | 1.00 (Baseline) |

| LHS (naive) | 100 | 12.38 | 0.52 | ~2.93 |

| LHS (w/ Correlation Control) | 100 | 12.41 | 0.47 | ~3.58 |

Note: Example data is illustrative, based on a typical pharmacokinetic model output. Relative efficiency is calculated as (Variance_SRS / Variance_LHS).

Experimental Protocols

Protocol A: Implementing Simple Random Sampling for Model Parameter Uncertainty

Objective: To generate an SRS set for propagating uncertainty through a computational model. Materials: Computational environment (R, Python, MATLAB), model parameter definitions (distributions, ranges). Procedure:

- Define Distributions: For each of the k uncertain input parameters, specify a probability distribution (e.g., Log-Normal(μ, σ²) for clearance, Uniform(a, b) for bioavailability).

- Set Sample Size: Determine the number of Monte Carlo runs n based on computational resources and required precision.

- Generate Sample Matrix: For i = 1 to n:

- For each parameter j = 1 to k, independently draw a random value xᵢⱼ from its specified distribution using a pseudo-random number generator.

- Assemble vector Xᵢ = [xᵢ₁, xᵢ₂, ..., xᵢₖ].

- Execute Simulations: Run the model M(X) for each sample vector Xᵢ, collecting outputs Yᵢ.

- Analyze Output: Construct empirical distributions of Y, calculate summary statistics (mean, variance, percentiles).

Protocol B: Implementing Latin Hypercube Sampling with Correlation Control

Objective: To generate a space-filling LHS set with minimal spurious correlations.

Materials: Computational environment with LHS library (e.g., lhs in R's DoE.wrapper, pyDOE2 in Python, lhsdesign in MATLAB).

Procedure:

- Define Distributions & Sample Size: As in Protocol A, Step 1 & 2.

- Generate Stratified Marginals:

- For each parameter j, divide the inverse cumulative distribution function (CDF⁻¹) into n equal intervals: [0, 1/n), [1/n, 2/n), ..., [(n-1)/n, 1].

- Within each interval, draw a random uniform value. This creates a vector of n samples for parameter j.

- Randomly permute this vector. Repeat for all k parameters.

- Control Correlation (Optimized LHS):

- Generate an initial n x k LHS matrix using Step 2.

- Calculate the rank correlation matrix (Spearman's ρ) of the sample.

- Use an optimization algorithm (e.g., column-wise pairwise swapping, simulated annealing) to minimize the difference between the sample correlation matrix and a target matrix (usually the identity matrix, implying independence).

- Iterate until a minimization criterion (e.g., sum of squared correlations) is met.

- Transform to Distributions: Apply the appropriate inverse CDF (e.g.,

qlnorm,qunif) to each column of the final LHS matrix (which contains values in [0,1]) to obtain the physical parameter values. - Execute and Analyze: Run the model and analyze outputs as in Protocol A, Step 4 & 5.

Visualizations

Title: Simple Random Sampling Workflow

Title: Latin Hypercube Sampling with Correlation Control

Title: Space Coverage Comparison: SRS vs. LHS (Conceptual)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for Sampling in Uncertainty Analysis

| Tool / Resource | Primary Function | Application in Sampling |

|---|---|---|

| R Statistical Environment | Comprehensive statistical computing and graphics. | Core platform. Use packages DoE.wrapper (for lhs), randtoolbox, sensitivity for generating and analyzing SRS, LHS, and Sobol' sequences. |

| Python (NumPy, SciPy, pyDOE2) | General-purpose scientific computing. | Use numpy.random for SRS. The pyDOE2 library provides LHS and other designs. SALib is essential for sensitivity analysis. |

| MATLAB Statistics & ML Toolbox | Numerical computing and algorithm development. | Functions rand, lhsdesign, lhsnorm for sample generation. Useful for integrated workflows with SimBiology. |

| JMP / SAS | Advanced statistical data visualization and analysis. | GUI-driven design of experiments (DOE) capabilities, including custom LHS generation for simulation studies. |

| Simulation Software (e.g., NONMEM, Monolix, Simulink) | Pharmacometric & systems biology modeling. | Native or integrated Monte Carlo tools often use SRS. LHS typically requires external sample matrix import via dataset. |

| Correlation Control Algorithm (e.g., Genetic Algorithm, Simulated Anneining) | Numerical optimization. | Critical for generating optimized LHS. Custom scripts or libraries (e.g., R's lhs with maximin or genetic option) implement this. |

Within the broader thesis on Monte Carlo simulation for model uncertainty in quantitative systems pharmacology (QSP), this step transitions from theoretical framework to practical application. Execution involves sampling from defined probability distributions of model parameters and running the model repeatedly to generate a distribution of possible outcomes. This propagation quantifies the impact of input uncertainty on model predictions, which is critical for risk-aware decision-making in drug development.

Core Methodologies: Sampling & Execution Protocols

Monte Carlo Sampling Workflow

The execution phase follows a standardized, iterative workflow.

Diagram Title: Monte Carlo Iterative Sampling and Execution Workflow

Detailed Execution Protocol: A QSP Case Study

Protocol Title: Uncertainty Propagation for a QSP Model of a Novel Oncology Therapeutic (JAK-STAT Pathway)

Objective: To quantify the uncertainty in predicted tumor burden reduction at Week 12 due to uncertainties in 6 key kinetic parameters.

Pre-Requisites: A fully defined QSP model (differential equations) and parameter uncertainty distributions (from Step 2: e.g., Log-Normal(μ, σ) for each parameter).

Materials & Software: See The Scientist's Toolkit below.

Procedure:

- Initialize Simulation: Set number of Monte Carlo iterations, N=10,000. Define output vector

TumorReduction[1...N]. - Iterative Loop (for i = 1 to N):

a. Sample: Draw one random value from the pre-defined probability distribution for each of the 6 uncertain parameters, using a Latin Hypercube Sampling (LHS) algorithm to ensure efficient coverage of the parameter space.

b. Configure: Insert the sampled parameter set into the QSP model, replacing the nominal values.

c. Execute: Run the model simulation from time=0 to Week 12 under the defined dosing regimen.

d. Capture: Record the final simulated tumor volume. Calculate percentage reduction from baseline. Store value in

TumorReduction[i]. - Post-Processing: After loop completion, analyze the

TumorReductionvector.- Generate a histogram and kernel density estimate.

- Calculate summary statistics: median, 5th, and 95th percentiles (the 90% prediction interval).

- Perform sensitivity analysis (e.g., rank correlation) to attribute output variance to specific input parameters.

Expected Output: A probability distribution of the clinical endpoint (tumor reduction), explicitly conveying prediction confidence.

Data Presentation: Representative Simulation Results

Table 1: Summary Statistics from a 10,000-Iteration Monte Carlo Simulation of Tumor Reduction

| Output Metric | Median | 5th Percentile | 95th Percentile | Standard Deviation |

|---|---|---|---|---|

| Tumor Burden Reduction at Week 12 | 62.5% | 41.2% | 78.8% | 11.4% |

| Simulated Peak Cytokine Level (pg/mL) | 145.2 | 89.7 | 220.1 | 39.8 |

| Time to Maximal Pathway Inhibition (days) | 8.5 | 5.1 | 14.2 | 2.8 |

Table 2: Key Parameter Contributions to Output Variance (Sobol' Indices)

| Uncertain Input Parameter | Main Effect Index | Total Effect Index | Primary Impact on Output |

|---|---|---|---|

| JAK2 Phosphorylation Rate (k_pJAK) | 0.38 | 0.45 | Peak Inhibition |

| STAT5 Degradation Rate (d_STAT5) | 0.22 | 0.31 | Response Durability |

| Receptor Internalization Rate (k_int) | 0.15 | 0.24 | Early Signal Amplitude |

| Drug-Target Dissociation (K_d) | 0.12 | 0.18 | Overall Efficacy |

| Feedback Upregulation Rate (k_fb) | 0.08 | 0.15 | Late Resistance |

| Baseline Tumor Growth Rate (r_tumor) | 0.05 | 0.10 | Baseline Variability |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Computational Tools

| Item Name | Category | Function/Benefit |

|---|---|---|

| MATLAB SimBiology | Commercial Software | Provides integrated environment for QSP model building, parameter estimation, and stochastic simulation. |

| R 'mrgsolve'/'RxODE' packages | Open-Source Software | High-performance differential equation solvers in R, optimized for population (Monte Carlo) pharmacokinetic/pharmacodynamic (PK/PD) and QSP analyses. |

| Python SciPy/NumPy/PyStan | Open-Source Libraries | Flexible ecosystem for custom sampling algorithms (LHS, MCMC) and numerical integration of ODE models. |

| Latin Hypercube Sampling (LHS) | Algorithm | A stratified sampling technique that ensures efficient, full-coverage sampling of parameter distributions with fewer iterations than simple random sampling. |

| Sobol' Sequence Generator | Algorithm | A quasi-random, low-discrepancy sequence for variance-based sensitivity analysis, ensuring efficient exploration of high-dimensional parameter spaces. |

| Global Sensitivity Analysis (GSA) Suite | Analytical Method | A collection of methods (e.g., Sobol' indices, eFAST) used post-execution to quantify each input parameter's contribution to output variance. |

Advanced Execution: Integrating Structural Uncertainty

Execution must also account for alternative model structures (e.g., with/without a feedback mechanism).

Diagram Title: Executing Monte Carlo Under Structural Uncertainty

Within the framework of Monte Carlo (MC) simulation for quantifying model uncertainty in pharmacokinetic-pharmacodynamic (PK/PD) and systems pharmacology, Step 4 represents the critical analysis phase. After generating an ensemble of model outputs through stochastic sampling of input parameter distributions, researchers must interpret this data robustly. This application note details protocols for constructing Confidence Intervals (CIs) and Prediction Intervals (PIs) and conducting Sensitivity Analysis (SA) to support decision-making in drug development.

Core Concepts & Data Presentation

Table 1: Key Output Analysis Metrics in Monte Carlo Simulation

| Metric | Definition | Interpretation in Drug Development Context | Typical Output |

|---|---|---|---|

| Confidence Interval (CI) | A range, derived from the simulated output distribution, that is likely to contain the true mean model prediction with a specified probability (e.g., 95%). | Quantifies uncertainty in the average expected outcome (e.g., average steady-state concentration). Used for confirming model adequacy. | "The 95% CI for ( C_{trough} ) at day 7 is [45, 67] µg/mL." |

| Prediction Interval (PI) | A range, derived from the simulated output distribution, that is likely to contain a future individual observation with a specified probability (e.g., 95%). | Quantifies expected variability in individual patient responses. Critical for assessing risk (e.g., probability of sub-therapeutic effect or toxicity). | "The 95% PI for ( C_{max} ) in the target population is [5, 150] µg/mL." |

| Global Sensitivity Indices (e.g., Sobol') | Measures quantifying the proportion of total output variance attributable to each input parameter's uncertainty, including interaction effects. | Identifies parameters whose uncertainty drives outcome variability. Prioritizes parameters for refinement (e.g., via targeted in vitro assays). | "Parameter ( CL_{hep} ) explains 72% of the variance in AUC variance." |

Table 2: Comparison of Interval Calculation Methods

| Method | Principle | Use Case | Protocol Reference |

|---|---|---|---|

| Percentile Method | Directly uses the α/2 and 1-α/2 percentiles of the simulated output distribution. | Non-parametric, robust. Standard for deriving PIs from MC output. | Protocol 3.1 |

| Parametric (Wald) Method | Assumes output is normally distributed: CI = Mean ± Z*(SD/√N). | Quick estimation for confidence intervals around the mean when output is ~Normal and N is large. | Protocol 3.2 |

| Bootstrap-t Method | Resamples simulated data to estimate the distribution of the t-statistic, correcting for skew. | Produces more accurate CIs for the mean when output distribution is non-normal. | Protocol 3.3 |

Experimental Protocols

Protocol 3.1: Constructing Non-Parametric Prediction Intervals

Objective: To calculate a range that is likely to contain a future individual observation from the simulated population. Materials: Ensemble of N (e.g., 10,000) simulated output values (e.g., AUC, ( C_{max} )) from Step 3 of the MC workflow. Procedure:

- For the output variable of interest, compile the N simulated values into a single vector.

- Sort the vector in ascending order.

- For a (1-α)% Prediction Interval (e.g., 95%, α=0.05), identify the values at the α/2 and 1-α/2 percentiles.

- Lower bound index = N * (α/2)

- Upper bound index = N * (1 - α/2)

- If indices are not integers, interpolate between adjacent values.

- Report the lower and upper values as the (1-α)% Prediction Interval. Validation: Repeat the entire MC simulation (Steps 1-3) with a different random seed to verify interval stability.

Protocol 3.2: Global Variance-Based Sensitivity Analysis using Sobol' Indices

Objective: To apportion the variance of a model output to the uncertainty in its input parameters. Materials: A deterministic model ( Y = f(X1, X2, ..., X_k) ), defined probability distributions for all k uncertain inputs, Sobol sequence generator, high-performance computing resource. Procedure:

- Generate Sampling Matrices: Create two N x k matrices, A and B, using a Sobol quasi-random sequence, where N is the sample size (e.g., 5,000-10,000).

- Create Hybrid Matrices: For each parameter ( X_i ), create a matrix AB(^{(i)}) where column i is from matrix A, and all other columns are from matrix B.

- Model Evaluation: Run the model for all rows in matrices A, B, and each AB(^{(i)}), resulting in output vectors ( yA ), ( yB ), and ( y_{AB^{(i)}} ).

- Variance Calculation: Estimate total output variance ( V(Y) ) from ( y_A ).

- Index Computation:

- First-order (Main) Effect Index (( Si )): ( Si = \frac{V[E(Y|Xi)]}{V(Y)} ). Estimated via ( \frac{ \frac{1}{N}\sum{j=1}^{N} y{A}^{(j)} y{AB^{(i)}}^{(j)} - f0^2 }{V(Y)} ), where ( f0^2 ) is the square of the mean output.

- Total-effect Index (( S{Ti} )): ( S{Ti} = 1 - \frac{V[E(Y|X{\sim i})]}{V(Y)} ). Estimated via ( \frac{ \frac{1}{N}\sum{j=1}^{N} (y{A}^{(j)} - y{AB^{(i)}}^{(j)})^2 }{2 V(Y)} ). Interpretation: A high ( S{Ti} ) indicates parameter ( Xi ) is influential, warranting further experimental refinement to reduce overall prediction uncertainty.

Visualizations

Title: Monte Carlo Output Analysis Workflow

Title: Variance-Based Global Sensitivity Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Monte Carlo Output Analysis

| Item / Solution | Function in Analysis | Example / Note |

|---|---|---|

| Quasi-Random Sequences (Sobol') | Generates low-discrepancy input samples for efficient variance-based sensitivity analysis, ensuring uniform coverage of parameter space. | Available in libraries like SALib (Python) or sensitivity (R). |

| High-Performance Computing (HPC) Cluster | Enables the thousands of model runs required for robust global sensitivity analysis and large MC ensembles. | Cloud-based solutions (AWS, GCP) offer scalable alternatives. |

Statistical Software Library (e.g., scipy.stats, boot in R) |

Provides functions for percentile calculation, density estimation, and bootstrapping to construct intervals. | Critical for implementing Protocols 3.1 & 3.2. |

Sensitivity Analysis Package (e.g., SALib) |

Automates the design, execution, and calculation of Sobol' indices and other global sensitivity measures. | Standardizes Protocol 3.2, ensuring methodological rigor. |

Visualization Library (e.g., matplotlib, ggplot2) |

Creates publication-quality plots of output distributions, interval comparisons, and tornado plots for sensitivity results. | Essential for clear communication of uncertainty to stakeholders. |

Application Notes & Protocols

Dose-Response Prediction

Dose-response prediction quantifies the relationship between drug exposure and pharmacological effect, enabling optimal dose selection. This is critical for establishing therapeutic windows and minimizing toxicity.

Core Quantitative Data Summary

| Parameter | Description | Typical Range/Value | Data Source |

|---|---|---|---|

| EC₅₀ / IC₅₀ | Concentration for 50% of max effect/inhibition | pM to μM | In vitro bioassays |

| Hill Coefficient (n) | Steepness of dose-response curve | ~0.5 to 2.5 | Fitted from assay data |

| Eₘₐₓ / Iₘₐₓ | Maximum achievable effect/inhibition | 0 to 100% | Assay-defined endpoint |

| Baseline Effect (E₀) | System response without drug | Variable (assay dependent) | Control measurements |

Detailed Experimental Protocol: In Vitro Dose-Response Assay Objective: To characterize the concentration-dependent inhibitory effect of a novel kinase inhibitor on cell proliferation. Materials:

- Target cancer cell line.

- Serial dilutions of investigational drug (e.g., 10 nM to 100 μM, 1:3 dilutions, n=8 points).

- DMSO vehicle control (<0.1% final).

- CellTiter-Glo Luminescent Cell Viability Assay kit.

- 96-well white-walled assay plates.

- Microplate luminometer. Procedure:

- Seed cells in 96-well plates at optimal density (e.g., 2,000 cells/well in 80 μL media). Incubate overnight (37°C, 5% CO₂).

- Prepare 10X drug stocks in DMSO/media. Add 10 μL to respective wells to achieve final concentrations. Include DMSO-only control wells (0% inhibition) and media-only wells (100% inhibition/background).

- Incubate plates for 72 hours.

- Equilibrate plates and CellTiter-Glo reagent to room temperature for 30 min.

- Add 50 μL of reagent to each well. Shake plates for 2 min, then incubate in dark for 10 min.

- Record luminescence. Analysis:

- Normalize raw luminescence: % Inhibition = 100 * [1 - (RLUdrug - RLUmedia)/(RLUDMSO - RLUmedia)].

- Fit normalized data to a 4-parameter logistic (4PL) model using nonlinear regression: E = E₀ + (Eₘₐₓ - E₀) / (1 + (C/IC₅₀)ⁿ), where C is concentration.

- Report IC₅₀, Hill coefficient (n), Eₘₐₓ, and associated confidence intervals from the fit.

Pathway: Dose-Response to Clinical Dose Selection

Clinical Trial Simulation

Clinical Trial Simulation (CTS) integrates knowledge of disease, patient variability, and drug properties to predict trial outcomes, optimizing design and increasing probability of success.

Core Quantitative Data Summary

| Simulation Component | Key Input Parameters | Source of Uncertainty |

|---|---|---|

| Patient Population | Baseline demographics, disease severity, biomarker status | Inter-individual variability, recruitment rates |

| Placebo Response | Mean effect, variability over time | Historical trial data |

| Drug Exposure (PK) | Clearance, volume, bioavailability distributions | Population PK models |

| Drug Effect (PD) | EC₅₀, slope, maximum effect distributions | Phase I/II data, preclinical |

| Dropout Rates | Time- and event-dependent rates | Historical comparators |

Detailed Protocol: CTS for a Phase II Dose-Finding Study

Objective: To simulate a 12-week, randomized, placebo-controlled trial to identify the minimal effective dose(s) for a novel antihypertensive.

Software: R with mrgsolve/RxODE or specialized platform (e.g., Simbiology, NONMEM).

Procedure:

- Define Virtual Population (n=1000 per trial arm):

- Generate covariates (weight, age, renal function) from realistic distributions.

- Assign placebo or one of four active doses (D1-D4).

- Simulate PK Profiles:

- Use a pre-defined population PK model (e.g., 2-compartment oral). For each subject, sample individual PK parameters (CL, V, ka) from multivariate normal distributions, incorporating covariate effects (e.g., CL ~ renal function).

- Simulate PD Response (Systolic BP change):

- Use an Emax model linked to simulated drug concentration: ΔSBP = E₀ + (Eₘₐₓ * Cᵧ) / (EC₅₀ᵧ + Cᵧ) + η + ε.

- E₀: placebo/time effect model (linear drift).

- η: inter-individual variability on Eₘₐₓ and EC₅₀.

- ε: residual error.

- Incorporate Trial Execution Elements:

- Apply visit schedules and adherence patterns (e.g., 90% compliance).

- Model dropouts using hazard functions based on time or lack of efficacy.

- Analysis & Replication:

- For each simulated trial, perform the planned statistical analysis (e.g., ANCOVA on change from baseline at Week 12, dose-response trend test).

- Repeat simulation 1000 times to account for stochastic uncertainty (Monte Carlo). Outputs: Probability of success (power) for each endpoint; probability of selecting each dose for Phase III; predicted distribution of treatment differences.

Workflow: Clinical Trial Simulation Process

PK/PD Forecasting

Pharmacokinetic/Pharmacodynamic (PK/PD) forecasting predicts the time course of drug concentration and effect in individuals or populations, supporting dose individualization and trial bridging.

Core Quantitative Data Summary

| Model Type | Structural Model Parameters | Typical Variability Components (ω²) |

|---|---|---|

| PK: 1-Comp Oral | Clearance (CL), Volume (V), Absorption rate (ka) | IIV on CL, V, ka; Residual error |

| PK: 2-Comp IV | CL, V1, Q, V2 | IIV on CL, V1; Residual error |

| PD: Direct Effect | EC₅₀, Eₘₐₓ, Hill coefficient | IIV on EC₅₀, Eₘₐₓ; Residual error |

| PD: Indirect Effect | Kin, Kout, IC₅₀ | IIV on Kout, IC₅₀; Residual error |

Detailed Protocol: Population PK/PD Model Development & Forecasting Objective: Develop a model to forecast HbA1c reduction for a new diabetes drug based on Phase II data to inform Phase III design. Software: NONMEM, Monolix, or PsN. Procedure:

- Data Assembly: Collate Phase II data: dosing records, sparse PK concentrations, longitudinal HbA1c measurements, patient covariates.

- Base Model Development:

- PK Model: Fit 1- and 2-compartment models with first-order absorption. Evaluate using objective function value (OFV) and diagnostic plots.

- PD Link: Test direct vs. indirect response (inhibition of glucose production) models linking drug concentration to HbA1c change via an effect compartment if needed.

- Covariate Model: Systematically test covariate relationships (e.g., weight on CL/V, renal function on CL) using stepwise forward inclusion (p<0.05) and backward elimination (p<0.01).

- Model Evaluation: Use visual predictive checks (VPC) and bootstrap to assess model robustness and parameter uncertainty.

- Monte Carlo Forecasting:

- Define Phase III population covariate distribution.

- Sample 1000 sets of model parameters from the final model's variance-covariance matrix (incorporating estimation uncertainty).

- For each parameter set, simulate the full PK/PD time course for N virtual patients under proposed Phase III dosing regimens.

- Overlay simulations to generate prediction intervals for HbA1c over time at each dose. Output: Forecasted median and 90% prediction intervals for HbA1c reduction at Week 24 for each dose; probability of achieving target product profile (e.g., >0.5% reduction vs placebo).

Logic: PK/PD Forecasting in Drug Development

The Scientist's Toolkit: Key Research Reagent Solutions

| Item Name | Function in Dose-Response/PK/PD Research |

|---|---|

| CellTiter-Glo / MTS Assay | Luminescent/colorimetric cell viability assays to quantify proliferation inhibition (IC₅₀ determination). |

| Human Liver Microsomes / Hepatocytes | In vitro system to study metabolic stability and identify major metabolites for PK prediction. |

| LC-MS/MS Systems | Gold-standard for quantitative bioanalysis of drugs and biomarkers in biological matrices (plasma, tissue) for PK/PD studies. |

| Recombinant Target Proteins/Enzymes | For high-throughput screening and mechanistic in vitro potency (EC₅₀) determination. |

| Multiplex Cytokine/Chemokine Panels | To measure complex PD biomarker responses in ex vivo or preclinical in vivo samples. |

| Population PK/PD Modeling Software (NONMEM, Monolix) | Industry-standard platforms for nonlinear mixed-effects modeling, essential for PK/PD analysis and simulation. |

| Clinical Trial Simulation Software (R/Simbiology, SAS) | Environments for integrating PK/PD models with trial execution models to simulate outcomes. |

Overcoming Challenges: Troubleshooting and Optimizing Your Monte Carlo Simulations

In Monte Carlo (MC) simulation for pharmacokinetic/pharmacodynamic (PK/PD) and quantitative systems pharmacology (QSP) model uncertainty research, reliability is paramount. Three pervasive pitfalls compromise result integrity: Under-Sampling, leading to inaccurate distribution tails; Non-Convergence of stochastic algorithms, yielding biased estimates; and Model Run-Time Failures during automated parameter sweeps, causing data loss and bias. This document provides protocols to diagnose, mitigate, and control these issues, ensuring robust uncertainty quantification.

Pitfall Analysis & Quantitative Data

Table 1: Common Pitfalls, Impacts, and Diagnostic Metrics

| Pitfall | Primary Cause | Key Impact on Uncertainty Research | Diagnostic Metric | Typical Acceptable Threshold |

|---|---|---|---|---|

| Under-Sampling | Insufficient MC iterations (N) |

Inaccurate estimation of extreme percentiles (e.g., 5th, 95th) for model outputs. | Relative Error in Std. Dev. | < 5% |

| Non-Convergence | Poor MCMC mixing, inadequate burn-in, misspecified proposals. | Biased posterior parameter distributions, invalid credible intervals. | Gelman-Rubin Statistic (\hat{R}) |

< 1.05 |

| Run-Time Failures | Numerical instability (e.g., stiff ODEs, division by zero), invalid parameter sets from sampling. | Attrition bias; surviving samples not representative of intended parameter space. | Failure Rate per Iteration | < 1% |

Table 2: Example Sampling Analysis for a PK Model (AUC Simulation)

Number of MC Iterations (N) |

Estimated Mean AUC (mg·h/L) | Estimated Std. Dev. (mg·h/L) | 95% CI Width (mg·h/L) | Runtime (s) |

|---|---|---|---|---|

| 1,000 | 104.7 | 22.5 | 88.2 | 5 |

| 10,000 | 103.1 | 23.8 | 93.4 | 48 |

| 100,000 | 103.3 | 24.1 | 94.6 | 475 |

| Theoretical True Value | 103.2 | 24.2 | 94.9 | - |

Experimental Protocols

Protocol 1: Determining Sufficient MC Sample Size

Objective: Determine N required to achieve a desired precision in output variance estimation.

- Pilot Run: Execute a preliminary MC run with

N_pilot = 5,000. - Calculate Target: From pilot, compute output variance

σ²_pilot. - Apply Formula: Use relative error formula for variance:

N_required = (Z * √(2/(n-1)) / ε)², whereZis the Z-score (1.96 for 95% CI),εis the desired relative error (e.g., 0.05 for 5%). - Validate: Run simulation with

N_required. Compute moving average of key statistics; confirm stability.

Protocol 2: Diagnosing and Addressing MCMC Non-Convergence

Objective: Ensure Markov Chain Monte Carlo (MCMC) sampling has converged to the target posterior distribution.

- Multi-Chain Setup: Run ≥ 4 independent MCMC chains from dispersed starting points.

- Burn-in & Thinning: Discard initial 50% of each chain as burn-in. Apply thinning to reduce autocorrelation.

- Calculate

\hat{R}: Compute the Gelman-Rubin diagnostic for all parameters.\hat{R> 1.05 indicates non-convergence. - Remediation: If non-convergent, increase adaption/burn-in period, adjust proposal distribution covariance, or reparameterize the model.

Protocol 3: Managing Model Run-Time Failures

Objective: Implement a robust workflow to capture, analyze, and mitigate simulation failures.

- Pre-Sampling Filter: Define plausible parameter bounds (e.g., based on physiological limits) to reject invalid samples prior to ODE solving.

- Robust Wrapper: Embed the model solver in a

try-catchblock. Log the failing parameter set and error type (e.g., "stiff ODE", "negative concentration"). - Post-Hoc Analysis: Compute failure rate. If >1%, analyze logs to identify failure clusters in parameter space.

- Model Adjustment: For unstable regions, consider adding small epsilon terms, implementing piecewise functions, or switching to an implicit ODE solver.

Visualization of Workflows and Relationships

Title: Monte Carlo Workflow with Run-Time Failure Logging

Title: MCMC Convergence Diagnostics and Remediation Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Robust MC Uncertainty Analysis

| Item / Software | Function in Mitigating Pitfalls | Example/Note |

|---|---|---|

| Probabilistic Programming Language (PPL) | Facilitates robust MCMC implementation, automatic convergence diagnostics, and Hamiltonian Monte Carlo. | Stan, PyMC3, Turing.jl. Provides built-in \hat{R and effective sample size (ESS). |

| High-Performance ODE Solver | Reduces run-time failures for stiff PK/PD/QSP models via adaptive implicit methods. | SUNDIALS CVODE, LSODA. Use via wrappers in R (deSolve) or Python (SciPy). |

| Parallel Computing Framework | Enables feasible large-N MC runs and independent multi-chain MCMC. | MPI, Python multiprocessing, R parallel, cloud computing clusters. |

| Containerization Platform | Ensures simulation reproducibility and environment stability across runs. | Docker, Singularity. Packages model code, solver, and dependencies. |

| Parameter Sampling & Screening Library | Provides advanced sampling methods (Sobol sequences) and pre-screening for stability. | SALib, ChaosPy. Generates efficient space-filling samples and performs sensitivity analysis. |

| Structured Logging Library | Systematically captures run-time errors and associated parameters for analysis. | Python logging, log4j. Outputs structured JSON logs for failed simulations. |

Within the broader thesis on Monte Carlo (MC) simulation for model uncertainty research in drug development, determining the optimal number of iterations is a fundamental challenge. Insufficient iterations yield imprecise estimates, undermining the reliability of uncertainty quantification, while excessive iterations waste computational resources and time. This application note provides a structured framework and experimental protocols to systematically determine this balance, focusing on pharmacometric and pharmacokinetic/pharmacodynamic (PK/PD) applications.

Foundational Theory & Data-Driven Benchmarks

The core principle is that the standard error (SE) of a mean estimate from MC simulation decreases proportionally to 1/√N, where N is the number of iterations. The required N depends on the desired precision and the inherent variability of the output metric.

Table 1: Relationship Between Iterations, Precision, and Cost for a Sample Metric

| Target Output Metric | Typical Coefficient of Variation (CV%) | Iterations (N) for ±5% SE of Mean | Iterations (N) for ±2% SE of Mean | Relative Computational Time* |

|---|---|---|---|---|

| PK Exposure (AUC) | 15-25% | 1,000 - 2,500 | 6,250 - 15,625 | 1x - 6.25x |

| PD Response (Emax) | 30-50% | 3,600 - 10,000 | 22,500 - 62,500 | 3.6x - 25x |

| Probability of Target Attainment (PTA) | N/A (Binary) | 10,000 (for PTA~90%) | 62,500 | 10x - 62.5x |

| Model Parameter Estimate | 10-40% | 400 - 6,400 | 2,500 - 40,000 | 0.4x - 16x |

*Time relative to the N for AUC at ±5% SE (baseline ~2 min). Actual times vary by model complexity.

Experimental Protocols for Iteration Determination

Protocol 1: Sequential Convergence Analysis

Objective: To determine N by monitoring the stability of key output statistics.

Materials: Population model (e.g., in NONMEM, R/mrgsolve, Simulx), defined uncertainty scenario (parameter distributions), high-performance computing (HPC) cluster or cloud instance.

Procedure:

- Run an initial pilot simulation with N=1,000.

- Calculate the mean and 95% confidence interval (CI) for each primary output (e.g., AUC, Cmax, PTA).

- Incrementally increase N by a factor (e.g., 2,000, 5,000, 10,000, 20,000...).

- Recalculate statistics after each increment.

- Stopping Criterion: Stop when the absolute relative change in the mean and the width of the 95% CI for all key outputs fall below pre-specified thresholds (e.g., <1% change in mean, <5% relative CI width) over two consecutive increments.

- Record the final N and the corresponding precision metrics.

Protocol 2: Pre-Specified Precision Method Objective: To calculate the required N a priori based on an acceptable margin of error. Materials: Pilot data (from Protocol 1 or historical runs), statistical software (R, Python). Procedure:

- From a pilot run (N_pilot ≥ 500), estimate the variance (σ²) for each critical output.

- Define the desired margin of error (d) for the mean (e.g., AUC ± 10% of its pilot value).

- Calculate the required N for each output using the formula: N = (Z * σ / d)², where Z is the Z-score for the desired confidence level (e.g., 1.96 for 95% CI).

- Select the maximum N across all outputs to ensure the strictest criterion is met.

- Execute the full simulation with this N and validate precision post-hoc.

Protocol 3: Cost-Benefit Optimization for Adaptive Trials Objective: To balance simulation precision with decision-making risk in adaptive trial design. Materials: Utility function defining "cost" of simulation vs. "cost" of inaccurate prediction, optimization algorithm. Procedure:

- Define a loss function: L(N) = ComputationalCost(N) + RiskofIncorrectDecision(N).

- Computational_Cost(N) is proportional to N (e.g., core-hours).

- RiskofIncorrect_Decision(N) is quantified as the expected loss from making a suboptimal trial design choice (e.g., wrong dose selection) due to MC error, estimated via pilot simulations.

- Use a numerical optimizer (e.g., in Python's

SciPy) to find the N that minimizes L(N). - This protocol is suited for high-stakes simulations where the consequence of error is quantifiable.

Visualization of Methodologies

Title: Protocol Selection Workflow for Determining N