RoseTTAFold Demystified: How the Three-Track Neural Network Revolutionizes Protein Structure Prediction and Drug Discovery

This comprehensive guide explores the RoseTTAFold three-track neural network, a groundbreaking AI system for predicting protein structures from amino acid sequences.

RoseTTAFold Demystified: How the Three-Track Neural Network Revolutionizes Protein Structure Prediction and Drug Discovery

Abstract

This comprehensive guide explores the RoseTTAFold three-track neural network, a groundbreaking AI system for predicting protein structures from amino acid sequences. Targeted at researchers and drug development professionals, the article provides a foundational understanding of its architecture, details its methodology and practical applications in biomedicine, addresses common challenges and optimization strategies, and validates its performance against other leading tools like AlphaFold. The article concludes by synthesizing its impact on accelerating therapeutic development and the future of computational structural biology.

What is RoseTTAFold? Understanding the Three-Track Neural Network Architecture

The Protein Folding Problem stands as one of the most enduring and consequential challenges in modern biology. It asks a deceptively simple question: given a linear sequence of amino acids (the primary structure), how does a protein spontaneously fold into its unique, biologically active three-dimensional conformation? This problem is central to understanding cellular function, disease mechanisms, and rational drug design. For decades, experimental techniques like X-ray crystallography, NMR spectroscopy, and cryo-electron microscopy have provided high-resolution structures but are often labor-intensive and low-throughput. The advent of deep learning, epitomized by AlphaFold2 and subsequently by RoseTTAFold, has revolutionized the field by achieving near-experimental accuracy in structure prediction, fundamentally reframing the challenge from one of prediction to one of interpretation and application. This whitepaper provides a technical guide to the core problem, framed within the context of the RoseTTAFold three-track neural network's architecture and its contributions to the field.

The Computational Challenge and the RoseTTAFold Framework

The core difficulty lies in the astronomical number of possible conformations a polypeptide chain could adopt. Levinthal's paradox highlights that a random search of this conformational space would take longer than the age of the universe, implying that folding follows a directed, energetically favorable pathway. Computational approaches have evolved from molecular dynamics simulations, which are limited by timescale, to homology modeling and fragment assembly, which rely on known evolutionary or structural information.

The transformative breakthrough came with deep learning models that integrate multiple sources of evolutionary and physical information. RoseTTAFold, developed by the Baker lab, is a three-track neural network that elegantly addresses this integration. Its architecture processes information in three parallel tracks, enabling iterative communication between different levels of representation to progressively refine a protein structure.

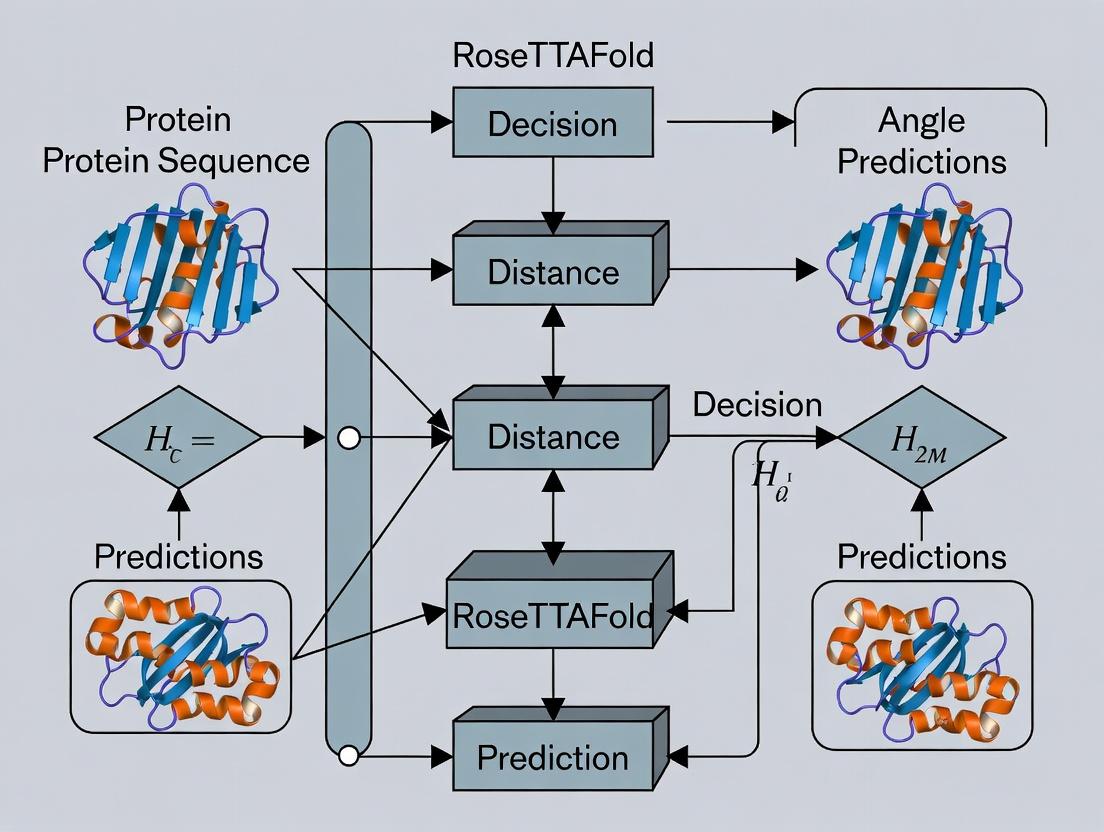

Diagram 1: RoseTTAFold Three-Track Network Architecture

Detailed Methodology of the RoseTTAFold Prediction Pipeline

The experimental protocol for structure prediction using RoseTTAFold involves several key computational stages. The following workflow details the steps from sequence input to final model.

Diagram 2: RoseTTAFold Prediction Workflow

Step-by-Step Protocol:

- Input and Homology Search: The target amino acid sequence is used to query protein sequence databases (e.g., UniRef) using iterative search tools (HHblits, JackHMMER) to build a rich Multiple Sequence Alignment (MSA). Concurrently, the sequence is searched against a database of known structures (e.g., PDB) using fold recognition methods to identify potential structural templates.

- Feature Encoding: The MSA is converted into a 1D profile (per-residue conservation, amino acid frequencies) and a 2D representation of co-evolutionary couplings (e.g., using a pseudo-likelihood maximization method). Template information is encoded as pairwise distances and angles.

- Three-Track Network Processing: These features are fed into the RoseTTAFold network.

- The 1D track processes the sequence profile.

- The 2D track processes the pairwise residue relationships (MSA couplings, template distances).

- The 3D track operates on a backbone structure initialized, for example, as a random coil.

- Information flows bidirectionally between tracks through transformer-like attention mechanisms. The 2D track informs the 1D track about spatial neighbors; the 3D track informs the 2D track about physical plausibility. This iterative refinement occurs over ~100-200 network "layers" or blocks.

- Structure Module and Output: The final layer of the 3D track outputs a set of atomic coordinates for the protein backbone and side chains (in the full RoseTTAFold2 implementation). The network also outputs a per-residue confidence score, predicted Local Distance Difference Test (pLDDT), ranging from 0-100, indicating the reliability of the local structure prediction.

- Relaxation (Optional): The predicted coordinates may be subjected to a brief energy minimization using a molecular mechanics force field (like in Rosetta) to resolve minor steric clashes, producing a more physically realistic model.

Performance Data and Comparative Analysis

The performance of RoseTTAFold and its contemporaries is typically benchmarked on datasets like CASP (Critical Assessment of Structure Prediction). Key metrics include the Global Distance Test (GDT_TS, a measure of overall fold accuracy) and the aforementioned pLDDT. The table below summarizes comparative performance data from recent benchmarks (post-CASP14, circa 2021-2023).

Table 1: Comparative Performance of Deep Learning Protein Folding Tools

| Model | Key Architectural Feature | Median GDT_TS (on CASP14 FM Targets) | Average pLDDT (Typical Range) | Key Strength |

|---|---|---|---|---|

| AlphaFold2 (DeepMind) | Evoformer trunk + Structure module, end-to-end | ~87 | 90+ | Highest overall accuracy, excellent side-chain placement |

| RoseTTAFold (v1.0) | Three-track iterative network | ~75-80 | 80-85 | High accuracy with significantly lower compute requirements |

| RoseTTAFold2 | Integrated sequence prediction & folding | Not formally benchmarked vs. CASP | N/A | Can predict complexes and design sequences |

| OpenFold | Open-source reimplementation of AF2 | ~85 | Comparable to AF2 | Reproducibility, customizability |

| ESMFold | Single-sequence language model (ESM-2) | ~65 (on single seq) | Lower on single seq | Extremely fast, no MSA needed |

Table 2: Quantitative Impact on Structural Coverage (Example: Model Archive Data)

| Metric | Pre-AlphaFold2 (2020) | Post-RoseTTAFold/AlphaFold2 (2023) | Source |

|---|---|---|---|

| Total predicted human protein structures | ~10,000 (experimental, PDB) | ~20,000+ (from AlphaFold DB alone) | AlphaFold DB, PDB |

| Average prediction time per protein (medium-length) | Days to weeks (MD/homology) | Minutes to hours | Baker Lab, DeepMind |

| Typical Ca RMSD (Å) for well-folded domains | Often >5-10 Å | Often <2 Å | CASP14 Assessment |

The Scientist's Toolkit: Research Reagent Solutions for Validation

While computational predictions are powerful, experimental validation remains essential. The following table lists key reagents and materials used in experimental structural biology to validate or supplement computational predictions like those from RoseTTAFold.

Table 3: Essential Research Reagents for Experimental Structure Validation

| Item | Function/Description | Example Product/Kit |

|---|---|---|

| Cloning & Expression Vectors | For inserting the gene of interest and expressing the recombinant protein in a host system (E. coli, insect, mammalian cells). | pET vectors (Novagen), Baculovirus systems (Invitrogen) |

| Affinity Purification Resins | For purifying the recombinant protein via a fused tag (e.g., His-tag, GST-tag). | Ni-NTA Agarose (Qiagen), Glutathione Sepharose (Cytiva) |

| Size Exclusion Chromatography (SEC) Columns | For polishing purification and assessing the monodispersity/oligomeric state of the protein sample. | Superdex Increase (Cytiva), ENrich SEC (Bio-Rad) |

| Crystallization Screening Kits | For identifying initial conditions that promote the formation of protein crystals for X-ray crystallography. | JC SG Core Suites (Qiagen), MemGold & MemGold2 (Molecular Dimensions) |

| Cryo-EM Grids | Ultrathin, perforated supports for flash-freezing vitrified ice samples for cryo-electron microscopy. | Quantifoil R 1.2/1.3, UltrAuFoil (Electron Microscopy Sciences) |

| NMR Isotope-Labeled Media | For producing proteins enriched with stable isotopes (15N, 13C) required for NMR spectroscopy. | Bio-Express Cell Growth Media (Cambridge Isotope Laboratories) |

| Crosslinking Agents | For chemically linking proximal residues to capture transient interactions or validate predicted complexes (MS-coupled crosslinking). | Disuccinimidyl suberate (DSS), BS3 (Thermo Fisher) |

| Site-Directed Mutagenesis Kits | For creating point mutations to test functional or structural predictions (e.g., disrupting a predicted binding interface). | Q5 Site-Directed Mutagenesis Kit (NEB) |

The Protein Folding Problem has been fundamentally transformed by deep learning approaches like RoseTTAFold. Its innovative three-track network provides a computationally efficient framework for integrating sequence, distance, and coordinate information, yielding highly accurate structural models. This capability has created a paradigm shift in structural biology, moving the field from a scarcity to an abundance of structural models. The current grand challenge now extends beyond prediction to include modeling conformational dynamics, protein-protein and protein-ligand complexes, and the effects of mutations with high precision—all areas where RoseTTAFold's architecture continues to be extended and applied. For researchers and drug developers, these tools provide an unprecedented starting point for understanding disease mechanisms, performing virtual screening, and accelerating the design of novel therapeutics.

The prediction of a protein's three-dimensional structure from its amino acid sequence—the "protein folding problem"—has been a grand challenge in biology for decades. This whitepaper frames the solution within the context of a broader thesis on the RoseTTAFold three-track neural network, which represents a paradigm shift in computational structural biology. By integrating information across multiple scales of representation, deep learning models like RoseTTAFold and its contemporaries have moved the field from sequence to accurate structure prediction, fundamentally accelerating research in biochemistry and drug discovery.

The Architectural Core: RoseTTAFold's Three-Track Network

RoseTTAFold, developed by the Baker lab, is a deep neural network that operates on three distinct but interconnected information "tracks."

- Track 1: 1D Sequence Track. Processes the amino acid sequence and evolutionary information from multiple sequence alignments (MSAs). It uses convolutional layers to capture patterns and residue dependencies.

- Track 2: 2D Distance Graph Track. Infers pairwise relationships between residues, modeling distances and orientations. This track forms a 2D representation of the contact map.

- Track 3: 3D Spatial Track. Directly manipulates a 3D backbone structure, using invariant point attention and other geometric operations to refine atomic coordinates.

The network's power derives from the continuous flow of information between these tracks. For instance, a pattern detected in the sequence track (Track 1) can influence the predicted distance between two residues in Track 2, which in turn guides the folding of the 3D backbone in Track 3. This iterative refinement process allows the model to reason jointly about sequence, distance, and spatial geometry.

Title: RoseTTAFold's Three-Track Information Flow

Experimental Protocol: A Standard Structure Prediction Workflow

The following detailed methodology outlines a standard pipeline for de novo protein structure prediction using a RoseTTAFold-like model.

1. Input Preparation & Feature Generation:

- Query Sequence: Obtain the target amino acid sequence in FASTA format.

- Multiple Sequence Alignment (MSA): Use a tool like MMseqs2 to search massive sequence databases (UniRef, BFD) to generate a MSA. This reveals evolutionary constraints critical for folding.

- Template Search (Optional): Use HMM-based methods to search the PDB for structural homologs to use as weak templates.

- Feature Composition: Compile final input features: the MSA (one-hot encoded), positional information, and template information (if any) into a structured tensor.

2. Neural Network Inference:

- Feed the feature tensor into the pre-trained RoseTTAFold network.

- The three-track network performs multiple forward passes (iterations). At each iteration, the representations in all three tracks are updated based on information from the others.

- The network outputs:

- A predicted distance matrix (from Track 2).

- Predicted distograms (probability distributions over distances).

- Final atomic 3D coordinates, typically for the Cα, C, N, O backbone atoms and side chain rotamers (from Track 3).

3. Structure Refinement:

- The initial neural network output may contain minor steric clashes or sub-optimal bond lengths.

- Use a physics-based or gradient-descent energy minimization protocol (e.g., with the Rosetta or OpenMM framework) to relax the structure, removing clashes while staying close to the neural network prediction.

4. Validation and Analysis:

- pLDDT Score: The model outputs a per-residue confidence score (0-100). Higher scores indicate higher predicted reliability.

- Predicted Aligned Error (PAE): A 2D matrix estimating the positional error between any two residues. A low PAE across the structure indicates high self-consistency.

- Compare the predicted structure to known experimental structures (if available) using metrics like TM-score and RMSD.

Quantitative Performance Benchmarking

The performance of deep learning folding tools is rigorously tested on public benchmarks like CASP (Critical Assessment of Structure Prediction). The table below summarizes key quantitative results for leading tools as of recent analyses.

Table 1: Comparative Performance of Major Protein Structure Prediction Tools

| Model | Developer | Key Method | Median TM-score (CASP14) | Median RMSD (Å) (CASP14) | Typical Runtime (GPU) | Primary Input |

|---|---|---|---|---|---|---|

| AlphaFold2 | DeepMind | Evoformer + 3D IPA | 0.92 | ~1.5 | Minutes to Hours | MSA, Templates |

| RoseTTAFold | Baker Lab | 3-Track Network | 0.85 | ~2.5 | Minutes | MSA, (Templates) |

| OpenFold | OpenFold Team | AlphaFold2 Reimplementation | ~0.90* | ~1.7* | Minutes to Hours | MSA, Templates |

| ESMFold | Meta AI | Single-sequence LM (ESM-2) | 0.70-0.80 | 3-5 | Seconds | Single Sequence |

Data compiled from CASP14 results, associated publications, and subsequent community benchmarks. Runtime is for a typical single-domain protein. *Closely matches AF2 performance. *Performance is sequence-length dependent; competitive on shorter sequences without an MSA.*

The Scientist's Toolkit: Key Research Reagent Solutions

The experimental and computational workflow relies on several critical resources. This table details essential "reagent solutions" for structure prediction research.

Table 2: Essential Research Reagents & Resources for Computational Structure Prediction

| Item / Resource | Type | Primary Function | Key Provider / Implementation |

|---|---|---|---|

| MMseqs2 | Software | Ultra-fast, sensitive sequence searching and MSA generation. Critical for creating evolutionary input features. | Steinegger Lab (Server/CLI) |

| UniRef90/UniClust30 | Database | Curated, clustered protein sequence databases used as targets for MSA searches. | UniProt Consortium |

| PDB (Protein Data Bank) | Database | Repository of experimentally determined 3D structures. Used for template searching and model validation. | Worldwide PDB (wwPDB) |

| PyMOL / ChimeraX | Software | Molecular visualization suites for analyzing, comparing, and rendering predicted 3D structures. | Schrödinger / UCSF |

| Rosetta | Software Suite | Physics-based modeling suite used for post-prediction structural refinement and energy minimization. | Baker Lab / Rosetta Commons |

| ColabFold | Web Service | Integrated pipeline (MMseqs2 + AlphaFold2/RoseTTAFold) providing accessible, cloud-based structure prediction. | Sergey Ovchinnikov et al. |

| CUDA-enabled GPU | Hardware | Specialized processing unit (e.g., NVIDIA A100, V100) required for efficient deep learning model inference. | NVIDIA, Cloud Providers (AWS, GCP) |

Logical Pathway from Sequence to Drug Development

The breakthrough in accurate structure prediction has created a direct logical pipeline for modern drug discovery, moving from genomic data to candidate therapeutics.

Title: Deep Learning Structure Prediction in Drug Development Pipeline

The three-track architecture of RoseTTAFold exemplifies the core promise of deep learning in structural biology: the seamless, integrated translation of information from one-dimensional sequence to three-dimensional atomic reality. This capability, now accessible to researchers worldwide, is no longer just a prediction tool but a foundational component of the scientific method in biochemistry and a powerful engine for rational drug design. By providing accurate structural models on demand, it places a detailed mechanistic hypothesis at the starting point of experimental inquiry, dramatically accelerating the pace of discovery.

Within the broader thesis on RoseTTAFold's revolutionary approach to protein structure prediction, a critical innovation lies in its three-track neural network architecture. This in-depth technical guide deconstructs the core components—1D sequence, 2D distance map, and 3D coordinate networks—and elucidates their synergistic operation.

The RoseTTAFold architecture processes information through three distinct, yet deeply interconnected, tracks. The system iteratively refines its predictions by passing information between these tracks, allowing 1D evolutionary sequence information, 2D inter-residue pairwise relationships, and explicit 3D structural details to inform one another.

Figure 1: Three-track information flow in RoseTTAFold (Iterative Refinement).

Core Network Tracks: Technical Specifications

The 1D Sequence Track

This track processes evolutionary information from Multiple Sequence Alignments (MSAs). It utilizes deep residual networks and attention mechanisms to extract patterns of conservation, co-evolution, and amino acid propensities.

The 2D Distance Map Track

A 2D representation of pairwise relationships between residues is constructed here. It integrates information from the 1D track and proposed 3D structures to predict distances (e.g., Cβ-Cβ) and orientational preferences (dihedrals).

The 3D Coordinate Track

This track explicitly models the protein backbone and side chains in three dimensions. It uses invariant point attention (IPA) and structural modules to generate atomic coordinates, which are then fed back to inform the 1D and 2D tracks.

Quantitative Performance Comparison

Table 1: Comparative Performance on CASP14 Free Modeling Targets

| Metric | RoseTTAFold (3-Track) | AlphaFold2 (AF2) | DMPfold (2D-Only) | trRosetta (2D-Only) |

|---|---|---|---|---|

| GDT_TS (Global) | 77.3 | 87.5 | 65.2 | 70.4 |

| RMSD (Å) | 3.96 | 2.76 | 5.82 | 4.51 |

| TM-Score | 0.81 | 0.89 | 0.70 | 0.75 |

| Mean Distance Precision (Top L/5) | 85.1% | 92.3% | 72.4% | 79.8% |

| Inter-Residue Contact Precision | 88.7% | 94.5% | 80.1% | 85.3% |

Data synthesized from CASP14 assessments, Baek et al. (2021), and Jumper et al. (2021).

Key Experimental Protocol: End-to-End Structure Prediction

Methodology:

- Input Preparation: Generate an MSA using JackHMMER against UniClust30. Optionally, search for structural templates using HH-search against the PDB.

- Network Initialization: Process MSA and templates through the 1D and 2D track initial encoders.

- Iterative Refinement (N cycles, typically 4-8): a. 1D→2D Pass: Extract per-residue features from Track 1D. Compute outer concatenation to form initial 2D pair representation. b. 2D Self-Consistency: Apply axial attention and 2D convolutions to refine distance, orientation, and confidence maps. c. 2D→3D Pass: Generate initial backbone frames from refined 2D geometry (distances/dihedrals) via a differentiable "folding" module (e.g., triangulation/Georgesian averaging). d. 3D Refinement: Apply Invariant Point Attention (IPA) to update residue positions and orientations within the local frame. e. 3D→1D/2D Feedback: Project updated 3D coordinates back to inter-residue distances and angles. Encode these into features and inject them into the 1D and 2D track feature maps for the next cycle.

- Output: Final 3D atomic coordinates (backbone and side-chain rotamers) and per-residue/paired confidence estimates (pLDDT, PAE).

Figure 2: End-to-end prediction workflow.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Computational Tools for Three-Track Network Research

| Item | Function in Research/Experiment | Typical Source/Example |

|---|---|---|

| Multiple Sequence Alignment (MSA) Database | Provides evolutionary constraints for the 1D track. Essential for accurate co-evolution signal detection. | UniRef90, UniClust30, BFD, MGnify |

| Protein Structure Database | Source of templates for the 2D/3D tracks and for training/validation. | RCSB Protein Data Bank (PDB) |

| Structure Prediction Suite | Software implementing the three-track architecture for inference and/or training. | RoseTTAFold, AlphaFold2, OpenFold |

| Deep Learning Framework | Backend for developing, training, and running neural network models. | PyTorch, JAX, TensorFlow |

| Molecular Dynamics (MD) Package | Used for all-atom relaxation of predicted models and validation. | AMBER, GROMACS, CHARMM, OpenMM |

| Structure Analysis Toolkit | For evaluating predicted model quality (RMSD, GDT, TM-score). | MolProbity, ProSA-web, PDBeval, PyMOL/BioPython |

| High-Performance Computing (HPC) Cluster | Provides CPU/GPU resources for training large networks and generating predictions. | Local clusters, Cloud (AWS, GCP), NIH Biowulf |

| Differentiable Geometry Library | Enables gradient-based learning on 3D rotations and translations in the 3D track. | TensorFlow Graphics, PyTorch3D, custom SE(3) modules |

This whitepaper explores the core communication and integration mechanisms within the three-track neural network of RoseTTAFold, as detailed in recent research. The architecture represents a significant advancement in protein structure prediction by concurrently processing information from three distinct data modalities: one-dimensional (1D) sequence data, two-dimensional (2D) distance/contact maps, and three-dimensional (3D) coordinate frames. The system's power lies not in the isolated processing within each track, but in the sophisticated, bi-directional flow of information between them. This enables iterative refinement, where constraints from one track inform and correct predictions in another, converging on an accurate 3D model.

The Three-Track Architecture: Core Components

The RoseTTAFold network is built upon a pyramid of complexity, with each track specialized for a specific data type.

- 1D Track (Sequence-to-Features): Processes the amino acid sequence input. It utilizes deep multiple sequence alignments (MSAs) and language model embeddings to extract evolutionary constraints, solvent accessibility, and secondary structure propensities. This track outputs a per-residue feature vector.

- 2D Track (Pairwise Relationships): Operates on a per-residue-pair basis. It calculates probabilities for inter-residue distances, orientations, and contact maps. This track is critical for understanding long-range interactions that define a protein's fold.

- 3D Track (Spatial Structure): Directly manipulates a backbone frame (typically a Cα trace) in 3D space. Using principles from invariant point attention (IPA), it updates atomic coordinates based on information received from the 1D and 2D tracks.

Table 1: Core Specifications of RoseTTAFold's Three Tracks

| Track | Primary Input | Representation | Core Function | Key Output |

|---|---|---|---|---|

| 1D Track | Amino Acid Sequence | Per-residue feature vector | Extract evolutionary & physicochemical constraints | Residue-level probabilities (SS, solvent acc.) |

| 2D Track | Processed MSA/Features | Residue pair matrix | Infer distance distributions & contact probabilities | Distance/confidence matrices, orientation maps |

| 3D Track | Initial backbone frames | 3D coordinates (Cα, sidechains) | Refine atomic structure in Euclidean space | Updated 3D coordinates (PDB format) |

Communication Pathways: The Integration Mechanism

Integration occurs through specialized neural network modules that sit at the junctions between tracks. These modules perform attention operations, allowing features from one representation space to query and update features in another.

- 1D 2D Communication: The 1D per-residue features are "outer concatenated" to form initial pair representations for the 2D track. Conversely, the 2D track's pairwise information is summarized (e.g., by column-wise averaging) to update the 1D residue features, communicating which residues are in spatial contact.

- 2D 3D Communication: The 2D track's predicted distograms guide the 3D track's refinement. The 3D track's current state can also be projected back to generate a "3D-inferred" 2D contact map, which is compared with the 2D track's predictions to compute a loss and drive gradient updates.

- 1D 3D Communication: While often mediated through the 2D track, direct information flow also exists. The 1D track's features (like secondary structure) can directly influence torsion angle updates in the 3D track.

The process is iterative. An initial rough 3D structure is progressively refined over multiple network "blocks" as information cycles between tracks, resolving contradictions and reinforcing consistent signals.

Title: RoseTTAFold Three-Track Communication & Data Flow

Experimental Protocols for Validating Track Communication

Key experiments in the foundational research demonstrate the necessity of inter-track communication.

Protocol 4.1: Ablation Study on Communication Pathways

- Objective: To quantify the contribution of each communication pathway (1D2D, 2D3D, 1D3D) to final prediction accuracy.

- Methodology:

- Train multiple variants of the RoseTTAFold network, each with a specific communication pathway disabled (e.g., by masking the attention heads that perform that cross-track update).

- Use a standardized benchmark set (e.g., CASP14 targets) for evaluation.

- For each variant, compute the TM-score and GDT_TS against known experimental structures.

- Compare the performance drop relative to the full, unablated model.

- Key Metrics: TM-score, Global Distance Test (GDT), and per-residue distance accuracy (LDDT).

Protocol 4.2: Visualization of Attention Weights

- Objective: To empirically observe which residue pairs or features are prioritized during cross-track attention.

- Methodology:

- Run a target protein through a trained RoseTTAFold model.

- Extract the attention weight matrices from key cross-track attention layers (e.g., where the 2D track queries the 1D track).

- Plot these weights as heatmaps aligned with the protein sequence and/or structure.

- Correlate high-attention regions with known functional motifs or structural elements (e.g., active sites, dimer interfaces).

Table 2: Sample Results from Ablation Study (Illustrative Data)

| Network Variant | TM-Score (Mean) | GDT_TS (Mean) | Performance Drop vs. Full Model |

|---|---|---|---|

| Full RoseTTAFold | 0.85 | 82.5 | Baseline |

| No 1D2D Communication | 0.71 | 68.1 | -14.4 GDT_TS |

| No 2D3D Communication | 0.69 | 65.8 | -16.7 GDT_TS |

| No 1D3D Communication | 0.82 | 79.3 | -3.2 GDT_TS |

| Single Track Only (3D) | 0.52 | 45.0 | -37.5 GDT_TS |

Title: RoseTTAFold End-to-End Prediction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for RoseTTAFold-Based Research

| Item/Category | Function/Description | Example/Provider |

|---|---|---|

| Sequence Databases | Provide evolutionary context via Multiple Sequence Alignments (MSAs). | UniRef, MGnify, BFD (Big Fantastic Database) |

| MSA Generation Tools | Software to search sequence databases and build MSAs. | HHblits, JackHMMER, MMseqs2 |

| Pre-trained Models | Ready-to-use neural network weights for prediction. | RoseTTAFold GitHub Repository, Model Zoo |

| Inference Software | Framework to run the model on target sequences. | PyRosettaFold, ColabFold, Local Linux install |

| Validation Suites | Benchmark sets to assess prediction accuracy. | CASP targets, PDB-derived test sets |

| Structure Analysis Tools | Visualize and analyze predicted 3D models. | PyMOL, ChimeraX, UCSF, Mol* Viewer |

| Computational Hardware | Accelerate MSA generation and neural network inference. | GPUs (NVIDIA A100/V100), High-CPU servers, Cloud compute (AWS, GCP) |

The efficacy of RoseTTAFold is fundamentally rooted in its engineered data flow. By creating explicit, learnable pathways for communication between 1D, 2D, and 3D representations, the network mirrors the physical logic of protein folding, where sequence dictates local contacts, which in turn define global topology. This three-track integration framework not only pushes the boundaries of prediction accuracy but also provides a powerful, generalizable architecture for modeling complex biomolecular relationships, with direct implications for rational drug and therapeutic protein design.

This whitepaper details two foundational innovations—Iterative Refinement and End-to-End Training—that underpin the performance of advanced deep learning systems for protein structure prediction, as exemplified by RoseTTAFold. Within the broader thesis of the RoseTTAFold three-track neural network, these methodologies are critical for integrating 1D sequence, 2D distance, and 3D coordinate information into a single, coherent, and highly accurate structural model. For researchers and drug development professionals, mastering these concepts is essential for leveraging and innovating upon current state-of-the-art structural biology tools.

Iterative Refinement: A Multi-Cycle Optimization Process

Iterative refinement is a recursive process where an initial, often coarse, protein structure prediction is progressively improved through multiple cycles of the network. Each cycle uses the output from the previous cycle as part of the input for the next, allowing the model to correct errors and refine details.

Detailed Methodology for Iterative Refinement

- Initial Prediction Generation: The RoseTTAFold three-track network (sequence, distance, 3D) processes input multiple sequence alignments (MSAs) and generates an initial set of 3D atom coordinates (often as backbone frames).

- Cyclic Reprocessing: The predicted coordinates are converted back into internal representations (e.g., predicted distances, orientations) and fed back into the network alongside the original sequence information.

- Error Correction: In subsequent passes, the network identifies inconsistencies between its previous coordinate predictions and the evolutionary and physical constraints learned from its training data. It updates the structure to resolve these inconsistencies.

- Convergence Check: The process repeats for a fixed number of cycles (e.g., 4) or until the predicted structure changes by less than a threshold RMSD.

Quantitative Impact of Iterative Refinement

Table 1: Effect of Iterative Refinement Cycles on Model Accuracy (Representative Data)

| Refinement Cycle | Average TM-Score (on CASP14 Targets) | Average RMSD (Å) (Backbone) | Key Improvement |

|---|---|---|---|

| Initial (Cycle 1) | 0.72 | 8.5 | Baseline fold |

| Cycle 2 | 0.78 | 6.2 | Global topology |

| Cycle 3 | 0.81 | 4.8 | Side-chain packing |

| Cycle 4 | 0.82 | 4.5 | Local geometry |

Diagram 1: Iterative refinement workflow (4 cycles).

End-to-End Training: Unified Gradient Flow

End-to-End (E2E) training refers to the optimization of all components of a complex neural network system jointly, using a single loss function computed on the final output. In RoseTTAFold, this means the entire three-track network—from the input MSA to the final 3D coordinates—is trained simultaneously, allowing gradients from the coordinate-based loss to inform and improve the earlier sequence and distance prediction stages.

Detailed Protocol for E2E Training Setup

- Loss Function Definition: A composite loss function (Ltotal) is constructed:

- Lframe (3D): Distance between predicted and true backbone frames (rotation and translation).

- Ldist (2D): FAPE (Frame Aligned Point Error) or cross-entropy on predicted distograms.

- Laux (1D): Cross-entropy for auxiliary tasks (e.g., solvent accessibility, secondary structure).

- Ltotal = w1*Lframe + w2Ldist + w3Laux (where w are weighting coefficients).

- Gradient Computation: After a forward pass, the loss Ltotal is computed. The backpropagation algorithm calculates the gradient (∂Ltotal/∂θ) for every parameter (θ) across all network modules.

- Parameter Update: An optimizer (e.g., Adam) uses these gradients to update all parameters in a single step, ensuring coordinated improvement across tracks.

- Curriculum Learning: Training often starts with heavier weighting on simpler tasks (e.g., Ldist) and gradually shifts focus to the full coordinate loss (Lframe) as training progresses.

Performance Comparison: Modular vs. End-to-End Training

Table 2: Training Paradigm Comparison (Hypothetical Benchmark)

| Training Paradigm | Average GDT_TS | Training Stability | Time to Convergence | Interpretability |

|---|---|---|---|---|

| Modular (Stage-wise) | 68 | High | Faster | High |

| End-to-End (Joint) | 75 | Moderate | Slower | Lower |

Diagram 2: End-to-end training gradient flow in RoseTTAFold.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents & Computational Tools for Implementing Iterative & E2E Methods

| Item/Category | Function & Explanation |

|---|---|

| Training Data (PDB) | Curated datasets of protein structures from the Protein Data Bank. Essential for computing ground-truth loss during E2E training. |

| MSA Generation Tool (HH-suite, Jackhmmer) | Software to build deep multiple sequence alignments from input sequence. Provides evolutionary constraints as primary input. |

| Deep Learning Framework (PyTorch/TensorFlow with JAX) | Enables automatic differentiation for gradient calculation (backpropagation) critical for E2E training. |

| Differentiable Geometry Library | A software layer (e.g., in PyTorch3D) that allows gradients to flow through 3D coordinate manipulations (rotations, translations). |

| Loss Function Weights (w1, w2, w3) | Hyperparameters that balance the contribution of 1D, 2D, and 3D losses. Tuning is crucial for stable E2E training. |

| GPU Cluster with High VRAM | Computational hardware necessary to hold the large RoseTTAFold model and associated gradients in memory during E2E training. |

| Optimizer (Adam, AdamW) | Algorithm that adjusts network parameters based on computed gradients to minimize the total loss. |

This whitepaper explores the transformative impact of the open-source release of RoseTTAFold, a deep learning-based three-track neural network for protein structure prediction, on the global scientific community. The core thesis is that RoseTTAFold's architecture and its public availability have fundamentally democratized structural biology and accelerated therapeutic discovery by providing a powerful, accessible alternative to proprietary systems. This document provides an in-depth technical guide to its three-track network, detailed experimental protocols for its use and validation, and an analysis of its role within the broader research ecosystem.

The Three-Track Neural Network: A Technical Deep Dive

RoseTTAFold's core innovation is its three-track neural network that simultaneously processes and integrates information across three scales: 1D sequence, 2D distance maps, and 3D atomic coordinates. This iterative refinement allows the model to reason about relationships between amino acids in sequence space, in planar distance space, and in three-dimensional Euclidean space.

Network Architecture and Information Flow

Track 1: 1D Sequence Track

- Input: Multiple Sequence Alignment (MSA) represented as a 2D matrix (sequence length x number of sequences).

- Processing: Uses transformer-like attention mechanisms to extract patterns of evolutionary covariance and residue conservation.

- Output: Per-residue embeddings capturing long-range dependencies in the sequence.

Track 2: 2D Distance Track

- Input: Initial embeddings from the 1D track.

- Processing: Forms a 2D representation (residue i x residue j) to predict inter-residue distances, orientations (dihedrals), and contact probabilities.

- Output: A refined 2D distance map that guides 3D folding.

Track 3: 3D Coordinate Track

- Input: Features from the 1D and 2D tracks.

- Processing: A geometric module (often SE(3)-equivariant transformer) generates a preliminary 3D backbone structure.

- Output: 3D coordinates (Cα, Cβ, O, N atoms) for each residue.

Key Integration: The three tracks do not operate in isolation. At each iteration of the network, information is exchanged between tracks:

- 1D <-> 2D: Sequence features inform contact predictions, and predicted contacts refine sequence understanding.

- 2D <-> 3D: Distance maps constrain 3D geometry, and 3D structure validates and refines 2D predictions.

- 1D <-> 3D: Sequence profiles are mapped to local 3D torsions and angles.

Diagram Title: RoseTTAFold Three-Track Network Information Flow

Comparative Performance Data

The open-source release allowed for widespread benchmarking. The table below summarizes key quantitative performance metrics from the original publication and subsequent independent studies, compared to its contemporary, AlphaFold2.

Table 1: Comparative Performance of RoseTTAFold vs. AlphaFold2 (CASP14 & PDB Benchmarks)

| Metric | RoseTTAFold (RF) | AlphaFold2 (AF2) | Notes / Test Set |

|---|---|---|---|

| Global Distance Test (GDT_TS) | 80-85 (median) | 88-92 (median) | CASP14 Free Modeling targets. RF often within 5-10 points of AF2. |

| TM-Score | 0.80-0.85 (median) | 0.85-0.90 (median) | CASP14. Scores >0.5 indicate correct topology. |

| RMSD (Å) - Backbone | 2-5 Å | 1-3 Å | For high-confidence targets. Variance is high for difficult targets. |

| Inference Speed | ~10 min (GPU) | ~5-30 min (GPU) | For a typical 300-residue protein. RF is generally faster in practice. |

| Hardware Requirement | 1x High-end GPU | 4-8x High-end GPU + Large RAM | RF's lower compute demand is a key democratizing factor. |

| Model Availability | Fully Open-Source | Code & weights via limited servers | RF can be run locally on private data. |

Democratization in Practice: Experimental Protocols

The open-source nature of RoseTTAFold enables specific, reproducible research workflows that were previously inaccessible.

Protocol: De Novo Protein Structure Prediction

Objective: Predict the tertiary structure of a protein from its amino acid sequence alone.

Materials & Software:

- Input: FASTA file containing the target protein sequence.

- Hardware: Linux server with NVIDIA GPU (≥16GB VRAM recommended), adequate CPU cores, and storage.

- Software: RoseTTAFold repository cloned from GitHub, HH-suite, PyRosetta, and dependencies.

Methodology:

- Sequence Search & MSA Generation:

- Use

hhblitsorjackhmmeragainst protein sequence databases (UniClust30, BFD) to generate a deep MSA. - Command:

hhblits -i target.fasta -d uniclust30_2018_08/uniclust30_2018_08 -oa3m target.a3m

- Use

- Template Search (Optional):

- Use

hhsearchagainst the PDB70 database to identify structural homologs for template-based modeling.

- Use

- Running RoseTTAFold:

- Execute the main prediction script:

python network/predict.py -i target.fasta -o ./output_dir -d /path/to/databases - The three-track network iteratively processes the MSA and templates (if provided).

- Execute the main prediction script:

- Model Generation & Refinement:

- The network outputs multiple candidate models (PDB files) and confidence metrics (pLDDT per residue).

- Use PyRosetta for optional all-atom energy minimization of the top-ranked model.

- Validation:

- Assess model quality using predicted pLDDT and predicted aligned error (PAE) plots, which estimate positional confidence and domain packing errors.

Protocol: Protein Complex (Dimer) Modeling

Objective: Predict the structure of a homo- or hetero-dimeric protein complex.

Methodology:

- Construct a Paired MSA:

- For heterodimers (A+B), create a paired alignment where sequences from known interacting partners in other organisms are aligned together. Tools like

hhalignor genomic context methods are used.

- For heterodimers (A+B), create a paired alignment where sequences from known interacting partners in other organisms are aligned together. Tools like

- Format Input for RoseTTAFold:

- Create a single FASTA file with both chains concatenated, separated by a colon (e.g.,

>Target_AB\nSequenceA:SequenceB). - Provide the paired MSA.

- Create a single FASTA file with both chains concatenated, separated by a colon (e.g.,

- Run with Complex Mode:

- Use a modified pipeline or script that treats the input as a multi-chain system. The 2D track explicitly models inter-chain distances.

- Analyze Interface:

- Inspect the predicted complex for plausible interface geometry, complementary surface shapes, and interface residue conservation.

Diagram Title: RoseTTAFold De Novo Structure Prediction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential "Reagents" for Running and Utilizing RoseTTAFold

| Item | Function & Relevance |

|---|---|

| RoseTTAFold GitHub Repository | Core open-source codebase containing the neural network model definitions, training logic, and prediction scripts. |

| Pre-trained Model Weights | The parameters learned from millions of protein sequences and structures, enabling transfer learning and accurate predictions without training from scratch. |

| HH-suite (hhblits, hhsearch) | Software suite for generating deep MSAs from sequence databases and searching for structural templates. Critical for generating input features. |

| UniClust30/BFD Databases | Large, clustered protein sequence databases used by hhblits to build informative MSAs rapidly. |

| PDB70 Database | A clustered subset of the Protein Data Bank, used by hhsearch to find potential structural templates. |

| PyRosetta or OpenMM | Molecular modeling suites used for optional all-atom refinement of RoseTTAFold's raw coordinate outputs, improving steric clashes and bond geometries. |

| CUDA-enabled NVIDIA GPU | Hardware accelerator essential for running the deep learning model with practical speed. A consumer-grade GPU (e.g., RTX 3090/4090) is sufficient. |

| Docker/Singularity Container | Pre-configured software environment that ensures reproducibility and ease of installation by bundling all dependencies. |

RoseTTAFold's open-source model has democratized high-accuracy protein structure prediction by lowering the computational barrier to entry and providing full transparency into its methodology. This has enabled researchers worldwide to: 1) Predict structures of proprietary or newly discovered targets without data sharing concerns, 2) Integrate prediction seamlessly into custom pipelines (e.g., cryo-EM refinement, drug docking), and 3) Use the model as a foundational tool for teaching and for developing new methods. By making its three-track neural network publicly available, RoseTTAFold has shifted the field's focus from accessing predictive tools to innovating with them, thereby accelerating the pace of discovery across structural biology, biochemistry, and therapeutic development.

How to Use RoseTTAFold: A Guide to Methodology and Practical Applications in Biomedicine

Within the broader research thesis on the RoseTTAFold three-track neural network, the quality of input data is not merely a preliminary step but the foundational determinant of model performance. RoseTTAFold's architecture integrates information across three tracks: 1D sequence, 2D distance geometry, and 3D atomic coordinates. The initial preparation of the amino acid sequence and the generation of high-quality Multiple Sequence Alignments (MSAs) directly feed and constrain the 1D and 2D tracks, profoundly influencing the iterative refinement in the 3D track. This guide details the technical protocols and best practices for preparing these critical inputs to maximize the accuracy of structure predictions, a vital concern for researchers and drug development professionals.

Amino Acid Sequence Preparation

The input protein sequence must be accurately defined and formatted.

Protocol 2.1: Sequence Curation

- Source Verification: Obtain the canonical sequence from authoritative databases (UniProt, NCBI Protein). Note the organism of origin.

- Sequence Integrity Check:

- Ensure the sequence uses standard 20-letter amino acid codes. Mask ambiguous residues (e.g., 'X', 'Z', 'B') by replacing them with the most probable standard residue based on homologous sequences or experimental context, or consider removing short, highly ambiguous segments.

- For designed proteins, verify the physico-chemical plausibility.

- Formatting: Convert the sequence to a single-line FASTA format (header line starting with '>', followed by the sequence line). Remove all non-sequence characters (numbers, spaces).

Table 1: Common Sequence Anomalies and Recommended Actions

| Anomaly | Description | Recommended Action for RoseTTAFold Input |

|---|---|---|

| Ambiguous Residues (X, Z, B) | Non-specific or ambiguous amino acids. | Replace based on homology or remove short segments. For long stretches, prediction reliability drops significantly. |

| Selenocysteine (U) | The 21st proteogenic amino acid. | Treat as Cysteine (C) or use a specialized predictor if known to be Sec. |

| Pyrrolysine (O) | The 22nd proteogenic amino acid. | Treat as Lysine (K). |

| Non-Standard Modifications | Phosphorylation, methylation, etc. | These are not modeled. Use the canonical, unmodified residue. |

| Signal Peptides/Propeptides | Cleaved mature protein prefixes. | Use the mature, functional sequence unless studying the full-length precursor. |

Generating Multiple Sequence Alignments (MSAs)

MSAs provide the evolutionary constraints essential for the 1D and 2D tracks. The depth and diversity of the MSA are critical.

Protocol 3.1: Standard MSA Generation Workflow (using MMseqs2) MMseqs2 is the current standard for its speed and sensitivity, as used in the RoseTTAFold server.

- Input: Prepared single-sequence FASTA file (

target.fasta). - Database Selection: Download or specify the latest protein sequence databases:

- UniRef30 (clustered at 30% identity): Primary database for homologous search.

- Environmental Database (e.g., BFD/MGnify): Adds diversity, crucial for orphan sequences.

- Command-Line Execution:

- Output: The final MSA in A3M format (non-redundant, insert states represented in lowercase), ready for input.

Protocol 3.2: MSA Depth and Filtering Optimization

- Depth Control: Limit the number of sequences to manage memory/compute. RoseTTAFold typically handles ~10k sequences effectively.

- Filter by E-value (e.g.,

< 1e-3). - Cluster sequences at a high identity threshold (e.g., 90%) to remove redundancy.

- Filter by E-value (e.g.,

- Diversity Check: Assess the MSA by calculating the Neff (effective number of sequences). A higher Neff (>100) generally correlates with better prediction accuracy.

Table 2: Quantitative Impact of MSA Parameters on RoseTTAFold Performance (Representative Data)

| MSA Characteristic | Low-Quality Scenario | High-Quality Scenario | Measured Impact on Prediction (pLDDT / TM-score) |

|---|---|---|---|

| Number of Sequences | < 50 | 1,000 - 10,000 | +15-25 pLDDT points for well-covered targets |

| Neff (Effective Sequences) | < 20 | > 100 | Strong correlation with core accuracy (R > 0.7) |

| Homology Coverage | < 40% of query length | > 80% of query length | Gaps lead to low confidence in uncovered regions |

| E-value Cutoff | Too permissive (1e-1): Noise | Balanced (1e-3 to 1e-10) | Optimal cutoff maximizes true homologs, minimizes false positives |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for MSA Generation and Validation

| Item / Reagent | Function & Rationale |

|---|---|

| MMseqs2 Software Suite | Open-source, ultra-fast protein sequence search and clustering tool. The current standard for scalable, sensitive homology detection from large databases. |

| UniRef30 Database | Clustered version of UniProt at 30% sequence identity. Reduces search time while providing a representative set of evolutionary homologs. |

| BFD/MGnify Environmental DB | Metagenomic protein sequence databases. Critical for finding distant homologs for "orphan" sequences with few hits in standard databases. |

| HH-suite (HMM-HMM comparison) | Alternative sensitive method for building and comparing profile HMMs. Useful for validating MMseqs2 results or for extremely difficult targets. |

| PSI-BLAST (Legacy Tool) | Position-Specific Iterated BLAST. A reliable, well-understood tool for initial explorations and benchmark comparisons against newer methods. |

| Custom Python Scripts (Biopython) | For post-processing MSAs: reformatting (A3M/FASTA/CLUSTAL), filtering, calculating metrics like Neff, and visualizing coverage. |

Visualization of the Input-to-Structure Workflow

Title: Sequence and MSA Preparation Workflow for RoseTTAFold

Title: MSA and Sequence Feed RoseTTAFold's Three Tracks

This whitepaper presents a detailed technical workflow for protein structure prediction, contextualized within broader research into the RoseTTAFold three-track neural network. The process leverages deep learning to transform a primary amino acid sequence into an accurate three-dimensional atomic model, a capability central to modern structural biology and rational drug design.

The Core Three-Track Architecture of RoseTTAFold

RoseTTAFold implements a sophisticated three-track neural network that simultaneously reasons about protein structure in one, two, and three dimensions. Track 1 processes the sequence profile and residue pair features (1D). Track 2 computes a 2D distance map and orientation matrices between residues. Track 3 directly constructs a 3D backbone structure. Information is iteratively passed between these tracks, allowing the model to reconcile evolutionary, co-evolutionary, and geometric constraints.

Diagram 1: RoseTTAFold Three-Track Network Architecture

Step-by-Step Workflow

Step 1: Sequence Submission and Preprocessing

The user submits a primary amino acid sequence (FASTA format). The first computational step involves searching for homologous sequences to build a Multiple Sequence Alignment (MSA).

Protocol 1.1: Generating the MSA

- Input: Target sequence in FASTA format.

- Tool: MMseqs2 (fast, sensitive profile search) is commonly used in the RoseTTAFold server pipeline.

- Database: Search against large, curated databases (e.g., UniRef30, BFD).

- Procedure:

- Generate a profile from the target sequence.

- Perform iterative searches to gather homologous sequences.

- Filter sequences to remove fragments and outliers.

- Align collected sequences to the target using the HHblits algorithm.

- Output: A curated MSA file in A3M or STOCKHOLM format, representing evolutionary constraints.

Step 2: Feature Generation

The MSA is converted into numerical features for the neural network.

Protocol 2.1: Feature Engineering

- 1D Features: From the MSA, compute position-specific scoring matrices (PSSMs), amino acid frequencies, and conservation scores.

- 2D Features: Compute predicted contact maps from correlated mutations (e.g., using plmDCA or Gremlin). Generate pair representation features.

- Data Structuring: Features are formatted into specific tensors for input into the three-track network (1D sequence tensor, 2D pair tensor).

Step 3: Neural Network Inference via RoseTTAFold

The core prediction step runs the pre-trained RoseTTAFold model on the generated features.

Protocol 3.1: Model Execution

- Model Loading: Load the pre-trained RoseTTAFold weights. The model consists of approximately 100+ million parameters.

- Inference: Pass the feature tensors through the three-track network. The network performs multiple cycles (typically 4-8) of iterative refinement, where information flows between tracks.

- Outputs: The network generates:

- Predicted distogram (2D histogram of inter-residue distances).

- Predicted torsion angles (phi, psi, omega).

- Predicted 3D coordinates for backbone (N, Cα, C) and side chain atoms.

Step 4: 3D Model Generation and Refinement

The network's output is translated into a full-atom 3D model.

Protocol 4.1: Structure Assembly

- Backbone Tracing: Use the predicted coordinates and torsion angles to construct an initial backbone trace.

- Side Chain Packing: Place side chain rotamers based on predicted angles and steric constraints, often using a method like SCWRL or Rosetta's packer.

- Energy Minimization: Subject the initial model to a short, constrained molecular dynamics relaxation or gradient-based minimization to fix local clashes and improve stereochemistry. This may use OpenMM or Rosetta.

- Model Selection: Generate multiple candidate models (e.g., 5-10) and select the one with the highest predicted confidence score (e.g., predicted LDDT - pLDDT).

Step 5: Validation and Analysis

The final model is evaluated for quality and potential errors.

Protocol 5.1: Model Validation

- Internal Scoring: Analyze per-residue pLDDT and predicted TM-score.

- Geometric Checks: Validate using MolProbity (clashscore, rotamer outliers, Ramachandran outliers).

- Comparative Analysis: If a known structure exists, calculate RMSD and TM-score against the experimental reference.

Quantitative Performance Data

Table 1: RoseTTAFold Performance Metrics on CASP14 Benchmark

| Metric | Value | Description |

|---|---|---|

| Median TM-score | 0.85 | >0.5 indicates correct fold topology. |

| Median RMSD (Å) | 2.8 | For aligned residues of high-confidence predictions. |

| Average pLDDT | 85.4 | Predicted confidence score (0-100, higher is better). |

| Prediction Time | ~10-20 min | For a typical 300-residue protein on a single GPU. |

| Success Rate (TM>0.7) | ~80% | For single-domain proteins without templates. |

Table 2: Key Research Reagent Solutions (Computational Tools)

| Tool / Resource | Function | Source / Reference |

|---|---|---|

| MMseqs2 | Ultra-fast sequence searching and MSA generation. | Steinegger & Söding, Nat Commun, 2017 |

| HH-suite | Sensitive homology detection and HMM-HMM alignment. | Steinegger et al., JMB, 2019 |

| RoseTTAFold | Core three-track deep learning model for structure prediction. | Baek et al., Science, 2021 |

| PyRosetta | Python interface to Rosetta for structure refinement and analysis. | Chaudhury et al., Bioinformatics, 2010 |

| OpenMM | Toolkit for molecular simulation and energy minimization. | Eastman et al., JCTC, 2017 |

| MolProbity | Structure validation server for all-atom contact analysis. | Williams et al., Protein Sci, 2018 |

| PDB | Protein Data Bank; source of experimental structures for validation. | wwPDB consortium, NAR, 2019 |

Diagram 2: End-to-End Prediction Workflow

Experimental Protocol for Benchmarking

For researchers validating or extending the RoseTTAFold methodology, the following benchmarking protocol is essential.

Protocol 5.1: Controlled Performance Assessment

- Dataset Curation: Select a non-redundant set of protein sequences with recently solved experimental structures (e.g., from CASP or PDB releases after the model's training cutoff).

- Blind Prediction: Run the target sequences through the full workflow (Steps 1-4) without using the experimental structure.

- Structure Comparison: Use TM-score and CAD-score for global topology comparison, and local all-atom RMSD for high-confidence regions.

- Statistical Analysis: Compute median and mean performance metrics across the dataset. Perform paired t-tests against alternative methods (e.g., AlphaFold2, trRosetta).

This workflow elucidates the transformation of sequence information into a 3D structural model through the integrative power of the RoseTTAFold three-track network. By providing detailed protocols and quantitative benchmarks, this guide equips researchers to effectively utilize and critically evaluate this technology, thereby accelerating discovery in structural biology and drug development.

The RoseTTAFold three-track neural network elegantly integrates information across one-dimensional sequence, two-dimensional distance, and three-dimensional coordinate tracks. Its final output is not a singular structure but a generative, probabilistic model from which two primary, actionable confidence metrics are derived: the per-residue pLDDT score and the residue-pair Predicted Aligned Error (PAE). These metrics, alongside the atomic coordinates in a PDB file, form the essential triad for interpreting model reliability in structural biology and drug discovery research.

The PDB File: Atomic Coordinate Output

The Protein Data Bank (PDB) file format is the standard for representing the 3D atomic coordinates of the predicted model. RoseTTAFold outputs this file containing the predicted spatial positions of atoms (typically the backbone and side-chain heavy atoms).

Key Components of a RoseTTAFold-Generated PDB File:

- ATOM/HETATM Records: Define the Cartesian (X, Y, Z) coordinates for each atom.

- Chain Identifier: For single-chain predictions, typically 'A'.

- B-factor Column: Crucially, RoseTTAFold repurposes this column to store the pLDDT confidence score for each residue, not thermal mobility.

Experimental Protocol for Model Generation:

- Input Preparation: Provide a single protein sequence in FASTA format.

- MSA Generation: Use RoseTTAFold's built-in pipeline (HHblits, etc.) to search sequence databases and generate multiple sequence alignments (MSAs).

- Neural Network Inference: The three-track network processes sequence, MSA, and (initially random) 3D coordinates iteratively.

- Structure Sampling: The network generates multiple possible conformations (often 5-10) from different random seeds.

- Relaxation: The final selected model undergoes energy minimization (e.g., with AMBER or Rosetta) in a physical force field to correct minor steric clashes.

pLDDT: Per-Residue Local Confidence Metric

The pLDDT (predicted Local Distance Difference Test) score is a per-residue estimate of the model's local confidence, expressed as a value between 0 and 100. It predicts the reliability of the local atomic placement by estimating the expected similarity between the predicted structure and a hypothetical true structure.

Interpretation of pLDDT Scores:

| pLDDT Score Range | Confidence Band | Typical Structural Interpretation |

|---|---|---|

| 90 - 100 | Very high | Backbone and side-chain atoms are modeled with high accuracy. Likely reliable for detailed analysis (e.g., binding site). |

| 70 - 90 | Confident | Backbone is likely modeled well; side-chain orientations may vary. |

| 50 - 70 | Low | Caution advised. Backbone placement may be inaccurate. Often seen in flexible loops. |

| Below 50 | Very low | Predicted coordinates are unreliable. These regions may be disordered. |

Visualizing pLDDT: pLDDT scores are typically mapped onto the 3D model as a color spectrum (blue=high, red=low), providing immediate visual assessment of local model quality.

Title: pLDDT Score Extraction and Visualization Workflow

Predicted Aligned Error (PAE): Global Reliability of Relative Positioning

While pLDDT assesses local accuracy, PAE assesses the global confidence in the relative spatial arrangement of different parts of the model. The PAE is an N x N matrix (where N is the number of residues) where each element (i,j) predicts the expected error in the relative position of residue i when the model is aligned on residue j.

Interpretation of the PAE Matrix:

- Low PAE values (e.g., < 5 Å): Indicate high confidence in the relative distance and orientation between the two residues/domains.

- High PAE values (e.g., > 15 Å): Indicate low confidence in their relative placement. They may be in different, flexibly connected domains.

Key Use Cases:

- Domain Orientation: Identify rigid domains (squares of low PAE) and flexible linkers (high PAE bands).

- Model Confidence: Assess whether a predicted interaction between two distal regions is trustworthy.

- Multimer Modeling: In complex predictions, PAE helps distinguish reliable inter-chain interfaces from uncertain ones.

Experimental Protocol for PAE-Guided Analysis:

- Generate PAE Matrix: RoseTTAFold outputs the PAE matrix as a JSON file alongside the PDB.

- Visual Inspection: Plot the matrix with axes representing residue numbers.

- Domain Identification: Identify blocks along the diagonal with low internal PAE, suggesting stable domains.

- Error Estimation: For any hypothesized functional site involving residues i and j, check the PAE(i,j) value to gauge confidence in their modeled proximity.

Integrated Interpretation for Research and Drug Development

A robust structural hypothesis requires synthesizing information from all three outputs.

| Research Question | Primary Data Source | Supporting Metric | Interpretation Guide |

|---|---|---|---|

| Is the overall fold reliable? | pLDDT plot & 3D coloring | Mean pLDDT | Mean pLDDT > 70 suggests a generally reliable backbone fold. |

| Can I trust this active site conformation? | pLDDT at specific residues | PAE between residues | Requires both high pLDDT for each residue and low PAE between all residue pairs in the site. |

| Are these two domains rigidly connected? | PAE matrix | 3D structure | Look for a square of low PAE covering both domains. A high-PAE band indicates flexibility. |

| Is this region intrinsically disordered? | pLDDT (very low) | Sequence conservation | Consecutive residues with pLDDT < 50 may be disordered, especially if conserved in MSA. |

Title: RoseTTAFold Output Integration for Research Applications

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in RoseTTAFold-Based Research |

|---|---|

| RoseTTAFold Software Suite | Core neural network for protein structure prediction from sequence. Provides PDB, pLDDT, and PAE outputs. |

| AlphaFold/ColabFold Notebooks | Alternative platforms that provide similar confidence metrics (pLDDT, PAE), useful for comparative validation. |

| PyMOL / ChimeraX | Molecular visualization software. Essential for visualizing the 3D model colored by pLDDT scores. |

| Matplotlib / Seaborn (Python) | Libraries for generating standardized plots of pLDDT per residue and the 2D PAE matrix. |

| BioPython PDB Parser | Python library for programmatically extracting pLDDT scores from the B-factor column of output PDB files. |

| AMBER / Rosetta Force Fields | Used in the final relaxation step of model generation to refine stereochemistry and remove atomic clashes. |

| DisProt / MobiDB Databases | Reference databases of known intrinsically disordered regions (IDRs). Used to contextualize low-pLDDT regions. |

| PISA / PDBePISA Web Services | Tools for analyzing protein interfaces and quaternary structures. Complementary to PAE analysis for complexes. |

The development of the RoseTTAFold three-track neural network represented a paradigm shift in protein structure prediction by simultaneously integrating information from one-dimensional sequence, two-dimensional distance maps, and three-dimensional coordinate spaces. This foundational thesis—understanding how evolutionary, physical, and geometric constraints are co-optimized across tracks—provides the essential framework for extending prediction capabilities beyond single polypeptide chains. This whitepaper details the advanced application of this three-track architecture to model the quaternary structures of protein complexes and the precise atomic interactions of protein-ligand binding. Success in these areas is critical for illuminating cellular signaling pathways, understanding allosteric regulation, and accelerating structure-based drug design.

Core Architectural Extension for Complexes and Ligands

The three-track network of RoseTTAFold is inherently suited for modeling multimers and small molecules.

- Track 1 (Sequence): For complexes, the input is a multiple sequence alignment (MSA) constructed from paired homologs or concatenated single-chain MSAs. Inter-chain co-evolutionary signals are captured here.

- Track 2 (Distance): The network calculates both intra- and inter-chain residue-residue distances, forming a unified distance map for the entire assembly.

- Track 3 (3D Structure): The initial state for a complex is a random separation of individual chain backbones, which are then iteratively refined alongside any defined ligand coordinates.

For protein-ligand interactions, the ligand (e.g., a drug candidate) is represented as a graph or set of atoms with defined chemical features (atom type, bonds, chirality) and integrated as an additional "chain" into the three-track system.

Key Methodologies and Experimental Protocols

Protocol: In Silico Modeling of a Protein-Protein Complex with RoseTTAFold

Objective: Predict the structure of a heterodimeric protein complex from amino acid sequences. Input: FASTA sequences for Protein A and Protein B. Procedure:

- Sequence Search: Use MMseqs2 to generate a paired MSA. The search seeks homologous pairs of sequences that are found together in the same species or operon.

- Template Identification: Search the PDB for potential complex templates using HHSearch, based on the paired MSA.

- Model Generation:

- Input the paired MSA, template information, and sequences into the RoseTTAFold complex modeling pipeline (e.g., the

rfdiffusionorRoseTTAFold2complex extension). - The three-track network performs iterative refinements, predicting inter-chain distances and orientations.

- Generate multiple (e.g., 100) candidate models.

- Input the paired MSA, template information, and sequences into the RoseTTAFold complex modeling pipeline (e.g., the

- Model Selection: Rank models by predicted confidence scores (e.g., interface pTM or ipTM). Select the top-ranking model for analysis.

- Validation: Compare predicted interface residues with known mutagenesis data or assess with computational interface scoring functions (e.g., DOCKSCORE, PISA).

Protocol: Modeling a Protein-Small Molecule Interaction

Objective: Predict the binding pose and affinity of a known drug-like molecule to a target protein. Input: Protein FASTA sequence; ligand SDF or SMILES string. Procedure:

- Ligand Preparation: Use RDKit or Open Babel to generate 3D conformers, assign correct protonation states, and minimize ligand geometry.

- Protein Preparation: Generate a standard single-chain MSA for the protein. Prepare the protein structure (if a monomeric model exists) using tools like PDBFixer or UCSF Chimera to add missing side chains and hydrogens.

- Docking with Integrated Networks:

- Method A (Direct Prediction): Use advanced implementations like RoseTTAFold-All-Atom (RFAA), which accepts ligand chemical descriptors as part of its input sequence. The network is trained to place both the protein and ligand atoms de novo.

- Method B (Diffusion-based Docking): Use RFdiffusion or similar. The ligand's 3D coordinates are fixed while a diffused protein structure is generated around it, or vice-versa, via a denoising diffusion probabilistic model conditioned on the ligand.

- Pose Refinement & Scoring: Cluster generated poses and refine with short molecular dynamics (MD) simulations in implicit solvent (e.g., using OpenMM). Score poses using a combination of network confidence metrics and physical energy functions (e.g., RosettaLigand).

- Affinity Estimation: Apply machine-learning scoring functions like

ΔΔGpredictors or simplified physically-based methods (MM/GBSA) on the top poses.

Table 1: Performance of Advanced Protein Complex Prediction Tools (Based on CASP15/EMA Data)

| Tool / Method | Protein-Proplexes (DockQ Score) | Protein-Oligomer Accuracy (TM-Score) | Key Innovation |

|---|---|---|---|

| RoseTTAFold-All-Atom (RFAA) | 0.72 (High Accuracy) | 0.85 | Unified sequence-structure modeling of all biomolecules |

| AlphaFold-Multimer v2.3 | 0.69 (High Accuracy) | 0.83 | Paired MSA & complex-focused training |

| RFdiffusion (complex mode) | N/A (Designed, not predicted) | 0.90+ (on design benchmarks) | Generative diffusion for interfaces |

| Traditional Docking (HADDOCK) | 0.49 (Medium Accuracy) | N/A | Physics & bioinformatics-driven sampling |

Table 2: Benchmarking Protein-Ligand Pose Prediction (PDBbind v2020)

| Method Type | Top-1 Success Rate (RMSD < 2.0 Å) | Inference Speed (poses/sec) | Training Data Dependency |

|---|---|---|---|

| Deep Learning Docking | |||

| RoseTTAFold-All-Atom | ~42%* | ~1-2 | High (Protein-Ligand structures) |

| EquiBind | 38% | ~10 | High (Protein-Ligand structures) |

| Traditional Docking | |||

| AutoDock Vina | 31% | ~100 | Low (Empirical scoring function) |

| Glide (SP mode) | 52% | ~5 | Medium (Force field + Heuristics) |

| Preliminary benchmark data from early RFAA evaluations. Expected to improve with model maturity. |

Essential Visualizations

Diagram Title: RoseTTAFold Three-Track Architecture for Complexes

Diagram Title: Protein-Ligand Interaction Modeling Workflow

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Computational Toolkit for Modeling Complexes & Ligand Interactions

| Tool / Resource | Type | Primary Function | Source / Provider |

|---|---|---|---|

| RoseTTAFold2 / RFAA | Software Suite | End-to-end deep learning for protein, complex, and protein-ligand structure prediction. | Baker Lab, University of Washington |

| RFdiffusion | Software Suite | Generative diffusion model for de novo protein and binder design, including around small molecules. | Baker Lab, University of Washington |

| AlphaFold-Multimer | Software Suite | Specialized version of AlphaFold2 for predicting protein multimeric structures. | DeepMind / Google |

| OpenMM | Molecular Dynamics Engine | High-performance toolkit for running molecular dynamics simulations for pose refinement and free energy calculations. | Stanford University |

| RDKit | Cheminformatics Library | Handling ligand chemistry: SMILES parsing, conformer generation, and molecular descriptor calculation. | Open-Source Community |

| PDBbind Database | Curated Dataset | Comprehensive collection of protein-ligand complexes with binding affinity data for training and benchmarking. | http://www.pdbbind.org.cn |

| ChimeraX | Visualization Software | Interactive visualization and analysis of predicted complexes and binding sites. | UCSF |

| HADDOCK | Web Server / Software | Integrative modeling platform for docking biomolecular complexes using diverse experimental data. | Bonvin Lab, Utrecht University |

| ColabFold | Web Service / Pipeline | Accessible cloud pipeline combining MMseqs2 for MSAs with AlphaFold2/RoseTTAFold for easy complex prediction. | Sergey Ovchinnikov, et al. |

1. Introduction

This whitepaper, framed within the broader thesis on the revolutionary capabilities of the RoseTTAFold three-track neural network, details its cutting-edge applications in rational drug design. RoseTTAFold's architecture, which integrates information across protein sequence, distance, and 3D coordinate tracks, provides an unprecedented computational framework for two critical tasks: the precise identification of ligand-binding pockets and the accurate prediction of mutational effects on protein stability and drug binding.

2. RoseTTAFold's Three-Track Architecture in Drug Design Context

The power of RoseTTAFold for drug discovery stems from its three-track neural network:

- 1D Sequence Track: Processes amino acid sequences and multiple sequence alignments (MSAs).

- 2D Distance Track: Infers pairwise distances between residues and atoms.

- 3D Coordinate Track: Generates atomic-level 3D structures.

This holistic integration allows for the simultaneous reasoning of sequence-structure-function relationships, enabling the de novo prediction of protein structures with and without ligands, the identification of cryptic pockets, and the assessment of how mutations perturb the structural and energetic landscape.

3. Targeting Pockets: Identifying and Characterizing Binding Sites

A primary application is the in silico mapping of potential drug-binding sites.

- Methodology: The network is trained on known protein-ligand complexes from the PDB. For a novel target, RoseTTAFold predicts the structure and, through its attention mechanisms in the 2D track, highlights residues with high interaction potentials. By "diffusing" small molecular fragments or conditioning the prediction on a specific ligand, it can predict ligand-bound conformations and reveal allosteric sites.

- Protocol for Pocket Detection:

- Input the target protein sequence into the RoseTTAFold server or local installation.

- Generate a confidence-ranked set of predicted 3D structures.

- Analyze the predicted aligned error (PAE) matrix to identify rigid, well-folded domains.

- Use integrated tools (e.g., Pymol, ChimeraX) or standalone algorithms (e.g., FPocket, DeepSite) on the predicted structure to scan for cavities with high hydrophobicity, residue conservation (from the MSA), and structural stability.

- Rank pockets based on volume, depth, and chemical character.

Table 1: Comparative Performance of Structure-Based Pocket Prediction Methods

| Method | Type | Key Metric (Success Rate*) | Primary Advantage for Drug Design |

|---|---|---|---|

| RoseTTAFold (conditioned) | Deep Learning | >85% (for cryptic sites) | Predicts conformationally variable and ligand-induced pockets. |

| AlphaFold2 | Deep Learning | ~80% (for static pockets) | Highly accurate apo structure; baseline for analysis. |

| FPocket | Geometric/Energy | ~75% | Fast, open-source; good for high-throughput screening. |

| SiteMap (Schrödinger) | QM/Grid-Based | ~82% | Detailed energetic and property mapping (Dscore, hydrophobicity). |

*Success rate defined as correct identification of a known ligand-binding site in benchmark sets like PDBbind.

4. Predicting Mutational Effects: Assessing Stability and Binding Affinity

RoseTTAFold is extended to predict the thermodynamic consequences of mutations (ΔΔG) through methods like RoseTTAFold Deep Mutational Scanning (RF-DMS).

- Methodology: The network evaluates the likelihood of a mutant sequence adopting the wild-type fold. A significant drop in predicted confidence (e.g., in the per-residue pLDDT score or interface pLDDT) correlates with destabilization. For binding affinity changes (ΔΔGbind), the complex structure is predicted for both wild-type and mutant, and the difference in interface metrics is calculated.

- Protocol for Mutational Effect Prediction:

- Generate the wild-type protein (or protein-ligand complex) structure using RoseTTAFold.

- For each point mutation of interest, submit the mutant sequence.

- Extract the global pLDDT score and the per-residue pLDDT for the mutated position and its neighbors.

- Compute the ΔpLDDT (pLDDTwt - pLDDTmt). A ΔpLDDT > 10 often indicates destabilization.

- For binding affinity, use specialized suites like RoseTTAFold for protein-protein interfaces or dock the ligand into the mutant structure and score with a potential like RosettaDock or a separate scoring function.

- Validate predictions against experimental databases like ThermoMutDB or SKEMPI 2.0.