Scaling the Summit: Computational Strategies for Large-Scale Multi-Omics Data Analysis

This article provides a comprehensive guide for researchers and biomedical professionals grappling with the computational challenges of large-scale multi-omics studies.

Scaling the Summit: Computational Strategies for Large-Scale Multi-Omics Data Analysis

Abstract

This article provides a comprehensive guide for researchers and biomedical professionals grappling with the computational challenges of large-scale multi-omics studies. We explore the foundational bottlenecks of data volume and heterogeneity, detail modern scalable methodologies from cloud-native architectures to AI-driven integration, address critical troubleshooting and optimization techniques for performance and cost, and examine validation frameworks to ensure biological robustness. The synthesis offers a roadmap to translate vast molecular datasets into actionable biological insights and accelerate therapeutic discovery.

The Data Deluge: Understanding the Scalability Bottlenecks in Modern Multi-Omics

Troubleshooting Guides & FAQs

Q1: My multi-omics workflow fails when merging genomic variant calls (VCF) and single-cell RNA-seq (scRNA-seq) matrices due to memory errors. What are the primary scaling bottlenecks and solutions?

A: The primary bottleneck is loading entire datasets into RAM. A VCF for a 10,000-sample cohort can be ~5 TB, and a scRNA-seq count matrix for 100,000 cells from 1,000 samples can be ~2 TB. Loading these simultaneously exceeds typical node memory (512 GB-4 TB).

- Solution 1: Chromosome-partitioned Processing. Use tools like

bcftoolsto filter and process VCFs by chromosome or genomic region before integration. - Solution 2: Sparse Matrix Arithmetic. Ensure your pipeline uses sparse matrix representations (e.g., via SciPy or R Matrix) for scRNA-seq data, dramatically reducing memory footprint.

- Solution 3: Batch Integration. Use a batch-aware integration framework (e.g.,

harmony,scVI,Seurat v5) to integrate data in mini-batches without loading all data at once.

Q2: During cohort-scale proteomics (mass spectrometry) and metabolomics data integration, I encounter severe batch effects that correlate with sequencing center ID rather than biological condition. How do I diagnose and correct this?

A: This is a classic technical confounding issue in multi-center studies.

- Diagnosis: Perform Principal Component Analysis (PCA) on the normalized protein/metabolite abundance matrix. Color samples by

sequencing_centerandcondition. If PC1 or PC2 clusters strongly by center, batch effect is present. - Correction Protocol:

- Pre-processing: Use internal standard-normalized abundances (for proteomics) and batch-specific QC sample normalization (for metabolomics).

- Algorithmic Correction: Apply Combat (from

svapackage) or its improved descendant,ComBat-seq, which is better suited for omics count data. Critical: Only include thecentervariable in the batch parameter. Include theconditionvariable in the model formula to protect biological signal. - Validation: Re-run PCA post-correction. Cluster separation should now be driven by

condition.

Q3: When constructing a knowledge graph from petabytes of disparate literature and omics data, my Neo4j queries become prohibitively slow. What are the key infrastructure and query optimization steps?

A: At petabyte-scale, graph database performance requires careful design.

- Infrastructure: Move from a single Neo4j instance to a causal cluster setup (1 leader, 2+ followers) on high-memory machines with NVMe SSDs.

- Optimization Steps:

- Indexing: Create composite indexes on node properties used in frequent

MATCHorWHEREclauses (e.g.,:Gene(entrez_id),:Compound(pubchem_cid)). - Query Tuning: Use the

PROFILEkeyword to identify expensive operations. Avoid cartesian products. Use relationship types and directions specifically. - Data Partitioning: If possible, shard your graph by domain (e.g., one graph for genetic interactions, another for drug-target) and use federation queries.

- Indexing: Create composite indexes on node properties used in frequent

Table 1: Approximate Data Scale per Sample and per Large Cohort

| Data Modality | Per Sample (Raw) | 10,000-Sample Cohort (Processed) | Common File Formats |

|---|---|---|---|

| Whole Genome Seq (WGS) | ~90 GB (FASTQ) | 0.8-1.2 PB (CRAM, GVCF) | FASTQ, CRAM, BAM, VCF |

| Bulk RNA-seq | ~5 GB | 40-60 TB | FASTQ, BAM, TSV (counts) |

| Single-Cell RNA-seq | ~20 GB | 150-200 TB | FASTQ, MTX (Matrix Market), H5AD |

| Methylation Array | ~0.1 GB | 1-2 TB | IDAT, TXT (beta values) |

| LC-MS Proteomics | ~0.5 GB | 4-6 TB | RAW, MZML, TXT (peptide int.) |

Table 2: Computational Resource Requirements for Common Integrative Tasks

| Analysis Task | Typical Dataset Size | Minimum RAM | Recommended Cloud Instance | Estimated Runtime* |

|---|---|---|---|---|

| GWAS + eQTL Mapping | 5k samples, 10M SNPs | 64 GB | 32 vCPU, 128 GB RAM | 6-12 hours |

| Multi-omics (WGS+RNA) Cohort PCA | 1k samples | 256 GB | 64 vCPU, 256 GB RAM | 2-4 hours |

| Single-Cell Multi-modal (CITE-seq) | 100k cells | 180 GB | 48 vCPU, 192 GB RAM | 3-5 hours |

| Metabolomics-Pathway Enrichment | 500 samples, 5k features | 32 GB | 16 vCPU, 64 GB RAM | <1 hour |

| Using optimized, parallelized software (e.g., PLINK, FlashPCA, Seurat). |

Experimental Protocols

Protocol 1: Cohort-Scale Sparse Matrix Integration for scRNA-seq and ATAC-seq

Objective: Integrate single-cell gene expression and chromatin accessibility from 500,000+ cells across 100+ donors to identify candidate cis-regulatory elements.

Methodology:

- Individual Modality Processing: Process scRNA-seq (CellRanger) and scATAC-seq (Cell Ranger ATAC) per donor. Output: RNA count matrix and ATAC peak matrix.

- Sparse Matrix Conversion: Convert both matrices to compressed sparse column (CSC) format using

scipy.sparse.csc_matrix. - Joint Embedding with MultiVI: Use the

scvi-tools(MultiVI) framework, which is designed for sparse, batched data.- Key Command:

- Key Command:

- Downstream Analysis: Cluster on

integrated_latentusing Leiden clustering. Perform differential accessibility/expression testing per cluster.

Protocol 2: Cross-Modal Knowledge Graph Inference for Drug Repurposing

Objective: Infer novel drug-disease links by connecting a genomic variant cohort database with a biomedical knowledge graph (KG).

Methodology:

- Data Source Ingestion: Ingest nodes and relationships from public databases (e.g., DrugBank, ChemBL, DisGeNET, STRING, GWAS Catalog) into a Neo4j graph. Use

neo4j-admin importfor initial bulk load. - Cohort Data Mapping: Map cohort-derived significant gene-disease associations (p < 5x10⁻⁸) as new

ASSOCIATED_WITHrelationships between existingGeneandDiseasenodes in the KG. - Meta-Path Inference: Write a Cypher query to find paths connecting a

Drugnode to aDiseasenode via the newly added gene.- Example Query:

- Example Query:

- Scoring & Validation: Score candidate links using path count and degree-weighted metrics. Validate top predictions via literature mining (automated PubMed queries) or in silico docking studies.

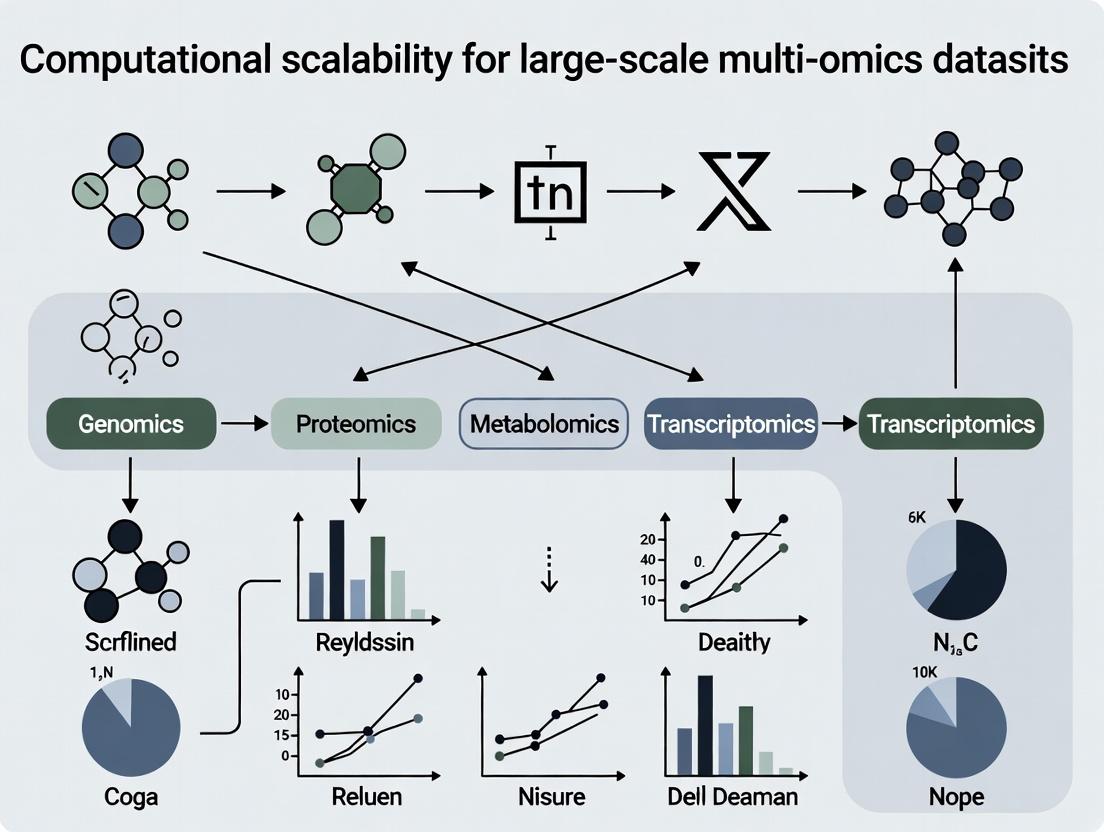

Visualizations

Title: Multi-Omics Data Integration and Analysis Workflow

Title: Key Drivers of the Multi-Modal Scalability Challenge

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Large-Scale Multi-Omics Research

| Item / Solution | Function & Role in Scalability |

|---|---|

| High-Throughput Sequencing Platforms (e.g., NovaSeq X, Revio) | Generate terabases of WGS/RNA-seq data per flow cell, enabling cost-effective cohort scaling. |

| Single-Cell Multi-ome Kits (e.g., 10x Multiome, CITE-seq) | Allow simultaneous profiling of RNA and protein (CITE-seq) or RNA and chromatin (Multiome) from the same cell, solving cell identity alignment. |

| Multiplexed Immunoassays (e.g., Olink, SomaScan) | Measure thousands of proteins from minute plasma volumes, enabling proteomics at cohort scale. |

| Cloud-Optimized File Formats (e.g., Zarr, Parquet) | Columnar/chunked formats enabling efficient, partial I/O from cloud storage (S3, GCS), bypassing full-file downloads. |

| Containerization (Docker/Singularity) | Ensures computational reproducibility and portability of complex pipelines across HPC and cloud environments. |

| Workflow Languages (Nextflow, Snakemake) | Orchestrate scalable, fault-tolerant pipelines that can dynamically provision cloud resources. |

| Unified Cohort Metadata Managers (e.g., SampleDB, TSD) | Critical for tracking petabyte-scale data provenance, consent, and sample relationships across modalities. |

Troubleshooting Guides & FAQs

Q1: My multi-omics integration pipeline (e.g., using Seurat for scRNA-seq + ATAC-seq) is extremely slow. The primary delay seems to be during the data loading and initial filtering step. Which bottleneck is most likely, and how can I mitigate it? A: This is a classic I/O (Input/Output) bottleneck, compounded by memory overheads. Large single-cell BAM/FASTQ or fragment files are read from disk. The process is single-threaded and sequential, causing delays.

- Solution: Implement a staged data loading strategy.

- Pre-filter on disk: Use command-line tools like

samtoolsto filter reads by quality or region before loading into R/Python. - Use efficient formats: Convert raw data to more efficient, columnar formats like H5AD (AnnData) or LOOM for rapid random access.

- Increase I/O bandwidth: Utilize high-performance local SSDs or, in cloud environments, provision instances with optimized local NVMe storage.

- Pre-filter on disk: Use command-line tools like

Q2: When performing differential expression analysis on a cohort of 500 bulk RNA-seq samples, my R session crashes with an "out of memory" error during the DESeq2 model fitting. What can I do?

A: This is a Memory (RAM) bottleneck. The DESeq2 DESeqDataSet object holding raw counts, model matrices, and dispersion estimates for thousands of genes across hundreds of samples can exceed tens of gigabytes.

- Solution: Employ memory-efficient computational strategies.

- Subset genes: Filter to genes of interest (e.g., protein-coding) before creating the DESeq2 object.

- Batch processing: Split the analysis by chromosome or gene sets and run in separate jobs.

- Leverage sparse matrices: If using tools like

limma-voom, ensure your count matrix is in a sparse format if many zeros are present. - Scale vertically/cloud: Allocate a compute node with sufficient RAM (e.g., 64GB+). Cloud platforms allow for on-demand memory-optimized instances.

Q3: My variant calling workflow (GATK) on whole-genome sequencing data is taking days to complete on a single server. The CPU usage is consistently high. How can I improve this? A: This is a Processing (CPU) bottleneck. Variant calling involves computationally intensive steps like alignment, duplicate marking, and haplotype calling that are designed for parallelization.

- Solution: Parallelize processing horizontally.

- Use built-in parallelization: Tools like

bwa-mem2(alignment) andGATK Spark(variant calling) can distribute work across multiple CPU cores on a single machine. - Implement workflow orchestration: Use a pipeline manager like Nextflow or Snakemake. They can split the workload by genomic region or sample and process them in parallel across a compute cluster or cloud.

- Optimize resource allocation: Profile your workflow to identify the most CPU-heavy steps (e.g., BQSR) and allocate more cores specifically to those tasks.

- Use built-in parallelization: Tools like

Q4: During the integration of large proteomic and transcriptomic datasets, the step calculating pairwise correlation matrices consistently fails or becomes impossibly slow. What's the issue?

A: This is a combination of Memory and Processing bottlenecks due to quadratic scaling. A dataset with n features (e.g., 20,000 genes x 300 proteins) generates matrices scaling with O(n²), consuming massive memory and compute.

- Solution:

- Dimensionality reduction first: Apply PCA or autoencoders to each modality independently to reduce features to a lower-dimensional space (e.g., 100 components) before integration.

- Use approximate methods: Implement stochastic or randomized algorithms for singular value decomposition (SVD) and correlation estimation.

- Leverage GPU acceleration: Libraries like RAPIDS cuML or PyTorch can perform large matrix operations orders of magnitude faster on a GPU.

Experimental Protocols for Benchmarking Bottlenecks

Protocol 1: Profiling I/O Overhead in a Multi-Omics Workflow

- Objective: Quantify time spent on data I/O vs. computation.

- Materials: A standard multi-omics pipeline (e.g.,

snakemakeworkflow), sample dataset (e.g., 10x Genomics multiome), system monitoring tool (iotop,dstat). - Method:

a. Instrument your pipeline with timestamps at key stages (load, filter, analyze, write).

b. Run the pipeline on a controlled system.

c. Use

dstat -td --disk-util --ioto monitor disk read/write throughput and CPU idle time simultaneously. d. Correlate high idle time with periods of high disk utilization to confirm I/O bottleneck.

Protocol 2: Measuring Memory Usage Scaling in Differential Analysis

- Objective: Model RAM requirements as a function of sample size.

- Materials: DESeq2/R, datasets of varying sample sizes (e.g., 50, 100, 200 samples),

Rprofmem()for memory profiling. - Method:

a. For each dataset subset, run

Rprofmem()before executingDESeq(). b. Record the peak memory allocation reported. c. Plot sample size (N) vs. peak memory usage (MB). The relationship is typically linear, and the slope indicates memory overhead per sample.

Protocol 3: Assessing Parallel Scaling Efficiency for Variant Calling

- Objective: Determine the optimal CPU cores for a GATK HaplotypeCaller job.

- Materials: A defined genomic region (e.g., chr1), GATK, a cluster/scheduler (Slurm, SGE).

- Method: a. Run the identical HaplotypeCaller job requesting 1, 2, 4, 8, 16, and 32 CPU cores. b. Precisely record the wall-clock completion time for each run. c. Calculate speedup: S(N) = Time(1 core) / Time(N cores). d. Plot cores (N) vs. Speedup (S). Deviation from linear speedup indicates parallelization overhead (communication, I/O contention).

Table 1: Typical Resource Requirements for Common Omics Analysis Steps

| Analysis Step | Typical Dataset Size | Primary Bottleneck | Peak RAM Estimate | Suggested Compute |

|---|---|---|---|---|

| scRNA-seq Preprocessing (CellRanger) | 10k cells, ~200M reads | I/O, Processing | 32-64 GB | 16+ cores, fast SSD |

| Bulk RNA-seq DE (DESeq2) | 100 samples, 60k genes | Memory, Processing | 40+ GB | 8+ cores, High RAM |

| WGS Variant Calling (GATK) | 30x coverage, Human Genome | Processing, I/O | 8-16 GB per thread | 32+ cores, cluster |

| Metagenomic Assembly (MEGAHIT) | 100M paired-end reads | Memory, Processing | 500+ GB | 24+ cores, Very High RAM |

| Chromatin Peak Calling (MACS2) | 50M aligned reads (ChIP-seq) | Processing | < 8 GB | 4-8 cores |

Table 2: Impact of Data Format on I/O Performance

| Data Format | Example File Size (10k cells) | Load Time (R/Python) | Random Access | Best For |

|---|---|---|---|---|

| Raw FASTQ | ~200 GB | N/A (not direct) | No | Archival |

| Compressed BAM | ~15 GB | Slow (decompression) | Yes (with index) | Aligned reads |

| H5AD / Loom | ~1-2 GB | Fast | Yes (efficient) | Processed matrices, Analysis |

Visualizations

Decision Flow for Identifying Computational Bottlenecks

A Scalable Multi-Omics Analysis Workflow with Optimizations

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Scalable Multi-Omics Research

| Tool / Resource | Category | Primary Function | Why It's Essential for Scalability |

|---|---|---|---|

| Nextflow / Snakemake | Workflow Orchestration | Defines, manages, and executes computational pipelines. | Enables seamless parallelization, portability across environments (local, cluster, cloud), and reproducible execution. |

| Conda / Bioconda / Docker | Environment Management | Creates isolated, reproducible software environments with specific tool versions. | Eliminates "works on my machine" issues, ensures consistency across large teams and over time. |

| HDF5-based Formats (H5AD, Loom) | Data Format | Stores large, annotated matrices in a hierarchical, binary format. | Enables fast random access to subsets of data, drastically reducing I/O overhead compared to flat files. |

| RAPIDS cuML / PyTorch | GPU Acceleration | Provides GPU-accelerated implementations of ML and statistical algorithms. | Delays the processing bottleneck by offering order-of-magnitude speedups for matrix operations and model training. |

| Slurm / AWS Batch / Kubernetes | Job Scheduler / Orchestrator | Manages distribution of computational jobs across a cluster of machines. | Essential for horizontal scaling, allowing hundreds of samples to be processed concurrently by efficiently utilizing all available resources. |

| Metaflow / MLflow | Experiment Tracking | Logs parameters, code, data versions, and results for machine learning workflows. | Critical for managing the complexity of thousands of computational experiments, ensuring traceability and reproducibility. |

Technical Support Center

FAQs & Troubleshooting

Q1: My alignment of single-cell RNA-seq data from a population-scale cohort (e.g., >100k cells) is failing due to memory errors. What are my options? A: This is a common scalability bottleneck. Current population atlases (e.g., Human Cell Atlas, UK Biobank) routinely process petabytes of data.

- Solution 1: Use a workflow manager with chunking. Implement tools like

SnakemakeorNextflowwith--coresand memory profiling. Split your BAM files by chromosome or cell barcode groups. - Solution 2: Switch to a more efficient aligner. For large-scale studies, consider

STARsoloorKallisto | Bustoolsfor faster, memory-efficient pseudoalignment. - Real-World Data Volume: Aligning 100,000 cells (~10x Genomics) generates ~5-10 TB of intermediate BAM files. Processing a cohort of 10,000 samples can exceed an exabyte in raw sequencing data.

Q2: How do I integrate multiple single-cell datasets from different studies to avoid batch effects at scale? A: Scalable integration is critical for meta-analysis across population studies.

- Solution: Use methods designed for large datasets.

Harmony,Scanorama, orSeurat's CCAcan handle tens of thousands of cells. For million-cell integrations, use approximate nearest neighbor methods likeScanpy's pp.neighborswithuse_rep='X_pca'andmetric='cosine'. Always perform robust preprocessing (normalization, HVG selection) per batch first. - Protocol:

- Load individual AnnData objects (Scanpy) or Seurat objects.

- Normalize (

sc.pp.normalize_total) and log-transform (sc.pp.log1p) per batch. - Identify highly variable genes (

sc.pp.highly_variable_genes) per batch, intersect. - Scale (

sc.pp.scale) data to unit variance, regressing out mitochondrial percentage. - Run PCA (

sc.tl.pca) on concatenated matrices. - Apply

bbknn(Batch Balanced KNN) orharmonyon PCA embeddings. - Proceed with UMAP and clustering on corrected embeddings.

Q3: I am getting "out-of-core" errors when performing dimensionality reduction (PCA/t-SNE) on my large single-cell matrix. A: Traditional PCA requires the full matrix in memory.

- Solution: Implement incremental or randomized PCA.

- Scanpy: Use

sc.tl.pcawithsvd_solver='randomized'. - Cuml (RAPIDS): For GPU acceleration, use

cuml.decomposition.PCAwhich handles out-of-memory data structures. - Protocol for Incremental PCA (scikit-learn):

- Scanpy: Use

Q4: My cell type annotation tool is too slow for my dataset of 1 million cells. A: Reference-based annotation scales poorly with query size.

- Solution: Use a two-step approach or pre-indexed reference.

- Fast Pre-clustering: Use

leidenorlouvainclustering at a lower resolution. - Representative Cell Annotation: Annotate cluster centroids or randomly sampled cells from each cluster using

SingleRorscArches. - Propagate Labels: Propagate labels to all cells in the cluster using a k-NN classifier.

- Fast Pre-clustering: Use

- Recommended Tool:

scANVIorCellTypist(with its pre-trained models) are optimized for speed on large datasets.

Table 1: Representative Single-Cell & Population Study Data Volumes

| Study / Atlas Name | Scale (Cells) | Raw Data Volume (Approx.) | Processed Matrix Size | Key Technology |

|---|---|---|---|---|

| Human Cell Atlas (HCA) - Tabula Sapiens | ~500,000 | 75 TB | ~500k x 20k (10 GB) | 10x Multiome |

| Chan-Zuckerberg Biohub - 1M Immune Cells | 1,000,000 | 150 TB | ~1M x 30k (25 GB) | 10x 3' RNA-seq |

| UK Biobank (Planned scRNA-seq) | 500,000 (pilot) | 100 TB (est.) | ~500k x 15k (6 GB) | SS2 / 10x |

| COVID-19 Atlas (e.g., UC San Diego) | ~1,600,000 | 240 TB | ~1.6M x 25k (40 GB) | Various |

| Mouse Whole Brain (10x Genomics) | 1,300,000 | 200 TB | ~1.3M x 28k (35 GB) | 10x 3' RNA-seq |

Table 2: Computational Resource Requirements for Key Tasks

| Analysis Step | 100k Cells | 1M Cells | Recommended Infrastructure |

|---|---|---|---|

| Read Alignment & Quantification | 8 cores, 64 GB RAM, 2 TB storage | 32 cores, 256 GB RAM, 20 TB storage | High-CPU VMs / HPC Cluster |

| Data Integration & Dimensionality Reduction | 16 cores, 128 GB RAM | 64+ cores, 512 GB RAM or GPU (32GB VRAM) | High-Memory Nodes / GPU Instances (A100) |

| Clustering & Trajectory Inference | 8 cores, 64 GB RAM | 16 cores, 256 GB RAM | Standard Compute Nodes |

| Long-term Data Storage (Processed) | 5 - 20 GB | 50 - 200 GB | Cloud Object Storage (S3, GCS) |

Experimental & Computational Protocols

Protocol: Scalable Processing of Population-Scale scRNA-seq using HPC

- Data Organization: Use a consistent directory structure per sample. Document metadata in a central

samples.tsvfile. - Job Submission (SLURM Example):

- Post-Processing Aggregation: Use

cellranger aggr to create a feature-barcode matrix across all samples, normalizing for sequencing depth.

- Downstream Analysis in R/Python: Load the aggregated matrix into

Seurat or Scanpy using sparse matrix representations to conserve memory.

Visualizations

Diagram 1: Large-Scale scRNA-seq Analysis Workflow

Diagram 2: Scalable Data Integration & Batch Correction Logic

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Toolkit for Large-Scale Single-Cell Population Studies

Item / Solution

Category

Function & Relevance to Scalability

10x Genomics Chromium X

Wet-lab Platform

Enables high-throughput single-cell partitioning, processing up to ~20k cells per lane, crucial for large cohort studies.

Cell Ranger 7.0+ / STARsolo

Computational Pipeline

Provides optimized, parallelized workflows for aligning sequencing data and generating count matrices at scale.

Scanpy (Python) / Seurat (R)

Analysis Ecosystem

Core libraries using sparse matrix operations for memory-efficient handling of millions of cells.

Anndata / H5AD Format

Data Structure

Columnar, hierarchical file format enabling disk-backed operations and efficient subsetting of large datasets.

Cuml (RAPIDS)

Computational Library

GPU-accelerated versions of clustering, PCA, and UMAP algorithms, offering 10-50x speedups.

Harmony / BBKNN

Software Package

Algorithms specifically designed for fast, scalable integration of multiple large datasets.

Terra / Seven Bridges

Cloud Platform

Managed cloud environments with pre-configured workflows and scalable compute for population-scale analyses.

CellTypist

Annotation Tool

Provides pre-trained models and a fast pipeline for annotating cell types across massive datasets.

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Our proteomics pipeline outputs mzML files, but the downstream single-cell RNA-seq integration tool requires HDF5 format. The conversion script fails with a "missing precursor intensity" error. What steps should we take?

A: This is a common data format mismatch. Follow this protocol:

- Validate Source File: Run

mzML-validatoron your source file to ensure it complies with the PSI mzML standard. - Use Standardized Converter: Employ the ProteoWizard

msconverttool with explicit parameters: - Check Metadata Mapping: Ensure the scan-level metadata (MS level, retention time) is correctly mapped. The error often arises because the tool expects MS1-level precursor intensity. Use

--filter "msLevel 1"if only MS1 data is required.

Q2: When submitting multi-omics data (ATAC-seq, metabolomics) to a public repository like GEO or Metabolights, our submission is rejected due to inconsistent metadata. What is a robust framework for pre-submission checks?

A: Implement a metadata validation pipeline:

- Create a Cross-Study Table: Map all experimental variables across your assays.

Variable RNA-seq (SRA) ATAC-seq (GEO) Metabolomics (Metabolights) Harmonized Term Organism Homo sapienshumanHumanHomo sapiens(NCBI:9606)Age Unit yearsYearsYRyearsDisease State non-small cell lung carcinomaNSCLCCarcinoma, Non-Small-Cell Lungnon-small cell lung carcinoma(EFO:0003063) - Use Schema Validators: For GEO, use the

GEOparsePython library to test metadata sheets against their templates. For Metabolights, use their ISA-Tab validation tool. - Leverage Ontologies: Force all descriptive metadata to terms from controlled vocabularies like NCBI Taxonomy, UBERON, and Experimental Factor Ontology (EFO) before submission.

Q3: In a cross-platform integration analysis (Illumina RNA-seq & Nanopore direct RNA-seq), batch effects are confounded with platform technical variables. How do we disentangle this during data harmonization?

A: Apply a sequential normalization and integration protocol:

- Within-Platform Processing: Process each dataset with its own standardized, versioned pipeline (e.g., nf-core/rnaseq for Illumina, NanoCount for Nanopore). Output raw count matrices.

- Joint Embedding with Combat-Seq: Use a batch correction tool that retains count distribution integrity.

- Benchmark with Controls: Spike-in controls (e.g., Sequins for RNA) should cluster by concentration, not platform, post-correction. Validate using a PCA plot colored by platform and condition.

Q4: Our lab uses multiple version-controlled Python and R environments for different omics analyses, causing dependency conflicts when running an integrated workflow. What is the best practice for environment management?

A: Adopt containerization for reproducible, scalable computation.

- Define Environments: Use Dockerfiles for each core pipeline.

- Orchestrate with Workflow Managers: Use Nextflow or Snakemake to call containerized processes, ensuring each tool runs in its native, conflict-free environment.

- Centralized Registry: Store approved container images in a lab-wide registry (e.g., Docker Hub, Amazon ECR) tagged with the pipeline version.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Multi-omics Interoperability |

|---|---|

| Spike-in Controls (e.g., ERCC RNA, Sequins) | Synthetic molecules added to samples pre-processing. Provide a universal reference signal across platforms (LC-MS, NGS) to technically normalize data and enable quantitative cross-assay comparison. |

| Cell Hashing Antibodies (e.g., TotalSeq-A) | Antibody-derived tags used to label cells from different samples prior to pooling. Allow sample multiplexing in single-cell assays, reducing batch effects and linking metadata unambiguously to cell-level data. |

| Universal Sample Identifiers (USI) | A standardized string format (e.g., mzspec:PXD000000:12345). Provides a persistent, unique key to reference a specific data file or spectrum across all public repositories, enabling flawless data provenance tracking. |

| ISA-Tab Configuration Files | A tabular format (Investigation, Study, Assay) to organize experimental metadata. Serves as a "metadata blueprint" for complex multi-omics studies, ensuring consistent annotation from wet-lab to repository submission. |

| Reference Knowledge Graphs (e.g., Het.io, SPOKE) | Integrate relationships between genes, compounds, diseases, and phenotypes from dozens of public databases. Used as a prior network to guide and validate the biological plausibility of integrated multi-omics findings. |

Diagram: Multi-omics Data Integration Pipeline

Diagram: ISA-Tab Metadata Schema for Multi-omics

Building Scalable Pipelines: From Cloud Architectures to AI-Driven Integration

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My multi-omics alignment job on our local HPC cluster failed with "Memory allocation error." What are my immediate steps? A: This typically indicates that the compute node's physical RAM is insufficient for the dataset's working set.

- Check Job Parameters: Verify the memory request in your job submission script (e.g.,

#SBATCH --mem=256Gin Slurm). For tools like STAR for RNA-seq, memory scales with the reference genome and threads. - Profile Memory Usage: Run a test on a subset of your data (e.g., first 1 million reads) using

/usr/bin/time -vto track peak memory usage. - HPC Solution: Request a node from a high-memory partition if available. Consider using a memory-optimized instance type if moving to cloud (e.g., AWS

r6i.32xlarge, AzureE64_v5, GCPn2-highmem-96). - Tool Optimization: Use the

--limitBAMsortRAMparameter in STAR or switch to a more memory-efficient aligner likesalmonfor transcript quantification.

Q2: When transferring large BAM/VCF files from on-premises HPC to AWS S3, the connection times out or is extremely slow. How can I optimize this? A: This is a common issue with large-scale genomic data transfer.

- Use Parallelized/Accelerated Tools: Employ

aws s3 syncwith the--parallelflag or specialized tools likercloneorAzure AzCopy(for Azure Blob) which support multi-threaded transfers. - Increase Bandwidth: Consider AWS DataSync, Google's Transfer Appliance, or Azure Data Box for petabyte-scale migrations.

- Compress Data: Ensure files are compressed (e.g., .bam, .vcf.gz) before transfer.

- Check Network Path: Use tools like

iperf3to test baseline bandwidth between your HPC head node and the cloud region. Prefer a direct connection like AWS Direct Connect or Azure ExpressRoute for sustained transfers.

Q3: In our hybrid setup, pipeline steps on Azure Batch work, but the final results written to our on-premises NAS have permission denied errors. A: This is a cross-domain authentication issue between cloud compute and on-premises storage.

- Identity Federation: Configure Azure Active Directory to trust your on-premises identity provider (e.g., via ADFS or SCIM).

- Service Principal Credentials: Ensure the Azure Batch pool's Managed Identity or Service Principal has explicit read/write permissions on the SMB/CIFS share, as defined on your on-premises Active Directory server.

- Mount Verification: Manually test the mount command used by the Batch job on a test VM with the same identity to isolate the issue.

- Alternative Data Orchestration: Consider using a data orchestration layer like

dsub(Google) orNextflow Towerwith pre-configured hybrid credentials.

Q4: My GCP Life Sciences pipeline fails at the "disk full" error even though the VM has ample local SSD.

A: In GCP, the pipelines API and Life Sciences API sometimes use a default boot disk that is separate from the high-performance local SSDs.

- Explicitly Define Disks: In your pipeline specification (

pipeline.yaml), explicitly define both the boot disk size and a separate scratch disk mounted to/mnt.

- Redirect Temporary Files: Configure your tools (e.g.,

--tmpdir /mnt/scratchinbwa) to use the scratch disk. - Monitor Disk Usage: Add a preliminary step in the pipeline to log

df -houtput for debugging.

Comparative Performance & Cost Data

Table 1: Benchmarking Snakemake-based Multi-omics Workflow (1000 Genomes WGS Alignment & Variant Calling) Data based on aggregated public benchmarks and provider case studies (2024).

| Infrastructure Type | Specific Configuration | Total Wall-clock Time | Total Cost (Est.) | Primary Bottleneck Identified |

|---|---|---|---|---|

| On-Premises HPC | 100 cores, 1.5TB RAM, Lustre FS | ~42 hours | (CapEx Model) | I/O Wait during joint variant calling (GATK HaplotypeCaller) |

| AWS Cloud | 100 x c6i.24xlarge (Spot), S3, Batch |

~5 hours | ~$1,200 | Startup latency for large compute environment (>1000 vCPUs) |

| Azure Cloud | 100 x F72s_v2, Blob, AKS |

~5.5 hours | ~$1,350 | Disk throughput during BAM sorting phase |

| GCP Cloud | 100 x c2-standard-60, GCS, Life Sciences API |

~4.8 hours | ~$1,180 | Preemption delay on Preemptible VMs (managed service) |

| Hybrid (Model) | 50 cores HPC (BWA), 50 cores AWS (GATK) | ~28 hours | ~$650 + HPC OpEx | Data transfer latency between HPC Lustre and S3 (2 TB interim) |

Table 2: Key Research Reagent Solutions for Scalable Multi-omics Computing

| Item / Solution | Function & Relevance to Scalability | Example/Provider |

|---|---|---|

| Nextflow / Snakemake | Workflow managers enabling portable, reproducible pipelines across HPC, cloud, and hybrid. Essential for abstracting infrastructure. | Seqera Labs, snakemake.github.io |

| Docker / Singularity | Containerization ensures software and dependency consistency across diverse compute environments. | Docker Hub, BioContainers |

| Cromwell / Miniwdl | WDL-based workflow engines often used with cloud-native services (e.g., Terra, AnVIL). | Broad Institute |

| S3FS / gcsfuse | FUSE-based clients allowing cloud object storage (S3, GCS) to be mounted as a local filesystem on HPC or VMs. | s3fs-fuse, Google Cloud |

| SLURM / Grid Engine | Job schedulers for on-premises HPC, now often integrated with cloud bursting plugins. | SchedMD, Altair |

| Cloud SDKs (boto3, gsutil) | Programmatic toolkits for automating data and compute operations within cloud environments. | AWS, Google Cloud |

| Terra / Seven Bridges | Integrated cloud platforms providing a managed environment for large-scale biomedical data analysis. | Broad & Verily, Seven Bridges |

Experimental Protocol: Benchmarking Infrastructure for Population-scale RNA-seq Analysis

Objective: Compare the performance, cost, and operational complexity of executing an identical bulk RNA-seq pipeline across HPC, single-cloud (AWS), and a hybrid model.

Methodology:

- Workflow Definition: A standardized pipeline is defined using Nextflow:

- Input: 1000 FASTQ files (paired-end, 100M reads each, simulated from GTEx).

- Steps: Quality Control (FastQC), Trimming (Trim Galore!), Alignment (STAR), Quantification (featureCounts), and Differential Expression (DESeq2).

- Container: All tools packaged in a Singularity/ Docker image from BioContainers.

Infrastructure Setups:

- HPC: Execute on a Slurm cluster with 50 nodes (36 cores, 192 GB RAM each) and a parallel file system (GPFS).

- Cloud (AWS): Deploy using Nextflow with the

awsbatchexecutor. Use an S3 bucket for input/output. Compute environment:c6i.16xlargeinstances (64 vCPUs) in Spot mode (min vCPUs: 500, max: 2000). - Hybrid: Stage input data on on-premises high-performance storage. Run alignment (I/O intensive) on HPC. Transfer resulting BAM files to S3. Launch the resource-intensive DESeq2 step (in-memory matrix operations) on a large-memory AWS EC2 instance (

r6i.32xlarge).

Metrics Collected: Total execution time (wall-clock), total compute cost (cloud credits/HPC amortization), data transfer times and costs, pipeline reliability (number of failed tasks), and researcher hands-on time for setup and monitoring.

Analysis: Compare metrics across setups. The hybrid model is hypothesized to optimize for cost by placing I/O-heavy steps on local scratch and compute-heavy, non-linear scaling steps on elastic cloud resources.

Visualizations

Diagram 1: High-level Decision Workflow for Infrastructure Selection

Diagram 2: Hybrid Architecture for Scalable Multi-omics Analysis

Technical Support Center: Troubleshooting Guides & FAQs

Docker

Q1: My Docker container exits immediately after running with a "Permission Denied" error. How do I fix this?

A: This is often due to a non-executable entrypoint script or incorrect file permissions inside the container. Ensure your script has execute permissions (chmod +x /path/inside/container/script.sh). If building locally, add RUN chmod +x /script.sh to your Dockerfile. Alternatively, the user inside the container may lack permissions; consider running as root (USER root) during the build step to set up permissions, then switch back to a non-root user.

Q2: I get a "no space left on device" error during a Docker build. What steps should I take?

A: This indicates your Docker storage volume is full. Prune unused Docker objects: docker system prune -a --volumes. To prevent this in multi-omics workflows, ensure your Dockerfiles use multi-stage builds and .dockerignore files to exclude large, unnecessary input datasets from the build context.

Singularity

Q3: When pulling a Docker image to Singularity, I encounter "FATAL: Unable to pull from docker://", often due to network proxy issues.

A: Configure Singularity to use your system's proxy: set http_proxy and https_proxy environment variables before the pull command (e.g., export https_proxy=http://your.proxy:port). For reproducibility in HPC environments, first pull the image to a stable location (e.g., /project/images/) and then run from that SIF file.

Q4: My Singularity container cannot write to a mounted host directory.

A: This is typically a user namespace or permission issue. Use the --bind flag with correct paths: singularity exec --bind /host/path:/container/path image.sif command. If the host directory requires specific user permissions, run Singularity with --fakeroot if supported by your administrator, or ensure the directory is world-writable for testing (not recommended for secure systems).

Nextflow

Q5: My Nextflow pipeline stalls with "Submitted process" status and does not progress.

A: This is commonly a cluster executor configuration issue. Check your nextflow.config file. Ensure the queue name matches your HPC's queue system (e.g., queue = 'batch'). Verify the executor (e.g., executor = 'slurm') and that required cluster modules (like Java) are loaded. Enable debug logging: nextflow run pipeline.nf -with-dag flowchart.png -with-report.

Q6: How do I resume a Nextflow pipeline after an error or interruption without re-computing successful steps?

A: Use the -resume flag: nextflow run main.nf -resume. Nextflow uses the pipeline's work directory to cache successful processes. Ensure this directory is not deleted. For computational scalability, combine -resume with a stable workDir location (e.g., on a shared filesystem).

WDL (Cromwell)

Q7: In Cromwell, my task fails with "Job has been aborted" and "Disk full" in the background.

A: Cromwell's default root disk size might be insufficient for large omics datasets. In your WDL task's runtime section, explicitly define a larger disk: runtime { docker: "image" disks: "local-disk ${default_disk_size + 500} SSD" }. Monitor temporary directories (cromwell-executions/) and implement a cleanup strategy.

Q8: How do I efficiently pass large arrays of input files (e.g., 1000 BAM files) to a WDL workflow?

A: Use a Array[File] input type and provide a JSON file listing the file paths. For scalability, store the list in cloud storage or a manifest file. Structure your tasks to process arrays in scatter-gather patterns to parallelize execution.

Experimental Protocols for Scalability Benchmarks

Protocol 1: Benchmarking Container Startup Overhead Objective: Quantify the time and resource cost of launching identical bioinformatics tools in Docker, Singularity, and Podman.

- Tool:

fastqc v0.11.9for quality control of sequencing data. - Dataset: A standardized 10GB multi-sample RNA-seq FASTQ file.

- Method: Use the

timecommand to wrap 100 sequential runs offastqcon the same file from each container technology. Measure wall-clock time, CPU time (%C), and peak memory usage (%M). Run on identical HPC nodes. - Analysis: Calculate mean and standard deviation for each metric. The key output is the overhead per invocation, critical for estimating scaling costs in large-scale omics.

Protocol 2: Orchestrator Scalability on Heterogeneous Clusters Objective: Compare the ability of Nextflow and Cromwell/WDL to manage 10,000 parallel tasks across mixed CPU/GPU nodes.

- Workflow: A simplified multi-omics pipeline:

fastp(trimming, CPU) ->salmon(quantification, CPU) ->Panphlan(metagenomic profiling, GPU). - Dataset: 10,000 simulated paired-end metagenomic reads (1GB each).

- Method: Deploy the same pipeline logic in both Nextflow and WDL. Execute on a cluster with 100 CPU nodes and 10 GPU nodes. Configure both orchestrators to correctly label and dispatch tasks to the appropriate queue.

- Metrics: Record total workflow completion time, cluster utilization efficiency, and failed task management overhead.

Table 1: Container Runtime Startup Overhead (n=100 runs)

| Container Technology | Mean Wall-clock Time (s) | Std Dev (s) | Mean Peak Memory (MB) | Primary Use Case in Omics |

|---|---|---|---|---|

| Docker (root) | 1.8 | 0.4 | 125 | Local development, CI/CD |

| Singularity (v3.8) | 0.9 | 0.2 | 85 | HPC & secure cluster deployment |

| Podman (rootless) | 2.1 | 0.5 | 130 | User-level container management |

Table 2: Orchestrator Performance on 10,000 Tasks

| Orchestrator & Version | Total Completion Time (hr) | Failed Tasks (%) | CPU Utilization (%) | Key Strength |

|---|---|---|---|---|

| Nextflow (22.10+) | 4.7 | 0.2 | 92 | Dynamic scaling, rich DSL |

| Cromwell (85+) | 5.3 | 0.15 | 88 | Portability, strict reproducibility |

Visualizations

Diagram Title: Multi-omics Scalability Workflow with Orchestrators

Diagram Title: Troubleshooting Decision Path for Container Failures

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Reproducible Multi-omics Compute Experiments

| Item | Function in Computational Experiment | Example/Note |

|---|---|---|

| Dockerfile | Recipe to build a portable container image for a single tool. | Must include specific version tags (e.g., FROM python:3.9.18-slim). |

| Singularity Definition File | Recipe to build a secure, HPC-compatible container image. | Crucial for clusters that disallow Docker daemon. |

Nextflow Script (*.nf) |

Pipeline logic defining processes, channels, and workflow. | Enables reactive scaling and rich error handling. |

| WDL Task & Workflow | Declarative description of task commands and workflow structure. | Promotes portability across different execution engines. |

Conda environment.yml |

Defines exact versions of Python/R packages for reproducibility. | Often used inside containers for additional layer of dependency control. |

Configuration File (nextflow.config, cromwell.conf) |

Specifies executor settings, compute resources, and pipeline parameters. | Separates logic from execution environment for scalability. |

| Sample Manifest (CSV/TSV) | Table linking sample IDs to raw data file paths. | Input for scalable scatter-gather processes. |

| Container Registry | Storage and distribution system for built images (e.g., Docker Hub, BioContainers). | Essential for sharing and versioning reproducible tools. |

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when implementing scalable integration algorithms for large-scale multi-omics research within the thesis context of Computational scalability for large-scale multi-omics datasets.

Frequently Asked Questions (FAQs)

Q1: During federated learning for multi-omics data integration, my model performance is significantly worse than centralized training. What could be the issue? A: This is often due to data heterogeneity (non-IID data) across clients/sites. Each institution may have a different distribution of disease subtypes or experimental batches. Mitigate this by:

- Using the FedProx algorithm, which adds a proximal term to the local loss function to constrain local updates.

- Implementing personalized layers in your neural network where only the final classification layers are client-specific.

- Applying data normalization protocols (e.g., Combat for batch correction) locally before federated rounds begin, if privacy agreements allow.

Q2: My tensor decomposition (e.g., PARAFAC, Tucker) fails to converge or yields degenerate solutions with my sparse multi-omics tensor. How do I fix this? A: Sparse and noisy real-world data often cause convergence problems.

- Initialization: Use SVD-based initialization (e.g., via HOSVD) instead of random initialization.

- Regularization: Add L1 (LASSO) or L2 (Ridge) regularization terms to the decomposition objective function to promote sparsity and stability.

- Constraint Application: Impose non-negativity constraints if your biological factors should have only positive contributions. Use the Alternating Least Squares (ALS) with Non-Negative Least Squares sub-routine.

- Rank Selection: Your chosen rank may be too high. Use cross-validation or the Core Consistency Diagnostic (CORCONDIA) for PARAFAC to select a lower, more appropriate rank.

Q3: Memory errors occur when constructing a large patient similarity graph from integrated multi-omics features. What are scalable alternatives? A: Constructing a dense NxN similarity matrix for N > 10,000 patients is infeasible.

- Approximate Nearest Neighbors (ANN): Use libraries like Facebook Faiss or Annoy to build a sparse k-nearest-neighbor graph without computing the full distance matrix.

- Graph Coarsening: Initially cluster a subset of patients, build a graph over cluster representatives (super-nodes), then refine.

- Edge List Streaming: Compute similarities in chunks and store only edges above a threshold in a distributed graph database like Neo4j or using Spark GraphFrames.

Q4: How can I validate the biological relevance of latent factors extracted via tensor decomposition? A: Technical validation is crucial.

- Enrichment Analysis: Project latent factors onto gene space. Use the resultant gene loadings in a tool like g:Profiler or Enrichr for pathway (KEGG, Reactome) and Gene Ontology term enrichment.

- Correlation with Clinical Phenotypes: Correlate patient factor scores with known clinical variables (e.g., survival, tumor grade) using Spearman or Cox proportional-hazards models.

- Benchmarking: Compare the clustering of patients based on factor scores against established molecular subtypes (e.g., PAM50 for breast cancer).

Q5: In a federated setting, how do we handle differing feature dimensions across omics datasets from different sites? A: This requires a pre-alignment protocol.

- Common Feature Union: Agree on a master feature list (e.g., all genes in Ensembl GRCh38) before training. Sites map their data to this list, with zeros for missing measurements.

- Embedding Alignment: Let each site train a local autoencoder on its data. The federated model then integrates the bottleneck layer embeddings, which must have a fixed, agreed-upon dimension across all sites.

- Homomorphic Encryption for Alignment: For private set union to find common features without exposing individual site's full feature list, cryptographic protocols like Partial Homomorphic Encryption can be used in the initial setup phase.

Experimental Protocols for Cited Methods

Protocol 1: Federated Integration of Transcriptomics and Proteomics using CNNs

- Objective: To classify cancer subtypes using image-like representations of paired RNA-Seq and RPPA data across three hospitals without sharing raw data.

- Methodology:

- Data Preparation (Local): Each site reshapes normalized log2(TPM+1) RNA-Seq (top 1000 variant genes) and normalized RPPA protein expressions into separate 2D matrices. These are stacked as a two-channel "image" per patient.

- Model Architecture: A lightweight Convolutional Neural Network (CNN) with two convolutional layers and one fully connected layer.

- Federated Averaging (FedAvg):

- Central server initializes global model weights (W_global).

- For each round:

a. Server sends W_global to all participating sites.

b. Each site trains the model locally for 5 epochs on its data.

c. Each site sends its updated weights (W_local) back to the server.

d. Server aggregates weights:

W_global = Σ (n_k / N) * W_local_kwheren_kis site k's sample count, N is total samples.

- Evaluation: A hold-out test set from a fourth, non-participating institution is used to assess the final global model's performance (Accuracy, AUC-ROC).

Protocol 2: Tensor Decomposition for Multi-Omics Time-Series Analysis

- Objective: To identify coherent temporal patterns across metabolomics, microbiome, and transcriptomics data from a longitudinal intervention study.

- Methodology:

- Tensor Construction: Build a 3D tensor X of dimensions (Patients × Timepoints × Multi-Omics Features). Features are z-score normalized per modality.

- Model Selection: Apply a Tucker decomposition: X ≈ G ×1 A ×2 B ×_3 C, where A (patient factor), B (time factor), and C (feature factor) are loading matrices, and G is the core tensor.

- Rank Selection: Use a combination of explained variance (>70%) and Core Consistency Diagnostic to select ranks (R_patients, R_time, R_features).

- Computation: Perform decomposition using the Alternating Least Squares (ALS) algorithm from the

TensorLyPython package with non-negativity constraints on factors A and B. - Interpretation: Analyze temporal factor matrix B to identify key time trajectories. Map feature factor matrix C back to original features to create multi-omics signatures for each pattern.

Research Reagent Solutions & Essential Materials

| Item / Solution | Function in Scalable Multi-Omics Integration |

|---|---|

| Snakemake / Nextflow | Workflow management systems to create reproducible, scalable, and portable data processing pipelines across compute clusters. |

| Ray or Apache Spark | Distributed computing frameworks essential for parallelizing tensor operations, graph algorithms, and simulation studies on large datasets. |

| PySyft / IBM FL | Open-source libraries specifically designed for implementing secure federated learning protocols (e.g., secure aggregation). |

| TensorLy / scikit-tensor | Python libraries providing a high-level API for tensor decomposition methods (CP, Tucker) with GPU backend support. |

| DGL / PyTorch Geometric | Graph neural network (GNN) libraries that handle message passing on large, sparse graphs, crucial for graph-based integration. |

| UCSC Xena / PCAWG | Public data hubs for downloading large-scale, coordinated multi-omics datasets (TCGA, GTEx) required for benchmarking. |

| Conda / Docker | Environment and containerization tools to ensure computational experiments and algorithm deployments are consistent and reproducible. |

Table 1: Performance Comparison of Integration Algorithms on TCGA BRCA Dataset (N=1,100)

| Algorithm | Avg. Accuracy (%) | Avg. F1-Score | Training Time (min) | Memory Peak (GB) | Scalability to N > 10k |

|---|---|---|---|---|---|

| Centralized CNN | 92.3 ± 1.5 | 0.91 | 45 | 12.5 | Poor |

| Federated CNN (FedAvg) | 89.1 ± 2.8 | 0.88 | 68* | 4.2 (per site) | Excellent |

| Graph Neural Network | 90.7 ± 1.2 | 0.90 | 120 | 28.0 | Moderate |

| Tensor CP Decomposition | 85.4 ± 2.1 | 0.83 | 25 | 8.7 | Good |

| Early Concatenation + RF | 82.6 ± 3.0 | 0.81 | 15 | 22.0 | Poor |

*Total wall-clock time, including communication.

Table 2: Federated Learning Communication Efficiency (5 sites, 20 rounds)

| Aggregation Strategy | Total Data Transferred (MB) | Final Global Model Accuracy (%) | Resilience to Non-IID Data |

|---|---|---|---|

| FedAvg (Baseline) | 1250 | 89.1 | Low |

| FedProx (μ=0.01) | 1250 | 90.5 | High |

| Secure Aggregation | 1350 | 89.0 | Low |

| QFedAvg (Fairness) | 1250 | 88.3 | Medium |

Visualizations

Tensor Decomposition Workflow for Temporal Multi-Omics

Federated Learning (FedAvg) Round Structure

Technical Support Center

FAQs & Troubleshooting

Q1: I am training a deep learning model for single-cell RNA-seq analysis on an NVIDIA A100 GPU, but I encounter "CUDA out of memory" errors with large datasets. What are the primary strategies to resolve this? A1: This is common when working with large multi-omics datasets. Implement the following:

- Gradient Accumulation: Simulate a larger batch size by accumulating gradients over several smaller batches before performing a weight update.

- Mixed Precision Training: Use

torch.cuda.amp(PyTorch) ortf.float16(TensorFlow) to reduce memory footprint by utilizing FP16/BF16 precision where possible. - Gradient Checkpointing: Trade compute for memory by selectively recomputing activations during the backward pass instead of storing all of them.

- Data Loading Optimization: Use pinned memory (

pin_memory=Truein PyTorch DataLoader) and increase the number of data loader workers to accelerate data transfer to the GPU.

Q2: My TensorFlow model runs significantly slower on a TPU v3 pod compared to my local GPU. What are the critical first steps for TPU performance debugging? A2: TPUs require specific configurations for optimal performance:

- Ensure Dataset is TPU-Fed: Data must be streamed from the host to the TPU via

tf.data.Datasetand thetf.distribute.TPUStrategyAPI. Avoid feeding data from the VM's CPU memory. - Static Graph Execution: TPUs excel with static graphs. Ensure your model is built inside a

@tf.functioncontext and avoid dynamic tensor shapes between training steps. - Check Compilation Times: The first step on a TPU is graph compilation, which can take several minutes. Ensure you are not recompiling the graph unnecessarily (e.g., by changing model architecture or input shapes between runs).

- Use TPU-Compatible Operations: Verify that all ops in your model have TPU implementations. Avoid custom Python logic inside the main training loop.

Q3: When using multiple GPUs for parallel genome variant calling with a deep learning model, I observe poor scaling efficiency (>50% overhead). What could be the cause? A3: The bottleneck is likely data loading or inter-GPU communication.

- Inefficient Data Parallelism: If using Data Parallel (DP) in PyTorch, switch to Distributed Data Parallel (DDP), which is more efficient for multi-node/multi-GPU setups.

- Large All-Reduce Operations: With large models (e.g., for whole-genome analysis), the gradient synchronization step can be costly. Consider using the NCCL backend and ensure high-speed interconnects (NVLink/InfiniBand) are utilized.

- CPU Bottleneck: The CPU may not be able to preprocess and feed data fast enough to all GPUs. Profile your data loading pipeline and consider moving preprocessing to the GPU or using a more efficient data format like HDF5 or Parquet.

Q4: I am getting "XLA compilation error" when trying to run my PyTorch model on a Google Cloud TPU. How do I diagnose this? A4: Use the following diagnostic protocol:

- Enable Detailed Logging: Set the environment variable

XLA_FLAGS="--xla_dump_to=/tmp/xla_dump --xla_dump_hlo_as_text". This will generate detailed compilation logs. - Check for Unsupported Operations: In the logs, look for operations that are not supported by the XLA compiler. Common issues involve dynamic control flow or data-dependent tensor shapes.

- Simplify the Model: Try running a minimal version of your model on the TPU first, then incrementally add complexity to isolate the offending operation.

- Utilize

torch_xla.debug: Usetorch_xla.debug.metrics.metrics_report()to get a summary of operations happening on the TPU.

Q5: What is the most effective way to benchmark and compare performance (cost vs. speed) between a GPU (e.g., NVIDIA V100) and a TPU (v2/v3) for a specific omics deep learning workflow? A5: Conduct a controlled comparative analysis using the following protocol:

- Standardize the Workflow: Use the same model architecture, dataset (e.g., a standardized TCGA or 1000 Genomes subset), and convergence criterion (e.g., target validation loss).

- Measure Key Metrics:

- Time per Epoch: Average training time over 10 epochs after initial compilation/warm-up.

- Time to Convergence: Total wall-clock time to reach the target metric.

- Maximum Batch Size: The largest batch size that fits in the device's memory without errors.

- Cost per Run: Compute cost based on cloud provider hourly rates and total runtime.

- Profile Hardware Utilization: Use

nvprof(GPU) and Cloud TPU profiling tools to identify bottlenecks (e.g., kernel execution time, memory copies).

Performance Comparison Data

Table 1: Comparative Benchmark of Accelerated Hardware for Omics Deep Learning Tasks Benchmark on a standardized task: Training a 5-layer DNN on a 50,000-sample methylation array dataset (1M features).

| Hardware | Avg. Time per Epoch (s) | Max Batch Size | Cost per Hour (Est. Cloud) | Time to Convergence (min) | Relative Efficiency |

|---|---|---|---|---|---|

| NVIDIA V100 (16GB) | 42 | 512 | $2.48 | 63 | 1.0x (Baseline) |

| NVIDIA A100 (40GB) | 18 | 2048 | $3.22 | 27 | 2.33x |

| Google TPU v2-8 | 22 | 4096 | $2.00 | 33 | 1.91x |

| Google TPU v3-8 | 15 | 8192 | $3.00 | 23 | 2.80x |

Table 2: Common Error Codes and Resolutions

| Error Code / Message | Platform | Likely Cause | Recommended Action |

|---|---|---|---|

CUDA error: out of memory |

GPU | Batch size/Model too large. | Reduce batch size, use gradient checkpointing, enable mixed precision. |

RET_CHECK failure |

TPU | Input pipeline mismatch or unsupported op. | Ensure static input shapes, use TPU-compatible tf.data operations. |

EINVAL: No such file or directory |

TPU | Path to GCS bucket incorrect. | Use gs:// path directly; ensure service account has read/write permissions. |

NCCL connection failure |

Multi-GPU | Network communication issue. | Check InfiniBand/NVLink cables, set NCCL_DEBUG=INFO for logs. |

Experimental Protocols

Protocol 1: Implementing Mixed Precision Training for a Genomics CNN on GPU Objective: To train a convolutional neural network for sequence motif discovery with reduced memory usage and faster computation.

- Setup: Use PyTorch 1.9+ with CUDA 11.0+.

- Code Integration:

- Validation: Monitor loss convergence and compare memory usage (

nvidia-smi) versus FP32 training.

Protocol 2: Setting Up a TPU-Based Training Loop for Proteomics Data in TensorFlow Objective: To efficiently train a Transformer model on large-scale mass spectrometry data using Google Cloud TPU.

- TPU Initialization:

- Model and Dataset Scope: Define your model and

tf.data.Datasetwithin thestrategy.scope(). - Training: Use the standard

model.fit()API. Ensure your dataset is created from aTFRecordfile stored on Google Cloud Storage (gs://).

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Accelerated Omics Computing

| Item | Function | Example/Note |

|---|---|---|

| Deep Learning Framework | Provides APIs for building and training models. | PyTorch (flexible), TensorFlow/JAX (TPU-optimized). |

| Containerization Tool | Ensates reproducible software environments across hardware. | Docker, Singularity. Use NGC (NVIDIA) or Cloud TPU containers. |

| Profiling Software | Diagnoses performance bottlenecks in code. | NVIDIA Nsight Systems, PyTorch Profiler, TensorFlow Profiler, Cloud TPU tools. |

| High-Efficiency Data Format | Enables rapid reading of large omics datasets. | HDF5, Parquet, TFRecord, Zarr. Crucial for I/O bottlenecks. |

| Cluster Manager | Orchestrates multi-node, multi-GPU/TPU jobs. | Slurm, Kubernetes (with Kubeflow for ML). |

| Version Control for Models | Tracks experiments and model versions. | Weights & Biases, MLflow, DVC (Data Version Control). |

Visualizations

Title: Accelerated Computing Workflow for Multi-Omics Analysis

Title: Troubleshooting Logic for Accelerated Hardware Errors

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Cell Ranger ARC pipeline fails with the error "Out of memory" during the aggr step on a large dataset. What are my options?

A: This is a common scalability issue. The default memory allocation may be insufficient. Implement a two-pronged approach:

- Hardware/Job Control: Run the aggregation step on a high-memory node (≥128GB RAM). If using a cluster, request appropriate resources. Consider splitting the aggregation by donor or condition and merging results strategically.

- Parameter Tuning: Use the

--cellsargument to subsample to a consistent number of cells per library if biological questions allow. This reduces memory footprint. Process samples in smaller batches and use thecellranger aggroutput for combined analysis in secondary tools like Seurat.

Q2: After integrating scRNA-seq and scATAC-seq data, I observe minimal overlap in common peaks/gene activity between technical replicates. What could be wrong? A: This likely indicates a batch effect overwhelming biological signals.

- Check: Verify that all samples were processed with identical chemistry kits, nuclei isolation protocols, and sequencing depths.

- Solution: Apply robust integration methods designed for multi-omics. For example, in Signac (Seurat v5+), use reciprocal PCA (RPCA) or weighted nearest neighbor (WNN) integration on the gene activity matrix derived from scATAC-seq peaks and the normalized expression matrix from scRNA-seq. Ensure you are using consistent genomic annotations (e.g., same GTF file) for both pipelines.

Q3: The computational time for my single-cell multi-omics secondary analysis (e.g., Seurat, Scanpy) is prohibitive on my local server. How can I scale this? A: Transition to a cloud or high-performance computing (HPC) environment and leverage optimized frameworks.

- Protocol: Containerize your analysis pipeline using Docker or Singularity for reproducibility. Use workflow managers (Nextflow, Snakemake) to parallelize tasks across samples. For very large datasets (>500k cells), consider tools built for scalability like Dask integrated with Scanpy or Seurat's disk-based caching.

- Example Command for HPC Job Submission:

Q4: I am getting low cell counts in my 10x Genomics Multiome (GEX+ATAC) experiment. What are the critical experimental checkpoints? A: Low cell recovery typically stems from nuclei quality and preparation.

- Protocol Checklist:

- Tissue/Nuclei Isolation: Use fresh or properly flash-frozen tissue. Optimize homogenization and lysis to release intact nuclei without clumping. Use a fluorescence-based nuclei counter (e.g., Acridine Orange/Propidium Iodide) for accurate quantification before loading.

- Buffer Compatibility: Ensure your nuclei isolation buffer is compatible with the multi-ome assay (e.g., NP-40 or Igepal-based, with appropriate BSA and RNase inhibitors).

- Loading Concentration: Precisely quantify nuclei and load the recommended number (e.g., 10,000-16,000 nuclei for 10x v1.2) to avoid overloading the chip.

Q5: How do I validate the biological findings from my computational multi-omics integration? A: Employ orthogonal validation.

- Methodology: For a candidate gene-regulatory link (e.g., a transcription factor peak linked to a target gene), design PCR-based assays.

- CUT&Tag or ChIP-qPCR: Validate the TF binding at the specific chromatin peak region in bulk or sorted cells.

- RT-qPCR: Measure expression of the target gene under conditions that modulate the TF.

- CRISPR Inhibition (CRISPRi): Knock down the TF in a perturb-seq style experiment and confirm downstream gene expression changes and altered chromatin accessibility at the target site via ATAC-seq.

Table 1: Comparison of Scalable Single-Cell Multi-Omics Analysis Tools

| Tool / Platform | Primary Use Case | Scalability Feature | Recommended Cell Number | Key Limitation |

|---|---|---|---|---|

| Cell Ranger ARC (10x) | Primary GEX+ATAC data processing | Multi-threaded, cluster-aware | Up to 1M cells (via aggr) |

Closed pipeline, memory-intensive for aggregation. |

| Seurat v5 (with Signac) | R-based integration & analysis | Disk-based data handling, WNN integration | 500k - 1M+ cells (with sufficient RAM) | Requires R proficiency, large objects need >64GB RAM. |

| Scanpy (with Muon) | Python-based integration & analysis | Dask integration for out-of-core computing | 1M+ cells (with Dask backend) | Steeper learning curve for multi-omics specific methods. |

| ArchR | scATAC-seq & multi-ome analysis | Iterative matrix processing, Arrow files | >1M cells (architectural design) | Primarily ATAC-focused, less streamlined for full multi-omics. |

| Nextflow / Snakemake | Workflow Orchestration | Pipeline parallelization & cloud execution | Virtually unlimited (by design) | Not an analysis tool itself; requires scripting expertise. |

Table 2: Typical Computational Resources for Pipeline Stages (Dataset: 10k cells, Multiome)

| Pipeline Stage | Minimum RAM | Recommended RAM | CPU Cores | Estimated Time |

|---|---|---|---|---|

| Cell Ranger ARC (mkfastq) | 8 GB | 16 GB | 8 | 1-2 hours |

| Cell Ranger ARC (count) | 32 GB | 64 GB | 16 | 3-5 hours |

| Seurat/Signac Preprocessing | 16 GB | 32 GB | 4 | 30 mins |

| Integration & Clustering | 32 GB | 64 GB | 8 | 1-2 hours |

| ArchR Full Analysis | 64 GB | 128 GB | 16 | 4-6 hours |

Experimental Protocols

Protocol 1: Nuclei Isolation from Frozen Tissue for Multiome

- Materials: Frozen tissue sample, chilled Nuclei Isolation Buffer (NIB: 10mM Tris-HCl pH7.4, 10mM NaCl, 3mM MgCl2, 0.1% Igepal CA-630, 1% BSA, 0.2U/µl RNase Inhibitor), Dounce homogenizer, 40µm strainer, fluorescent nuclei dye.

- Procedure: Keep tissue on dry ice. Rapidly transfer 20-50mg to 2mL chilled NIB in Dounce. Homogenize with 10-15 strokes of the loose pestle, then 10-15 strokes of the tight pestle. Filter through a pre-wet 40µm strainer. Centrifuge at 500 rcf for 5 min at 4°C. Resuspend pellet in 1mL NIB with RNAse inhibitor. Stain with dye and count using a fluorescence-based counter. Adjust concentration to 1000 nuclei/µL.

Protocol 2: Post-Integration Multi-Omic Differential Testing in Seurat v5

- Input: A Seurat object with integrated assays:

RNAandATAC(gene activity matrix). - Procedure:

Diagrams

Workflow for Scalable Single-Cell Multi-Omics

Scalable Compute Architecture for Multi-Omics

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Single-Cell Multi-Omics

| Item | Function | Example/Note |

|---|---|---|

| Nuclei Isolation Buffer (NIB) | Lyses cytoplasm while preserving nuclear integrity for GEX+ATAC. | Must contain RNase inhibitor and be compatible with the assay (e.g., 10x-approved). |

| Fluorescent Viability Dye | Accurately quantify intact nuclei vs. debris. | DAPI, Acridine Orange/Propidium Iodide. Critical for loading optimization. |

| Chromium Next GEM Chip K | Microfluidic device for partitioning nuclei into Gel Bead-In-Emulsions (GEMs). | 10x Genomics product. Must match kit version. |

| Dual Index Kit TT Set A | Provides unique combinatorial indexes for sample multiplexing. | Essential for running multiple samples in one lane to reduce costs. |

| SPRIselect Beads | Size-selection magnetic beads for library clean-up and fragment size selection. | Used in library preparation post-GEM reverse transcription/transposition. |

| RNase Inhibitor | Protects RNA from degradation during nuclei isolation and processing. | Must be included in all buffers post-tissue lysis. |

| Phosphate Buffered Saline (PBS) | Washing and resuspension buffer. | Must be nuclease-free and cold. |

Optimizing Performance & Cost: Practical Solutions for Computational Roadblocks

Technical Support Center

Troubleshooting Guides

Issue 1: Pipeline Execution is Abnormally Slow

- Symptoms: A multi-omics workflow (e.g., bulk RNA-Seq alignment + variant calling) that typically completes in 6 hours now takes 24+ hours. System monitoring shows high disk I/O wait times.

- Diagnosis: Likely a disk I/O bottleneck. Common in workflows with many intermediate files or when input/output is on a network-attached storage (NAS) under heavy load.

- Resolution Steps:

- Use

iostat -x 5(Linux) to monitor disk utilization (%util) and await (await). Sustained values >80% indicate a bottleneck. - Profile the pipeline with a tool like

snakemake --profileornextflow traceto identify steps with the longest runtime and highest I/O. - Solution: Move temporary files to a local SSD or high-performance scratch storage. Modify the pipeline configuration (e.g., in Nextflow, set

scratch = trueor specify a localworkDir). Consider compressing intermediate files if CPU is not already saturated.

- Use

Issue 2: Job Fails with "Out of Memory" (OOM) Error

- Symptoms: A genome assembly or deep learning model training job crashes. Logs show

Killedorjava.lang.OutOfMemoryError. - Diagnosis: Memory hog in a specific pipeline stage. Peak memory demand exceeds allocated RAM.

- Resolution Steps:

- Profile memory usage. For single machines, use

/usr/bin/time -v. For cluster jobs, use the scheduler's reporting (e.g.,sacct -j <JOBID> --format=JobID,MaxRSS,ReqMemin Slurm). - Identify the offending tool (e.g.,

SPAdesassembler,STARalignment with large genome,Pandasloading a huge matrix). - Solution: Increase memory allocation specifically for that step. If impossible, split the input data (e.g., by chromosome), use a streaming algorithm, or offload data to disk more frequently. Consider tools with lower memory footprints.

- Profile memory usage. For single machines, use

Issue 3: High CPU Utilization but Low Throughput

- Symptoms: All CPU cores are at 100% usage, but pipeline progress is minimal.

htopshows many processes in "D" (uninterruptible sleep) state. - Diagnosis: CPU contention and thrashing. Often caused by too many parallel processes competing for limited CPU cores, leading to excessive context switching.

- Resolution Steps:

- Check the system load average (e.g.,

uptime). If load average is significantly higher than the number of cores, processes are queueing. - Review pipeline configuration (e.g.,

--coresin Snakemake,cpusin Nextflow processes,n_jobsin scikit-learn). - Solution: Reduce the number of concurrent processes/threads assigned to the pipeline. Set limits appropriately for your hardware, leaving resources for I/O operations and the OS.

- Check the system load average (e.g.,

Frequently Asked Questions (FAQs)

Q1: What are the best open-source tools for profiling a bioinformatics pipeline on an HPC cluster? A: The optimal tool depends on your workflow manager.

- For Nextflow: Use built-in reports (

nextflow log/nextflow trace), the-with-timelineand-with-reportflags. For deep profiling, integrate withHyperfineor use theNF-TOWERcloud platform's monitoring. - For Snakemake: Use the

--profileflag with thesnakemake-profileutilities. Thebenchmarkdirective in rules is excellent for per-step resource tracking. - Generic/Cluster Tools: Use the job scheduler's native tools (e.g.,

sacctfor Slurm,qacctfor SGE).py-spy(sampling profiler for Python) andperf(Linux system profiler) are useful for granular code analysis.

Q2: How do I differentiate between a code inefficiency and insufficient hardware resources? A: Follow this diagnostic table:

| Observation | Likely Cause | Investigation Tool |

|---|---|---|

| One CPU core at 100%, others idle. | Single-threaded code / Algorithmic bottleneck. | Code profiler (cProfile for Python, profvis for R). |

| All cores at 100%, load average very high. | Hardware limit (CPU-bound). | Check if %sys time is high in top. |

| High CPU but low progress, high I/O wait. | I/O bottleneck causing CPUs to wait. | iostat, iotop. |

| Memory usage steadily climbs until OOM. | Memory leak or legitimately large data. | valgrind --tool=memcheck, monitor with htop. |

| Job runs slowly, but CPU/memory use is low. | Network latency (for distributed jobs) or external API/database delay. | ping, traceroute, network profilers. |

Q3: My multi-omics integration pipeline scales poorly when adding more samples. What should I profile? A: This is a scalability issue. Profile these key aspects:

- Time Complexity: Does runtime increase linearly (O(n)) or exponentially (O(n^2)) with sample count? Profile per-sample runtime.

- Intermediate Data Growth: Check if temporary files scale poorly. Use

du -shacross pipeline stages. - Parallelization Overhead: When using many parallel tasks (e.g., with

-j 100), the scheduler overhead may dominate. Measure the runtime of the main process versus child tasks.

Q4: What are essential metrics to include in a benchmarking report for computational scalability in research? A: A comprehensive report should include the following quantitative data:

Table: Essential Benchmarking Metrics for Scalability

| Metric | Description | Tool Example | Relevance to Scalability Thesis |

|---|---|---|---|

| Wall-clock Time | Total real elapsed time. | time command, workflow logs. |

Primary measure of performance. |

| CPU Time | Total time spent on all CPUs. | time command (%P). |

Shows parallelization efficiency. |

| Peak Memory (RSS) | Maximum physical memory used. | /usr/bin/time -v, Slurm MaxRSS. |

Critical for resource allocation planning. |

| I/O Volume | Amount of data read/written. | /usr/bin/time -v (major/minor faults), dstat. |

Identifies storage bottlenecks. |

| Cost | Cloud computing or cluster cost. | Cloud provider billing, cluster cost calculator. | Economic scaling analysis. |

| Scaling Efficiency | Speedup gained from more resources. | Calculated as (T₁ / (N * Tₙ)). | Core thesis metric for parallel scaling. |

Experimental Protocols

Protocol 1: Systematic Pipeline Profiling for Hotspot Identification

- Objective: Identify the most computationally intensive steps in a multi-sample RNA-Seq analysis pipeline.

- Methodology:

- Setup: Run a representative dataset (e.g., 10 samples) through your pipeline (e.g., a Nextflow/Snakemake workflow encompassing FastQC, trimming, alignment (STAR), quantification (featureCounts), and differential expression (DESeq2)).

- Data Collection: Enable all logging and profiling flags (

nextflow run -with-trace -with-timeline -with-reportor Snakemake--benchmark). - Execution: Run on a controlled, dedicated node to minimize interference.

- Analysis: Extract from the trace report: a) runtime per process, b) CPU usage per process, c) memory footprint per process. Rank processes by resource consumption.

- Validation: Repeat with a larger sample set (e.g., 50 samples) to confirm if hotspots scale linearly.

Protocol 2: Benchmarking Scaling Efficiency on an HPC Cluster

- Objective: Measure the strong scaling performance of a parallelized tool (e.g., the aligner

STARor the single-cell toolCell Ranger). - Methodology:

- Define Baseline: Run the tool on a fixed, large input dataset (e.g., a 100GB sequencing file) using a single node with 1 core. Record wall-clock time (T₁).

- Scale Out: Repeat the identical job, incrementally increasing the number of CPU cores (e.g., 2, 4, 8, 16, 32) on the same node type.

- Measure: Record wall-clock time for each run (Tₙ).

- Calculate: Compute parallel efficiency for each

n: Efficiency = T₁ / (n * Tₙ) * 100%. - Plot: Create a scaling plot (cores vs. speedup and efficiency). The point where efficiency drops below 70% often indicates a scaling bottleneck (e.g., communication overhead, I/O contention).

Visualizations

Diagram Title: Profiling Workflow to Identify Resource Hogs

Diagram Title: Parallel Scaling Efficiency Types

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Profiling & Benchmarking | Example / Note |

|---|---|---|

| Workflow Manager | Orchestrates pipeline steps, enabling built-in profiling and reproducibility. | Nextflow, Snakemake, CWL. |

| System Monitor | Provides real-time, low-level system resource utilization data. | htop, dstat, nvidia-smi (for GPU). |

| Time-series DB | Stores historical performance metrics for trend analysis and comparison. | InfluxDB, Prometheus (often with Grafana for visualization). |

| Container Platform | Ensures environment consistency across runs and between local/HPC/cloud. | Docker, Singularity/Apptainer, Podman. |

| Profiling Tool | Measures where a program spends its time (CPU, memory) at the code level. | py-spy (Python), perf (Linux), Rprof (R), vtune (Intel). |