The Ultimate Guide to Batch Effect Correction in Multi-Omics Data Analysis: From Theory to Practice



Abstract

Batch effects are systematic technical variations that threaten the validity of multi-omics studies by obscuring true biological signals and producing false conclusions. This guide addresses four needs of researchers, scientists, and drug development professionals. It first explains the fundamental concepts, sources, and consequences of batch effects across genomics, transcriptomics, proteomics, and metabolomics. It then details a practical workflow for applying current correction methods, including ComBat, Harmony, limma, and multi-omics-specific algorithms. It next provides a troubleshooting framework for identifying and overcoming common pitfalls in method selection, parameter tuning, and data integration. Finally, it sets out protocols for validating correction through quantitative metrics, visualization, and biological plausibility checks. Together, these give practitioners a complete strategy for obtaining reproducible, biologically meaningful results from complex multi-omics datasets.

What Are Batch Effects? Understanding the Invisible Enemy in Your Multi-Omics Data

Introduction

In multi-omics research, distinguishing batch effects from true biological variation is a critical yet challenging first step. Batch effects are systematic non-biological variations introduced during experimental processing (e.g., by different reagent lots, personnel, or sequencing runs). Failure to identify and correct them can lead to false conclusions. This section provides a technical support framework for diagnosing and troubleshooting batch effects before their removal.

FAQs & Troubleshooting Guides

Q1: How can I initially suspect a batch effect in my data? A: A strong batch effect is often visually apparent. Use Principal Component Analysis (PCA). If samples cluster strongly by processing date, instrument, or other technical factors, rather than by your experimental condition, a batch effect is likely. For example, in RNA-Seq data, if PC1 separates all samples processed in "Batch A" from those in "Batch B" more distinctly than it separates "Control" from "Treated" groups, technical noise is overshadowing biology.

Q2: What statistical test can confirm a batch effect is present? A: Use a linear model-based test. For a gene expression matrix, you can apply a multivariate analysis of variance (MANOVA) or multiple univariate tests (e.g., limma in R) with batch and condition as covariates. A significant p-value for the batch term indicates its substantial influence. The table below summarizes key diagnostic metrics.

Table 1: Statistical Metrics for Batch Effect Diagnosis

| Metric/Method | Calculation/Description | Interpretation |

|---|---|---|

| PCA Visualization | Dimensionality reduction plot (PC1 vs PC2). | Clustering by technical factor indicates strong batch effect. |

| PVCA (Principal Variance Component Analysis) | PCA followed by variance components analysis using random effects models. | Quantifies the proportion of total variance attributable to batch vs. biology. |

| Batch-Signal Ratio | (Variance from batch) / (Variance from condition). | A ratio >1 suggests batch variance dominates biological signal. |

| PERMANOVA | Non-parametric multivariate ANOVA on distance matrices. | Tests whether group (batch) centroids differ; the result is also sensitive to differences in group dispersion. |
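The Batch-Signal Ratio row can be illustrated with a small base-R sketch. It assumes simulated data with a balanced design, and the helper name `batch_signal_ratio` is hypothetical (it is not part of any package); the ratio is taken from the sums of squares of a per-feature linear model.

```r
# Simulated data: 200 features x 12 samples, balanced batch/condition design
set.seed(1)
batch     <- factor(rep(c("A", "B"), each = 6))
condition <- factor(rep(c("ctrl", "trt"), times = 6))
expr <- matrix(rnorm(200 * 12), nrow = 200)
expr <- sweep(expr, 2, ifelse(batch == "B", 2, 0), "+")          # strong batch shift
expr <- sweep(expr, 2, ifelse(condition == "trt", 0.5, 0), "+")  # weaker biological shift

# Hypothetical helper: batch vs. condition sums of squares for one feature
batch_signal_ratio <- function(y, batch, condition) {
  ss <- anova(lm(y ~ batch + condition))[["Sum Sq"]]
  ss[1] / ss[2]   # row 1 = batch, row 2 = condition
}

ratios <- apply(expr, 1, batch_signal_ratio, batch = batch, condition = condition)
median_ratio <- median(ratios)   # values above 1 suggest batch dominates
```

Summarizing with the median keeps a handful of features with near-zero condition variance from dominating the result.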

Q3: How do I differentiate technical batch effects from biological variation of interest? A: This requires careful experimental design and analysis.

- Positive Controls: Include replicate samples (e.g., a reference cell line) across all batches. Variation in these replicates measures pure technical noise.

- Negative Controls: Use samples expected to be biologically similar within a batch. Unexpected differences within these can indicate technical issues.

- Correlation with Meta-data: Systematically correlate principal components with all technical (batch) and biological (phenotype, age, sex) metadata. Technical factors should not correlate with biological PCs after correction.
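One way to carry out the metadata correlation step is to regress each leading principal component on every metadata column and tabulate the R² values. The sketch below uses simulated data and illustrative column names (`batch`, `condition`, `age`), so treat it as a template under those assumptions.

```r
set.seed(2)
meta <- data.frame(
  batch     = factor(rep(c("run1", "run2"), each = 8)),
  condition = factor(rep(c("ctrl", "case"), times = 8)),
  age       = round(runif(16, 30, 70))
)
expr <- matrix(rnorm(300 * 16), nrow = 300)
# Simulated batch shift affecting the first 100 features
expr[1:100, meta$batch == "run2"] <- expr[1:100, meta$batch == "run2"] + 3

pca <- prcomp(t(expr), center = TRUE, scale. = FALSE)

# R^2 of each of the first 3 PCs regressed on each metadata column
pc_meta_r2 <- sapply(meta, function(v) {
  sapply(1:3, function(k) summary(lm(pca$x[, k] ~ v))$r.squared)
})
rownames(pc_meta_r2) <- paste0("PC", 1:3)
```

A high R² between an early PC and a technical column, as for `batch` here, is the pattern that should shrink after correction.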

Diagram: Workflow for Differentiating Batch Effects from Biology

Q4: What is a detailed protocol for using ComBat to assess and remove batch effects? A: ComBat (sva package in R) uses an empirical Bayes framework to adjust for known batches.

Protocol:

- Input Data: Prepare a normalized, log-transformed expression matrix (genes x samples).

- Define Batches: Create a batch variable vector (e.g., `batch <- c(1,1,1,2,2,2)`).

- Define Model Matrix: Optionally, create a model matrix for biological conditions you wish to preserve (e.g., `mod <- model.matrix(~condition, data=phenoData)`).

- Run ComBat: `adjusted_data <- ComBat(dat=expression_matrix, batch=batch, mod=mod, par.prior=TRUE, prior.plots=FALSE)`

- Validation: Re-run PCA on the adjusted data. Batch clustering should be diminished, while biological condition clustering should be preserved or enhanced.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Batch Effect Management

| Item / Reagent | Function in Batch Effect Control |

|---|---|

| Reference/Spike-in Controls (e.g., ERCC RNA spikes, S. pombe spikes for scRNA-seq) | Added in known quantities across all samples/batches to track technical variation and normalize. |

| Universal Human Reference RNA (UHRR) | A standardized RNA pool used as an inter-batch positive control to calibrate instrument and protocol performance. |

| Master Aliquot Kits | Pre-aliquoting all reagents (enzymes, buffers) for a large study from a single lot minimizes within-study batch variation. |

| Automated Nucleic Acid Extractors | Reduces operator-dependent variability in sample preparation, a major source of batch effects. |

| Barcoded Library Prep Kits (Unique Dual Indexes) | Allows pooling of samples from multiple batches early in NGS workflow, reducing run-to-run variation. |

| Internal Control Plasmids/Cells | Genetically engineered controls processed with every batch to monitor technical performance thresholds. |

Q5: After correction, how do I validate that biological signals were preserved? A: Use a known biological truth.

- Differential Expression (DE) Validation: Check if DE genes for a well-established phenotype become more significant (lower p-value, higher fold-change) post-correction and if they enrich expected pathways.

- Negative Control Check: Biological replicates or samples from the same group should correlate more highly with each other after correction than with samples from other batches. Calculate intra-group vs. inter-batch correlation scores.

- Positive Control Check: Known biomarkers or pathway activity scores should show cleaner separation between defined biological groups post-correction.

Diagram: Batch Effect Correction Validation Logic

Troubleshooting Guides & FAQs

Q1: My multi-omics dataset shows strong clustering by sequencing platform/batch, not biological group. How do I diagnose if the platform is the issue? A: Perform a Principal Component Analysis (PCA) colored by the suspected batch variable (e.g., platform ID). Strong separation along the first few PCs by batch, not condition, is indicative. Use the following protocol to confirm:

- Method: Generate a PCA plot from normalized, but not batch-corrected, data.

- Code (R): `pca_result <- prcomp(t(expression_matrix)); plot(pca_result$x[,1], pca_result$x[,2], col=as.factor(batch))`

- Quantitative Check: Calculate the percentage of variance explained by the batch variable using PERMANOVA (`adonis2` in vegan) or a linear model. A significant p-value (<0.05) confirms a batch effect.

Q2: How can I determine if different reagent lots are introducing variability in my metabolomics/Luminex assay? A: Incorporate pooled Quality Control (QC) samples across all reagent lots and runs.

- Method: Aliquot a large, homogeneous sample (e.g., pooled plasma) to be run with every batch.

- Analysis: Calculate the Coefficient of Variation (%CV) for each metabolite/analyte across all QC samples.

- Interpretation: Analytes with a %CV > 15-20% (or a lab-defined threshold) in the QCs are highly susceptible to reagent lot/run variability and require closer inspection or batch correction.
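The %CV calculation on pooled QC samples is short enough to sketch in base R. The intensities below are simulated, and the 20% cut-off is just one value from the 15-20% guideline above; substitute your own lab-defined threshold.

```r
set.seed(3)
# Simulated intensities: 50 analytes x 10 pooled-QC injections (linear scale)
qc <- matrix(rlnorm(50 * 10, meanlog = 10, sdlog = 0.05), nrow = 50,
             dimnames = list(paste0("analyte", 1:50), paste0("QC", 1:10)))
qc[1:5, ] <- qc[1:5, ] * rlnorm(5 * 10, sdlog = 0.6)   # five unstable analytes

# %CV per analyte across all QC injections
cv_percent   <- apply(qc, 1, function(x) 100 * sd(x) / mean(x))
cv_threshold <- 20                                      # assumed lab-defined threshold
flagged      <- names(cv_percent)[cv_percent > cv_threshold]
```

Compute %CV on the linear intensity scale, not on log-transformed values, since the CV of logged data is not comparable to these thresholds.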

Q3: Personnel seems to be a source of variation in sample preparation. How can I track and mitigate this? A: Implement a robust sample randomization and tracking system.

- Method: Use a randomized block design. Do not allow one person to process all samples from one group.

- Protocol: Log all personnel, start/end times, and instrument calibrations for each sample in a Laboratory Information Management System (LIMS).

- Analysis: In downstream analysis, include "operator" as a covariate in your initial model to assess its contribution to variance.

Q4: My data clusters by run date. What are the first steps to correct for this temporal batch effect? A: Before applying advanced algorithms, use mean-centering or ratio-based methods per batch.

- Protocol for Gene Expression:

- For each gene/feature, calculate the mean expression within the QC samples for each run date.

- Subtract the respective batch mean (from QCs) from all samples in that batch.

- Protocol for Proteomics/Metabolomics:

- Use the QC samples to calculate a median or mean reference profile.

- For each run, compute a scaling factor for each sample as the median of ratios between the sample and the reference QC profile, then divide the sample's intensities by that factor.
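Both simple corrections above can be sketched in base R. The example assumes simulated log2 data with two QC injections per run date; the variable names (`is_qc`, `run`, `reference`) are illustrative only.

```r
set.seed(4)
n_feat <- 100
run    <- factor(rep(c("day1", "day2"), each = 6))
is_qc  <- rep(c(TRUE, TRUE, FALSE, FALSE, FALSE, FALSE), times = 2)
logx   <- matrix(rnorm(n_feat * 12, mean = 8), nrow = n_feat)
logx[, run == "day2"] <- logx[, run == "day2"] + 1.5   # simulated run-date shift

# Gene expression: subtract each run's QC mean, feature by feature
centered <- logx
for (r in levels(run)) {
  qc_mean <- rowMeans(logx[, run == r & is_qc, drop = FALSE])
  centered[, run == r] <- logx[, run == r] - qc_mean
}

# Proteomics/metabolomics: median-of-ratios scaling against a QC reference profile
intens    <- 2^logx                                     # back to linear scale
reference <- apply(intens[, is_qc], 1, median)          # median QC profile
scale_fac <- apply(intens, 2, function(s) median(s / reference))
scaled    <- sweep(intens, 2, scale_fac, "/")
```

With only a few QC injections per run the per-feature QC mean is noisy, so more QC replicates per batch give a more stable correction.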

| Source | Typical Assay Affected | Diagnostic Metric | Acceptable Threshold (Guideline) |

|---|---|---|---|

| Platform | Transcriptomics | % Variance Explained (PERMANOVA) | p-value > 0.05 |

| Reagent Lot | Metabolomics, Proteomics | QC Sample %CV | < 15-20% |

| Personnel | Sample Prep (any) | Intra-class Correlation (ICC) | ICC > 0.75 (high reliability) |

| Run Date | All | Inter-Batch Distance (PCA) | Visual overlap in PC space |

Detailed Protocol: ComBat Batch Correction Assessment

Objective: To evaluate and remove batch effects while preserving biological signal.

Methodology:

- Pre-processing: Normalize data within each platform/batch using standard methods (e.g., TPM for RNA-seq, median normalization for arrays).

- Batch Effect Diagnosis: As described in Q1.

- Correction: Apply a chosen batch correction method (e.g., ComBat, limma's `removeBatchEffect`, SVA).

- Validation:

- PCA Visualization: Re-plot PCA post-correction, coloring by batch and biological condition. Batches should overlap.

- Silhouette Score: Calculate the average silhouette width. It should increase for biological groups and decrease for batches post-correction.

- Preservation of Biological Signal: Perform a differential expression/abundance analysis on a known positive control. The strength (e.g., log2 fold change) of expected signals should not be diminished.
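The silhouette check can be sketched without extra packages. The helper `avg_silhouette` below is a hypothetical base-R implementation of the average silhouette width, and the "correction" is plain per-batch feature centering on simulated, balanced data; it stands in for ComBat or `removeBatchEffect` purely for illustration.

```r
# Hypothetical helper: average silhouette width for a labelling, from a distance matrix
avg_silhouette <- function(d, labels) {
  d <- as.matrix(d); labels <- as.factor(labels)
  s <- sapply(seq_len(nrow(d)), function(i) {
    own <- labels == labels[i]; own[i] <- FALSE
    other <- labels != labels[i]
    a <- mean(d[i, own])                                        # mean distance to own group
    b <- min(tapply(d[i, other], droplevels(labels[other]), mean))  # nearest other group
    (b - a) / max(a, b)
  })
  mean(s)
}

set.seed(5)
batch     <- factor(rep(c("A", "B"), each = 8))
condition <- factor(rep(c("ctrl", "trt"), times = 8))
expr <- matrix(rnorm(400 * 16), nrow = 400)
expr[, batch == "B"] <- expr[, batch == "B"] + 1.5                     # batch shift
expr[1:80, condition == "trt"] <- expr[1:80, condition == "trt"] + 2   # biological signal

# Stand-in correction: centre each feature within each batch (balanced design assumed)
corrected <- expr
for (lev in levels(batch)) {
  corrected[, batch == lev] <- expr[, batch == lev] - rowMeans(expr[, batch == lev])
}

sil <- c(
  batch_before     = avg_silhouette(dist(t(expr)), batch),
  batch_after      = avg_silhouette(dist(t(corrected)), batch),
  condition_before = avg_silhouette(dist(t(expr)), condition),
  condition_after  = avg_silhouette(dist(t(corrected)), condition)
)
```

The expected pattern is a lower batch silhouette and a higher condition silhouette after correction. Per-batch centering is only safe when every batch contains the same mix of conditions.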

Visualizations

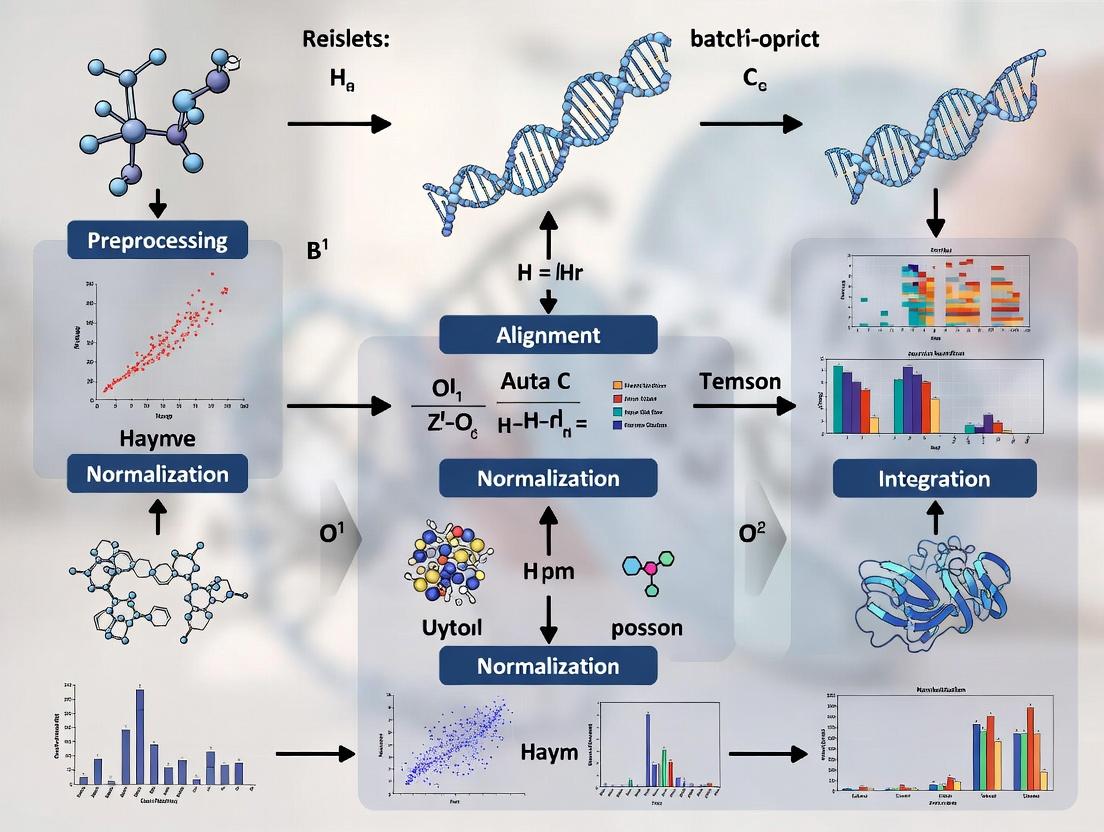

Multi-omics Batch Effect Correction Workflow

Choosing a Batch Correction Method

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Mitigation |

|---|---|

| Pooled QC Samples | A homogeneous sample run across all batches; used to monitor technical variation and calculate correction factors. |

| Reference Standards (SIGMA, NIST) | Certified materials with known analyte concentrations; used to calibrate assays across lots and platforms. |

| Internal Standards (Isotope-Labeled) | Added to each sample pre-processing in proteomics/metabolomics; corrects for variability in extraction and ionization. |

| Spike-In Controls (ERCC RNA, Alien Oligos) | Exogenous controls added to transcriptomics samples; differentiate technical from biological variation. |

| Inter-Plate Calibrators | Identical samples placed on every plate (e.g., in ELISA); normalize values across different assay runs. |

| LIMS (Lab Information Management System) | Software to track all sample metadata, personnel, reagent lots, and instrument parameters essential for modeling batch effects. |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: My PCA plot shows strong clustering by sequencing date, not by treatment group. What is the immediate first step?

Answer: This is a classic sign of a dominant batch effect. Pause differential expression analysis until the effect has been characterized. As a first step, run an exploratory batch effect diagnostic. For RNA-seq data, use the svaseq() function from the sva R package to estimate surrogate variables (SVs), which often capture batch effects. Plot the estimated SVs against your known batch variables (date, technician, lane) to confirm that they correlate. Proceed to correction only after quantifying how much variance the batch explains.

FAQ 2: After applying ComBat, my batch-mixed groups look better, but I've lost the biological signal I expected. What went wrong?

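Answer: This indicates over-correction, most often caused by an incorrect model matrix or by confounding between batch and biology. ComBat (and similar tools) needs the biological variable of interest (e.g., treatment) specified in the model matrix so that it is preserved while batch is adjusted. If the biological variable is left out of the model matrix, or is passed as the batch variable, its signal can be removed along with the batch effect. Always double-check your call: ~ biological_group should define the model matrix (mod), and batch should be supplied only as the adjustment variable. If each treatment group was processed in a single batch, the two are fully confounded and no model specification can separate them.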
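Before running ComBat it is worth checking the design itself. The following base-R sketch builds the model matrix for the biological variable and tests whether batch and treatment are separable. The sample sheet and its column names (`treatment`, `batch`) are hypothetical.

```r
# Hypothetical sample sheet; column names are illustrative
pheno <- data.frame(
  treatment = factor(c("ctrl", "ctrl", "trt", "trt", "ctrl", "ctrl", "trt", "trt")),
  batch     = factor(c("b1", "b1", "b1", "b1", "b2", "b2", "b2", "b2"))
)

# The biological variable goes in `mod`; batch is passed separately to ComBat
mod <- model.matrix(~ treatment, data = pheno)

# Cross-tabulate: empty cells mean batch and treatment are (partly) confounded
design_table <- table(pheno$batch, pheno$treatment)

# The combined design must be full rank, otherwise batch cannot be separated from biology
full_design  <- model.matrix(~ treatment + batch, data = pheno)
is_estimable <- qr(full_design)$rank == ncol(full_design)
```

If `is_estimable` is `FALSE`, or `design_table` contains zeros, batch correction risks removing the biological signal no matter how the model is written.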

Experimental Protocol: Diagnostic PCA for Batch Effect Detection

- Input: Normalized count matrix (e.g., from DESeq2 or log2(CPM)).

- Step 1: Transpose the matrix so features (genes) are columns and samples are rows.

- Step 2: Perform PCA using the `prcomp()` function in R with `scale. = TRUE`.

- Step 3: Extract the variance explained by each principal component (PC).

- Step 4: Plot PC1 vs. PC2 and color points by known batch variables (e.g., sequencing run) and biological variables (e.g., disease state).

- Interpretation: If samples cluster more strongly by batch than biology in the first 2-3 PCs, a significant batch effect is present.
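The protocol steps above map onto a few lines of base R. This sketch runs on a simulated normalized matrix (`norm_expr` is a placeholder for your own data) and collects the scores in a data frame that can be plotted and colored by batch or by condition.

```r
set.seed(6)
batch     <- factor(rep(c("run1", "run2"), each = 6))
condition <- factor(rep(c("healthy", "disease"), times = 6))
norm_expr <- matrix(rnorm(500 * 12, mean = 5), nrow = 500,
                    dimnames = list(paste0("gene", 1:500), paste0("s", 1:12)))
# Simulated batch shift affecting the first 150 genes
norm_expr[1:150, batch == "run2"] <- norm_expr[1:150, batch == "run2"] + 2

# Steps 1-2: transpose so samples are rows, then PCA with unit-variance scaling
pca <- prcomp(t(norm_expr), center = TRUE, scale. = TRUE)

# Step 3: percent variance explained by each PC
var_explained <- 100 * pca$sdev^2 / sum(pca$sdev^2)

# Step 4: scores plus metadata, ready to plot PC1 vs. PC2 coloured by either factor
scores <- data.frame(pca$x[, 1:3], batch = batch, condition = condition)

# Numeric companion to the visual check: how much of PC1 is explained by batch?
pc1_batch_r2 <- summary(lm(PC1 ~ batch, data = scores))$r.squared
```

Calling `plot(scores$PC1, scores$PC2, col = as.integer(scores$batch))` and then repeating with `condition` gives the two views described in Step 4.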

FAQ 3: For multi-omics integration (e.g., transcriptomics and metabolomics), should I correct each dataset separately or together?

Answer: Correct separately first, then integrate. Batch effects can be platform-specific. Apply appropriate, modality-specific correction methods (e.g., ComBat-seq for RNA-seq, PQN for NMR metabolomics) to each dataset individually using shared sample information. After individual correction, use integration methods like MOFA+ or DIABLO, which can handle residual, correlated technical noise across layers.

Experimental Protocol: Applying ComBat-seq for RNA-seq Count Data

- Prepare Data: Input is a raw read count matrix (genes x samples). Prepare a `batch` vector (e.g., Run1, Run1, Run2, Run2...) and a `mod` matrix for biological covariates.

- Filter Lowly Expressed Genes: Remove genes with fewer than 10 counts in most samples.

- Run ComBat-seq: Use `ComBat_seq(count_matrix, batch=batch, group=biological_group, covar_mod=mod)` from the `sva` package.

- Output: The function returns a batch-corrected integer count matrix. You can proceed with standard differential expression analysis tools like DESeq2 using this matrix.

- Validation: Re-run the diagnostic PCA on the corrected, normalized data. Clustering by batch should be diminished.

Data Presentation: Comparison of Batch Correction Methods

| Method | Data Type | Model | Preserves Biological Variance? | Key Consideration |

|---|---|---|---|---|

| ComBat | Normalized, continuous | Empirical Bayes | Yes, if model is correct | Assumes mean and variance of batch effects are consistent. Risk of over-correction. |

| ComBat-seq | Raw count (RNA-seq) | Negative Binomial | Yes, if model is correct | Designed for counts; avoids creating negative counts. |

| limma removeBatchEffect | Continuous, log-scale | Linear model | Yes, if specified | Simpler, but does not use empirical Bayes shrinkage. Good for mild effects. |

| Harmony | PCA space (any omics) | Iterative clustering | Yes | Excellent for complex integration tasks. Removes batch from embeddings. |

| Percentile Normalization | Metabolomics/MS | Distribution matching | Conditional | Matches sample distributions to a reference. Can be aggressive. |

Diagram: Batch Effect Diagnosis & Correction Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Batch Effect Management |

|---|---|

| Reference/QC Samples | Technically identical samples (e.g., pooled from all groups) run in every batch. Used to monitor and directly correct for inter-batch variation. |

| Spiked-in Controls | Known quantities of exogenous molecules (e.g., ERCC RNA spikes, stable isotope labeled metabolites) added to all samples for normalization across batches. |

| Balanced Block Design | Not a physical reagent, but an essential plan. Ensure each biological group is represented equally in every processing batch (day, lane, kit) to avoid confounding. |

| Automated Nucleic Acid Extractors | Reduces variation introduced by manual handling during the pre-analytical phase, a major source of batch effects. |

| Single-Lot Reagents | Using the same lot of kits, solvents, and columns for an entire study minimizes introduction of batch variables. Purchase in bulk where possible. |

Troubleshooting Guides & FAQs

FAQ: General Concepts

Q1: What is the primary purpose of these visual diagnostics in multi-omics batch effect analysis? A1: These plots serve as the first and critical step in exploratory data analysis to visually detect the presence of unwanted technical variation (batch effects) that can cluster samples by processing date, lane, or technician rather than by true biological condition. Identifying this clustering is essential before applying any correction algorithms.

Q2: I see clustering in my PCA plot. How do I determine if it's a batch effect or a real biological signal? A2: This is a common challenge. Correlate the PCA clustering pattern with your experimental metadata. Color the PCA points by batch (e.g., processing date) and by biological condition (e.g., disease vs. control). If the primary separation in PC1/PC2 aligns with batch, it strongly indicates a batch effect. Biological signals should be consistent across batches.

Q3: My density plots show different distributions between batches. What threshold constitutes a "problematic" batch effect? A3: Qualitative assessment remains key, but quantitative measures can help, for example comparing the Empirical Cumulative Distribution Functions (ECDFs) of the batches with a Kolmogorov-Smirnov (KS) test. A rule of thumb is that a correction is likely necessary if the median expression shift between batches exceeds 20% of the data's dynamic range or if the KS test p-value is <0.05. Because KS p-values become very small whenever many values are pooled, also inspect the KS D statistic and the size of the median shift rather than relying on the p-value alone.
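The two rule-of-thumb quantities can be computed with base R alone. The sketch assumes two vectors of pooled log-expression values, one per batch, simulated here with an arbitrary shift.

```r
set.seed(7)
batch1 <- rnorm(2000, mean = 8.0, sd = 2)   # pooled log-expression values, batch 1
batch2 <- rnorm(2000, mean = 9.5, sd = 2)   # batch 2, with a simulated shift

# Median shift relative to the dynamic range (rule of thumb above: > 0.20)
median_shift   <- abs(median(batch1) - median(batch2))
dynamic_range  <- diff(range(c(batch1, batch2)))
shift_fraction <- median_shift / dynamic_range

# Two-sample Kolmogorov-Smirnov test on the pooled distributions
ks   <- ks.test(batch1, batch2)
ks_D <- unname(ks$statistic)   # effect size: maximum ECDF distance
ks_p <- ks$p.value
```

In this simulation the KS p-value is far below 0.05 while the median shift stays under 20% of the dynamic range, so the two criteria need not agree. That is one reason to report `ks_D` and the shift itself.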

Troubleshooting Guide: PCA Plot Issues

Problem: All samples are clustered tightly in the center of the PCA plot with no separation.

- Cause 1: Extreme outliers are dominating the variance, compressing other samples.

- Solution: Create a boxplot of PC1 and PC2 scores to identify outliers. Investigate these samples for technical errors. Consider robust PCA methods or temporary removal for diagnostic purposes.

- Cause 2: The plot is dominated by low-variance, uninformative features.

- Solution: Apply feature filtering prior to PCA. For RNA-seq, filter out low-count genes. For proteomics, filter features with high missingness.

Problem: The PCA shows batch clustering, but my batch correction algorithm didn't work.

- Cause: Non-linear or complex batch effects that simple linear correction (like ComBat) cannot address.

- Solution: Use non-linear methods (e.g., Harmony, BBKNN). Visualize the corrected data with a new PCA to assess effectiveness. Also, consider if the batch is confounded with a critical biological factor.

Troubleshooting Guide: Heatmap & Density Plot Issues

Problem: My heatmap is dominated by a few very high- or low-expressed features, making batch patterns invisible.

- Cause: Lack of appropriate scaling.

- Solution: Use row-wise (feature-wise) Z-score scaling. This transforms each feature to have a mean of 0 and standard deviation of 1, allowing patterns across diverse expression levels to be visualized. The command in R is often `scale='row'` in the `pheatmap()` function.
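Row-wise Z-scoring can also be done by hand before plotting, which makes it clear what the scaling does. This is a minimal base-R sketch on a simulated matrix with low- and high-abundance features.

```r
set.seed(8)
# 20 features x 6 samples: rows 1-10 are low-abundance, rows 11-20 high-abundance
mat <- matrix(rnorm(20 * 6, mean = rep(c(2, 12), each = 10), sd = 1), nrow = 20,
              dimnames = list(paste0("feat", 1:20), paste0("s", 1:6)))

# scale() standardizes columns, so transpose, scale, and transpose back
z <- t(scale(t(mat)))
```

After this step every row has mean 0 and standard deviation 1, so high-abundance features no longer dominate the color scale.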

Problem: Density plots for different batches overlap but have different peaks (bi-modal vs. uni-modal).

- Cause: This suggests a subset-specific batch effect, where the impact is different for high-abundance vs. low-abundance molecules.

- Solution: This requires advanced correction. Consider using Quantile Normalization or using model-based methods (e.g., `sva`) that account for such interactions. Stratify analysis by expression level.

Table 1: Common Visual Diagnostics and Their Interpretations

| Plot Type | Optimal Outcome | Indicator of Batch Effect | Quantitative Metric to Supplement |

|---|---|---|---|

| PCA Plot | Samples cluster by biological group, with batches intermixed. | Clear separation or sub-clusters aligned with processing batch. | Percent Variance explained by batch-associated PCs. |

| Heatmap | Uniform color blocks by group; no vertical striping by batch. | Distinct vertical color bands that correlate with batch order. | Hierarchical clustering dendrogram showing batch-specific branches. |

| Density Plot | Overlapping curves with nearly identical peaks and shapes. | Separated peaks or differing distribution shapes between batches. | Kolmogorov-Smirnov D statistic; Median shift. |

Table 2: Comparison of Batch Effect Detection Workflows

| Tool/Package | Primary Visual Diagnostic | Statistical Test | Best For |

|---|---|---|---|

| sva / limma (R) | PCA plot of surrogate variables | Levene's test, PCA p-values | Linear model-based analysis of high-throughput data. |

| ComBat (sva) | PCA plots (before/after) | Empirical Bayes shrinkage | Microarray, bulk RNA-seq data. |

| SCTransform (Seurat) | PCA, UMAP colored by batch | Linear regression of PC scores | Single-cell RNA sequencing data. |

| Harmony | Embedding plots before/after integration | Local Inverse Simpson's Index (LISI) | Complex, non-linear batch effects in single-cell or CyTOF data. |

Experimental Protocol: Visual Diagnostic Pipeline for Batch Effect Detection

Title: Integrated Workflow for Visual Detection of Batch Effects in Omics Data.

1. Data Preparation:

- Format data into a features (genes/proteins) x samples matrix.

- Organize metadata table with columns for SampleID, Biological_Condition, Batch, Date, and other technical covariates.

2. Preprocessing & Filtering (Critical for PCA):

- RNA-seq: Filter genes with <10 counts in at least 20% of samples. Apply a variance-stabilizing transformation (e.g., `vst` in DESeq2).

- Proteomics: Filter proteins with >50% missing values. Impute missing values using a method appropriate for your data (e.g., MinProb imputation).

- Metabolomics: Apply Pareto scaling for general-purpose PCA.

3. Generate Diagnostic Plots (Parallel Analysis):

- PCA Plot:

- Perform PCA on the preprocessed matrix (center=TRUE, scale.=TRUE for general omics).

- Plot PC1 vs. PC2 and PC1 vs. PC3.

- Color points by `Batch`. Then, generate a separate plot coloring by `Biological_Condition`. Compare.

- Heatmap:

- Select the top 500-1000 most variable features (rows).

- Generate a heatmap with row-wise Z-score scaling.

- Annotate columns (samples) by both `Batch` and `Condition`. Look for column-wise color patterns.

- Density Plot:

- Plot the distribution of expression values (or log-values) for all features.

- Overlay densities for each `Batch` using different colors.

- Alternatively, plot density of a key sample-level metric (e.g., library size, total ion current) across batches.

4. Interpretation & Decision:

- If visual diagnostics indicate strong batch clustering, proceed to formal statistical testing (e.g., PERMANOVA on sample distances) and select an appropriate batch correction method.

- Re-run visual diagnostics post-correction to assess efficacy.

Diagrams

Visual Batch Effect Detection Workflow

PCA Interpretation: Ideal vs. Batch Effect

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Batch-Effect Conscious Omics

| Reagent / Material | Function in Batch Effect Mitigation | Key Consideration |

|---|---|---|

| Reference/Spike-in Controls (e.g., ERCC RNAs, SIS peptides) | Added in known quantities across all samples to monitor technical variation. The variance in measured vs. expected amounts directly quantifies batch effect. | Must be added at the earliest possible step (e.g., lysis) to capture the full workflow variability. |

| Pooled QC Samples | A sample created by pooling equal aliquots from all experimental samples, processed repeatedly in each batch. Serves as a "ground truth" for batch-to-batch deviation. | Should be processed identically to real samples and interspersed throughout the run sequence. |

| Balanced Block Design | Not a physical reagent, but an experimental design protocol. Ensures each biological condition is represented equally in every processing batch. | Prevents complete confounding of batch and condition, making statistical correction possible. |

| Barcoding Kits (e.g., Multiplexing) | Allows multiple samples to be pooled and processed as one in a single lane/run, eliminating lane-to-lane batch effects. | Requires careful demultiplexing. Can introduce index-swapping artifacts if not properly quality-controlled. |

| Automated Nucleic Acid/Protein Purification Systems | Reduces technician-to-technician and run-to-run variability in sample preparation, a major source of batch effects. | Initial calibration and consistent use of the same platform/kit lot are critical for stability. |

Technical Support Center: Troubleshooting Inter- and Intra-Technical Variation

Frequently Asked Questions (FAQs)

Q1: We have integrated RNA-seq and proteomics data from the same samples, but the correlation between mRNA and protein abundance is unexpectedly low. Is this due to technical variation or biological reality?

A: This is a common challenge. Low correlation can stem from multiple sources:

- Inter-technical Variation: Differences in platform sensitivity (e.g., RNA-seq detects low-abundance transcripts more efficiently than mass spectrometry detects their corresponding proteins), sample preparation protocols, and date of processing (batch effects).

- Intra-technical Variation: Run-to-run variability within the same mass spectrometer or sequencing lane.

- Biological Reality: Post-transcriptional regulation, differences in protein turnover rates, and temporal lags between transcription and translation.

Actionable Steps:

- Assess Technical Confounders: Use PCA or PLS-DA on each dataset separately, colored by processing batch, date, and instrument ID. If samples cluster strongly by these factors, batch effects are present.

- Apply Batch Correction: Use ComBat (or alternatives such as `sva` or `Harmony`) within each assay type before integration. Never correct across assay types together.

- Validate with Housekeeping Genes/Proteins: Check the correlation for a set of known stable gene-protein pairs (see Table 1).

Q2: After merging DNA methylation array data from two different studies (e.g., Illumina 450K and EPIC), we observe strong clustering by array type, not by phenotype. How do we proceed?

A: This is a classic inter-platform (inter-technical) variation issue. The EPIC array retains roughly 90% of the 450K probes, but differences in chemistry and probe design cause systematic bias.

Actionable Steps:

- Subset to Common Probes: Restrict your analysis to the ~430,000 probes common to both platforms.

- Apply Cross-Platform Normalization: Use the `betaqn` or `dasen` methods in the `wateRmelon` R package, which are designed for this specific task.

- Post-Correction Visualization: Create a density plot of beta values before and after correction to ensure distributions are aligned.

Q3: In our multi-omics workflow, metabolomics data (LC-MS) shows much higher coefficients of variation (CVs) within technical replicates than other assays. What can we do to improve this?

A: High intra-technical variation in LC-MS is often due to ion suppression, column degradation, or instrument drift during long runs.

Actionable Steps:

- Implement Robust Normalization: Use internal standards (IS) and quality control (QC) samples. Perform Systematic Error Removal using Random Forest (SERRF) or QC-based robust LOESS signal correction (QC-RLSC).

- Optimize Run Order: Randomize sample injection order to avoid confounding biological groups with time-dependent drift.

- Monitor QC Samples: Calculate CVs for metabolites in the pooled QC samples injected every 5-10 experimental samples. Acceptable thresholds are shown in Table 1.
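The idea behind QC-based drift correction can be shown for a single metabolite with base R's `loess()`. This is a simplified sketch in the spirit of LOESS signal correction, not the SERRF or QC-RLSC implementations themselves. The injection schedule, drift shape, and smoothing span are assumptions made for the example.

```r
set.seed(9)
n_inj     <- 60
inj_order <- seq_len(n_inj)
is_qc     <- inj_order %% 6 == 1                  # a pooled QC every 6th injection
drift     <- 1 - 0.004 * inj_order                # simulated signal loss over the run
signal    <- 1e5 * drift * rlnorm(n_inj, sdlog = 0.03)

# Fit the drift on QC injections only, then predict it for every injection
qc_df <- data.frame(inj_order = inj_order[is_qc], signal = signal[is_qc])
fit   <- loess(signal ~ inj_order, data = qc_df, span = 0.9, degree = 1,
               control = loess.control(surface = "direct"))   # allows extrapolation
trend <- predict(fit, newdata = data.frame(inj_order = inj_order))

# Divide out the trend and restore the original scale using the QC median
corrected <- signal / trend * median(signal[is_qc])

cv <- function(x) 100 * sd(x) / mean(x)
cv_before <- cv(signal[is_qc])
cv_after  <- cv(corrected[is_qc])
```

The QC %CV after correction is optimistic because the same QCs were used to fit the trend. Holding out some QC injections gives a fairer estimate.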

Q4: When using single-cell multi-omics (e.g., CITE-seq), the protein (antibody-derived tag, ADT) data is very noisy. How can we distinguish true biological signal from technical noise?

A: ADT data suffers from non-specific binding and background noise. Intra-technical variation can be high due to antibody staining efficiency.

Actionable Steps:

- Apply Dedicated Normalization: Use `dsb` (Denoised and Scaled by Background), which explicitly models and removes ambient noise, or CLR (Centered Log Ratio) normalization.

- Utilize Isotype Controls: Include isotype control antibodies in your panel and use their signal to estimate and subtract background.

- Visualize with Positive/Negative Markers: Confirm that known positive markers show a clear separation from negative populations in your UMAP after correction.
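For reference, a centered log-ratio transform takes only a few lines of base R. This sketch centers within each cell using a pseudocount of 1 and runs on a simulated ADT count matrix with made-up marker names. Whether to center within cells or within antibodies is an analysis choice, and the sketch performs no isotype-based background subtraction (that is what `dsb` adds).

```r
set.seed(10)
# Simulated ADT counts: 5 antibodies x 8 cells (marker names are illustrative)
adt <- matrix(rpois(5 * 8, lambda = rep(c(200, 150, 20, 5, 3), times = 8)), nrow = 5,
              dimnames = list(c("CD3", "CD4", "CD8", "CD19", "IgG1_isotype"),
                              paste0("cell", 1:8)))

# CLR per cell: log(x + 1) minus the cell's mean log(x + 1) across antibodies
clr <- function(x) {
  lx <- log1p(x)
  lx - mean(lx)
}
adt_clr <- apply(adt, 2, clr)
```

Each column of `adt_clr` sums to zero, which removes cell-to-cell differences in overall staining depth but leaves background signal in place.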

Table 1: Typical Technical Variation Metrics and Correction Targets by Omics Assay

| Assay Type | Typical Intra-Assay CV (Technical Replicates) | Typical Inter-Study/Batch CV | Key Correction Target | Common Correction Method(s) |

|---|---|---|---|---|

| RNA-seq (Bulk) | 5-15% (library prep) | 20-40% (platform, lab) | Library size, GC content, batch | ComBat-seq, RUV-seq, limma removeBatchEffect |

| LC-MS Proteomics | 10-25% (peak intensity) | 30-50% (column, instrument) | Sample loading, run order, batch | Median normalization, ComBat, SVA, proBatch |

| DNA Methylation Array | 1-3% (beta value) | 10-30% (array type, chip) | Probe design bias, batch | betaqn/Dasen, BMIQ, meffil |

| LC-MS Metabolomics | 15-35% (peak area) | 40-60% (instrument drift) | Ion suppression, drift | Internal Standards, SERRF, QC-RLSC |

| CITE-seq (ADTs) | 20-50% (UMI counts) | N/A (single experiment) | Ambient noise, background | dsb normalization, CLR with background subtraction |

Experimental Protocols

Protocol 1: Diagnosing and Correcting Batch Effects in Integrated Multi-Omics Datasets

Objective: To identify and remove non-biological variation arising from different processing batches across multiple omics layers to enable robust integrated analysis.

Materials: Normalized count/matrix data from each omics assay, with associated metadata (Batch ID, Date, Instrument, Technician, etc.).

Methodology:

- Pre-processing: Normalize data within each assay type using standard methods (e.g., DESeq2 for RNA-seq, vsn for proteomics).

- Diagnostic Visualization:

- Perform PCA on each dataset individually.

- Generate scatter plots of the first two principal components (PC1 vs. PC2).

- Color points by known batch variables (e.g., processing date) and by biological phenotype.

- Interpretation: If clustering is driven primarily by batch variables, correction is required.

- Batch Correction: Apply an appropriate batch correction method separately to each omics matrix.

- For known batch factors: Use ComBat (from the sva package) in parametric mode.

- For unknown/estimated factors: Use svaseq (for count data) or sva (for general data) to estimate surrogate variables (SVs) and regress them out using limma::removeBatchEffect.

- Post-Correction Validation:

- Repeat PCA. Clustering by batch should be diminished or absent.

- Key Check: Ensure biological signal strength (e.g., separation of case/control groups) is preserved or enhanced.

- For integrated analysis (e.g., MOFA), use the corrected matrices as input.
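
The correct-then-validate logic of this protocol can be illustrated on a single feature. The sketch below is a deliberately simplified location-only adjustment (per-batch mean centering). It is not ComBat or removeBatchEffect, and the values, labels, and function names are hypothetical. With a balanced design, the case/control difference should be unchanged by the adjustment.

```python
from statistics import fmean

def center_batches(values, batches):
    """Location-only adjustment: shift each batch so its mean equals the grand mean."""
    grand = fmean(values)
    batch_means = {
        b: fmean(v for v, label in zip(values, batches) if label == b)
        for b in set(batches)
    }
    return [v - batch_means[b] + grand for v, b in zip(values, batches)]

# Hypothetical single feature: balanced design, batch B2 shifted upward by 3 units
values = [1.0, 1.2, 3.0, 3.2, 4.0, 4.2, 6.0, 6.2]
batches = ["B1", "B1", "B1", "B1", "B2", "B2", "B2", "B2"]
groups = ["ctrl", "ctrl", "case", "case", "ctrl", "ctrl", "case", "case"]

corrected = center_batches(values, batches)

def group_mean(vals, label):
    """Mean of the values belonging to one biological group."""
    return fmean(v for v, g in zip(vals, groups) if g == label)

# Key check from the protocol: the biological effect should survive correction
effect_before = group_mean(values, "case") - group_mean(values, "ctrl")
effect_after = group_mean(corrected, "case") - group_mean(corrected, "ctrl")
print(effect_before, effect_after)
```

The same check fails in unbalanced designs, where simple centering removes part of the biological effect; that is why the biological covariates need to be modeled explicitly.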

Protocol 2: Cross-Platform Normalization for DNA Methylation Arrays

Objective: To harmonize DNA methylation beta values from Illumina's Infinium HumanMethylation450K and MethylationEPIC arrays for joint analysis.

Materials: IDAT files or raw beta value matrices from 450K and EPIC arrays; R statistical environment with minfi and wateRmelon packages.

Methodology:

- Data Loading & Preprocessing: Load IDATs with minfi::read.metharray.exp. Perform standard pre-processing (background correction and dye-bias equalization, without between-array normalization) with preprocessNoob.

- Subset to Common Probes: Extract the probes common to both array manifests (roughly 450,000).

- Apply Cross-Platform Normalization:

- Use wateRmelon::dasen or wateRmelon::betaqn on the combined dataset. dasen quantile normalizes the methylated and unmethylated signal intensities separately (and by probe type), whereas betaqn quantile normalizes the beta values directly; both align the distributions across platforms.

- Quality Assessment:

- Plot density distributions of beta values for samples from each array type before and after correction.

- Perform PCA on the corrected beta matrix and color points by array type. Successful correction will show no clustering by platform.
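
The core operation behind quantile-based harmonization can be sketched in a few lines. This is a generic quantile normalization written for illustration only. It is not the wateRmelon implementation, it ignores probe-type handling, and the intensities are hypothetical.

```python
def quantile_normalize(columns):
    """Quantile-normalize equal-length columns to a shared reference distribution.

    Ties are broken by order of appearance, a simplification relative to
    production implementations.
    """
    n = len(columns[0])
    if any(len(col) != n for col in columns):
        raise ValueError("all columns must have the same length")
    sorted_cols = [sorted(col) for col in columns]
    # Reference distribution: mean of the k-th smallest value across samples
    reference = [sum(col[k] for col in sorted_cols) / len(columns) for k in range(n)]
    normalized = []
    for col in columns:
        order = sorted(range(n), key=lambda i: col[i])  # indices from low to high
        out = [0.0] * n
        for rank, idx in enumerate(order):
            out[idx] = reference[rank]
        normalized.append(out)
    return normalized

# Hypothetical methylated-channel intensities for the same 4 probes on two platforms
array_450k = [5.0, 2.0, 3.0, 4.0]
array_epic = [4.0, 1.0, 4.5, 2.0]
norm_450k, norm_epic = quantile_normalize([array_450k, array_epic])
print(norm_450k, norm_epic)
```

After normalization both samples contain the same set of values, assigned according to each probe's within-sample rank, so platform-wide distribution shifts disappear while the rank order is kept.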

Visualizations

Diagram 1: Multi-Omics Batch Effect Diagnosis Workflow

Diagram 2: Cross-Platform Methylation Data Harmonization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Multi-Omics Batch Effect Mitigation

| Item | Function in Mitigating Variation |

|---|---|

| Pooled Reference/QC Samples | A consistent biological sample (or samples) aliquoted and run across all batches/plates/instruments. Used to monitor and correct for technical drift within and between batches. Essential for metabolomics and proteomics. |

| Internal Standards (IS) | Known amounts of non-endogenous compounds (e.g., stable isotope-labeled peptides/metabolites) spiked into each sample prior to processing. Corrects for losses during preparation and ion suppression in MS. |

| UMI (Unique Molecular Identifiers) | Short random nucleotide sequences added during library prep (RNA-seq, CITE-seq). Allows precise digital counting of original molecules, correcting for PCR amplification bias (a within-batch source of technical variation). |

| Isotype Control Antibodies (CITE-seq) | Antibodies with no specificity to the target species, conjugated to the same barcodes as real antibodies. Used to estimate and subtract non-specific binding background noise in ADT data. |

| Spike-in Controls (e.g., ERCC RNA) | Known, exogenous RNAs added to samples in defined ratios. Allows for absolute quantification and normalization based on known input, correcting for technical variation in sample handling and sequencing. |

| Batch-Correction Software (sva, Harmony, ComBat) | Statistical toolkits implementing algorithms to model and remove unwanted variation while preserving biological signal. The core computational reagent for integration. |

Step-by-Step Workflow: How to Correct Batch Effects in Genomics, Transcriptomics & Proteomics

Troubleshooting Guides & FAQs

Q1: Why is my multi-omics data variance dominated by a few high-abundance features even after normalization? A: This often indicates that scaling was applied before addressing the skewed distribution. For count data such as RNA-seq, the sequence should be: 1) Normalization on raw counts (e.g., TMM) to adjust for technical differences, 2) Log-transformation to stabilize variance and reduce skew, 3) Scaling (e.g., Z-score, Pareto) to make features comparable. For intensity data such as MS proteomics, log-transformation usually comes first, followed by median or quantile normalization and then scaling. Applying scaling to non-transformed, heavy-tailed data will cause high-abundance features to exert undue influence.

Q2: My PCA plot still shows a strong batch effect after using log-transformed and Z-scaled data for integration. What went wrong?

A: Log-transformation and scaling are pre-correction steps; they prepare data for batch effect removal but are not removal methods themselves. The issue may be that batch effects are non-linear or affect variance structure. Ensure you performed within-batch scaling (Z-scoring using each batch's mean/SD) before integrating datasets, not global scaling across all batches. This prevents batch-specific variances from being baked into the scaled data, but it assumes that batches have comparable biological composition; if they do not, within-batch scaling also removes real biological differences. After this pre-correction, apply a dedicated batch effect removal algorithm (e.g., ComBat or limma's removeBatchEffect).

Q3: When should I use log(x+1) transformation versus other offsets?

A: The +1 (or other pseudo-count) is used to handle zero values. The choice depends on data structure:

- RNA-seq Count Data: Use log2(count + 1) for moderate counts. For very low counts, a library-size-aware prior count, as applied by edgeR::cpm(..., log=TRUE, prior.count=k), may be better to avoid exaggerating the variance of zeros.

- Proteomics/MS Data: Zeros often represent missing-not-at-random (MNAR) values. Simple log(x+1) can be misleading. Consider using log2(x + k), where k is derived from the lower tail of the distribution (e.g., min(X[X>0])/2), or use specialized packages (proteus, MSnbase) that account for the MNAR nature.

- Metabolomics Data: If many zeros are true absences, log2(x + 1) is common. If zeros are below the detection limit, consider half the minimum positive value per feature.
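
The effect of the offset choice is easiest to see on a small-magnitude feature. The sketch below compares log2(x + 1) with replacing zeros by half the minimum positive value. The intensities and function names are hypothetical, and zero replacement is only one of several ways to handle values below the detection limit.

```python
import math

def log2_plus_one(values):
    """log2(x + 1): a common default for count-like data."""
    return [math.log2(v + 1.0) for v in values]

def log2_half_min(values):
    """Replace zeros with half the smallest positive value, then take log2."""
    positives = [v for v in values if v > 0]
    if not positives:
        raise ValueError("feature has no positive values")
    floor = min(positives) / 2.0
    return [math.log2(v if v > 0 else floor) for v in values]

# Hypothetical intensities for one metabolite; 0.0 marks a below-detection value
intensities = [0.0, 0.02, 0.08, 0.32]
plus_one = log2_plus_one(intensities)
half_min = log2_half_min(intensities)

print([round(v, 3) for v in plus_one])
print([round(v, 3) for v in half_min])
```

For values far below 1, the +1 offset dominates and compresses a 16-fold range into less than half a log2 unit, whereas the half-minimum approach keeps fold changes visible on the log scale.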

Q4: Does the order of operations between normalization and log-transformation matter? A: Yes, critically. The standard pipeline for sequencing data is: 1) Normalization (on raw counts), 2) Log-transformation, 3) Scaling. Normalizing on raw counts corrects for library size or composition before the non-linear log transform. If you log-transform first, you alter the linear-additive structure of the data, making subsequent normalization methods (which assume linearity, like TMM or DESeq2's median-of-ratios) less effective.

Q5: After pre-correction, my negative control samples no longer cluster together. Is this a problem? A: This is a serious red flag. Pre-correction (normalization/scaling/transformation) should improve the clustering of technical replicates and negative controls. If it worsens, potential causes are:

- Over-scaling: Applied scaling to the entire dataset including controls with extreme low values, amplifying noise.

- Inappropriate Method: Used a normalization method (e.g., quantile) that assumes similar distribution across all samples, which is violated if controls are fundamentally different.

- Action: Always apply pre-correction methods within sample groups (e.g., case vs. control) separately where appropriate, or use methods robust to different distributions. Validate each step by checking PCA plots of controls.

Experimental Protocols

Protocol 1: Systematic Pre-Correction for Multi-Omics Batch Integration

Objective: Prepare single-omics dataset (e.g., transcriptomics) for downstream batch effect correction.

Materials: Raw count matrix, sample metadata with batch and condition information.

Software: R (v4.3+) with edgeR, limma, sva packages.

- Filtering: Remove low-expression features (e.g., genes with CPM < 1 in >90% of samples).

- Normalization (on raw counts): Apply TMM (Trimmed Mean of M-values) normalization using edgeR::calcNormFactors to correct for library composition.

- Log-Transformation: Convert normalized counts to log2-CPM (Counts Per Million) using edgeR::cpm(..., log=TRUE, prior.count=1). The prior.count stabilizes variances of low counts.

- Within-Batch Scaling: For each batch identified in metadata, center and scale the log2-CPM data to have zero mean and unit variance (Z-score) within that batch, for example by calling scale() in a loop over batches.

- Quality Check: Generate a PCA plot colored by batch and condition. Pre-correction should tighten replicate clusters but is not expected to fully remove batch-driven separation.

- Output: The scaled, log-transformed matrix is now ready for batch effect removal tools (e.g., sva::ComBat).
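
The transformation and within-batch scaling steps above can be sketched without any external packages. This illustration substitutes plain library-size CPM for TMM and adds the prior count directly to the CPM value, so it only approximates what edgeR does. The counts and function names are hypothetical.

```python
import math
from statistics import fmean, stdev

def log2_cpm(counts, prior_count=1.0):
    """counts: list of samples, each a list of gene counts. Returns log2(CPM + prior)."""
    out = []
    for sample in counts:
        lib_size = sum(sample)
        out.append([math.log2(c / lib_size * 1e6 + prior_count) for c in sample])
    return out

def zscore_within_batch(matrix, batches):
    """Z-score every gene separately inside each batch (samples x genes input)."""
    n_genes = len(matrix[0])
    scaled = [[0.0] * n_genes for _ in matrix]
    for b in sorted(set(batches)):
        rows = [i for i, label in enumerate(batches) if label == b]
        for g in range(n_genes):
            vals = [matrix[i][g] for i in rows]
            mu, sd = fmean(vals), stdev(vals)  # sample SD, as R's scale() uses
            for i in rows:
                scaled[i][g] = (matrix[i][g] - mu) / sd if sd > 0 else 0.0
    return scaled

# Hypothetical counts: 6 samples x 2 genes; batch B2 was sequenced 10x deeper
counts = [[10, 90], [30, 70], [20, 80], [100, 900], [400, 600], [250, 750]]
batches = ["B1", "B1", "B1", "B2", "B2", "B2"]

scaled = zscore_within_batch(log2_cpm(counts), batches)
for label, row in zip(batches, scaled):
    print(label, [round(v, 2) for v in row])
```

After scaling, every gene has zero mean and unit variance inside each batch, which is the property the protocol relies on before a dedicated correction method is applied.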

Protocol 2: Evaluating Pre-Correction Efficacy

Objective: Quantify the reduction in unwanted technical variation after each pre-correction step.

Materials: Processed data matrix after each step (raw, normalized, log-transformed, scaled).

Software: R with ggplot2, pvca or variancePartition package.

- Variance Partitioning: For each data state, fit a linear mixed model (using variancePartition::fitExtractVarPartModel) with formula ~ (1|Batch) + (1|Condition).

- Calculate Proportion of Variance Explained (PVE): Extract the PVE by the Batch factor. A successful pre-correction pipeline will show a decreasing trend in Batch PVE.

- Visualization: Create a bar plot showing Batch PVE at each stage: Raw -> Normalized -> Log-Transformed -> Scaled.

- Metric: Aim to minimize Batch PVE while preserving or increasing Condition PVE. The step that causes the largest drop in Batch PVE is most critical for your dataset.
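
As a rough stand-in for the mixed-model estimate, the proportion of variance explained by a factor can be approximated per feature with a one-way sum-of-squares ratio (eta-squared). The sketch below is an assumption-laden simplification: it is not what variancePartition computes, and the values are hypothetical.

```python
from statistics import fmean

def variance_explained(values, labels):
    """Eta-squared: between-group sum of squares divided by total sum of squares."""
    grand = fmean(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    if ss_total == 0:
        return 0.0
    ss_between = 0.0
    for label in set(labels):
        group = [v for v, l in zip(values, labels) if l == label]
        ss_between += len(group) * (fmean(group) - grand) ** 2
    return ss_between / ss_total

batch = ["B1", "B1", "B1", "B1", "B2", "B2", "B2", "B2"]
condition = ["ctrl", "ctrl", "case", "case", "ctrl", "ctrl", "case", "case"]

# Hypothetical feature before and after removing the batch mean shift
raw = [0.0, 0.0, 1.0, 1.0, 4.0, 4.0, 5.0, 5.0]
centered = [2.0, 2.0, 3.0, 3.0, 2.0, 2.0, 3.0, 3.0]

pve_batch_raw = variance_explained(raw, batch)
pve_cond_raw = variance_explained(raw, condition)
pve_batch_centered = variance_explained(centered, batch)
pve_cond_centered = variance_explained(centered, condition)
print(round(pve_batch_raw, 3), round(pve_cond_raw, 3))
print(round(pve_batch_centered, 3), round(pve_cond_centered, 3))
```

Tracking these two numbers at each stage gives the decreasing Batch PVE and preserved Condition PVE trend that the protocol asks for.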

Data Presentation

Table 1: Impact of Pre-Correction Steps on Batch Effect Strength in a Simulated RNA-seq Dataset Dataset: 100 samples, 2 batches, 2 conditions, 10,000 genes. Batch Effect Strength is measured as the Proportion of Variance Explained (PVE) by the batch factor in a PCA on the top 500 most variable genes.

| Pre-Correction Step Applied | Median Batch PVE (%) | Median Condition PVE (%) | Notes |

|---|---|---|---|

| Raw Counts | 65.2 | 12.1 | Variance dominated by batch. |

| TMM Normalization Only | 58.7 | 15.3 | Reduces library size artifacts. |

| Normalization + Log2(x+1) | 42.1 | 28.5 | Most significant reduction in batch skew; stabilizes variance. |

| Norm + Log + Global Z-Scale | 40.8 | 29.1 | Minor improvement over log alone. |

| Norm + Log + Within-Batch Z-Scale | 22.4 | 31.7 | Optimal prep for batch correction; minimizes between-batch mean/variance shift. |

Table 2: Recommended Pre-Correction Workflows by Omics Data Type Guidelines for sequencing and array-based technologies within a batch effect correction thesis.

| Data Type | Primary Normalization | Recommended Transformation | Scaling Recommendation | Rationale |

|---|---|---|---|---|

| RNA-seq (Bulk) | TMM (edgeR) or Median-of-Ratios (DESeq2) | log2(CPM + 1) or VST | Within-batch Z-score | Count data is skewed; normalization is linear, log/VST stabilizes variance for downstream linear models. |

| Single-Cell RNA-seq | Library Size (CPM/TPM) followed by CSS or deconvolution | log2(CPM/TP10K + 1) or Pearson residuals | No additional scaling if using graph-based methods; Z-score for PCA. | Handles sparsity and extreme library size differences. |

| Microarray (Gene Expr.) | Quantile Normalization | log2(intensity) | Global Z-score or Pareto Scaling | Forces identical distributions across arrays; log handles intensity skew. |

| Proteomics (Label-Free) | Median Centering or Quantile (on full matrix) | log2(intensity) | Within-batch Z-score or Auto-scaling | Corrects run-to-run intensity shifts; log reduces dynamic range. |

| Metabolomics (LC-MS) | Probabilistic Quotient Normalization (PQN) | log2(intensity) or Cubic Root | Pareto Scaling | PQN accounts for dilution; Pareto reduces dominance of high-abundance metabolites. |
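
The metabolomics row combines two steps that are simple to express directly. The following sketch implements probabilistic quotient normalization against a median reference spectrum and Pareto scaling of a single feature. The sample values and function names are hypothetical, and production tools add safeguards (e.g., for missing values) that are omitted here.

```python
from statistics import fmean, median, stdev

def pqn_normalize(samples):
    """Probabilistic quotient normalization against the feature-wise median spectrum."""
    n_features = len(samples[0])
    reference = [median(s[j] for s in samples) for j in range(n_features)]
    normalized = []
    for s in samples:
        quotients = [s[j] / reference[j] for j in range(n_features) if reference[j] > 0]
        dilution = median(quotients)  # most probable dilution factor for this sample
        normalized.append([v / dilution for v in s])
    return normalized

def pareto_scale(column):
    """Center a feature and divide by the square root of its standard deviation."""
    mu, sd = fmean(column), stdev(column)
    return [(v - mu) / sd ** 0.5 for v in column]

# Hypothetical samples x features: sample 2 is 2x concentrated, sample 3 is 2x diluted
# and carries a genuine increase in the last feature
samples = [
    [10.0, 20.0, 40.0, 8.0],
    [20.0, 40.0, 80.0, 16.0],
    [5.0, 10.0, 20.0, 40.0],
]
normalized = pqn_normalize(samples)
scaled_feature = pareto_scale([row[3] for row in normalized])
print(normalized)
```

Because the dilution factor is the median quotient, the genuinely elevated feature in the third sample does not distort the normalization and remains elevated afterwards.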

Visualizations

Title: Multi-Omics Data Pre-Correction Decision Workflow

Title: Variance Shift Across Pre-Correction Steps

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Pre-Correction Validation Experiments

| Item / Solution | Function in Pre-Correction Context | Example Product / Kit |

|---|---|---|

| External RNA Controls Consortium (ERCC) Spike-Ins | Added to samples in known ratios before sequencing. Used to empirically measure technical variance and assess the accuracy of normalization and transformation steps. | ERCC RNA Spike-In Mix (Thermo Fisher, 4456740) |

| Pooled QC Samples | A homogeneous pool of experimental material run repeatedly across all batches/plates. Serves as a gold standard for evaluating batch effect strength before and after pre-correction via correlation or distance metrics. | N/A (Prepared in-lab from study samples) |

| Internal Standard (IS) Mix - Metabolomics/Proteomics | A set of stable isotope-labeled compounds added uniformly to all samples. Used to correct for sample-specific losses, ionization efficiency, and instrument drift during normalization. | Mass Spectrometry Metabolite Kit of Internal Standards (Sigma-Aldrich, MSK-M1) |

| UMI (Unique Molecular Identifier) Kits - scRNA-seq | Labels each original mRNA molecule with a unique barcode to correct for PCR amplification bias, a critical pre-correction step for accurate count estimation. | 10x Genomics Chromium Single Cell 3' Kit |

| Degraded RNA Control | Intentionally degraded RNA samples run alongside intact samples. Helps evaluate if pre-correction methods inadvertently amplify degradation batch effects or if they correctly isolate biology. | Universal Human Reference RNA (Agilent, 740000) + controlled heat degradation. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions

Q1: My data shows increased variance after applying ComBat. What went wrong and how can I fix it? A: ComBat adjusts both the mean (location) and the variance (scale) of each gene per batch. When batches are small or their variance estimates are unstable, the scale adjustment can inflate variance instead of reducing it. To resolve:

- Diagnose: Plot the standard deviation per gene per batch before and after correction.

- Solution: Use the mean.only=TRUE option in the sva::ComBat() function if the batch effect is primarily in the mean, not the variance.

- Alternative: Consider a location-only adjustment using limma::removeBatchEffect() with its default settings.

Q2: When using limma::removeBatchEffect, should I include biological covariates in the model, and what happens if I don't?

A: Yes, you must include known biological covariates of interest (e.g., disease status, treatment group) in the design argument. If you only specify the batch, the function removes all variation associated with batch, including any biological signal that is correlated (partially confounded) with batch. This can lead to loss of the signal you aim to study.
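
The consequence of omitting the biological covariate can be reproduced on a noise-free toy feature with an unbalanced design. The sketch below fits ordinary least squares by hand and subtracts only the estimated batch term. It uses simple 0/1 coding and is not limma's implementation; all names and values are hypothetical.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]

def ols(design, y):
    """Least-squares coefficients via the normal equations (X'X) beta = X'y."""
    p = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(design, y)) for i in range(p)]
    return solve_linear_system(xtx, xty)

# Unbalanced design: batch 1 contains mostly cases. True effects: batch +2, case +3.
batch = [0, 0, 0, 0, 1, 1, 1, 1]
group = [0, 0, 0, 1, 0, 1, 1, 1]
y = [1.0 + 2.0 * b + 3.0 * g for b, g in zip(batch, group)]

def group_effect(values):
    """Difference between the case mean and the control mean."""
    case = [v for v, g in zip(values, group) if g == 1]
    ctrl = [v for v, g in zip(values, group) if g == 0]
    return sum(case) / len(case) - sum(ctrl) / len(ctrl)

# Batch-only model: the batch coefficient absorbs part of the biology
_, beta_batch_only = ols([[1.0, b] for b in batch], y)
adjusted_batch_only = [v - beta_batch_only * b for v, b in zip(y, batch)]

# Model with the biological covariate included in the design
_, beta_batch_full, _ = ols([[1.0, b, g] for b, g in zip(batch, group)], y)
adjusted_with_design = [v - beta_batch_full * b for v, b in zip(y, batch)]

effect_batch_only = group_effect(adjusted_batch_only)
effect_with_design = group_effect(adjusted_with_design)
print(effect_batch_only, effect_with_design)
```

With the covariate in the design, the full case/control difference of 3 survives. With batch alone, the estimated batch term is inflated by the group imbalance and the difference shrinks to 2.25.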

Q3: Can I apply these methods to single-cell RNA-seq count data directly?

A: It is not recommended. Both classic ComBat and limma::removeBatchEffect are designed for continuous, approximately normally distributed data (like log-transformed microarray or bulk RNA-seq data). Applying them to raw counts can produce artifacts.

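- Best Practice: For single-cell data, use specialized methods like Harmony, scanorama, or the integration functions in Seurat, which are designed for sparse, high-dimensional single-cell data and typically operate on normalized expression or low-dimensional embeddings rather than raw counts.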

Q4: How do I choose between parametric and non-parametric ComBat?

A: Use parametric empirical Bayes (the default, par.prior=TRUE) when the empirical distributions of the batch parameters are reasonably matched by the parametric priors, which is typical with >10 samples per batch; prior.plots=TRUE lets you check this. For very small batches (e.g., <5 samples per batch) or clearly mismatched priors, the non-parametric option (par.prior=FALSE) makes fewer distributional assumptions but runs considerably slower.

Troubleshooting Guide

Issue: "Error in while (change > conv) { : missing value where TRUE/FALSE needed" in ComBat.

- Cause: Likely due to missing values (NA) or genes with zero variance in your input data matrix.

- Solution:

- Filter out genes with zero variance across all samples.

- Check for and impute or remove any NA values before running ComBat.

- Ensure the input data matrix is numeric.
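
The input checks in this solution can be expressed as a small pre-flight filter. The sketch below drops affected features rather than imputing them, which is only one of the two options mentioned above. The matrix and function name are hypothetical.

```python
import math

def filter_features(matrix):
    """Drop features (rows) containing missing values or showing zero variance.

    Returns the retained rows and the indices of the removed rows.
    """
    kept, dropped = [], []
    for idx, row in enumerate(matrix):
        has_missing = any(
            v is None or (isinstance(v, float) and math.isnan(v)) for v in row
        )
        is_constant = (not has_missing) and max(row) == min(row)
        if has_missing or is_constant:
            dropped.append(idx)
        else:
            kept.append(row)
    return kept, dropped

# Hypothetical features x samples matrix
expr = [
    [5.1, 4.9, 6.2, 5.8],           # informative feature
    [0.0, 0.0, 0.0, 0.0],           # zero variance across all samples
    [3.3, float("nan"), 2.9, 3.1],  # contains a missing value
]
clean, removed = filter_features(expr)
print(removed)
```

Running a check like this before correction makes it clear which features triggered the error and how many are lost by filtering.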

Issue: Batch correction appears ineffective visually in my PCA plot.

- Action Checklist:

- Verify that the primary driver of variation in the PCA (PC1) is indeed the batch and not a strong biological condition.

- Confirm you have correctly specified the batch factor.

- For removeBatchEffect, ensure your model matrix (design) is correctly formulated.

- Consider if the batch effect is non-linear, which these linear methods cannot address.

Issue: Over-correction where biological groups are mixed after adjustment.

- Cause: This is a critical risk when biological groups are perfectly confounded with batch or have small sample sizes within batches. The method cannot distinguish batch from biology.

- Mitigation: This is a study design flaw. Statistical adjustment has limits.

- Pass the biological factors to removeBatchEffect through its design argument so that the specified covariates are preserved during adjustment.

- Report the confounding as a major limitation. Future experiments must balance the design.

Key Method Comparison Table

| Feature | ComBat (sva package) | limma::removeBatchEffect |

|---|---|---|

| Core Approach | Empirical Bayes to shrink per-gene batch parameters towards estimates pooled across genes. | Linear model to remove additive (location) batch effects. |

| Assumptions | Strong: Assumes batch effect is consistent across samples per gene (mean & variance). | Moderate: Assumes batch effect is additive in the linear model scale. |

| Variance Adjustment | Yes, adjusts both mean (location) and variance (scale). | Primarily location. Scale adjustment is not its primary function. |

| Handling Covariates | Can include in model to preserve their signal. | Must include in design matrix to preserve them. |

| Best For | Larger studies (>10 samples/batch) where Bayesian shrinkage is stable. | Flexible use, especially when fitting complex linear models with other covariates. |

| Risk of Over-correction | Moderate (shrinkage reduces risk). | Higher if biological covariates are omitted from the design. |

Experimental Protocol: Benchmarking Batch Correction Performance

Objective: Evaluate the efficacy of ComBat and removeBatchEffect on a controlled multi-omics dataset.

1. Data Preparation:

- Dataset: Obtain a publicly available multi-omics dataset (e.g., from TCGA or ArrayExpress) with known batch structure and at least one known biological group.

- Pre-processing: Apply standard normalization specific to each platform (e.g., RMA for microarray, TMM for RNA-seq, quantile normalization for proteomics). Log-transform if necessary.

2. Performance Metric Calculation (Per Method):

- Primary Metric - PVCA: Perform Principal Variance Components Analysis to calculate the proportion of variance explained by Batch vs. Biology before and after correction.

- Secondary Metric - Silhouette Width: Calculate the average silhouette width for known biological groups. A good correction increases this value (tighter biological clusters).

- Negative Control - Distance Correlation: Compute the distance correlation between sample pairwise distances based on batch labels and data matrix distances. A successful correction reduces this correlation.

3. Procedure:
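
The silhouette metric named in step 2 can be computed directly from a sample embedding. The sketch below is a plain implementation of the average silhouette width on hypothetical 2D coordinates; in practice the embedding would come from PCA of the data before and after correction.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two points of equal dimension."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def average_silhouette(points, labels):
    """Mean silhouette width of the points with respect to the given labels."""
    scores = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        by_label = {}
        for j, (q, other) in enumerate(zip(points, labels)):
            if i != j:
                by_label.setdefault(other, []).append(euclidean(p, q))
        if lab not in by_label or len(by_label) < 2:
            scores.append(0.0)  # singleton cluster or single label: score set to 0
            continue
        a = sum(by_label[lab]) / len(by_label[lab])
        b = min(sum(d) / len(d) for other, d in by_label.items() if other != lab)
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)

batch = ["B1", "B1", "B2", "B2"]
biology = ["ctrl", "case", "ctrl", "case"]

# Hypothetical embeddings: batch separates samples before, biology after correction
before = [(0.0, 0.0), (0.0, 1.0), (5.0, 0.0), (5.0, 1.0)]
after = [(0.0, 0.0), (0.0, 1.0), (0.2, 0.0), (0.2, 1.0)]

sil_batch_before = average_silhouette(before, batch)
sil_bio_before = average_silhouette(before, biology)
sil_batch_after = average_silhouette(after, batch)
sil_bio_after = average_silhouette(after, biology)
print(round(sil_bio_before, 3), round(sil_bio_after, 3))
```

A useful correction raises the silhouette width for biological labels and lowers it for batch labels, as in this toy example.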

Visualization of Method Selection Logic

Diagram Title: Decision Flowchart for Choosing Between ComBat and removeBatchEffect

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Research |

|---|---|

| Reference Benchmark Datasets (e.g., SVA Simulation Data, BladderBatch) | Provide ground truth with known batch and biological effects to validate and compare correction methods. |

| sva R Package | Contains the ComBat() function and tools for surrogate variable analysis, a cornerstone for empirical Bayes batch correction. |

| limma R Package | Provides the removeBatchEffect() function and a comprehensive framework for linear models in omics data analysis. |

| PVCA R Script | Principal Variance Components Analysis script to quantify sources of variation before/after correction. |

| k-Ary Ethyl Mix (Mass Spec Standard) | A labeled standard spike-in for proteomics/metabolomics used to monitor and correct for technical variation across runs. |

| External RNA Controls Consortium (ERCC) Spike-Ins | Synthetic RNA spikes for transcriptomics to assess technical performance and potentially normalize across batches. |

| ComBat-seq Tool | A version of ComBat designed specifically for raw count data from RNA-seq, addressing distributional assumptions. |

Troubleshooting Guides & FAQs

Q1: I ran Harmony on my integrated scRNA-seq datasets, but the clusters remain batch-specific. What went wrong?

A: This often indicates that the batch effect is too strong relative to the biological signal for Harmony's linear correction model. First, verify your principal component analysis (PCA) input. Harmony corrects in PCA space; if your top PCs capture mostly batch variance, the correction will fail. Pre-filter genes to those with high biological variance (e.g., using FindVariableFeatures in Seurat). Increase the theta parameter (default 2) to apply stronger correction (e.g., 4 or 6). Ensure you are not over-clustering; use a moderate resolution before Harmony.

Q2: When using MNN Correct, I get an error: "Not enough cells in at least two batches." How do I resolve this?

A: The Mutual Nearest Neighbors (MNN) algorithm requires sufficient overlap in cell states between batches. This error typically means your batches are either too small or too compositionally different. First, ensure each batch has at least 20-50 cells. If batches are compositionally unique, consider if batch correction is appropriate; you may need to analyze them separately. As a workaround, you can relax the k parameter (number of nearest neighbors, default 20) to a lower value, but this risks over-correction.

Q3: My PLS-based integration is removing biological variance of interest. How can I control this?

A: Partial Least Squares (PLS) maximizes covariance between datasets and can remove signal if it is not correlated across batches. You need to carefully define your Y matrix (the outcome or guiding matrix). Instead of using batch labels alone, include a biological covariate you wish to preserve. For example, for a disease study, include disease status. In the mixOmics R package, use the pls function with a multilevel design for repeated measurements if applicable. Reduce the number of components (ncomp) to retain only the strongest correlated signals.

Q4: After running any of these methods, my downstream differential expression analysis shows attenuated p-values. Is this expected?

A: This is a known statistical challenge, and it can go in either direction. Batch correction removes variation without accounting for the degrees of freedom used to estimate the batch terms, so downstream tests on corrected data can overstate significance; conversely, if batch is partially confounded with the condition, correction can attenuate real differences. The corrected values also no longer have independent errors, violating assumptions of standard tests. Recommended practice is to run DE on the uncorrected (normalized) data with batch included as a covariate in the linear model (e.g., in limma), and to reserve batch-corrected matrices for visualization and clustering. Never use corrected data directly for DE without accounting for the correction in your model.

Key Experimental Protocols

Protocol 1: Standardized Harmony Workflow for scRNA-seq

- Preprocessing: Start with a gene expression matrix (cells x genes) per batch. Perform standard QC, normalization (e.g., SCTransform or log1p), and scale.

- Feature Selection: Identify 2000-5000 highly variable genes (HVGs) common across all batches.

- PCA: Run PCA on the integrated HVG matrix. Determine the number of PCs (dims.use) that capture most biological variance (e.g., using an elbow plot). Typically, 20-50 PCs are used.

- Harmony Integration: Run RunHarmony() (in Seurat) or harmony::HarmonyMatrix() on the PCA embeddings (pc.embedding), specifying the batch covariate (meta_data). Key parameters: theta (strength of correction), lambda (ridge regression penalty, usually left at its default), sigma (width of the soft k-means clusters, default 0.1).

- Downstream Analysis: Use the Harmony-adjusted embeddings for clustering (FindNeighbors, FindClusters) and UMAP/tSNE visualization.

Protocol 2: MNN Correction for Multi-omics Protein and RNA Data

- Input Preparation: For CITE-seq data (RNA + ADT), process modalities separately. Normalize RNA counts (log-normalized) and ADT counts (centered log ratio, CLR).

- Feature Selection for RNA: Select HVGs from the RNA data.

- PCA: fastMNN performs a multi-batch PCA on the RNA HVG matrix internally; if PCs are computed beforehand (e.g., with batchelor::multiBatchPCA), use reducedMNN() instead.

- MNN Correction: Apply the fastMNN() function (from the batchelor package) to the log-normalized RNA expression, specifying the batch= argument. Key parameters: k (number of nearest neighbors), d (number of PCs to keep).

- Apply Correction to ADT: Use the reconstructed matrix from fastMNN for RNA. For ADT, use the rescaleBatches() function first to equalize average abundance across batches, then optionally apply a simpler correction using the MNN pairing information.

- Integrated Analysis: The corrected low-dimensional space is used for joint visualization and clustering.
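
The pairing idea at the heart of MNN correction can be shown on a handful of points. The sketch below finds mutual nearest neighbors between two batches and applies a single averaged correction vector. This is a strong simplification of what batchelor does (which uses locally weighted vectors in PC space), and the coordinates are hypothetical.

```python
import math

def nearest(query, reference, k):
    """Indices of the k reference points closest to the query point."""
    order = sorted(range(len(reference)), key=lambda j: math.dist(query, reference[j]))
    return set(order[:k])

def mutual_nearest_neighbors(batch1, batch2, k=1):
    """Return (i, j) pairs where batch1[i] and batch2[j] are in each other's k-NN sets."""
    nn12 = [nearest(p, batch2, k) for p in batch1]
    nn21 = [nearest(q, batch1, k) for q in batch2]
    return [(i, j) for i in range(len(batch1)) for j in nn12[i] if i in nn21[j]]

# Hypothetical 2D embeddings: two shared cell states plus one batch1-only state
batch1 = [(0.0, 0.0), (5.0, 0.0), (9.0, 9.0)]
batch2 = [(0.0, 1.0), (5.0, 1.0)]  # same two states, shifted by +1 on the second axis

pairs = mutual_nearest_neighbors(batch1, batch2, k=1)

# Average displacement between paired cells gives one global correction vector
shift = [sum(batch1[i][d] - batch2[j][d] for i, j in pairs) / len(pairs) for d in range(2)]
corrected_batch2 = [tuple(q[d] + shift[d] for d in range(2)) for q in batch2]
print(pairs, shift, corrected_batch2)
```

The batch1-only state at (9, 9) never forms a mutual pair, so it does not influence the correction. This is the property that lets MNN-based methods tolerate cell populations that are present in only one batch.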

Data Presentation

Table 1: Comparison of Dimensionality Reduction-Based Batch Correction Methods

| Feature | Harmony | Mutual Nearest Neighbors (MNN) | Partial Least Squares (PLS) |

|---|---|---|---|

| Core Principle | Iterative clustering and linear correction in PCA space. | Identifies mutual nearest neighbors across batches to define correction vectors. | Maximizes covariance between datasets to find shared latent structures. |

| Key Strength | Preserves global population structure; efficient for many batches. | Non-linear, suitable for complex batch effects; preserves rare cell types. | Integrates data guided by an outcome variable (Y). |

| Primary Use Case | Single-cell genomics (scRNA-seq, scATAC-seq). | Single-cell multi-omics, scRNA-seq with distinct batches. | Multi-omics where modalities guide each other (e.g., RNA and Metabolomics). |

| Typical Runtime | Fast (Minutes for 10k cells). | Moderate to Slow (Depends on dataset size and k). | Moderate. |

| Critical Parameter | theta (Diversity clustering penalty). | k (Number of nearest neighbors). | ncomp (Number of components). |

| Output | Corrected PCA embeddings. | A corrected low-dimensional matrix or reconstructed expression matrix. | Latent components (scores and loadings) for integration. |

Table 2: Recommended Parameter Starting Points for scRNA-seq (10,000 cells)

| Method | Parameter | Default Value | Recommended Adjustment Range |

|---|---|---|---|

| Harmony | theta | 2 | 1 (weak) to 6 (strong) |

| Harmony | dims.use | 1:20 | 1:30 to 1:50 |

| fastMNN | k | 20 | 15 to 50 |

| fastMNN | d | 50 | 20 to 100 |

| PLS (mixOmics) | ncomp | 2 | 1 to 5 |

Diagrams

Title: Harmony Algorithm Iterative Workflow

Title: MNN Correction Pairing and Merging Process

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Dimensionality Reduction Batch Correction

| Item | Function in Experiment | Example/Product |

|---|---|---|

| Single-Cell Library Kit | Generates barcoded sequencing libraries from single cells. | 10x Genomics Chromium Next GEM, Parse Biosciences Evercode. |

| Cell Hashing Antibodies | Labels cells from different batches with unique oligonucleotide tags for sample multiplexing, aiding batch ID. | BioLegend TotalSeq-A/B/C Antibodies. |

| QC Analysis Software | Performs initial QC, filtering of low-quality cells, and doublet detection—critical before correction. | Cell Ranger (10x), Seurat (PercentageFeatureSet), Scrublet. |

| High-Performance Computing (HPC) Resource | Runs computationally intensive PCA, iteration, and integration steps. | Local Linux cluster, Cloud (AWS, GCP). |

| Integration R/Python Packages | Implements the core algorithms for dimensionality reduction and correction. | R: harmony, batchelor, mixOmics. Python: scanpy, scVI. |

| Visualization Tool | Generates UMAP/tSNE plots to assess correction success qualitatively. | R: ggplot2. Python: matplotlib, scanpy.pl.umap. |

Troubleshooting & FAQ Guide

Q1: I am using MMUPHin for meta-analysis of multiple microbiome studies. The batch effect correction seems to remove all biological signal along with batch. What could be the cause?

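A: This often occurs when the batch variable is confounded with the biological condition of interest. MMUPHin's adjust_batch function can only separate batch from phenotype when each phenotype level is represented across batches. Check your study design. If batch and condition are partially confounded, supply the condition through the covariates argument of adjust_batch so that its effect is protected during adjustment. If they are fully confounded, no statistical correction can separate them; consider using MOFA+ to model sources of variation as separate factors, and interpret the result with caution.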

Q2: MOFA+ model training fails to converge or yields a very low proportion of variance explained. How can I improve it?

A: This is typically a data preprocessing issue. Ensure that: 1) Each omics data view is appropriately normalized, with features centered and views scaled to comparable variance if their magnitudes differ. 2) Missing values are left as NA where possible rather than imputed, since MOFA+ handles missing values natively. 3) You have specified the correct data likelihoods (e.g., "gaussian" for continuous, "bernoulli" for binary). Increase the maxiter training option and use drop_factor_threshold to remove inactive factors. Start with a small number of factors (e.g., 5-10).

Q3: After running Data Harmony, my integrated dataset shows improved batch mixing in visualizations but my downstream classifier performance drops. Why?

A: Data Harmony aggressively removes variation not shared across batches/studies. If some batch-specific variation is biologically meaningful (e.g., a disease subtype only present in one cohort), it will be removed. Validate using positive and negative control features known to be batch-invariant and biology-specific. Consider tuning the harmony theta parameter (diversity clustering penalty) to a lower value to preserve more dataset-specific biology.

Q4: How do I choose between MMUPHin, MOFA+, and Data Harmony for my multi-omics project? A: The choice depends on your data structure and goal. See the decision table below.

Tool Selection & Performance Comparison

Table 1: Multi-Omics Batch Correction Tool Selection Guide

| Criterion | MMUPHin | MOFA+ | Data Harmony |

|---|---|---|---|

| Primary Use Case | Cross-study microbiome meta-analysis | Integrative modeling of multiple omics views | Cell-level integration (e.g., scRNA-seq) |

| Data Type | Compositional (e.g., taxa counts) | Flexible (Continuous, Binary, Counts) | Continuous feature matrices |

| Batch Confounding Handling | Limited; requires careful design | Excellent; models confounders as factors | Moderate; uses covariate regression |

| Key Output | Batch-adjusted feature table | Latent factors representing shared variance | Corrected principal components or matrix |

| Typical Runtime (for 10^4 features) | Minutes | Hours | Minutes |

Table 2: Common Error Resolution Table

| Error Message / Symptom | Likely Cause | Solution |

|---|---|---|

| MMUPHin: "All samples have the same batch label." | Single study input. | Use adjust_batch only for multiple batches; a single study needs no batch adjustment. |

| MOFA+: "Model convergence error" | Improper initialization or extreme outliers. | Center/scale data. Increase tolerance. Check for and remove outlier samples. |

| Data Harmony: "theta must be a numeric" | Theta parameter incorrectly specified. | Set theta as a scalar (e.g., 2) or a vector with one value per variable in vars_use. |

Key Experimental Protocols

Protocol 1: Standardized Multi-Omics Preprocessing for MOFA+

- Normalization: Apply view-specific normalization (e.g., VST for RNA-seq, logit for methylation beta values, log for proteomics).

- Feature Selection: For each view, select the top n features (e.g., 5000) by highest variance to reduce noise and computational load.

- Scaling: Center each feature to have a mean of 0 and scale to have a variance of 1 across all samples.

- Imputation: For missing values, use simple per-feature mean imputation as a baseline.

- MOFA Object Creation: Use the create_mofa() function, then specify the data likelihood for each view ('gaussian', 'bernoulli', 'poisson') in the model options.

- Model Setup & Training: Define training options (maxiter=1000, convergence_mode='slow'), then run run_mofa().
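The feature selection, scaling, and imputation steps of Protocol 1 can be sketched in a few lines. This is a minimal illustration in plain Python rather than part of the MOFA2 API: the function name `preprocess_view`, the toy matrix, and the choice to impute before ranking features by variance are all assumptions made for the example, and each feature is assumed to have at least one observed value.

```python
from statistics import fmean, pvariance


def preprocess_view(matrix, n_top=2):
    """Hypothetical helper. matrix is a list of features, each a list of
    per-sample values with None marking a missing entry.
    Returns (indices of kept features, processed rows)."""
    # Imputation: replace missing entries with the per-feature mean.
    imputed = []
    for row in matrix:
        observed = [v for v in row if v is not None]
        mean = fmean(observed)
        imputed.append([mean if v is None else v for v in row])

    # Feature selection: keep the n_top features with the highest variance.
    variances = [pvariance(row) for row in imputed]
    ranked = sorted(range(len(imputed)), key=lambda i: variances[i], reverse=True)
    kept = sorted(ranked[:n_top])

    # Scaling: center each kept feature to mean 0 and scale to variance 1.
    processed = []
    for i in kept:
        row = imputed[i]
        mean = fmean(row)
        sd = pvariance(row) ** 0.5
        if sd == 0:
            sd = 1.0  # constant feature: centering alone yields all zeros
        processed.append([(v - mean) / sd for v in row])
    return kept, processed


toy = [
    [1.0, 2.0, 3.0, None],    # one missing value
    [5.0, 5.0, 5.0, 5.0],     # constant feature, lowest variance
    [0.0, 10.0, 0.0, 10.0],   # highest variance
]
kept, rows = preprocess_view(toy, n_top=2)
print(kept)
```

In a real analysis the same logic would be applied per view after the view-specific normalization, with n_top set to the value chosen in the protocol (e.g., 5000).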

Protocol 2: MMUPHin Cross-Study Microbiome Integration

- Data Input: Prepare a combined species (or genus) abundance table (features x samples) covering all studies. A shared metadata dataframe with columns sample_id, study (batch), and phenotype is required.

- Harmonization: Run MMUPHin::adjust_batch(feature_abd, batch = "study", covariates = "phenotype", data = metadata) and take the adjusted table from the feature_abd_adj element of the result.

- Validation: Perform PERMANOVA on the corrected distances to confirm reduced association with study and preserved association with phenotype. Generate PCA plots colored by study and phenotype.
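To make the validation step concrete, the sketch below computes the PERMANOVA pseudo-F statistic, the R² (share of distance variation explained by the grouping), and a permutation p-value from a precomputed distance matrix. It is a plain-Python illustration and not a replacement for an established implementation; the function names and the toy distances are assumptions for the example.

```python
import random
from itertools import combinations


def pseudo_f(dist, labels):
    """Pseudo-F and R^2 for one grouping, given a square distance matrix."""
    n = len(labels)
    groups = {}
    for idx, lab in enumerate(labels):
        groups.setdefault(lab, []).append(idx)
    # Total and within-group sums of squared distances.
    ss_total = sum(dist[i][j] ** 2 for i, j in combinations(range(n), 2)) / n
    ss_within = sum(
        sum(dist[i][j] ** 2 for i, j in combinations(members, 2)) / len(members)
        for members in groups.values()
    )
    ss_among = ss_total - ss_within
    a = len(groups)
    f_stat = (ss_among / (a - 1)) / (ss_within / (n - a))
    return f_stat, ss_among / ss_total


def permanova(dist, labels, n_perm=999, seed=0):
    """Permutation test: shuffle labels and count pseudo-F values >= observed."""
    rng = random.Random(seed)
    f_obs, r2 = pseudo_f(dist, labels)
    shuffled = list(labels)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if pseudo_f(dist, shuffled)[0] >= f_obs:
            hits += 1
    return f_obs, r2, (hits + 1) / (n_perm + 1)


# Toy example: four samples on a line, two per study.
points = [0.0, 1.0, 10.0, 11.0]
dist = [[abs(p - q) for q in points] for p in points]
f_stat, r2, p_value = permanova(dist, ["A", "A", "B", "B"], n_perm=199)
print(f_stat, r2, p_value)
```

Running the same function once with study labels and once with phenotype labels, before and after adjustment, gives the comparison the protocol asks for: the R² for study should drop while the R² for phenotype should hold.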

Protocol 3: Data Harmony for Multi-Omics Sample Integration

- Input Matrix: Generate a combined sample x feature matrix from all omics layers (e.g., by concatenating selected principal components from each view or using a multi-omics embedding).

- Run Harmony: harmony_matrix <- HarmonyMatrix(data_mat = omics_pca, meta_data = meta, vars_use = 'batch', theta = 2, do_pca = FALSE).

- Downstream Analysis: Use the Harmony-corrected embeddings for clustering, visualization, or as input to classifiers.

Visualizations

Multi-Omics Correction Tool Workflow

MOFA+ Model Structure Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Packages

| Item / Package | Function / Role | Application Context |
|---|---|---|
| MMUPHin (R) | Statistical meta-analysis and batch adjustment for microbiome profiling studies. | Correcting technical variation across 16S rRNA or shotgun metagenomics studies. |
| MOFA2 (R/Python) | Multi-Omics Factor Analysis framework to discover latent sources of variation. | Integrating transcriptomic, epigenomic, and proteomic data from the same samples. |
| harmony (R) | Fast, sensitive integration of single-cell or bulk data using iterative clustering. | Removing batch effects from multi-omics sample embeddings or PC spaces. |
| sva / ComBat | Empirical Bayes framework for removing batch effects in high-throughput data. | Standard adjustment for individual omics matrices (RNA-seq, methylation) prior to integration. |
| UMAP | Dimensionality reduction for visualization of high-dimensional corrected data. | Evaluating batch mixing and biological clustering post-integration. |
| PERMANOVA | Permutational multivariate analysis of variance. | Quantifying the association of batch/biology with distance matrices pre- and post-correction. |

Technical Support Center: Troubleshooting Batch Effect Correction

FAQs & Troubleshooting Guides

Q1: My ComBat-corrected data still shows strong batch clustering in the PCA. What went wrong?

A: This is often due to incorrect model parameterization. ComBat models batch as an additive (location) and multiplicative (scale) shift per feature, so it cannot capture non-linear or sample-specific effects. When biological signal is partly confounded with batch, build a design with model.matrix that includes the relevant biological covariates (e.g., disease status) and pass it as the mod parameter; this protects true biological signal from being removed. For multi-omics, apply ComBat to each assay type (e.g., RNA-seq, proteomics) separately.

Q2: When running Harmony on my integrated single-cell RNA-seq and ATAC-seq data, the algorithm fails to converge. What should I do?

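A: Failure to converge typically indicates overly aggressive correction settings or problematic input. First, lower theta (the diversity penalty, default 2) to lessen the correction strength, or allow more iterations with max.iter.harmony. Note that sigma (default 0.1) sets the width of the soft k-means clusters rather than the diversity penalty, so change it only if the cluster assignments themselves look unstable. Second, ensure your PCA input (pca_res) is not overly noisy; compare runs with different numbers of PCs (e.g., the top 20 versus the top 50). Lastly, verify that your batch variable is categorical and not continuous.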

Q3: After applying Harmony, my cell-type markers are no longer differentially expressed. Did Harmony remove my biological signal?

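A: This suggests over-correction. Harmony's theta parameter controls the strength of batch correction: a higher theta gives more correction. If your batch variable is partly confounded with biology (for example, a cell type that occurs mostly in one batch), lower the theta value. Keep in mind that Harmony adjusts the embedding rather than the expression matrix, so lost markers usually mean that clusters were merged, not that expression values changed. Always perform a diagnostic check pre- and post-correction: plot known, strong biological markers. They should remain differentially expressed but become more coherent across batches.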

Q4: Can I apply ComBat or Harmony directly to raw count data from public repositories like TCGA or GEO?

A: No. Standard ComBat assumes continuous, approximately normally distributed data. For RNA-seq counts, first apply a variance-stabilizing transformation (e.g., vst in DESeq2) or convert to log2-CPM/TPM; if you need to stay on the count scale, use ComBat_seq from sva, which is designed for raw counts. Harmony operates on principal components, so start from normalized counts, generate PCs, then run Harmony on the PC embedding. Never apply standard ComBat or Harmony to raw counts.
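As an illustration of the log2-CPM conversion mentioned in this answer, the sketch below scales each sample by its library size and takes a log with a pseudocount. It is a simplified variant written in plain Python; the function name `log2_cpm` and the pseudocount of 1 are assumptions for the example, and dedicated packages use more refined priors.

```python
import math


def log2_cpm(counts, pseudocount=1.0):
    """counts: list of genes, each a list of raw counts per sample.
    Returns log2(CPM + pseudocount) in the same layout."""
    n_samples = len(counts[0])
    # Library size = total counts per sample (column sums).
    lib_sizes = [sum(gene[s] for gene in counts) for s in range(n_samples)]
    return [
        [math.log2(gene[s] * 1e6 / lib_sizes[s] + pseudocount) for s in range(n_samples)]
        for gene in counts
    ]


# Sample 2 was sequenced ten times deeper than sample 1 but has the same composition.
raw = [
    [10, 100],
    [30, 300],
    [60, 600],
]
transformed = log2_cpm(raw)
print(transformed[0])  # both entries are equal once depth is accounted for
```

The toy data shows why this step matters before batch correction: a pure sequencing-depth difference disappears after scaling, so it is not mistaken for a batch effect on individual genes.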

Q5: For a public dataset with multiple batch factors (e.g., lab, sequencing platform, and date), how do I prioritize correction?

A: Create a unified batch variable that combines all major technical factors. If factors are nested (e.g., date within lab), use the most granular level. ComBat accepts a single batch factor through its batch argument; variables placed in mod are preserved rather than removed, so they cannot serve as additional batch terms. For complex designs, it is often more robust to correct for the unified variable first, then assess residual effects.
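Building the unified batch variable is a simple string combination, sketched below in plain Python. The column names (`lab`, `platform`, `date`), the separator, and the helper names are hypothetical. The second helper flags combined batches with very few samples, since such batches are hard to estimate reliably and may need to be merged or dropped.

```python
from collections import Counter


def unified_batch(metadata, factors, sep="|"):
    """Concatenate the chosen technical factors into one label per sample."""
    return [sep.join(str(row[f]) for f in factors) for row in metadata]


def small_batches(labels, min_size=2):
    """Return combined batch labels that have fewer than min_size samples."""
    counts = Counter(labels)
    return sorted(label for label, n in counts.items() if n < min_size)


metadata = [
    {"sample": "s1", "lab": "A", "platform": "HiSeq", "date": "2020-01"},
    {"sample": "s2", "lab": "A", "platform": "HiSeq", "date": "2020-01"},
    {"sample": "s3", "lab": "A", "platform": "NovaSeq", "date": "2020-03"},
    {"sample": "s4", "lab": "B", "platform": "NovaSeq", "date": "2020-03"},
    {"sample": "s5", "lab": "B", "platform": "NovaSeq", "date": "2020-03"},
]
labels = unified_batch(metadata, ["lab", "platform", "date"])
print(labels)
print(small_batches(labels))
```

Combining every factor can quickly produce many small batches, so checking the group sizes before correction is a useful safeguard.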

Q6: How do I quantitatively choose between ComBat and Harmony for my specific multi-omics project?

A: Use the following metrics calculated on a negative control gene set (housekeeping genes or known non-differentially expressed genes). Compare pre- and post-correction results.

Table 1: Metric Comparison for Method Selection

| Metric | Ideal Outcome | ComBat Strength | Harmony Strength |
|---|---|---|---|
| PVE by Batch (Proportion of Variance Explained) | Minimized | Good for linear batch effects | Excellent for complex, non-linear effects |
| ASW (Average Silhouette Width) of Batch | Closer to 0 (no batch structure) | Moderate | High (on cell embeddings) |
| kBET (k-nearest neighbor batch effect test) Rejection Rate | < 0.1 | Good on feature space | Excellent on reduced dimension space |
| Biological Signal Preservation (F-stat for known groups) | Maintained or Increased | Requires careful covariate modeling | Robust with proper theta setting |
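Two of the metrics in this table are simple enough to sketch directly. The plain-Python example below computes the proportion of variance explained by batch for a single feature or PC (the R² of a one-way ANOVA) and the average silhouette width with batch as the label. Function names and toy coordinates are assumptions for illustration; for real datasets an established implementation is preferable.

```python
import math


def pve_by_batch(values, batches):
    """Between-batch sum of squares divided by total sum of squares."""
    grand = sum(values) / len(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    groups = {}
    for v, b in zip(values, batches):
        groups.setdefault(b, []).append(v)
    ss_between = sum(len(g) * (sum(g) / len(g) - grand) ** 2 for g in groups.values())
    return ss_between / ss_total if ss_total > 0 else 0.0


def batch_asw(embedding, batches):
    """Average silhouette width using batch labels as clusters."""
    n = len(embedding)
    scores = []
    for i in range(n):
        by_batch = {}
        for j in range(n):
            if i != j:
                d = math.dist(embedding[i], embedding[j])
                by_batch.setdefault(batches[j], []).append(d)
        own = by_batch.pop(batches[i], None)
        if not own or not by_batch:
            scores.append(0.0)  # singleton batch or single batch: score 0
            continue
        a = sum(own) / len(own)                               # mean distance within own batch
        b = min(sum(d) / len(d) for d in by_batch.values())  # nearest other batch
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / n


embedding = [(0.0, 0.0), (0.0, 1.0), (10.0, 0.0), (10.0, 1.0)]
separated = ["A", "A", "B", "B"]   # batches form two distinct clusters
interleaved = ["A", "B", "A", "B"]  # batches are spread across both clusters
pc1 = [point[0] for point in embedding]
print(pve_by_batch(pc1, separated), pve_by_batch(pc1, interleaved))
print(batch_asw(embedding, separated), batch_asw(embedding, interleaved))
```

With the separated labels both metrics are high, which is the pre-correction pattern to look for; after a successful correction the same calculations on the corrected embedding should move toward the interleaved case.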

Experimental Protocol: Benchmarking Batch Effect Correction

Objective: Evaluate the efficacy of ComBat and Harmony on a public multi-omics dataset (e.g., TCGA BRCA RNA-seq and DNA methylation).

1. Data Acquisition & Preprocessing:

- Download RNA-seq (counts) and 450k methylation (beta-values) for breast cancer (BRCA) samples from the UCSC Xena browser.

- Keep only samples present in both assays. Extract batch metadata (e.g., tss for tissue source site).

- RNA-seq: Normalize using DESeq2's vst function.

- Methylation: Perform functional normalization (preprocessFunnorm in minfi). Filter probes with SNPs or cross-reactive probes.

2. Batch Effect Assessment (Pre-correction):

- For each assay, perform PCA.

- Generate pre-correction PCA plots colored by batch (tss) and by biological subtype (e.g., PAM50).

- Calculate metrics from Table 1 (e.g., PVE by batch).

3. Applying ComBat:

- Use the sva package in R.

- For RNA-seq: corrected_data <- ComBat_seq(count_matrix, batch=batch, group=subtype, covar_mod=NULL) for raw counts OR ComBat(dat=vst_matrix, batch=batch, mod=model.matrix(~subtype)) for VST data.

- For Methylation: corrected_betas <- ComBat(dat=beta_matrix, batch=batch, mod=model.matrix(~subtype)). Because ComBat assumes roughly Gaussian data and beta-values are bounded between 0 and 1, converting to M-values before correction and back afterwards is a common precaution.

- Re-run PCA and calculate metrics.
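For the methylation step, one widely used precaution (an assumption here, not something the protocol as written requires) is to run the correction on the M-value scale, since beta-values are bounded and corrected betas can otherwise leave the [0, 1] range. The conversion is the logit transform in base 2, sketched below in plain Python; the function names and the clipping constant `eps` are illustrative choices.

```python
import math


def beta_to_m(beta, eps=1e-6):
    """M = log2(beta / (1 - beta)); beta is clipped away from 0 and 1 first."""
    b = min(max(beta, eps), 1.0 - eps)
    return math.log2(b / (1.0 - b))


def m_to_beta(m):
    """Inverse transform: beta = 2^M / (1 + 2^M)."""
    return 2.0 ** m / (1.0 + 2.0 ** m)


betas = [0.02, 0.5, 0.98]
m_values = [beta_to_m(b) for b in betas]
recovered = [m_to_beta(m) for m in m_values]
print(m_values)
print(recovered)
```

The batch correction would then be applied to the M-value matrix, and the corrected values mapped back with the inverse transform if beta-values are needed for reporting.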

4. Applying Harmony:

- Use the harmony package in R.

- Input: The top 50 PCs from the preprocessed (normalized but not batch-corrected) data for each assay.

- Run: harmony_res <- RunHarmony(pca_embedding, meta_data, 'batch', theta=2, lambda=0.5, max.iter.harmony=20).

- Use the Harmony embeddings (the corrected matrix returned as harmony_res) for downstream analysis. Re-calculate metrics on these embeddings.

5. Final Evaluation:

- Compare metric tables pre- and post-correction for each method.

- The method that minimizes batch metrics while preserving or enhancing separation of known biological groups is optimal for that dataset.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for Batch Effect Correction

| Item / Software Package | Function | Primary Use Case |
|---|---|---|
| sva (R package) | Contains the ComBat and ComBat_seq functions. | Correcting batch effects in microarray or normalized continuous omics data (ComBat) and raw count data (ComBat_seq). |
| harmony (R/Python package) | Integrates single-cell and bulk data across experimental batches. | Correcting complex, non-linear batch effects in reduced dimension spaces (PCs, embeddings). |
| limma (R package) | Contains removeBatchEffect function. | Applying linear models to remove batch effects from expression data, often used before differential expression. |
| Seurat (R package) | Single-cell analysis suite with built-in integration functions (IntegrateData uses an anchor-based approach built on mutual nearest neighbors (MNN)). | Aligning multiple single-cell datasets across batches. An alternative to Harmony for scRNA-seq. |
| Negative Control Gene Set | A list of genes/probes not expected to change biologically (e.g., housekeeping genes). | Critical for objectively evaluating batch correction performance without biological signal interference. |

Visualizations

Diagram 1: Batch Correction Workflow for Public Multi-Omics Data

Diagram 2: Logical Decision Path for Method Selection

Troubleshooting Guides & FAQs

Q1: After applying ComBat to remove batch effects from my RNA-seq dataset, my PCA shows improved batch mixing, but the variance explained by the first principal component (PC1) has dropped dramatically. Is this normal, and how do I ensure biological signal is preserved?

A: A significant drop in variance explained by early PCs post-correction is a common concern. This often indicates that a strong, unwanted batch effect was artificially inflating the variance in your initial data. To verify biological signal integrity, follow this protocol:

- Positive Control Check: Correlate the top PCs (e.g., PC1-PC3) of the corrected data with known biological covariates (e.g., disease status, treatment group) using linear regression. The association should remain strong or improve.

- Negative Control Check: Use known batch-associated technical features (e.g., sequencing depth, sample preparation date) as covariates. Their association with the top PCs should be minimized or non-significant post-correction.

- Differential Expression (DE) Validation: Perform a DE analysis on a well-established positive control gene set between groups where a difference is expected. The statistical significance and effect size of these control genes should not be eliminated by correction.

Q2: I used Harmony for integrating single-cell RNA-seq datasets from two labs. The batches are now mixed, but my rare cell population has disappeared from the UMAP. What went wrong and how can I recover it?

A: Over-correction can sometimes "smear" rare cell types. This happens when the integration algorithm mistakenly interprets a small, biologically distinct cluster as a batch-specific artifact. To diagnose and fix this:

- Pre-Correction Isolation: First, visually identify and isolate the rare population in the pre-corrected data from each batch separately using standard clustering.

- Check Batch-Specificity: Determine if the population is present in all batches. If it is truly batch-specific, it may be a technical artifact. If it's present but rare in all batches, proceed.

- Adjust Harmony Parameters: Re-run Harmony with more conservative parameters so that small, distinct clusters are not merged away.

  - Decrease the theta parameter (default 2). Higher values apply more strength to the batch correction; a lower value (e.g., 1) may preserve finer structures.

  - Increase the lambda parameter (default 1). Lambda is a ridge penalty on the correction, and smaller values result in more aggressive correction.

- Alternative Workflow: Consider a two-step approach: first integrate the data with Harmony using standard parameters, then subset the data to remove major cell types and re-integrate the remaining cells with more conservative parameters to preserve rare populations.

Q3: When applying SVA (Surrogate Variable Analysis) to my methylation array data, how many surrogate variables (SVs) should I estimate, and how do I validate that they capture noise and not biology?

A: Determining the optimal number of SVs (k) is critical. A common method is to use the num.sv function from the sva package, which offers a permutation-based estimator (method="be", the default) and an asymptotic estimator (method="leek"). The following table summarizes the quantitative outcomes of different methods on a typical 450K array dataset:

Table 1: Comparison of Surrogate Variable (SV) Number Estimation Methods

| Method | Function/Criteria | Estimated k (for n=100 samples) | Computational Cost | Key Consideration |

|---|---|---|---|---|

| Permutation | num.sv(permute.mat, mod, method="be", vfilter=1000) |